Abstract

This article analyzes the translation of law into computer code and the use of automated decision-making systems in government to make legal distinctions. Specifically, how are algorithmic decisions tied to law, and what happens when legal effects are mediated through technologies? The sociology of translation and Bruno Latour's theory of law, as elaborated by Kyle McGee, provide the means to study associations between law and technology. I trace how the force of law can be extended when mediated through computer systems and analyze the associations of law and technology in Canada's government, through projects exemplifying the shift to “code-driven law.” These include efforts to translate law into “rules-as-code” and several of the sociotechnical systems governing Canada's borders, which demonstrate how design choices in government digital services inevitably shape the outcomes of public policy and can have legal effects. While Latour's legal scholarship avoided traditional questions of legitimacy, a key consideration for automated government systems is how legitimacy is constructed and contested. For rules-as-code, legitimate algorithmic outcomes should be traceable to law, but existing government systems commonly maintain legitimacy by identifying a human actor “in-the-loop” as the ultimate decision-maker, thereby obscuring how thoroughly imbricated human and algorithmic agency are in contemporary governance.

Introduction

We live in near-constant contact with algorithmic actants, even if we are unaware of them or do not understand how they function. These algorithms act upon us in various ways and “govern” many aspects of our lives, including our social media feeds, online recommendations, access to credit, and eligibility for services (Issar and Aneesh 2022). But the increasing use of algorithmic systems by governments, often referred to by the acronym ADM (automated decision-making; see Cobbe 2019), raises additional concerns about the association between code and law.

Science and technology studies (STS) approaches to law have dealt with questions of legal facts, authoritative knowledge, and situations where science and technology become involved in legal disputes (Jasanoff 2015; Cole and Bertenthal 2017), but of primary interest here are ways that STS can inform an analysis of the materiality of law (Faulkner, Lange, and Lawless 2012), as well as questions of nonhuman or hybrid agency, particularly as these relate to controversies involving algorithmic systems (Cellard 2022; Dahlin 2024). This article analyzes the mediation of law and technology through the sociology of translation, by drawing chiefly on the work of Bruno Latour, as extended by Kyle McGee (2014), and supplemented by Mireille Hildebrandt's (2015, 2020) work on “data-driven agency” and “code-driven law.” While Latour (2010) analyzes the “passage of law” within the Conseil d'Etat, McGee (2014, xx) further develops these arguments to examine how “the force of law” is carried and transformed through the “many [technological] mediators” that exist “outside” a legal institution. Few empirical analyses have subsequently followed these threads, with more attention paid to recognizably legal practices (legislating, litigating; see McGee 2015; Seear 2020) than to how legal effects are materialized through regularly encountered technologies. The sociology of translation is particularly valuable for conceptualizing algorithmic systems (Christin 2020), and McGee's (2014) underappreciated theorization of technology's normative effects provides a way to combine Latour's sociology of translation with newer scholarship on algorithmic governance. This study contributes to STS-informed analyses of law and materiality, with a focus on the widespread consequences of “digitalization” (Plesner and Justesen 2022), where the force of law is mediated through computer code and algorithmic systems.
The development of algorithmic systems that can contribute to legal effects has created controversies over legitimacy, as can be seen through empirical examples of Canadian digitalization projects. I highlight the importance of tracing the associations through which the force of law is constituted and expressed, including written rules, algorithmic translations, and their individualized legal effects.

***

Digitalization is part of a broad international trend among state bureaucracies to make greater use of computer systems when interacting with individuals and making decisions about their legal status. This has been conceptualized as a transformation of “street-level” bureaucracy into its digital successors: “screen-level” and “system-level” bureaucracies (Bullock, Young, and Wang 2020, 493–494). This bureaucratic transformation requires translating written rules into computer code and delegating to algorithms some of the work previously carried out by humans. In short, the growing use of digital technologies to mediate relationships between governments and their subjects necessitates a transformation in how law is expressed: a translation from law as it was once written and practiced to a world where law is increasingly expressed in computer code.

The sociology of translation was developed in the 1980s by Michel Callon, Bruno Latour, and John Law (Law 1986), with actor-network theory (ANT) being the best-known result, and probably the most common point of engagement between STS and socio-legal scholarship since the 1990s (Cole and Bertenthal 2017). Latour (1999, 179) writes that in his earlier work, he “used translation to mean displacement, drift, invention, mediation, the creation of a link that did not exist before and that to some degree modifies the original two.” Many of the problems in the sociology of translation are about how collectives are formed and actants linked together (Latour 1986). When the sociology of translation has been used to inform translation theory (Freeman 2009) or studies of policy translation (Balen and Leyton 2016; Berger and Esguerra 2019), the emphasis is on how the process of translation involves forging new linkages and associations, rather than the preservation of meaning in a text.

In this article, the sociology of translation provides a method or “toolkit” for “telling interesting stories” (Law 2009, 142) about the relations involved in technological change. These are stories about the uneven shift toward “code-driven law” (Hildebrandt 2020) in Canada, encompassing translations of written law into computer code, and the use of computer code to assist or automate decision-making. Among these, rules-as-code (RaC) is a story of largely unrealized ambitions to transform government, while the more commonplace reality of digital decision-making is seen in algorithmic systems for border governance. The fundamental problem these systems confront is the translation of law into computer code, and the consequences of this process, including the legitimacy of algorithmic decisions. RaC tackles this translation problem directly through a foundational and systematic process of legal translation (OECD Observatory for Public Sector Innovation 2020), but remains largely an experimental approach. Existing practices in algorithmic decision-making favor keeping a “human-in-the-loop” as a means of maintaining legitimacy for the “final decision” (IRCC 2022), even though “algorithmic agency” (Peeters 2020) plays a significant and often unexamined part.

The cases discussed in this article draw on Canadian government documents collected since 2018 as part of a study of automation in the federal public service.¹ The Government of Canada (2023) is notable for its ambitious “digital transformation” of government and for the numerous challenges and controversies these efforts have produced in recent years, which have been documented across a range of government sources. RaC experiments were documented in open or public venues (e.g., McNaughton 2021; Team Babel 2021), while the more opaque associations constituting border governance have been revealed through government investigations and documents obtained through access-to-information requests, particularly as these have followed public controversies about algorithmic systems.

The algorithmic systems discussed in this article have legal effects, which is to say they work in ways that have consequences for the legal status of human and nonhuman entities—and this is true regardless of whether they are designed as decision-making systems, or ADMs. These legal effects are not just derived from a representation of law as computer code, but are shaped by the many “microchoices” made by programmers and designers (Merigoux, Alauzen, and Slimani 2023), the constellation of actants held together within a socio-technical system, and the interaction of human and algorithmic agency (Peeters 2020). Furthermore, the translation of law into computer systems can lead to widespread (“at scale”), durable, and incontestable legal effects. Because of this, the shift to code-driven law risks “freezing” (Hildebrandt 2020) translations of law into layers of inscrutable technologies, necessitating the development of new channels of contestation and traceable associations.

Governing Through Code: Algorithmic Legal Effects and Legitimacy

Lawrence Lessig helped establish the idea that code regulates through the design or “architecture” of computer systems, arguing that code should be taken seriously by legal scholars because its ultimate effects were comparable to those of law (Lessig 2006). More recent scholarship demonstrates how choices made in the design of government systems have major consequences for how people are governed (Gritsenko and Wood 2022; Mulligan and Bamberger 2019). Users of digital services are required to follow a “script” or “framework of action” (Akrich, as cited in Merigoux, Alauzen, and Slimani 2023, 3) as specified by coders, digital designers, and system architects, the outcome of which can include legal effects.

Here, “legal effect” refers to the “legal consequences of a certain event, action or state of affairs”—one that “changes the legal status of a person or other entity” (Hildebrandt 2015, 144, 168). For instance, an ADM system that algorithmically approves or rejects visa applications has a legal effect, but so does a system that triages applications into high- and low-risk categories for further human review. Because human and machine agency are intertwined in socio-technical systems (Enarsson, Enqvist, and Naarttijärvi 2022; Leonardi 2011; Peeters 2020), the legal effects of technologies are mediated through human action, and vice versa.
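The triage example can be made concrete with a minimal sketch. The Python below is purely illustrative and assumes a hypothetical upstream risk score and a hypothetical threshold value; neither is drawn from any actual government system. The function never approves or refuses anything, yet the threshold alone decides which applicants are routed to heightened scrutiny.

```python
from dataclasses import dataclass

# Hypothetical threshold: a design choice made by developers, not a value
# taken from any real system or regulation.
RISK_THRESHOLD = 0.7


@dataclass
class Application:
    applicant_id: str
    risk_score: float  # hypothetical score assumed to come from some upstream model


def triage(app: Application, threshold: float = RISK_THRESHOLD) -> str:
    """Route an application to a review stream; no approval or refusal happens here."""
    return "high-risk" if app.risk_score >= threshold else "low-risk"


apps = [Application("A", 0.65), Application("B", 0.72)]
print([triage(a) for a in apps])                 # ['low-risk', 'high-risk']
print([triage(a, threshold=0.6) for a in apps])  # ['high-risk', 'high-risk']
```

Lowering the threshold from 0.7 to 0.6 changes applicant A's path through the process even though a human officer still makes the “final decision,” which is one way a programmer's “microchoice” can shape legal status.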

While algorithms deployed by private actors can have legal effects (as when they discriminate on the basis of “protected characteristics”; see Adams-Prassl, Binns, and Kelly-Lyth 2023), public sector algorithms or ADMs exist in closer association with the law. This is because the operations of government typically have an explicit legal justification, and government decisions should accord with principles of administrative law (see Latour 2010) in order to be seen as legitimate. The legitimacy of algorithmic systems in government is a significant problem, in that algorithmic decision-making has been presented as a “threat” to legitimacy (Danaher 2016; Grimmelikhuijsen and Meijer 2022), particularly when work formerly carried out by a human public servant is effectively delegated to an autonomous technology. Rather than beginning with a theory about where legitimacy resides or how it is obtained (see Diver 2022), in this article I study how the problem of legitimacy is confronted in governments undergoing automation and “digital transformation.”

Suchman's influential definition of legitimacy is that of “a generalized perception or assumption that the actions of an entity are desirable, proper, or appropriate” (as cited in Grimmelikhuijsen and Meijer 2022, 234). In contrast, the approach taken in this article follows what actors say and do, without recourse to a society's “generalized perception.” Therefore, legitimacy exists insofar as it is asserted and challenged. Rather than an element that is present or absent, we witness processes of legitimation and delegitimation; legitimacy must be raised, can be contested, and (potentially) adjudicated. Characterizing something as legitimate or illegitimate invites evaluation, which means following the associations that tie some entity to a stated source of legitimacy. It is common for the legitimacy of algorithmic systems that contribute to legal effects to be “backstopped” by reference to human agency. This neutralizes the threat of new technologies by appealing to human actors as somehow inherently legitimate, or as actors whose legitimacy is taken for granted. I argue that we instead need better ways to construct legitimacy through the contestability of computer code, and to examine how human and machine agency relate in government decision-making.

Extending the Sociology of Legal Translation into Technical Artifacts

The sociology of translation, represented here primarily through the work of Bruno Latour, provides a valuable means of theorizing these problems, including the processes through which the law is itself produced (Latour 2010), and the extension of this law through technological artifacts (McGee 2014). For socio-legal studies, Latour's work serves as a demonstration of methodology rather than as an explanatory theory (see Sayes 2014), or “a way of following the disparate people and materials that comprise law” (Cole and Bertenthal 2017, 360). While Latour (2010) and McGee (2018) have little use for the concept of legitimacy in their analysis of law, my interest is in the way legitimacy is raised in arguments about law and code. Latour's approach provides a way to study associations that are justified or questioned on the basis of legitimacy.

As a starting point, we can say that “the law” does not cause legal effects, but acts instead as a “regime of enunciation,” within which legal statements are linked up, assigned or attributed to persons, mediated through material artifacts, and evaluated in terms of their “felicity conditions” (Latour 2010; McGee 2014). Studying the law through Latour's ethnographic methods means:

to approach legality not by way of its…grandeur and its totalizing power, but by way of the myriad associations composing legality (or legalities), the mundane performances of legality, and the slight but decisive displacements that the intervention of legal objects and actors makes in constructing societies and rendering them durable or transient, supple or rigid. (McGee 2014, 124)

In The Making of Law, Latour (2010) closely observes the workings of France's highest institution of administrative law. McGee (2014, 147) argues that insights from this legal ethnography can be “plugged into” Latour's other works, particularly those dealing with technoscience. As a socio-legal analysis that extends Latour's (2010) argument and draws connections between his earlier and later scholarship, McGee (2014, 181) is particularly valuable in theorizing “the media of law's expression,” or the “technical artifacts” through which “law's force condenses, solidifies, and becomes rigid.”

In line with Latour's (1992) earlier work on delegation and the use of technology to make governance durable, such as the example of a police officer being replaced by a cardboard cutout or concrete speed bump, technical artifacts can extend the force of law “into the physical world of things, altering its nature by functionalizing it and incarnating one particular though irrefutable interpretation of the legal utterance” (McGee 2014, 182). This “irrefutability” means that technological effects are often nonnegotiable; there is no negotiating with a speed bump or turnstile, although they may sometimes be circumvented.

Rather than a process of technology making law more durable, the more general process at work is the translation or transformation of a “legal utterance” from one medium of expression to another, as the two become associated. While Latour's ideas are often distilled as an argument for the existence of nonhuman agency (Sayes 2014), the focus of ANT is always on associations rather than individual “actants.” McGee (2014, 166) cautions that one will never find law in an “unmediated” form or “sufficient unto itself.” A physical barrier that restricts access is not the material embodiment of some abstract law. Instead, the law is materialized in written text, and the association between those words and the barrier is a horizontal one: the “transmission from one substance of expression to another,” bound together “through the chains of obligations” (McGee 2014, 166).

McGee (2014, 167) argues that the relationship between law and technology “cuts both ways,” through “mutual or dual inscription,” referring to how “law makes technicity durable as technicity makes law durable.” The technology that has contributed most to the durability of the law is that of writing, which entails the “externalization of law on material carriers,” such as the printed page, allowing for “the polity [to] be extended, enabling a shift from local to translocal law” (Hildebrandt 2015, 177, emphasis in original). Through writing, law becomes external to the lawmaker, creating continuity and durability, and extension across time and space. Moving in the other direction, we can see how physical things, made of materials that would otherwise crumble and degrade, can be made durable by regulations that mandate construction standards and maintenance, as with building codes and road infrastructure. Repair work can be legally obligated, but this also means that legal disputes can lead to a breakdown of infrastructure (Turner 2023). In short, materiality and law reinforce one another.

Through “delegative legality,” material infrastructure increases the durability and reach of law in general, while embedding specific limitations and points of brittleness, “rendering it at once more expansive and more fragile” (McGee 2014, 169). Technologies can make law mobile and inflexible, as when an app forces users of a service to “agree” to a legal contract. However, these technologies have their “vulnerabilities, or frailties” (McGee 2014, 182), such as when a system fails for users with an incompatible device, experiences a “glitch,” or is hacked, circumvented, or otherwise ignored. While traditional law enforcement is notoriously patchy, inconsistent, and discretionary, enforcement through technical means can be entirely undermined by a single systematic flaw, like a coding error that causes a system-wide crash. Computer code is particularly distinctive in this regard, being easily reproducible and widely deployable. Encoding law can therefore produce uniformity and consistency across any system that can run the code, but this can also lead to the perfect reproduction of error, which “elevates the impact if something were to go wrong” (IRCC 2022, 2). To approach these developments through the sociology of translation means attending to how an algorithmic decision-making system has been stabilized as a durable host of associations and complex arrangements, which can include enduring points of failure, as in the example of the ArriveCAN “glitch” discussed later in this article. First, we must consider more fundamentally what the translation from written law to computer code entails.

From Written Law to Encoded Law

As mentioned above, written text can be considered a foundational technology of modern law, and understanding the relevant characteristics of written language is a starting point for appreciating the changes associated with emerging technologies. This is the approach of Mireille Hildebrandt (2015), who argues that the reach of modern law across space and time is a product of a particular information and communication infrastructure based on writing and the printing press. Because law today depends on the technologies through which it was recorded and distributed, we can consider how changes in information and communication infrastructures necessitate changes in law.

The first project considered here, RaC, is a purposeful digital transformation of the legal foundations of government. In Canada and elsewhere it is articulated around the desire to address the problems of digital government at the root, through a new vision of rule-making that accommodates computer systems from the outset, and which aims to build trust and legitimacy for digitally mediated decisions in government (see Crnomarkovic et al. 2022). While RaC is a normative project for how rules should be translated into code, the projects considered subsequently are more commonplace examples of situations where rules must be translated into code. As with RaC, the legitimacy of these sociotechnical systems is open to question, but rather than attempting to construct a new basis for legitimacy (as RaC does), they rely on established assumptions about the legitimacy of human decision-making, even for hybrid sociotechnical processes that are highly reliant on automated translations. All of the projects covered here are part of a gradual shift toward code-driven law, which may lead to the folding of law's “enactment, interpretation, and application into one stroke, collapsing the distance between legislator, executive and court” (Hildebrandt 2020, 70). This carries the danger of imposing rigid legal effects on the basis of largely unaccountable design decisions, challenging some of the fundamental principles around which our legal systems developed (Diver 2022; Hildebrandt 2015, 2020).

Translating Law Through RaC and the Problem of Legitimacy

Encoding rules is a very specific kind of translation problem, but making these translations widely deployed and durable is a challenge common to technological projects involving government (Latour 1996). The primary goal of RaC is to solve the problem of translating rules that are ordinarily expressed in written, human language into machine-readable code, recognizing “machines as users” of the law (Crnomarkovic et al. 2022). A second, related problem involves translating the interests of a variety of actors in order to make the first goal possible. Both problems can benefit from an analysis based on the sociology of translation. RaC is a project to solve the problem of digitalizing rules, but to do so, it must convince, enroll, or seduce the necessary actors in government.

RaC begins with the recognition that our world is premised on written rules, while government decision-making increasingly involves digital systems and automation, which creates a problem of translation. People interacting with government systems that produce legal effects (including tax filing and benefits applications) often do so through digital systems that encode different kinds of written policy, such as tax regulations or eligibility criteria for government programs (Mohun and Roberts 2020, 17-18). Laws may be written first and then used as a basis for code, but encoding a written rule necessitates narrowing down one possible legal interpretation among many. Those who must translate written rules into code typically confront numerous ambiguities about how exactly this should be done (Barraclough, Fraser, and Barnes 2021; Merigoux, Alauzen, and Slimani 2023), whether in specifying how an algorithm should calculate some output, or in making design choices, such as the layout and functionality of a digital form.
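The narrowing of interpretation can be shown with a small sketch. The rule below ("a worker is eligible after completing one year of employment") is invented for illustration and does not paraphrase any Canadian regulation; the two Python functions are hypothetical encodings, each a defensible reading of the same sentence.

```python
from datetime import date

# Hypothetical written rule, invented for illustration:
# "A worker is eligible after completing one year of employment."


def eligible_by_days(start: date, on: date) -> bool:
    """Interpretation A: 'one year' means at least 365 elapsed days."""
    return (on - start).days >= 365


def eligible_by_anniversary(start: date, on: date) -> bool:
    """Interpretation B: 'one year' means the calendar anniversary has been reached."""
    try:
        anniversary = start.replace(year=start.year + 1)
    except ValueError:
        # start falls on 29 February; treating 1 March as the anniversary
        # is itself a further interpretive choice
        anniversary = date(start.year + 1, 3, 1)
    return on >= anniversary


start, on = date(2023, 3, 1), date(2024, 2, 29)
print(eligible_by_days(start, on))         # True: 365 days have elapsed
print(eligible_by_anniversary(start, on))  # False: the anniversary is 2024-03-01
```

Both functions are plausible translations of one written sentence, yet they give opposite answers for the same worker on the same day because 2024 is a leap year. Whichever one a developer chooses becomes, in practice, the rule that the system applies.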

At present, many different translations of the same law can coexist. Each tax filing software developer creates their own model of tax law, and individual employers are responsible for making sure that their payroll systems correctly calculate overtime obligations (see CSPS 2021). RaC imagines a world where rule-making is transformed for the “better” (Barraclough, Fraser, and Barnes 2021; Mohun and Roberts 2020), by drafting written rules in a standardized (and potentially authoritative)² coded form, to be used by computer systems and as a basis for further translations. Decision-making systems could then legally account for their decisions, which would be consistent with and “traceable” to coded rules (Crnomarkovic et al. 2022). Explanatory systems based on RaC could inform users about complex regulations and help them navigate the web of relevant, inter-related rules for a specific situation (CSPS 2020a). Policy-makers could benefit from RaC-based simulations to model the effects of rule changes or amendments (CSPS 2021). These potential uses appeal to a range of desires that might help align interested actors with RaC: desires for legality, accountability, predictability, consistency, and efficiency.
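What it might mean for a decision to be “traceable” to coded rules can be sketched as a decision that carries a record of each provision it relied on. The Python below is a hypothetical illustration only: the section labels, the residency condition, and the 600-hour minimum are all invented and do not describe any existing RaC prototype or Canadian benefit.

```python
from dataclasses import dataclass, field


@dataclass
class Decision:
    outcome: bool
    trace: list[str] = field(default_factory=list)  # provisions relied on, in order


def decide_benefit(hours_worked: int, is_resident: bool) -> Decision:
    """Hypothetical two-condition rule; the section labels are invented for illustration."""
    d = Decision(outcome=False)
    if not is_resident:
        d.trace.append("s. 1(a): applicant is not a resident; condition not met")
        return d
    d.trace.append("s. 1(a): residency condition met")
    if hours_worked < 600:
        d.trace.append(f"s. 1(b): {hours_worked} hours is below the 600-hour minimum")
        return d
    d.trace.append(f"s. 1(b): {hours_worked} hours meets the 600-hour minimum")
    d.outcome = True
    return d


result = decide_benefit(hours_worked=550, is_resident=True)
print(result.outcome)  # False
for step in result.trace:
    print(step)
```

A trace of this kind lets an applicant see that the refusal turns on the recorded hours, an input they could dispute. The 600-hour boundary itself, however, is fixed in the code, and the trace offers no route for contesting it.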

The legitimacy of RaC remains an open question and a point of contestation (see Kennedy 2024). If we equate legitimacy with legality (see Diver 2022, chap. 4), then RaC is indeed a way to bolster the legitimacy of coded rules by associating them as closely as possible with written law and “reducing the likelihood of ‘incorrect translation’” (Barraclough, Fraser and Barnes 2021, sec. 111). If we presume the legitimacy of established institutions for lawmaking and adjudication (namely democratic processes and the courts), then the legitimacy of RaC can be evaluated in terms of how it conforms with these normative standards. But how and where is it decided when a translation is “correct,” given the flexibility of legal interpretation? Encoded laws may lack legitimacy if these are not produced through a democratic process equivalent to the written law and subject to comparable judicial review (Barraclough, Fraser, and Barnes 2021; Kennedy 2024).

If we accept that a key requirement of legitimacy is contestability (Diver 2022), and that lack of contestability is one of the ways that the “force of code differs from the force of law” (Hildebrandt 2020, 78), then RaC appears to both enable and restrict possibilities for legal contestation. On the one hand, contestability is presented as a justification for RaC, because systems built using RaC allow individuals to follow or “audit” the chain of legal reasoning for decisions made about them, and appeal as needed (CSPS 2021). However, as Hildebrandt asks, if we assume that the rule has been translated “correctly,” thereby eliminating the problem of “conflicting interpretation, why should people contest things?” (Crnomarkovic et al. 2022). Contestability may be limited to identifying errors in the information inputs used to determine legal obligations, without providing a way to “contest the precise boundaries of these obligations, or the legal justification of a government's authority to impose them” (Barraclough, Fraser, and Barnes 2021, sec. 534).

Democratic challenges are also relevant, with more ambitious visions of RaC (as “better rules,” see Barraclough, Fraser, and Barnes 2021) imagining a transformation in policy development through multidisciplinary teams combining technical, legal, and policy expertise.³ This could provide better alignment between written law, code, and “policy intent,” improving communication across government “silos.” However, there has been less emphasis on democratic engagement involving people from outside government (Barraclough, Fraser, and Barnes 2021). RaC advocates have also imagined a more dynamic and “iterative” approach to rule-making (CSPS 2020b; Mohun and Roberts 2020), but it is unclear what changes this would require of existing democratic processes.

Rather than transforming democracy or the rule of law, RaC practitioners in Canada have experimented with more limited instances of translating existing prescriptive regulations⁴ into coded form, namely eligibility for vacation pay (McNaughton 2020c), maternity benefits (Sotoudeh, Mahmood, and Meloche 2021), and overtime (CSPS 2021). The following section discusses these experimental efforts, before moving on to examine some of the more established projects to encode rules in Canada's public sector.

Governing Desires and Realities: RaC in Canada

As with any attempt to transform existing or “entrenched” ways of doing things, RaC requires champions who can recruit allies, enlisting a growing actor network of expanded competence and articulation to endow their project with greater reality. As McGee (2014, 61, 15) notes, one reading of Latour emphasizes how this occurs through agonism: “combat and competition,” in which allies are recruited (or “made to do something”) through “force and fraud.” Yet McGee (2014, 62) also draws on an “erotic” reading of Latour's (1996) Aramis, or the Love of Technology, to identify “love” as a key associative strategy in the formation of chains of translation. In Latour's (1996, 86) account, the Aramis project's ability to advance beyond the prototype stage and be “up and running” depended on whether it interested and excited key officials, that is, whether they became convinced that the technology “translates their deepest desires.” Sociotechnical projects must often “seduce” to succeed, attracting and linking actors, and translating their interests.

Early interest and excitement started cohering around RaC following the work of New Zealand's Service Innovation Lab (SIL), which began its experimental governance projects in 2017, and was influenced by previous developments in France, where Matti Schneider had been working on digital services and encoding law since 2014. Schneider joined New Zealand's SIL in 2018, acting “partly as a bridge” between developments in France and New Zealand (OECD Observatory for Public Sector Innovation 2020). Pia Andrews, who led SIL between 2017 and 2018, subsequently became an international advocate for RaC, continuing to work for different governments and presenting at public sector conferences, including in Canada (FWD50 2020).

In 2019, a Canadian public servant expressed an interest in trying to “replicate” what had been done with RaC in New Zealand and “bring this exciting work to Canada” (McNaughton 2019). This developed into a cross-department “proof of concept” project (McNaughton 2020a), which had, by April 2020, resulted in a simple RaC prototype (HabitatSeven 2020).⁵ Meanwhile, Pia Andrews's status as a “public sector transformer” (FWD50 2021) was being increasingly recognized, at a time when Canada's government was embarking on a slow and expensive “digital transformation” (Auditor General of Canada 2023a). Andrews was recruited from New South Wales to Canada in 2020 to work on digital services as part of the federal government's largest-ever IT project (Andrews 2020; Auditor General of Canada 2023b), while continuing to champion RaC more broadly (Australian Society for Computers & Law 2021). She was praised for being “a charismatic, experienced and clear vision leader,” resulting in “the attraction of many great talents” to her “team” (Nasr 2021), but RaC was never the main focus of Andrews's work on Canada's massive “Benefits Delivery Modernization” (BDM) project. A three-person team was hired (through collaboration with the nonprofit Code for Canada) to develop an RaC prototype as part of BDM, and a three-week cross-department RaC collaboration occurred in 2021, resulting in another prototype (CSPS 2021). However, by 2022 this work had concluded and Andrews had left the Canadian government. A Canadian “Director of Rules as Code”⁶ was employed to develop technologies for encoding law until late 2023, and some experimental projects remained underway in 2024 (Government of Canada 2024b), but the momentum has slowed significantly since the more optimistic moments of 2021 (CSPS 2021).

Like Aramis (Latour 1996), RaC has at different times been constituted around utopian imaginings of technological futures, and occasionally instantiated or materialized as narrowly focused prototypes. In general, these modest experiments have affirmed the challenges of disambiguating even small portions of written rules in order to formalize them as code (Burdon et al. 2023; Witt et al. 2023). As long as it is limited to big ideas and small experiments in governance, we can expect RaC to remain polymorphous; there is no consensus around what it is and what it should become, and this vagueness can be helpful when seeking to interest “different groups with divergent interests [in] a project that they take to be a common one” (Latour 1996, 48). The success or failure of RaC remains an open question, and one that Latour would encourage us to approach “symmetrically”: neither outcome is preordained, and all projects must have “reality” or “existence…added to them continuously” (Latour 1996, 78) in order to become and remain technological objects. The work of adding reality to RaC remains ongoing, along multiple directions, inside and outside of Canada.

In Canada's federal government, however, failures in digital transformation are more prominent than success stories (see Auditor General of Canada 2023a), and Canada is far from exceptional in this regard (Anthopoulos et al. 2016). Any new project built around desires to transform government will immediately confront “a system of hostile conditions” (Evans and Cheng 2021, 606): durable technologies and processes with realities that must actively be unmade, or painfully transformed against the reinforced desires of interested actors. Love and excitement for digital transformation have been effective in pulling together optimistic networks of (often early-career) public servants, but love is not enough, and excitement can eventually be extinguished by an overwhelming “resistance to change” and “preference for the status quo” (Evans and Cheng 2021, 603).

For established actor networks, it remains “possible to get along without” (Latour 1996, 85) RaC; to continue, as Andrews says, to use “lawyers as modems” (CSPS 2020b; Mohun and Roberts 2020) in translating written law to other forms. However, it is also true, as Andrews says, that rules are already encoded “as the current state…across the entire system,” although this often involves “legislation…mashed up…like a spaghetti sauce with operational policy” (Crnomarkovic et al. 2022). As exemplified by the projects described below, the translation of rules into code is routinely happening as part of how government systems operate, providing ongoing illustrations of the problems of code-driven law.

Algorithmic Legal Effects at Canada's Borders

The story of RaC as told above is that of an exciting idea, which, in some circles and for a period of time, “captured the imagination of policy innovators” (CSPS 2021). In contrast, this section deals with the somewhat more mundane reality of encoded rules in Canada's federal public sector. Rather than small teams of public servants working on experiments to shift the status quo through new technologies and processes, these are stories of massive bureaucracies confronting problems of scale and reaching for technological solutions. The projects discussed below all pertain to governing human mobility and national borders through increased automation and digitalization, rooted in the necessities and pragmatic needs of a specific context.

Whereas much of the work on RaC has been carried out “in the open” as an explicit part of its ethos (Sotoudeh, Mahmood, and Meloche 2021), the projects described in this section have been developed in a much more “closed” and opaque context that is more typical of Canada's federal bureaucracy (Clarke 2019). In the context of immigration decisions, some withholding of information is explicitly justified in order to maintain system “integrity” and to prevent applicants from “gaming” decisions (IRCC 2022, 9). But the use of technologies in algorithmic or “hybrid” decisions (Enarsson, Enqvist, and Naarttijärvi 2022) can also be rendered opaque by foregrounding human agency (Peeters 2020). Humans are held accountable or positioned as having the final say for reasons of legitimacy, but this can obscure the role played by nonhumans in decision-making.

Quarantined by Code

Between 2020 and 2022, Canada's government was deeply engaged in responding to the COVID-19 pandemic, including through a series of border controls, as justified by the Quarantine Act. Section 58 of the Quarantine Act (Government of Canada 2005) states that the Governor in Council 7 may make emergency orders (OICs) that impose conditions on border entry to limit disease spread. One such OIC, issued in late October 2020, stated that people arriving in Canada by air must “provide information by electronic means specified by the Minister of Health” (Government of Canada 2020), and a system called ArriveCAN was identified as the mandatory means for doing so (PHAC 2020). According to a public health official, “the ArriveCAN app was merely a tool to operationalize the OICs…which were the meat of the Canadian response” to the pandemic. As Canada's pandemic response was regularly updated through the issuing of successive OICs, each had to be translated into a software update to ArriveCAN (House of Commons Standing Committee on Government Operations and Estimates 2022). Since updates could take weeks to prepare and test, they had to be coordinated to coincide with the release of planned OICs (CBSA 2023a).
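To make the coupling between successive orders and software releases concrete, the following is a minimal sketch of how encoded rules might be versioned by the date each order takes effect, so that an outcome can be traced back to the order it encodes. It is not ArriveCAN's code, which is not public: the order names, dates, and the single `quarantine_plan_required` field are hypothetical placeholders, not the content of any actual OIC.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class RuleVersion:
    source: str                     # hypothetical citation of the order being encoded
    in_force_from: date             # date the order takes effect
    quarantine_plan_required: bool  # placeholder for the encoded requirement


# Hypothetical, illustrative versions only; not the actual orders or their content.
RULES = [
    RuleVersion("Order A (hypothetical)", date(2021, 1, 1), True),
    RuleVersion("Order B (hypothetical)", date(2021, 7, 1), False),
]


def rule_in_force(arrival: date) -> RuleVersion:
    """Return the most recent rule version in force on the arrival date."""
    applicable = [r for r in RULES if r.in_force_from <= arrival]
    if not applicable:
        raise LookupError(f"no encoded rule in force on {arrival}")
    return max(applicable, key=lambda r: r.in_force_from)


def assess(arrival: date) -> dict:
    rule = rule_in_force(arrival)
    # The outcome carries its legal basis, so it can be traced to a specific order.
    return {"quarantine_plan_required": rule.quarantine_plan_required,
            "legal_basis": rule.source}


print(assess(date(2021, 3, 15)))
# {'quarantine_plan_required': True, 'legal_basis': 'Order A (hypothetical)'}
```

In this sketch, every new order means adding a version and releasing the software before its effective date, which mirrors the scheduling constraint described above; recording the source alongside the outcome is the kind of traceability that RaC advocates call for.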

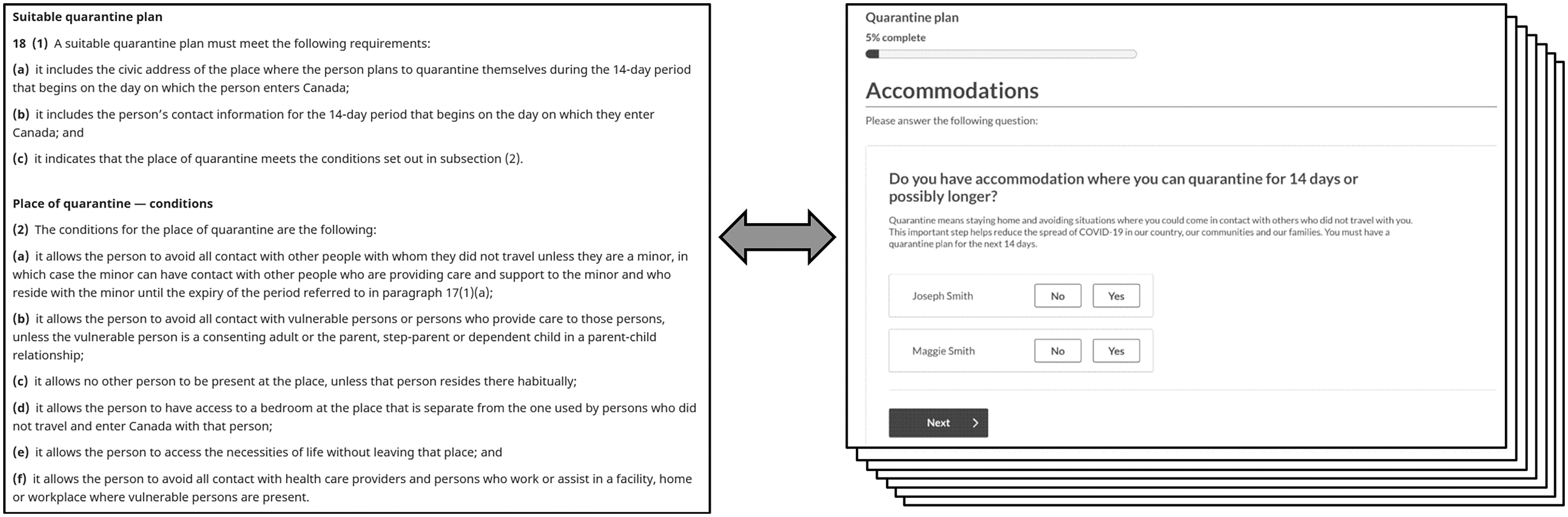

Figure 1: Quarantine plan requirements, as stated in the OIC emergency orders of June 25, 2022 (Government of Canada 2022) and the ArriveCAN app (CBSA 2023b, 53).

Following one such translation of an OIC issued in June 2022, more than 10,000 people entering Canada were notified that they should quarantine or face fines and jail time. This decision was not made by a human border official, as should have been the case, but resulted from computer code erroneously inserted into the ArriveCAN iOS app. While the stated purpose of ArriveCAN was to “help” border officials “determine eligibility” (Malone 2023), the June 2022 “glitch” resulted in the app itself making the “decision” to quarantine, “at odds with [and superseding] decisions made by…screening officers” (Office of the Privacy Commissioner of Canada 2023, sec. 19).

These notifications were sent in error, but they nevertheless communicated to recipients that their status required isolation from others, and that their compliance could be compelled through law. This information was transferred to a public health agency whose representatives would make “compliance verification calls” to some recipients, under the assumption that the quarantine order was valid (Office of the Privacy Commissioner of Canada 2023, sec. 7). It took government agencies until late July 2022 to learn of and address the issue, and in the meantime many of those being commanded to quarantine could not find any meaningful explanation or accountability for this algorithmic decision (Malone 2023).

It is possible to see the “glitch” as an unauthorized exercise of machine agency, or a usurpation of human authority, as the app “autonomously emailed” (Hill 2022) quarantine orders that it had no right to issue, and should not have been able to issue. But as the sociology of translation has told us repeatedly, there is no easy distinction between the agency of humans and nonhumans (Peeters 2020; Sayes 2014). The agency of the various humans who were supposed to make decisions about border entry and quarantine was already heavily mediated by ArriveCAN, which was built to receive and verify documents that informed these decisions. Furthermore, the “glitch” did not emerge spontaneously within computer circuits. It too was the result of mediated human action, specifically an unanticipated result of an app update to expand the system's scope (Malone 2023).

As stated earlier, the relation between legal text and its material enactment is horizontal rather than vertical (McGee 2014, 166). The technology assumes a “delegative legality” (McGee 2014, 169), but rather than simply being an instance of law translated into technology, the technology gains a legal status (and the ability to impose a legal effect) through its association with the law. ArriveCAN's existence did not depend on law: the app was released before it was legally mandated and has continued to operate even after its association with the Quarantine Act was severed in October 2022 (PHAC 2022). However, between 2020 and 2022, as the relevant laws were updated every few weeks, updates to pandemic regulations and computer code were planned in concert (CBSA 2023a), and it was ArriveCAN's “chain of obligations” (McGee 2014) to the Quarantine Act that compelled people to use it and enabled the system to have significant legal effects during the COVID-19 pandemic.

One way of guarding against algorithmic harms in Canadian federal government systems is a mandated process known as Algorithmic Impact Assessment (Reevely 2021b), which under ideal circumstances would have flagged as problematic an automated system that made opaque, high-impact decisions. ArriveCAN's relatively low impact rating under this assessment was justified by presenting it as an assistive technology that would not itself make any decisions, with border officials ultimately deciding how the “tool” would be used (TBS 2021). As discussed below, the legitimation of algorithmic technologies as merely assistive tools for human decision-makers is common in government, and it can deflect scrutiny from the ways that human and algorithmic agency are interrelated. 8

ArriveCAN was developed in the context of pandemic response, and it thereby reflected these dynamic and unusual circumstances of urgent necessity. However, in a number of ways the system also represents something more typical in existing digital services—a series of “one-off” translations between law and code, lacking in traceability, foregrounding human agency to obscure the agency of algorithms, and with the law leaving ample room for governance decisions to be made through design. The following section will more closely attend to these hybrid forms of decision-making at Canada's borders, including how attributions of human agency serve to legitimate automation.

Automated Legal Effects at Immigration, Refugees and Citizenship Canada

The adoption of algorithmic decision-making at Immigration, Refugees and Citizenship Canada (IRCC) has been the leading edge of service automation in Canada's federal government, and the site where the first controversy over government algorithms emerged (Molnar and Gill 2018). The need for efficiency is the main driver of this kind of automation in Canada and internationally (Calo and Citron 2021; Nalbandian 2022). At IRCC, rising volumes of applications to enter Canada result in growing backlogs and processing times (IRCC 2023). Rather than increasing the human workforce to match the need, the delegation of some of this work to algorithms provides a lower-cost, “scalable” solution. However, given that this entails the automation of human decision-making, and that such decisions are made (at least in part) through the application of rules, these rules must somehow be encoded.

In contrast with some of the relatively straightforward eligibility requirements that have been encoded in RaC projects, IRCC's “rules” also include “data-driven… factors that correlate with approvals” (IRCC 2023, 63). This confidential “combination of business rules and rules generated by an advanced analytics algorithm” (43) includes rules reviewed and validated by human experts, as well as ones based on historical patterns in officers’ decisions. Routine testing compares the determinations made by the algorithm with those of a human reviewing the same application, in order to bring the outcomes of the two into as close alignment as possible (see Nalbandian 2022, 4-5).
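The routine testing described above can be illustrated with a minimal sketch. IRCC's actual testing procedure is confidential, so the function below only assumes that each test case pairs an algorithmic determination with a human reviewer's determination for the same application. It computes the share of cases on which the two agree and counts each type of disagreement. The function name `agreement_report` and the labels `"eligible"` and `"human_review"` are hypothetical.

```python
from collections import Counter


def agreement_report(pairs):
    """Compare algorithmic and human determinations on the same applications.

    `pairs` is a list of (algorithm_label, human_label) tuples, where the
    labels are hypothetical strings such as "eligible" or "human_review".
    Returns the overall agreement rate and a Counter of disagreement types.
    """
    if not pairs:
        raise ValueError("no test cases supplied")
    agreements = sum(1 for algo, human in pairs if algo == human)
    disagreements = Counter((algo, human) for algo, human in pairs if algo != human)
    return agreements / len(pairs), disagreements


# Hypothetical test sample: four applications, each reviewed both ways.
sample = [
    ("eligible", "eligible"),
    ("eligible", "human_review"),
    ("human_review", "human_review"),
    ("eligible", "eligible"),
]
rate, mismatches = agreement_report(sample)
print(rate)        # 0.75
print(mismatches)  # Counter({('eligible', 'human_review'): 1})
```

In this sketch the human determination serves as the benchmark that the algorithm is tuned towards, which is one way that historical patterns in officers' decisions can end up embedded in encoded rules.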

IRCC has been using algorithmic systems to sort or “triage” applications in various ways since 2014. In general, this means that applications to enter or stay in Canada are reviewed by an automated system that decides what happens next, based on whether the application meets eligibility requirements, whether it is classified as low or high “risk,” 9 or its assessed “complexity” (IRCC 2023; Standing Committee on Citizenship and Immigration 2022). Applications may be flagged or sorted accordingly, either for expedited processing in less complex and “low risk” situations, or for more in-depth human review. These technologies, which have often been characterized as “artificial intelligence” but are most often internally referred to as different kinds of “analytics,” have expanded from early “pilot” projects dealing with visa applications from specific countries (China, then India; see CIAJ 2021; IRCC 2020) to encompass a growing number of application “lines” (IRCC 2023). Along the way, these programs have received scrutiny from scholars (Molnar and Gill 2018), journalists (Balakrishnan 2021), lawyers (Tao 2024), and even other federal government agencies. Most notably, IRCC's early experiments raised concerns at the Treasury Board Secretariat (TBS), which is responsible for developing policies for all federal departments to follow. As a consequence, TBS developed the Algorithmic Impact Assessment process in 2018 (Reevely 2021b). This context of additional legal and political scrutiny of automated systems led to the development of an internal IRCC Policy Playbook on Automated Support for Decision-Making (2022; see Balakrishnan 2022; CIAJ 2021), which became an important source of guidance for future projects and their legitimation.

First, it is notable that the Playbook characterizes automation in terms of the “support” it can provide for decision-making, which locates the decision somewhere in the human or organizational realm rather than in the automated technology itself. The Playbook begins with the need for IRCC (2022, 5) to “build legitimacy and public trust around its use of automation, analytics and AI” and to “maintain the confidence of Canadians.” One way of doing so is to keep a “human-in-the-loop” of decision-making, as this represents “a form of transparency and accountability that is more familiar to the public than automated processes” (IRCC 2022, 6). In other words, “humans (not computer systems) are accountable for decision-making, even when decisions are carried out by automated systems.” Such accountability means that even if a “final” decision is automated (something the Playbook cautions against), a “human decision-maker must be identified as the officer of record” (IRCC 2022, 6). At earlier stages in the process, while “automated systems can change the time and place of human intervention…automated systems should not displace the central role of human judgment in decision-making.” This human role might encompass “deciding which types of systems to use, which cases to apply them to…which values to encode” or “setting business rules for an automated triage system” (IRCC 2022, 6).

IRCC's processes are explicitly designed to have legal effects through the approval or rejection of applications, and computer code is heavily involved in these processes. However, entanglements between human and machine agency can occur at multiple stages, with shifting relations in these “human/machine constellations” making it difficult to attribute agency to specific actors or objects (Dahlin 2024, 73). Yet, as in Emma Dahlin's (2024) study, we see that the attribution of agency or autonomy is carefully managed by the humans involved, who may emphasize the machine's autonomy in some situations while “denying” it in others, depending on what they deem to be important. Here, legitimacy is upheld by public servants who emphasize the human component while downplaying automation as “just supporting one of the many, many decisions that are part of that” (Reevely 2021a). Public communications from IRCC emphasize that algorithms do “not make or recommend refusals” (IRCC 2023, 49), but spokespeople also minimize the role of automation in approving applications: these technologies merely “help officers to identify applications that are routine and straightforward for faster processing” (Reevely 2021a). Specifically, algorithms may automate eligibility decisions where eligibility can be approved with “low risk,” but eligibility approval is typically followed by an admissibility assessment (using a combination of human and algorithmic review) and a “final decision” attributed to a human (IRCC 2023).
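The division of labour described above can be sketched as a small rule-based triage function. This is a hypothetical illustration, not IRCC's system, whose rules and models are confidential. The fields `meets_eligibility_rules`, `risk`, and `complexity`, the stream names, and the officer identifier are all assumptions. The sketch encodes only the constraints reported above: code may approve eligibility for low-risk, routine applications, never refuses, and a named officer is attached as the decision-maker of record at the end.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Application:
    # Hypothetical fields: IRCC's actual criteria and models are confidential.
    meets_eligibility_rules: bool  # outcome of encoded business rules
    risk: str                      # "low" or "high", assumed to come from an analytics model
    complexity: str                # "routine" or "complex"


@dataclass
class TriageResult:
    stream: str                              # "expedited" or "officer_review"
    eligibility_approved: bool               # code may approve eligibility, never refuse
    officer_of_record: Optional[str] = None  # attached later, when a human decides


def triage(app: Application) -> TriageResult:
    """Route an application to a processing stream without ever refusing it."""
    if app.meets_eligibility_rules and app.risk == "low" and app.complexity == "routine":
        # Eligibility is approved automatically; admissibility and the final
        # decision are handled later, outside this function.
        return TriageResult(stream="expedited", eligibility_approved=True)
    # Every other case, including apparent ineligibility, goes to an officer.
    return TriageResult(stream="officer_review", eligibility_approved=False)


def record_final_decision(result: TriageResult, officer_id: str) -> TriageResult:
    # The decision is attributed to a named officer, however much of the path
    # was already fixed upstream by triage().
    result.officer_of_record = officer_id
    return result


routine = record_final_decision(triage(Application(True, "low", "routine")), "officer-123")
print(routine.stream, routine.officer_of_record)  # expedited officer-123
```

The sketch makes the analytical point visible: the record names only a human, yet the thresholds inside `triage()` (what counts as "low" risk or "routine") are the kind of "business rules" that the Playbook treats as a site of human judgment, and they shape which path a case takes before any officer sees it.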

Critics have raised questions over whether these systems algorithmically perpetuate discrimination and human “bias,” and the extent to which human decisions are influenced by algorithms, both of which can be difficult to evaluate given a “lack of transparency” (Standing Committee on Citizenship and Immigration 2022, 49). These threats to legitimacy were clearly illustrated in 2021 when IRCC disclosed that immigration officials had been using a triaging “tool” called Chinook since 2018 to process multiple applications at a time and draft formulaic rejection letters. The existence of the tool was first revealed in a legal case arguing that an immigration official had not properly read the applicant's file. Media reports questioned whether the use of Chinook was linked to an increase in rejections and reduced accountability for decisions (Balakrishnan 2021; Keung 2021).

As argued in IRCC's Policy Playbook on Automated Support for Decision-Making, the ideal algorithmic system is an assistive one: it provides “automated decision support” (such as a recommendation) to a human, and yet the human is still able to “genuinely exercise independent judgment” (IRCC 2022, 56). Rather than being “led to conclusions,” the human decision-maker is “informed” (IRCC 2022, 9), and therefore remains fundamentally accountable for the decision. However, as long as the “genuine” exercise of agency is attributed to the “human-in-the-loop,” the principle of human accountability can limit overall accountability for these hybrid algorithmic systems (see Crootof, Kaminski, and Nicholson II Price 2023; Tao 2024). Holding a human accountable for a final decision is useful for legitimacy, but it does not mean that the human had a meaningful role in a decision arrived at through a long series of steps involving a sociotechnical system. What is needed is an approach to algorithmic governance that places less weight on the distinction between genuinely human and autonomous nonhuman agency, and that can instead address how computer programs increasingly “determine the script that both users and administrations have to follow… [shaping] the relationship between the state and citizens” (Merigoux, Alauzen, and Slimani 2023, 3).

Conclusion

This analysis has contributed to the extension of the sociology of translation to the study of associations between law and technology, and the tracing of legal effects through algorithmic systems in government. Such systems can widely extend the force of law through its translation into replicable and executable computer code, but every translation of a rule is a transformation that requires choices to be made in ambiguous circumstances, and for algorithmic systems this includes questions of technological design. While the inherent multi-interpretability of law is key to its flexibility, computer code requires exactitude and consistency (Hildebrandt 2020). RaC is one attempt to reconcile this tension, whereas existing algorithmic translations of law and policy lack the transparency and contestability promoted by RaC advocates. Instead, the decisions made using ADM systems are often legitimated through association with a human (final) decision-maker, based on a fiction of genuine, independent human agency. This contrasts with the findings of Cellard's (2022) ethnography of algorithms in the French government, which showed how administrative and bureaucratic procedures can be “redefined” as algorithms to make them less visible and to limit human responsibility. In the systems governing Canada's borders, we see the opposite: the role of an algorithm is minimized by situating it within a set of procedures where humans exercise control and remain accountable.

All of this is particularly relevant at a time when governmental outcomes are increasingly shaped by algorithms rather than written rules, or when written rules must be translated into algorithms. Latour (2010) avoided the well-worn path of positioning legitimacy as a basis of legal rule, focusing instead on the institutional processes and material associations that make up the law. However, the shift to digitalization and code-driven law calls the legitimacy of algorithmic or hybrid legal effects into question, as actors work to legitimate and delegitimate these controversial new technologies of governance. One way of doing so is through a strategic attribution of human agency, and so this study contributes to analyses that highlight the importance of such attributions (Cellard 2022; Dahlin 2024), bringing questions of legitimacy to the fore. The idea of nonhuman agency has become quite commonplace through widespread discussions of artificial intelligence, but for hybrid systems involving complex constellations of people and machines, highlighting the human component remains a way to affirm legitimacy.

Whether a decision is ultimately a human or algorithmic one is the wrong question to ask; what is needed is a better understanding of the associations that contribute to legal effects. Assessing legitimacy requires that these associations be made visible, but democratic legitimacy also requires meaningful opportunities for legal interpretation and contestation, and public involvement in the creation of rules (whether written or coded). RaC advocates make a persuasive case for traceability as a form of accountability, where citizens are entitled to know the legal basis for decisions made about their lives, providing the means to trace these to relevant sources of authority. However, encoding laws also leads to closure and rigidity (Hildebrandt 2020), potentially limiting the scope of contestability.

Legitimacy and trust should not be equated with acceptance and agreement, and the challenge for the future is to create digital services that are inherently contestable or open to agonism in a democratic society (see Crawford 2016; Mulligan and Bamberger 2019; Walters 2022). Ideally, this scope of contestation needs to be greater than the question of whether a given decision is “legal,” and extend into greater democratization of rule-making (Viljoen 2021). The shift to code-driven law is not inevitable in its contours or eventual outcome, and many opportunities remain to contest or reconfigure these transformations in governance.

Footnotes

Acknowledgments

This article received valuable research assistance from Emily Kwong and Donya Hatami. The study of ArriveCAN benefited greatly from Matt Malone's efforts to obtain access to government documents.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Funding for this research was provided by the Social Sciences and Humanities Research Council of Canada (Grant/Award Number: 430-2021-00810).