Abstract

To understand how robots, such as self-driving cars, drones, or rovers, generate spaces of interaction, it is important to move away from the idea that they simply represent the world through their sensors and then decide upon an appropriate action to reach a predefined goal. The question is how they generate their own worlds in the first place. This article reassesses the concept of virtuality as an analytical tool to describe the world-making capacities of autonomous, environmentally adaptive machines. Such robots employ the probabilities of virtual world models, generated by algorithmically filtering sensor data, as statistical specifications. This sensor-algorithmic virtuality enables them to decide upon actions to be actualized in the real world by playing through a multiplicity of virtual options. They don’t have access to an outside view but rather operate based on a multiplicity of virtual, possible, and more or less probable worlds. In a case study on the Mars rover Perseverance, this article describes in detail the technical procedures and the underlying epistemological challenges of machinic virtuality and shows that this virtuality—as a multiplicity of virtual worlds—rests upon the algorithmic filtering of sensor data.

On February 18, 2021, the Perseverance rover began exploring the surface of Mars (Williford et al. 2018). With its ability to dig holes, pulverize rocks, perform spectral analysis, and take aerial photos with its supplemental drone (Covasan et al. 2020; Sasaki et al. 2020), Perseverance is there to explore—in our stead—an environment that is inaccessible to us. To fulfill its mission, Perseverance needs a certain degree of autonomy that consists of localizing itself in its environment and creating a preliminary map of its surroundings. This problem situates the place of Perseverance in something that, freely adopting a phrase from Herbert Simon (1969), we might call the sciences of the artificial. Perseverance stands in as a proxy for humans, adapting itself to an environment and tasks which no human could realize. No technology, not even on Mars, is autonomous in the sense of total independence from human manufacture and maintenance. Still, the rover’s embeddedness on Mars and its technical capacity for sensor-based navigation allow us to better understand robotic ways of world-making and to derive the challenges that autonomous technologies on Earth will bring with them.

In this environment, which we cannot currently inhabit, the rover must transform its surroundings into a map of possibilities, lest that treacherous environment bring about its untimely demise. It goes to work in a real space, but all of its work in that space depends on making it more predictable through practices of projection, probability, and modeling. To explore Mars, the rover is constantly interacting with engineers and researchers on Earth, and its embeddedness in human-machine-configurations has been described in detail in recent works in Science and Technology Studies (Messeri 2017; Mirmalek 2008; Vertesi 2015). But is this approach sufficient for understanding the robot’s spatial agency and its adaptation to an unknown environment? As I will show, in its AutoNavigation-mode, the robot is able to operate solely on the basis of a multiplicity of virtual models. To understand how it thereby creates its own world by translating incoming sensor data into virtual world models, I suggest tentatively taking it out of the networks of human labor into which it is embedded, only to return it to these networks afterward. Perseverance’s autonomous mode is only a small component of its overall embeddedness within transactional networks, but as I will explain, virtual modeling is crucial and will become even more relevant with recent developments of, for example, autonomous cars or drones. In this regard, social accounts of technology would benefit from an awareness of sensor-based machinic action and model-based processes of technological world-making.

In the following, I will take the problem of spatial localization and mapping as a starting point because this problem directly leads to the question of how the robot relates to the world on its own terms. Localization and mapping are fundamental challenges in robotics: a robot does not know where it is, what is around it, or what a world is. It can be programmed and supported by humans, but no information given beforehand is sufficient to deal with a fundamentally uncertain environment. To navigate and to interact with objects and other entities in its surroundings, the robot needs to create probabilistic models of its environment. These models are not representations of the outside world per se. Rather, by analyzing the underlying procedures of algorithmically filtering incoming sensor data, I argue that these models are instantiations of a specific kind of machinic virtuality. This virtuality is not separate from the world, but a technical translation insofar as it consists of sensor-based data that does not address human senses, of probabilities that are given to each element and consequently of a multiplicity of possible worlds.

To understand the relation of Perseverance to its Martian surroundings and, more generally, of sensor-based robots to the world, I refer to a concept that is supposedly passé today—virtuality—and has only recently undergone a theoretical reassessment (e.g., Nakamura 2020; Messeri 2021; Rieger, Schäfer, and Tuschling 2021). Three decades ago, “virtual realities” were conceived as otherworldly, computer-generated spaces and forms of interaction with artificial avatars. The virtual was generally considered as a derivative of or a welcome addition to the real with respective consequences for those who use it. It was conceived as something disembodied, as a new dimension of experience for users, or regarded as an equivalent of the digital (Rheingold 1991; Baudrillard 1994; Turkle 1994; Weibel 1999).

Today, accounts of the virtual are less commonly invoked for describing the rise of ubiquitous and smart technologies, the politics of platforms, or the algorithmic penetration of everyday life. Looking back from the present, the vocabulary of the 1990s has turned out to be overly narrow and seems inadequate today with its focus on the question of reality and its discontents. The imaginary of the virtual, it seems, didn’t stand the test of time. But if we follow recent suggestions to disencumber virtuality of the former euphoria and fears of the 1990s and their conceptual constraints (Messeri 2022; Kasprowicz and Rieger 2019), the concept develops a new descriptive potential. In the following, I refer to Charles Sanders Peirce’s (1920) almost forgotten concept of the virtual to describe the world-making capacities of such machines and the sociopolitical impacts of their use. My text attempts to reconsider this term (in contrast to “virtual reality” as a specific application of hardware and software) to differentiate the modalities, which is to say the different registers of relating to the world, needed by sensor-based robots to navigate and map their surroundings.

Machinic virtuality is employed, to varying degrees, in technologies that are fundamentally changing our lives, today or in the near future, in terms of street mobility, parcel delivery, lawn mowing, senior care, logistics, industrial production, warfare, and last but not least, in explorations of volcanoes, the deep ocean, the polar regions, and outer space—perhaps not always in the way imagined by their developers but certainly in a way that raises important questions about the co-creation of common worlds between humans and machines. To use the term virtuality for the modality of these technologies is not simply a conceptual decision: what I call “sensor-algorithmic virtuality” is not only used in robotics but is fundamental for technologies that translate real-world environments into virtual environments, for example, for three-dimensional scanning, virtual reality (VR)-scenarios, or augmented reality. In fact, a smartphone with an augmented reality (AR)-application utilizes a variation of the sensor-algorithmic procedures described below to position a virtual object on the screen. To understand how such technologies generate spaces of interaction and achieve spatial agency, it is important to move away from the idea that they simply represent the world through their sensors and then decide upon an appropriate action to reach a predefined goal. The question is how they generate their own worlds in the first place, by technically translating sensor data into world models.

The virtuality that I am interested in is therefore characteristic of many different technologies that operate at the intersection of the machine and the outside world, precisely where sensor data are processed. The case study presented here comprises a set of technical processes that generate a multiplicity of virtual worlds and decide to turn one option from this multiplicity into a concrete action. Machinic virtuality, therefore, turns out to be a modality in which the machine itself interacts with a world it has created.

Mars rovers are particularly apt for exploring the bare bones of machinic virtuality because they are well documented and, at least on a general level, easy to understand in terms of the fundamental challenges of robotics described below. Their place of deployment strips away many of the elements (namely, living entities and moving objects) that make terrestrial navigation indefinitely complex. Their technical capacities are reduced to such a degree that only the most basic of solutions are viable. But while the rover is technically rather simple, its relation to the environment in which it operates is not.

In the last two decades, robots that are able to time-critically adapt to constantly changing conditions of their environment and to interact with human and nonhuman actors within it have reached new stages of autonomy. Autonomy here does not refer to total independence, but to what philosopher Christoph Hubig (2015) has called operational autonomy (in contrast to moral and strategic autonomy): machines with operational autonomy are not deterministic, because they are free to select their means in accordance with given criteria, but they are not able to select their own purposes or goals. However, they are still determinate in that the criteria and goals of the selected means have been determined in advance. With strategic autonomy, the system undertakes not only the selection of means but also the “selection of purposes among the specified goals, according to the chances and risks of their realization” (Hubig 2015, 131f.). Moral autonomy applies to the setting of overarching principles and intentions. With reference to Hubig, we can consider the Mars rover as operationally, but neither strategically nor morally, autonomous.

Machines with different degrees of operational autonomy are in the process of transforming the situatedness of technology in the world (Thrift 2004). In this regard, virtuality amounts to a basic element of an advanced form of embodied and situated cognition that cannot be reduced to artificial intelligence or the processing of symbols and mental representations. Virtuality, as I will show, is embedded within the time-critical operations (Stine and Volmar 2021) that adapt the robot to its environment. In this regard, virtuality does not consist of a representational relation to the world but is embedded within it because it is a result of a chain of translations that transform the world into a model. In this context, virtuality serves as a basis for processes of automated decision-making, or microdecisions (Sprenger 2015), between different options for possible actions that are made automatically according to probabilities, generated in volumes that cannot be processed by humans, in an extremely short time, in order to select the action to be implemented from a variety of options. The challenge for the robot’s autonomy, as I will show, lies precisely in these microdecisions for one of the virtual worlds available to it.

In this context, virtuality does not refer to a mode of existence that stands in opposition to reality. Rather, I am interested in the operationalization of virtuality—its integration into robotic interaction with the world, its “spatial media/tion” (Leszczynski 2015; Lynch and Del Casino 2020). As a modality of relating to the world by algorithmically filtering sensor data, virtuality is part of what Katherine Hayles (2017) called “nonconscious cognition.” Describing these robots simply as “intelligent” or “smart” machines with the ability to adapt to new situations is not enough to understand their ways of world-making, and this reductionism contributes to a depoliticization of these machines. The perspective offered here troubles this simplified understanding of intelligence as smartness by epistemologically reengineering the different steps of those operations that constitute the spaces in which sensor-based robots can interact with human and nonhuman actors. To understand machinic virtuality, it is therefore necessary to scrutinize these technologies in detail with attention to their conceptual boundaries.

This approach implies that the empirical material of this text consists of technical literature, engineering reports, diagrams, and snippets of code. While the ethnographic studies of Vertesi (2015), Messeri (2017), and Mirmalek (2008) have provided invaluable insights into the human-machine-network of Mars exploration, my text takes a different approach by focusing on the robot’s mechanisms for translating the world into a virtual model. For these questions, technical sources, despite all their shortcomings for other questions, provide a good point of entry. In this regard, my text also brings up methodological questions that are beyond the scope of this article, insofar as it challenges some of the well-established paths of actor-network theory by focusing on machinic agency. I am well aware that the scope of my article is rather narrow: I will not be able to fully account for the complexities of driving on Mars, something that has been done brilliantly in recent research. Instead, I move the focus and take Perseverance as a very specific specimen among a wider development of autonomous, sensor-based technologies.

To describe the operationalization of virtuality, it is important to understand how different modalities relate to each other. As I show below with reference to Charles Sanders Peirce, the as-if of virtuality is not the no-more or not-yet of the real. This conceptual approach will prove itself in describing some of the epistemological challenges of robotics (the second section), which motivated the technical solutions developed in the last decades. In the third section, I present the case study of Perseverance. This example sketches how sensor-algorithmic virtuality enables such technologies to generate options for (inter-)action and how automated microdecisions provide the means to realize one world out of a multiplicity of calculated possibilities of virtual worlds (the fourth section). In the conclusion, I will come back to the restrictions and methodological challenges of my approach.

Machinic Virtuality and Operational Autonomy

How does a virtual object relate to a real object? This question is at the core of a definition by Charles Sanders Peirce dating back to 1920. Peirce’s short dictionary entry provides a middle ground between the understanding of virtuality as a modality that was introduced by the medieval philosopher Duns Scotus, and Gilles Deleuze’s famous conception of the virtual (and recent iterations, e.g., by Brian Massumi). By going back to Peirce, I try to circumvent some of the pitfalls this term has fallen into in recent decades. Peirce’s concise definition offers a different, perhaps even more versatile, approach to virtuality that could also be useful for endeavors on virtualization in other areas, such as virtual machines in computers, cloud computing, platforms, or augmented reality.

Peirce understands virtuality, derived from the medieval virtus for power, from vir for man or manliness (Bas 2015), as a modality in which “a virtual X (where X is a common noun) is something, not an X, which has the efficiency (virtus) of an X” (Peirce 1920, 763). A virtual object shares efficiency with a nonvirtual object without being, as Peirce calls it, of the same nature as the object. A virtual table is an object that shares specific attributes with a real table, for example, that you can sit at it or put something on it, without being of the nature of a table. In this sense, the virtual table is actual without being real, such as in virtual reality applications. Its virtual modality does not prevent the table from becoming efficient, because it is actual but not real. The words real and reality are used here not to refer to the world as it is or to a single standard of the given, but to one of several modalities of existence.

Compared to other concepts of the virtual or virtuality, Peirce’s definition has the advantage of not being derived from the phenomenal appearance of the virtual object (and therefore not being susceptible to the shortcomings of “virtual reality”). For Peirce, the virtual stands in for something else, but is as efficient as that for which it stands. Peirce’s concept of this modality is useful to describe a mode of machinic operations that use virtuality, that is, virtual worlds that share specific efficiencies with the real world without being the real world. Rather, both real and virtual are considered as modalities of the world. In its efficiency, virtuality is embedded in different degrees and specifications within the operations of machines that use sensors and filter algorithms to create virtual models of their environment. The resulting sensor-algorithmic virtuality, in the understanding presented here, enables the machine to relate to the world and to bring forth spaces of interaction within it. To study virtuality as a machinic operation therefore means identifying its efficiency, its virtus, the attributes that a virtual world model shares with the real world without being of its nature. These attributes should not be considered as isomorphic representations but as effects of technical processes of translation between the real and the virtual.

The modality of these world models invites further consideration. They are challenging because they are virtual and potential at the same time. As Peirce indicates, virtual should not be mistaken for potential or possible. What is virtual is not a potential awaiting actualization: “For the potential X is of the nature of X, but without its actual efficiency” (Peirce 1920, 763). A potential table is a table at which you cannot sit, but that is of the nature of a table (but only in reality, not in actuality). The potential table is in a different state of existence, because it is real but not actual. This definition does not contradict the observation that virtual worlds can also be potential worlds but makes it necessary to carefully distinguish their modalities and analyze the means of their technical production. A virtual model stands in for the real world and thus enables the planning of paths through it because it shares the necessary efficiencies with the real world, for example, traversability. A possible model of the world references a world that has not yet come into being but has the potential of becoming a world. The virtual model of the world can be possible at the same time because technically it consists of nothing else than probabilities. As I will explain in detail, for a robot, these probabilities are effects of an algorithmic comparison of sensor data collected at different points in time, resulting not in a single representation of the world but a chain of translations that results in a multiplicity of worlds that could be realized depending on their probabilities. These probabilities are then used to determine possible actions.

Fundamental Challenges of Robotic Navigation: The Bayesian Statistics of Comparing Worlds

In order to navigate in a complex environment, autonomous robots must continuously register the states—shape, position, and movement—of surrounding objects and locate themselves in relation to them. They must constantly recalculate their own location and possible reactions to their environment. They may enact preprogrammed instructions, but they also have to technically create a relation to a world of uncertainty. To scrutinize the different modalities with which they translate the world into a model, it is necessary to understand how they “compose” their environment.

The environment can be mapped only by moving and collecting data with a robot’s available sensors, but these sensors are unreliable and inconsistent. The technical challenge consists of a safe approach to the uncertainty of the environment and to the unpredictability of the behavior of other actors. These problems of environmental uncertainty were discussed in robotics around 1990 under the name “simultaneous localization and mapping” (SLAM; Durrant-Whyte 1987; Smith and Cheeseman 1986). Various algorithmic techniques were developed to solve this problem (e.g., Kalman filter, FastSLAM, particle filter localization). Generally speaking, these solutions attempt to merge data collected from available sensors at different points in time into a temporary model of relevant aspects of the environment. As the robot moves and measures its environment both at the point of origin and during movement, it acquires different sets of data about the environment depending on its positions. These datasets are compared by probabilistic techniques that broadly fall under the rubric of Bayesian filters, named after the eighteenth-century mathematician Thomas Bayes.

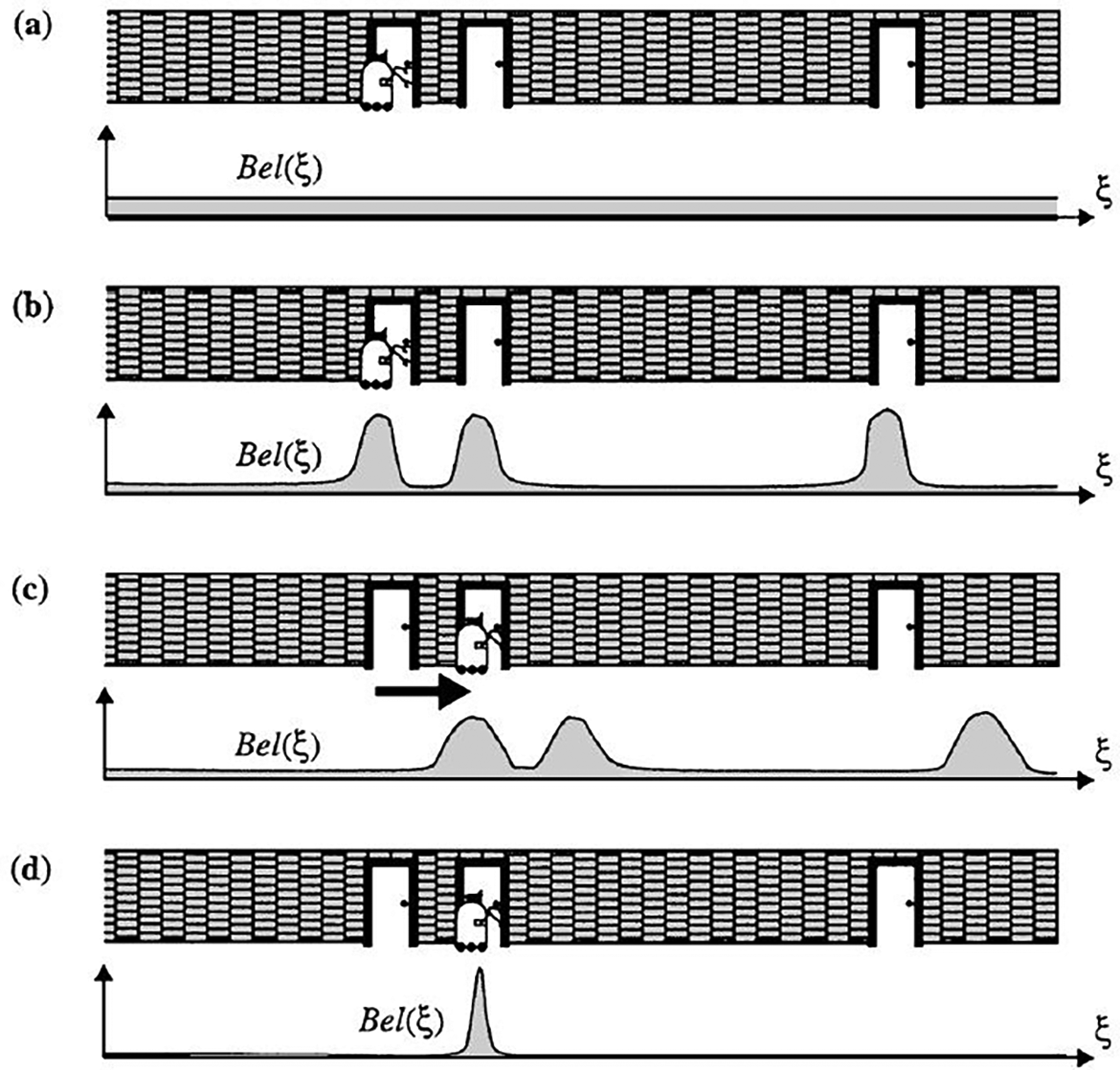

The significance of the Bayesian approach, further formalized by Pierre-Simon Laplace in the late eighteenth century and now seen in applications ranging from spam filters to motion tracking to face recognition, lies in its rejection of exact knowledge in favor of increasingly precise probabilities (Rieder 2017; Joque 2022). Bayesian methods start with an assumption, the belief, that is then refined through inferences drawn from new information (incoming sensor data). The word “belief” denotes that the autonomous system is deemed neither to achieve objective knowledge nor to accurately determine its situation (Thrun, Burgard, and Fox 2006, 3). A Bayesian filter compares the sensor data of the environment at time t − 1 with the sensor data measured from another position at time t (Figure 1). To compare in this context means to filter by highlighting a concurrence as significant whenever something exists in both datasets at the same position. Referring to this superposition of both measurements, the robot calculates the probability of its own location and of the locations of other objects in the environment. If the measurements add up, the probability that something is at this location becomes operational. Since the present t can always turn out to be different from the future calculated from the past t − 1, this process results in probability values for the new position of the robot as well as for the shape, position, and movement of surrounding objects. In short, to locate itself, the robot needs a map, and to create a map, it must locate itself (Burgard et al. 2016, 1134). The tasks can only be solved simultaneously: localization and mapping are inseparable (see Kanderske and Thielmann 2019).

Probabilistic localization. Source: From Thrun (1998, 48), used with permission from Springer Nature.

With Bayesian methods, a robot adjusts its hypotheses about the world based on continuously collected data. Bayesian filters are used in this sense as statistical filters to fuse discrete-time measurement data. This approach results in a provisional virtual model of the environment (or certain aspects of it). This model can be operationalized, for example, by calculating reactions to the environment or by playing through options for future action. In this sense, the model is virtual but should not be mistaken for a representation: “The world modeling system serves as a virtual demonstrative model of the environment for the whole autonomous system” (Beyerer et al. 2012, 138).
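The alternation of movement and measurement described above can be illustrated with a minimal sketch of a discrete Bayesian filter. It is not taken from the rover's software: the five-cell cyclic corridor, the two surface types ("rock" and "sand"), and the values of `p_correct` and `p_hit` are assumptions chosen purely for illustration.

```python
def normalize(belief):
    """Rescale the weights so that they sum to 1 (a probability distribution)."""
    total = sum(belief)
    return [p / total for p in belief]


def predict(belief, step, p_correct=0.8):
    """Motion step: shift the belief by `step` cells on a cyclic corridor.

    With probability 1 - p_correct the robot is assumed not to have moved
    (for example because of wheel slip), so movement blurs the belief.
    """
    n = len(belief)
    new_belief = [0.0] * n
    for i, p in enumerate(belief):
        new_belief[(i + step) % n] += p_correct * p
        new_belief[i] += (1.0 - p_correct) * p
    return new_belief


def update(belief, world, observation, p_hit=0.9):
    """Measurement step (Bayes' rule): weight each cell by how well the
    observation matches what the map expects there, then normalize."""
    weighted = [
        p * (p_hit if cell == observation else 1.0 - p_hit)
        for p, cell in zip(belief, world)
    ]
    return normalize(weighted)


# Hypothetical map of the corridor and an initial belief of total ignorance.
world = ["rock", "sand", "sand", "rock", "sand"]
belief = [1.0 / len(world)] * len(world)

belief = update(belief, world, "rock")   # sensor data at time t - 1
belief = predict(belief, step=1)         # the robot drives one cell
belief = update(belief, world, "sand")   # sensor data at time t

for cell, probability in enumerate(belief):
    print(f"cell {cell}: {probability:.3f}")
```

In this toy setting, two cells (1 and 4) end up equally probable after both measurements. The filter does not return a single position but a distribution over several candidate positions, which is one way to picture the multiplicity of more or less probable worlds discussed here.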

In this context, virtuality, in all its synchronous multiplicity, probabilistic inferences, and digital constitution, is not a space to turn away from reality. The term virtual in this context indicates not only the status of as-if attributed to each of these world models. Instead, virtuality is the operational framework for those modules of the robot that determine its relation to the world. An autonomous, mobile and adaptive system such as Perseverance has nothing at its disposal besides virtuality for systematically testing out and playing through possible actions. Instead of being indexical representations of the environment, its models of the world are based on mathematical probabilities, making them virtual in the sense of sharing an efficiency with that for which they stand without being of its nature. Considering the robotic exploration of Mars allows us to get a glimpse at what these worlds might look like.

Mars Exploration

The project of exploring the Martian surface poses many important questions about exobiology and extraterrestrial life but also about colonialist impulses (Dittmer 2007) and the phantasms of interplanetary expeditions in light of the Anthropocene. Here, I want to focus on a different set of questions: how does the rover orient itself in this world? What are the conditions for its autonomy, considering its great distance from Earth, varying between 50 and 400 million kilometers? What are the uses of virtuality on Mars?

Constraints of Autonomy on Mars

The development of Mars landers and rovers can be traced back to the Viking probes of the 1970s, with different technologies tested out with each mission (Muirhead 2004). Perseverance (Figure 2) and, to a lesser degree, its predecessor Curiosity were the first rovers to employ a new mode of navigation called Autonav, in which the rover is able to autonomously navigate the surface of Mars for a given period of time without input from Earth. The need to develop autonomous modes of navigation is the result of three fundamental restrictions that determine the use of technology on Mars: first, commands to the rover and data exchanges with Earth are subject to an extraordinary transmission problem: depending on current distance, signals need between four and twenty-two minutes to travel from one planet to the other. The available transmission channel is also used for many other missions. Hence, low bandwidth, high latency, and limited time frames for transmission go hand in hand. This means it is not possible to communicate with a Mars rover in real time, nor on a continual basis (Kerruish 2019).

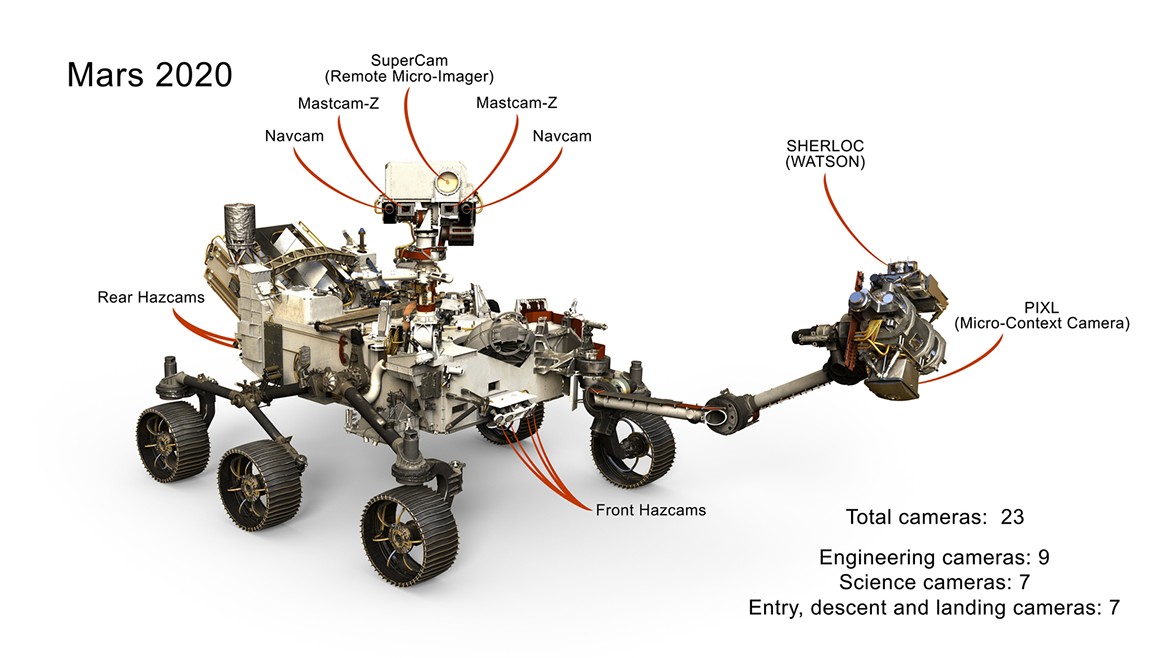

Sensors of the Mars rover Perseverance. https://mars.nasa.gov//imgs/2017/10/mars_2020_cameras_labeled_web-full2.jpg. Source: NASA/JPL-Caltech, no copyright.

Second, a Mars rover must be extremely risk averse: every action must account for the worst-case scenario. Perseverance’s top speed is 300 meters per hour. Every remote-controlled command involves detailed discussion on Earth, because no repairs are possible and danger is always lurking in the desert-like environment of Mars (Vertesi 2015). Spirit, for example, was trapped by soft sand in 2010, and Opportunity was silenced by a dust storm in 2018. Every action has to be weighed against inevitable wear and tear, and all components—especially the wheels—have a finite life expectancy.

Third, the Mars rover is equipped with comparatively trivial technology. The onboard computer is comparable to a late-1990s Intel Pentium processor but resistant to radiation. More powerful navigation sensors, such as laser, sonar, and lidar, were rejected for being too energy-hungry and failure-prone. Instead, the rover uses optical cameras along with accelerometers and motion sensors (Figure 2). The maps prepared before the trip, based on satellite imagery, are not detailed enough for onsite navigation (Sasaki et al. 2020). All this means that rovers have a very limited range: until spring 2023, Perseverance had only traveled sixteen kilometers.

These three constraints set the conditions for autonomous navigation on Mars. Because of these basic parameters, the rover is a machine that is both conservative and speculative. From a technical point of view, the challenge is to combine the greatest possible autonomy with the least possible risk. Despite its distance from Earth, its every movement is closely monitored. Each action is discussed in detail; with Curiosity, this meant 60,000 actions in two years. For all its great distance, the rover is not an isolated robot: as Vertesi (2015), Messeri (2017), and Mirmalek (2008) have shown in their respective field studies at NASA, Mars rovers can be seen as just the implementing arm of a broader interplanetary network of actors, one that includes the Deep Space Network and the Mars Reconnaissance Orbiter, along with 16 drivers and 400 scientists at the Jet Propulsion Laboratory in Pasadena who undertake the daily analysis of the collected data—and all we have from Mars is digital data.

Navigating the Martian Surface

In order to move through an unknown Martian environment, Perseverance and its team on Earth must rely on a suite of sensors to measure its surroundings and create models from the collected data so that the rover can calculate potential routes through the terrain. It cannot just go “wherever.” Each move is played through in advance, whether on location or back on Earth. Playing through is meant quite literally here: in order for the rover to move as safely as possible, different options for possible action must be compared and weighed against each other, whether algorithmically by the rover or back on Earth with the help of discussions, simulations, and a physical “test bed” in a sandbox. Every action of the rover is preceded by a number of as-ifs.
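What "playing through" options can mean algorithmically may be sketched as follows. The example is a deliberately simplified assumption, not a description of the rover's planner: the grid values, the three candidate paths, and the treatment of cells as statistically independent are all hypothetical.

```python
from math import prod

# Hypothetical model of the terrain: each value is the estimated probability
# that the cell at (row, column) can be traversed safely.
traversable = [
    [0.99, 0.95, 0.60],
    [0.98, 0.70, 0.97],
    [0.99, 0.98, 0.99],
]

# Three hypothetical routes from the top-left to the bottom-right cell.
candidate_paths = {
    "direct": [(0, 0), (1, 1), (2, 2)],
    "detour_east": [(0, 0), (0, 1), (0, 2), (1, 2), (2, 2)],
    "detour_south": [(0, 0), (1, 0), (2, 0), (2, 1), (2, 2)],
}


def path_safety(path):
    """Probability that every cell on the path is traversable,
    assuming (simplistically) that the cells are independent."""
    return prod(traversable[row][col] for row, col in path)


# Play through every option "as if" it were driven, then pick the safest one.
safety = {name: path_safety(path) for name, path in candidate_paths.items()}
best = max(safety, key=safety.get)

for name, value in safety.items():
    print(f"{name}: {value:.3f}")
print("selected:", best)
```

Under these assumed numbers the longer southern detour is selected over the shorter direct route, because the direct route crosses a cell with a low probability of being traversable. No option is ever driven before it has been evaluated within the model.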

In order to move through an unknown environment, Perseverance (like any robot) has to register the objects around it and locate itself in relation to them using a variation of SLAM. It has no access to an external view of the situation and never knows where it is, but must instead continuously recalculate its current location and its potential responses to its surroundings. While earthbound systems can use powerful sensor technologies such as sonar, laser, radar, and lidar to generate high-resolution, near-instantaneous three-dimensional models of the environment, Perseverance only has optical cameras. All these cameras are stereoscopic, meaning they have two lenses, allowing for a certain amount of three-dimensionality through the comparison of the two images, which in turn allows for a more effective comparison between pairs of images (Mai et al. 2020). Perseverance is the first rover carrying a separate processor for handling images, meaning that calculations can continue even while in motion, extending the range of autonomous navigation.
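How two lenses yield "a certain amount of three-dimensionality" can be made concrete with the standard pinhole relation for a rectified stereo pair, in which depth equals focal length times baseline divided by disparity. The sketch below uses hypothetical camera parameters (a focal length of 1000 pixels and a baseline of 0.4 meters); they are not Perseverance's actual values.

```python
def depth_from_disparity(focal_length_px, baseline_m, disparity_px):
    """Distance to a point seen by both lenses of a rectified stereo pair.

    disparity_px is the horizontal shift, in pixels, of the same feature
    between the left and the right image.
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive for a finite depth")
    return focal_length_px * baseline_m / disparity_px


# Hypothetical camera parameters, chosen only for illustration.
FOCAL_LENGTH_PX = 1000.0
BASELINE_M = 0.4

near = depth_from_disparity(FOCAL_LENGTH_PX, BASELINE_M, 200.0)
far = depth_from_disparity(FOCAL_LENGTH_PX, BASELINE_M, 20.0)

# Effect of a one-pixel matching error at both distances.
near_error = depth_from_disparity(FOCAL_LENGTH_PX, BASELINE_M, 199.0) - near
far_error = depth_from_disparity(FOCAL_LENGTH_PX, BASELINE_M, 19.0) - far

print(f"near: {near:.2f} m, one-pixel error: {near_error:.3f} m")
print(f"far: {far:.2f} m, one-pixel error: {far_error:.3f} m")
```

The same one-pixel error matters far more for distant points than for nearby ones, so depth obtained this way is better understood as an estimate with growing uncertainty than as a measurement of the scene.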

Using a specially developed software tool called the Rover Sequencing and Visualization Program (RSVP), all sensor data are merged on Earth to create three-dimensional visualizations of the rover in its environment so that potential rover paths and positions can be simulated before the corresponding text-based commands are generated (Wright et al. 2006). According to participating engineer Guy Pyrzak, “You don’t just see a picture and guess how far away things are but can actually spin it around and look at it as a VR image on your computer” (Scannell 2020). Every command is first modeled in this virtual environment—and if necessary, can also be tried out with test drives in the real-world sandbox—before it is transmitted to the rover (Toupet et al. 2020).

In executing each day’s set of commands, Perseverance has three navigation modes for traversing unknown environments. To understand Perseverance’s use of virtuality, it is important to describe how these modes of navigation complement each other depending on the situation. With blind navigation, earthbound technicians set a goal in RSVP and the rover precisely follows the specified path: for example, go straight for 2.2 meters, rotate thirty degrees, then go straight for seventy centimeters (the rover is not designed for curved trajectories and so rotates in place instead, thereby avoiding uneven wear on the tires). The rover locates itself through dead reckoning and odometry, measuring axle rotations that reflect the direction of movement and the speed toward the target. The problem with this method is that instabilities in the terrain may cause the wheels to slip, thus altering the trajectory during driving. This means that the rover can only estimate its final location and whether its goal has been reached.
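The limits of blind navigation can be illustrated with a minimal sketch of dead reckoning. It integrates the example commands from the text into an estimated pose and compares that estimate with a second run in which the wheels slip. The function name `dead_reckon`, the command format, and the slip fraction of 10 percent are assumptions made for illustration; they are not taken from the rover's flight software.

```python
import math

def dead_reckon(commands, slip=0.0):
    """Integrate drive/turn commands into a pose (x, y, heading in degrees).

    `slip` is a hypothetical fraction of each commanded distance that is
    lost because the wheels turn without moving the rover forward.
    """
    x, y, heading = 0.0, 0.0, 0.0
    for kind, value in commands:
        if kind == "turn":
            # Turn in place: only the heading changes.
            heading = (heading + value) % 360.0
        elif kind == "drive":
            # Straight segment along the current heading.
            distance = value * (1.0 - slip)
            x += distance * math.cos(math.radians(heading))
            y += distance * math.sin(math.radians(heading))
        else:
            raise ValueError(f"unknown command: {kind}")
    return x, y, heading

# The example from the text: 2.2 m straight, rotate 30 degrees, 0.7 m straight.
commands = [("drive", 2.2), ("turn", 30.0), ("drive", 0.7)]

believed = dead_reckon(commands)           # what wheel odometry reports
actual = dead_reckon(commands, slip=0.1)   # assumed 10 percent wheel slip
error = math.dist(believed[:2], actual[:2])
print(f"position error after the drive: {error:.2f} m")
```

Because the odometry only counts wheel rotations, the rover's believed position and its actual position diverge as soon as the terrain gives way, and nothing in this mode allows the rover to notice the difference.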

The second navigation mode uses what is called “visual odometry” (Maimone, Cheng, and Matthies 2007; Yousif, Bab-Hadiashar, and Hoseinnezhad 2015). Earthbound technicians set a rough path to the destination, but the rover corrects the actual route while driving by comparing, in the sense described above, sequential stereoscopic images of the surroundings. While significantly slower than blind navigation, this mode provides increased accuracy and safety because the rover is also detecting previously hidden obstacles, slopes, and rough patches. However, visual odometry only works with terrain that includes sufficient contrasts, textures, and landmarks, such as prominent rocks.

In this mode, the rover must stop at least once per meter in order to take a photo. These images gathered at different times from different locations are then compared to each other by means of algorithmic filters (in this case an improved implementation of the extended Kalman filter) so that distinctive markers and spatial correlations can be ascertained. A superimposition of these images results in probabilities concerning the positions and contours of objects and terrain. This allows the rover to pinpoint its own position, avoid potentially dangerous objects, and predict spatial situations in which it might go off course or get stuck. The rover is capable of performing these calculations on its own, guided by a specified route but not following it blindly.
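The logic of such a filter can be sketched in one dimension. The following is a plain linear Kalman filter, a deliberately simplified stand-in for the extended Kalman filter mentioned above, that tracks the distance traveled along a straight line. All numbers (the variances and the measurements) are invented for illustration, and `kalman_step` is a hypothetical helper, not part of any rover software.

```python
def kalman_step(mean, var, motion, motion_var, measurement, measurement_var):
    """One predict/update cycle of a one-dimensional Kalman filter."""
    # Predict: apply the commanded motion; uncertainty grows (e.g., wheel slip).
    predicted_mean = mean + motion
    predicted_var = var + motion_var
    # Update: blend in the image-based measurement; uncertainty shrinks.
    gain = predicted_var / (predicted_var + measurement_var)
    new_mean = predicted_mean + gain * (measurement - predicted_mean)
    new_var = (1.0 - gain) * predicted_var
    return new_mean, new_var

# Start at position 0 with a small initial uncertainty (assumed values).
mean, var = 0.0, 0.01
# Each step: one commanded meter, then a position derived from image comparison.
for commanded, observed in [(1.0, 0.93), (1.0, 1.88), (1.0, 2.85)]:
    mean, var = kalman_step(mean, var, commanded, 0.04, observed, 0.01)
    print(f"estimated position {mean:.3f} m, variance {var:.4f}")
```

The estimate is never a position as such but a mean with a variance: a probability distribution that is widened by every movement and narrowed again by every comparison of images.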

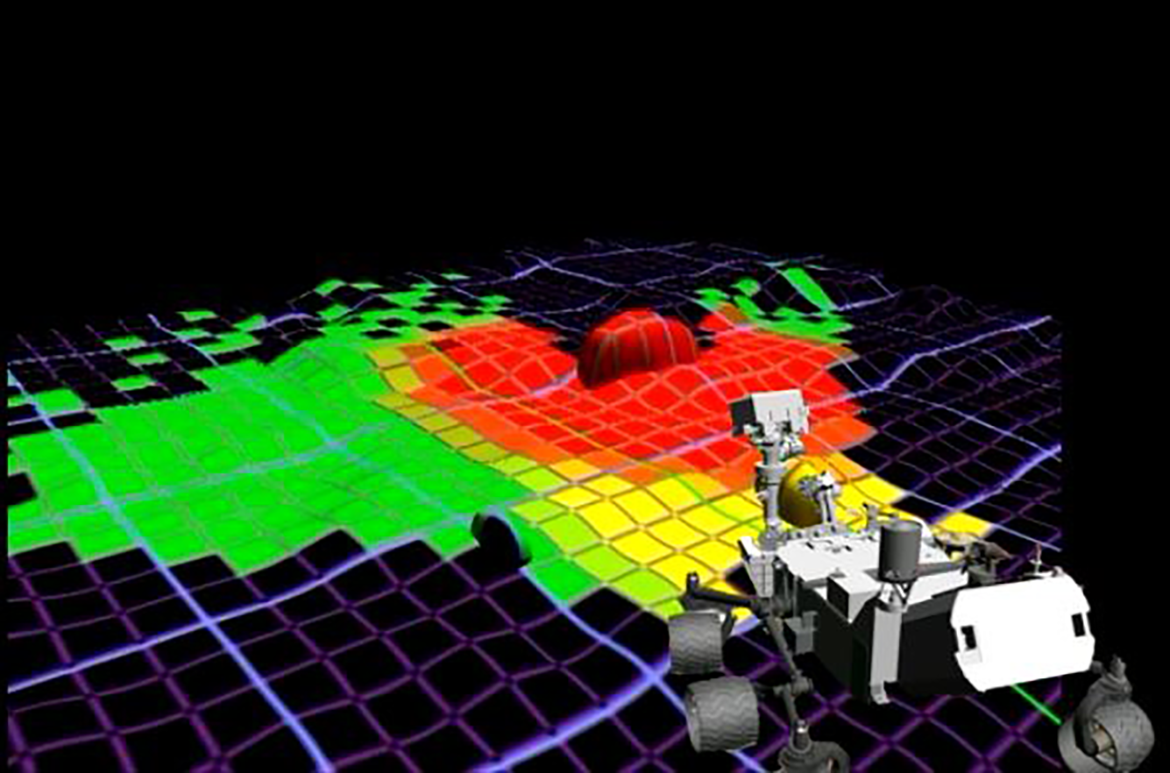

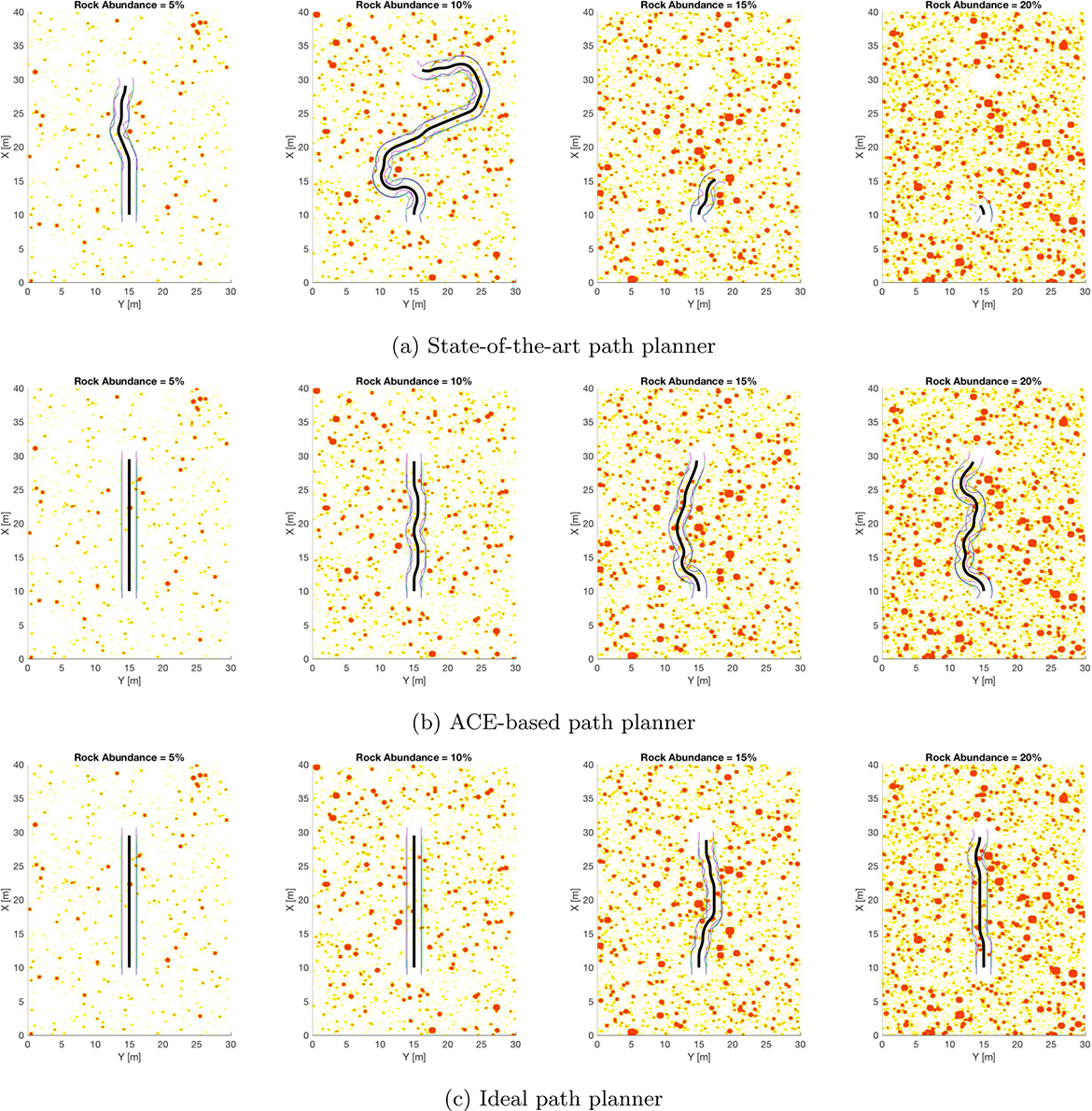

The third mode, AutoNav, was first used by Curiosity in 2013 to traverse an unknown terrain for which the teams on Earth had no footage. In this mode, the rover is only given a target destination, and it must try to autonomously find the best path (Abcouwer et al. 2021). Because it is operationally autonomous, it is determined but not deterministic. AutoNav consists of two steps. First, the rover generates a hazard map of the environment based on data already collected by visual odometry (Figure 3). In addition to localization, the sensor data are fused together by taking the image pairs from all the cameras and combining them into a virtual model, which is then divided into an “occupancy grid” made up of quadrants about the size of the rover. Using an algorithm called GESTALT (Grid-based Examination of Surface Traversability Applied to Local Terrain), each quadrant is assigned a probability value regarding the likelihood of an obstacle, a slope, or a rough patch, and given a traversability rating of either red, yellow, or green (Figure 3; Biesiadecki and Maimone 2006; Janis and Bade 2016; Helmick, Angelova and Matthies 2009). The second step takes the resulting hazard map and feeds it through an algorithm known as Approximate Clearance Evaluation (ACE; Figure 4), which calculates all possible paths and positions from the three-dimensional model (Otsu et al. 2019).
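The first step, turning probabilities into a hazard map, can be sketched as a simple thresholding of an occupancy grid. This is not the GESTALT algorithm itself: the grid values and the two thresholds (`yellow_at`, `red_at`) are assumptions chosen only to show how a probability per cell becomes a red, yellow, or green rating.

```python
def rate_cell(hazard_probability, yellow_at=0.2, red_at=0.6):
    """Map a hazard probability to a traversability rating.

    The thresholds are hypothetical; the source does not state the values
    used on the rover.
    """
    if not 0.0 <= hazard_probability <= 1.0:
        raise ValueError("probability must lie between 0 and 1")
    if hazard_probability >= red_at:
        return "red"
    if hazard_probability >= yellow_at:
        return "yellow"
    return "green"

# A toy 3 x 3 occupancy grid: each value is the assumed likelihood that
# the cell contains an obstacle, a slope, or a rough patch.
occupancy_grid = [
    [0.05, 0.10, 0.70],
    [0.15, 0.35, 0.90],
    [0.02, 0.25, 0.40],
]

hazard_map = [[rate_cell(p) for p in row] for row in occupancy_grid]
for row in hazard_map:
    print(row)
```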

Figure 3. Video still from a 2013 NASA-produced video, “Leave the Driving to Autonav,” https://mars.nasa.gov/resources/20147/leave-the-driving-to-autonav/.

Figure 4. Approximate Clearance Evaluation. Source: Otsu et al. (2019, 782), used with permission from John Wiley & Sons—Books.

At this point, the virtual world models are multiplied and this multiplicity is operationalized. The ACE algorithm calculates possible paths by creating a tree diagram, the branches representing different options in terms of which direction to drive. The first branching offers fourteen possible turns-in-place (including the option of turning around), followed by another branching offering eleven trajectories projected out three meters, and then another offering the same. So, for each six-meter leg of the journey, the rover is calculating 1,694 possible paths (14 × 11 × 11; Abcouwer et al. 2021, 2). Based on the hazard map and its probability values, each path is assigned a cost factor, and the list of all 1,694 possible paths is ranked according to these calculated costs with the help of a simple sorting routine. The algorithm determines this hierarchy of paths by comparing them according to their probable riskiness. This allows the selection of the least dangerous path and the decision for the corresponding action. However, for safety reasons, instead of executing the entire path, the rover only does the first maneuver, which means the first turn in place or the first meter forward, before repeating the process until it has reached its goal.
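The enumeration and ranking described in this paragraph can be sketched in a few lines. The branching factors (14, 11, 11) come from the text; everything else is an assumption. In particular, `toy_cost` is a hypothetical placeholder for the risk estimate that the rover derives from the hazard map, and the "preferred" turn and arc indices are arbitrary.

```python
from itertools import product

N_TURNS = 14  # possible turns-in-place at the first branching
N_ARCS = 11   # possible trajectories at each of the two following branchings

def toy_cost(turn, arc1, arc2):
    """Hypothetical stand-in for a risk estimate read off the hazard map."""
    preferred_turn, preferred_arc = 3, 5  # arbitrary illustrative values
    return (abs(turn - preferred_turn)
            + 0.5 * abs(arc1 - preferred_arc)
            + 0.25 * abs(arc2 - preferred_arc))

# Enumerate every leaf of the tree: 14 x 11 x 11 candidate paths.
paths = list(product(range(N_TURNS), range(N_ARCS), range(N_ARCS)))
print(len(paths))  # 1694

# Rank all paths by cost, select the cheapest, and execute only its first
# maneuver before the whole tree is recalculated from the new position.
ranked = sorted(paths, key=lambda path: toy_cost(*path))
best_path = ranked[0]
first_maneuver = best_path[0]
print(best_path, first_maneuver)
```

The sketch makes one point of the argument concrete: the selected path exists only as the first entry of a sorted list, so it cannot be identified without all the other entries having been calculated as well.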

In this mode of navigation, the rover has all the possible paths at its disposal as entries in the table of 1,694 possible paths with individually calculated costs (Abcouwer et al. 2021). The advantage of this mode lies in the autonomy of the rover, which can travel to its given destination, even across unknown terrain, without any guidance from Earth. Although this navigation mode is the slowest, it does allow for continued operation between windows of communication with Earth. However, the rover has difficulties recognizing sand and small sharp stones, a task that still requires the visual judgment of human eyes and experience (Vertesi 2015, 56). This navigation mode is therefore suitable only for a certain type of terrain, but here it saves a lot of bandwidth.

A Multiplicity of Virtual Worlds

What, then, are the modalities of the rover’s operations in AutoNav and its different relations to the environment? The rover never has exact knowledge about the world, but only about a probability of the model of its current surroundings, which then becomes the basis for playing through 1,694 options of possible paths through the environment. The rover never knows where it is or whether a particular location contains an object. It only has information about the probabilities determined by comparing several datasets, and in AutoNav mode, it operates by feeding these probabilities into a model of the surroundings that can be understood, following the Bayesian terminology, as a belief about the world. As soon as the rover moves, these probability values change, meaning that it has to update its model of the surroundings with each step. In this process, possibilities become operationally tied to probabilities. The world of the rover is therefore in constant flux and consists entirely of probabilities. The generated model, with its multiplicity of possible paths, is virtual in that it does not represent reality but rather allows the rover to virtually play through possible paths because, in the sense of Peirce, each virtual option shares the efficiency of traversability with the real world. A comparison of these paths according to particular criteria, for example, the likelihood of an accident, enables the creation of a hierarchy of possible options of behavior: in other words, a list from which the most appropriate option for the current situation is selected and turned into the basis for a concrete action. The execution of the action that the system decided for and the realization of a virtually tested path’s first meters will then necessitate new localization calculations and the creation of another multiplicity. These 1,694 paths are more than simple options, because each of these options contains a bifurcation of another set of 1,694 options.
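What it means for the model to be a belief that has to be updated with each step can be shown with Bayes' rule applied to a single grid cell. This is a minimal sketch under stated assumptions: the detection rates (0.8 and 0.2), the prior of 0.5, and the sequence of observations are invented, and `update_belief` is a hypothetical helper rather than a routine from the rover.

```python
def update_belief(prior, p_detect_if_obstacle, p_detect_if_free, detected):
    """Bayes' rule for one cell: probability of an obstacle after one observation."""
    if detected:
        like_obstacle, like_free = p_detect_if_obstacle, p_detect_if_free
    else:
        like_obstacle, like_free = 1.0 - p_detect_if_obstacle, 1.0 - p_detect_if_free
    evidence = like_obstacle * prior + like_free * (1.0 - prior)
    return like_obstacle * prior / evidence

# Start undecided, then fold in three observations from successive positions.
belief = 0.5
for detected in [True, True, False]:
    belief = update_belief(belief, 0.8, 0.2, detected)
    print(f"belief that the cell is occupied: {belief:.3f}")
```

At no point does the cell become simply occupied or free. Each new observation shifts a probability, and the third, contradicting observation lowers the belief again instead of overturning it.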

All of these options can be considered as individual virtual worlds, since they all encompass specific spatial and temporal relations. Each path calculated from the virtual world model defines a possible position in the environment both spatially and temporally. These virtual worlds are possible in that they could potentially be realized by the rover’s actions. The virtualization of possible worlds does not coincide with the calculation of a single definitive path, that is, the application of an algorithm to deliver a definitive result in the end. When the ACE algorithm delivers a result, it is only because it has calculated 1,693 other paths as well, without which no decision could be made about the 1,694th. Every action of the rover is preceded by the generation of multiple virtual worlds contained within each path. These calculations have to be repeated constantly in large numbers and as fast as possible (even though in this case, the number is limited and the computer is comparatively slow). As a result of these microdecisions, the rover is able to interact with its surroundings by deriving an action from this table.

Virtuality plays a role here on two levels. Both concern virtuality in the sense of an efficiency that provides a variety of options for the rover’s actual situation. First, virtuality is crucial for computing the model of the surroundings through a comparison of sensor data acquired by the rover at a location it has already left with sensor data from its current location (visual odometry). And second, this model is the basis for the creation of a hazard map with its multiplication of possible paths as virtual options. In both of these applications, the virtual models share a certain degree of traversability with the real Martian surface. When the rover decides for one path and drives it, the virtual model of this path becomes real. But the virtual model is already actual because its efficiency is the basis for the comparison with other possible paths.

The sensor-algorithmic virtuality of the rover (and other autonomous systems) is well illustrated by a metaphor coined by German literary scholar Joseph Vogl, who drew upon Jorge Luis Borges and Gottfried Wilhelm Leibniz in describing the virtual by imagining “the event [e.g. an action of the rover] as an open-bottomed pyramid in which the occurrence at the apex opens up onto an infinity of its variations” (Vogl 1998, 46). The worlds of the rover may not be infinite, because they are limited in each calculation cycle by temporal and spatial constraints to a tree diagram of 1,694 possible worlds. But what is important here is that these worlds are neither true nor false, nor even mere possibilities. They are not “combinations of incorporeal properties, [or] timeless and spaceless qualities” (Vogl 1998, 47), but calculated and quantified options generated anew with each cycle. It is the decision in favor of one world out of 1,694 that transforms the modality of the object from virtual to real. And yet all the worlds that have not been realized are also available as calculated options and occupy a place in the hierarchy of all possible paths. Although only one world is realized through the actions of the rover, the other worlds remain possible and probable, precisely because they are available as virtual options. In this sense, each of the rover’s actions represents the apex of a pyramid of 1,693 other worlds, each of whose realization would in turn coincide with 1,694 other new worlds, similar to the garden of forking paths described by Borges.

The existence of these possible worlds depends on all of them being calculated, which means that they simply possess more risks compared to the 1,694th. This world shares the most fitting efficiency with the real world. The other worlds are operational possibilities, and to be possible, they must already have been calculated. In this sense, we can say that all these possible virtual worlds need to be materialized, that is, they must all exist as calculations already done and manifested on the table and in the hazard map, and not only calculated when needed (on this materiality of the virtual, see Dourish 2016). For the rover, though, that world is categorically not different from all the other worlds that are just as open to it, but which it cannot select due to the greater probability of encountering a hazard there. None of these worlds is “true” or “singular” to the rover: they are simply either more probable or less, riskier or less, more navigable or less. The robot can only deal with the multiplicity of its worlds, because it operates in the mode of as-if. These worlds are compared with one another so that the best of all possible worlds can be selected and realized.

In this regard, it is crucial for the rover’s autonomy that all of these worlds are simultaneously available, comparable, and quantifiable. It is only these virtual-but-possible futures that allow the rover to make a decision about its behavior in the present. Each future is an as-if option that results in another possible position for the rover. Although each of these actions is possible as an option, the rover’s choice of action is not free, being bound by the hierarchy of paths with different risk assessments and the operator’s tasks at hand.

This observation allows us to come back to the question of what autonomy means in the context of adaptive machines. The autonomy of such a system consists precisely of its ability to adapt to its surroundings: its probabilistic method of operation means that it is working with environmental models that could also have turned out otherwise, thereby opening up the very possibility of making a decision at all, including a potentially different one. This process of adapting to unpredictable futures would be impossible if the machine had only one representation of the world. The robot needs to try out each of its actions in virtuality itself. In this constant playing through of actions, it is dependent not only on the current virtual world model but also on the virtuality of a multiplicity of possible worlds generated from this model. This is precisely the epistemological core of Perseverance’s autonomy, which could not operate without the virtuality of a multiplicity of possible worlds, each with different probabilities.

To speak of “worlds,” and to ascribe to these machines a capacity traditionally limited to living entities, is therefore not simply a provocation of anthropomorphism: the rover does, in fact, relate to the world both temporally and spatially. It relates temporally in that it compares sensor data from the past and present by means of filter algorithms in order to determine its future movements, and it relates spatially through virtuality because it can locate itself only in relation to its surroundings. The rover is not a stimulus-response machine reacting directly to what it senses but instead is situated in a spatial and temporal relation to the world. It evaluates the past (of previous locations) in order to predict the future (of possible actions) and to make decisions in the present that will either enable subsequent futures or prevent them. This relation of the machine to the world hinges upon the difference between the modalities of the virtual, the possible, and the real. The real manifests itself to the rover only through a virtual model of the rover’s surroundings that serves as the basis for calculating possible ways of relating to particular characteristics of this environment, which in turn are available only as probabilities. As a result, the rover needs to constantly interact with the world in order to locate itself therein.

This analysis of different modalities demonstrates that for autonomous machines such as robots, along with drones and self-driving cars, virtuality is not just a tool but an operational requirement. In this text, I could only describe one very specific and comparatively simple, but unique, case of sensor-algorithmic virtuality. I am not arguing that, for example, self-driving cars are simply more sophisticated instantiations of Perseverance; rather, there are many different approaches and solutions for achieving operational autonomy. To further understand the world-making of robots, it would be necessary to describe a wider range of different operationalizations of virtuality and its embeddedness within interactional spaces. The analysis of the fundamental challenges of robotic navigation presented here suggests that all adaptive, mobile systems that are situated in their environment operate with probabilistic models to transform uncertainty into action. With virtual models and their inherent microdecisions, they condense, as Louise Amoore writes, “multiple potential futures to a single output” (Amoore 2020, 4). By taking virtuality, in the Peircean sense, as an analytical starting point, it is possible to describe the modalities of this world-making and consequently to delineate the conditions of the machine’s interaction with other actors.

Conclusion

Taking virtuality, and not algorithms, artificial intelligence, mental states, or the processing of symbols as a starting point offers a chance to reconsider robotic relations to the world and the sociality in which humans and machines interact. As I have shown, a robot’s models of the world are virtual precisely because they enable the machine to compare and play through multiple possible worlds with different probabilities. With the example of Perseverance, I have scrutinized how such possible and, at the same time, virtual worlds are technically produced but also how a world is selected from a multiplicity of virtual possibilities as the one to be realized. The operational use of this virtuality is restricted to the AutoNav mode and used only in specific situations in which the engineers and researchers define tasks and frameworks. But as a method for autonomously locating and mapping surroundings, this approach is also more generally relevant for the spaces in which robots and other sensor-based technologies interact with humans.

Virtuality, in the context presented here, is not simply an alternative to reality, but a modality in which a multiplicity of worlds which share something with the real world can become efficient. If we follow this conception of virtuality not as a derivative of the real or as a new experiential space for digital technologies, then the question of a hierarchy between virtuality and reality no longer arises. What remains, though, are the chains of translation that constitute virtuality, the specific efficiencies of models and their embeddedness within the operations of the system. With this approach, Perseverance turns out to be a machine that is not simply an object in the world, but an actor that, with AutoNav, creates its own multiplicities of worlds. These worlds should not be reduced to smart spaces of prediction, preemption, and control. In the beginning of this text, I made the assertion that understanding these technologies as instantiations of artificial intelligence that react to the world with predefined categories is a depoliticizing act. With the understanding of sensor-algorithmic virtuality developed so far, it is possible to further qualify this claim.

The concept of virtuality presented here amounts to more than an addition to or a derivative of the real. Instead, the focus here is on technologies that operate with this virtuality, that is, that produce not just one virtual reality but a multiplicity of possible worlds. The simple binary of “virtuality versus reality” prevents us from grasping this multiplicity. We need to go deeper and ask how such virtual modalities are transforming spaces for interaction between machines and humans. What happens when the rover’s path is occupied not by stationary rocks but by walking pedestrians? For the rover, there is no categorical difference between these two objects, but it still needs to classify them differently. It is precisely in this virtualization of agency, this transforming of existing actors into virtual agents and the microdecisions this entails, that a new space for interactions is opening up between humans and machines. The questions of who is being classified in this interactive space, in which situations, and with what potential for agency are no longer abstract issues. They are already affecting the co-constituted spaces we share with sensor-based machines in determining who is allowed to move and how, with all the discriminations, biases, and essentializations inherent in this process.

Recognizing this machinic virtuality and the multiplicity of possible worlds as inherent to the operations of sensor-based machines offers opportunities to imagine and create alternative futures to those enacted by the “smartness mandate” (Halpern, Mitchell, and Geoghegan 2017). The computational capacities of robots are not reducible to algorithmic processes but should be understood as embodied relations between the machine and its environment mediated, in this case, by virtuality. Because these technologies employ specific forms of virtuality, their relations to the world can be imagined differently, in a way that recognizes alternative forms of “nonconscious cognition” (Hayles 2017), as possibilities to rethink the situatedness of technology in the world. A robot can operate in worlds that are fundamentally different from our world—on Mars, in the deep sea, in a volcano. But all robotic worlds share a common denominator: for the robot, they are virtual. To understand how robots, sensors, and algorithms transform the worlds we live in, we should try to understand this virtuality as a modality of our world that is not subordinate to the real, even if we cannot experience it.

Declaration of Conflicting Interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Deutsche Forschungsgemeinschaft (CRC 1567 Virtual Life Worlds).