Abstract

Calls for an ethically aligned technology design have led companies to publish lists of value principles that their engineers should adhere to. However, it is questionable whether such lists can grasp a technology’s wide-ranging ethical implications. The bottom-up elicitation of values from the specific technology context avoids problems that predefined lists of values have but has been criticized for lacking an ethical foundation. In this empirical study, we explore how three grand ethical theories of Western philosophy—utilitarianism, virtue ethics, and deontology—can support the discovery of values. Based on three technologies, our results show that ethical perspectives can support IT professionals in identifying values that are not only context-specific but also cater to higher ethical principles (i.e., intrinsic values) and a broad spectrum of sustainability goals (e.g., economic, technical, individual). Each theory of ethics served a unique role in the identification of ethical issues and value potentials of a technology. However, results also suggest a focus on mainstream values and individual values while environmental issues were neglected. We conclude that theories of ethics encourage different perspectives on a specific technology and thus argue for a pluralist ethical basis for values in technology design.

Introduction

With new technologies reaching into sensitive areas such as our privacy, the call for an ethically aligned technology design is more topical than ever. Contemporary scholars and philosophers of technology have long moved past the view that technology is “neutral” and technological development “inevitable” (Franssen, Lokhorst, and van de Poel 2009; Johnson 2015; Miller 2021). Technology mediates how we experience the world and influences how we make moral decisions (Verbeek 2006). For example, interfaces can be purposefully designed to bring about specific human behavior, such as voting or addictive use. Most importantly, scholars have observed that constant interaction with technologies impacts our conduct and our virtues: “we make things which in turn make us” (Ihde and Malafouris 2019, 196). All this makes technology design inter alia a moral activity (Johnson 2015; Verbeek 2006). Thus, designers and engineers are requested to consider the ethical implications of the technologies they develop and proactively address them (Martin, Shilton, and Smith 2019). But how can values be considered in practice?

In recent years, almost a hundred private and public organizations as well as research institutions have tried to demonstrate their ethical engagement by publishing lists of value principles that employees, developers, designers, and so on should adhere to (Jobin, Ienca, and Vayena 2019). These lists promote an organization’s commitment to protect values, such as digital privacy, transparency, absence of algorithmic bias, and so on. However, it is questionable whether predefined value sets can indeed lead to sustainable technology design and represent the wide range of moral implications a technology might have. Innovation teams and engineers are no longer seen as providing strictly technical or economic value to society, but also human, social, and environmental value (Penzenstadler and Femmer 2013). When preconfigured value lists are used in the field of human-computer interaction (HCI) design, they project values onto empirical cases by applying the logic of the list to the problem at hand (Le Dantec, Poole, and Wyche 2009). This inadvertently leads to a limited view of the value spectrum affected by a technology. Also, the moral foundation of predefined value lists has been questioned (Mittelstadt 2019). To avoid these limitations, scholars have argued for the bottom-up elicitation of values from the specific technology context (Le Dantec, Poole, and Wyche 2009; Reijers and Gordijn 2019). Value sensitive design (VSD) as described by Friedman and Hendry (2019) is the most prominent approach in this regard; yet VSD methods have been criticized for lacking an ethical foundation (Manders-Huits 2011; Jacobs and Huldtgren 2021). Reijers and Gordijn (2019) have argued that only proper ethical reflection can ensure that the value elicitation process identifies the values of moral relevance and not just arbitrary stakeholder preferences.

In this paper, we explore whether normative ethical theories can contribute an ethical foundation to the value elicitation phase in technology design. More concretely, we explore how three grand ethical theories of Western philosophy—utilitarianism, virtue ethics, and deontology—can support the discovery of moral values in technology design. These three theories have been assessed as overarching moral theories in an early VSD paper (Friedman and Kahn 2003) and form the ethical basis of value-based design (Spiekermann 2016). Based on three different technology systems, we investigate in an empirical study whether value elicitation with the help of philosophical perspectives can identify values that are context-specific, pertain to higher ethical principles (i.e., intrinsic values) and support a broad spectrum of sustainability goals (e.g., individual, social, environmental). Moreover, we compare how the unique reasoning of each ethical perspective leads to identifying theory-specific value ideas.

Many empirical studies of ethical decision-making have studied deontology and utilitarianism as underlying ethical theories, whereas virtue ethics has been included only rarely (Drašček, Rejc Buhovac, and Mesner Andolšek 2020). Moreover, the respective studies did not focus on the technology design context, where the VSD community can look back upon many technology design projects and methods (Friedman, Hendry, and Borning 2017). Still, only a few studies have investigated how a specific ethical theory plays out in the discovery of relevant values. Noteworthy in this regard are van Wynsberghe’s (2013) project on care ethics in robotic healthcare assistants, the discussion of a pragmatist approach to value identification by Boenink and Kudina (2020), and the theoretical criticism by Reijers and Gordijn (2019), who argue for a virtue focus instead of a value focus in ethical technology design. Against this background, our empirical study is novel in that it compares different ethical perspectives and analyzes the resulting value ideas based on their underlying sustainability dimensions and theory-driven specificity. Our analysis of empirical data promises new insights that can help to advance the value-oriented technology paradigm.

Our paper is structured as follows. First, we critically reflect on the current top-down and bottom-up approaches to values in technology design. Then, we briefly review utilitarianism, deontology, and virtue ethics, examining how their core philosophical perspectives can contribute to the value elicitation process in technology design as well as discussing the critical arguments with which each theory has been met. In the empirical part, we present insights from our study of seventy-one young IT professionals in training who applied the three ethical perspectives to the early technology design phases of one existing start-up project and two technology systems. We discuss the effects of employing normative theories in the value elicitation process along with the implications for current value-oriented design approaches. Our aim is to contribute an empirically founded argument for systematically eliciting values in technology design with the help of moral philosophy.

Values and Ethics in Technology Design

Triggered by pessimistic Artificial Intelligence (AI) scenarios and a detrimental number of data protection and security breaches, investors have become sensitive to the many risks and uncertainties that a technological innovation can create (Jobin, Ienca, and Vayena 2019). Designers have an ethical obligation to protect and enhance the welfare of direct users, as well as the public and the environment (Russ 2019). In this spirit, van Wynsberghe (2021) has recently proposed a new definition of “sustainable AI,” which aims at sustainability through means such as machine learning but also considers the ecological, social, and economic impact of AI development itself. Values provide a promising concept that represents social and ethical considerations (van de Kaa et al. 2020) and can help to capture an aspiration for a greater good, such as sustainability goals (Penzenstadler and Femmer 2013; Winkler and Spiekermann 2019). It has been argued that a technology’s design should address not only economic (i.e., capital and long-term investments) and technical values (i.e., long-term usage and evolution of systems) but also social (i.e., social capital), individual (i.e., human capital and private good), and environmental (i.e., natural resources) values (Penzenstadler and Femmer 2013; Winkler and Spiekermann 2019).

Values represent what matters to humans, what they strive for and seek to protect, and as such have a moral connotation (Fuchs 2020). They can be defined as “conceptions…of the desirable” that influence human choices (Kluckhohn 1962, 395) or as principles of the “ought-to-be” (Hartmann 1932). When applied to a technology domain, value ethics sees value harms when a plane is not safe, a car engine is not environmentally friendly, or a social network is manipulative. Furthermore, it extends the discourse to positive value potentials, such as an algorithm’s transparency or a robot’s politeness. Technology design approaches that focus on values usually assume value pluralism (as opposed to value monism). Value pluralism assumes the existence of a diversity of values (e.g., friendship, respect, autonomy) rather than one “supervalue” that all other values can be reduced to (e.g., pleasure; Chang 2015; Anderson 1993).

Values can capture what is good instrumentally to achieve what is good intrinsically, that is, good and valuable in itself (Hartmann 1932; van de Poel 2009; Scheler 1913–1916/1973; Spiekermann 2016). An intrinsic value such as environmental friendliness or health is a “good in itself, and not because it is a means to another end or contributes to another value” (van de Poel 2009, 975). In contrast, instrumental values in the technology context, such as ease of use or transparency, are “a means to achieving a good end, i.e. another positive value” (van de Poel 2009, 976). Intrinsic values are therefore “higher”: they are experienced as deeper, more durable, and more fulfilling, and they do not depend on other values (Scheler 1913–1916/1973).

A third group of human values inherent in the good character and conduct of a person are virtues. More recently, virtues have experienced a renaissance in the field of computer ethics (Vallor 2016). A virtue is “a disposition, habit, quality, or trait of the person or soul, which an individual either has or seeks to have” (Frankena 1973, 64). Examples are honesty, courage, loyalty, or humility. Including virtue-ethical considerations in a technology design process can help to capture the implications of a technology for the personal development of individuals interacting with the technology (Ihde and Malafouris 2019; Verbeek 2006). In other words, an ethical technology design framework should capture not only values but also virtues.

The List-based Approach to Values

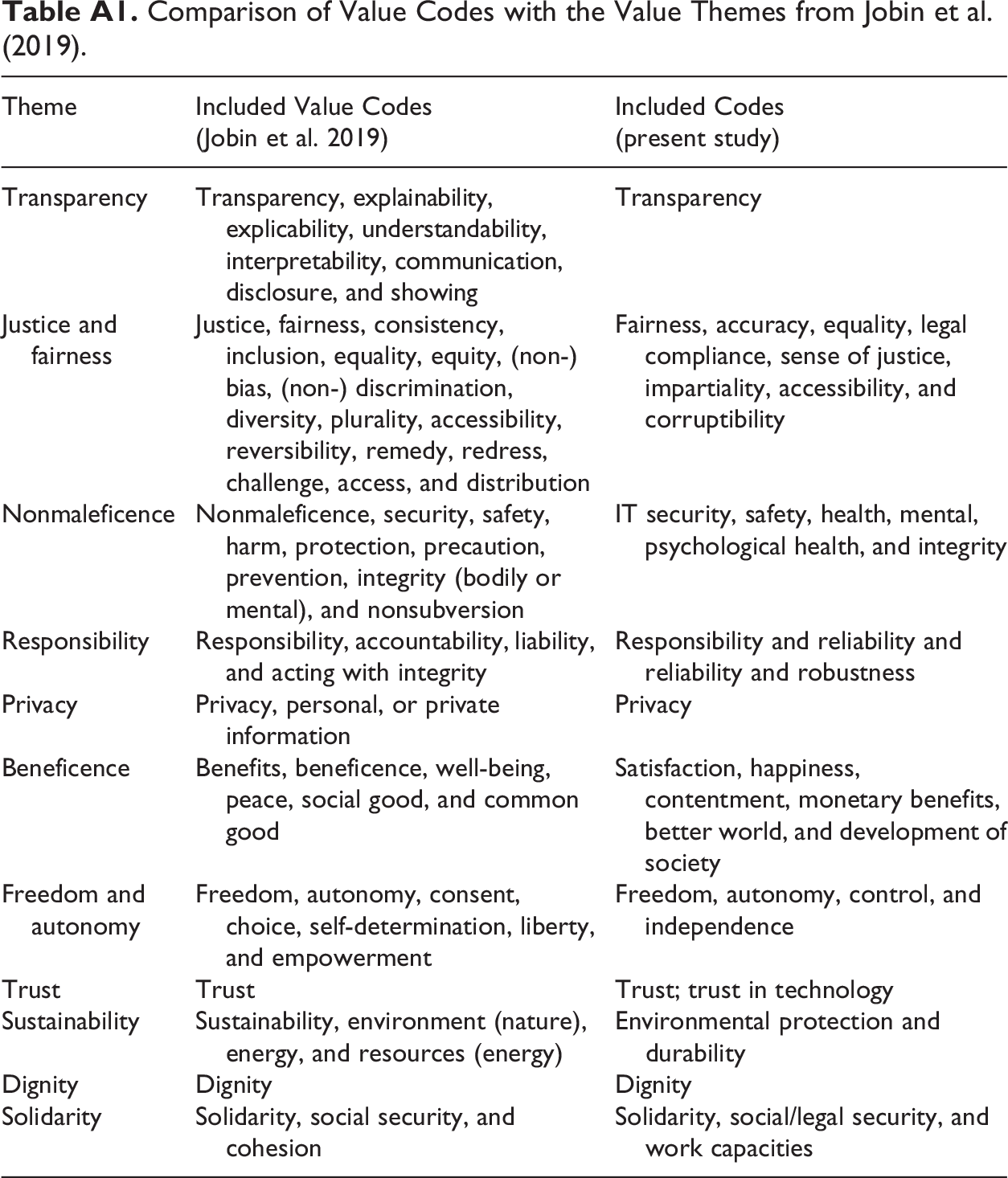

A common approach to an ethical technology design is to commit to lists of value principles. Such lists have been published mostly by corporate, political, or industry representatives and seek to apply values top-down to a technology context. Jobin, Ienca, and Vayena (2019) identified eighty-four policy documents in the field of AI alone, reaching consensus on eleven shared values (see Table A1 in the Appendix): transparency, justice and fairness, nonmaleficence, responsibility, privacy, beneficence, freedom and autonomy, trust, sustainability, dignity, and solidarity. While the increasing prominence of ethical guidelines is certainly desirable, applying values in a top-down manner is problematic in at least three ways.

First, published guidelines predominantly focus on preventing value harms and on avoiding negative consequences. They tend to neglect the potential inherent in the active promotion of positive values (Jobin, Ienca, and Vayena 2019). However, values should set constraints on design while also helping to uncover creative technological solutions (van den Hoven, Lokhorst, and van de Poel 2012; Shilton 2013) and foster new forms of added value for companies (Spiekermann 2016).

Second, any predefined list risks narrowing the focus to the values that are being promoted through the list rather than the problem at hand (Friedman, Hendry, and Borning 2017). This is especially problematic when technology development focuses mainly on technical and economic values (such as efficiency and ease of use), while the social and environmental impact is neglected (Lago et al. 2015). A truly ethical perspective should aim for a broadly sustainable technology design that acknowledges values relevant for the individual, as well as social, economic, and environmental development (Penzenstadler and Femmer 2013; Winkler and Spiekermann 2019; van Wynsberghe 2021). Development in this context can commit to the protection of human dignity and health or the preservation of natural resources.

Third, the consideration of broadly established values can lead to neglecting values relevant to the specific cultural context (Borning and Muller 2012), the specific context of use, and the stakeholders affected by the technology (Pommeranz et al. 2012). Every technology embodies highly unique and context-specific values that engineers and developers need to explore, discuss, and ethically reflect upon (Miller 2021). Especially with transformative technologies, such as nanotechnology, biotechnology, information technology, and cognitive science, there is an increased need for more flexible ways of moral deliberation (Umbrello 2020b).

To avoid the practical danger of projecting values top-down onto empirical cases, scholars have stressed that values need to be discovered within the specific context given (Boenink and Kudina 2020; Le Dantec, Poole, and Wyche 2009). Such a bottom-up value discovery process can help to overcome the narrow and one-sided focus on commonly accepted “central” values and unveil context-specific “marginal cases” (Agre 1997, 45). Shifting the focus away from central themes to the marginal areas of a technology project “generates a more complete spectrum of relevant system requirements that lie out of today’s corporate mainstream thinking” (Spiekermann 2016, 182). To illustrate this, Spiekermann discusses the example of a digital travel agent, where the marginalized concept of gaining time represented user needs and preferences much better than the mainstream value of saving time or efficiency, values which are much more easily accessible for designers engaged in a design task.

Bottom-up Value Discovery: In Need of Ethical Reflection Methods

VSD methods (Friedman, Hendry, and Borning 2017) explicitly support the bottom-up elicitation of values through the identification of potential harms and benefits and the inclusion of stakeholders in the design process. Thus, they can avoid the problems with which predefined lists of values are confronted. Still, some scholars have leveled the criticism that VSD cannot distinguish relevant moral values from mere stakeholder preferences, and that it would benefit from an additional ethical grounding in moral philosophy (Manders-Huits 2011; Reijers and Gordijn 2019). To ensure that a value elicitation process actually leads participants to identify higher, morally relevant values, it is necessary to set up a moment of ethical reflection and commitment (Shiell, Hawe, and Seymour 1997; Reijers and Gordijn 2019; Jacobs and Huldtgren 2021) or “philosophical mode” (Flanagan, Howe, and Nissenbaum 2008).

At the same time, it has been argued that a mere theory-driven approach is both too strict (Umbrello 2020b) and too indeterminate (Jacobs and Huldtgren 2021) to guide the design of a technology based on moral claims. Thus, what is needed is a combination of a top-down approach providing moral justification with a bottom-up approach that takes the context into consideration. In the VSD tradition, this should be accomplished at the conceptual and at the empirical level (Friedman, Hendry, and Borning 2017). However, VSD scholars have never specified an underlying ethical framework for VSD. They have discussed the moral philosophies of utilitarianism, deontology, and virtue ethics as a potential moral basis for human values (Friedman and Kahn 2003) and have contemplated that implications for technology use depend on the respective ethical perspective taken (Friedman and Hendry 2019). Still, they have not offered any solution to the disagreements of Western-centric perspectives or other non-Western worldviews, leaving it up to the people involved in the design process to determine what makes a value “moral” (Friedman and Hendry 2019). This paper fills this gap with a first empirical study.

Are there specific advantages and challenges that the philosophical perspective of ethical theory bears for the value elicitation process? After all, utilitarianism, virtue ethics, and deontology differ significantly in the way they define what is good and right, so their unique approaches could inspire different ideas on how human values are impacted by technology, and potentially complement each other in producing a more holistic value perspective on a specific technology. The following sections discuss the three ethical perspectives, their specific advantages and downsides, and their relations to values.

Utilitarianism: Weighing Beneficial and Harmful Consequences

Fields of study focusing on technology research and reflection, such as technology assessment, ethics of science and technology, or science and technology studies (STS), typically try to “anticipate the implications of scientific and technological advances and to assess the results of the anticipations with respect to social desires, political goals and ethical values” (Grunwald 2017, 140). With this focus on implications and results, they essentially follow a consequentialist approach when assessing technologies (Grunwald 2017). Utilitarianism is a specific form of consequentialism that seeks to maximize the general good for the greatest number of people (Frankena 1973). The utilitarians Jeremy Bentham (1748–1832) and John Stuart Mill (1806–1873) interpreted this good in psychological terms as pleasure, social utility, or well-being (Mill 1879/2009; Bentham 1789/1907), which all can be considered important values. In so doing, they provided a strong reasoning for the evaluation of what is morally right as well as the philosophical origin of two basic concepts of neoclassical economics. The analysis of costs and benefits suggests weighing the expected costs of a decision, project, or product against the expected resulting monetary value, while the maximization principle mandates choosing the action that is expected to result in the highest positive value. VSD projects often follow a similar approach (Friedman, Hendry, and Borning 2017) by brainstorming potential stakeholder harms and benefits and mapping them onto corresponding values (e.g., Rector et al. 2015). Just as there are many possible actions that can maximize the general good, that is, the highest value to be pursued, there could be different values related to technological capabilities that support this general good. Thus, a consequentialist perspective can lead to the identification of relevant values, such as human health and the environment (Doorn 2012).
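To make the two economic concepts concrete, the following minimal sketch weighs expected benefits against expected costs for a set of design options and then applies the maximization principle. The option names and all figures are invented for illustration and are not data from this study. Collapsing harms and benefits into a single number is an assumption of the sketch, not a claim about how values should be measured.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DesignOption:
    """A hypothetical design option with expected benefits and costs."""
    name: str
    expected_benefit: float  # illustrative units, not study data
    expected_cost: float

    @property
    def net_value(self) -> float:
        # Cost-benefit analysis: weigh expected benefits against expected costs.
        return self.expected_benefit - self.expected_cost


def choose_by_maximization(options: list[DesignOption]) -> DesignOption:
    """Maximization principle: pick the option with the highest expected net value."""
    if not options:
        raise ValueError("at least one option is required")
    return max(options, key=lambda option: option.net_value)


# Invented example options for a smart toy; all figures are assumptions.
options = [
    DesignOption("always-on microphone", expected_benefit=8.0, expected_cost=6.5),
    DesignOption("push-to-talk microphone", expected_benefit=6.0, expected_cost=2.0),
    DesignOption("no microphone", expected_benefit=2.0, expected_cost=0.5),
]

best = choose_by_maximization(options)
print(best.name, best.net_value)
```

With these invented figures, the push-to-talk option has the highest net value. The sketch only shows the mechanics of the calculus and says nothing about whether such a single-score comparison is ethically adequate.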

However, the emphasis on possible consequences, for example, the implications of a technological capability, also raises issues. First and foremost, it can lead to the justification of actions that cause harm. An example of where this becomes relevant in technology design is the Moral Machine experiment (https://www.moralmachine.net/) conducted at MIT. In this experiment, participants weigh the benefits and costs of an autonomous car killing some pedestrians instead of others in an unavoidable accident, depending on their worth to society, the economy, and so on (Awad et al. 2018). This “utilitarian calculus” is contrasted with the deontological position that optimizing decisions on who is supposed to die through maximizing economic or other societal principles can never justify the breach of moral principles, such as human dignity and equality. Moor (1999, 68) argued that “good ends somehow blind us to the injustice of the means.” This can be mitigated by a form of “general utilitarianism,” which does not focus only on the consequences of one particular person and her action in a specific situation (as is the case for “act utilitarianism”), or the consequences of adhering to a particular rule (“rule utilitarianism”), but considers “what would happen if everyone were to do so and so in such cases” (Frankena 1973, 37).

Deontology: Addressing Moral Obligations

While consequentialist theories such as utilitarianism focus on the consequences of an act, deontological theories put the emphasis on duty, as deon, the Greek word for duty, implies. From a deontological perspective, a moral agent has to consider the universal laws inherent in an action. Kant formulated this in the first part of his categorical imperative: “act only according to that maxim by which you can at the same time will that it should become a universal law”—and added that the outcome of an action can never justify the action itself (Kant 1785/2011). Duties in the form of rules have a long tradition in many societies and even form a common instrument of moral guidance in the corporate context, for example, in the form of professionals’ codes of ethics, such as the “ACM Code of Ethics and Professional Conduct” (2018). A deontological perspective can also help to uncover values that moral agents should seek to protect. For example, Friedman and Kahn (2003) have argued that privacy can be derived from vendors’ unconsented data collection, which is to be considered an immoral action from a deontological perspective. Scanlon (1998) has presented a similar argument based on a modified version of the categorical imperative, which, in brief, stresses that it is “what we owe to each other” that motivates “the good” in values.

However, the deontological focus on universal principles can be difficult to apply to concrete situations. Also, deontology faces a difficulty in the tension between alternative moral duties that seem equally important but lead to different behavioral outcomes. Ironically, deontological theories can deal with these issues by incorporating consequentialist elements; for example, the duty to emphasize actions that “promote the aggregate good” (Ross 1930). In this way, deontology and utilitarianism can complement each other (Brady and Dunn 1995). Another danger inherent in applying Kant’s philosophy was famously portrayed by Arendt’s (1965/2006) documentation of the Eichmann trial in Jerusalem, where Adolf Eichmann proclaimed that he did not feel guilty because he had acted in accordance with Kantian principles. Eichmann’s error was to uncritically embrace the evil principle of Aryanism because he confused an ideology of his time with a morally valid universal law. In the current business and technology environment, principles such as profit, innovation, or growth could also be mistakenly considered as ethically desirable principles solely because they represent the current corporate norm. This problem relates to Agre’s (1997) critical discussion of technology discourses that only focus on “central” themes. When combining the perspective of deontology on values with other ethical theories, it seems plausible to conduct the deontological analysis last. Ideally, it will re-evaluate previously identified values and virtues and emphasize those that deserve the greatest attention in the design process instead of overemphasizing central or mainstream value themes.

Virtue Ethics: Supporting Good Character Traits

Virtue ethics is one of the oldest and most prominent theories that emphasizes the moral excellence of a person’s character rather than her adherence to rules of action, duties, or resulting consequences. A virtuous person will tell the truth, not because she has to or because it leads to the best outcomes but because she is a truly honest person and wants to lead a morally good life. According to classical virtue ethics, represented especially by Aristotle (384–322 BC; 2004), only a really virtuous person will live in true happiness or eudaimonia. Virtues are bound to the character and behavior of individuals but at the same time bear relevance to the moral thriving of a community at large. They represent “a balance between excess and deficiency,” where any set of values is in balance with an individual’s social context (van Staveren 2007, 27). Thus, virtues can help to emphasize the importance of society and social practices instead of only focusing on the individual in moral questions (MacIntyre 1981/2007). With reference to Hartmann’s (1932) ethics, virtues can be understood as moral values possessed or carried by a person (Kelly 2011). Friedman and Kahn (2003, 1181) have also recognized that specific values such as friendliness, caring, or compassion “fit within a virtue orientation.” Thus, when we refer to “values” in the following, we also include virtues in this sense.

While virtue ethics played a subordinate role in modernity, it has recently shown great potential in dealing with the ethical issues posed by new technological developments. Among the most important proponents of virtue ethics today is Vallor (2016), who presented a set of technomoral virtues including honesty, self-control, and empathy, which she sees as particularly important for dealing with the “increasing global complexity, instability, plurality, interdependence, rapid change, and growing opacity of our technosocial future” (p. 245). While these virtues are universally important, Vallor (2012) has also presented a more context-specific virtue-ethical analysis of friendship on social media.

A virtue ethical perspective seems important for a wise management of the technoscientific power in our society. Focusing on the concept of virtue in the design process can help business people and engineers to consider the moral development of affected stakeholders, whom they might otherwise only see as “user,” “human resource,” or “consumer.” This aspect has come more to the forefront of critical technology discussion and the concern about the degradation and symbolic impoverishment of humanity (Stiegler 2019). However, virtue ethics has also been criticized, as it does not offer straightforward guidance on morally good actions, for example, through moral guidelines or universal principles. By contrast, virtue ethicists such as Vallor (2016) would argue that this apparent weak point of virtue ethics is actually one of its strengths: good character traits are flexible in responding to new challenges in our everyday routines, which a pre-established set of rules is not easily able to do. This idea has been taken up by Reijers and Gordijn (2019), who made the case for a “virtuous practice design,” which focuses on practices in relation to the technology and the various stakeholders, thus including considerations of human development. It has been argued that this virtue-based approach can complement already established VSD methods (Umbrello 2020a). Thus, virtue ethics might be especially suited to complementing a bottom-up elicitation process of values and virtues relevant for a specific technology.

Empirical Study on Ethical Theories in the Value Elicitation Process

Over the course of two semesters, seventy-one young IT professionals enrolled as students in a master’s program in Information Systems participated in an empirical study to analyze one of three innovative digital technologies: a bike courier service, a smart teddy bear, or a telemedicine platform. The goal was to explore how the practical application of the core philosophical reasoning of three ethical theories (utilitarianism, virtue ethics, and deontology) could guide the value elicitation process.

In the first semester, thirty-six participants were split into two groups and worked individually either on the fictitious product scenario of a smart teddy bear dedicated to the entertainment of children (N = 24, age: M = 24.4, SD = 3.0; 54.2 percent female; sixteen different nationalities) or on a bike courier app for food delivery to households (N = 12, age: M = 23.0, SD = 1.5; 50 percent female; nine different nationalities). In the following semester, thirty-five participants (age: M = 24.6, SD = 2.6; 38.2 percent female; fourteen different nationalities) worked in pairs and analyzed a telemedicine platform that connects doctors to patients through an online video interface to make a first diagnosis and then refer them to specialists from the platform’s own recommender database, which benchmarks specialists’ performance.

All study participants had considerable training in both business management and engineering due to the master’s program’s admission criteria and substantial professional experience. Also, they received a detailed lecture- and literature-based introduction to the three moral philosophies. They learnt about the different forms of general, act- and rule-based utilitarianism (Frankena 1973) as well as the criticisms utilitarianism has faced in the philosophical literature (MacIntyre 1981/2007; Frankena 1973; Nagel 1989). Drawing on role models in literature and film, students learnt that the concept of virtue is grounded in the wider notion of Aristotelian golden-mean behavior as well as the concepts of arete and phronesis (Aristotle 2004). When introducing Kant’s deontology and categorical imperative, great emphasis was put on explaining the concept of personal maxims, along with La Rochefoucauld’s taxonomy of duty (La Rochefoucauld 1664/2005). Emphasis was also put on not confusing personal maxims with the prevailing value norms or ideologies of the time, as criticized by Arendt (1965/2006), that is, on refraining from simply adopting, for instance, what CEOs of our time would want.

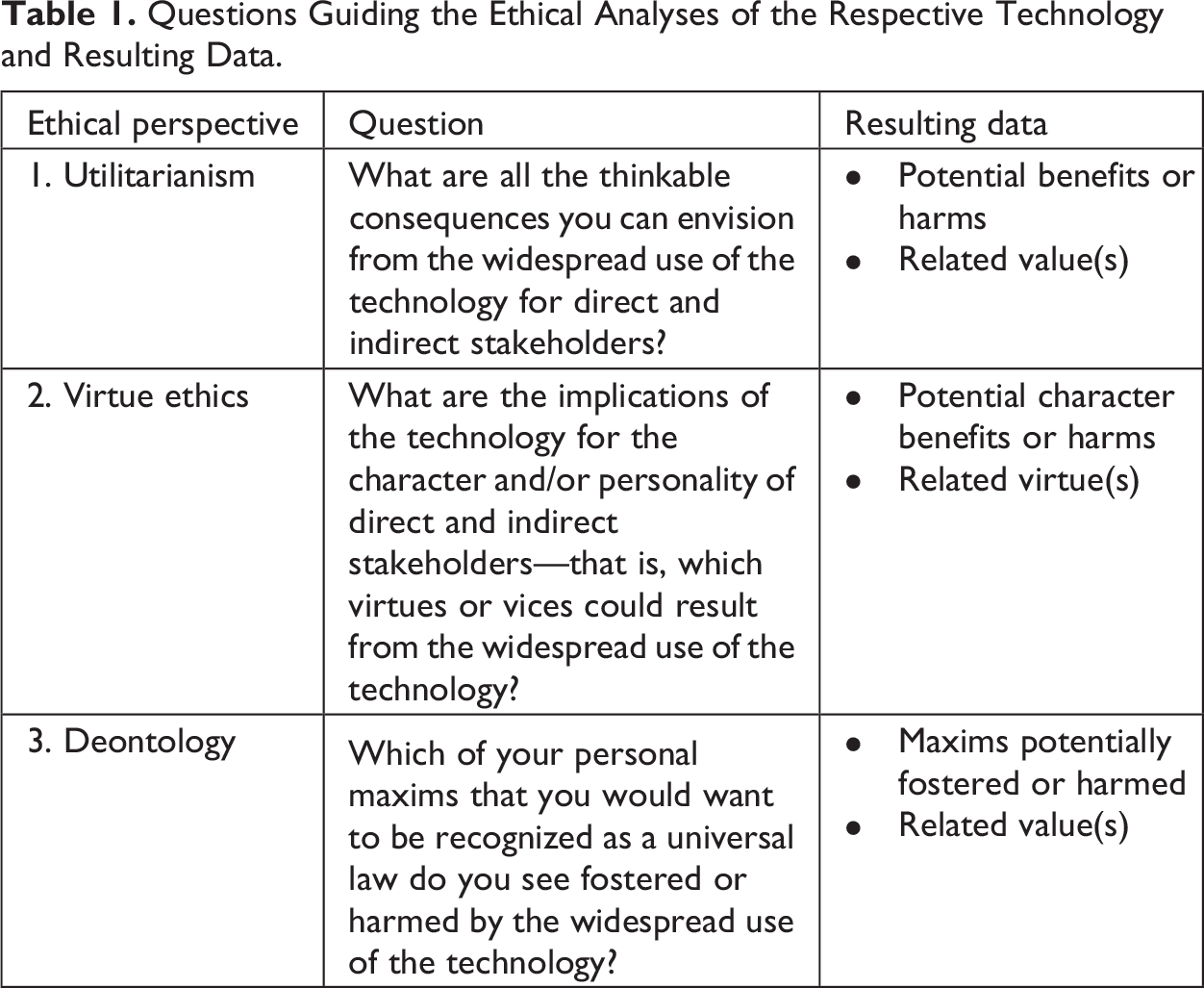

Students were then asked to apply the three ethical perspectives to one of the three technologies to identify values that the respective technology system should cater to and protect. Guided by the core reasoning of the ethical theories, participants were instructed to first describe harms or benefits (utilitarian perspective), then personal character implications (virtue ethical perspective), and lastly relevant personal moral principles impacted (deontological perspective) in an open text form. Furthermore, they were asked to assign a name to the value that would best represent their critical moral thinking. Table 1 shows the questions that summarize the central idea of every ethical perspective used to guide participants in this process and the type of data we retrieved to conduct the analyses presented below. When we use “utilitarianism,” “virtue ethics,” or “deontology” in the following presentation and discussion of our findings, what we refer to is the utilitarian/virtue ethical/deontological analysis conducted by our participants.

Table 1. Questions Guiding the Ethical Analyses of the Respective Technology and Resulting Data.

All in all, these ethical perspectives led our seventy-one participants to describe 1,471 positive and negative implications related to the introduction of the three technology systems. We applied a mixed-method approach in various data analysis cycles to reliably capture and represent participants’ ideas within different categories. First, we conducted qualitative content analyses (Mayring 2014) to group the 1,471 ideas into the following five categories: intrinsic values (e.g., equality), instrumental values (e.g., ease of use), virtues (e.g., truthfulness), emotions (e.g., feeling lonely), and personal characteristics/abilities (e.g., tech-savviness). Below, we only focus on the 1,264 ideas that relate to values or virtues. We then assigned to each of these values and virtues an underlying sustainability dimension, guided by a theoretical framework that connects five dimensions of sustainability (individual, social, technical, economic, and environmental; Penzenstadler and Femmer 2013) to values (Winkler and Spiekermann 2019). For example, we determined fairness as a social value and IT security as a technical value. We iteratively assigned categories and resolved disagreements through discussion until full agreement was reached, which is a common approach in qualitative research (Chong and Reinders 2021). Our final category system included a total of 113 values, which consisted of forty-one instrumental and twenty-five intrinsic values as well as forty-seven virtues. Lastly, we created variables that showed the frequency of ideas for each participant and category and used this quantitative output for a comparison of ideas resulting from the different ethical analyses. We describe the coding process in more detail in a related paper, where we compare ethical analyses to a traditional product roadmap approach (Bednar and Spiekermann-Hoff 2021).
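The last step of this procedure, turning coded ideas into frequency variables per participant and category, can be sketched as follows. This is a minimal illustration under stated assumptions, not the tooling used in the study. The participant identifiers and rows are invented, the tuple layout is hypothetical, and the codes merely reuse examples named above.

```python
from collections import Counter

# Hypothetical coded ideas: (participant, ethical analysis, code, category).
# The codes reuse examples named in the text; the rows themselves are invented.
coded_ideas = [
    ("P01", "utilitarianism", "ease of use", "instrumental value"),
    ("P01", "utilitarianism", "equality", "intrinsic value"),
    ("P01", "virtue ethics", "truthfulness", "virtue"),
    ("P01", "virtue ethics", "feeling lonely", "emotion"),
    ("P02", "utilitarianism", "equality", "intrinsic value"),
    ("P02", "deontology", "equality", "intrinsic value"),
    ("P02", "deontology", "privacy", "intrinsic value"),
    ("P02", "deontology", "tech-savviness", "personal characteristic"),
]

# Only ideas coded as values or virtues enter the comparison.
VALUE_CATEGORIES = {"intrinsic value", "instrumental value", "virtue"}
value_ideas = [idea for idea in coded_ideas if idea[3] in VALUE_CATEGORIES]

# Frequency of ideas per participant, ethical analysis, and category.
frequencies = Counter(
    (participant, analysis, category)
    for participant, analysis, _code, category in value_ideas
)

for key, count in sorted(frequencies.items()):
    print(key, count)
```

Counting on the (participant, analysis, category) triple keeps the unit of comparison at the participant level, which is what a later comparison across ethical analyses needs.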

Comparison of Elicited Values across Ethical Perspectives

The value elicitation task inspired by the utilitarian perspective triggered by far the greatest number of ideas that participants related to values (N = 583; compared to 386 ideas in the virtue ethical and 295 ideas in the deontological analysis). This is not surprising, given that the utilitarian calculus invites us to consider as many value effects as possible when weighing harms and benefits. However, when we look at the actually identified values and virtues (which can each subsume several ideas), the results of the three ethical perspectives are comparable (utilitarianism: seventy-eight, virtue ethics: seventy-nine, and deontology: seventy-four).
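The contrast between the number of ideas and the number of distinct values can be made explicit with a short calculation. The counts are those reported above; the ideas-per-value ratio is a derived figure added here for illustration and is not reported in the study.

```python
# Counts reported in the text for the three ethical analyses.
ideas = {"utilitarian": 583, "virtue ethical": 386, "deontological": 295}
values = {"utilitarian": 78, "virtue ethical": 79, "deontological": 74}

total_ideas = sum(ideas.values())  # 1264, the ideas relating to values or virtues

# Derived for illustration: average number of ideas subsumed under one value.
ideas_per_value = {name: ideas[name] / values[name] for name in ideas}

for name in ideas:
    print(f"{name}: {ideas[name]} ideas, {values[name]} values, "
          f"{ideas_per_value[name]:.1f} ideas per value")
```

The utilitarian analysis thus produced more ideas per identified value, which is consistent with a similar number of distinct values across the three perspectives despite very different idea counts.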

Most Frequent Values

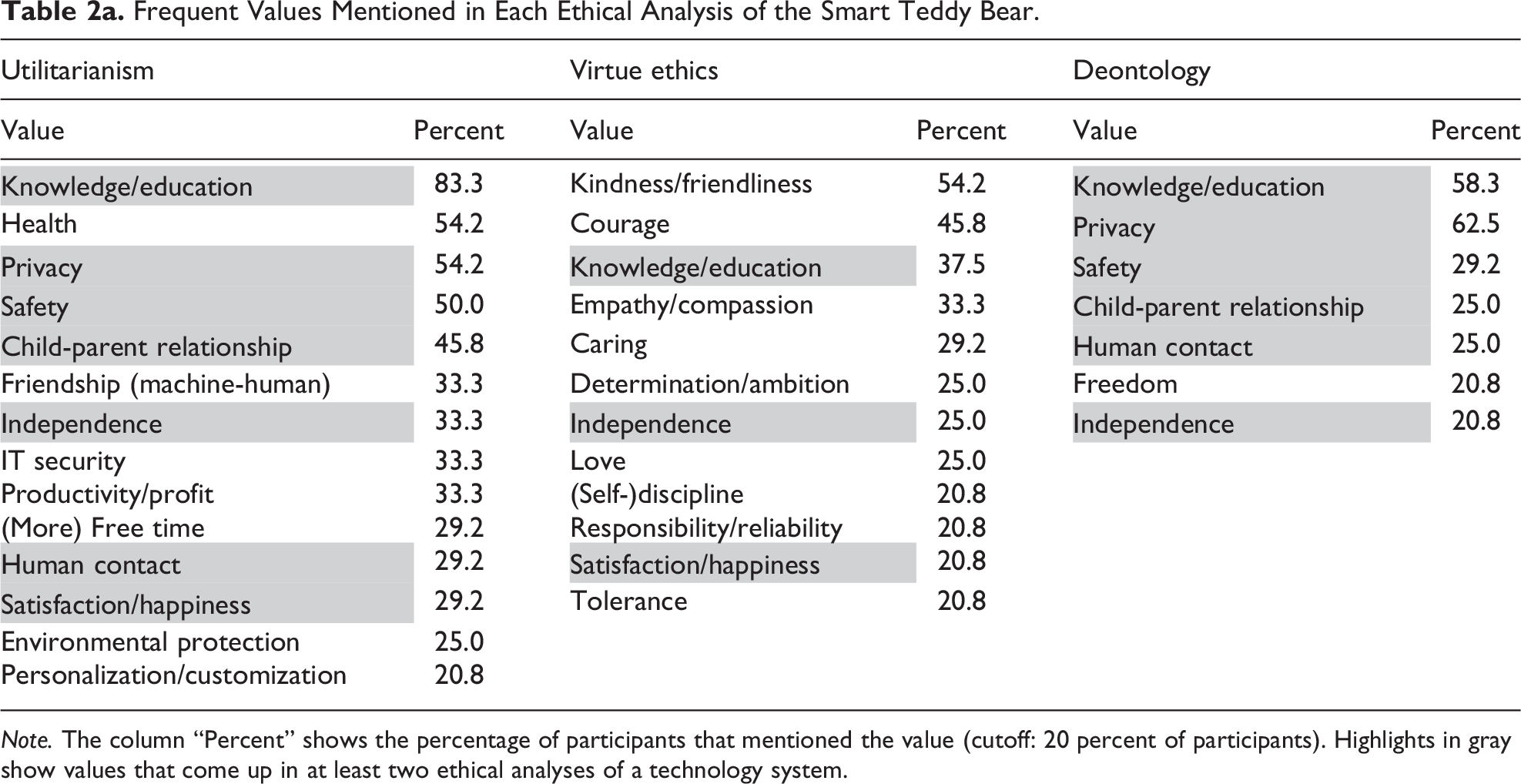

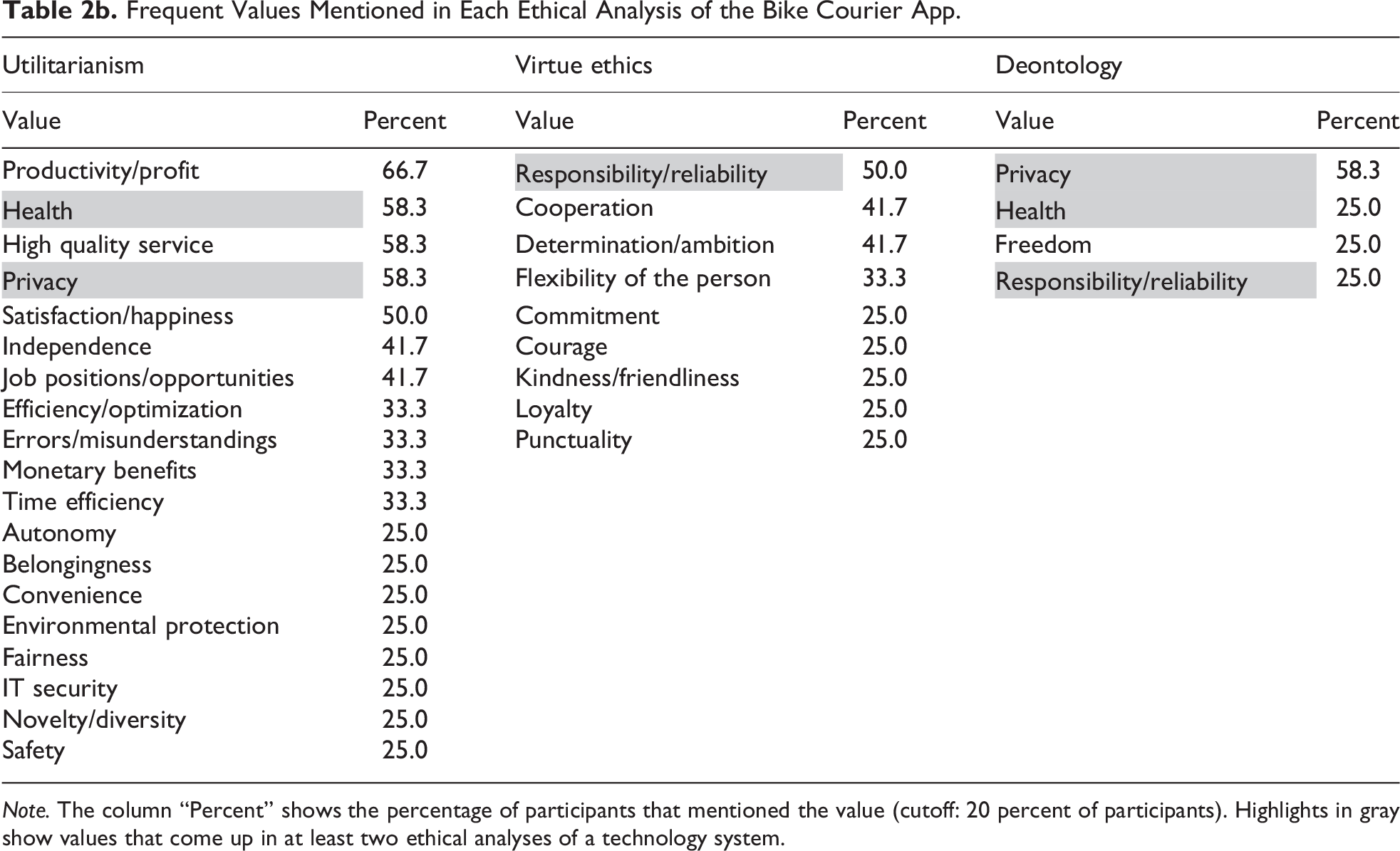

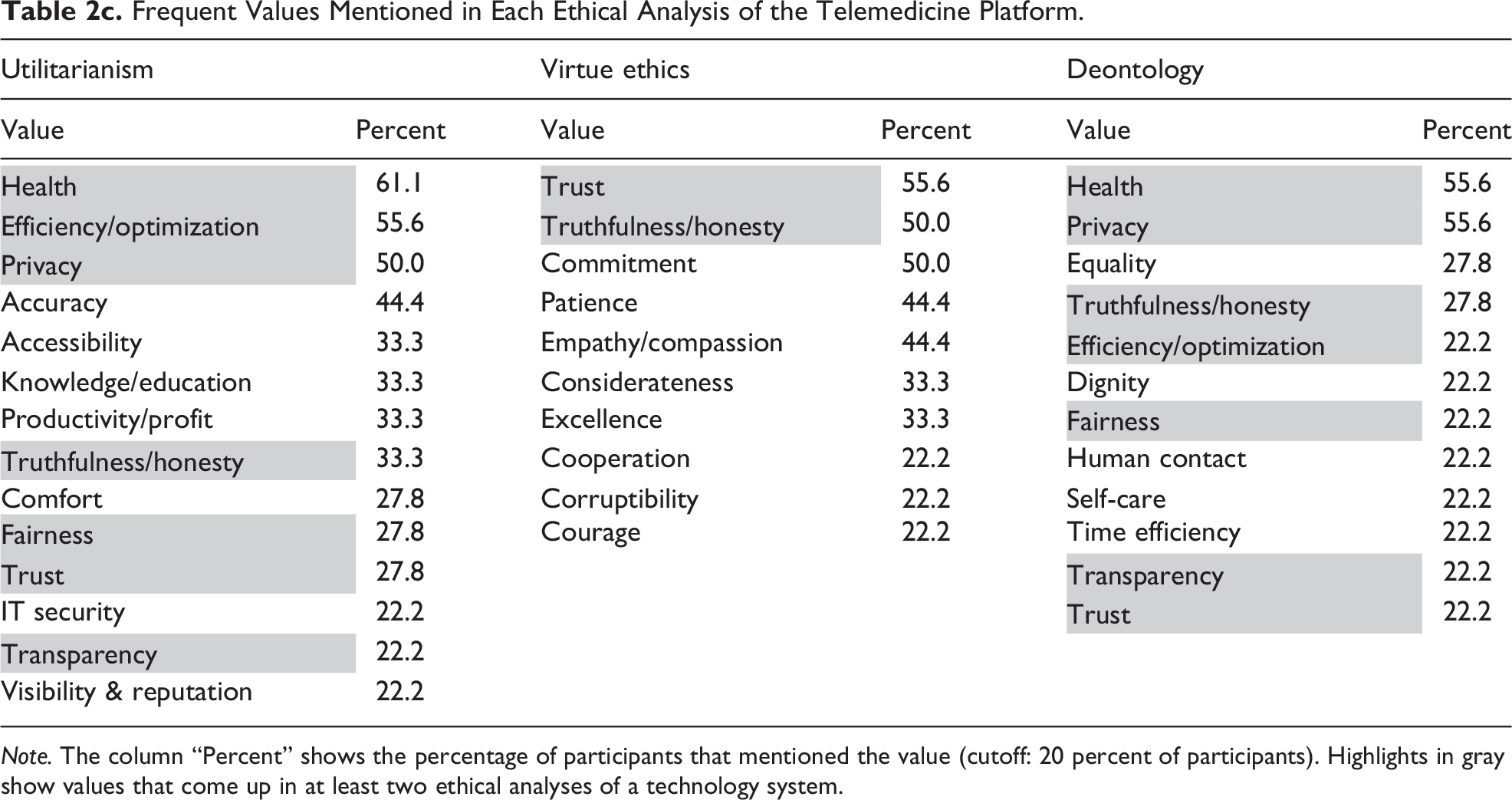

The pool of frequently elicited values showed a high sensitivity for the respective technology context, with very few overlaps across the three technologies. Still, several intrinsic values recurred frequently across all technology systems and ethical theories, for example, knowledge, privacy, and health. Tables 2a–c contain the details on frequent values found for each technology system and ethical perspective.

Frequent Values Mentioned in Each Ethical Analysis of the Smart Teddy Bear.

Note. The column “Percent” shows the percentage of participants that mentioned the value (cutoff: 20 percent of participants). Highlights in gray show values that come up in at least two ethical analyses of a technology system.

Frequent Values Mentioned in Each Ethical Analysis of the Bike Courier App.

Note. The column “Percent” shows the percentage of participants that mentioned the value (cutoff: 20 percent of participants). Highlights in gray show values that come up in at least two ethical analyses of a technology system.

Frequent Values Mentioned in Each Ethical Analysis of the Telemedicine Platform.

Note. The column “Percent” shows the percentage of participants that mentioned the value (cutoff: 20 percent of participants). Highlights in gray show values that come up in at least two ethical analyses of a technology system.
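The “Percent” column and the 20 percent cutoff described in the table notes can be sketched as follows. The mention data and the function name `frequent_values` are hypothetical, and treating the cutoff as inclusive (a value mentioned by exactly 20 percent of participants is kept) is an assumption, since the notes do not specify this.

```python
def frequent_values(mentions, n_participants, cutoff=20.0):
    """Return values mentioned by at least `cutoff` percent of participants.

    `mentions` maps a value name to the set of participant ids who named it.
    Assumption: the cutoff is inclusive.
    """
    result = {}
    for value, participants in mentions.items():
        percent = 100 * len(participants) / n_participants
        if percent >= cutoff:
            result[value] = round(percent, 1)
    # Sort by descending percentage, as in a frequency table.
    return dict(sorted(result.items(), key=lambda item: item[1], reverse=True))


# Hypothetical example with ten participants analyzing one technology.
example_mentions = {
    "health": {"P01", "P02"},
    "privacy": {"P01", "P02", "P03", "P04", "P05", "P06"},
    "dignity": {"P07"},
}
table = frequent_values(example_mentions, n_participants=10)
print(table)
```

Because each participant is counted once per value, the percentage reflects how widely a value was recognized rather than how many ideas referred to it.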

The three ethical value elicitation tasks differ in the type of values they emphasize. Utilitarianism seems to be good at capturing the central values that the respective technology is designed to foster: most participants mentioned knowledge/education for the smart teddy bear, productivity/profit for the bike courier app, and health for the telemedicine platform. At the same time, some values reappear as prominent values in the utilitarian analysis across all three technologies: health, privacy, and productivity/profit were mentioned by at least one-third of the participants. All three values are highly relevant from different technology design perspectives. Their seemingly universal relevance and easy accessibility in a technology design task motivate their classification as “mainstream values” (Spiekermann 2016). Privacy was mentioned by at least half of the participants for all three technologies. Due to numerous reported data breaches and rising public concerns about health and location information, it has become a mainstream area of research in the past years (Yun, Lee, and Kim 2019). Privacy is also represented as an ethical principle in more than half of the policy documents for ethical AI development that Jobin et al. (2019) reviewed. The value health holds a comparably prominent place among the frequently mentioned values from the utilitarian perspective for all three technologies. This is in line with recent empirical research on values in design, which reported health as the most important value for participants with different cultural backgrounds (Kheirandish et al. 2020). The third value that was mentioned across all three technologies in the utilitarian analysis is productivity/profit. We attribute this to the fact that our participants were students of economics and business, who probably have the company’s primary goal in the back of their minds when analyzing a technology system.
Taken together, these findings lead us to speculate that the value ideas elicited through the utilitarian perspective are prone to capturing obvious values that represent central themes of a technology, universally acknowledged principles, or timely discourses.

In the deontological analysis, participants re-embraced some values discovered in the utilitarian analysis. For example, more than half of the participants again mentioned the mainstream value privacy for the three technology systems. Value elicitation from a deontological perspective thus runs the risk of promoting duties mechanically by repeating values that everyone talks about (e.g., in the press), but not “out of duty,” as Kant (1785/2011) himself would have wanted it. That said, participants did not repeat all prominent values from the utilitarian analysis. For example, the instrumental economic value productivity/profit was not among the frequent values of the deontological analysis. What is more, the deontological analysis regularly led participants to identify intrinsic values not often mentioned in any of the other two ethical analyses, such as freedom, equality, or a fear of losing human contact. Thus, in spite of the potential pitfall to repeat easily available values, the deontological perspective still contributes a unique ethical perspective.

The virtue ethical perspective unveiled fewer mainstream values, probably because a technology’s character effects are rarely discussed in today’s public technology discourse. Virtues mentioned by at least half of the participants included the reliability of bike couriers that can be fostered through the constant usage of a time-sensitive app, the kindness of children that might be promoted through the smart teddy bear’s polite form of conversation, and the commitment of patients to their personal healthcare supported by a telemedicine platform that is easier to access than a physical practice. The virtue ethical analysis also inspired more nuanced reflections about virtue: the bike courier’s potential loss of a healthy ambition resulting from a lack of human interaction, the child’s loss of courage due to the ubiquitous presence of its digital companion, or a doctor’s increased considerateness due to extended video sessions with patients.

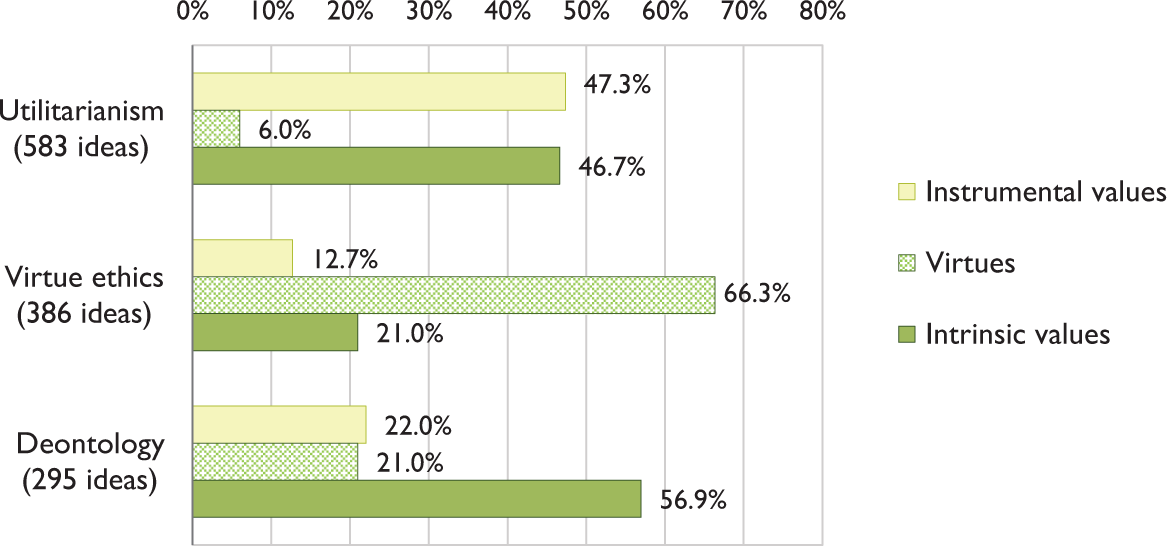

Elicited Intrinsic Values, Instrumental Values, and Virtues

Participants identified instrumental values, intrinsic values, and virtues. In line with the philosophical reasoning behind each of the ethical perspectives, the three category groups vary significantly in their prominence for the utilitarian, virtue ethical, and deontological analysis. Figure 1 shows an overview of the pool of ideas aggregated for all three technology systems.

Share of instrumental/intrinsic values and virtues among the pool of value ideas aggregated for the three technology systems.

The utilitarian perspective clearly elicited the greatest share of instrumental values (47.3 percent) compared to virtue ethics (12.7 percent) and deontology (22 percent). This relates well to general utilitarian reasoning because values such as efficiency and productivity cater to the classic utilitarian good. That said, Figure 1 shows that utilitarian reflections also led to the identification of many intrinsic value ideas (46.7 percent). This finding is prompted by our general utilitarian study setup, which invited participants to consider the consequences of everyone acting in the same manner in a specific situation instead of directing participants to focus only on their action in a specific situation (act utilitarianism) or on rules (rule utilitarianism). This might have inspired participants to think about values that are highly relevant for everyone and hence cater to intrinsic values such as health and knowledge/education. Mill’s call for maximizing the good for the greatest number of people also came up regularly in the value satisfaction/happiness, which was mentioned most often in the utilitarian analysis.

That said, deontological reflections elicited the highest share of intrinsic value ideas (56.9 percent), in line with the deontological focus on universal principles. Most importantly, participants named values that had not been captured in the other analyses, such as personal growth in the cases of the bike courier app and the smart teddy bear, or the development of society in the telemedicine case. In other words, the deontological focus seems to successfully inspire participants to think about values of higher rank and universal applicability during the value elicitation process. However, neither deontological nor utilitarian reasoning can reveal the spectrum of a technology’s implications for human character and virtuousness.

The virtue ethical perspective predictably inspired participants to come up with ideas linked to virtues (66.3 percent). A total of forty-four of the forty-seven virtues (93.6 percent) identified through the three ethical perspectives were uncovered by the virtue ethical analysis (ranging from 80.8 percent to 100 percent for the three technology systems). Participants’ reflections described how stakeholders’ virtuous character traits and habits could be affected by the technology (e.g., the bike courier’s increased flexibility and punctuality due to the use of an app) and also that virtues could affect how the technology plays out in a certain context (e.g., a doctor’s commitment, patience, or excellence when using the telemedicine platform). Furthermore, 21 percent of the ideas uncovered by the virtue ethical perspective related to intrinsic values important for individuals, such as trust, knowledge/education, or independence.
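The percentages reported in this subsection can be cross-checked with simple arithmetic. The sketch below uses only figures stated in the text; the virtue shares computed as remainders rest on the assumption that instrumental values, intrinsic values, and virtues together exhaust each pool of ideas, and the variable names are illustrative.

```python
# Figures reported in the text.
virtues_total = 47
virtues_found_by_virtue_ethics = 44

reported_shares = {  # percent of value ideas per ethical analysis
    "utilitarian": {"instrumental": 47.3, "intrinsic": 46.7},
    "virtue ethical": {"instrumental": 12.7, "intrinsic": 21.0},
    "deontological": {"instrumental": 22.0, "intrinsic": 56.9},
}

# Share of all identified virtues uncovered by the virtue ethical analysis.
coverage = round(100 * virtues_found_by_virtue_ethics / virtues_total, 1)

# Assumption: the three categories sum to 100 percent in each analysis,
# so the share of virtue ideas is the remainder.
implied_virtue_share = {
    analysis: round(100 - s["instrumental"] - s["intrinsic"], 1)
    for analysis, s in reported_shares.items()
}

print(coverage)
print(implied_virtue_share)
```

Under this assumption, the remainder for the virtue ethical analysis agrees with the reported 66.3 percent, while the remainders for the utilitarian and deontological analyses (6.0 and 21.1 percent) are derived here rather than reported.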

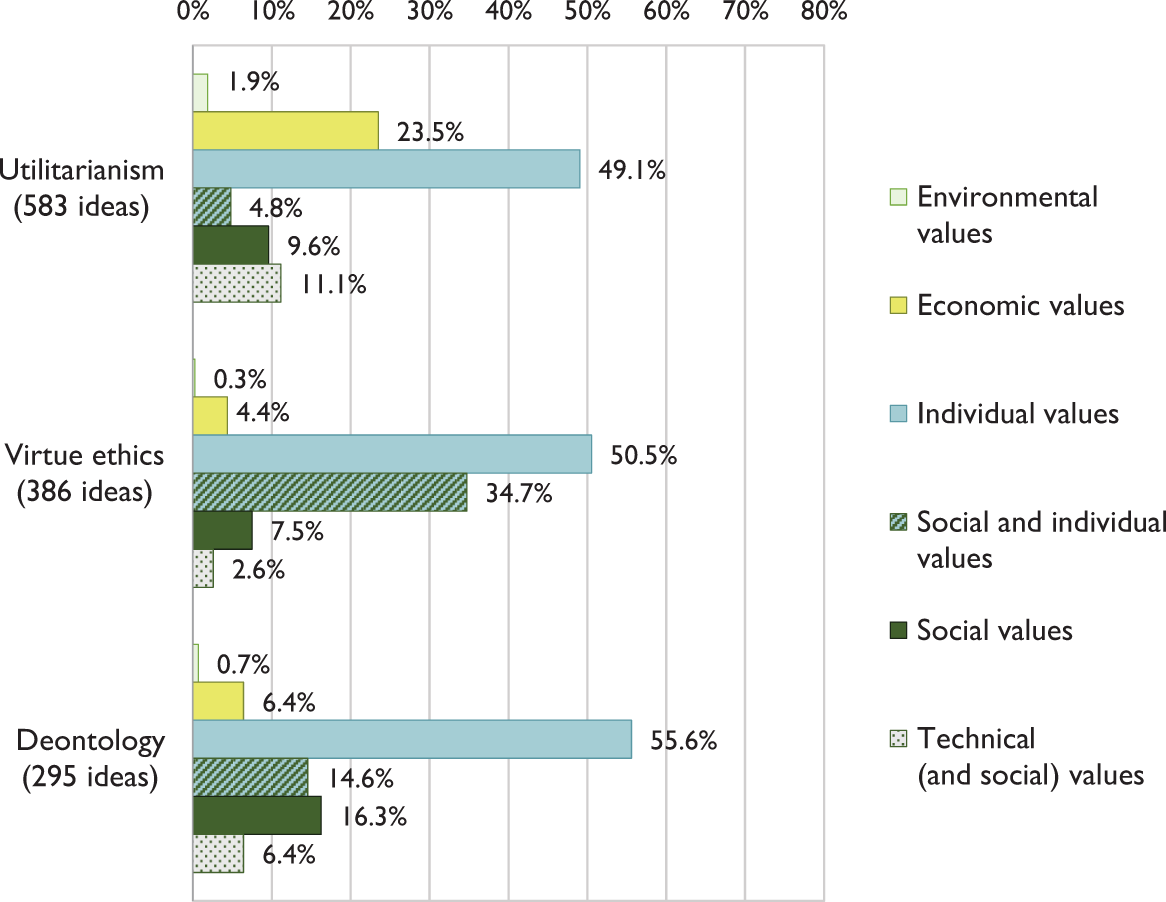

Sustainability Dimensions Addressed by the Values Elicited

The pool of value ideas aggregated for all three technology systems and categorized according to their underlying sustainability dimension (Figure 2) shows that in all three ethical perspectives around half (49.1 percent–55.6 percent) of the value ideas centered on individual values, that is, values like convenience or health and virtues like frugality or perseverance, all of which cater to an individual’s well-being. Compared to the identified social values, which together only covered 10.5 percent of ideas, this seems to hint at an overall bias toward individual development and well-being and a neglect of societal development, social welfare, and mutual care. Health, privacy, knowledge, satisfaction, safety, and independence cover almost half (49.1 percent) of all individual value ideas, and they represent intrinsic values that are morally relevant. Still, it is remarkable that participants’ ideas related to individual values almost five times as often as to social values. This finding resonates with MacIntyre’s criticism of individualistic moral thinking in modern societies and leads us to speculate whether current generations are at all capable of appreciating the moral difference between individualistic and social virtues and of perceiving the higher moral relevance of the social. MacIntyre (1981/2007) heavily criticized the predominant focus on the individual in moral questions, arguing that we should draw on classic moral philosophy to correct this flawed modern understanding of ethics and rediscover the importance of virtue as social practice.
While half of the ideas in the virtue ethical analysis showed the same individual focus as the other ethical perspectives, virtue ethics also inspired around twice as many socially related value implications as the utilitarian perspective (virtue ethical perspective: 163 and utilitarian perspective: eighty-four) as well as the highest number of ideas with a combined individual and social relevance, as discussed in more detail below.

Underlying sustainability dimensions in the pool of value ideas aggregated for all three technology systems.

A second important finding of our study is that all three ethical perspectives failed to inspire value ideas that relate to the natural environment. Only one environmental value was detected by the utilitarian analysis in the bike courier app, where a greener city was envisioned when bikes instead of cars delivered food. This is a meager result at a time when environmental discussions are common. Participants could have raised concerns about waste created when analog products are digitalized as in the case of the smart teddy bear or the CO2 emissions caused by AI implementations. It could be argued that the general focus of traditional ethical theories has never been so much on the natural environment as on human beings and their moral development (Russ 2019). This result is critical because it suggests that a combination of three ethical theories can still fail to see the most pressing value issue in a technology assessment study, that is, the depletion and destruction of natural resources.

Delving into further nuances foregrounded by the three ethical frameworks, the utilitarian perspective captured especially economic and technical values. The entanglement of utilitarian theories with economic history and concepts such as utility and maximization could explain why participants often thought of how the company could increase its productivity, efficiency, and reputation by producing the technology system that they assessed. Among the technical value ideas, IT security came up most often. Participants also mentioned values that spanned technical and social dimensions, such as the accessibility of the telemedicine platform for elderly and handicapped users. That said, the utilitarian analysis also uncovered some social values. For example, participants identified a potentially negative value implication of the bike courier app on human contact or the smart teddy bear on the child–parent relationship. To summarize, the utilitarian perspective led to the highest number of ideas, as well as a diverse value spectrum.

Our results show that even the direct reference to virtue ethical reasoning could not shift participants’ thinking toward prioritizing the social over the individual, which according to MacIntyre would be of higher moral relevance. Contrary to MacIntyre’s assumption, the virtue ethical analysis led participants to identify more virtues with a primary relevance for the individual (courage or patience) than virtues that are rather based on individuals interacting with their social environment (empathy or kindness). Still, the virtue ethical perspective clearly inspired participants to focus on the development of individuals, an aspect that is at the core of Aristotelian moral theory and is largely missing from the values identified in the utilitarian analysis. Consider, for instance, privacy and convenience, which benefit an individual but do not depend on the person’s moral development. Also, the virtue ethical perspective helped to elicit the highest share of ideas on virtues and values that combine individual and social relevance. Participants mentioned bike couriers’ kindness/friendliness, children learning to care for both the smart teddy bear and for other people, but also thought of doctors’ truthfulness/honesty and empathy/compassion toward patients they engaged through telemedicine. This fits with the Aristotelian view that virtues are bound to an individual but are still worthy for the social context, that is, the community. For example, a person who is kind or honest can neither develop nor express the underlying virtue without a social environment. For this reason, we see a special moral relevance in this combined value category.

Value elicitation from a deontological perspective inspired participants to re-emphasize previously mentioned technical (e.g., IT security) and economic values (e.g., efficiency/optimization) as personal maxims. For the bike courier app, participants emphasized the individual value of privacy. Such an emphasis could be interpreted as empirical support of Hannah Arendt’s critique that contemporary norms and principles are often misinterpreted as Kantian principles, which ignores the reciprocity and universality that the categorical imperative is based on. Still, we also see that deontology takes socially related values into account, emphasizing intrinsic values, such as equality and fairness or friendship and love in the case of the smart teddy bear. While we observe this only as a tendency, the underlying shift to socially relevant values inspired by an ethical perspective that emphasizes moral duty is noteworthy.

Implications for Value-oriented Research and Technology Design

Value Elicitation from Context Versus List-based Approaches

In this paper, we focus on the value elicitation phase at the beginning of the design process of an information technology system. Most likely, not every identified value that is potentially relevant can be considered in the subsequent design steps. Weighing values is a complex matter, and it has been suggested that a set of relevant negative values might lead to ruling out designing a technology, rather than weighing harms against benefits (Miller 2021). Several methods have been suggested for prioritizing values, such as value dams and flows (Miller et al. 2007) or, more recently, a best worst method (van de Kaa et al. 2020). While we do not advocate any specific method for the final selection of values, we do stress that it is important to start from a set of relevant and context-specific values for consideration later in the design process. Our results show that an ethics-based value elicitation process can help to identify a wide spectrum of relevant values that go far beyond value lists.

Utilitarian, virtue ethical, and deontological perspectives inspired participants to identify values that took into account the specific context and the affected stakeholders of each technology system. The three perspectives also helped elicit relevant instrumental values, intrinsic values, and virtues. The identified values were relevant for the areas of sustainability beyond the technical or economic dimension, although they neglected especially the environmental dimension, which was conspicuous by its almost complete absence.

Above we discussed the dangers inherent in the use of preconfigured value lists. We introduced the meta-review of Jobin, Ienca, and Vayena (2019), who identified eleven shared value themes in eighty-four reviewed policy documents. Table A1 in the Appendix shows the comparison of value codes that were included in the value themes by Jobin et al. and compares them to value codes from the present study. A direct comparison shows that the context-bound capturing of values with ethical theories that we tested covered all eleven value themes for every technology system, with two exceptions: environmental sustainability was not mentioned in the telemedicine platform and transparency did not come up in the analysis of the smart teddy bear. Still, the rich spectrum of values that participants discovered for every technology system goes far beyond the themes mentioned in the list, which becomes most apparent in the following three aspects.

First, Jobin, Ienca, and Vayena (2019) have reported that “it appears that issuers of guidelines are preoccupied with the moral obligation to prevent harm” (p. 396). Companies certainly need to anticipate potentially adverse effects that digital technologies and media can entail (Gimpel and Schmied 2019). However, the results of our study show that the consideration of beneficial effects opens up a much vaster space for a positive design. Ethics is not only about preventing harm but also about fostering what is good, true, and beautiful. For example, participants did not only identify potential dangers to the privacy and independence of a child playing with a smart teddy bear: they also thought of different ways that a child could learn from the toy as well as the advantages of a user-friendly and aesthetic design. For the telemedicine platform, participants saw a potential negative impact on patients’ truthfulness and problematic establishment of a trustful patient–doctor relationship, but also provided ideas on how to improve the efficiency of a doctor’s appointment (e.g., through an optimized scheduling of appointments, a digital anamnesis, and an improved visualization of a patient’s data) to further support an empathic relationship between doctors and their patients.

Second, a narrow focus on values promoted by timely lists of principles leaves out a technology’s impact on human virtues and vices. Above we discussed the reliability of bike couriers, the kindness of children playing with a smart teddy bear, patients’ commitment to their personal healthcare, and the bike courier’s potential loss of a healthy ambition. Value lists can be useful as a heuristic, especially in industrial settings with limited capacities (Borning and Muller 2012). Also, important values like the United Nations’ Sustainable Development Goals can certainly complement a bottom-up value identification process, as suggested by Umbrello and Van de Poel (2021). It has been argued that there are sacred or protected values that should never be compromised because of another value’s prioritization (Van de Poel 2009). Value lists could provide a checklist for such protected values, as in the case of value themes collected by Jobin, Ienca, and Vayena (2019) for the AI context. However, our findings show there is much to discover beyond the values currently promoted by lists.

Third, our results provide many examples of value nuances that represent a specific moral issue in the respective technology system context, which would most probably not be recognized from a mere top-down value perspective. For example, trust was identified as an important value when doctors are confronted with patients who ask for illegitimate sickness notes, when parents fear that the smart teddy bear unnoticeably records moments of their family life and leaks the data, or when a food-delivery company tracks bike couriers.

A Pluralist Ethical Foundation for the Value Elicitation Process

Our findings support previous claims that an ethics-based approach to values in technology needs a moment of ethical reflection and should not be constrained through the use of value lists. What is more, they can serve as an argument for a pluralist ethical foundation for values: if values can represent what is good and morally desirable, any perspective on what is good and morally desirable can contribute to analyzing social and ethical implications of a technology in terms of values. Taken together, our findings point to the advantages of combining ethical perspectives for a pluralist ethical basis for the value elicitation process, which identifies values that relate to potential consequences as much as the adherence to duties and virtues.

The results of our study suggest that utilitarianism offers a powerful perspective by inspiring numerous value ideas for a specific context and covers various value dimensions, although it does not consider the impact on the moral development of individuals within their social environment. The utilitarian perspective was also especially prone to emphasizing values that are central to a technology system as well as mainstream values that are prominently represented in current discourses. Our results show that a virtue ethical perspective crucially complements the utilitarian focus by emphasizing individual growth and personal development, acknowledging the intersection of individual and social values. Virtues have been suggested as the basis for a technology design method that tries to discover ways in which a technology supports or obstructs the cultivation of virtues (Reijers and Gordijn 2019). Thus, the integration of a virtue ethical perspective in value-oriented research and practice seems warranted, although we have identified an overall individualistic bias in our participants’ value ideas. We have shown that deontology, too, adds a unique ethical perspective. Value elicitation from a deontological perspective inspired most of the ideas that capture intrinsic values with broad social import, such as equality or freedom.

Two limitations of the empirical study design should be noted. First, our application of utilitarianism, deontology, and virtue ethics in the value elicitation process can only represent a selected and thus limited understanding of what is often referred to as the three big ethical theories of the Western canon. At the same time, we have operationalized the respective ethical theory by formulating questions that summarize the textbook understanding of every ethical theory in order to test a simplistic version. Of course, this leaves out other or more specific versions of these three ethical theories, as well as alternative philosophical and cultural approaches to ethics such as Confucianism, Buddhism, and so on. To complement our results, we would like to see future empirical research that investigates varieties of consequentialist, deontological, and virtue ethical theories and compares them to other theories of ethics as well. Second, we have investigated how different ethical theories inspire young IT professionals enrolled in university courses to identify relevant values. We do not know what these results would look like for senior IT professionals or other samples (e.g., ethicists, IT philosophers, engineers). While we have discovered a heavy focus on individual values across all three ethical analyses, future research could examine whether the same value elicitation exercise generates different results when conducted with samples from a collectivist culture or using another philosophical perspective.

Conclusion

In this paper, we argue that every theory of ethics contributes to the discussion of what is right and wrong in technology design. We investigate three normative theories and their potential to support an ethics-based value elicitation process. Our results show that the perspectives of utilitarianism, virtue ethics, and deontology lead to identifying a broad variety of context-specific values that cater to various sustainability dimensions and go beyond the value themes listed by public institutions and tech corporations. Moreover, we discovered that every ethical perspective contributes to the identification of different values in unique ways: utilitarianism inspires instrumental values with a special focus on economic and technical sustainability but also intrinsic values such as well-being. Virtue ethics complements this set of ideas with a focus on the affected stakeholders’ character and good behavior, leading to a set of diverse virtues for each context, which can contribute to individuals’ sustainable development within their social context. Deontology results in the highest proportion of intrinsic values and emphasizes important values and virtues mentioned in the foregoing analyses, with a focus on intrinsic values and value ideas that relate to social sustainability. These results illustrate that each theory of ethics serves a specific role in the identification of ethical issues and value potentials of a technology. However, we also find a focus on mainstream values and an overrepresentation of individual values, while environmental issues are neglected. Based on these findings, we conclude that the identification of relevant values should not be open to any theory of one’s preference. Rather, the theory guiding an ethics-based value elicitation process needs to be chosen consciously and carefully. 
Different theories of ethics encourage unique perspectives on a specific technology system, which together can provide a pluralist ethical basis for values in technology design.

Appendix

Comparison of Value Codes with the Value Themes from Jobin et al. (2019).

| Theme | Included Value Codes (Jobin et al. 2019) | Included Codes (present study) |
| --- | --- | --- |
| Transparency | Transparency, explainability, explicability, understandability, interpretability, communication, disclosure, and showing | Transparency |
| Justice and fairness | Justice, fairness, consistency, inclusion, equality, equity, (non-) bias, (non-) discrimination, diversity, plurality, accessibility, reversibility, remedy, redress, challenge, access, and distribution | Fairness, accuracy, equality, legal compliance, sense of justice, impartiality, accessibility, and corruptibility |
| Nonmaleficence | Nonmaleficence, security, safety, harm, protection, precaution, prevention, integrity (bodily or mental), and nonsubversion | IT security, safety, health, mental, psychological health, and integrity |
| Responsibility | Responsibility, accountability, liability, and acting with integrity | Responsibility and reliability and reliability and robustness |
| Privacy | Privacy, personal, or private information | Privacy |
| Beneficence | Benefits, beneficence, well-being, peace, social good, and common good | Satisfaction, happiness, contentment, monetary benefits, better world, and development of society |
| Freedom and autonomy | Freedom, autonomy, consent, choice, self-determination, liberty, and empowerment | Freedom, autonomy, control, and independence |
| Trust | Trust | Trust; trust in technology |
| Sustainability | Sustainability, environment (nature), energy, and resources (energy) | Environmental protection and durability |
| Dignity | Dignity | Dignity |
| Solidarity | Solidarity, social security, and cohesion | Solidarity, social/legal security, and work capacities |

Authors’ Note

Kathrin Bednar is the recipient of a DOC Fellowship of the Austrian Academy of Sciences at the Institute for Information Systems and Society, Vienna University of Economics and Business.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.
