Abstract

Artificial intelligence (AI) is again attracting significant attention across all areas of social life. One important sphere of focus is education; many policy makers across the globe view lifelong learning as an essential means to prepare society for an “AI future” and look to AI as a way to “deliver” learning opportunities to meet these needs. AI is a complex social, cultural, and material artifact that is understood and constructed by different stakeholders in varied ways, and these differences have significant social and educational implications that need to be explored. Through analysis of thirty-four in-depth interviews with stakeholders from academia, commerce, and policy, alongside document analysis, we draw on the social construction of technology (SCOT) to illuminate the diverse understandings, perceptions of, and practices around AI. We find three different technological frames emerging from the three social groups and argue that commercial sector practices wield most power. We propose that greater awareness of the differing technical frames, more interactions among a wider set of relevant social groups, and a stronger focus on the kinds of educational outcomes society seeks are needed in order to design AI for learning in ways that facilitate a democratic education for all.

Introduction

Since its inception in the 1950s, artificial intelligence (AI) and “the possibility of programming an electronic computer to behave intelligently” (Buchanan 2005, 56) have captured the attention of many groups of people, including those from academia, government, and business. AI has been subject to cycles of attention and indifference over the past seven decades. At the time of writing, AI is experiencing a resurgence of interest across all these groups. AI is broadly understood as intelligence exhibited by machines (Russell and Norvig 2002) and covers a range of techniques including natural language processing, neural networks and machine learning. Yet, like all technologies, AI is not one thing: it is a complex sociotechnical artifact that needs to be understood as a phenomenon constructed through complex social processes (Pinch and Bijker 1984; Bijker, Hughes, and Pinch 1987; Bijker 1995). As Bijker (2010) notes, “technology comprises, first, artifacts and technical systems, second the knowledge about these and, third, the practices of handling these artifacts and systems” (p. 64). Indeed, this complex system can be seen in the emergence of networks of AI institutes and initiatives across the public and private sector worldwide (e.g., Accenture 2016; OECD 2019).

In parallel with the revitalized attention toward AI, there is a renewed focus on the purpose of education due to the “fourth industrial revolution” (Schwab 2016). Lifelong learning—especially ideas around reskilling for a twenty-first-century workforce—is attracting increasing attention from academia, policy makers, and the media (Faure et al. 1972; Delors et al. 1996; Jarvis 2014; Tuckett 2017), and these calls are growing as governments around the world begin to prepare society for a world where AI becomes a feature of most aspects of everyday life. Numerous national and international initiatives have been announced in the past five years, including the Adult Education 100 campaign in the UK, which undertakes “…research on the history and contribution of adult education…and engage with communities about the impact of lifelong learning” (Allen-Kinross 2019), and the SkillsFuture initiative in Singapore (2015), which brought lifelong learning back into mainstream policy in an attempt to reskill existing workers (Sung and Freebody 2017).

As part of this growing attention, there has been a resurgence of interest in some quarters in the use of AI in Education (AIED) as a tool to facilitate learning across the lifecourse (Roll and Wylie 2016). Examples include the use of AI to facilitate a skill that requires repetition (e.g., language learning) and the use of recommender systems to help adults identify content to learn about a particular topic. Indeed, AI in lifelong learning is seen as one of the “AI Grand Challenges in Education” (Woolf et al. 2013). There is, therefore, an interesting intersection between the call for educational reform (in this case lifelong learning to support the “fourth industrial revolution”) and the use of advances in technology (in this case AI) to achieve that process (Ball 2018; Saltman 2016). In this paper, we examine this intersection to explore the emerging field of AI in the context of lifelong learning.

As AI for lifelong learning experiences a surge of interest, with an increasing number of stakeholders engaged in related initiatives, AI is becoming an increasingly contested arena. As Pinch and Bijker (1984, 421) note, “…artifacts are culturally constructed and interpreted…[and] there is flexibility in how people think of, or interpret, artifacts.” This “interpretative flexibility,” where technological artifacts have different meanings for different people, also has significant implications for “design flexibility,” how AI “artifacts are designed” (Williams and Edge 1996; MacKenzie and Wajcman 1999). The process of design is not fixed; the design of technology is an “open process that can produce different outcomes depending on the social circumstances of development” (Klein and Kleinman 2002, 29). To date, there is very little work that has utilized insights from the social construction of technology (SCOT) to illuminate the diverse perceptions of, and practices around, AI, and its implications for design, particularly in the context of learning. Given the importance of education in shaping the nature of the society in which we live (2013), and with questions of what AI means for society at the fore (OECD 2019), this is a significant oversight that we seek to address in this paper.

The Framing of AI and Lifelong Learning

There are three central stakeholders, or “relevant social groups” (Pinch and Bijker 1984; Bijker 1995, 45-46), interested in the facilitation of lifelong learning by AI: academia, industry, and government. Based on past phases of interest in technology and education, it is likely that these three groups have diverging interpretations of AI in the context of lifelong learning. As Pinch and Bijker (1984) note, “the sociocultural and political situation of a social group shapes its norms and values, which in turn influence the meaning given to an artifact” (p. 428). Thus, these three social groups are likely to attribute different meanings to AI within the context of lifelong learning; that is, they construct and interpret AI differently. As Bijker explains:

Artifacts are, so to speak, described through the eyes of the members of relevant social groups. The interactions within and among relevant social groups constitute the different artifacts, some of which may be hidden within the same “thing.” In that case, the “interpretative flexibility” of that “thing” is revealed by tracing the different meanings attributed to it by the various different relevant social groups (Bijker 1995, 252).

The aim of this paper, then, is to explore the differences in definitions of what AI “is” for academia, industry, and government in the context of education and how this in turn may shape how AI is designed and “used” in lifelong learning, to provide a means with which to examine and critically assess the likely social and educational implications.

Method

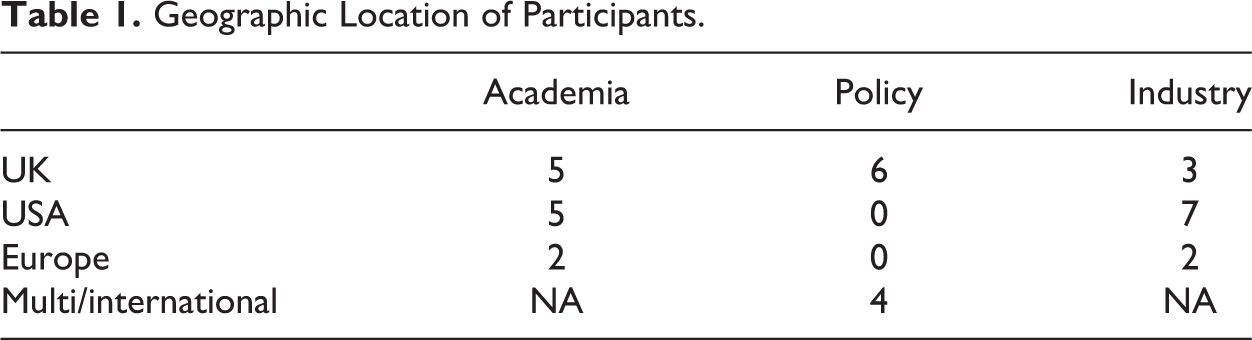

We selected a methodological approach that ensured we could explore the interpretative flexibility of AI for the relevant stakeholders in line with the use of SCOT. Thus, we chose semi-structured interviews to understand the perceptions of stakeholders from academia, industry, and government with regard to AI in the context of lifelong learning. In total, thirty-four interviews were conducted with stakeholders across these three groups.

Geographic Location of Participants.

The interviews explored participants’ reflections on AI in the context of lifelong learning, with a focus on investigating what they thought AI offered them and their sector within the field of education, how they would define AI, and how they felt the area would develop in future. The interviews were semi-structured and were deliberately quite open in style due to the emerging nature of the topic and the diversity of experiences that were captured within the study (Kvale and Brinkman 2014). Example questions included: How has the learning and AI landscape changed over the past two decades? What in your view are the most promising uses of AI to support lifelong learning and to what extent have these been realized? Who is tending to lead efforts in AI and lifelong learning? Time was taken at the outset of each interview to discuss the blurred boundaries between AI and other forms of “EdTech” and the varied definitions of lifelong learning. During the interviews, we focused primarily, though not exclusively, on examples of adult, workplace, and informal learning. This is because the policy focus is quite different, there has already been quite significant attention on AI in schools (e.g., Luckin et al. 2016), and designing AI becomes particularly challenging outside the boundaries of a specific aspect of a curriculum (Woolf et al. 2013). The interviews took place via video conference or in person and were audio recorded. These recordings were then transcribed and analyzed through multiple rounds of thematic coding to examine the diverging interpretations of AI in the context of lifelong learning and to understand how the construction of AI by different stakeholder groups influenced perceptions and practices about the use of AI in lifelong learning. This was achieved through an iterative coding process to refine the themes, visualize the data, and test alternative explanations (Dey 2003; Saldaña 2015).
As part of the research and analysis process, we also examined relevant policy documents, articles, and commercial websites where appropriate to extend our understanding of each group and to test out propositions that emerged from the interview data.

Findings

Constructions of AI: Three Social Groups

From our analysis, we found limited connections between stakeholders in their understandings and conceptualizations of what AI “is,” as well as differences in motivations for engagement, across academia, industry, and government. The three frames we identified can be described as AI as methodology, AI as legend, and AI as rhetoric.

AI as methodology: Academia

In academia, there is a long-established field of “AI in Education” known as AIED. With the resurgence of interest in AI, academics from the field of AIED were also experiencing more attention, though many were wary that this would only be a passing phase:

The field of AI and education has been going for a long time, and it is…it has had to weather the various ups and downs of AI, so AI in the 70s and 80s was kind of regarded as the great new thing, and then it kind of got into the doldrums. And now it is flavor of the month again. (A2)

AIED has been the subject of academic research for more than 30 years. The field investigates learning wherever it occurs, in traditional classrooms or in workplaces, in order to support formal education as well as lifelong learning. It brings together AI, which is itself interdisciplinary, and the learning sciences (education, psychology, neuroscience, linguistics, sociology, and anthropology) to promote the development of adaptive learning environments and other AIED tools that are flexible, inclusive, personalized, engaging, and effective.

[We should] deploy the AI in some really sound, robust, pedagogically valuable learning design so the student’s engaging in an activity that’s valuable in and of itself. (A9)

It’s the oldest question on the planet in education, which is, “What kinds of learners are we trying to create here and how are we going to assess them?” If I want someone who is going to ace a high stakes test then I’ll write an AI that does that. (A4)

There are lots of pedagogy out there that is profoundly important, and only one type of pedagogy has been picked up by the AIED community and that, as I say, is this instructionalist, “spoon-feeding” approach. (A7)

A powerful tool to open up what is sometimes called the “black box of learning,” giving us deeper, and more fine-grained understandings of how learning actually happens…. This knowledge about the world is represented in so called “models.” There are three key models at the heart of AIED: the pedagogical model, the domain model, and the learner model…. In addition to the learner, pedagogical, and domain models, AIED researchers have also developed models that represent the social, emotional, and meta-cognitive aspects of learning. This allows AIED systems to accommodate the full range of factors that influence learning (Luckin et al. 2016, 20-21).

What AI has tried to bring in is, I think, the next level of adaptivity based on trying to build cognitive models of the learner, in terms of their knowledge, their misconceptions, and to provide appropriate content or appropriate other sorts of resources. It’s using AI techniques…and other sorts of deep learning techniques to try and adapt to the material, either, based on a cognitive model, or based on some sort of predictive model. (A3)

I do think the challenge there is learning how to be a self-learning scientist…. But, that’s not going to be easy and would maybe take a lot longer than it would to build systems towards particular, widespread important lifelong learning skills. (A5)

As the job market becomes more dynamic [with] the demand for particular learning goals…. I think the place that it would be very useful to see that personalization activity is where the learner can specify a high-level objective, then a sequencing of courses can be put together that is informed by the learner’s profile. (A11)

And if [a consumer doesn’t] know what [the edtech product] should do, then they are not so quick to figure out that it is not really giving them that, or they get disappointed and say, “Oh yeah, AI can’t do anything.” (A1)

In summary, for those working in AIED, it is a well-established field, with a strong identity and history. AI for the AIED academic community is constructed as a “methodology” to better understand learning and to achieve practical impact on learning and education outcomes. The precise ways in which this should be achieved, and the philosophy behind them, are the subject of debate and disagreement within the community—with most, but not all, tending to favor more individual cognitivist and constructivist approaches—but the underlying construction of AI is consistent.

AI as legend: Industry/commercial

The commercial stakeholders we interviewed did not focus on the pedagogical aspects of AI in lifelong learning or view AI as methodology. Rather, they discussed AI as if it were “legend”; that is, a popular (and old) story, echoing the AI hype cycles of the past few decades, but a story that contained some “truth,” in that advances in technology meant AI could be utilized to support some of their product goals.

Many participants were open about the importance of AI as a “selling point,” situated within an AI summer, which helped draw potential consumers toward their lifelong learning products:

I think vendors are starting to talk about AI and to begin to use it, you know, partly for marketing reasons, obviously partly we talk about it for marketing reasons too…. I think it’s a little bit less clear, I mean definitionally, what people call AI. (C1)

AI and personalized learning right now is really hot. (C6)

AI is used as a marketing term versus what it’s actually able to do. (C10)

I think that even if it’s hype…there was a basis for that…the results that we see are actually coming from the algorithmic improvements and access to more data. (C5)

Commercial intelligent tutoring systems (ITS) tended to be different in kind to those developed by academics in that the content was not tailored to the individual but more to groups of learners who behaved similarly; likewise, recommender systems did not require the careful modeling of a learner or a domain in the ways described in the section above. In general, the AI that was embedded in such designs was relatively “simplistic,” often akin to “narrow” AI. AI was deliberately designed in ways that sacrificed much of the nuance of systems built in academic contexts but ensured the development of a reliable product, while keeping costs down:

As a business we decided from the beginning, we wanted to build a system that would be good enough to charge for…we can’t afford to run the company for free. We needed to build something that was valuable enough and worked well enough that students are paying for it…. We achieved that by reducing the scope of what we were trying to achieve, and then building stepping-stones towards the larger goals, doing something that we considered intelligent by today’s standards. (C2)

There’s always the need to sustain a business model, so when your work is based on funding then the companies’ research always needs to be backed by some commercial offerings, and that poses some limitations on what things could be done in corporate. (C5)

The budget and the amount of time it takes to make a good AI based assessment does not fit into the amount of budget and time we have…. So, the thing is, what we will then release is, you know, is not going to be cutting edge. (C3)

Economics are an incredibly strong force in shaping what these tools turn out to do and how we make use of them. (C8)

From an industry perspective, it’s really about picking things that a machine learning model can optimize to work on, and then hopefully finding an intersection between that and something that someone will pay money for. (C7)

This, in turn, strongly suggests that the only AI systems for learning that are developed are those which can make a profit (i.e., targeted at the workplace or where money can be made from users in some way) and thus for learning goals that are required by those audiences. For the most part, this leads to reliable systems that facilitate the learning of narrow tasks and content recommendations, supporting “just-in-time” learning, typically for work-based purposes.

Similarly, and also in contrast to the academics’ views, the framing of “success” for commercial AI and lifelong learning systems for industry stakeholders was about the performance of the system, not the learning outcomes per se:

So our performance metrics are a good measure of how well we’re doing, and ultimately revenues are the best version, because if we produced a product that is good, that people appreciate, they’ll pay for it. (C2)

The challenge back to a [big tech] company is to say, is that the sort of models that you’re producing, the deep learning models, are they sufficient for driving really effective teaching and learning, and I would argue they’re not at the moment. (A3)

Industry sometimes gets distracted by what they think is marketable instead of building on the methodologies. So, there’s a lot of noise around personalization and activity because they think that’s marketable. (A11)

The folks coming with the big AI skills don’t have any understanding of learning and teaching. (C3)

There’s also huge naivety, I think. There are these, you know, organizations of individuals who come in thinking that education is simple. (A3)

What I see a lot in EdTech—most especially in those subsets of EdTech which are pretty heavy on artificial intelligence or machine learning—is really the propensity to ignore, so to speak, the learning science and the educational sociology in favor of computer science that regards the problem no differently than it would any other objective, like ad targeting or ecommerce optimization…. What it doesn’t do is do anything to help bridge the gap between academia and industry. (C7)

In summary then, the construction of AI as “legend” by industry is ultimately based upon leveraging the current hype to build what is technically feasible within the bounds of maintaining profit and ensuring reliability. Companies building AI products for lifelong learning must be able to scale, and at the same time please the “learner-consumer”; yet there are potential risks in tipping over AI from legend to myth if their emerging construction of AI endures.

AI as rhetoric: Policy

Our findings suggest a complex and partial engagement between policy and AI in the context of lifelong learning. There is, as noted in the Introduction, a clear commitment to the need for lifelong learning in societies where AI could lead to automation and de-skilling (Bughin, Lund, and Hazan 2018). These concerns are raised in policy documents across the globe and echoed by the interviewees:

Not only in the most advanced economies, but…in all of the parts of the globe…there is this push now to re-skill, up-skill;…[for] investing in lifelong education or lifelong learning…or at least this is what is being said. (P3)

I think the likelihood of AI super-empowering those who are highly skilled and leaving others further behind is [great]. That depends very much on the policy framework that we put around it. (P4)

In relation to AI in particular, governments across the globe are investing in AI as a way to secure future economic success (Accenture 2016). Yet the use of AI to support learning opportunities for adults is, in practical terms, relatively nascent in many countries: There isn’t much policy around it. (P2)

Indeed, the majority of the impetus to develop AI for lifelong learning appears to be coming from outside the policy sector:

From what I can see, nearly all the oomph is coming from people who are not in government, basically…The development of AI in education, I don’t think it’s very high up the government agenda. (P1)

Technology is not a silver bullet…. I think you know absolutely we should be harnessing what technology has to offer, but fundamentally if the Adult Education Project remains as it is, and if the government initiative continues to be so uncoordinated, then technology is only going to be able to go so far, and then no further, because of those constraining elements. (P6)

In the health sector we spend about 16, 17 times as much on research, innovation, and technology than in the education sector…. We have no idea how we deal with big data in education. We have no idea how you strike the balance between learning analytics and data confidentiality and privacy. None of these questions are actually ones that we have seriously talked about. (P4)

I think my biggest worry is that we will not be accurate enough in the way that we build ethical frameworks and principles that embrace what education is about. (A9)

Taken together, we argue that policy makers therefore construct AI as a “rhetorical tool” in the context of lifelong learning, emphasizing the perceived power of AI to upskill and reskill the workforce for a new era in the “fourth industrial revolution.” Although it is important to pay attention to different national contexts, we suggest that in Britain, at least, this policy rhetoric is often much stronger than the reality. It seems that policy levers such as investment and regulation are less well utilized to shape the construction of AI in lifelong learning and its implementation across different stakeholder groups.

Disconnected Stakeholder Constructions of AI in Lifelong Learning

AI in the context of lifelong learning is understood to be and is

Participants we spoke to were well aware of a widespread elasticity in AI definitions: “…it depends how you define AI” (P3); “It’s a suitcase term, but because we have one word for it, we think that it means it’s just one thing” (C2). They were also aware of how the varied social, political, and economic contexts in which they operated led to significantly different AI frames by each social group.

These differing constructions of AI, and the practices around the development and use of AI for lifelong learning, have significant implications for design and ultimately lifelong learning experiences and opportunities. When asked, many participants we spoke to saw value in trying to “reconcile” these different framings in order to positively shape AI within the field of lifelong learning. These primarily related to issues of expertise:

I do a lot to actually encourage a deeper dialogue between policy and the industry around these questions. At least about the framework conditions, these issues around how do we encourage data flows, inter-operator compatibility? How do we deal with issues around on the one hand we want to learn from big data, on the other hand we want to protect individual rights? These are the kind of strategic questions that we almost have to address before we will see transformation of the sector. (P4)

I think we need to be better at making teams who can communicate well, from the educator, “This is the problem I’m trying to solve in terms of teaching and learning” and from the AI person, “Hey, here’s how we might be able to do that, you know, kind of AI make that happen.” (C3)

I’m not sure what kind of impact we could have with a phenomenon like this without having ties to the industry. (A10)

Academic research has been leading the way for some time. I think where the balance is shifting to some degree, is that in the commercial world we now have access to learner data which is providing insights about learning that are kind of harder for folks in academics to have access to. So, I would like to see more in terms of collaboration between researchers and commercial partners who have access to data about learning and are willing to make it available for research purposes. (C4)

[It is] absolutely critical to get education institutions and others all co-operating…. We have to have conversations in which everyone thinks about what we should do about it and not just the experts, if you see what I mean, and that’s an issue around sort of democratic citizenship really, isn’t it? (P5)

Some of the big corporations have been very active in actually engaging with decision makers at a country level, both in developed and developing countries, really to push, to convince them that technology was the answer to many of the problems we were facing in education, both in terms of access, but also in terms of quality…. The influence of the industry sector in education reform processes in relation to the use of the technology often resulted I would say in really wasting money for countries…. It really calls for a stronger capacity within the governments to engage in a meaningful and constructive way. That’s not to say that industry doesn’t have a contribution to make, because they are the ones who develop the technology, and we can really see how best it can be used to some extent. (P3)

The factors are socioeconomic, I think, how we ensure that everybody can have, and pay for, the education that they want. If they’re choosing to learn as an adult, that they aren’t financially constrained, and it’s got to be made possible for them. I think that’s a governmental level problem. I don’t think that’s the companies to solve. I think that’s for society to solve, that we make sure that everybody is economically above a certain baseline. (C2)

The reason it’s commercially there is because somebody wants to pay for it, so if they wanted to pay for other things…then I think we’d see other things. (C12)

Controversies in AI and Lifelong Learning

From our analyses, we see that the commercial sector actively leverages the current “hype” around AI to their benefit. They build reliable systems focused on specific learning contexts to facilitate the learning of narrow tasks and content recommendations that are valued by the largest market possible. This tends to translate to designs for lifelong learning that are focused on work-based needs. This is a different framing to academic stakeholders from the AIED community who seek to utilize AI to understand and facilitate learning across contexts and life stages. Yet, very few designs created by academics make their way into everyday educational and learning practice due both to their complexity and lack of connections with industry and practice (Baker 2016; Luckin et al. 2016). For policy makers, AI is constructed as a rhetorical tool, providing little in the way of specific practical investments or regulations—instead being used as a rhetorical device to signal to the world the “modern” education system in their country, largely deferring responsibility for setting the lifelong learning agenda to industry and the commercial sector (Biesta 2006; Jarvis 2007).

In some quarters, there are moves to develop infrastructure to support collaboration among these different groups to stabilize AI and lifelong learning (see, e.g., Luckin et al. 2016; Roll and Wylie 2016). Indeed, from the analysis above, there are varying incentives to work together, for example, for the commercial sector to share their data with academia to enhance knowledge and understanding, for academics working with the commercial sector to increase impact, and for policy makers to encourage (or indeed regulate where necessary) the commercial sector to ensure responsible codes of practice that commit to high ethical standards (Müller-Eiselt 2018). However, while this may lead to the development and dissemination of a wider array of AI-enabled lifelong learning opportunities, it does not necessarily ensure that the lifelong learning agenda is shaped in a desirable way. Instead, such moves are likely to lead to a design of AI in lifelong learning that is relatively narrow and that risks marginalizing its social, personal, and democratic benefits to individuals and wider society.

This is in part because of the power the commercial sector is beginning to wield over others through their constructions of technology (Kline and Pinch 1996). As research on technical frames suggests (Bijker 1995), it is likely that a particular framing around AI and lifelong learning will become the most powerful, even with closer collaborations among the different groups. We believe this frame will be one of “legend” that benefits the commercial sector the most. This trend has been shown in analyses of education reform in school contexts, where philanthropists have lobbied policy makers to develop neoliberal initiatives that support the corporatization of education (Ball 2012; Reckhow and Tompkins-Stange 2018; Saltman 2016), and companies have similarly lobbied policy makers in order to create new ways to make future profit for themselves by generating demand for products that they design (Ball 2018; Saltman 2016).

These opportunities are often in relation to the digitalization of different aspects of education, from decisions about curriculum and pedagogy to the education and professional development of teachers and assessment (Ball 2018; Williamson 2017). Such activities lead to fundamental changes in the experience of education and “what it means to teach and to learn” (Ball 2018, 587). They tend to automate and standardize knowledge, curriculum, and pedagogy in ways that are highly problematic and often work against the creation of a democratic school system for all (Saltman 2016). Academics specializing in AIED, who are concerned most with the process of (typically individualized) learning rather than with what the goals of lifelong learning should be, together with a policy system that leaves educational decisions largely to the market (Biesta 2006), are likely to exacerbate this problem. The result is a focus on content that tends not to challenge the status quo and is aimed at primarily economic concerns, to which only the privileged have access (Apple 2012).

In applying SCOT, there is no way of determining that one frame of interpretation is “better” than others (Hacking 1983). We suggest that a more expansive conceptualization of lifelong learning (with or without AI) is essential for the overall “health” of a society (Biesta 2006; Field 2006) and crucially ensures the design of lifelong learning strategies to support alternative desirable futures for us all (Facer 2011; Levitas 2013). So how might a more expansive conceptualization of lifelong learning and co-construction of AI in this context, to support the overall “health” of a society, be achieved?

Destabilizing and Reconfiguring AI and Lifelong Learning

“Technology development is a process in which multiple groups, each embodying a specific interpretation of an artifact, negotiate over its design, with different social groups seeing and constructing quite different objects” (Klein and Kleinman 2002, 29-30). Thus, if AI and lifelong learning is being constructed in a way that may be problematic, then it is possible to change this through the engagement of different groups. As Bijker (2010) argues: “If it is accepted that a variety of relevant social groups are involved in the social construction of technologies and that the construction processes continue through all phases of an artifact’s life cycle, it makes sense to extend the set of groups involved in political deliberation about technological choices” (p. 72).

As Bijker (2010) proposes, one important way to do this is to expand the range of stakeholders engaged in debates about AI and lifelong learning. For example, this could include academics from critical education or more sociological disciplines, who contest the more personalized frames of learning often seen in AIED and argue for a more democratic education for all (e.g., Apple 2012; Biesta 2015; Leaton Gray and Kucirkova 2018; Williamson 2019); adults who are using the Internet to learn; and teachers and educators. This would help make the values of AI and lifelong learning more explicit, for the good of society as opposed to just the good of learning theory advances, the market, or the next election.

During a time of change, democratic education becomes more crucial. Often, in research on education and technology, democratic education is reduced to the use of digital means to open up education to a wider audience, through its reach and its possibilities for access to no- or low-cost education. This narrow view of democratic education is rightly critiqued (Funes and Mackness 2018; Selwyn 2016). What we are proposing here is to design AI and lifelong learning systems to support a “thick” democracy, in direct opposition to the “thin” democracy promoted by more neoliberal agendas (Apple 2012; see also Biesta 2019). As Martin (2003, 566) argues, it is “to expand our notions of what it means to be active citizens in a democratic society.” Community-based education with a strong participatory element is one important way to help move away from the narrow economic and individual focus of lifelong learning and is essential to restore everyday democracy, which has seen a decline as people have limited opportunities to engage meaningfully in political and civic life (Biesta 2005).

This is not about providing more training to get people to participate in society. Instead, “adult learning can make community action into a reflexive process, one that, among other things, helps participants to see beyond their immediate interests and to understand that their empowerment has to be connected with the empowerment of others” (Biesta 2005, 701-02). It can also shape and change wider society (Apple 2012). Democratic education only works if society also enables people to act in the world to make a difference (Biesta 2007). Thus, policy makers must take specific steps in this direction and make investments accordingly, and we need to decide on the values we are promoting. As Biesta argues, education should not just be about how to achieve learning in efficient and effective ways. We may learn something efficiently and effectively, but that learning may not fit with our values, and “concrete aims always need to be considered in light of the more encompassing purpose of education” (Biesta 2019, 4). There is no reason why AI and lifelong learning could not be reframed and redesigned in this light.

By paying more attention to the democratic values and purposes of education, alongside the needs of the economy, we can reshape AI frames in the context of lifelong learning, and constructions of AI and lifelong learning can be destabilized and reconfigured. Doing so enables us to find ways to “pluralize progress” and to “enable greater social agency, political equality and economic equity in knowledge, innovation and development [through] ‘broadening out’ social appraisal and ‘opening up’ a more vibrant politics of choice and diversifying across a variety of disparate pathways” (Stirling 2009, 28).

Conclusion

As Bijker (2010) notes: “When a technological system grows by investments in capital, technology and people, it builds up technological momentum—it seems to acquire a certain directional development and speed. As a result of all of those investments, it becomes more and more difficult to change its course and the system starts to have increasing impact on its environment” (p. 70).

Acknowledgments

We would like to thank Dr. Huw Davies for his contribution to this article and the wider project and the two anonymous reviewers who provided very helpful feedback on earlier versions of this paper.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Google.org Charitable Giving Fund of Tides Foundation (Grant Number TFR17-01696).