Abstract

Background:

Despite intense national focus on the positive impact of tutoring, there is little empirical evidence on how best to train tutors. This is particularly pertinent given that tutors could serve as a potential pipeline into teaching.

Objective:

This mixed-methods study explores the implementation of modules about developing knowledge for high-quality instructional materials for tutor professional development.

Research Design:

The modules were distributed and completed within four tutoring-based sites in the United States that are part of a national network for aspiring teachers. Quantitatively, we examined pre-/post-assessment data to probe changes in tutor knowledge and career plans. Qualitatively, we interviewed tutors and their program trainers about their module experience.

Conclusions:

Results indicate growth in tutor knowledge as well as participant recommendations for specific improvements to technology and module engagement to enhance curricular experience. Findings suggest the value and limitations of tutor training within this model of training intervention.

Rigorous evaluations establish high-impact tutoring as one of the most effective educational interventions (Dietrichson et al., 2017; Fryer & Howard-Noveck, 2020; Nickow et al., 2020). Spurred by concerns about academic recovery in the wake of the pandemic, tutoring programs have proliferated across the United States (Arundel, 2022). The federal government and state education agencies have invested substantial resources into building and expanding these programs, with some educational researchers proposing that these types of programs become standard throughout districts (Kraft & Falken, 2021; White House Council of Economic Advisors, 2023).

Despite the rapid proliferation of tutoring programs, little is known about how best to train the tutors themselves. Although there is evidence suggesting an increased benefit of having educators (as opposed to noneducators) serve as tutors (Nickow et al., 2020), there is little else that explains how tutors should be prepared to implement their work. Further, despite tutoring being deeply instructional work, little empirical evidence has identified how individuals develop the pedagogical decision-making required to effectively accelerate student learning. At present, most tutor training is either focused on specific instructional materials—often developed by the curriculum providers themselves—or designed for noneducators and centered on compliance or establishing tutor rapport (Gill, 1984; White et al., 2022).

Building a strong tutoring corps is particularly salient given that tutoring has been identified (and could be used) as a formative experience for entering the teaching workforce (Bartanen et al., 2024; Bartanen & Kwok, 2023; Kraft & Falken, 2021; Kwok et al., 2022). The inequitable supply of tutors is a significant barrier to ensuring that the benefits of tutoring reach all students, particularly Black and Latinx students, and students experiencing poverty (National Student Support Accelerator [NSSA], 2021). This problem could be addressed by mobilizing aspiring teachers as high-impact tutors, while also helping to address teacher staffing challenges faced by the United States (Wall, 2023).

We took a concurrent mixed-methods approach to explore one tutor training intervention implemented in four tutoring sites across the United States, representing 211 tutors. We focused specifically on two related constructs of the intervention: the content and engagement of the tutor training modules, and the experience and perceptions of the tutors. The content and engagement were examined through pre- and post-assessments of the tutor training across four diverse programs to identify changes in tutor beliefs, career plans, and knowledge. Then, we analyzed a subsample of interviews with tutors and training leads to learn about intervention accessibility, pedagogical development, and training considerations. Through these analyses, we contribute to a thin evidence base on tutor training and curricular changes for tutor development. The research questions guiding our study are:

1. How did tutor knowledge, feelings of preparedness, and career plans change over the course of the tutor training program?

a. To what extent was there variation by tutor demographics?

2. What were participant experiences with the tutor training program?

b. To what extent was there variation by type of program?

Literature Review

The Rise of Tutoring Programs

Although there is some variation in describing tutoring, a predominant definition is “one-on-one or small-group human (i.e., non-computer) instruction aimed at supplementing classroom-based education” (Nickow et al., 2020). Tutoring incorporates learning interactions that focus on an area of curriculum content that needs improvement or strengthening (Roe & Vukelich, 2001; Woolley & Hay, 2007), and although it involves aspects of pedagogy, tutoring is not teaching and should not dilute or replace teaching (Topping, 1998). Tutoring can also be implemented through technology (Robinson & Loeb, 2020) but still requires interactions between a tutor and tutee.

Evidence has overwhelmingly affirmed the positive effects of tutoring on student learning (Cortes et al., 2024; Guryan et al., 2023; Loeb et al., 2023; Ritter et al., 2009). This robust evidence base has prompted persistent calls to expand tutoring services across U.S. public schools (Kraft & Falken, 2021) to reduce COVID-19 learning loss (Kraft & Goldstein, 2020; White House Council of Economic Advisors, 2023). For instance, in a meta-analysis of rigorous causal evidence, Nickow et al. (2020) found a consistent and large pooled effect size of 0.37 standard deviations for tutoring, though there was meaningful variation across individual studies. Recently, the White House Council of Economic Advisors (2023) released analyses of the effects of an Illinois statewide tutoring effort, finding a significant jump in the National Assessment of Educational Progress test scores relative to other states. Importantly, positive effects were strongest in programs that were conducted by professionals (e.g., teachers), in earlier grades, and within school.

Heterogeneity in who carries out the tutoring has often been explained in terms of tutor skill, role, or experience. That is, programs vary in who serves as a tutor; tutors include older students, educators, and parents. However, effects differ by these roles. For example, studies that examine differences among tutors have identified that professional educators (e.g., teachers) have larger effects than those who have no education background (e.g., parents, nonprofessionals; Lopez et al., 2020; Robinson et al., 2021). Further, evidence indicates that trained tutors are more effective than untrained tutors (Fresko & Chen, 1989). This emphasis on tutor training changes the discussion from differentiating

Tutor Training

Evidence has established that preparing tutors is valuable for student learning (Fountas & Pinnell, 1996; Roe & Vukelich, 2001; Waltz, 2019; Woolley & Hay, 2007) and has shown some positive effects on the tutors themselves (Cohen et al., 1982; Topping, 1998). For instance, Skelley et al. (2022) identified that tutor training (debriefing sessions and reflections) increases tutors’ confidence. Specifically, these tutors were initially hesitant and insecure about their role and responsibility as a tutor, but after training, there were significant increases in their self-perception once they realized that they could learn from their peer tutors. However, the training was individually designed and provided to a sample of only eight tutors for elementary literacy.

Despite the efficacy of and demand for tutoring (Hampel & Stickler, 2005), there are some challenges to establishing tutoring programs from a structural standpoint. White et al. (2022) found through a systematic literature review that successful tutoring implementation often hinges on the support of key school leaders who have the power to direct the use of school funding, space, and time. Additionally, the authors found that tutoring setting and schedule, tutor recruitment and training, and curriculum identification influence whether students can access tutoring services, and the quality of the instruction provided.

In terms of what high-quality tutor training should entail, some have pointed to key characteristics. First, ongoing training and support throughout the tutoring duration are more beneficial than a one-time introductory training session (Hill, 2016; Skelley et al., 2022). Second, training should include endorsement from the tutors, meaning that tutors should find value in the training as applied to their work (Hampel & Stickler, 2005; Stickler & Hampel, 2007); however, this is less assured, given that evidence was from individual case studies and only within online contexts. Finally, training must be specific to tutors’ work and include strategies for potential challenges that may arise (Topping, 1998).

Perhaps the most comprehensive suggestions come from the National Student Support Accelerator (NSSA), a leading source for tutoring research in the United States. Specifically, NSSA offers recommendations for tutor preparation, including tutor expectations, content proficiency, and supporting students with learning and thinking differences. In fact, this is the only framework that we have encountered that offers explicit guidance on how to train and support tutors. However, these recommendations are built from a limited empirical base, and NSSA does not provide any citations that support these recommendations for training tutors. Taken together, the NSSA recommendations and the aforementioned studies largely draw on weak data from hyper-specific contexts and offer little empirical evidence for how to effectively train tutors. That is, programs often draw from anecdotal best practices, as opposed to research-based strategies on how to train tutors. To successfully realize tutoring as both a scalable, high-quality educational intervention and a pipeline to the teaching profession, we need to establish best practices around the training and support of new tutors. To contribute to this emerging evidence base, our study examines one model to prepare tutors for their work.

Study Background

Deans for Impact Intervention

Deans for Impact (DFI) is a national nonprofit organization committed to ensuring that every child is taught by a well-prepared teacher. DFI works toward this vision through policy advocacy and programmatic efforts to ensure that pathways into teaching are affordable, practice based, and focused on high-quality instruction. Guided by principles of learning science, DFI aims to help teachers create rigorous, equitable and inclusive classrooms so that all children thrive. In the fall of 2023, DFI launched the Aspiring Teachers as Tutors Network, a national collaborative of 28 tutoring initiatives across 15 states that are working to increase the number of aspiring teachers serving as tutors for K–12 students and to improve preservice teachers’ instructional skills through field experiences and training.

As part of the Aspiring Teachers as Tutors Network, DFI sought to examine the efficacy of tutor training for aspiring teachers and the conditions associated with implementing the training across diverse tutoring programs. DFI designed a series of training modules for aspiring teachers serving as tutors that were adapted from those originally developed in partnership with educator preparation programs as part of a high-quality instructional materials network. The training focused on key components of instructional decision-making and was designed to be embedded into educator preparation programs, enabling programs to integrate tutoring experiences within existing coursework required to complete a preparation program, or into ongoing training provided by tutoring providers outside of teacher certification programs.

DFI offered a series of four asynchronous training modules to prepare tutors on elements of effective high-impact tutoring. Each of the modules follows a research-based sequence that allows tutors to understand, analyze, and apply the concepts covered in the materials. Specifically, tutors (1) build background knowledge by exploring a key concept or practice, (2) explore strategies related to the practice, grounded in authentic instructional artifacts, and (3) complete a culminating activity in which they respond to a real-world example.

The four modules included approximately 24 hours of content in K–8 mathematics, representing the knowledge and skills requisite for developing successful high-impact tutors. The modules are intended to be completed in sequence and in full, and prepare aspiring teachers and tutors to do the following (see Appendix A for a more detailed description of each module):

Participating Program Context

Four sites partnered with DFI to complete the modules for this pilot study. A convenience sample was drawn from the DFI network of programs to identify sites that wanted to leverage more robust training, had an adequate sample size, and were committed to piloting the modules in sequence and in full with their candidates and tutors. The sites are anonymized but briefly described here (full details are in Appendix B):

Aspiring teachers at Site A, a teacher preparation program in Ohio, served as high-impact tutors in spring 2023 and completed the modules as part of a required math methods course for their teacher preparation program.

Aspiring teachers at Site B, a community college-approved bachelor’s educator preparation program in Texas, completed the modules as part of their elementary math methods course during a semester-long work-based learning experience.

Aspiring teachers serving as tutors and other volunteer tutors through Site C, a statewide tutoring program on the East Coast, completed the modules as part of their mandated tutor training.

Undergraduate students at Site D completed the modules as part of a community-based component of their educational psychology course while they served as tutors through a collegewide initiative at a private, not-for-profit institution in the Midwest.

Each program was provided with links to the four asynchronous training modules. Training was provided to faculty and staff on best practices for incentives, support, accountability structures, sequencing, and pacing of the modules to ensure completion. DFI staff also provided ongoing support via email communication, phone, and Zoom, including an initial onboarding and subsequent training session around module content. Tutors completed all training modules during the spring 2023 semester.

Methods

Data Collection

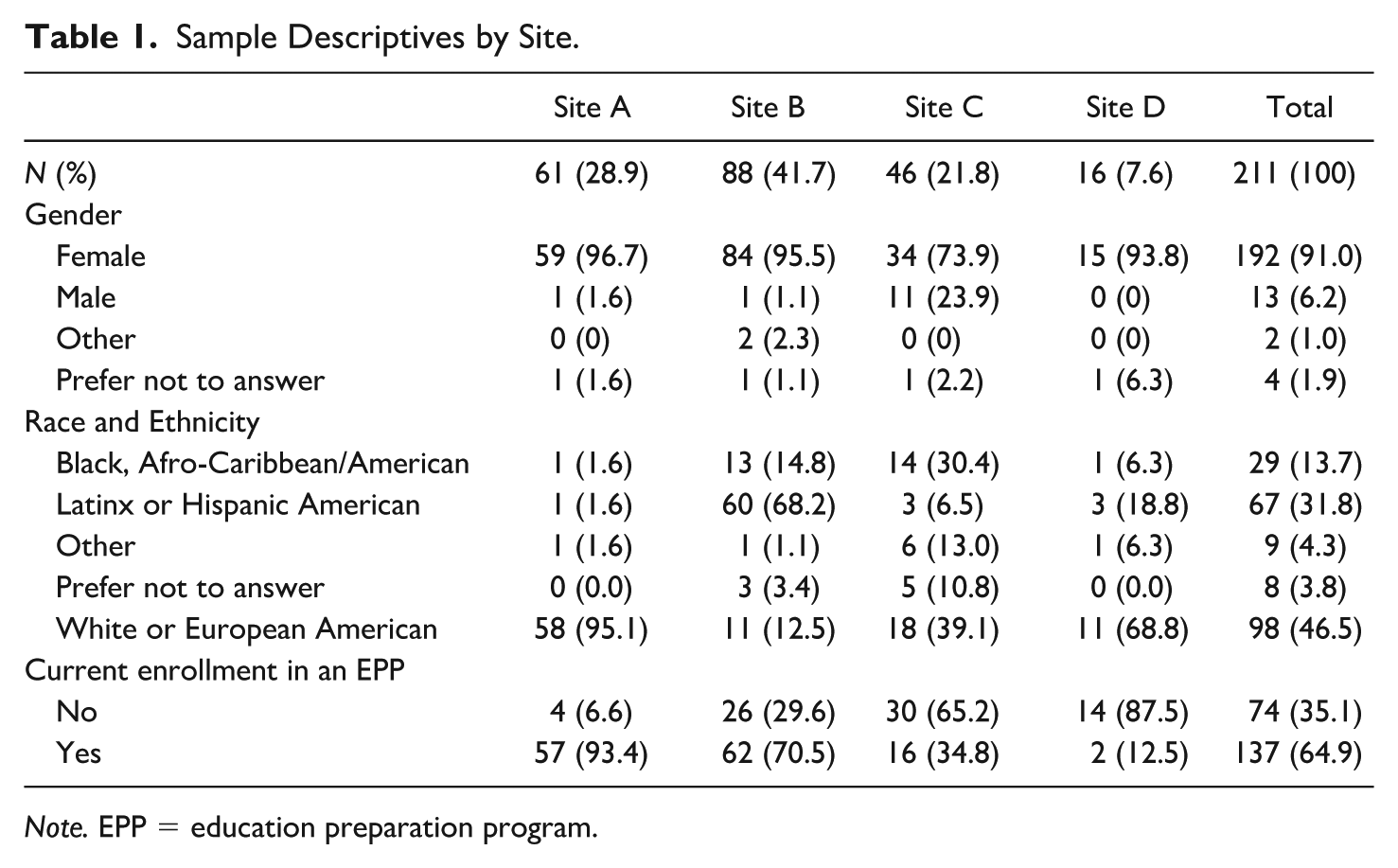

DFI staff collected two main types of data throughout the pilot study. A pre-assessment and post-assessment were electronically administered to all participating tutors at each of the four sites; descriptive statistics of participants are shown in Table 1. In total, 211 participants completed at least one of the assessments (with 166, or 77.7%, completing both); the overwhelming majority were female (91.0%), and nearly half were white (46.5%). However, only 65% of individuals were currently enrolled in an educator preparation program, indicating the variety in programs and career aspirations.

Sample Descriptives by Site.

The pre-assessment was completed before starting the modules, and the post-assessment upon completion of the modules. These assessments captured changes in tutor knowledge and skills, inquiring about participant understanding and application of the core knowledge and skills necessary to make effective instructional decisions that support all learners. The items were predominantly detailed case study illustrations, in which participants considered how to approach various tutoring scenarios. Although the pre- and post-assessment used the same questions, tutors did not receive a grade or any feedback on right or wrong answers. Further, sufficient time passed (i.e., a semester), such that the change in the percentage of correct answers between pre and post was likely not simply an artifact of increased familiarity with the assessment. After the assessment, DFI also captured descriptions of changes in tutor mindset and beliefs about (1) students, (2) the work of teaching mathematics, (3) tutoring, and (4) standards-aligned instruction and high-quality instructional materials. Finally, the assessments queried changes in tutors’ preparedness to teach mathematics and plans to stay in teaching.

DFI also requested interviews with all training leads and at least one tutor per program. Training leads were individuals, often program administrators or faculty members, responsible for setting up, implementing, and monitoring progress of the tutoring modules. A 30-minute semi-structured interview was conducted using an interview protocol designed to identify participant experiences related to module implementation. Following are descriptions of interviewed participants by program; all were given pseudonyms.

Site A

Angela was a third-year tutor majoring in inclusive pre-K–5 education. The training lead was Dr. Thomas, an associate professor who taught math education and STEAM education courses and served as the inclusive pre-K–5 co-coordinator.

Site B

Dr. Shannon served as the associate dean of educator certification and an instructor and course designer for elementary math methods. She supported other faculty who were also teaching that course. Unfortunately, there was no tutor interview at this site.

Site C

Site C offered multiple participants per group to interview. As such, more comprehensive descriptions are available for these participants.

Tutors

Jason was a third-year tutor at Site C. He had just graduated from a local college with a degree in elementary education with history and was also accepted to a master's program in elementary educational technology. Jasmin had finished one year with Site C, which marked a career change for her. She earned her BA in English, and her previous experience was with the public library as a youth services programmer. Lyndsey was a recent graduate and taught fourth-grade autistic support. She was teaching and tutoring at the same time and expressed that it was a lot for her to manage.

Trainers

Ms. Ashley was the chief operating officer and brought the pilot to Site C to streamline or improve the training that her tutors and coaches received. She said, “[I want to] figure out how to use these modules to increase and measure the competency our staff have around using high-quality instructional materials in math.” Ms. Chelsea led the training for the tutors and the instructional coaches at the beginning of the program. She also maintained support for the instructional coaches throughout the program to help them learn how to logistically support their tutors. This was Ms. Jessica’s 17th year in education, where she taught seventh-grade English language arts, served as the middle school coordinator and instructional coach, and was an adjunct for a local university, which connected her to Site C.

Site D

Katie was a sophomore majoring in psychology with a Spanish supplementary and an education minor. She was enrolled in a community-based learning class through the minor and connected with Site D, where she was placed with second- and third-graders to provide literacy support. The trainer was Dr. Kelly, a postdoctoral researcher at the university. She taught the course where the training modules were embedded within the psychology department and offered through the education minor. In this course, students learned science and cognitive principles and then applied these principles in tutoring.

Data Analysis

We took a concurrent mixed-methods approach (Tashakkori & Teddlie, 2021) to allow for separate but related analyses of different types of data: quantitative analysis of the pre-/post-assessments, and qualitative analysis of participant interviews.

Quantitatively, we examined changes on the pre-/post-assessment, and we considered variation across key tutor demographics and other relevant characteristics, focusing on change in tutors’ measured skills, career plans, and knowledge. In addition to reporting the raw pre–post changes, we also estimated least squares regression models that predicted post-assessment measures as a function of tutor demographics, controlling for the relevant pre-assessment measure. Because we examined pre–post changes, our analytic sample was restricted to the 166 tutors who responded to both assessments. Appendix Table C1 shows a comparison of characteristics between included and excluded tutors. Excluded tutors were much more likely to be from Site C and to report their race as something other than Black, Latino, or White. We report results from two model specifications: with and without site fixed effects. The inclusion of site fixed effects accounts for the nonrandom sorting of tutors, given that sites differed substantially in terms of their demographic composition. However, we could not rule out any other program or campus-level experiences that may have accounted for changes in participant assessment.
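As a sketch, the regression specification described above can be written as follows. The notation is illustrative and assumed here rather than taken from the original analysis: $i$ indexes tutors, $s(i)$ is the tutor's site, $X_i$ collects the demographic indicators (gender, race and ethnicity), and $\delta_{s(i)}$ is the site fixed effect, which is omitted in the specification without site fixed effects.

```latex
Y_{i}^{\text{post}} = \alpha + \beta \, Y_{i}^{\text{pre}} + X_{i}'\gamma + \delta_{s(i)} + \varepsilon_{i}
```

Under this notation, the coefficients in $\gamma$ capture demographic differences in the post-assessment measure conditional on the pre-assessment measure; with $\delta_{s(i)}$ included, they are identified from within-site comparisons only.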

One open-response item queried tutor knowledge of the elements of high-quality instructional materials (HQIM). HQIM are predesigned, vetted pedagogical materials (e.g., lesson plans) that are provided to instructors to reduce preparation time and are increasingly being used throughout the United States. We used the four elements explicitly stated in the modules and categorized responses by each element. We allowed for the idea to be conveyed, as opposed to requiring direct use of the terms; in practice, though, participants mostly used the actual terms in their responses.
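The categorization step can be illustrated with a minimal Python sketch. It is a simplification, not the coding procedure used in the study: responses were coded by idea, whereas this sketch only matches keywords. The four element names are those reported in the Results; the keyword lists, function names, and sample responses are hypothetical.

```python
# Hypothetical keyword stems for each of the four HQIM elements.
# A real coding pass would also credit paraphrases that convey the idea.
ELEMENT_KEYWORDS = {
    "aligned to standards": ("standard", "aligned", "alignment"),
    "coherence": ("coheren",),
    "focus": ("focus",),
    "rigor": ("rigor",),
}


def code_response(text):
    """Return the set of HQIM elements mentioned in one open response."""
    lowered = text.lower()
    return {
        element
        for element, keywords in ELEMENT_KEYWORDS.items()
        if any(keyword in lowered for keyword in keywords)
    }


def share_by_element(responses):
    """Return the proportion of responses that mention each element."""
    coded = [code_response(response) for response in responses]
    return {
        element: sum(element in found for found in coded) / len(coded)
        for element in ELEMENT_KEYWORDS
    }


# Hypothetical responses, for illustration only.
sample = ["Rigor and focus", "Coherent, focused lessons", "Engaging activities"]
shares = share_by_element(sample)
```

Running `share_by_element` separately on pre- and post-assessment responses would give the kind of per-element percentages compared across assessments.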

Qualitatively, we transcribed the interview data verbatim and imported the transcripts into the qualitative software, Dedoose. From there, we engaged in constant comparative analysis (Glaser & Strauss, 2017) to identify main patterns of tutors’ experiences of the tutor training intervention. We began by open coding within each program, which allowed us to highlight individual programmatic differences. After identifying patterns within program, we conducted axial coding to identify key patterns across tutoring programs for characteristics of module implementation, suggestions for improvement for the tutoring training, and major areas of value for trainers and tutors.

Results

RQ1: How did tutor knowledge, feelings of preparedness, and career plans change throughout implementation?

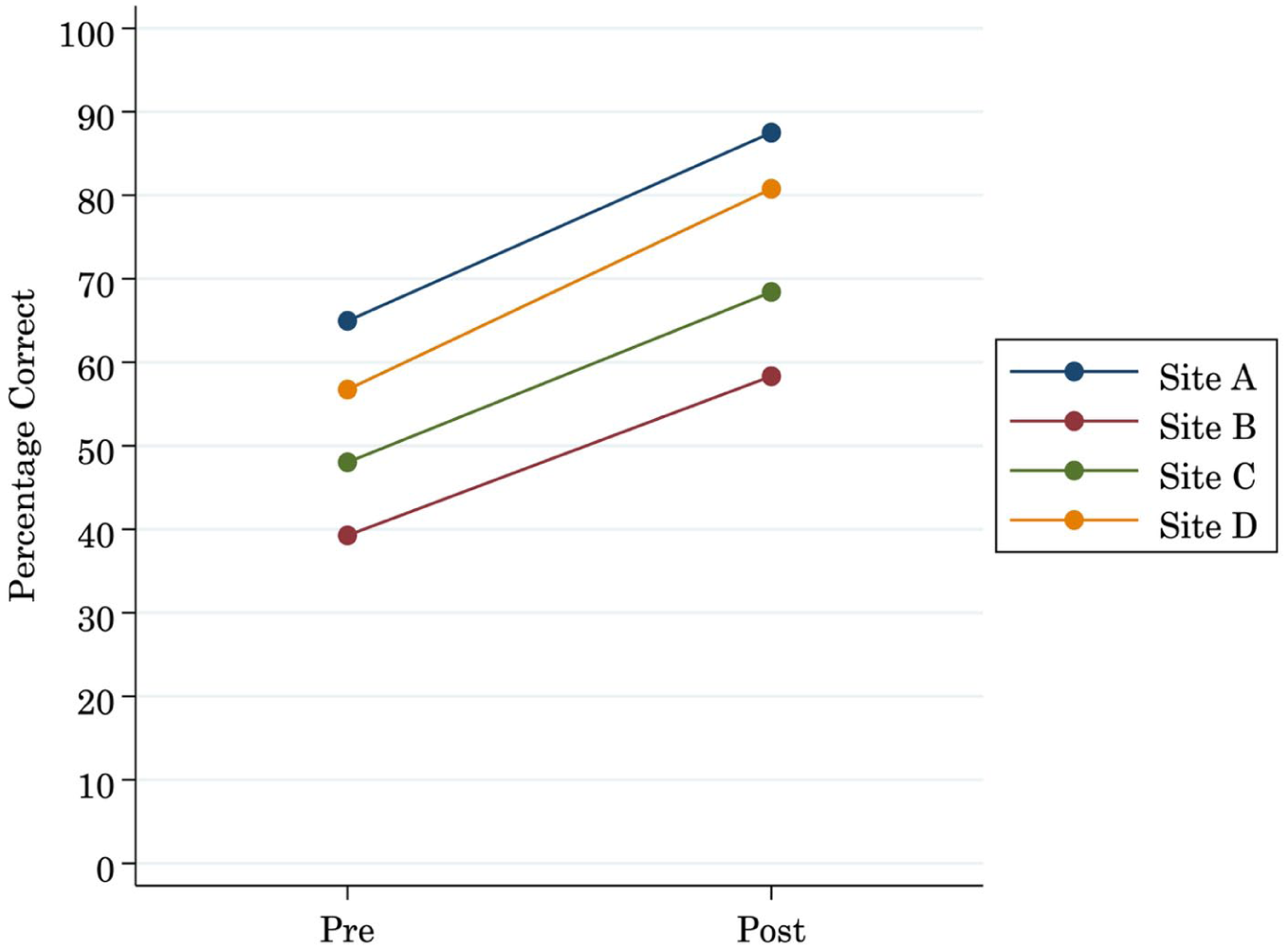

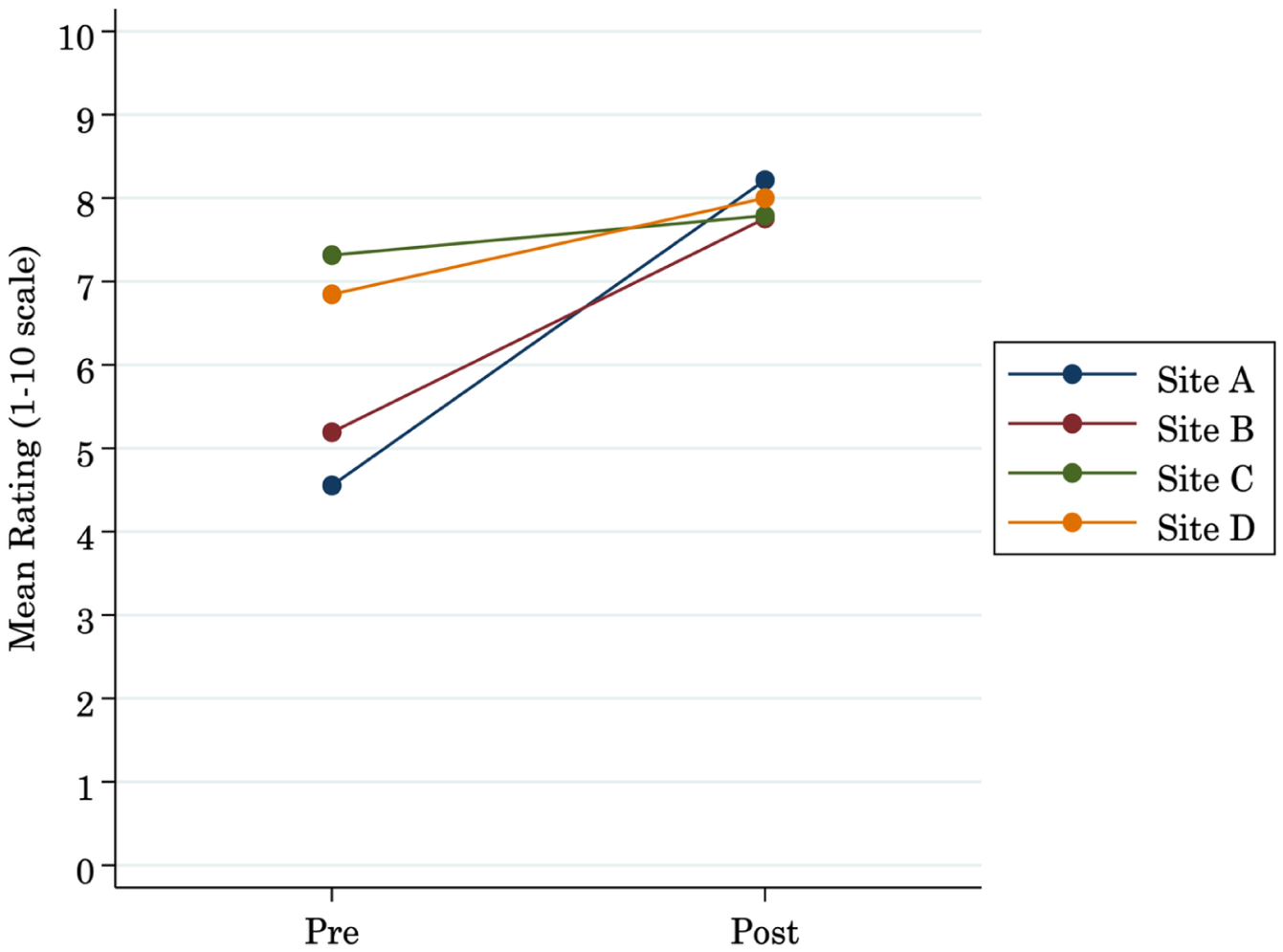

We probed the participant experience by examining what tutors found valuable for their own development and how the modules accentuated their contextual teaching and learning. Participants improved their instructional knowledge and skills as measured by the pre- and post-assessments (see Figure 1). On average, participants improved by roughly 20 percentage points (in terms of the percentage of correct responses on the assessment). Using a dependent

Improvement in score from pre- to post-assessment by site.

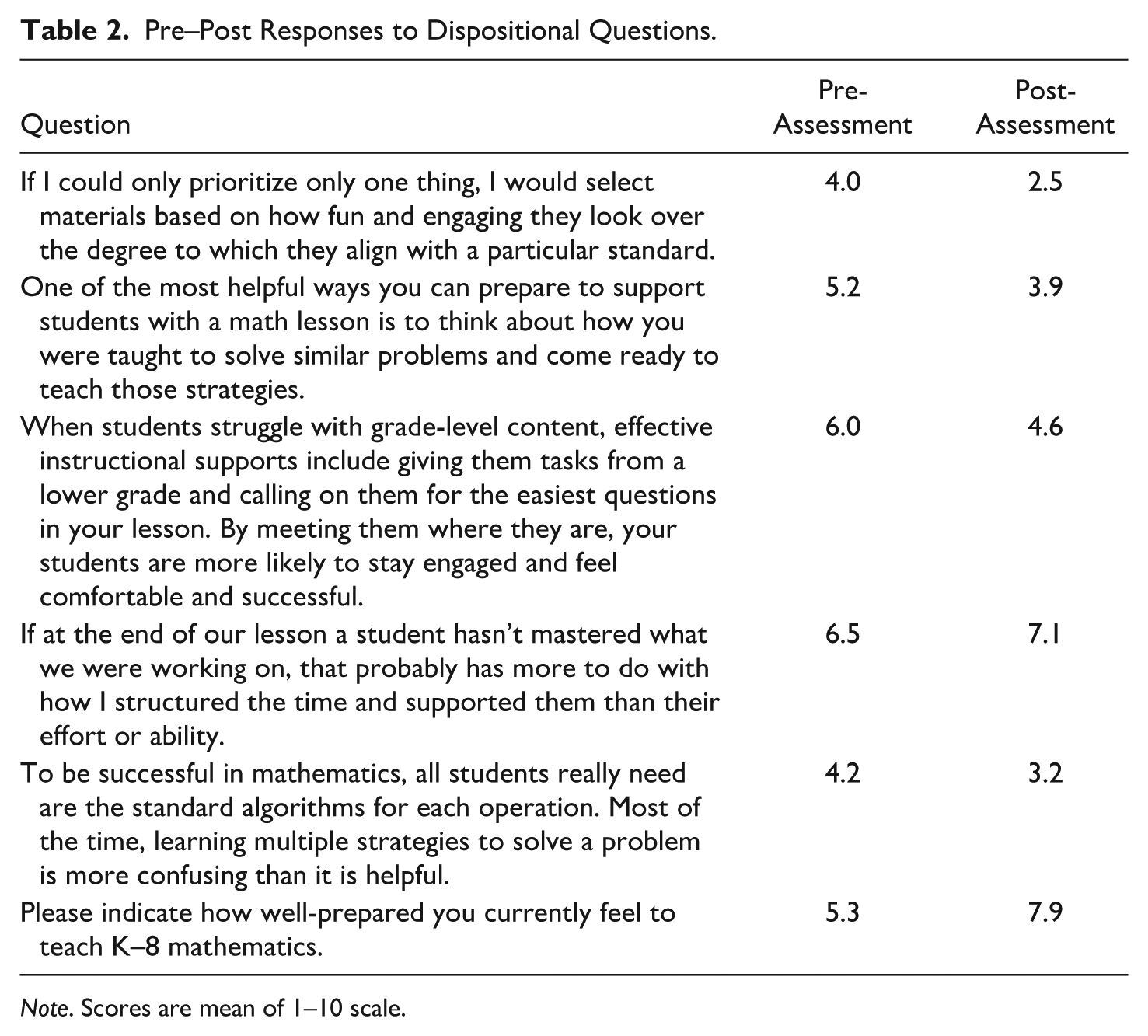

We also examined changes in the dispositional items on the pre- and post-assessments, shown in Table 2. These items asked tutors to rate their agreement with each statement on a 1–10 scale, with 10 being most in agreement. We compared the average rating for the pre- and post-assessments using the common sample of tutors who responded to both instruments. In general, we observed changes in attitudes and beliefs that were consistent with the assessment results showing improved skills. Tutors were more likely to respond negatively to statements that did not align with HQIM principles, such as prioritizing materials based on fun or engagement rather than alignment with standards (4.0 on the pre-assessment to 2.5 on the post-assessment). The smallest observed change was in taking ownership over student mastery. Here, there was a slight increase, from 6.5 to 7.1.

Pre–Post Responses to Dispositional Questions.

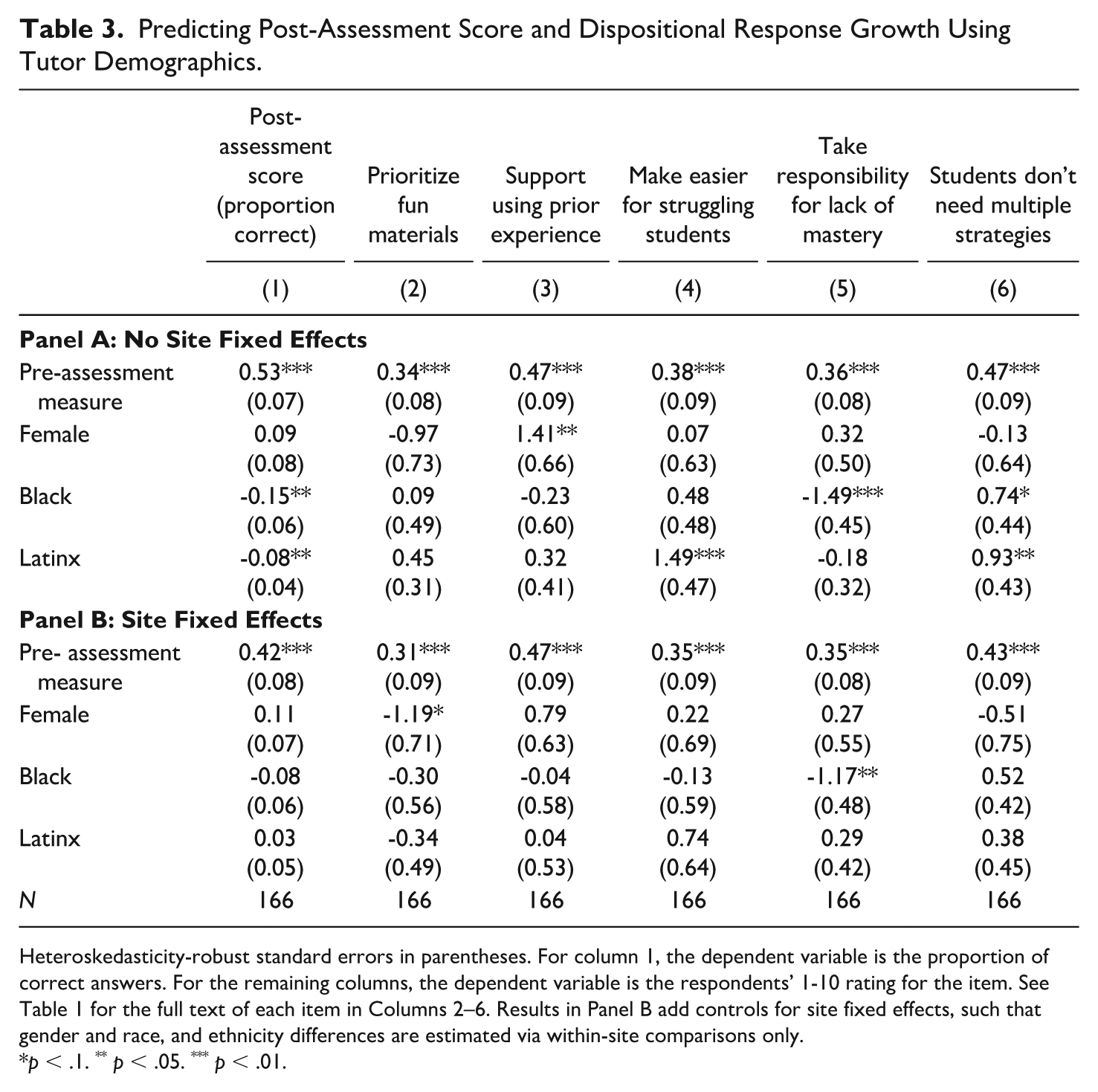

To understand variability by tutor characteristics, we estimated regression models using the post-assessment response as a dependent variable (controlling for the pre-assessment response) shown in Table 3. Panels A and B show results without and with site fixed effects, respectively. Focusing first on Panel A, we found several statistically significant differences based on tutor race and ethnicity, though not for gender. On average, Black and Latinx tutors scored lower on the post-assessment relative to White tutors. Column 1 shows the magnitude of these gaps: 15 percentage points for Black tutors and 8 percentage points for Latinx tutors. The remaining columns examine the 1–10 scale responses to the dispositional questions. There were statistically significant differences between White and Black tutors for two questions: taking responsibility for lack of student mastery (Black tutors responded 1.5 points less favorably, on average), and belief that students do not need multiple strategies for math problem-solving (White tutors responded 0.7 points less favorably, on average). Latinx tutors were more likely to endorse making things easier for students struggling with grade-level content (1.5 points greater than White tutors) and believed that students did not need multiple strategies for math problem-solving (0.9 points greater). These differences were quite large relative to the overall variability in responses (the standard deviation was roughly 2 points for each). Although we could not pinpoint their origin, these gaps suggest that nonwhite tutors approached the training and work differently. Note that there were insufficient numbers of tutors from other racial or ethnic groups to conduct statistical analyses.

Predicting Post-Assessment Score and Dispositional Response Growth Using Tutor Demographics.

Heteroskedasticity-robust standard errors in parentheses. For Column 1, the dependent variable is the proportion of correct answers. For the remaining columns, the dependent variable is the respondent’s 1–10 rating for the item. See Table 1 for the full text of each item in Columns 2–6. Results in Panel B add controls for site fixed effects, such that gender, and race and ethnicity differences are estimated via within-site comparisons only.

It is important to note that we could not pinpoint the origin of these gaps, and they should be interpreted with caution. As one way to probe these gaps, we reestimated the models, controlling for site fixed effects. Black and Latinx tutors, in particular, were concentrated in just one or two sites, such that the results in Panel A could reflect site-level differences that were correlated with race and ethnicity. Controlling for site fixed effects (Panel B) restricts these comparisons to tutors who were in the same site, which effectively controls for any between-site differences that could affect the outcome. Doing this eliminates most of these gaps in terms of their statistical significance; given the small sample size, the gaps must be quite large to be statistically significant. These results may suggest that the differences by race and ethnicity in Panel A are explained by between-site differences, though we cannot speak definitively to what these between-site differences actually are.
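The logic of restricting comparisons to tutors within the same site can be illustrated with a small Python sketch. It uses entirely hypothetical data and a single binary group indicator, and it omits the pre-assessment control and robust standard errors used in the actual models. Subtracting each site's mean from the outcome and the indicator before fitting is numerically equivalent to including site dummies in a least squares regression.

```python
from collections import defaultdict


def ols_slope(x, y):
    """Slope from a one-regressor least squares fit with an intercept."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    return sxy / sxx


def demean_within(values, sites):
    """Subtract each site's mean from the values of that site's members."""
    totals = defaultdict(float)
    counts = defaultdict(int)
    for value, site in zip(values, sites):
        totals[site] += value
        counts[site] += 1
    return [value - totals[site] / counts[site]
            for value, site in zip(values, sites)]


# Hypothetical data: group 1 is concentrated in the lower-scoring site B.
sites = ["A"] * 4 + ["B"] * 4
group = [0, 0, 0, 1, 0, 1, 1, 1]
scores = [80, 80, 80, 78, 60, 58, 58, 58]

# Pooled gap (no site fixed effects) mixes between- and within-site differences.
pooled_gap = ols_slope(group, scores)

# Within-site gap (site fixed effects) compares tutors in the same site only.
within_gap = ols_slope(demean_within(group, sites),
                       demean_within(scores, sites))
```

In this toy example the pooled gap is 12 points, while the within-site gap is 2 points, because most of the pooled difference reflects which site the tutors were in rather than differences between tutors at the same site.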

To assess changes in feelings of preparedness, we examined pre–post differences in items that asked tutors to rate their preparedness to teach mathematics on a scale from 1 to 10. There was an additional item that explicitly asked tutors to rate whether their preparedness had increased as a result of the HQIM modules. We found strong alignment between both of these measures.

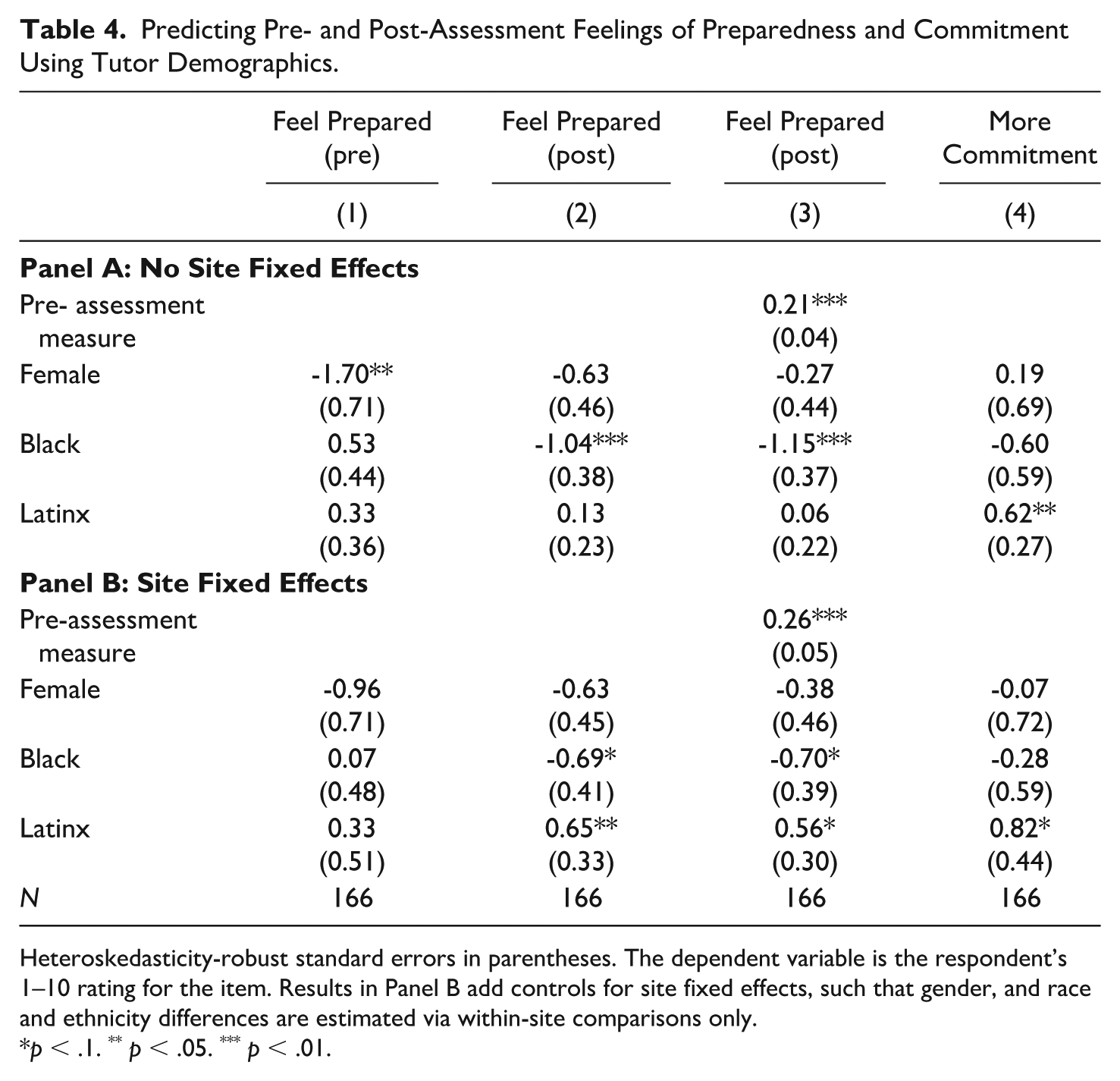

The bottom row of Table 4 shows that the mean rating of preparedness increased by 2.6 points (statistically significant at a 99% level) between the pre-assessment and the post-assessment (5.3 to 7.9 points). Figure 2 disaggregates this change by site. Here we found substantial differences. Specifically, there was large variability across sites in the feeling of preparedness on the pre-assessment. For instance, Site C tutors rated their preparedness at 7.3 (out of 10), on average, compared with 4.6 for Site A tutors. The juxtaposition with the assessment results is interesting: Site A tutors felt less prepared at the time of the pre-assessment but actually had the highest scores. However, we see a convergence in perceptions of preparedness to teach after completing the training modules. Despite the large differences on the pre-assessments, the post-assessment means were very similar. They were also quite high, on average, hovering around 8 out of 10, indicating that tutors felt fairly well prepared to teach.

Predicting Pre- and Post-Assessment Feelings of Preparedness and Commitment Using Tutor Demographics.

Heteroskedasticity-robust standard errors in parentheses. The dependent variable is the respondent’s 1–10 rating for the item. Results in Panel B add controls for site fixed effects, such that gender, and race and ethnicity differences are estimated via within-site comparisons only.

Change in feeling of preparedness from pre- to post-assessment by site.

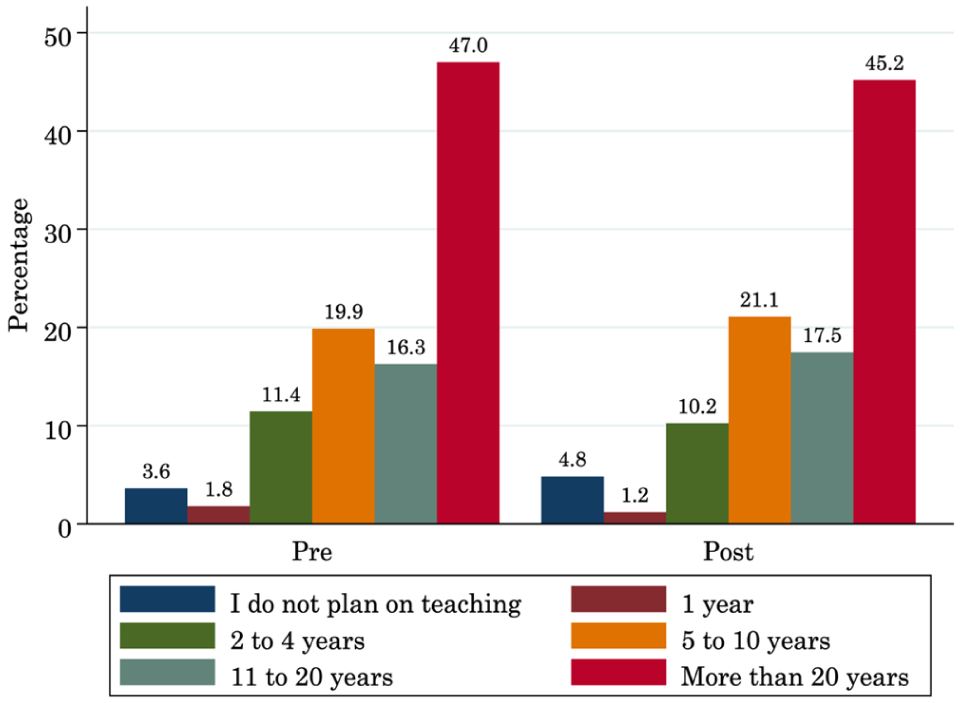

Tutors’ feelings of preparedness increased, but what about their commitment to teaching? Figure 3 shows the frequency distribution based on the pre-assessment and post-assessment responses. Here, instead of a 1–10 rating, tutors chose one of several possible time ranges, from “I do not plan on teaching” to “I plan to stay more than 20 years.” Overall, tutors’ intended commitment to teaching was high at baseline and did not change much after they completed the training modules. Just under half of tutors reported that they planned to teach for more than 20 years, with most of the remaining respondents in the intermediate ranges of five to 20 years. Only a small handful of tutors reported that they did not plan on going into teaching or that they would remain for just one year. There was essentially no change in the distribution at the post-assessment. Further, the stability of individuals’ responses was high, with a correlation coefficient of 0.82. This indicates strong alignment between what an individual tutor reported on the pre-assessment and what they reported on the post-assessment. Thus, the training did not appear to appreciably change commitment, but it also started from a very high baseline.

How long do you plan to stay in teaching? Pre-/post-assessment differences.

Table 4 shows results from regression models predicting feelings of preparedness and commitment by tutor demographics. Here, we observe that women felt substantially less prepared than men, on average, at the time of the pre-assessment (gap of 1.7 points). This gap was largely eliminated in the post-assessment. We found the opposite pattern for Black tutors; they reported slightly higher preparedness on the pre-assessment (0.5 points, not statistically significant) but substantially lower preparedness on the post-assessment (-1.0 points,

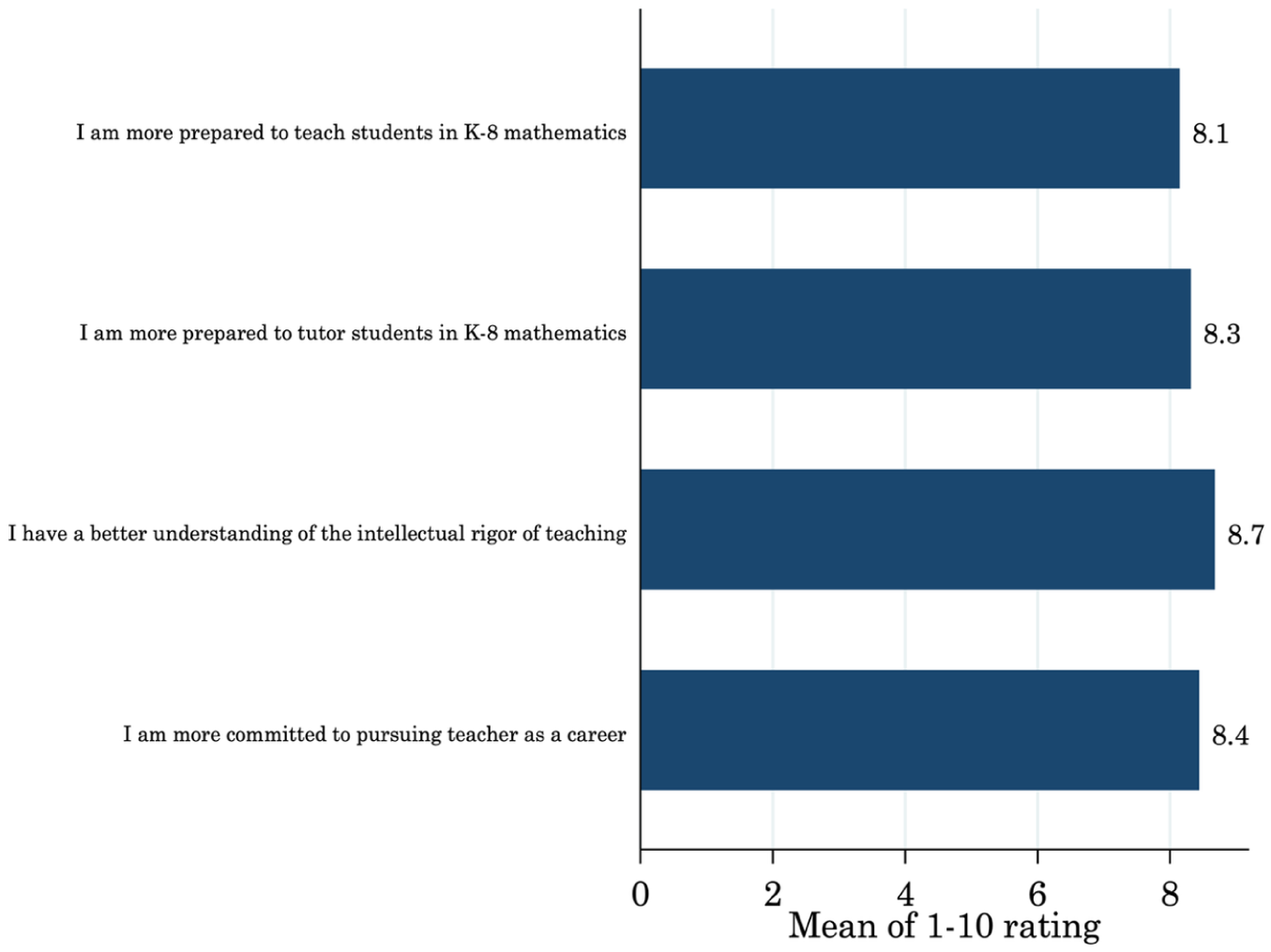

Despite some evidence of differences by demographics, there was strong evidence that overall, tutors found the training valuable in terms of increasing their preparedness for and commitment to teaching. Figure 4 shows mean responses from a post-assessment scale that asked respondents to specifically evaluate changes in these constructs as a result of the training. For each of the four, the mean rating was slightly above 8 out of 10, indicating strong agreement. This evidence is consistent with the pre–post changes for feeling of preparedness, which also showed a substantial increase.

After completing the HQIM modules.

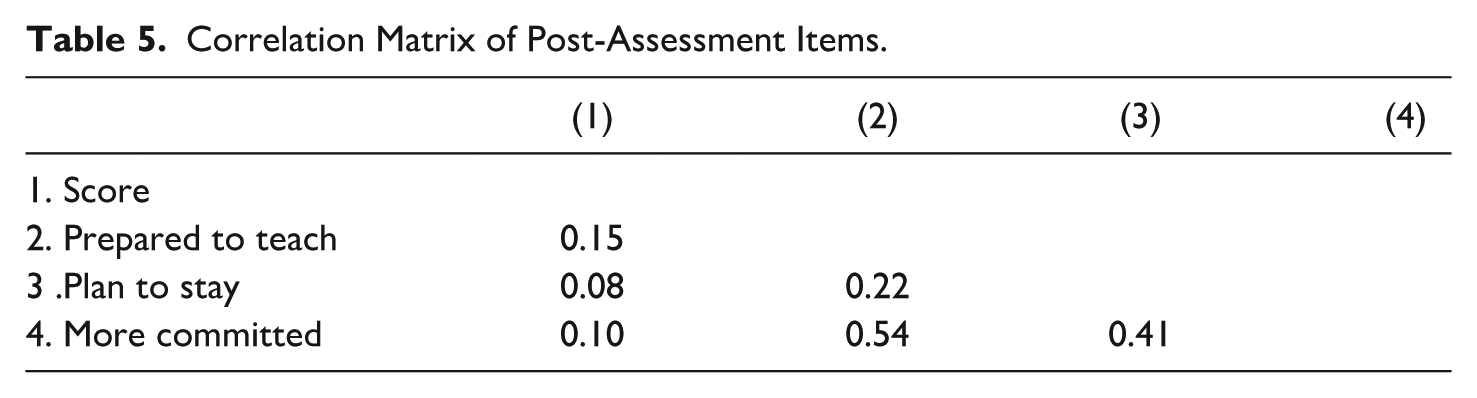

Next, we considered the relationship among the various constructs. Do higher scoring tutors (in terms of the assessment) feel more prepared to teach? Are they also more committed? We examined these relationships using a simple correlation matrix for the post-assessment responses, which is shown in Table 5. A higher positive number (up to 1.0) indicates a stronger relationship. Generally, we found that commitment and feelings of preparedness to teach were only weakly correlated with performance on the post-assessment. These correlations ranged from .08 to .15 and were only marginally statistically significant. We did find, however, evidence of a moderate positive relationship between feelings of preparedness and commitment to teaching or plans to stay. That is, tutors who perceived themselves as more prepared to teach were more likely to report that the training increased their commitment to teaching (

Correlation Matrix of Post-Assessment Items.

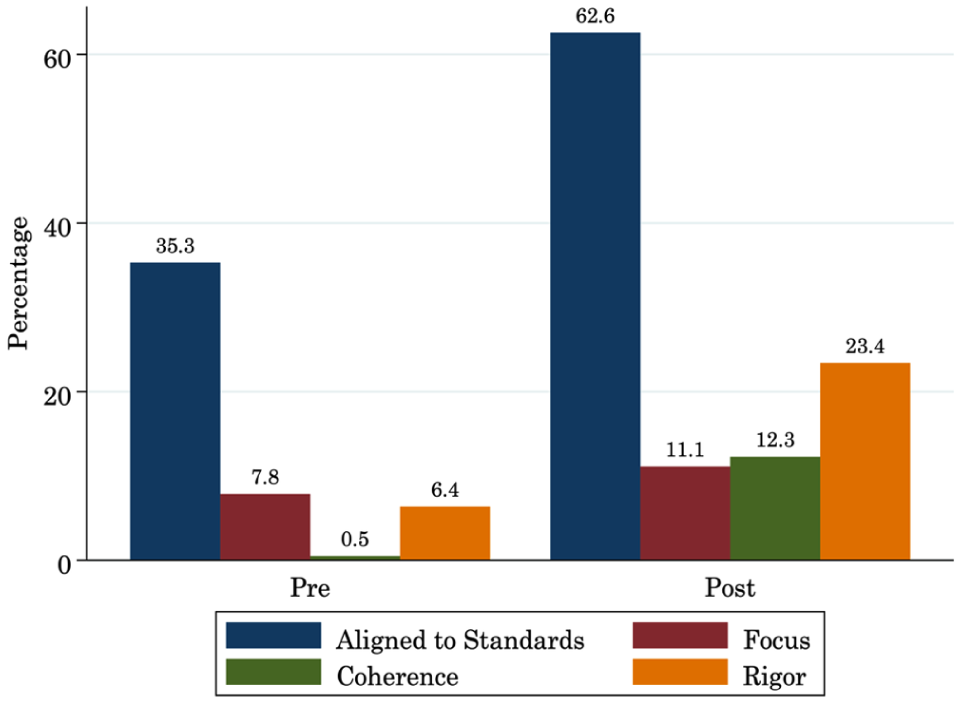
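As a rough illustration of how a correlation matrix such as Table 5 can be computed, the sketch below calculates pairwise Pearson correlations among a few survey columns. It assumes Pearson's r, which is not confirmed here as the study's coefficient. The column names and values are hypothetical and are not study data.

```python
from math import sqrt


def pearson(x, y):
    """Pearson correlation between two equal-length lists of numbers."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / sqrt(var_x * var_y)


def correlation_matrix(columns):
    """Return {name: {name: r}} for every pair of named columns."""
    names = list(columns)
    return {a: {b: pearson(columns[a], columns[b]) for b in names} for a in names}


# Hypothetical post-assessment responses (illustrative only, not study data).
responses = {
    "assessment_score": [4, 5, 6, 7, 5, 8],
    "preparedness": [6, 8, 5, 9, 7, 8],
    "commitment": [7, 8, 5, 9, 8, 8],
}

matrix = correlation_matrix(responses)
for name, row in matrix.items():
    print(name, {other: round(r, 2) for other, r in row.items()})
```

Each diagonal entry is 1.0 because every item correlates perfectly with itself, and the matrix is symmetric, so only the upper or lower triangle needs to be reported.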

Finally, to examine the extent to which tutors processed the underlying intent of tutoring modules, we analyzed responses to the open-ended item, “What are the key elements of high-quality instructional materials?” We coded it against the four elements listed throughout modules: aligned to standards, coherence, focus, and rigor. Figure 5 illustrates the percentage of respondents who answered each element correctly by assessment. Tutors increased in their knowledge of each element across assessments, though minimally for the element of

Percentage of respondents for each key element of HQIM, pre- and post-assessment.

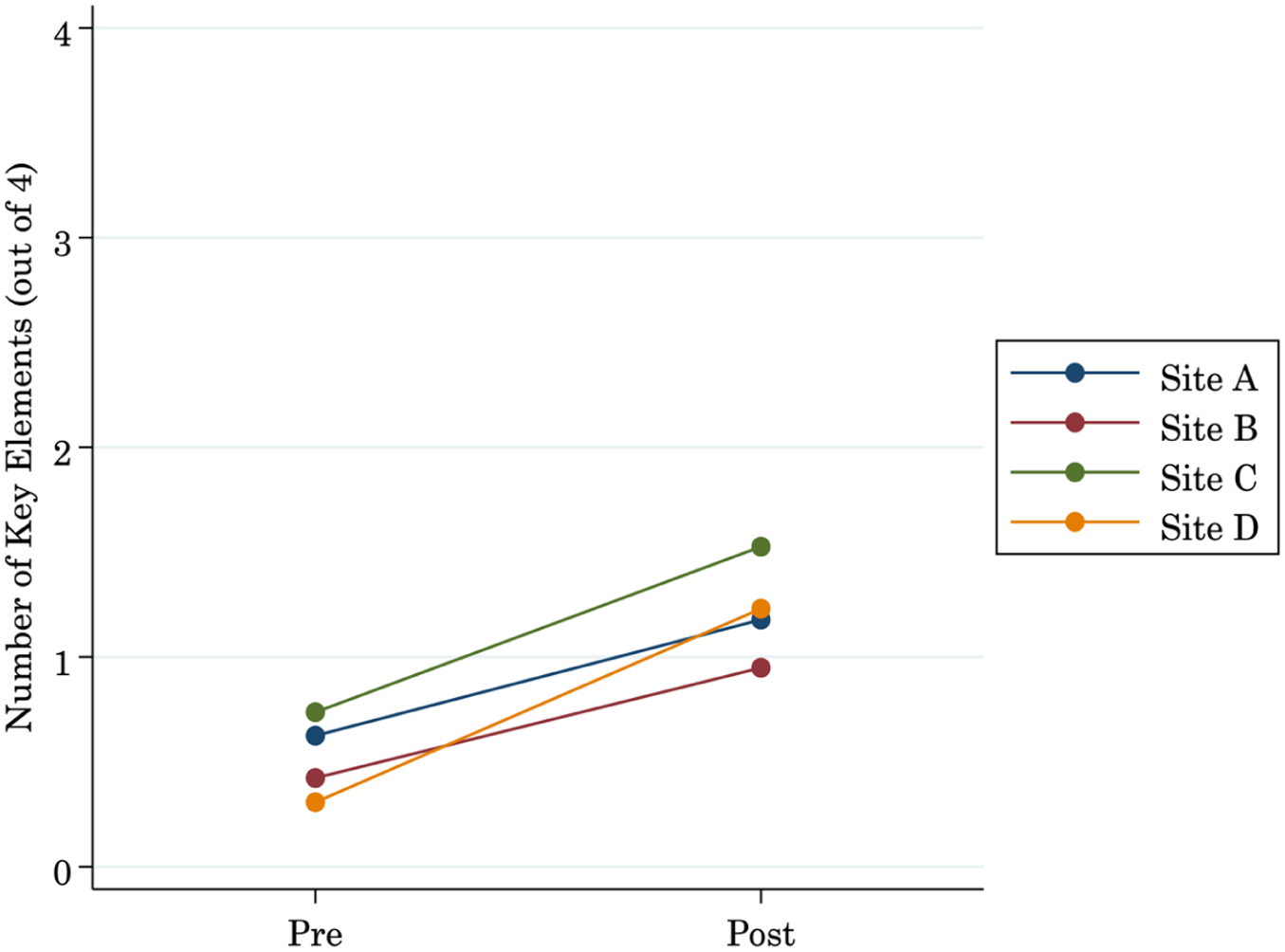

Figure 6 similarly examines the number of key elements that participants got correct by program. On average, tutors increased in the number of correct key elements listed from pre- to post-assessment. However, the mean number correct by program hovered around one, with little difference by program, indicating minimal participant knowledge of the four elements used to identify high-quality instructional materials.

Change in key elements across pre-/post-assessment by program.

RQ2: What are participant experiences with the tutor training intervention?

Because we identified some tutor development from our quantitative findings, we aimed to explore how the modules may have facilitated this growth. Through participant interviews, three themes arose across their responses: module accessibility, pedagogical development, and training considerations. In other words, participants spoke about how accessible the modules were and what they learned, and they offered suggestions to improve modules.

Module Accessibility

The most salient feature among trainers was the ease of module integration into existing course structures.

Often, content within modules readily aligned with concurrent course content, reiterating established course goals for developing teaching skills. For instance, one trainer noted, “There were a lot of connections between the modules and the course as already designed. . . . The modules reinforced what we were already talking about in class” (Dr. Shannon). Some of the content alignment was facilitated by the trainers, complemented through consistent in-class discussion: “Each particular week or every two weeks, [the module content] was embedded in our reflections for the week . . . [I was] continuing to try to weave it into our weekly conversations with our tutors” (Ms. Jessica). The modules bolstered the coursework or training program, supporting connections between theory and application:

We don’t want them to just learn about the principles but to see it in real time. A large part of their course grade is designing a study and testing an intervention, so that directly linked to the work they were doing with tutoring. (Dr. Kelly)

Trainers appreciated how the modules engaged tutors by having them

read, check out this video, process and try it out. I think that was a lot better than read an article, answer some multiple-choice questions. So they thought about it a lot more. That performance-based approach is really good and one of the big strengths. (Dr. Thomas)

On the other hand, accessibility problems, often around technology, were present throughout the learning management system that housed the training modules. Simple navigational issues hindered access to the content of the modules, where tutors “had to have a lot of tabs open with the links. So much clicking around because tabs were so embedded” (Lyndsey). There were also challenges in saving work, resulting in tutors having to redo or resubmit assignments: “Even though supposedly they were able to complete it piecemeal, they were completing it two or three times, just because they were opening different tabs, I think. So, I think it was a little bit frustrating” (Dr. Kelly). Finally, support was not always available to troubleshoot larger technical issues, as stated by Dr. Roberts: “The biggest obstacle was not having university support for learning management system.” Thus, participants appeared to lose valuable time to avoidable technological challenges.

Pedagogical Development

Tutors reported positive tutoring experiences that facilitated their pedagogical development. First, tutors valued learning a plethora of practical skills. By the end of the training, tutors could list an array of strategies that they had learned:

Doing things step-by-step and giving specific praise was a good thing that I learned and integrated into my tutoring. For sure the how and why questions were really helpful as well as think aloud. I had never thought about that before. And also modeling. I didn’t see the point of reading something for them or showing them how you do it, so that was helpful. Allowing them to think of the answer themselves before jumping in and giving them the answer. And coming prepared with questions and how to address misconceptions. (Katie)

Likewise, Lyndsey was able to list various teaching strategies that she found helpful: “Breaking it down and explaining it to the kids is really helpful. Using the anchor charts and visual models. And explaining step by step. And then the turn and talks. Modeling and thinking aloud piece.”

Of these pedagogical strategies, tutors found particular value in lesson internalization; this was defined in the modules as “the preparation a teacher engages in to make sense of a unit and prepare to teach a particular lesson,” including learning “how curricula are structured, how to navigate curricular resources, and how high-quality instructional materials can support the development of content knowledge and pedagogical content knowledge of teachers.” This essentially means understanding what students will process throughout a particular lesson, and it was best described by Angela:

The biggest takeaway for me was the internalizing. You have to put yourself in your students’ shoes, and as I was doing that with tutoring, I could see where they have misconceptions and I knew how to help them. . . . More than the self-management skills, it really helped me think about what I need to do to help my students.

Internalizing the lesson allowed tutors to focus their mental efforts on supporting students’ learning rather than on the procedural aspects of lesson implementation. The lesson internalization process also helped tutors explicitly understand the work that goes into preparing to teach:

Overall, it shows me how important lesson planning is—you have to think about how to explain this in ways, not just the ways you learned, but how students might be thinking. It taught me that teaching is a lot, but if you prepare yourself, your students will be successful. (Jason)

The value of lesson internalization was supported by trainers as well. As Dr. Roberts stated, “The lesson internalization was really helpful for the ‘why’—you don’t lesson plan just to do an activity. This lesson internalization allowed them to think more about the process of planning, what it looks like, why it looks like that.”

Tutors also expressed their enjoyment in building student relationships and witnessing their academic growth. As an example, Katie explained,

My favorite part was the relationship with the kids and seeing them grow in their confidence. I liked it when they were proud of themselves or responded well to praise and constructive feedback, when I would scaffold them into learning something new.

Another tutor reiterated his appreciation of “the kids being excited to come! You could see the development of relationships between February and May too” (Jason). Most poignantly, Jasmin reflected, “The wonderful thing was the amount of growth I saw in such a limited amount of time—less than 40 minutes, and the amount of growth I saw was just amazing. It was truly amazing.”

Training Considerations

Amid this training pilot, opportunities for module improvement arose. Foremost, tutors were often busy with full-time schedules, so working on the modules took low priority or the modules were difficult to finish in a timely manner. Jason explained, “We were tutoring for about eight hours a day and then trying to do this, so it was a lot.” Another tutor added, “[the modules were] labor intensive, and we had to fit that into 25 hours a week, that includes 15 hours of tutoring and prep time and this” (Jasmin). Trainers saw this burden and attempted to ameliorate it. For example, several programs adjusted expectations to accommodate tutors: “[we recognized] the length of time was really hard for our staff to juggle in addition to their workloads, so we were often more lax in changing deadlines” (Ms. Chelsea & Ms. Ashley), whereas another site “had them submit plans for two weeks at a time” (Dr. Roberts) to reduce the consistent workload.

A second suggested improvement was to reduce the amount of material in the modules. Participants appreciated the content but suggested that it could be better consolidated. They stated, “The content could be really impactful, but it needs to be broken down in a different way” (Jason), and although “it was lengthy . . . [it was] still good information but felt like a slog” (Lyndsey). Instead, participants suggested using more creative modes of engagement: “more videos of teachers teaching kids—I can see it and hear it and how they are saying it” (Lyndsey), or “maybe a little less open-ended questions. Maybe you could do some game format to keep it interesting. . . . More varied and more choice in question response” (Jason).

A third recommendation was to consider transferring tutoring skills to other content areas. Because the modules were situated solely in mathematics, some tutors who were unfamiliar with the content or were placed in tutoring assignments for other content areas found the math content challenging to understand. Trainers specifically requested more support with math for their tutors. They expressed, “math language was overwhelming—felt very fish out of water” (Ms. Jessica) and that “one thing we are thinking about is supporting students with actual math content. . . . A lot of our candidates are not comfortable with the math content—getting into fraction concepts—in higher grades” (Dr. Shannon). Tutors also said, “[I] felt like I didn’t have the content knowledge, so I had an added step which took a very long time” (Jasmin), and “Since I was tutoring literacy, it was a bit difficult to make connections to math” (Katie). Further support in content or content transfer could accommodate more tutors.

Finally, tutors wished to learn more about differentiation to adapt content to individual students’ needs. Jason said, “I wish I would have had the learning about looking across grade-level standards to support the teaching.” Although Jessica appreciated the module on equitable instruction, she wanted to extend her learning:

I also think this program raises a lot of good points and ideas that we aren’t really talking about in other areas. Like being equitable beyond just SES, in terms of disability etc., which I experience. Some scholars were at different levels and learning how I accommodate all.

In this way, tutors could enhance their craft.

Discussion

We explored the pilot implementation of modules distributed to four sites as required training for tutors. Through a concurrent mixed-methods analysis of pre-/post-assessments and interviews, we identified changes in tutors’ self-reported beliefs, career plans, and knowledge, as well as recommendations for module adjustments. Results indicate several important findings.

Tutoring interventions appear to have promise in developing aspiring teachers. The modules yielded nearly equivalent growth in tutoring knowledge across the four sites, increased and established a relatively uniform feeling of preparedness, and improved tutors’ attitudes and dispositions for teaching math. These feelings of preparedness are particularly important because tutors who felt more prepared reported greater commitment, though overall, most tutors planned to stay in and were committed to teaching for an extended period. Tutors also grew in their knowledge, specifically about identifying high-quality instructional materials and how materials should be aligned to standards. However, tutors, on average, did not remember the majority of the four key elements consistently across programs, leaving room for continued growth in this area. Although there has been little evidence about tutor training (Leary et al., 2013), these results offer foundational content for programs and illuminate appropriate professional development related to tutor outcomes.

Participants across sites recognized that the tutoring modules provided necessary content and accessibility for tutors. They appreciated the high-quality content, particularly around lesson internalization and building student relationships. Tutors gained self-confidence by practicing authentic teaching skills in small settings. These experiences support the value of tutor training and point to an online model for tutor preparation. Another value was ease of implementation. Certain programs found that the material aligned with their coursework or was easy to embed alongside current structures. That is, adding modules was not a programmatic burden, and implementation was achievable. However, modules could still be improved. Participants desired more accessibility from a technical standpoint, more modules in other content areas, and more variation of activities within the module to increase their engagement with the content. Shortening the modules would help tutors complete the modules in a timely manner, reduce the risk of technical issues with saving their work, and reduce cognitive overload. These adjustments could enhance participant experience and shed light on important considerations for module implementation.

Limitations

Despite some promising findings, this study faces a number of limitations. First, the four tutoring programs are not nationally representative of tutoring programs. Rather, the sites included in this study were connected to and sampled by DFI and were part of an aspiring teachers’ network; further research needs to identify how modules would be implemented in other contexts. Specifically, to get a better idea of whether tutoring and the module intervention have a relationship with tutors’ career plans, studies should consider identifying tutoring programs separate from educator preparation programs. Because our sample already had a relatively high commitment to the profession, it was difficult to disentangle any effects on professional commitment from the prior commitment of individuals who had already largely decided to enter teaching (Redding & Baker, 2019). Studies can also follow whether tutors indeed enter the profession and for how long, particularly in comparison with individuals who either did not go through the tutoring intervention or were not tutors.

Second, although the pre–post design supports a cautious interpretation that tutors’ understanding of HQIM improved, there was no comparison group, which could have helped to rule out competing hypotheses, such as increased familiarity with the assessment items or the effects of other interventions (e.g., the other coursework that tutors may have been completing). Future studies using an experimental or quasi-experimental design could speak more credibly to the extent to which the modules improved tutors’ skills. Research could also consider additional measures of tutor engagement and effectiveness to better capture growth and to potentially validate our study instruments. Third, qualitative data could be more representative and triangulated. Site B did not have a tutor available to interview, Site C had multiple participants, and overall, tutoring experiences were retrospective in nature. This resulted in a slight imbalance of reporting by program. Future studies could incorporate consistent interviewing throughout implementation, collect qualitative data on more participants, and incorporate observations, sample work, or other types of data to get closer to tutors’ experiences.

Implications

Despite limitations, our findings offer implications for policy, research, and practice. For policy, the intense attention to the effects of tutoring must now be balanced with supporting tutors. Because programs are looking to further expand, it is an opportune time to intentionally consider how to train and develop tutors. Tutor training appears to help tutors feel more prepared, and thus, policymakers need to invest in programs that do exactly that. Policymakers should ensure that tutoring programs are equipped to support tutors and offer appropriate curriculum so that tutors and, ultimately, students can succeed.

For researchers, tutors, their experiences, their growth and development, and their career trajectories remain underexamined and warrant continued study. Although tutoring impacts student achievement, it has also been touted as a potential entry point for the teacher workforce. Studies need to investigate the extent to which tutors become teachers, as evidence would suggest (Kraft & Falken, 2021). Additionally, further studies are needed that focus on what supports may be needed to help tutors. Given empirical evidence on good tutoring practices, researchers need to identify how that information is disseminated throughout programs and how it ensures tutor learning, so that best practices can occur and student learning acceleration can happen at scale. Finally, more data are needed on the context and experiences of tutors. Models should consider accounting for information, such as the types of schools and students that tutors work with, interactions they may have with school personnel, the quality of the curriculum that they use, and amount of modular engagement, to more precisely measure the effect of a tutoring intervention.

For practitioners, content, curriculum, and tutoring experience must be constantly evaluated and reflected on. Programs need to consider how they are training their tutors, identify appropriate tutoring curriculum, or create content around empirical strategies. They cannot assume that tutors have the requisite knowledge and skills to accelerate student learning, and they need to proactively provide or enhance training to ensure tutoring delivers consistent learning benefits for all students. There should also be some consideration of tutor selection, particularly around individuals who take ownership of student mastery. A willingness to invest in student success could be a distinct trait that expedites tutor development.

Supplemental Material

sj-docx-1-tcz-10.1177_01614681251389032 – Supplemental material for Tutoring the Tutors: Piloting Online Modules for Tutor Training

Supplemental material, sj-docx-1-tcz-10.1177_01614681251389032 for Tutoring the Tutors: Piloting Online Modules for Tutor Training by Andrew Kwok, Brendan Bartanen, Michelle Kwok, Kathy Ogden Macfarlane and Tracey Weinstein in Teachers College Record

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Supplemental Material

Supplemental material for this article is available online.
