Abstract

Background:

As more education practitioners adopt techniques of improvement science to address problems of practice, there is an increasing demand for leaders with the knowledge and capacity to lead improvement efforts. However, little research explores how school leaders learn to lead improvement science in their school context or the challenges they may face in doing so. To ensure leaders are supported in learning increasingly common school improvement frameworks, there is a need to understand better the contextual conditions that may influence how practitioners come to learn and lead improvement science in their school contexts.

Purpose:

The purpose of this research paper is twofold. First, the study sought to understand how contextual conditions throughout a learner’s journey into, during, and after an educational leadership program could influence aspiring and current leaders’ efforts to lead improvement science. Second, the study aimed to explore the broader question of how context influences a practitioner’s ability to mobilize new knowledge into action. The researchers introduced the concept of “improvement science fluency” as a methodological contribution to specify the capabilities required to learn and lead improvement science effectively. The research question guiding the inquiry was: “What are the conditions that influenced the development of educational leaders’ improvement science fluency?”

Research Design:

The study focused on participants who completed a 10-week improvement science course as part of an educational leadership preparatory program at a large public university in Southern California. The research team conducted semistructured interviews with 17 participants who met the inclusion criteria of recalling and using the course concepts in their work since graduating from the program. Interviews were audio-recorded, transcribed, and coded using a weighted coding schema to assess participants’ improvement science fluency in guiding principles, tools, and dispositions. The research team analyzed the data to identify conditions that influenced participants’ application and adaptation of improvement science, categorized as professional experiences prior to the course, experiences within the course, and conditions in the organizational environment post-course. Contrasting cases were considered to enhance the robustness of the analysis and acknowledge potential variations within the dataset.

Conclusions/Recommendations:

The findings provided insights into the contextual factors that challenge or support the application of improvement knowledge to action, offering implications for designing and enhancing school leadership preparation programs to cultivate effective educational leaders for sustainable school improvement. The study emphasizes that organizational role and slack are crucial in shaping practitioners’ authority, opportunities for practice and application, and access to continued training and coaching, ultimately impacting their fluency in improvement science. To effectively support moving improvement knowledge to action, the paper recommends: (1) activating prior knowledge to prepare for future learning; (2) attending to the relevance of the learning problem to practitioners’ roles and scaffolding for role by supporting the practice of new knowledge within professional contexts; (3) addressing the organizational context to create an environment conducive to innovation; and (4) offering follow-up learning opportunities through sustained communities of practice. The study concludes that changing practice is not solely a matter of will and skill but requires careful attention to the broader context before, during, and after the learning experience to support innovations in practice effectively.

Improvement science has emerged as an increasingly common framework for managing the complexities of school improvement. Though it has many foci, including engagement in Networked Improvement Communities (Dolle et al., 2013) and Plan-Do-Study-Act cycles (Tichnor-Wagner et al., 2017), improvement science is united by a set of core commitments and tools for developing, testing, implementing, and spreading change. Over the last quarter-century, improvement science has gained momentum in manufacturing and health care and has spread quickly to other sectors (Lewis, 2015). Within the field of education, it has been used to improve graduation rates, classroom instruction, and student engagement (Bryk, 2020). As more education practitioners take up improvement science, a growing body of scholarship suggests that improvement techniques help leaders and teachers make demonstrable progress in addressing the problems they care about (Bryk, 2020; Daley, 2017; Lewis, 2015; Pearce, 2015).

As schools and districts express greater interest in improvement science, there is a growing demand for leaders with the knowledge and capacity to lead these efforts. However, little research explores how school leaders learn and lead improvement science in their school context or the challenges they may experience in doing so. Improvement science has been called a “counter-normative” approach to school improvement, signaling its potential obscurity and difficulties for educators as they learn its core concepts and ideas (Hannan et al., 2015, p. 505). To ensure leaders are supported in learning this valuable and increasingly common school improvement framework, there is a need to understand better the contextual conditions that may influence how practitioners come to learn and lead improvement science in their school contexts.

The task of supporting leaders in learning and using improvement science is one instantiation of a larger challenge pervasive in education: supporting practitioners in moving new knowledge to action. Most current educational reform sets ambitious goals for schools, calling on educational practitioners to learn and use new knowledge, skills, and tools aligned with aspirations for transformational teaching and learning. This reform requires that practitioners not only have access to new knowledge, but also be able, willing, and supported to move that knowledge to practice. Although there is a large body of professional development research, there is a less robust body of research that explores how professional development produces changes in practice, especially in contexts that may not be supportive of new practices (Yoon et al., 2007). This is especially true of professional development for leaders and aspiring leaders (Brown et al., 2014; Darling-Hammond et al., 2007; McCarthy, 2002).

Therefore, this paper has two purposes, one specific to leading improvement science and one more globally related to the project of moving ideas into practice. For the first purpose, we sought to understand how contextual conditions across a learner’s journey into, during, and post-completion of a program could support or limit aspiring and current leaders’ efforts to learn and lead improvement science. This helps to address the need to better examine professional development for school leaders and the development of improvement leadership. However, in doing so, this research offers implications for the broader question of how context influences a practitioner’s journey to mobilize new knowledge to action. Thus, although the inquiry is explicitly concerned with improvement science knowledge, it also responds to the more inclusive question of how to best support practitioners in moving knowledge presented in a professional learning program to practice in their schools.

To explore these questions and to serve our goal of better understanding the conditions that influence how leaders move new knowledge to practice, we developed the concept of improvement science fluency, which we describe later in this paper. Improvement science fluency is a methodological contribution to specify better the capabilities entailed in learning and leading improvement science. It refers to an individual’s ability to act upon the big ideas, wield the tools, and lean on the dispositions of improvement science, and therefore provides a way to identify conditions that influence how school leaders move new knowledge to practice in their professional context.

We explore variations in improvement science fluency among a sample of graduates from a principal preparation program, which offered professional development on improvement science. Although not all participants held traditional leadership titles at the time of interview, each led improvement projects and took on informal and formal leadership roles in their school contexts. We highlight the conditions that influenced their efforts to act upon new knowledge presented in one 10-week course on improvement science. This inquiry was guided by the question, “What are the conditions that influenced the development of educational leaders’ improvement science fluency?”

Given the importance of leadership for school improvement (Leithwood et al., 2004), it is incumbent upon leadership preparation programs to position future leaders with the capacities needed to manage the complexities of continuous school improvement. If professional programs seek to foster new and impactful leadership practices, they need insight into the contextual conditions that challenge or support moving improvement knowledge to action. The findings and discussion presented in this paper offer important implications for designing and improving school leadership preparation programs that develop educational leaders ready to lead and sustain ambitious school improvement.

Background

Getting Knowledge to Action

Scholars as early as Kurt Lewin have lamented the challenges practitioners experience using new knowledge and research to address practical problems and guide change in professional settings (Adelman, 1993; Lewin, 1939). Within this field of scholarship, many terms have been offered up to describe and support the process of moving theory into practice (e.g., Argote et al., 2000; Argyris & Schön, 1992; Tugwell, 2006). In this paper, we rely on the concept of knowledge to action because we are interested in understanding how practitioners use and adapt new knowledge and tools in the repertoires of their role. Professional development does not seek to provide new knowledge for its own sake but for the purpose of inspiring changes in practice that align with increasingly ambitious educational aspirations. However, the question of how professional development fosters changes in practice remains somewhat murky and underexplored. Although there is a substantial body of research on professional development, much of this research emphasizes the outcomes of increasing teacher knowledge or student achievement without exploring the contemporaneous changes that occur in practices (Bell et al., 2010; Blank et al., 2008; Liu & Phelps, 2019; Yoon et al., 2007). Therefore, future research can build on existing scholarship by clarifying the mechanisms and conditions that influence how practitioners mobilize new knowledge presented in professional development to action in school contexts.

Research underscores how context shapes learning and practice (Engle, 2006; Saxe, 1999). One implication this research offers to professional development is the importance of attending not only to the content and context of the classroom, but also, more broadly, to the contextual conditions that mark a practitioner’s journey toward incorporating new knowledge into their practice. We can think of these contextual conditions as grouped in three interactive ways: pre-learning, during-learning, and post-learning experiences.

In the category of pre-conditions, prior experience has been shown to influence practitioners’ sensemaking of new knowledge, priming their understanding of and attitude toward new ideas and concepts (Schwartz & Martin, 2004; Weick, 1995). Active learning experiences, continued opportunities for practice, and scaffolding that frames connections between the learning and transfer context have all been identified as important during-conditions influencing the effectiveness of professional development (Desimone, 2011; Engle, 2006; Franke et al., 2001). Additionally, scholarship specific to school leadership preparation suggests that professional development for current and aspiring leaders is effective when it meets a number of criteria. As Fluckiger and colleagues (2014) describe, the quality of school leader professional development can be evaluated based on criteria that include the extent to which the program is goal-oriented, research-informed, time-rich, practice-centered, purposefully designed, peer-supported, and context-sensitive.

Finally, in the category of post-conditions, supportive conditions for improvement science practice include collegial relationships, organizational cultures and routines that emphasize incremental learning over accountability, and the presence of psychological safety that supports the collaboration and risk-taking necessary to innovate (Biag & Sherer, 2021; Bryk & Schneider, 2002; Tichnor-Wagner et al., 2017). Moreover, research suggests the capacity of individuals to engage in innovation and reform is a function of the slack within their organizations, where slack refers to the resources that promote experimentation and innovation (Bourgeois, 1981; Grossman & Shapiro, 1987). There is evidence that when practitioners engage in improvement science, they perceive it to be laborious and time-consuming, which may be attributed to their time constraints and the highly structured nature of their work that makes engagement in iterative reform difficult (Collinson & Fedoruk Cook, 2001; Spillane & Thompson, 1997; Tichnor-Wagner et al., 2017). Taken together, this literature suggests that how leaders move new knowledge to action in their school contexts is likely to be influenced by pre-, during-, and post-program experiences, which this research project was motivated to explore further.

Examining this research on individual and organizational learning highlights a relative gap in the professional development literature, which fixates on the content and context of the learning environment or on the outcomes occurring in what Engle (2006) calls the “transfer context.” We focus instead on the broader contextual conditions likely to influence one’s ability to put new knowledge into practice. If professional development seeks not only to provide access to new knowledge, but also to, in turn, improve practices, it is necessary to understand how these conditions support or constrain practitioners’ attempts to mobilize new knowledge to action. Therefore, in this project, we sought to understand the pre-, during-, and post-program experiences that may explain variation in improvement science fluency. Although we use pre-conditions, during-conditions, and post-conditions as categories for our exploration of leaders’ learning journeys, we do not mean to suggest that learning is a linear process that divorces learning from doing. As others have highlighted (e.g., Cochran-Smith & Lytle, 1999), practitioners construct knowledge as they practice. Our research endeavor explores the conditions that influence the development of practice before, during, and upon completion of a professional development program.

Improvement Science: Evidence, Domains, and Fluency

Although the general focus of this research project was to better understand the conditions that influenced how practitioners moved new knowledge to action, we explored this by examining how leaders learned and led improvement science. Improvement science is becoming increasingly common in leadership preparation programs that seek to train school and district leaders with the knowledge, skills, and dispositions to improve the complex problems facing schools (Perry et al., 2020).

In 2011, Bryk and colleagues published an article detailing their efforts to understand and build networked improvement communities (NICs), communities of diverse expertise and actors engaged in solving complex and common education problems by using the tools and methodology of improvement science. The authors began the article by arguing that the educational research and development infrastructure was badly broken, noting how insights and innovations arising from research have largely failed to catalyze significant improvements in schools. The authors argued that NICs, along with the tools and principles of improvement science, offer a way to move ideas from research to action in schools. However, although this paper offered a nascent theory of improvement, it offered little specificity on how individuals and organizations might learn to do this work.

Since that time, the field has worked to better understand the mechanisms and supporting conditions for improvement science. Informed by this research, we suggest the work of educational improvers can be understood across three domains of activity: their knowledge and use of improvement principles, their knowledge and use of improvement tools, and the strength of improvement dispositions.

First, educational improvement science is guided by six principles (Bryk et al., 2015) that can be considered the conceptual knowledge that undergirds improvement science practice. They are grouped into three “interdependent, overlapping, and highly recursive” aspects of improving schools: (1) defining and deeply understanding the complex problem, (2) building knowledge to support improvement, and (3) accelerating learning through collaboration (LeMahieu et al., 2015, p. 447). These guiding principles, which are discussed in much greater detail in Bryk et al.’s Learning to Improve (2015), are described briefly here.

Defining and deeply understanding the complex problem

1. Make the work problem-specific and user-centered. This principle directs practitioners to begin improvement work by bringing together those most proximately experiencing the problem in collaboratively defining the challenge and the specific aims the improvement project seeks to address.

2. Variation in performance is the core problem to address. This principle directs practitioners to explore variation in outcomes or processes, to understand and monitor progress toward fixing a problem.

Building knowledge to support improvement

3. See the system producing the current outcomes. This principle directs practitioners to engage in system thinking to address the roots rather than the symptom of a problem.

4. We cannot improve at scale what we cannot measure. This principle directs practitioners to select and monitor improvement measures to understand if the potential solutions they pursue support improvement.

5. Anchor improvement in disciplined inquiry. This principle directs improvers to implement fast, iterative cycles of action and reflection, where educational improvers design an intervention, study its impact, and determine necessary modifications.

Accelerating learning through collaboration

6. Accelerate improvements through networked communities. This principle directs practitioners to harness collective wisdom by fostering learning communities.

Second, in addition to the six principles, educational improvers rely on tools to diagnose problems, analyze root causes, and monitor change over time (Bryk et al., 2015). These tools are connected to the six principles and include, but are not limited to, empathy interviews, process maps, driver diagrams, fishbone diagrams, run charts, Plan-Do-Study-Act cycles (PDSAs), and Pareto charts. For example, a district might make its work on reducing rates of chronic absenteeism user-centered by using an empathy interview to understand better why students are not attending school (Hinnant-Crawford, 2020, p. 59). The district might also visualize the system using a process map to understand better how systems interact to contribute to the problem (Bryk, 2020).

Third, improvement science scholars have begun to specify the dispositions that may facilitate authentic engagement with the principles and tools of improvement science (Biag & Sherer, 2021; Lucas & Nacer, 2015). We use the definition of dispositions offered by Perkins and colleagues (1993), where dispositions entail inclinations to engage in a behavior, sensitivities to when a certain behavior is appropriate, and abilities to engage in a behavior. Biag and Sherer (2021) found that educational improvers reported orientations to engage in disciplined inquiry, adopt a learning stance, take a systems perspective, possess an orientation toward action, seek the perspectives of others, and persist beyond initial improvement attempts. Biag and Sherer’s dispositions map well onto the work of other scholars who have identified improvement dispositions (e.g., Lucas & Nacer, 2015).

Looking across these three domains of improvement science activity, we conjectured that how a practitioner uses improvement science in their context will vary according to their knowledge and application of the guiding principles, their knowledge and use of tools, and the strength of their improvement dispositions. This conjecture is aligned with a sociocultural view of learning, wherein knowledge for practice expands beyond robust conceptual knowledge (Nasir et al., 2007; Schwartz & Martin, 2004), to account for the role of individuals’ identities and the conceptual and physical artifacts at their disposal (Bransford et al., 2000; Lave & Wenger, 1991; Nasir et al., 2007; Schön, 1983; Wenger, 1998). As we discuss later, we use the concept of improvement science fluency to unite these domains and construct a holistic picture of the resources practitioners draw on as they move knowledge to action. Given that this definition of improvement science fluency incorporates not only what participants know, but also what they do with that knowledge and the dispositions that support those actions, we considered it a relatively good proxy for understanding knowledge to action.

Methods

Research Context and Participants

The research team interviewed participants who completed a 10-week improvement science course as part of an educational leadership preparatory program at a large, public university in Southern California. During this seminar, students identified a tractable problem of practice affecting their school’s learning community. Working with their classmates in small improvement teams organized around a problem of common interest, learners in this course deepened their understanding of these problems and learned to address them using the guiding principles and tools of improvement science, either at a single school site or across multiple sites. This course has been a graduation requirement of the program since 2015. All six cohorts (2015–2020) have been taught by the same instructor, who was also a member of the research team. Although the core content included in the syllabi remained the same, instruction of the course evolved over time in a number of ways. First, over time the course allowed for the incorporation of more student interests and for students to personalize how they applied concepts and materials to their own contexts. Moreover, over time, the problems that students engaged with as they practiced improvement science became more centered on connecting issues of equity to issues of improvement.

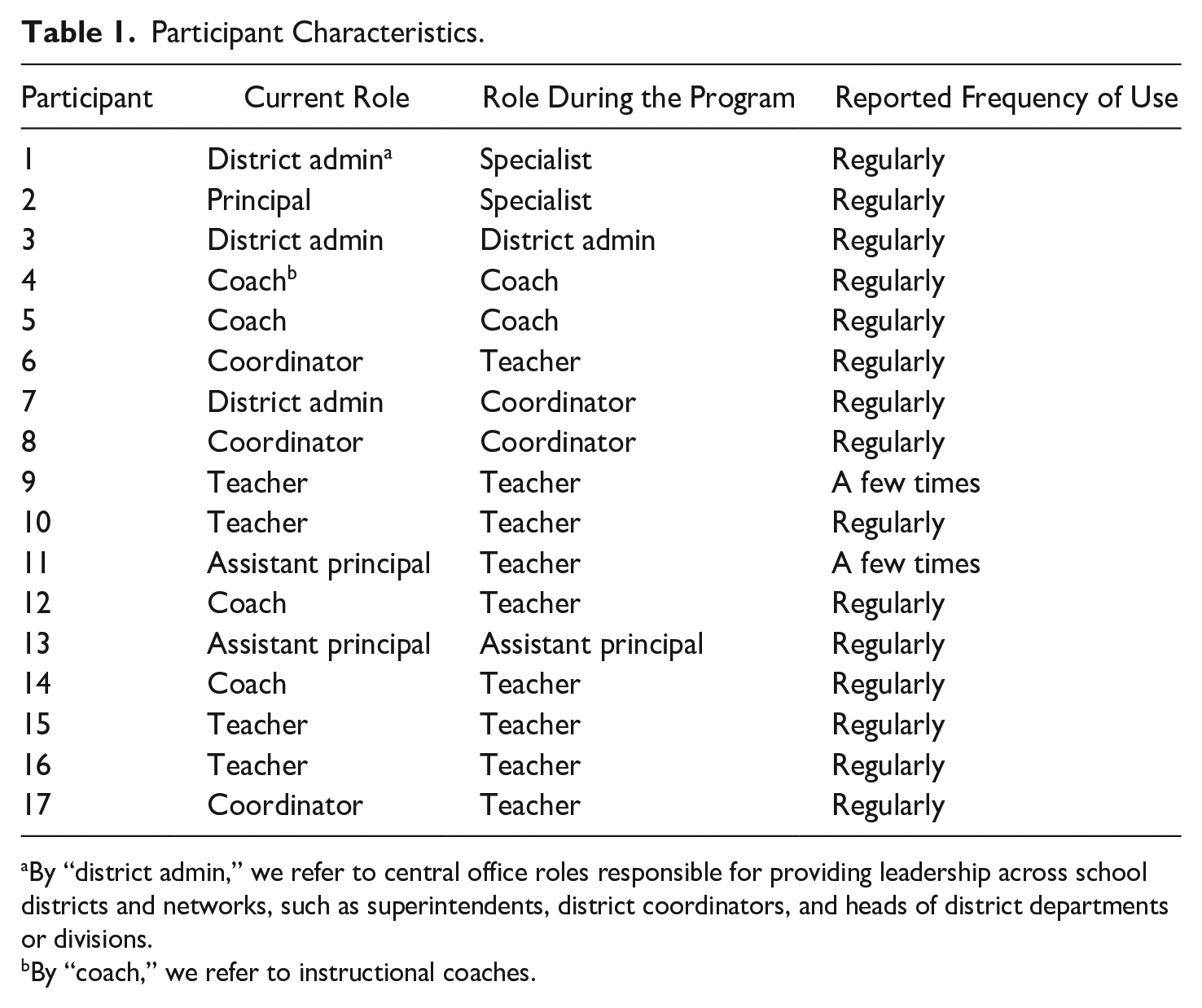

After receiving institutional review board approval, we began the recruitment process by distributing a short screening questionnaire by email to all 210 graduates from these six cohorts, providing them with a brief written description of the study. The purpose of this questionnaire was to identify graduates who both recalled and believed that they used the concepts presented in the course. Additionally, the questionnaire asked respondents to identify their role and how frequently they believed they currently used improvement science in their role. Of the 63 questionnaire respondents, four did not recall taking the class, and five indicated they never used the material presented in the course. The 54 remaining respondents indicated they both remembered the course and had used content presented in the course in their work at least once since graduating from the program. Because they met these inclusion criteria, all 54 remaining respondents were invited to participate in the study. Of the 54 recruited, 17 indicated interest and all 17 completed interviews. There were a number of noticeable similarities between the graduates who participated in these interviews and the larger population of graduates who completed the course. Like the larger population, the sample was majority female, and most participants held an in-school leadership role, such as a principal or instructional coach. Like the larger population, participants worked in large urban public school districts. Table 1 describes participant characteristics.

Table 1. Participant Characteristics.

By “district admin,” we refer to central office roles responsible for providing leadership across school districts and networks, such as superintendents, district coordinators, and heads of district departments or divisions.

By “coach,” we refer to instructional coaches.

Although all participants graduated with an administrative credential and a master’s degree in education leadership, not all graduates were in administrative or leadership roles at the time of interviewing. Our decision to include classroom educators as participants in this study reflects our belief that one does not need to hold a traditionally defined school leadership title, such as principal or assistant principal, to lead improvement projects. We hypothesized that graduates of the program might adapt their improvement science training to lead projects of various purposes and sizes, regardless of their title or salary schedule. This decision is also aligned with other research suggesting that leadership is often distributed across roles within schools and that informal leadership can play an important role in school improvement (Printy, 2008; Spillane et al., 2004).

Data Collection

The first author conducted semistructured interviews over Zoom over a period of roughly two months, from early March to late April 2021. Each participant was interviewed once. The purpose of each interview was to understand school leaders’ perceptions of the conditions that influenced their application and adaptation of improvement science in their post-graduation role. Given our interest in exploring the pre-, during-, and post-class conditions that might influence improvement science, the interviewer asked participants about their current role and organizational context, their prior and continued encounters with improvement science concepts, their knowledge and use of improvement science tools and principles, and the antecedents leading to their use of improvement science.

All interviews were audio-recorded and transcribed. Transcripts were then checked against audio recordings to ensure accuracy. We began with multiple reads of the interview transcripts to familiarize ourselves with respondents’ experiences learning and using improvement science. The first author coded the transcripts using a combination of a priori, open, and axial coding methods (Miles et al., 2014; Strauss & Corbin, 1998). A priori codes were used to examine participants’ knowledge and use of the guiding principles of improvement science, their knowledge and use of improvement science tools, and their improvement science dispositions. We created six codes aligned with the guiding principles, which helped us capture if and how participants understood and used each principle in their role. We created seven codes for seven improvement science tools that were featured in the course, capturing if and how participants recognized, defined, and used each tool in their work. We used Biag and Sherer’s (2021) descriptions of the six improvement science dispositions to create six disposition codes. Consistent with Biag and Sherer’s descriptions of the six improvement science dispositions, we used Perkins, Jay, and Tishman’s (1993) definition of dispositions as having three interrelated elements: inclinations to engage in a behavior, sensitivities to when a certain behavior is appropriate, and abilities to engage in a behavior. Because we believe dispositions are demonstrated in practice, we looked for evidence of dispositions where participants discussed how they defined, addressed, and involved others in solving problems that arose in their work. We then organized all codes related to principles, tools, and dispositions in profiles of practice for each participant. We used these profiles as tools to understand the depth and flexibility of participants’ improvement science knowledge, as well as the form their improvement projects took. Although this project would have been greatly aided by observational data, COVID-19 prevented us from gathering and using observational data to triangulate our interview findings.

Operationalizing Improvement Science Fluency

Given that the research team was interested in examining the conditions that influenced how participants enacted and adapted what they learned in their improvement science course, we sought to clearly understand and represent variation among participants’ improvement science fluencies. We operationalized fluency by constructing a weighted coding schema for each guiding principle, tool, and disposition. This process yielded six initial codes aligned to the guiding principles of improvement science, seven codes aligned to the seven tools of improvement science, and six initial codes for the six dispositions of improvement science. For each guiding principle, strong evidence that the participant knew and used the principle in their work was weighted as two points, moderate evidence was weighted as one point, and weak evidence was weighted as zero points. When participants recognized the guiding principle and offered examples throughout the transcript demonstrating they used this principle in their work, we considered this strong evidence in support of their knowledge of the principle. When participants did not recognize the guiding principle, but the examples they gave throughout the transcript demonstrated they used the principle in their work, we considered this moderate evidence. When participants neither recognized nor indicated they used the principle, we rated this as weak evidence. A weighted coding schema allowed us to depict variations in improvement science fluency and its three component domains.

We followed a similar process for both tool use and dispositions, in which strong evidence was weighted as two points, moderate evidence was weighted as one point, and weak evidence was weighted as zero points. If participants could define how a tool was used, indicated they used a tool in their work, and gave an example of how they used the tool, we considered this strong evidence. If participants could define a tool but had not used the tool in their work, we considered this to be moderate evidence. If a participant neither defined the tool nor indicated they used it, we considered this weak evidence. When participants’ descriptions of their use of improvement science or the ways they approached problems of practice in their contexts demonstrated a disposition, such as persisting beyond initial challenges or seeking the perspectives of others to help them define and address problems, this was considered strong evidence in favor of the disposition. If no such evidence existed, we considered this weak evidence of the disposition. There was no moderate category for dispositions. This decision was informed by our dataset. Throughout participant responses, we found no moderate evidence of what might be considered an improvement disposition.
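As an illustration only, the weighting rules described above can be written as three small scoring functions. This is a minimal sketch under stated assumptions, not the instrument used in the study: the function names and boolean inputs are hypothetical, the example participant is invented, and the handling of cases the text does not describe (for example, a principle that is recognized but not used) is an assumption flagged in the comments.

```python
def score_principle(recognized: bool, used: bool) -> int:
    """Strong (2): recognized and used. Moderate (1): used but not recognized. Weak (0): neither."""
    if used:
        return 2 if recognized else 1
    # Assumption: the text does not say how "recognized but not used"
    # was scored; this sketch treats it as weak evidence.
    return 0


def score_tool(defined: bool, used_with_example: bool) -> int:
    """Strong (2): defined, used, and exemplified. Moderate (1): defined but not used. Weak (0): neither."""
    if defined and used_with_example:
        return 2
    if defined:
        return 1
    # Assumption: use without a definition is not described in the text;
    # this sketch scores it as weak evidence.
    return 0


def score_disposition(demonstrated: bool) -> int:
    """Strong (2) or weak (0); there was no moderate category for dispositions."""
    return 2 if demonstrated else 0


# Hypothetical participant, not drawn from the study's data.
principles = [score_principle(True, True)] * 4 + [score_principle(False, True)] * 2
tools = ([score_tool(True, True)] * 3
         + [score_tool(True, False)] * 2
         + [score_tool(False, False)] * 2)
dispositions = [score_disposition(True)] * 5 + [score_disposition(False)]

domain_scores = {
    "principles": sum(principles),      # six principles, maximum 12
    "tools": sum(tools),                # seven tools, maximum 14
    "dispositions": sum(dispositions),  # six dispositions, maximum 12
}
fluency = sum(domain_scores.values())   # maximum 38
```

For this invented participant the domain scores are 10, 8, and 10, for an overall score of 28 out of a possible 38.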

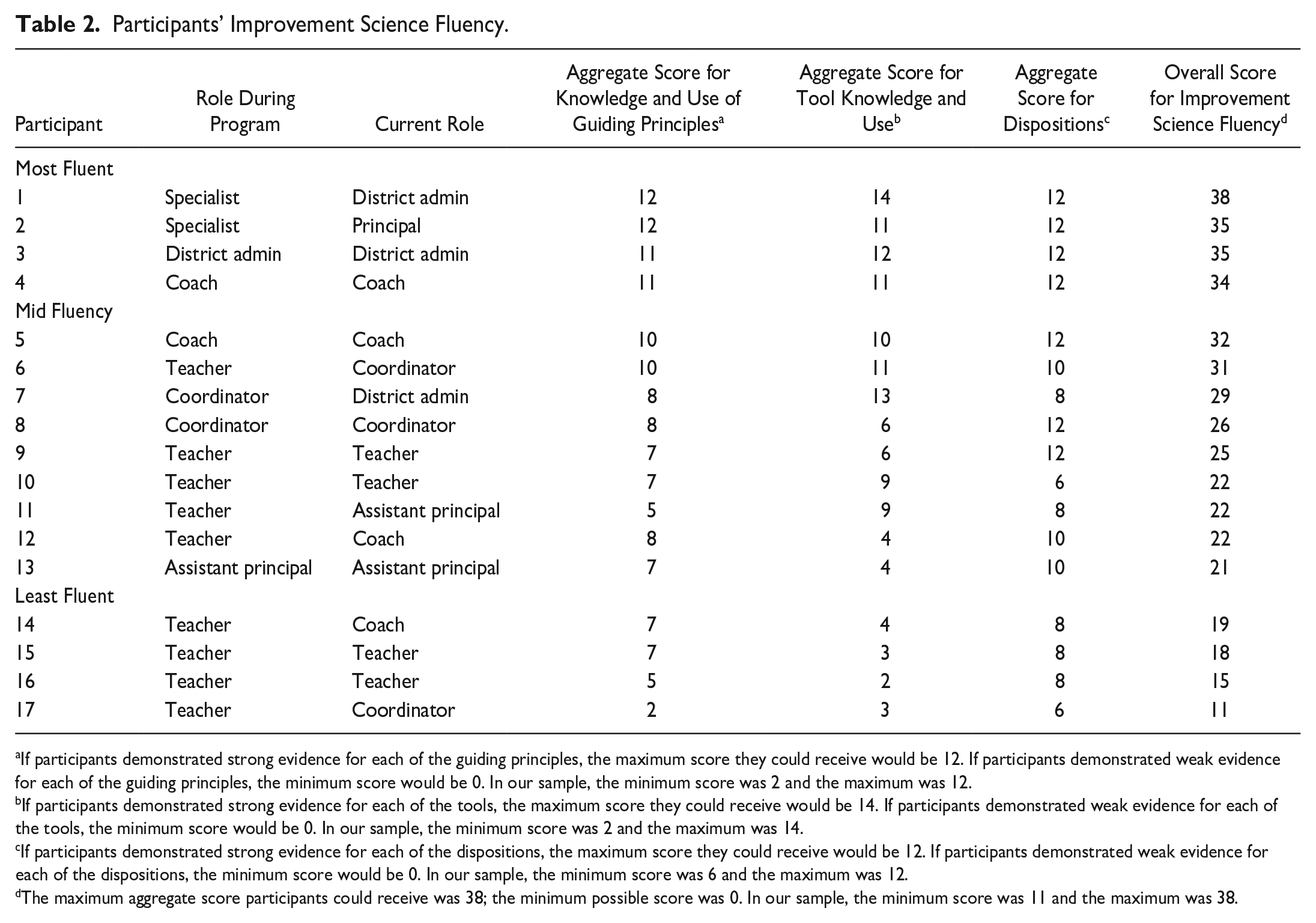

The research team then aggregated the scores in each domain, creating a domain-specific score for each participant’s knowledge of the principles, knowledge of the tools, and improvement science dispositions. We added domain scores into an overall aggregate score for each participant. These aggregate scores allowed us to represent which participants were the most and least fluent users of improvement science across these three domains. These domain-specific and overall scores are depicted in Table 2. The median fluency score was 25. The research team considered the most fluent users to be those in the highest quartile of overall scores, which consisted of participants 1, 2, 3, and 4. The least fluent users were considered those in the lowest quartile, which included participants 14, 15, 16, and 17. Throughout our analysis, we pay special, but not exclusive, attention to the most and least fluent users and to the conditions that may explain variation in their fluency.
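The aggregation and grouping steps described above can be sketched as follows. This is a minimal illustration under stated assumptions: the participant labels and scores are invented for the example and are not study data, the helper `fluency_groups` is hypothetical, and "quartile" is interpreted as the top and bottom `n // 4` participants by overall score. With 17 participants that interpretation yields four per group, which matches the group sizes reported in the text.

```python
from statistics import median

# Hypothetical domain scores, invented for illustration only (NOT study data).
domain_scores = {
    "A": {"principles": 12, "tools": 14, "dispositions": 12},
    "B": {"principles": 10, "tools": 12, "dispositions": 12},
    "C": {"principles": 10, "tools": 10, "dispositions": 10},
    "D": {"principles": 8, "tools": 10, "dispositions": 10},
    "E": {"principles": 8, "tools": 8, "dispositions": 8},
    "F": {"principles": 6, "tools": 6, "dispositions": 8},
    "G": {"principles": 4, "tools": 4, "dispositions": 8},
    "H": {"principles": 2, "tools": 3, "dispositions": 6},
}


def fluency_groups(totals: dict[str, int]) -> tuple[list[str], list[str]]:
    """Return (most fluent, least fluent) participants by overall score.

    Assumption: a quartile is the top or bottom n // 4 participants,
    with a floor of one so that very small samples still yield a group.
    """
    ranked = sorted(totals, key=totals.get, reverse=True)
    k = max(1, len(ranked) // 4)
    return ranked[:k], ranked[-k:]


# Overall score = sum of the three domain-specific scores.
totals = {pid: sum(scores.values()) for pid, scores in domain_scores.items()}
most_fluent, least_fluent = fluency_groups(totals)
median_score = median(totals.values())
```

With the invented scores above, the two highest-scoring participants form the most fluent group and the two lowest-scoring form the least fluent group.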

Table 2. Participants’ Improvement Science Fluency.

If participants demonstrated strong evidence for each of the guiding principles, the maximum score they could receive would be 12. If participants demonstrated weak evidence for each of the guiding principles, the minimum score would be 0. In our sample, the minimum score was 2 and the maximum was 12.

If participants demonstrated strong evidence for each of the tools, the maximum score they could receive would be 14. If participants demonstrated weak evidence for each of the tools, the minimum score would be 0. In our sample, the minimum score was 2 and the maximum was 14.

If participants demonstrated strong evidence for each of the dispositions, the maximum score they could receive would be 12. If participants demonstrated weak evidence for each of the dispositions, the minimum score would be 0. In our sample, the minimum score was 6 and the maximum was 12.

The maximum aggregate score participants could receive was 38; the minimum possible score was 0. In our sample, the minimum score was 11 and the maximum was 38.
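The possible score ranges in these notes follow directly from the number of codes in each domain and the two-point weight for strong evidence. The short sketch below reproduces that arithmetic; the variable names are illustrative, while the counts and the weight come from the coding schema described earlier.

```python
POINTS_STRONG = 2  # weight for strong evidence, as stated in the schema

# Number of codes per domain, as reported in the text.
codes_per_domain = {"principles": 6, "tools": 7, "dispositions": 6}

# Maximum possible score per domain: every code rated as strong evidence.
max_by_domain = {
    domain: count * POINTS_STRONG for domain, count in codes_per_domain.items()
}

# Maximum possible aggregate score across the three domains.
max_total = sum(max_by_domain.values())

print(max_by_domain)  # {'principles': 12, 'tools': 14, 'dispositions': 12}
print(max_total)      # 38
```

The minimum possible score in every domain is 0, because weak evidence carries zero points.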

Analysis

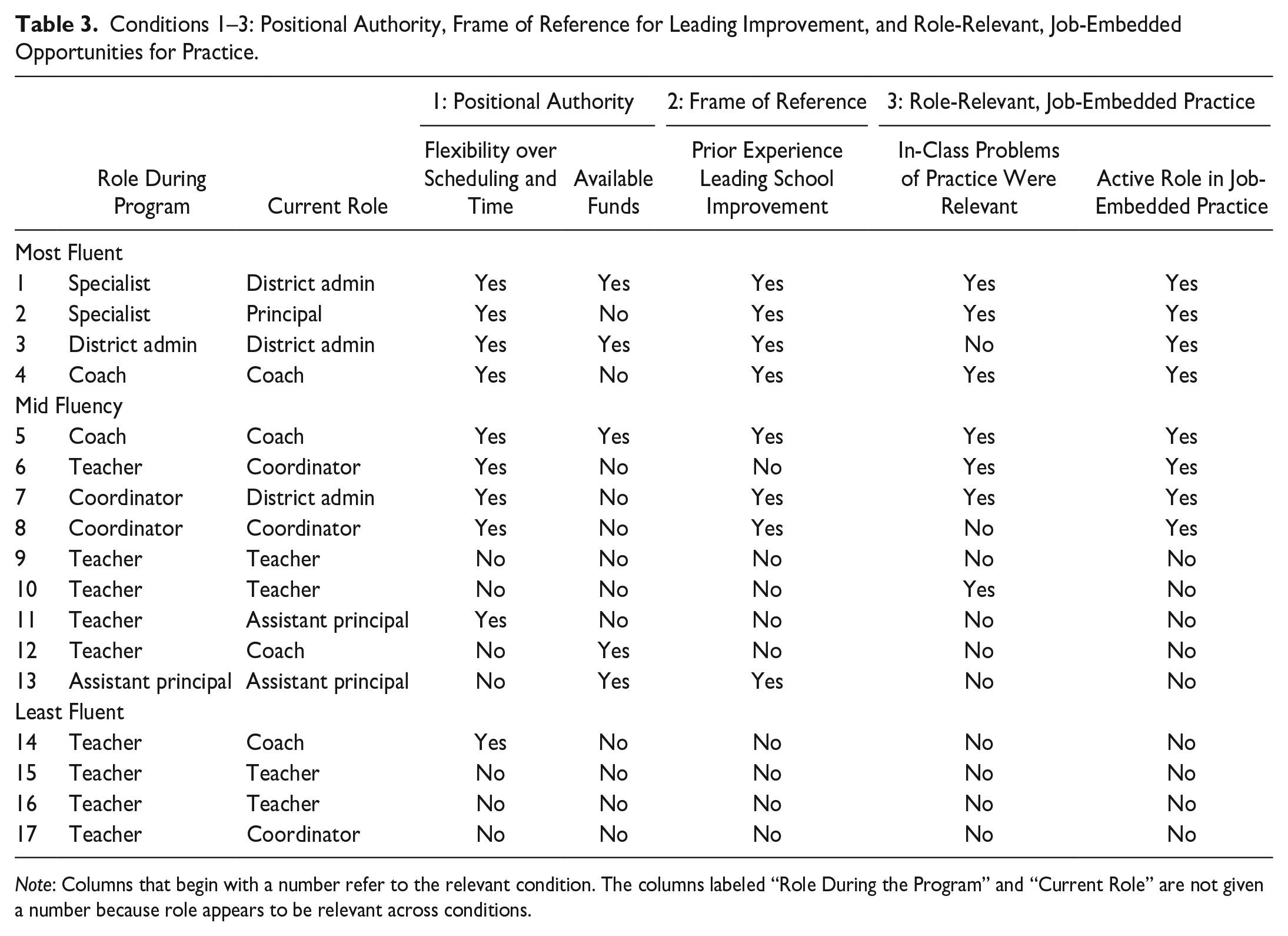

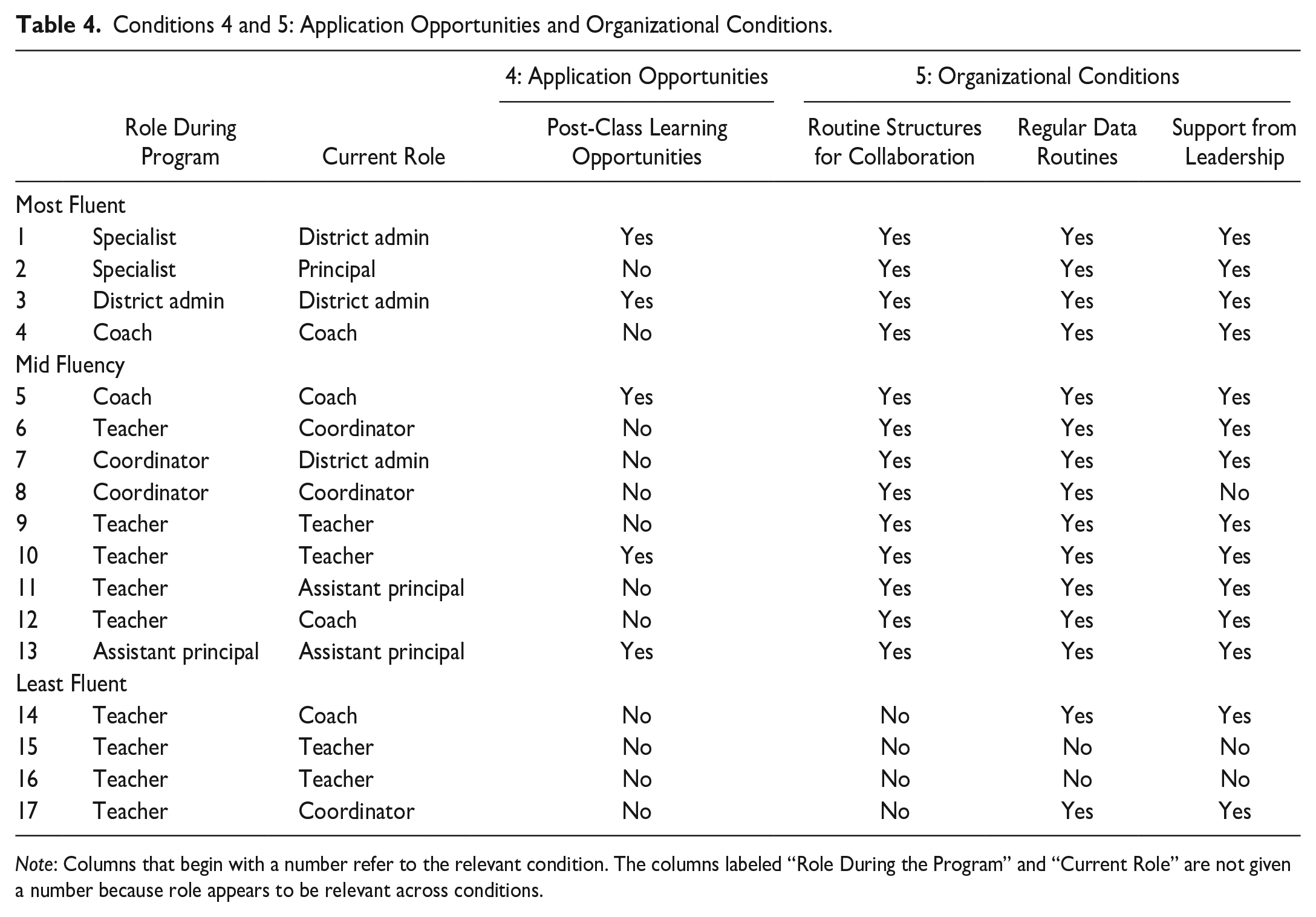

Once the research team understood the distribution of participants’ improvement science fluencies, the first author used open and axial coding to identify conditions that influenced graduates’ use and adaptation of improvement science. In the initial round of open coding, the research team focused on the conditions that supported or challenged participants’ understanding and use of improvement science. Together, we looked across these open codes for emergent patterns. Doing so revealed that these supporting and limiting conditions could be grouped into three categories: professional experiences prior to the course, experiences within the course, and conditions of the organizational environment in which leaders worked following the course. Looking across these patterns, we generated a set of descriptive codes within each category. These descriptive codes, which are depicted later in this paper in Tables 3 and 4, included flexibility over scheduling and time, availability of funds, prior experience leading school improvement, in-class problems of practice were relevant, active role in job-embedded practice, post-class learning opportunities, routine structures for collaboration, regular data routines, and support from leadership. Then the research team applied these codes to the transcripts using Dedoose software to explore their prevalence. This process was helpful because it allowed us to examine how the conditions reported by individual participants could offer a plausible explanation for the variation in improvement science fluency depicted in Table 2. Throughout our analysis, the research team sought disconfirming evidence by paying explicit attention to documenting contrasting cases. In analytic memos, we documented contrasting cases in our data set not only to enhance the robustness of our analysis, but also to acknowledge the complexity and potential variations within the data set.

Table 3. Conditions 1–3: Positional Authority, Frame of Reference for Leading Improvement, and Role-Relevant, Job-Embedded Opportunities for Practice.

Note: Columns that begin with a number refer to the relevant condition. The columns labeled “Role During the Program” and “Current Role” are not given a number because role appears to be relevant across conditions.

Table 4. Conditions 4 and 5: Application Opportunities and Organizational Conditions.

Note: Columns that begin with a number refer to the relevant condition. The columns labeled “Role During the Program” and “Current Role” are not given a number because role appears to be relevant across conditions.

Researcher Positionalities

All three researchers had prior experience with the improvement science course. One of the researchers had served as the instructor for the course, another as the teaching assistant, and the third had observed the course. This prior familiarity with the improvement science course proved valuable during our interviews because it enabled us to ask targeted follow-up questions, drawing upon our knowledge of the course. Furthermore, our research team comprised individuals with diverse professional, academic, and cultural backgrounds, and demographic characteristics such as race, age, and gender. This diversity of perspectives greatly contributed to our analytic process. Throughout data analysis, the research team actively challenged each other’s interpretations based on our individual experiences and histories with the course and improvement science in general. This collaborative process was instrumental in identifying and addressing our personal biases while also fostering a more comprehensive and nuanced understanding of the collected data.

Findings

Changing practice is often discussed as a function of will and skill (Marris, 1974; Spillane, 2017). All participants expressed interest in and a willingness to use improvement science in their work. The reasons participants expressed for their interest in improvement science could be grouped in three ways. First, 11 of 17 participants voiced they appreciated improvement science because it disciplined their approach to understanding complexity and solving problems. Second, nine participants reported that they gravitated toward improvement science because they felt it centers the voices of those most proximally experiencing the problem. For example, Participant #1, who used improvement science as a teacher and a school administrator, said:

I like [improvement science] because it allows for change to happen from teachers. You came up with the aim statement, you came up with your theory, and you tested it through iterative cycles. I felt like it allowed teachers to have the power to do change and change to come from within rather than consultants or other things saying, “Oh, this is what we know to work and we’re gonna impose this on you.” That’s the beauty of it. It’s deep change, and it’s improvement that comes from within, from the people that best know. It’s empowering.

Third, five participants reported that improvement science resonated with their identities as individuals who aim to continuously improve their practice or as individuals inclined to test new ideas. For example, Participant #4 spoke of how her disposition to engage in experimentation was an important reason she was motivated to apply improvement science in her own context.

As a teacher, I was always experimenting. Like a kid will have an idea and I’m like, “Let’s try it.” I would experiment and have fun with it and that would give another teacher a heart attack. I think that’s why for this class I was all in. I was fine, but I could see other people not feeling fine with it because it didn’t fit what they had expected or what they’re comfortable with.

As participants came to see the value of improvement science, they were motivated to take it forward into their own contexts. Yet, how they did so varied considerably. That each participant was motivated to use improvement science in their own contexts suggests the need to look for explanations of improvement science fluency outside individuals’ motivation or will. The remainder of this section focuses on skill and how practitioners are supported in developing skill. By comparing the most fluent practitioners to the least fluent improvement science users, we identified conditions before, during, and after the learning experience that have some explanatory value in understanding variation in improvement science fluency and why some practitioners may have been more successful in moving improvement knowledge into action in their school contexts. In the remainder of this section, we report findings on these conditions.

Conditions

By coding and analyzing the interview data, we identified five conditions: positional authority, frame of reference for leading improvement, role-relevant and job-embedded practice opportunities, post-class learning opportunities, and supportive organizational context. Throughout our findings section, we use the word “conditions” to describe the influences on improvement science fluency, yet we do so with some hesitation. We cannot say that these conditions are or are not necessary prerequisites to acting on new knowledge; rather, we have come to understand the conditions that follow as additive influences. The more supportive conditions a participant reported, the higher their improvement science fluency. The conditions we identified are discussed below and represented in Tables 3 and 4.

Condition 1: Role and Positional Authority to Act on New Knowledge

Role, during and after the program, appeared to explain variation in improvement science fluency. The four most fluent users held out-of-classroom leadership roles during the program and at the time of interview, whereas those in teaching positions during the program and at the time of interview were clustered near or below the median fluency score. Participants felt their role, during and after the program, structured authority over processes and resources. This authority appeared to support or constrain attempts to act upon improvement science knowledge.

Role and authority over process

Role influenced the relative power people had over their own and others’ schedules. The four participants who were teachers at the time of interview expressed that their tightly scheduled days, filled with instructional and administrative tasks, challenged their attempts to engage the tools and apply the core principles of improvement science. They had little control to restructure their work so that they could collect data, plan PDSA cycles, or bring others together in the type of collaborative work that improvement science demands. Participant #16, a special education teacher, summarized his difficulty in bringing his colleagues together to collaborate and collect data for improvement science.

It’s difficult to get people to meet on their own time, which I don’t blame them for. Teaching is a really tough job and improvement science, actually improving anything, takes a lot of time. I don’t think it’s that people don’t want to improve. I think it’s the operational aspect that really gets in the way of things. Operations as in you have to invest a lot of time. You have to have meetings, pass out surveys, and do all sorts of stuff. It’s what makes improvement hard; it takes a lot of time.

By contrast, those in non-teaching roles had more control over their or others’ work processes and schedules so that they were better able to clear competing commitments, for themselves and others, to foster collective participation in improvement science projects. The most fluent users of improvement science, all of whom were in non-teaching roles, were able to reconfigure existing processes and responsibilities so that their use of improvement science was integrated into, rather than added onto, their task list. For example, the three district administrators in the sample reported that improvement science helped them craft agendas, strategize for improvement, and carefully facilitate the selection and definition of bounded but complex problems experienced across contexts. These district administrators were already engaged in these activities, but using improvement science gave them a new set of tools and a framework for meeting the expectations of their role. They used their authority over process to reconstruct their schedules and how they met the professional expectations of their role. In doing so, these participants deepened their understanding of improvement science through repeated opportunities to learn while leading and to lead while learning.

Role and authority over resources

In addition to authority over process, role influenced authority over resources, especially discretionary funds. Discretionary funds allowed participants to allocate more resources to their improvement efforts. One resource participants funded was professional development. Three of the five most fluent users of improvement science reported funding was made available to them, which they used for their improvement science projects. Another important use of discretionary funds was to incentivize teacher participation. Improvement science is predicated on engaging frontline workers, and in education, these frontline workers are typically teachers. Teacher participants reported that their days are typically tightly scheduled and their workloads heavy, which required improvement leaders to address competing commitments. Because they did not feel able to ameliorate these competing commitments directly, Participants #3 and #5 provided stipends to incentivize teachers’ participation in improvement science projects. In contrast to the most fluent users, none of the least fluent users reported available funds to support their improvement science projects.

Condition 2: Frame of Reference for Making Sense of New Knowledge

Participants made sense of improvement science instruction through the lens of their current role and their previous experience. In the course, improvement science was presented as a school improvement framework, rather than as a framework for lesson study or instructional improvement, as others have used it. Given this framing, those without experience leading school improvement were more likely to report trouble understanding why improvement science might be useful for their practice. Those in teaching roles were less likely to report prior experience leading school improvement. For example, during the program, Participant #17, who was working as a teacher, struggled to make sense of improvement science. However, when she moved to a new leadership role post-graduation, she began to see the value of improvement science for her work.

I think that what I was lacking maybe when I did take [the class] was even though I provided support to the administration at the time, it’s nothing compared to right now that I’m out of the classroom. So I feel that there was a difference as to improvement science within the classroom, and then now taking it out of the classroom on bigger projects or projects that entail the whole school instead of just my classroom.

Conversely, those who came to the program with experience in out-of-classroom roles, such as instructional coaches, coordinators, and school leadership, had prior experience managing improvement across classrooms. For example, Participant #8, a Title I coordinator, discussed how the experience of working in an out-of-the-classroom administrator role gave her a frame of reference for making sense of improvement science and its potential utility to the work she was engaged in daily. Throughout the class, she saw parallels to work she was already engaged in, which helped her draw connections to how improvement science might help discipline and further her efforts to improve outcomes and experiences for students.

I was already doing a little bit of the type of work that the class led us in the direction of doing. But I think [the class] helped to shape it so that I could do it even better. I had gone from being the Title III coordinator and working with long-term English learner teachers, where I piloted different strategies with them and looked at lesson design. I wouldn’t say it was my job to pilot, but it was my job to figure out how to improve the English Learner Program and how to help kids gain proficiency. So, I had already been doing some piloting the class taught.

Participant #8’s experience piloting solutions to the complex problems that emerged in her work served as a frame of reference that helped her construct her understanding of how improvement science might be useful in her role. She was able to lean on her experience piloting new ideas as a lens to understand and see the value of improvement science, which holds iterative experimentation as a core principle.

Condition 3: Role-Relevant and Job-Embedded Problems of Practice

All participants reported that improvement science was difficult and sometimes confusing to learn. Given this difficulty, participants appreciated opportunities to apply new concepts and tools on problems of practice. However, problems of practice varied in how useful practitioners found them. According to participants, problems of practice were most useful as learning fodder when problems met two criteria: (1) they were relevant to the work that participants were currently engaged in; and (2) participants were able to apply what they were learning to their school context. We discuss each criterion.

Role-relevant problems of practice

During the program, participants held different roles across diverse school contexts. At the beginning of the course, participants self-selected into improvement groups, where they navigated group dynamics in selecting and defining a problem they would examine over the next 10 weeks. In this process of navigating and compromising, some participants found themselves in groups working on a problem that was either not interesting to them or not relevant to their role. Ten participants, who were clustered near or below the median fluency score, reported this process of negotiation frustrated their experiences using improvement science because they were not interested in the problem or did not feel it was relevant to their work. The five least fluent users had difficulty recalling the precise problem they worked on during class, though some had graduated as recently as a year before.

In contrast, four of the five most fluent users of improvement science reported that, compared to their classmates, they went out of their way to apply improvement science on a problem of practice that was interesting to them and relevant to their current role. They did so even when it entailed finding opportunities to practice that went beyond the requirements of their class project. Both Participants #1 and #2 felt their group project was neither interesting nor relevant to the daily work they were engaged in, so they crafted opportunities to apply improvement science in their schools outside their class project. Speaking of why he did not remember his class project but did remember many of the tools and principles, Participant #2 said:

I think the fact that I can remember the tools that I used and the people that I was with, but not necessarily the problem that I was facing, is just illustrative of something that it seemed like some of my classmates were missing from the class, too, and some of the frustrations that they had because I think it was trying to discover a problem that may or may not have been relevant to them in the time. If I compared my experience with my classmates’, I think that’s probably the biggest difference. I was able to apply what I was learning on another problem that I had chosen.

Job-embedded opportunities for application

As Participant #2 underscores, there was a clear connection between a participant’s role during the program and the degree to which they felt able to apply improvement science tools and principles. The five least fluent users did not report a particularly active role in applying the tools and principles within their own school context during their time in the leadership course. However, many of those in out-of-classroom roles during the program spoke of how they were able to leverage the freedom of their role to better apply what they were learning. Participant #2, who at the time of the program was an out-of-classroom educational technology specialist, went on to say:

I had a little bit more freedom to do the work because I was not in the classroom at the time, and the rest of my group was. So, I remember there being more of a focus on my site because I was able to have more flexibility to run around and have conversations and collect data there.

The same sentiment was echoed by Participant #4, who said:

I think that my group leaned on me pretty heavily because I was able to get out. Everybody else was talking about getting release time or going down a conference period, and I just said, “Let me just make this part of my day, and I’ll collect data.”

In summary, though all participants had opportunities to engage with problems of practice during the course, their experiences varied according to two criteria: (1) the relevance of the problem to their role and interests, and (2) their opportunities to engage with the problem of practice. In the context of moving knowledge to action, these criteria suggest that learners need a certain amount of organizational freedom and a problem of practice that parallels the work happening in their school.

Condition 4: Post-Class Opportunities to Extend Learning

A fourth condition that appeared to explain variation in improvement science fluency was the availability of and engagement in post-class learning opportunities, such as coaching, workshops, professional development programs, or networked improvement communities. Five participants received ongoing or extended improvement science training after they graduated from the program, including three of the five most fluent users. The most fluent user, Participant #1, took advantage of multiple post-class learning opportunities, including attending an annual improvement science summit, seeking new resources, and participating in improvement science facilitation training. Reflecting on how this training was helpful, Participant #1 said:

In my opinion, [learning improvement science] has to be a parallel track where you’re introducing things slowly and with real improvement projects that are happening at the school site and building knowledge as you go. Because it’s hard to learn everything at once. There’s so many different things and some of them are especially difficult and you really don’t know when to use them. Because of my training, I’ve gotten much better about understanding when to use some of those things than I did when I was in class.

In contrast, none of the least fluent users of improvement science reported formal post-class opportunities for practice, despite their expressed interest in more resources and training opportunities. Although there was a general appetite for more support and resources to deepen knowledge of improvement science, we noticed a trend that those occupying higher leadership roles were more likely to report post-class opportunities to extend and act upon their improvement science knowledge.

Condition 5: Supportive Organizational Context

The final set of conditions pertains to the organizational context in which participants worked. We briefly discuss these conditions, which are also depicted in Table 4. First, participants reported the importance of frequent and consistent structures for collaboration, including professional learning communities, cohort meetings, and team teaching structures. All but the four least fluent users of improvement science reported their organization had frequent, consistent, collaborative structures that supported their use of improvement science. These less fluent users of improvement science met less regularly with their colleagues, and their meetings were often overridden by action items unrelated to their improvement efforts. For example, Participant #15 reflected on how she struggled to find time to collaboratively measure, monitor, return to, and use data in the improvement process.

We usually have about one hour a month that’s reserved to meet, and that’s not enough time to address people’s concerns and try to do data analysis and all that stuff, so allocating time also for grade levels to meet to do this like designated time for that [is important].

In contrast, the most fluent users met more frequently with their colleagues and reported designated times specific to their improvement science projects. For example, Participant #1 reflected on the need for regularly scheduled meetings as a precondition for his work using improvement science with the principals he supported as a district administrator.

I was able to convince the school site principal to do weekly meetings for both schools, and that was really important. If you met twice a month, then you made much less progress. So actually, I think we met once a week with all three schools, but I know there’s a lot of other schools in my district that meet twice a month, and they didn’t make much progress at all. It has to be a separate space, and it has to be a regular. You have to have a regular rhythm in which you’re meeting on a weekly basis to make any kind of, I think, substantial kind of progress or some kind of progress.

Second, participants reported their efforts to deepen and apply their knowledge of improvement science were aided by regular routines and habits of examining data, returning to data, and using data to plan for improvement. All but two participants worked in organizations that, as they described, fit this description. These two participants (#15 and #16) were among the four least fluent users of improvement science.

Third, participants understood the importance of leadership. All but three participants reported they had the support of administrative and instructional leaders at their schools to try new ways of working and to bring others together in collaborative action. Their school leaders might not have been familiar with improvement science, yet they trusted participants to use improvement science. The three participants who did not report such trust spoke of needing to negotiate the expectations and preferences of school leadership. They often did not feel they had the autonomy to restructure their work or bring others together in using improvement science. Without leadership trust, they were forced to adapt their practice so that their improvement science projects were more private, less iterative, less frequent, and increasingly siloed from other colleagues and classrooms. In these cases, although participants were mobilizing new knowledge, they were often doing so in ways misaligned with improvement science principles.

Contrasting Cases

In our analysis, we documented contrasting cases to acknowledge the complexity and potential variations within the data set. In doing so, two interesting cases emerged: Participants #10 and #13. These participants reported many of the conditions that appeared to explain some of the variation in improvement science fluency. They were the only two participants in this sample who worked in organizations with organization-wide resources, training, and explicit expectations to use improvement science. Both had organization-wide protocols and materials to help them use improvement science. Through their organizations, both had been exposed to improvement science before enrolling in the leadership preparation program. Both reported leadership support for using improvement science. Both reported consistent, frequently scheduled, collaborative meeting times, and both reported organization-wide routines and expectations of using data for improvement. It is therefore surprising that both of their improvement science fluency scores fell below the median.

One plausible explanation may lie in the distribution of improvement work within organizations. Because improvement science was an expected and shared practice in these organizations, others on the team may take on more of the heavy lift of engaging improvement science tools and principles, giving these individuals less opportunity to apply what they have learned. There is some support in the interview transcripts for this explanation. As an assistant principal, Participant #13 worked as a member of her school’s Culture and Climate team, which met regularly to analyze and monitor culture and climate data. In the examples she gave throughout the interview, she made it clear that the work she engaged in and spoke of was a team activity. Likewise, Participant #10 spoke of how, over the years, his department had begun to use improvement science more independently and less collaboratively. As participation in a formal collective practice of improvement science waned, he reported his improvement science practice became less routine, less formal, and less disciplined. It may be, then, that as individuals learn improvement science as part of their team, their understanding of it is highly mediated by their role within the team and how, given that role, they are able to apply what they have learned.

Furthermore, it could be that in our measurement of improvement science fluency, we overestimated the extent to which these two participants displayed evidence of improvement science dispositions. This is to say that by looking at their descriptions of their practice to determine the presence of these dispositions, we may have simply captured their rote participation in institutional norms, rather than well-established inclinations toward the improvement science approach. The implications of this could support our assertion that these conditions are additive, yet also may be an indication of the value that observations might have brought to this inquiry had they been feasible. Although these are plausible explanations for an interesting occurrence in the data, they deserve greater consideration in future work.

Discussion

We begin this section by examining the unexpectedly powerful influence role appeared to exert on efforts to use new knowledge. We then offer design considerations for professional development programs to help practitioners accelerate moving knowledge into action.

The Importance of Role and Slack in Practice Improvement

Though we had not anticipated it, the importance of role before, during, and after the learning experience emerged as a particularly salient theme. This is not to suggest classroom educators cannot learn or practice improvement science. On the contrary, participants in this study demonstrated remarkable diligence in their efforts to integrate improvement science into their classrooms and their everyday work. We were inspired by the ways classroom educators worked to make improvement science usable to their role and their continued investment in practicing what the course offered. They did this in the face of enormous obstacles that crowded their time, impeded their autonomy, and restricted their capacity to act on new knowledge. In the project of getting knowledge into action, we should not assume that teachers are unwilling to act upon professional development experiences or inherently lack some skill to do so. Rather, they exist within organizational contexts and roles that complicate efforts to improve practice. Professional development that expects to generate change in practices must address the constraints associated with role that greatly challenge teachers’ efforts to change their practices.

The importance of role is particularly relevant to improvement science, which posits the importance of engaging frontline workers. In schools, the term frontline workers typically refers to teachers. However, the finding that those who were teachers during the program and at the time of interview tended to have lower fluency scores underscores a challenge for improvement science scholars and advocates. It highlights the specific supports teachers might need and the considerable challenges they must overcome to integrate improvement science into their professional routines. If we do not address the problem of overburdened, tightly scheduled teachers, we should continue to expect improvement to be the exception rather than the rule. We need to build educational infrastructures that make way for teachers to engage in and lead this work. Improvement scholarship should further explore the power and challenges associated with role and how school leaders can explicitly attend to teacher capacity if they desire robust and sustainable engagement by teachers in improvement projects.

The influence of role implies a set of experiences, practices, and resources that support acting on new knowledge. Role appeared to structure practitioners’ authority over time, money, and people; their frame of reference for understanding and drawing connections to the content presented in the learning experience; their opportunities for practice and application; and their access to continued training and coaching. These experiences and assets are distributed unequally and hierarchically across roles, such that those in leadership are better supported in moving knowledge to action. The concept of slack is useful in understanding why role appeared to exert such a powerful influence on knowledge to action. Organizational slack refers to the pool of resources, such as time and money, that eases adaptation to the ebbs and flows of the innovation process (Grossman & Shapiro, 1987), promotes experimentation (Bourgeois, 1981; Nohria & Gulati, 1997), and lessens managerial control (Cyert & March, 1963). In organizations with low levels of slack, managerial attention “is likely to be consumed by short-term performance issues rather than by long-term, innovative projects” (Nohria & Gulati, 1997, p. 605). This description maps well onto the traditional organization of schools, where cultures of compliance and urgency may impinge upon the collaboration and freedom for innovation.

Although slack tends to be operationalized as a characteristic of organizations, this study highlights the ways that slack is distributed unevenly across roles within schools, such that those in leadership roles experience greater slack than classroom educators. Findings from this study suggest that within educational organizations, slack may predominantly be a characteristic of role. If there is an optimal amount of slack for supporting innovation at the organizational level, it stands to reason that there is likewise an optimal amount of role slack needed for innovation. Evidence from this study suggests the rigid nature of an educator’s workload and the professional expectations they are held to may not be optimal for practice innovation.

Before concluding this discussion of the influence of role, we consider how the professional development program of this study may differ from others in that it was role-diverse. Participants, interested in earning their leadership credentials and preparing for social justice school leadership, completed the program as teachers, instructional coaches, coordinators, and assistant principals. Other professional learning programs may cater to less role-diverse cohorts. For these learning contexts, we believe that findings from this study suggest the importance of framing connections between one’s prior experience and the content presented in the course. Those who came to the program with formal leadership experience were better able to understand why and how they might incorporate improvement science into their work because improvement science was presented as a school improvement framework. Perhaps if the class had been more fully centered on how improvement science could support effective instruction, those in teaching roles might have been better positioned to understand and act on improvement science knowledge. The point we make here is that programs should support practitioners in fostering connections between prior experience and new knowledge for action.

Recommendations for Supporting Knowledge to Action

Given the discussion presented above, what are the implications for instruction and for designing effective professional development? We elevate a set of areas for further inquiry that may provide support to those who teach and design improvement science courses.

Activate prior knowledge to prepare for future learning: Those with experience leading change in school improvement before and during the program leaned on their experiences of leading others, using data, and strategizing for improvement to make sense of and draw connections to the principles and tools of improvement science. This is analogous to what Schwartz and Martin (2004) labeled “transferring in” knowledge, referring to the ways that learners make sense of new situations and ideas using prior knowledge and experiences. The interpretations that practitioners “transfer into” a learning context can serve as a productive sensemaking tool, but they may also interfere with identifying new possibilities for action. Such interference was evident among classroom educators in this study, who struggled to understand how they might mobilize improvement science in their contexts. Transferring in was also evident for the two participants who had prior encounters and experience with improvement science, which had primed them for moving new knowledge from professional development to action in their contexts. In their interviews, the participants underscored how the new knowledge from the course helped them make sense of their past experiences with improvement science, and they were able to use past experiences to understand how to move that new knowledge into action. This study highlights that the challenge for instructors is how to support learners in transferring in knowledge that is conducive to learning new materials and practices.

Attend to the importance of the learning problem: Building on prior research on goal-oriented professional development (e.g., Fluckiger et al., 2014), findings from this study emphasize that opportunities to learn through doing are most successful in supporting knowledge to action when they are role-relevant and capitalize on practitioner interest. Working through problems of practice was an important learning aid to some, but to others, this was problematic, especially for those who were working on problems that they were uninterested in or unable to engage with. When participants were unmotivated by their problem, their engagement waned, and their fluency scores were lower. We recommend that professional development programs make every effort to ensure that practitioners can select a learning problem that is interesting to them and relevant to the everyday work they are engaged in as part of their role.

Scaffold for role: Research suggests that job-embedded training is more effective than training that is removed from application contexts (Ball & Cohen, 1999; Desimone, 2011). Yet, how this training is embedded deserves greater attention. Our research suggests that certain roles may find it harder to change their practices because of the distribution of power within their community of practice and the organizational slack that follows such power. As discussed, it is our contention that educators are just as capable of learning and leading improvement science when given the knowledge and tools to do so. However, they may need support carving out opportunities to engage more deeply with the knowledge presented to them. Organizations and individuals that facilitate professional learning programs should not assume everyone has the slack to practice what they have learned. This requires that they scaffold and support practitioners in their efforts to practice what they learn within their professional contexts.

Address organizational context: Engle (2006) writes, “If learners have chosen to use something they know in a new context, then it is likely that the context is one in which it is desirable, appropriate, or at least socially acceptable for them to do so” (p. 455). If the goal is to move knowledge to action, professional development must provide resources, support, and learning communities that frame acting upon knowledge as something that is desirable, appropriate, and socially acceptable. This requires attending to the organizational conditions of the transfer context, which influence opportunities for deepening and practicing knowledge. One implication of this finding is that programs may support moving improvement knowledge to action by helping students better understand their organizational context, the ways that improvement science fits into what already exists, and the ways that they might frame improvement science with their colleagues to gain more support.

Offer follow-up learning opportunities: Prior research suggests that professional learning programs should provide or forge partnerships that support follow-up learning opportunities (Desimone, 2011; Franke et al., 2001). Our study demonstrates that, regarding improvement science, these learning opportunities are typically made available to those in leadership positions. However, classroom educators expressed a desire for greater opportunities to understand how to integrate improvement science into their practice. Professional development providers can support ongoing learning by fostering, facilitating, and sustaining an ongoing community of practice that includes cohorts of learners who completed the professional development program across time and space. In these communities of practice, learners can crowdsource solutions, learn from others’ challenges, and connect to opportunities for training and coaching.

Thus far, we have discussed the project of getting knowledge into action and the project of developing improvement science fluency as one and the same. Given that our definition of improvement science fluency incorporates not only what participants know, but also what they do with that knowledge and the dispositions that support those actions, we have considered it a relatively good proxy for understanding knowledge to action. However, the organizational conditions we have presented may very well be specific to improvement science. The core principles of improvement science demand using data and collaborating with others. It is therefore not surprising that in contexts where these organizational conditions were weaker, practitioners had fewer opportunities to continue practicing improvement science knowledge. In the more global project of understanding the conditions that influence getting knowledge into action, findings from this study emphasize that not all contexts are equally conducive to practicing new professional learning. If the ultimate goal of professional development is to support practice improvement, instructors and organizations that provide professional development must understand and as much as possible attend to the inertial or innovation-supportive qualities of organizational context.

This study highlights important opportunities for future research. A limitation of this study is that it does not incorporate observational data of participants’ practices. We recommend that future research incorporate observational and longitudinal designs to better understand how practitioners change the core of their practice to align with improvement science learnings and how they adapt improvement science to fit their professional contexts. Moreover, the extent to which the class experience developed the dispositions for taking improvement science forward, rather than capitalizing on pre-existing dispositions, is unclear. Of the three domains of improvement science activity, aggregate scores for dispositions tended to be the highest. In addition, many participants spoke of having a disposition to reflect, inquire, and experiment before the class. The high disposition scores and participant reflections on their pre-class dispositions suggest that pre-existing dispositions may have served as what Spillane (1999) refers to as “personal resources” for learning improvement science and for acting upon new knowledge. Future research should explore the development of improvement science–aligned identities and dispositions. Finally, we acknowledge that the level of specificity regarding contextual details in our study is limited. We recognize that the absence of in-depth information about specific teachers, their individual talents, and the nuanced dynamics within professional communities may restrict the transferability of our findings to on-the-ground, in situ contexts. Additional research should explore with much greater attention how these organizational and professional features impact knowledge to action.

Conclusion

This paper was organized around two purposes. First, we were interested in better understanding how to generally support practitioners’ learning journey from encountering to acting on new professional knowledge. Second, we were interested in specifically exploring how practitioners learned to use and lead improvement science. We explored these purposes using the concept of improvement science fluency in order to identify a set of conditions that appeared to influence how practitioners were able to act on new knowledge. This exploration underscored that, within professional development programs, changing practice is often discussed as a function of will and skill. The assumption goes that if we foster a person’s motivation to enact or engage in reform and develop their capacity to do so, we can then effectively support them in practice innovation. However, practices are social activities deeply connected to both content and context (Engle, 2006; Tobin et al., 2005). Our discussion highlights that getting knowledge into action must include an enormous amount of attention to context before, during, and after the learning experience. This includes attending to practitioner role and the organization in which practitioners operate. These findings help us make partial sense of why reform or professional development often struggles to generate substantial changes in practices when it devotes the bulk of its attention to the content or format of the in-class learning experience. What occurs during the professional learning experience is only one component of supporting innovations in practice. Effectively transforming practice entails transforming will, skill, and context. It requires supporting practitioners before, during, and upon maturation from the professional learning context.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.