Abstract

Nonlinear and interactive effects (NIEs) are central to management theory. Although researchers commonly include linear control variables, omitting nonlinear and interactive control variables (NICs) can lead to incorrect conclusions because the omission can distort statistical tests and effect-size estimates. We reviewed 548 quantitative articles published between 2021 and 2023 in Academy of Management Journal, Journal of Management, and Strategic Management Journal. We found that about 73% tested for NIEs, but only 3% of those included NICs. Also, by reanalyzing a published study, we demonstrate that the exclusion of theoretically relevant NICs can reverse substantive conclusions, highlighting the threat such omissions pose to theory advancement. To address this methodological challenge, we introduce a five-step guide for systematically identifying, evaluating, and integrating NICs into research involving NIEs. The guide offers a structured, theory-driven approach that uses associations among model variables and their linear controls to determine which NICs are critical for unbiased estimation of NIEs. We also explain how to avoid over-control, maintain statistical efficiency, and transparently manage omitted NICs. Applying the five-step approach strengthens the validity of causal inference in studies of nonlinear and interactive effects and enhances the robustness of empirical results. In addition to improving estimation accuracy, the systematic and theory-based inclusion of NICs advances theory development by clarifying boundary conditions, distinguishing competing explanations, and enabling the cumulative integration of empirical results.

Nonlinear and interactive effects (NIEs) are central to management theory (Aguinis, 2025; Haans, Pieters, & He, 2016; Murphy & Aguinis, 2022). NIEs refer to any departures from linear relationships (Becker, Robertson, & Vandenberg, 2019; Rönkkö, Aalto, Tenhunen, & Aguirre-Urreta, 2022). The two most prominent forms of NIEs emerge when the effect of X on Y varies with the level of X itself, yielding quadratic or higher-order patterns (Haans et al., 2016), and when it varies with a third variable, yielding interaction (also called moderating) effects (Aguinis, Edwards, & Bradley, 2017). NIEs are central to macro-level theories such as optimal distinctiveness, dynamic capabilities, and entrepreneurial orientation (e.g., Soublière, Lo, & Rhee, 2024; van Angeren, Vroom, McCann, Podoynitsyna, & Langerak, 2022; Wales, Parida, & Patel, 2013). NIEs also play a critical role in micro-level theories such as rivalry, goal-setting, and psychological contrast theories (e.g., Mo & Andiappan, 2024; Schilpzand, Houston, & Cho, 2018; Shin & Grant, 2019).

While it is well known that linear control variables address potential confounding effects in linear relationships resulting from other omitted linear effects (e.g., Aguinis, 2025; Becker, Atinc, Breaugh, Carlson, Edwards, & Spector, 2016; Bernerth & Aguinis, 2016; Spector & Brannick, 2011), contemporary management research largely neglects threats from omitted nonlinear and interactive confounding effects. Linear controls do not address the challenges posed by the omitted nonlinear and interactive controls when estimating NIEs. Other NIEs can correlate with a focal NIE while being unrelated to the linear variables in the model and can independently affect the dependent variable beyond the effects of those linear variables. Omitting such NIEs, therefore, creates an omitted-variable problem that is not resolved by including linear controls alone. Hence, controlling for other NIEs can be critical for accurately estimating and testing the focal NIE. Not addressing this methodological problem can result in misleading conclusions about the effects estimated and predicted by substantive management theory.

As we uncovered in the literature review described later in our article, although approximately 73% of quantitative articles published in management journals estimated and tested NIEs by including nonlinear terms in the models, only about 3% of those included nonlinear and interactive terms as control variables in the analysis. Accordingly, we propose the systematic use of nonlinear and interactive control variables (NICs) to identify and evaluate nonlinear and interactive effects more accurately. Our suggestions build upon but go beyond linear control strategies (Aguinis, 2025; Becker et al., 2016; Bernerth & Aguinis, 2016) and prior work on curvilinear and interactive confounding effects (Aguinis, 2004; Cortina, 1993; Feigenberg, Ost, & Qureshi, 2025; Ganzach, 1997; Lubinski & Humphreys, 1990; MacCallum & Mar, 1995; Shepperd, 1991; Yzerbyt, Muller, & Judd, 2004). Building on this research stream and generalizing it, we introduce a step-by-step guide for NIC usage that identifies relevant NICs based on the associations of linear terms combined with the systematic replacement of linear terms in NIEs. This replacement rule does not merely serve as a technical device for bias reduction; it also functions as a theory-advancing heuristic that makes competing mechanisms explicit.

Our approach complements contemporary work on omitted-variable bias, sensitivity analysis, and the estimation of nonlinear and interactive effects (Angrist & Pischke, 2009; Cinelli, Forney, & Pearl, 2024; Dawson, 2014; Haans et al., 2016; Wooldridge, 2016). Our contribution lies in introducing a systematic identification of alternative explanations for NIEs. This identification enables more precise theory testing by pinpointing the necessary nonlinear and interactive control variables, with additional implications for theory development and theory integration. To demonstrate the practicality and value of the proposed step-by-step guide, we focus on theory testing and apply it to replicate studies by Mo and Andiappan (2024). Specifically, after we included an NIC in their model in one of their studies, the originally statistically significant NIE changed direction and became nonsignificant. In the second of their studies, the effect remained robust. Thus, the appropriate use of NICs in study design and data analysis can alter or further support substantive conclusions. Our guide therefore raises awareness of the problem and provides researchers with specific actions they can take to anticipate critical boundary conditions and alternative explanations for observed and hypothesized NIEs. When testing competing theories or combining them, our systematic five-step procedure also helps identify alternative explanations that emerge from the interplay of constructs from different theories. It thereby not only enables more robust theory testing but also improves cumulative knowledge.

The remainder of our article is organized as follows. First, we explain the mechanism behind the omission of NICs and illustrate the consequences of this omission. We do so by analytically deriving the omitted NIC bias, reanalyzing a published study, and conducting a systematic review of NIC usage in prior management research. Second, we present the step-by-step guide for NIC usage along with best practices. These five steps cover identifying NICs systematically, using them appropriately in regression analyses, and revisiting research that omits critical NICs. We also discuss estimation efficiency as a reason for excluding identified NICs. Additionally, we demonstrate how the guide helps more accurately assess NICs by systematically identifying necessary terms in a reanalyzed study. Lastly, we summarize the guide’s contributions, address the limitations of our study, and explore promising opportunities for future research.

The Omitted Nonlinear and Interactive Control Variable Problem When Assessing Nonlinear and Interactive Effects

In this section, we explain the mechanism behind the omission of NICs that leads to a bias in NIE estimates and derive the bias magnitude. We illustrate this problem through a reanalysis of a published study. Finally, we describe a literature review that assessed the pervasiveness of the omitted NIC problem in management research.

Bias Emerging from the Omitted NIC Problem: Analytic Explanation

As with linear effects, NIEs are usually hypothesized in terms of causal mechanisms. The identification requires distinguishing a hypothesized NIE from plausible alternatives that would produce the same outcome (Shaver, 2020). Estimated NIEs can be challenging to distinguish from other nonlinear and interactive effects (e.g., Cortina, Dormann, Markell, & Keener, 2023; Ganzach, 1997; Lubinski & Humphreys, 1990; MacCallum & Mar, 1995; Shepperd, 1991). First, what looks like the effect of predictor X being moderated by another correlated predictor Z may instead reflect X moderating its own effect; that is, a quadratic effect of X (Ganzach, 1997; Lubinski & Humphreys, 1990; MacCallum & Mar, 1995). Second, what appears to be Z moderating the effect of X on Y could actually be an antecedent C of Z that moderates the effect of X on Y, rather than Z itself (Cortina et al., 2023). Third, C affecting Z is an unnecessarily restrictive assumption for identifying potentially confounding effects. Any variable C that is causally driven by antecedents of Z causes the same omitted NIC problem.
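The second scenario can be illustrated with a small simulation. This is a minimal sketch on synthetic data, not a reproduction of any analysis in this article: the sample size, the coefficient values, the correlation of 0.7 between C and Z, and the helper `ols` are all assumptions chosen for illustration. The data are generated so that C, an antecedent of Z, is the only true moderator of X.

```python
import numpy as np

def ols(y, *columns):
    """Return OLS coefficients (intercept first) via least squares."""
    design = np.column_stack([np.ones(len(y)), *columns])
    coef, *_ = np.linalg.lstsq(design, y, rcond=None)
    return coef

rng = np.random.default_rng(0)
n = 20_000  # illustrative sample size (assumption)

x = rng.normal(size=n)                                  # predictor X
c = rng.normal(size=n)                                  # control C, an antecedent of Z
z = 0.7 * c + np.sqrt(1 - 0.7**2) * rng.normal(size=n)  # moderator Z, driven by C

# True model: C moderates the effect of X; Z does not (no XZ term).
y = 0.3 * x + 0.2 * z + 0.2 * c + 0.4 * x * c + rng.normal(size=n)

short = ols(y, x, z, c, x * z)         # linear control C only, NIC omitted
full = ols(y, x, z, c, x * z, x * c)   # NIC XC included

b_xz_short = short[4]  # coefficient of XZ without the NIC
b_xz_full = full[4]    # coefficient of XZ with the NIC
b_xc_full = full[5]    # coefficient of the NIC XC

print(f"XZ without NIC: {b_xz_short:.3f}")
print(f"XZ with NIC:    {b_xz_full:.3f}")
print(f"XC (NIC):       {b_xc_full:.3f}")
```

Under these assumptions, the model without XC attributes part of the XC effect to XZ (about 0.4 × 0.7 = 0.28 in large samples), even though the linear control C is included. Adding XC moves the XZ estimate toward its true value of zero.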

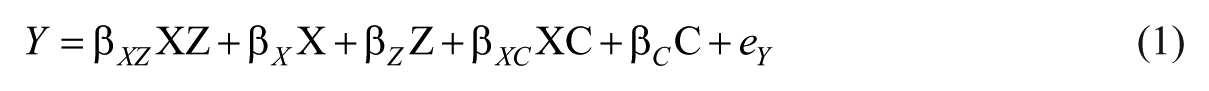

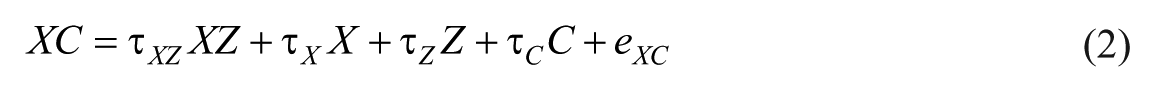

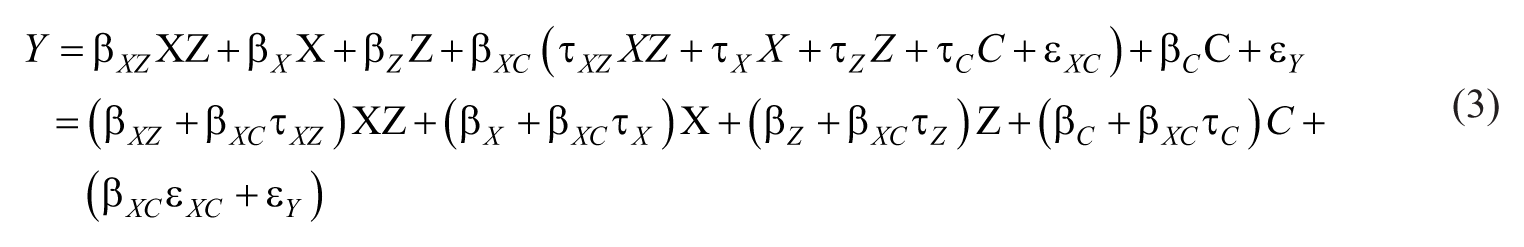

Based on the three possible scenarios described above, the objective is to distinguish the moderation of the X-Y relationship by Z from the alternative explanation of the X-Y relationship being moderated by a control variable C. Thereby, C can be any antecedent of either of the interaction’s constituent terms, X (i.e., predictor) or Z (i.e., moderator). Moreover, as special cases, C or Z could equal the model variable X, thereby generating confounded nonlinear effects or confounding relating to correlated model variables rather than control variables. Failing to control for XC (i.e., omitting an NIC) will bias estimates and statistical tests of XZ (i.e., the focal NIE). The regression models in Equations 1 and 2, with variables X, Z, and C, related parameters

Omitting any relevant variables that correlate with the focal model variables leads to biased estimates and biased tests of the linear effects (Cinelli et al., 2024; Hill, Johnson, Greco, O’Boyle, & Walter, 2021). The same logic applies to nonlinear and interactive effects: When XC explains Y (relevance), as when

Following the approach by Antonakis et al. (2010), and substituting Equation 2 into Equation 1 makes the omitted-NIC bias explicit.
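The substitution can be sketched as follows. The notation is an assumption made for illustration and need not match Equations 1 to 3: β denotes the coefficients of the outcome model, γ the coefficients of the auxiliary regression of the NIC on the remaining terms, and ε and υ the respective error terms.

```latex
% Outcome model including the NIC (assumed notation)
Y = \beta_0 + \beta_1 X + \beta_2 Z + \beta_3 XZ + \beta_4 C + \beta_5 XC + \varepsilon

% Auxiliary regression of the NIC on the remaining terms (assumed notation)
XC = \gamma_0 + \gamma_1 X + \gamma_2 Z + \gamma_3 XZ + \gamma_4 C + \upsilon

% Substituting the auxiliary regression into the outcome model
Y = (\beta_0 + \beta_5\gamma_0) + (\beta_1 + \beta_5\gamma_1) X
  + (\beta_2 + \beta_5\gamma_2) Z + (\beta_3 + \beta_5\gamma_3) XZ
  + (\beta_4 + \beta_5\gamma_4) C + (\varepsilon + \beta_5\upsilon)
```

In this sketch, a regression that omits XC estimates β₃ + β₅γ₃ instead of β₃ for the focal NIE. The bias β₅γ₃ is the product of the NIC's relevance for Y (β₅) and its partial association with the focal NIE (γ₃), and it vanishes if either factor is zero.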


As shown in Equation 3, the bias in the estimated effect of XZ equals

Impact of Omitted NICs on Substantive Conclusions: Illustration Using a Published Study

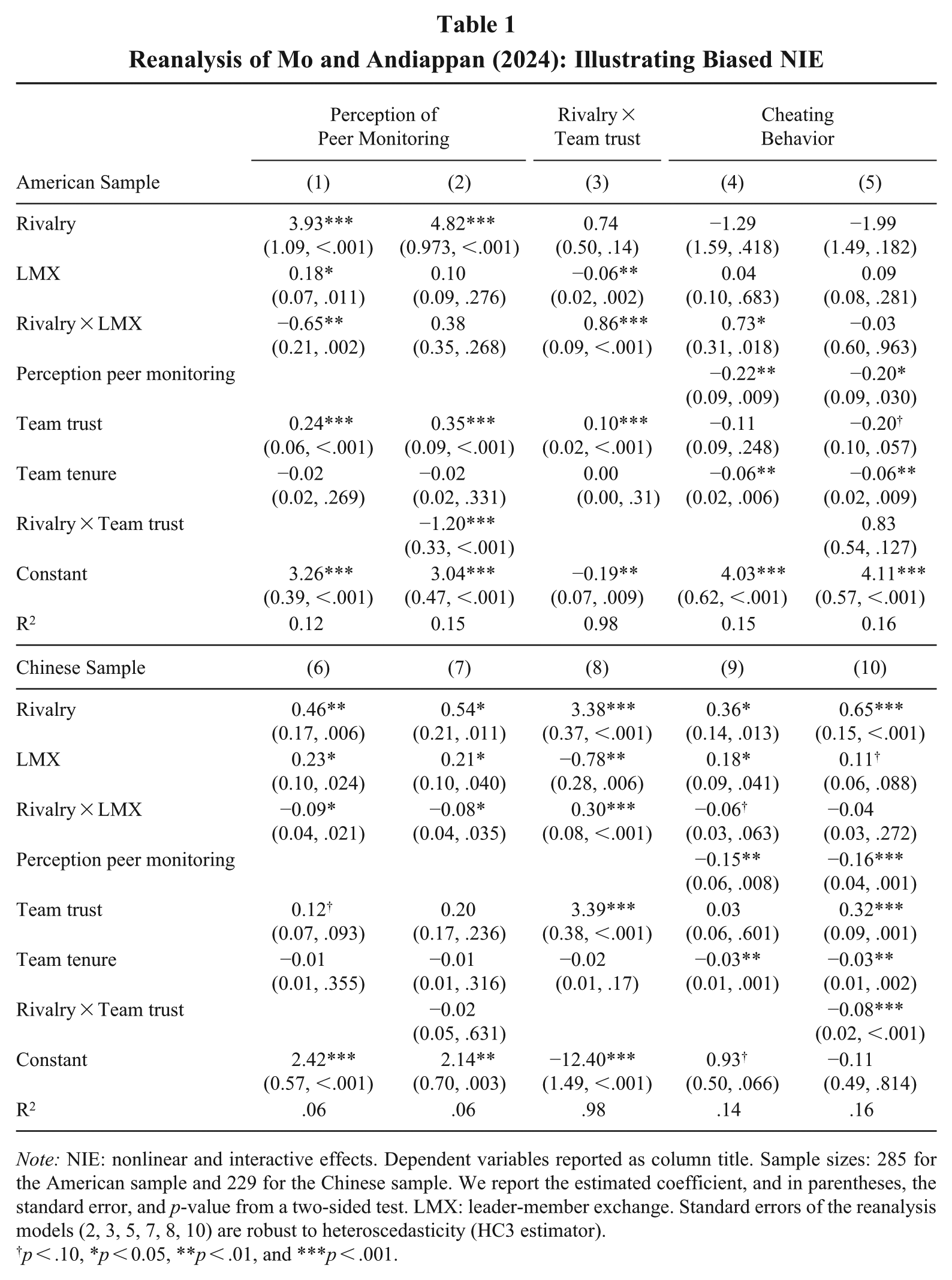

To offer a concrete illustration of the omitted NIC problem and its impact on substantive conclusions, we reanalyzed data from Mo and Andiappan (2024). We will briefly introduce their case, introduce an NIC, and document how the test and the conclusion change. Drawing on rivalry theory (Kilduff, Elfenbein, & Staw, 2010), Mo and Andiappan (2024) demonstrated that higher levels of rivalry within a work group (X) lead to more intense perceptions of peer monitoring (Y) and, subsequently, lower levels of workplace cheating. Better relationships with leaders (Z), operationalized as leader-member exchange (LMX), negatively moderated the positive relationship between colleague rivalry and perceptions of peer monitoring. Additionally, Mo and Andiappan controlled for perceptions of team trust (C) in their study “to rule out the alternative explanation that perceptions of peer monitoring may be a result of team trust” (Mo & Andiappan, 2024: 1075). They also controlled for team tenure as an important additional antecedent of rivalry. In their study with an American sample, replicated in Table 1 (Model 1), they reported a statistically significant moderating effect (XZ) on Y.

Reanalysis of Mo and Andiappan (2024): Illustrating Biased NIE

Note: NIE: nonlinear and interactive effects. Dependent variables reported as column title. Sample sizes: 285 for the American sample and 229 for the Chinese sample. We report the estimated coefficient, and in parentheses, the standard error, and p-value from a two-sided test. LMX: leader-member exchange. Standard errors of the reanalysis models (2, 3, 5, 7, 8, 10) are robust to heteroscedasticity (HC3 estimator).

p < .10, *p < .05, **p < .01, and ***p < .001.

Mo and Andiappan (2024) did not include NICs to improve the odds of an unbiased estimation of the interaction effect of rivalry × LMX. Following Mo and Andiappan’s own argument that team trust may confound LMX, team trust, rather than LMX, may moderate the effect of rivalry within work groups. According to Mo and Andiappan (2024) and Kilduff, Galinsky, Gallo, and Reade (2016), rivalry triggers perceptions of peer monitoring when rivals are perceived as threats to one’s identity or status. Team trust notably reduces threats to identity (Han & Harms, 2010). Hence, team trust, rather than LMX, may weaken the positive relationship between rivalry and perceived peer monitoring.

With additional collinearity between the hypothesized interaction effect of rivalry × LMX and the theoretically also relevant interaction of rivalry × team trust, the alternative moderator becomes a critical interactive confounding effect. To examine the collinearity between the NIE and NIC using Equation 2, we regressed the NIC rivalry × team trust on rivalry × LMX as the focal NIE, including all other model variables (see Table 1, Model 3). The estimated collinearity is substantial at

Pervasiveness of the Omitted NIC Problem: Literature Review

To explore the prevalence of neglecting nonlinear and interactive control variables when assessing nonlinear and interactive effects, we reviewed the quantitative articles published between 2021 and 2023 in Academy of Management Journal (AMJ), Journal of Management (JOM), and Strategic Management Journal (SMJ). Of these 548 articles, 398 (73%) included a regression-type analysis (including path analysis and SEM) involving nonlinear or interactive effects (e.g., an interaction, a curvilinear term, or a combination of both) and at least one linear control variable. Only thirteen of these articles (i.e., 3%) included an NIC. Only four of these studies included interactions as NICs, which is the case we motivated above (Garrido, Giachetti, & Maicas, 2023; Kudesia, Pandey, & Reina, 2022; Li & Tangirala, 2021; Odziemkowska & McDonnell, 2024). Nine studies included only squared terms of control variables as additional NICs.

Online Supplement A lists the reasons for including NICs reported in these thirteen studies, and we briefly summarize them here. The reasons cluster into three types. First, researchers included NICs to correctly represent the functional form of effects with an assumed or known curvature (N = 7). For example, Chen, Zhang, Li, and Turner (2022) included product age squared and time since last update squared to account for the influence of app lifecycle and update recency when estimating the effects of an app platform experiencing a generational product innovation (GPI), and its interactions with top market ranking, prior GPIs, and product age, on customer adoption of a mobile game.

Second, other researchers included NICs because of requirements of standard implementations of methods, such as the response surface analysis (Edwards & Parry, 1993), which includes squared effects of interacting model variables along with the interaction by default (N = 1). Ziegert, Knight, Resick, and Graham (2022) included intrateam squared and interteam squared effects as part of a response-surface specification when they analyzed intrateam and interteam interaction effects on team performance, with no additional substantive NIC rationale given beyond the method’s standard implementation.

The third type of motive is the focus of our study: ruling out alternative explanations by isolating NIEs from NICs, with the NICs relating either to model variables or to control variables (N = 5). Garrido et al. (2023), for instance, studied the effect of demand windows and technological windows on market share and considered interactions with international experience with such windows for a sample of multinational companies (MNCs). To disentangle the moderating effect of the size of MNCs from the moderating effects of correlated international experience with demand and technological windows, they included interactions of technological and demand windows with MNC size. Notably, while Li and Tangirala (2021) employed a response surface analysis that included the interactions and squared effects of model variables, they additionally acknowledged nonlinear and interaction effects of control variables as necessary to obtain an unbiased surface analysis. Overall, only 5 out of 398 studies (1.3%) that estimated NIEs along with linear controls intentionally included NICs to account for confounding effects. Moreover, we did not observe a systematic screening for potentially omitted nonlinear and interactive controls.

Identifying and Using NICs: A Five-step Guide and Best Practices

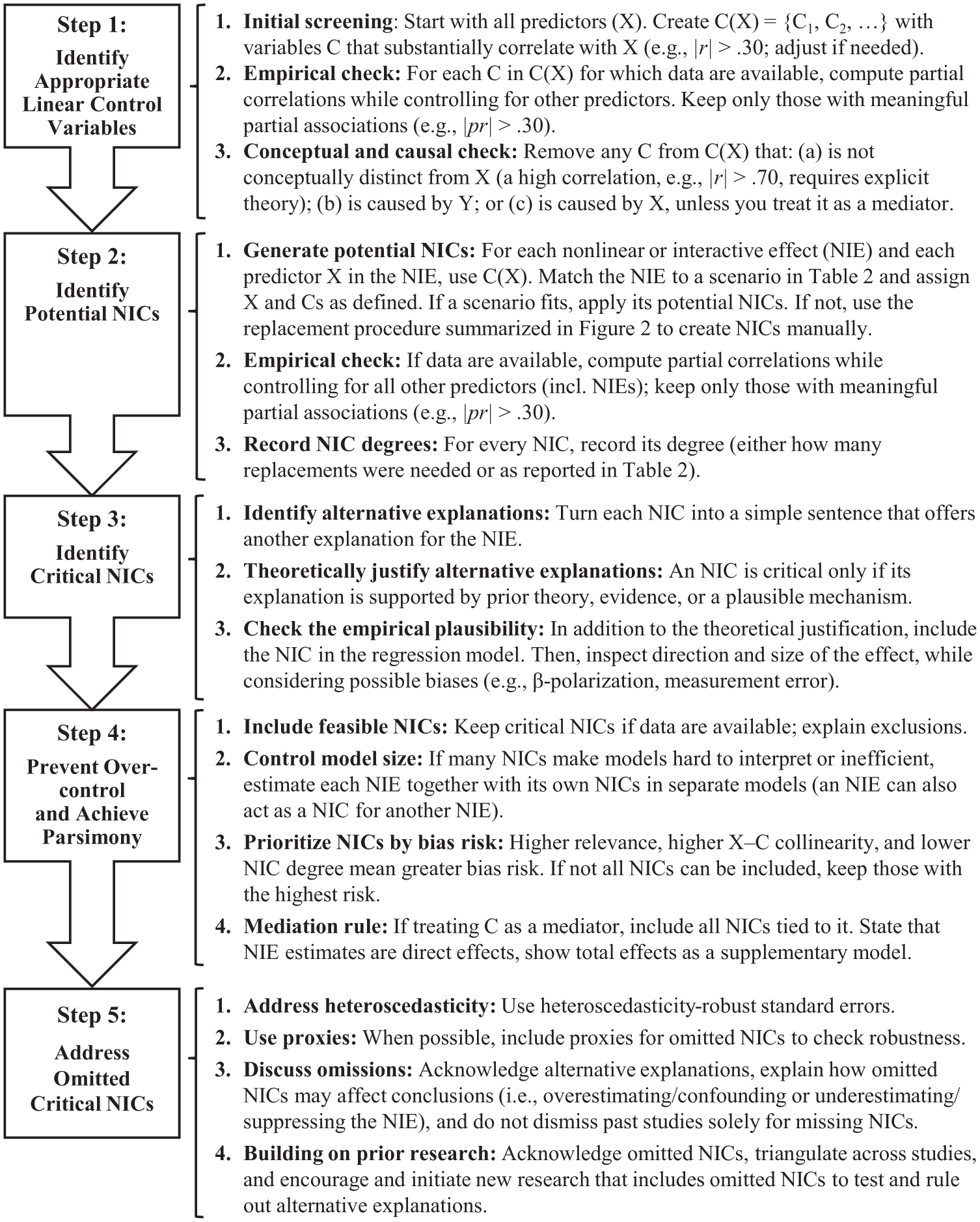

We present a five-step guide for systematically identifying and using nonlinear and interactive control variables (NICs). Figure 1 summarizes the guide and provides specific recommendations. Building on our analytic derivation of the omitted NIC bias, which depends on both the relevance of an NIC for the dependent variable and its collinearity with the focal NIE, the guide clarifies the steps required to address the omitted NIC bias when estimating nonlinear and interactive effects. Although researchers frequently acknowledge the theoretical importance of nonlinear and interactive terms (e.g., Dawson, 2014; Haans et al., 2016; Murphy & Aguinis, 2022), they rarely assess whether there is sufficient collinearity between a focal NIE and an NIC to explain the effect of the focal NIE through the NIC. Existing work has identified only isolated cases in which replacing a constituent variable of an interaction with its correlated moderator or one of the moderator’s antecedents yields a competing nonlinear or interactive effect (Cortina et al., 2023; Ganzach, 1997). Our guide generalizes these isolated insights into a systematic replacement procedure that derives potential NICs from associations among the underlying linear terms. The Appendix formally illustrates how the collinearity of two nonlinear or interactive terms depends on the collinearity of their constituent terms. Importantly, because collinearity and relevance alone do not justify including control variables, the guide requires researchers to establish the conceptual and causal appropriateness of each NIC to avoid over-control. The guide enables researchers to systematically identify NICs that provide alternative explanations for NIEs, whether they are developing theory or empirically testing NIEs. The following subsections provide the rationale for and description of the guide’s steps and recommendations.

Guide for Using Nonlinear and Interactive Controls (NICs)

Step 1: Identify Appropriate Linear Control Variables

We first identify appropriate linear control variables C(X) = {C1, C2, . . .} for each linear component X of any estimated NIE. Identifying and using linear control variables to reduce bias in regression analyses is a well-established practice (Becker et al., 2016; Bernerth, Cole, Taylor, & Walker, 2018; Spector & Brannick, 2011), and we refer to these studies for more details. We focus our discussion on two requirements for an appropriate linear control: establishing that the two variables correlate, and justifying that including one of them alongside the other does not increase, and potentially decreases, bias in regression analyses.

Initial screening

As outlined earlier, the existence of bias depends on collinearity, specifically on the partial correlation between a variable (X) and its potential control variable (C), when partialling out all other model variables. When identifying NICs in preparation for data collection or evaluating other researchers’ work, this information is usually unavailable. Thus, an initial screening may first identify substantially correlated variables (e.g.,

Empirical check

Although the initial screening is a good starting point for searching for controls, it is the partial association (after controlling for all other explanatory variables) that determines the biasing risk, not the zero-order correlation. When data are available, an empirical check can evaluate the decisive partial correlations between X and the Cs in C(X) to more reliably identify appropriate linear controls (e.g.,

Conceptual and causal check

Theoretically clarifying the specific causal role of each linear control as part of a conceptual and causal check prevents over-control regarding linear associations (Becker et al., 2016; Bono & McNamara, 2011; Cohen, 1990). It also avoids over-control for resulting NICs. Over-control usually occurs when part of the studied effect is unintentionally partialed out. First, this occurs when two variables, X and C, capture the same construct. Therefore, if two variables strongly correlate (e.g.,

In sum, this first step yields a list of appropriate linear controls, C(X), whose inclusion facilitates unbiased estimation of X’s effects on Y.
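The difference between the initial screening (zero-order correlation) and the empirical check (partial correlation) in Step 1 can be sketched as follows. The helper names `residualize` and `partial_corr` and the synthetic data are assumptions for illustration. No cutoff value is implied, because an appropriate threshold depends on the study.

```python
import numpy as np

def residualize(v, others):
    """Residuals of v after regressing it on an intercept and `others`."""
    design = np.column_stack([np.ones(len(v)), others])
    coef, *_ = np.linalg.lstsq(design, v, rcond=None)
    return v - design @ coef

def partial_corr(x, c, others):
    """Correlation of x and c after partialling out `others` from both."""
    rx = residualize(x, others)
    rc = residualize(c, others)
    return float(np.corrcoef(rx, rc)[0, 1])

# Synthetic example: X and C correlate only because both depend on W,
# another explanatory variable that is already in the model.
rng = np.random.default_rng(3)
n = 20_000
w = rng.normal(size=n)
x = w + rng.normal(size=n)
c = w + rng.normal(size=n)

zero_order = float(np.corrcoef(x, c)[0, 1])
partial = partial_corr(x, c, w)

print(f"zero-order r(X, C):  {zero_order:.3f}")
print(f"partial r(X, C | W): {partial:.3f}")
```

In this constructed case, the zero-order correlation is sizable, whereas the partial correlation is close to zero once W is partialled out. A candidate flagged in the initial screening would therefore not survive the empirical check.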

Step 2: Identify Potential NICs

Generate potential NICs

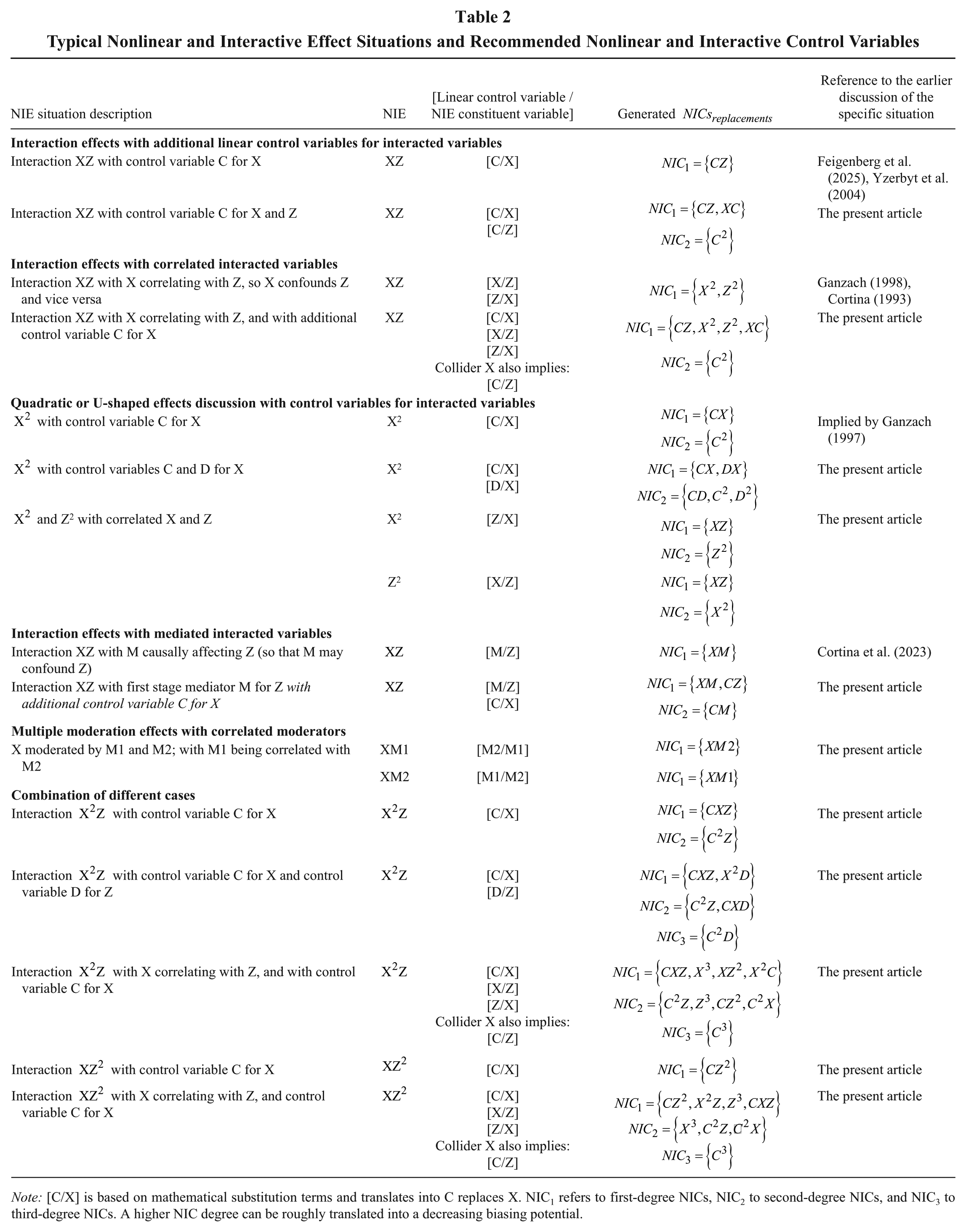

Having identified the appropriate linear controls C(X) for each X that is an element of any of the NIEs, we now generate NICs, defined as nonlinear and interactive terms that likely correlate with the focal NIEs. There are two ways to generate NICs. First, we use Table 2 for typical cases. Alternatively, we use a replacement procedure for more complex scenarios. The following paragraphs describe these two ways of generating NICs. Note that when competing theories are tested, correlations between variables from the different theories can lead to cross-theory interactions, facilitating a more sensitive combination of these theories.

Typical Nonlinear and Interactive Effect Situations and Recommended Nonlinear and Interactive Control Variables

Note: [C/X] is based on mathematical substitution terms and translates into C replaces X. NIC1 refers to first-degree NICs, NIC2 to second-degree NICs, and NIC3 to third-degree NICs. A higher NIC degree can be roughly translated into a decreasing biasing potential.

Table 2 lists typical cases of NIE estimation, including the NIE with exemplary control variables for its components. What remains is to identify the appropriate case, use one’s own controls C(X), and assign them to the roles indicated in the table to identify the potential NICs correlated with the NIE being estimated. The table also notes whether and where these cases have been discussed in prior research. If Table 2 does not offer a solution, we can generate NICs based on the replacement procedure, as described below. It is the same procedure that we used to prepare Table 2 to simplify the identification of NICs. Table 2 also shows that our approach generates NICs discussed in previous research but also draws attention to additional ones.

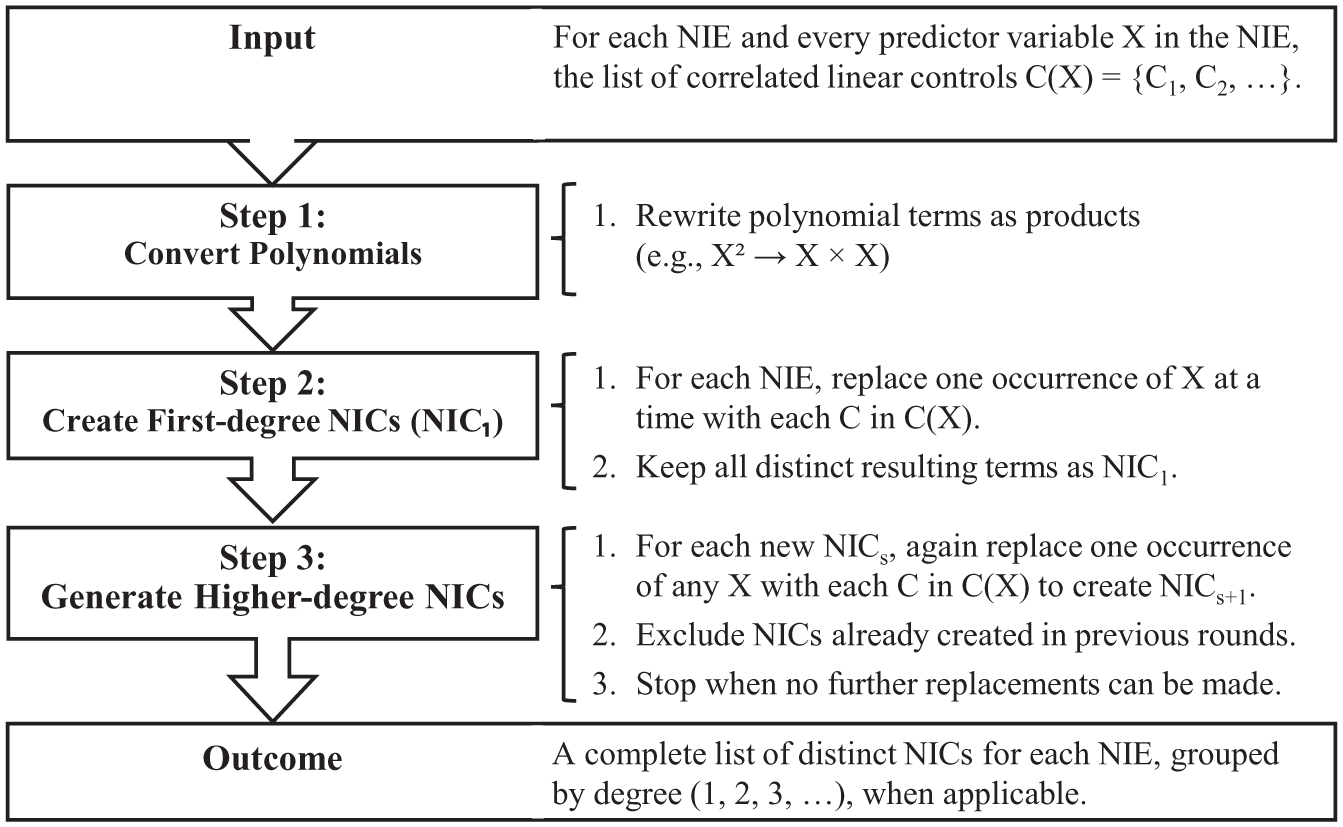

The replacement procedure used to generate NICs as an alternative to Table 2 is summarized in Figure 2 and includes three steps. First, we convert polynomials into products, that is, each hypothesized NIE is written in its product rather than exponential form (i.e., X² = XX and XZ² = XZZ). Second, we create first-degree NICs (NIC1), ones that correlate with the NIEs and emerge from a single replacement. For each of the NIEs in the model, one occurrence of the constituent variable X is replaced with one of its corresponding linear control variables from C(X). For example, consider the interaction term XZ. Suppose there is a linear control variable C for Z. Replacing Z with C produces a new interaction term XC, which is a potential NIC1. This term is associated with the original NIE due to the association between Z and C. Multiple potential NIC1s can be generated for a single focal NIE by systematically replacing different constituent variables with their associated control variables, or by replacing the same constituent variable with different control variables. Third, the replacement can be applied iteratively to generate higher-degree NICs. For iterative application, researchers take all the potential NICs generated in one round and replace the variables in these terms based on the same rule as outlined before.

Generating NICs from Given NIEs: The Replacement Procedure
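The replacement procedure lends itself to a short mechanical sketch. The function name `generate_nics`, the representation of a term as a sorted tuple of variable names, and the `max_degree` parameter are assumptions made for illustration. The sketch only enumerates candidate terms and does not replace the empirical and theoretical checks in the following steps.

```python
def generate_nics(nie, controls, max_degree=2):
    """Enumerate potential NICs for one NIE by iterated single replacements.

    nie:      factors of the NIE in product form, e.g. ("X", "X") for X squared
    controls: maps a variable to its linear controls, e.g. {"Z": ["C"]}
    Returns a dict mapping each NIC (sorted tuple) to its degree, that is,
    the smallest number of replacements needed to reach it.
    """
    focal = tuple(sorted(nie))
    degrees = {}
    frontier = {focal}
    for degree in range(1, max_degree + 1):
        next_frontier = set()
        for term in frontier:
            for i, var in enumerate(term):
                for ctrl in controls.get(var, []):
                    # Replace one occurrence of `var` with one of its controls.
                    new = tuple(sorted(term[:i] + (ctrl,) + term[i + 1:]))
                    if new != focal and new not in degrees:
                        degrees[new] = degree
                        next_frontier.add(new)
        frontier = next_frontier
    return degrees

# Interaction XZ with a linear control C for Z: XC is a first-degree NIC.
interaction_nics = generate_nics(("X", "Z"), {"Z": ["C"]})

# Quadratic X^2 = XX with a linear control C for X:
# XC after one replacement, CC (C squared) after two.
quadratic_nics = generate_nics(("X", "X"), {"X": ["C"]})

print(interaction_nics)
print(quadratic_nics)
```

Because each round starts from the terms found in the previous round and skips terms already recorded, the stored value is the smallest number of replacements. This is the quantity needed when recording NIC degrees.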

Empirical check

The procedure employed so far generated NICs that tend to have a collinearity with the focal NIE that is as strong as the product of collinearities between the constituent terms from the NIE and the variables with which these terms are replaced. However, this equality holds only when the other constituent terms from the NIE do not correlate with either the replaced or the replacing variable (see the Appendix). When data are available, an empirical check can evaluate key partial correlations between the NIE and its NICs to identify potential NICs more reliably (e.g.,

Record NIC degrees

Following the generation of NICs, one needs to record NIC degrees. The degree of an NIC is the smallest number of replacements needed to generate a potential NIC. Note that this is also included in Table 2. As shown more formally in the Appendix, the NIC degree relates to collinearity and, consequently, the biasing potential of an NIC. That is, if a linear control is deemed important due to its strong association with a model variable, the resulting potential NIC1 tends to be similarly important for understanding the focal NIE. The association of an NIE with an NIC of a higher degree depends multiplicatively on the associations of the model and control variables used for the replacement. As a general rule of thumb, based on the idea that all pairwise associations are roughly of equal size, the strength of collinearity between an NIE and an NIC exponentially decreases with each replacement. Thus, NICs of higher degrees tend to exhibit weaker collinearity with the focal NIE, which reduces their potential bias when omitted.
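The multiplicative rule of thumb can be checked numerically under simplifying assumptions. In the sketch below, all variables are standard normal, X and Z are independent, and each control correlates only with the variable it controls for. The correlations of 0.6 and 0.5 and all variable names are arbitrary choices for illustration.

```python
import numpy as np

rng = np.random.default_rng(1)
n = 200_000
rho_x, rho_z = 0.6, 0.5  # assumed correlations between variables and their controls

x = rng.normal(size=n)
z = rng.normal(size=n)
c_x = rho_x * x + np.sqrt(1 - rho_x**2) * rng.normal(size=n)  # linear control for X
c_z = rho_z * z + np.sqrt(1 - rho_z**2) * rng.normal(size=n)  # linear control for Z

nie = x * z        # focal NIE
nic1 = x * c_z     # first-degree NIC: Z replaced by its control
nic2 = c_x * c_z   # second-degree NIC: both X and Z replaced

r_nic1 = float(np.corrcoef(nie, nic1)[0, 1])
r_nic2 = float(np.corrcoef(nie, nic2)[0, 1])

print(f"corr(XZ, X*C_z):   {r_nic1:.3f}  (one replacement, rho_z = {rho_z})")
print(f"corr(XZ, C_x*C_z): {r_nic2:.3f}  (two replacements, rho_x * rho_z = {rho_x * rho_z:.2f})")
```

Under these assumptions, the correlation between the NIE and the first-degree NIC is close to the single correlation involved in the replacement, and the correlation with the second-degree NIC is close to the product of both. When constituent terms correlate with each other, the correlations can deviate from this product.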

In sum, implementing Step 2 results in a set of nonlinear and interactive terms that are collinear with focal NIEs. In other words, it yields a list of pairs of focal NIEs and potential NICs that can be analyzed further to derive the set of critical NICs.

Step 3: Identify Critical NICs

When deciding whether to include or omit a potential NIC (i.e., to qualify a potential NIC as a critical NIC), considerations similar to those in previous research on linear control variables apply (Bernerth & Aguinis, 2016; Carlson & Wu, 2012). To warrant inclusion, the correlated variable must meet the theoretical criterion of relevance, in addition to empirically or theoretically grounded collinearity and absence of reverse or mediated causality.

Identify alternative explanations

An initial step in giving mechanically derived NICs theoretical meaning is to translate them into verbal hypotheses, which leads us to identify alternative explanations. Instead of replacing the model variable with its control in the term entering the regression, we replace the related constructs in the corresponding verbal hypotheses. This yields an explicit alternative explanation for the focal NIE, which helps researchers tie the technical terms to substantive management theory. Online Supplement B illustrates this approach using nonlinear or interactive effect hypotheses from prior studies, covering various fields in management research.

Theoretically justify alternative explanations

We can then ground the explicitly formulated alternative explanations in theory. We can embed them in prior evidence or offer a theoretically plausible mechanism that substantiates each alternative explanation. As a result, we obtain a set of nonlinear and interactive terms that are collinear with each focal NIE and also relevant to the dependent variable; that is, pairs of focal NIEs and critical NICs.

Check the empirical plausibility

While the decision to include an NIC is primarily a theoretical matter (Jaccard, Wan, & Turrisi, 1990), in cases when the relevance or applicability of NICs is ambiguous, possibly just for the specific context, we may explore the specific data. We may even be tempted to treat a potential NIC as relevant whenever its estimated coefficient after including it is statistically significant. While significance tests can be a first step for exploring relevance, the nonsignificance of an NIC may reflect multicollinearity with the focal NIE or other NIEs, which reduces the ability to identify relevance (Wooldridge, 2016). Furthermore, large estimates may arise from methodological artifacts (e.g., beta-polarization; Kalnins & Praitis Hill, 2025). Therefore, the magnitude of the effect should be compared to the magnitude of other effects in the model. Finally, if the NIE suffers more from measurement error than the NIC, the NIC’s significance might be misleading (Busemeyer & Jones, 1983). Ultimately, the empirically observed effect can be informative, but it should be judged in the context of theoretical arguments.
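When data are available, such an exploration could start by estimating the model with the candidate NIC and inspecting its coefficient and standard error alongside those of the focal NIE. The sketch below uses synthetic data and a hand-written HC3 heteroscedasticity-robust covariance estimator in NumPy. The function name `ols_hc3`, the data-generating values, and the variable labels are assumptions for illustration, not the code behind Table 1.

```python
import numpy as np

def ols_hc3(y, design):
    """OLS coefficients with HC3 heteroscedasticity-robust standard errors."""
    xtx_inv = np.linalg.inv(design.T @ design)
    beta = xtx_inv @ design.T @ y
    resid = y - design @ beta
    # Leverage h_ii: diagonal of the hat matrix X (X'X)^-1 X'.
    leverage = np.einsum("ij,jk,ik->i", design, xtx_inv, design)
    # HC3 rescales each squared residual by 1 / (1 - h_ii)^2.
    omega = (resid / (1.0 - leverage)) ** 2
    cov = xtx_inv @ (design.T * omega) @ design @ xtx_inv
    return beta, np.sqrt(np.diag(cov))

rng = np.random.default_rng(2)
n = 20_000
x = rng.normal(size=n)
c = rng.normal(size=n)
z = 0.7 * c + np.sqrt(1 - 0.7**2) * rng.normal(size=n)
noise = rng.normal(size=n) * (0.5 + np.abs(x))  # heteroscedastic errors
y = 0.3 * x + 0.2 * z + 0.2 * c + 0.4 * x * c + noise

names = ["const", "X", "Z", "C", "XZ", "XC"]
design = np.column_stack([np.ones(n), x, z, c, x * z, x * c])
beta, se = ols_hc3(y, design)

for name, b, s in zip(names, beta, se):
    print(f"{name:>5}: {b: .3f} (HC3 SE {s:.3f})")
```

As the paragraph above cautions, such output is only a starting point: the size of the NIC coefficient relative to other effects and the theoretical rationale matter more than its significance alone.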

Step 4: Prevent Over-control and Achieve Parsimony

Many studies hypothesizing NIEs, particularly those investigating effect heterogeneity, estimate multiple NIEs (e.g., Garrido et al., 2023; Kor & Tan, 2025). While two different NIEs may share the same set of NICs, this is likely the exception. Typically, NICs are specific to NIEs. Therefore, the greater the number of NIEs, the greater the number of NICs required, raising the risk of over-control. In that sense, revisiting the checks from Step 1 to ensure that none of the remaining NICs poses a risk of over-control should be standard practice. Using only appropriate linear controls as input for NIC identification is the most critical safeguard against over-control concerning NICs. However, a few additional issues must be considered.

Include feasible NICs

Steps 1 through 3 in our guide can be implemented without data, based on prior theory and prior reports of correlations. This makes deriving critical NICs accessible to editors, reviewers, and all other researchers critically evaluating prior research. However, NICs can be included in a particular study only when data are available, which may require collecting additional data. Since each study may have a different focus and constraints, we recommend considering for which additional NICs data can be collected. Otherwise, the exclusion of critical NICs identified in the first three steps should be discussed (e.g., in an appendix).

Control model size

When considering multiple NIEs, it is not uncommon to test them in isolation from one another. This is usually an attempt to avoid inefficiencies arising from model size and correlations among multiple moderating effects (e.g., Courtright, Gardner, Smith, McCormick, & Colbert, 2016; Ding, Murray, & Stuart, 2013). We can control model size and take advantage of the fact that NICs are NIE-specific by testing NIEs in separate regressions and including in each regression only the NICs necessary for the focal NIE. Beyond excluding the NICs of NIEs not tested in a particular regression, we might even exclude the other NIEs themselves, as in the aforementioned studies. When following this practice, it is important to ensure that these omitted NIEs do not act as NICs for the focal NIE (see also Table 2, case 10). Since the omitted NIE is most likely a hypothesized effect and therefore relevant, it is crucial to demonstrate that the partial correlation between the two NIEs is sufficiently small. Conversely, if an NIC stands out due to high collinearity with an NIE or substantial relevance for the dependent variable, it must be included in the main analysis (e.g., Garrido et al., 2023). Using this approach, the number of variables can be substantially reduced without compromising the unbiasedness of the focal NIE estimates.

Prioritize NICs by bias risk

When it is not feasible to include all critical NICs in the model due to limited resources (e.g., limited survey length, limited access to data, or limited size in reports), we recommend prioritizing by bias risk to obtain the least biased NIE estimate possible. As a reminder, the bias risk emerges from higher collinearity of linear terms, higher NIC relevance, and a lower NIC degree. We recommend that researchers consider these three elements conjointly when justifying the choice of NICs. For example, an NIC2 may, on average, cause a smaller bias than an NIC1, but if the NIC2 emerges from stronger collinearities, it could still display a higher risk for bias. Similarly, an NIC2 with less collinearity could matter more than an NIC1 if its relevance compensates for its lower NIC degree. In practice, such discussions will likely be brief and based on potentially contested assumptions. However, even a brief discussion contributes more to further research than the current state of research, which almost entirely neglects the necessity of NICs for unbiased NIE estimates.

Mediation rule

One issue remains when considering over-control. As discussed in Step 1, we advise against using a control variable C that is affected by X, unless the explicit intention is to estimate a mediated relationship. When C is included, a direct (not total) effect of X is estimated. To maintain consistency in the mediation analysis and, consequently, model parsimony, researchers must adhere to the mediation rule: When a mediator C is included as a control for X, all critical NICs generated in the first three steps that include C must also be included in the model. Otherwise, the linear coefficient of X would be interpreted as a direct effect, while the NIE would remain a total effect. This would render combinations of direct and interaction or nonlinear effects largely uninterpretable (e.g., as part of slope or marginal effects analyses; for an in-depth discussion of such inconsistencies, see Cortina et al., 2023). As a result of carefully implementing Step 4, we selected the necessary variables and NICs for focused yet robust estimations and statistical tests of nonlinear and interactive effects, and are aware of omitted critical NICs.
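The mediation rule lends itself to a simple bookkeeping check. The sketch below assumes that model terms are written as tuples of variable names (e.g., ("C", "Z") for C × Z). The function name and the example variables X, Z, and C are hypothetical and not part of the guide itself.

```python
def normalize(term):
    """Treat C x Z and Z x C as the same term by sorting the factor names."""
    return tuple(sorted(term))

def mediation_rule_violations(included_terms, critical_nics, mediators):
    """List critical NICs that contain an included mediator but are missing from the model."""
    included = {normalize(t) for t in included_terms}
    violations = []
    for mediator in mediators:
        if (mediator,) not in included:
            continue  # mediator is not used as a control, so the rule does not apply
        for nic in critical_nics:
            key = normalize(nic)
            if mediator in key and key not in included and key not in violations:
                violations.append(key)
    return violations

# Hypothetical model: X, Z, the mediator C, and the focal NIE X x Z.
model_terms = [("X",), ("Z",), ("C",), ("X", "Z")]
critical = [("C", "Z")]  # critical NIC derived in Steps 1 through 3

missing = mediation_rule_violations(model_terms, critical, mediators=["C"])
print(missing)
```

A non-empty result indicates that the linear coefficient of X would be a direct effect while the NIE would still be a total effect, which is the inconsistency the mediation rule is meant to prevent.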

Step 5: Address Omitted Critical NICs

Even a well-justified NIC with the potential to be critical may be excluded when data are missing, when inclusion would extend the analysis beyond the scope of a single study, or for the sake of efficiency (e.g., Courtright et al., 2016; Ding et al., 2013). In any case, the omission has implications that must be addressed, as described next.

Address heteroscedasticity

Omitting a critical NIC creates heteroscedasticity for the model variables that form the omitted term (King & Roberts, 2015; Klein, Gerhard, Büchner, Diestel, & Schermelleh-Engel, 2016). Heteroscedasticity renders estimation less efficient and biases the estimation of standard errors, consequently affecting statistical tests (Wooldridge, 2016). Therefore, it is strongly advisable to test for and address heteroscedasticity when NICs are omitted. One way to address heteroscedasticity robustly, as well as perform more accurate hypothesis testing and estimation of confidence intervals, is to estimate heteroscedasticity-robust standard errors (Long & Ervin, 2000).
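To illustrate, the following sketch computes classical and heteroscedasticity-robust standard errors for a regression that omits an NIC. It rests on several assumptions: the data are simulated, the coefficient values are hypothetical, and HC3 is used as one common robust variant. Statistical packages offer built-in equivalents, so the manual computation serves only to make the steps visible.

```python
import numpy as np

def ols_with_hc3(X, y):
    """Return OLS coefficients, classical standard errors, and HC3 robust standard errors."""
    n, k = X.shape
    XtX_inv = np.linalg.inv(X.T @ X)
    beta = XtX_inv @ X.T @ y
    resid = y - X @ beta
    sigma2 = resid @ resid / (n - k)
    se_classical = np.sqrt(sigma2 * np.diag(XtX_inv))
    # Leverage of each observation: h_i = x_i' (X'X)^-1 x_i
    h = np.einsum("ij,jk,ik->i", X, XtX_inv, X)
    w = (resid / (1.0 - h)) ** 2          # HC3 weights
    meat = X.T @ (X * w[:, None])
    se_hc3 = np.sqrt(np.diag(XtX_inv @ meat @ XtX_inv))
    return beta, se_classical, se_hc3

# Hypothetical data: c is a linear control correlated with x; the NIC c * z is relevant.
rng = np.random.default_rng(1)
n = 20000
x = rng.normal(size=n)
c = 0.6 * x + 0.8 * rng.normal(size=n)
z = rng.normal(size=n)
y = 0.5 * x + 0.5 * z + 0.5 * c + 0.3 * x * z + 1.0 * c * z + rng.normal(size=n)

# Regression that omits the NIC c * z.
names = ["const", "x", "z", "c", "x*z"]
X = np.column_stack([np.ones(n), x, z, c, x * z])
beta, se_classical, se_hc3 = ols_with_hc3(X, y)

for name, b, s0, s1 in zip(names, beta, se_classical, se_hc3):
    print(f"{name:6s} b = {b: .3f}  classical SE = {s0:.4f}  HC3 SE = {s1:.4f}")
```

In this setup, the error variance depends on z because the omitted term contains z, so the classical standard error for z understates the sampling variability. Robust standard errors correct the inference but not the bias in the coefficient of x × z.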

Use proxies

When NICs are omitted because the required interacting variables are unobserved, we should search for and use proxies of these variables. Then, we should also include the NICs derived for these proxies in the regression (Wooldridge, 2016: 67). A claim made earlier for linear control variables also holds for NICs: using a proxy variable is better than having no control over the omitted variable at all (Mändli & Rönkkö, 2025).

Discuss omissions

When important controls cannot be observed, and no proxies are available, we recommend discussing these omissions transparently. Accordingly, we urge editors and reviewers to value such transparency over directly disqualifying a study based on the omission of an NIC. Suppressing this discussion to present an “ideal” study risks overlooking competing explanations, hinders the transparent accumulation of evidence, and misguides theory development. This discussion does not need to be lengthy or include every possible NIC. It should focus on NICs that are strong in terms of collinearity and relevance because they have the potential to introduce substantial bias.

Building on prior research

Reflecting on omitted NICs is relevant not only in one’s own study but also when building on prior research that omitted critical NICs. When conducting systematic reviews and meta-analyses, analyzing NICs in previously published work can substantially alter the evaluation and aggregation of prior research in evidence-based theory development. Studies less affected by omitted NICs are considered more trustworthy and can be given more weight in literature reviews and meta-analyses. When different studies omit different NICs, researchers can assess the robustness of a finding across multiple, limited investigations. Eventually, they can triangulate by excluding different confounds in different studies. Meta-regressions can be used to compare subsamples exposed to different omitted NICs to explain inconsistent findings or unexpected results (Gonzalez-Mulé & Aguinis, 2018). For example, this method could be employed when a theoretically predicted NIE proves to be statistically insignificant or reverses sign. Such supplementary NIC analyses can contribute to Alvesson and Kärreman’s (2007) mystery-solving approach, in which anomalies lead to deeper theoretical insights (Ketokivi, Mantere, Aguinis, Makadok, Swink, Bendoly, & Oliva, in press). Moreover, identifying omitted critical NICs can effectively guide further research, such as constructive replications of nonlinear and interactive effects, by motivating preregistered tests of the most plausible competing explanations when original data are inaccessible or incomplete (Köhler & Cortina, 2021).

When to Exclude Identified NICs: Unbiasedness Versus Efficiency

In this section, we adopt an estimation view that trades bias against efficiency to maximize overall precision in effect-size estimation (i.e., minimizing its mean squared error, MSE, or root mean squared error, RMSE), rather than pursuing unbiasedness as the dominant goal. Related management research makes the same trade-off through choices between fixed- and random-effects estimators, or when and how to include control variables (Becker et al., 2016; Clogg, Petkova, & Haritou, 1995), and in studies that estimate moderators separately to preserve efficiency (e.g., Courtright et al., 2016; Ding et al., 2013). In other words, when researchers prioritize how close an estimate is to the true value in terms of mean squared error, they may accept small biases and exclude relevant NICs that cause only minor biases, if doing so substantially reduces the standard error of the estimator. These estimators are often assessed using coefficient comparison tests as a decision aid, also referred to as the Hausman test (Certo, Withers, & Semadeni, 2017; Hausman, 1978). A significant test indicates that the NICs should not be excluded.
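A coefficient comparison test for a single coefficient can be sketched as follows. This is a simplified Hausman-type statistic under stated assumptions, not necessarily the exact procedure used in the studies cited above. It assumes homoscedastic errors and uses the error variance of the full model for both estimators. The data and all coefficient values are simulated and hypothetical.

```python
import math
import numpy as np

def ols(X, y):
    """Return OLS coefficients, (X'X)^-1, and residuals."""
    XtX_inv = np.linalg.inv(X.T @ X)
    beta = XtX_inv @ X.T @ y
    return beta, XtX_inv, y - X @ beta

def coefficient_comparison(X_restricted, X_full, y, idx):
    """Hausman-type test for the coefficient in column idx (same position in both models)."""
    b_r, inv_r, _ = ols(X_restricted, y)
    b_f, inv_f, resid_f = ols(X_full, y)
    n, k = X_full.shape
    sigma2 = resid_f @ resid_f / (n - k)  # full-model error variance, used for both models
    var_diff = sigma2 * (inv_f[idx, idx] - inv_r[idx, idx])
    if var_diff <= 0:
        return float("nan"), float("nan")  # statistic undefined
    h = (b_f[idx] - b_r[idx]) ** 2 / var_diff
    p = math.erfc(math.sqrt(h / 2.0))      # upper tail of chi-square with 1 df
    return float(h), float(p)

# Hypothetical data with a relevant NIC (c * z) that is collinear with the NIE (x * z).
rng = np.random.default_rng(2)
n = 1000
x = rng.normal(size=n)
c = 0.7 * x + math.sqrt(1 - 0.7 ** 2) * rng.normal(size=n)
z = rng.normal(size=n)
y = 0.5 * x + 0.5 * c + 0.5 * z + 0.3 * x * z + 0.5 * c * z + rng.normal(size=n)

X_restricted = np.column_stack([np.ones(n), x, c, z, x * z])
X_full = np.column_stack([X_restricted, c * z])

h_stat, p_value = coefficient_comparison(X_restricted, X_full, y, idx=4)
print(f"H = {h_stat:.2f}, p = {p_value:.4g}")
```

Using the same error variance for both models keeps the variance difference non-negative, which is a design choice of this sketch. A small p-value indicates that the NIE coefficient changes by more than sampling variation would suggest, so the NIC should not be excluded.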

Since NICs behave fundamentally like usual linear control variables, the same arguments apply to them. We conducted a Monte Carlo simulation, reported in Online Supplement C, to illustrate this point. First, adding the relevant NIC explains previously unexplained variance and reallocates variance away from the error term and toward the model variables. It also reduces heteroscedasticity that emerges from omitting interactions with the model variables. Thus, inclusion improves the RMSE and is typically recommended (Klein et al., 2016; Wooldridge, 2016). Second, the omitted NIC bias and the resulting RMSE increase with the omitted NIC’s relevance and collinearity with the focal NIE, strengthening the case for inclusion in typical settings. Third, there is a boundary condition. Under extreme collinearity, standard errors can inflate to the point that the loss of efficiency outweighs the reduction in bias. Such inflation decreases the overall estimation precision in terms of the RMSE. Under such extreme collinearity, excluding critical NICs can increase estimation precision, even though a small bias is accepted. Fourth, the coefficient comparison test can inform decisions about the inclusion or exclusion of an NIC in an attempt to increase the precision of an estimate.

While the simulation focused on a single NIC for simplicity, multiple moderately collinear NICs can jointly undermine precision as much as a single, highly collinear NIC, even when pairwise correlations appear small. Hence, in case of doubt, researchers should not rely solely on pairwise correlations when judging collinearity. In fact, it appears that a statistically nonsignificant coefficient comparison test can justify excluding an NIC. While the test-based exclusion marginally increases the RMSE for moderate to high collinearity, the RMSE decreases substantially for very high collinearity. That is, using the coefficient comparison test implies accepting a small systematic bias in exchange for avoiding a much higher probability of unsystematic variation in the estimates, particularly at higher levels of collinearity. For transparency, we recommend that researchers additionally report the full model when an NIC is excluded.
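The trade-off between bias and efficiency can be made concrete with a small simulation. The sketch below is not the simulation reported in Online Supplement C. It is a simplified illustration in which the sample size, the number of replications, the collinearity levels (rho), and the NIC relevance (gamma) are all assumed values.

```python
import numpy as np

def simulate(rho, gamma, n=200, reps=500, beta_nie=0.3, seed=0):
    """Bias and RMSE of the NIE estimate (x * z) with and without the NIC (c * z)."""
    rng = np.random.default_rng(seed)
    est_omit, est_full = [], []
    for _ in range(reps):
        x = rng.normal(size=n)
        c = rho * x + np.sqrt(1 - rho ** 2) * rng.normal(size=n)  # control collinear with x
        z = rng.normal(size=n)
        y = x + c + z + beta_nie * x * z + gamma * c * z + rng.normal(size=n)
        base = [np.ones(n), x, c, z, x * z]
        X_omit = np.column_stack(base)
        X_full = np.column_stack(base + [c * z])
        est_omit.append(np.linalg.lstsq(X_omit, y, rcond=None)[0][4])
        est_full.append(np.linalg.lstsq(X_full, y, rcond=None)[0][4])

    def summary(estimates):
        e = np.asarray(estimates)
        bias = float(e.mean() - beta_nie)
        rmse = float(np.sqrt(np.mean((e - beta_nie) ** 2)))
        return bias, rmse

    return {"omit": summary(est_omit), "full": summary(est_full)}

# Moderate collinearity with a relevant NIC versus extreme collinearity with a weak NIC.
results_moderate = simulate(rho=0.5, gamma=0.3)
results_extreme = simulate(rho=0.98, gamma=0.05)

for label, res in [("moderate", results_moderate), ("extreme", results_extreme)]:
    for model in ("omit", "full"):
        bias, rmse = res[model]
        print(f"{label:8s} {model:4s} bias = {bias: .3f}  RMSE = {rmse:.3f}")
```

Under these assumed values, including the NIC removes the bias in both scenarios, but in the extreme scenario the inflated sampling variance can outweigh the small bias that exclusion accepts.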

Although the coefficient comparison test has benefits for precisely estimating effect sizes, we highlight three possible drawbacks. First, statistical testing is inherently uncertain, and there are specific risks associated with multiple (two-step) testing (Wooldridge, 2016). That is, if there is strong theory supporting the relevance and the collinearity of an NIC, such tests should not be (ab-)used to make purely empirically guided decisions about including critical NICs with potentially huge impact. We recommend using theory-based arguments that suggest only minor biases due to either small relevance or small collinearity, alongside the coefficient comparison test. Second, small changes may become significant with large samples, while large changes may not reach significance with small ones (Becker et al., 2016; Spector, 2020). Therefore, it is important to consider whether the change in the coefficient meaningfully alters the conclusions (Schwab, Abrahamson, Starbuck, & Fidler, 2011). Moreover, when the effect sizes of NIEs substantially increase or even change direction after NICs are included, researchers may face suppression effects. This occurs when the omitted NIC bias renders a truly present effect smaller, making it statistically insignificant, or reverses its direction (MacKinnon, Krull, & Lockwood, 2000). However, switches in sign and large coefficients after including controls may also result from regression artifacts, such as beta-polarization (Kalnins & Praitis Hill, 2025). That is, significance appearing only after including an NIC can be a valid finding, but the implied suppression effect should be theoretically substantiated. Furthermore, when interpreting effect sizes, researchers should keep in mind that, while a .10 difference in standardized coefficients provides an initial indication (Becker et al., 2016), including the NICs is essential when there is a significant change in the conclusions, regardless of the size of the effect. 
Third, Kalnins and Praitis Hill (2025) demonstrated that, when theory testing emphasizes the unbiasedness of statistical tests rather than the precision of effect estimation, excluding collinear terms is never advantageous. In other words, researchers should recognize that the efficiency-unbiasedness trade-off reflects an estimation rather than a testing perspective. In sum, coefficient comparison tests can inform specification choices when the goal is precise effect-size estimation under collinearity. Insignificance can justify the exclusion of an NIC, while significance can provide an initial indication for its inclusion. However, the test must not be employed mechanically. Researchers must carefully inspect and theoretically consider changes resulting from omitting critical NICs. Ultimately, it is up to the researcher to determine which NICs theoretically affect the NIE estimate (Jaccard et al., 1990).

Illustrative Application: Reconsidering Mo and Andiappan

Building on the earlier section that used Mo and Andiappan (2024) to illustrate the omitted NIC problem, we revisited their study to demonstrate how our step-by-step guide can prevent estimation biases in NIEs. Mo and Andiappan’s focal NIE is the interaction between rivalry and LMX predicting perceptions of peer monitoring. We re-ran the analytical process to show how our guide could have prevented the previously discussed potentially erroneous conclusions. To illustrate how applying our guide can produce different decisions and conclusions, we applied our reanalysis to the American and Chinese samples reported by Mo and Andiappan. The two samples come from cultural contexts that previous research has shown to differ with respect to the roles of LMX and trust-related mechanisms (Rockstuhl, Dulebohn, Ang, & Shore, 2012). To keep this example concise, we limited the conceptual discussion and focused on the methodological illustration of the tests of Mo and Andiappan’s hypothesized NIE.

Step 1: Identify Appropriate Linear Control Variables

Implementing the first step in our guide, we identified the appropriate linear controls for rivalry and LMX, the interaction’s constituent terms. We started with the initial screening of Mo and Andiappan’s correlation tables and found that in the American and Chinese samples, LMX has peak correlations with team trust (r = .61 in the American sample, r = .40 in the Chinese sample). Rivalry has no such high correlations with other linear controls. The observed cross-cultural differences in the correlation patterns are consistent with prior research suggesting cultural differences in the association between LMX and trust-related processes (Fulmer & Gelfand, 2012).

Next, as data were available, we conducted a supplementary empirical check regarding the partial correlations between LMX and team trust, controlling for the remaining linear variables. Our initial screening was confirmed by the partial correlations, which were large for LMX and team trust (pr = 0.58 in the American sample, pr = 0.43 in the Chinese sample), but small for all other partial correlations (

For the conceptual and causal check, we followed Mo and Andiappan (2024), who included this variable explicitly to rule out an alternative explanation for their hypothesized effects of LMX. Mo and Andiappan’s discussion and their inclusion of team trust imply that they do not assume that (a) the two constructs are identical or heavily overlapping (not conceptually distinct), (b) LMX causes team trust (reverse causality), or (c) team trust acts as a mediator of the relationship between rivalry and perceptions of peer monitoring. Finally, because LMX and team trust each reflect mutual positive expectations and willingness to be vulnerable, they are likely to have common antecedents that generate their observed association (Brower, Schoorman, & Tan, 2000). Accordingly, we follow Mo and Andiappan and regard team trust as an appropriate linear control for LMX.

Step 2: Identify Potential NICs

With the linear control established, we proceeded to generate potential NICs. Specifically, we looked for NICs for the NIE (rivalry × LMX), given that its constituent term LMX is collinear with team trust. We found that Case 1 from Table 2 fits our case. Variable X from Table 2 reflects LMX, C reflects team trust, and Z reflects rivalry. Table 2 indicates that there is a single NIC (i.e., C × Z), which, after switching the factor order to Z × C, translates into rivalry × team trust. If the case were not available in Table 2, the same result could be derived by the replacement procedure (see Figure 2). Replacing LMX with team trust in rivalry × LMX yields the interaction of rivalry × team trust as a first-degree NIC1. Since only one linear control variable turned out to be a replacement candidate, our NIC generation is complete.

Since the collinearity between NIC and NIE depends on correlations between NIE’s remaining linear constituent terms with the linear model and its control, i.e., rivalry with both LMX and team trust, we did an empirical check of the actual collinearity between the NIC and the NIE. We calculated the partial correlation of rivalry × team trust with rivalry × LMX while controlling for all other variables. It is substantial for the American sample (pr = 0.85) and much smaller for the Chinese sample (pr = 0.31). The same pattern showed up when regressing the NIC on the NIE together with other variables (

Since the degree of an NIC relates to its collinearity with NIE, which in turn contributes to the bias risk, we must record NIC degrees. Here we only have a single first-degree NIC but no NICs of higher degrees.
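The replacement procedure used in this step can be sketched in a few lines. The sketch assumes that an NIE is represented as a tuple of its constituent terms and that the degree of an NIC equals the number of constituent terms replaced by a linear control. The function name and data structures are hypothetical, and the sketch does not reproduce Figure 2 or Table 2.

```python
from itertools import product

def generate_nics(nie, controls):
    """Generate potential NICs by replacing constituent terms with their linear controls.

    nie: tuple of constituent variable names, e.g. ("rivalry", "LMX")
    controls: dict mapping a constituent term to its collinear linear controls
    Returns a dict mapping each NIC (sorted tuple of names) to its degree.
    """
    # Each constituent term is either kept (0 replacements) or replaced by a control (1).
    options = [[(term, 0)] + [(ctrl, 1) for ctrl in controls.get(term, [])] for term in nie]
    nics = {}
    for combo in product(*options):
        degree = sum(flag for _, flag in combo)
        if degree == 0:
            continue  # no replacement: this is the NIE itself
        key = tuple(sorted(name for name, _ in combo))
        nics[key] = min(degree, nics.get(key, degree))
    return nics

# The illustrative application: team trust is a linear control for LMX.
nics_interaction = generate_nics(("rivalry", "LMX"), {"LMX": ["team trust"]})
print(nics_interaction)

# A hypothetical quadratic NIE X^2 with a single control C for X.
nics_quadratic = generate_nics(("X", "X"), {"X": ["C"]})
print(nics_quadratic)
```

For the interaction of rivalry and LMX, the sketch returns the single first-degree NIC rivalry × team trust. The quadratic call is shown only to indicate how higher degrees would arise under the stated assumption about degrees.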

Step 3: Identify Critical NICs

Next, we determined whether the potential NIC is critical by evaluating its relevance to the dependent variable. To identify alternative explanations for the NIE, we translated the NIC into its verbal form using the original hypothesis as the basis: “Team trust moderates the negative indirect effect of rivalry on workplace cheating behavior via perceptions of peer monitoring, such that the negative effect is stronger at lower levels of team trust and weaker at higher levels.”

We then consulted prior research that offers theoretical arguments, empirical evidence, or plausible mechanisms to theoretically justify alternative explanations associated with the NICs. Building on our earlier discussion of prior work (Han & Harms, 2010; Kilduff et al., 2016; Mo & Andiappan, 2024), rivalry can increase perceptions of peer monitoring by threatening one’s identity or status. Team trust can mitigate the effects of these threats (Han & Harms, 2010). Therefore, team trust, rather than leader-member exchange (LMX), may weaken the positive link between rivalry and perceived monitoring. However, cultural-difference theories suggest that the relevance of team trust varies across cultures. In individualistic, lower–power-distance contexts such as the United States, team trust-based processes are more consequential (Hofstede, 2001; Rockstuhl et al., 2012; Shavitt, Lalwani, Zhang, & Torelli, 2006), whereas in collectivistic, higher–power-distance contexts, such as China, interdependence and deference to authority make team trust less central (Dickson, den Hartog, & Mitchelson, 2003; Dulebohn, Bommer, Liden, Brouer, & Ferris, 2012; Triandis, 1995). Hence, the rivalry × team trust interaction is relevant and therefore a critical NIC in the American sample, but less relevant and possibly not a critical NIC in the Chinese sample.

As data were available and prior research suggests that we may need to treat the two samples differently, we proceeded to check the empirical plausibility of the NIC’s relevance. We included the NIC in the regression analyses (see Table 1, Model 2 and Model 7). Consistent with the theoretical argument, the NIC’s coefficient was large and statistically significant in the American sample (β = −1.20, p < .001) but was statistically nonsignificant and of negligible magnitude in the Chinese sample (Table 1, Model 7: β = −0.02, p = .631). In sum, the NIC was relevant in the American sample but less so and possibly irrelevant in the Chinese sample.

Step 4: Prevent Over-Control and Achieve Parsimony

In the next step, we aimed to prevent over-control and achieve parsimony in the model. Because the necessary data were available, the identified NIC qualified as feasible and could be included. Moreover, although this is not the case in our example, if we end up with many NICs for multiple NIEs, we can control model size and reduce over-control issues by including NICs for each NIE separately. When resource limitations prevent us from collecting data on all critical NICs and thereby constrain the feasible model size, we prioritize NICs by bias risk when deciding which regression terms to drop and which variables (including NICs) not to measure. This implies judging the collinearity, the relevance, and the degree of an NIC. With only first-degree NICs, we face NICs with potentially high collinearity. As shown above, the collinearity of the linear terms, the resulting collinearity between the NIE and the NIC, and the relevance of the NIC, and consequently the bias risk, are all higher for the American sample than for the Chinese sample. As a result, we had to include the NIC in the analyses of the American sample and could reasonably exclude the NIC from analyses of the Chinese sample. Because team trust was not treated as a mediator for the effects of LMX on peer monitoring in the original Mo and Andiappan study, the mediation rule did not apply in our case. Accordingly, the corresponding NIE estimates could be interpreted as the total size of an NIE.

Step 5: Address Omitted Critical NICs

If data were not available for one or more of the identified critical NICs, we would need to follow the procedures described in Step 5. Specifically, we would need to address heteroscedasticity resulting from omitted NICs by using heteroscedasticity-robust standard errors (as done, for instance, in Models 2, 3, 5, 7, 8, and 10 in Table 1). Where possible, we would use proxies for the omitted NICs to probe the robustness of our NIE estimates. We would discuss omissions transparently by explicitly acknowledging the alternative explanations that cannot be empirically ruled out. Finally, we would build on prior research by encouraging, and where feasible initiating, new studies that help disentangle these competing mechanisms. Because our short example does not omit any identified critical NICs, we do not need to implement these additional procedures.

As a result of implementing the five-step guide, we estimated and tested the NIE (rivalry × LMX) via regression analyses while controlling for the critical NIC (rivalry × team trust). For the U.S. sample, adding rivalry × team trust substantially altered the results: the coefficient for the focal NIE on peer monitoring became smaller and nonsignificant (changing from −0.65 to 0.38, p = .268; Table 1, Models 1 and 2), indicating that the initially supported moderating effect was primarily driven by the omitted NIC rather than by LMX itself. For the Chinese sample, our procedure indicated that we could exclude the NIC due to a low bias risk. Indeed, including the NIC did not alter the results; the effect of rivalry × LMX remained similar in size and statistically significant (coefficient changed from −0.08 to −0.09, p ≤ .035; Table 1, Models 6 and 7). These results can be reported as a robustness check, indicating that the NIE is robust to the inclusion of a critical alternative explanation. While not consistently falsifying Mo and Andiappan’s theory, these findings call for an adjustment, specifically with respect to culture-related boundary conditions. Taken together, this illustrative reanalysis shows that applying the guide to the example of Mo and Andiappan (2024) is a practical way to systematically check the robustness of NIE estimates against alternative explanations, thereby improving the accuracy of substantive theorizing about NIEs.

Discussion

Contributions to Theory Testing and Development

Our analyses revealed that omitting nonlinear and interactive control variables (NICs) can introduce systematic bias when estimating and interpreting nonlinear and interactive effects (NIEs). Although NIEs are central to many micro and macro theories, our review of published articles showed that standard practice relies almost exclusively on linear control variables. Of the 548 recent empirical articles we reviewed across three leading journals, 73% tested NIEs, but fewer than 3% included NICs, and even fewer justified their inclusion based on considerations of bias in NIE estimates. Through analytical methods and the reanalysis of a previously published study, we demonstrated that linear controls are insufficient for addressing confounding effects in models that include NIEs. Omitting NICs can lead to biased estimates and incorrect conclusions.

To address this issue and facilitate the systematic identification and use of NICs, we developed a five-step guide (see Figure 1). To provide a balanced perspective, our guide also outlines situations in which the use of NICs may be inappropriate. By systematically identifying alternative explanations, NICs help distinguish focal mechanisms from competing explanations. This supports the further development of existing theories, the integration of adjacent theoretical frameworks, and more precise study designs and analyses. Ultimately, this contributes to more transparent evidence accumulation and more substantial theoretical advancement.

Our work contributes both to the literature on correctly analyzing and testing theories that predict nonlinear and interactive effects and to the literature on control variable usage. The latter body of literature discusses how linear controls reduce bias when estimating linear relations (e.g., Bernerth & Aguinis, 2016; Spector & Brannick, 2011). The former explains how to estimate NIEs while addressing the correlation between constituent terms of interactions and, more recently, endogeneity from moderators’ antecedents (Aguinis et al., 2017; Cortina, 1993; Cortina et al., 2023; Dawson, 2014; Ganzach, 1998; Haans et al., 2016). Thus far, these two streams of methodological research have remained separate. Research on linear control variables has ignored alternative explanations of NIEs, and work on correctly testing theories involving NIEs has considered only special cases of NICs (Cortina, 1993; Cortina et al., 2023; Ganzach, 1998). Consequently, many NICs that bias NIE estimates have gone unrecognized, leaving studies vulnerable to hidden endogeneity and misspecification. We bridge this gap by applying to NICs the familiar criteria used for linear controls, namely collinearity and theoretical relevance. Our step-by-step guide maps each model variable to theory-based controls, systematically derives potential NICs, identifies mandatory ones, and clarifies when exclusions are justified. Our guide expands the set of NICs beyond previously considered cases and helps prevent bias when linear control variable procedures are applied to NIE analyses. In turn, this strengthens causal inference.

Limitations and Future Research

Our introduction and discussion of the step-by-step guide for the use of NICs represent an important initial step toward incorporating nonlinear and interactive control variables into management research. However, our work is subject to limitations that warrant further research. First, we assessed the prevalence of the problem only by reviewing management research from the past three years in three journals and by reanalyzing a previously published study. Therefore, generalizability may be limited. Nevertheless, similar concerns have been raised in other fields, such as psychology (e.g., Malter, 2014) and economics (e.g., Feigenberg et al., 2025). Because substantially more data for reanalysis are available in economics, Feigenberg et al. (2025) demonstrated that more than 50% of estimates may change when considering NICs derived from fixed-effects panel analyses. Therefore, our discussion likely generalizes beyond the selected cases. Finally, future research would benefit from greater data availability to better evaluate the scope of this problem in management research through independent reanalysis.

Second, we included illustrative reanalyses and additional examples in the manuscript and the Online Supplements B and D to demonstrate the importance and application of the step-by-step guide for NIC usage. Given the focus of this manuscript, we could not include in-depth theoretical discussions for each example. Further exploration would require extensive additional empirical analysis and theoretical reflection, both of which fall beyond the scope of our primary objective. Thus, these examples should be regarded as illustrations. Nevertheless, the examples discussed here and those reported in Online Supplements B and D warrant further empirical and theoretical examination.

Third, we identify NICs based on the linear association of the variables comprising focal NIEs (Cortina et al., 2023; Ganzach, 1997). Our formal analysis in the Appendix and the Monte Carlo simulation in the online supplement focus on an idealized model in which the association between a focal NIE and its NIC is assumed to be as strong as the association between the linear model and the control variable used to generate the NIC. Future research could expand these analyses in three directions. First, future research could examine the role of NICs in situations where the relationship between model variables and control variables is nonlinear rather than linear. Second, one could analyze other nonlinear forms of relationships, such as exponential or logarithmic effects. Third, it would be useful to determine under what conditions the association between NICs and focal NIEs might be smaller or larger despite strong linear associations among the constituent linear terms. To further develop such guidance, future research could follow Aguinis, Beaty, Boik, and Pierce (2005) by investigating typical effect sizes of nonlinear and interactive effects together with their associations, thereby shedding light on potential biases caused by NICs arising from different numbers of replacements.

Finally, as has been discussed for linear control variables (Cuervo-Cazurra, Andersson, Brannen, Nielsen, & Reuber, 2016), nonlinear and interactive controls (NICs) can also enhance research quality throughout the entire research process. Beyond guiding data collection toward variables that merit inclusion even when they do not have linear or direct effects on dependent variables, NICs offer a framework for identifying alternative explanations that can stimulate new theorizing. By transforming potential confounds into conceptually meaningful alternative hypotheses, the systematic analysis of NICs helps researchers articulate and test boundary conditions and mechanisms more precisely. Moreover, associations revealed through NICs can connect constructs from distinct theoretical domains, highlighting cross-theory interactions and higher-order relationships that warrant further conceptual elaboration. Online Supplement B offers examples. For instance, future research could bridge distinctiveness and category life-cycle theories by testing whether cross-theory NICs, such as distinctiveness × category maturity or maturity-squared, qualify or displace an originally hypothesized baseline inverted-U between distinctiveness and performance (Anderson & Zeithaml, 1984; Suarez, Grodal, & Gotsopoulos, 2015; van Angeren et al., 2022). That is, future research could explore NIC-guided theory integration as combining elements from two or more theories, e.g., based on observed pairwise associations, to generate new insights and develop more comprehensive explanations of complex organizational phenomena (Mayer & Sparrowe, 2013).

Conclusions

Nonlinear and interactive effects (NIEs) are fundamental to management theory. Although linear control variables are commonly used, neglecting nonlinear and interactive controls (NICs) can bias estimates and invalidate inferences about NIEs. A review of 548 articles revealed that while 73% tested for NIEs alongside linear controls, fewer than 3% accounted for NICs. We developed a five-step guide for identifying and using NICs. This tool helps researchers incorporate NICs into their study designs, analyses, and theories, resulting in more accurate findings. The guide also offers best practices and addresses concerns such as over-control and loss of efficiency. By guiding the identification and inclusion of NICs, the guide improves the validity of empirical results and supports stronger theorizing. Ultimately, systematically and validly including NICs advances management theory by appropriately accounting for alternative explanations. This approach ensures more rigorous testing of complex relationships and enhances the development and integration of theoretical insights.

Supplemental Material

sj-pdf-1-jom-10.1177_01492063261431571 – Supplemental material for Identifying and Using Nonlinear and Interactive Control Variables

Supplemental material, sj-pdf-1-jom-10.1177_01492063261431571 for Identifying and Using Nonlinear and Interactive Control Variables by Andreas Salmen, Diemo Urbig and Herman Aguinis in Journal of Management

Acknowledgements

We thank Journal of Management Associate Editor Michael Withers and two anonymous reviewers for their constructive feedback and guidance throughout the review process. Previous versions of this manuscript were presented at the Academy of Management meetings in Chicago, August 2024, where it received the Academy of Management Research Methods Division Best Student Paper Award, and at the Strategic Management Society meetings in Istanbul, October 2024. Andreas Salmen gratefully acknowledges a travel grant from the Brandenburg University of Technology Graduate Research School.

Supplemental material for this article is available on the JOM website.
