Abstract

The evolution of innovation ecosystems hinges on the effective coordination between ecosystem leaders and complementors. While ecosystem-sponsored architectural innovations create new technological opportunities for complementors, they may unintentionally disrupt the performance of complementor products that were originally developed based on legacy architectural designs. This study provides empirical evidence of these disruptive effects and explores how complementors’ alignment with the ecosystem leader mitigates such challenges. Focusing on Apple’s release of Core ML—a proprietary artificial intelligence module—in its mobile ecosystem, we employ a difference-in-differences design to investigate variations in complementor performance after adopting this ecosystem-sponsored architectural innovation. Our findings reveal that complementors’ technological and flow alignment with the ecosystem leader is critical for minimizing performance disruptions and enhancing value creation during ecosystem evolution. This study enriches our understanding of architectural changes and complementor heterogeneity in innovation ecosystems.

Introduction

In innovation ecosystems, the leader typically introduces technological innovations that form the basis for the development of downstream complements (Adner & Kapoor, 2010; Ghazawneh & Henfridsson, 2013; Parker, Van Alstyne, & Jiang, 2017). Complementors capitalize on these innovations, integrating them into specialized products to serve their own niches (Boudreau, 2012; Iansiti & Levien, 2004). This fundamental mode of coordination shapes the direction and pace of ecosystem evolution, as the leader’s innovations renew the technological opportunities for complementors (Cohen & Levinthal, 1990), who, in turn, enhance the ecosystem’s value proposition through their complementary offerings. While existing research on ecosystems acknowledges the benefits complementors could gain from leveraging such innovations (e.g., Miric, Ozalp, & Yilmaz, 2023; Um, Zhang, Wattal, & Yoo, 2023), far less attention has been paid to the substantive challenges complementors may encounter and how they can navigate them.

The challenges for complementors in co-evolving with the ecosystem leader become particularly pronounced when ecosystem-sponsored innovations involve architectural changes, which Henderson and Clark (1990: 10) define as “innovations that change the way in which the components of a product are linked together, while leaving the core design concepts untouched.” Ecosystem leaders introduce these innovations with diverse motivations, such as establishing control or setting technological standards, and, notably, to create technological opportunities for downstream applications (Adner, 2017; Baldwin & Woodard, 2009; Van Der Geest & Van Angeren, 2023). By releasing architectural innovations, leaders reshape how technological components interact within the ecosystem (Henderson & Clark, 1990; Jacobides, Cennamo, & Gawer, 2018), while also potentially generating negative externalities for complementors. For instance, when Microsoft launched the graphical user interface (GUI), developers faced the challenge of revising up to 50% of their coding practices, leading many to delay adoption for nearly 7 years until the GUI’s benefits surpassed the integration costs (Baldwin & Clark, 2000). Despite these long-standing anecdotes, empirical investigation into the varying impacts of ecosystem-sponsored architectural innovations on complementors remains limited. Meanwhile, recent studies have shed light on how complementors deal with significant ecosystem shifts (Chen, Yi, Li, & Tong, 2022), focusing mainly on their intrinsic attributes like experience, size, and location (e.g., Koo & Eesley, 2021; Rietveld & Eggers, 2018; Ozalp, Eggers, & Malerba, 2023). Yet, we still know little about how complementors align with the evolving ecosystem to mitigate the disruptive effects of such innovations.

To address this gap, we propose that complementors’ alignment with the ecosystem leader plays a vital role in mitigating the disruptive effects of architectural innovations. Prior research argues that the realization of the ecosystem’s novel value proposition primarily results from the consistent and integrated efforts of the leader and its complementors (Altman, Nagle, & Tushman, 2022; Kapoor & Lee, 2013; McIntyre & Srinivasan, 2017). As Adner (2017: 49) puts it, “if the heart of traditional strategy is the search for competitive advantage, the heart of ecosystem strategy is the search for alignment.” In extending this view, we theorize that complementors who implement technological or flow alignment with the ecosystem leader are better equipped to absorb the externalities induced by architectural innovations, enabling them to minimize short-term performance shortfalls. Technological alignment refers to the extent to which a complementor integrates the ecosystem leader’s proprietary technologies into its own product development, while flow alignment involves synchronizing a complementor’s technology adoption with the ecosystem’s renewal cycle. We propose that technological alignment allows complementors to generate greater synergistic value from adopting ecosystem-sponsored architectural innovations while seamlessly adapting product designs to the leader’s evolving architecture. Flow alignment, on the other hand, enables complementors to enhance their products’ appeal by showcasing the ecosystem’s latest value propositions in architectural innovations, while also leveraging the unsettling ecosystem renewal to downplay any potential performance hiccups. By conceptualizing complementor alignment as a key boundary condition, our study seeks to develop a more nuanced understanding of the process by which ecosystem evolution affects joint value creation.

To empirically examine the discontinuities induced by architectural innovations and the mitigating effects of complementor alignment, we take advantage of a unique setting of mobile ecosystems, where multihoming apps adopt an ecosystem-sponsored architectural innovation in Apple’s ecosystem, but not in Google’s. In 2017, Apple pioneered an on-device AI technology—known as Core ML—which reshaped the fundamental architecture to prepare smartphone devices for handling artificial intelligence (AI) or machine learning (ML) tasks locally (McGregor, 2020). In gauging the effect of Apple’s Core ML, multihoming apps constitute an ideal sample as they are almost identical except for operating in distinct ecosystems (i.e., Apple and Google). We employ a difference-in-differences (DID) estimation comparing multihoming apps that deployed Core ML in Apple’s ecosystem but not in Google’s ecosystem. In so doing, we address the confounding issues of endogenous app- and developer-level factors, including self-selection biases (Shaver, 1998). Thus, by utilizing DID estimation in the unique mobile ecosystems setting, our study yields new insights into complementor heterogeneity in performance disturbances during ecosystem evolution.

Our study makes three key contributions to the literature on innovation ecosystems. First, we extend the literature on architectural innovations by demonstrating their disruptive effects on complementors’ product performance in an ecosystem setting, thus broadening the scope of innovation theory to the context of complex ecosystem interdependencies. Second, we highlight complementor alignment in mitigating the negative externalities of ecosystem-sponsored architectural innovations. While prior research has identified inherent complementor attributes that facilitate adaptation to ecosystem renewals (e.g., Agarwal & Kapoor, 2023; Ozalp et al., 2023), specific complementor strategies remain underexplored. We address this gap by demonstrating that complementors can actively align their offerings—technologically and with renewal cycles—to ride out ecosystem evolution. Third, we enrich our understanding of ecosystem-sponsored innovations, a prominent way in which the lead firm coordinates and advances an ecosystem. In complementing prior work that equates ecosystem renewals with architectural changes (Kapoor & Agarwal, 2017), we look into the specific changes in component interactions of the ecosystem, and consolidate the conceptual foundation for assessing the implications of ecosystem evolution.

Theory and Hypotheses

The Role of Architectural Innovations in Ecosystems

Research on ecosystems underscores the pivotal role of technological innovations in managing interdependencies between ecosystem leaders and their complementors (McIntyre & Srinivasan, 2017; Wareham, Fox, & Cano Giner, 2014). Broadly defined, technological innovation encompasses any advancement in the technology underlying a product that impacts its design, performance, or application (Schumpeter, 1942). This includes a wide spectrum of changes, from incremental refinements and radical redesigns to modular additions and shifts in component interactions. In ecosystem settings, leaders typically develop and share such innovations—often as modular features like Amazon’s Seller Central API, which allows third-party merchants to automate inventory management without disrupting existing workflows (Ghazawneh & Henfridsson, 2013; Parker et al., 2017)—relieving complementors of the need to build general functions themselves (Boudreau, 2012; Iansiti & Levien, 2004). Recent studies confirm that complementors, such as app developers, may benefit significantly from leveraging these ecosystem-sponsored technological innovations (Miric et al., 2023; Um et al., 2023). There seems to be a consensus that technological innovations sponsored by ecosystem leaders will allow complementors to seamlessly adopt new technologies and enhance value creation for end users.

Within this expansive category of technological innovation lies a distinct subset—architectural innovation—which is the primary focus of our study. Architectural innovation, as Henderson and Clark (1990: 10) define it, involves “innovations that change the way in which the components of a product are linked together, while leaving the core design concepts untouched.” Unlike other technological innovations that stress the overhaul of critical components, architectural innovation centers on system-level relationships, redefining how components interact with each other. For example, the transition from fuel-powered vehicles with internal combustion engines to electric vehicles with traction motors reconfigures interactions among automotive components, even as the components’ core functions persist (Baldwin & Woodard, 2009; Henderson & Clark, 1990). Extending this concept to ecosystem contexts, we use the term architectural innovations to refer to leader-sponsored innovations that introduce new structural interdependencies, reshaping how existing components interact within the workflow of a complementary product. This distinction is critical, as architectural innovations differ from the broader technological innovation category by their focus on linkage reconfiguration, presenting unique challenges and opportunities for complementors.

In sum, technological innovations provide a crucial foundation for joint value creation within ecosystems, as leaders offer modular and adaptable advancements that relieve complementors of foundational development burdens, thereby enabling them to focus on enhancing the ecosystem’s overall performance. Unlike other technological innovations that emphasize improvement of individual components, architectural innovation is defined by the reconfiguration of the linkages and interactions among existing components, while their core functions remain largely untouched (Henderson & Clark, 1990). Importantly, architectural innovations often emerge from strategic motives beyond simply facilitating joint value creation. Leaders may introduce these interface changes to assert control and establish standards or new design rules within their innovation networks, or to strategically reconfigure architectural boundaries to gain a competitive advantage (Baldwin & Woodard, 2009; Habib, Kristiansen, Rana, & Ritala, 2020; Hofman, Halman, & van Looy, 2016; Reiter, Stonig, & Frankenberger, 2024; Wareham et al., 2014). Such strategic architectural innovations can impose significant adaptation costs on complementors, as altered interfaces disrupt their established workflows and create performance discontinuities that complementors must actively navigate and manage (Habib et al., 2020).

Architectural Innovations and Complementors’ Product Performance

While ecosystem-sponsored architectural innovations hold strong potential for complementors to renew their value propositions for users, they might also introduce negative externalities that complementors must grapple with. As complementors continually acquire and integrate new component knowledge from ecosystem leaders’ innovations, they often take for granted a stable architecture where the functional interactions between components mostly remain unchanged (Agarwal & Kapoor, 2023). However, architectural innovations often disrupt previously stable component interactions (Henderson & Clark, 1990), leading to breakdowns in the coordinated workflow among interdependent components in complementors’ product designs, and thereby displaying faulty functionalities to end users (e.g., Gawer & Cusumano, 2014; Wareham et al., 2014). Moreover, it might take a considerable amount of time for complementors to assimilate this new architectural knowledge and experiment with alternative product designs regulating such interdependent components. Therefore, they may struggle to adapt their product designs in the near term. As a result, complementors’ product offerings may experience reduced efficiency, unforeseen malfunctions, and overall instability after adopting architectural innovations, ultimately diminishing value creation and undermining the product’s appeal to end users.

Core ML is Apple’s software development kit, introduced in 2017, that enables developers to integrate AI capabilities into iOS apps. Traditionally, the interaction between the System-on-Chip (SoC) and RAM followed a stable, predefined pattern in which processing tasks were assigned to specific hardware components (e.g., CPU for general computing, GPU for graphics), allowing developers to anticipate memory usage and optimize performance accordingly. Core ML disrupts the established interaction by dynamically distributing AI workloads between the CPU and GPU in real time based on system demands. This constant reallocation of processing tasks makes RAM access patterns unpredictable, creating new challenges for developers in optimizing application performance.

An analogy helps illustrate this shift. The traditional system resembled a factory with a fixed division of labor, which created a highly structured and predictable workflow. Core ML, however, operates more like a flexible workforce system, dynamically reassigning tasks based on ongoing production needs. This enhances adaptability and optimizes resource utilization, but also introduces coordination challenges, as workers (analogous to the SoC and RAM) must constantly adjust to new tasks rather than following a predictable routine. Similarly, Core ML’s fluid reallocation of AI workloads creates both efficiency gains and operational complexities, requiring developers to rethink how their app workflows manage memory and processing tasks. The performance issues became so prominent that the developer community responded with a 500-page “Core ML Survival Guide” (Hollemans, 2018). Thus, Core ML adoption may lead to degraded app performance, increased incidence of crashes, and user dissatisfaction, highlighting the challenges that complementors face when integrating architectural innovations.

Hypothesis 1 (H1): Leveraging architectural innovations in an ecosystem induces performance shortfalls in a complementor’s existing product.

While it is not uncommon for technological discontinuities—particularly architectural innovations—to cause performance shortfalls, detailed analyses of their externalities remain scarce. In this study, we zoom in on complementors’ ability to alleviate the negative externalities from ecosystem-sponsored architectural innovations. Extant ecosystems research has cast a spotlight on the ecosystem leader due to its central role in coordination (Daymond, Knight, Rumyantseva, & Maguire, 2022; Kretschmer, Leiponen, Schilling, & Vasudeva, 2022; Stonig, Schmid, & Müller-Stewens, 2022); yet, it is the myriad of autonomous complementors that determine the joint value creation of an ecosystem. As an ecosystem evolves, successful adaptation by third-party complementors is essential to sustaining the coherence and effectiveness of their contributions to value creation (Adner, 2006). Recent research has started to explore why some complementors outperform others in the face of significant ecosystem changes, often attributing these differences to inherent attributes like development experience, entry timing, location, and so forth (e.g., Koo & Eesley, 2021; Rietveld & Eggers, 2018; Ozalp et al., 2023). However, our understanding remains limited regarding how complementors align with the evolving ecosystem to mitigate the disruptive effects of such innovations. These alignment dynamics are crucial because the ecosystem leader, by means of technological innovations both in the core product and for complementors’ use, sets the direction and pace of ecosystem evolution (Altman et al., 2022; Rietveld & Schilling, 2021). To stay relevant and competitive, complementors may need not only advantageous attributes or positions, but also the strategic capacity to align their offerings with the ecosystem leader’s innovations. In the next section, we examine how varying levels of alignment with the ecosystem leader can address the performance shortfalls associated with ecosystem-sponsored architectural innovations.

The Role of Complementor Alignment

Our theory focuses on the role of complementors’ alignment with the ecosystem leader in absorbing the externalities induced by architectural innovations. In general, alignment represents the extent of consistency among interacting firms regarding their activities and positions (Gulati, 1998; Schilling, 2015). Tighter alignment grants firms better access to external resources, which can help buffer them from technology-related changes and disturbances (Kapoor & Lee, 2013; Lavie & Drori, 2012). This focus on alignment is central to the theory of ecosystem strategy, because the realization of an ecosystem’s value proposition depends on consistent, integrative efforts between the ecosystem leader and its complementors (Adner, 2017; Dyer, Singh, & Hesterly, 2018; McIntyre & Srinivasan, 2017). We take a complementor perspective to examine how they align their activities and positions with those of the ecosystem leader. Although complementors have equal access to architectural innovations, the level of alignment with the leader determines their ability to harness these advancements for value renewal.

We contend that complementors closely aligned with the ecosystem leader may experience smaller negative impacts from architectural innovations, because they can better mobilize the ecosystem leader’s resources to absorb the externalities arising from ecosystem evolution. Specifically, we propose that complementors’ alignment with the ecosystem leader can take two forms: technological alignment, which specifies the extent to which a complementor incorporates ecosystem-sponsored proprietary technological components in developing product offerings, and flow alignment, which concerns whether complementors synchronize their adoption activities with the activity flow orchestrated by the ecosystem leader. By introducing these forms of alignment, we provide novel insights into the activity configurations in ecosystem organizations from a complementor’s standpoint.

It is worth noting that complementors do not universally align with ecosystem leaders, despite the purported benefits. Several critical factors influence whether complementors maintain high or low alignment levels. For example, complementors may intentionally distance themselves from the ecosystem leader’s unique technology to mitigate appropriation risks and remain independent (Cutolo & Kenney, 2021). Wang and Miller (2020) found that while some publishers adapt their offerings to fit Kindle’s e-book format, others withhold key titles to prioritize their own distribution channels for fear of the ecosystem leader’s dominance and appropriation. Aligning with one ecosystem leader may require specialized design that could compromise the functioning and performance of the complementor’s products in other ecosystems (Cennamo, Ozalp, & Kretschmer, 2018). Tight alignment may also reduce a complementor’s distinctiveness from other competing complementors, leading to more homogeneous product offerings and diminishing innovativeness in its product (Miric et al., 2023). Thus, complementors face important trade-offs in alignment, weighing the advantages of leveraging the ecosystem leader’s resources against the need to maintain flexibility and preserve differentiation (Kang & Grodal, 2025). These trade-offs help explain why complementors differ in their alignment with the ecosystem leader. Below, we illuminate in detail how complementors utilize technological and flow alignment to mitigate discontinuities and improve their product performance upon integrating architectural innovations.

Technological alignment

In ecosystem organizations, complementors typically have uniform access to the ecosystem leader’s technological components (Adner, 2017; Ghazawneh & Henfridsson, 2013; Tiwana, 2018), which offer a shared foundation for developing their products in alignment with the ecosystem’s architectural design. However, architectural innovations, by disrupting existing component interactions, could render problematic those prior development efforts embedded in legacy architectures (Henderson, 2021). For complementors with high alignment, these efforts may pose fewer challenges. High technological alignment—where most components utilized are sponsored by the leader—allows complementors to harness synergies through a seamless mix-and-match approach (Yoo, Henfridsson, & Lyytinen, 2010). As the ecosystem leader proactively resolves undesired interdependencies among their sponsored components before releasing architectural innovations (Teece, 2018), it can preempt frictions for adopters, and, as a result, facilitate new synergistic functions without demanding extensive design reconfiguration by complementors, such that aligned products are better positioned to absorb the negative externalities arising from architectural innovations.

For example, the combination of Core ML and ARKit, a framework that allows developers to create augmented reality (AR) experiences for iOS devices, demonstrates powerful synergy in the iOS ecosystem. Core ML can be used to enhance AR by improving real-time object recognition, scene understanding, and interactive elements, while ARKit leverages these ML/AI capabilities to create more immersive and dynamic experiences for users. Apple designed these technologies to jointly support real-time, intelligent AR functions such as object detection or gesture recognition, aimed at improving AR-related value propositions for iOS over its competing ecosystems. Thus, technological alignment better equips complementors for the ecosystem’s technological evolution, enabling them to capitalize on new combinatorial capabilities unlocked by architectural innovations.

By contrast, complementors with low technological alignment may face significant adaptation hurdles. Without the support of ecosystem-sponsored components, they cannot rely on the leader’s valuable knowledge of the new architecture (Yayavaram, Srivastava, & Sarkar, 2018), forcing them to independently navigate new interdependencies. This often requires a lengthy and costly trial-and-error process (Chen, Wang, Cui, & Li, 2021) as they experiment with new designs to adapt their existing products to the changing architecture. The lack of predefined solutions and built-in optimizations inevitably complicates this process, rendering it more time-consuming and prone to performance penalties (Burford, Shipilov, & Furr, 2022). To summarize, we expect that higher technological alignment allows complementors to better leverage their pre-existing integration, thereby mitigating the disruptions caused by architectural innovations, and enabling a more efficient and seamless product reconfiguration.

Hypothesis 2 (H2): Technological alignment weakens the negative effect of architectural innovations on complementors’ product performance.

Flow alignment

Flow alignment refers to the strategic synchronization of a complementor’s technology adoption with the ecosystem’s renewal cycles. By aligning with these windows, complementors can quickly integrate architectural innovations into their product offerings, being among the first to deliver the ecosystem’s latest value propositions to end users (Adner, 2017; Ganco, Kapoor, & Lee, 2020). This alignment ensures that new functions are well-timed with the ecosystem leader’s marketing efforts and heightened user expectations, leading to smoother rollouts and better user reception. For example, app developers adopting Core ML close to Apple’s iOS release timepoint can offer cutting-edge functions, such as real-time image recognition, capitalizing on Apple’s promotion of its on-device AI technologies and meeting growing user demand for ML/AI functions. By pioneering applications of a new architectural innovation, complementors not only enhance their products’ attractiveness through cutting-edge functionalities but also signal the ecosystem’s potential.

Meanwhile, we contend that flow alignment acts as a protective mechanism for complementors by mitigating the challenges posed by ecosystem-sponsored architectural innovations. This alignment shifts user attribution towards the broader ecosystem renewal, reducing the scrutiny over individual apps’ performance issues (Tsiros, Mittal, & Ross, 2004). As such, users may be more likely to attribute performance hiccups to the ecosystem-wide changes, instead of the complementor’s flawed design. Consider again the Core ML example. Even if app performance issues arose (e.g., slower processing or frequent app crashes) after adopting the Core ML SDK, users would ascribe these to the transitional disturbances in the new generation of iOS which are likely transient, rather than fundamental flaws in the app’s design which could persist. Hence, high flow alignment enables complementors to more effectively navigate the performance externalities by capitalizing on the hype surrounding the leader’s core product renewal and mitigating the costs associated with such complex shifts.

Conversely, when flow alignment is low, complementors face heightened externalities in adopting architectural innovations. Without synchronizing their technology adoption with the ecosystem’s renewal cycles, the new functionalities may lack visibility and fail to capture users’ interests. Furthermore, performance issues arising from architectural innovations are more likely to be attributed to the complementor’s own product design, increasing user dissatisfaction. In sum, complementors with high flow alignment can mitigate the impact of performance shortfalls and achieve smoother transitions when adopting architectural innovations, while those with low alignment may encounter greater scrutiny and costs.

Hypothesis 3 (H3): Flow alignment weakens the negative effect of architectural innovations on complementors’ product performance.

Empirical Analysis

Research Context and Data Sources

To examine these hypotheses, we use as context the release of Core ML in Apple’s mobile ecosystem. As a central force behind recent technological change, AI creates new opportunities and holds considerable potential for mobile ecosystems to foster novel value propositions and stimulate complementary innovation. By empowering mobile apps to offer a wide array of transformative features—such as voice recognition, image analysis, and natural language processing—AI can help to secure a decisive competitive edge for mobile ecosystems that successfully integrate AI into their mobile apps (MIT Technology Review, 2020). As such, mobile ecosystem leaders like Apple and Google are racing to adopt AI, recognizing the substantial rewards at stake for those who lead the way in joining this AI bandwagon.

In the race to advance AI capabilities, Apple took an early lead by unveiling its machine learning framework, Core ML, at the 2017 Worldwide Developers Conference (McGregor, 2020). Unlike traditional SDKs, which merely package standardized software components for developers, Core ML dynamically redistributes AI tasks across Apple’s processors based on real-time workloads (Axon, 2020). This dynamic allocation maximizes the utilization of SoC computing power, but it also fundamentally reshapes interactions between the SoC and other critical components like RAM, introducing a level of fluidity and unpredictability. As Core ML dynamically routes AI tasks like image recognition or text processing among different processors, it may constrain memory access for other app functions.

While Core ML empowers iOS developers to revolutionize their applications, it also highlights the challenges of adopting architectural innovations that disrupt established workflows and legacy app designs. These disruptions give rise to observable performance discrepancies when comparing the same app across ecosystems. Specifically, mobile ecosystems provide an ideal setting, as identical mobile apps often multihome in two distinct ecosystems (e.g., iOS and Android). Multihoming in the app development context represents the practice of designing an app to operate across multiple platforms, such as iOS and Android (Cennamo et al., 2018). While such apps preserve a consistent core user experience and product feature set, each ecosystem’s proprietary tools and resources may create opportunities for certain ecosystem-specific enhancements (Cennamo & Santaló, 2019). By comparing the performance of multihoming apps in the iOS and Android ecosystems, we can tease out the impact of unique interactions between mobile apps and their local ecosystems—such as the use of Apple’s Core ML in mobile apps—while holding constant other app and developer differences.

To test our hypotheses, we acquired data from a leading analyst firm, Apptopia, which tracks and archives information related to all mobile apps developed for the iOS and Android ecosystems. Its data is extensively used by app developers, venture capital firms, and financial analysts. Our data set comprises detailed mobile app information for the period from January 1, 2015 to October 31, 2019 across the 58 major country markets on both the iOS and Google Play app stores that were available from the analyst firm. We obtained information on SDK installation and uninstallation, app performance, and basic app characteristics.

Difference-in-Differences Design and Sampling

We apply a DID approach that leverages the natural context in which a mobile app operates across two ecosystems (i.e., iOS and Android). Since such multihoming apps are almost identical except for their ecosystem environment, comparing them allows us to empirically tease out the effect of their unique interactions with each ecosystem, such as their use of the ecosystem-sponsored AI technology. This allows us to account for inherent endogeneity issues like self-selection (Shaver, 1998), where apps with advanced features or released by tech-savvy developers may choose to integrate Core ML in the iOS ecosystem because they expect to be better off from leveraging the ecosystem’s AI capabilities. Our DID estimation between multihoming apps is free from the impact of such potentially endogenous app- and developer-level factors, because these factors largely remain consistent across ecosystems (Chen, Zhang, Li, & Turner, 2022).
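To make the comparison logic concrete, the following is a minimal sketch of a two-by-two DID calculation for one matched pair, not the estimation reported in this study. It assumes a simple mean-based estimator; the function name `did_estimate`, the tuple layout, and the rating values are hypothetical and chosen only for illustration.

```python
from statistics import mean


def did_estimate(observations):
    """Return a 2x2 difference-in-differences estimate.

    Each observation is a (treated, post, outcome) tuple. Here treated=1
    marks the iOS version of a pair (hypothetical coding) and post=1 marks
    days on or after the Core ML adoption date.
    """
    cells = {(t, p): [] for t in (0, 1) for p in (0, 1)}
    for treated, post, outcome in observations:
        cells[(treated, post)].append(outcome)
    if any(not values for values in cells.values()):
        raise ValueError("each of the four treated/post cells needs data")
    # Change in the iOS app minus change in its Android counterpart.
    ios_change = mean(cells[(1, 1)]) - mean(cells[(1, 0)])
    android_change = mean(cells[(0, 1)]) - mean(cells[(0, 0)])
    return ios_change - android_change


# Hypothetical daily ratings for one app pair (illustrative numbers only).
sample = [
    (1, 0, 4.5), (1, 0, 4.5), (1, 1, 4.0), (1, 1, 4.0),
    (0, 0, 4.25), (0, 0, 4.25), (0, 1, 4.25), (0, 1, 4.25),
]
effect = did_estimate(sample)
```

In this illustration the iOS rating falls by 0.5 while the Android rating is flat, so the estimate is negative. Because any app- or developer-level factor shared by both versions moves both changes equally, it cancels in the final subtraction.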

To construct our sample, we followed prior literature and employed a “top segmentation” approach (e.g., Kapoor & Agarwal, 2017). We first selected top grossing apps that ranked in the top 500 in each month from January 2015 to May 2017 (before the release of Core ML) in 58 countries in each iOS app category (181,421 apps). To construct matched pairs for DID analysis, we searched for the counterparts of these iOS apps in the Android ecosystem from the same data source. We then screened all these pairs of apps in the sample and identified pairs that have integrated Core ML in their iOS apps between June 2017 and December 2019.

We conducted a series of steps to ensure that the same apps homed in different ecosystems share very similar product characteristics. During our inspection of the data, and consistent with our interviews with app developers, we found that the same app in different ecosystems may still differ somewhat in product design. However, when the version names (e.g., 3.4.2) of the same app in different ecosystems are the same, the product characteristics are most likely to be identical. Our screening therefore aims to ensure that, when Core ML is integrated, both treatment and control groups share very similar product characteristics. First, we focused on the pairs of multihoming mobile apps that share the same version names before adopting Core ML. Second, we dropped pairs in which either app had been updated shortly before the adoption of Core ML (less than 7 days), so as not to conflate the adoption with other app updates. Third, we kept pairs in which the iOS app deployed Core ML while the Android app remained unchanged for at least 7 days after the adoption date. Finally, to isolate the effect of adopting Core ML, we excluded pairs that experienced additional changes within 1 week of the Core ML adoption. In so doing, we generated a 7-day period after the adoption in the iOS app during which the Android app remained unchanged. In summary, we had a final sample of 6,131 Core ML adoption events across all the sampled apps in all country markets. Appendix B, Figure B1 (see the online supplemental material) helps to illustrate our sampling procedures. We treated the date of the event as Day 0 and kept data that range from Day −7 to Day 7 for each selected pair. In this way, the matched samples are equivalent in unobserved covariates at the firm level, product level, and even product-day level.
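The four screening steps can be sketched as a single filter over candidate pairs. This is a minimal illustration, not the authors' procedure: the `AppPair` record, its field names, and the exact boundary conventions (strict or inclusive day counts) are assumptions made for the example.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta
from typing import List

WINDOW = timedelta(days=7)  # the 7-day window used in the screening steps


@dataclass
class AppPair:
    """One iOS/Android pair of the same app. All field names are hypothetical."""
    ios_version_before: str       # iOS version name just before Core ML adoption
    android_version_before: str   # Android version name at the same point in time
    adoption_date: date           # Day 0: the iOS release that integrates Core ML
    other_ios_updates: List[date] = field(default_factory=list)  # iOS updates other than Day 0
    android_updates: List[date] = field(default_factory=list)    # all Android updates


def keep_pair(pair: AppPair) -> bool:
    """Return True if the pair survives all four screening steps."""
    day0 = pair.adoption_date
    every_update = pair.other_ios_updates + pair.android_updates

    # Step 1: both versions carry the same version name before adoption.
    if pair.ios_version_before != pair.android_version_before:
        return False
    # Step 2: neither app was updated less than 7 days before Day 0.
    if any(day0 - WINDOW < d < day0 for d in every_update):
        return False
    # Step 3: the Android app stays unchanged from Day 0 through Day 7.
    if any(day0 <= d <= day0 + WINDOW for d in pair.android_updates):
        return False
    # Step 4: no additional iOS change within a week after Day 0.
    if any(day0 < d <= day0 + WINDOW for d in pair.other_ios_updates):
        return False
    return True
```

A pair that passes every check contributes one adoption event with a clean window from Day −7 to Day 7.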

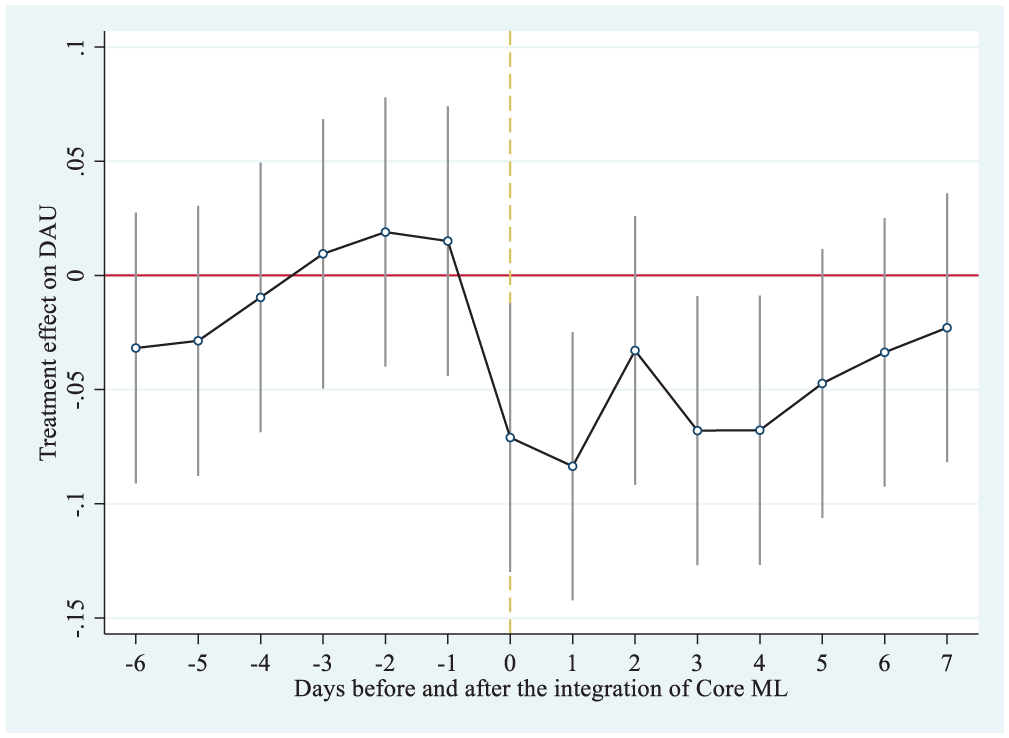

Our identification assumption is that, absent Core ML adoption, an app’s performance on iOS would have followed the same trend as its Android version. This assumption is plausible given our DID design, which leverages matched-dyad fixed effects to account for time-invariant heterogeneity and differences out common time-varying developer-level confounders. We empirically examine the plausibility of this assumption later (see Figure 1). Building on this assumption, we next detail the empirical strategy and sampling procedure underlying our DID analysis. The identification of the treatment effect relies on comparing changes in product performance over time between iOS apps that adopted Core ML during our observation window and the matched, unchanged apps that operate in the Android ecosystem. In our models, we followed previous studies to conduct pooled regressions that include matched-dyad fixed effects or, in other words, app fixed effects (Kovács & Sharkey, 2014; Singh & Agrawal, 2011). In line with prior DID studies (Choudhury & Kim, 2019; Zhang, Li, & Tong, 2022), we also examined the common trend assumption by employing the lead and lag model. It allows us to compare the treatment and control groups during the pretreatment period and test for any potential anticipatory effects before integrating AI. Specifically, the lead and lag model includes interactions between the treatment dummy and lead and lag terms for Core ML adoption. We plotted the coefficients and confidence intervals of all interaction terms in Figure 1, which shows that, before the adoption of Core ML, the difference in our dependent variable between treatment and control groups remains stable, supporting the prerequisite for a DID design.
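The logic of the lead and lag check can be illustrated with a simplified, regression-free sketch: for each day relative to adoption, compute the gap in mean log DAU between treated and control apps, measured against a reference day. The function name, the input layout, and the choice of Day −1 as the reference are assumptions for illustration; the authors' model additionally includes fixed effects and yields confidence intervals, which this sketch does not.

```python
from collections import defaultdict
from statistics import mean


def daily_gaps(rows, baseline_day=-1):
    """Return {day: iOS-minus-Android gap in mean log DAU, relative to baseline_day}.

    rows: iterable of (is_ios, day, log_dau) tuples; `day` is relative to adoption.
    """
    by_day = defaultdict(lambda: {True: [], False: []})
    for is_ios, day, log_dau in rows:
        by_day[day][bool(is_ios)].append(log_dau)

    # Raw treated-minus-control gap for each relative day.
    gap = {day: mean(g[True]) - mean(g[False]) for day, g in by_day.items()}
    # Normalize so the baseline day is zero, as with an omitted reference period.
    return {day: gap[day] - gap[baseline_day] for day in sorted(gap)}
```

Under parallel pre-trends, the values for negative days should stay close to zero, while any treatment effect shows up from Day 0 onward.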

Figure 1. The Treatment Effect on Log-Transformed DAU for Days Before and After the Integration of Core ML

Measures

Dependent variable

We measured complementors’ product performance in our context by the number of unique users of the mobile app on a given day in a focal country market, often referred to by practitioners as daily active users, or DAU (Loh & Kretschmer, 2022). To normalize its distribution, we log-transformed the measure such that consumer adoption equals ln(number of daily active users + 1). By log-transforming the number of consumers, we captured the within-app percent change in consumer adoption as a function of the adoption event and change in covariates (Wang, Aggarwal, & Wu, 2020). Similar measures of consumers’ usage within a specific time interval (e.g., day, week, month) have been frequently used to gauge product performance of mobile apps, online games, or social networking services (Kovács & Sharkey, 2014; Tiwana, 2015). We recognize that using DAU as a performance metric is most meaningful when revenue is sourced through advertising or in-app purchases, which rely on continuous user engagement. To ensure robustness, we conducted additional tests by narrowing our sample to exclude apps that lack these revenue streams.

Independent variables

Given that we seek to examine research questions with a matched DID design, we are interested in the coefficient, p value and magnitude of the difference estimator (Bertrand, Duflo, & Mullainathan, 2004). The difference estimator is the interaction of the treatment and post dummy variables. The variable treatment is a dummy variable, which is coded as 1 for the iOS apps as they adopted Core ML in the observation window and 0 for the Android apps. The post variable is also a dummy variable, which receives a value of 1 from Day 0 to Day 7 and 0 from Day −7 to Day −1. Day 0 is the date when the focal app integrated Core ML. In essence, the difference estimator captures whether the dependent variable has changed at a significantly different rate for the treated group as compared to the control group.
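To make the coding of the two dummies concrete, the following sketch computes a plain 2×2 difference-in-differences on ln(DAU + 1) from cell means. It is a simplified stand-in for the interaction coefficient, not the authors' estimator: it omits fixed effects, controls, and clustered standard errors, and the function name and input layout are assumptions.

```python
import math
from statistics import mean


def did_estimate(rows):
    """2x2 difference-in-differences on ln(DAU + 1).

    rows: iterable of (is_ios, day, dau) tuples; `day` is relative to adoption.
    """
    cells = {(t, p): [] for t in (0, 1) for p in (0, 1)}
    for is_ios, day, dau in rows:
        treatment = 1 if is_ios else 0   # 1 = iOS app that adopted Core ML
        post = 1 if day >= 0 else 0      # 1 = Day 0 to Day 7, 0 = Day -7 to Day -1
        cells[(treatment, post)].append(math.log(dau + 1))

    m = {cell: mean(values) for cell, values in cells.items()}
    # (treated post - treated pre) - (control post - control pre)
    return (m[(1, 1)] - m[(1, 0)]) - (m[(0, 1)] - m[(0, 0)])
```

A negative return value means the iOS app lost users relative to its Android twin after adoption.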

Moderators

According to our theory, the treatment effect of adopting Core ML varies depending on the focal app’s conformance and synchronization with Apple’s iOS ecosystem. Therefore, we included both as moderators to construct triple-differences (DDD) models. First, we expect technological alignment to influence the effect of Core ML. The ecosystem leader offers a set of foundational yet optional SDKs that can integrate important functions into its mobile devices. Such ecosystem-sponsored SDKs are well designed to be compatible with and even enhance each other (Ghazawneh & Henfridsson, 2013; Tiwana, 2018). For example, Apple’s ARKit performs better when coupled with Core ML. Thus, we coded technological alignment as the ratio of ecosystem-sponsored SDKs recognized in the focal app to the total number of SDKs it contains. For example, if an iOS app uses 10 SDKs in total, and one of them, such as ARKit, is recognized to be developed by Apple, then its technological alignment would be 0.1. This measurement indicates the extent to which the app relies on the ecosystem leader’s own technological component.
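The ratio can be computed directly from a list of SDK vendors. In this sketch the function name, the vendor-string representation, and the treatment of apps with no recognized SDKs (scored as zero) are assumptions, since the source does not describe that edge case.

```python
from typing import Sequence


def technological_alignment(sdk_vendors: Sequence[str], leader: str = "Apple") -> float:
    """Share of an app's SDKs that are developed by the ecosystem leader."""
    if not sdk_vendors:
        return 0.0  # assumption: an app with no recognized SDKs scores zero
    sponsored = sum(1 for vendor in sdk_vendors if vendor == leader)
    return sponsored / len(sdk_vendors)
```

For the example in the text, an app with ten SDKs of which one is Apple's yields `technological_alignment(["Apple"] + ["Other"] * 9)`, which evaluates to 0.1.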

Second, we expect that flow alignment will also moderate the effect of Core ML on app performance. Our main measure of flow alignment captures how closely the timing of a complementor’s Core ML adoption aligns with the broader ecosystem’s renewal cycle, such as a major iOS release. Specifically, we operationalize flow alignment as the inverse of the log-transformed number of days between the most recent ecosystem renewal (e.g., iOS 11) and the complementor’s adoption of Core ML. This timing matters because major iOS renewals often represent coordinated activity across Apple, hardware suppliers, third-party SDK providers, and developers, creating a window of joint innovation. Adopting Core ML close to such a renewal indicates greater alignment with this collective activity flow, while a longer delay reflects weaker synchronization. For example, if iOS 13 was released on September 19, 2019, and App A integrated Core ML on September 20, 2019 (1 day later), the flow alignment value would be: Flow alignment = 1/ln(1 + 1) = 1/ln(2) ≈ 1.443. In contrast, if App B adopted Core ML 100 days after iOS 13 (on December 28, 2019), the value would be: Flow alignment = 1/ln(100 + 1) = 1/ln(101) ≈ 0.217. This measure thus assigns higher values to apps that adopt Core ML closer to the ecosystem’s renewal, reflecting stronger flow alignment.
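The two worked examples can be reproduced with a few lines of code. The function name and signature are assumptions. Note that the formula as stated is undefined for same-day adoption (the logarithm of 1 is zero); the source does not say how that case is handled, so this sketch simply raises an error.

```python
import math
from datetime import date


def flow_alignment(renewal: date, adoption: date) -> float:
    """Inverse of the natural log of (days since the latest ecosystem renewal + 1)."""
    days = (adoption - renewal).days
    if days < 1:
        # Assumption: same-day or earlier adoption is out of scope for this sketch,
        # because 1 / ln(0 + 1) would divide by zero.
        raise ValueError("adoption must be at least one day after the renewal")
    return 1.0 / math.log(days + 1)


ios13 = date(2019, 9, 19)
app_a = flow_alignment(ios13, date(2019, 9, 20))   # 1 day later, about 1.443
app_b = flow_alignment(ios13, date(2019, 12, 28))  # 100 days later, about 0.217
```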

Controls

While matching multihoming apps together obviates the need for app- and developer-level controls (Foerderer & Heinzl, 2017; Kovács & Sharkey, 2014), we still need to take into account the idiosyncrasy between the multihoming apps due to ecosystem differences. Since most ranking lists display only the top 30 apps in a category, we measure market position as a binary indicator, which equals 1 if the focal app ranks within the top 30 in the local market on the focal country-platform, and 0 otherwise. We also used the log-transformed number of previous updates for each app-ecosystem observation to measure technological legacy.

Analysis Techniques

We conducted DID regressions to estimate the adoption difference between treatment and control apps, with app-country fixed effects and robust standard errors clustered at the app–country level. Specifically, we estimate the DID effect from the following equation:

where α = constant term; αi = app-country fixed effects; β = treatment group specific effect (treatment/control); γ = time trend common to control and treatment groups (pre/post-focal adoption); δ = true effect of treatment.
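As a sketch, a specification consistent with the symbol definitions above, assuming a standard two-group, two-period DID regression, could be written as follows. The outcome notation, the subscripts (i for the app-country dyad, e for the ecosystem, t for the day relative to adoption), the control vector X with coefficients θ, and the error term ε are assumptions introduced for illustration and are not taken from the source.

```latex
\ln(\mathrm{DAU}_{iet} + 1)
  = \alpha + \alpha_i
  + \beta \,\mathrm{Treatment}_{e}
  + \gamma \,\mathrm{Post}_{t}
  + \delta \,(\mathrm{Treatment}_{e} \times \mathrm{Post}_{t})
  + \theta^{\prime} X_{iet}
  + \varepsilon_{iet}
```

In this form, δ is the coefficient on the interaction of the treatment and post dummies, which matches the description of the difference estimator in the Measures section.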

Results

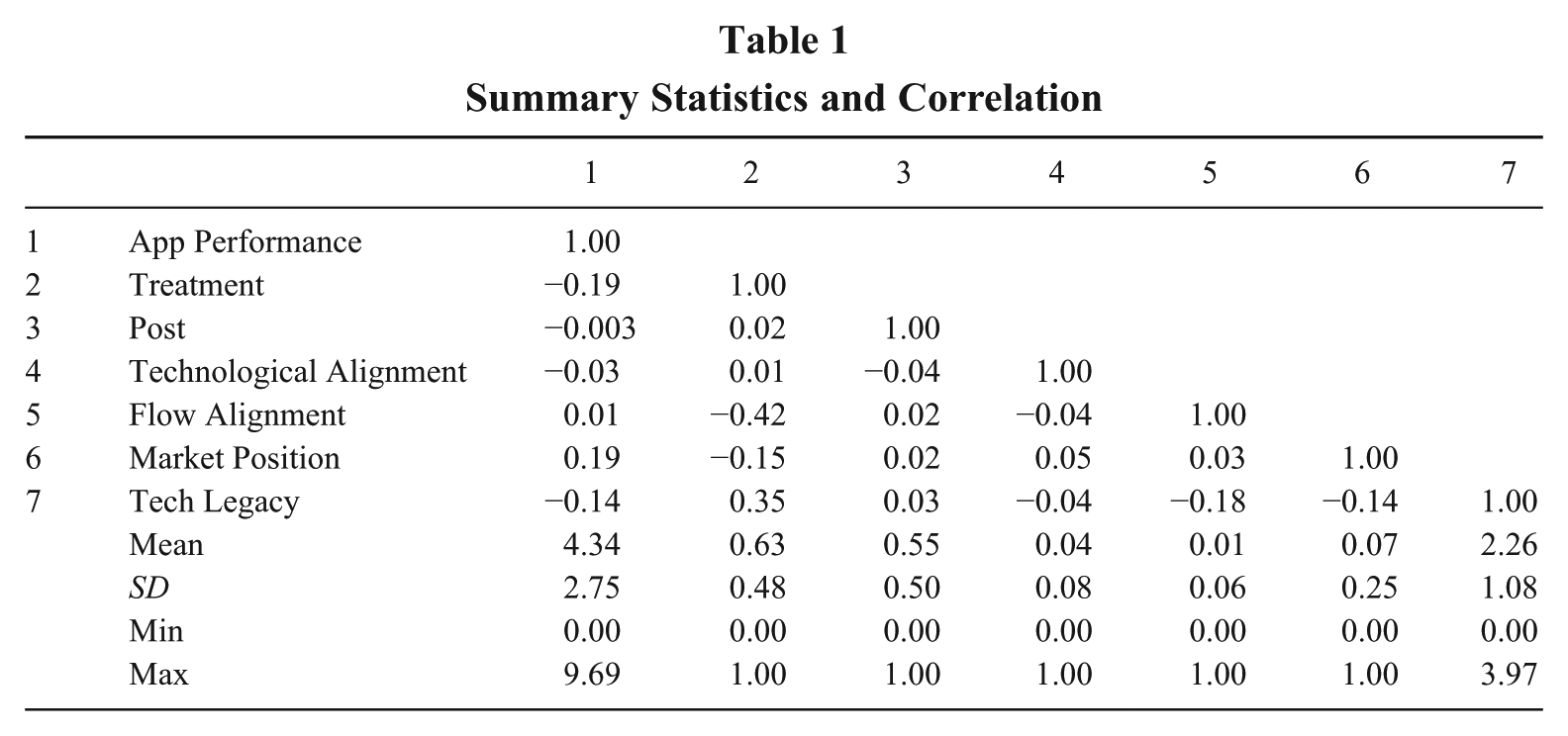

Table 1 presents summary statistics and the correlations between covariates. While raw DAU data for both treatment and control groups is presented in Appendix B (Figure B2 in the online supplemental material) and offers visual support for the common trend assumption, Figure 1 further provides statistical validation for the parallel trends assumption underlying our DID design. It examines the parallel trends assumption by presenting the coefficients of the treatment effect for each day in the observation window. This approach, commonly used in DID analyses, shows a single line reflecting the dynamic effects of treatment. Coefficients prior to Day 0 are not significantly different from zero, indicating no significant differences between the treatment and control groups before the Core ML integration. After Day 0, the coefficients turn negative, confirming the hypothesized treatment effect.

Table 1. Summary Statistics and Correlation

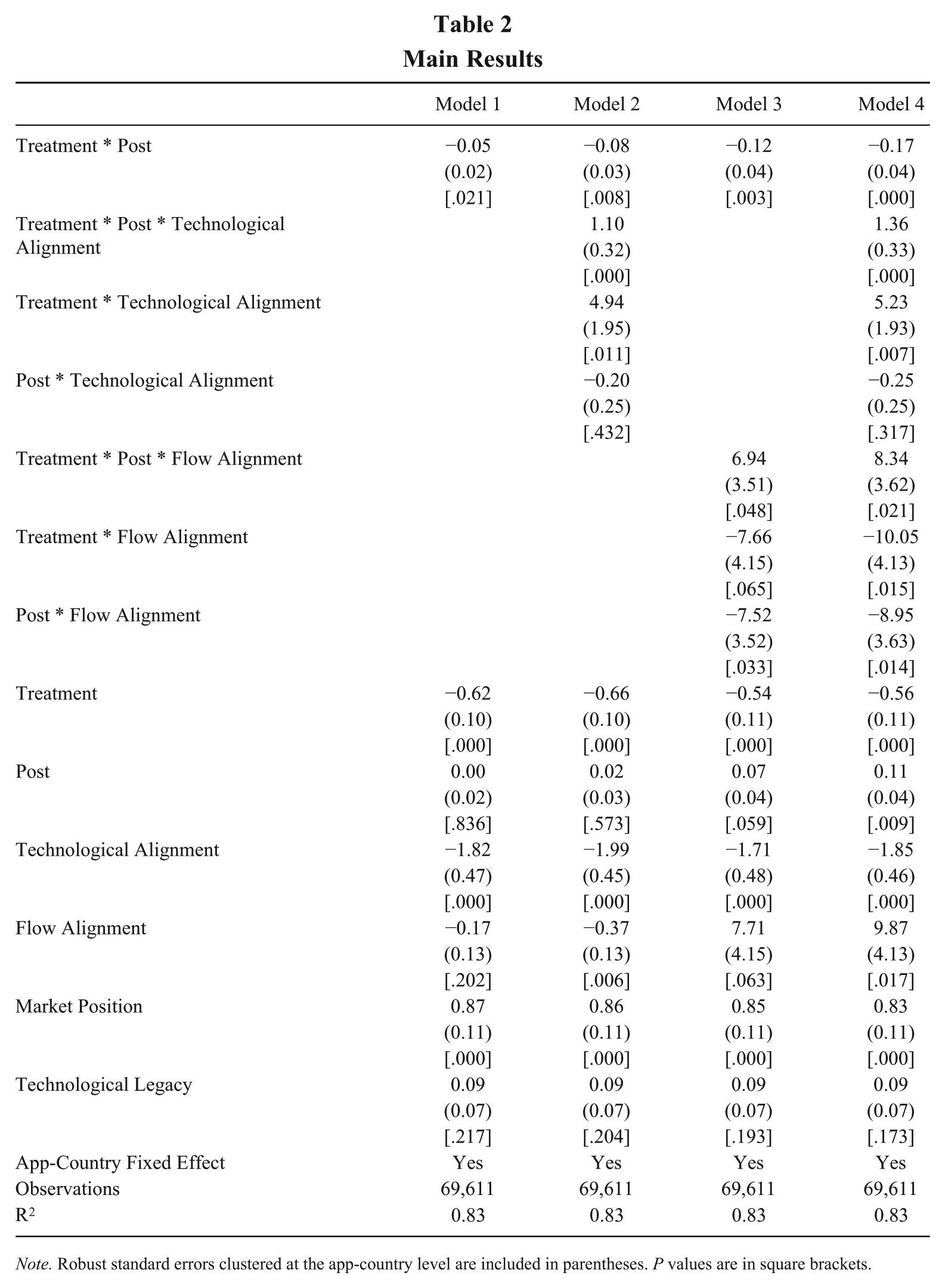

Table 2 presents results in Models 1 to 4 that examine Hypotheses 1 and 2. In Model 1, we first provided the baseline analysis of the effect of Core ML. The classic DID estimator, which is the coefficient of Treatment * Post, is negative and has a p value of .021, indicating that the adoption of Core ML decreases consumers’ usage of the product in the near term, corroborating our baseline expectation. The coefficient suggests that mobile apps that have just integrated Core ML will see a 4.97% drop in app performance (i.e., the number of active users), relative to virtually identical apps that did not integrate AI technology.
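Because the dependent variable is in logs, a coefficient translates into a percent change through the exponential transformation. The sketch below assumes the conventional conversion (exp(coefficient) − 1) × 100; the source does not state which conversion the authors used, and the coefficient value of −0.051 is a hypothetical input chosen because it implies a drop of roughly the reported size. The actual estimate is in Table 2.

```python
import math


def pct_change_from_log_coef(coef: float) -> float:
    """Percent change in the outcome implied by a coefficient on a log outcome."""
    return (math.exp(coef) - 1.0) * 100.0


# Hypothetical coefficient, not taken from Table 2.
implied_drop = pct_change_from_log_coef(-0.051)  # about -4.97
```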

Table 2. Main Results

Note. Robust standard errors clustered at the app-country level are included in parentheses. P values are in square brackets.

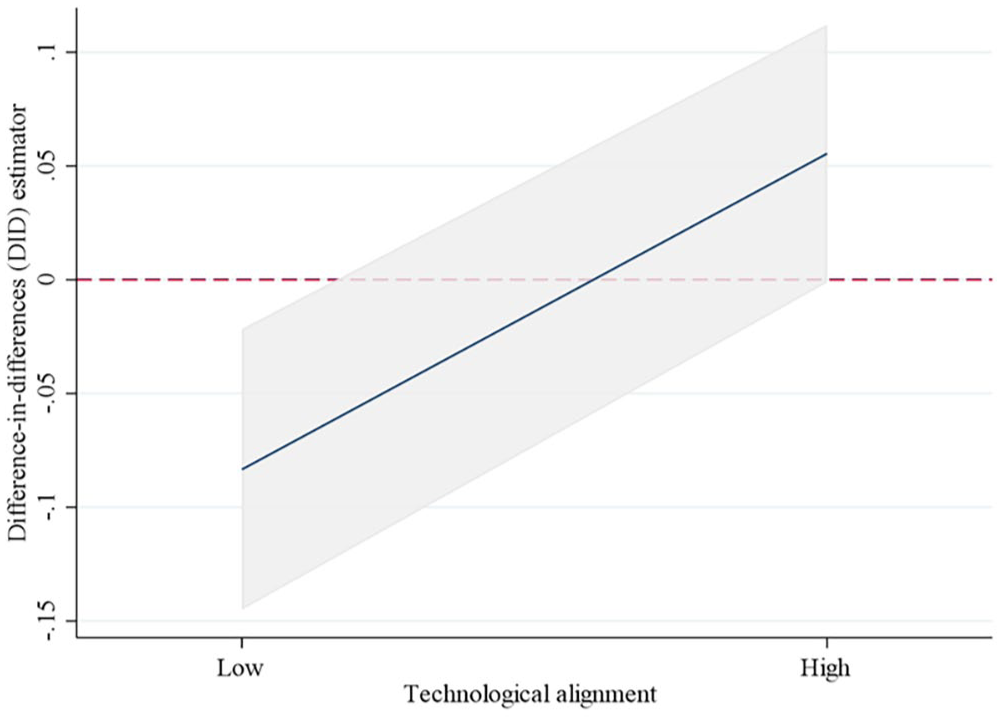

Next, we employed a DDD design to examine the role of technological alignment (H2) and flow alignment (H3) in the implication of deploying Core ML. We predicted that the negative effect of Core ML on product performance would be mitigated by technological and flow alignment. In Model 2, the coefficient of Treatment * Post * Technological alignment is positive and significant. Figure 2 provides graphical illustration, where the solid line represents the coefficient of the DID estimator under varying levels of technological alignment. For apps with low technological alignment (mean – 1 SD, which is 0), app performance decreases by 7.96% after adopting Core ML, as compared to the control group; for apps that strongly favor ecosystem-sponsored technological components (mean + 1 SD, which is 0.13), app performance increases by 5.65% after deploying Core ML, as compared to the control group. Our results suggest that high technological alignment in fact helps apps realize performance gains from Core ML: in the near term, the benefits of Core ML outweigh the ensuing discontinuities for apps using more ecosystem-sponsored technologies. In addition to the three-way interaction terms, our models also include a set of two-way interactions to capture potential baseline differences and temporal patterns, and their coefficients likely reflect context-specific dynamics. The positive and significant coefficient of Treatment * Technological alignment may suggest that before the adoption of Core ML, iOS apps with higher technological alignment generally performed better than their Android counterparts. This indicates platform heterogeneity in the degree to which self-sponsored SDKs empower complementors. In addition, the coefficient of Post * Technological alignment is statistically insignificant in both Models 2 and 4, indicating that the value-creating potential of Android-sponsored SDKs does not systematically change across time.
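The way a triple-difference model produces different effects at different moderator levels can be sketched as follows: the conditional DID effect is the two-way coefficient plus the three-way coefficient times the moderator value, converted to a percent change. The function name and the coefficient values in the usage lines are hypothetical and are not the estimates from Table 2.

```python
import math


def conditional_effect_pct(did_coef: float, interaction_coef: float, moderator: float) -> float:
    """Percent effect of adoption at a given moderator level in a DDD model."""
    # Effect on the log outcome: Treatment*Post plus Treatment*Post*Moderator.
    log_effect = did_coef + interaction_coef * moderator
    return (math.exp(log_effect) - 1.0) * 100.0


# Hypothetical coefficients for illustration only.
low = conditional_effect_pct(-0.1, 1.0, 0.0)   # negative effect at zero alignment
high = conditional_effect_pct(-0.1, 1.0, 0.2)  # positive effect at higher alignment
```

With a negative two-way term and a positive three-way term, the effect crosses zero at the moderator value where the two contributions cancel.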

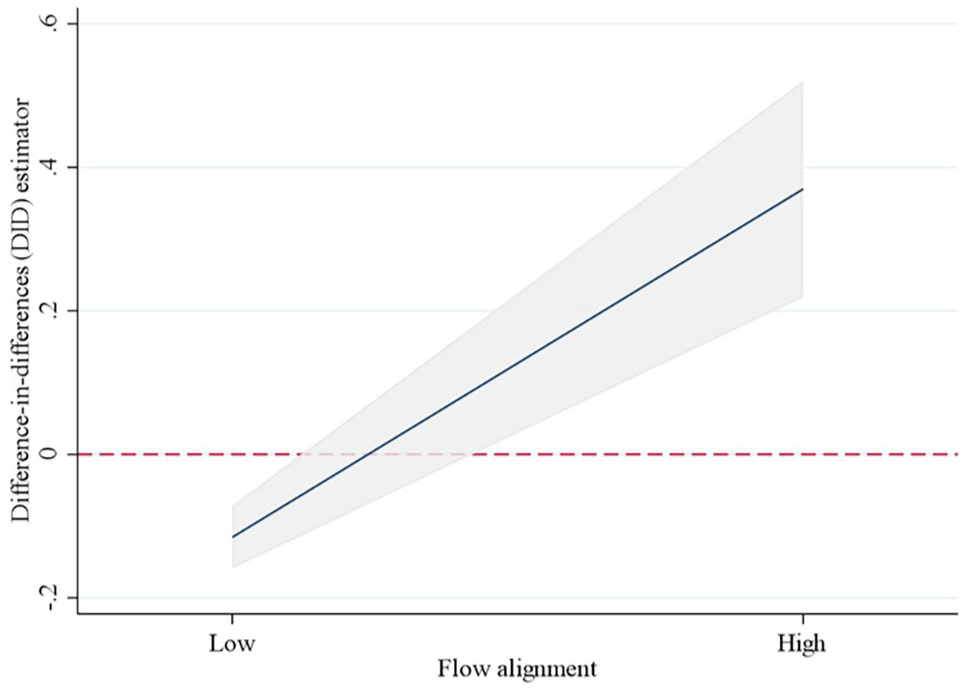

Figure 2. The Moderating Effect of Technological Alignment

Models 3 and 4 report the coefficient of Treatment * Post * Flow alignment, which indicates a positive and significant moderating effect. Figure 3 assists in interpretation. For apps with a low level of flow alignment (mean – 1 SD, which is 0), app performance decreases by 10.86% after adopting Core ML, as compared to the control group; for apps with a high level of flow alignment (mean + 1 SD, which is 0.07), app performance increases by 44.77% after the adoption, as compared to the control group. Therefore, our results show that achieving high synchronization with the ecosystem’s renewal cycle when integrating ecosystem-sponsored AI technology can significantly improve app performance. The negative coefficient of Treatment * Flow alignment suggests that before Core ML adoption, iOS apps with a high level of flow alignment tend to perform worse than Android counterparts with similar alignment levels. This may reflect that new versions of iOS are typically more disruptive to app usage than Android updates. Meanwhile, the negative and significant coefficient of Post * Flow alignment suggests that flow alignment is not associated with better performance following the release of Core ML. Taken together, these results underscore that flow alignment alone may impose short-term constraints, with its benefits only emerging in the context of architectural innovation. This is also consistent with our argument that timing plays a critical role in complementors’ responses to architectural innovations.

Figure 3. The Moderating Effect of Flow Alignment

Post-Hoc Analyses

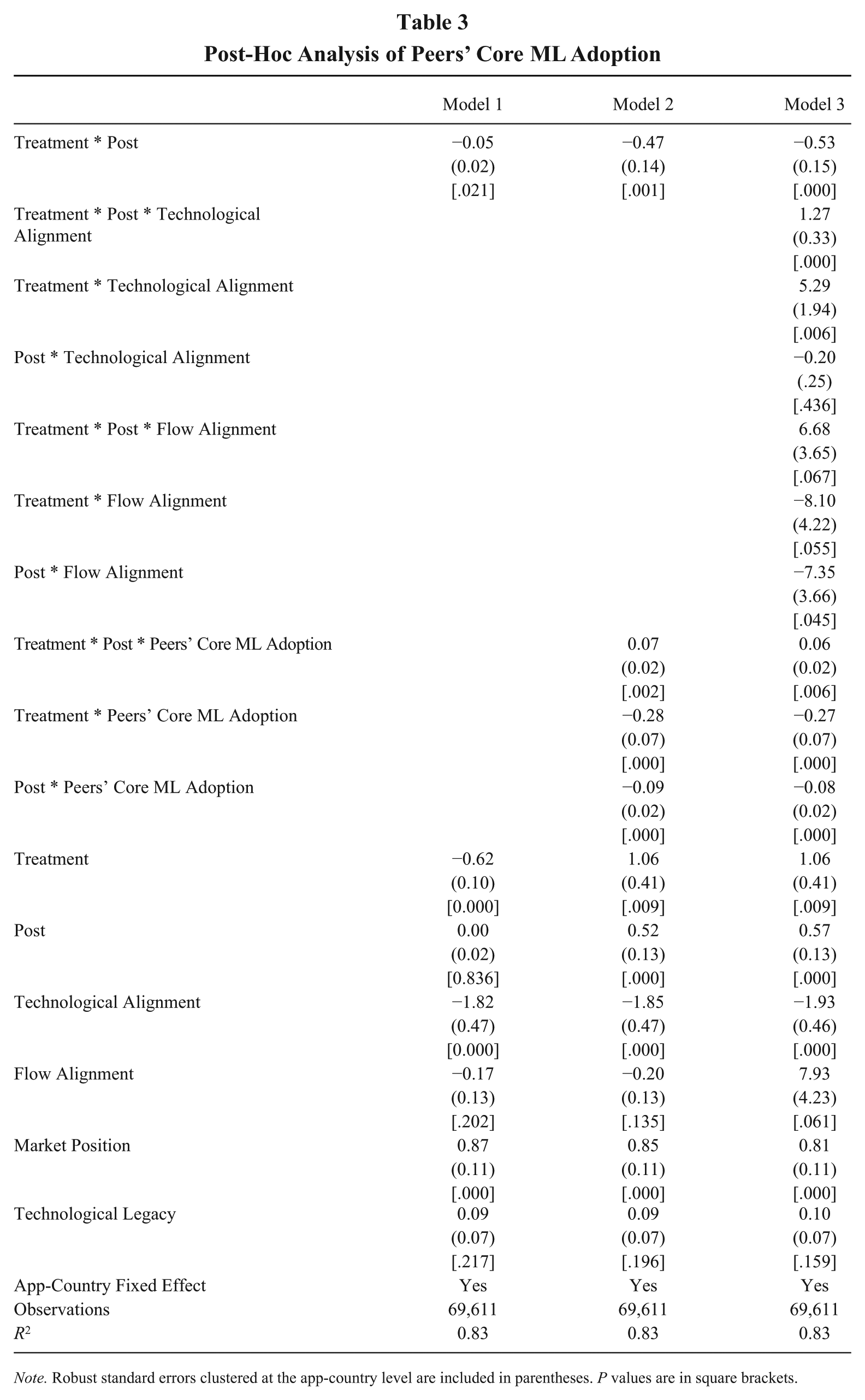

The main findings of this study highlight the critical role of the interplay between the focal complementor and the ecosystem leader in deriving value from architectural innovations like Core ML. Beyond this dyadic interaction, we further asked whether multilateral dynamics involving diverse actors would influence the utilization of Core ML. Our contextual investigation suggests that peer developers play a significant role. For instance, iOS app developers who have adopted Core ML often share experiences and insights online, contributing to a repository of architectural knowledge related to Core ML adoption (Hollemans, 2018). Industry experts further emphasize that this peer community not only facilitates knowledge diffusion among developers but may also influence Apple’s configuration of the development environment as it adapts to the feedback. It is therefore plausible that the interactions between the focal developer and its peers, as well as between peers and Apple, may shape how effectively Core ML is utilized. Thus, we next examined whether the benefits of Core ML adoption increase with the number of peer complementors who have adopted the technology.

Specifically, we introduced a new variable: peers’ Core ML adoption, defined as the number of apps within the same category that adopted the Core ML SDK before the focal app. The direct effect of this variable on app performance was absorbed by fixed effects, as shown in Model 1 of Table 3. In Model 2, a significant positive three-way interaction (Treatment * Post * Peers’ Core ML adoption) indicated that as more peers adopt Core ML, the negative effect of adopting Core ML on focal complementors diminishes. This finding suggests that peers’ accumulated architectural knowledge may benefit late adopters, either by facilitating peer learning or by improving Apple’s system-level configuration through feedback loops. Finally, in Model 3, we confirmed that our original hypotheses remain mostly robust after including these additional terms related to peers’ Core ML adoption.
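The peer variable amounts to a count of earlier adopters in the same category. The sketch below assumes a simple list of adoption records; the function name, the record layout, and the use of a strict date comparison (which also excludes the focal app itself) are illustrative choices rather than details from the source.

```python
from datetime import date
from typing import Iterable, Tuple


def peers_core_ml_adoption(
    focal_category: str,
    focal_adoption: date,
    adoptions: Iterable[Tuple[str, str, date]],
) -> int:
    """Count apps in the same category that adopted Core ML before the focal app.

    adoptions: (app_id, category, adoption_date) records, hypothetical layout.
    """
    return sum(
        1
        for _app_id, category, adopted_on in adoptions
        if category == focal_category and adopted_on < focal_adoption
    )
```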

Table 3. Post-Hoc Analysis of Peers’ Core ML Adoption

Note. Robust standard errors clustered at the app-country level are included in parentheses. P values are in square brackets.

In sum, this post-hoc analysis provides meaningful insights into the multilateral dynamics within ecosystems. It reinforces the notion that ecosystem evolution is not solely driven by the dyadic interplay between any two actors, but also by the coordination and knowledge-sharing among multiple actors. These findings complement our main findings on how to overcome challenges associated with adopting architectural innovations in the ecosystem.

Robustness Tests

We conducted a series of robustness tests using alternative samples, measures, and techniques to verify the main findings. First, we performed several sensitivity checks to evaluate the effect of Core ML over a longer time window. If the performance dip caused by Core ML surfaces for only a few days, it may not pose a major concern for app developers. Conversely, if the negative effect lasts for a longer period, it may take a significant toll on the apps given their short lifecycles and hypercompetitive industry. In our main analyses, a relatively tight time window is helpful for identification, as it limits the sample shrinkage that results from confounding events occurring within the window. In robustness tests, we expanded our post-adoption time window from 7 days to 14 days, 30 days, and even 60 days after the day of integrating Core ML. We reported our results in Appendix B Table B1 (see the online supplemental material), which shows that our findings are robust to a longer time window.

Second, to address potential confounding effects related to the timing of Core ML adoption, we conducted a robustness test by including control variables that account for temporal factors. Specifically, we introduced time lag, measured as the number of months between Apple’s release of Core ML and the focal app’s adoption, to capture variations in developers’ decision-making processes—whether they adopt immediately to reap potential benefits or take a wait-and-see approach. In addition, we included app age, which accounts for the maturity and lifecycle stage of the app, as older apps may have more experience with ecosystem dynamics. As shown in online Appendix B Table B2, our main hypotheses remain well-supported, demonstrating that the observed effects are not driven by those timing-related strategies.

Third, a potential concern in our setting is that developers with stronger capabilities may be more likely to adopt Core ML and also tend to perform better regardless of adoption, raising the risk of self-selection bias. To address this concern, we conducted two sets of analyses. First, we controlled for developers’ capabilities, measured by the number of prior iOS apps the focal developer had released before adopting Core ML, and added a three-way interaction term among developer capability, treatment, and post. This approach allows us to assess whether our results are confounded by the performance advantages of more capable developers. The main effect of Core ML adoption remained negative and significant after including this control (see online Appendix B Table B3), suggesting that the observed patterns are not merely driven by developer capability. Notably, the positive coefficient of Treatment * Post * Developer capabilities suggests that developers with greater capabilities are better equipped to harness Core ML to create value.

In addition, to probe the influence of unobserved selection further, particularly from unobserved time-varying confounders, we conducted an Impact Threshold of a Confounding Variable (ITCV) analysis using the konfound command in Stata (e.g., Busenbark, Yoon, Gamache, & Withers, 2022; Li, Jiang, Shen, Ding, & Yu, 2024). The ITCV quantifies the minimum strength of correlation that an omitted variable would need to have with both the treatment variable and the outcome to nullify the observed effect. Although it does not eliminate endogeneity, the ITCV offers a diagnostic tool that allows us to assess how strong an unobserved confounder would need to be to invalidate our results. The result shows that the ITCV value (0.0030) is higher than the impact of the most influential observed control variable in our model (0.0022), significantly reducing the concern for omitted variable bias (see online Appendix B Table B4 for details). Meanwhile, our empirical setting also helps alleviate the concern of omitted time-varying confounders, where both treatment and control apps operate in parallel ecosystems and face similar temporal shocks (e.g., macro trends, seasonal demand), reducing the likelihood of systematic unobserved heterogeneity. The robustness results offer evidence that our findings are not unduly biased by omitted variable concerns.

Finally, to further assess the robustness of our findings and clarify underlying mechanisms, we conducted a series of additional analyses (see Online Appendix A for details), including validation of our dependent variable across business models, examination of user attribution mechanisms, and exploration of dual alignment synergies.

Discussion

In this study, we investigate the performance implications of adopting ecosystem-sponsored architectural innovations. While existing research emphasizes the opportunities for complementors by integrating such innovations, challenges often arise when the ecosystem leader introduces architectural changes. To explore these dynamics, we examine the adoption of Core ML, an AI-enabled architectural innovation developed by Apple, in iOS apps. Leveraging a DID “twin” design comparing multihoming apps across Apple and Google ecosystems, our findings reveal that complementors’ ability to derive value from ecosystem-sponsored architectural innovations hinges on their alignment with the ecosystem leader. Specifically, technological and flow alignment with the leader’s innovations enable complementors to absorb negative externalities, mitigate integration challenges, and enhance their product performance.

This study extends previous research in several ways. First, we contribute to the architectural innovation literature by advancing its scope to include the interdependencies and challenges faced within ecosystem settings. Architectural innovation involves reconfiguring the interactions among components while leaving the components themselves largely unchanged (Henderson & Clark, 1990). These innovations are difficult to coordinate within firms and can hinder joint value creation between ecosystem partners. Existing research has predominantly focused on firm-level dynamics (e.g., Galunic & Eisenhardt, 2001; Park, Ro, & Kim, 2018), overlooking the growing role of architectural innovations as the backbone of modern ecosystems where diverse actors specialize and co-innovate.

Our work addresses this gap by situating architectural innovation within the context of these dynamic and open ecosystems and examining a specific, highly significant case of platform-level architectural innovation: Apple’s introduction of Core ML. Unlike firm-level architectures, ecosystem architectures evolve continually, requiring ecosystem leaders and complementors to adapt their strategies collaboratively. We show that these innovations impose substantial short-term performance costs on complementors due to complex interdependencies with ecosystem leaders. By examining these effects, we enrich the understanding of how architectural innovations disrupt not only individual firms but also the broader networks of co-innovation and specialization that define modern ecosystems. In answering Henderson’s (2021) call for deeper exploration of systemic innovations, we are among the first to identify the unique effects of architectural innovations in ecosystems and propose actionable strategies for ecosystem partners to mitigate integration challenges proactively. This focus is particularly critical given the growing prevalence of ecosystems as a dominant organizational form for technological and product development. Our findings open new avenues for research on how architectural innovations shape ecosystem dynamics and what strategies ecosystem partners can deploy to adapt effectively. This nuanced perspective advances architectural innovation theory by emphasizing its implications for interdependent and evolving ecosystem settings.

Second, this study highlights complementor alignment as a key mechanism to mitigate the negative externalities of ecosystem-sponsored architectural innovations. While prior research on ecosystems has emphasized the central role of ecosystem leaders in shaping coordination and setting the pace of evolution (Daymond et al., 2022; Kretschmer et al., 2022; Stonig et al., 2022), it is the myriad of autonomous complementors that ultimately determine the ecosystem’s joint value creation. These complementors face significant adaptation challenges as architectural innovations disrupt established workflows, evidently resulting in performance shortfalls. Recent studies have attributed complementor performance heterogeneity during ecosystem evolution to inherent characteristics, such as development experience, entry timing, or geographic location (Koo & Eesley, 2021; Rietveld & Eggers, 2018; Ozalp et al., 2023). However, less attention has been given to the strategic choices complementors can make to manage these disruptions.

We introduce complementor alignment as a proactive strategy that enables complementors to prepare for adopting ecosystem-sponsored architectural innovations. Specifically, we identify two dimensions of alignment: technological integration with the ecosystem leader’s standards and synchronization with the ecosystem’s renewal cycles. Drawing on our quantitative case study of Apple’s Core ML innovation, our findings provide strong, context-specific evidence that complementors that align closely with the ecosystem leader on these dimensions can minimize disruptions, mitigate performance declines, and capitalize on the new opportunities introduced by architectural innovations. Importantly, by highlighting complementors’ ability and readiness to adapt, shaped by prior alignment decisions, our study extends Adner’s (2017) work on ecosystem strategy. While prior research has emphasized alignment at the ecosystem level as a collective property (Altman et al., 2022; Rietveld & Schilling, 2021), we highlight the critical role of individual complementor alignment with the ecosystem leader in ensuring co-evolutionary joint value creation. This complementor perspective underscores the importance of ecosystem strategy and resilience for complementors to grapple with ecosystem evolution. Our study also opens avenues for future research on how complementors navigate trade-offs between aligning closely within one ecosystem and maintaining flexibility to operate across multiple ecosystems.

Third, drawing on our quantitative case study of Apple’s Core ML innovation, we enrich the literature on ecosystem-sponsored innovations by providing a clearer, empirically grounded understanding of how architectural changes affect complementors. Prior research has often equated ecosystem generational renewals with architectural innovations, implicitly assuming that such transitions inherently involve reconfigurations in component interactions without explicitly specifying these underlying linkages (Agarwal & Kapoor, 2023; Kapoor & Agarwal, 2017). We address this gap by examining precisely how ecosystem-sponsored architectural innovations alter specific component interactions to enable new technological capabilities, like on-device AI processing. Using a DID design, we identify which complementors experience performance disruptions due to these changes, offering nuanced evidence of architectural innovation’s implications. Moreover, our study highlights architectural change’s dual role: on the one hand, it serves as a strategic imperative, enabling ecosystems to remain competitive by adapting swiftly to technological advancements and shifting market demands (Baldwin & Woodard, 2009; Van Der Geest & Van Angeren, 2023). Without such flexibility, ecosystems risk stagnation and vulnerability to more innovative rivals. On the other hand, architectural innovation functions as a critical orchestration mechanism, empowering ecosystem leaders to strategically manage complementor activities by redefining component interactions and design rules. This orchestration guides complementors toward innovations that align closely with the ecosystem’s strategic objectives, coherence, and long-term value proposition (Chen, Tong, Tang, & Han, 2022; Constantinides, Henfridsson, & Parker, 2018; Zhang, Priem, Wang, & Li, 2023). By consolidating the conceptual foundation of architectural evolution and illustrating its nuanced effects on complementors, our findings encourage future research to explore further how ecosystem leaders balance innovation with architectural control across evolving technological ecosystems.

The findings and inferences from this study are subject to several caveats that nonetheless provide valuable opportunities for future research. First, our analysis focuses on Core ML, an architectural innovation introduced in a fast-paced digital ecosystem, providing a detailed, real-world examination of the performance consequences of complementor alignment but limiting generalizability. In line with recent guidance on reporting quantitative case studies (King, 2023), we treat this as a single-case, embedded-unit design, and caution that architectural changes in slower-cycling industries (e.g., automotive or heavy manufacturing) may face different challenges and adaptation costs. We encourage future research to test our model across diverse industries and innovation types. Second, while Core ML positions Apple as a leader in AI-enabled mobile applications, it also raises governance challenges that we do not fully explore. Our focus is on complementors, and the lead firm’s role in facilitating architectural transitions is only briefly discussed, representing a limitation and opportunity for future research. Third, although we emphasize the benefits of complementor alignment, it may also entail significant costs, such as reduced flexibility and increased dependence. Future research could examine when complementors prioritize autonomy over alignment, and how they navigate the trade-offs. Fourth, while we focus on alignment as the primary adaptation mechanism, complementors may also rely on alternatives such as vicarious learning or experimentation. We control for some of these effects (e.g., learning from Core ML adopters), but not exhaustively, and future research could further explore alternative strategies. Fifth, our use of DAU offers key insights but may be less suitable for apps with upfront paywalls or one-time purchases, where other performance metrics may be more appropriate. Sixth, our post hoc analysis hints at peer-driven adoption dynamics, but the underlying mechanisms remain unclear. Future studies could examine how interactions among complementors shape ecosystem evolution beyond the leader-complementor dyad. Seventh, while our design mitigates observable selection, we acknowledge that adoption of Core ML may still be subject to non-random self-selection, particularly from unobserved, time-varying confounders. More rigorous identification strategies such as instrumental variables or Heckman correction are infeasible in our context due to data and assumption constraints. This limitation invites future studies leveraging quasi-experimental settings for stronger causal identification. Lastly, we acknowledge the possibility of iOS-Android spillover effects, particularly as AI technologies continue to advance.

In conclusion, this study advances our understanding of architectural innovation in ecosystems by revealing its disruptive effects on complementors and emphasizing alignment as a critical mechanism for mitigating these challenges. Using the context of Apple’s Core ML adoption, we demonstrate how ecosystem-sponsored architectural innovations can impose performance disruptions, delaying joint value creation. Our findings shed light on the trade-offs inherent in technology-driven ecosystem governance and provide a foundation for future research on how complementors manage the architectural evolution of ecosystems.

Supplemental Material

Supplemental material, sj-docx-1-jom-10.1177_01492063251368267 for Marching to the Beat: The Role of Complementor Alignment in the Architectural Evolution of Ecosystems by Pengxiang Zhang, Liang Chen, Yang Yang and Sali Li in Journal of Management

Footnotes

Acknowledgements

We are deeply grateful to Professor Elisa Operti, along with two anonymous reviewers, for their invaluable guidance and constructive comments throughout the review process. This research was supported by the National Natural Science Foundation of China (Grant Nos. 72202004, 72091314, 72192844, 72442018, 72442026, and 72372146) and by grants from the University of South Carolina’s Center for International Business Education and Research (CIBER).

Supplemental material for this article is available with the manuscript on the JOM website.
