Abstract

Use of medical imaging continues to increase, making the largest contribution to the exposure of populations from artificial sources of radiation worldwide. The principle of optimisation of protection is that ‘the likelihood of incurring exposures, the number of people exposed, and the magnitude of their individual doses should all be kept as low as reasonably achievable (ALARA), taking into account economic and societal factors’. Optimisation for medical imaging involves more than ALARA – it requires keeping individual patient exposures to the minimum necessary to achieve the required medical objectives. In other words, the type, number, and quality of images must be adequate to obtain the information needed for diagnosis or intervention. Dose reductions for imaging or x-ray-image-guided procedures should not be used if they degrade image quality to the point where the images are inadequate for the clinical purpose. The move to digital imaging has provided versatile acquisition, post-processing, and presentation options, and enabled wide and often immediate availability of image information. However, because images are adjusted for optimal viewing, the appearance may not give any indication if the dose is higher than necessary. Nevertheless, digital images provide opportunities for further optimisation, and allow the application of artificial intelligence methods.

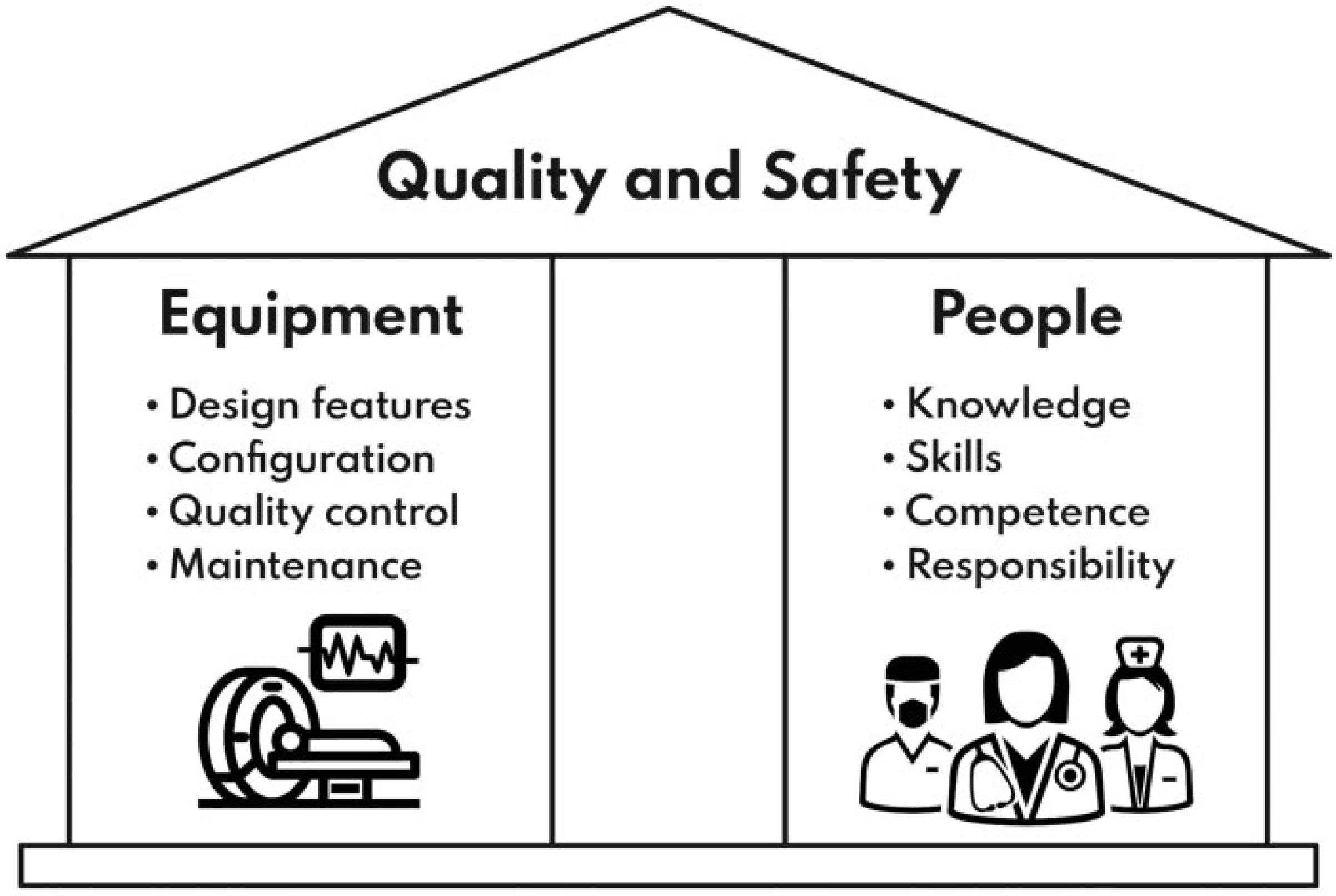

Optimisation of radiological protection for digital radiology (radiography, fluoroscopy, and computed tomography) involves selection and installation of equipment, design and construction of facilities, choice of optimal equipment settings, day-to-day methods of operation, quality control programmes, and ensuring that all personnel receive proper initial and career-long training. The radiation dose levels that patients receive also have implications for doses to staff. As new imaging equipment incorporates more options to improve performance, it becomes more complex and less easily understood, so operators have to be given more extensive training. Ongoing monitoring, review, and analysis of performance is required that feeds back into the improvement and development of imaging protocols. Several different aspects relating to optimisation of protection that need to be developed are set out in this publication. The first is collaboration between radiologists/other radiological medical practitioners, radiographers/medical radiation technologists, and medical physicists, each of whom have key skills that can only contribute to the process effectively when individuals work together as a core team. The second is appropriate methodology and technology, with the knowledge and expertise required to use each effectively. The third relates to organisational processes which ensure that required tasks, such as equipment performance tests, patient dose surveys, and review of protocols, are carried out.

There is wide variation in equipment, funding, and expertise around the world, and the majority of facilities do not have all the tools, professional teams, and expertise to fully embrace all the possibilities for optimisation. Therefore, this publication sets out broad levels for aspects of optimisation that different facilities might achieve, and through which they can progress incrementally: Level D – preliminary; Level C – basic; Level B – intermediate; and Level A – advanced. Guidance from professional societies can be invaluable in helping users to evaluate systems and aid in adoption of best practice. Examples of systems and activities that should be in place to achieve the different levels are set out. Imaging facilities can then evaluate the arrangements they already have, and use this publication to guide decisions about the next actions to be taken in optimising their imaging services.

MAIN POINTS

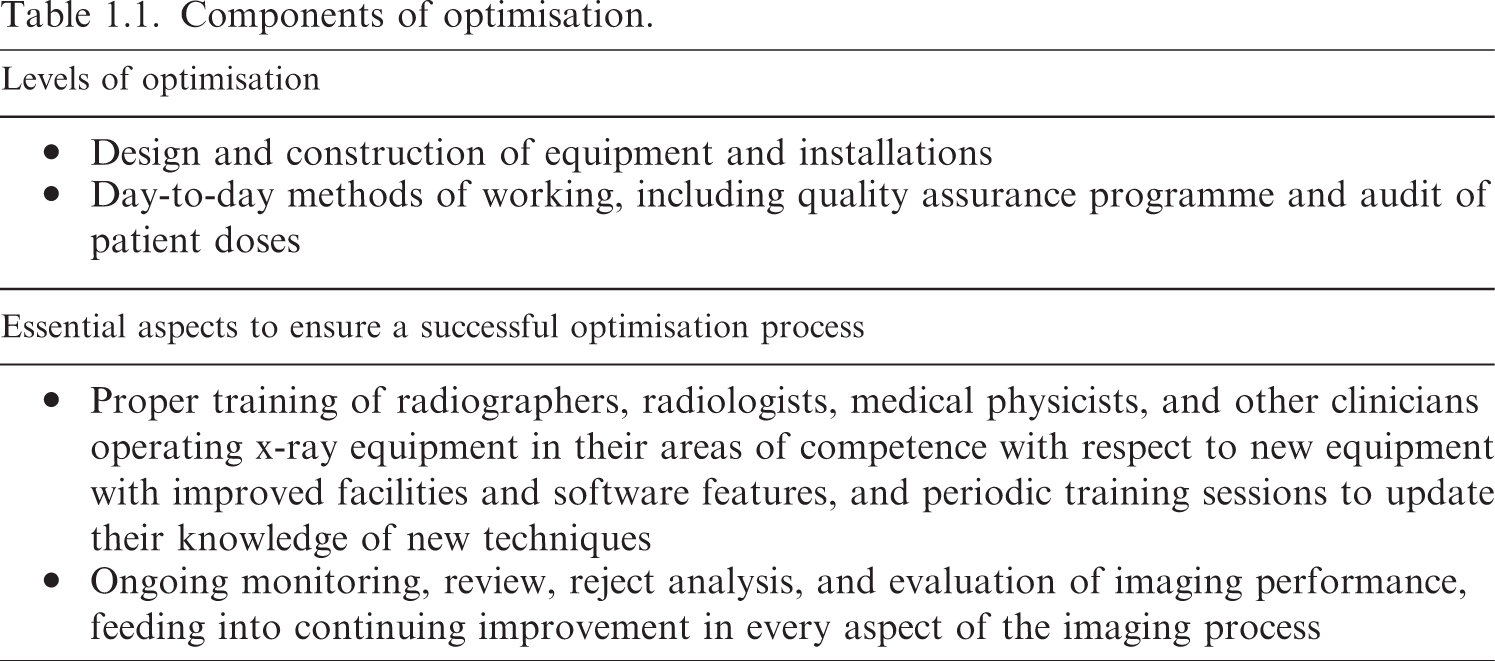

Optimisation of radiological protection in diagnostic imaging and image-guided procedures requires provision of clinical images for individual patients that are of sufficient quality to ensure an accurate and reliable diagnosis, with radiation exposure minimised according to the applied imaging technology. In medical imaging, optimisation of protection is at two levels: (i) the design and construction of the equipment and the installation where it is used; and (ii) the day-to-day working procedures performed by the staff involved. Optimisation will only occur if all staff are properly trained in their roles, and equipment operation is assured through a comprehensive quality assurance programme, with ongoing review of performance that feeds into affirmation and development of protocols. Different aspects contribute to optimisation. These are: professionalism within optimisation teams comprising radiologists, radiographers, and medical physicists, each using their unique sets of skills to improve imaging performance; methodology and technology coupled with the necessary expertise to evaluate performance; and organisational processes to manage quality improvement within a structured framework. Complex digital x-ray equipment allows dose levels to be reduced without compromising image quality. This requires high levels of knowledge and skill from imaging professionals, as if features are used incorrectly, patient doses can be unnecessarily high without this being apparent. All members of the imaging team must be given the necessary expertise through training, updated regularly, so they fully understand equipment operation. The degree to which an organisation has implemented optimisation will depend on the personnel, facilities, level of knowledge and experience available, and regulatory oversight. 
This publication sets out a layered approach to the development of optimisation with broad categories for systems that might be expected to be in place to achieve different levels: Level D – preliminary; Level C – basic; Level B – intermediate; and Level A – advanced. The aim is to guide managers and staff in decisions about the next step to take in their programme of optimisation.

EXECUTIVE SUMMARY

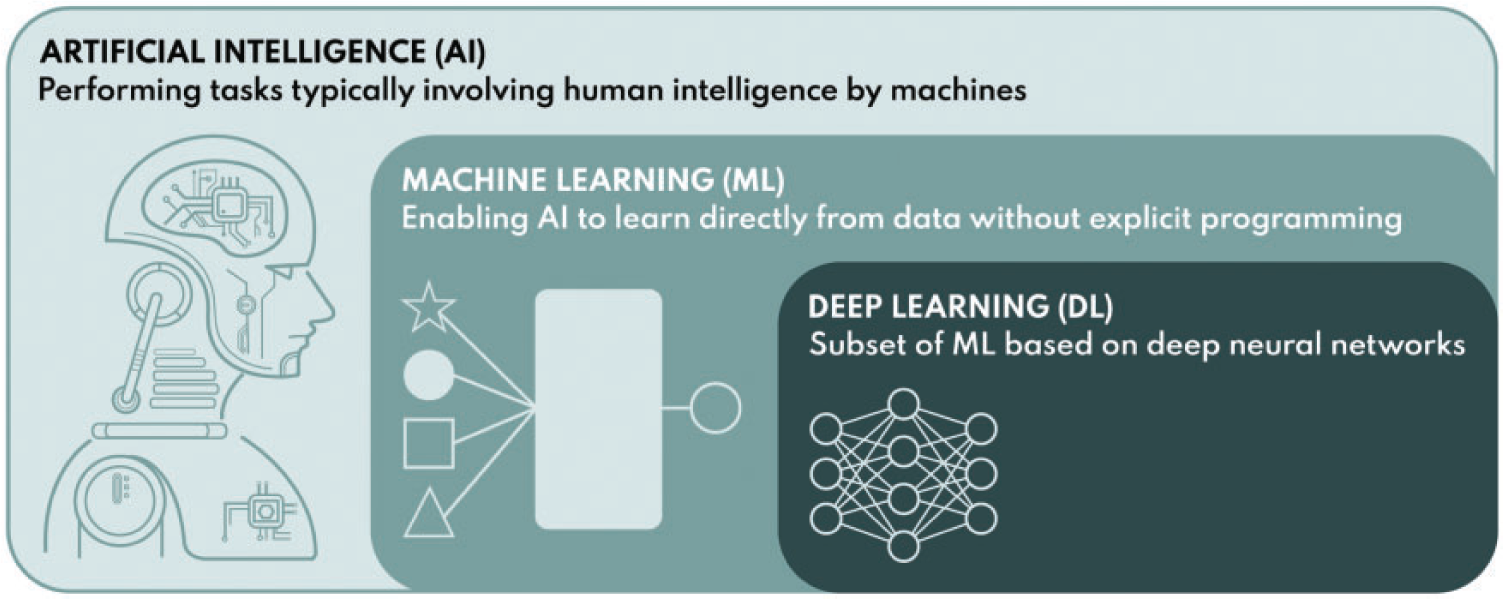

(a) Optimisation is a key principle of radiological protection. Medical exposures make the largest contribution to the exposure of populations from artificial sources of ionising radiation worldwide, so optimisation of such exposures is particularly important. Optimisation of radiological protection for imaging requires radiation dose to be minimised in a manner that is consistent with providing the images/information required for the intended purpose. Digital radiology encompasses all radiological techniques that present images in digital form, for which the appearance can be manipulated to display the image in a form that best suits the purpose, and includes digital radiography, fluoroscopic techniques, and computed tomography. The emphasis on image quality has become crucial in digital radiology with more versatile image acquisition, post-processing, and presentation options. These techniques require a more rigorously defined optimisation process, awareness of underlying technical factors that are not always obvious, and comprehension of the impact of information technology on the displayed image. The clinical risk of patient mismanagement resulting from an examination for which the dose has been reduced to the point at which the image quality is insufficient to allow changes in diseased or damaged tissue to be characterised is likely to be high compared with any additional risk from a higher radiation exposure that gives sufficient image quality. However, cumulative radiation doses from the ever-increasing use of radiology may result in health consequences that, although not immediately apparent, could manifest at a later point in time. Thus, it is a question of balance between different types of risk (potential long-term effects from dose and more immediate clinical consequences), and achieving the correct balance is a challenging task from both technical and professional perspectives.

(b) To achieve successful optimisation, a facility must have sufficient imaging equipment, and enough staff who have been adequately trained in use of the equipment and the information technology features that are available. The optimisation process starts with specification of the equipment required to fulfil the clinical need, and continues through its purchase, installation, acceptance, and commissioning. It includes maintenance and the quality assurance programme which continue throughout the life cycle of the equipment. Optimisation then continues during clinical use of the equipment, with requirements for provision of necessary clinical information by those referring patients for examinations based on accepted guidelines, and appropriate processes for reporting and acting on results of imaging procedures.

(c) Optimisation requires the input of knowledge and skills on many different aspects of how radiological images are formed, and so requires contributions from different healthcare professionals working together as a team. A radiologist, other appropriately trained radiological medical practitioner, or radiographer can judge whether the image quality is sufficient for the diagnostic purpose. A radiographer should know the practical operation and limitations of the equipment and associated information technology, and have a basic knowledge of the physical principles of image formation and interpretation of measurements on images. A medical physicist should have a deeper understanding of the physical principles behind image formation, and be able to perform and interpret measurements of dose and image quality. In order to achieve optimisation, the three specialities, together with other healthcare professionals who will sometimes be involved, must have mutual respect for their individual skills and work together as a cohesive group (i.e. professionalism). Unfortunately, at the time of preparation of this publication, the levels of knowledge and skills in many countries are often inadequate to achieve good optimisation on more complex digital radiology systems due to lack of resources. Increasing technical and computational complexity in radiology equipment and applications underlines the importance of multi-professional collaboration and dependency on the combined knowledge of different professionals. Dedicated time must be made available for professionals to work together to meet emerging challenges in optimisation as applications of new equipment are developed.

(d) Digital imaging provides the potential for images to be obtained with lower exposures than previously possible using film screen combinations, enabling levels to be adapted to the diagnostic requirements of specific examinations. New techniques are continuously becoming available that can improve image quality and potentially enable diagnostic images to be obtained with lower patient doses. As an example, automated exposure control systems are continuously developed to be more effective in ensuring consistent image quality while reducing patient dose by adapting the radiation level to each procedure and the patient. However, all of these features introduce additional complexities and require settings to be chosen correctly for proper operation of software controls. If users do not deploy them effectively due to limited awareness of their mode of operation, the doses received by patients may not be optimal, but this will not be apparent to the user. Therefore, more complex equipment requires knowledgeable staff with more extensive training for its operation. Knowledge and skills, in combination with the instruments and test objects to evaluate the performance of the equipment, form the basis of optimisation (i.e. methodology).

(e) A key component of optimisation is keeping the radiation dose to the patient as low as reasonably practicable, while maintaining an adequate level of image quality and diagnostic information. At the basic level, this requires regular assessments of doses from groups of patients to determine the dose levels, and comparisons with diagnostic reference levels to confirm acceptability. Evolving technical optimisation features and quality management systems will enable extension of the optimisation process to individual patients and procedures based on clinical indication. Operators must have the knowledge and skills to use such features appropriately, and if they do not, important opportunities will be lost. Such an indication and patient-specific level of optimisation is applied routinely every day in radiology departments, and is a fundamental extension of the conventional optimisation principle (known as ‘as low as reasonably achievable’) as applied to patients. Indication orientation and patient specificity connect the optimisation process directly to the justification process, and enable them to be mutually supportive and comprehensive, forming a unitary process for radiological protection.
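The basic-level check described in paragraph (e), a dose survey for a group of patients compared with a diagnostic reference level (DRL), can be sketched as follows. This is a minimal illustration, not a prescribed method: the use of the median as the summary statistic, the function name `compare_with_drl`, the survey values, and the DRL value are all assumptions made for the example and are not taken from this publication.

```python
from statistics import median


def compare_with_drl(doses, drl):
    """Return (median dose, True if the median exceeds the DRL).

    Assumption: the median of the sample is the quantity compared with the DRL.
    """
    if not doses:
        raise ValueError("dose sample is empty")
    med = median(doses)
    return med, med > drl


# Hypothetical survey: dose-length product (mGy cm) for nine patients
# who underwent the same type of CT examination.
survey_dlp = [410, 455, 390, 520, 480, 430, 610, 445, 470]
hypothetical_drl = 500  # illustrative value only, not a published DRL

median_dlp, exceeds = compare_with_drl(survey_dlp, hypothetical_drl)
print(f"median DLP = {median_dlp} mGy cm, exceeds DRL: {exceeds}")
```

A median below the DRL indicates only that the dose level is not unusually high. It does not show that image quality is adequate, which is why the text pairs dose surveys with image quality assessment.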

(f) Evaluation of image quality as part of quality assurance/quality control programmes typically involves evaluation of clinical images by an experienced radiologist, other appropriate radiological medical practitioner, or radiographer against established good image quality criteria, and objective analysis of phantom images by a medical physicist. Further net improvements could be gained in the future through automated image quality evaluation based directly on clinical patient images, and may involve artificial intelligence algorithms implemented directly into image archives or imaging modalities. Regardless of the present or future methodology, the process of measuring image quality involves many interdependent parameters and, due to this comprehensive nature, is a pivotal part of the overall assessment of performance. Results from evaluations of clinical image quality, coupled with results from patient dose and image quality measurements, feed into the development of examination protocols optimised for the clinical purpose. To ensure that optimisation processes are carried out consistently, management systems need to be in place to confirm that measurements and assessments are made, to ensure that available data from clinical use and performance measurements are used in making adjustments to protocols to address any deficiencies, and to monitor the progress that is made (i.e. process management).

(g) The degree to which any organisation has implemented optimisation in digital radiology will depend on the personnel, facilities, and level of knowledge and experience available. Within the aspects of professionalism, methodology, and process, there will be different levels of performance that radiology facilities will have achieved. This publication sets out broad categories for the systems that would be in place to achieve different levels of optimisation: Level D – preliminary; Level C – basic; Level B – intermediate; and Level A – advanced. It is hoped that evaluation of the arrangements that radiology facilities already have in place will provide a guide to decisions about what actions should be taken next to improve optimisation of their imaging service. It is also noted that these categories (Levels D, C, B, and A) with increasingly advanced optimisation methods also reflect the increasing capability to reach indication-oriented and patient-specific optimisation processes.

(h) There is a need for a cultural change in order to enable improvements and developments in optimisation methods, and to avoid key processes being overlooked. Optimisation will only be achieved through facilities investing in adequate staffing levels to operate their imaging equipment, and providing the appropriate training, together with continuing professional development opportunities for their staff. This begins at the stage of entry into medical imaging professions with sufficient courses for the education of trainees with opportunities to learn under the guidance of experienced practitioners. Knowledge and understanding are key to successful optimisation of radiological imaging. The cultural shift towards multi-professionalism required can only occur if the professional roles and competences are built to support this fundamental shift.

(i) This publication provides guidance on the adaptation of levels of dose and image quality to clinical tasks, taking advantage of the wide dynamic range offered by digital imaging equipment. Practical aspects that depend on specific x-ray image acquisition techniques are covered in a separate companion publication.

1. INTRODUCTION

1.1. Background

(2) X rays have been used to obtain images of the body to aid in diagnosis of disease since their discovery by Roentgen in 1895. X-ray imaging has provided an invaluable aid in diagnosis, follow-up, and management of patient treatments, and over the last few decades, with the rapid development of interventional techniques, it has allowed many complex procedures in cardiology and in specialities dealing with other parts of the body to be performed with reduced surgical intervention, improving patient comfort and survival. X-ray imaging procedures are the most widely used form of medical imaging, and make the largest contribution to human exposure to ionising radiation from artificial sources. As such, an x-ray examination carries an associated risk that, although not large, must be taken into account when patients are imaged.

(3) The benefits to the patient undergoing a radiological procedure that is going to influence management of their treatment or aid in diagnosis will almost always outweigh the risk resulting from the radiation exposure. The term ‘patient’ includes not only persons undergoing medical treatment, but also volunteers subject to exposure as part of a programme of biomedical research. Similar principles with regard to optimisation will also apply to planned non-medical imaging exposures carried out for legal purposes of any kind.

(4) If there is no benefit from performing an exposure, it is not justified. Awareness of associated risks has encouraged the development of facilities and tools on equipment to allow radiation doses to be kept as low as reasonably achievable (ALARA), consistent with the intended clinical purpose (i.e. according to the traditional ALARA principle).
Those using x rays need to understand the imaging process and the interplay between equipment factors and settings, as well as being trained in the practical techniques for use of information technology, in order to ensure that patient radiation doses are kept to the minimum for obtaining the image quality required for the specific imaging task. The need for this understanding has become more crucial with the increased complexity of digital radiology techniques, which include digital radiography, computed tomography (CT), and interventional x-ray equipment, which can deliver significant radiation doses if used incorrectly.

1.2. Image quality levels and clinical diagnostic requirements

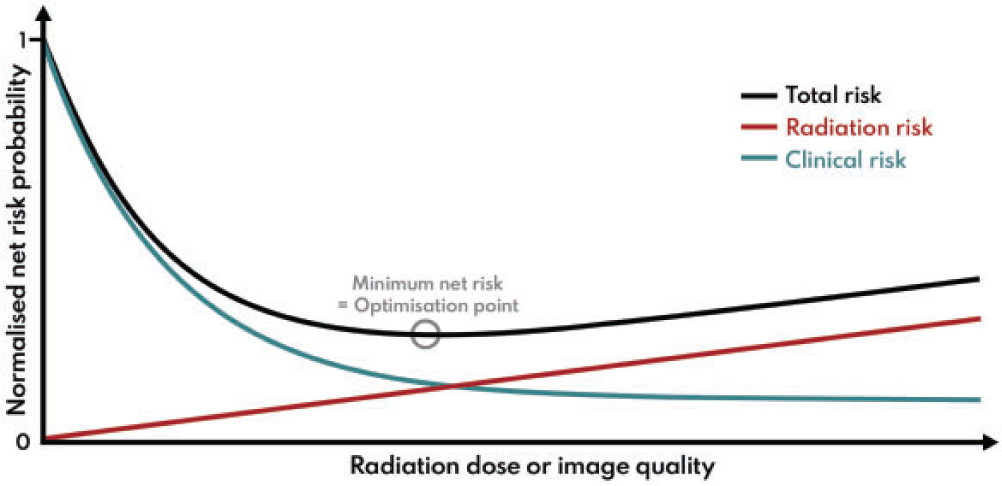

(5) Optimisation in simple terms involves achieving a balance between clinical benefit and risk from radiation exposure. First and foremost, it requires provision of clinical images for individual patients that are of sufficient quality to ensure accurate and reliable diagnoses, so that correct care decisions can be made. In addition, the radiation doses used in acquiring such clinical images should be adjusted so that, while being adequate to produce the images, they are minimised to the level appropriate for the applied imaging technology.

(6) The level of image quality can affect the diagnosis, and the aim is to achieve a balance between the clinical and radiation risks in order to minimise the overall risk. In many imaging indications, the clinical risk related to possible sub-optimal image quality from an examination, for which the exposure has been reduced more than it should have been, is likely to outweigh the small additional risk from using a higher radiation exposure. A patient will not benefit from an examination that is incapable of visualising the appropriate pathology, and the dose will be wasted no matter how low it might be. Thus, there could be a consequent clinical risk of misdiagnosis, which may increase as image quality declines (Fig. 1.1); in such situations, there may be a need to increase the dose (Samei et al., 2018). Therefore, while in the general context of the System of Radiological Protection, optimisation is understood as keeping doses ALARA, in the case of medical imaging, this means delivering the lowest possible dose necessary to acquire adequate diagnostic images. This is best described as ‘managing the radiation dose to be commensurate with the medical purpose’ (ICRP, 2007a,c). Managing the radiation dose for any application requires an understanding of the way in which an image is formed, and how different factors influence both the image quality and the radiation dose received by the patient (Martin et al., 1999).

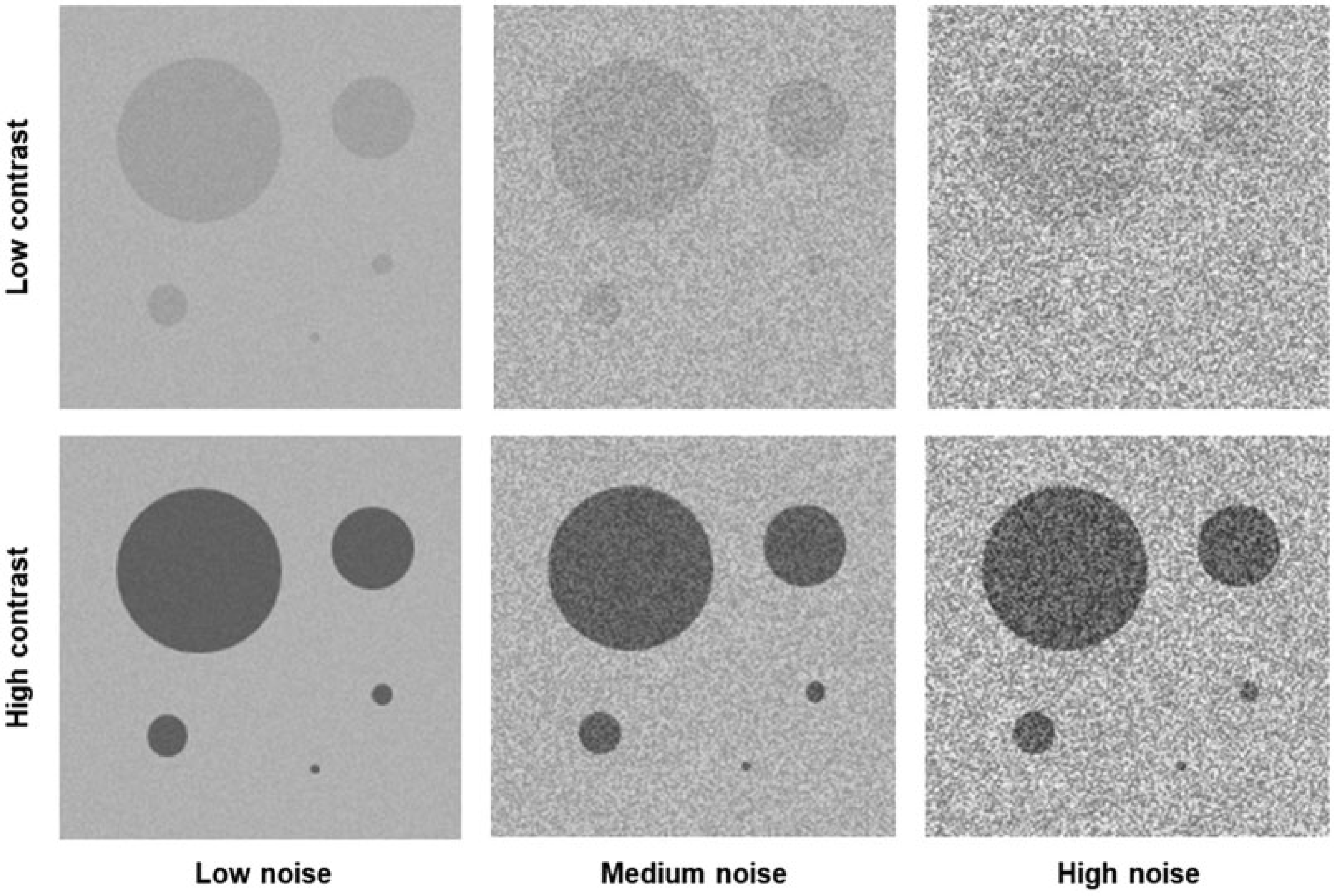

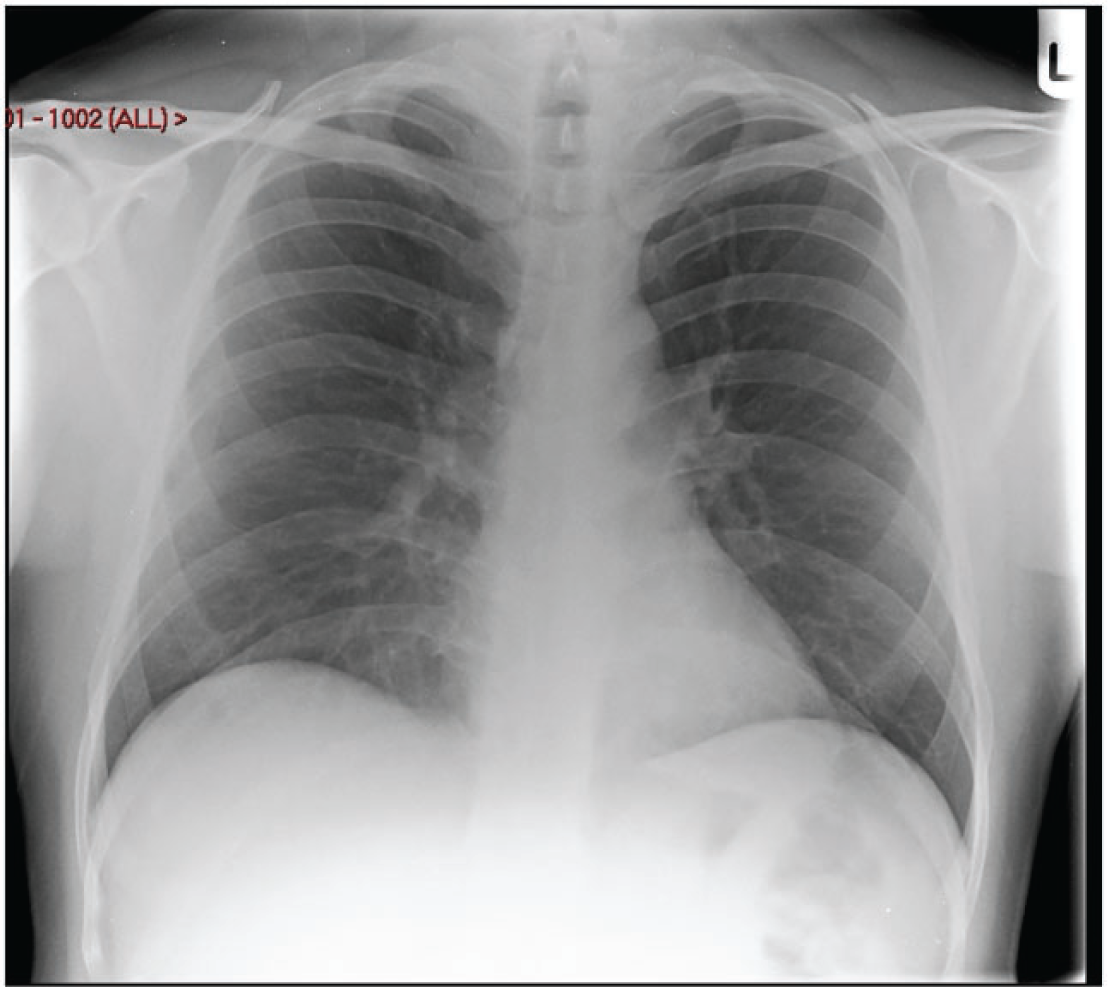

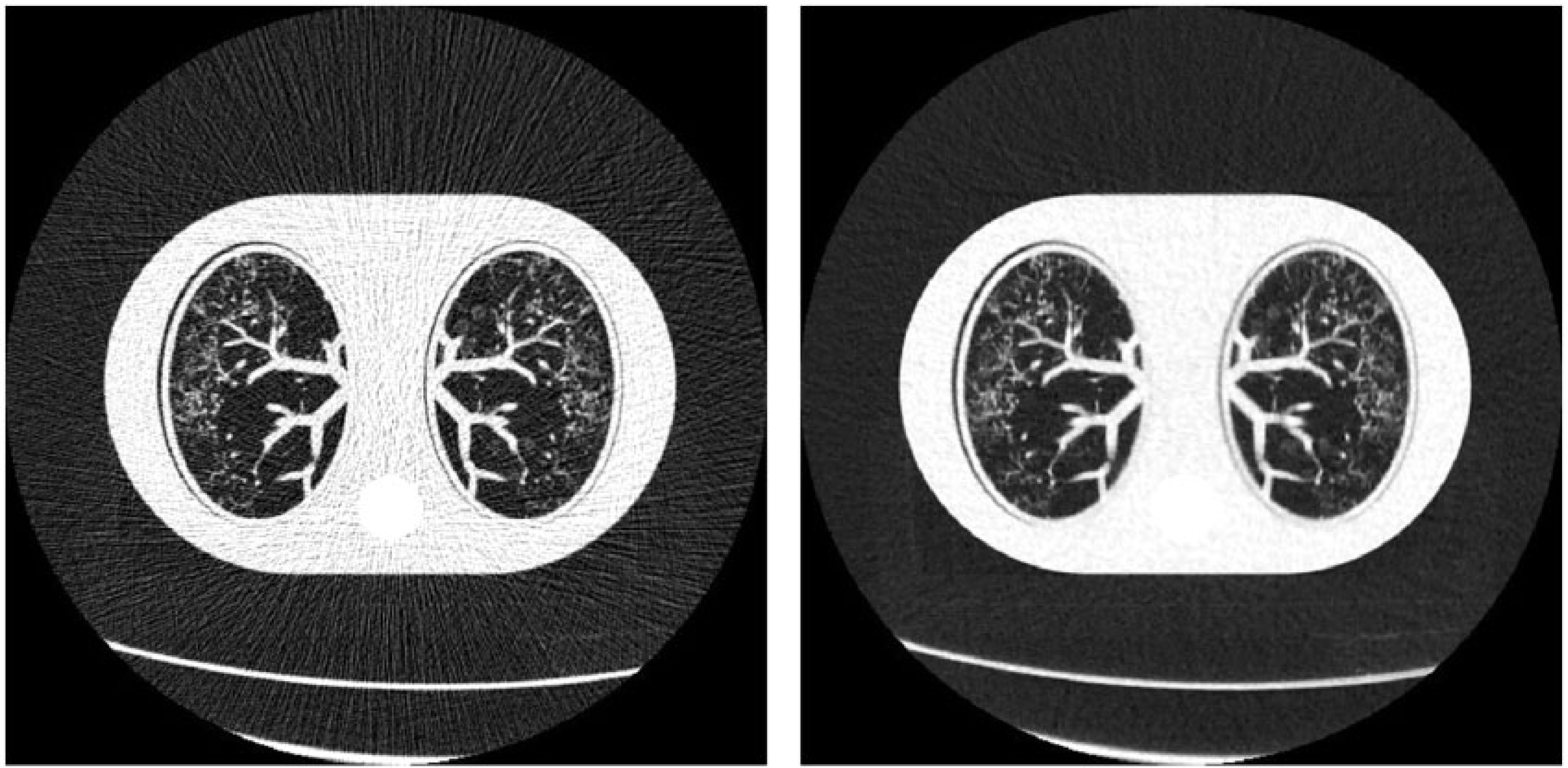

Fig. 1.1. The total net risk from a radiological examination is a sum of radiation risk and clinical risk. The radiation risk is assumed to increase linearly with dose according to the linear non-threshold model. Clinical risk is assumed to decrease with dose as the image quality is improved to provide adequate clinical information. In this example, the clinical risk decreases according to an exponential model, but there will be a lower limit where residual clinical risk is maintained regardless of the imaging method. The minimum net risk for the summed components will be the optimisation target point. Adapted from: Samei et al. (2018).

(7) This publication addresses both radiation dose and image quality. Since assessment of an image depends on both the clinical task and the reader, it is not a trivial matter to decide what quality of image is adequate for the clinical task in hand (NCRP, 2015). Significant reliance is placed on judgements made by radiologists or other radiological medical practitioners, but opinions vary about image quality requirements, and less experienced practitioners may need a higher level of image quality to make a diagnosis. Thus, quantification that can aid in a decision about the appropriate level of image quality is difficult when it is based on subjective evaluation of image quality against quality criteria (Section 5.4).

(8) The tools used for measurement of image quality for image receptors during performance tests relate to the ability to detect low contrast objects of varying size and shape within a uniform background, and depend on the noise level and texture. These cannot easily be compared and translated into analogous clinical tasks. Research groups are investigating methods of image quality analysis that can be more closely allied with clinical tasks using simulations with model observers or by artificial intelligence (AI)-based methods (Section 5.3).
Although the field is developing, there is still some way to go before such methods provide solutions which can be implemented more widely in clinical practice, but their application is likely to be important in the future.

(9) The optimisation process may involve not only selection of the appropriate level of image quality, but tailoring the examination protocol to the clinical needs of each individual patient (Samei et al., 2018). Decisions may have to be made about the extent of the imaging required to answer the clinical question (e.g. this might include the possible need for rotational three-dimensional (3D) imaging in image-guided procedures). In some countries, this option is included in the initial justification, but reasons for electing to carry it out are part of optimisation. As more technical optimisation features and quality management systems (QMSs) evolve, the optimisation process will extend to focus more on the needs of individual patients.

(10) The ultimate objective is to maximise the benefit-to-risk ratio for the patient, and is related to clinical effectiveness and, with more controlled scenarios, to clinical efficacy. Since clinical outcome depends on a very large number of factors and clinical data types, such outcome quantification cannot be done with any simple model or modality. This will include AI algorithms, tailored to the needs of individual patients; for example, based on physical characteristics and body habitus as well as clinical indication. Adapting the protocol to individual patients will also have to take their medical conditions/limitations into account. Therefore, a big data approach using methods related to AI would seem to offer considerable potential for the handling of such multi-dimensional data, constructed from many clinical data types, and involving complex correlations and interdependencies, and is likely to become increasingly important in the future.
This would enable an indication-oriented and patient-specific optimisation methodology to be implemented as an organisation-wide and consistent process, with measurable effectiveness and extensive use of other performance indicators.
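The net-risk picture described in the Fig. 1.1 caption (radiation risk rising linearly with dose, clinical risk falling exponentially towards a residual floor, and the optimisation target at the minimum of their sum) can be sketched numerically. The following is a toy model under stated assumptions: the function names, the symbols `a`, `c_floor`, `c_amp`, and `k`, and all parameter values are hypothetical and unitless, chosen only to show the shape of the argument rather than any clinically meaningful figures.

```python
import math


def net_risk(dose, a, c_floor, c_amp, k):
    """Sum of a linear radiation risk and an exponentially decaying clinical risk."""
    radiation = a * dose                               # linear non-threshold term
    clinical = c_floor + c_amp * math.exp(-k * dose)   # decays to a residual floor
    return radiation + clinical


def optimal_dose(a, c_amp, k):
    """Dose at which d(net_risk)/d(dose) = a - c_amp * k * exp(-k * dose) = 0."""
    if c_amp * k <= a:
        # Clinical risk falls no faster than radiation risk rises,
        # so this toy model has no interior minimum.
        return 0.0
    return math.log(c_amp * k / a) / k


# Hypothetical, unitless parameters for illustration only.
a, c_floor, c_amp, k = 0.01, 0.02, 1.0, 0.5

d_opt = optimal_dose(a, c_amp, k)

# Cross-check the closed-form result with a brute-force search on a dose grid.
grid = [i * 0.01 for i in range(3001)]
d_grid = min(grid, key=lambda d: net_risk(d, a, c_floor, c_amp, k))

print(f"analytic optimum: {d_opt:.3f}, grid optimum: {d_grid:.2f}")
```

In this sketch, doses below the optimum are penalised by the rapidly rising clinical term and doses above it by the linear radiation term, which is the balance the text describes. Real optimisation cannot be reduced to four parameters; the model only makes the existence of a minimum explicit.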

1.3. Risks from radiation exposure due to medical imaging

(11) The risks from radiation need to be outlined in order to establish the context for optimisation. Potential effects of radiation exposure are tissue reactions (deterministic effects that occur in the days, weeks, and months following an exposure) and stochastic effects (risk of induced cancer or hereditary effects in the long term). There will only be a risk of tissue reactions for interventional cardiology or radiology for a very limited cohort of patients with serious medical conditions, who undergo one or more complex procedures within a period of a few months. However, there could be a risk of lens opacities from cumulative exposures of the eyes to doses >500 mGy received over an extended period, and these may develop with time (ICRP, 2012). Methods for the avoidance of tissue reactions are addressed in

(12) Although the evidence is derived predominantly from the atomic bomb survivors, other studies on radiation workers in the nuclear industry, patients receiving high localised radiation doses from medical therapies, or individuals exposed during radiation accidents provide further evidence that the risk exists. It is often only through meta-analyses combining data from several studies that results for population sizes with sufficient statistical power to show a link between radiation and cancer are obtained. The epidemiological results are consistent with a linear relationship between the risk of cancer induction and mean absorbed organ dose at doses extending below 100 mGy. Based on this, for purposes of radiological protection, a linear non-threshold (LNT) model is used to extrapolate down to lower doses in order to estimate potential risks (ICRP, 2005).
Recently, the National Council on Radiation Protection and Measurements (NCRP) has published a review of epidemiological studies, including those of the atomic bomb survivors, pooled results for nuclear industry workers, and data from other exposed populations, undertaken in order to assess the quality of the data and evaluate the support that they provide for the LNT model (NCRP, 2018; Shore et al., 2018). NCRP judged that, although the risks are small and uncertain, the available evidence provided broad support for an LNT model as the most pragmatic approach for radiological protection. Although the atomic bomb survivors received their radiation dose as a single exposure at one point in time, other populations such as nuclear industry workers were exposed to small doses incrementally over time, and the cumulative value was tens of mSv effective dose. In addition, more evidence is emerging from recent reviews of epidemiological data that doses from exposures <100 mSv are associated with cancer risks in both children and adults (Lubin et al., 2017; Little et al., 2018, 2022; Hauptmann et al., 2020; Rühm et al., 2022). It is within this context that the risks from medical exposures should be appraised.

(13) Medical imaging modalities are designed to investigate conditions that generally only affect certain parts of the body, so regions of the body irradiated in any imaging procedure are localised. Moreover, the x rays are attenuated as they pass through the body, so superficial tissues receive higher radiation doses than those deeper within the body. Therefore, the organs and tissues irradiated and the distributions of radiation dose within individual tissues are different for every type of examination, and also depend on the size and shape of the body for each patient.
As individual tissues also vary in their sensitivity to radiation, this means that the risk of any stochastic effect from every examination will be different, and will depend on the exact conditions of exposure, and the age and size of the patient (ICRP, 2021). The mean absorbed doses to organs and tissues from diagnostic radiology are generally in the range from fractions of a mGy to tens of mGy. The potential detriment to health from sequences of exposures performed for diagnosis and management of disease could be significant if dose levels are higher than they need to be or if examinations are repeated unnecessarily, although patients form a sub-group of the general population who may have other competing morbidities.
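The statement in paragraph (13) that superficial tissues receive higher doses than deeper ones follows from attenuation of the beam with depth. A minimal sketch is given below, assuming simple exponential (Beer-Lambert) attenuation of a monoenergetic primary beam in uniform tissue. This is a deliberate simplification that ignores scatter, beam hardening, and beam divergence; the function name and the attenuation coefficient of 0.2 per cm are illustrative assumptions, not values from this publication.

```python
import math


def relative_primary_intensity(depth_cm, mu_per_cm):
    """Fraction of the primary beam remaining at a given depth: exp(-mu * depth)."""
    if depth_cm < 0 or mu_per_cm < 0:
        raise ValueError("depth and attenuation coefficient must be non-negative")
    return math.exp(-mu_per_cm * depth_cm)


mu = 0.2  # illustrative linear attenuation coefficient (per cm), an assumption

for depth in (0, 5, 10, 20):
    fraction = relative_primary_intensity(depth, mu)
    print(f"depth {depth:2d} cm: {fraction:.3f} of the entrance intensity")
```

Because the remaining fraction falls steeply with depth, a thicker body section needs a much higher entrance exposure to deliver the same signal to the detector, which is one reason the dose distribution depends on patient size.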

1.4. Justification and optimisation of medical exposures

(14)

(15) Justification requires the radiologist or other radiological medical practitioner to weigh the expected benefits from imaging against the potential cost including radiation detriment, and to consider available alternative techniques that do not involve exposure to radiation. For radiological medical practitioners to make such decisions, they should understand the clinical indications and the health status of their patients in order to determine which imaging tests or fluoroscopically guided interventions (FGIs) are appropriate. The process of justification in the medical context will not be considered here, except in relation to highlighting the need for radiological medical practitioners, whether they be radiologists or other clinicians, to always be provided with the relevant clinical history for the patient who is to undergo the procedure, in order that the justification process can take place. Collaboration between the referring clinician or healthcare professional and the radiological medical practitioner to provide the information about the patient’s condition is the first step in the process, and is crucial for ensuring that the imaging task is adapted to the clinical need of the patient so that optimisation is carried out satisfactorily.

(16) Optimisation is defined as the process of determining the level of protection and safety to make exposures ALARA, with economic and societal factors being taken into account (ICRP, 2007b). The Commission explained the concept and principle of optimisation as applied to medical exposures in

(17) The two levels of optimisation outlined above are not sufficient to ensure that the radiological protection of procedures is optimal, as there will be continual development in equipment facilities and knowledge and skill of the operators that should feed into a process of steady improvement.
Therefore, the proper training of operators with periodic sessions to update knowledge on new techniques and improved facilities on equipment is essential. Evolving technical optimisation features and QMSs will extend the optimisation process to focus more on individual patients and procedures based on clinical indication, which is an extension of the ALARA optimisation principle as applied to patients (Oenning et al., 2018). Optimisation is not a static process to be ignored and forgotten once it has been achieved. It requires constant attention with frequent monitoring and analysis of performance, reject analysis, feedback of experience, and regular review to provide continual improvement in every aspect of the imaging process and refinement of the service to the patient. This last component is key to achieving higher levels of optimisation.

(18) Technical requirements for optimisation for the various modalities used within radiology, namely radiography, fluoroscopy, and CT, are very different. Therefore, subsequent to

(19) Both justification and optimisation have become increasingly important with the passage of time as part of the effort to ensure that patients receive the best-possible service from their imaging departments. The two principles are to ensure that patient doses are not only low enough to justify a particular examination, but also, through optimisation, are kept ALARA without being reduced to the extent that the level of image quality required for the clinical task is jeopardised. The mutual connection between optimisation and justification may be strengthened with more indication-oriented and patient-specific optimisation processes in the more advanced categories described in this publication.

(20) Individual patients may undergo many imaging procedures from which they could receive effective doses reaching hundreds of millisieverts (Brambilla et al., 2020; Rehani et al., 2020).
Although the majority of patients who receive recurrent exposures will be in the later stages of life when risks will be less (Martin and Barnard, 2022), there are some children with particular health problems that require frequent follow-up with recurrent imaging. Particular attention should be paid to developing care plans for these individuals in which the frequency and performance of imaging are optimised (IAEA, 2021a). Significant further reductions in dose from recurrent imaging procedures may be possible through optimisation based on information from earlier procedures. (21) Furthermore, increasing access to diagnostic and clinical data by evolving radiological information systems (RISs), picture archiving and communication systems (PACSs), and hospital information systems (HISs) will help to implement a more advanced comprehensive process of justification and optimisation. The expansion in the use of radiological imaging worldwide in recent decades, coupled with the introduction of new digital technologies that require higher levels of expertise to operate, makes the effective practice of optimisation techniques more important than ever. The welfare of patients and the population at large will be enhanced if radiation exposures resulting from x-ray examinations can be kept to a minimum without reducing the medical benefits.

1.5. The scope for optimisation with digital imaging modalities

(22) Radiographic imaging is, in essence, a fairly simple procedure, with x rays being used to produce a ‘shadow’ image of tissues in the body. As components of the tissue attenuate the x rays to different extents, structures can be visualised within tissues. The denser abdominal and pelvic tissues attenuate x-ray beams more than lung tissue, but attenuation of the x-ray beams also depends on the energies of the x-ray photons. Any potential health detriment will depend on the tissues and organs irradiated, and the distribution of absorbed dose within them. Two simple examples of poor optimisation, both of which increase the dose to the patient, are using a larger field size than is necessary for a radiographic exposure, and performing a chest x ray with a lower energy beam (e.g. 70–80 kV), from which little scattered radiation is generated, while an anti-scatter grid that also attenuates the primary beam is inserted behind the patient. Radiographers or radiological medical practitioners will operate the imaging equipment, and the skill of these radiological professionals encompasses selection of the best imaging exposure factors, equipment, and technique available for each type of examination and personalised for each patient depending on their size, shape, and weight. Approaches have changed as techniques with digital equipment have evolved and become more sophisticated, with the need for a greater knowledge of information technology and system software.

(23) The appearance of images recorded and stored in digital form can be adjusted through post-processing to give an acceptable range of grey levels for optimal viewing (ICRP, 2004). If a much higher dose is given than is appropriate, the image may appear slightly better or essentially the same, but this may be difficult to determine from simply viewing the image. A high dose in digital radiography will not produce a black image, as it would with film, so more attention needs to be paid to monitoring dose levels.

(24) Digital images offer many advantages and have the potential to allow images to be obtained with lower exposures, adapted to the diagnostic requirements of particular examinations. However, this facility is often not considered, and standard image detector exposure levels are often used for a wide variety of examinations. The relevance of image processing in digital radiology is more significant than might be anticipated from a first glance, as the digital image data typically include a range of thousands or even tens of thousands of grey-scale values whereas the human eye can see <1000 separate grey-scale levels even in optimal lighting conditions and when using advanced medical displays (Kimpe and Tuytschaever, 2007). Therefore, there is the potential for digital image processing to enhance the features relevant to diagnosis, and present them more clearly in the final images. Achieving the correct balance between dose and image quality becomes a more complex task with the additional need to understand the operation of the software controls. However, proper application of digital radiology should enable sufficient image quality to be achieved, often with lower dose levels.

(25) CT scanners have become more complex, and although they have more capabilities to enable doses to be kept at a reasonable level, achieving this requires a high standard of knowledge, skills, and scientific expertise from the healthcare professionals involved in radiological imaging and optimisation. If these are not in place, doses delivered to patients could be unnecessarily high without staff being aware that anything is wrong. Even in countries with highly developed healthcare systems, optimisation is frequently not fully implemented. For example, radiation accidents involving tissue reactions from CT scanners have been reported in the USA, where the necessary expertise might always have been expected to be available (ICRP, 2007a; Martin et al., 2017). The availability of staff fully trained in use of all the hardware and software features when new equipment is purchased is of utmost importance.

(26) There have been substantial developments in the application of FGIs during recent decades. These allow surgical procedures to be performed with less invasion of the body than is required by conventional surgery, resulting in lower risks, shorter recovery times, and lower costs (Maudgil, 2021). FGI is frequently the method of choice for complex interventions by a variety of medical specialists (UNSCEAR, 2008), so the number of procedures has increased substantially. FGIs may be performed in a variety of settings, and sometimes by radiological medical practitioners with less training in radiological techniques and awareness of radiation exposure than radiologists. In addition, the increasing complexity of the procedures that are now possible means that longer exposure times may be required, which carry a potential risk of radiation tissue reactions in the skin (ICRP, 2000a, 2010, 2013a; IAEA, 2010).

(27) As the level of sophistication develops, the variety and complexity of procedures that are possible increases (NCRP, 2019), and the level of optimisation should be increased in parallel. Recent technological innovations that are now being implemented have the potential to provide a higher degree of optimisation through analysis of the levels of image quality necessary for imaging different organs, tissues, and pathologies, and through the collation and analysis of image-related data. However, effective use of these techniques requires that continual attention is paid to monitor the performance of equipment and develop examination protocols based on experience gained. Practical optimisation applied to the different imaging modalities will be considered in a separate publication.
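
To illustrate the point in paragraph (23) that display adjustment can mask an unnecessarily high dose, the following minimal Python sketch applies a simple linear window to two hypothetical sets of detector values, one with twice the signal of the other. It assumes, purely for illustration, that detector signal scales linearly with dose, it ignores noise, and it sets the window from the data range as an automatic display adjustment might. The function name `auto_window` and all pixel values are invented for the example and do not represent any vendor's processing.

```python
def auto_window(pixels, out_max=255):
    """Linearly rescale raw detector values to the display range 0..out_max.

    The window is taken from the minimum and maximum of the data, a
    simplifying assumption standing in for automatic display adjustment.
    """
    low, high = min(pixels), max(pixels)
    if high == low:
        raise ValueError("image has no contrast to display")
    # Adding 0.5 before truncation rounds to the nearest display level.
    return [int((p - low) * out_max / (high - low) + 0.5) for p in pixels]


# Hypothetical raw values for the same anatomy at an appropriate exposure
# and at twice that exposure (signal assumed proportional to dose).
appropriate = [200, 450, 1000, 1600]
doubled = [2 * p for p in appropriate]

displayed_appropriate = auto_window(appropriate)
displayed_doubled = auto_window(doubled)

# True when both exposures map to identical grey levels on the display.
same_appearance = displayed_appropriate == displayed_doubled
```

Under these assumptions the two exposures are displayed with the same grey levels, which is why a dose indicator, rather than the appearance of the image, is needed to detect an exposure that is higher than necessary.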

1.6. Adaptation of patient dose levels

(28) The dose that a patient receives from imaging should be consistent with the clinical question that needs to be answered. Radiology and other medical imaging facilities aim to keep doses at a reasonable level based on good practice, using reference dose levels as a guide. To achieve this requires input from radiological medical practitioners, radiographers, and medical physicists working together as a team within an organisation that provides a structure which facilitates the process. An important component of the optimisation process is having information on doses that patients are receiving, and knowledge of whether these dose levels are reasonable.

(29) Although many users may only have limited awareness of radiation doses for the examinations they perform, dose is a quantity that can be measured or calculated with relative ease. So, when optimisation programmes are set up, there can be a tendency to place undue emphasis on dose reduction, which can be quantified or read directly from the equipment, ignoring the potential detriment to the provision of clinical information, which, in almost all cases, is a far more important factor for the quality of care and effective clinical outcome.

(30) A tool that ICRP adopted over 20 years ago to aid in ensuring that doses for procedures are at reasonable levels is the DRL, the application of which is described in detail in

1.7. The process of optimisation

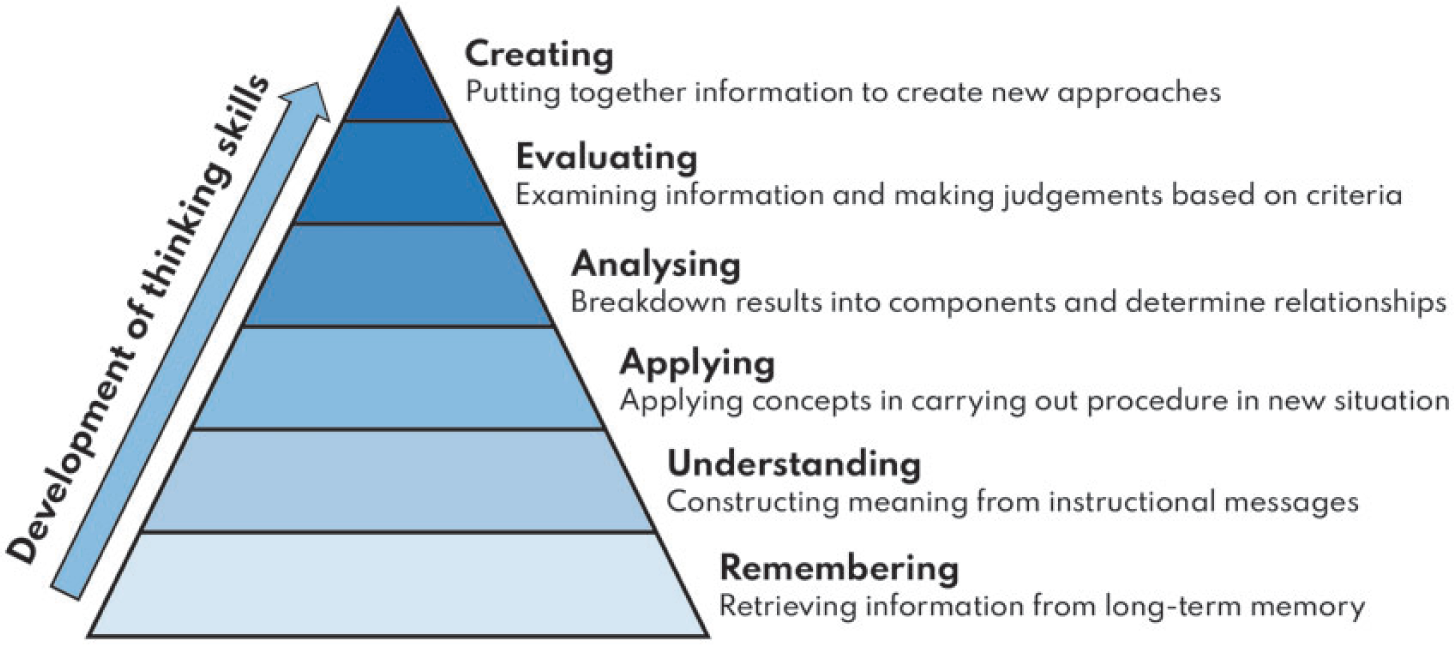

Components of optimisation.

(32) Optimisation of radiological protection requires input from several groups of staff with different skill sets, and these must have been acquired through proper training. The staff groups need understanding from training and experience to be aware of dose levels and their dependence on different factors that affect image quality. They need to be able to make judgements and determine reasons for any deficiencies, and be able to adjust protocols and procedures to address them.

(33) Optimisation also requires collaboration between the professional groups; without this, progress is unlikely to occur. Performance tests on equipment may be carried out by medical physics staff, but without feedback of information from physicists to users, assessment of the optimal equipment settings, and adjustment to clinical protocols, there will be little progress. Physicists might provide dose information from surveys and trace technical aspects related to image quality, but it is the radiographer and radiologist or other radiological medical practitioner who can judge whether the quality of the clinical image is adequate. Unless the three groups work together to identify when doses for any procedure are higher or substantially lower than expected, or the image quality is higher than necessary or too poor for diagnosis, there will not be any change in practice. Encouraging staff engagement in these aspects enables optimisation to become a habit that is part of routine practice.

(34) There need to be systems in place to manage optimisation, as follows: (i) ensure that monitoring, review, and analysis of performance are part of an ongoing process; (ii) establish and modify clinical protocols, taking account of available data; and (iii) apply the results across the whole organisation.

(35) This publication considers how the different aspects of the optimisation process might be addressed by radiology services and countries with varying levels of infrastructure and optimisation tools. It attempts to provide guidance across the full spectrum from countries with limited expertise through to advanced services with access to patient radiation exposure monitoring and image quality assessment software, taking into account the greater flexibility in image processing and presentation afforded through new techniques. Section 2 will deal with management of x-ray equipment through its life cycle. Section 3 will examine the structure of the optimisation process. Section 4 will review practices in measuring and analysing patient dose data. Section 5 will consider the assessment and requirements for image quality in more detail than in previous reports. Finally, Section 6 will consider the requirements and provision of training, which is a key element in establishing a successful optimisation programme.

(36) The target audience for this publication includes not only radiologists, radiographers, medical physicists, cardiologists, other radiological medical practitioners, and healthcare professionals operating x-ray equipment, who deal directly with the processes described, but also managers involved in allocation of resources for equipment and training, x-ray equipment vendors, x-ray engineers, applications specialists, and regulators.

2. THE X-RAY INSTALLATION AND X-RAY EQUIPMENT LIFE CYCLE

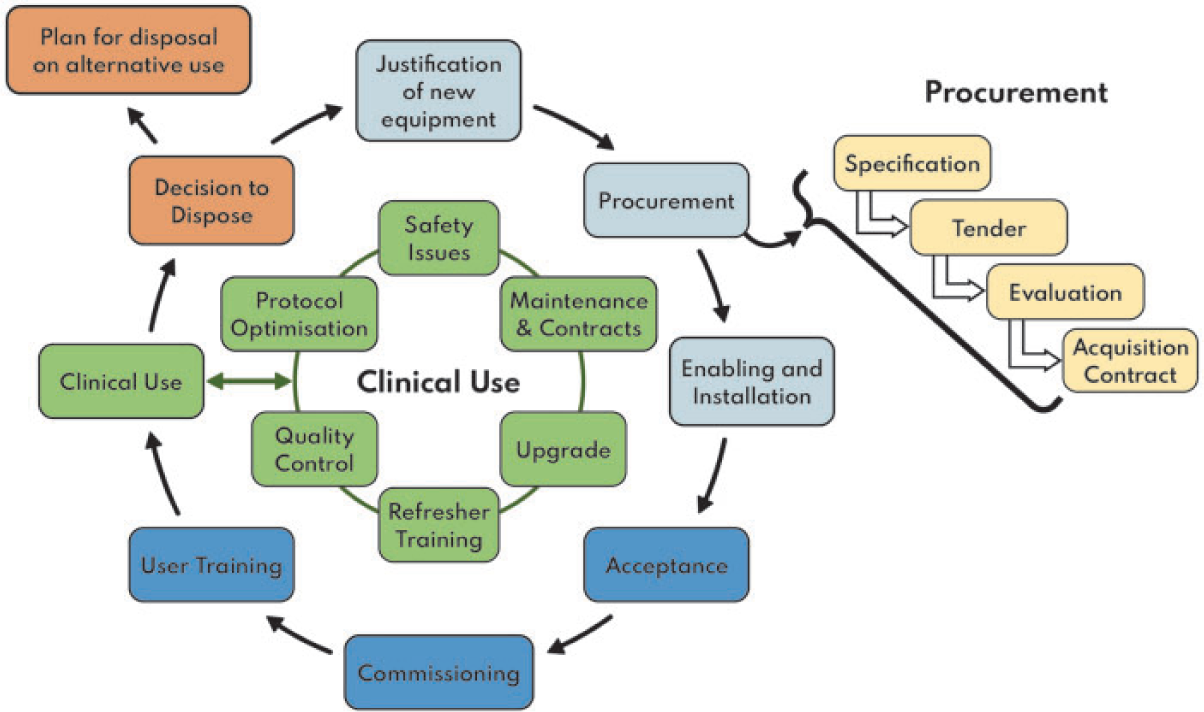

2.1. The life cycle of medical imaging equipment

(38) Setting up a new x-ray imaging service, or replacing an existing service, requires careful planning by a team that includes radiological professionals, radiology managers, facilities personnel, and clinical engineers. The equipment life cycle is a well-understood concept, and describes medical equipment, including imaging equipment, from ‘cradle to grave’. X-ray equipment is procured through a tender process wherein equipment suppliers are invited to submit a bid to supply the equipment or services. The team needs to prepare a technical and operational specification based on the clinical requirements, stating what the equipment is to be used for, where it is to be installed, the major system components, any accessories (such as contrast injectors and QA test objects) that might be required, and include the maintenance and repair arrangements. They should also agree an evaluation/scoring system to select the equipment that meets their needs. Once a contract has been agreed, the equipment will be installed and commissioned according to agreed standards, with personnel trained in its use, and a QA programme put in place to ensure that standards are maintained.

(39) The initial conception of the clinical need for medical imaging must first be developed into a proper robust justification for procurement. This is the embryo stage of the life cycle shown at the top of Fig. 2.1. The life cycle of imaging equipment should be included in a healthcare organisation’s planning process, which should aspire to incorporate a systemic approach to the procurement, deployment, maintenance, quality control (QC), repair, and disposal or alternative use of imaging equipment. Every stage in the life cycle is critical in terms of optimisation of patient protection. Professional skills, methodology, and process all play a vital role in the management of the equipment life cycle; understanding and managing this appropriately is essential if optimisation is to be achieved.

(40) Fig. 2.1 shows the basic life cycle of x-ray equipment, and how it involves a continual sub-cycle to maintain performance and improve optimisation once the equipment is put into clinical use. It also shows the acquisition process in some detail; appropriate acquisition is essential if optimisation is to be achieved. The stages are described below, with an emphasis on relevance to optimisation.

Fig. 2.1. The imaging equipment life cycle.

2.2. Acquisition of x-ray equipment

2.2.1. Justification of equipment

(41) The stages in the life cycle of equipment include justification, procurement, installation, acceptance, commissioning, user training, clinical use, and disposal or alternative use. The procurement of all medical imaging equipment should be justified in terms of clinical need and radiation dose. Justification should be evidence driven, and should consider present and future clinical applications and revisions of workflow whilst ensuring that there is no unnecessary proliferation of equipment. Justification of new or replacement equipment requires the involvement of radiologists or other radiological medical practitioners, radiographers, medical physicists, and administrators.

2.2.2. The acquisition and procurement process

2.2.2.1. Specification

(42) Once procurement of equipment has been justified, it is essential that a full performance specification of the entire system is established before any purchases are made in order to reduce the possibility of inappropriate devices being purchased. In the context of optimisation, the performance specification should include consideration of the intended clinical use of the equipment and technical requirements relating to patient dose and image quality.

(43) The type and amount of training required should be specified, as should the manner (e.g. procedures and their resulting technical documentation) in which the manufacturer/installer demonstrates that the equipment supplied meets the performance specification and local regulatory requirements (see Section 2.3.1). Maintenance requirements should also be included in the specification, as should detail of any regulatory requirements that the equipment will be expected to meet. Delivery timescales should also form part of the specification.

(44) Specification is a task that requires input from radiologists or other radiological medical practitioners, radiographers, managers, medical physicists, information and communications technology (ICT) professionals, engineers, and procurement experts. The specification document should address the issue of enabling any infrastructure work required; for example, what level of connectivity is required for the equipment to function appropriately, and how will the vendor address those requirements within the organisation’s ICT infrastructure? Specifications should also include the resourcing and vendor activity involving the initial optimisation of equipment imaging or exposure protocols. This will ensure that the purchase not only includes the technology and applications, but also the correct setting of the technology that is appropriate for the first practical phase of optimisation. Given the wide network connectivity of modern equipment, it is also important that data security and data safety issues are considered in the specification.

(45) In the case of used or refurbished equipment, the specification should be clear that the equipment should function as originally intended, and meet all the performance and safety requirements that it did when new. IEC 63077 describes and defines the process of refurbishment of used medical imaging equipment (IEC, 2019b).

2.2.2.2. Tender, evaluation, and acquisition

(46) A tender comprises the specification and terms and conditions under which the equipment is to be procured. A tender is normally issued after a call for expressions of interest is issued to potentially interested parties. Responses to the tender will form the basis for the evaluation process, so it is important that the questions posed by the specification document and stipulations regarding terms and conditions are formulated correctly. The tender may require the vendor to identify options for the disposal of redundant equipment.

(47) On receipt of tender returns, a multi-disciplinary group comprising radiologists or other radiological medical practitioners, radiographers, radiology managers, medical physicists, biomedical engineers, ICT professionals, and procurement experts should convene to consider the responses from those vendors offering their products. Evaluation should be carried out in an objective manner against predetermined criteria to maintain neutrality and to ensure that the most suitable equipment or system is chosen. After evaluation, a purchase order can be placed, and lead-in times identified. The contract should address all the items included in the specification and the associated terms and conditions, including the initial protocol settings.

2.3. Enabling and installation of x-ray equipment

(48) Enabling and installation works are essential components of the equipment life cycle. Planning and construction of the x-ray room, protection, and electrical and other services all need to be prepared beforehand, and consideration needs to be given to facilitating the appropriate movement of the patient and positioning of the attending staff. If the installation is not completed correctly, or the infrastructure and building work is not carried out appropriately, then at best delays will be encountered, and there are likely to be ongoing issues throughout the life of the equipment. Basic connectivity issues and possible mitigation should be identified at this stage, as should issues around licensing and registration (WHO, 2019).

2.3.1. Acceptance

(49) Acceptance testing is the process whereby the purchaser satisfies themselves that the equipment supplier has provided what has been ordered, that it is safe to use, and that it functions according to the manufacturer’s and purchaser’s specification. This will involve both medical physicists and radiographers, in consultation with radiologists or other radiological medical practitioners, and will include identifying the inventory, and performing electrical and mechanical safety checks. Regulatory requirements may require demonstration of radiation safety, which should be carried out at this stage. Acceptance tests often involve quantitative measurements to demonstrate that the equipment specification is met. These tests are vendor-specific and follow the vendor’s methodology. Information technology (IT) connectivity and configurations to PACSs and other relevant IT systems (e.g. image processing workstations and analysis servers) should also be verified during acceptance testing.

(50) Depending on the complexity of the equipment and its specification, the manufacturer/equipment supplier may be best placed to demonstrate conformity with certain aspects of the specification. For example, if the equipment specification quotes a modulation transfer function (MTF) at a particular spatial frequency, the installer can reasonably be expected to demonstrate this in some way, which may be by presenting the factory test sheet or direct measurement. Section 2.2.2.1 discusses specification during the procurement phase. The presence of operator and service manuals should be verified at this stage.

2.3.2. Commissioning

(51) In the commissioning phase, the purchaser should ensure that the equipment is ready for clinical use, and establish baseline values against which the results of subsequent routine performance tests (constancy or QC tests) can be compared (IPEM, 2005; Stevens, 2021). The set of QC tests should guarantee that the system parameters, modes, and programmes are optimised for the intended clinical use, and their deviations during the life of the equipment are within acceptable limits. Protocols to be used for performance testing purposes should be identified; if clinical protocols are to be used for performance testing purposes, commissioning should not take place until they have been installed.

(52) After any major work on the equipment, the relevant baseline test may have to be repeated; for example, when a detector or x-ray tube is replaced. Commissioning should also address issues of interoperability in the case of highly complex digital imaging equipment (AAPM, 2019b).

(53) Clinical protocols for acquiring images should be evaluated at the commissioning phase, and checked for consistency with other equipment operated by the healthcare organisation to ensure that, to as great a degree as possible, there is a systemic approach to imaging. In the case of digital radiography, for example, expected values of exposure index and technique factors should be established for routine examinations. Another example is that of CT, where all examinations for specific clinical indications in an organisation should be performed with similar protocols, or protocols matched to give as similar a level of performance as equipment factors permit. As mentioned before, the purchase should not only include the technology and applications, but also the optimised initial setting of the technology for clinical use.
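
As an illustration of how an expected (target) exposure index established at commissioning might be used in routine work, the sketch below computes the deviation index defined in IEC 62494-1, DI = 10·log10(EI/EI_T), where EI is the exposure index of an image and EI_T is the target value for that examination. The function names, the target value of 400, and the ±1.0 action band are hypothetical and chosen only for the example; actual targets and action levels would need to be set locally by the multi-professional team.

```python
import math


def deviation_index(ei, ei_target):
    """Deviation index per IEC 62494-1: DI = 10 * log10(EI / EI_T)."""
    if ei <= 0 or ei_target <= 0:
        raise ValueError("exposure index values must be positive")
    return 10.0 * math.log10(ei / ei_target)


def classify_exposure(di, limit=1.0):
    """Label an image relative to a hypothetical +/- limit action band."""
    if di > limit:
        return "above target range"
    if di < -limit:
        return "below target range"
    return "within target range"


# Hypothetical target for one routine examination, set at commissioning.
EI_TARGET = 400.0

di_on_target = deviation_index(400.0, EI_TARGET)    # detector exposure as expected
di_doubled = deviation_index(800.0, EI_TARGET)      # twice the expected exposure
label_doubled = classify_exposure(di_doubled)
```

A doubling of detector exposure corresponds to a deviation index of about +3, so a logarithmic indicator of this kind makes overexposure visible even when the displayed image looks acceptable.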

2.3.3. User training for clinical use

(54) User training is critical for safe, optimised use of any imaging equipment. Organisations should have a policy for user training that should be part of the quality management programme, where one exists. Vendors have responsibility for providing users with training that includes a full understanding of imaging options available that can enable full optimisation. Initial user training should ideally be provided by the representative of the installer/manufacturer (applications specialist) following acceptance and before the equipment is put into clinical use. Different users may well require different levels of training; for example, medical physics personnel may be required to use equipment in service or similar modes. Training should also be given to anyone who is required to use the equipment after installation, including qualified medical physicists, but it is fairly likely that some end users will not be able to receive the initial training from the applications specialist. In this case, training should be delivered by an agreed cascade process. It is important that the most knowledgeable ‘superusers’ are identified for dissemination of user knowledge, and that they provide practical guidance for subsequent refinement of protocol optimisation as members of the local multi-professional team.

(55) Users need to understand the intended use and normal functioning of the device in order to use it effectively and safely. Training should cover requirements for equipment once in clinical use. For example, the UK Medicines and Healthcare Products Regulatory Agency (MHRA, 2015) requires that, where relevant, training should cover:

any limitations on equipment use;

how to fit accessories and be aware of how they may increase or limit use of the device;

how to use controls appropriately;

the meaning of any displays, indicators, alarms, etc., and how to respond to them;

requirements for maintenance and decontamination, including cleaning;

how to recognise when the device is not working properly, and know what to do about it;

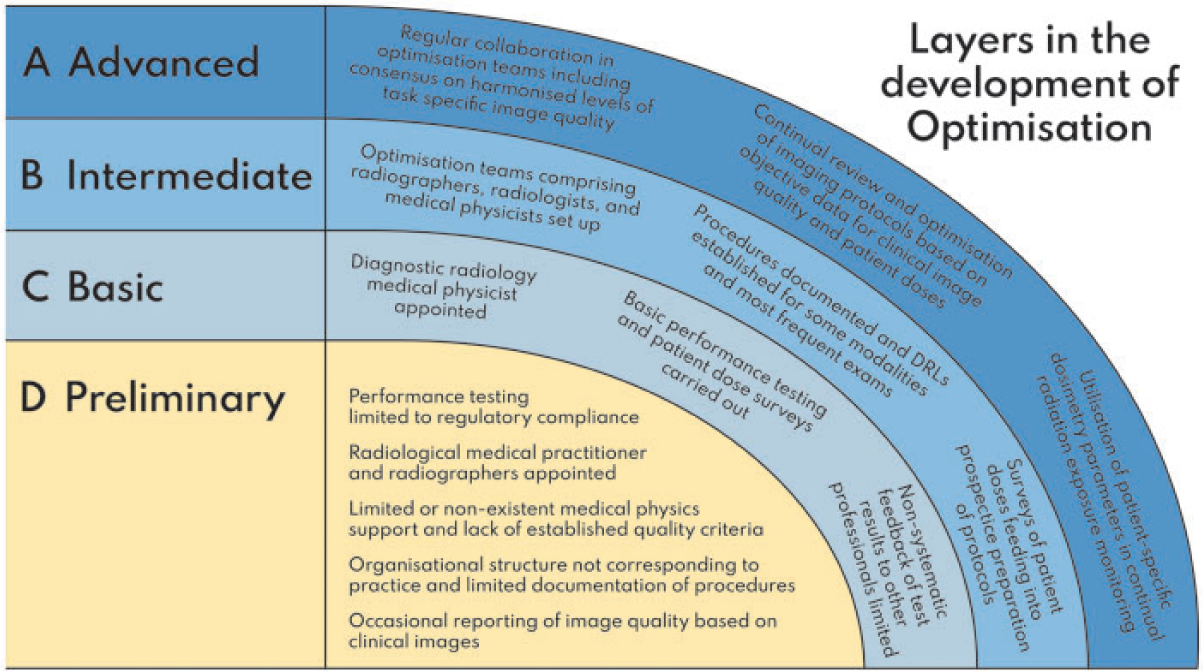

understanding the known pitfalls in use of the device, including those identified in safety advice from government, vendors, and other relevant bodies; and

understanding the importance of reporting device-related adverse incidents (a fault book or similar should be linked to the equipment, and should include details of faults and when they were rectified).

Training should be recorded for quality, continuing professional development (CPD), and safety purposes.

2.4. Operational requirements for x-ray equipment in clinical use

2.4.1. Quality control

(56) QC in medical imaging is a continual multi-disciplinary process, and should not be confined to performance or compliance testing. QC involves collecting and analysing data, investigating results that are outside the acceptable tolerance levels for the QC programme, and taking corrective action to bring these results back to an acceptable level (Jones et al., 2015). The establishment of equipment performance and a QC programme constitute a tool in the process of optimisation of all radiology equipment. A QC programme should be structured, and should involve radiologists or other radiological medical practitioners, radiographers, and medical physicists. A medical physicist or a senior radiographer should be appointed to supervise the whole programme, to oversee the records, and to review the data, especially in larger departments (IPEM, 2005; Stevens, 2021). Ideally, a QC programme should form part of a wider, managed, QA programme (see Section 3.7).

(57) The move to digital imaging has resulted in a need to change the approach to QC in a radiology department, especially, but not exclusively, in the field of plain film imaging. In traditional ‘pre-digital’ imaging, the film itself acted as a final QC tool. Inappropriate exposure or processing would result in a film being marked as ‘reject’. This is no longer the case. However, standardised tools are now available to identify inappropriate exposures, and these should be put into routine use. Reject analysis and artefact identification should form an essential part of radiographer-led QC.
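
A reject analysis of the kind mentioned above can be sketched in a few lines of Python. The example assumes a hypothetical export in which each acquired image is a record with a `reject_reason` field that is `None` for accepted images; the field name, the reason labels, and the sample data are all invented for illustration, and real systems will record this information in their own formats.

```python
from collections import Counter


def reject_summary(records):
    """Return the overall reject rate and a count of rejects by reason."""
    total = len(records)
    if total == 0:
        raise ValueError("no images to analyse")
    reasons = Counter(
        r["reject_reason"] for r in records if r["reject_reason"] is not None
    )
    rejected = sum(reasons.values())
    return {
        "total": total,
        "rejected": rejected,
        "reject_rate": rejected / total,
        "by_reason": dict(reasons),
    }


# Hypothetical records for one room over a review period.
images = [
    {"exam": "chest PA", "reject_reason": None},
    {"exam": "chest PA", "reject_reason": "positioning"},
    {"exam": "knee AP", "reject_reason": None},
    {"exam": "knee AP", "reject_reason": "overexposure"},
    {"exam": "chest PA", "reject_reason": "positioning"},
    {"exam": "pelvis AP", "reject_reason": None},
    {"exam": "pelvis AP", "reject_reason": None},
    {"exam": "chest PA", "reject_reason": None},
]

summary = reject_summary(images)
```

Grouping rejects by reason, rather than reporting only the overall rate, is one possible design choice that points the review towards a specific remedy such as positioning training or a protocol adjustment.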

(58) Many QC measurements may be undertaken by radiographers, but the programme, especially for more complex systems, should be performed with the guidance and advice of a qualified medical physicist. The radiographers and medical physicists should understand how the system works, its characteristics, modes of operation and image acquisition, image quality requirements, and image processing for different clinical programmes and clinical uses. In addition, they should be able to interpret test results and advise on parameters to be measured. Close cooperation with the equipment vendor and service engineers is needed, as well as involvement of clinical staff operating and using the equipment.

(59) Routine performance testing should include task-specific evaluation of the imaging system to reflect the intended clinical use of the equipment, and to guarantee the production of required image quality at a reasonably low dose, commensurate with the desired clinical outcome. The test results should be compared with baseline performance values recorded at installation, and there should be criteria for acceptable changes in performance. These limiting values and the test frequency should be specific to the clinical task for which the equipment is used. If, during the life of the equipment, the clinical use of the equipment changes, that will require a change to the system settings and default programmes, and the QC baseline values and the QC programme will need to be modified accordingly. Routine performance tests should be carried out at regular intervals, and after service or repair.
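
The comparison of a routine test result with its baseline and acceptance criterion can be expressed as a simple percentage-deviation check. The sketch below is a minimal illustration only: the `Baseline` class, the parameter (a notional detector dose indicator), and the ±10% tolerance are hypothetical, and actual limiting values should come from the local QC programme and the clinical task.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Baseline:
    """Baseline value recorded at commissioning, with a relative tolerance."""
    value: float
    tolerance_pct: float


def check_against_baseline(measured, baseline):
    """Return (deviation in percent, whether the result is within tolerance)."""
    if baseline.value == 0:
        raise ValueError("baseline value must be non-zero")
    deviation_pct = 100.0 * (measured - baseline.value) / baseline.value
    return deviation_pct, abs(deviation_pct) <= baseline.tolerance_pct


# Hypothetical baseline for one test parameter, with a +/-10% tolerance.
dose_indicator_baseline = Baseline(value=100.0, tolerance_pct=10.0)

routine_result = check_against_baseline(105.0, dose_indicator_baseline)
after_repair_result = check_against_baseline(125.0, dose_indicator_baseline)
```

In this sketch the second result falls outside the tolerance and would prompt investigation. If the clinical use of the equipment changes, a new `Baseline` would be recorded rather than the tolerance being widened.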

(60) The level of complexity of the performance test often dictates who performs it and how often it is performed. In some regions, performance testing is split into two levels: Level 1 and Level 2. Level 1 tests are generally of a simple pass/fail nature, and do not require sophisticated test equipment or analysis. They are performed by radiographers at regular intervals that may be weekly or even daily depending on the equipment. Level 2 tests are carried out less frequently, perhaps at intervals of 6, 12, or even 24 months depending on the complexity of the system, and require more resources and expertise. They are usually performed by a medical physicist, biomedical engineer, or vendor service engineer (IPEM, 2005; Jones et al., 2015), and the results are reported to radiology staff. Medical physicists should also undertake investigations when regular Level 1 QC tests identify performance factors that are out of tolerance, and after any relevant changes to the system’s acquisition components (e.g. an x-ray tube change) or major post-processing software updates.

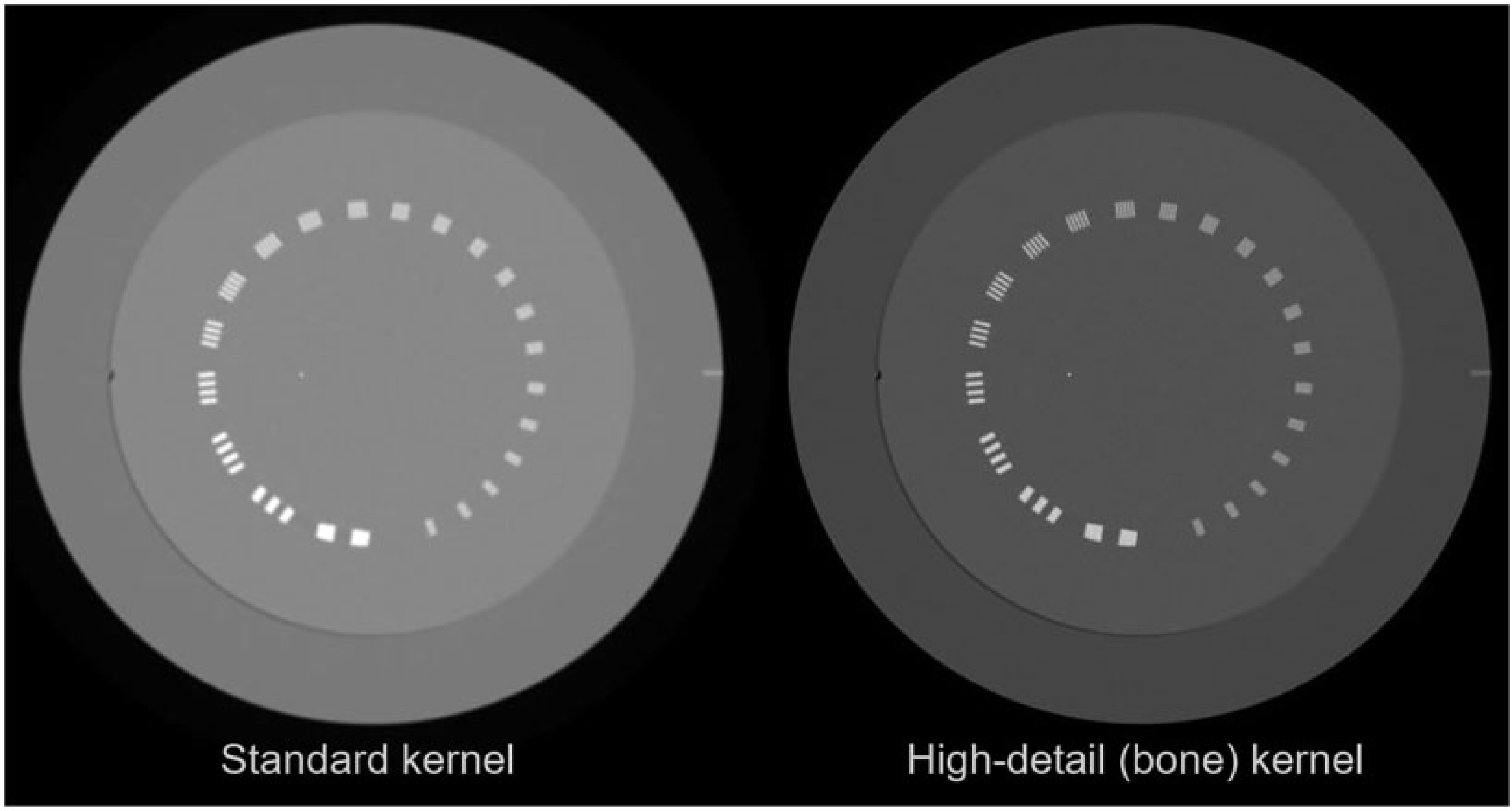

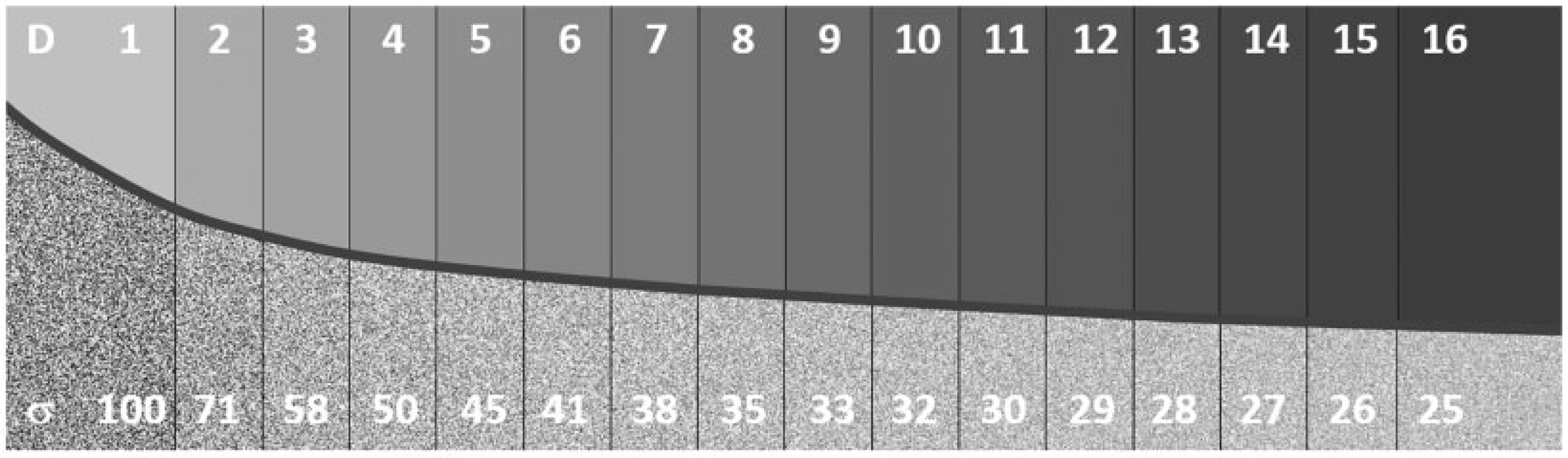

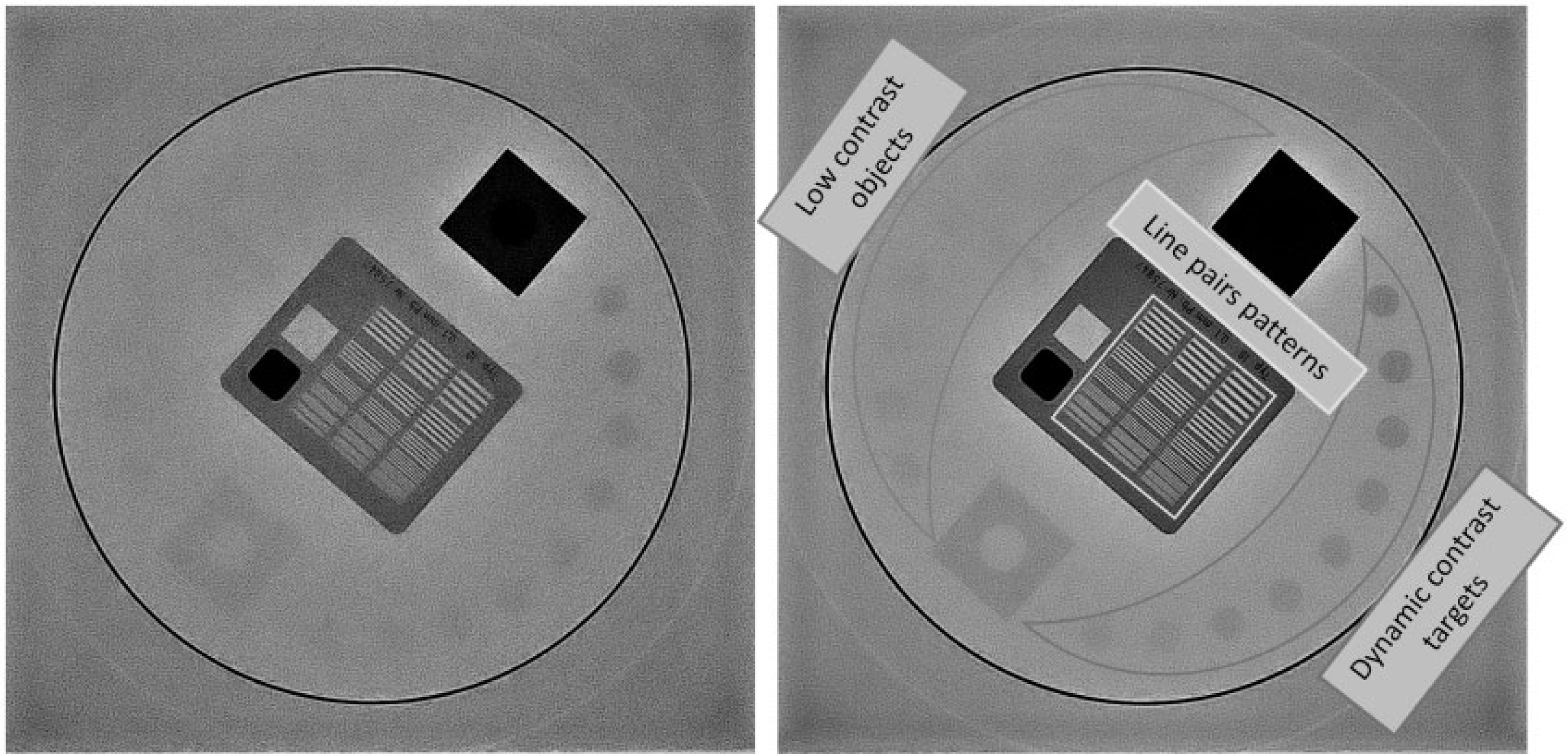

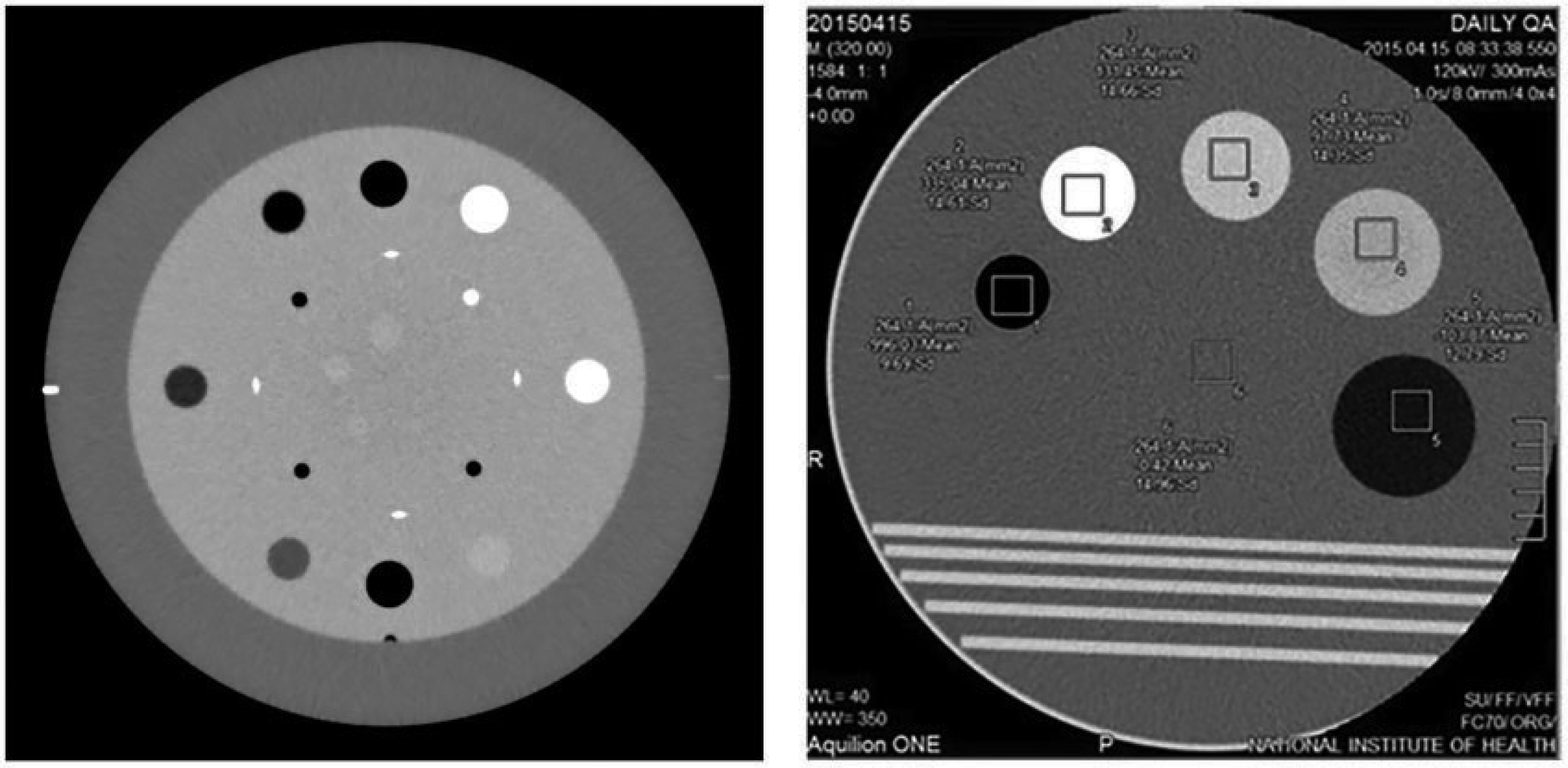

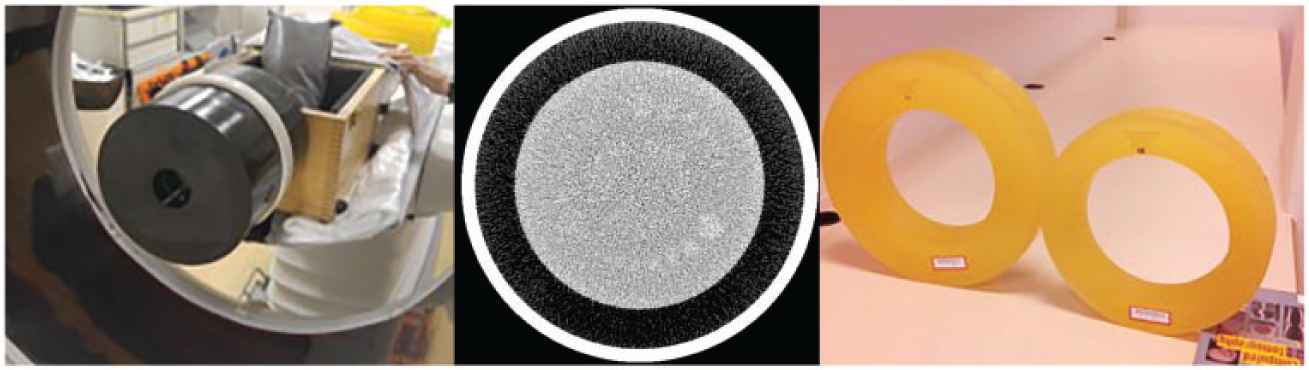

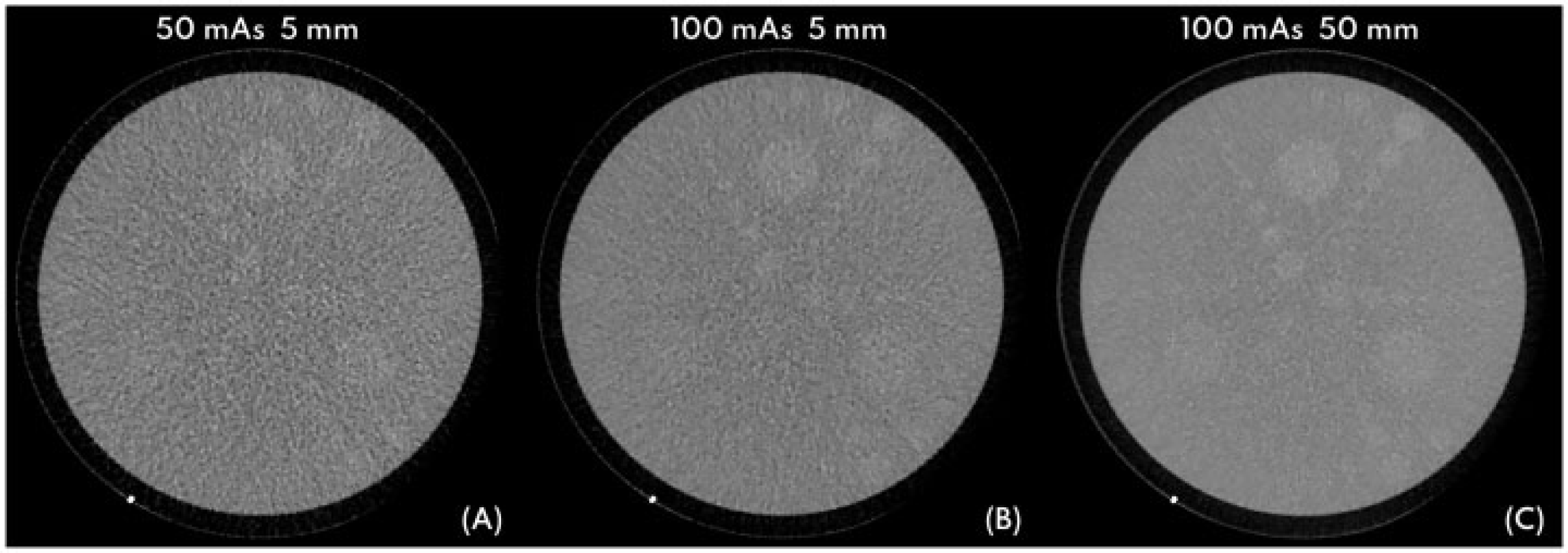

(61) Simpler tests of image quality characteristics are based on observer evaluation using test objects (AAPM, 2001; IPEM, 2005; Stevens, 2021), and the user should follow guidance on use of the specific object and be aware of its limitations. Reproducibility of the measurement conditions, including geometry, exposure settings, and the viewing conditions, could have a significant impact on the results. More detailed image quality assessment may involve physical measurements to define conventional system characteristics, such as contrast, noise, and resolution parameters represented objectively by technical parameters such as MTF, noise power spectrum (NPS), and detective quantum efficiency (DQE) (Annex A). The future trend is towards more clinically realistic test objects that enable task-based evaluations of system imaging performance (see Section 5.3.3 and Annexes B, C, and D).

(62) Optimisation is a continual process and is inextricably bound up with the minutiae of the imaging equipment life cycle. Each element of the life cycle contributes to successful optimisation. QA of the whole system helps to ensure that this is achieved through focusing attention on the many different aspects of performance that need to be maintained.

2.4.2. Upgrades and refresher training

(63) Upgrades occur at all points during the life cycle of imaging equipment. It is important that the purpose of an upgrade is understood by users and radiology management. It is equally important that appropriate commissioning tests are performed after an upgrade (software or hardware), and that staff groups are properly trained, either by an applications expert from the company or via cascaded documentation, as training is critical for safe, optimised use of any imaging equipment. Staff should be provided with refresher training throughout the life of the equipment and after any upgrade. All training should be recorded, which might be through a QMS to provide ready access and traceability.

2.4.3. Safety issues

(64) An adverse incident is an event that causes, or has the potential to cause, unexpected or unwanted effects involving the safety of patients or other persons (MHRA, 2015). In the context of the optimisation of medical imaging, the definition of adverse incident could include exceeding a notification level for deterministic effects in an FGI (NCRP, 2010; ICRP, 2013b). Equally, any overexposure to a patient (or staff member) that required reporting to a regulator would count as a safety issue. However, it is important to consider incidents with the potential to cause harm, so near-miss evaluation and local adverse event reviews should be integral to the routine use of medical imaging equipment.

2.4.4. Contract management and maintenance

(65) All medical imaging equipment must be maintained appropriately. Equipment often comes with a limited warranty, providing maintenance to vendors’ specifications for a set time. In addition to this traditional model, there are other arrangements such as those whereby equipment purchased is part of a comprehensive supply and ongoing maintenance and repair arrangement for a set period (i.e. a ‘managed service contract’). Whatever the model, subsequent arrangements should be made using an evidence and risk-based approach to decision making – costs alone should not be the determining factor. Decisions about maintenance and contract management are often made by radiology management, and it is important that these key stakeholders understand the clinical implications of any decisions made. Maintenance contracts should be specific and auditable, and those personnel (in-house or external) performing service and maintenance should be adequately trained and competent on the equipment they work with. Appropriate calibration of measuring equipment used in maintenance (to verify the performance or radiation output of the imaging equipment) should be a requirement of maintenance contracts. Contracts must ensure that schedules are available for planned preventative maintenance (PPM), and when equipment is returned to clinical use from either PPM or repair, service personnel should leave an indication of what changes they have made and whether those changes could affect patient dose or image quality. If a repair or PPM has resulted in a potential change to image quality or dose, the radiographer should perform a predetermined QC test in collaboration with, or with advice from, a qualified medical physicist to confirm that the equipment is safe to return to clinical use.

2.5. The end of clinical use and equipment disposal

(66) At some point during its life cycle, the equipment will become a candidate for retirement and disposal. This may be, for example, because it can no longer be repaired or be brought economically back to acceptable specification by the manufacturer, it is no longer supported by the manufacturer, a lease or managed service contract has expired, it is obsolete, its clinical performance is no longer sufficient for the task, or repurposing is required. At that point, a decision to remove it from service might be made. However, a policy on removal from service is an essential part of device management (MHRA, 2015), and planning for replacement should be in hand before any decision is necessary. The planning cycle should include considerations on the justification for the new equipment that is to be obtained, and go on to consider all of the other items in the equipment life cycle identified above. The cycle should consider Health Technology Assessments where they exist.

(67) Because medical devices such as x-ray equipment are diverse and complex, there are many methods for their disposal. Options range from scrappage to resale for subsequent reuse. In most cases, consultation between the user and manufacturer or perhaps prospective reseller is critical, especially for high-technology items, in order to decide the best method for disposal (WHO, 2017). No equipment should be scrapped without appropriate consideration of environmental impact and relevant regulatory controls.

(68) Charitable donations of x-ray equipment can be very helpful: they may improve the efficiency of health facilities, save the costs of purchasing new equipment, and make some diagnoses or therapies accessible to patients, especially in resource-limited settings. However, such donations can also cause health risks if the safety and performance of the equipment are not verified prior to donation. Donated equipment should also be furnished with full documentation sets in the correct language. The donor should ensure that the infrastructure exists for appropriate and cost-effective maintenance and QC in the recipient country. As emphasised earlier, used or refurbished equipment should function as originally intended, and meet all the performance and safety requirements that it did when new (IEC, 2019a).

(69) According to the World Health Organization (WHO), quality problems associated with donated medical devices have been reported in many countries. These problems often result in receiving countries incurring unwanted costs for maintenance and disposal, and may also create the impression that the equipment is ‘substandard’ and has been ‘dumped’ on a receiving country (WHO, 2017). Specific advice on the donation of medical imaging equipment can be found in WHO (2011) and THET (2013).

(70) All donated equipment should meet the suitability criteria defined by WHO (2011), namely:

the equipment is appropriate to the setting;

the equipment is good quality and safe;

the equipment is affordable and cost-effective;

the equipment is easy to use and maintain; and

the equipment conforms to the recipient's policies, plans, and guidelines.

3. THE OPTIMISATION PROCESS

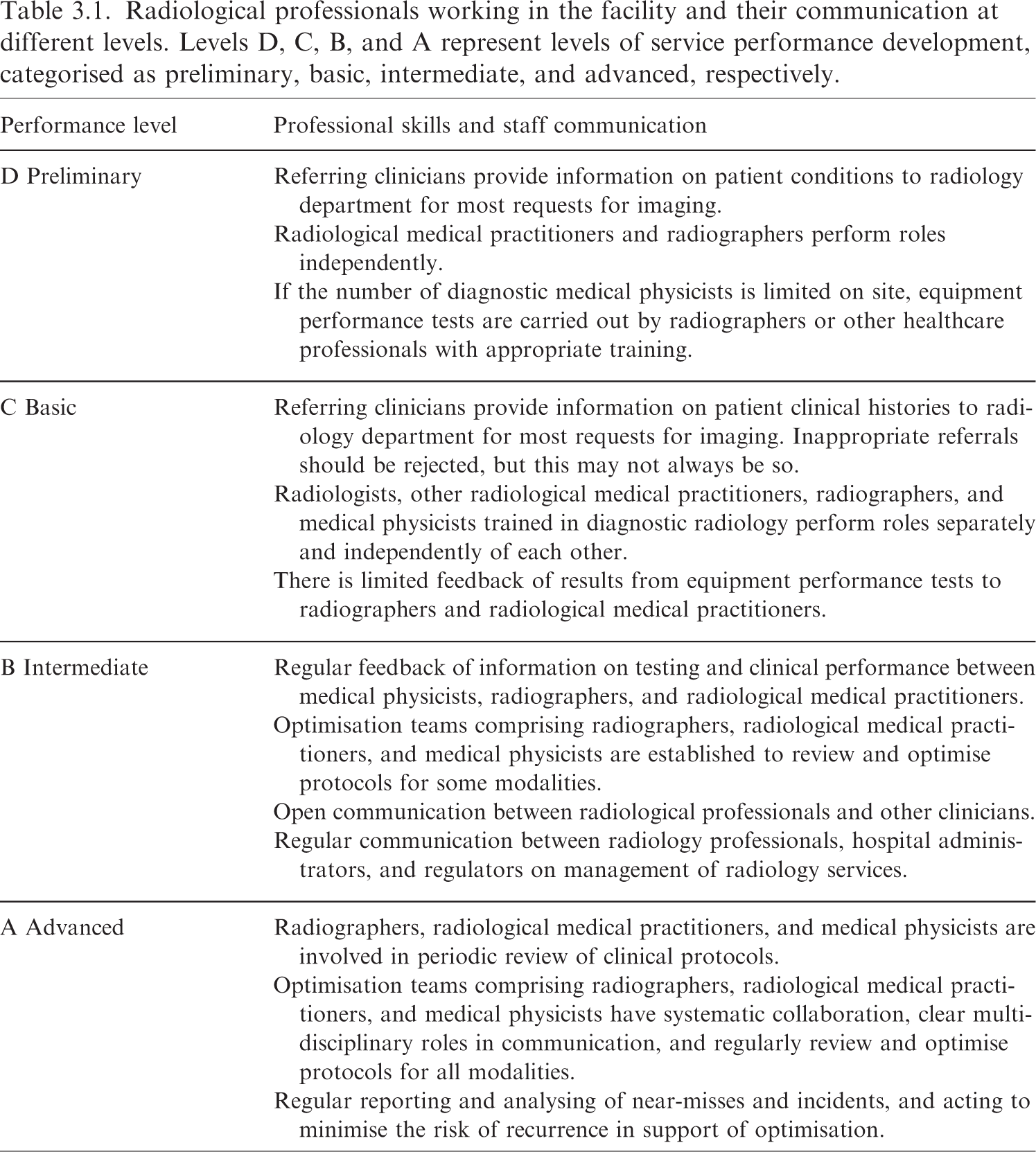

Radiological professionals working in the facility and their communication at different levels. Levels D, C, B, and A represent levels of service performance development, categorised as preliminary, basic, intermediate, and advanced, respectively.

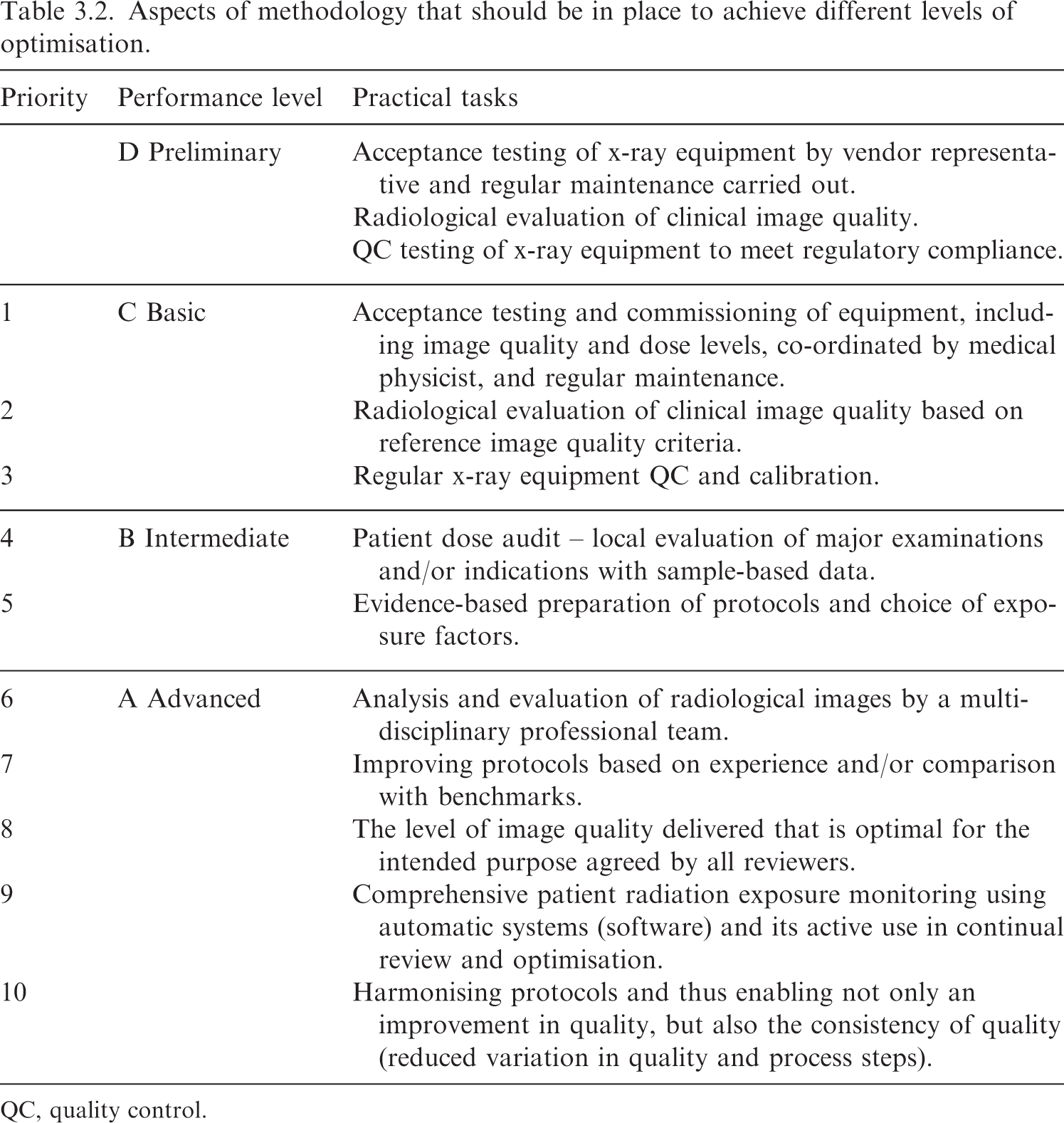

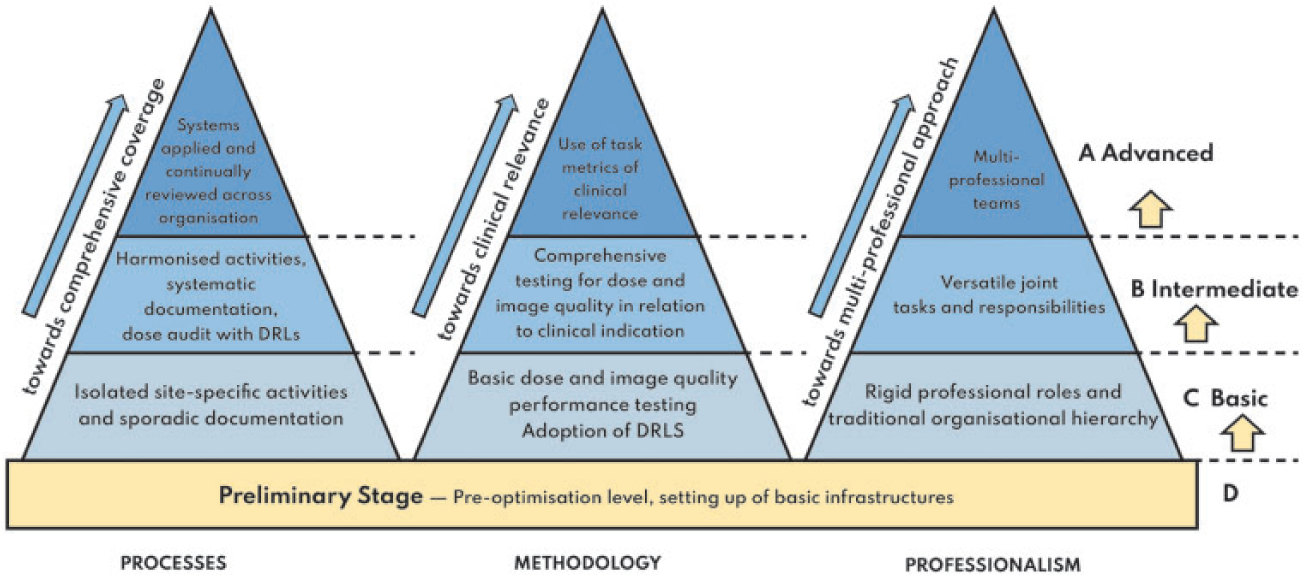

Aspects of methodology that should be in place to achieve different levels of optimisation.

QC, quality control.

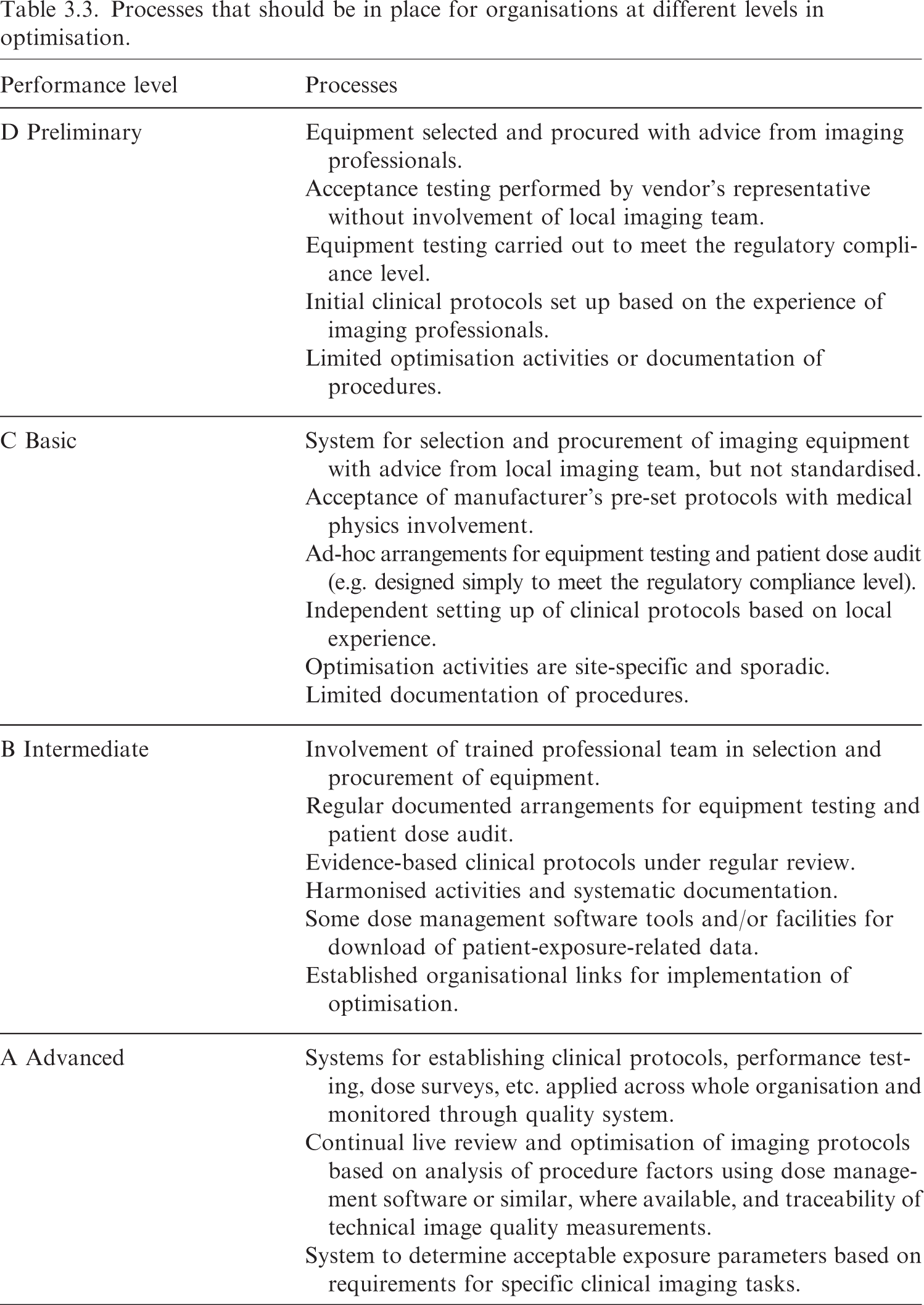

Processes that should be in place for organisations at different levels in optimisation.

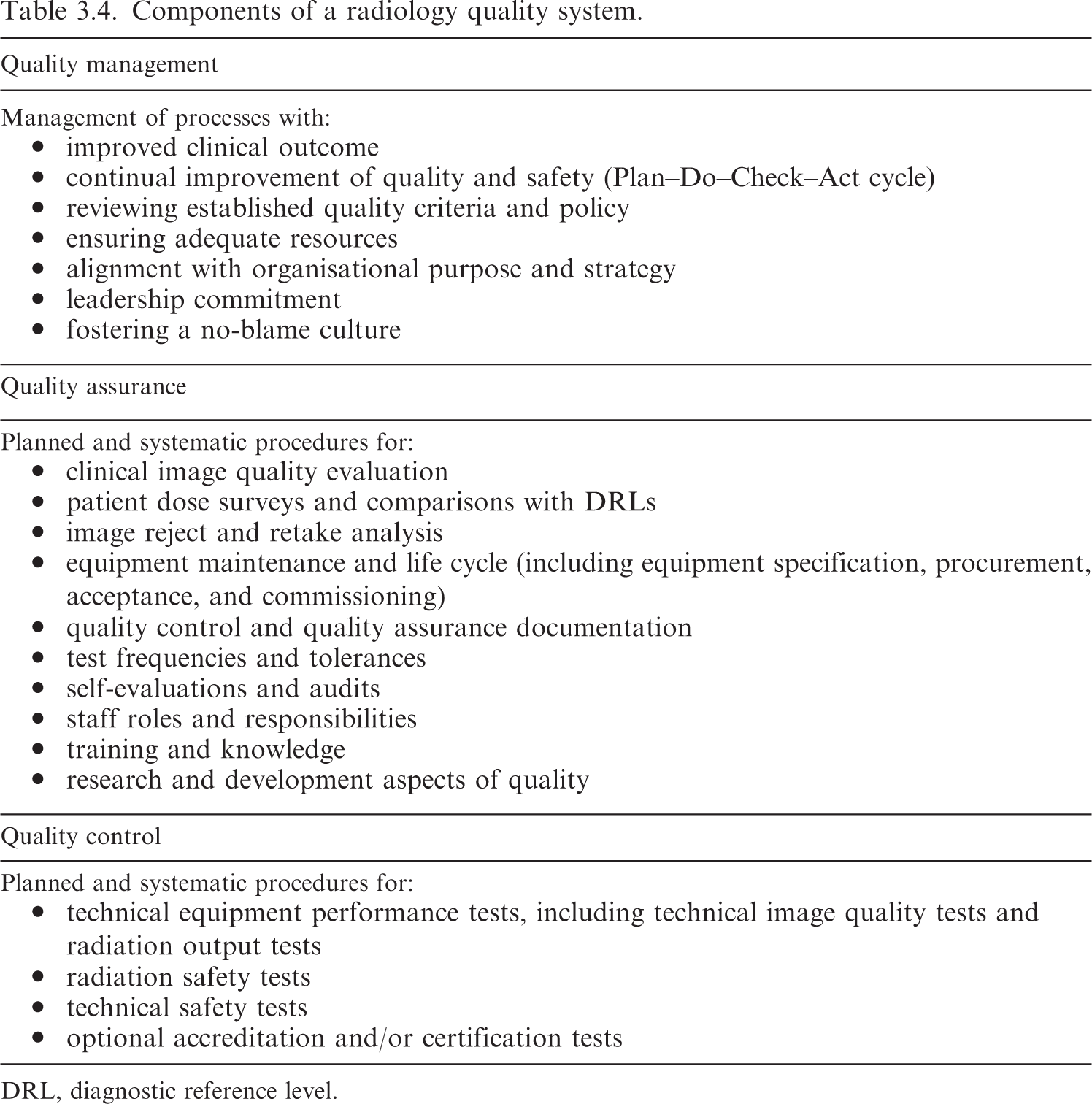

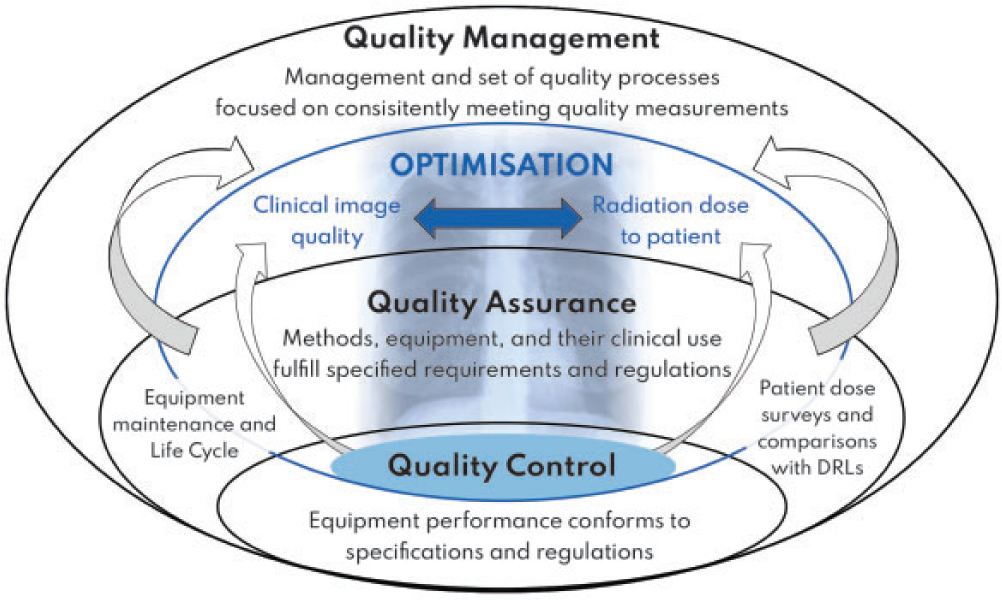

Components of a radiology quality system.

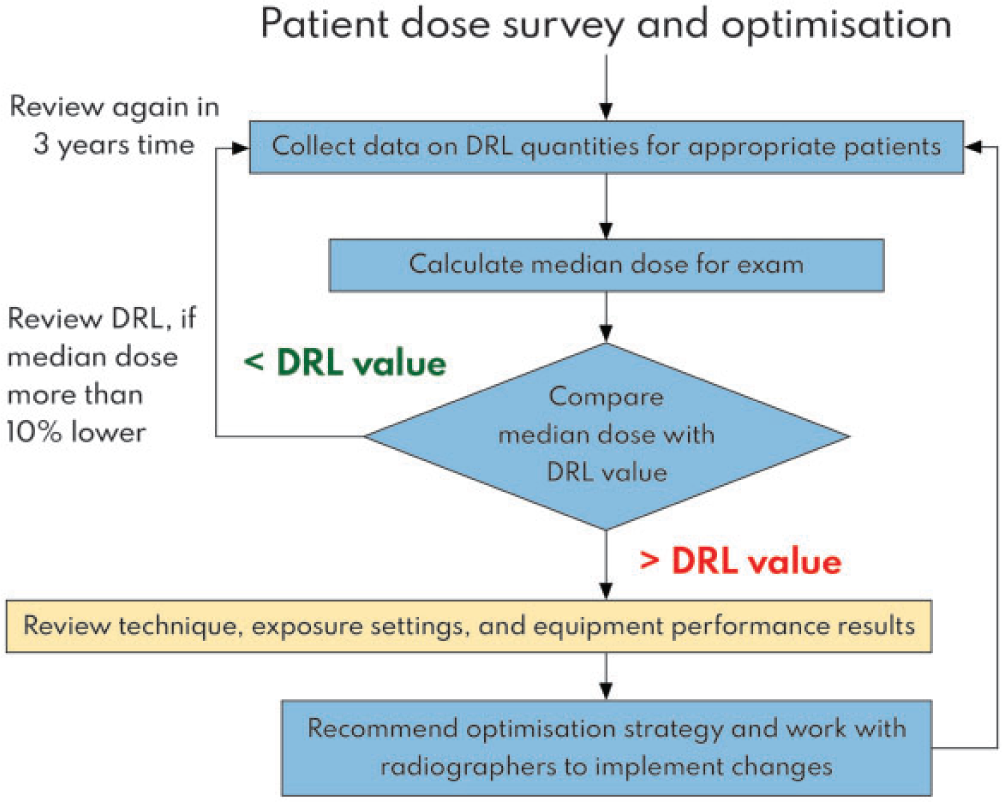

DRL, diagnostic reference level.

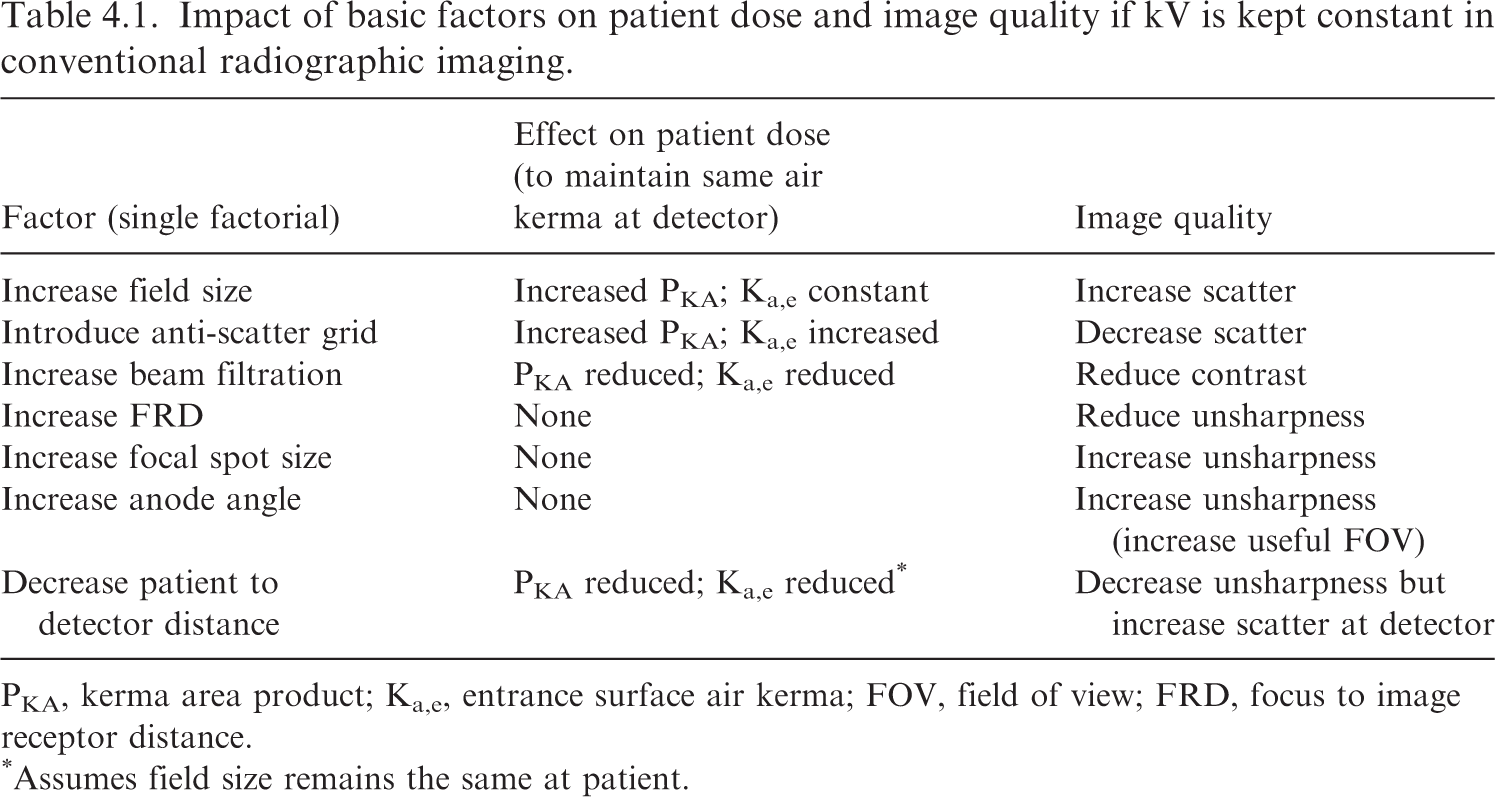

Impact of basic factors on patient dose and image quality if kV is kept constant in conventional radiographic imaging.

PKA, kerma area product; Ka,e, entrance surface air kerma; FOV, field of view; FRD, focus to image receptor distance.

Assumes field size remains the same at patient.

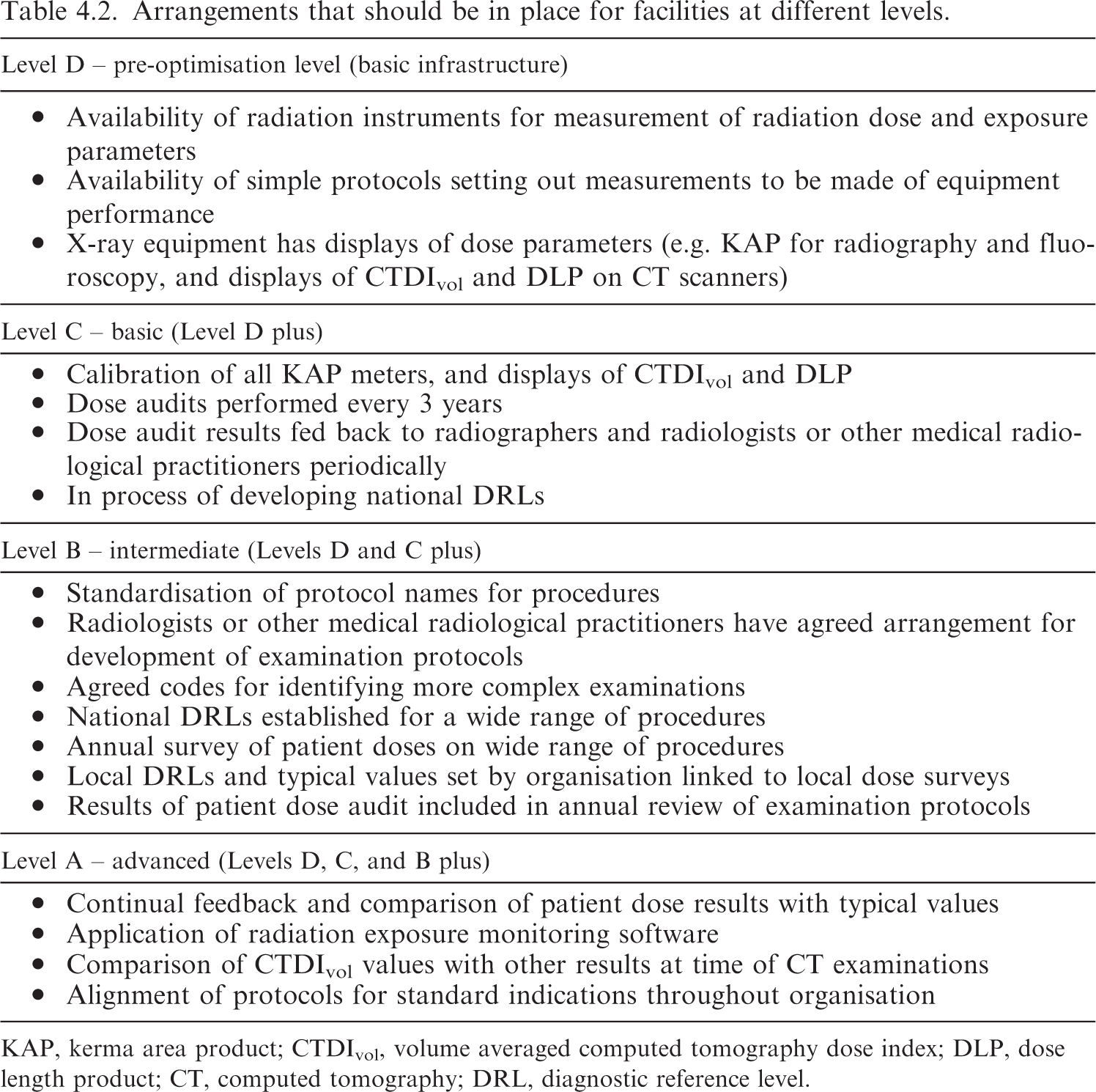

Arrangements that should be in place for facilities at different levels.

KAP, kerma area product; CTDIvol, volume averaged computed tomography dose index; DLP, dose length product; CT, computed tomography; DRL, diagnostic reference level.

3.1. The status of optimisation and the challenges

(72) The areas that need to be tackled first to improve optimisation in any facility depend heavily on the available tools, the technical infrastructure, and the professional expertise available. At the present time, the majority of facilities around the world do not have the necessary tools, teams, or expertise to fully embrace optimisation and take it forward to the same end-point. There are specific concerns when digital imaging equipment is introduced into centres for the first time. The replacement of older equipment with digital equipment often creates a perception that the digital equipment is ‘intrinsically’ better and safer just because it is newer or digital. However, the dose levels could be unreasonably high or too low without anyone realising, because the grey-scale images are scaled based on the recorded data. What is important depends on the available tools and expertise. Lower-income countries with less-developed facilities may not have the capability of making full use of methods that are accessible to them with the existing technical infrastructure and limited availability of multi-professional expertise.

(73) Access to diagnostic imaging facilities enables accurate diagnosis, treatment, management, and optimal outcomes, but this is limited in lower-income and low–middle-income countries, and in some rural parts of high-income countries, due to a lack of adequate resources (DeStigter et al., 2021). However, access to imaging services needs to be developed at every level of the health system. This includes provision of radiographic x-ray equipment for community primary healthcare services, as this is a mainstay for the investigation of common conditions such as pneumonia and fractures in many parts of the world, as well as CT and interventional equipment in specialised hospitals.

(74) This publication attempts to address this range through introduction of a layered approach with resources and activities being added as a radiology facility develops more of the requirements for optimisation (Fig. 3.1). An imaging service would move upwards through Levels D to A as more aspects to improve optimisation are added or achieved. It is hoped that imaging facilities will be able to identify arrangements that they have in place, and use the information provided to prioritise the next step. When a primary care radiography facility is set up, this would be at Level D, and basic prescriptive requirements in terms of staff and equipment would need to be put in place to achieve optimisation at Level C. The majority of established x-ray facilities are likely to be at Levels B and C, and the aim to achieve Level A will require considerable development of multiple aspects of the service. Regardless of level, optimisation requires a continuous process of improvement through a quality dose management programme. The model of level-based imaging optimisation is designed to inform and guide policymakers and radiology managers in prioritising requirements and budgetary decisions.

Illustration showing layers linked to resources and activities in the implementation and development of optimisation within a radiology department. DRL, diagnostic reference level.

3.2. Steps in the development of optimisation

(75) Optimisation depends on a comprehensive set of factors which have to work together in order to reach a continuous and effective process. Continuous improvement and consistency of the outcome do not occur with separate functions in compartmentalised environments. The goals and development steps can be described in terms of three different perspectives or aspects in the order in which they would evolve chronologically:

professionalism (professional skills and collaboration);

methodology (methodology and technology); and

processes (organisational processes and documentation).

(76) This is a development of proposals by Samei et al. (2018). Within each of these areas, there are different levels of performance and optimisation that radiology facilities will have achieved. A combination of multi-professional skills, utilisation of clinically relevant parameters for measurement and evaluation, and integration with organisation-wide processes with continuous monitoring are required to enable an effective optimisation process. Fig. 3.2 sets out broad categories for the system that would be in place to achieve different levels of optimisation.

The three main components in the development and maturation of optimisation. Processes are placed on the left, as once the system has been set up, these would set in motion the performance of other tasks. The levels represent different stages in achievement moving upwards from Level D towards Level A. Level D represents a basic infrastructural level as a prerequisite for initiation of the optimisation process. Levels A, B, and C set out the arrangements that will be in place for each component when that level is achieved. DRL, diagnostic reference level.

(77) The first step is for facilities to evaluate the arrangements that are in place, using this system to identify how much of each component they have in place in order to guide them in decisions about what actions need to be taken. Use of the model can be flexible, in that it might potentially be applied either to x-ray rooms within a single hospital, or to several facilities that come under the management of one organisation. The levels achieved within each component have been labelled Level A – advanced, Level B – intermediate, Level C – basic, and Level D – preliminary for centres that have been set up recently. The layer levels currently fulfilled and those that it is possible for different facilities to achieve will vary by facility type and by country.

(78) Facilities may be at different levels in the three components in Fig. 3.2. For instance, medical physicists may undertake all the compliance testing needed to check that dose and image quality performance is maintained, but communication channels with radiographers and radiologists may be limited, perhaps because testing is done by an external medical physics group, and arrangements may vary from one facility to another within a multi-site organisation. Thus, the levels within the model would be: professional skills, Level C; methodology, Level B; and processes, Level C. Taking another example, there may be a medical physicist based within the radiology department who has regular communication with other specialities, but who is still undergoing training and accumulating experience, has only limited equipment for testing x-ray equipment performance, and is still developing arrangements with other sites. The levels for this organisation within the model would be: professional skills, Level C; methodology, Level C; and processes, Level C, but with the potential to move to Level B in all three components and onward over time.
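A facility's self-assessment against the three components could be recorded in a simple structure such as the minimal sketch below. The class names, field names, and the `lagging_components` helper are hypothetical and are not part of the model itself. In particular, treating the lowest-level components as candidates for attention is only an illustrative assumption, not a rule stated in this publication.

```python
from dataclasses import dataclass
from enum import IntEnum


class Level(IntEnum):
    """Service development levels; a higher value is more advanced."""
    D = 0  # preliminary
    C = 1  # basic
    B = 2  # intermediate
    A = 3  # advanced


@dataclass(frozen=True)
class Assessment:
    """Level reached in each of the three components (hypothetical record)."""
    professionalism: Level
    methodology: Level
    processes: Level

    def lagging_components(self) -> list[str]:
        """Names of the components at the lowest level reached.

        Illustrative heuristic only: the model does not prescribe this rule.
        """
        levels = {
            "professionalism": self.professionalism,
            "methodology": self.methodology,
            "processes": self.processes,
        }
        lowest = min(levels.values())
        return [name for name, level in levels.items() if level == lowest]


# The two examples described in the text above.
external_physics = Assessment(Level.C, Level.B, Level.C)
in_house_trainee = Assessment(Level.C, Level.C, Level.C)

print(external_physics.lagging_components())
print(in_house_trainee.lagging_components())
```

For the first example, the sketch flags professionalism and processes as the components below the level reached for methodology. For the second, all three components are at the same level, so none stands out.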