Abstract

Due to the specific character of the radiological risk, judgements on whether the use of nuclear technology would be justified in society have to consider knowledge-related uncertainties and value pluralism. The justice of justification not only informs the right of the potentially affected to participate in decision making, but also implies the responsibility of concerned actors to give account of the way they rationalise their own position, interests, hopes, hypotheses, beliefs, and concerns in knowledge generation and decision making. This paper characterises the evaluation of whether the use of nuclear technology would be justified in society as a ‘complex social problem’, and reflects on what it would imply to deal with its complexity fairly. Based on this assessment, the paper proposes ‘reflexivity’ and ‘intellectual solidarity’ as ethical attitudes or virtues for all concerned actors, to be understood from a specific ‘ethics of care’ perspective ‘bound in complexity’. Consequently, it argues that there is a need for an ‘interactive’ understanding of ethics in order to give ethical attitudes or virtues a practical meaning in a sociopolitical context, and draws conclusions for the case of radiological risk governance.

1. INTRODUCTION

What do we talk about when we talk about the risk that comes with the use of nuclear energy as an energy technology? In the general case of evaluating a practice or conduct that involves a health risk, we obviously need knowledge about the nature of cause and effect, and the probability that an adverse effect will occur. However, similar to the case of many other risk-inherent technologies with a wider impact on society (such as the use of fossil fuels, food preservatives, or genetically modified organisms), assessment of the risk of nuclear energy, in the sense of the evaluation of its acceptability as a possible threat to our health and the environment, is complicated in two ways. First, in judging whether the risk is acceptable in view of the anticipated ‘benefits’, one has to deal with uncertainty due to incomplete and speculative knowledge. Second, evaluations of nuclear risk are also made with reference to the existence of alternative energy technologies, or with reference to more ‘fundamental’ values such as the value of nature. Both complications are commented on briefly below. 1

In short, the elements of knowledge-related uncertainty, or the ‘epistemic complexity’, to take into account can be summarised as follows.

- The possibility of a nuclear accident [due to technology failure, human error, force majeure (e.g. the earthquake and tsunami in Fukushima), or a combination of those factors].
- The lack of ultimate control of the future behaviour or integrity of a nuclear waste disposal facility.
- Due to the stochastic nature of low dose effects and the fact that health effects such as leukaemia and solid cancer can also have other causes, the prediction of radiation health effects at low doses remains a complex endeavour. What is known of low dose effects can be summarised in three steps:

1. There is evidence of health effects below 100 mSv, which means that it can be said with certainty that the so-called 100-mSv-threshold hypothesis is false. For an in-depth analysis, see Smeesters (2014).
2. Current understanding of mechanisms and quantitative data on dose and time–dose relationships supports the linear-non-threshold (LNT) hypothesis (ICRP, 2005). This insight supports the idea that the LNT hypothesis, first introduced on the basis of the precautionary principle, is the correct hypothesis to maintain.
3. Scientific discussions are ongoing with regard to possible concrete health effects of low doses in concrete situations, such as the discussion on the INWORKS epidemiological study (e.g. Doss, 2015; Leuraud et al., 2015; Richardson et al., 2015), and the discussion on the possibility of thyroid cancer in children in Fukushima (Kageyama, 2015; Tsuda et al., 2016). While serious scientific discussions are taking place, it has to be acknowledged that there is no definite scientific conclusion on the actual manifestation and predictability of these concrete health effects in these concrete situations. This does not mean that specific indications, even if they are preliminary, cannot be used as factors to consider in the evaluation of the acceptability of the concrete risk.

Of course, assessment of the risk of nuclear energy is not a pure knowledge problem. Evaluation of whether a nuclear risk would be socially acceptable is also influenced by ‘external’ value references. These values could serve ‘in favour of’ or ‘against’ nuclear energy, and include:

- the value of nuclear energy as a ‘low-carbon’ energy technology in energy policy concerned with climate change;
- the fact that nuclear technology can be used to develop nuclear weapons;
- the vulnerability of nuclear installations to terrorist attacks;
- the value of alternative energy technologies (taking into account the benefits and potential adverse consequences of their use);
- the value of nature (‘one should not mess around with nature’ – an argument that, in the context of climate change discussions, is now used both against nuclear energy and in its favour); and
- more general values such as ‘sustainability’, ‘justice’, ‘precaution’ and ‘freedom’ (the last in the sense of possibility of self-determination in caring for one’s own health or the environment).

Last but not least, it is known that evaluations of the acceptability of nuclear risk (taking into account knowledge-related uncertainty and external value references) are particularly complicated as they now unavoidably take place in a sociopolitical context that is marked by controversy around the nuclear issue as such. Given that one cannot ‘escape’ history or abstract from it, this controversy is triggered by:

- the accidents that happened;
- the link with the military context;
- the ‘democratic deficit’ with respect to the way in which nuclear energy has been introduced, and in which power plant and waste disposal siting has been undertaken in the past (and is still undertaken at present); and
- the fact that nuclear energy policy is associated with neoliberal global strategies of profit and power driven by large corporations and their political supporters.

Therefore, taking into account the existence of these external value references and the context of controversy, one has to accept that even if we all agreed on the available knowledge to evaluate a nuclear risk, opinions would probably still differ about its acceptability. In these cases of ‘value pluralism’ or ‘moral pluralism’, science may thus inform us (to a certain extent) about the technical and societal aspects of options, but it cannot always instruct or clarify the choice to make.

The above characterisation of the risk of nuclear energy may support the idea that there is no overall evidence that would unequivocally lead to consensus on the unacceptability of the risk of nuclear energy (in view of the potential adverse consequences), nor on its acceptability (in view of its benefit as a low-carbon energy technology). People may have informed, valuable, and serene opinions in favour of or against nuclear energy, but neither of the two camps can ‘prove’ that the other is wrong. As a consequence, as with many other practices that come with a collective health risk, fairness with respect to justifying or rejecting the risk of nuclear energy as such can only relate to how we make sense of it in knowledge generation and decision making. This brings us to a reflection on the idea of ‘fair risk governance’ and how to understand it from the perspective of ethics.

2. ETHICS, FAIRNESS, AND TRUST: THE IDEA OF FAIR RISK GOVERNANCE

What do we talk about when we talk about ethics? Ethics is about being concerned with questions of right and wrong, but there are different ‘levels’ of thinking about these questions. Philosophy identifies ‘meta-ethics’ as that discipline or perspective that deals with concepts of right and wrong (What is rightness? What is goodness?). Next to that, philosophers speak of ‘normative ethics’ as the discipline or perspective that considers the criteria that can be used to evaluate a specific practice or conduct. In that sense, normative ethics thus refers to ‘what ought to be’ in the absence of ‘evidence’ that would facilitate straightforward judgement, consensus, and consequent action. That absence of evidence can relate to the knowledge as well as to the values we may want to use to evaluate a specific practice or conduct.

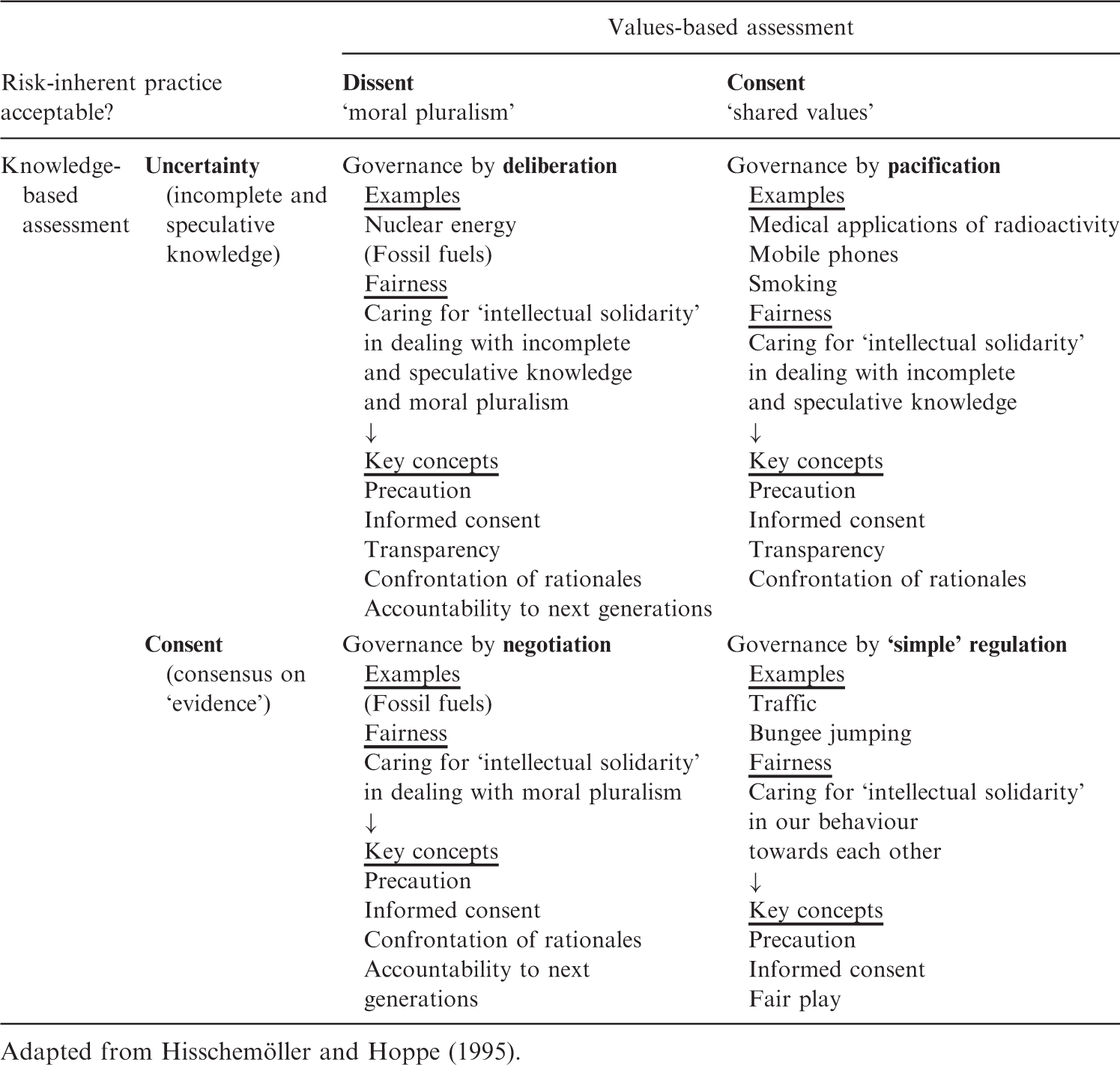

Table 1. Justifying risk – mapping the field.

Adapted from Hisschemöller and Hoppe (1995).

The context of this text does not allow broad elaboration on Table 1, but it shows primarily that the risks of bungee jumping, mobile phones, or nuclear energy are incomparable, as the evaluation of their acceptability depends in different ways on knowledge and values.

The bungee jumper will not ask to see the test procedures of the rope before making a jump. In general, the jumper trusts that these ropes will be safe, but, more importantly, he/she makes the decision to jump on a voluntary basis. Despite the fact that more than one million people die each year in car accidents globally, 2 no reasonable person is advocating a global car ban. Similar to bungee jumping, the key concepts of fairness related to taking the risk are precaution, informed consent, and fair play. In the case of driving a car, precaution not only refers to protection measures such as air bags, but also to the value of driving responsibly. In that case, fair play refers to the idea that one can only hope that other drivers also drive responsibly.

Evaluation of the risk that comes with smoking or the use of mobile phones is what one could call a ‘semi-structured’ or ‘moderately structured’ problem (Hisschemöller and Hoppe, 1995) that can be handled on the basis of ‘pacification’. The reason is that, despite uncertainties that complicate the assessment of those specific risks, 3 people agree to take or allow them on the basis of ‘shared values’. Shared values are thus about those situations wherein relevant but incommensurable values may still exist, but in which we also have the feeling that we all accept or allow a specific ‘risky’ practice in light of a ‘higher’ shared value. This shared value can be a shared practical benefit (such as in the case of mobile phones), but also a specific freedom of choice ‘to hurt yourself’ in view of a personal benefit, taking into account that this behaviour should not harm others (such as in the case of smoking). With reference to Table 1, one could say that fairness is thus the way we care for ‘intellectual solidarity’ in dealing with incomplete and speculative knowledge, and the key concepts of fairness in this sense are precaution, informed consent, transparency (with respect to what we know and do not know, and with respect to how we construct our knowledge), and our joint preparedness to give account of the rationales we use to defend our interests (‘stakes’). Due to the uncertainties that complicate the assessment, protection measures are essentially inspired and supported by the precautionary principle. In the case of mobile phones, this principle translates as the recommendation to use them in a ‘moderate way’ and the recommendation to limit the use by children. For smoking, it translates as anti-smoking campaigns towards (potential) smokers (with special attention to young people), and as measures to protect those ‘passively involved’ (the passive smoker).
Given the awareness of the addictive character of smoking, additional measures are gradually adopted to ‘assist’ smokers who want to quit. In a similar sense, evaluating the risk coming with the use of radiation in a medical context can also be called ‘governance by pacification’. The value of informed consent remains central and also applies to the close relations of the patient (family members), but essentially all agree that the patient takes the risk of a delayed cancer (due to diagnosis or therapy) in light of a ‘higher’ benefit (information about a health condition or the hope that the actual cancer will be cured, respectively).

In contrast to complex problems that are handled on the basis of ‘pacification’, justifying or rejecting nuclear energy seems to be an unstructured problem that will always need deliberation. Not only do we need to deliberate the available knowledge and its interpretation, but deliberation will also need to take into account the various ‘external’ values that people find relevant to judge this case, and the arguments that they construct on the basis of these values. Therefore, the fairness of evaluation relates to ‘intellectual solidarity’ in dealing with incomplete and speculative knowledge, but also in dealing with moral pluralism. The key criteria are again precaution, informed consent, transparency, and (the preparedness for a) confrontation of rationales, now completed with a sense of accountability towards those who cannot be involved in the evaluation (next generations). In comparison with nuclear energy, the evaluation of the risk that comes with the use of fossil fuels is a complex problem that, in principle, can be treated on the basis of ‘consent on causality’. The Intergovernmental Panel on Climate Change (2014) states in its Fifth Assessment Report that ‘… human influence on the climate system is clear… and that… warming of the climate system is unequivocal, and since the 1950s, many of the observed changes are unprecedented over decades to millennia. The atmosphere and ocean have warmed, the amounts of snow and ice have diminished, and sea level has risen…’. Despite this evidence of a ‘slowly emerging adverse effect’, the assessment of whether concrete droughts or storms can be attributed to human-induced climate change, or what the concrete effect of specific mitigation or adaptation policies would be, remains troubled by knowledge-related uncertainty.
Therefore, the use of fossil fuels is a complex problem that requires ‘deliberation’, and the key concepts of fairness remain the same as for the evaluation of nuclear energy: precaution, informed consent, transparency, confrontation of rationales, and accountability to next generations.

The discussion of Table 1 allows three reflections related to ethics, fairness, and trust to be made in relation to risk governance. Obviously, these reflections are based on the author’s specific understanding of risk assessment in relation to fairness, and are therefore presented as a list of ideas that are open to discussion.

I. The assessment of what is an acceptable health risk for society is not a matter of science; it is a matter of justice.

I.a. Health risk is not a mathematical formula: it is a potential harm that you cannot completely know and cannot fully control, but that you eventually want to face in light of a specific benefit. People will accept a risk that they cannot completely know and that they cannot fully control simply when they trust that its justification is marked by fairness. Fairness relates primarily to the value of precaution, but also to the possibility of self-determination (‘informed consent’).

I.b. Despite the differences between the cases discussed, they can all be characterised in relation to one idea with respect to self-determination: the idea that ‘connecting’ risk and fairness is about finding ground between ensuring people the right to be protected on one hand, and the right to be responsible themselves on the other hand. The right to be responsible leans thereby on the prime criterion of the right to have information about the risk and the possibility of self-determination based on that information, but one has to consider that, in a society of capable citizens, self-determination with respect to risk taking can have two opposing meanings: it can translate as the right to co-decide in the case of a collective health risk (as in the case of nuclear energy), but also as the freedom to hurt yourself in the case of an individual health risk (as in the case of smoking or bungee jumping).

I.c. For any health risk that comes with technological, industrial, or medical practices and that has a wider impact on society, ‘the right to be responsible’ equals ‘the right to co-decide’. Enabling this right is a corollary of justice.

II. Societal trust in the assessment of what is an (un)acceptable collective health risk for society should be generated ‘by method instead of proof’.

II.a. With respect to nuclear energy, no scientific or political authority can determine alone whether the risk would be an acceptable collective health risk for society. Good science and engineering, open and transparent communication, and the ‘promises’ of a responsible safety and security culture would be necessary conditions, but they can never generate societal trust in themselves. The reason is that there will always be essential factors beyond full control: nature, time, human error, misuse of technology, etc.

II.b. The fact that people take specific risks in a voluntary way and often based on limited information may not be used as an argument to impose risks on them that might be characterised as ‘comparable’ or even less dangerous. That principle applies even in the extreme. For example:

- the fact that the risk of developing cancer from smoking may be ‘higher’ than that from low-level radiation may not be used as an excuse to impose a radiation risk on people; and
- the fact that a nuclear worker may voluntarily accept an accumulated occupational dose of 20 mSv y⁻¹ may not be used as an argument to justify a citizen’s dose of 1 mSv y⁻¹ originating from a nuclear technology application without asking for his/her informed consent.

III. Fair risk governance is risk governance of which the method of knowledge generation and decision making is trusted as fair by society. When the method is trusted as fair, that risk governance also has the potential to be effective, as the decision making will also be trusted as fair by those who would have preferred another outcome. Dealing with the complexity of risk assessment and justification in a fair way requires new governance methods.

III.a. Is fair risk governance with respect to collective health risks as characterised above possible today? In other words, do the methods we use to produce policy-supportive knowledge and to make political decisions have the potential to enable ‘the right to co-decide’ (as a principle of justice) and to generate trust by their method instead of by their potential or promised outcome? The short answer is no. Meskens (2015a) argued in depth why and how the ‘governance methods’ we use today to make sense of the complexity of assessment and justification of typical collective health risks remain driven by the doctrine of scientific truth and the strategies of political ‘positionism’ and economic profit. As the context of this text does not allow deeper reflection on this general argument, the following reflections are restricted to the case of nuclear energy in the context of energy governance.

III.b. For the case of nuclear energy in particular, Meskens (2013) argued that, because of the doctrinal working of science and the strategies of political ‘positionism’ and economic profit, the issue of nuclear energy is now locked in a comfort of polarisation that does not only play out in public discourse but that is deeply rooted in the working of science, politics, and the market. As a result, in sharp contrast with the way that fossil fuel energy technologies are now the subject of global negotiations driven by the doom of climate change, nuclear energy technology continues to ‘escape’ a deliberate justification approach as an energy technology on a transnational level.

III.c. The question of whether one is ‘in favour of’ or ‘against’ nuclear energy remains meaningless if not integrated in a consensus framework for energy governance as such. In principle, that consensus framework is possible, as it would follow three policy principles with which most people would easily agree. These principles are (in this specific order):

1. the policy principle to minimise energy consumption (or thus to maximise energy savings) through democratic deliberation on its practical implementation;
2. the policy principle to maximise renewables through democratic deliberation on its practical implementation; and
3. the policy principle to organise a fair debate on how to produce the energy that cannot yet be covered by (1) and (2), and to ‘confront’ fossil fuels and nuclear energy, being the two ‘nasty’ risk-inherent energy technologies, with each other.

Democracy in this sense implies that a society would need to be able to decide on how to produce ‘the rest’ of its energy required for the future: with nuclear energy, with fossil fuels, or with a combination of both. In line with the reasoning above, a fair method of decision making would, in this context, be a method that all concerned would sense as fair in itself, regardless of whether the decision making would result in the acceptance or the rejection of the use of nuclear energy or fossil fuels. The fact that we are in a historically evolved situation where nuclear energy and fossil fuels are present while there have never been real democratic debates on their introduction cannot be used as an excuse not to organise this type of debate now. While it is true that, in terms of their adverse effects, nuclear energy and fossil fuels are ‘incomparable’, that additional complexity would not prevent a democratic society from making informed and deliberate decisions on them.

Although we do not live in a world where politics, science, and the market would be prepared to engage in deliberation that would put policy principles (1) and (2) upfront, and that would take principle (3) seriously, we have the capacity to put that deliberation into practice. Justice with regard to how a specific collective health risk, such as the risk of nuclear energy or fossil fuels, is evaluated in society remains the central ethical principle, and that ethical principle translates in practice as the need for transdisciplinarity and civil society’s participation in scientific debate, and the need for participation of the potentially affected in democratic decision making.

3. THE BIGGER PICTURE: THE IDEA OF DEALING FAIRLY WITH COMPLEXITY

3.1. A neutral characterisation of complexity (of complex social problems)

It has now become trivial to say that we live in a complex world. Industrialisation, technological advancement, population growth, and globalisation have brought ‘new challenges’, and the global political agenda is now set by issues that burden both our natural environment and human well-being. Sketching what goes wrong in our world today, the picture does not look very bright: structural poverty; expanding industrialisation and urbanisation, and consequent environmental degradation; waste of precious resources, water, food, and products; adverse manifestations of technological risk; economic exploitation; anticipated overpopulation; and derailed financial markets. All of this adds to old and new forms of social, political, and religious oppression and conflict, and makes the world a difficult place to live for many people. The stakes are high and the need to take action is manifest.

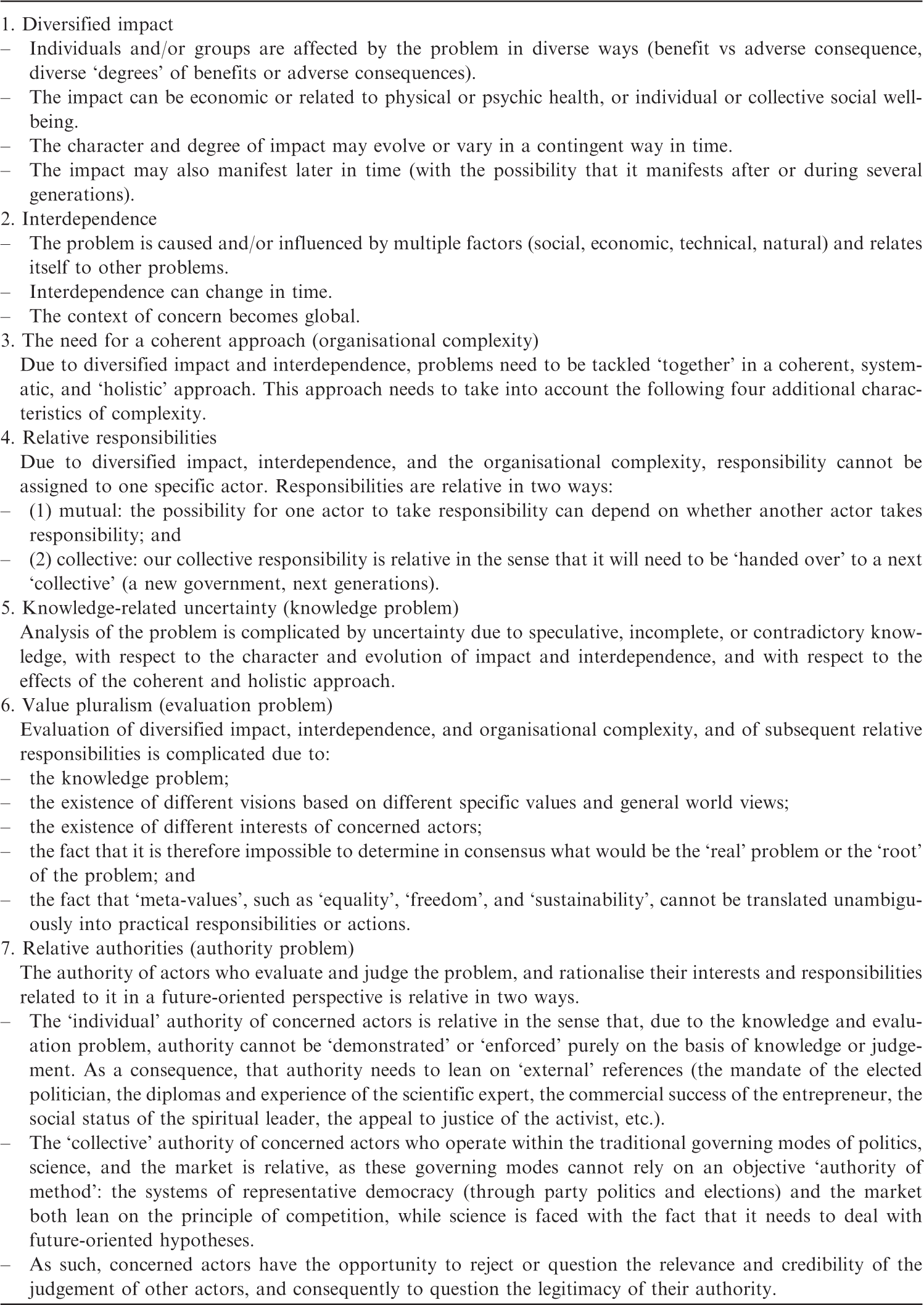

Table 2. Seven characteristics of a complex social problem.

This text does not want to propose a manual, procedure, or instrument to solve complex social problems. Rather, the characterisation of complexity is meant as an incentive and a basis for ethical thinking, as it opens the possibility to reflect on what it would imply to ‘deal fairly with the complexity’ of those specific social problems, and of the organisation of our society accordingly. The possibility of doing so lies in the fact that the characterisation of complexity in the form of the seven proposed characteristics can be called a ‘neutral’ characterisation, in the sense that it does not specify wrongdoers and victims as such (which, of course, does not mean there cannot be any). Representing the complexity as a complexity of interpretation enables the responsibility ‘in the face of that complexity’ to be described as a joint responsibility that is, as such, not divisive, which means that, in principle, it provides the possibility of rapprochement.

This joint responsibility ‘in the face of complexity’ has, at the same time, a binding and a liberating character for all concerned. Regarding the binding character, although nobody is blamed or suspected of reckless behaviour or of escaping responsibility, one could say that the characterisation of complexity is imperative for all concerned. First of all, any reflection on what it would imply to deal fairly with the complexity of the problem at stake would imply the need for each concerned actor to transcend the usual thinking in terms of their own interests, and the preparedness to become ‘confronted’ with the way he/she rationalises their own interests within the bigger picture. At the same time, due to the knowledge and evaluation problem, every concerned actor would need to acknowledge his/her specific ‘authority problem’ in making sense of the complexity of that problem, taking into account that not only the way he/she rationalises the problem as such, but also the way he/she rationalises his/her own interests, the interests of others, and the general interest in relation to that problem is simply relative. That relativity is reciprocal, in the sense that nobody can claim higher authority based on a deeper understanding of the problem that would lead to a view on the ‘solution’ that all others concerned would simply need to accept. This reasoning provides us with the possibility to argue that joint responsibility is not only binding but also liberating: as the authority of all concerned actors is relative in relation to the authority of others, it implies that all actors have the right to participate in making sense of the problem, and the right to co-decide on possible solutions to that problem.

The context of this text does not allow deeper reflection on the character of complexity as described above, and on how it can be ‘translated’ into characteristics of concrete complex social problems such as those referred to above. More important here is the focus on an ethical framework that would follow from the general and neutral characterisation of complexity of complex social problems, and on how that framework can inspire the new governance methods that would, as emphasised previously, enable ‘confrontation of rationales’ and ‘the right to co-decide’ in particular.

3.2. Ethics of care for our modern co-existence

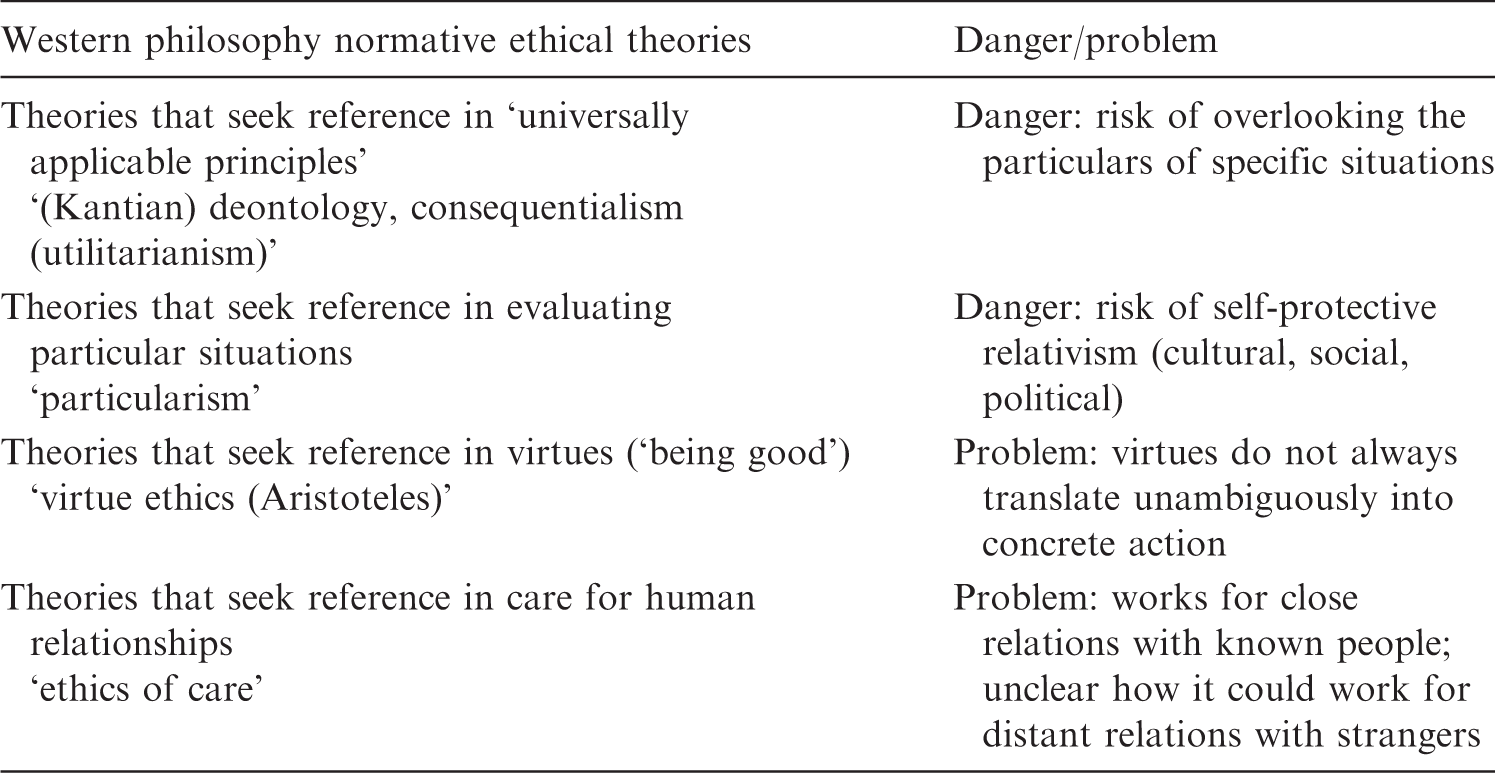

Table 3. Four types of normative reference in the normative ethical theories of Western philosophy.

Table 3 can now be used as a backdrop for the formulation of a specific virtue ethics and ethics of care theory that could guide evaluation and action ‘in face of complexity’ in the context of complex social problems, as characterised above. The idea is that these theories would not face the traditional problems formulated above, as they do not aim to instruct concrete practical action of concerned actors, but rather inspire specific modes of reflective and deliberative interaction among them. The two theory proposals will be discussed briefly below.

3.2.1. Reflexivity and intellectual solidarity as ethical attitudes or virtues

Recalling the previous considerations on what it would imply to ‘deal fairly’ with the complexity of complex social problems, we could say that the joint responsibility of all concerned can be rephrased as the joint preparedness to adopt a specific responsible attitude or to foster a specific virtue ‘in face of complexity’. That responsible attitude or virtue is identical for all concerned actors (be it the scientist, the politician, the engineer, the manager, the entrepreneur, the expert, 4 the civil society representative, the activist, or the citizen), and can be described in a three-fold manner.

1. The preparedness to acknowledge the complexity of complex social problems and the organisation of our society as a whole.
2. (Following 1) The preparedness to acknowledge the imperative character of that complexity, or thus to acknowledge one’s own authority problem (in addition to the knowledge and evaluation problem) in making sense of that complexity; that preparedness can be reformulated for each concerned actor as the preparedness to see ‘the bigger picture and oneself in it’, each with his/her specific interests, hopes, hypotheses, beliefs, and concerns.
3. (Following 2) The preparedness to seek rapprochement with other concerned actors, and this through specific advanced formal interaction methods in research, politics, and education that would enable sense to be made of that complexity.

The three-fold preparedness suggested here can be considered as a ‘concession’ to the complexity as sketched above, and it may be clear that, with these reflections, we now enter the area of ethics. A first simple but powerful insight in that sense is the idea that if nobody has the authority to make sense of a specific problem and of consequent solutions, then concerned actors have nothing other than each other as equal references in deliberating that problem. In his book ‘The Ethical Project’, the philosopher Philip Kitcher makes a similar reflection by saying that ‘there are no ethical experts’ and that, therefore, authority can only be the authority of the conversation among the concerned actors (Kitcher, 2014). From the perspective of normative ethics, we can now (in a metaphorical way) interpret the idea of responsibility towards complexity as if that complexity puts an ‘ethical demand’ on every concerned actor, in the sense of an appeal to adopt a reflexive attitude in face of that complexity. That reflexive attitude would not only concern the way each actor rationalises the problem as such, but also the way he/she rationalises his/her own interests, the interests of others, and the general interest in relation to that problem.

The responsible attitude considered here can thus be described as a reflexive attitude in face of complexity, and, as a concession towards that complexity, that attitude can now also be called an ‘ethical attitude’ or virtue. However, given that the responsibility as suggested above would also imply rapprochement among concerned actors, one can understand that, in practice, this ethical attitude needs to be adopted in public, and that one needs specific formal interaction methods to make that possible. The joint preparedness for ‘public reflexivity’ of all concerned actors would enable a dialogue that, unavoidably, will also have a confrontational character, as every actor would need to be prepared to give account of his/her interests, hopes, hypotheses, beliefs, and concerns with respect to the problem at stake. That joint preparedness can be described as a form of ‘intellectual solidarity’ as, in arguing about observable unacceptable situations (e.g. extreme poverty), perceived worrisome situations or evolutions (e.g. climate change or population growth), or practices or proposed policy measures with a potentially controversial character (e.g. the use of nuclear energy, genetically modified organisms, or a tax on wealth), concerned actors would need to be prepared to reflect openly towards each other and towards ‘the outside world’ about the way they not only rationalise the problem as such, but also their own interests, the interests of others, and the general interest in relation to that problem. Similar to understanding reflexivity as an ethical attitude or virtue, one can understand the sense of intellectual solidarity as an ethical attitude or virtue, and one could say that the second should and could be ‘stimulated’ by the first.
In other words, a sense of intellectual solidarity implies reflexivity as an ethical attitude with respect to the own position, interests, hopes, hypotheses, beliefs, and concerns, and this in any formal role or social position (as scientist, politician, engineer, manager, entrepreneur, expert, civil society representative, activist, citizen, etc.). It is important to emphasise that intellectual solidarity is not some high-brow elite form of intellectual cooperation. It simply denotes our joint preparedness to accept the complexity of co-existence in general and of specific complex social problems in particular, and the fact that no one has a privileged position to make sense of it all. Intellectual solidarity, as an ethical commitment, is the joint preparedness to accept that we have no reference other than each other.

3.2.2. An ethics of care, ‘bound in complexity’

Section 3.2.1 elaborated on the meaning of reflexivity and (a sense of) intellectual solidarity as ethical attitudes or virtues, and on the need to adopt these attitudes or to foster these virtues because of complexity. In addition to that, it is possible to develop an ethical theory on how to deal fairly with the complexity of complex social problems based on the simple insight that we are all bound in that complexity. The idea that ‘we are all in it together’ informs the view that we should care for our relations with each other, in the sense that we need each other to make sense of complex social problems such as climate change, and to tackle them.

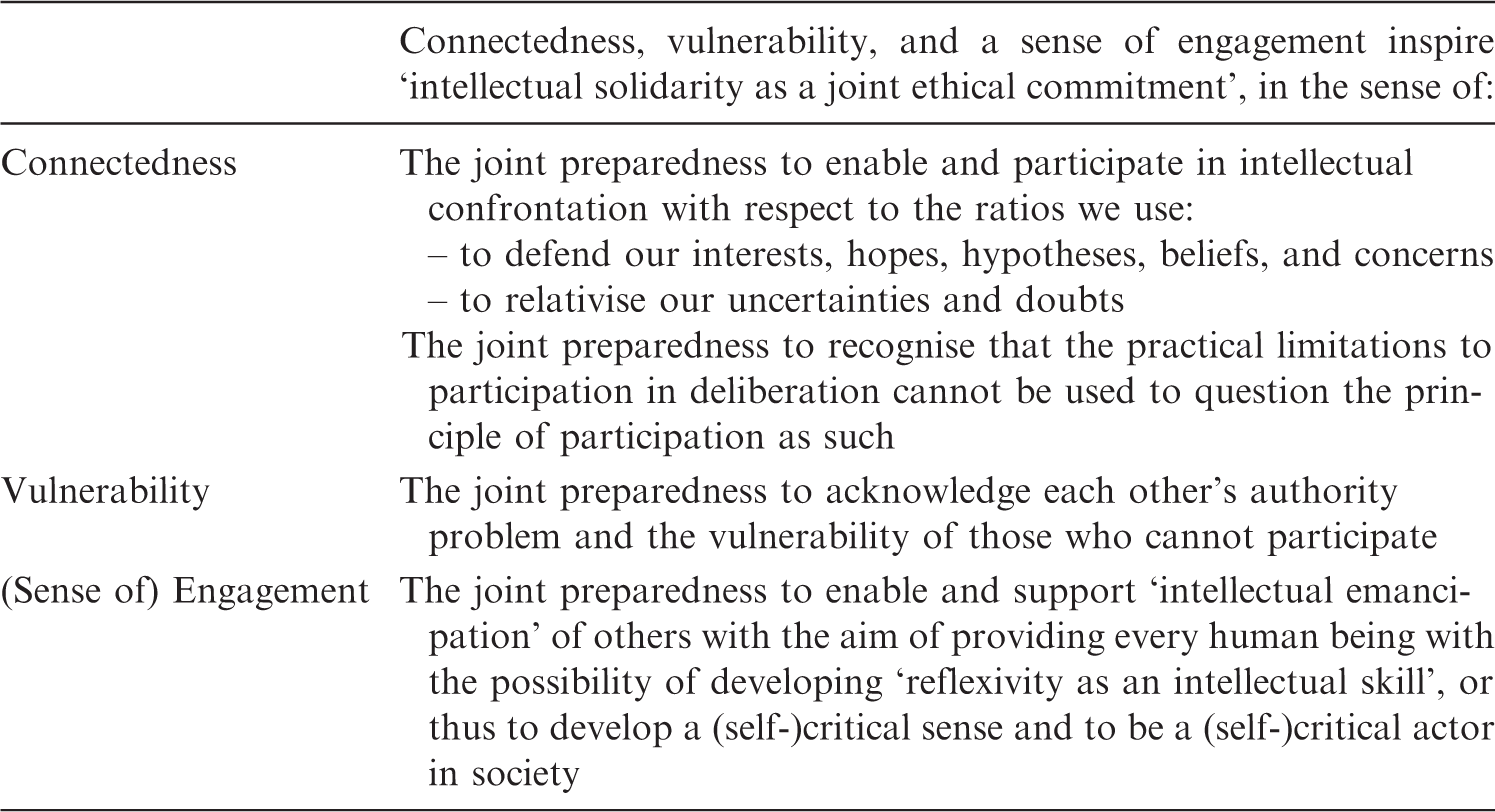

In short, the characterisation of complexity as sketched above enables a formulation of an ethics of care that could work for our distant relationships with strangers. The basic idea is that the ‘fact of complexity’ brings along three new characteristics of modern co-existence that can be named ‘connectedness’, ‘vulnerability’, and ‘sense of engagement’. Their meaning in relation to the complexity of complex social problems can be summarised as follows:

Connectedness. We are connected with each other ‘in complexity’. We can no longer escape or avoid it. Fair dealing with each other implies fair dealing with the complexity that binds us.

Vulnerability. In complexity, we have become intellectually dependent on each other while we face our own and each other’s ‘authority problem’. We should care for the vulnerability of the ignorant and the confused, but also for that of ‘mandated authority’ (such as that of ‘the scientific expert’, ‘the teacher’ or ‘the elected political representative’). Last but not least, we should care for the vulnerability of those who cannot be involved in joint reflection and deliberation at all. Obviously, without wanting to make evaluative comparisons between them, these can be identified as the next generations, but also as those among us who are intellectually incapable of joining (animals, children, and humans with serious mental disabilities).

(Sense of) Engagement. Our experiences now extend from the local to the global. As intelligent reflective beings, becoming involved in deliberating issues of general societal concern has become a new source of meaning and moral motivation for each one of us.

Intellectual solidarity as an ethical attitude or virtue in an ethics of care framework.

3.3. Enabling virtues: intellectual solidarity in democracy, science, and education

Moving now from normative ethical thinking to applied ethical thinking, the advanced formal interaction modes to enable reflexivity and a sense of intellectual solidarity referred to above can be given a name and a practical meaning. Taking into account the knowledge problem and the evaluation problem as the central characteristics of the complexity of complex social problems, reflexivity and intellectual solidarity as public ethical attitudes or virtues would need to inspire the method used to generate knowledge about these problems, and the method used to negotiate and make decisions related to them accordingly. So the question becomes, in what way could these virtues inspire the practice of research and decision making?

With the presentation of virtue ethics as one of the four traditional theories of ethics (of Western philosophy), it was noted that the problem with virtue ethics as a theory of normative reference is that virtues do not always translate unambiguously into concrete action. First of all, virtues such as being ‘good’, ‘honest’, or ‘prudent’ obviously need to be considered in a practical context or situation in order to understand their practical meaning. However, even then, different virtues can come into conflict with each other, or acting from the perspective of one virtue can be complicated because of the existence of conflicting values to take into account. To give one example, what would it imply for a medical expert to be ‘prudent’ in advice with respect to mammography campaigns? Would it mean intensifying them in order to detect more breast cancers, or restricting them in order to limit the possibility of false-positive results and the risk of radiation?

In the same perspective, it is true that neither reflexivity nor (a sense of) intellectual solidarity can unambiguously inspire concrete action of concerned actors, but they can inspire interaction methods to enable and enforce them as virtues in the interest of meaningful dialogue. The following reasoning may clarify this. The previous section stated that a sense of intellectual solidarity implies reflexivity as an ethical attitude with respect to one’s own position, interests, hopes, hypotheses, beliefs, and concerns, and this in any formal role or social position (as scientist, engineer, politician, manager, medical doctor, citizen, civil society representative, activist, etc.). However, one may understand that the ability to adopt this attitude requires reflexivity as an ‘intellectual skill’, seeing the bigger picture and yourself in it (with your interests, hopes, hypotheses, beliefs, and concerns). The important thing is that reflexivity as an intellectual skill may benefit from solitary reflection, but it cannot be ‘instructed’ or ‘taught’. Neither can it be ‘enforced’ or ‘stretched’ in the same way as one can do with transparency in a negotiation or deliberation setting. For all of us, reflexivity as an intellectual skill essentially emerges as an ethical experience in interaction with others. That interaction may be informal, but it may be clear that the meaningful and ‘logical’ interactions in this sense are to be organised in the formal practices of scientific research, political deliberation, and education. In the interest of keeping this text concise, a brief comment on how this can be understood is given below.

As the challenge of science in making sense of complex social problems is no longer the production of credible proof but the construction of credible hypotheses, reflexivity and intellectual solidarity as ethical attitudes inspire the need to engage in advanced methods that are self-critical and open to visions from outside the traditional disciplines of science. In other words, knowledge to advise policy would need to be generated in a ‘transdisciplinary’ and ‘inclusive’ way, or thus as a joint exercise of problem definition and problem solving with input from the natural and social sciences, and the humanities as well as from citizens and informed civil society. An advanced method of political negotiation and decision making inspired by the ethical attitudes of reflexivity and intellectual solidarity would be a form of ‘deliberative democracy’ that sees deliberation as a collective self-critical reflection and learning process among all concerned, rather than as a competition between conflicting views driven by self-interest. Political deliberation liberated from the confinement of political parties and nation states, and enriched with opinions from civil society and citizens, and with well-considered and (self-)critical scientific advice would have the potential to be fair in the way it would enforce actors to give account of how they rationalise their interests from strategic positions, but also in the way it would enable actors to do so from vulnerable positions. It would be effective as it would have the potential to generate societal trust based on its method instead of on promised outcomes. While the utopian picture sketched here would imply a total political reform on all levels, intellectual solidarity can already open up old political methods for the good of society. At both local and global levels, politicians could organise public participation and deliberation around concrete issues, and engage in taking the outcome of that deliberation seriously. 
Last but not least, there is the need for a new vision of education. Fair dealing with complex social problems needs an education that cares for ‘critical-intellectual capacity building’. It would be naïve to think that scientists, politicians, engineers, entrepreneurs, managers, experts, activists, or citizens will adopt the ethical attitudes of reflexivity and intellectual solidarity simply on request. The preparedness of someone to be reflexive about his/her own position and related interests, hopes, hypotheses, beliefs, and concerns can be called ‘moral responsibility’, but it essentially leans on the capability to do so. Insight into the complexity of our co-existence in general and into our complex social problems in particular, and an understanding of the ethical consequences for politics, science, and the market, need to be stimulated and fostered in basic and higher education. Education should move beyond 19th-century disciplinary approaches and cultural and religious comfort zones, and should become pluralist, critical, and reflexive in itself. Instead of educating young people to function optimally in the strategic political, cultural, and economic orders of today, education should give them the possibility to develop as cosmopolitan citizens with a (self-)critical mind and a sense for ethics in general and for intellectual solidarity in particular.

An ethics of care perspective on our modern co-existence ‘bound in complexity’ provides a powerful reference to defend the value of (and the need for) these advanced interaction methods. Taken together, and recognising the meaningful relations between them, the advanced approaches to education, research, and decision making presented above not only enable and stimulate reflexivity and intellectual solidarity based on their discursive potential, but also provide the possibility to generate societal trust through the way they work. The societal trust considered here is not the trust that the outcome of deliberation will be the ‘correct one’, but the trust that its method has the potential to be judged as fair by everyone in consensus, given the complexity of the problem.

So what is the real problem with living in a complex world? Whether we speak of clearly observable unacceptable situations (such as extreme poverty), perceived worrisome situations or evolutions (such as climate change or population growth), or practices or proposed policy measures with a potentially controversial character (such as the use of nuclear energy, genetically modified organisms, or a tax on wealth), we can say that our social challenges have become more complex. The real trouble with these challenges is not their complexity as such, but the traditional governance methods we use to make sense of them in politics, science, and the market. The idea is that these methods, inherited from modernity, are no longer able to ‘grasp’ the complexity of these social problems. Meskens (2015a) argues in depth about why and how these traditional governance methods are not inspired by reflexivity as an ethical attitude and intellectual solidarity as an ethical commitment, driven as they still are by the doctrine of scientific truth and the strategies of political ‘positionism’ and economic profit. On the other hand, it may be clear that we do not need deep utopian reform of our society to make research transdisciplinary and inclusive, and to make education pluralist, critical, and reflexive. Even in the old modes of political conflict, steered and limited by party politics and nation state sovereignty, it is possible in principle to organise public and civil society participation in deliberation around concrete issues, and to take the outcome of that deliberation seriously. So, although we do not live in a society inspired by intellectual solidarity, we have the capacity to foster it and to put it into practice.

4. CONSEQUENCES FOR RADIOLOGICAL RISK GOVERNANCE

In light of the previous reflections, we can now characterise the evaluation of the eventual use of nuclear technology in society as a complex social problem that requires fair dealing with its complexity, in the energy application context as well as in the medical application context. As a conclusion to this text, we may now formulate a set of considerations on how all this applies to radiological risk governance.

4.1. Importance of considering different neutral application contexts

Although Chapter 1 indicated a difference between the energy and medical application context in terms of fairness with respect to dealing with knowledge-related uncertainty (due to incomplete and speculative knowledge) and value pluralism, one could call the problem a complex problem in both cases because its complexity follows the seven characteristics proposed above, and a complex social problem because it concerns the whole ‘range’ of relevant social actors in that application context.

It is true that, from a societal perspective, one could call the nuclear energy problem ‘more complex’ than the use of nuclear technology in the medical context, but the essential message here is that a comparison between both technology applications is meaningless in terms of fairness of justification. One has to acknowledge that ethics for the medical and nuclear energy application contexts lean on the same principles, but also that they mean different things in practice when it comes to ensuring participation and informed consent of potentially affected persons. Therefore, in any discourse related to risk justification, a joint preparedness to evaluate the eventual use of nuclear technology in its neutral application context (being the energy or medical context) is a principle of intellectual solidarity in itself.

From a different perspective, one can say that assessment of the justice of justification of a radiological risk is meaningless in the absence of a context of application. Consequently, in terms of applied ethics, it is rather meaningless to speak of ‘radiological protection’, ‘radiological risk management’, or ‘radiological risk governance’ without naming a specific nuclear technology, or without specifying the societal context of the nuclear technology application. Even more, it becomes meaningless to speak of ‘risk management’ or ‘risk governance’ as such, as, within these distinct application contexts, the radiological risk becomes a concern if and only if those involved jointly agree to consider the eventual use of the nuclear technology in the service of ‘the higher good’ (the eventual use of nuclear energy in energy governance, the eventual use of nuclear technology in health care).

4.2. Enabling virtues in radiological risk governance

Reflections on ethics in relation to radiological protection 5 to date have largely focussed on virtue ethics. They logically and reasonably follow from the question of what it would imply to be ‘responsible’ or ‘good’ as a scientist, manager, policy advisor, medical doctor, or regulator. The considerations made in this paper may now support the argument that ethical thinking in relation to radiological risk governance in general, and with respect to consequences for the radiological protection system in particular, requires broader reflection than traditional virtue ethics alone, and that it should be completed with ethical reflection with regard to the potentialities and ‘hindrances’ that characterise the systems in which these mandated professionals are formed and meant to operate. Looking at how politics, science, the market, and education function today, one could wonder how, in the broader societal context, virtues identified as relevant for radiological protection (beneficence, non-maleficence, prudence, justice, dignity, honesty, truthfulness, empathy, etc.) can ever ‘work’ in a world still ruled by the doctrine of scientific truth and the strategies of political positionism and economic profit. It seems as if those virtues always need to ‘resist’ the methods driven by these doctrines and strategies, and need to ‘work’ against them. This is particularly the case for policy advice. Advice on a controversial health issue formulated by an advisory council may be serene and deliberate, but today one has to accept that it becomes nothing more than an element to be taken into account (or not) in national party politics or in negotiations between nation states. Another example is the working of science in post-accident conditions. Scientific discussions such as those on possible health effects in Fukushima would benefit from a serene atmosphere, wherein not only scientists but also citizens, activists, politicians, and representatives from the nuclear industry would be able to speak openly about uncertainties, interests, and concerns. However, we do not need deep analysis to conclude that the atmosphere in and around Fukushima today is one of distrust rather than serenity [see the author’s thoughts on post-accident social justice in Fukushima in Meskens (2015b)].

Therefore, fair radiological risk governance also requires advanced governance methods for science, political decision making, and education in order to ‘enable’ these virtues to work to their full potential. An ethics of care for our modern co-existence does not only support these advanced governance methods, but also gives new meanings to the ethical values underpinning the system of radiological protection. For every professional concerned with radiological protection, be it the scientist, the engineer, the medical doctor, the manager, or the policy advisor, the virtues of beneficence, non-maleficence, prudence, justice, dignity, honesty, truthfulness, and empathy receive an enriched ‘interactive’ ethical meaning when understood as grounded in care for human relationships ‘bound in complexity’. The reason is that acting according to these virtues always ‘starts’ with a motivation for rapprochement towards other concerned actors.

4.3. Justice of justification as a central concern

An ethics of care for our modern co-existence ‘bound in complexity’ supports the value of the principles of fairness in risk governance that were put forward in Chapter 1, as it provides a powerful reference to defend the principles of precaution, informed consent of the potentially affected, and accountability towards next generations against the doctrine of scientific truth and the strategies of political positionism and economic profit. However, the ethics of care perspective also supports the idea that the principles of precaution and informed consent should not be ‘balanced’ as trade-offs. In simple terms, precautionary measures would need to be agreed upon with the involvement of all concerned actors. As a consequence, the idea of fair risk governance, as elaborated on in Chapter 1, integrated in the broader ethical vision of what it implies to deal fairly with the complexity of that risk governance, supports the argument that the justice of justification, ensured by the possibility of self-determination of the potentially affected (ensuring their ‘right to be responsible’), should be the central concern of risk governance and of related systems of protection. In the complex cases of nuclear technology applications, a risk cannot be justified through one-directional ‘convincing explanation’, but only through mutual agreement among concerned actors. An acceptable nuclear risk is a risk that is eventually justified relying on the formal possibility of deliberation among informed concerned actors (responsible and affected). Obviously, that mutual agreement, as the outcome of a justification exercise, can either be to reject or to accept the use of a nuclear technology. Therefore, intellectual solidarity as an ethical commitment among all concerned should ‘start’ with the joint preparedness to see justification as a mutual agreement ‘in the face of complexity’.

Seen from a different perspective, ethics in relation to the radiological protection system is also about considering and recognising the limits of the radiological protection system when it comes to providing a rationale for societal justification of a radiation risk. In other words, we cannot question the ethical dimensions of the radiological protection system without questioning the ethical dimensions of the ‘bigger’ systems in which the radiological protection system operates, and on which it depends. Given that the radiological protection system, in its concern for providing guidance for decision making, relies on science but also and essentially wants to consider human and societal values, the bigger systems that need to be questioned in terms of their ethics are those of knowledge production (research, advice) and decision making, but also that of education. For risks that manifest in an occupational context, the system of decision making is the management system. For risks that manifest on a societal level, the system of decision making is the system of democracy.

This last reflection introduces the need to raise awareness about the possibilities and limits of radiological protection and nuclear safety culture in this sense. In light of the above, we can state that fostering a responsible radiological protection and nuclear safety culture is a necessary but insufficient condition for the societal justification of (the risk of) nuclear technology applications. Many scientists and policy makers still claim that a nuclear risk is justified when there is a responsible regime of protection put in place. Based on the ethical considerations above, we can conclude that it is actually the other way round: responsible protection needs to be put in place only once all involved actors have jointly justified the use of nuclear technology.

Therefore, even when considering ethical dimensions, the radiological protection system cannot and should not be stretched to provide the full rationale for societal justification, but it can and should refer to critical considerations on how our formal methods of knowledge generation and decision making should foster autonomy and involvement of the potentially affected, and promote vigilance and fairness in justifying radiation risks. In its recommendations, the International Commission on Radiological Protection (ICRP) could highlight the importance of the advanced methods for science, politics, and education presented in Section 3.3 as a way to ensure fairness in justifying radiation risks, taking into account the different application contexts. In addition, given the central role of science in radiological protection, ICRP could actively promote a more ‘responsible’ conception of science, being a transdisciplinary and inclusive science, not only to advise politics in judgements on justification, but also to support radiological protection policies in both occupational and post-accident conditions. That science would, in principle, be able to inform policy in a more reflexive and thus deliberate way, while simultaneously being more resilient itself against strategic interpretation of its produced knowledge and hypotheses from politics, civil society, and the market.