Introduction

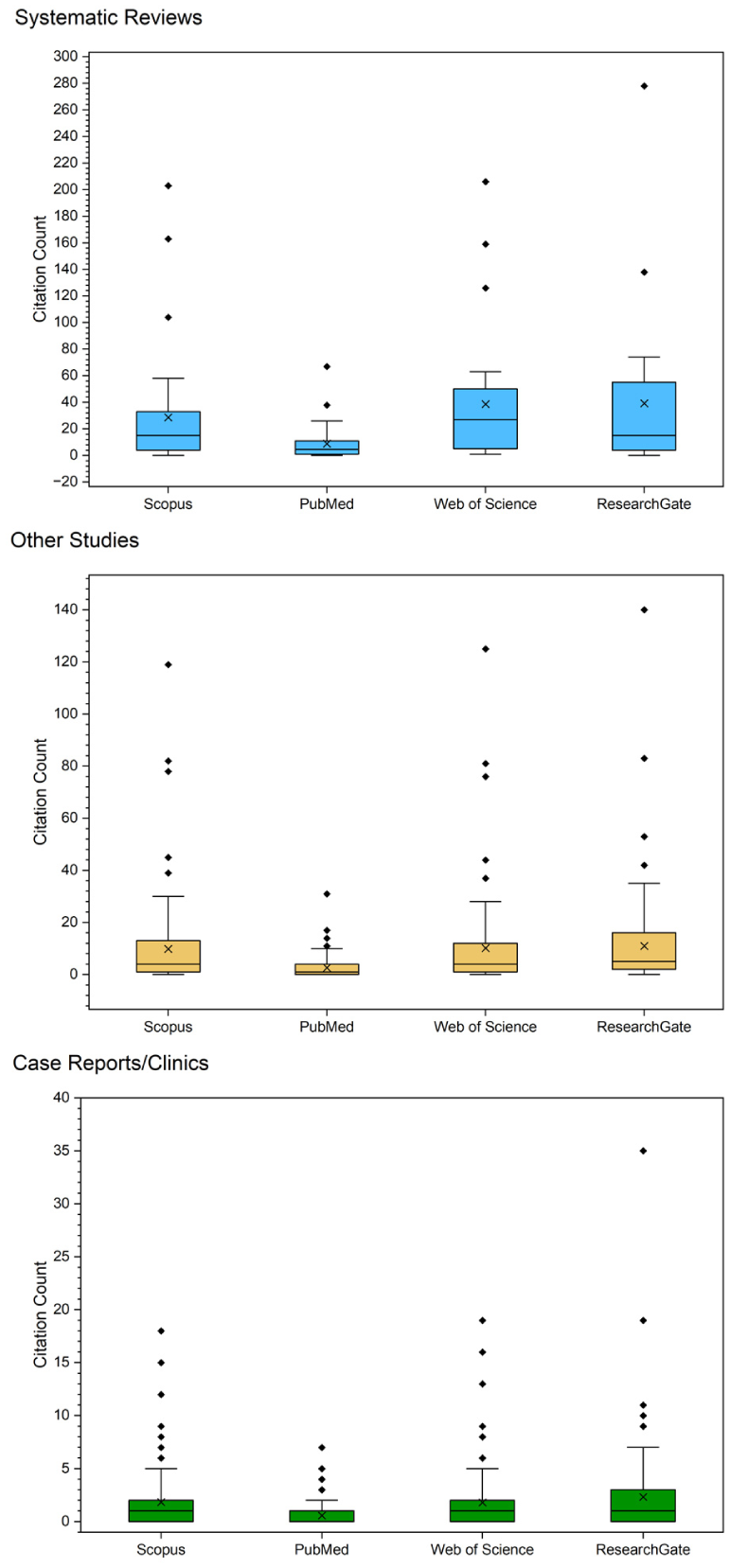

In academic settings, quantitative measures of research productivity can be used to assess both individual performance and institutional scholarly influence. In otolaryngology specifically, the average number of research presentations, abstracts, and publications of matched applicants has increased substantially during the past 2 decades: it was 4.1 in 2009, 8.4 in 2016, and 20.0 in 2024.[1,2] While this increase in research productivity offers applicants and institutions a competitive advantage, it prompts consideration of whether increased quantity is accompanied by high quality and scientific impact. Previous studies argue that it is not: although the quantity of medical student publications has increased, a substantial portion receive zero citations, and the value of single-institution study design has been questioned. The purpose of this research was to perform a preliminary assessment of the value of single-center medical student research by analyzing the output from 1 institution (Drexel University College of Medicine, Department of Otolaryngology) by a single senior author (Robert T. Sataloff). The scholarly impact of publications can be assessed directly with citation count, which measures how often a work is referenced by other researchers and reflects its contribution to advancing knowledge in new research directions.[3] Hence, for this investigation, citation counts from multiple databases were used to assess the scholarly impact of 275 publications in peer-reviewed medical journals from 2006 to 2025, and box and whisker plots were used to compare results.

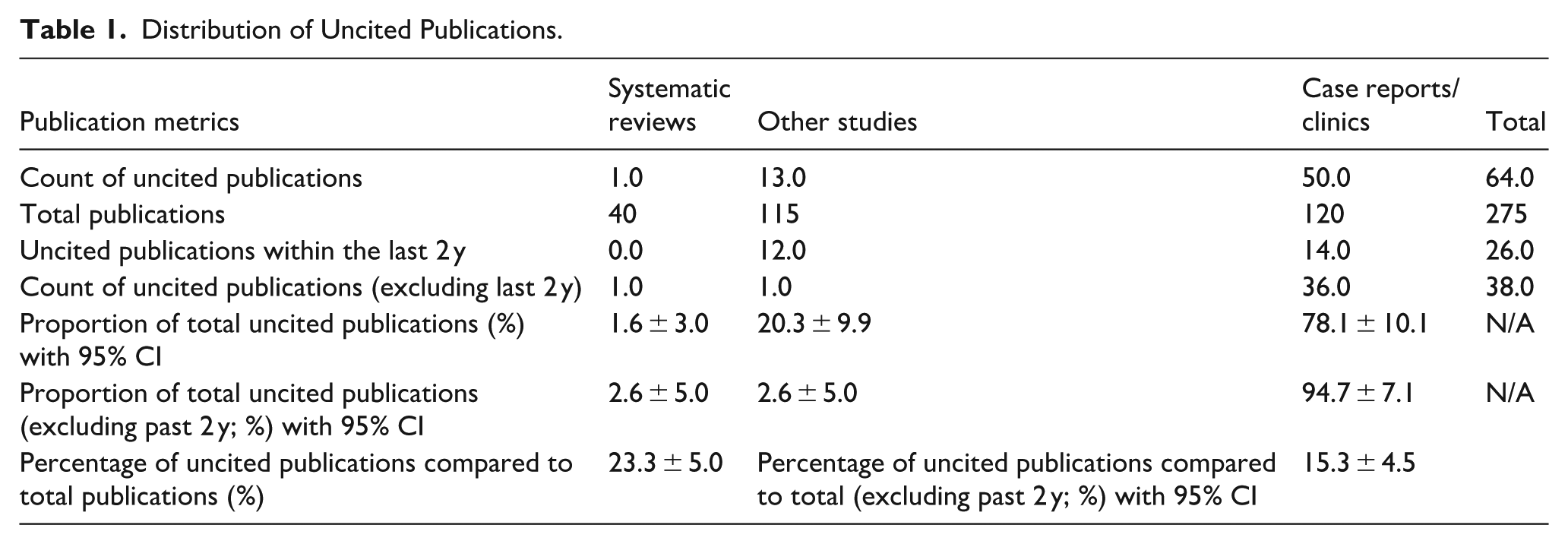

With regard to residency applications, studies argue that the pressure to publish inadvertently compromises the quality of research among medical trainees. Wickramasinghe et al [4] demonstrated that, between 1980 and 2012, 59.1% of the 350 publications authored by medical students were never cited, and the follow-up investigation by Elliot and Carmody showed that, from 2012 to 2022, 23.1% of the 5181 medical student publications received zero citations.[2,5] Both studies categorized publications by type (eg, case reports) for other analyses, but neither reported the proportion of uncited works by category. In our investigation of medical student publications from 2006 to 2025, 23.3% ± 5.0% were uncited. At first glance, this number seemed consistent with the results of Elliot et al. However, a deeper analysis of the data demonstrated that about 41% of those uncited publications were published within the 2 years preceding our investigation, so they may not have had enough time to gain recognition and accumulate citations. Excluding the past 2 years (insufficient time for citation), 15.3% ± 4.5% of the total were uncited, with the majority of uncited publications being case reports/clinics. For articles other than case reports/clinics, excluding the past 2 years, only 5.3% of students’ publications were uncited.
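The text does not state how the ± values were derived. As an illustration only, the minimal Python sketch below assumes they are 95% margins of error from a normal-approximation (Wald) interval for a proportion. The count of 64 uncited publications is not reported in the text; it is inferred here from 23.3% of 275 and should be treated as hypothetical.

```python
import math


def wald_interval(successes, n, z=1.96):
    """Return (proportion, margin of error) for a normal-approximation interval.

    z=1.96 corresponds to a 95% confidence level (an assumption; the source
    does not state the confidence level or the method used).
    """
    p = successes / n
    margin = z * math.sqrt(p * (1 - p) / n)
    return p, margin


# Hypothetical count: 64 uncited out of 275 publications (inferred, not reported).
p, margin = wald_interval(64, 275)
print(f"{p:.1%} ± {margin:.1%}")  # prints 23.3% ± 5.0%
```

Under these assumptions the result matches the reported 23.3% ± 5.0%, which suggests, but does not confirm, that a comparable interval was used.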

Direct comparison between these studies is limited by methodology. The studies by Elliot et al and Wickramasinghe et al both included medical student publications from multiple institutions and varying disciplines, whereas our investigation focused on a specialized subset of academic output. Elliot et al used the AI-based platform Semantic Scholar to obtain citation counts, whereas in our investigation citation counts were verified manually across 4 databases. Although the studies discussed above provide insight into medical student research output and citation trends, there is a lack of studies directly comparing publication output between single institutions and multiple institutions, which highlights the need for further investigation. A limitation of this investigation is its reliance on citation count alone to assess the impact of research products; multiple measures might better reflect the significance of contributions in different contexts.

Overall, citation-based metrics are influenced by article type and publication age, both of which should be considered when measuring the impact of research studies. In this investigation, the majority of uncited publications were case reports/clinics. Even though such articles receive fewer citations in academic journals, they may have substantial value for clinician readers and for the authors.[6] Also, single-institution research can result in substantial impact (Figure 1 and Table 1).

Figure 1. Distribution of citation count within databases. The horizontal bar inside each box indicates the median; the marker “x” indicates the mean; and the lower and upper ends of each box are the first and third quartiles. The whiskers extend to the most extreme values within 1.5 times the interquartile range of the lower or upper quartile (ie, to the minimum and maximum when these fall within that range), and data more extreme than the whiskers are plotted individually as outliers (diamonds).
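The box plot conventions described in the caption can be sketched in Python as follows. This is a minimal illustration, not the authors' procedure: the citation counts are hypothetical, and the quartile convention (the default "exclusive" method of `statistics.quantiles`) is an assumption, since the text does not specify which convention was used.

```python
import statistics


def box_plot_summary(values):
    """Compute the box, whisker, and outlier values described in the caption."""
    data = sorted(values)
    # Quartiles using the default 'exclusive' method (an assumed convention).
    q1, median, q3 = statistics.quantiles(data, n=4)
    iqr = q3 - q1
    low_fence = q1 - 1.5 * iqr
    high_fence = q3 + 1.5 * iqr
    # Whiskers end at the most extreme data points still inside the fences.
    inside = [v for v in data if low_fence <= v <= high_fence]
    outliers = [v for v in data if v < low_fence or v > high_fence]
    return {
        "mean": statistics.mean(data),
        "q1": q1,
        "median": median,
        "q3": q3,
        "whisker_low": min(inside),
        "whisker_high": max(inside),
        "outliers": outliers,
    }


# Hypothetical citation counts for 10 publications (not data from the study).
summary = box_plot_summary([0, 0, 1, 2, 3, 4, 5, 8, 12, 60])
print(summary)
```

In this hypothetical sample, the single highly cited publication (60 citations) falls beyond the upper whisker and would be plotted as a diamond, while pulling the mean well above the median.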

Table 1. Distribution of Uncited Publications.

Footnotes

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.