Abstract

Keywords

Introduction

Vocal cord leukoplakia is a clinical term describing a white plaque or patch on the vocal cords seen on macroscopic examination, without reference to the corresponding histological features or prognosis. Pathologically, vocal cord leukoplakia may correspond to squamous hyperplasia, epithelial dysplasia, or carcinoma; it is therefore considered a precancerous lesion within the spectrum of transformation of the laryngeal epithelium toward malignancy. 1

Laryngeal cancer is typically preceded by dysplasia, and the rate of malignant transformation of vocal cord leukoplakia increases with the degree of dysplasia. 2 Although reported malignant transformation rates vary widely, from as low as 1.7% to as high as 46.3%, 3 early diagnosis and treatment of vocal cord leukoplakia may prevent progression to malignancy. 4 The 2017 World Health Organization Classification of Head and Neck Tumors proposed a 2-tier classification system for dysplasia, with reasonably clear histopathological criteria for the 2 groups: (1) low-grade (LG) dysplasia, including squamous hyperplasia and mild dysplasia, and (2) high-grade (HG) dysplasia, including moderate dysplasia, severe dysplasia, and carcinoma in situ.5,6 In response to this classification, some otolaryngologists proposed that patients with LG vocal cord leukoplakia, who have a low risk of malignancy, generally require only conservative treatment or a watch-and-wait policy, whereas patients with HG vocal cord leukoplakia require both surgical treatment and close follow-up to monitor possible progression to a more aggressive pathology. 7 A key clinical challenge in managing vocal cord leukoplakia, however, is to assess the malignant potential of a given lesion and to establish the optimal treatment plan accordingly. 8

Laryngoscopy is the most important method for detecting vocal cord leukoplakia, but laryngoscopy alone cannot yet determine the grade or extent of a lesion without biopsy. Some otolaryngologists and pathologists therefore recommend combining laryngoscopy with random 3-spot biopsy to enable early detection and follow-up. However, this procedure is invasive and time consuming, and patient compliance is difficult to achieve. 9 Moreover, preoperative biopsy under laryngoscopy often does not fully agree with postoperative pathology, and this discrepancy can lead to overtreatment or undertreatment even by experienced endoscopists. A further challenge in clinical practice is that not every case of vocal cord leukoplakia requires laryngoscopy with histological examination, and it is difficult to decide which cases warrant biopsy.

Given these controversies and uncertainties, better methods for detecting vocal cord leukoplakia, possibly based on new techniques, are urgently needed for its clinical management. In addition to white light imaging (WLI), image-enhanced endoscopy (IEE) techniques such as contact endoscopy (CE) 10 and narrow band imaging (NBI)9,11 are currently used for the accurate diagnosis of laryngeal lesions. Recent studies, such as that by Filipovský et al, 12 demonstrate that NBI endoscopy significantly improves the detection of laryngeal mucosal lesions that are not visible under white light endoscopy, making it a powerful tool for the preoperative and perioperative diagnosis of pretumor and tumor lesions. NBI findings correlate closely with histopathological results, substantially improving the diagnostic accuracy for laryngeal lesions.

Artificial intelligence (AI) based on deep learning (DL) with convolutional neural networks (CNNs) has recently shown promising results in the detection of gastrointestinal cancers.13-15 Moreover, a single-institution study showed that an AI system for detecting pharyngeal cancers performed promisingly, with high sensitivity and acceptable specificity. 16 However, no study to date has applied AI to the simultaneous segmentation and classification of vocal cord leukoplakia. We therefore developed an AI system that applies DL to assist in the automated diagnosis of vocal cord leukoplakia, using pathological diagnosis as the gold standard.

Methods

Selection of Images

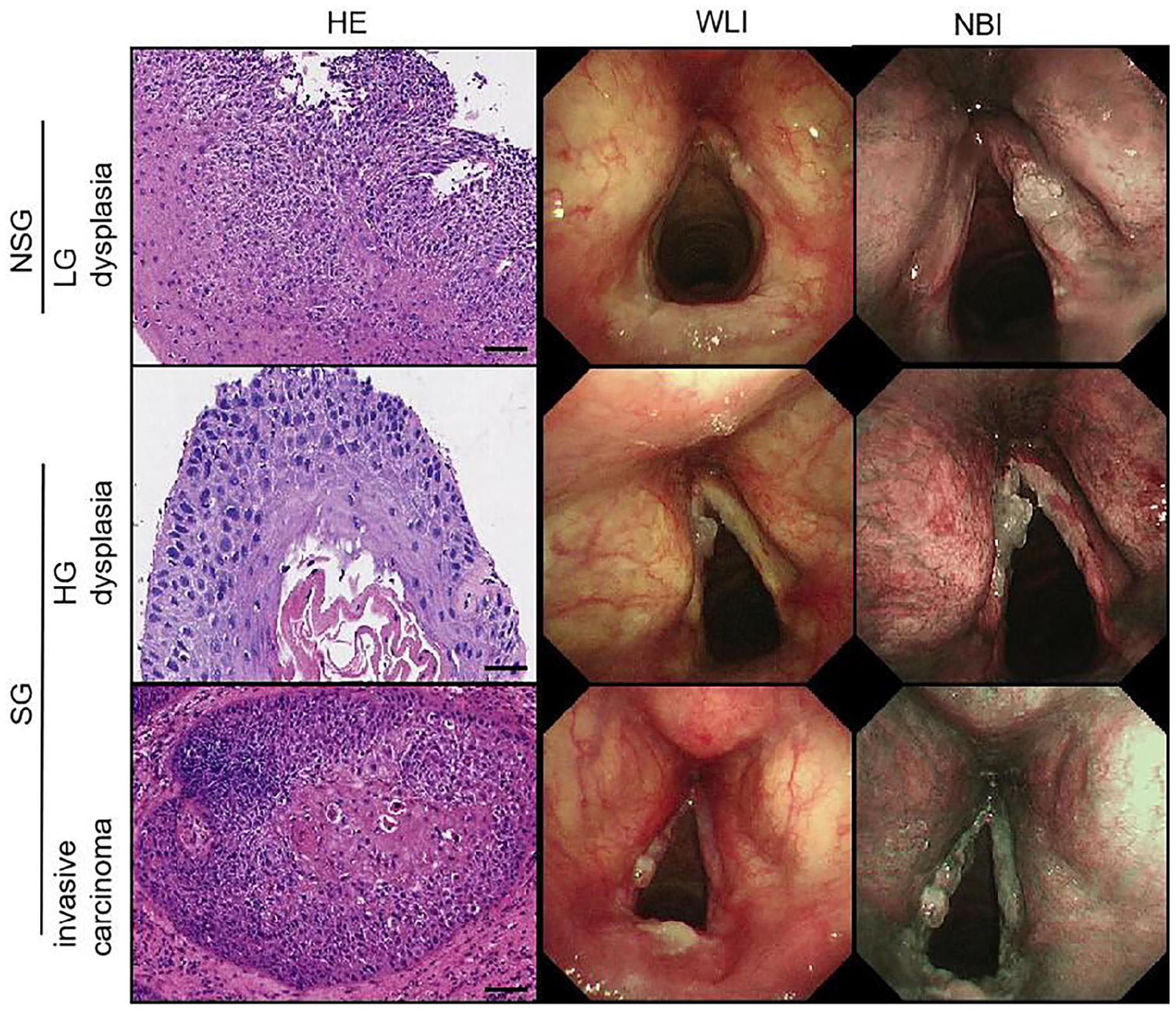

We retrospectively reviewed all laryngoscopic images from patients with vocal cord leukoplakia at our institution between October 2018 and October 2020. The laryngoscope used during this period was the Olympus BF-H290 (Olympus Medical Systems Corp., Tokyo, Japan). The images were originally taken by senior expert endoscopists and were categorized by imaging technique as WLI or NBI. Based on pathology and the need for surgery, we classified the images of vocal cord leukoplakia into 2 types (Figure 1)7,17: (1) a nonsurgical group (NSG), comprising LG dysplasia, and (2) a surgical group (SG), comprising HG dysplasia and invasive carcinoma. When a lesion showed multiple pathological types, it was assigned the highest-grade type.

Figure 1. Classification of the laryngoscopic images of vocal cord leukoplakia. Scale bar: 50 µm.

Annotation of Images

All selected images of vocal cord leukoplakia were independently annotated by 2 senior expert endoscopists with at least 10 years of laryngoscopy experience. These endoscopists had not operated the laryngoscope used to acquire the target images, and they were not blinded to the laryngoscopic findings or pathology. Lesion boundaries were outlined with the LabelMe application (https://github.com/wkentaro/labelme), and these outlines were used to represent the actual lesion area within each image.
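A LabelMe annotation is saved as a JSON file whose `shapes` entries hold polygon vertices (`points`) together with the image size (`imageHeight`, `imageWidth`). The minimal sketch below shows one way such a polygon outline can be rasterized into the binary lesion mask that a segmentation model is trained against, using a plain even-odd (ray casting) point-in-polygon test on pixel centres. The authors' own conversion code is not described; the function names and the `"SG"` label in the example are hypothetical.

```python
from typing import List, Tuple

Point = Tuple[float, float]


def point_in_polygon(x: float, y: float, polygon: List[Point]) -> bool:
    """Even-odd (ray casting) test: is (x, y) inside the polygon?"""
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        # Only edges that straddle the horizontal line through y can be crossed.
        if (y1 > y) != (y2 > y):
            # x coordinate where this edge crosses that horizontal line
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def labelme_to_masks(annotation: dict) -> List[Tuple[str, List[List[int]]]]:
    """Rasterize every polygon in a LabelMe annotation dict into a 0/1 mask.

    A pixel (col, row) is foreground if its centre (col + 0.5, row + 0.5)
    lies inside the polygon.
    """
    height = annotation["imageHeight"]
    width = annotation["imageWidth"]
    masks = []
    for shape in annotation["shapes"]:
        if shape.get("shape_type", "polygon") != "polygon":
            continue  # this sketch handles polygon outlines only
        polygon = [(float(px), float(py)) for px, py in shape["points"]]
        mask = [
            [1 if point_in_polygon(c + 0.5, r + 0.5, polygon) else 0 for c in range(width)]
            for r in range(height)
        ]
        masks.append((shape["label"], mask))
    return masks


# Toy annotation: an 8 x 8 image with one square lesion outline.
example = {
    "imageHeight": 8,
    "imageWidth": 8,
    "shapes": [{"label": "SG", "points": [[2, 2], [6, 2], [6, 6], [2, 6]], "shape_type": "polygon"}],
}
example_masks = labelme_to_masks(example)
```

For a real annotation file, the dictionary would come from `json.load` on the exported `.json` file. Testing pixel centres keeps the rule symmetric, although polygon-drawing libraries may include or exclude boundary pixels slightly differently.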

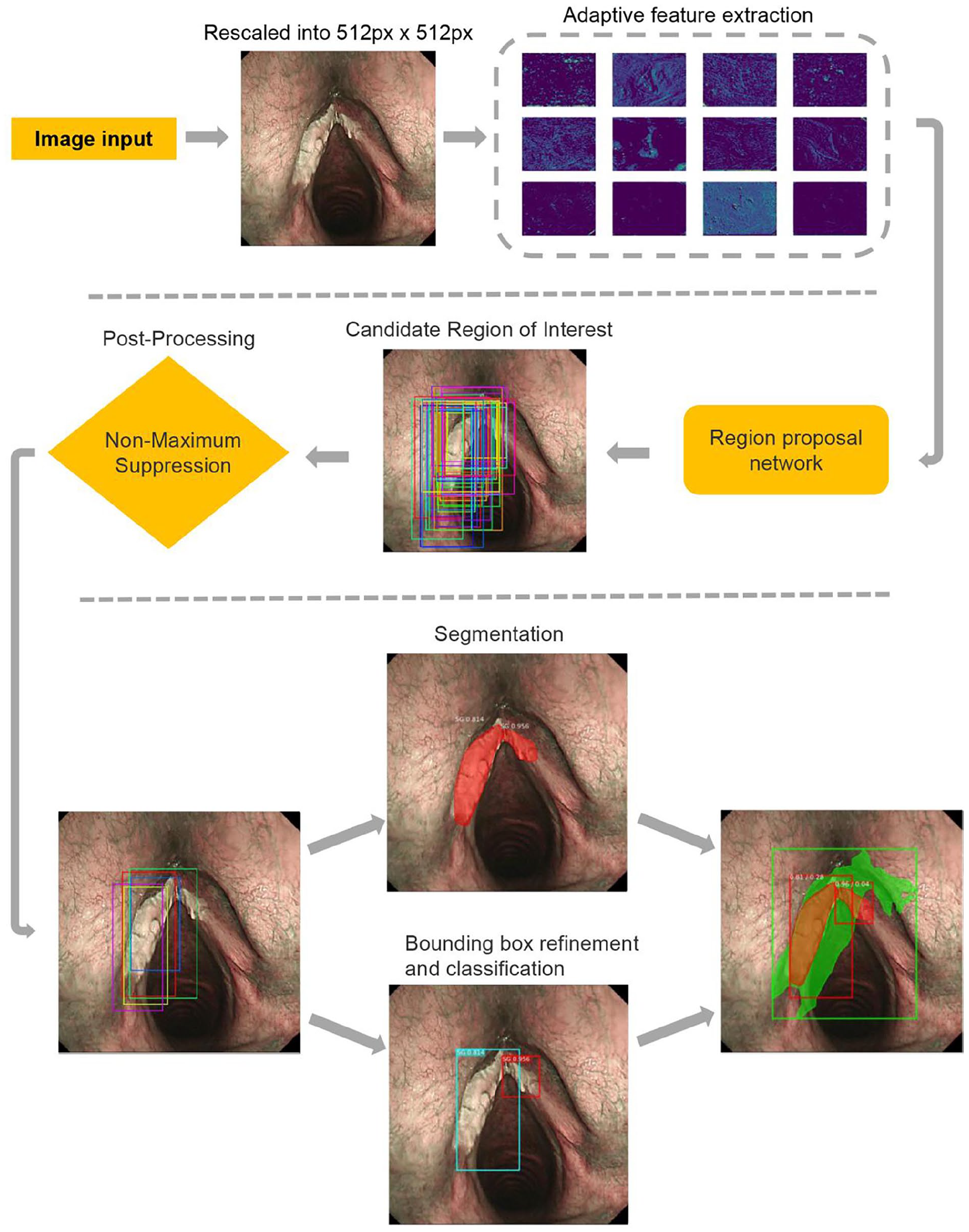

Model Architecture

Mask region-based CNN (Mask R-CNN) 17 is a 2-stage instance segmentation method. In the first stage, a region proposal network proposes candidate object bounding boxes; in the second stage, an R-CNN head classifies each candidate and refines its box, while a parallel mask branch predicts a pixel-level segmentation mask. We used Mask R-CNN to perform image segmentation and classification. First, the input image was rescaled to 512 × 512 pixels and passed to the region proposal network, with ResNet-50 (He, Zhang and Ren) as the backbone network. For each region of interest (ROI) generated by the region proposal network, the network cropped the corresponding area of the feature map. We then applied the RoIAlign 18 operation to the cropped area, fed the aligned features into the fully convolutional network 19 segmentation sub-network and the classification sub-network, respectively, and output the final results (Figure 2). During training, Mask R-CNN optimized several loss functions jointly to cover both segmentation and classification: binary cross-entropy for the region proposal network's binary (object vs background) classification, smooth L1 loss for refining bounding box coordinates, categorical cross-entropy for classifying each ROI, and an additional binary cross-entropy for per-pixel mask prediction. Training used an initial learning rate of 0.001 and was capped at 100 epochs to balance sufficient learning against overfitting, given the size and complexity of the dataset.

Figure 2. Workflow of the model architecture.
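The Mask R-CNN training objective sums several per-task losses. The sketch below writes each term out in plain Python on small lists of numbers so the formulas are explicit; it is not the Matterport code, and the equal weighting of the 5 terms is an assumption (implementations can weight them differently). In practice the box and mask terms are computed only for positive (foreground) ROIs; that bookkeeping is omitted here, and the class order in the example is hypothetical.

```python
import math
from typing import Sequence

EPS = 1e-7  # clipping constant to avoid log(0)


def binary_cross_entropy(probs: Sequence[float], targets: Sequence[int]) -> float:
    """Mean BCE: RPN objectness (object vs background) or per-pixel mask prediction."""
    total = 0.0
    for p, t in zip(probs, targets):
        p = min(max(p, EPS), 1.0 - EPS)
        total += -(t * math.log(p) + (1 - t) * math.log(1.0 - p))
    return total / len(probs)


def categorical_cross_entropy(class_probs: Sequence[Sequence[float]], labels: Sequence[int]) -> float:
    """Mean CE over ROIs; each row is a softmax distribution over the classes."""
    total = sum(-math.log(max(row[y], EPS)) for row, y in zip(class_probs, labels))
    return total / len(labels)


def smooth_l1(pred: Sequence[float], target: Sequence[float], beta: float = 1.0) -> float:
    """Mean smooth L1 loss over bounding-box regression offsets."""
    total = 0.0
    for p, t in zip(pred, target):
        d = abs(p - t)
        # Quadratic near zero, linear for large errors (less sensitive to outliers).
        total += 0.5 * d * d / beta if d < beta else d - 0.5 * beta
    return total / len(pred)


def total_loss(rpn_cls: float, rpn_box: float, roi_cls: float, roi_box: float, mask: float) -> float:
    """Multi-task objective: unweighted sum of the 5 terms (equal weights assumed)."""
    return rpn_cls + rpn_box + roi_cls + roi_box + mask


# Toy values; class order background, NSG, SG is a hypothetical convention.
loss = total_loss(
    rpn_cls=binary_cross_entropy([0.9, 0.2], [1, 0]),
    rpn_box=smooth_l1([0.1, -0.2, 0.05, 0.3], [0.0, 0.0, 0.0, 0.0]),
    roi_cls=categorical_cross_entropy([[0.1, 0.2, 0.7]], [2]),
    roi_box=smooth_l1([1.5], [0.0]),
    mask=binary_cross_entropy([0.8, 0.6, 0.1], [1, 1, 0]),
)
```

The smooth L1 term behaves like a squared error for small box offsets and like an absolute error for large ones, which keeps badly placed proposals from dominating the gradient.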

Development of the Model

We fine-tuned the Mask R-CNN implementation by Matterport, Inc. (Mountain View, CA, USA; https://github.com/matterport/Mask-RCNN) with a ResNet-50 backbone. 20 The DL model learned from laryngoscopic images of vocal cord leukoplakia containing pre-labeled lesion regions and could then detect lesions autonomously.

Because training the model requires a sufficient number of images, we augmented the training data with disease-unrelated transformations (eg, translation and rotation). Given an input X of 512 × 512 pixels, we first cropped an image patch of 256 × 256 pixels, rotated it by a random angle in the range (−30°, 30°), flipped it randomly, and then resized it to the model's input size. These operations were implemented with the imgaug library (https://github.com/aleju/imgaug).
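The augmentation sequence (random 256 × 256 crop, random rotation within ±30°, random flip, resize back to the model input size) must be applied identically to an image and its lesion mask so that the annotation stays aligned. The authors used imgaug; the sketch below re-implements the same sequence in plain NumPy with nearest-neighbour sampling so that each step is visible. The horizontal flip axis with probability 0.5, zero-filled corners after rotation, and the function names are assumptions, not details from the study.

```python
import numpy as np


def rotate_nearest(arr: np.ndarray, angle_deg: float) -> np.ndarray:
    """Rotate an H x W (or H x W x C) array about its centre; empty corners become 0."""
    h, w = arr.shape[:2]
    theta = np.deg2rad(angle_deg)
    cy, cx = (h - 1) / 2.0, (w - 1) / 2.0
    rows, cols = np.indices((h, w))
    y, x = rows - cy, cols - cx
    # Inverse mapping: for every output pixel, look up the source pixel it came from.
    src_c = np.rint(np.cos(theta) * x + np.sin(theta) * y + cx).astype(int)
    src_r = np.rint(-np.sin(theta) * x + np.cos(theta) * y + cy).astype(int)
    valid = (src_r >= 0) & (src_r < h) & (src_c >= 0) & (src_c < w)
    out = np.zeros_like(arr)
    out[valid] = arr[src_r[valid], src_c[valid]]
    return out


def resize_nearest(arr: np.ndarray, size: int) -> np.ndarray:
    """Nearest-neighbour resize of the first two axes to size x size."""
    h, w = arr.shape[:2]
    r = np.arange(size) * h // size
    c = np.arange(size) * w // size
    return arr[r][:, c]


def augment_pair(image, mask, rng, patch=256, out_size=512, max_angle=30.0):
    """Crop, rotate, flip, and resize an image and its mask with the same random parameters."""
    h, w = image.shape[:2]
    top = int(rng.integers(0, h - patch + 1))
    left = int(rng.integers(0, w - patch + 1))
    img = image[top:top + patch, left:left + patch]
    msk = mask[top:top + patch, left:left + patch]
    angle = rng.uniform(-max_angle, max_angle)
    img, msk = rotate_nearest(img, angle), rotate_nearest(msk, angle)
    if rng.random() < 0.5:  # horizontal flip (assumed axis and probability)
        img, msk = img[:, ::-1], msk[:, ::-1]
    return resize_nearest(img, out_size), resize_nearest(msk, out_size)
```

Usage: `img_aug, mask_aug = augment_pair(image, mask, np.random.default_rng(seed))`. Nearest-neighbour sampling keeps mask values strictly 0 or 1; for the image itself, a library such as imgaug would normally use smoother interpolation.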


The model weights were initialized from a model of the same architecture pre-trained on the Microsoft Common Objects in Context (MS-COCO) dataset. 21 The model was developed with Python 3.5 and the TensorFlow framework. Training, validation, and testing were performed on 2 NVIDIA GeForce GTX 1080 GPUs (NVIDIA Corporation, Santa Clara, California, USA) with 8 GB of memory each and an Intel® Core i7-5930K 3.50 GHz CPU (Intel Corporation, Santa Clara, California, USA) running the CentOS 7 operating system (Red Hat, Raleigh, North Carolina, USA).
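Initializing from MS-COCO weights means that layers whose shapes do not depend on the number of classes (backbone, region proposal network, and the class-independent head layers) can be copied, while the class-specific output layers must be re-initialized: MS-COCO has 80 object classes plus background, whereas this task has 2 (NSG and SG) plus background. The pure-Python sketch below illustrates that split on a toy dictionary of layer names. The excluded names mirror those commonly passed to `load_weights(..., by_name=True, exclude=[...])` in the Matterport samples, but they, the toy layer names, and the helper function are assumptions rather than the authors' configuration.

```python
from typing import Dict, Iterable, List, Tuple

# Output layers whose shapes depend on the number of classes (Matterport naming, assumed).
CLASS_DEPENDENT_LAYERS = ("mrcnn_class_logits", "mrcnn_bbox_fc", "mrcnn_bbox", "mrcnn_mask")

NUM_CLASSES_COCO = 1 + 80  # background + 80 MS-COCO object classes
NUM_CLASSES_TASK = 1 + 2   # background + NSG + SG


def split_pretrained(
    pretrained: Dict[str, object],
    exclude: Iterable[str] = CLASS_DEPENDENT_LAYERS,
) -> Tuple[Dict[str, object], List[str]]:
    """Return (weights that can be copied, sorted names of layers to re-initialize).

    Exact name matching is used so that class-independent layers such as
    "mrcnn_mask_conv1" are still transferred.
    """
    excluded = set(exclude)
    transferred = {name: w for name, w in pretrained.items() if name not in excluded}
    reinitialized = sorted(name for name in pretrained if name in excluded)
    return transferred, reinitialized


# Toy stand-in for a pretrained checkpoint: layer name -> weights placeholder.
toy_checkpoint = {
    "conv1": "w0",
    "res2a_branch2a": "w1",
    "rpn_conv_shared": "w2",
    "mrcnn_mask_conv1": "w3",
    "mrcnn_class_logits": "w4",
    "mrcnn_mask": "w5",
}
kept, reinit = split_pretrained(toy_checkpoint)
```

Fine-tuning then updates both the copied and the freshly initialized layers on the laryngoscopic images; whether the authors froze any layers is not stated.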

Evaluation of the Model

In each epoch, the loss values of the model on the training set and the validation set were recorded simultaneously. Overfitting was identified when the training loss decreased while the validation loss increased. We then tested the performance of the model on the test set, using each vocal cord leukoplakia lesion as the unit of evaluation. The subsequent DL workflow of Mask R-CNN is shown in Figure 2.
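The overfitting criterion (training loss falling while validation loss rises) can be checked mechanically from the per-epoch loss curves. The sketch below flags the first epoch at which that happens and, as a common companion step, picks the epoch with the lowest validation loss. The study does not state how the final checkpoint was chosen, so that selection rule and the function names are assumptions.

```python
from typing import Optional, Sequence


def first_overfitting_epoch(train_loss: Sequence[float], val_loss: Sequence[float]) -> Optional[int]:
    """1-based epoch at which training loss decreased but validation loss increased
    relative to the previous epoch, or None if that never happens."""
    for e in range(1, len(train_loss)):
        if train_loss[e] < train_loss[e - 1] and val_loss[e] > val_loss[e - 1]:
            return e + 1
    return None


def best_epoch(val_loss: Sequence[float]) -> int:
    """1-based epoch with the lowest validation loss."""
    return min(range(len(val_loss)), key=lambda i: val_loss[i]) + 1


# Toy loss curves (not the study's values).
train_curve = [1.0, 0.8, 0.6, 0.5, 0.4]
val_curve = [1.1, 0.9, 0.8, 0.85, 0.9]
```

A single-epoch uptick in validation loss can be noise, so training loops often wait for several consecutive increases (a "patience" window) before declaring overfitting.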

Statistical Analysis

We used quantitative metrics to evaluate the performance of the proposed method. Segmentation was evaluated with the intersection-over-union (IoU); classification was evaluated with sensitivity, specificity, positive predictive value (PPV), and negative predictive value (NPV); and overall detection accuracy was evaluated with the mean average precision (mAP). IoU measures the overlap between the prediction and the ground truth, and mAP was calculated with a minimum IoU threshold of 0.5.22,23 All statistical analyses were performed using R software version 3.6.
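For binary masks, IoU is the number of pixels in both the predicted and the annotated lesion divided by the number in either, IoU = |P ∩ G| / |P ∪ G|. The NumPy sketch below computes it together with the detection rate at a given IoU threshold (the fraction of lesions whose IoU exceeds the threshold, as reported for IoU > 0.5 and IoU > 0.7). Returning 0 when both masks are empty is an assumed convention, and the function names are illustrative.

```python
import numpy as np


def mask_iou(pred, gt) -> float:
    """Intersection-over-union of two binary masks of the same shape."""
    pred = np.asarray(pred, dtype=bool)
    gt = np.asarray(gt, dtype=bool)
    union = np.logical_or(pred, gt).sum()
    if union == 0:
        return 0.0  # both masks empty: convention assumed here
    return float(np.logical_and(pred, gt).sum() / union)


def detection_rate(ious, threshold: float = 0.5) -> float:
    """Fraction of lesions whose IoU is strictly greater than the threshold."""
    ious = np.asarray(ious, dtype=float)
    return float((ious > threshold).mean())
```

Usage: collect `mask_iou(pred_mask, annotated_mask)` for every test lesion, then call `detection_rate(ious, 0.5)` and `detection_rate(ious, 0.7)`.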

Results

One hundred twenty-four patients with HG dysplasia or invasive carcinoma and 92 patients with LG dysplasia were included in this study. Because a patient could have multiple lesions, there were 168 lesions among patients with HG dysplasia or invasive carcinoma and 92 lesions among patients with LG dysplasia. Of the 3100 images taken in NBI mode, 1541 were classified as NSG and 1559 as SG. Of the 3080 images taken in WLI mode, 1531 were classified as NSG and 1549 as SG. Given our relatively limited sample size, we partitioned the dataset on an image-by-image basis: all images were divided into training, validation, and test sets in a 6:2:2 ratio. In NBI mode, there were 1860 images in the training set, 618 in the validation set, and 622 in the test set. In WLI mode, there were 1848 images in the training set, 616 in the validation set, and 616 in the test set (Supplemental Tables S2 and S3). This method of division might cause some data leakage; we attempted to mitigate its impact by applying data augmentation to the training set.
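The image-level 6:2:2 partition, and the data-leakage risk it carries, can be made concrete with a short sketch. The first helper shuffles image identifiers and splits them 6:2:2 with integer arithmetic; the second counts patients whose images land in more than one subset, which is the leakage acknowledged above. Identifiers, the seed, and the function names are hypothetical, and this rounding does not necessarily reproduce the exact subset sizes reported. Splitting by patient instead (all of a patient's images in one subset) is the usual way to remove this leakage.

```python
import random
from typing import Dict, Hashable, List, Sequence, Tuple


def image_level_split(
    image_ids: Sequence[Hashable], ratio: Tuple[int, int, int] = (6, 2, 2), seed: int = 0
) -> Tuple[List[Hashable], List[Hashable], List[Hashable]]:
    """Shuffle images and split them into train/validation/test by an integer ratio."""
    ids = list(image_ids)
    random.Random(seed).shuffle(ids)
    total = sum(ratio)
    n_train = len(ids) * ratio[0] // total
    n_val = len(ids) * ratio[1] // total
    return ids[:n_train], ids[n_train:n_train + n_val], ids[n_train + n_val:]


def patients_in_multiple_subsets(
    splits: Sequence[Sequence[Hashable]], patient_of: Dict[Hashable, Hashable]
) -> int:
    """Count patients whose images appear in more than one subset (potential leakage)."""
    subsets_per_patient: Dict[Hashable, set] = {}
    for k, subset in enumerate(splits):
        for img in subset:
            subsets_per_patient.setdefault(patient_of[img], set()).add(k)
    return sum(1 for ks in subsets_per_patient.values() if len(ks) > 1)
```

Usage: `train, val, test = image_level_split(all_image_ids)`, then `patients_in_multiple_subsets([train, val, test], patient_of)` with a mapping from image to patient; any nonzero count means the same patient contributes to both training and evaluation.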

We evaluated the performance of the DL model by segmenting and classifying images in NBI and WLI modes. Model segmentation was compared with segmentation by senior expert endoscopists with at least 10 years of laryngoscopy experience. Model classification into SG or NSG was compared with the clinical classification, using pathology as the gold standard.

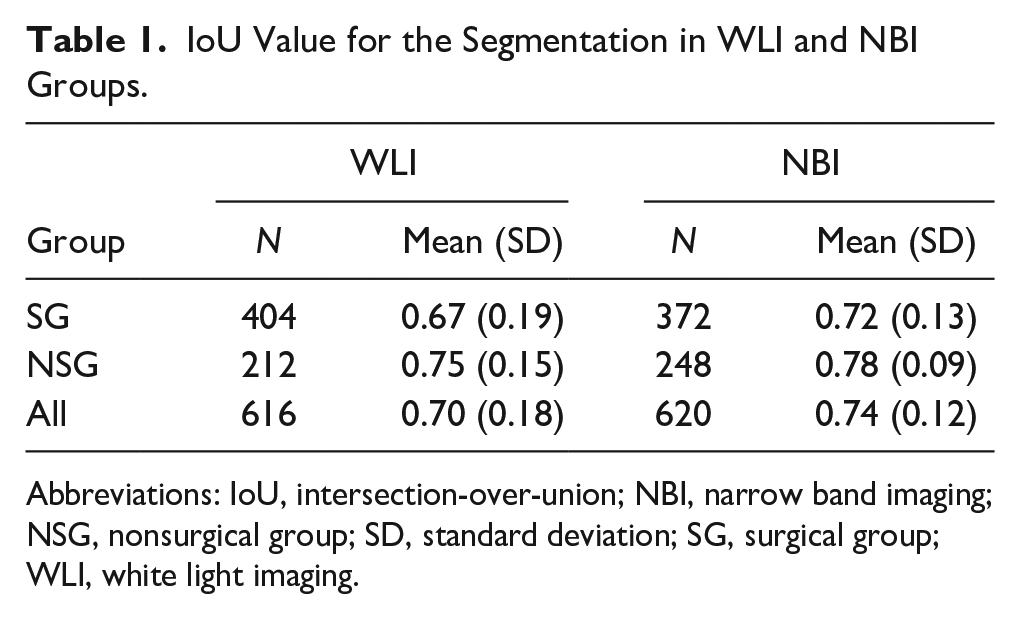

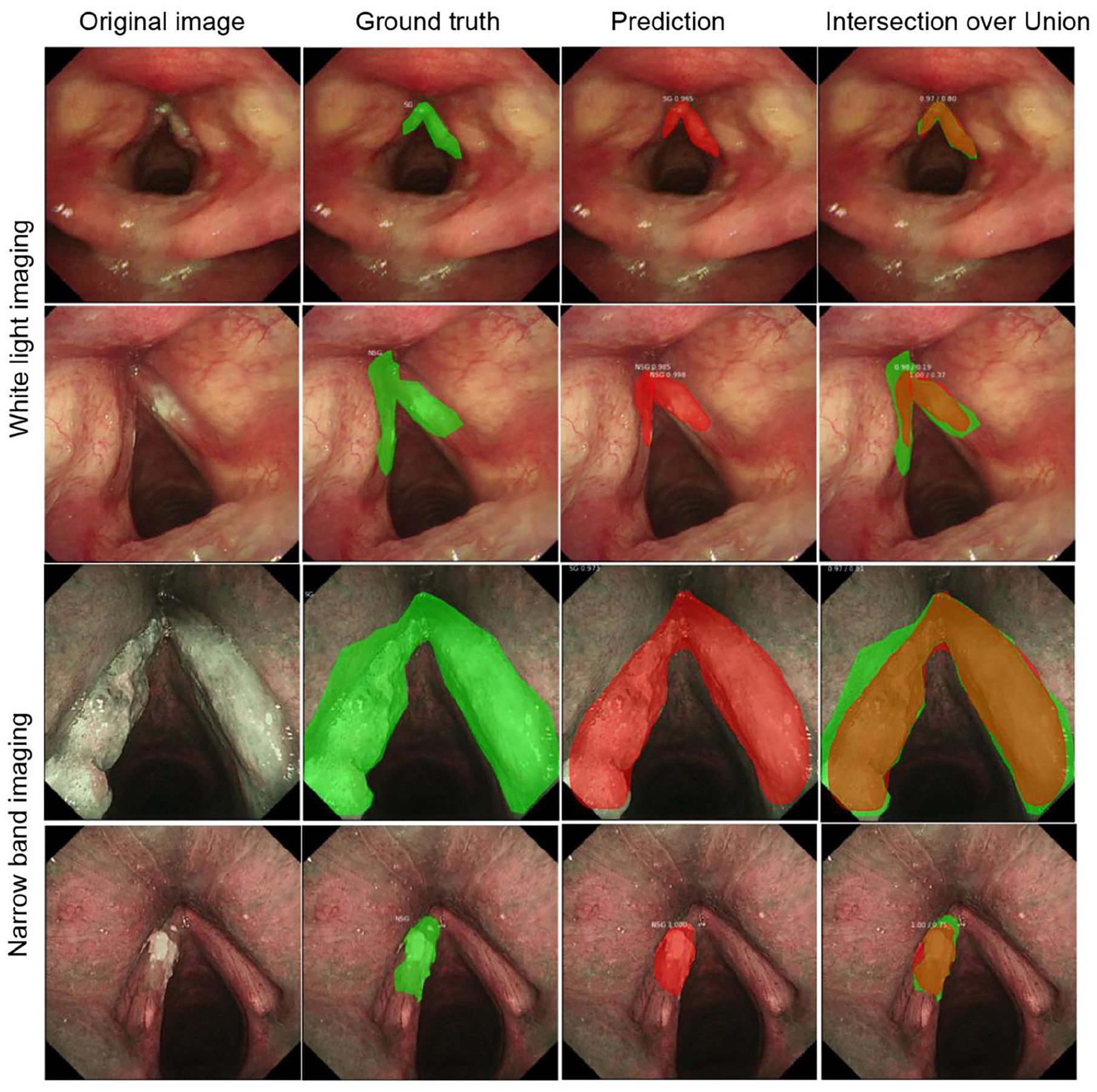

For segmentation, the average IoU exceeded 70% in both WLI and NBI modes (Table 1). With an IoU > 0.5, the DL model detected 87% of vocal cord leukoplakia lesions in WLI mode and 92% in NBI mode. With a stricter IoU criterion of > 0.7, the detection rate remained above 60% in both modes (Supplemental Table S1). Representative segmentation results of the trained model in WLI and NBI modes are shown in Figure 3.

Table 1. IoU Values for Segmentation in the WLI and NBI Groups.

Abbreviations: IoU, intersection-over-union; NBI, narrow band imaging; NSG, nonsurgical group; SD, standard deviation; SG, surgical group; WLI, white light imaging.

Figure 3. Segmentation results of model prediction in WLI and NBI modes.

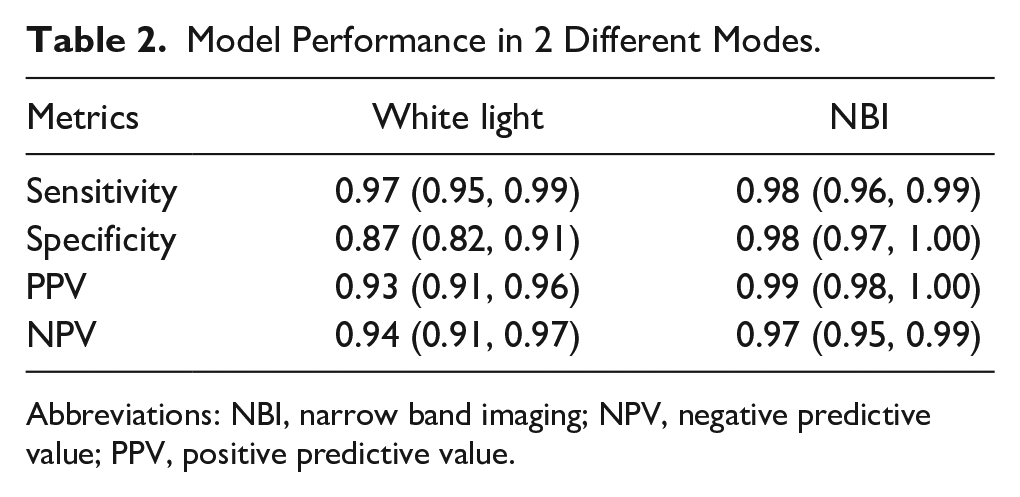

To measure pure classification performance on the target regions, we did not initially set an IoU threshold; we excluded only images with an IoU of 0 (ie, no overlap with the annotated lesion), which disqualified just 1 image (Table 2). For binary classification of the WLI test set (616 images) into SG and NSG, the model achieved a sensitivity of 93% (95% CI 88%-98%) and a specificity of 94% (95% CI 88%-100%). For the NBI test set (620 images), the model achieved a higher sensitivity of 99% (95% CI 97%-101%) and a higher specificity of 97% (95% CI 93%-101%). The model's PPV for WLI and NBI was 97% (95% CI 94%-100%) and 98% (95% CI 95%-101%), respectively, and its NPV was 87% (95% CI 78%-96%) and 98% (95% CI 95%-101%), respectively. The confusion matrices for the 2 modes are shown in Supplemental Figure S1.

Table 2. Model Performance in 2 Different Modes.

Abbreviations: NBI, narrow band imaging; NPV, negative predictive value; PPV, positive predictive value; WLI, white light imaging.
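Sensitivity, specificity, PPV, and NPV follow directly from the 2 × 2 confusion matrix, here treating SG as the positive class (an assumption about the convention used). The sketch below computes each metric as a proportion with a 95% CI. The study does not state its CI method; several reported upper bounds exceed 100% (eg, 97%-101%), which is consistent with a normal-approximation (Wald) interval, since that interval is not bounded near 100%, whereas the Wilson score interval always stays within [0, 1]. Both are shown for comparison, and the counts in the example are made up.

```python
import math
from typing import Dict, Tuple


def wald_ci(k: int, n: int, z: float = 1.96) -> Tuple[float, float, float]:
    """Proportion k/n with a normal-approximation (Wald) CI; bounds can fall outside [0, 1]."""
    p = k / n
    half = z * math.sqrt(p * (1 - p) / n)
    return p, p - half, p + half


def wilson_ci(k: int, n: int, z: float = 1.96) -> Tuple[float, float, float]:
    """Proportion k/n with a Wilson score CI; bounds always lie within [0, 1]."""
    p = k / n
    denom = 1 + z * z / n
    centre = (p + z * z / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z * z / (4 * n * n)) / denom
    return p, centre - half, centre + half


def binary_metrics(tp: int, fp: int, tn: int, fn: int) -> Dict[str, Tuple[int, int]]:
    """(numerator, denominator) of each metric, with SG taken as the positive class."""
    return {
        "sensitivity": (tp, tp + fn),
        "specificity": (tn, tn + fp),
        "ppv": (tp, tp + fp),
        "npv": (tn, tn + fn),
    }


# Hypothetical counts, not the study's confusion matrix.
for name, (k, n) in binary_metrics(tp=99, fp=2, tn=98, fn=1).items():
    p, lo, hi = wald_ci(k, n)
    _, wlo, whi = wilson_ci(k, n)
    print(f"{name}: {p:.1%} (Wald {lo:.1%}-{hi:.1%}; Wilson {wlo:.1%}-{whi:.1%})")
```

Near 0% or 100%, or with small denominators, the Wilson (or an exact Clopper-Pearson) interval is generally preferred over the Wald interval for reporting.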

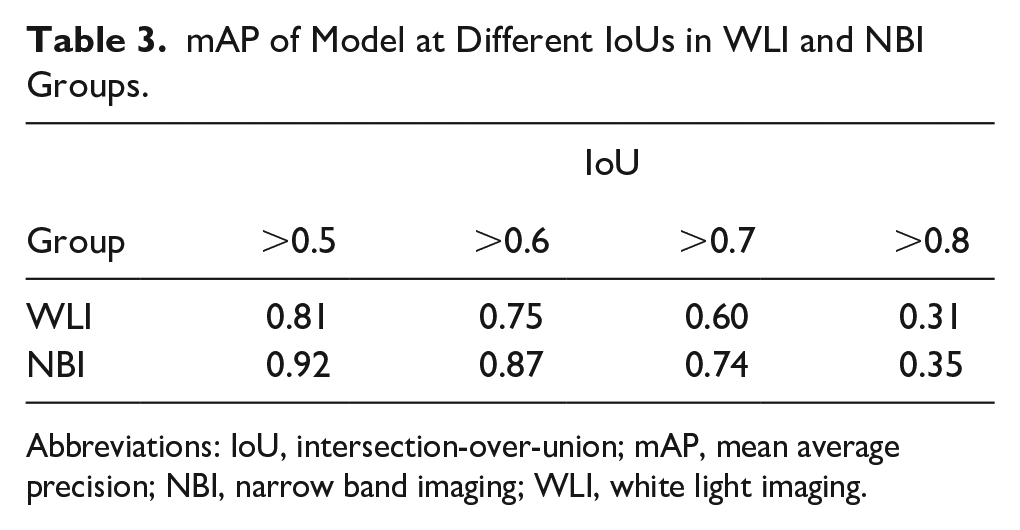

The model performed well in both segmentation and classification. However, a detection is counted as correct only when 2 conditions are met simultaneously: the IoU between the segmented lesion and the manual annotation exceeds the preset threshold of 0.5, and the segmented lesion area is classified correctly. We therefore calculated the mAP of the model at different IoU thresholds (minimum 0.5; Table 3). On the test set with an IoU > 0.5, the mAP for WLI and NBI was 0.81 and 0.92, respectively. With an IoU > 0.7, the mAP for both WLI and NBI remained acceptable (Table 3).

Table 3. mAP of the Model at Different IoUs in the WLI and NBI Groups.

Abbreviations: IoU, intersection-over-union; mAP, mean average precision; NBI, narrow band imaging; WLI, white light imaging.
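mAP combines the 2 conditions for a correct detection: predicted lesions of a class are ranked by confidence, each is counted as a true positive only if its IoU with an annotated lesion of that class exceeds the threshold, and the area under the resulting precision-recall curve gives the average precision (AP) for that class; mAP averages AP over classes. The authors' exact AP interpolation is not described, so the sketch below uses all-point interpolation (as in later PASCAL VOC evaluations). It assumes each detection has already been matched to at most one ground-truth lesion of the predicted class; the function names and example numbers are hypothetical.

```python
from typing import Dict, List, Sequence, Tuple

Detection = Tuple[float, float]  # (confidence score, IoU with matched ground-truth lesion; 0 if none)


def average_precision(detections: Sequence[Detection], n_ground_truth: int, iou_threshold: float = 0.5) -> float:
    """All-point interpolated AP for one class."""
    ranked = sorted(detections, key=lambda d: d[0], reverse=True)
    tp = fp = 0
    recalls: List[float] = []
    precisions: List[float] = []
    for _score, iou in ranked:
        if iou > iou_threshold:
            tp += 1
        else:
            fp += 1
        recalls.append(tp / n_ground_truth)
        precisions.append(tp / (tp + fp))
    # Precision envelope: make precision non-increasing from right to left.
    for i in range(len(precisions) - 2, -1, -1):
        precisions[i] = max(precisions[i], precisions[i + 1])
    # Sum precision over each increase in recall.
    ap, prev_recall = 0.0, 0.0
    for r, p in zip(recalls, precisions):
        ap += (r - prev_recall) * p
        prev_recall = r
    return ap


def mean_average_precision(per_class: Dict[str, Tuple[Sequence[Detection], int]], iou_threshold: float = 0.5) -> float:
    """Mean of per-class APs; per_class maps class name -> (detections, number of ground-truth lesions)."""
    aps = [average_precision(dets, n_gt, iou_threshold) for dets, n_gt in per_class.values()]
    return sum(aps) / len(aps)


# Toy detections for one class with 4 annotated lesions.
toy_dets = [(0.9, 0.8), (0.8, 0.6), (0.7, 0.3), (0.6, 0.9)]
```

Raising the threshold from 0.5 to 0.7 turns loosely placed detections into false positives, which is why mAP falls as the IoU criterion becomes stricter.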

The model processed video at a rate of at least 25 frames per second, with a per-frame latency of < 40 ms. Video clips demonstrating the classification of NSG and SG are shown for WLI in Supplemental Video 1 and for NBI in Supplemental Video 2.

Discussion

The management of vocal cord leukoplakia remains challenging despite the use of IEE techniques, such as CE and NBI, for the accurate diagnosis of laryngeal lesions. Surgical resection provides a definitive diagnosis, but LG dysplasia of vocal cord leukoplakia may never become malignant, so routine resection can result in unnecessary surgery. Conversely, the optimal window for surgery may be missed if HG dysplasia or invasive carcinoma of vocal cord leukoplakia is misdiagnosed.24,25 Treatment stratification that combines laryngoscopic imaging with AI may help alleviate this management dilemma. To the best of our knowledge, this is the first study to apply DL with Mask R-CNN to laryngoscopic WLI and NBI images for the automated segmentation and classification of vocal cord leukoplakia.

The use of DL for the detection of gastrointestinal lesions has developed rapidly and made remarkable progress in recent years.14,26 Several studies have also reported computer-aided detection for the segmentation or classification of laryngoscopic images. In 2015, Turkmen et al 21 classified vocal fold disorders into 5 categories using manually extracted histogram of oriented gradients descriptors. However, the training labels in that study were assigned subjectively, and pathology was not used as the gold standard for classification. Ji et al 27 reported a multi-scale recurrent fully convolutional network for laryngeal leukoplakia segmentation. Despite favorable results, their datasets included only static WLI images taken under optimal conditions and no NBI images, which are crucial for differentiating benign from malignant lesions. Recent advances highlight the potential of AI to improve diagnostic accuracy.28-30 Kim et al 31 created a CNN specifically for classifying vocal cord tumors, designed for home-based self-prescreening, and Fu et al 32 demonstrated the effectiveness of DL in aiding the diagnosis of laryngeal leukoplakia with an electronic laryngoscope. In this study, we included both WLI and NBI images in the datasets, considering that the model would be used with various modalities and in different hospitals. Furthermore, video detection is more demanding than static image analysis because of complex conditions such as reflected light, blurring, and airway secretions. As seen in Supplemental Videos 1 and 2, our model displays the extent and subtype of vocal cord leukoplakia continuously, without pausing. Encouragingly, our DL model also achieved high per-lesion sensitivity (93% for WLI and 99% for NBI) and specificity (94% for WLI and 97% for NBI) for binary classification into SG versus NSG.
Kono et al 16 used DL with CNNs to diagnose pharyngeal cancers with a sensitivity of 92%, but the specificity and accuracy were 47% and 66%, respectively, considerably lower than those of our model. Our model also detected lesions correctly with a high mAP (0.81 for WLI and 0.92 for NBI at IoU > 0.5). By comparison, Hashimoto et al 15 reported CNN-based detection of early esophageal neoplasia in Barrett's esophagus with an IoU threshold of 0.3, with an overall mAP of 0.7533 and an NBI mAP of 0.802, both lower than ours. More importantly, we grouped our dataset by combining pathological diagnosis with clinical decisions, which gives a more realistic assessment and should facilitate clinical adoption in the future.

Our proposed model could be implemented in an embedded decision support system to identify patients who might benefit from proceeding directly to surgical treatment. Taken together, these results show promise for the efficient management of vocal cord leukoplakia. First, automated segmentation and classification could shorten laryngoscopic examination time for endoscopists, especially inexperienced ones. Second, the model can aid otolaryngologists in decision-making. Third, and most importantly for patients, this approach could reduce the need for unnecessary invasive procedures such as biopsy and lower medical expenses.

However, this Mask R-CNN system also has some limitations. First, all tested laryngoscopic images were retrospectively collected from a single center and obtained with the same video system. Second, multiple images were extracted from each patient's laryngoscopy, so bias from data leakage was possible if images from the same patient appeared in both the training and test sets. Third, the system could not completely exclude the influence of airway secretions and reflected light, which were the major causes of false-positive results. Fourth, image-level and patient-level diagnoses differ: in continuous video data, some frames may be misclassified, so the model's results should serve only as an aid. Further research on more advanced human-machine collaborative models is needed. We believe these limitations can be addressed in future work by including multicenter datasets from different hospitals and various laryngoscopic systems.

Conclusions

This study applied DL with Mask R-CNN to laryngoscopic images in WLI and NBI modes for the automated segmentation and classification of vocal cord leukoplakia with high sensitivity, specificity, and mAP. Mask R-CNN has promising potential to assist otolaryngologists in clinical treatment decisions on vocal cord leukoplakia.

Supplemental Material

sj-docx-1-ear-10.1177_01455613241275341 – Supplemental material for Applying Deep Learning with Convolutional Neural Networks to Laryngoscopic Imaging for Automated Segmentation and Classification of Vocal Cord Leukoplakia

Supplemental material, sj-docx-1-ear-10.1177_01455613241275341 for Applying Deep Learning with Convolutional Neural Networks to Laryngoscopic Imaging for Automated Segmentation and Classification of Vocal Cord Leukoplakia by Ming Xiong, Jia-wei Luo, Jia Ren, Juan-Juan Hu, Lan Lan, Ying Zhang, Dan Lv, Xiao-bo Zhou and Hui Yang in Ear, Nose & Throat Journal


sj-docx-2-ear-10.1177_01455613241275341 – Supplemental material for Applying Deep Learning with Convolutional Neural Networks to Laryngoscopic Imaging for Automated Segmentation and Classification of Vocal Cord Leukoplakia

Supplemental material, sj-docx-2-ear-10.1177_01455613241275341 for Applying Deep Learning with Convolutional Neural Networks to Laryngoscopic Imaging for Automated Segmentation and Classification of Vocal Cord Leukoplakia by Ming Xiong, Jia-wei Luo, Jia Ren, Juan-Juan Hu, Lan Lan, Ying Zhang, Dan Lv, Xiao-bo Zhou and Hui Yang in Ear, Nose & Throat Journal


sj-docx-3-ear-10.1177_01455613241275341 – Supplemental material for Applying Deep Learning with Convolutional Neural Networks to Laryngoscopic Imaging for Automated Segmentation and Classification of Vocal Cord Leukoplakia

Supplemental material, sj-docx-3-ear-10.1177_01455613241275341 for Applying Deep Learning with Convolutional Neural Networks to Laryngoscopic Imaging for Automated Segmentation and Classification of Vocal Cord Leukoplakia by Ming Xiong, Jia-wei Luo, Jia Ren, Juan-Juan Hu, Lan Lan, Ying Zhang, Dan Lv, Xiao-bo Zhou and Hui Yang in Ear, Nose & Throat Journal


sj-docx-4-ear-10.1177_01455613241275341 – Supplemental material for Applying Deep Learning with Convolutional Neural Networks to Laryngoscopic Imaging for Automated Segmentation and Classification of Vocal Cord Leukoplakia

Supplemental material, sj-docx-4-ear-10.1177_01455613241275341 for Applying Deep Learning with Convolutional Neural Networks to Laryngoscopic Imaging for Automated Segmentation and Classification of Vocal Cord Leukoplakia by Ming Xiong, Jia-wei Luo, Jia Ren, Juan-Juan Hu, Lan Lan, Ying Zhang, Dan Lv, Xiao-bo Zhou and Hui Yang in Ear, Nose & Throat Journal

Footnotes

Acknowledgements

None.

Author Contributions

Ming Xiong, Jia-wei Luo, and Jia Ren drafted the manuscript. Lan Lan and Hui Yang conceived and designed the study and revised the manuscript. Juan-juan Hu, Ying Zhang, Xiao-bo Zhou, and Dan Lv acquired, analyzed, and interpreted the data. Ming Xiong, Jia-wei Luo, and Jia Ren trained the model. All authors have read and approved the manuscript.

Availability of Data and Materials

The datasets used and/or analyzed during the current study are available from the corresponding author on reasonable request.

Consent for Publication

Not applicable.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work has been supported by the Sichuan Science and Technology Program (grant no. 2021YFS0216).

Ethics Approval and Consent to Participate

Written informed consent was obtained from all patients, and this study was approved by the Biomedical Ethics Review Committee of West China Hospital, Sichuan University (No. 2020-978).

Supplemental Material

Supplemental material for this article is available online.

References
