Abstract

Level of Evidence: 4

Introduction

In medical research, precision and accuracy are paramount. As such, and with continually growing publication rates of scholarly literature,1,2 a reliable, organized system for reporting errors in medical publications is essential to maintaining scientific integrity. Serious errors in medical publications have the potential to fundamentally distort study outcomes or their interpretation, particularly when readers do not review published corrections. Recognizing this, the International Committee of Medical Journal Editors (ICMJE) 3 and Committee on Publication Ethics (COPE) 4 have developed guidelines for reporting and publishing these errors. Fortunately, many medical journals do indeed publish errata (production errors caused by the journal) and corrigenda (errors made by the authors). 4 Yet, despite these guidelines, inconsistencies in error reporting persist,5,6 raising concerns about the ability of error corrections to effectively reach readers to avoid patient harm.

While studies in other fields have analyzed trends in publishing errors, a comprehensive examination of errors in Otolaryngology—Head and Neck Surgery (OHNS) journals has not yet been performed. Through a stronger understanding of error publishing in OHNS, we aim to determine the severity, type, and impact of these articles with an eye to addressing and avoiding future instances.

Materials and Methods

The 2022 Journal Citation Report published by Clarivate Analytics, a Web of Science group, 7 was accessed to determine the 30 OHNS journals with the highest impact factor (IF). Using the advanced search engine of each journal, the following term was searched anywhere within the entire contents of each entry: “errata OR erratum OR corrigenda OR corrigendum OR correction OR corrections.” Only entries published online from January 1, 2000, to October 12, 2023, were included. Each entry was reviewed to ensure that it was truly a published error and not simply an article containing the search terms. Errors labeled as “correction(s)” were analyzed and categorized into either errata or corrigenda, as defined by the COPE guidelines. 4

Multiple data points were collected from each entry, including the type of error based on what was corrected: acknowledgements (eg, excluded or incorrect), authorship, figures, funding, methods (eg, omission of specific steps, incorrect materials/quantities, incorrect statistical analyses), publication (eg, not printed in color, printed in incorrect volume), reference, retraction, text, or other. Other data collected included the type of corrected article, online publication date of the error, online publication date of the corrected article, the country of affiliation of the primary author of the corrected article, the number of references within the corrected article, and the number of times the corrected article was cited according to CrossRef. 8

Each error was also graded and assigned a severity of “Trivial,” “Minor,” or “Major,” as defined by Hauptman et al in their analysis of errors published in the top 20 English-language general medicine and cardiovascular journals from 2009 to 2010. 5 “Trivial” errors were those that did not significantly change the methods, reporting, data interpretation, or conclusion of the original article, including misspelling an author’s name, insignificant text modifications, and figures printed in grayscale instead of color. “Minor” errors included more significant errors, such as the incorrect transposition or labeling of columns in a table, typically affecting more than one data point but without a substantial impact on the primary or critical endpoints. “Major” errors involved substantial modifications in the interpretation of data within the text, figures, or tables, or resulted in a significant change to the article’s conclusions. 5 Examples of major errors included the retraction of a published article, incorrect statistical analyses resulting in a meaningful change in significance (eg, incorrectly performing the analysis of variance (ANOVA), thereby producing a statistically significant relationship when a nonsignificant relationship truly exists), accidental inclusion of patients who did not satisfy the inclusion criteria, and revision of all originally published tables due to inadvertent inclusion of errors within these tables.

All analyses were performed using JMP, version 17.0.0 (SAS Institute Inc.). The Spearman rank correlation coefficient was computed to describe associations between IF and error occurrence, and Pearson’s correlation coefficient was used to describe associations between IF and time between publication dates (online publication date of original article vs online publication date of error). The published error rate (PER) for each journal was calculated by dividing the total number of published errors by the total number of publications over the same period. A mean aggregate PER for all journals was calculated for each 4 year period to determine how the frequency of error publication compared to total article publication rates from 2000 to 2023. PubMed, provided by the US National Library of Medicine, was used to calculate the total number of publications. Journal Citation Reports 7 was used to determine the total number of publications for each journal. One-way ANOVA was utilized to examine differences across severity with regard to IF, original article citation count, and the time duration from original article publication to error publication. A P value of <.05 was considered statistically significant.
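As an illustration of the rank-based association described above, the following is a minimal sketch of a Spearman rank correlation, computed as a Pearson correlation on average ranks. It is not the JMP procedure used in this study; the function names and the impact factor and error count values are hypothetical and chosen only for demonstration.

```python
from math import sqrt
from statistics import mean


def average_ranks(values):
    """Rank values from 1 to n, giving tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j to cover the run of tied values starting at position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        tied_rank = (i + j) / 2 + 1  # mean of the 1-based positions i+1..j+1
        for k in range(i, j + 1):
            ranks[order[k]] = tied_rank
        i = j + 1
    return ranks


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sqrt(sum((a - mx) ** 2 for a in x))
    sy = sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def spearman(x, y):
    """Spearman rank correlation: Pearson correlation of the average ranks."""
    return pearson(average_ranks(x), average_ranks(y))


# Hypothetical journal-level values, NOT study data.
impact_factor = [6.4, 3.2, 2.6, 2.1, 1.7]
error_count = [12, 40, 5, 40, 9]
rho = spearman(impact_factor, error_count)
```

The sketch returns only the coefficient; a P value, as reported in this study, would additionally require a significance test that is not shown here.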

Results

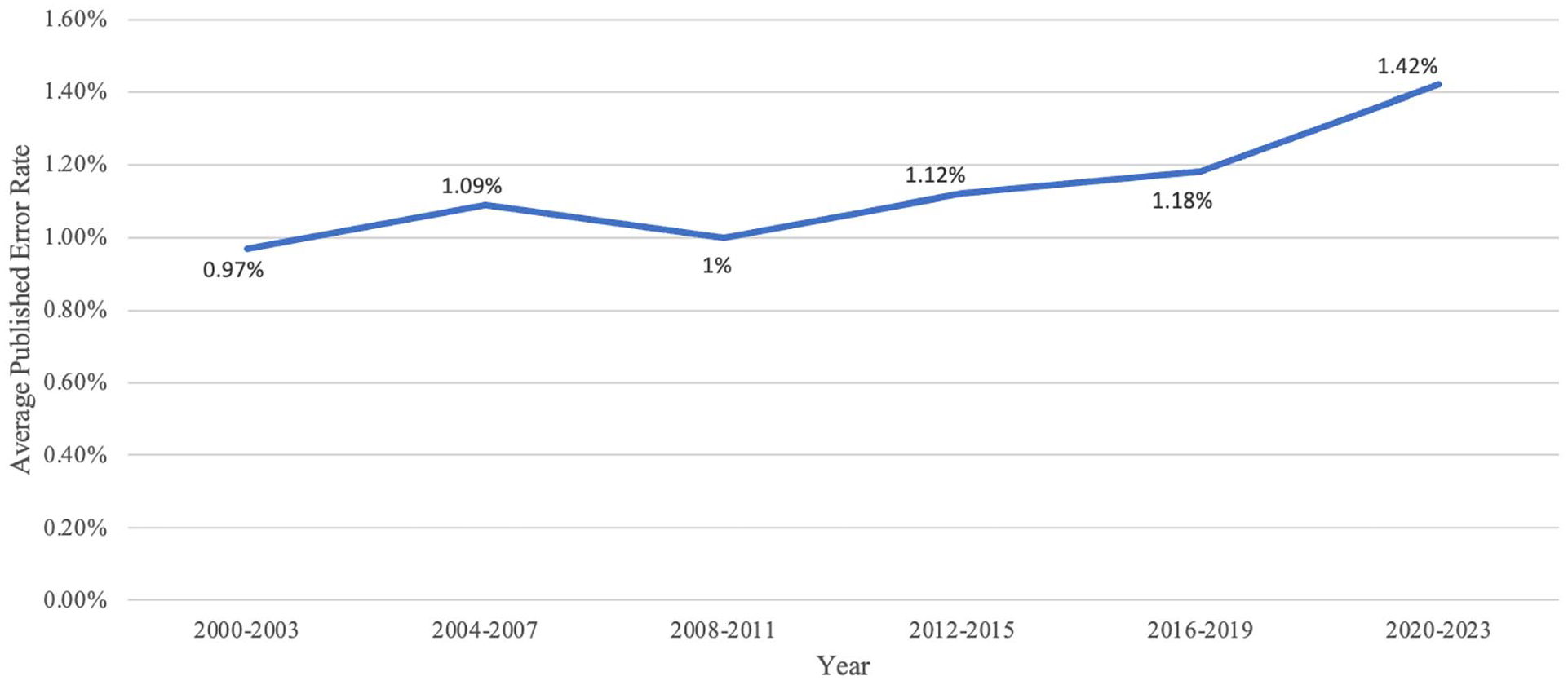

Over the study period, 739 errors were identified from a total of 93,191 entries in 28 journals. Two journals (JAMA Otolaryngology and Rhinology) reported no entries for “errata(um),” “corrigenda(um),” or “correction(s)” during this study period and were excluded from analyses. The IF of the 28 journals ranged from 1.7 to 6.4 with a mean of 2.6 ± 0.9. The calculated PER (the number of errors divided by the total number of publications) was 1.2% across all 28 journals, with the journal-specific PER ranging from 0.1% to 9.1%. The aggregate PER for all journals was calculated and separated into the following time periods: 2000 to 2003, 2004 to 2007, 2008 to 2011, 2012 to 2015, 2016 to 2019, and 2020 to 2023. The respective aggregate PER values for these time periods were 0.41%, 0.84%, 0.73%, 0.76%, 0.67%, and 0.89%.
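The PER definition and 4 year grouping described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the study’s actual workflow: the function names are hypothetical, and the yearly counts are invented for demonstration rather than taken from the study data.

```python
from collections import defaultdict


def period_label(year, start=2000, width=4):
    """Map a year to its 4 year bin, e.g. 2005 -> '2004 to 2007'."""
    first = start + ((year - start) // width) * width
    return f"{first} to {first + width - 1}"


def per_by_period(errors_by_year, publications_by_year):
    """Return {period: PER in percent}, where PER = errors / publications."""
    errors = defaultdict(int)
    pubs = defaultdict(int)
    for year, n in errors_by_year.items():
        errors[period_label(year)] += n
    for year, n in publications_by_year.items():
        pubs[period_label(year)] += n
    # Periods with no publications are skipped to avoid division by zero.
    return {p: 100 * errors[p] / pubs[p] for p in pubs if pubs[p] > 0}


# Hypothetical yearly counts, NOT study data.
errors_by_year = {2000: 2, 2001: 3, 2004: 9, 2007: 8}
publications_by_year = {2000: 500, 2001: 500, 2004: 1000, 2007: 1000}
rates = per_by_period(errors_by_year, publications_by_year)
```

With these invented counts, the first period pools 5 errors over 1000 publications and the second pools 17 errors over 2000 publications, so the rate is computed from pooled totals rather than by averaging yearly rates.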

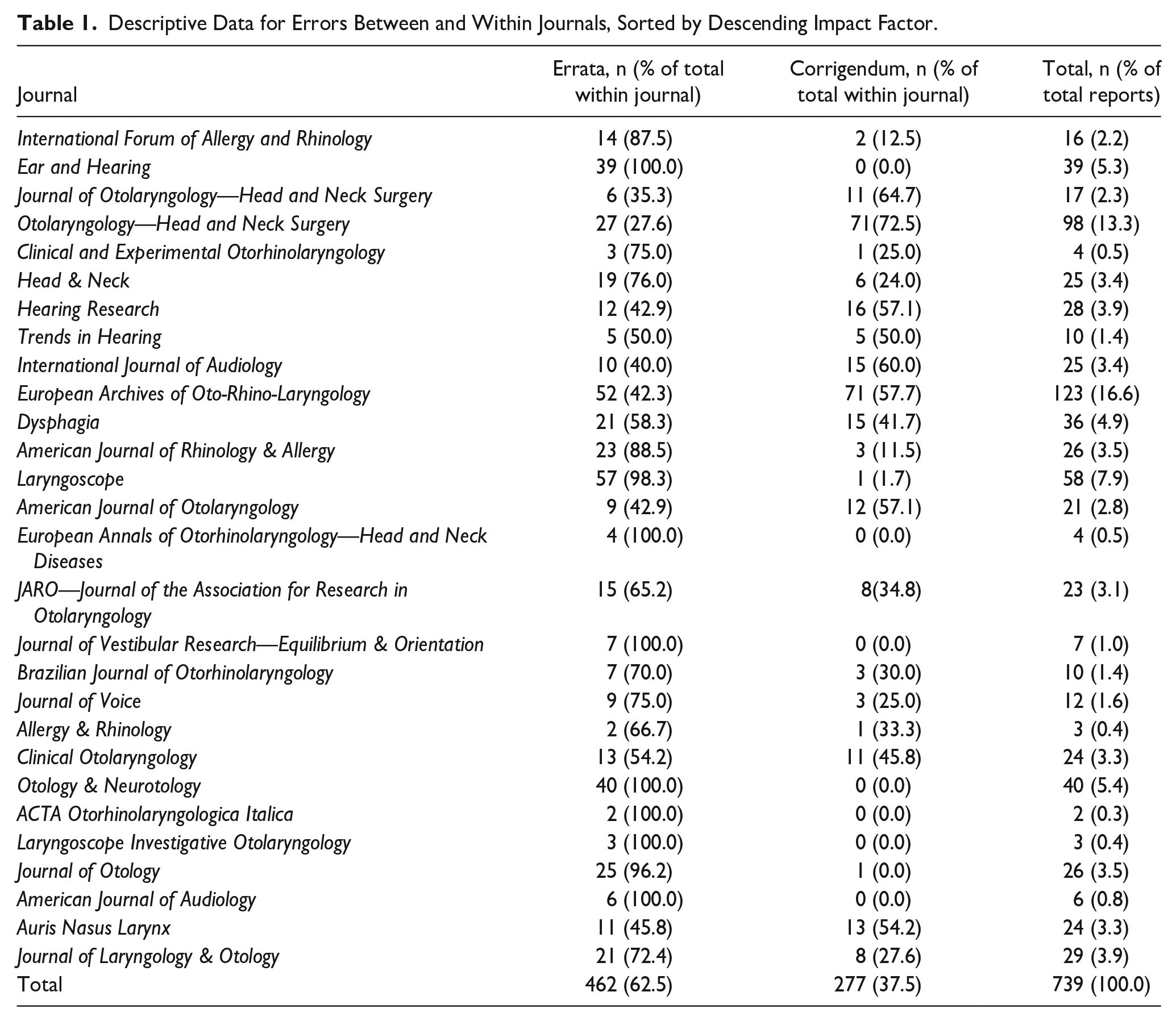

Most errors were errata (n = 462, 62.5% of total) rather than corrigenda (n = 277, 37.5% of total; Table 1). The number of errors per journal varied widely, ranging from 2 to 123 errors with a mean of 26.4 ± 27.5 errors per journal. The mean difference between date of online publication of the original article and date of online publication of the error was 10.8 ± 19.4 months. No significant correlation existed between a journal’s IF and this time difference (P = .953).

Descriptive Data for Errors Between and Within Journals, Sorted by Descending Impact Factor.

When stratified by 4 year periods, the PER increases over time (Figure 1). Spearman rank correlation did not show any significant relationship between IF and error occurrence (P = .979).

Published error rate versus year of online publication of errata and corrigenda as stratified into 4 year groups.

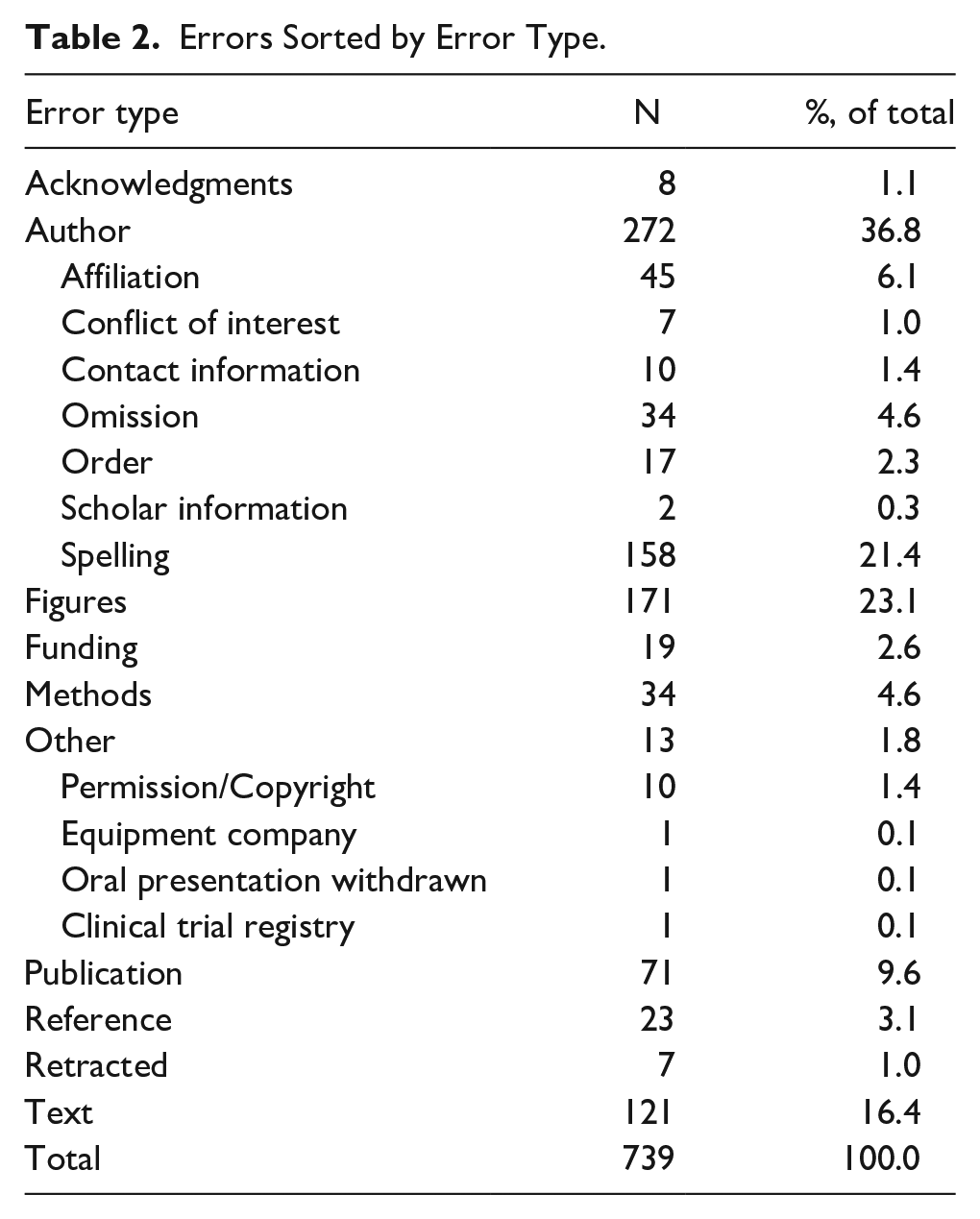

Each error was categorized by type (Table 2). Most involved authorship (eg, spelling, ordering, or author omission; n = 272, 36.8% of total), followed by figures (eg, figure legend, column headings; n = 171, 23.1% of total), and then text (eg, spelling, rephrasing/rewriting of sentences; n = 121, 16.4% of total).

Errors Sorted by Error Type.

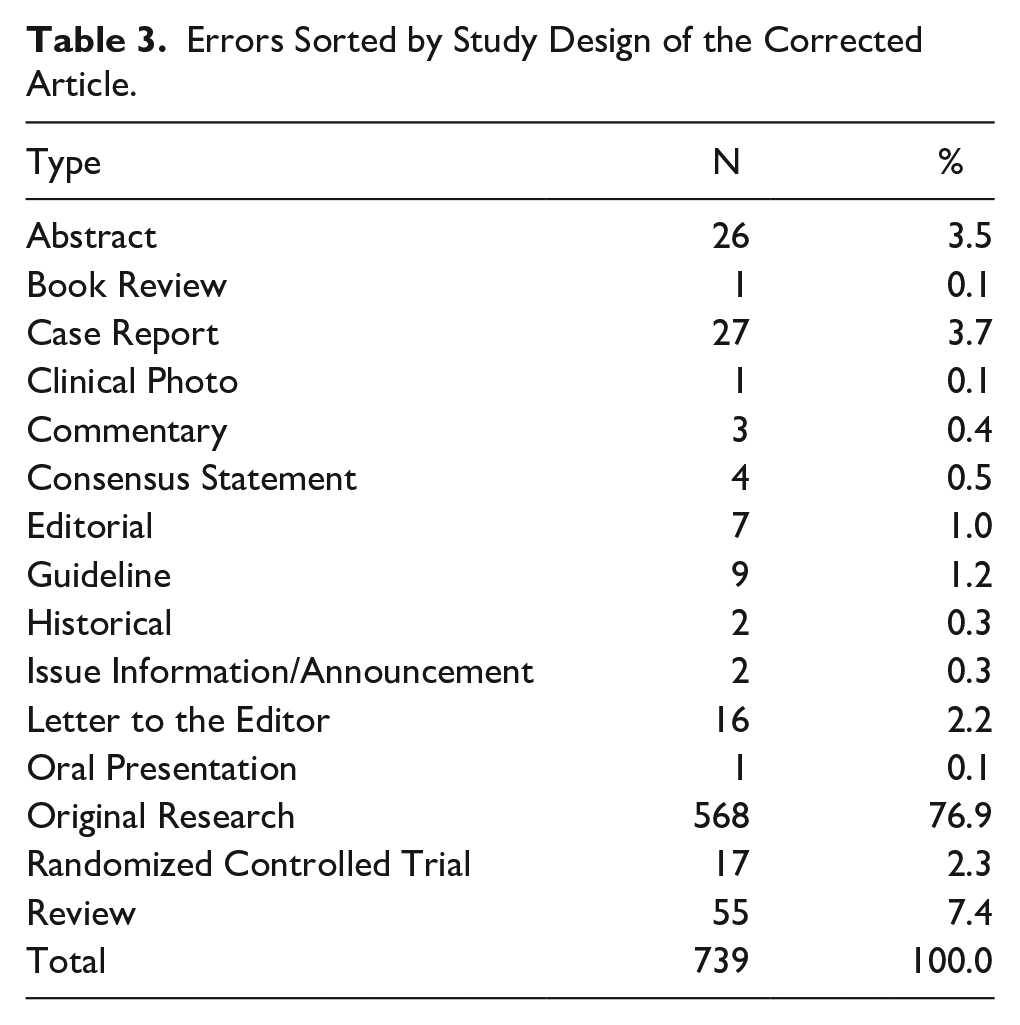

Of the 739 errors, most of the corrected articles were original research articles (n = 568, 76.9% of total), followed by reviews (n = 55, 7.4% of total), and then case reports (n = 27, 3.7% of total; Table 3). The first author’s country of affiliation in the corrected article could not be determined for 13 of the errors. Most authors were affiliated with the United States (n = 262, 36.1% of total), followed by the United Kingdom (n = 57, 7.9% of total), and then China (n = 41, 5.7% of total).

Errors Sorted by Study Design of the Corrected Article.

Of the 739 corrected articles, 731 (98.9%) provided a full list of references, with each corrected article averaging 37.6 ± 120.5 references. Furthermore, of the 739 corrected articles, 731 (98.9%) had citation counts available through CrossRef, 8 averaging 21.4 ± 38.2 citations per corrected article.

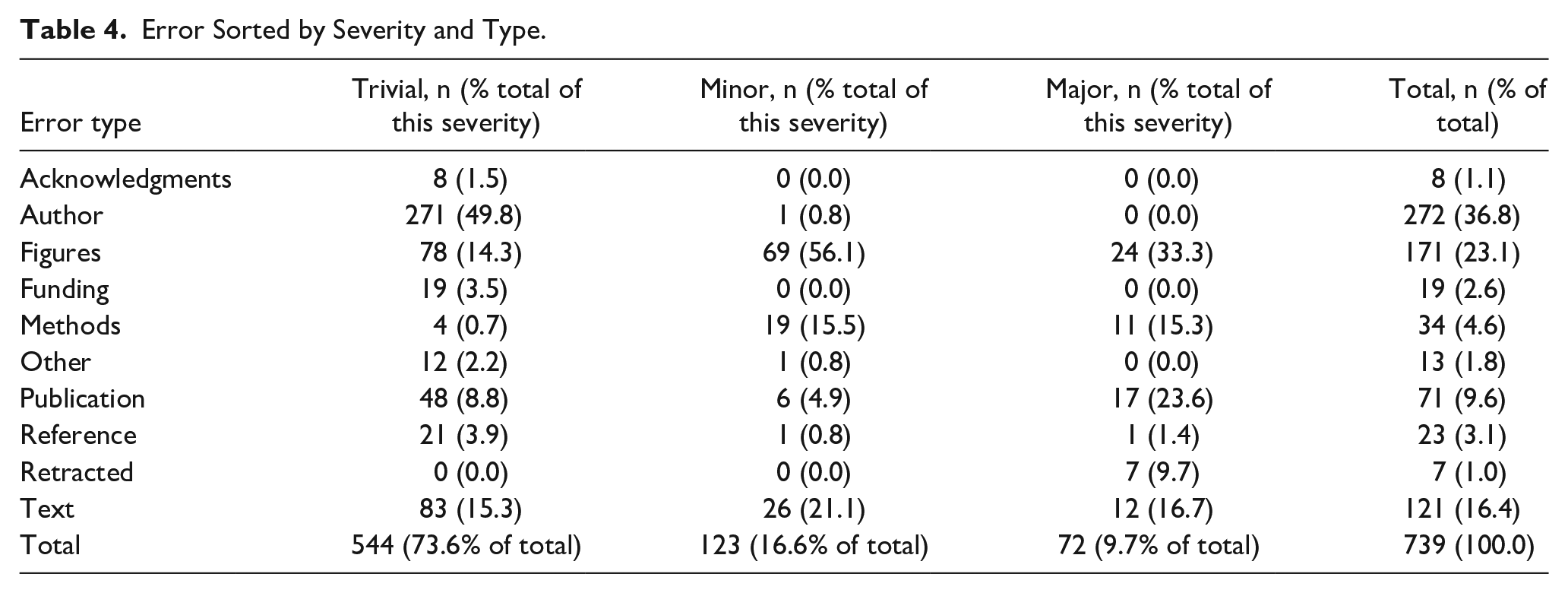

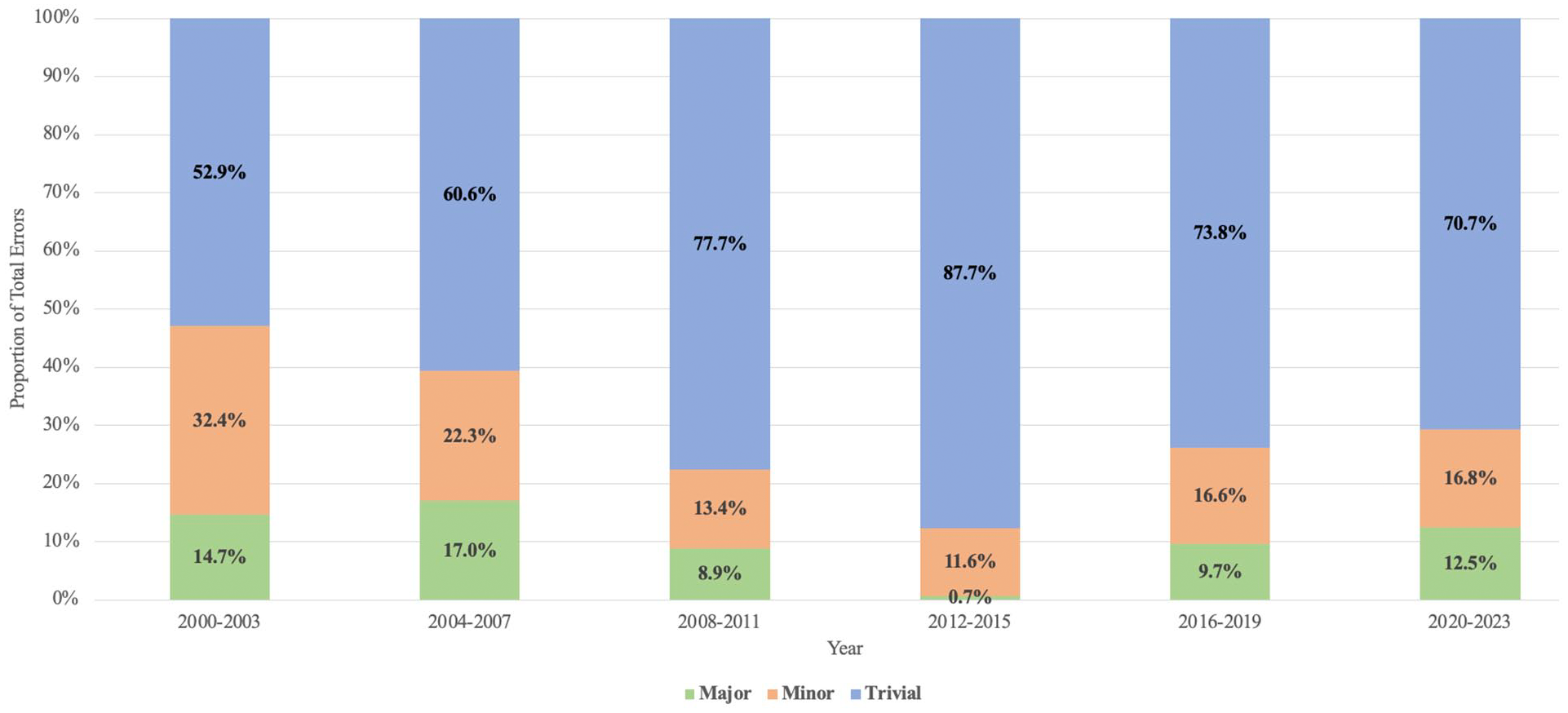

Detailed within Table 4 is the severity of each error, as categorized by this study’s first author. Most errors were “Trivial” (n = 544, 73.6% of total), followed by “Minor” (n = 123, 16.6% of total), and then “Major” (n = 72, 9.7% of total). Figure 2 demonstrates the relative proportions of errors, categorized by severity, over 4 year time intervals. Across the grades of error severity, no statistically significant difference was found with regard to journal IF (P = .293) or original article citation count (P = .139). A significant difference across error severities was found with regard to time duration from original article publication to error publication (P = .0045), with trivial errors demonstrating the shortest duration (9.5 ± 0.8 months) followed by major errors (13.6 ± 2.3 months) and then minor errors (15.3 ± 1.7 months).
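To illustrate the kind of comparison across severity grades reported above, the following is a minimal sketch of the one-way ANOVA F statistic. It is not the JMP analysis performed in this study; the function name is hypothetical and the months-to-correction values are invented for demonstration.

```python
from statistics import mean


def one_way_anova_f(groups):
    """Return (F, df_between, df_within) for a list of numeric groups."""
    all_values = [v for g in groups for v in g]
    grand_mean = mean(all_values)
    # Between-group sum of squares: group size times squared mean offset.
    ss_between = sum(len(g) * (mean(g) - grand_mean) ** 2 for g in groups)
    # Within-group sum of squares: squared deviations from each group mean.
    ss_within = sum((v - mean(g)) ** 2 for g in groups for v in g)
    df_between = len(groups) - 1
    df_within = len(all_values) - len(groups)
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within


# Hypothetical months from original publication to correction, NOT study data.
trivial = [1, 2, 3]
minor = [2, 3, 4]
major = [6, 7, 8]
f_stat, df_b, df_w = one_way_anova_f([trivial, minor, major])
```

The sketch stops at the F statistic and its degrees of freedom; converting F to a P value requires the F distribution, which statistical packages provide and which is omitted here.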

Errors Sorted by Severity and Type.

Error severity versus year of online publication of errors as stratified into 4 year groups.

Discussion

Growing publication rates are accompanied by an increased potential for error, with the inadvertent spread of these errors potentially affecting patient care.9,10 As corroborated by this study, rates of error publication (or at least recognition of errors) are increasing within OHNS.1,6 In our study, the aggregate PER for the 28 included journals demonstrated a positive trend, increasing from 0.41% in 2000 to 2003 to 0.89% in 2020 to 2023. Contributing factors include rising rates of manuscript publication, which increase the probability of errors, in tandem with enhanced detection capabilities afforded by artificial intelligence and widespread digital access, enabling greater identification of inaccuracies. Furthermore, broadening research output across the globe introduces varied standards, potentially contributing to inconsistencies and mistakes. 6 Together, these factors underscore the importance of stringent peer review and editorial vigilance to preserve the rapid yet reliable exchange of scientific knowledge.

To the authors’ knowledge, the present study is the first bibliometric evaluation of published errors within otolaryngology. The OHNS journals reviewed in this study published 739 errors since the year 2000, yielding a mean PER of 1.2% across all 28 journals. These rates varied considerably among the journals, ranging from 0.1% to 9.1%. In a similar analysis of the top 20 English-language general medicine and cardiovascular journals (sorted by IF) over an 18 month period, Hauptman et al found a mean PER of 4.2%. Similar to our study, the PER ranged considerably among the journals, from 18.8% with the Lancet to 0.0% with the Journal of Cardiovascular Electrophysiology. 5 In a similar study of 28 neurosurgical journals from 1990 to 2019, Akhaddar found a PER of 0.81% (range 0.08%-2.49%). 6 Ultimately, our study’s findings further corroborate the existence of a wide range of PER across the literature in multiple specialties. While likely multifactorial, variations in PER may be influenced by certain journals inherently possessing higher error rates and/or higher rates of error identification. Journals with lower IF may subject manuscripts to less stringent review processes prior to approval and subsequent publication.11,12 However, our study found no significant relationship between a journal’s IF and error frequency (P = .979). Study design may also contribute to variations in PER. More commonly read and cited study designs, such as randomized controlled trials, may undergo closer examination, leading to error discovery.13,14 In an analysis of viewership and citation rates between corrected articles and non-corrected controls published in PLoS ONE from September 2007 to November 2011, Strothmann found that articles with corrections attracted more readers and more citations than their non-corrected counterparts. 
This may suggest that, by publishing errors, journals can raise their visibility, perhaps also helping to explain the discrepancy in error publication rates across journals. 15 However, the correction process is not free, and some medical journals charge authors when they are responsible for errors, presenting a possible barrier to error publication.6,16 Furthermore, the ease with which researchers can identify published errors may also contribute to variations in PER. For example, the search term “errata OR erratum OR corrigenda OR corrigendum OR correction OR corrections” returned no results using JAMA Otolaryngology’s advanced search engine, despite the known existence of published “corrections.”17,18 Despite the guidelines created by the ICMJE and COPE to standardize error reporting,3,4 variation between journals persists. The wide variation in terminology used to report errors, ranging from “errata(um)” to “corrigenda(um)” to “correction(s),” sometimes all found within the same journal, contributes to inconsistencies in error reporting and complicates the process of identifying errors within published works, facilitating error dissemination and putting patients at unnecessary risk.

In this study, the primary source of errors was related to authorship (n = 272, 36.8% of all errors), specifically author spelling (57.9% of all authorship errors) and author-related affiliations (16.5% of all authorship errors). As postulated by Akhaddar in his review of published errors in 28 neurosurgical journals, this may be due to the swapping of forenames and surnames in many areas, such as China and South Asia, where authors predominantly write the surname first. 6 Interestingly, these inaccuracies originate with the authors themselves rather than the journal or publisher. While seemingly insignificant, inaccuracies like these hinder searching for a particular author and accurate citation of their work. 19 As suggested by Gasparyan et al, a potential solution may include digital profiles, such as the Open Researcher and Contributor ID (ORCID), that allow for the association of literature with specific authors. By linking published works directly to their respective author names, ORCID can help ensure consistency in authorship and help prevent author ambiguities or misspelling. 20

Another significant source of errors occurred in figures and tables, comprising 23.1% (n = 171) of all errors. As corroborated by other studies, evidence suggests that tables and figures are less likely to be critically examined for errors during copyediting and proofreading, often resulting in errors.5,6,10,12 In addition, when categorizing corrected articles based on their type, most (n = 568, 76.9%) were original research articles (manuscripts). Other studies have corroborated this finding, demonstrating original research articles to be the most commonly corrected article type.5,6 This predominance can likely be attributed to original research articles being the most frequent publications, as suggested by major publishers including Taylor & Francis 21 and Springer. 22 The errors within this study originated from 44 different countries (as determined by the affiliation of the first author of the corrected article). The United States emerged as the predominant contributor (n = 262, 36.1% of total), followed by the United Kingdom (n = 57, 7.9% of total), and then China (n = 41, 5.7% of total). Other studies have demonstrated similar outcomes. 6 Given the prolific scholarly output of the United States, which outproduces all other countries with over 1 million manuscripts annually and constituted nearly one-third of all global publications in 2008, we would naturally expect the United States to contribute significantly to the observed errors. Likewise, with China and the United Kingdom being major contributors to worldwide manuscript production (the second and third most prolific countries, respectively), their noticeable presence in error publication is also to be expected. 23

This study was also the first to analyze the severity of published errors within OHNS. “Trivial” errors were the most frequent (n = 544, 73.6% of total), followed by “Minor” errors (n = 123, 16.6% of total), and “Major” errors (n = 72, 9.7% of total). Almost all authorship errors (271/272, 99.6%) and most text errors (83/121, 68.6%) were categorized as “Trivial” due to their negligible effect on findings and interpretation. In both “Minor” and “Major” errors, figures were the most common error type, accounting for 56.1% (69/123) of “Minor” errors and 33.3% (24/72) of “Major” errors. Hauptman et al reported a “Major” error rate of 24.2% and an average PER of 4.2% after analyzing 20 high-impact general medicine and cardiovascular journals, notably higher than our “Major” error rate of 9.7% and our PER of 1.2%. These variations may be due to multiple reasons, including the subjective nature of determining error severity. For example, Hauptman et al considered 10 author disclosure/conflict of interest errors to be “Major” errors, whereas we primarily categorized them as “Trivial” and never as “Major.” The grading of error severity, as defined by Hauptman et al, is ultimately broad, allowing for considerable subjective interpretation of how error severity is classified. Furthermore, Hauptman et al used the search engine PubMed 24 to search for all “errata” reports, without specifying whether this included “corrigenda(um)” or “correction(s)” as well. Likewise, Hauptman et al analyzed journals with a higher average IF (12.23), whereas our selection had a lower average IF of 2.6. This difference may influence a study’s outcomes, as higher-ranking journals typically attract more viewership, potentially resulting in higher rates of error identification. In addition, Hauptman et al exclusively analyzed original research, meta-analyses, and review articles, which may influence error patterns. 
In contrast, in their review of 158 errors within 5 clinical imaging journals, Castillo et al identified a “Major” error rate of 6.3%. However, their error classification omitted the “Trivial” category and utilized different definitions for “Minor” (typographical, factual, image-related) and “Major” (statistical calculations and foundational errors) errors. Furthermore, their study was limited to examining “errata(um)” only. Despite these differences in methodology, Castillo et al reported a PER of 1.77%, close to our reported PER of 1.2%. Regardless of how individual studies define and count errors, there remains a need for more standardized error reporting to minimize the potentially significant impact that these inaccuracies can have.

To further explore possible relationships with error severity, our study included multiple analyses addressing this point. The severity of published errors was not found to be associated with journal IF (P = .293), suggesting that errors, regardless of their classification as major, minor, or trivial, are not exclusive to journals with lower IFs but are rather a widespread phenomenon across all journals. This observation suggests that the propensity for errors is a universal challenge within academic publishing, underscoring the need for rigorous editorial standards and quality control measures across all journals. Moreover, the frequency of citations (an indicator of an article’s influence and reach) was not associated with error severity (P = .139), suggesting that high citation counts do not safeguard articles from the presence of errors, nor are errors the purview of less frequently cited works. Instead, the prevalence or identification of errors across articles with varying citation counts may reflect the inherent complexities of research publication and the potential oversight at multiple stages of the editorial process. Notably, the duration between the original article’s publication date and the publication of the error differed significantly by error severity (P = .0045), with trivial errors being addressed more expeditiously (9.5 ± 0.8 months) than major (13.6 ± 2.3 months) or minor errors (15.3 ± 1.7 months). Trivial errors, often pertaining to authorship issues (constituting 49.8% of such cases), are presumably identified easily and rectified more promptly because they are relatively simple to amend. In contrast, major or minor errors, which may involve more complex revisions and have a more pronounced impact on the research’s integrity, demand a more extensive review process, which could account for the longer correction timescales. 
These insights highlight the nuanced nature of error corrections in academic literature and point to the importance of continuous monitoring and improvement of the publication process. This underscores the essential role of timely and effective correction mechanisms to maintain the integrity of scientific communication.

This study is not without limitations. Some publications may have been overlooked due to errors in search and due to, as discussed above, the lack of a standardized classification of errors. Despite this study’s investigation of “errata(um),” “corrigenda(um),” and “correction(s),” there may exist further published errors not captured by these terms. Notably, “publisher’s notes,” acknowledged by COPE 4 as a distinct category of potential error type, were deliberately excluded from our study. This exclusion was justified on three grounds: publisher’s notes are infrequent, limited to only a few entries for a small number of journals; their content is often irrelevant, conveying journal formatting guidelines rather than specific article corrections; and their use is indiscriminate, with certain journals issuing a publisher’s note for each article that includes a generic statement not pertinent to the content of the article itself. In addition, it is difficult to know whether the increasing number of errors is a true reflection of more errors being made, or of increased vigilance in detecting and reporting these errors. In general, there is a lack of transparency (at least to the public) about how errors are identified and by whom. This presents an opportunity for publishers to create an environment in which error identification (by authors or readers) is encouraged, or even rewarded. Journals may elect to recognize readers who report verified errors, either in print or via personal communication. Even if reporting anonymously, readers should feel emboldened to alert publishers to the possibility of error. Furthermore, the categorization of the published errors into “Trivial,” “Minor,” and “Major” is subjective and may differ when classified by other readers. Likewise, the error categorization was performed by only one author. 
Given this subjectivity, a more extensive review with multiple reviewers would provide a better understanding of the severity of error outcomes on medical literature. Likewise, given the time delay between publication of the original article and the error (an average of 11 months according to this study), not all errors may have been published yet. Given the limitation of this study’s findings to errors published after 2000, no trends in errors within otolaryngology before the year 2000 can be drawn.

Conclusion

To err is human, but in medical publishing, promptly addressing errors is vital to maintain scientific integrity. Despite a low error rate, averaging 1 error per 87 articles, almost 10% of errors published in leading otolaryngology journals significantly affected the article’s findings or interpretation. Error rates varied substantially among journals, despite attempts at standardization of error publication by the ICMJE 3 and COPE. 4 Ultimately, while major errors are relatively infrequent, the field of Otolaryngology may benefit from better standardizing error communication to facilitate identification of published errors.

Footnotes

Acknowledgements

None.

Author Contributions

M.A.B.: conception, design, data collection, data analysis, drafting, proofing/revision. J.E.F. and D.H.C.: conception, design, data analysis, drafting, proofing/revision.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.