Abstract

Research featuring adults with intellectual and developmental disabilities who engage in problem behavior has outlined various treatment approaches. The current quantitative systematic literature review identified and coded 76 peer-reviewed and gray literature articles published between January 2002 and September 2022. Following article identification and coding, we calculated effect size estimates (i.e., Tau Baseline Corrected) and assessed the methodological rigor of included articles. Through this work, we uncovered 42 unique multi-protocol treatments (i.e., treatments incorporating multiple therapeutic elements). Multi-protocol treatments were associated with larger effect sizes (more effective) compared to single-protocol treatments. The average methodological rigor score associated with peer-reviewed works was 1.6 (out of 4), while gray literature works scored 1.2. We offer commentary in response to these outcomes, alongside recommendations for future research to address the many avenues of inquiry that appear to remain largely neglected (e.g., component analysis to evaluate individual treatment elements and their efficacy).

Keywords

Intellectual and developmental disabilities are characterized by deficits in cognitive functioning (i.e., reasoning, problem solving) that result in impairments of adaptive functioning (i.e., challenges in meeting standards of personal independence and maintaining positive relationships; American Psychiatric Association, 2013). Individuals with intellectual and developmental disabilities may engage in problem behavior that can present as a variety of topographies, including but not limited to aggression, self-injurious behavior (SIB), or property destruction (Newcomb & Hagopian, 2018). In fact, up to 25% of adults with intellectual and developmental disabilities engage in problem behavior (O’Dwyer et al., 2018). This can limit their ability to effectively participate in their communities, reduce opportunities to learn new skills, interfere with engagement in meaningful activities, and lead to lower quality of life (O’Dwyer et al., 2018). As such, treating problem behavior may be of value because effective treatment can offset negative outcomes, and possibly facilitate safe community reintegration (Moore et al., 2020).

Behavior analytic theory suggests problem behavior may develop and persist for a variety of specific purposes, also called behavior functions. This perspective informs treatment approaches. In fact, best-practice recommendations in applied behavior analysis encourage a function-based approach to treating problem behavior (Melanson & Fahmie, 2023). Through this lens, behavioral practitioners and researchers alike enact what may be referred to as antecedent (e.g., non-contingent reinforcement; Newman et al., 2021) and/or consequence-based approaches (e.g., differential reinforcement; Long et al., 2005). Antecedent treatments have been described as proactive strategies implemented before problem behaviors. The idea is to alter environmental conditions to reduce the likelihood that these problem behaviors occur. Consequence-based approaches typically involve altering outcomes following a problem behavior to influence the future likelihood of the behavior. Relatedly, consequence-based treatments may be described as reinforcement-based, where reinforcement has been defined as the presentation or removal of stimuli to increase the likelihood of future behavior (Cooper et al., 2020). They may also be described as punishment-based, wherein the presentation or removal of stimuli decreases the future likelihood of problem behavior (Cooper et al., 2020). Importantly, best practice in the application of punishment-based treatments instructs behavioral practitioners to apply this technique in concert with reinforcement-based strategies (Thompson et al., 1999). Presently, it appears contemporary research has fully adopted this practice (Ayvaci et al., 2024). As it stands, function-based behavior analytic treatment strategies have been applied in various contexts with diverse components (e.g., Muharib & Gregori, 2022).
However, it is imperative for researchers to periodically examine and evaluate the available evidence on a given topic so that an objective summary of the current state of knowledge may be disseminated. This process is often referred to as conducting a systematic literature review, and embarking on this work may facilitate evidence-based decisions while also identifying existing research gaps (Pigott & Polanin, 2020). The following section is not an exhaustive overview of existing review papers that feature the array of potential behavior analytic treatment outcomes, as this goes beyond the scope of the current project. Our intention is to briefly provide readers with some insights into the adult problem behavior treatment research to date, and corresponding research gaps that our project endeavored to address.

Existing Systematic Literature Reviews

Functional communication training (FCT) may be described as a function-based treatment that allows individuals to access the reinforcer that is maintaining their problem behavior through appropriate communication (Cooper et al., 2020). In 2011, Kurtz et al. conducted a review on this topic. Through this project, the authors concluded that more research featuring adults with intellectual and developmental disabilities was required. Gerow et al. (2018) followed up on this work by examining FCT articles published between 1985 and 2017, while also applying What Works Clearinghouse standards to comment on research quality. Unfortunately, research completed during the approximately 7-year gap between the Kurtz et al. (2011) and Gerow et al. (2018) publications does not appear to have addressed the scarcity of research featuring adults on this topic. This was evidenced by Gerow et al. (2018) reiterating Kurtz et al.’s (2011) sentiment regarding the need for further research on the efficacy of FCT in treating adults with intellectual and developmental disabilities who engage in problem behavior. More recently, a

Further, to our knowledge, no reviews that examined this clinical population evaluated treatment type using standardized measures (e.g., effect size [ES] estimates). This may be problematic because an objective evaluation of treatment effectiveness may be limited without the inclusion of coefficients meant to quantify study outcomes. Effect size measures can better quantify treatment impact, potentially enhancing the interpretation of research findings for practical application. Further, neglecting to quantify outcomes by calculating ES estimates may preclude reviews that feature single-case design studies from being included in cross-disciplinary meta-analyses (Dowdy et al., 2021). This is problematic given that the bulk of problem behavior treatment literature leverages single-case design methodology. Finally, including ES estimates may enhance single-case design review visibility (Dowdy et al., 2021). In addition to ES, strong reviews will often also feature rigor assessment because it may serve to enhance the credibility of a review’s findings. For example, if the only studies wherein a large ES was observed were poorly executed (lacked rigor), this may cast doubt on the overall benefit of that treatment type. It follows that both aspects (ES and rigor assessment) are required when determining whether a treatment meets evidence-based practice standards (e.g., Kratochwill et al., 2010). That is, a treatment may be labeled as evidence-based upon observing multiple high-quality studies demonstrating consistent effects.

Other limitations associated with existing reviews that targeted a similar topic include covering only specific treatment types (e.g., Chowdhury & Benson, 2011; Gerow et al., 2018; Gregori et al., 2020) and covering participants of all ages. The latter may be identified as an area for improvement because it may have prohibited detailed examination of relevant adult participant and study characteristics (e.g., Kurtz et al., 2011; Lloyd & Kennedy, 2014). Examining adult participant and study characteristics may add value by uncovering treatment needs and/or research gaps unique to this clinical population, as well as to subgroups within this clinical population (e.g., medication present vs. medication absent).

Research Questions

This systematic literature review examined the following research questions: (1) What are the adult participant and study characteristic trends in the problem behavior treatment literature? (2) Which treatment types coincide with the largest (or smallest) ES estimate? (3) What are the ES estimates associated with peer-reviewed vs. gray literature? and (4) What are the Single-Case Analysis and Review Framework (SCARF) scores associated with peer-reviewed vs. gray literature?

Method

Search Procedure

Ian Gordon, a Resource Librarian at Brock University, supported the development of a search string (see Table 1). We applied the search string across five databases (Medline, Embase, Web of Science Core Collection, ERIC, and ProQuest) to identify eligible peer-reviewed and gray literature. The search string was applied to article keywords and abstracts and included terms pertaining to three overarching categories: diagnosis of intellectual and developmental disorder, the presence of problem behavior, and treatment (see Table 1). Importantly, uncovering specific works featuring adult participants can be challenging (Briggs & Mitteer, 2022), in part because the term “adult” is not often used as a keyword or anywhere in papers featuring this clinical population. For this reason, we elected not to include any terms pertaining to age in our search string.

Article Identification Details.

Article Identification, Screening, and Coding

Article Identification

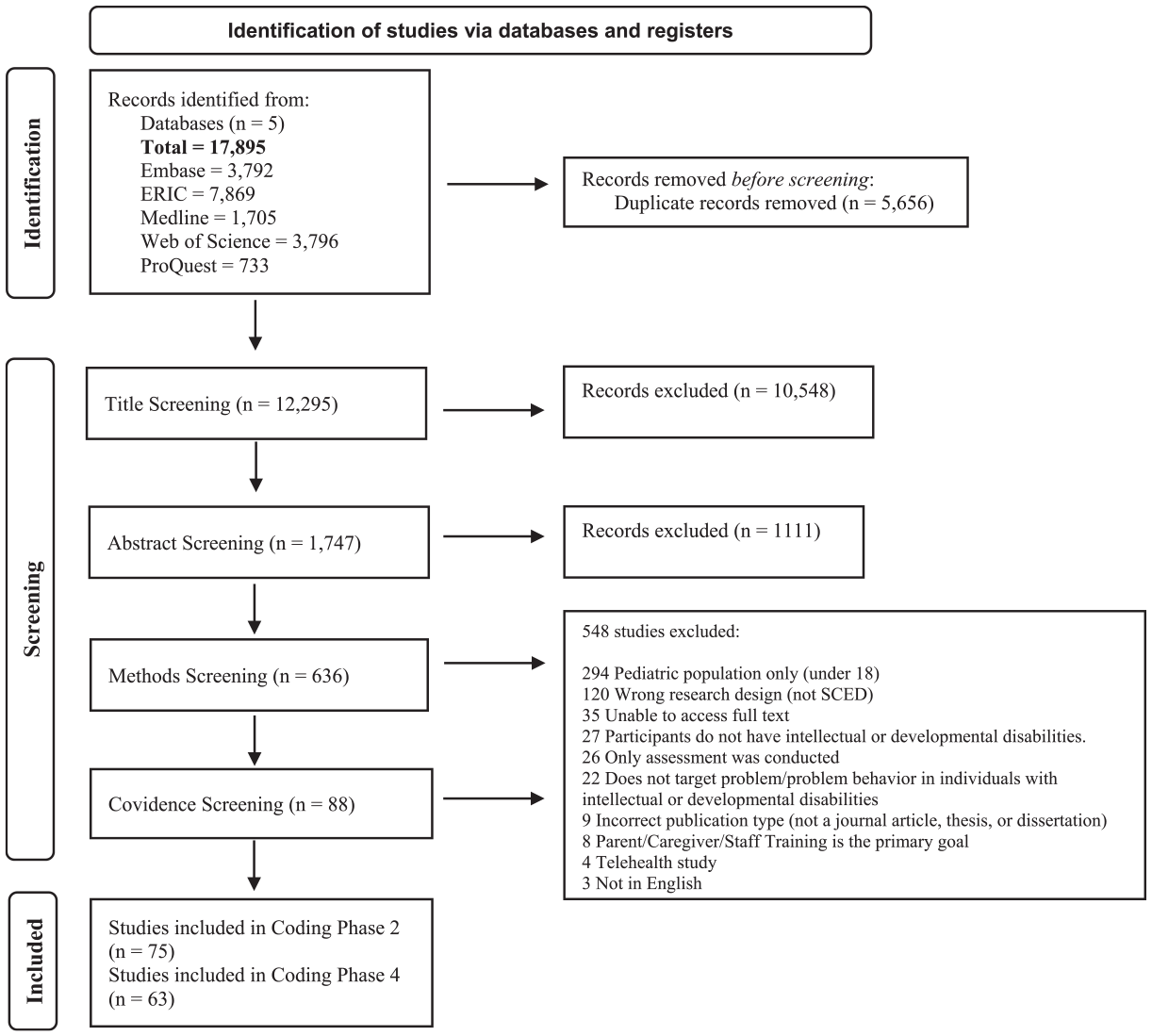

During pre-screening, articles were filtered by publication type (e.g., articles, books, etc.) through Zotero (see Figure 1). Peer-reviewed journal articles were included, while theses and dissertations were included as gray literature.

Article flowchart.

Screening

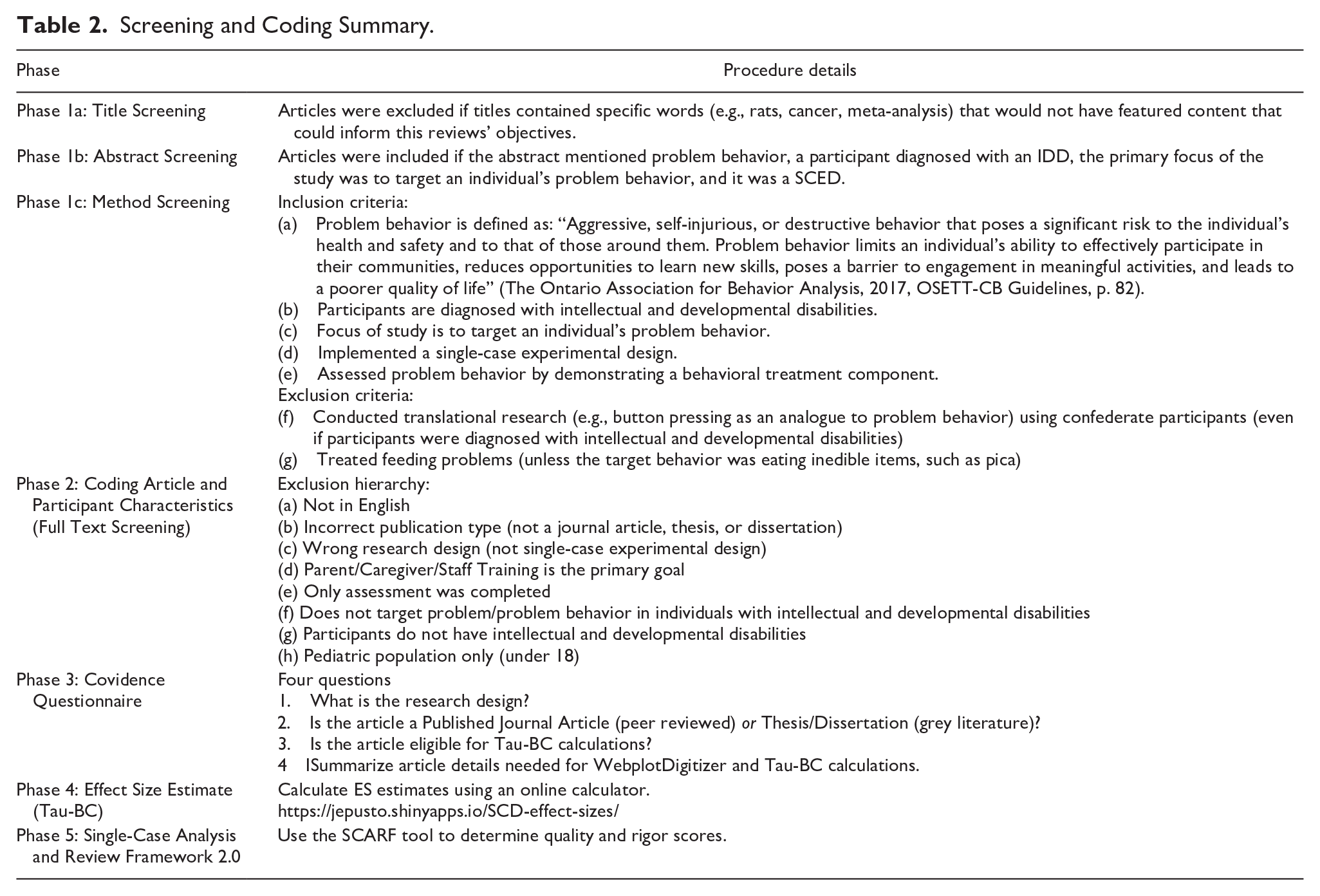

Phase 1a, 1b, and 1c

Phase 1 included title (1a), abstract (1b), and methods (1c) screening (see Table 2). We also conducted a hand search of the reference sections of all articles included in the Muharib and Gregori (2022) review paper, which yielded a total of 10 articles that were overlooked by our search string. For example, our search string did not identify Wallace et al. (2012) because search terms relating to disability and diagnosis (e.g., autism) were not present in the abstract or keywords even though participants with these diagnoses were featured in the paper. The decision to exclude group design studies from the review was based on several considerations, including the fact that group design research frequently uses standardized measures to report findings whereas single-case experimental design (SCED) research often relies on repeated direct observation, which can make direct comparisons difficult (Ledford & Gast, 2018; Van den Noortgate & Onghena, 2008).

Screening and Coding Summary.

Supplemental material of the coded article list has been submitted alongside this manuscript and is available upon request through the journal.

Article Coding

Phase 2: Coding Article and Participant Characteristics

During this phase, we extracted information on participant ages (years and months), problem behavior topography, setting where the treatment was implemented, presence of a functional analysis (FA), problem behavior function, and treatment type. Treatment types were coded based on how the original author labeled (i.e., tacted) the procedures, resulting in 29 categories. Overall, we categorized and coded study and participant characteristics in accordance with Cox et al. (2021), with some additions such as pharmacological treatment status and maintenance. Further, we applied multiple response coding for relevant categories to permit commentary on the presence of individual treatment components. Treatments were also categorized as antecedent-, reinforcement-, or punishment-based.

Single and Multi-Protocol Treatments

Once descriptive data were coded, articles were sorted as single or multi-protocol treatments. In other words, we determined whether the treatment consisted of one component (single-protocol) or more than one component (multi-protocol). For example, Wallace et al. (2012) implemented a multi-protocol treatment by enacting FCT with extinction. By contrast, Bailey et al. (2002) implemented FCT without the use of extinction, exemplifying a single-protocol treatment. Given FCT is usually used with extinction (Kurtz et al., 2011), we assumed extinction was implemented unless otherwise specified (e.g., Muharib & Gregori, 2022).
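The sorting rule described above may be illustrated with a minimal Python sketch. The function name, the component labels, and the `extinction_explicitly_absent` flag are hypothetical and were introduced only for illustration; they do not reflect the actual coding sheet used in this review.

```python
def classify_protocol(components, extinction_explicitly_absent=False):
    """Label a treatment as 'single-protocol' or 'multi-protocol'.

    components: treatment labels as tacted by the original authors
        (hypothetical strings such as "FCT" or "extinction").
    extinction_explicitly_absent: True when the original authors stated
        that extinction was not used (hypothetical flag).
    """
    parts = {c.strip().lower() for c in components}
    if not parts:
        raise ValueError("at least one treatment component is required")
    if extinction_explicitly_absent:
        parts.discard("extinction")
    elif "fct" in parts:
        # Assumption taken from the text: FCT implies extinction
        # unless the original authors specified otherwise.
        parts.add("extinction")
    return "multi-protocol" if len(parts) > 1 else "single-protocol"


print(classify_protocol(["FCT", "extinction"]))  # multi-protocol
print(classify_protocol(["FCT"], extinction_explicitly_absent=True))  # single-protocol
```

Under this sketch, an article that mentions only FCT and is silent on extinction would be sorted as multi-protocol, which mirrors the default assumption stated above.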

Multiple Response Coding: Percentage Base

The research assistants coded variables (e.g., problem behavior topographies, setting, treatment type) that were often mentioned multiple times across a single article. For example, in Buck (2017) there were two adult participants. One engaged in aggression toward others and self, and the other engaged in aggression toward others and self, as well as property destruction. To retain as much information as possible, in this case account for all problem behavior topographies, we applied a

Phase 3: Covidence Questionnaire

The Covidence Questionnaire was used to identify publication type and reveal whether an article met criteria for Tau-Baseline Corrected (Tau-BC) calculations. Tau-BC criteria included (a) individual data reported for each participant, and (b) a minimum of three baseline and three treatment data points (see Parker et al., 2011 for more information on Tau). If the original study authors only reported percentage reduction of problem behavior (e.g., 95% reduction in problem behavior from baseline to treatment) or only indicated average values for treatment phases (e.g., Feldman et al., 2002), the article was excluded because this did not allow for Tau-BC calculations. Alternatively, if the dependent variable was recorded as percentage of intervals across sessions (e.g., Harper et al., 2013), or individual data were reported for each participant, the article was included as there were sufficient data points for Tau-BC calculations. The questionnaire also prompted research assistants to report the number of adult participants, relevant treatment phases, and page numbers for the applicable graphs.
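The two Tau-BC eligibility criteria above can be expressed as a simple check. The following Python sketch is illustrative only; the function name and its parameters are hypothetical and are not part of the Covidence Questionnaire.

```python
def meets_tau_bc_criteria(baseline, treatment,
                          individual_data_reported=True, min_points=3):
    """Return True when a participant dataset satisfies both criteria:
    (a) individual (session-level) data are reported, and
    (b) at least `min_points` baseline and `min_points` treatment data points.
    """
    if not individual_data_reported:
        # Aggregate-only reports (e.g., phase means, percentage reduction)
        # do not permit a Tau-BC calculation.
        return False
    return len(baseline) >= min_points and len(treatment) >= min_points


# Hypothetical session values, for illustration only.
print(meets_tau_bc_criteria([12, 10, 11], [4, 3, 2, 2]))  # True
print(meets_tau_bc_criteria([12, 10], [4, 3, 2, 2]))      # False
```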

Tau-BC

Single-case experimental design ES estimates represent the amount of change from baseline (akin to a control condition) to treatment (Vannest & Sallese, 2021). However, unlike a group design ES coefficient, clear guidelines are not available to indicate the most appropriate ES or method for SCED (Becraft et al., 2020; Manolov & Solanas, 2018; Tarlow, 2017). As such, there exist several ES estimate options, each with unique advantages and disadvantages (Dowdy et al., 2021). One ES estimate example is Tau-BC. We elected to employ this ES estimate for several reasons. First, the approach uses a robust nonparametric estimator instead of a least squares regression. Given fewer assumptions may be associated with nonparametric statistical procedures, outcomes generated via Tau-BC may be less affected when the data informing the analysis do not adhere to inferential statistic assumptions (Tarlow, 2017). Further, the Tau family of statistics (including Tau-U and Tau-BC) is the primary means used to detect whether an effect is present or not in SCED (Costello et al., 2022). There are several other relevant advantages and disadvantages associated with this ES estimate. However, a comprehensive description goes beyond the scope of the current paper (interested readers may review Dowdy et al., 2021).

We enacted several steps that ultimately informed the ES estimate that was selected. First, we considered the advantages and disadvantages in relation to our datasets and the project’s aims (e.g., Dowdy et al., 2021). Following this, a random sample (10%) of articles was drawn from a similar, brief review (i.e., Cox et al., 2021). We evaluated these randomly selected articles to determine how many of the participant datasets lent themselves to generating an ES estimate. Applying Tau-BC appeared to retain the largest sample. That is, we could produce an ES for most datasets featured in the random sample (83%) compared to other ES estimate options (e.g., between-case standardized mean difference; Dowdy et al., 2021). Importantly, the online calculator we used to generate Tau-BC effect sizes (https://ktarlow.com/stats/tau/) was designed to apply baseline corrections only for datasets wherein this was an appropriate adjustment (Tarlow, 2017). We decided to use the term Tau-BC when referencing ES estimates henceforth, to communicate to readers that each dataset was carefully examined for the potential need for baseline correction even though corrections were not always applied (interested readers are referred to Tarlow, 2017 for further information).
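To make the baseline-correction logic more concrete, the following Python sketch illustrates the general idea. It is not the code behind the online calculator, and it rests on several assumptions: (a) Kendall's tau-b is computed between a phase indicator (0 = baseline, 1 = treatment) and the session values; (b) when a correction is warranted, a Theil-Sen line fitted to baseline is subtracted from both phases; and (c) the decision to correct is supplied by the caller through the hypothetical `correct_baseline_trend` flag instead of being derived from a significance test of baseline trend. Readers should consult Tarlow (2017) for the actual procedure.

```python
from itertools import combinations
from statistics import median


def kendall_tau_b(x, y):
    """Kendall's tau-b, computed directly from all pairs of observations."""
    concordant_minus_discordant = 0
    untied_x = untied_y = 0
    for (x1, y1), (x2, y2) in combinations(zip(x, y), 2):
        dx, dy = x2 - x1, y2 - y1
        untied_x += dx != 0
        untied_y += dy != 0
        concordant_minus_discordant += (dx * dy > 0) - (dx * dy < 0)
    denominator = (untied_x * untied_y) ** 0.5
    return concordant_minus_discordant / denominator if denominator else 0.0


def theil_sen(t, y):
    """Theil-Sen line: median pairwise slope and median-based intercept."""
    slopes = [(y2 - y1) / (t2 - t1)
              for (t1, y1), (t2, y2) in combinations(zip(t, y), 2)]
    slope = median(slopes)
    intercept = median(yi - slope * ti for ti, yi in zip(t, y))
    return slope, intercept


def tau_bc(baseline, treatment, correct_baseline_trend):
    """Tau between phase and outcome, optionally after removing baseline trend."""
    n_a, n_b = len(baseline), len(treatment)
    if n_a < 3 or n_b < 3:
        raise ValueError("need at least three data points per phase")
    values = list(baseline) + list(treatment)
    if correct_baseline_trend:
        slope, intercept = theil_sen(range(1, n_a + 1), baseline)
        # Subtract the projected baseline trend from every session.
        values = [v - (intercept + slope * t)
                  for t, v in enumerate(values, start=1)]
    phase = [0] * n_a + [1] * n_b
    return kendall_tau_b(phase, values)


# Hypothetical data: behavior already rising during baseline.
print(tau_bc([2, 4, 6, 8], [9, 9, 9, 9], correct_baseline_trend=False))
print(tau_bc([2, 4, 6, 8], [9, 9, 9, 9], correct_baseline_trend=True))
```

In this hypothetical dataset, the uncorrected coefficient is positive because treatment values exceed baseline values, whereas the corrected coefficient is negative because treatment values fall below the projected baseline trend. Note that in this sketch a reduction in behavior yields a negative coefficient; any sign reversal for reporting purposes would be a separate convention.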

Phase 4: Effect Size Estimate (Tau-BC)

Raw Data Extraction

We used WebPlotDigitizer to extract the data from eligible articles to generate Tau-BC coefficients. Following this, we used an online ES calculator to automate generating Tau-BC coefficients, and their corresponding standard error and 95% confidence intervals (CI; Pustejovsky et al., 2021). We entered data from baseline and

Effect Size Benchmarks

We opted to apply the benchmarks outlined by Vannest and Ninci (2015) due, in part, to similarities in population and problem behavior. Thus, a small ES was ≤ 0.19, a moderate ES was between 0.20 and 0.59, a large ES was between 0.60 and 0.80, and a very large ES was ≥ 0.81.
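For illustration, the benchmarks can be written as a small lookup, treating 0.81 and above as very large. The function name is hypothetical, and the sketch assumes that coefficients are rounded to two decimals and reported such that larger positive values indicate greater improvement.

```python
def classify_effect_size(es):
    """Map a Tau-BC coefficient onto the benchmark labels used in this review."""
    value = round(es, 2)  # benchmarks are stated to two decimals
    if value <= 0.19:
        return "small"
    if value <= 0.59:
        return "moderate"
    if value <= 0.80:
        return "large"
    return "very large"


for es in (0.15, 0.27, 0.64, 0.81):
    print(es, classify_effect_size(es))
```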

Confidence Intervals and Standard Error

Recently, Walker (2016) discussed CIs for Kendall’s tau with small samples. This author concluded that for all Tau scores, as sample size increases the CI becomes narrower; a narrower CI may suggest greater certainty regarding the ES (Field, 2018). In the current context, this may mean that if a CI crosses zero it suggests that the

Phase 5: Single-Case Analysis and Review Framework 2.0

We applied SCARF 2.0 (Ledford et al., 2020) to assess the study quality and rigor within and across all included articles that qualified for ES calculations. This tool generally comprises 47 questions across ten categories with dichotomous responses (yes and no as the possible responses). Categories include participant description, dependent variable description, dependent variable reliability, condition descriptions, independent variable reliability (fidelity), social and ecological validity, stimulus generalization, response generalization, maintenance, and sufficiency of data (Ledford et al., 2020). Scores could range between 0 and 4. Scores closer to 0 coincide with low rigor and scores closer to 4 indicate higher quality evidence. Previous researchers have designated various minimum acceptable scores as review inclusion criteria (Chazin, Ledford, & Pak, 2021; Chazin, Velez, & Ledford, 2021). However, given few researchers have attempted to empirically establish acceptability cut-off scores, we decided to include all articles independent of their SCARF score. At the same time, for the purpose of commenting on quality and rigor, we elected to designate the value of 1.5 or above as an indicator of acceptable or good quality (Chazin, Ledford, & Pak, 2021). Interested readers may reference Ledford et al. (2020) for further information on this quality appraisal tool.
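As a brief illustration of how the 1.5 threshold may be applied when summarizing rigor by publication type, consider the following Python sketch. The scores shown are hypothetical and do not correspond to any article in this review; the function name is likewise an assumption. The sketch does not reproduce how SCARF converts item responses into a 0 to 4 score.

```python
from statistics import mean

ACCEPTABLE_SCARF = 1.5  # threshold for acceptable or good quality, per the text


def summarize_scarf(scores_by_type):
    """Summarize SCARF scores (0 to 4) for each publication type."""
    summary = {}
    for publication_type, scores in scores_by_type.items():
        if any(not 0 <= s <= 4 for s in scores):
            raise ValueError("SCARF scores must fall between 0 and 4")
        summary[publication_type] = {
            "n": len(scores),
            "mean": round(mean(scores), 2),
            "n_acceptable": sum(s >= ACCEPTABLE_SCARF for s in scores),
        }
    return summary


# Hypothetical scores, for illustration only.
example = {"peer-reviewed": [1.0, 1.5, 2.5], "gray": [0.5, 1.5]}
print(summarize_scarf(example))
```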

Interobserver Agreement for Coding

Research assistants were trained virtually using behavioral skills training steps (i.e., didactic, modeling, rehearsal, feedback; Parsons et al., 2012). The first author facilitated individual research assistant training for each phase of the study.

Interobserver agreement (IOA) was conducted for 31% to 45% of each phase of the review.

Trial-by-trial IOA was applied in all phases except for Phase 2 where total count IOA was conducted. Average agreement across all phases ranged from 79% to 100%. We resolved conflicts across phases through consensus between the first author and the senior researcher on the project (BCBA-D).
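The two agreement indices named above are conventionally calculated as shown in the following Python sketch. These are the standard textbook definitions, offered under the assumption that they match the calculations applied in this review; the function names and example codes are hypothetical.

```python
def trial_by_trial_ioa(coder_a, coder_b):
    """Percentage of items (trials) on which two coders recorded the same code."""
    if not coder_a or len(coder_a) != len(coder_b):
        raise ValueError("both coders must rate the same non-empty set of items")
    agreements = sum(a == b for a, b in zip(coder_a, coder_b))
    return 100 * agreements / len(coder_a)


def total_count_ioa(count_a, count_b):
    """Smaller total count divided by larger total count, as a percentage."""
    if count_a == count_b:
        return 100.0  # also covers the case where both counts are zero
    return 100 * min(count_a, count_b) / max(count_a, count_b)


# Hypothetical screening decisions for four articles.
first = ["include", "exclude", "include", "include"]
second = ["include", "exclude", "exclude", "include"]
print(trial_by_trial_ioa(first, second))  # 75.0
print(total_count_ioa(8, 10))             # 80.0
```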

Results

Applying the search string to five databases across the years 2002 to 2022 yielded a total of 17,895 articles (see Figure 1). These years were selected as they cover only contemporary articles (i.e., no more than 20 years old; King et al., 2020), ensuring relevance to current practices and methodological advancements. This is meaningful because research standards are continuously improving, making it important to review articles in an equitable manner (King et al., 2020). The percentage of adult participants featured across all behavior analytic problem behavior treatment literature was relatively low across years (~13%). This outcome suggests articles on this topic (i.e., problem behavior treatment) appear to continue to primarily feature child participants.

Phase 2: Coding Article and Participant Characteristics

Overall, 76 articles met criteria for Phase 2: Coding Article and Participant Characteristics, 69 peer-reviewed articles and 7 gray literature papers. In short, they featured a SCED with at least one

Problem Behavior Topographies

Aggression (28%) and SIB (26%) appeared most often across the sample. Many studies discussed more than one problem behavior per participant. In fact, 28% of the sample featured adult participants who engaged in both aggression and SIB, and these behaviors were often recorded as separate dependent variables. Seventy-four percent of the sample reported other problem behavior topographies which included but were not limited to disruptive behavior (e.g., Crutchfield, 2014), property destruction (e.g., Joy, 2009), verbal aggression (e.g., Buck, 2017), inappropriate sexual behavior (e.g., Busch et al., 2022), and elopement (e.g., Lehardy et al., 2013).

Setting

The most prevalent treatment setting was a residential/state environment (32% of the sample; Harper & Luiselli, 2019), followed by outpatient/day programs (23% of the sample; Jimenez, 2011), and research/treatment centers or therapy rooms (15% of the sample; Kliebert et al., 2011). Fifteen percent of the sample reported multiple settings (e.g., Jonathan received treatment at home and in the community setting; Joy, 2009).

Functional Analysis and Behavior Function

Behavior function was confirmed via FA across 79% of the sample. Authors of the remaining 21% of the sample did not complete an FA, but instead conducted other functional behavior assessment components, including structured interviews and/or direct observations (e.g., Scibelli et al., 2022; Travis & Sturmey, 2013). Author rationale for electing to forgo FA included completion of a functional behavioral assessment instead (e.g., Scibelli et al., 2022) or a lack of ethics clearance to conduct an FA (e.g., Courtemanche, 2012). Fifty-five percent of this sample mentioned problem behavior maintained by a single function, and 45% discussed problem behavior maintained by multiple functions.

Maintenance

Maintenance was conducted and reported in 37% of the sample (e.g., Schmidt et al., 2021). In some articles, maintenance was only completed for one participant (Feldman et al., 2002).

Multi Versus Single-Protocol Treatments

Seventy-six percent of the sample employed multi-protocol treatments, while the rest were single-protocol treatments. Table 3 outlines the treatment types observed, separated into antecedent, reinforcement, and punishment categories. Thirty-one percent of the sample included an antecedent treatment component (e.g., choice, non-contingent reinforcement). Fifty-one percent of the sample included a reinforcement treatment component (e.g., differential reinforcement, FCT). Eighteen percent of the sample included a punishment element in their treatments (e.g., response cost, response blocking). Taken together, FCT (with and without extinction) was the most frequently applied treatment type and was reported across 18% of the sample (e.g., Chezan et al., 2014; Conklin & Mayer, 2011). Video modeling, negative reinforcement, physical restraint, verbal reprimand, overcorrection, and mechanical restraints were each observed in 1% of the sample.

Breakdown of Categorization of Treatment Types.

Phases 3 and 4: Covidence Questionnaire and Effect Size Estimate (Tau-BC)

In applying the Covidence Questionnaire (Phase 3), 64 of the 76 articles that had met eligibility for Phase 2 screening met criteria for undergoing Phase 4. That is, these 64 articles (57 peer-reviewed articles, 7 gray literature articles) featured sufficient information to generate a coefficient. There were eight articles wherein the original authors provided aggregate data. We contacted all eight, and two of the eight authors graciously provided the requested raw data.

Effect Size Estimates

A participant could contribute more than one ES. That is, if the original study authors reported more than one problem behavior topography (e.g., Bob and Percy; De Wein & Miller, 2009), or more than one treatment was applied and compared (e.g., Bailey et al., 2002), these would be considered separate

Single and Multi-Protocol Treatments

Eighteen percent of the Phase 4: Effect Size Estimate (Tau-BC) sample (

Regarding multi-protocol treatments, antecedent approaches were never applied without concurrent consequence-based reinforcement (e.g., differential reinforcement) elements and/or punishment elements. Reinforcement approaches were most frequently applied (80% of the sample), with punishment approaches applied across 2% of the sample. Finally, multi-protocol treatments with punishment and reinforcement elements were applied across 18% of this sample.

Treatment Type and Effect Size

Multi-protocol treatments coincided with an average ES of 0.64 (

Excluding Extinction

Regarding FCT, differential reinforcement of alternative behavior, and differential reinforcement of other behavior procedures, several articles explicitly stated they did not use extinction. As such, we felt it prudent to explore these outcomes separately. In short, the number of cases (i.e., sample size) comprising these categories ranged from 2 to 41, with results suggesting substantial variability in ES (range, 0.11–0.94) and corresponding CIs across treatment conditions. The CIs associated with some categories were rather wide (e.g., FCT without extinction), while other categories showcased a higher percentage of CIs crossing zero (e.g., differential reinforcement of alternative behavior without extinction). Recall, the wider the CI the less certain one can be about the outcomes. Relatedly, CIs that cross zero generally indicate greater uncertainty about whether the treatment had a meaningful effect.
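The two CI features discussed above (width and whether the interval crosses zero) can be summarized for a treatment category as in the following Python sketch. The intervals are hypothetical and the function name is an assumption; an interval is counted as crossing zero when zero lies within or on its bounds.

```python
def summarize_intervals(intervals):
    """Return the mean CI width and the percentage of CIs that include zero.

    intervals: list of (lower, upper) 95% CI bounds, one per ES estimate.
    """
    if not intervals:
        raise ValueError("at least one interval is required")
    widths = [upper - lower for lower, upper in intervals]
    crossing = sum(lower <= 0 <= upper for lower, upper in intervals)
    return {
        "mean_width": sum(widths) / len(widths),
        "pct_crossing_zero": 100 * crossing / len(intervals),
    }


# Hypothetical intervals, for illustration only.
example = [(0.2, 0.8), (-0.1, 0.5), (0.4, 0.6), (-0.3, 0.9)]
print(summarize_intervals(example))
```

In this hypothetical example, two of the four intervals include zero, so half of the estimates would warrant additional caution.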

Publication Type and Effect Size

In general, the peer-reviewed literature yielded higher Tau-BC scores than the gray literature (0.67 vs. 0.27), as well as a smaller SE, narrower CIs, and fewer CIs crossing zero. However, these findings should be interpreted with caution due to differing sample sizes, as well as other considerations related to interpretation (see Discussion section for more details).

Phase 5: Single-Case Analysis and Review Framework 2.0

The average SCARF score across all coded articles was 1.6 (out of 4). Peer-reviewed articles coincided with a slightly higher average (

Discussion

Several noteworthy outcomes were uncovered. First, perhaps not surprisingly, the review reiterates existing research outcomes that suggest child participants continue to be featured more often compared to adult participants (e.g., Cox et al., 2021; Gerow et al., 2018). One possible explanation for this outcome may be the emphasis on strict adherence to criteria associated with behavior analytic research (e.g., the seven dimensions of applied behavior analysis; Baer et al., 1968). This strict adherence, although important, may also serve as a bottleneck that impedes research involving adult participants with intellectual and developmental disabilities. For example, stronger research designs (e.g., reversal) may be unsuitable for those displaying severe problem behavior (see Critchfield & Reed, 2017), while concurrent multiple baselines may not be feasible for smaller agencies who may support similar clients but not simultaneously. Potential solutions may include employing naturally occurring reversals (e.g., Cox et al., 2021), leveraging technology (e.g., video recording, application-based research; Beahm et al., 2023; Tassé et al., 2020), or utilizing flexible research designs (e.g., Hagopian, 2020). Another possible contributing factor may be

Another outcome was that most studies were conducted in residential or state environments. This was noteworthy because it could suggest a greater possibility for clinical replication. This is because variables typical of residential settings (e.g., housemate presence) may have been present during study implementation, which could enhance transferability to applied settings. Future research could employ flexible research designs (e.g., consecutive controlled case series; Hagopian, 2020) to explore setting-related variables. It might also be of value to apply planning and evaluation tools that may answer treatment viability questions from an ecological validity lens (e.g., Fahmie et al., 2023).

We observed 42 unique treatment package combinations. This abundance of treatment package combinations is notable because it could suggest creative solutions may have been frequently enacted to support this clinical population. Relatedly, reinforcement-based treatments were the most frequently observed in this review, with researchers applying these approaches diversely. Another noteworthy outcome was that many studies reported treatment data by session number rather than duration. This could limit practical application in urgent cases involving severe problem behavior. Reporting treatment duration may be crucial for informed decision-making, especially in high-risk cases (e.g., eviction risk, potentially lethal near misses). Thus, we recommend future researchers provide additional information around latency to program mastery (e.g., problem behavior reduced by 80% from baseline in 2 months). Offering this information could enable others to conduct a systematic review with the intention of directly comparing treatment package outcomes across behavioral dimensions. In the current review, multi-protocol treatments were associated with, on average, larger ESs compared to single-protocol treatments. This may suggest that multi-protocol treatments are generally more helpful for this population.

Another noteworthy outcome was that punishment elements applied concurrently with reinforcement in a multicomponent package coincided with larger effect sizes, a result consistent with the findings of Ayvaci et al. (2024). However, in general, punishment elements tended to be less often examined compared to reinforcement-based approaches. One reason for the relative dearth of multi-protocol treatments featuring a punishment element could be attributed to the low social validity of such treatments (Blampied & Kahan, 1992). It is also possible that ethics clearance for research featuring some punishment-based treatments (e.g., time out) may be more difficult to obtain. The findings of Pelios et al. (1999) have been cited by many recent authors stating that a reduced dependence on punishment approaches might be associated with improvements in function-based treatments, stemming from an increasing reliance on FA technology. Regardless, it appears large ESs may be generally associated with multi-protocol treatments that featured a punishment element. Therefore, it may be important for future researchers to develop innovative and transparent methods to integrate these elements where applicable, as outlined by the technological principle of behavior analysis. This is partly because a deeper understanding of punishment may be essential for developing highly systematic and effective behavior change, including strategies for enhancing the efficacy of less intrusive procedures and for successfully fading treatment (Lerman & Vorndran, 2002).

Unfortunately, in alignment with existing research (e.g., Harper & Luiselli, 2019), we uncovered a persistent lack of maintenance probes. Maintenance is critical for ensuring that behavior changes are sustained long-term. One possible explanation for its absence may stem from resource limitations or assumptions about skill acquisition or retention. Future studies should prioritize maintenance, potentially using cost-effective methods like video conferencing (e.g., Crowe et al., 2022) or external validity reporting (e.g., Scott et al., 2023).

Finally, publication bias can be identified by setting an inclusion criterion wherein both peer-reviewed and gray literature on a given topic inform the review. Broadening our inclusion criteria (e.g., including peer-reviewed and gray literature) may have enabled us to draw attention to the potential presence of publication bias, as well as corresponding selection biases that may be associated with participant availability, cost, and familiarity (Hansen et al., 2021). For the present review, we opted to apply a simple approach (e.g., average ES) to showcase our consideration of this relatively neglected feature (i.e., publication bias) in SCED quantitative reviews (Brossart et al., 2006). Recall that peer-reviewed articles coincided with a

Review Strengths and Limitations

This review boasted several strengths that addressed limitations described in previous reviews (Cox et al., 2021; Lloyd & Kennedy, 2014; Matson et al., 2012; Muharib & Gregori, 2022; Robertson et al., 2015), and as such may meaningfully contribute to the literature. First, we clearly outlined that an adult is an individual who is 18 years or older (Statistics Canada, 2019). Second, a broader inclusion criterion as it relates to assessment type (e.g., including articles independent of conducting an FA) allowed us to include studies conducted in situations that may better reflect practical work settings (e.g., Busch et al., 2018). As such, the outcomes may be more ecologically valid. Additionally, including

Regarding project limitations, there were challenges accessing the full text of 64 articles during the screening process. To address this, the first author requested these resources through RACER Interlibrary Loan, a platform that allows users to request resources that a university does not own. Through this platform, we were unable to retrieve all possibly relevant articles, in part because this project was not funded. Second, eight articles required further information for us to code. Of the eight corresponding authors we contacted, only two responded with sufficient information to include these articles for coding. Third, sample sizes differed vastly across treatment types, which could limit the generalizability of the findings. We also observed sample size imbalances between peer-reviewed and gray literature. However, this discrepancy may be commonplace in systematic reviews, and best practices indicate that exploring publication bias through comprehensive literature inclusion outweighs the sample size limitations that may coincide with this practice (Paez, 2017).

Finally, we did not register this review as advised by the Preferred Reporting Items for Systematic Reviews and Meta-Analyses guidelines. However, we did follow the guidelines very closely. We recognize the significance of pre-registration and recommend that future studies follow these standards.

There is a notable scarcity of literature on behavioral treatments for adults with intellectual and developmental disabilities compared to children, limiting practitioners’ access to research for effectively supporting adult service users. Researchers are encouraged to also focus on adult participants and collaborate with practitioners to expand the evidence base, while journals may help by adjusting publication criteria to reduce bias toward favorable outcomes. This collaborative effort can help build a stronger foundation for treating problem behavior in adults with intellectual and developmental disabilities.

Supplemental Material

Supplemental material, sj-docx-1-bmo-10.1177_01454455251332545 for Research Patterns in the Treatment of Adults With Problem Behavior and Intellectual and Developmental Disabilities: A Quantitative Systematic Review by Nazurah Khokhar, Alison D. Cox, Asude Ayvaci, Thurka Thillainathan and Sonia Stellato in Behavior Modification

Footnotes

Acknowledgements

The authors wish to thank the following research assistants for their support in completing this project with integrity: Autumn Kozluk, Arslaan Khokhar, Sureya Mamdani, and Ushmeet Bhatti.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Supplemental Material

Supplemental material for this article is available online.
