Abstract

Policy writing assessments are increasingly used as an alternative or supplementary method of assessment within the teaching of politics and policy. Such assessments, often referred to as ‘policy briefs’ or ‘briefing memos’, are typically used to develop writing skills and to encourage active learning of policy-related topics among students. While they can be readily adapted to different teaching and learning contexts, it can be challenging to make appropriate design choices to implement policy writing assessments so that they are able to meet the learning aims of students. This article sets out a heuristic framework, derived from the existing literature on policy writing assessments, to help clarify these choices. It advocates for viewing assessment design as embedded within course design and emphasises the pedagogical and contextual aspects of assessment design. To illustrate how this heuristic framework can help those involved in course design, this article concludes with a reconstruction of the design process for a policy writing assessment in an undergraduate course on Global Energy Politics.

Keywords

Assessments are one of the most important elements of curriculum and course design. They are the main focus of students’ attention during their time in University, as they are the means through which their learning will be evaluated and therefore how students are awarded a qualification. Assessments also serve as arguably the most important cues about what students are meant to be learning. In short, ‘If you want to change student learning then change the methods of assessment’ (Brown, 1997: 7). Over the past two decades there have been significant changes in the methods of assessment that are commonly used in our courses and programmes (Blair and McGinty, 2012). There is less reliance on traditional essays and exams, and a greater willingness to use a variety of assessment methods to support student learning in ways which challenge students and encourage the learning of skills and capabilities that go beyond subject-specific knowledge.

Policy writing assessments (PWAs) are one such method that is widely used in the teaching of Public Administration, and increasingly so in Political Science and International Relations. These assessments, the most common of which are ‘policy briefs’ and ‘briefing memos’, are a flexible alternative to traditional essays that attempt to engage students with policy-oriented topics and develop skills in writing within a policy-oriented environment. In recent years, a literature on this method of assessment has developed within disciplinary journals that highlights the comparative advantages and disadvantages of policy writing assessments over traditional ‘academic writing’ and offers detailed examples of how these have been incorporated into various courses and programmes. While this literature provides useful insights into the design and implementation of policy writing assessments, what is currently lacking is practical guidance for how to make informed choices about how we might design and implement policy writing assessments in our own courses and programmes.

In this article, I set out a heuristic framework for making these design and implementation choices that focuses on the pedagogical and contextual aspects of assessment design. In the first section I review the current literature on policy writing assessments to identify the scope of such assessments, the main rationales for their implementation, and many of the ‘technical’ decisions that instructors may have to make when designing their own assessments. Based on this review and a view of assessment design as embedded within course design, in the second section I set out a heuristic framework composed of five key components: assessment embeddedness, the primary learning aim, the writing scenario, student guidance and support, and ensuring a feedback loop.

Literature review

While policy writing can also be used as a formative assessment or as an un-assessed learning activity, my main focus in this article is on policy writing as a method of summative assessment. This reflects the substantive focus of most of the learning and teaching literature on policy writing within Public Administration, Political Science and International Relations. The most commonly used label for these assessments is the ‘policy brief’, which is often used as a catch-all term for any kind of short, self-contained policy writing, and is associated with, ‘an activity in which most professionals engage frequently: briefing one’s boss or client’ (Wiley, 1991: 216). In the most widely cited article on PWAs, a ‘policy brief’ is a document which is typically produced in a workplace setting in response to requests by individuals or groups, that aims to ‘evaluate policy options on a specific issue for a specific policy audience’ (Boys and Keating, 2009: 202).

This, of course, describes a particular type of policy writing, and many authors spend time attempting to disentangle policy briefs from other forms of writing. Pennock, for instance, distinguishes between ‘briefing memos’, ‘policy briefs’ and ‘position papers’ that may have slightly different substantive focuses and page lengths (2011: 141–143). Briefing memos are described as the shortest form of policy writing, which are intended to inform an audience about a policy problem or situation within one or two pages. This is the sort of document that may be quickly prepared by policy advisors or analysts to give a prominent individual or group some essential information for understanding an issue. Policy briefs are a more in-depth and slightly longer version of briefing memos that may include more substantive analysis of, for instance, the main dimensions of a problem and the stakeholders involved. Position papers go another step further by proposing solutions to those problems and being longer still. In an alternative categorisation, Chagas-Bastos and Burges distinguish between policy briefs and, their main focus, briefing notes. While the former largely overlap with Pennock’s descriptions of both position papers and policy briefs by examining policy problems, solutions and, additionally, programmatic questions about implementation, governance, delivery and finance, the latter are somewhat different to his understanding of ‘briefing memos’. Instead these are longer documents than briefing memos (up to 1000 words) which ‘extend beyond a simple recitation of facts’, take longer to prepare, and ‘offer the big picture for those interested in a question, but needing more detailed knowledge to inform the decision-making process’ (Chagas-Bastos and Burges, 2019: 239, 242).

The distinctions these authors draw help to clarify the kind of PWAs they discuss, even if they don’t perfectly align with each other. Neither set of categorisations constitutes a wholly exhaustive typology of policy writing, nor do the authors set out to provide one. This is largely because the range of documents that can be regarded as forms of ‘policy writing’ is expansive. In principle, it can include almost any document produced by actors involved in some kind of policy process, regardless of whether that process involves legislation, administration or evaluation of policies or other situations. Two of the most widely used guides on writing for policy-oriented audiences, Pennock (2018) and Smith (2019), highlight a range of document types that can be regarded as forms of policy communication: one-page fact sheets; issue briefs; decision memos; position papers or white papers; legislative concept proposals; policy/legislative histories; legislative testimonies; committee reports; speeches; op-eds; and non-traditional formats, such as email and social media.

We can easily add other types of document to this list, such as analytical reports produced for a policy-oriented audience by, for instance, civil society organisations, think tanks, research institutes, government agencies or international organisations. Indeed, a cursory glance at document repositories for most of these actors demonstrates the sheer range of possible policy writing. These documents can vary widely in length and whether they are written for internal or external audiences (Ellison, 2006: 26). They are written for a range of different purposes and include different kinds of content. This can make it quite challenging to be precise about what ‘policy writing’ actually entails and, by extension, what forms ‘policy writing assessments’ can take.

Despite the lack of agreement on what the full range and distinctions between types of policy document may be, there is broad recognition that ‘policy writing’ itself involves a somewhat different style of writing to that typically found in academic research. While academic writing privileges conceptual clarity and the testing and development of theories, policy writing is typically viewed as a more concise and less ‘jargonistic’ style that is orientated towards intelligent non-specialist audiences who want to gain easy access to complex information (Smith, 2019: 17–26; Wilcoxen, 2018). In policy-oriented settings, professionals are often required to write clear and accessible documents on complex topics under time-pressure for various and similarly time-pressured ‘clients’ or audiences (Pennock, 2011: 143–144). Depending on the particular aims of the document, policy writing usually makes extensive use of visual attention points such as tables, charts and maps to present complex information. This is because authors don’t tend to have the space or time to ‘explain in detail the theoretical ideas underlying the apparently neutral choice of relevant data and explanatory narrative’ as they would in an academic essay (Druliolle, 2017: 358). Documents will often feature an ‘executive summary’ and be structured around some variation of a background-problem-analysis-solution format to allow readers to extract essential information quickly.

The development of skills in this form of writing is one of the primary concerns of many advocates of policy writing assessments. This is underpinned by what may be regarded as the first of two primary rationales for incorporating PWAs into courses and programmes – authentic assessment. Authenticity refers to ‘how well the assessment correlates to the sort of things students need to be able to do in their career after leaving the educational institutions’ (Race, 2015: 33). In other words, it is about whether an assessment can help to bridge the gap between the ‘academy’ and the ‘real world’. The validity of such a sharp distinction is highly contestable, especially when we speak about policy-oriented environments (Ellison, 2006: 26; Eriksson, 2014; Jahn, 2017). However, in the context of assessment design it does point to the fact that many students will not work in academia after they complete their studies and will instead have to make connections between their ‘academic skills’ and the tasks they will be expected to do in this ‘real world’ (Trueb, 2013: 138). Broadening the range of writing styles that graduates have experience of may be one way to help them make those connections (Boys and Keating, 2009: 201; Chagas-Bastos and Burges, 2019: 237–238). Policy writing may also be regarded as ‘authentic’ to the extent that it attempts to ‘simulate’ some of the ‘conditions relating to day-to-day performance’ in policy-oriented professions (Race, 2015: 35). This may involve the production of policy writing under time pressure, but usually it is the client-oriented and problem-based aspects of the workplace that policy writing assessments attempt to simulate.

A second rationale that is related to but partly distinct from authenticity concerns the use of PWAs as a ‘learning activity in its own right’ (Druliolle, 2017: 356–357). The process of completing these assessments may entail ‘active learning’ on the part of students (Chagas-Bastos and Burges, 2019: 238). While difficult to define, active learning encompasses a range of techniques that involve ‘learning by doing’, encourage student ownership over their own learning, and may be more suited to the development of higher-order thinking skills that entail deeper forms of learning than more passive approaches (Leston-Bandeira, 2012: 54). Policy writing assessments may be regarded as a form of inquiry-based or problem-based learning that involves individual or collaborative efforts to analyse the kind of ill-structured and ill-defined problems that define the study of policy and politics (Moseley and Connolly, forthcoming; Wilkinson, 2013: 68–71). Such analyses will typically require students to assess and present complex information from multiple sources in an accessible format and may therefore be particularly suited to encouraging ‘synthesis’ and ‘evaluation’, i.e. the highest order skills in Bloom’s taxonomy (Pennock, 2011: 145). In this sense, ‘there is no trade-off between critical thinking and something allegedly more technical such as policy writing’ (Druliolle, 2017: 358).

Advocates of PWAs often draw from both of these rationales as a basis for the development of their own assessments. Indeed, these rationales are often interlinked. Fink (2014: 4–9; 95–97) argues that authentic assessments can constitute a ‘significant learning experience’ in their own right, which not only results in workplace preparation but also encourages more engaged forms of student learning. Beyond the particular labels used to categorise different forms of policy writing, one of the striking things about this literature is the variety of different ways in which policy writing assessments can be designed and the range of different courses in which they can be used. Chagas-Bastos and Burges (2019), for instance, highlight how they implemented their 1000-word briefing notes in both introductory International Relations courses and various other Latin American Politics, International Political Economy and International Security courses of different sizes across multiple levels of undergraduate and postgraduate study. Pennock (2011) details a variety of substantive focuses for policy writing, such as briefing about the history or current domestic politics around free trade agreements or using Lijphart’s democratic systems typology to develop recommendations about the potential impacts of changing the electoral system for a state legislature. Trueb (2013) combines policy writing with a simulation of German foreign policy decision making that involves group and individual writing of papers setting out the position of different departments in the Federal Foreign Office and a final strategy paper that involves a compromise between these departmental positions.

While these examples are useful for helping instructors to develop an understanding of different rationales for adopting PWAs and the variety of possible ways of designing such assessments, it can still be challenging to make good choices about how to implement PWAs in their courses. Should they supplement or replace traditional essays and exams? Should they be individual or group assessments? Under time pressure, or not? What length should the assessment be? What guidance should be given to students, and when? What weight should the assessment have in the final grade assigned to students? What marking criteria should be used to assign grades for this assessment? Such ‘technical’ decisions are necessary and important, but also difficult to navigate. More importantly, an excessive focus on these technical decisions can result in insufficient attention being paid to the pedagogical and contextual aspects of assessment design. These wider aspects are present in all of the existing literature; however, because the literature has focused mainly on advocating for and exemplifying policy writing assessments, it has paid less attention to helping instructors to think through the various choices they have to make when designing their own assessments.

Designing policy writing assessments: A heuristic framework

What follows is a heuristic framework that aims to help instructors make these design choices. This framework draws from the discussions of the rationales and technical aspects of policy writing assessment design in the previous section, and situates this within principles of course design. This involves viewing assessments as one of the constituent parts of course design, which also includes learning aims, learning outcomes, teaching methods, and course content. Course design involves ensuring that these constituent parts ‘work symbiotically’ (Knight, 2002: 165), and prompts us to think about course and assessment design choices in a holistic manner. This framework serves as a heuristic for thinking through these choices in a structured way in order to achieve this goal. It involves five interconnected questions which, rather than focusing on the technical aspects of assessment design, are more contextual and more focused on the pedagogical aims of the assessment and course. Answers to these questions do not lead seamlessly to particular choices about the technical elements of assessment design. However, by thinking through these questions instructors will be in a much better position to make technical decisions for implementing policy writing assessments than if they focus on these from the outset. These questions can also serve as a useful basis for instructors who already use policy writing assessments to think through any changes to these assessments over time.

Assessment embeddedness

Where will the assessment fit within the wider curriculum and our course?

This question is by far the broadest in this framework, but also one of the most crucial. It can be easy to forget the wider learning context when designing assessments; however, we must recognise that in most cases students are being taught and are learning across a range of different courses and completing multiple assessments simultaneously. Developing a working understanding of the wider context of student learning is an important first step to understanding the kinds of skills, experience and perceptions that students possess prior to undertaking PWAs. There are two main elements to this wider context. The first is the prior experience that students may have of policy writing, either in a professional setting or in other courses. In most cases this will simply involve taking account of what other subjects, courses and assessments students are expected to complete during their studies. The second is the level of access to instruction in writing and research skills within students’ programme of study. This may, for instance, involve discipline-specific or more general training on essays and other forms of writing. Both students’ prior experience and access to skills instruction can be useful cues for deciding how to design and support the assessment.

More concretely, this question also implies we should think about assessment design as embedded within course design. This immediately prompts us to think about some of the design decisions identified in the previous section – individual or group assessments, one-off assessments or cumulative, and so on. While these technical decisions are important, what matters most at this stage is to sketch out possible options for where PWAs may ‘fit’, rather than committing to any particular option. When doing this, we should think about how policy writing could connect with other elements of course design such as the development of teaching activities, intended learning outcomes and the subject matter itself. What these connections look like might be quite obvious in courses which focus on policymaking, international negotiations or those based around simulations. However, connections can readily be made to any aspect of a course that relates to political contestation (for example, between civil society organisations, businesses or positions that are often advocated by such organisations) or any attempt to apply theories and concepts to the analysis of a policy, polity or political situation. Most importantly, there is no particular requirement to precisely model a real-world situation, as will be discussed below. The key point is that instructors should consider the

Primary learning aim

What is the primary learning aim of the assessment?

This question prompts us to clarify what we want students to learn in the process of doing these assessments from the outset. A good starting point for this is to consider the two rationales within the existing policy writing literature – authentic assessment and active learning – and which of them we think should take priority. Are we more concerned with students practising ‘writing skills’ or ‘higher order thinking skills’? Do we want to ‘simulate’ particular features of policy-oriented writing environments such as time-pressure? Of course, we may see value in both rationales and want to incorporate elements of both. However, choosing to prioritise one over the other will help us make other more ‘technical’ design choices. For instance, if our main focus is on policy writing skills then we may want to assign multiple such assessments so that students have the opportunity to practise these skills and improve on the basis of the feedback they receive for each assessment. If our focus is on the sort of higher-order thinking skills that active learning can encourage, then perhaps we will only assign one policy writing assessment and seek to embed this within teaching activities in class, so students have multiple opportunities to develop these skills.

What this question also prompts us to clarify is what our assessments are actually intended to assess. Are there particular intended learning outcomes whose achievement these assessments can be used to evaluate? Can we use these assessments to help refine our intended learning outcomes? Reflecting on the fit between assessments and course design, do we want to use these assessments to help support students engaging with particular areas of knowledge? This pushes us further into the first question of assessment embeddedness, but with hopefully a clearer sense of what our primary learning aims are. This can help us to decide on the criteria we use to evaluate student learning. In many cases, our criteria will include evaluation of many different learning aims. Boys and Keating (2009: 204), for instance, use the criteria derived from essay marking to evaluate the quality of research and more policy writing specific criteria around accessible and professional presentation according to a four-part structure they specify. They also evaluate the strengths and weaknesses of policy options on ‘pragmatic’ or ‘normative’ grounds. Both Pennock (2011) and Chagas-Bastos and Burges (2019: 243–244) use similar criteria, but also emphasise ‘content’, understood as testing knowledge about the subject matter covered in a course. Being clear about our primary learning aims helps us to ensure that our assessments are appropriate for achieving those aims and that we are transparent with students about how their assessments will be graded (Race, 2015: 33–34).

The writing scenario

What policy writing scenario would best serve our primary learning aims and help to explain the assessment to students?

When designing PWAs, we have to contend with the fact that our students are likely to have different assumptions about what policy-oriented environments are like and therefore about what policy writing involves. These assumptions might be detailed and nuanced, they might be based on theories and concepts they have previously learned, or they might be limited and caricatured. This is often the case regardless of whether students have experience of policy-oriented environments or not because, as highlighted in the previous section, policy writing encompasses a wide range of possible types of document produced in multiple different environments. A report produced by the National Audit Office may look quite different to a briefing memo produced within the Emerging Security Challenges division of NATO. It is important to try to develop a shared understanding among our students about the kind of environment that the assessment is meant to exist within. In other words, it is useful to create a ‘writing scenario’.

Developing a writing scenario can be viewed as a creative act of political world building. While all scenarios should be believable, they do not necessarily have to be wholly grounded in reality. One of the advantages of hypothetical situations is that they can help to prevent students from relying too heavily on documents similar to what they are expected to produce for the assessment (Trueb, 2013: 138). Perhaps more importantly, this is where we have an opportunity to truly embed the assessment within the overall aims of the course by tailoring it to the subject matter and learning aims that we want to prioritise. Our goal when developing a scenario should be to translate these requirements in a manner that helps to build shared assumptions about policy writing among students and to clarify what they are expected to do. Some of the key elements we should seek to clarify through the scenario are the intended (fictional) audience for the assessment and the format, goals and content that this audience would expect. There are also opportunities for instructors to ‘reveal’ parts of this scenario over multiple weeks if that helps to create a sense of realism, or even to co-create elements of the scenario with the students themselves.

Student guidance and support

What kinds of guidance and support will be provided to students?

Answers to the previous questions should already give some indication of the kinds of support students may require. We should already know what prior experience students may have of policy writing and what opportunities they have for instruction in relevant writing and research skills. Moreover, the writing scenario is itself a form of guidance that can help to clarify students’ expectations about the assessment. The reason to ask this question separately is that it prompts us to read across our other answers to decide on the best ways we can sequence the guidance and support we make available, and consider whether it strikes the right balance between providing students with the support they need and leaving them space to grapple with the terms of the assessment. There are, of course, no easy answers to this question, and this is the element of assessment design that is most susceptible to revision over time.

The scholarship on policy writing offers various examples of how to guide and support students. In all cases, some form of instructions will be given to students about the assessment, and usually this contains some guidance on what information should be included in the assessment and the style of writing and presentation that are expected or allowed (e.g. bullet points, visual attention points, no abbreviations, and so on). While this guidance can be developed for a specific PWA, it is also worthwhile consulting the plethora of online and offline resources on policy writing and data communication that are readily available (see Appendix 1). There are different views within the policy writing literature about what forms of guidance to issue to students. While some outline a particular structure for students to follow (Boys and Keating, 2009; Chagas-Bastos and Burges, 2019) others leave this in the hands of the students (Druliolle, 2017). There are advantages and disadvantages to both approaches. While the former can help to clarify students’ expectations and be more readily connected to marking criteria, it can also be rather constraining. Although the latter may allow for more creativity, it can also lead to students being confused about how to approach the task.

This is why it is important to think in terms of support, rather than simply in terms of guidance. Support can take many different forms, such as opportunities to ask questions and discuss the assessment in class or on virtual learning environments. However, a more productive approach may be to focus on formative feedback and the ways in which classroom and virtual learning environment activities can help to build greater understanding of assessment requirements. Deeley and Bovill (2015) identify two particular approaches which are worthy of mention. The first is to consider incorporating some element of self-review or peer-review of draft assessments. The second is to co-design assessment marking criteria with students. They find that these can be particularly effective approaches to improving assessment literacy, while also empowering students to take greater responsibility for their learning.

Ensuring a feedback loop

How will the implementation of the assessment be evaluated?

Everyone who studies policy making is familiar with the policy stage/cycle heuristic, which ‘ends’ with some element of policy evaluation and appraisal (or termination). What is ‘true’ for policymaking is also true for course and assessment design – there should be some means of examining what worked, what didn’t, and where improvements can be made. At a minimum, I would suggest that instructors should keep a record of any questions students ask during the preparation of their assessments. This can help us to identify the most common aspects of an assessment that are unclear or unfamiliar, so these can be addressed in future iterations of the course. Similarly, we can record what students did and did not do well in their submitted assessments. However, a more active strategy is to seek student feedback on our assessments through end-of-course evaluations, questionnaires or other methods. Feedback can be sought on various aspects of the assessment such as student perceptions of support, whether they consider PWAs to be ‘authentic’ or not, and whether they found this a more engaging learning experience than others they have had on the same course or programme. However, it can also be useful to complement this with strategies for ascertaining what learning has taken place. This could include examining whether submitted assessments improve over time, whether student learning of subject material improves and whether students can successfully demonstrate the achievement of particular skills or learning outcomes.

Example: Brief the Minister

Taken together, these questions amount to a heuristic framework for thinking through some of the ‘big picture’ aspects of policy writing assessments prior to the more ‘technical’ aspects of assessment and course design. It is designed to aid thinking, rather than being an overly prescriptive checklist. To help illustrate this, the remainder of this article focuses on an example of how it can be applied, based on a version of my own thought process when designing a course on Global Energy Politics that includes a ‘policy brief’ assessment, structured by the framework. This is an optional course offered to students in the third and fourth years of the University of Glasgow’s undergraduate Social Science programme. It is primarily aimed at Politics and International Relations students; however, students taking other subjects in the School – Central and East European Studies, Economic and Social History, Social and Public Policy and Sociology – are also able to enrol on this course. The course provides students with an introduction to some of the (international) political issues involved in addressing the so-called energy trilemma: ensuring secure, sustainable and affordable access to energy supplies for all. While it is primarily situated within International Political Economy, it incorporates insights from Political Geography and Policy Analysis, as much of the published research on energy sits across multiple (sub-) disciplines.

Assessment embeddedness

Students in the School of Social and Political Sciences have few opportunities to undertake policy writing. While some of my colleagues in Central and East European Studies assign policy briefs and situation reports as assessments, these are the exception. This meant there were few ‘templates’ to base my own assessment on and that a policy writing assessment could help to address an important gap within the curriculum. This was something I was keen to do when designing my course, as I previously worked in International Public Administration and believed that it would be beneficial for students to gain some experience of policy writing as part of their University education. However, in the process of reviewing the existing curriculum it also became clear that students had few opportunities in the School to develop a basic level of knowledge and understanding about energy as an issue area. While a few International Relations and Central and East European Studies courses touched on issues such as international development and European dependence on Russian gas, this didn’t involve any sustained engagement with energy politics and policymaking.

This meant that I wanted to find a way of including policy writing in my course without reducing the time students would have to develop their understanding of key concepts and other course material. I wanted to ensure that any policy writing assessment(s) I designed could directly connect with the seminar topics for the course and be embedded within teaching activities in the seminars, although at this stage I wasn’t certain how I would do this. With regard to the assessment regime, I also had to adhere to departmental norms of using two or three summative assessments within courses and ensuring that assessments allow for recoverability, i.e. students would have opportunities to improve their grade if they didn’t do well in their first assessment. In most cases, colleagues use either two academic essays or an essay and exam format, although many colleagues also use other forms of assessment such as critical literature reviews and reflective diaries. Student participation is also assessed on several courses, to a maximum of 10% of the final grade, and usually requires students to deliver group presentations or to complete other tasks over the course of a semester. Taking this into account, I developed two options for how I might structure the assessment regime for the course. The first was to assign two policy writing assessments – one short brief followed by a longer report – thereby giving students multiple opportunities to ‘practise’ policy writing. The second was to confine policy writing to a single policy brief and to complement this with an academic essay. Depending on the sequencing of these two assessments, I could either use the essay to allow students to ‘recover’ their grade using a more familiar format, or I could use the policy brief as a means of developing writing skills after students had become more familiar with the course content.

Primary learning aim

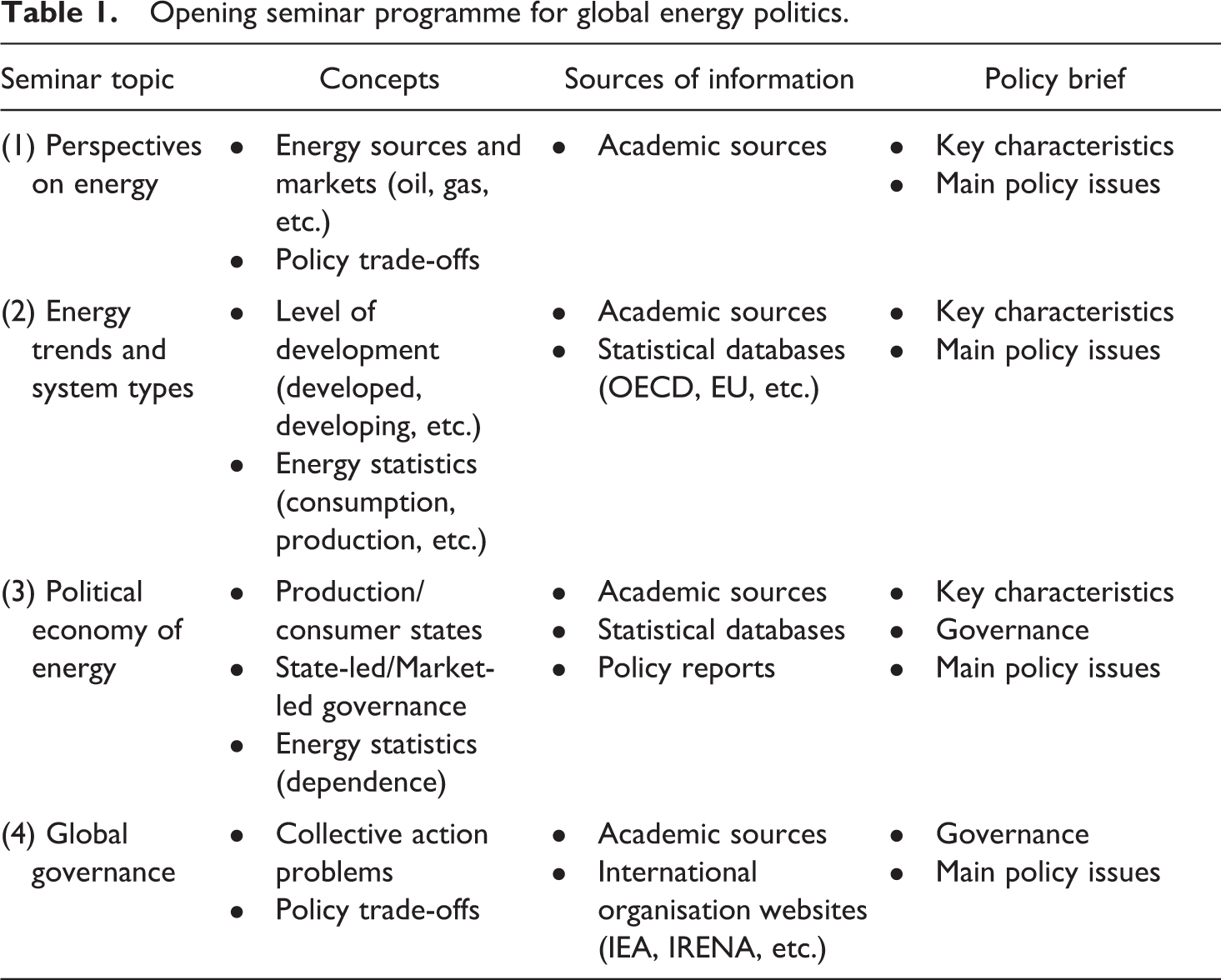

While the initial idea for this assessment came from my own lack of opportunities as a student to practise policy writing, I was clear from the outset that my primary aim was not to simulate the craft or setting of policy writing. This wasn’t the purpose of the course, whereas if I had been teaching a course on policy analysis or a related topic then I would have actively considered this. Instead, I was more concerned with students engaging in active learning about the substantive political issues involved in global energy. When I began designing the seminar programme for the course, I realised that the opening section of the course (see Table 1) could form the basis of a policy writing assessment focused on applying key concepts and interpreting sources of data.

Table 1. Opening seminar programme for Global Energy Politics.

Based on this answer, I decided to use a policy brief as the first assessment. The question remained, however, whether to assign a policy report or an essay as the second assessment. I eventually decided to use an essay, since my primary learning aim was for students to engage in active learning of the subject matter rather than practising policy writing specifically. Because of this, and because I wanted to encourage students to ‘practise’ using key concepts and data sources, I decided to give less weight to the policy brief (25%) than the essay (65%), while including a participation grade for the course (10%). This also meant that I could base my marking criteria for the assessment primarily on the application of conceptual knowledge and appropriate use of statistical information, with the important caveat that the writing style and presentation should focus on accessible but analytical writing.

The writing scenario

With my primary learning aim clarified, I required a writing scenario in which those skills would be clearly emphasised, and which was readily intelligible to students studying Politics and International Relations in Glasgow. This required me to think carefully about which aspects of the subject material I wanted students to ‘practise’. The opening seminars would cover several areas – potential trade-offs between different energy policy goals; the impact of economic development on energy policy concerns; distinctions between producer and consumer states, and state-led and market-led approaches to the governance of energy; the role of international organisations; and, crucially, accessing and interpreting energy statistics. I decided that it would be beneficial for students to become familiar with some basic energy statistics about the ‘energy mix’, the ‘electricity mix’ and import/export dependence, since almost all published research on energy politics and policy makes extensive use of this kind of information. I also realised that if the assessment were to focus on national energy systems, this could create opportunities for students to select among the areas listed above, rather than my being too prescriptive about what ‘must’ be included. With this decided, I needed to create a scenario that helped to communicate the focus of the assessment to students.

The scenario that I used was an imagined future in which the city of Glasgow has seceded from Scotland and the United Kingdom, following a clear and unambiguous referendum result. In order to demonstrate that the new Government represents all citizens of the fledgling city state, the First Minister has appointed a cabinet that draws from across the political spectrum. In this scenario, perhaps revealing my own delusions of grandeur, I have been appointed as the Secretary of State for Energy. This is despite my having no real knowledge, understanding or experience of the energy sector. However, like any good Secretary of State, I have my own team of experts and advisers to ensure that I am fully prepared for my public duties, and for the meetings I am going to have with energy companies, interest groups and officials from foreign nations with which Glasgow will be keen to establish diplomatic ties. The students are that team of experts. Their task is to individually prepare policy briefs on a national energy system of their choice – its key characteristics, how it is governed and what its main energy policy issues are – to bring me up to speed. Policy briefs have to be ‘accessible but analytical’ to provide an interested non-expert with the essential information they need to understand how the energy policies of different states differ from each other.

While this writing scenario is undoubtedly contrived and ridiculous, it serves the function of establishing clear parameters for the assessment. The audience is clearly stated as an intelligent but uninformed politician. The task is to select the most appropriate information and to present it in an accessible manner to that audience. The three areas that the briefing focuses on all draw from the material covered in the first few weeks of seminars for the course, and students were expected to use this as a means of helping to select the most appropriate information to include. The task does not require students to identify policy options, examine their feasibility or advocate for a particular solution. Instead, they are simply expected to ‘brief the Minister’.

Student guidance and support

In my answers to the earlier questions, I had already decided to integrate the policy brief into the teaching activities within the seminars. However, I had yet to decide what this would look like. While I did not want to prioritise ‘writing skills’, I was aware that students would be unfamiliar with policy writing conventions. I therefore wanted to make resources on this available and to find ways to shape in-class activities around both the subject material and the requirements of the assessment. As a first step, I introduced the writing scenario in the first seminar of the course and included a version of this in the course handbook. I complemented this with ‘how to do policy writing’ guides on the Virtual Learning Environment (VLE) for the course. As a second step, I developed various in-class activities which sought to connect the course material to the policy writing assessment. Space precludes a discussion of all of the activities in detail, but there are three that I can briefly mention.

First, I developed an exercise in which groups of students collaborate to ‘brief the Minister’ about one of four ‘types’ of national energy system that are set out in the required reading for the second seminar (Bradshaw, 2014). They are asked to deliver a 3-minute overview of their system type, emphasising its most common characteristics (in terms of both politics and energy consumption), and to write their key points on the whiteboard. This exercise mirrors some aspects of policy writing and helps to open up discussions about both the similarities and differences between system ‘types’ (conceptual knowledge) and the advantages and disadvantages of different ways of presenting complex information (assessment knowledge). This helps to start students thinking about two key aspects of the assessment early on. Second, in the third seminar I ask students to search online databases (OECD, Eurostat, etc.) for energy statistics on import dependence in various national energy systems, as part of the discussion of producer and consumer states. This allows us to discuss what these statistics mean, different ways of interpreting them, and the different places students can get this information from. Third, I always leave time at the end of the fourth seminar for students to ask questions and to write questions on post-it notes, which I read after class and respond to in writing, along with any other clarifications I think are necessary.

Ensuring a feedback loop

The third of these teaching activities indicates one of the ways I incorporated a feedback loop into the course, in that case for the purposes of supporting students while they are in the process of writing (or thinking about writing) the assessment. I also used questionnaires, both after the assessments had been marked and returned and at the end of the course, to gather students’ experiences of the assessment. Since running this course for the first time in 2017/18, I have made several changes to the course in response to student feedback. Many of the changes have been to the course content, for instance by spending more time on different facets of energy transitions, and most of these changes have had a minimal impact on the assessment. However, there are two that are worth highlighting.

First, a significant minority of students have expressed disappointment that they don’t have the opportunity to do additional policy writing assessments in the course. This is usually because they would have liked to apply what they had learned from the feedback they received for the first assessment and to get further experience of this writing format before entering the workplace. As a result, I’ve redesigned the assessment for the course so that students can choose to do either an essay or a policy report as their second assessment. The report is longer and requires students to develop their own topic and intended audience based around the substantive areas covered in the latter half of the course.

Second, students asked for more examples of policy writing to be made available on the VLE. This was an easy request to fulfil, because countless policy briefs are produced by government departments, businesses, NGOs and think tanks on energy-related topics. However, it also prompted me to think about other ways to help students become familiar with policy writing. One is to make greater use of policy briefs and reports as required reading for seminars so that students are directly exposed to this form of writing. In the past academic year, I assigned a report on recent changes in UK energy policy that makes use of energy statistics and explicitly discusses policy trade-offs and changes in how the UK’s energy system is governed. Another, which I plan to implement this year, takes Chagas-Bastos and Burges’ (2019: 240) advice on providing ‘a briefing note for students on how to write a briefing note’ one step further. It will involve students and me collectively creating a guidance document, in the format of a briefing memo, over the course of the first few seminars. In both cases, the aim is to increase familiarity with the type of writing and presentation.

Conclusion

My aim in this article was to set out a heuristic framework for making choices about how to design and implement policy writing assessments. The five elements of the framework – assessment embeddedness, primary learning aims, writing scenarios, student guidance and support, and ensuring a feedback loop – can, in my view, help to structure our thinking and make better choices. What the framework cannot do is make those choices for us. One of the most challenging features of policy writing assessments is that they can take many different forms and be used for many different purposes. While this might not make it easy to transpose these assessments between courses, it does mean that there is space for considerable creativity in how we design these assessments. This is, perhaps, one of the true strengths of policy writing assessments, and one of the better arguments for making greater use of them in our courses.

Appendix 1

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.