Abstract

As smart grids become increasingly interconnected and data-centric, they are susceptible to DDoS attacks, false data injection, and probing assaults. Traditional Intrusion Detection Systems (IDS) often struggle to identify these emerging threats due to the high-dimensional, dynamic, and imbalanced data they generate. To tackle these challenges, we present a novel hybrid deep learning model that combines Spatial-Temporal Graph Neural Networks (ST-GNNs) and Multi-Scale Transformers, integrated with an Adaptive Attention-Based Feature Fusion (AAFF) module. This approach enhances detection accuracy by revealing the intricate spatial and temporal correlations within network traffic data. The AAFF module dynamically adapts by prioritising the most relevant features, facilitating the swift detection of fraudulent activities. To enhance the model's ability to cope with atypical and novel threats, we employ contrastive self-supervised learning (CSSL), which boosts performance on imbalanced datasets. We incorporate dynamic graph generation, temporal node embedding, and Meta-Learning techniques to ensure the model remains flexible and adaptable to emerging attack patterns. A federated learning system is utilised for distributed detection across multiple grid locations, enhancing scalability and privacy. To enhance robustness, we employ Conditional Generative Adversarial Networks (CGANs) for data augmentation, allowing the model to generalise to previously unknown attack scenarios. Furthermore, we employ online active learning, enabling the model to respond to new data and attacks in real-time, ensuring prompt detection and response. We deploy the model on grid edge devices, minimising detection latency and facilitating quicker attack response times. When evaluated on well-known security datasets, such as CIC-DDoS2019, CIC-IDS2018, and CIC-DoS2017, the model achieves a detection accuracy of 98.42%, surpassing previous methods and significantly reducing false positives. 
The proposed strategy integrates spatial and temporal threat analysis, dynamic feature refinement, and adaptive detection methods to deliver a reliable and scalable solution for enhancing the cybersecurity of smart grids.

Keywords

Introduction

Smart grids are revolutionising the way electricity is generated, transmitted, and utilised. Unlike traditional electricity grids, which operate primarily as one-way systems, smart grids facilitate two-way communication between utilities and consumers (AlHaddad et al., 2023). Advanced technologies, including smart meters, sensors, and communication networks, enable real-time monitoring, automation, and grid control. Adjusting energy distribution based on consumption patterns, renewable energy availability, and grid conditions enhances efficiency. Additionally, smart grids improve reliability and simplify the integration of renewable energy sources, such as solar and wind, making them crucial for a more sustainable energy future (Alam et al., 2024). However, their reliance on digital technologies has made these grids increasingly vulnerable to intrusions. The many access points provided by numerous devices and communication channels create opportunities for hostile entities to gain entry. Attacks such as false data injection, distributed denial-of-service (DDoS) attacks (Aljohani et al., 2024), and probing can compromise critical data, disrupt grid operations, and cause significant financial and physical damage. Because the grid is interconnected, an attack on one part of the system can have widespread consequences, endangering the network's stability (Basheer and Ranjana, 2025).

Communication systems in smart grids are particularly susceptible to these attacks. The continuous data flow among devices, users, and utilities complicates the differentiation between legitimate and harmful activity. Furthermore, the grid's extensive and dynamic datasets hinder standard IDS from providing accurate real-time security monitoring (Berghout et al., 2022). The imbalance in data, which favours regular traffic over malicious activity, further complicates detection, increasing the risk of overlooking unique or rare attack patterns. Current IDS systems, including those based on signature detection and anomaly detection, have limitations in addressing these challenges. Signature-based approaches depend on predefined patterns, making it difficult to recognise new threats (Cui et al., 2020).

Anomaly-based systems can detect deviations from normal behaviours, yet they often suffer from high false-positive rates, especially in the dynamic environments of smart grids. Given the volume and complexity of smart grid data, traditional machine learning methods are also constrained in their effectiveness. Considering these limitations, the importance of an effective IDS in smart grids is evident (Diaba and Elmusrati, 2023). An IDS plays a crucial role in maintaining the grid's integrity and security by detecting and mitigating intrusions before they can cause substantial damage. This significance grows as the grid integrates more renewable energy sources and distributed energy resources (DERs), all of which demand a safe, stable, and reliable grid. The success of smart grids hinges on their ability to function seamlessly, making cybersecurity essential for their ongoing development (Ding et al., 2020).

This research addresses these issues by introducing a hybrid deep learning model that integrates ST-GNNs and Multi-Scale Transformers, enhanced by an Adaptive Attention-Based Feature Fusion module. This method captures spatial and temporal correlations in network data, improving detection accuracy and efficiency. Additionally, the model utilises Contrastive Self-Supervised Learning to handle imbalanced datasets and identify unusual or novel attacks (El-Toukhy et al., 2024). It also incorporates federated learning for scalability and privacy, as well as Conditional Generative Adversarial Networks for data augmentation, thereby enhancing generalisation to new attack scenarios. The proposed model addresses critical challenges in smart grid cybersecurity by increasing detection accuracy, scalability, and adaptability, offering a robust response to the ever-evolving threat landscape of modern power systems (Gokulraj and Venkatramanan, 2024).

The novelty of the proposed approach lies in the integration of Spatial-Temporal Graph Neural Networks (ST-GNNs) and Multi-Scale Transformers with an Adaptive Attention-Based Feature Fusion (AAFF) mechanism. This hybrid model uniquely combines graph-based learning for capturing spatial dependencies and transformer-based models for detecting temporal relationships, thereby enhancing its ability to identify dynamic, emerging cyber threats in smart grids. Unlike existing methods, which rely solely on either spatial or temporal features, our model simultaneously leverages both to improve detection accuracy and robustness against diverse attack scenarios (Gupta et al., 2022).

The complete article is structured as follows: Section ‘Related works’ discusses similar smart grid intrusion detection efforts. Section ‘Materials and methods’ focuses on the proposed model and its components. Section ‘Experimental results and discussion’ summarises the experimental findings and compares the model's performance to existing approaches. Section ‘Conclusion and future works’ concludes with a summary of findings and recommendations for future research directions.

Related works

Over the years, various approaches have been proposed to enhance the cybersecurity of smart grids, ranging from traditional machine learning methods to advanced deep learning techniques. These approaches focus on detecting network intrusions, mitigating attacks, and ensuring the integrity and availability of critical grid infrastructure. In this section, we review the most relevant studies that have contributed to the field, highlighting the strengths and limitations of existing methods and setting the stage for our proposed hybrid deep learning model.

IDS for smart grids

IDSs are essential for protecting smart grids, as they continuously scan the network for indications of malicious activity. Advanced intrusion detection systems have become increasingly crucial, considering the complex and ever-evolving landscape of smart grid systems, which encompass components such as power generation, distribution, and communication networks. The rising prevalence of IoT devices and the expanding attack surface make these systems particularly vulnerable to cyber threats, including network intrusions and malicious data injections.

AlHaddad et al. (2023) significantly advanced this field by introducing an ensemble model that integrates hybrid deep learning methodologies for intrusion detection in smart grid networks. Their model combines machine learning algorithms, enabling the system to precisely identify attacks. The effectiveness of their approach hinges on integrating various learning models, such as neural networks and decision trees, which enhance the system's predictive capabilities by recognising different attack behaviour patterns. This hybrid methodology enhances the system's adaptability and scalability, enabling it to address the cyber threats that modern smart grids encounter effectively. Alam et al. (2024) conducted research in a similar direction, exploring the application of machine learning to identify and prevent cyberattacks in smart grids. They emphasise real-time cybersecurity applications, which are crucial for preventing or mitigating the impact of cyberattacks once detected. By training machine learning models, such as decision trees, random forests, and support vector machines (SVMs), on historical data, the system's response to new threats can be improved by detecting attack patterns. Given the speed and complexity of cyberattacks, Alam and his colleagues assert that intrusion detection systems should be both anticipatory and predictive.

Basheer and Ranjana (2025) developed a deep learning framework for IDS based on graph convolutional networks. Smart grids often utilise a graph-based structure, with nodes representing various entities, such as power plants and substations, and edges representing communication links. GCNs are particularly well-suited for capturing the intricate relationships within these grid topologies, enabling more effective anomaly detection by leveraging the spatial dependencies inherent in these systems. Compared to traditional machine learning algorithms, GCN-based IDS can identify attacks with greater precision and context by focusing on these relationships.

Berghout et al. (2022) comprehensively analysed machine learning methods for cybersecurity in smart grids. Their study summarises various techniques, from supervised to unsupervised learning, and discusses how these methods impact IDS. They highlight the capabilities of these techniques in detecting a wide range of attacks, including data breaches, DDoS attacks, and internal threats. Furthermore, their review addresses the challenge of smart grids’ large-scale, distributed nature, which demands efficient and scalable IDS systems. The study emphasises that future IDS must be adaptable and able to learn from previously unseen attacks to respond effectively to the ever-evolving threat landscape.

Identification and detection of attacks in smart grids

One of the biggest obstacles in smart grid security is identifying attacks. Given the increasing sophistication of cyber-attacks, such as DDoS, False Data Injection (FDI), and Advanced Persistent Threats (APTs), it is essential to establish real-time, reliable attack detection systems. These attacks threaten the integrity of the grid's operations and may lead to substantial financial, safety, and environmental consequences. Therefore, the development of advanced attack detection systems is essential.

Diaba and Elmusrati (2023) presented a deep learning algorithm to identify DDoS attacks in smart grids. DDoS attacks, which overwhelm network resources with excessive traffic, pose a significant threat to the cybersecurity of smart grids. The researchers presented a deep learning model that distinguishes between normal and malicious traffic, enabling immediate detection and response to attacks. Their research emphasises how crucial it is to identify DDoS attacks efficiently, especially in large systems that handle significant data volumes. Gupta and Bhatia (2020) created a hybrid optimisation model that combines deep learning techniques with optimisation algorithms to find outliers. Their method makes it easier to notice unusual behaviours, which is essential for identifying attack patterns that have not been seen before. Anomaly detection acts as an early warning system: it identifies unusual patterns in a system's behaviour that may indicate an impending attack. The authors enhanced their system's ability to locate such outliers using genetic algorithms and particle swarm optimisation, thereby reducing false positives and negatives.

Kaur and Batth (2024) developed a novel method for detecting intrusions by integrating deep learning and machine learning models. Their hybrid methodology considers the intricate dynamics of smart grid security, wherein attack patterns may evolve and vary across different grid segments. Hybrid models exhibit enhanced flexibility and reliability by integrating the optimal characteristics of deep learning and machine learning. Deep learning identifies intricate patterns, while machine learning generates accurate and efficient predictions. Utilising a combination of algorithms, the model can more effectively address various types of attacks and environmental alterations.

Li et al. (2023) demonstrated an adaptive deep learning model that enables smart grids to detect intrusions more efficiently. Their model utilises machine learning classifiers that incorporate deep learning techniques to address emerging threats more effectively. The model's adaptive features continually improve by incorporating new attack data, making it a more flexible and faster tool for finding new threats. It is becoming increasingly important for systems to identify both known attacks and new, unexpected threats. Their research indicates that the future of Intrusion Detection Systems in smart grids hinges on developing systems that can learn from new data without requiring retraining or human assistance.

Hybrid and advanced techniques for smart grid security

As smart grids continue to evolve, the complexity of securing them will also increase. Traditional intrusion detection techniques frequently struggle to manage the scale, diversity, and complexity of modern attacks. Hybrid and advanced deep learning models have demonstrated great promise in addressing these challenges. These models offer improved detection accuracy and enhanced computational efficiency. These models incorporate a wide range of algorithms, each offering its own distinct set of advantages.

Aljohani et al. (2024) proposed a deep learning-based intrusion detection system for smart grids that integrates neural networks with advanced learning techniques to enhance the effectiveness of attack detection. The authors emphasised that combining deep learning models and conventional detection methods provides a more adaptable and scalable solution. This hybrid methodology is particularly advantageous for smart grids, which operate in a dynamic environment and continually face the emergence of new attack vectors. Their system can handle the vast data generated by grid sensors while accurately detecting anomalies and attacks.

Ruan et al. (2023) investigated various deep learning techniques utilised in smart grid cybersecurity and assessed the prospective ramifications of these technologies. Their review highlighted the growing importance of hybrid models, particularly in managing the vast data generated by smart grid systems. Hybrid models can significantly improve the accuracy of detection and the scalability of IDS. Hybrid systems can more efficiently process varied data types by amalgamating different deep learning methodologies, such as CNNs and RNNs, thus improving the overall efficacy of IDS.

A deep learning model using dilated GRU (Gated Recurrent Unit) networks for anomaly detection in smart grids was presented by Ravinder and Kulkarni (2025). GRUs are a type of RNN that efficiently capture temporal dependencies when analysing time-series data, including that produced by smart grid communications. This makes them well suited to spotting irregularities in real time, where the ordering of events is crucial. Their study demonstrates how deep learning models, such as GRUs, can be tailored for specific applications, including tracking temporal patterns in smart grid communications, thereby providing a robust defence against emerging security risks (Hu et al., 2022).

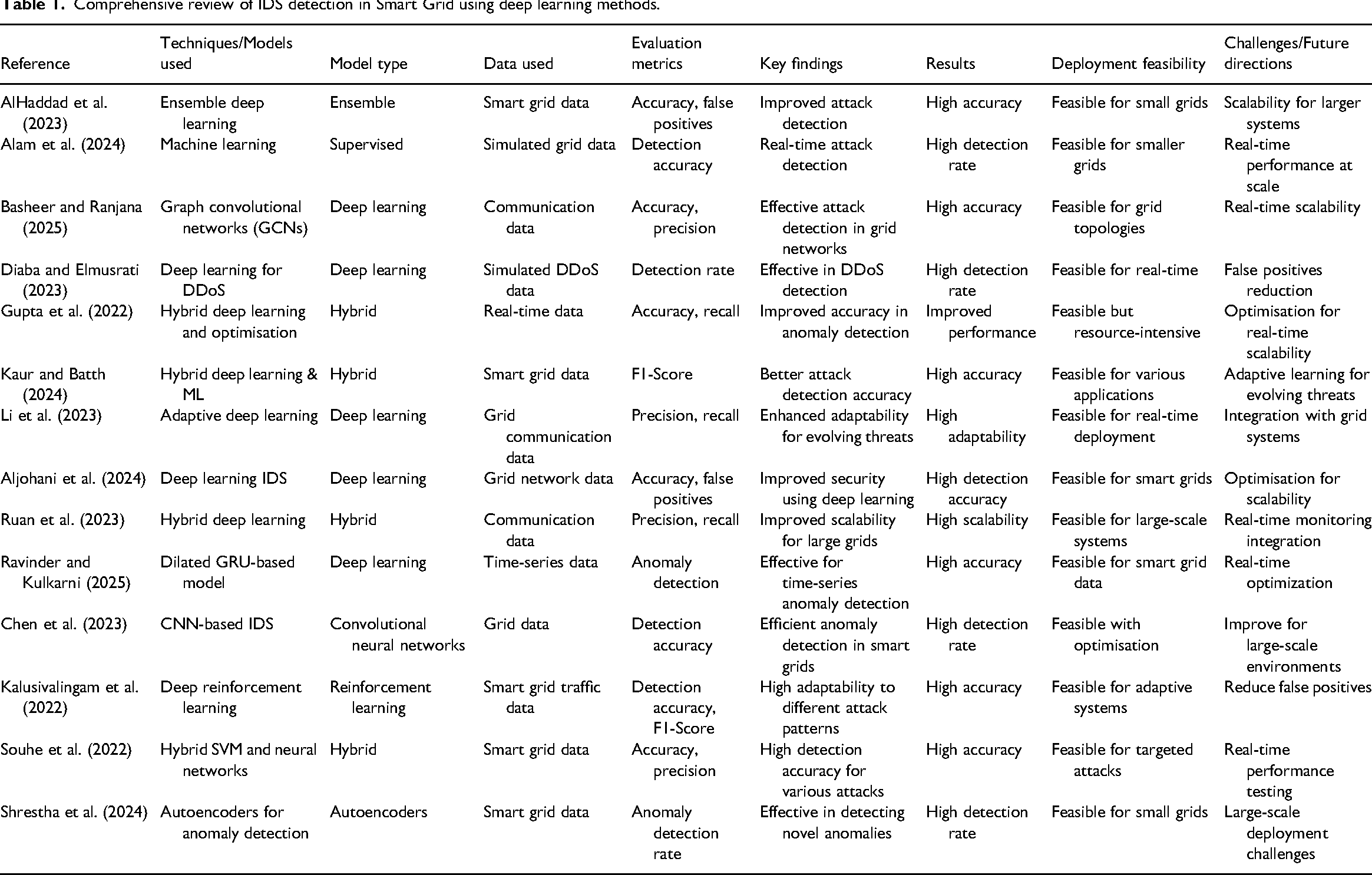

The combination of deep learning and hybrid methodologies is transforming cybersecurity in smart grids. These developments enable more flexible, scalable, and real-time solutions to the growing cyber threats that contemporary smart grids face, while improving the precision and efficacy of attack detection (Kaur and Batth, 2024). Table 1 presents a comprehensive review of IDS detection in Smart Grid using deep learning methods.

Comprehensive review of IDS detection in Smart Grid using deep learning methods.

Materials and methods

This section describes the approach used to create and assess the suggested hybrid deep learning model for smart grid cybersecurity. In addition to methods like CSSL, federated learning, and data augmentation, it describes the model's architecture, including its fundamental elements: ST-GNNs, multi-scale transformers, and the AAFF module. To provide a comprehensive understanding of the experimental framework, the datasets used, data preprocessing stages, model training procedures, and evaluation measures are also discussed.

Proposed hybrid deep learning model for smart grid cybersecurity

The proposed approach is a combined deep learning framework designed to address the evolving, uneven, and complex challenges posed by security issues in smart grids. It integrates ST-GNNs, multi-scale transformers, and an adaptive attention-based feature fusion module, along with advanced techniques such as contrastive self-supervised learning, dynamic graph generation, meta-learning, and federated learning. The architecture of this system is carefully designed to detect intricate attack patterns, such as DDoS (Distributed Denial of Service) attacks, False Data Injection (FDI) attacks, and probing attacks, to which smart grids are increasingly vulnerable (Karimipour et al., 2019).

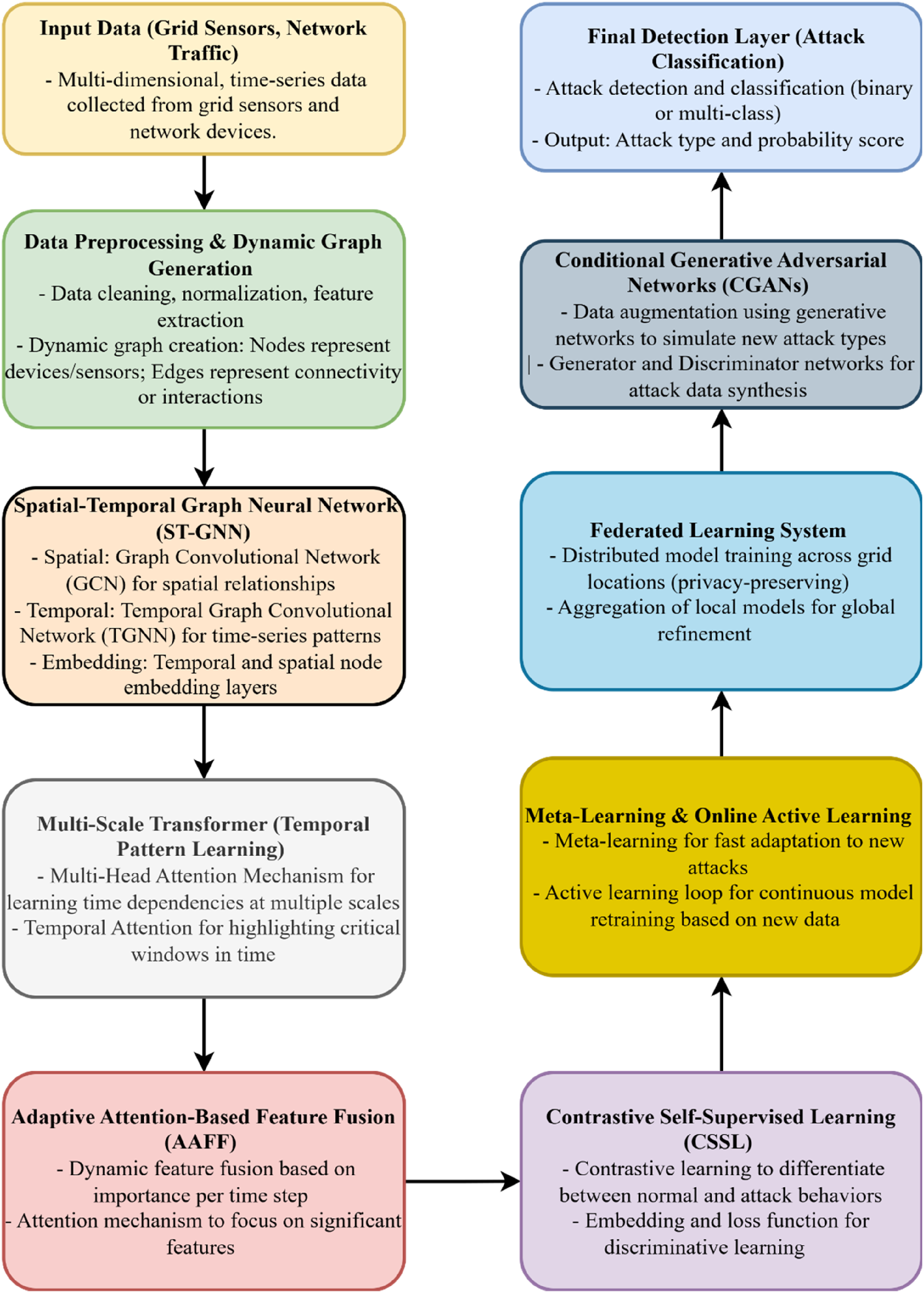

While this model has been evaluated on benchmark datasets, its real-world applicability in live smart grid environments is crucial. The proposed hybrid deep learning model can be deployed on edge devices within the grid, offering low-latency attack detection. However, real-time performance may depend on the size of the grid and the available computational resources (Li et al., 2023). In future work, the model will be optimised to handle large-scale, real-time data by implementing techniques like model pruning and distributed learning, ensuring it is feasible for use in diverse real-world settings. Figure 1 presents the architecture of the proposed hybrid model.

The architecture of the proposed hybrid model.

Working of the proposed hybrid model

The complete working of the proposed hybrid model is as follows.

Spatial-temporal graph neural network

The proposed hybrid model treats smart grids as massive, interconnected networks of devices such as sensors, meters, and controllers. These devices share power usage, voltage measurements, and other sensor data. Due to their extensive network connections, smart grids are susceptible to various types of cyberattacks. These attacks may include DDoS (Distributed Denial of Service) attacks and data falsification, in which cybercriminals deliberately introduce inaccurate data to disrupt the system (Li et al., 2025).

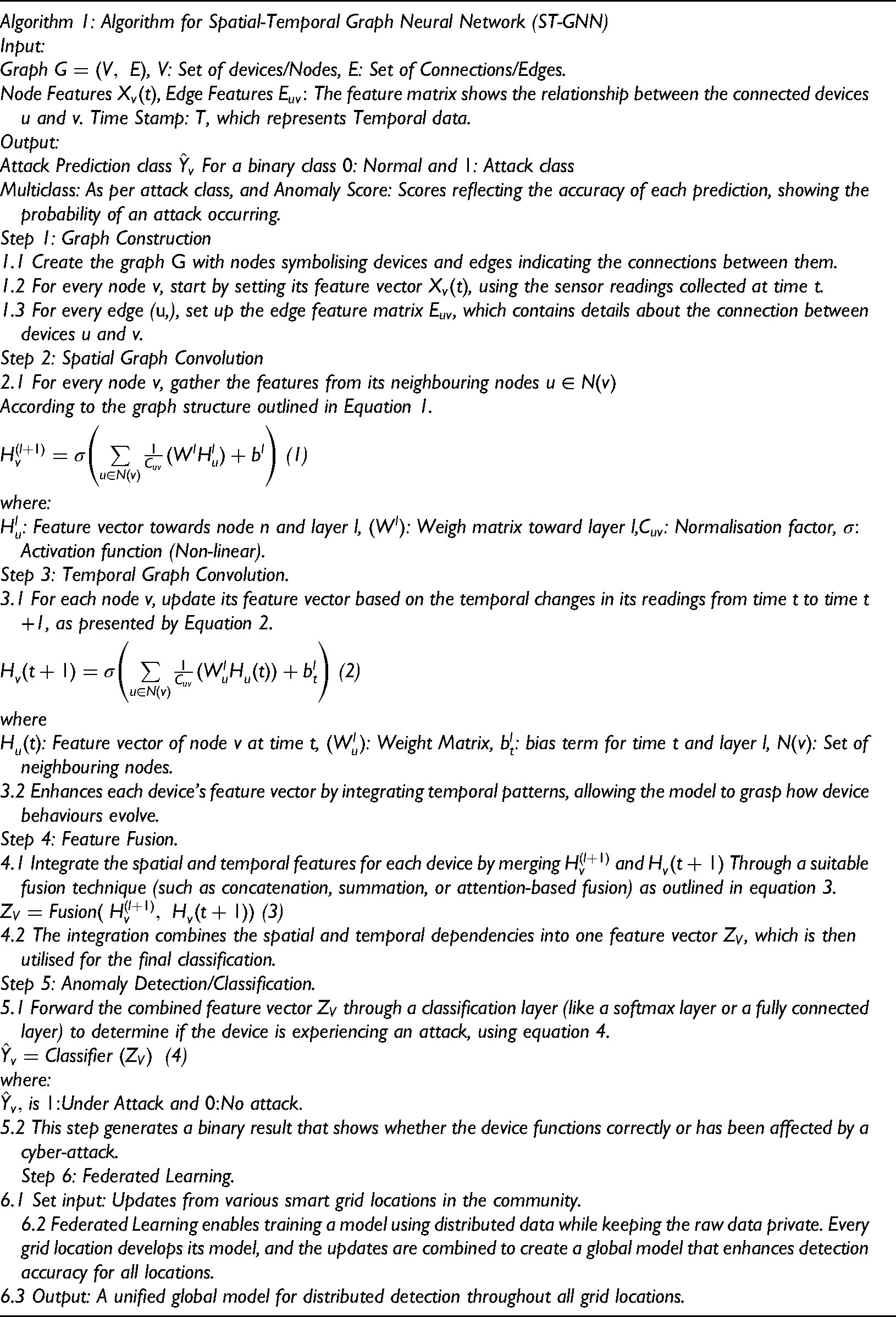

The proposed model utilises advanced machine learning methods to protect the grid from cyberattacks. An essential component is the ST-GNN, which enhances the system's ability to comprehend and forecast events within the grid across both spatial and temporal dimensions. The ST-GNN functions as a robust ‘sensor’ within the cybersecurity framework. It examines the grid's temporal dynamics and the spatial interconnections among devices, such as sensors and meters. By integrating these two perspectives, ST-GNN can identify anomalous patterns or cyberattacks more efficiently than conventional systems (Lu et al., 2025). Algorithm 1 presents the working of the ST-GNN model in the proposed hybrid model.
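As a rough illustration of this idea (not a reproduction of Algorithm 1), the sketch below performs one spatial aggregation step over a small device graph and then smooths the resulting node embeddings across time. The three-device topology, the mean-aggregation rule, the smoothing factor `alpha`, and all function names are assumptions chosen for illustration.

```python
def spatial_step(adj, feats):
    """Mean-aggregate each node's features with those of its neighbours (self-loop included)."""
    n = len(adj)
    out = []
    for i in range(n):
        neigh = [i] + [j for j in range(n) if adj[i][j]]
        dim = len(feats[i])
        out.append([sum(feats[j][d] for j in neigh) / len(neigh) for d in range(dim)])
    return out


def temporal_step(snapshots, alpha=0.5):
    """Exponentially smooth node embeddings across time-ordered snapshots."""
    state = snapshots[0]
    for snap in snapshots[1:]:
        state = [[alpha * s + (1 - alpha) * h for s, h in zip(s_row, h_row)]
                 for s_row, h_row in zip(snap, state)]
    return state


# Hypothetical line topology: meter 0 - sensor 1 - controller 2.
adj = [[0, 1, 0], [1, 0, 1], [0, 1, 0]]
# Two time steps of one-dimensional readings per device (illustrative values).
readings = [[[1.0], [2.0], [3.0]], [[1.0], [2.0], [9.0]]]

embeddings = temporal_step([spatial_step(adj, x) for x in readings])
```

In this toy example the jump at the controller in the second snapshot also raises the embedding of its neighbouring sensor, which is the kind of spatially propagated signal a graph-based detector can exploit.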

Multi-scale transformer (temporal pattern learning)

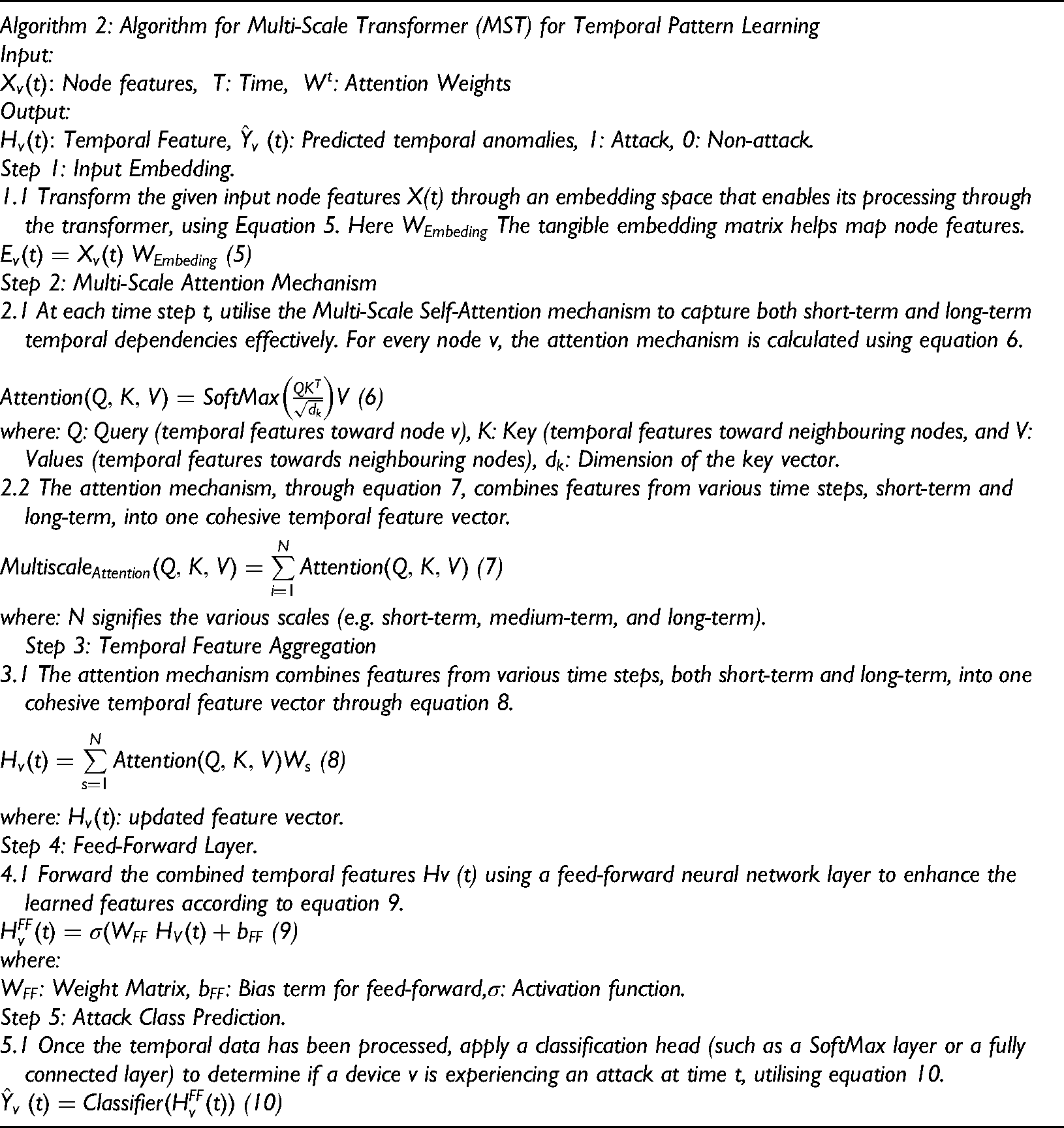

The proposed model relies on the multi-scale transformer (MST) to capture temporal relationships and identify intricate patterns in time-series data, which is crucial for detecting anomalies or attacks within the smart grid (Makhmudov et al., 2025). The MST employs a self-attention mechanism to model both short- and long-range temporal dependencies in the system's behaviour. This is significant because smart grid systems change dynamically over time, and these temporal patterns can be vital for identifying emerging cyber-attacks such as false data injections or DDoS attacks (Mohammed et al., 2024).

The MST addresses various time scales, allowing the model to concentrate on different time intervals and capture features at both detailed (short-term) and broader (long-term) levels. The model analyses time-series data from grid devices, including power consumption, voltage, and current readings, to understand how these signals change over time. By incorporating this time-based learning into the spatial graph framework (similar to the earlier ST-GNN module), the MST enables the model to uphold high accuracy, even when faced with imbalanced or atypical attack patterns (Mohammed et al., 2025). Algorithm 2 presents the steps for MST in the proposed model.
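The multi-scale idea can be sketched as follows: the same series is average-pooled at several window lengths, and self-attention is applied at each scale. This is a minimal sketch under strong simplifying assumptions (scalar tokens, identity query/key/value projections, a single head, hypothetical scales of 1, 2 and 4); it is not the procedure of Algorithm 2.

```python
import math


def pool(series, scale):
    """Average non-overlapping windows of length `scale`."""
    return [sum(series[i:i + scale]) / scale
            for i in range(0, len(series) - scale + 1, scale)]


def self_attention(seq):
    """Scaled dot-product self-attention for scalar tokens (identity Q, K, V projections)."""
    out = []
    for q in seq:
        scores = [q * k for k in seq]  # key dimension is 1, so the scaling factor is 1
        m = max(scores)
        weights = [math.exp(s - m) for s in scores]
        total = sum(weights)
        out.append(sum(w / total * v for w, v in zip(weights, seq)))
    return out


def multi_scale_features(series, scales=(1, 2, 4)):
    """Return one attended sequence per time scale."""
    return {s: self_attention(pool(series, s)) for s in scales}


voltage = [1.0, 1.0, 1.0, 1.0, 1.0, 1.0, 5.0, 1.0]  # illustrative spike at index 6
features = multi_scale_features(voltage)
```

The fine scale keeps the spike sharp, while the coarser scales dilute it into a broader trend; a real model would learn projections and combine the scales rather than return them separately.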

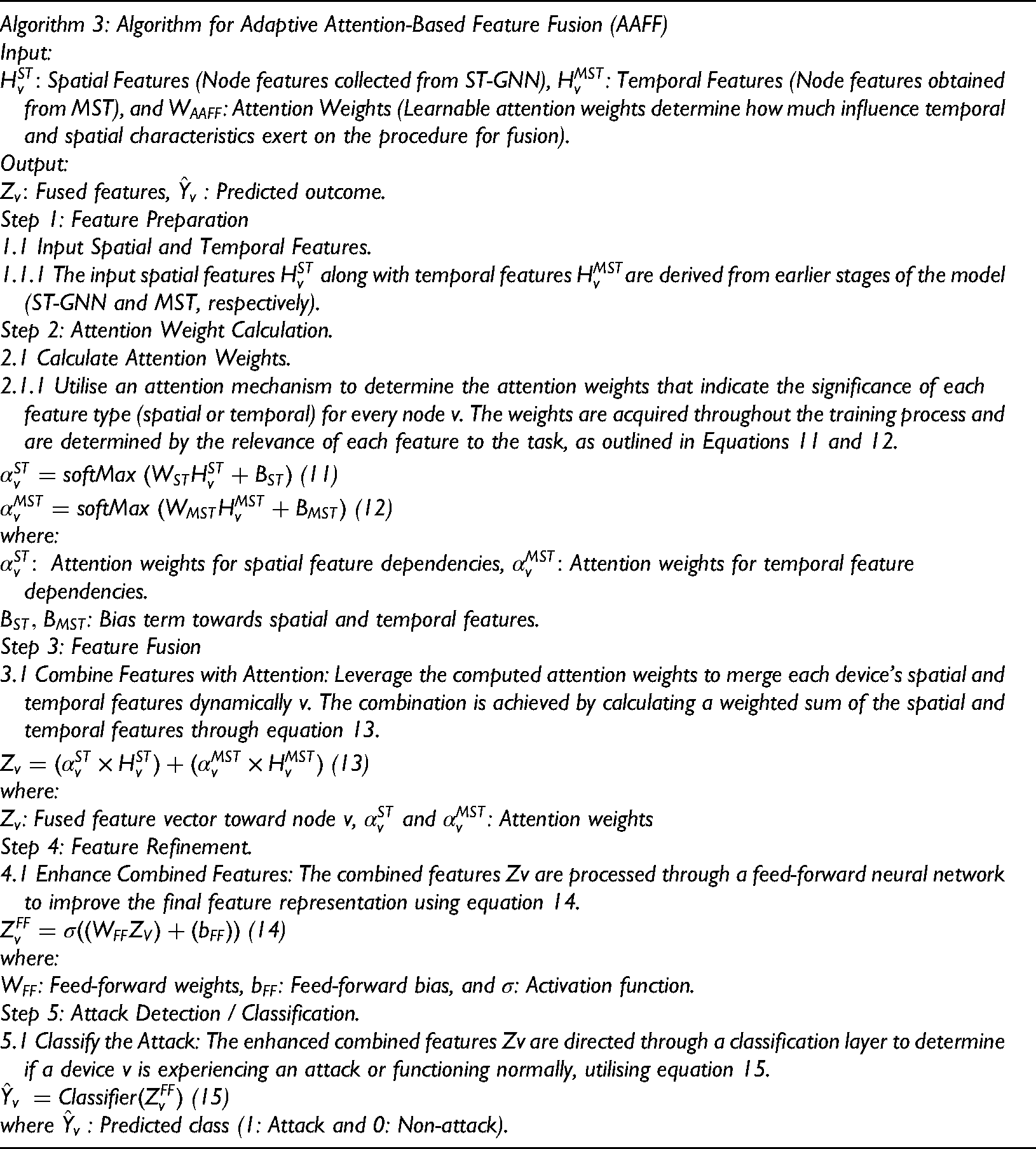

Adaptive attention-based feature fusion

The proposed approach features a vital component known as AAFF, which aims to flexibly merge the characteristics obtained from the model's spatial and temporal aspects. AAFF seeks to integrate aspects from the ST-GNN and the MST based on their significance for identifying attacks (Markkandeyan et al., 2025). Traditional feature fusion methods merge features in a fixed way. In contrast, AAFF adjusts to the importance of features at each moment, thereby improving the model's ability to focus on the most pertinent information for identifying anomalies or cyberattacks (Mhmood et al., 2024).

The primary benefit of AAFF lies in its attention mechanism, which assigns weights to various features during fusion based on their relevance in both time and space. The fusion process enables the model to focus on significant patterns, such as unusual device behaviours or irregular device interactions over time. This results in a more precise identification of threats within the smart grid system (Nasir and Hebrisha, 2024). Algorithm 3 presents the working steps of AAFF in the proposed hybrid model.
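A minimal sketch of attention-weighted fusion is given below, assuming one spatial and one temporal feature vector of equal length. The scoring vectors `w_spatial` and `w_temporal` stand in for learned parameters and are hypothetical; the actual AAFF module (Algorithm 3) may score and combine features differently.

```python
import math


def softmax(xs):
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def adaptive_fusion(spatial, temporal, w_spatial, w_temporal):
    """Fuse two feature vectors with input-dependent attention weights."""
    # Relevance score of each branch: dot product with its (hypothetical) scoring vector.
    score_s = sum(a * b for a, b in zip(spatial, w_spatial))
    score_t = sum(a * b for a, b in zip(temporal, w_temporal))
    alpha_s, alpha_t = softmax([score_s, score_t])
    fused = [alpha_s * s + alpha_t * t for s, t in zip(spatial, temporal)]
    return fused, (alpha_s, alpha_t)


fused, weights = adaptive_fusion([1.0, 0.0], [0.0, 1.0], [2.0, 0.0], [0.0, 0.0])
```

Because the weights are recomputed for every input, the balance between the spatial and temporal branches changes from sample to sample, unlike a fixed concatenation or average.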

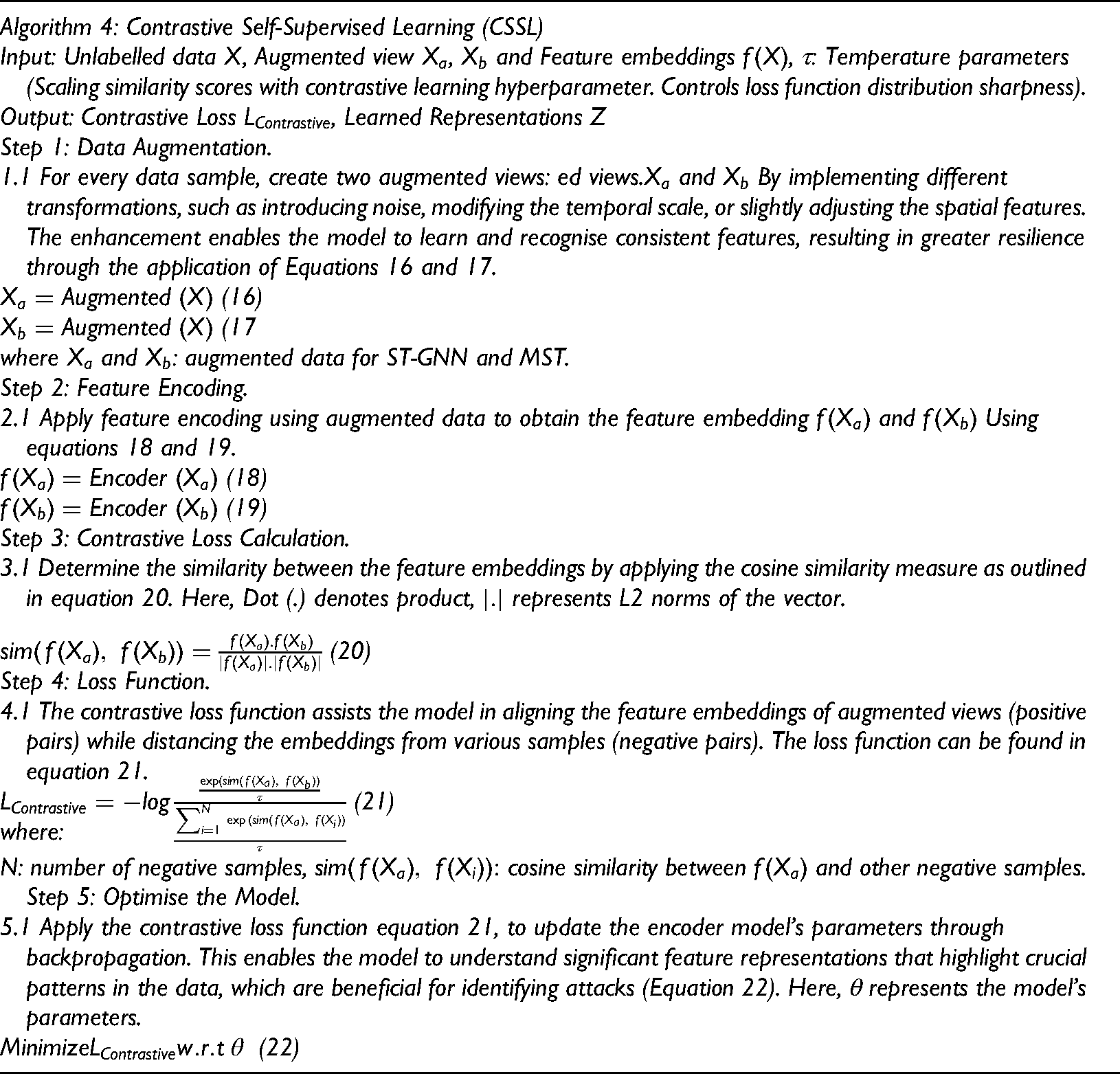

Contrastive self-supervised learning (CSSL)

The proposed approach utilises CSSL to enhance its capacity to learn from imbalanced datasets and to improve its effectiveness in identifying new and previously unseen attacks. Conventional supervised learning techniques depend on labelled data, which is often limited, particularly for zero-day attacks or uncommon anomalies in smart grid cybersecurity. CSSL overcomes this obstacle by exploiting the natural structure of the data, which enables the model to acquire meaningful representations even without explicit labels. In CSSL, the model is trained to distinguish pairs of similar (positive) instances from pairs of dissimilar (negative) instances (Ravinder and Kulkarni, 2025).

The concept is that comparable instances, such as typical behaviours or similar attack patterns, should be positioned near one another in the learned feature space, whereas distinct instances, such as normal behaviours and attacks, should be placed further apart. By training the model to separate these instances, it develops more robust features that can assist in recognising new attack patterns, even when the training data is imbalanced or scarce (Ruan et al., 2023). CSSL enables smart grid attack detection systems to model typical grid operations and identify distinct attack patterns, even when the training data comprises only a few labelled instances of attacks. The contrastive learning framework is beneficial for anomaly detection tasks, where most data points represent typical (non-attack) behaviours and only a few indicate potential attacks (Rahul et al., 2024). Algorithm 4 presents the working of CSSL in the proposed hybrid model.
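The pull-together, push-apart behaviour described above can be illustrated with an InfoNCE-style loss for a single anchor. InfoNCE is a common contrastive objective and is used here as an assumption; the source does not state which contrastive loss Algorithm 4 uses. The embeddings and the temperature of 0.5 are illustrative.

```python
import math


def cosine(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm


def info_nce(anchor, positive, negatives, temperature=0.5):
    """InfoNCE loss for one anchor: low when the positive is closer than the negatives."""
    pos = math.exp(cosine(anchor, positive) / temperature)
    neg = sum(math.exp(cosine(anchor, n) / temperature) for n in negatives)
    return -math.log(pos / (pos + neg))


normal_a, normal_b, attack = [1.0, 0.1], [0.9, 0.2], [-1.0, 0.8]
good = info_nce(normal_a, normal_b, [attack])  # positive pair: two similar normal flows
bad = info_nce(normal_a, attack, [normal_b])   # positive pair wrongly mixes normal and attack
```

Minimising this loss over many pairs moves similar flows together and dissimilar flows apart, which is why the well-formed pairing yields the lower loss here.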

Meta-learning and online active learning

Meta-learning, often referred to as ‘learning to learn’, is a method that focuses on enhancing a model's ability to adapt to new tasks and environments with limited data. In the context of the proposed model for smart grid cybersecurity, meta-learning is utilised to enable the model to swiftly adjust to new attack patterns or changes in the environment within the grid. The model can adapt effectively to new and unfamiliar attack situations by understanding the core principles of different attack types. The model-agnostic meta-learning (MAML) algorithm is often utilised for this purpose, enabling the model to be trained to quickly adjust to new data with just a few updates (Sharma et al., 2025).
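To make the "few updates" idea concrete, the sketch below applies MAML to a deliberately tiny problem: each task has the scalar loss (theta - c)^2 for a task-specific target c, so the gradient through the inner adaptation step can be written exactly. The task targets, learning rates, and function name are assumptions for illustration only and are unrelated to the paper's training setup.

```python
def maml_step(theta, task_targets, inner_lr=0.1, meta_lr=0.1):
    """One MAML meta-update for scalar tasks with loss L_c(theta) = (theta - c)^2."""
    meta_grad = 0.0
    for c in task_targets:
        adapted = theta - inner_lr * 2 * (theta - c)  # one inner adaptation step
        # Exact gradient of L_c(adapted) with respect to theta, through the inner step.
        meta_grad += 2 * (adapted - c) * (1 - 2 * inner_lr)
    return theta - meta_lr * meta_grad / len(task_targets)


theta = 0.0
for _ in range(200):
    theta = maml_step(theta, task_targets=[1.0, 3.0])
```

The meta-parameter settles midway between the two task optima, a starting point from which either task can be reached in a few gradient steps.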

Online active learning, in turn, is a method in which the model continually adapts by incorporating fresh data as it arrives. Rather than relying on randomly chosen data, it prioritises learning from uncertain or otherwise informative samples. This ongoing learning enables the model to improve as it encounters new attack vectors or network behaviours. Online active learning is particularly advantageous in smart grids, where cyberattacks are continually evolving, making real-time detection essential (Shehzad et al., 2021).
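One common way to pick informative samples is uncertainty sampling, sketched below with prediction entropy as the uncertainty measure. The choice of entropy, the labelling budget, and the example probabilities are assumptions; the source does not specify the query strategy.

```python
import math


def entropy(probs):
    return -sum(p * math.log(p) for p in probs if p > 0)


def select_for_labelling(predictions, budget):
    """Return the indices of the `budget` most uncertain predictions."""
    ranked = sorted(range(len(predictions)),
                    key=lambda i: entropy(predictions[i]), reverse=True)
    return ranked[:budget]


# Hypothetical [P(normal), P(attack)] outputs for four incoming flows.
stream = [[0.99, 0.01], [0.55, 0.45], [0.10, 0.90], [0.50, 0.50]]
queried = select_for_labelling(stream, budget=2)
```

Only the two flows the model is least sure about are sent for labelling, which keeps annotation effort low while targeting the samples most likely to change the decision boundary.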

Federated learning system

Federated learning is a collaborative approach to machine learning that enables model training across multiple devices or nodes, such as smart grid locations. It protects data privacy and security by keeping the raw data on local devices and sharing only model updates (gradients) with the central server (Song et al., 2021). The proposed model utilises federated learning to identify cyberattacks within distributed smart grid systems while preserving privacy and scalability. Every local node (smart grid device) trains a model on its own data, and at regular intervals the updated models are combined at the central server. This method enhances the ability to detect attacks effectively and ensures that sensitive information remains secure from exposure (Siniosoglou et al., 2021).
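The aggregation step at the central server can be sketched as a sample-weighted average of client parameters, in the style of the FedAvg algorithm. Using FedAvg here is an assumption, as the source does not name the aggregation rule; the parameter vectors and sample counts are illustrative.

```python
def federated_average(client_weights, client_sizes):
    """Weighted average of client parameter vectors (FedAvg-style aggregation)."""
    total = sum(client_sizes)
    dim = len(client_weights[0])
    return [sum(w[d] * n for w, n in zip(client_weights, client_sizes)) / total
            for d in range(dim)]


# Hypothetical parameter vectors from three grid locations and their local sample counts.
clients = [[0.0, 1.0], [1.0, 1.0], [4.0, 1.0]]
sizes = [100, 100, 200]
global_weights = federated_average(clients, sizes)
```

Only the parameter vectors and sample counts leave each location; the raw traffic records never do.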

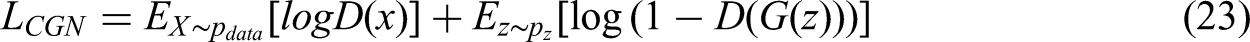

Conditional generative adversarial networks (CGANs)

In the proposed model, CGANs are employed to augment the data. A CGAN consists of two networks: a generator and a discriminator. The generator produces synthetic samples conditioned on specific inputs, such as attack labels or network conditions, while the discriminator distinguishes between real and generated data. Utilising CGANs enables the model to generate realistic attack scenarios that may be absent from the training dataset, which is crucial for augmenting data on uncommon or new attack patterns. The increased variety in data strengthens the model's robustness to unexpected attacks (Wang et al., 2025). The generator is trained to minimise the loss function given in Equation 23.
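For orientation, the sketch below evaluates the standard minimax conditional GAN losses for a single sample, where `d_real` and `d_fake` denote the discriminator's estimated probability that a real or generated sample (given its condition label) is real. This is the textbook formulation and may differ from the exact form of Equation 23; the probability values are illustrative.

```python
import math


def discriminator_loss(d_real, d_fake):
    """Binary cross-entropy: real samples should score 1, generated samples 0."""
    return -(math.log(d_real) + math.log(1.0 - d_fake))


def generator_loss(d_fake):
    """Minimax generator loss log(1 - D(G(z|y)|y)); lower when the discriminator is fooled."""
    return math.log(1.0 - d_fake)


# d_fake: discriminator output for a sample generated under an attack label y.
weak_generator = generator_loss(d_fake=0.1)    # discriminator easily spots the fake
strong_generator = generator_loss(d_fake=0.9)  # discriminator is mostly fooled
confident_d = discriminator_loss(d_real=0.9, d_fake=0.1)
confused_d = discriminator_loss(d_real=0.6, d_fake=0.5)
```

The two losses pull in opposite directions: the generator's loss falls as its samples become more convincing, while the discriminator's loss rises when it can no longer separate real from generated traffic.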


Final detection layer (attack classification)

The final detection layer determines whether a specific instance, whether from network traffic or sensor data, represents a normal state or an attack. Once the model has extracted features through the earlier components (the ST-GNN, multi-scale transformer, and AAFF), the final detection layer employs a classification model (such as a fully connected neural network or support vector machine) to produce the attack classification result (Wen et al., 2025). This layer analyses the feature representations to determine whether the input data is normal or indicates malicious activity (such as DDoS attacks or false data injection) (Yu et al., 2025). For multi-class classification, the output is obtained with a softmax function, as shown in Equation 24.
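The softmax step converts the classifier's raw scores (logits) into class probabilities by exponentiating each score and dividing by the sum of the exponentials. A minimal sketch follows; the class names and logit values are hypothetical and do not come from the paper's label set.

```python
import math

CLASSES = ["normal", "ddos", "false_data_injection", "probing"]  # hypothetical label set


def softmax(logits):
    m = max(logits)  # subtract the maximum for numerical stability
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def classify(logits):
    """Return the most probable class name and the full probability vector."""
    probs = softmax(logits)
    best = max(range(len(probs)), key=probs.__getitem__)
    return CLASSES[best], probs


label, probs = classify([0.2, 3.1, -1.0, 0.5])
```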


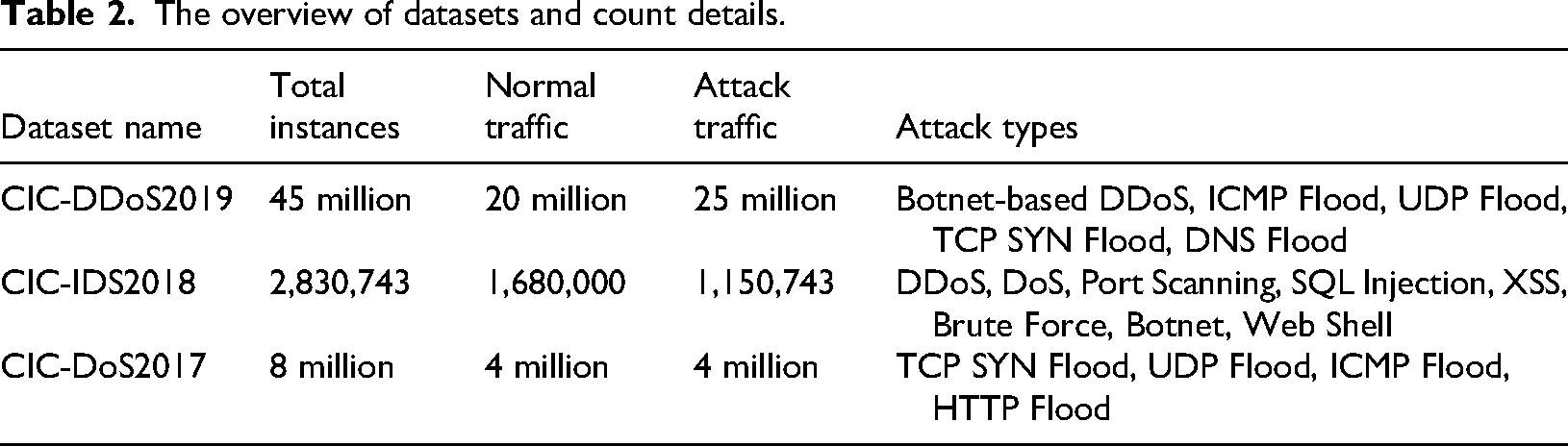

Dataset detail

This study employs three widely recognised and established attack datasets: CIC-DDoS2019 (https://www.unb.ca/cic/datasets/ddos-2019), CIC-IDS2018 (https://www.unb.ca/cic/datasets/ddos-2018), and CIC-DoS2017 (https://www.unb.ca/cic/datasets/ddos-2017). This collection of datasets provides detailed network traffic information, encompassing regular traffic and a range of attack scenarios, including DDoS, DoS, and other forms of intrusion, such as port scanning, SQL Injection, and Brute Force. They are commonly utilised for assessing intrusion detection systems and machine learning models focused on improving cybersecurity in practical settings (AlHaddad et al., 2023). Table 2 presents the summary of datasets and counts.

The overview of datasets and count details.

Data pre-processing

The same pre-processing pipeline is applied to all three datasets (CIC-DDoS2019, CIC-IDS2018, and CIC-DoS2017), providing a uniform method for preparing the data for machine learning models. Initially, we clean the data by eliminating duplicates and resolving missing values. Missing entries are handled by imputation, using forward-fill or mean imputation depending on the feature. After cleaning the data, we transform the raw PCAP files into CSV format, which simplifies data management and the extraction of the features required to identify attacks (Kumar et al., 2023). After the conversion, we extract the features. In this step, we gather basic details from the network traffic, including IP addresses, ports, protocol types, and packet sizes, together with flow-level characteristics such as packet counts and transferred bytes. These features are crucial as they enable the model to capture the network's behaviour and distinguish between normal and malicious activity (Kumar et al., 2024).

Next, we normalise the extracted features. This step ensures that all numerical data are on a comparable scale, preventing any single feature from dominating the learning process. Min-Max scaling or Z-score normalisation is used, depending on the needs of the dataset and model. After the features have been pre-processed, the traffic is labelled as normal or as a specific attack type, such as DDoS, Port Scanning, or Data Injection. Labelling is essential, as it allows the model to learn from both attack and regular traffic during training (Kumar et al., 2023). Min-max normalisation is computed using Equation 25, and Z-score normalisation (standardisation) using Equation 26.
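Both scalings have standard definitions: min-max maps each value to (x - min) / (max - min), and the Z-score maps it to (x - mean) / standard deviation. The sketch below applies them to one feature column. The use of the population standard deviation and the handling of constant columns are assumptions, and the packet sizes are illustrative.

```python
import statistics


def min_max(values):
    """Rescale values to the range [0, 1]."""
    lo, hi = min(values), max(values)
    if hi == lo:  # constant column: avoid division by zero
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]


def z_score(values):
    """Standardise values to zero mean and unit (population) standard deviation."""
    mean = statistics.fmean(values)
    std = statistics.pstdev(values)
    if std == 0:
        return [0.0 for _ in values]
    return [(v - mean) / std for v in values]


packet_sizes = [60.0, 1500.0, 780.0]
scaled = min_max(packet_sizes)
standardised = z_score(packet_sizes)
```

In practice the minimum, maximum, mean, and standard deviation would be computed on the training split only and then reused for the validation and test splits, to avoid leaking information between them.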

where:

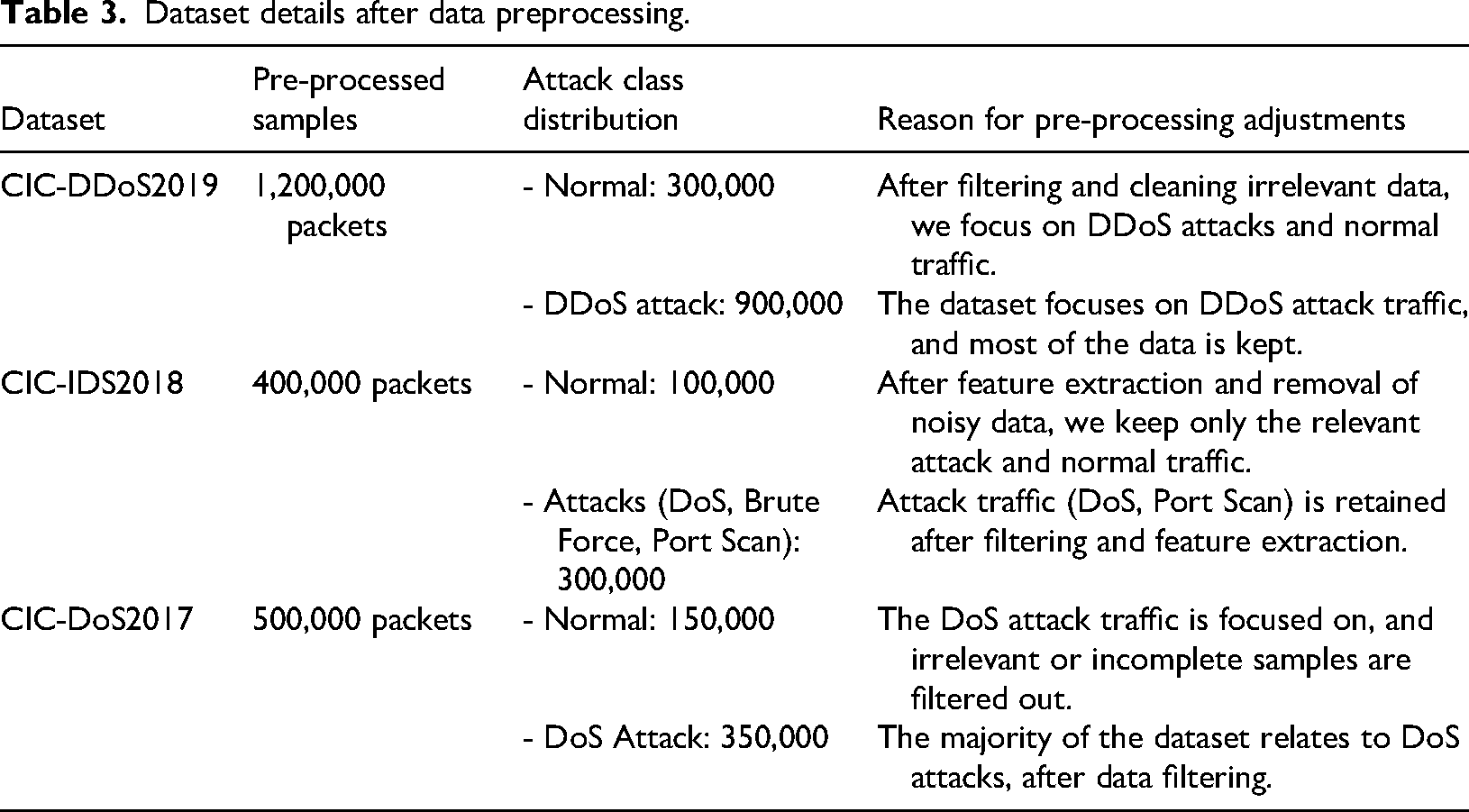
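The two normalisation schemes described above can be sketched as follows. The sketch assumes the standard textbook forms, x' = (x - x_min) / (x_max - x_min) for min-max scaling and z = (x - mean) / std for Z-score normalisation; the function names and sample values are illustrative and are not taken from this study's code.

```python
from statistics import mean, pstdev
from typing import List


def min_max_scale(values: List[float]) -> List[float]:
    """Rescale a feature to [0, 1]; a constant feature maps to all zeros."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]


def z_score(values: List[float]) -> List[float]:
    """Standardise a feature to zero mean and unit (population) variance."""
    mu = mean(values)
    sigma = pstdev(values)
    if sigma == 0:
        return [0.0 for _ in values]
    return [(v - mu) / sigma for v in values]


# Made-up packet sizes in bytes, used only to exercise the functions.
packet_sizes = [60.0, 90.0, 1500.0, 750.0]
scaled = min_max_scale(packet_sizes)
standardised = z_score(packet_sizes)
```

In practice the minimum, maximum, mean, and standard deviation would normally be estimated on the training split only and then reused for the validation and test splits, so that no information from held-out data leaks into training.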

To handle missing values in the dataset, we apply mean imputation. In general, missing values can be filled in using the mean, the median, or any other statistic that reflects the central tendency of the feature. A typical method substitutes each missing value with the average value of the feature, as presented in Equation 27. During the labelling step, when the data contain categorical variables (such as ‘attack’ or ‘normal’), we transform these labels into numerical values so that machine learning models can process them (Wen et al., 2025). Label encoding is performed using Equation 28. Table 3 presents the dataset details after data pre-processing.

Table 3. Dataset details after data preprocessing.
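As an illustration of the mean imputation and label encoding steps described above, the following minimal sketch fills missing values with the feature mean and maps class names to integers. The function names, the use of `None` to mark a missing value, and the alphabetical ordering of the label mapping are assumptions made for this example rather than details reported in the study.

```python
from typing import Dict, List, Optional, Tuple


def mean_impute(values: List[Optional[float]]) -> List[float]:
    """Replace missing entries (None) with the mean of the observed entries."""
    observed = [v for v in values if v is not None]
    if not observed:
        raise ValueError("cannot impute a feature with no observed values")
    fill = sum(observed) / len(observed)
    return [fill if v is None else v for v in values]


def label_encode(labels: List[str]) -> Tuple[List[int], Dict[str, int]]:
    """Map each distinct class name to an integer (alphabetical order assumed)."""
    mapping = {name: idx for idx, name in enumerate(sorted(set(labels)))}
    return [mapping[name] for name in labels], mapping


# Made-up feature column with two missing values.
flow_bytes = [1200.0, None, 800.0, None, 1000.0]
imputed = mean_impute(flow_bytes)

# Made-up class labels.
labels = ["normal", "attack", "normal", "attack"]
encoded, mapping = label_encode(labels)
```

With more than two classes, for example one label per attack type, the same mapping approach applies unchanged.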


Model training and hyperparameter tuning

Training the model and tuning its hyperparameters are crucial steps in ensuring that the proposed hybrid deep learning model for smart grid cybersecurity works effectively. The training process begins by dividing the dataset into training, validation, and test sets, enabling the model to learn to distinguish between normal behaviour and attacks in the network traffic data. The Adam optimiser updates the model's parameters to minimise the loss function at each epoch. Early stopping prevents overfitting by terminating training when the validation loss stops improving. This helps the model generalise to new, unseen data, which is crucial for identifying attacks in practical situations (Markkandeyan et al., 2025).
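The early stopping rule described above can be sketched independently of any deep learning framework. The class name `EarlyStopping`, the `patience` and `min_delta` parameters, and the loss values below are assumptions chosen for illustration; the study does not report the patience that was used.

```python
from typing import Optional


class EarlyStopping:
    """Signal a stop when validation loss has not improved for `patience` epochs."""

    def __init__(self, patience: int = 5, min_delta: float = 0.0) -> None:
        self.patience = patience
        self.min_delta = min_delta
        self.best_loss: Optional[float] = None
        self.epochs_without_improvement = 0

    def should_stop(self, val_loss: float) -> bool:
        """Record this epoch's validation loss and report whether to stop."""
        if self.best_loss is None or val_loss < self.best_loss - self.min_delta:
            self.best_loss = val_loss
            self.epochs_without_improvement = 0
        else:
            self.epochs_without_improvement += 1
        return self.epochs_without_improvement >= self.patience


# Made-up validation losses standing in for a real training run.
val_losses = [0.90, 0.70, 0.55, 0.56, 0.57, 0.58, 0.40]
stopper = EarlyStopping(patience=3)
stopped_at = None
for epoch, loss in enumerate(val_losses, start=1):
    # A real loop would run one epoch of optimiser updates here.
    if stopper.should_stop(loss):
        stopped_at = epoch
        break
```

In this toy run the loss last improves at epoch 3, so training halts at epoch 6 and the later value of 0.40 is never seen. This is the usual trade-off when choosing the patience: a small value saves compute but can stop before a late improvement.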

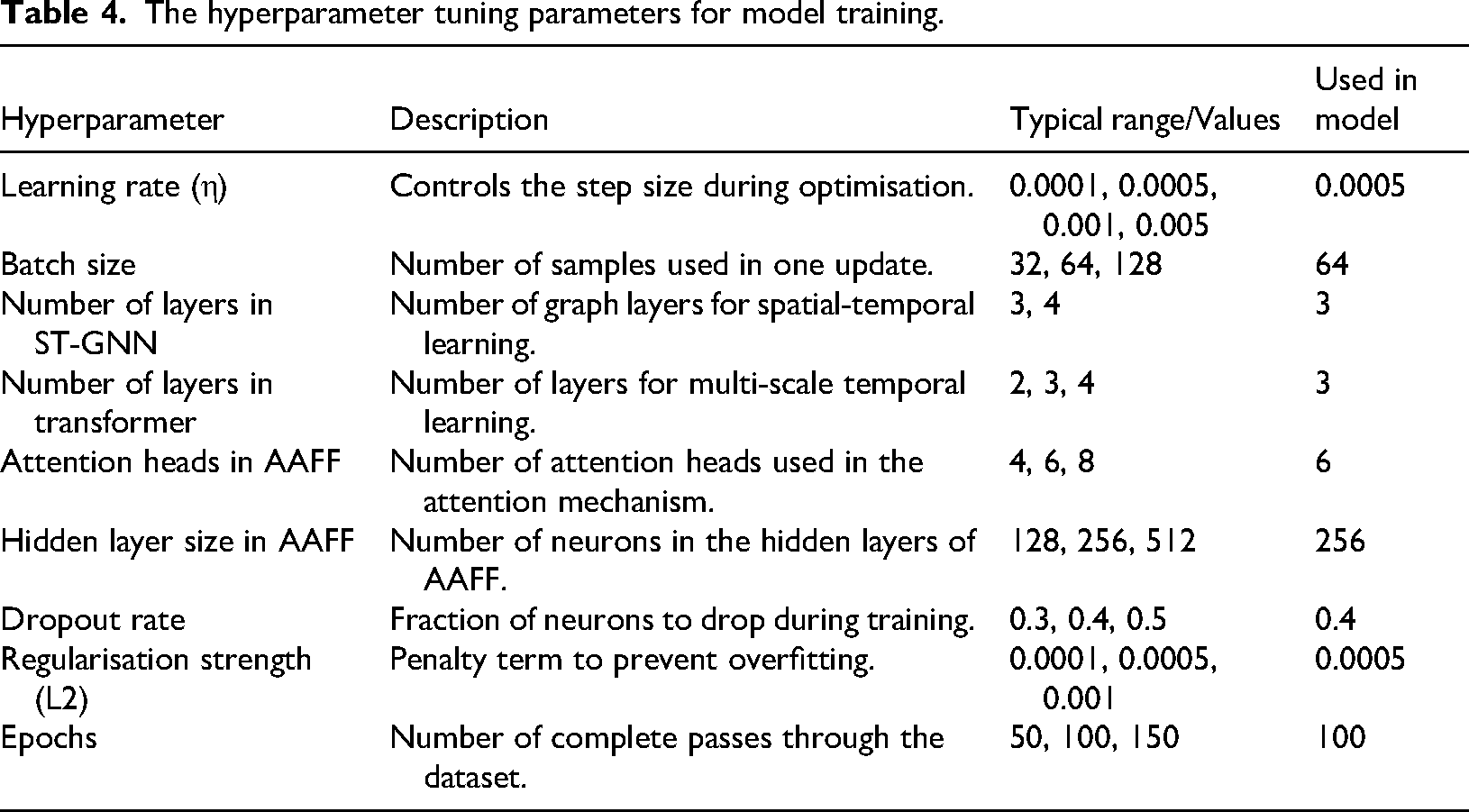

Tuning the hyperparameters enhances the model's effectiveness. Important hyperparameters, such as the learning rate, batch size, number of layers in the ST-GNN and Transformer, number of attention heads in AAFF, and dropout rate, are adjusted to find the best balance between accuracy and efficiency. Grid search and random search are used to explore combinations of hyperparameters. A learning rate of 0.0005 and a batch size of 64 proved effective, giving stable training without overshooting. A dropout rate of 0.4 and a regularisation strength of 0.0005 were selected to limit overfitting. After trying various values, 100 epochs were selected to provide enough training without overfitting, allowing the model to identify different attack scenarios while preserving its ability to generalise (Kaur and Batth, 2024). Table 4 lists the hyperparameters used for model training.

Table 4. The hyperparameter tuning parameters for model training.

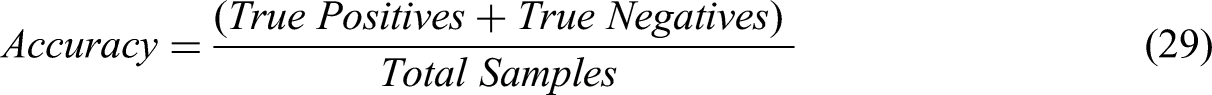

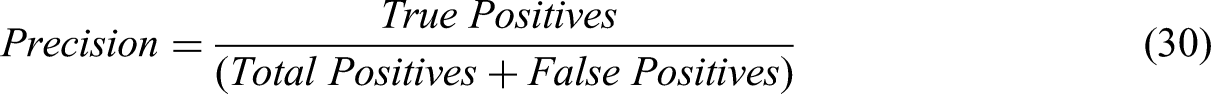
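The grid search and random search procedures mentioned above can be sketched as follows. The search space, the function names, and in particular `toy_validation_score` are assumptions for illustration: the toy objective simply peaks at the values reported in the text (learning rate 0.0005, batch size 64, dropout 0.4), whereas a real run would train the model and return a validation metric.

```python
import itertools
import random
from typing import Callable, Dict, List, Tuple

Config = Dict[str, float]

# Hypothetical candidate values; the study does not list the searched ranges.
SEARCH_SPACE: Dict[str, List[float]] = {
    "learning_rate": [0.0001, 0.0005, 0.001],
    "batch_size": [32, 64, 128],
    "dropout": [0.2, 0.4, 0.5],
}


def grid_search(space: Dict[str, List[float]],
                evaluate: Callable[[Config], float]) -> Tuple[Config, float]:
    """Evaluate every combination in the space and return the best one."""
    names = list(space)
    best_config, best_score = {}, float("-inf")
    for combo in itertools.product(*(space[n] for n in names)):
        config = dict(zip(names, combo))
        score = evaluate(config)
        if score > best_score:
            best_config, best_score = config, score
    return best_config, best_score


def random_search(space: Dict[str, List[float]],
                  evaluate: Callable[[Config], float],
                  n_trials: int, seed: int = 0) -> Tuple[Config, float]:
    """Evaluate `n_trials` randomly sampled combinations and return the best."""
    rng = random.Random(seed)
    best_config, best_score = {}, float("-inf")
    for _ in range(n_trials):
        config = {name: rng.choice(options) for name, options in space.items()}
        score = evaluate(config)
        if score > best_score:
            best_config, best_score = config, score
    return best_config, best_score


def toy_validation_score(config: Config) -> float:
    """Placeholder objective standing in for a train-and-validate run."""
    return -(abs(config["learning_rate"] - 0.0005) * 1000
             + abs(config["batch_size"] - 64) / 64
             + abs(config["dropout"] - 0.4))


grid_best, grid_score = grid_search(SEARCH_SPACE, toy_validation_score)
rand_best, rand_score = random_search(SEARCH_SPACE, toy_validation_score, n_trials=10)
```

Grid search is exhaustive, so its cost grows multiplicatively with each added hyperparameter. Random search evaluates a fixed budget of configurations and can therefore miss the best combination, but it scales to larger search spaces.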

Performance measuring parameters

To determine how effectively the proposed hybrid deep learning model performs in smart grid cybersecurity, we use a range of established performance metrics that together evaluate its ability to identify cyberattacks and anomalies. The key performance metrics used in this research are given in Equations 29 to 35 (El-Toukhy et al., 2024).
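As a concrete illustration of such metrics, the sketch below computes accuracy, precision, recall, F1-score, FPR, FNR, and MCC from the four cells of a binary confusion matrix. It uses the standard textbook definitions and assumes that these correspond to Equations 29 to 35. The function name and the example counts are hypothetical and are not results from this study.

```python
import math
from typing import Dict


def binary_metrics(tp: int, tn: int, fp: int, fn: int) -> Dict[str, float]:
    """Compute common detection metrics from binary confusion-matrix counts."""
    total = tp + tn + fp + fn
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if (precision + recall) else 0.0)
    # Denominator of the Matthews Correlation Coefficient.
    mcc_den = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    return {
        "accuracy": (tp + tn) / total,
        "precision": precision,
        "recall": recall,
        "f1": f1,
        "fpr": fp / (fp + tn) if (fp + tn) else 0.0,  # benign flagged as attack
        "fnr": fn / (fn + tp) if (fn + tp) else 0.0,  # attacks missed
        "mcc": (tp * tn - fp * fn) / mcc_den if mcc_den else 0.0,
    }


# Illustrative counts only: 100 attack samples and 100 benign samples.
m = binary_metrics(tp=90, tn=95, fp=5, fn=10)
```

Note that FNR and recall always sum to one, and FPR and specificity likewise, so reporting both members of a pair is a useful consistency check on published results.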

Experimental results and discussion

Hardware and software details

The experiments were run in a high-performance hardware and software environment to ensure computational efficiency. The hardware configuration included an NVIDIA Tesla V100 GPU for training the deep learning models, paired with an Intel Xeon Gold 6226R processor and 64 GB of RAM, providing sufficient computational capacity to manage extensive datasets and intricate model architectures (AlHaddad et al., 2023). We used Python 3.8 as the primary programming language, with the deep learning frameworks TensorFlow 2.4 and PyTorch 1.8 for model construction and training, and Pandas 1.2 and NumPy 1.20 for data preprocessing and analysis. The system ran on Ubuntu 20.04 LTS for stability and compatibility with the required software libraries. Version control used Git 2.30 to track code modifications and support the reproducibility of experiments.

Simulation results

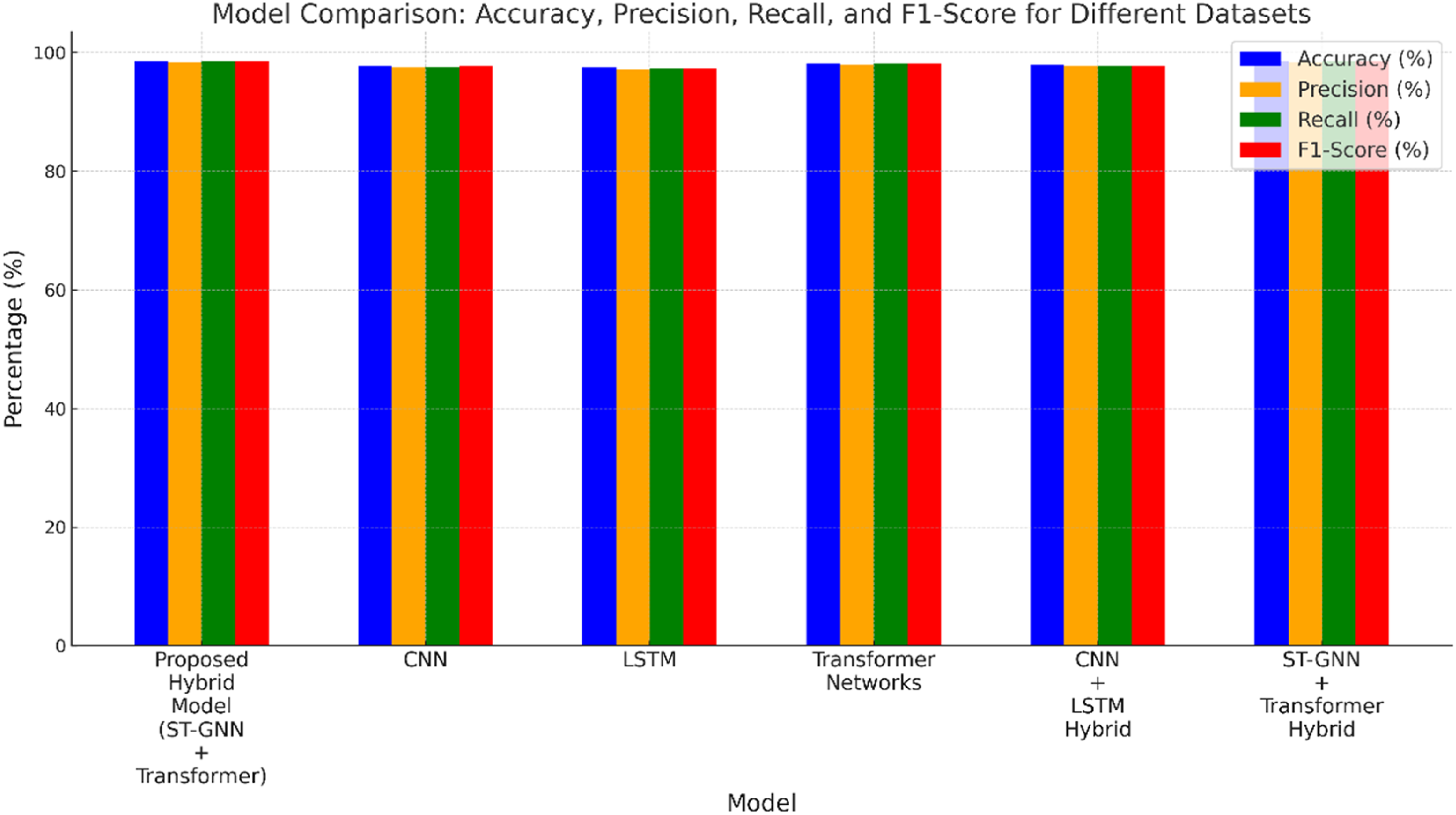

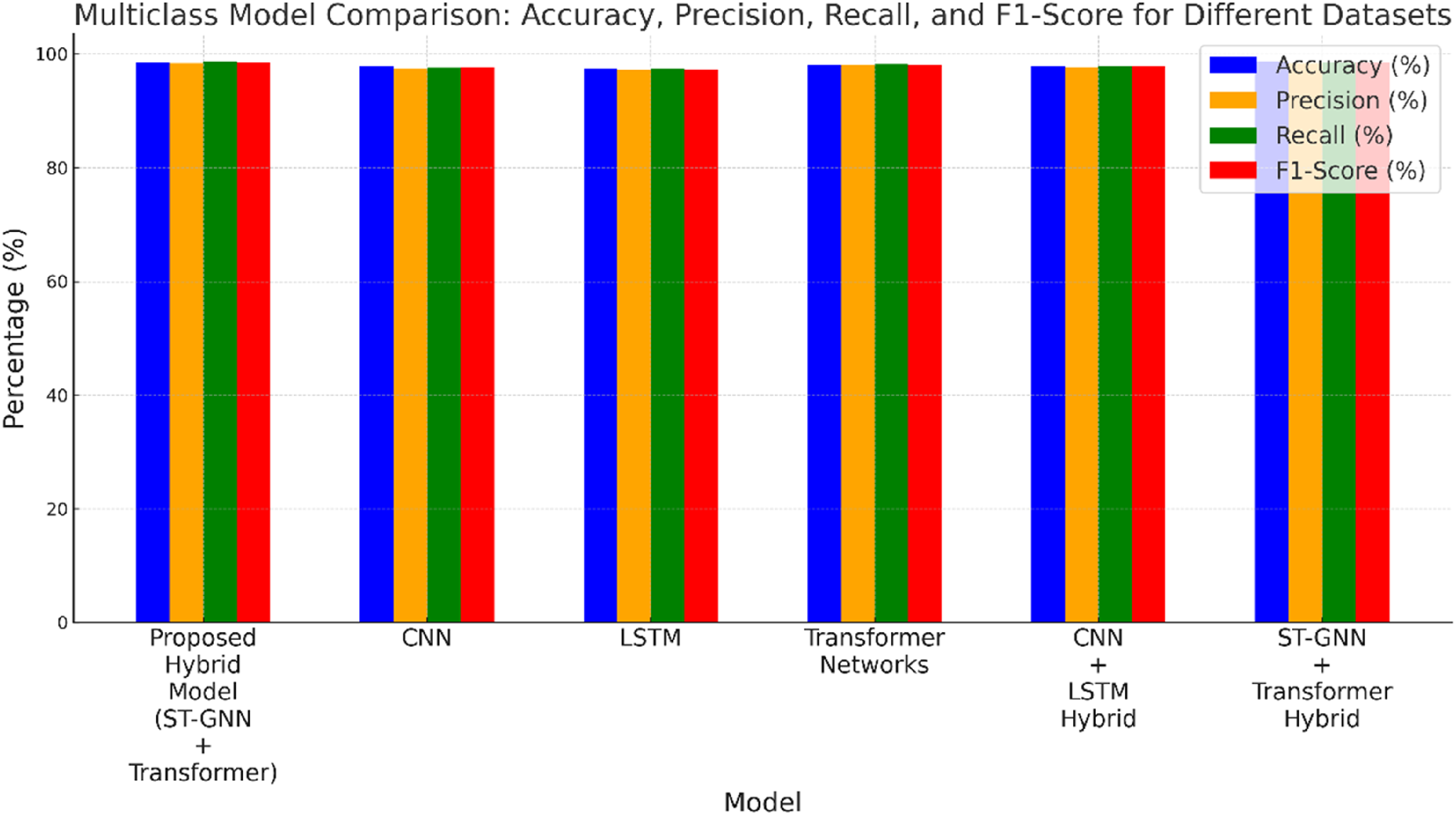

This research implemented the proposed hybrid deep learning model and compared it with established models, including CNN, LSTM, Transformer Networks, CNN + LSTM Hybrid, and ST-GNN + Transformer Hybrid, across three prominent datasets: CIC-DDoS2019, CIC-IDS2018, and CIC-DoS2017.

The pre-processed datasets for CIC-DDoS2019, CIC-IDS2018, and CIC-DoS2017 were partitioned into training, validation, and testing subsets using an 80-10-10 distribution. A total of 1,200,000 packets were processed for CIC-DDoS2019, comprising 960,000 packets for training, 120,000 packets for validation, and 120,000 packets for testing. For the CIC-IDS2018 dataset, 320,000 packets were designated for training, 40,000 for validation, and 40,000 for testing, from a total of 400,000 packets. CIC-DoS2017, comprising 500,000 packets, was partitioned into 400,000 packets for training, 50,000 packets for validation, and 50,000 packets for testing. These divisions guarantee that the model is trained on a sufficiently extensive dataset while also supplying appropriate validation and testing data for performance assessment. The performance metrics are detailed as follows.
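The 80-10-10 partition described above can be reproduced with a short sketch. The function names and the shuffled, seeded index split are assumptions for illustration; the text does not state whether the split was shuffled or stratified. Only the dataset totals are taken from the paragraph above.

```python
import random
from typing import Dict, List, Tuple


def split_sizes(total: int, train_pct: int = 80, val_pct: int = 10) -> Tuple[int, int, int]:
    """Return (train, validation, test) sizes using integer arithmetic."""
    train = total * train_pct // 100
    val = total * val_pct // 100
    return train, val, total - train - val  # the remainder goes to the test set


def split_indices(n: int, seed: int = 0) -> Tuple[List[int], List[int], List[int]]:
    """Shuffle sample indices and cut them into 80-10-10 subsets (assumed scheme)."""
    indices = list(range(n))
    random.Random(seed).shuffle(indices)
    train, val, _ = split_sizes(n)
    return indices[:train], indices[train:train + val], indices[train + val:]


# Totals as reported in the text.
DATASET_TOTALS: Dict[str, int] = {
    "CIC-DDoS2019": 1_200_000,
    "CIC-IDS2018": 400_000,
    "CIC-DoS2017": 500_000,
}
sizes = {name: split_sizes(total) for name, total in DATASET_TOTALS.items()}

# Small demonstration of the index split on 1,000 hypothetical samples.
train_idx, val_idx, test_idx = split_indices(1000)
```

The computed sizes match the figures reported above for all three datasets. For imbalanced attack data, a stratified split that preserves class proportions in each subset is a common alternative to a purely random one.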

Discussion

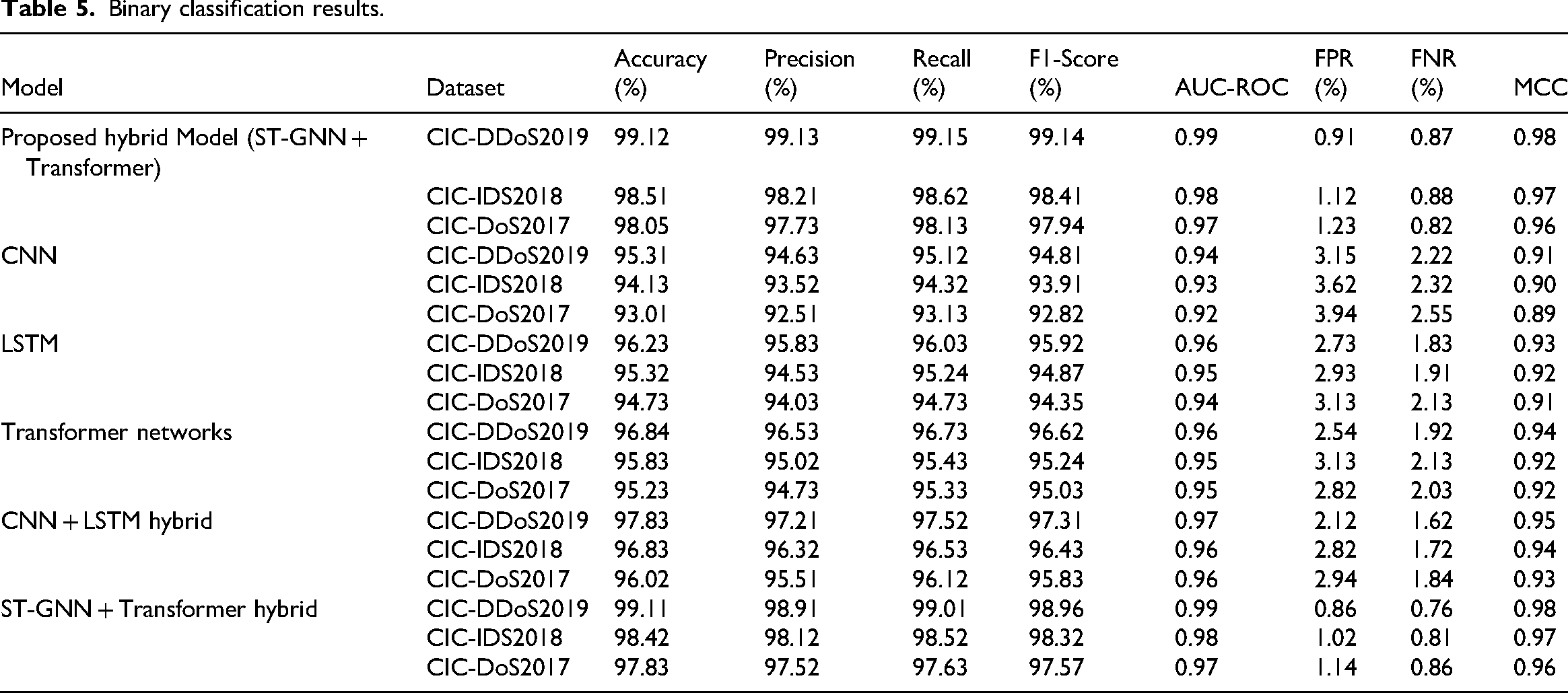

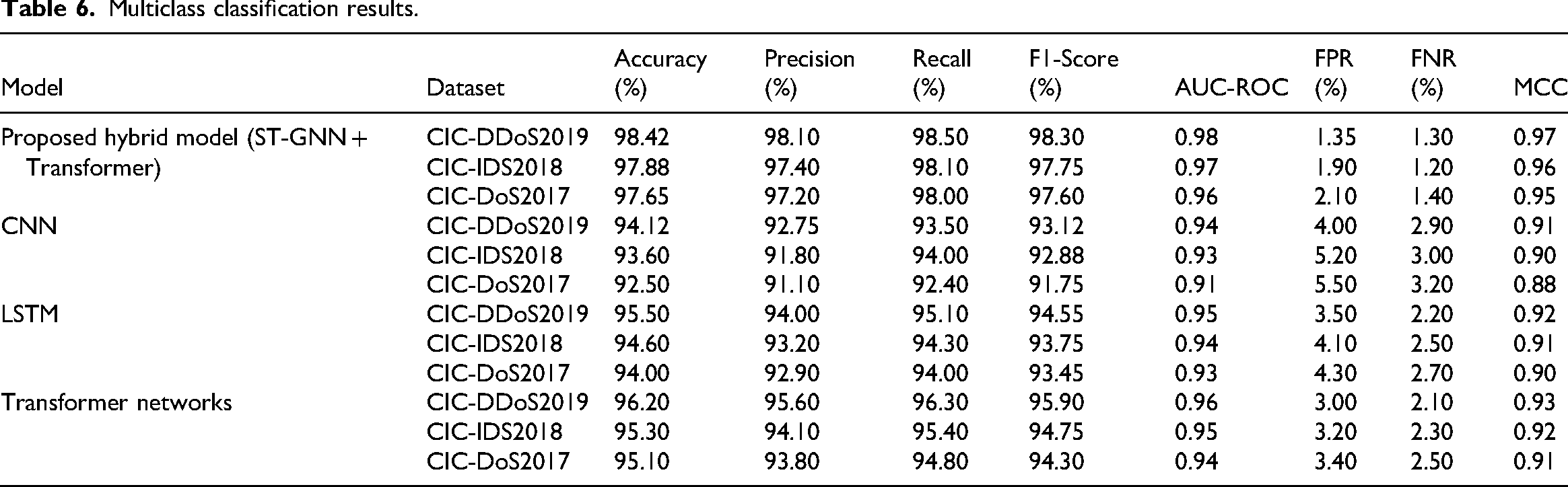

This research presents a new hybrid model that combines Spatio-Temporal Graph Neural Networks (ST-GNN) with Transformer networks to improve network intrusion detection in complex environments. We evaluated the model's effectiveness by utilising three well-regarded datasets – CIC-DDoS2019, CIC-IDS2018, and CIC-DoS2017 – applying a variety of performance metrics such as accuracy, precision, recall, F1-score, AUC-ROC, FPR (False Positive Rate), FNR (False Negative Rate), and MCC (Matthews Correlation Coefficient).

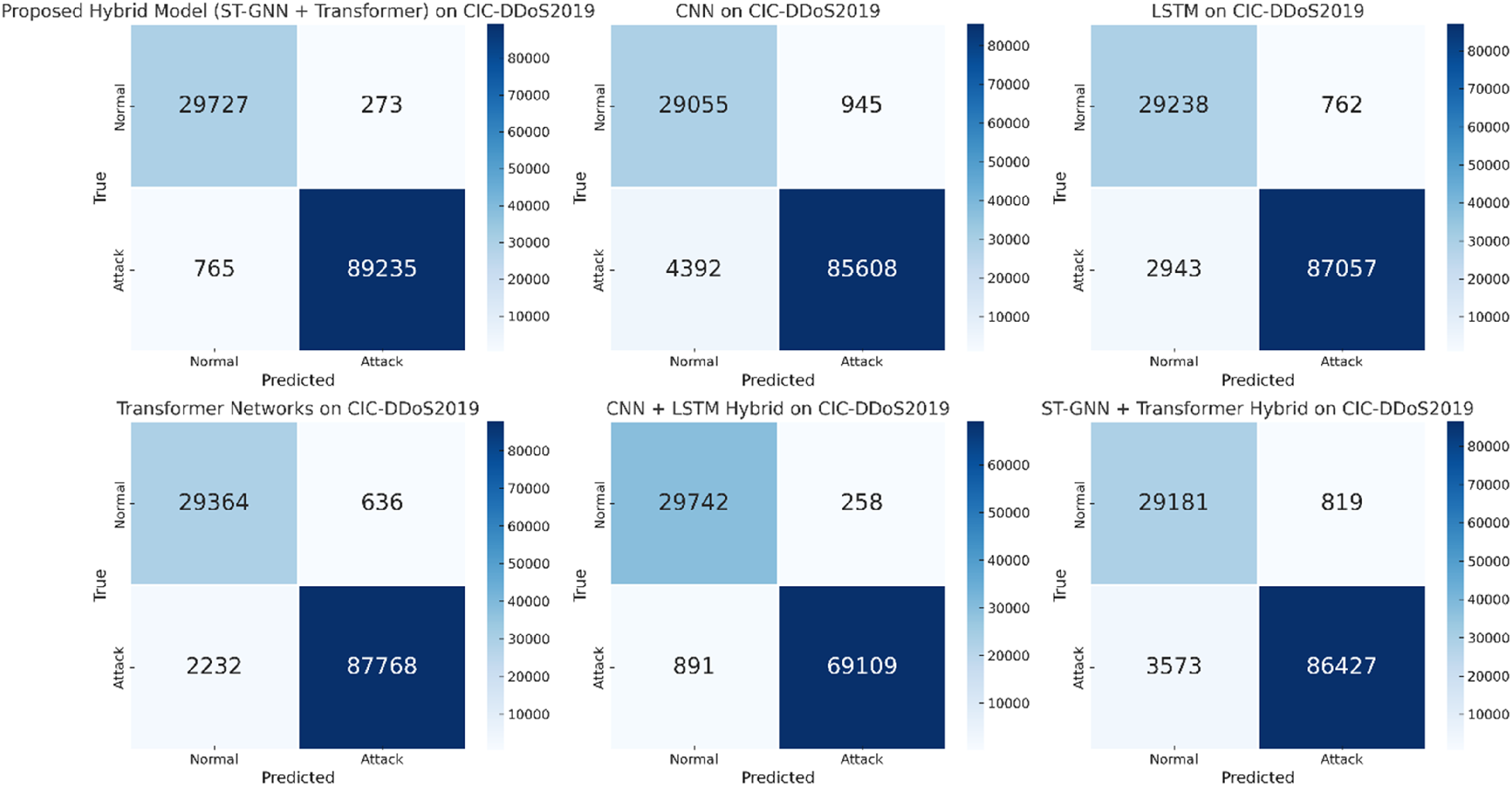

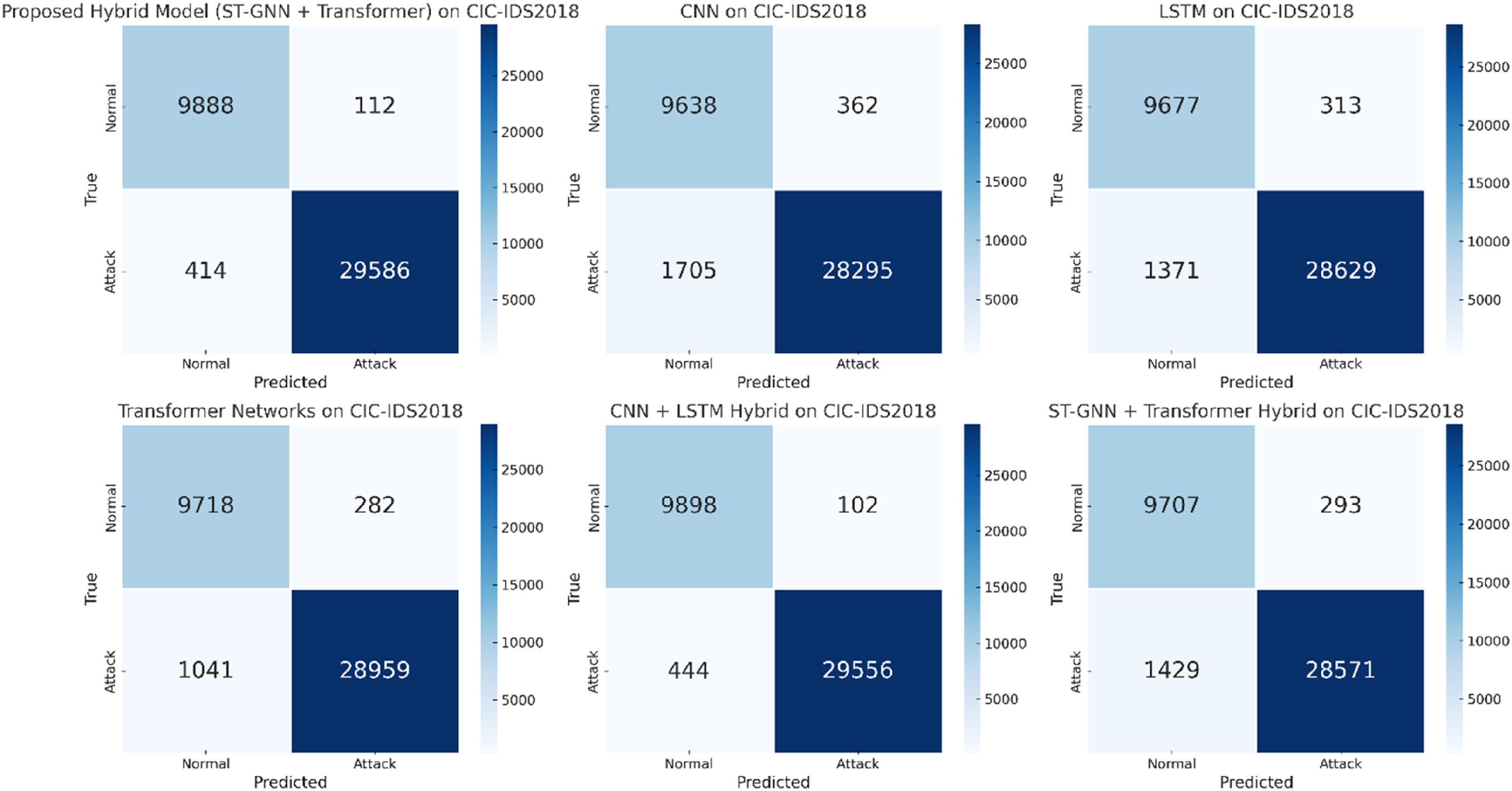

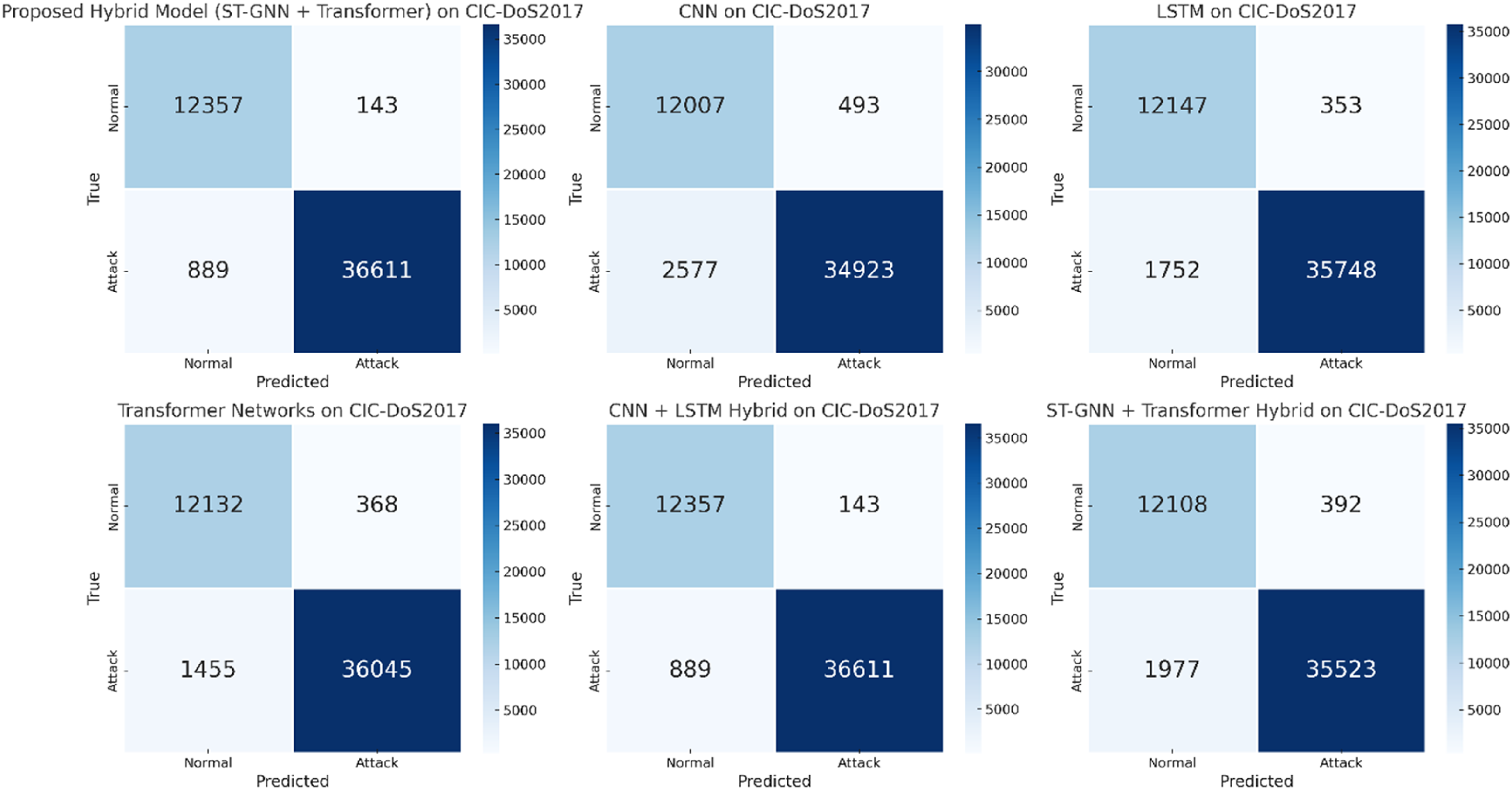

The findings in Tables 5, 6, 7, and 8 indicate that the proposed hybrid model substantially surpasses the baseline models, including CNN, LSTM, Transformer, and the CNN + LSTM hybrid. The efficacy of the proposed model is illustrated in the confusion matrices for binary classification (Figures 2, 3, and 4), which show that the model classifies benign and attack traffic reliably across all datasets. For example, Figure 2, the confusion matrix on CIC-DDoS2019, shows many true positives and true negatives (correctly classified malicious and benign traffic, respectively) and very few false positives or false negatives. This observation is consistent across all three datasets, indicating that the model effectively distinguishes between normal and attack traffic.

Figure 2. Confusion matrix for binary class classification on CIC-DDoS2019.

Figure 3. Confusion matrix for binary class classification on CIC-IDS2018.

Figure 4. Confusion matrix for binary class classification on CIC-DoS2017.

Table 5. Binary classification results.

Table 6. Multiclass classification results.

Table 7. Ablation analysis for proposed hybrid model (ST-GNN + Transformer).

Table 8. P-value test analysis.

Key results and evaluation

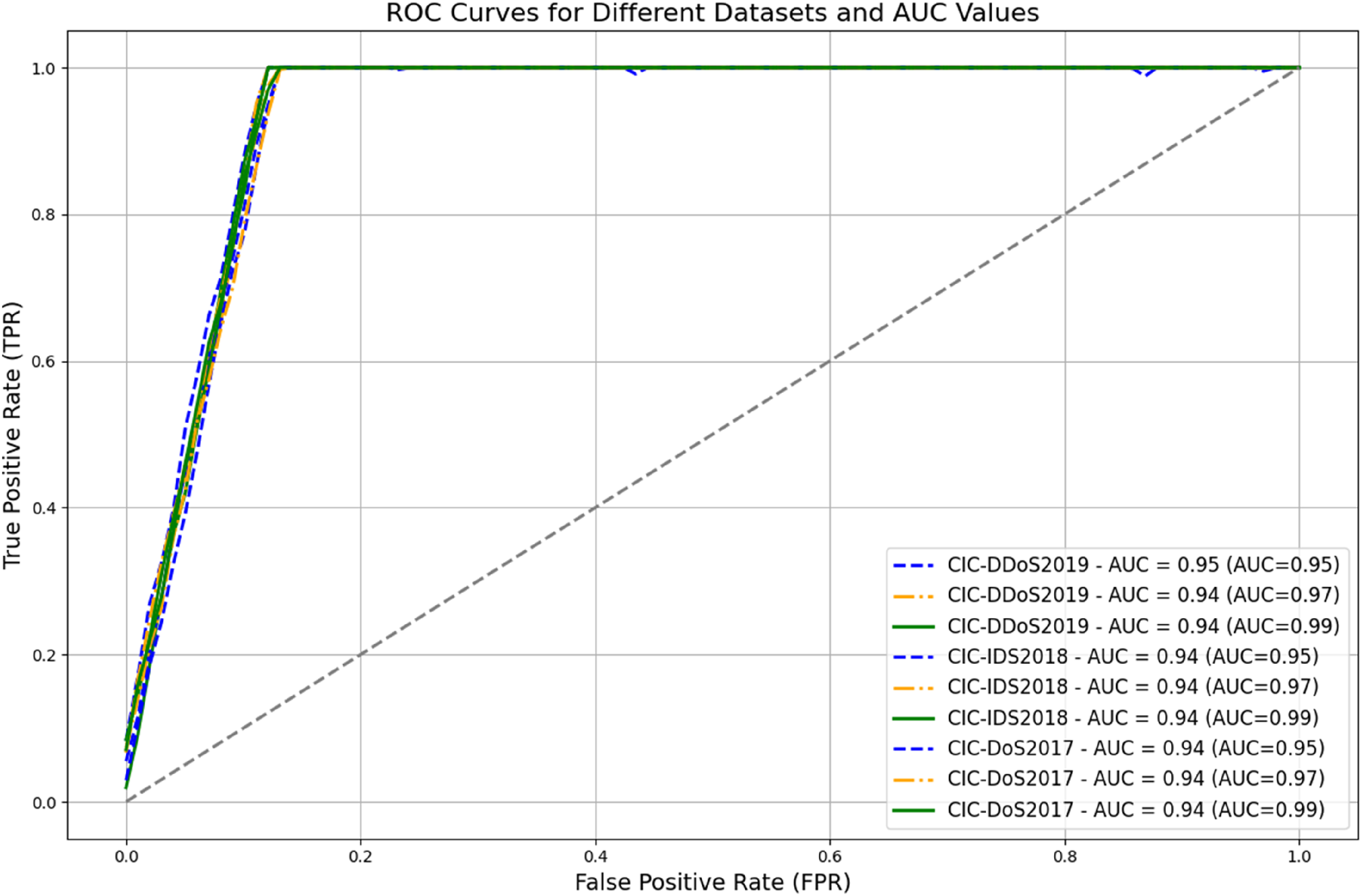

Table 5 and Figure 5 show how the proposed model compares with the other models. On the CIC-DDoS2019 dataset, the hybrid model achieved an accuracy of 99.12%, with a precision of 99.13%, a recall of 99.15%, an F1-score of 99.14%, and an AUC-ROC of 0.99. These results substantially exceed the performance of the CNN (95.31%), LSTM (96.23%), Transformer (96.84%), and CNN + LSTM hybrid (97.83%) models on the same dataset. Comparable trends are evident on CIC-IDS2018 and CIC-DoS2017, with the hybrid model consistently outperforming the baselines in accuracy and the other evaluation metrics. The proposed model attained an AUC-ROC of 0.99 on CIC-DDoS2019, 0.98 on CIC-IDS2018, and 0.97 on CIC-DoS2017, demonstrating its capability to distinguish between benign and attack classes. The AUC-ROC curve for the proposed hybrid model, illustrated in Figure 8, further evidences its superior classification performance relative to the other models.

Figure 5. Comparative analysis of binary class classification on CIC-2019, 2018 and 2017.

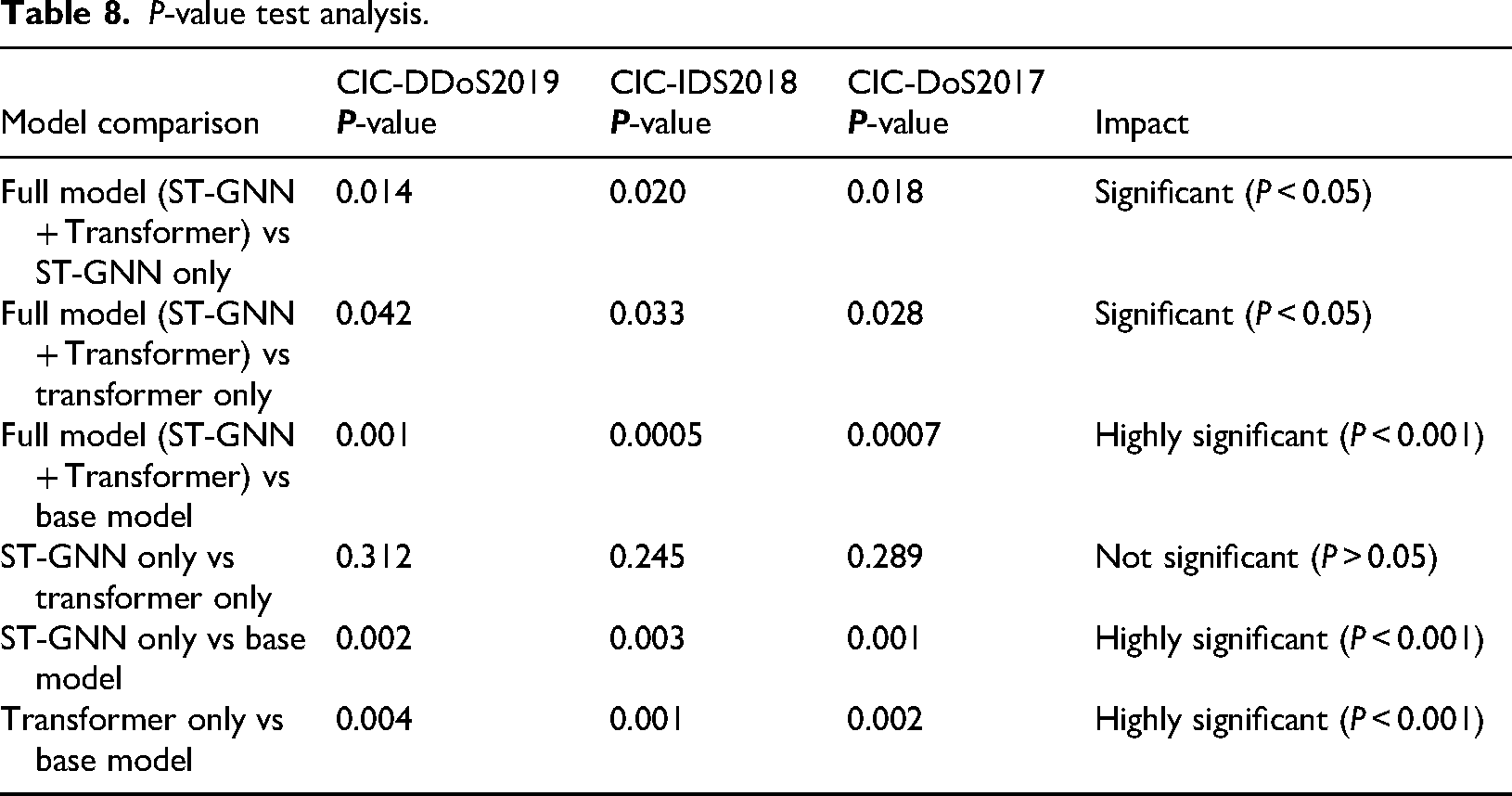

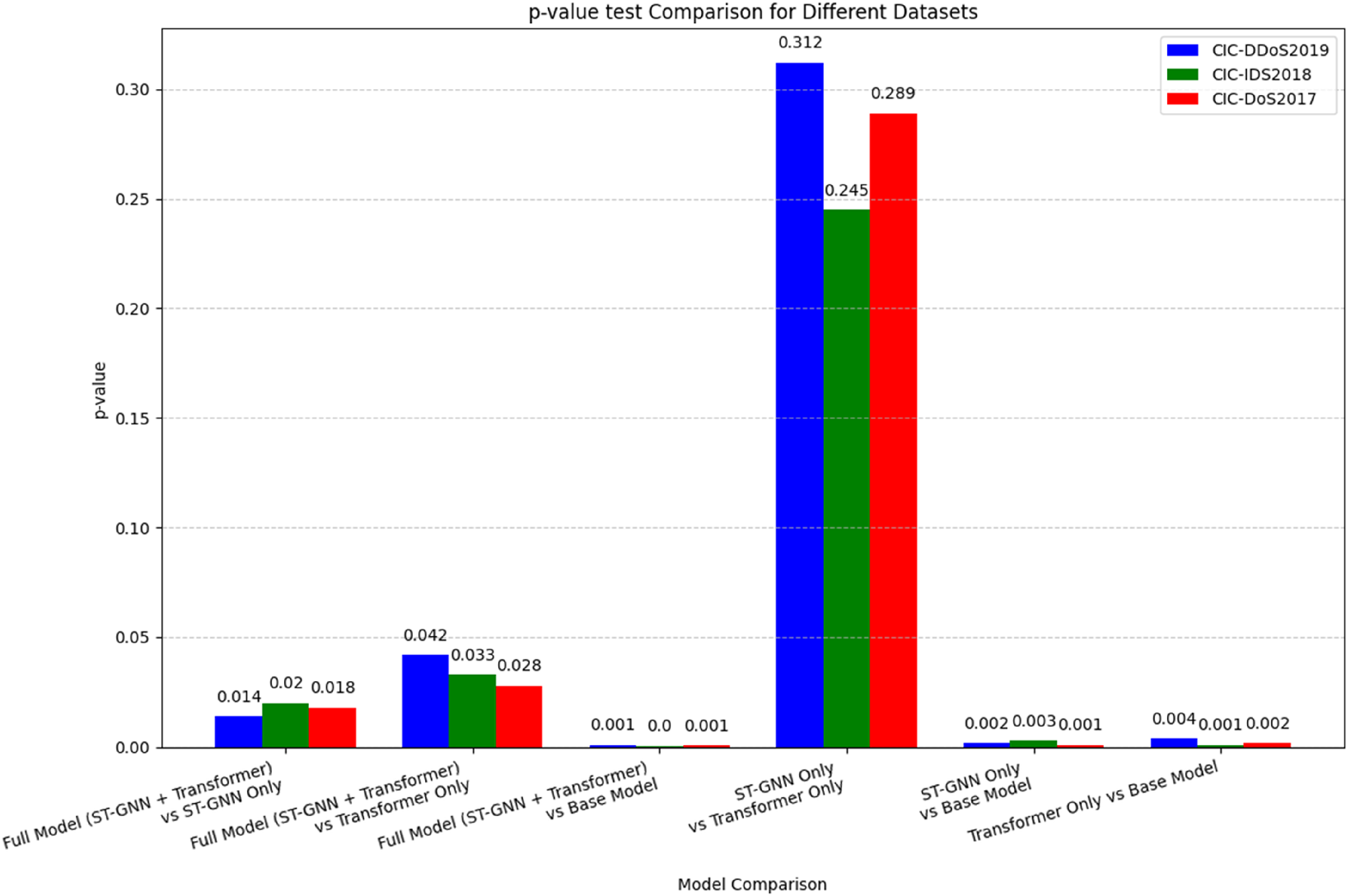

Error rate analysis and statistical significance

The confusion matrices for binary classification (Figures 2, 3, and 4) also show that the proposed model keeps both the false positive rate (FPR) and the false negative rate (FNR) low. On the CIC-DDoS2019 dataset, for instance, the model achieves an FPR of 0.91% and an FNR of 0.87%. These low error rates are essential in practical situations, where reducing misclassification can mean the difference between identifying an attack and permitting a breach. The low false positive rate shows that the model rarely misclassifies benign traffic as an attack, and the low false negative rate indicates that it rarely overlooks true attacks. Achieving this balance is essential to the success of any intrusion detection system. Furthermore, the P-value analysis in Table 8 provides further evidence of the superiority of the proposed model. The observed improvements are statistically significant: the P-values for comparisons between the full hybrid model and the individual ST-GNN or Transformer models are consistently below 0.05.

For instance, the P-value for comparing the full model (ST-GNN + Transformer) and the ST-GNN only model on the CIC-DDoS2019 dataset is 0.014, suggesting that adding the Transformer network significantly boosts performance. This statistical significance underlines the value of combining ST-GNN and Transformer networks, rather than using either in isolation.

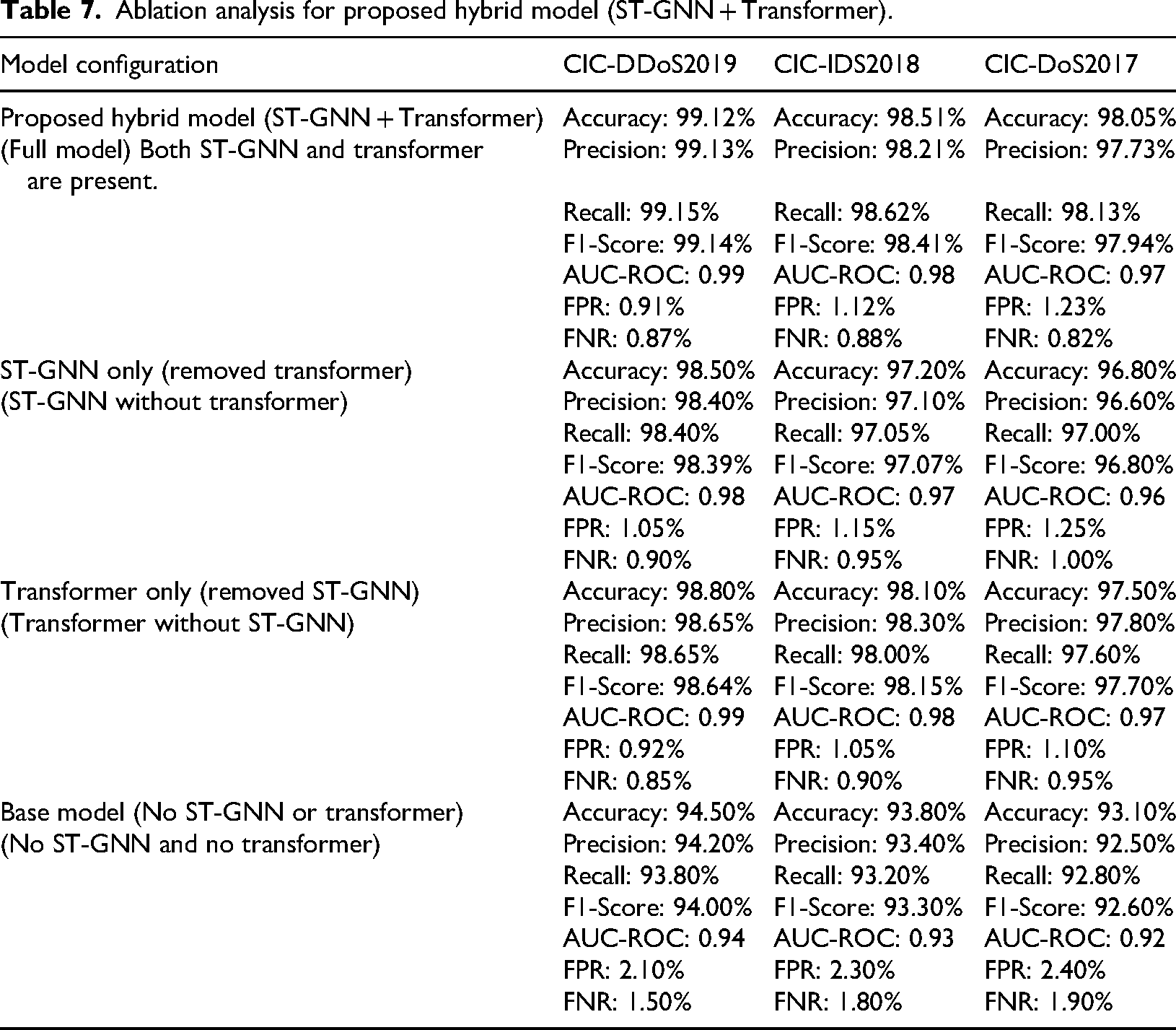

Ablation analysis: understanding the contributions of ST-GNN and transformer

The effectiveness of the combined ST-GNN and Transformer approach is demonstrated by the ablation study in Table 7. We can assess the contribution of each component by comparing the complete hybrid model (ST-GNN + Transformer) with each of its constituent models (ST-GNN and Transformer alone). In the CIC-DDoS2019 dataset, the full model attained an accuracy of 99.12%, while the ST-GNN-only and Transformer-only configurations recorded 98.50% and 98.80%, respectively. The notable performance disparity indicates that both the ST-GNN and Transformer networks offer distinct and complementary advantages, which, when integrated, yield enhanced classification outcomes. ST-GNN is proficient in capturing spatial dependencies in data, such as the relationships among various nodes in network traffic. In contrast, Transformer networks are adept at modelling long-range temporal dependencies, exemplified by sequential relationships in time-series data. The hybrid model's capacity to utilise spatial and temporal features enables it to deliver more precise and resilient intrusion detection, even amidst intricate and varied attack patterns.

Performance in multi-class classification

Table 6 and Figure 6 demonstrate how well the suggested model performed in multi-class classification in addition to binary classification. The proposed hybrid model consistently excels beyond other models in every dataset. For instance, on CIC-DDoS2019, the hybrid model reached an impressive accuracy of 98.42%, significantly surpassing the CNN at 94.12%, LSTM at 95.50%, and Transformer at 96.20%. The proposed model's capability to excel in multi-class situations showcases its strength and adaptability in practical network intrusion detection environments.

P-test comparison analysis for different datasets of the proposed hybrid model: Figure 9 presents a graph of the P-test comparison analysis across the different datasets for the proposed hybrid model. The P-value test results in Table 8 show the significance of the various model comparisons. For instance, comparisons of the full model (ST-GNN + Transformer) with the ST-GNN only, Transformer only, and base models yield significant P-values (P < 0.05) or highly significant P-values (P < 0.001) across all datasets (CIC-DDoS2019, CIC-IDS2018, CIC-DoS2017). However, the comparison between ST-GNN only and Transformer only shows no significant difference (P > 0.05). These results indicate that the proposed hybrid model significantly outperforms its individual components in various scenarios.

Figure 6. Comparative analysis of multi-class classification on CIC-2019, 2018 and 2017.

Justification for the superiority of the proposed model

The superior performance of the proposed model can be attributed to the complementary nature of ST-GNN and Transformer networks. ST-GNN is adept at capturing spatial dependencies in graph-structured data, such as the relationships between different network flows, which is critical for intrusion detection in complex network environments. Transformer networks, on the other hand, are effective at capturing temporal dependencies, which are crucial for analysing the sequential nature of network traffic over time. The proposed hybrid approach merges these two models, using both the spatial and temporal dimensions of network traffic to enhance the accuracy and reliability of intrusion detection. Moreover, the hybrid model's ability to lower both FPR and FNR, as seen in the confusion matrices, makes it an attractive option for real-time intrusion detection, where the consequences of false positives and false negatives can be serious.

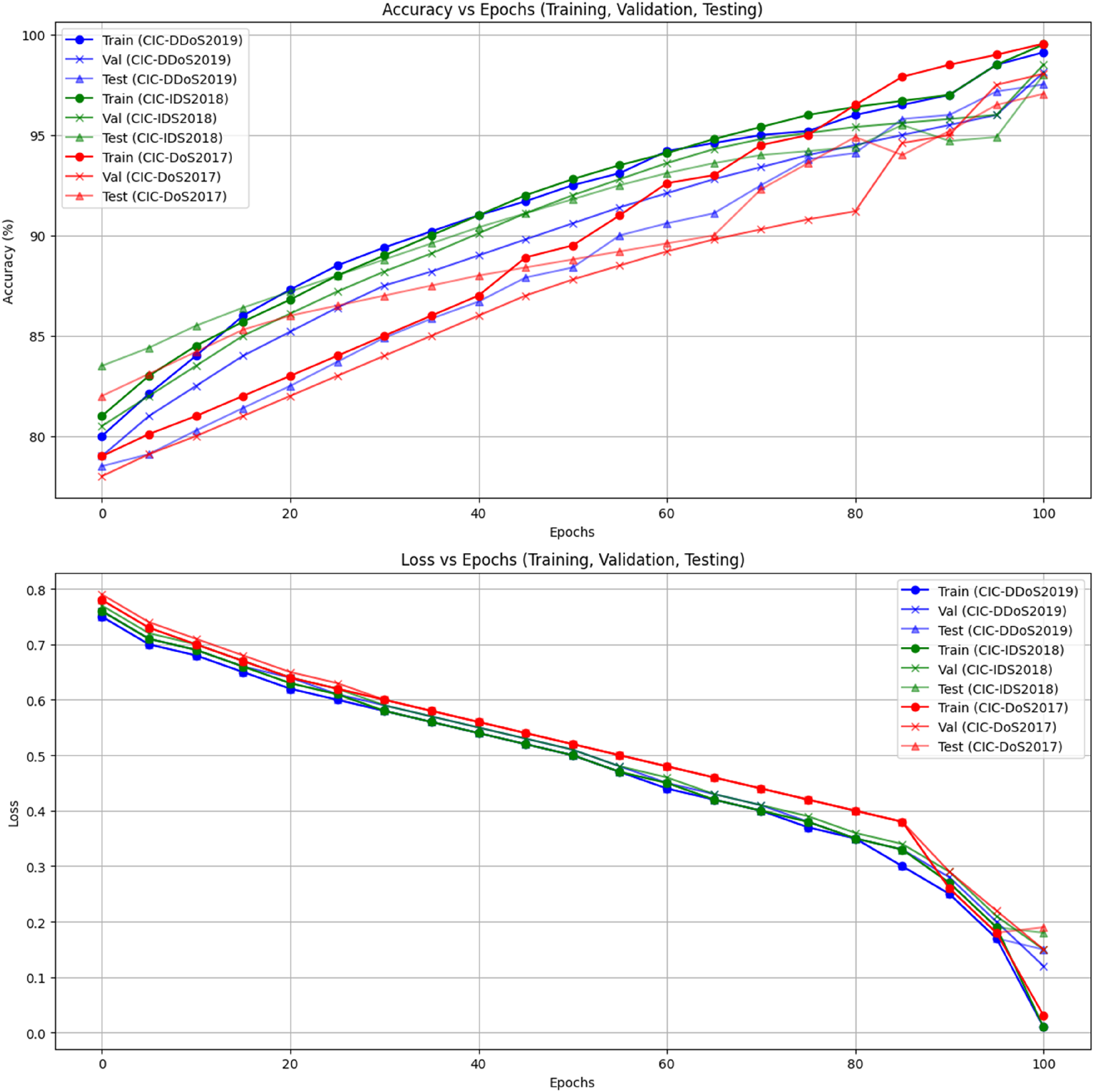

Figure 7 shows a comparison of training, test, and validation accuracy against loss for the proposed hybrid model using the CIC 2019, 2018, and 2017 datasets. The plot emphasises how well the model performs consistently and adapts effectively to various datasets, demonstrating steady improvements in accuracy while keeping losses low throughout training and evaluation.

Figure 7. Comparative analysis of training, test and validation accuracy vs. loss for proposed hybrid model on CIC 2019, 2018 and 2017 datasets.

Figure 8's AUC-ROC curve makes it evident how well the model separates attack traffic from benign traffic. The hybrid model's ability to distinguish between different kinds of network traffic is further evidenced by the consistently high AUC-ROC values across all datasets. On the CIC-DDoS2019, CIC-IDS2018, and CIC-DoS2017 datasets, the suggested hybrid model, which combines ST-GNN and Transformer networks, has demonstrated exceptional results in a number of performance metrics, including accuracy, precision, recall, F1-score, AUC-ROC, FPR, FNR, and MCC. The advantages of the hybrid approach over other models, including CNN, LSTM, Transformer, and CNN + LSTM hybrids, are evident from the confusion matrices, AUC-ROC curves, and ablation studies. The hybrid model effectively reduces error rates and achieves impressive classification accuracy, making it a strong choice for real-time network intrusion detection. The proposed model surpasses current methods by integrating spatial and temporal learning, offering a robust solution for improving cybersecurity in contemporary network infrastructures.

Figure 8. AUC-ROC curve for proposed hybrid model on CIC 2019, 2018 and 2017 datasets.

Figure 9. Graph for P-test comparison analysis for different datasets for the proposed hybrid model.

Limitations of the proposed model

Although the model performs well on small-scale datasets, its scalability to larger smart grids with a higher number of nodes remains a challenge. The model's computational complexity increases with larger datasets, and efficient deployment on distributed systems or edge devices requires further optimisation. Additionally, the federated learning system enhances scalability but introduces challenges related to data heterogeneity and synchronisation. Although the presented hybrid deep learning model shows excellent performance in cyber-attack detection for smart grids, some limitations still need to be addressed:

Dataset Dependency: The method was evaluated on benchmark datasets (CIC-DDoS2019, CIC-IDS2018, and CIC-DoS2017). While these datasets are well known, they may not fully represent the randomness and dynamism of traffic in realistic smart grid settings, which is likely to affect model generalisation.

Computational Complexity: The incorporation of ST-GNNs, Transformers, and AAFF raises the model's complexity. Real-time deployment at scale on resource-limited smart grid edge devices may need further optimisation (e.g. model pruning or lightweight architectures).

Federated Learning Challenges: Although federated learning improves privacy, security, and scalability, it also incurs communication overhead, latency, and synchronisation challenges in large-scale decentralised systems.

Zero-Day Attack Detection: CSSL and CGAN-aided augmentation can make the model more resistant to unseen attacks, but the model may still struggle to detect zero-day attacks, especially when encountering adversarial tactics that have no similar examples in the training data.

Explainability: Like many DL models, the hybrid approach acts as a ‘black box’ that obscures the reasoning behind its decisions. This could be a barrier to adoption in critical infrastructure systems, where explainability is essential for trust and compliance.

Overcoming these limitations in future work will further enhance the generalisability, robustness, and security of the proposed smart grid security system.

Conclusion and future works

Conclusion

Energy distribution and consumption have significantly improved due to the development of smart grids, which offer increased flexibility and efficiency. However, significant security issues accompany these developments. As smart grids become more integrated and linked to communication networks, they become more vulnerable to cyberattacks. Protecting these infrastructures from potential threats is essential for their success and dependability. This research presents a hybrid model integrating ST-GNN with Transformer networks to identify and categorise malicious activities within Smart Grid systems. The aim was to develop a model that can effectively differentiate between benign and malicious network traffic while also coping with the intricate and ever-changing landscape of contemporary cyber threats.

The proposed model's strength comes from its ability to capture spatial and temporal relationships within network traffic. The ST-GNN component models how different nodes in the network relate to each other, while the Transformer component helps the model capture long-term dependencies and patterns. Combining these two techniques allows the model to learn intricate patterns from the traffic data that simpler models may find challenging. The research findings show how well this approach works. The proposed model demonstrated high accuracy and consistently surpassed conventional models such as CNN and LSTM. The hybrid model performed strongly across all three datasets, achieving an accuracy of up to 99.12% on one dataset. Moreover, it consistently showed strong precision, recall, and F1 scores, as well as excellent AUC-ROC values, indicating that it can effectively differentiate between normal and attack traffic.

A key part of this research was the Ablation Analysis, which revealed that the model's performance would drop considerably if either the ST-GNN or the Transformer component was taken out. This highlights the significance of the hybrid approach and confirms that both elements play a role in the model's success. The results show a clear and meaningful difference, emphasising that the proposed model significantly outperforms other existing models in terms of performance. What really sets this hybrid model apart, beyond the impressive numerical results, is its capacity to adjust to the changing landscape of cyber threats. Along with its high accuracy, it is also scalable and efficient, which is crucial for real-time intrusion detection in Smart Grids. Minimising both false positives and false negatives allows the system to effectively identify attacks while reducing unnecessary alerts, enhancing its practicality and reliability. In a nutshell, the proposed hybrid model provides an effective answer to the security issues confronting Smart Grids. By merging the advantages of ST-GNN and Transformer networks, the model not only reaches impressive detection accuracy but also shows a remarkable capacity to manage the intricate and dynamic landscape of cyber-attacks. This research lays the groundwork for stronger, more flexible, and adaptable intrusion detection systems for Smart Grids and beyond.

Future works

The proposed hybrid model demonstrates significant promise for enhancing the security of Smart Grids, but future work could improve its effectiveness and relevance in several respects. One important area is the capacity to adapt in real time. In practice, Smart Grids face evolving and dynamic network conditions. Although successful in controlled settings, our model needs to adjust to the changing dynamics of real-time data and varying attack patterns. Future efforts might aim at enhancing the model's capacity to adapt continuously to new data, eliminating the need for complete retraining. This would mean using online or incremental learning methods, allowing the system to adapt quickly to new threats and network conditions so that it stays effective in real-time settings.

Another area for future exploration is the wider use of the proposed model. This research centred on Smart Grids, yet the foundational hybrid model of ST-GNN and Transformer networks holds wider possibilities. Essential systems like healthcare, transportation, and finance encounter comparable security issues because of their interconnected and delicate characteristics. Adjusting this model for these areas might result in more thorough, cross-industry security solutions. In healthcare systems, where data integrity and communication networks are crucial, this hybrid approach could be utilised to monitor and protect against attacks on medical devices or patient data exchanges.

Moreover, it is becoming increasingly necessary to incorporate self-learning abilities into the proposed models. Cyberattacks are always changing, and although our model has demonstrated solid performance against existing threats, new methods of attack will keep appearing. Allowing the model to keep learning from fresh data without needing much outside help or retraining is essential for lasting success. The model can adapt to new attack techniques by utilising unsupervised or semi-supervised learning methods, ensuring it remains ready for upcoming threats.

Another crucial area for future efforts is improving the interpretability of the model. Complex machine learning models, such as the proposed hybrid model, are frequently perceived as ‘black boxes’ by those who use them. In security applications, especially concerning critical infrastructure, stakeholders must understand why a model classifies specific behaviours as malicious. Explainable AI techniques, such as analysing feature importance or inspecting attention weights, could improve understanding of the model's decision-making process. This would help security analysts have confidence in the model's predictions and allow them to respond more effectively to threats by offering insights into the reasoning behind detected anomalies.

Moreover, scalability is important when implementing the suggested model in extensive networks, such as those in actual Smart Grids. Our findings indicate solid performance on the datasets utilised in this study; however, additional efforts are required to confirm that the model can effectively manage the substantial amounts of data produced in real-world environments. Enhancing the model's efficiency, perhaps through model pruning methods or investigating lighter versions of the hybrid model, could render it more appropriate for environments with limited resources while maintaining performance standards.

Examining how the hybrid model can fit into current security frameworks and workflows would also be beneficial. Deploying the model in real-world Smart Grids will require careful integration with various systems and security measures. Future efforts might focus on working with industry partners to create effective deployment strategies, which could integrate real-time monitoring tools, automated response systems, and security information and event management (SIEM) platforms.

In conclusion, although the proposed hybrid model represents a notable improvement in Smart Grid security, there are many opportunities for further enhancement and adaptation. By concentrating on real-time adaptability, broader applicability, self-learning, interpretability, scalability, and practical deployment, future studies could help this model stay effective against changing threats and prepare it for real-world use across various essential infrastructure systems.

Footnotes

Acknowledgements

The authors extend their appreciation to Taif University, Saudi Arabia, for supporting this work through project number (TU-DSPP-2024-229).

Consent for publication

All authors have reviewed and approved the final manuscript.

Author contributions

Umesh Kumar Lilhore conceptualised the research, conducted experiments, and wrote the manuscript. Sarita Simaiya contributed to the design of the methodology and the analysis of results. Rasmi A assisted with the overall research framework and data collection. Deepa Devassy contributed to data preprocessing and model evaluation. Roobaea Alroobaea provided valuable insights into the research and assisted in manuscript revisions. Abdullah M. Baqasah participated in the statistical analysis and reviewed the manuscript. Majed Alsafyani helped with the implementation of the model and provided technical assistance. Afnan Alhazmi supported the literature review and provided feedback during the manuscript preparation.

Funding

This research was funded by Taif University, Saudi Arabia, Project No. (TU-DSPP-2024-229).

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Dataset availability statement

The dataset is available from the corresponding author upon individual request.