Abstract

The ability to deduce implicit information about relations in a text (i.e., inferencing) is essential to understanding that text. Hence, there is increasing attention to supporting inferencing skills among children in early literacy programs, including shared book reading interventions. This study investigated whether embedding scripted inferencing questions in a story that children (4.3–6.6 years) and parents (N = 32 parent–child dyads) read together increases the number of inferences during shared reading and supports children’s story comprehension. Results showed that during shared book reading parents and children made more inferences when the book contained scripted inferencing questions. However, there were no associated benefits regarding story comprehension: reading with scripted inferencing questions resulted in story comprehension comparable to that after reading without such questions. In addition, after reading with scripted inferencing questions, more inferences were made during shared reading of a second book without scripted inferencing questions.

Introduction

Over the past decades, research has demonstrated that understanding a text requires forming a coherent mental representation of that text (de Koning & van der Schoot, 2013; Kintsch, 1998). Relying on the literal textual information, however, is usually not sufficient to construct an accurate mental model: information relevant for mental model construction is often implicit, which makes successful understanding strongly dependent on a reader’s ability to make inferences based on their prior knowledge or text clues (Cain & Oakhill, 1999; Perfetti et al., 2005). Inference making is a cognitive activity where readers attempt to fill in details that are not mentioned in the text, connect ideas, and establish coherence in their mental model (Cain & Oakhill, 1999; van den Broek, Bohn-Gettler, et al., 2011). For example, to understand Eric Carle’s (1969) classic picture book The very hungry caterpillar, which tells the story of a caterpillar looking for food, children need inferencing skills to make sense of the following excerpt: On Monday he ate through one apple. But he was still hungry. On Tuesday he ate through two pears, but he was still hungry. On Wednesday he ate through three plums, but he was still hungry. On Thursday he ate through four strawberries, but he was still hungry. On Friday he ate through five oranges, but he was still hungry. On Saturday he ate through one piece of chocolate cake, one ice-cream cone, one pickle, one slice of Swiss cheese, one slice of salami, one lollipop, one piece of cherry pie, one sausage, one cupcake, and one slice of watermelon. That night he had a stomach ache! (Carle, 1969, pp. 5–15)

The causal relation between stomach ache and eating too much is something children need to infer: it is not given explicitly in the text. Research has shown that children are already able to engage in textual inferencing from an early age (Kendeou et al., 2008; Tompkins et al., 2013; van den Broek, Kendeou, et al., 2011; van den Broek et al., 2005). In a recent review of studies on preschoolers’ textual inferencing, Filiatrault-Veilleux et al. (2015) suggest a developmental order. The youngest children (three-year-olds) are able to make inferences about characters’ emotional states (‘She is sad’), but the ability to draw causal inferences such as problem resolutions (‘If he opens the window, the bird can escape’) or predictions (‘I don’t think the witch will find the little boy’) is only acquired at age five or six. The latter types of inferences are particularly important for comprehension. Research by Kendeou et al. (2008), for instance, has shown that preschoolers’ references to causal relations in story retelling significantly predicted their story comprehension. Some researchers have also shown positive relations between causal inferencing in young children and later reading comprehension (Ferreiro & Taberoski, 1982; Kendeou et al., 2009; Kontos & Wells, 1986).

Given their importance for improving text comprehension, it has been suggested that early literacy interventions should, in addition to supporting lower-level reading skills like word recognition and letter knowledge, also target inferencing skills (Lever & Sénéchal, 2011; van Kleeck, 2008). This aligns with the simple view of reading, which proposes that understanding the meaning of a text requires both word recognition skills and language comprehension skills (Gough & Tunmer, 1986). A natural way of scaffolding young children’s inferencing skills is through adult–child interactions during shared reading. Various studies have examined so-called ‘extra-textual discussions’ during parent–child shared picture book reading (Beals et al., 1994; Hammett et al., 2003; Leseman & de Jong, 1998). Usually, such studies examine the extent to which parents and children engage in ‘decontextualized talk’, that is, parent and child utterances that extend beyond the directly readable or visible context of the book. The use of decontextualized talk often involves making inferences, such as making predictions or providing explanations about story events (e.g., ‘What do you think Frog will do next?’ or ‘I think rabbit is unhappy, because he lost his carrot’). The frequency of such utterances has been shown to predict children’s vocabulary knowledge and narrative skills (Reese, 1995; Rowe, 2012, 2013; Sparks & Reese, 2012), even up to mid-adolescence, as has been shown recently by Uccelli et al. (2019).

Although in many shared reading interventions, particularly those following a Dialogic Reading approach (Arnold & Whitehurst, 1994; Whitehurst et al., 1994), inferencing is part of the types of parent–child interactions that are encouraged, only a few interventions have been developed that explicitly focus on promoting young children’s inferencing. Van Kleeck et al. (2006) examined the effects of ‘scripted inferencing’ in storybooks. In their intervention, preschoolers were read to by trained research assistants who made use of picture books containing question scripts. These elaborate scripts contained either literal or inferential questions to help children understand the story, prompts to aid children in responding to these questions, and (correct) answers to the questions. A control condition that did not engage in a reading activity served as a comparison group. The researchers found positive intervention effects on children’s ability to respond to decontextualized talk (including inferencing), as measured with the Preschool Language Assessment Instrument (PLAI; Blank et al., 1978). Building on van Kleeck et al.’s approach, Desmarais et al. (2013) exposed four- to six-year-olds with specific language impairment to comparable literal and inferential questions. In a 10-week intervention, speech-language pathologists read to children from five books using scripted questions (16 per book). They compared children’s progress on the PLAI during the intervention to their progress during a baseline and a maintenance phase, but found no pre- to post-intervention differences. Finally, Dawes et al. (2019) examined the effects of an inferencing intervention on the narrative comprehension skills of five- to six-year-old children with developmental language disorder. In four sessions, a researcher used modeling and inferential comprehension questions to help children build a ‘story map’ (i.e., a mental overview of the story structure) of four storybooks. 
The researchers found a significant intervention effect on inferential comprehension during a transfer task and this effect was maintained eight to nine weeks later.

While the intention of these studies is to be commended, they have a number of drawbacks. One limitation is that the scripts in all three studies included both literal questions and inferential questions, making it difficult to isolate the effects of inferencing. Another is the lack of a (suitable) control condition. Whereas Desmarais et al. (2013) failed to include a control group, the control children in the van Kleeck et al. (2006) study did not receive any treatment and those in the Dawes et al. (2019) study received no storybook reading but another type of intervention (phonological awareness). Therefore, it is unclear whether the positive intervention effects were a consequence of the use of extra-textual talk or whether they were the result of shared reading per se. A more precise test of the effects of scripted inferencing during shared reading would thus require an experiment in which scripted questions focus exclusively on supporting children to make inferences (i.e., scripted inferencing questions) and a comparison is made with a control group in which children are involved in shared reading without scripted inferencing. An additional drawback of previous studies is that effects were only tested by assessing children’s general ability to respond to decontextualized talk (i.e., by their PLAI scores) or by their scores on a test of narrative comprehension. The studies did not analyze whether children actually engaged in more inferencing during the interaction and whether scripted inferencing contributed to comprehension of the story at hand. A final limitation is that the studies either drew on the effort of trained staff or the intervention was researcher-delivered, whereas the family would be a more natural environment for encouraging inferencing during shared reading.

In the current study, we addressed these limitations and aimed to extend prior research by (1) experimentally testing the impact of scripted inferencing questions on the interactions between parents and children during reading and children’s story comprehension, and (2) making a comparison with a control situation in which the same books are used but no inferencing questions are offered. We were particularly interested in the effects scripted inferencing questions have during and after a shared reading activity. The following research questions were formulated:

Research question 1: Do scripted inferencing questions support parents and children in making more inferences than during natural shared reading interactions (i.e., without scripted questions)? Based on the research discussed above, it was expected that shared book reading with scripted inferencing questions would result in more inferences during parent–child interactions than shared reading without such questions.

Research question 2: Does engaging in shared reading with scripted inferencing questions result in better story comprehension? We expected that, compared to shared book reading without scripted inferencing questions, children being read to with scripted inferencing questions would show higher story comprehension on a comprehension test administered after the reading activity.

Research question 3: Do parents and children learn from using scripted inferencing questions; that is, if scripted inferencing questions result in more inferencing, does this effect transfer to a regular shared reading activity where no scripted inferencing questions are provided? To answer this question, we explored whether parents and children made more inferences during a second shared book reading activity when their first reading activity was supported with scripted inferencing questions, as compared to when their first reading activity was without scripted inferencing questions.

Method

Participants

Participants were parents and (one of) their kindergartners. They were recruited by (1) brochures about the study distributed by four Dutch primary schools, and (2) a call for participation in the study on social media. Parents who expressed interest in participating were given more information about the content and procedural aspects of the study (without revealing its main purpose). Parents received a small financial reward and children received a small present for their participation.

Thirty-two parent–child pairs (31 mothers, one father; 14 girls, 18 boys) participated. The average age of the children was 5.5 years (SD = 0.5; range 4.3–6.6 years). Information obtained from a demographic questionnaire completed by participating parents indicated that the sample represented a range of socioeconomic backgrounds, defined in terms of parent’s reported educational level: 18 parents had received higher professional education, seven parents had a university degree, four parents had completed vocational education, and three parents had only completed secondary education. All parents spoke Dutch with their child at home. Six parents were born in another country than the Netherlands and also spoke a second language with their child. The families were generally interested in reading: 29 parents indicated that they read to their child every day, two parents did this once or twice per week, and one parent did this once or twice per month. Most of the parents (26) indicated that they read at least 15 minutes a day themselves. Only two parents reported that they hardly ever read. All parents gave written informed consent before taking part in the study, which included permission to make audio and/or video recordings during the experiment. The study was conducted in accordance with the code of ethics for the social and behavioral sciences endorsed by all universities in the Netherlands, and the guidelines of Erasmus School of Social and Behavioural Sciences, Erasmus University Rotterdam.

Design

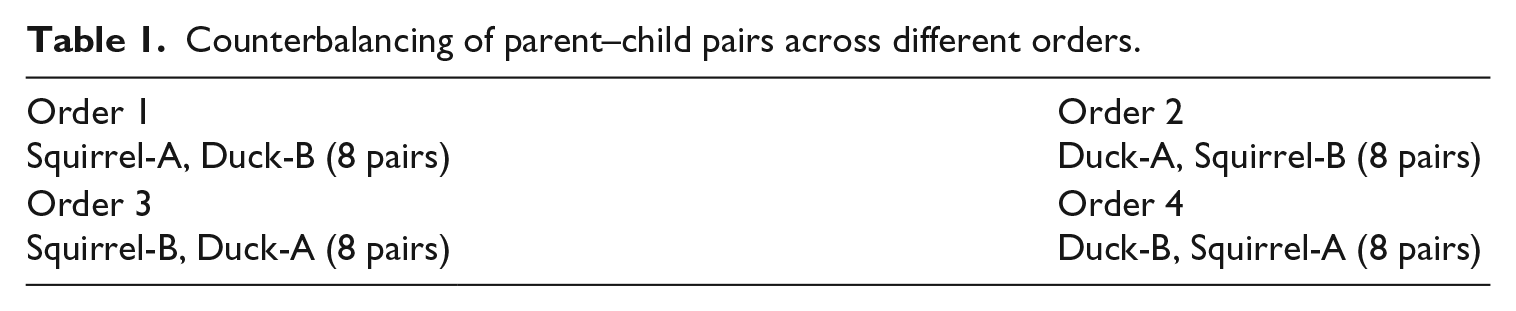

The study had an experimental set-up with provision of parent–child interaction suggestions during shared picture book reading (through scripted inferencing questions vs. no scripted questions) as a within-subjects factor. Each parent–child pair was asked to read two picture books, one with and one without scripted inferencing questions. The order of picture books and versions (both books were available with and without scripted inferencing questions) was counterbalanced across parent–child pairs and each parent–child pair was randomly assigned to one of four orders (see Table 1). This set-up allowed us to investigate (1) the effect of scripted inferencing questions on parent–child interactions, and (2) whether there was a ‘transfer’ effect from reading a book with scripted inferencing questions to reading a book without such questions, while controlling for an effect of story content.

Table 1. Counterbalancing of parent–child pairs across different orders.
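The counterbalancing scheme described above can be illustrated with a minimal sketch. The four orders are reconstructed here from the design description (two books, each read once, one in the A-version and one in the B-version); the labels, the function names `build_orders` and `assign_orders`, and the equal cell sizes of eight dyads per order are assumptions for illustration, not the authors' actual randomization procedure.

```python
import random

# Assumed labels: the two picture books and the two versions
# (A = with scripted inferencing questions, B = without).
BOOKS = ("Squirrel", "Duck")
VERSIONS = ("A", "B")


def build_orders():
    """Enumerate the four orders: first book x version of the first book.

    The second reading always uses the other book in the other version.
    """
    orders = []
    for first_book in BOOKS:
        second_book = BOOKS[1] if first_book == BOOKS[0] else BOOKS[0]
        for first_version in VERSIONS:
            second_version = "B" if first_version == "A" else "A"
            orders.append(
                ((first_book, first_version), (second_book, second_version))
            )
    return orders


def assign_orders(dyad_ids, seed=None):
    """Randomly assign dyads to orders with equal (or near-equal) cell sizes."""
    rng = random.Random(seed)
    shuffled = list(dyad_ids)
    rng.shuffle(shuffled)
    orders = build_orders()
    # Deal the shuffled dyads round-robin across the orders.
    return {dyad: orders[i % len(orders)] for i, dyad in enumerate(shuffled)}


if __name__ == "__main__":
    assignment = assign_orders(range(1, 33), seed=1)  # 32 hypothetical dyad IDs
    for dyad in sorted(assignment):
        print(dyad, assignment[dyad])
```

Shuffling first and then dealing round-robin keeps the assignment random while guaranteeing that every order is used equally often, which is what allows story content to be separated from the effect of the scripted questions.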

Materials

Stories

Two picture books were used, which were specifically written and illustrated for this study by a professional writer/illustrator. Each picture book contained 12 pages, with about four to five lines of text and one corresponding drawing per page. The picture book Eekhoorn en Ekster gaan op zoek naar eikels (in English: ‘Squirrel and Magpie go looking for acorns’) was 388 words long and described how Squirrel invites his friend Magpie to search for acorns. Because Magpie is more interested in shiny things, he overlooks all kinds of special situations (e.g., an elephant making a handstand). At the end of the story, Magpie realizes that due to his inattention he missed a lot of beautiful things in his surroundings. The picture book Het geluid van Eend (in English: ‘The sound of Duck’) was 450 words long and described the story of Duck, who seeks friendship with the animals on a farm by mimicking their sounds. However, the animals laugh at her because she cannot do it properly. Then she meets Frog, who encourages Duck to make her own sound (i.e., quacking). When the other animals hear Duck and Frog ‘singing’ beautifully together (in Dutch, representations of sounds that ducks and frogs make are similar, i.e., kwaak), they come over to listen and Duck no longer feels sad and alone.

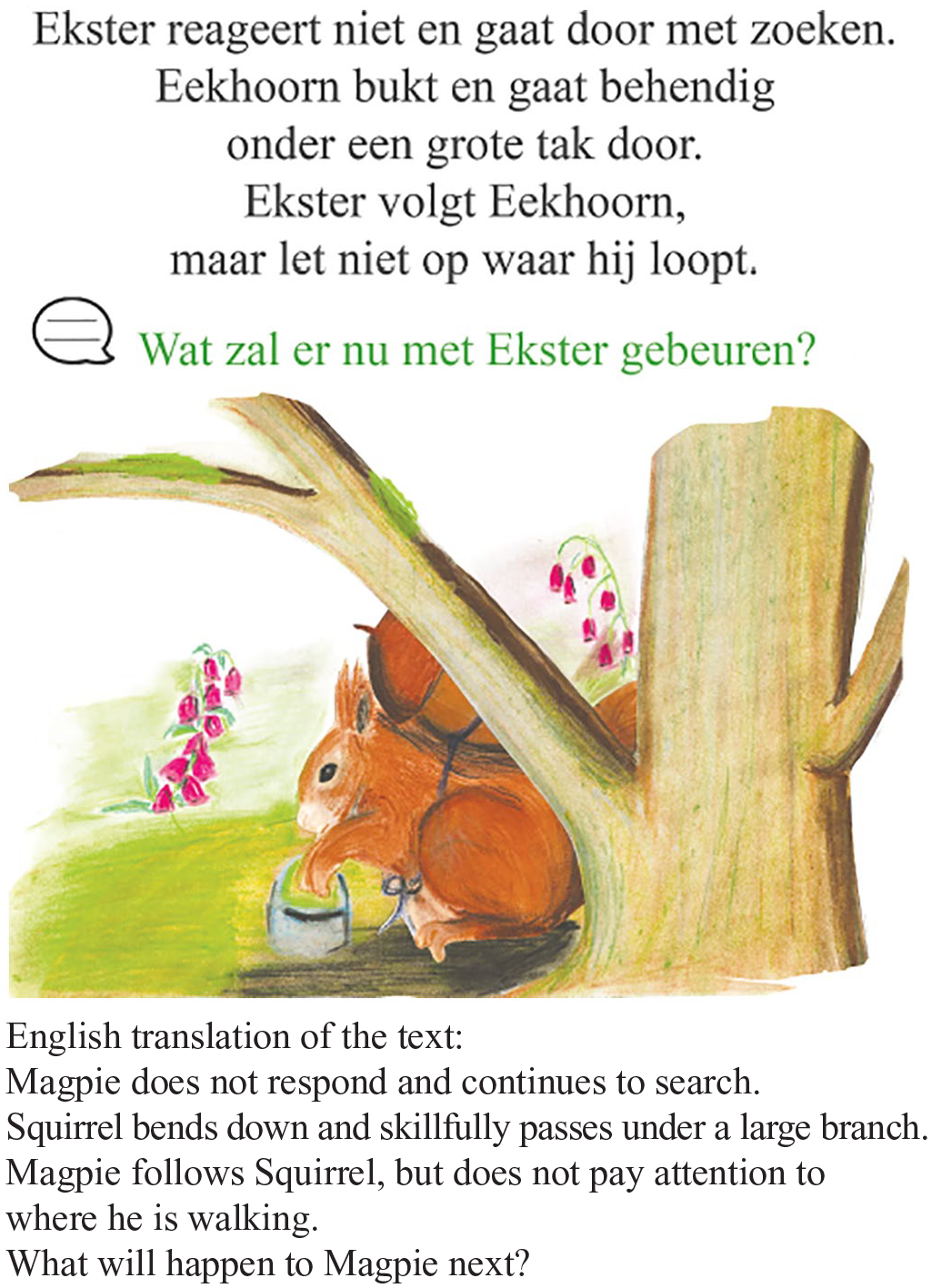

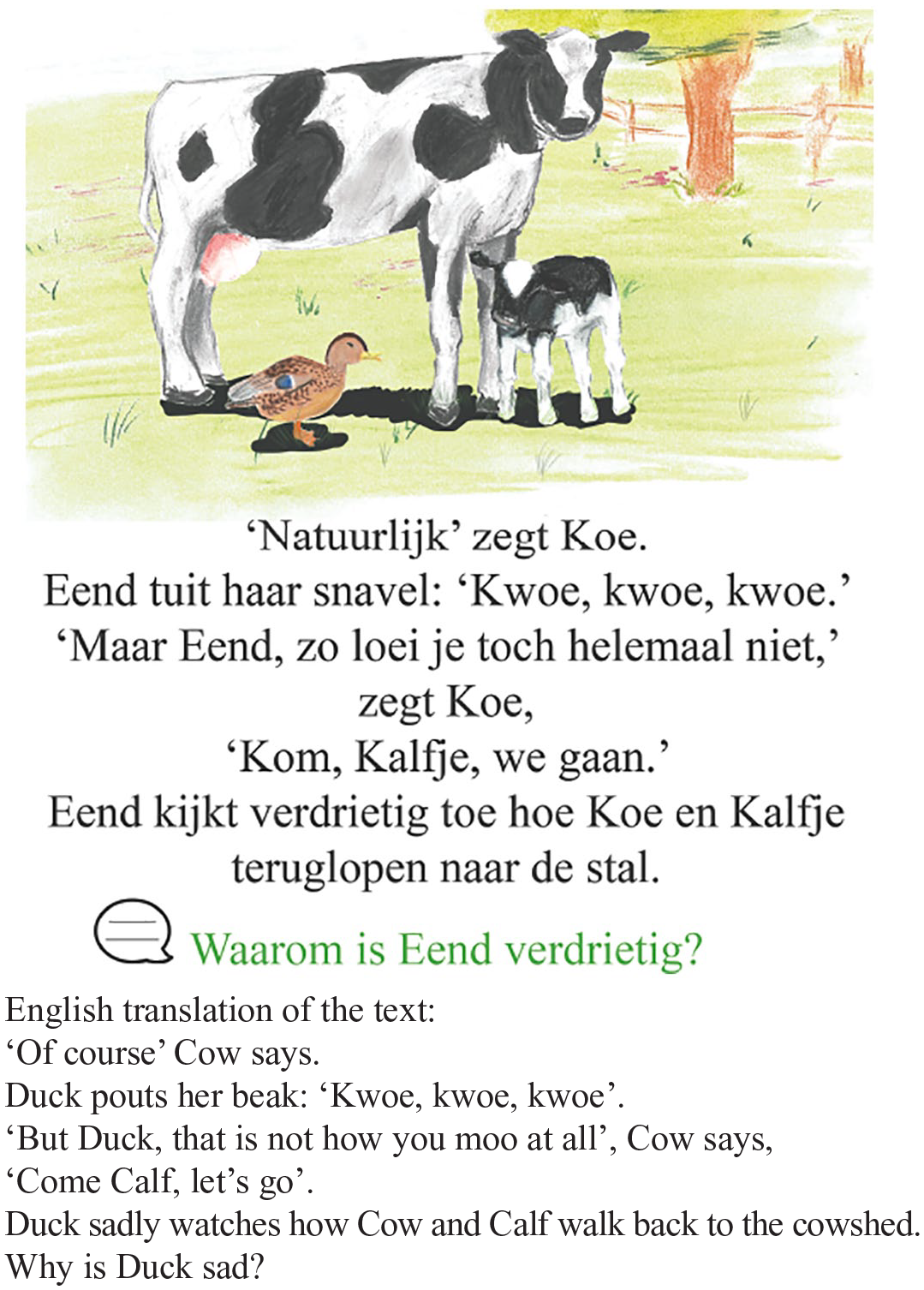

For each picture book, two versions were created: (A) a version with six scripted inferencing questions, and (B) a version without such questions. Except for the scripted inferencing questions, both versions of a picture book were identical. The scripted inferencing questions were always at the bottom of a page, and a page contained no more than one question. Each question was preceded by an icon (a speech bubble) and was presented in green, so that it was clearly distinguishable from the main text. The scripted inferencing questions required either forward inferences (e.g., requiring the child to predict what will probably happen in a subsequent scene), or backward inferences (e.g., asking the child to explain the cause of a story event or an emotion of one of the characters in the just read text). Figures 1 and 2 show an example of a scripted inferencing question from each of the two picture books (a forward and a backward inferencing question, respectively).

Figure 1. Example of a scripted forward inferencing question: ‘What will happen to Magpie next?’

Figure 2. Example of a scripted backward inferencing question: ‘Why is Duck sad?’

Measures

Comprehension

For each picture book, a comprehension test assessed the extent to which the child had an adequate understanding of the content of the picture book. An overall measure of comprehension was used as the scripted inferencing questions were expected to support children in constructing an accurate mental representation of the story elements and their relations, and hence their story comprehension. The comprehension tests were developed based on the Narrative Comprehension of Picture Books task (Paris & Paris, 2003). Each comprehension test consisted of 10 open-ended questions. Half of these questions were about explicit information in the text (i.e., literal questions) and addressed the story characters, setting, initiation event, problem, and outcome resolution. The other half required children to make inferences based on the characters’ feelings, dialogues, causal relations, predictions, and themes (i.e., inference questions). Questions were read to the children by the experimenter. For questions that referred to a picture in the picture book, the experimenter showed the corresponding picture. Children’s answers to the questions were recorded with a speech recorder and later transcribed. From the transcriptions, answers were scored according to rubrics that were developed for the present comprehension test. The rubrics contained all correct answers and their corresponding scores. Similar to the method used in Paris and Paris (2003), a 0-1-2 score was awarded per question. A score of 0 points indicated a wrong answer; 1 point represented a correct answer; 2 points were awarded if the correct answer was accompanied by a suitable explanation of why the child thought this was the correct answer. For each picture book separately, an overall comprehension score was computed by summing the scores on all 10 questions (ranging from 0 to 20 points) about the picture book. 
The total comprehension score was converted to proportion correct for further analyses to correct for missing data (the experimenter accidentally failed to pose one of the questions about the Duck text to one participant and failed to pose one of the questions about the Squirrel text to another participant; all other participants answered all questions). All answers on the comprehension test were independently coded by two experimenters. Inter-coder reliability, as measured by Krippendorff’s α (Hayes & Krippendorff, 2007), was high for both the comprehension test about the picture book named The sound of Duck (α = .95) and the test about the picture book Squirrel and Magpie go looking for acorns (α = .87).
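The scoring procedure can be sketched as follows. The example assumes that the proportion score divides the summed 0-1-2 rubric scores by the maximum attainable over the questions that were actually posed, which is one plausible reading of the correction for missing data. The function name `proportion_score` and the example scores are hypothetical.

```python
def proportion_score(item_scores, max_per_item=2):
    """Convert rubric scores to a proportion of the maximum attainable score.

    item_scores: one entry per question, scored 0, 1, or 2;
                 None marks a question that was not posed.
    """
    answered = [s for s in item_scores if s is not None]
    if not answered:
        raise ValueError("no scored items")
    if any(s not in range(max_per_item + 1) for s in answered):
        raise ValueError("scores must be integers between 0 and max_per_item")
    # Divide by the maximum over posed questions only, so a skipped
    # question does not lower the child's score.
    return sum(answered) / (max_per_item * len(answered))


if __name__ == "__main__":
    # Hypothetical child: ten questions, the sixth was accidentally not posed.
    scores = [2, 1, 0, 2, 1, None, 1, 0, 2, 1]
    print(proportion_score(scores))
```

Under this assumption a child with one unposed question is scored out of 18 rather than 20 points, which keeps scores comparable across children.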

Parent–child interactions

Parent–child interactions during shared picture book reading were video recorded and later transcribed. All utterances (by either parent or child) were coded based on the scheme used by de la Rie et al. (2018). This scheme was translated and adapted from the coding framework applied by van Kleeck et al. (1997), who based their categories on earlier studies (Blank et al., 1978; Sorsby & Martlew, 1991). The coding scheme distinguishes story-related utterances (questions and statements) from other utterances (e.g., interaction-related utterances such as giving feedback and procedural utterances such as ‘Let’s turn the page’). Story-related utterances are categorized into four ‘levels of decontextualization’ according to the extent to which they move beyond the literal storyline and pictures. Levels 1 and 2 contain utterances pertaining to elements within the directly observable context of the story, with utterances on Level 2 being somewhat more cognitively challenging. Levels 3 and 4 refer to utterances in which parent and child tend to go beyond the directly observable context of the story. In Level 3, this is still rather superficial and includes activities such as summarizing and connecting elements of the story. For the purpose of this study, we focused on the highest level of decontextualization (i.e., Level 4), which concerns meaning-making based on inferencing, and coded this into three additional subcategories. On Level 4, parent and child make inferences about the story by making predictions (subcategory A: ‘I think Duck will do it right.’), providing explanations (subcategory B: ‘Duck is happy, because she can quack with Frog.’), and formulating conclusions and implications (subcategory C: ‘What has Magpie learned from this?’).

Three trained experimenters coded the parent–child interactions according to the above coding scheme. To calculate inter-coder reliability, 25% of the transcripts (i.e., 8 families; 16 parent–child interactions) were coded by all three experimenters. After removing the literal text utterances (including the actual scripted inferencing questions), 1500 utterances remained which were coded into the seven aforementioned story-related and interaction-/procedure-related (sub)categories (i.e., Levels 1–3, Levels 4A, 4B, 4C, and procedural). Inter-coder reliability was sufficient (Krippendorff’s α = .69; Krippendorff, 2004) and the remaining transcripts (75% of total) were, therefore, not double coded. According to prior research (e.g., Hallgren, 2012), the reliability of the codings in a subset may be used to generalize to the full sample. Each experimenter coded another 25% of the total amount of transcripts. To ensure objectivity, experimenters only coded parent–child interactions that had been observed and transcribed by a different experimenter.

Demographic questionnaire

The demographic questionnaire consisted of questions about parents’ country of birth, the language that was spoken at home with the child, and parents’ educational attainment. A Dutch translation of the Stony Brook Family Reading Survey (Whitehurst, 1992) was included to measure the extent to which families engage in reading activities in their home environment.

Procedure

Testing

Depending on the parent’s preference, test sessions were scheduled at their home or at their child’s school. During the test session, which lasted about one hour, the experimenter followed a standardized protocol. First, a brief explanation of the research and the procedure was given, after which the parent provided written informed consent for participation in the study. Parents were told that they would read two picture books, one of which contained scripted questions embedded in the story. Then, the parent and child were asked to read the first book. During both reading activities, the experimenter was present in the room but sat apart from the dyads. If a parent had objections to their interactions being recorded with a video camera, only a voice recording was made (with the parent’s permission). Prior to reading the A-version of a book (i.e., the version with scripted inferencing questions), parents were asked to read the book to their child and were additionally told that the book contained scripted questions embedded in the story that they could use during reading. Inspection of the transcripts showed that in 98% of the cases, parents used the scripted questions as intended (i.e., three times parents altered the question and only once a question was skipped, because the child had already spontaneously answered it). Prior to reading the B-version of a book (i.e., the version without scripted inferencing questions), parents were just asked to read the book to their child. After the parent and child had finished reading the first book, the experimenter asked the child to complete the corresponding comprehension test. The parent was asked to leave during this part of the experiment to ensure that the parent could not (un)consciously help the child answer the questions. 
However, if the child was uncomfortable with the parent leaving, the parent was allowed to stay and was asked to refrain from any interaction with the child and not to comment on the child’s answers. After completion of the comprehension test, the parent was asked to read the second picture book, after which a corresponding comprehension test was administered using the same procedure as described above.

Results

Effect of scripted inferencing questions on parent–child interactions

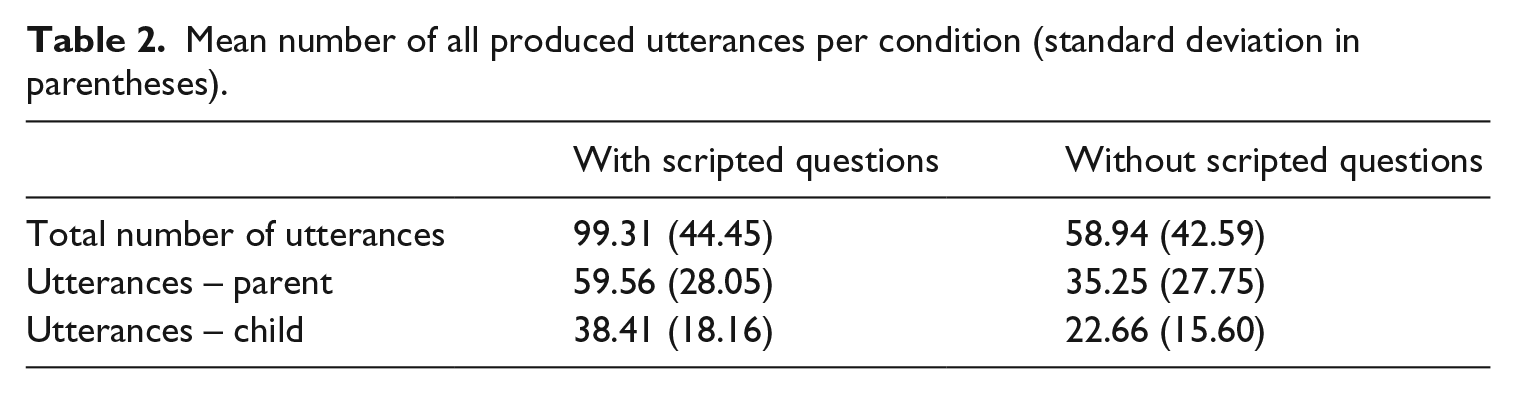

Table 2 shows the mean (and standard deviation) number of all utterances that parents and children produced (Levels 1–4) during shared book reading for the experimental and control conditions. As can be seen in this table, shared book reading with scripted inferencing questions resulted in significantly more parent–child interactions (i.e., total number of utterances) than shared book reading without such questions, t(31) = 5.55, p < .001, d = .93. This applied to both the number of utterances of parents, t(31) = 5.07, p < .001, d = .98, and of children, t(31) = 5.50, p < .001, d = .93. All three tests showed large effect sizes.[^1]

Table 2. Mean number of all produced utterances per condition (standard deviation in parentheses).
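For readers less familiar with within-subjects comparisons, the following sketch shows how a paired-samples t statistic and a standardized effect size can be computed from per-dyad utterance counts. The data are invented toy values, not the study's data. The effect size shown is d_z (mean difference divided by the standard deviation of the differences), which is only one of several conventions for Cohen's d in paired designs; the text does not state which variant was used for the reported values, so d_z is an assumption here.

```python
import math
import statistics


def paired_t_and_dz(with_questions, without_questions):
    """Return (t, df, d_z) for paired observations from the same dyads."""
    if len(with_questions) != len(without_questions):
        raise ValueError("both conditions need one value per dyad")
    diffs = [a - b for a, b in zip(with_questions, without_questions)]
    n = len(diffs)
    mean_diff = statistics.mean(diffs)
    sd_diff = statistics.stdev(diffs)  # sample SD (n - 1 in the denominator)
    t = mean_diff / (sd_diff / math.sqrt(n))
    d_z = mean_diff / sd_diff  # assumed effect-size convention
    return t, n - 1, d_z


if __name__ == "__main__":
    # Toy utterance counts for six hypothetical dyads (not the study's data).
    with_q = [12, 15, 9, 20, 14, 11]
    without_q = [8, 10, 9, 13, 12, 7]
    t, df, d_z = paired_t_and_dz(with_q, without_q)
    print(f"t({df}) = {t:.2f}, d_z = {d_z:.2f}")
```

Because each dyad contributes a score to both conditions, the test operates on the within-dyad differences rather than on the two sets of raw scores.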

To investigate the extent to which scripted inferencing questions impacted inference making (Research question 1), a 2 × 3 × 2 repeated measures Analysis of Variance (ANOVA) was conducted with the total number of inference-related (Level 4) utterances as the dependent variable. Speaker (parent vs. child), Sublevel (4A vs. 4B vs. 4C, see parent–child interactions coding scheme), and Condition (with vs. without scripted inferencing questions) were included as within-subjects factors. For reasons of clarity and space, we focus on reporting significant findings.

There were significant and large[^2] main effects of Speaker, F(1, 31) = 105.60, p < .001, ηp² = .77, and Sublevel, F(1.65, 51.10)[^3] = 35.16, p < .001, ηp² = .53, and a significant medium-to-large effect of Condition, F(1, 31) = 4.22, p = .049, ηp² = .12. These main effects were qualified by a significant Speaker × Sublevel × Condition interaction with a large effect, F(1.38, 42.78)[^4] = 18.17, p < .001, ηp² = .37. Unpacking this interaction, it appeared that in the condition without scripted inferencing questions, there was a significant interaction with a large effect between Speaker and Sublevel, F(2, 62) = 5.72, p = .005, ηp² = .16. In comparison to children, parents produced more utterances related to predicting (sublevel 4A: Mchildren = .75, SE = .21 and Mparents = 1.22, SE = .29; p = .005) and explaining (sublevel 4B: Mchildren = .69, SE = .21 and Mparents = 1.59, SE = .45; p = .001). There was no such difference for formulating conclusions and implications (sublevel 4C: Mchildren = .13, SE = .10 and Mparents = .16, SE = .10; p = .745). In the condition with scripted inferencing questions the Speaker × Sublevel interaction was also significant with a large effect, F(2, 62) = 14.16, p < .001, ηp² = .31. However, in this condition the children produced more utterances than parents with regard to predictions (sublevel 4A: Mchildren = 4.00, SE = .31 and Mparents = 1.66, SE = .28; p < .001) and explanations (sublevel 4B: Mchildren = 5.34, SE = .49 and Mparents = 4.16, SE = .59; p = .053). Once again, such an effect was not found for conclusions and implications (sublevel 4C: Mchildren = .34, SE = .15 and Mparents = .66, SE = .23; p = .057). Most interesting in light of our hypotheses is that in the condition with scripted inferencing questions children produced more predictions (Mdifference = 3.25, SE = .28, t(31) = 11.59, p < .001, d = 2.15) and explanations (Mdifference = 4.66, SE = .45, t(31) = 10.44, p < .001, d = 2.14) in their utterances than children in the condition without these questions. Both these effects were large based on the effect size.
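The partial eta squared values reported above can be recovered from the F statistics and their degrees of freedom using the standard identity ηp² = (F × df_effect) / (F × df_effect + df_error). The sketch below applies it to four of the effects reported in this section; the function name `partial_eta_squared` is illustrative, and the identity is offered as a reader's check, not as a description of how the authors' software computed the values.

```python
def partial_eta_squared(f_value, df_effect, df_error):
    """Partial eta squared from an F ratio and its degrees of freedom.

    Follows from eta_p^2 = SS_effect / (SS_effect + SS_error) together with
    F = (SS_effect / df_effect) / (SS_error / df_error).
    """
    if f_value < 0 or df_effect <= 0 or df_error <= 0:
        raise ValueError("F must be non-negative and dfs must be positive")
    return (f_value * df_effect) / (f_value * df_effect + df_error)


if __name__ == "__main__":
    # (label, F, df_effect, df_error) as reported in the text above.
    reported = [
        ("Speaker", 105.60, 1, 31),
        ("Sublevel", 35.16, 1.65, 51.10),
        ("Condition", 4.22, 1, 31),
        ("Speaker x Sublevel x Condition", 18.17, 1.38, 42.78),
    ]
    for label, f_value, df1, df2 in reported:
        print(f"{label}: {partial_eta_squared(f_value, df1, df2):.2f}")
```

The identity also holds for the fractional degrees of freedom, because a correction that scales both df values by the same factor leaves their ratio unchanged.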

Effect of scripted inferencing questions on story comprehension

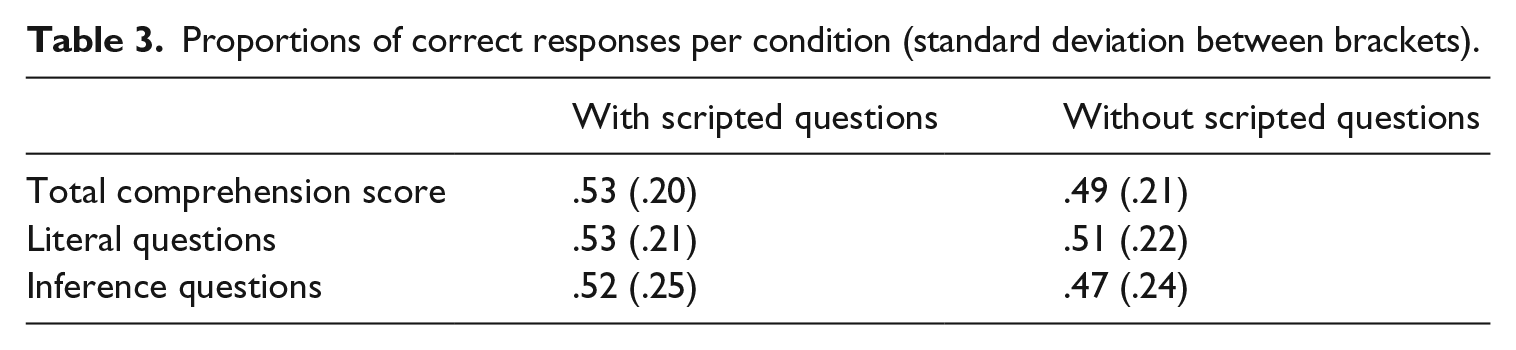

Table 3 shows the proportions of correctly answered comprehension questions separately for type of question and condition. The emerging pattern is that children answered more literal and inference questions correctly in the condition with scripted inferencing questions compared to the condition without scripted inferencing questions. This difference appeared to be largest for the inference questions compared to the literal questions.

Table 3. Proportions of correct responses per condition (standard deviation in parentheses).

To investigate whether shared book reading with scripted inferencing questions affected story comprehension (Research question 2), a repeated measures ANOVA was conducted on the story comprehension scores with Condition (with vs. without scripted inferencing questions) and Question type (Literal vs. Inference) as within-subjects factors. Additionally, correlational analyses were performed to investigate the relations between parent–child interactions and story comprehension.

The analyses revealed neither significant main effects of Condition and Question type nor a significant Condition × Question type interaction (all Fs < 1). When collapsing the literal and inference questions, the analyses showed a similar pattern, i.e., no significant difference in comprehension score between the conditions with vs. without scripted inferencing questions (M = .53, SD = .19; M = .48, SD = .21, respectively; t(31) < 1).[^5] Nevertheless, in the condition with scripted inferencing questions, the number of sublevel 4B utterances (i.e., explaining) was significantly and positively associated with story comprehension scores (r = .70, p < .001). This relation was not observed in the condition without scripted inferencing questions (r = .29, p = .11).

Effect of scripted inferencing questions on transfer

To investigate whether the effects of shared book reading with scripted inferencing questions transfer to reading without such questions (Research question 3), a 2 × 3 × 2 × 2 mixed ANOVA was conducted on the Level 4 utterances, with Speaker (parent vs. child), Sublevel (4A vs. 4B vs. 4C), and Condition (with vs. without scripted inferencing questions) as within-subjects factors and Order (with-without vs. without-with scripted inferencing questions) as between-subjects factor.

Results revealed a significant and large Condition × Order interaction, F(1, 30) = 7.47, p = .010, ηp² = .20. This interaction showed two separate effects. First, it appeared that when reading a picture book with scripted inferencing questions, overall more Level 4 utterances were produced (M = 2.70) than when reading a picture book without scripted inferencing questions (M = .76; p < .001). Second, irrespective of whether scripted inferencing questions were embedded in the picture book, in the second reading activity more Level 4 utterances were produced (M = 1.96) than in the first reading activity (M = 1.49; p = .018).

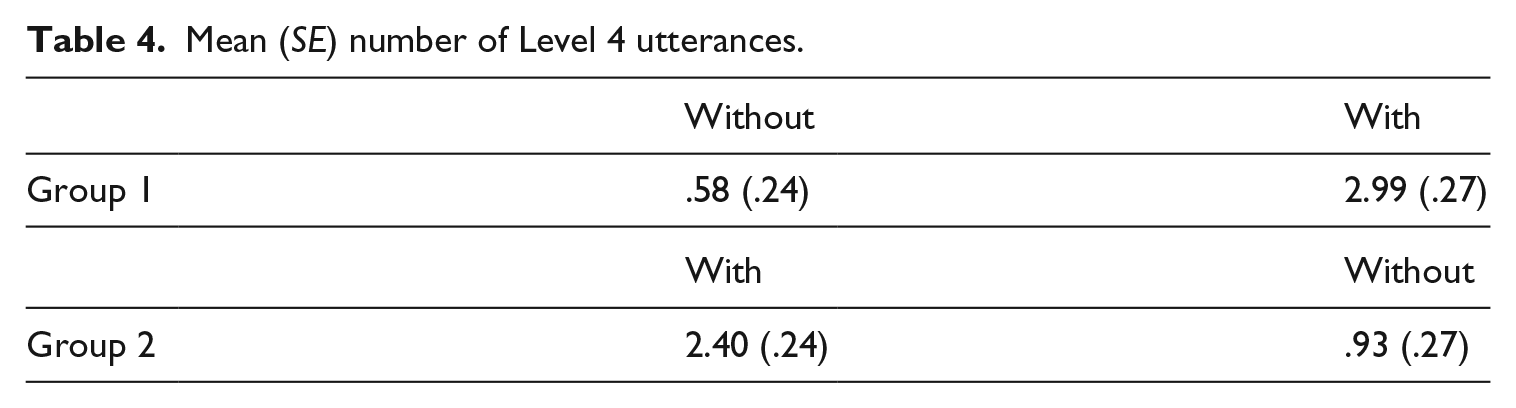

Interestingly, as can be seen in Table 4, it appeared that between the first and second reading activity, the relative difference in the number of Level 4 inferences was smaller for the picture books with than without scripted inferencing questions. When the picture book with scripted inferencing questions was read in the second reading activity, approximately 25% more inferences were made compared to when it was read in the first reading activity. For the picture books without scripted inferencing questions, however, there was almost a 50% difference between the first and second reading. This higher number of inferences produced when reading the picture book without scripted inferencing questions during the second reading activity is unlikely to result solely from parents and children being more talkative after the first reading. Rather this seems to suggest that in the ‘with-without’ order parents and children to some extent applied what they had done during the first reading activity (i.e., engaging in extra-textual talk focused on inferencing) to the second reading activity.

Mean (SE) number of Level 4 utterances.

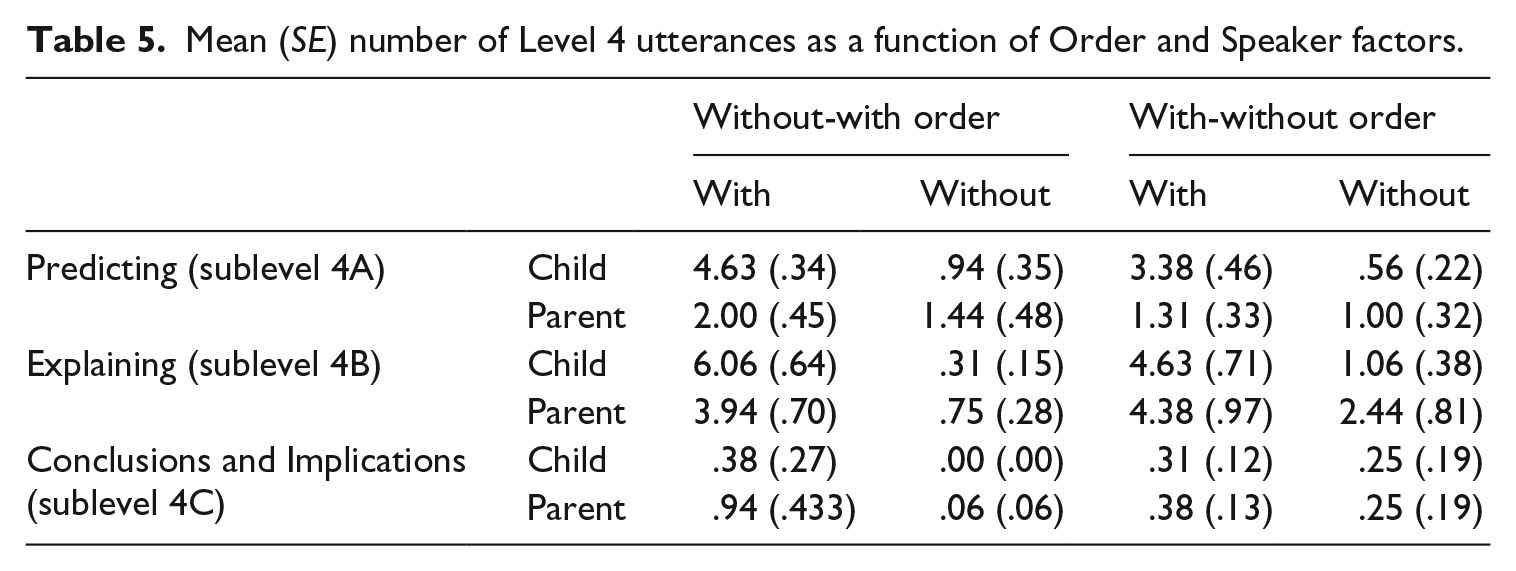

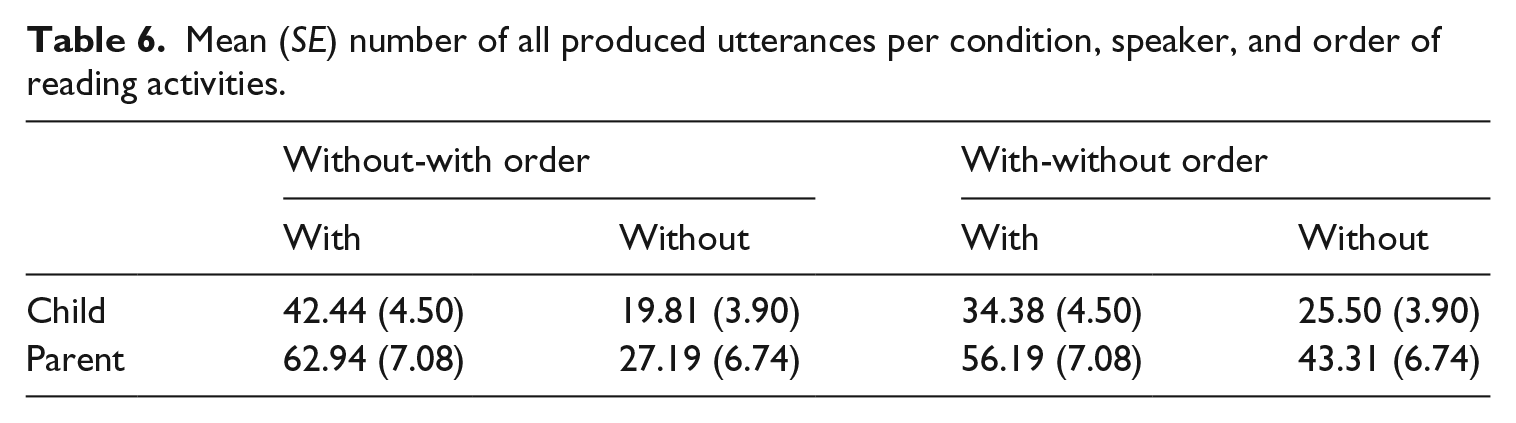

This observation is partially supported by a more fine-grained analysis. Specifically, the analysis revealed a significant Speaker × Sublevel × Order interaction (large effect), F(2, 60) = 4.83, p = .01, ηp² = .14. Unpacking this interaction, it appeared that for both orders of book type (with/without scripted inferencing questions), there was a significant interaction between Speaker and Sublevel (without-with order: F(1, 30) = 7.31, p = .003, ηp² = .34; with-without order: F(1, 30) = 6.06, p = .006, ηp² = .29; both large effects). Irrespective of whether a book with scripted inferencing questions was read first or second, children made more predictive inferences (sublevel 4A) than parents when reading with, compared to without, scripted inferencing questions. For the utterances related to explaining (sublevel 4B), a different pattern emerged. Specifically, parents made more explaining utterances than children did when reading without scripted inferencing questions, but only when this was the second reading activity. No significant effects of Order were found for the utterances at the conclusions and implications level (sublevel 4C; see Table 5). Table 6 shows an overview of the total number of utterances for each group and order of reading activities.

Mean (SE) number of Level 4 utterances as a function of Order and Speaker factors.

Mean (SE) number of all produced utterances per condition, speaker, and order of reading activities.

Discussion

This study investigated to what extent scripted inferencing questions embedded in picture books support parent–child interactions during shared book reading and improve children’s story comprehension. Different from previous studies (Dawes et al., 2019; Desmarais et al., 2013; van Kleeck et al., 2006), we isolated the effects of inferencing questions by comparing the experimental intervention to a shared reading activity where scripted inferencing questions were not embedded in the book (i.e., regular reading situation). We examined effects of inferencing questions on children’s engagement in inferencing during the parent–child interaction and on whether inferencing questions contributed to comprehension of the story at hand, rather than on children’s general ability to respond to decontextualized language. Additionally, we focused on parents rather than professional educators as the family provides the most natural context for shared reading. Finally, we examined whether the interactions elicited by the scripted inferencing questions would transfer to a regular reading situation, by having parent–child dyads first read a book with scripted inferencing questions and then a book without such questions.

The results first of all showed a clear and large effect of the scripted inferencing questions on parent–child interactions (Research question 1). Overall, parents and children produced almost twice as many utterances (both when considering utterances at all levels and utterances only at Level 4) when reading the book with scripted inferencing questions than when reading the book without scripted inferencing questions. A closer look at children’s and parents’ contributions to the interactions showed an interesting pattern. When reading books with scripted inferencing questions, children made more predictions about the story and more often produced explanations of story events than parents did, and than children reading without scripted inferencing questions did. Parents, however, made more of such inferences when reading without scripted inferencing questions. This suggests that having scripted inferencing questions in the book created a situation where children started to make more inferences. This is especially relevant given that children are expected to develop into independent readers who are able to derive and understand the relations within a story (Bos et al., 2016). Caution is warranted in interpreting these findings: based on the present study, it remains possible that merely prompting parent–child dyads to talk about the stories led children to make more inferences. Put differently, it might be that any story-related question encourages children to make inferences. Future research could attempt to tease out the specific benefits of inferencing question prompts by replicating the present study with an additional control condition containing another type of question prompt (e.g., literal questions). 
A key challenge in such future work would be to carefully choose an alternative type of question prompt such that meaningful comparisons can be made (e.g., comparing two question types that target higher-level comprehension). To further pinpoint the effects of scripted inferencing questions, future work could also extend the present study by investigating the extent to which the inferences children make are in direct response to the scripted inferencing questions.

We also investigated whether effects of the scripted inferencing question intervention would transfer to a second shared reading activity immediately following the first reading activity (Research question 3). The results provided some indication in this direction. It appears that parents and children who had first read the story with scripted inferencing questions produced more inferences in the natural reading situation relative to parents and children who had not read the story with scripted questions first. Importantly, our findings suggest that this effect is primarily driven by parents explaining more to their child after they have read the book with scripted inferencing questions. We interpret this finding as indicating that parents learned from using the scripted inferencing questions: presumably, in the second reading activity (without scripted inferencing questions) parents behaved more as they had in the first reading activity (with scripted inferencing questions) in an attempt to encourage continued engagement in inferential extra-textual talk. As parents knew they were in a test situation and that their child would be tested on the story they read together, there is a reasonable chance that this made them especially open to using the type of inferencing questions they had seen in the first reading activity when reading the second book as well. This may have increased their rate of inferential talk relative to what they would naturally do. Moreover, the second reading activity immediately followed the first reading activity in the same test session. With such a small time span between the reading activities, it cannot be excluded that our findings simply reflect priming from the first to the second reading activity rather than actual transfer. 
More research is needed to investigate to what extent these findings generalize to shared reading outside of a testing situation and shared reading activities that are separated by longer intervals.

These findings add to the outcomes of prior research (van Kleeck et al., 2006) by showing that scripting results in more inferencing during parent–child interactions, that scripting particularly results in more child engagement, and that inferencing can be trained relatively easily: we were able to influence current and subsequent (unscripted) interactions during shared book reading in a single 20-minute session that took place within a natural reading context (untrained parents reading with their child) by only embedding inferencing questions at specific locations in the story. It is conceivable that the degree of transfer is stronger after more repeated exposure to shared reading activities involving books with scripted inferencing questions. This idea could be tested in a follow-up study in which parent–child dyads read stories with scripted inferencing questions in multiple sessions before offering them a new book without scripted inferencing questions. Such an approach also allows for investigating whether and how the use of scripted inferencing questions could be gradually reduced so as to optimally support children to become independent readers.

Contrary to our expectations, the positive impact of the scripted inferencing questions on inference generation during the parent–child interactions did not result in corresponding benefits for children’s story comprehension (Research question 2). In general, a similar number of comprehension questions were answered correctly irrespective of whether the book with or without scripted inferencing questions was read. These findings correspond to some extent with the outcomes reported by Desmarais et al. (2013), who found no effects on the PLAI, but are at odds with the positive effects established by van Kleeck et al. (2006) and Dawes et al. (2019). There are several potential explanations. A first possible explanation lies in the nature of the sample. Van Kleeck et al. (2006) focused on Head Start children who had limited language skills and Dawes et al. (2019) examined effects on children with developmental language disorder, whereas our sample consisted mostly of highly educated families with children who (most likely) possess relatively well-developed language skills. Although there is evidence that children with better-developed language skills benefit from parent–child inferential talk (e.g., Reese & Cox, 1999), it might be that these children did not need our intervention to understand the story, but that an effect would emerge in a group of children with weaker language skills (who would thus be more likely to benefit from the provided support). Similarly, it might be that a younger sample would have benefitted. Our intervention targeted five- and six-year-olds, but a meta-analysis of Dialogic Reading interventions (Mol et al., 2008) showed that interactive reading for kindergartners is less effective than for preschoolers, probably because kindergartners need less external support to develop an understanding of the story. 
Further research is thus needed to test whether effects on parent–child interactions can be replicated in a younger low-skilled sample and to test whether for these children increased inferencing does contribute to story comprehension.

A second possible explanation is that the story comprehension test we used was not sensitive enough to detect an effect. What may have played a role here is that we asked for children’s general story comprehension and did not focus exclusively on the components that were covered by the built-in inference questions. Having said that, it was interesting to note that the number of utterances children produced regarding explaining events in the story was positively related to comprehension scores. This could indicate that the scripted inferencing questions encouraged children to reason about the story and develop a more accurate understanding of the story. However, an alternative interpretation is that scripted inferencing questions simply triggered children to voice what they had already understood from the story. Under this view, the scripted inferencing questions did not contribute to improved story comprehension, but children’s responses to these questions were merely a reflection of how well they understood the story.

Finally, the present study was set up as a small-scale intervention consisting of one shared reading activity in which parents were not instructed or trained to use the scripted inferencing questions. It is possible that some extra guidance is necessary (perhaps over a longer period of time) for parents to use the scripted inferencing questions to the benefit of story comprehension. It is conceivable that parents zoomed in on discussing specific story elements as dictated by the scripted inferencing questions, but in general did not manage to support children enough in creating a coherent representation of the story. This may have negatively influenced children’s performance on the comprehension test. That children in general correctly answered approximately half of all comprehension questions suggests that they could have benefitted from additional guidance from parents during shared reading to develop a better understanding of the story. Of course, the above explanations need to be tested and further corroborated in future research.

To conclude, this study shows that offering storybooks with scripted inferencing questions encourages inference generation during shared book reading. In particular, children appear to benefit from scripted inferencing questions in terms of producing more inferences during reading. Interestingly, this study also provides some indications that after only one reading session, parent–child dyads seem to make use of their experiences with scripted inferencing questions in a new shared reading activity (without scripted inferencing questions), supporting them in producing inferences even when this is not explicitly encouraged. These positive effects of scripted inferencing questions did not, however, translate into improved story comprehension (as compared to a business-as-usual control condition). Although this could suggest that interactive shared book reading is not an effective strategy to support story comprehension (cf. Neuman, 1996), a more optimistic interpretation is that there may be some boundary conditions under which interactive reading is effective, such as when it addresses specific needs. For example, based on our study it can be suggested that scripted inferencing questions are likely more effective for younger and disadvantaged children who have less well-developed language or inferencing skills. The present study hopefully serves as an impetus for future development of effective interventions to support parent–child interactions and story comprehension.

Acknowledgements

We would like to express our gratitude to Bibi Smaal, Brechtje van Zeijts, and Tosca van Duijnen for their assistance in collecting the data and scoring the parent–child interactions. We also thank Mina Nisar for scoring the comprehension test, the schools that assisted us in recruiting parents and children for this study, and Simone Hemerik for creating the books used in this study.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship and/or publication of this article: This research was supported by a Grant from the Dutch Reading Foundation (Stichting Lezen) awarded to Bjorn de Koning, Stephanie Wassenburg, Lesya Ganushchak, and Roel van Steensel.