Abstract

Shared book reading in preschool settings plays an influential role in supporting children’s oral language and emergent literacy skills. Early childhood teachers can provide high-quality shared book reading experiences by using extratextual utterances (talk that goes beyond the story text) to maximise these learning outcomes. We report on the development and psychometric properties of the ‘Emergent Literacy and Language Early Childhood Checklist for Teachers’ (ELLECCT), a comprehensive observational checklist designed to document early childhood teachers’ extratextual oral language and emergent literacy strategies during shared book reading. The ELLECCT measures teachers’ dialogic reading prompts, vocabulary promotion strategies, responsive statements, and strategies targeting print knowledge and phonological awareness. It also contains a rating scale examining the paralinguistic and nonverbal strategies that early childhood teachers use to support engagement during shared book reading interactions. The psychometric properties of the ELLECCT were evaluated in a four-phase process. Content validity was tested using the Content Validity Index, and a three-round Delphi process was used to measure face validity. Intra-rater and inter-rater reliability were both evaluated using a sample of 32 shared book reading observations. The findings provide preliminary evidence for the psychometric properties of the ELLECCT, suggesting that it is suitable for evaluating early childhood teachers’ use of extratextual and paralinguistic strategies during shared book reading. We describe the ELLECCT’s potential applications in classroom coaching and training, and as a research tool, to support early childhood teachers’ skill development during shared book reading.

Introduction

It is well established that adult–child shared book reading in the preschool classroom supports oral language and emergent literacy development (Mol et al., 2009; Noble et al., 2019). High-quality shared book reading experiences in preschool settings are particularly important for children from disadvantaged backgrounds to offset the impact of lower levels of home literacy (Bracken & Fischel, 2008; Rodriguez et al., 2009) and to assist in flattening the socioeconomic gradient through provision of stimulating preschool environments (Hoff, 2003). Shared book reading provides opportunities for early childhood teachers (ECTs) to adopt an interactive shared book reading style that promotes oral language and related emergent literacy skills. Regular reading to children supports oral language and emergent literacy development (Ece Demir-Lira et al., 2019; Sénéchal & LeFevre, 2002); however, the quality of the interaction between an adult and children is of particular importance for maximising this benefit (Kaderavek et al., 2014; Mol et al., 2009).

ECTs can engage children in small-group or whole-class reading sessions with book-related discussion. Their utterances that extend beyond reading the story text are referred to as extratextual utterances.

This study focused on the development and psychometric properties of the ‘Emergent Literacy and Language Early Childhood Checklist for Teachers’ (ELLECCT). The ELLECCT is a shared book reading observational tool designed to measure ECTs’ extratextual utterances to support preschoolers’ oral language and emergent literacy skills using dialogic reading prompts, explicit vocabulary promotion strategies, responsive comments, and strategies to support print knowledge and phonological awareness. The ELLECCT also measures ECTs’ use of paralinguistic and nonverbal features to support engagement during shared book reading.

Supporting language development through shared book reading

Dialogic book reading is an interactive shared book reading approach in which an adult uses specific prompts to encourage children to engage actively while listening to a story (Lonigan & Whitehurst, 1998; Whitehurst et al., 1988, 1994). Towson et al. (2016) described five prompts that adults can use in dialogic book reading with young children. Represented by the acronym CROWD, these are: Completion prompts, Recall prompts, Open-ended questions, Wh-questions and Distancing prompts. These extratextual prompts are designed to encourage dialogue between the adult and child, with children’s talk gradually increasing and the adult’s talking role gradually decreasing (Flynn, 2011). The adult evaluates a child’s response to a prompt, expands the response and provides praise (Zevenbergen et al., 2003). Dialogic book reading has benefits for children’s vocabulary (Mol et al., 2008; Opel et al., 2009) and oral narrative skills (Zevenbergen et al., 2003). Furthermore, prompting children with open-ended questions encourages more than single-word responses (Hindman et al., 2019) and allows ECTs to expand children’s utterances by adding another semantic or syntactic component (Milburn et al., 2014). ECTs can also ask questions before and after reading the story, as well as during it, to support language growth (Wasik et al., 2006).
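One way to picture how the five CROWD prompt types could be recorded during an observation is as a fixed set of categories whose occurrences are counted per session. The sketch below is a hypothetical illustration only: the `CrowdPrompt` and `tally_prompts` names and the example session are invented, and this is not presented as the ELLECCT's scoring procedure.

```python
from collections import Counter
from enum import Enum


class CrowdPrompt(Enum):
    """The five dialogic reading prompt types summarised by the CROWD acronym."""
    COMPLETION = "C"
    RECALL = "R"
    OPEN_ENDED = "O"
    WH_QUESTION = "W"
    DISTANCING = "D"


def tally_prompts(coded_prompts):
    """Count each CROWD prompt type in a session, reporting zero for unused types."""
    counts = Counter(coded_prompts)
    return {prompt: counts[prompt] for prompt in CrowdPrompt}


# Hypothetical coding of the prompts one teacher used while reading a single book
session = [
    CrowdPrompt.WH_QUESTION,
    CrowdPrompt.OPEN_ENDED,
    CrowdPrompt.WH_QUESTION,
    CrowdPrompt.COMPLETION,
    CrowdPrompt.DISTANCING,
]

# Enum members iterate in definition order, so their values spell the acronym
acronym = "".join(prompt.value for prompt in CrowdPrompt)
for prompt, n in tally_prompts(session).items():
    print(f"{prompt.value} ({prompt.name.lower()}): {n}")
```

Reporting zero counts explicitly (here, for recall prompts) keeps unused prompt types visible, which matters when the aim is to see which prompts a teacher is not yet using.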

Children’s opportunities to expand their vocabulary can be optimised when they encounter a new word multiple times through repeated exposure (Wasik & Bond, 2001). Word repetition may occur within a story or across repeated readings (Horst et al., 2011; McLeod & McDade, 2011). Novel words that are highlighted during shared book reading are more memorable than words encountered through passive exposure (Read et al., 2019), and ECTs can incorporate explicit word learning strategies to support children’s vocabulary uptake during shared book reading. Explicit word learning strategies include explaining words or providing a synonym, relating words to story events or to the children’s experiences, and selecting and stressing rare words that are important for story comprehension (Milburn et al., 2014). Pauses may also be used to give a child extra time to respond to a question (Lane & Wright, 2007) and to allow opportunities for child-led conversation and initiation (Colmar, 2014). Intentional dramatic pauses before a novel word have been shown to help children attend to the target word and to support word retention (Read et al., 2019).

Supporting emergent literacy development through shared book reading

Children’s

Shared book reading as a context for building phonological awareness has received less attention in the research literature than other areas of emergent literacy. However, embedding phonological awareness into shared reading activities has shown some benefits for typically developing preschoolers when implemented by ECTs (Ukrainetz et al., 2000). Improvements have been demonstrated for several phonological awareness skills when these are targeted explicitly during shared book reading, including rhyme awareness (Justice et al., 2005; Rachmani, 2020; Ziolkowski & Goldstein, 2008) and initial sound awareness (Ziolkowski & Goldstein, 2008), although results for alliteration awareness have been mixed (Justice et al., 2005; Ziolkowski & Goldstein, 2008). Lefebvre et al. (2011) found that explicit teaching of phonological awareness incorporated into shared book reading sessions had a positive impact on the phonological awareness skills of low-income preschoolers in childcare settings. The intervention included skills such as syllable segmentation and blending, rhyming and sound deletion. Thus, ECTs can explicitly target phonological awareness skills in everyday shared book reading. This is important given that children’s phonological awareness skills are a strong predictor of later reading success (NELP, 2008).

Supporting engagement during shared book reading

Children must be engaged in shared book reading interactions for quality learning experiences to occur. Engagement, or interest in reading, may be demonstrated through children’s verbal participation and through nonverbal contributions such as attention, posture, eye contact and physical positioning (Sutton et al., 2007). Research has also demonstrated that preschoolers maintain engagement during shared book reading when ECTs incorporate extratextual talk focusing on language and emergent literacy strategies (Kaderavek et al., 2014). ECTs’ linguistic responsiveness during shared book reading also affects children’s engagement. Asking children questions encourages dialogue, while attention and engagement can be enhanced when ECTs comment on the story, acknowledge children’s utterances, and imitate or expand those utterances (Girolametto & Weitzman, 2002; Milburn et al., 2014). Encouragement, praise and excitement have been found to support engagement during shared book reading (Diehl & Vaughn, 2010). ECTs can also use utterances to manage behaviour and direct attention towards the story (Wasik et al., 2006).

Children’s engagement during shared book reading may also be influenced by how ECTs use paralinguistic and nonverbal features while reading. The way in which ECTs read the story, in addition to their use of extratextual utterances, promotes children’s engagement (Sutton et al., 2007). For example, an ECT may read the story in an appealing and interactive way to make the book delivery more engaging for children (McGinty et al., 2006). Evidence for the impact of paralinguistic and nonverbal features on children’s language development is preliminary, as these features have not been researched as extensively as extratextual utterances. However, changes in ECTs’ volume and tone when reading have been found to support children’s engagement and draw their attention towards key vocabulary and story content (Diehl & Vaughn, 2010; Moschovaki et al., 2007). ECTs can use gesture, facial expression and eye contact while reading to children, with body language assisting children’s story comprehension and engagement (Kefeli & Bayraktar, 2014; Moschovaki et al., 2007). Preliminary findings also suggest that demonstrating the meaning of words by acting them out supports vocabulary development, with stronger results found for expressive language (Wasik et al., 2006). Overall, the literature suggests that ECTs’ use of these nonverbal features may enhance the quality of shared book reading sessions with preschoolers.

Contextual and book-related features can affect the amount of extratextual talk and engagement during shared book reading sessions. Children’s engagement levels vary with group size: children are generally more interactive in small groups (Wasik, 2008) and less engaged in larger groups (Powell et al., 2008). Variability in the use of extratextual utterances has been linked to books with more print-salient features, such as contextualised print within illustrations and font changes; ECTs have demonstrated increased use of print referencing strategies when reading books with more print-salient text (Zucker et al., 2009). Book genre has also been found to influence the amount of extratextual talk during shared book reading, with ECTs using more extratextual talk when reading informational books than when reading storybooks (Price et al., 2012). Reduced engagement has also been demonstrated for children with language difficulties (e.g. Skibbe et al., 2010); however, book features such as text manipulatives (e.g. lift-the-flap) have been found to increase these children’s engagement (Kaderavek et al., 2014). The duration of the reading time has been linked with the amount of extratextual talk (Price et al., 2012; Zucker et al., 2009); however, further research is needed to understand the interplay between extratextual talk and book length.

ECTs’ oral language and emergent literacy support

The literature on shared book reading has highlighted the benefits of ECTs’ extratextual utterances targeting oral language and emergent literacy skills for children’s developmental outcomes (Justice et al., 2009; Mol et al., 2009). However, ECTs who have not undergone formal training do not always make the most of opportunities to provide such support. For example, ECTs do not typically pay much attention to print during shared book reading or use many print referencing strategies (Gettinger & Stoiber, 2014; Zucker et al., 2009). Combined with the fact that preschoolers themselves pay little attention to print (Evans et al., 2008), this may prevent preschoolers from receiving optimal benefits for their emergent literacy growth during shared book reading. Following training, however, ECTs have been able to increase their use of a print referencing style and thereby improve children’s alphabet knowledge and print concept knowledge (Gettinger & Stoiber, 2014; Justice et al., 2009). Existing research on ECTs’ questioning during shared book reading indicates that they tend to focus on less cognitively challenging questions (Deshmukh et al., 2019) or on questions that elicit little verbal language (Hindman et al., 2019). Training ECTs to increase their use of questions (such as open questions), explicit vocabulary teaching strategies or other comments results in stronger language support during shared book reading (Hindman & Wasik, 2012; Milburn et al., 2014). Such changes in the frequency and nature of ECTs’ extratextual talk during shared book reading can be measured with valid and reliable observational tools.

Assessing shared book reading with observational tools

A well-designed tool that measures the extratextual talk, along with the paralinguistic and nonverbal characteristics of language, used by ECTs during shared book reading has the potential to accurately profile the quantitative and qualitative patterns of their prompts at a given time point, document changes following professional development, and contribute to pre-service training and in-service professional learning. A number of tools exist to measure various features of ECTs’ behaviours during shared book reading. These include the Systematic Assessment of Book Reading (SABR; Justice et al., 2010), the Systematic Assessment of Book Reading 2.2 (SABR 2.2; Zucker et al., 2018), and two commercially available tools, the Early Language and Literacy Classroom Observation Pre-K Toolkit (ELLCO Pre-K; Smith et al., 2008) and the Observation Measure of Language and Literacy Instruction-Read Aloud Profile (OMLIT-RAP; Goodson et al., 2006).

The SABR (recently updated to the SABR 2.2; Zucker et al., 2018) is a standardised observational tool that measures ECTs’ instructional behaviours during shared book reading. The SABR uses interval coding within 15-second periods to provide data on qualitative and quantitative features of shared book reading. ECTs’ instructional support is measured across four domains (language development, abstract thinking, elaborations, and print/phonological skills), together with emotional support, which reflects how the ECT organises and delivers the session (Justice et al., 2010). The SABR 2.2 has a standardised observational protocol that trained raters use to code shared book reading sessions from video, rather than relying on transcription or interval coding. It is a broader measure of shared book reading than the original version, with fewer items across three sections: language-facilitating talk (e.g. asking questions and repeating, recasting, or expanding children’s utterances), literacy-related talk (e.g. utterances about print conventions and letters, words, and writing), and meaning-related talk (e.g. utterances that refer to characters’ cognition or thinking to deepen the meaning of the text) (Pentimonti et al., 2021). As a result, the SABR 2.2 characterises ECTs’ extratextual talk more broadly than the original version and provides less detail about specific teacher talk in some language and emergent literacy areas.
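To show what interval coding captures, the sketch below implements generic partial-interval coding: a session is divided into 15-second intervals, and each interval is flagged if a target behaviour occurs at least once within it. It illustrates the general technique under assumed inputs (hypothetical utterance onset times in seconds); the function name is invented, and the code does not reproduce the SABR's published coding rules.

```python
import math


def partial_interval_code(event_times_s, session_length_s, interval_s=15.0):
    """Return one flag per interval: True if at least one event falls within it."""
    n_intervals = math.ceil(session_length_s / interval_s)
    flags = [False] * n_intervals
    for t in event_times_s:
        # Ignore any timestamps outside the observed session
        if 0 <= t < session_length_s:
            flags[int(t // interval_s)] = True
    return flags


# Hypothetical onset times (s) of one type of extratextual utterance in a 2-minute session
onsets = [3, 9, 14, 40, 95]
flags = partial_interval_code(onsets, session_length_s=120)
coded = sum(flags)
print(f"{coded} of {len(flags)} intervals coded; {len(onsets)} utterances observed")
```

In this example, the three utterances in the first interval produce a single coded interval, so five utterances yield only three coded intervals. Interval coding therefore summarises how consistently a behaviour appears across the session rather than counting every utterance.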

The OMLIT-RAP (Goodson et al., 2006) measures characteristics of adults’ instructional behaviours when reading to children in preschool classrooms. Strategies are measured across pre-reading, reading, and post-reading stages and include print concepts (e.g. tracking print), comprehension (e.g. discussing background knowledge, directing children to text or illustrations, expanding utterances, asking and answering questions, and summarising), phonological awareness (e.g. commenting on letters and sounds), and language through higher-order thinking (e.g. asking questions that prompt abstract thinking). Each strategy is coded in binary form to indicate whether or not it was observed. Three main areas of quality, namely open-ended questions, story-related vocabulary, and quality of post-reading activities, are scored using a global 5-point scale (Sutton et al., 2007). Binary coding limits the information provided, as it does not take into account the frequency of ECTs’ extratextual utterances. For example, an ECT need only demonstrate a strategy once for it to be coded as present during the shared reading session. Frequency matters because ECTs’ use of oral language and emergent literacy strategies during shared book reading is related to children’s outcomes (e.g. Justice et al., 2009; Mol et al., 2009). The OMLIT-RAP therefore has important limitations as a training tool, because it cannot differentiate a teacher who maximises opportunities during shared book reading through frequent instructional behaviours from a teacher who makes less frequent use of such opportunities.
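The limitation of presence/absence coding can be shown with two hypothetical teachers who use the same strategies at very different rates. The sketch below is illustrative only: the function names, strategy labels and utterance lists are invented for the example and are not OMLIT-RAP items or data.

```python
from collections import Counter

STRATEGIES = ["open_ended_question", "tracking_print", "expansion"]


def binary_codes(coded_utterances, strategies=STRATEGIES):
    """Presence/absence coding: 1 if a strategy was observed at least once, otherwise 0."""
    observed = set(coded_utterances)
    return {s: int(s in observed) for s in strategies}


def frequency_codes(coded_utterances, strategies=STRATEGIES):
    """Frequency coding: the number of times each strategy was observed."""
    counts = Counter(coded_utterances)
    return {s: counts[s] for s in strategies}


# Hypothetical sessions: teacher A uses each strategy repeatedly, teacher B only once
teacher_a = ["open_ended_question"] * 12 + ["tracking_print"] * 4 + ["expansion"] * 6
teacher_b = ["open_ended_question", "tracking_print", "expansion"]

print("Binary:   ", binary_codes(teacher_a), binary_codes(teacher_b))
print("Frequency:", frequency_codes(teacher_a), frequency_codes(teacher_b))
```

Both teachers receive identical binary profiles, whereas the frequency profiles separate them clearly; this is the distinction a training tool would need to detect.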

The ELLCO Pre-K (Smith et al., 2008) provides global ratings of five items grouped under the heading ‘books and book reading’. These items relate to whether there is a range of books within the preschool, the approach to reading (e.g. individual or small group), and the quality of the book reading, including how engaging and interactive it is (Smith et al., 2008). Because each item is rated on a 5-point scale, the ELLCO Pre-K does not allow discrete shared book reading strategies or their frequency to be measured. Furthermore, the ELLCO Pre-K includes only a small number of items relating to shared book reading, so the information it provides about ECTs’ ability to implement a range of oral language and emergent literacy strategies is limited. Measuring a more comprehensive range of strategies would yield more specific information about an ECT’s ability to incorporate extratextual talk into shared book reading, including targeted behaviours that are more supportive of children’s language and literacy outcomes.

The current study

In developing the ELLECCT, we sought to extend previous tools by providing a more comprehensive shared book reading observational measure that incorporates oral language and emergent literacy strategies as well as the paralinguistic and nonverbal features known to support preschool children’s engagement with text. Incorporating all three domains (oral language, emergent literacy, and paralinguistic and nonverbal features) is important to comprehensively capture shared book reading characteristics and to provide a nuanced evaluation of the strategies ECTs use when reading to children. A key distinguishing feature of the ELLECCT is its focus on dialogic book reading prompts. The ELLECCT also builds on previous measures by incorporating explicit vocabulary teaching strategies, phonological awareness prompts, and paralinguistic and nonverbal features. Another unique feature of the ELLECCT is that it captures multiple aspects of the shared book reading context: ECTs’ instructional extratextual utterances to support language and literacy development, their use of responsive statements, and engagement-building through paralinguistic and nonverbal features. Finally, the ELLECCT draws on theoretically and empirically driven research findings and expert opinion to characterise ECTs’ shared book reading practices in relation to children’s oral language and emergent literacy skills, which are key developmental processes in the preschool years.

The aim of this study, therefore, was to develop the ELLECCT and to provide a preliminary examination of its psychometric properties. To gauge the validity of the ELLECCT, content validity and face validity were evaluated. To determine its reliability, intra-rater and inter-rater reliability were assessed. Each phase of the research reported in this article was approved by the La Trobe University Human Ethics Committee and the Department of Education and Training.

Evaluating the psychometric properties of the ELLECCT

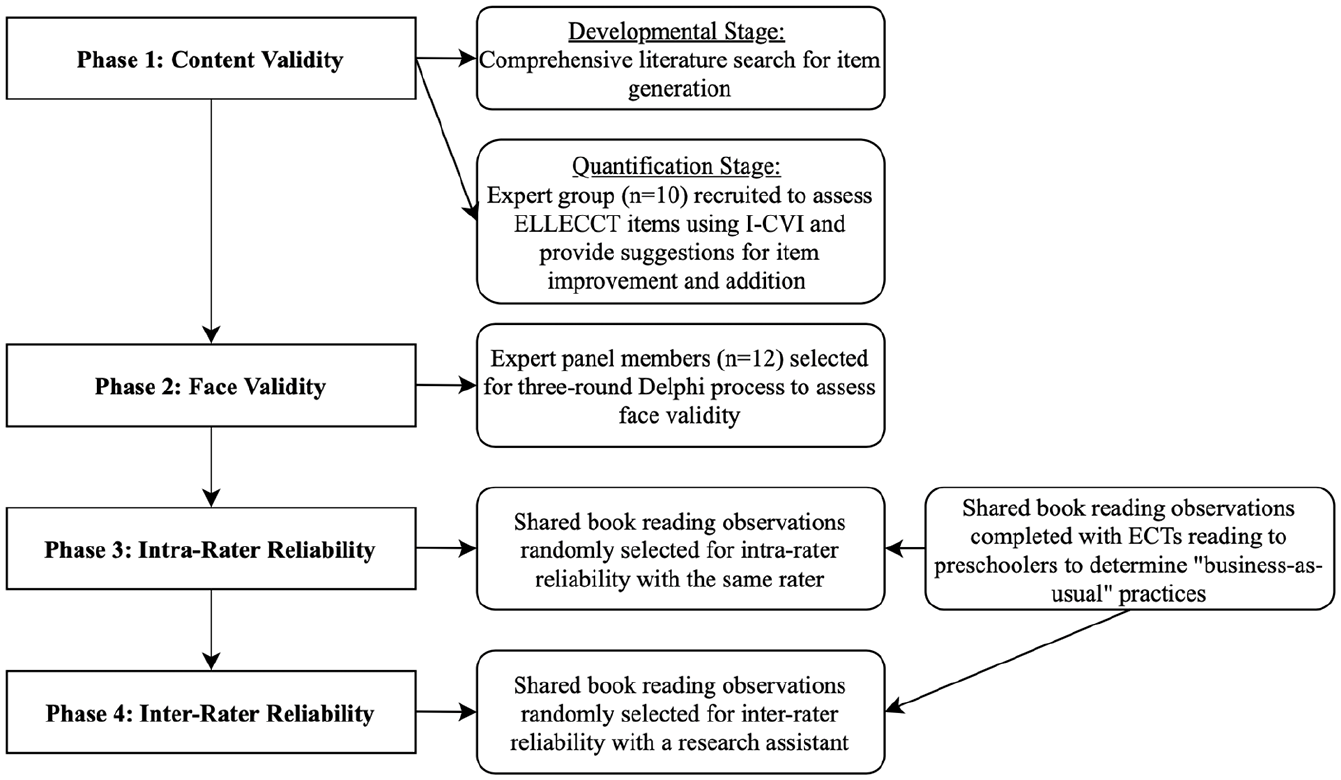

The development and psychometric evaluation of the ELLECCT occurred across four phases (Figure 1). The tool was developed during the initial validation phases: in Phases 1 and 2, we sought to determine the content and face validity of the ELLECCT, respectively. These phases ran concurrently with the collection of shared book reading observation data for use in Phases 3 and 4.

Figure 1. The four phases of the psychometric analysis of the ELLECCT.

Phase 1: content validity

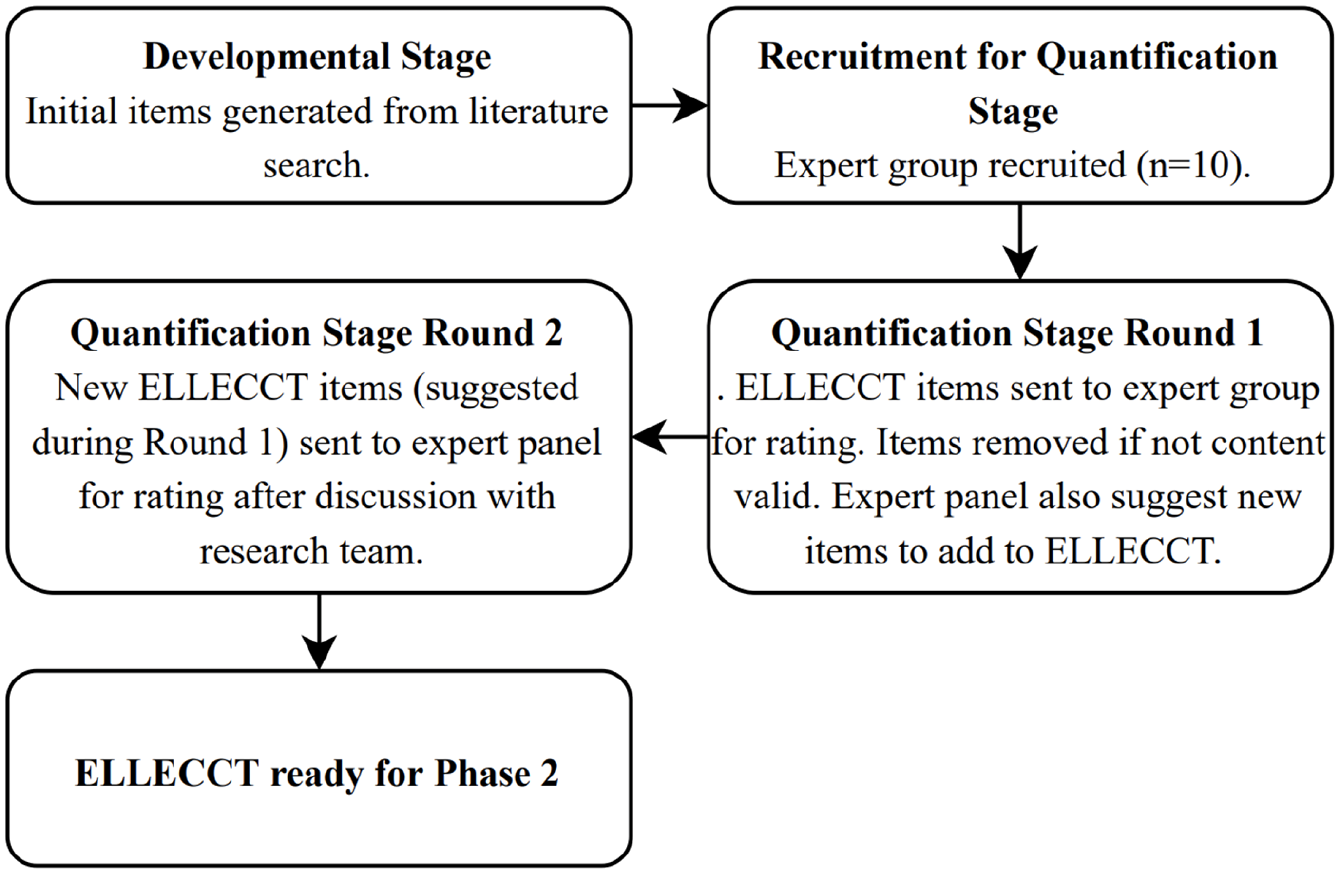

A two-stage process was undertaken to examine the content validity of the ELLECCT: a developmental stage and a quantification stage (Lynn, 1986). The steps involved in assessing the content validity are summarised in Figure 2.

Figure 2. The content validity phase.

ELLECCT developmental stage

The developmental stage of the ELLECCT began with an extensive literature search to identify possible items for inclusion. The search involved reviewing journal articles, books, and other sources (e.g. research conducted by government or research organisations) specific to oral language and emergent literacy strategies during shared book reading. Items were selected if they were well established in the literature as effective in fostering preschoolers’ oral language and/or emergent literacy skills during shared book reading. Items were reworded as needed to suit the ELLECCT and grouped into sections (e.g. prompts) according to particular features of ECTs’ extratextual utterances. The first version of the ELLECCT contained a ‘question prompt’ section, generated by the study authors, to broadly classify questions as open-ended or closed. The original tool also contained ‘vocabulary promotion’ and ‘responsive statements’ sections adapted from Milburn et al. (2014) and a classification system for ‘print referencing’ adapted from Ezell and Justice (2000). The study authors also generated a ‘phonological awareness’ section that included a ‘sound awareness’ item adapted from Girolametto et al. (2012).

ELLECCT quantification stage

Participants

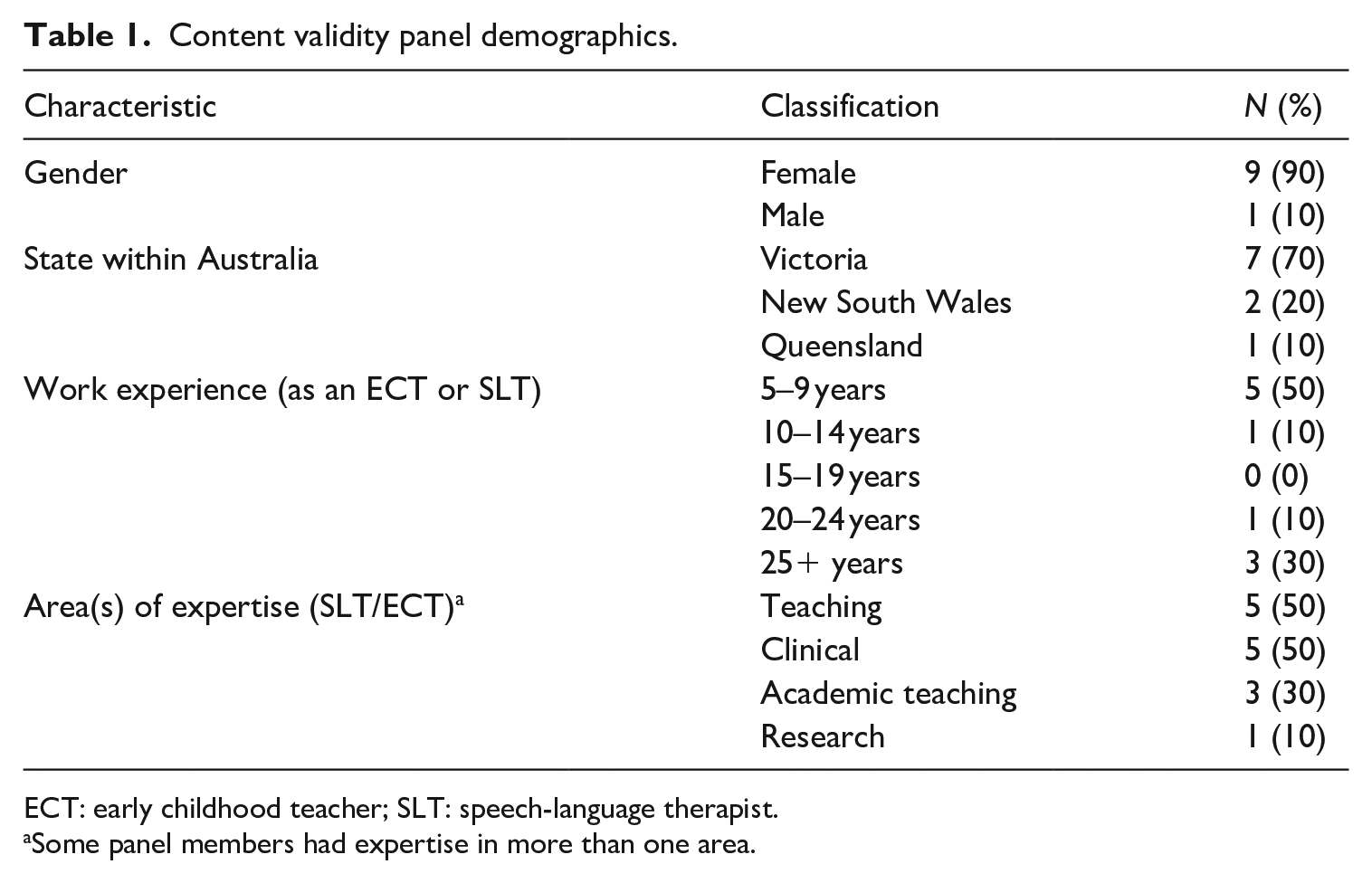

A panel of five ECTs and five speech-language therapists (SLTs) was established using purposive sampling. ECTs and SLTs were consulted for their disciplinary knowledge of children’s language and emergent literacy development. The panel was recruited through social media platforms, including closed Facebook groups and professional interest groups. To be eligible, participants had to hold a Bachelor’s or Master’s qualification in early childhood teaching and/or speech-language therapy and have a minimum of 5 years’ experience. SLTs were also required to have worked in the area of paediatric oral language and emergent literacy. Equal numbers of ECTs and SLTs were recruited to ensure representation of expertise and knowledge across both disciplines. Lynn (1986) recommended a panel of between five and 10 members when using the Content Validity Index (CVI), with at least five needed to account for chance agreement. Table 1 describes the characteristics of the content validity panel.

Table 1. Content validity panel demographics.

ECT: early childhood teacher; SLT: speech-language therapist.

Some panel members had expertise in more than one area.

Quantification process

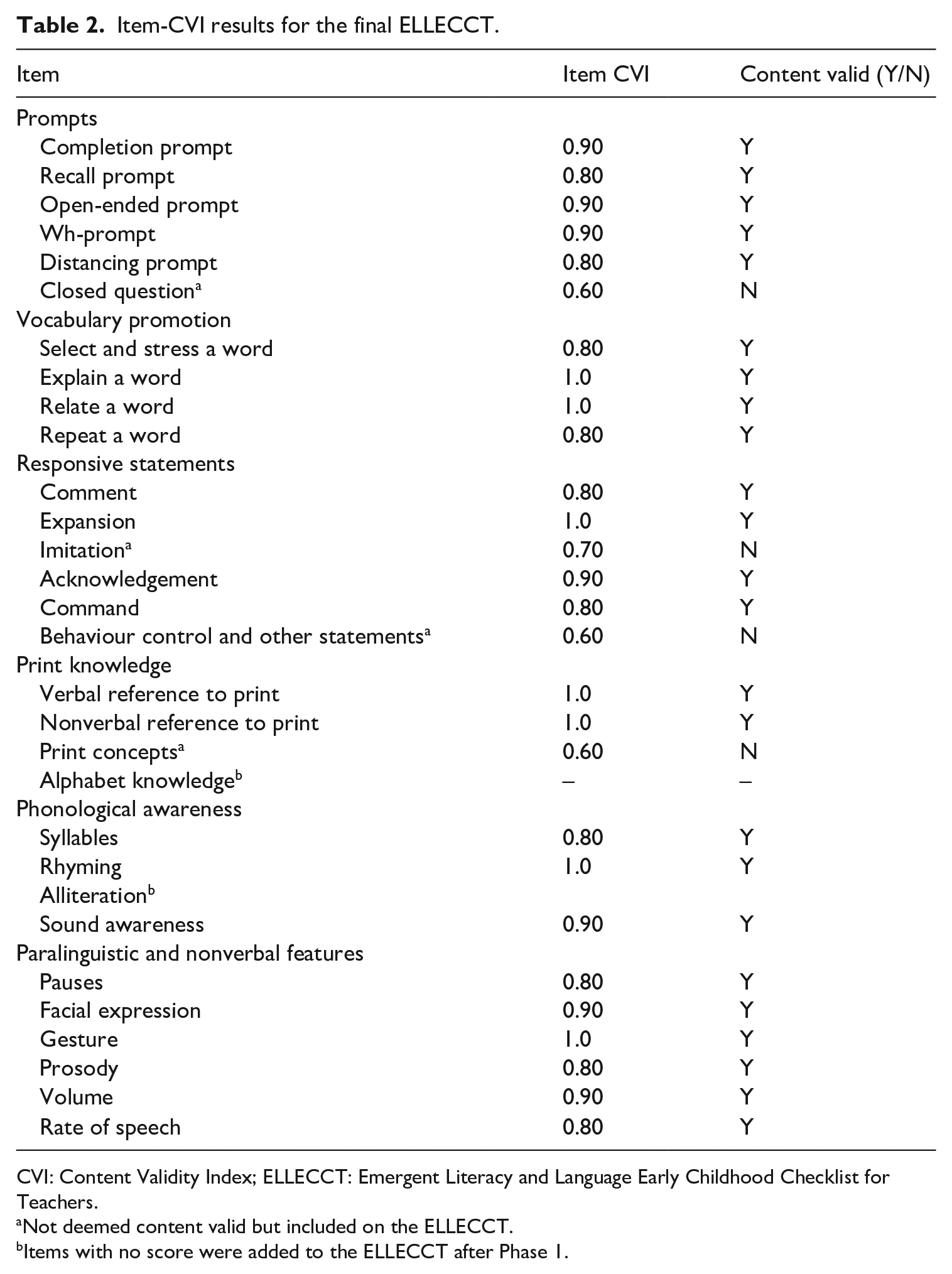

The CVI (Lynn, 1986) was used to determine the content validity of the ELLECCT. The CVI uses a proportion agreement method to quantify content validity. An item-level CVI (Item-CVI) was selected to ensure that each ELLECCT item had content validity (Polit & Beck, 2006). Two anonymous rounds of the quantification process were completed electronically. All individual ELLECCT items were rated on a 4-point scale, where 1 = no relevance, 2 = somewhat relevant, 3 = quite relevant and 4 = highly relevant (Polit & Beck, 2006). The content validity of each item was judged by the proportion of panel members who rated it as content valid, that is, 3 or 4 (Lynn, 1986): the Item-CVI was calculated by dividing the number of panel members giving a rating of 3 or 4 by the total number of panel members (Polit & Beck, 2006). With a 10-member panel, eight panel members needed to agree to endorse an item as content valid (Lynn, 1986), corresponding to an Item-CVI of 0.80. Items that did not achieve an Item-CVI of 0.80 were deemed to lack sufficient content validity and were considered for removal; however, such items were retained if there was empirical evidence in the literature that they enhance children’s oral language and emergent literacy outcomes.

Step 2 required the panel to suggest additional items or to recommend modifications to improve existing items (Lynn, 1986). All suggestions for new items were considered in relation to their alignment with the aims of the ELLECCT and incorporated into the second round of the quantification process. The panel then completed the same two-step quantification process to assess the Item-CVI of the newly suggested items, which were sent to all panel members one month after the first-round results were received. Once all responses were received, a second summary table was completed. Items with an Item-CVI of 0.80 or more were added to the ELLECCT.
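The Item-CVI calculation and decision rule described above can be sketched in a few lines of Python. This is a minimal illustration, not the authors' analysis code: the function names, the `has_empirical_support` flag and the ratings are hypothetical. With a 10-member panel, an Item-CVI of 0.80 corresponds to eight members rating the item 3 or 4.

```python
def item_cvi(ratings):
    """Item-CVI: proportion of panel members rating an item 3 or 4 on the 4-point relevance scale."""
    if not ratings:
        raise ValueError("at least one rating is required")
    if any(r not in (1, 2, 3, 4) for r in ratings):
        raise ValueError("ratings must be on the 1-4 relevance scale")
    relevant = sum(1 for r in ratings if r >= 3)
    return relevant / len(ratings)


def review_item(ratings, has_empirical_support=False, threshold=0.80):
    """Apply the 0.80 cut-off, keeping low-scoring items only if flagged as evidence-based."""
    cvi = item_cvi(ratings)
    if cvi >= threshold:
        return cvi, "content valid"
    if has_empirical_support:
        return cvi, "retained despite low Item-CVI"
    return cvi, "considered for removal"


# Hypothetical ratings from a 10-member panel
print(review_item([4, 4, 3, 4, 3, 4, 2, 4, 3, 4]))  # 9 of 10 rate 3 or 4
print(review_item([4, 3, 2, 2, 4, 3, 1, 4, 3, 4]))  # 7 of 10 rate 3 or 4
print(review_item([4, 3, 2, 2, 4, 3, 1, 4, 3, 4], has_empirical_support=True))
```

Returning the Item-CVI alongside the decision keeps the summary table auditable: an item that falls just below the cut-off can be distinguished from one that falls well below it.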

Content validity results

In the first quantification stage, seven items did not meet the required Item-CVI threshold of 0.80. Four of these items were removed; the other three (‘imitation’, ‘behaviour control and other statements’ and ‘closed questions’) were retained for their importance in capturing ECTs’ extratextual utterances during shared book reading. For example, closed questions have been found to help clarify a child’s response, check understanding and encourage use of target words (Hindman et al., 2019). The Item-CVI results for all ELLECCT items are displayed in Table 2. Panel members suggested using a broader range of prompts consistent with dialogic reading prompts (Whitehurst et al., 1988), which formed the ‘prompts’ section for the second quantification stage. The panel also recommended several ‘print concept categories’, including references to the title, author, illustrator and punctuation marks. Print concepts based on Clay’s (1993) descriptions were included in the second quantification stage. Several panel members recommended nonverbal features that enhance story meaning, such as gesture, prosody and pause time. We used Jefferson’s (2004) Conversation Analysis conventions to generate items for a ‘paralinguistic and nonverbal features’ section, covering facial expression, gesture, prosody, volume, rate of speech and pauses. In the second quantification stage, all items met the Item-CVI threshold except for some individual print concept items. These items were retained on the ELLECCT because of the strong evidence base for print concepts and their relevance to later reading ability (NELP, 2008). Overall, the quantification stage resulted in the addition of 11 new ELLECCT items.

Table 2. Item-CVI results for the final ELLECCT.

CVI: Content Validity Index; ELLECCT: Emergent Literacy and Language Early Childhood Checklist for Teachers.

Not deemed content valid but included on the ELLECCT.

Items with no score were added to the ELLECCT after Phase 1.

Additional modifications

The research team made further changes to the ELLECCT after completing the Item-CVI and before recruiting for the Phase 2 face validity study. An ‘alliteration’ item, adapted from Pullen and Justice (2003), was added to the ‘phonological awareness’ section so that all early phonological awareness skills were represented. A standalone ‘alphabet knowledge’ item, adapted from Girolametto et al. (2012), was added to the ‘print knowledge’ section; alphabet knowledge would previously have been coded under the ‘verbal reference to print’ item. These two code-focused items were added because of their role in supporting children’s early literacy development (NELP, 2008). The ‘sound awareness’ item was removed and collapsed under the ‘alphabet knowledge’ item, before being reinstated during the face validity phase.

Phase 2: face validity

The face validity of the ELLECCT was evaluated using a three-round modified Delphi process (Linstone & Turoff, 2002). The Delphi process is an established iterative method for obtaining consensus from an expert panel across multiple rounds (Hsu & Sandford, 2007; Vernon, 2009). Expert panels are often used to review tools for logical flow, organisation, grammar and suitability when establishing face validity (DeVon et al., 2007). A modified Delphi process was chosen over a conventional face-to-face Delphi technique involving individual interviews because of its advantages in logistics, time, cost and preservation of participant anonymity (Linstone & Turoff, 2002).

Participants

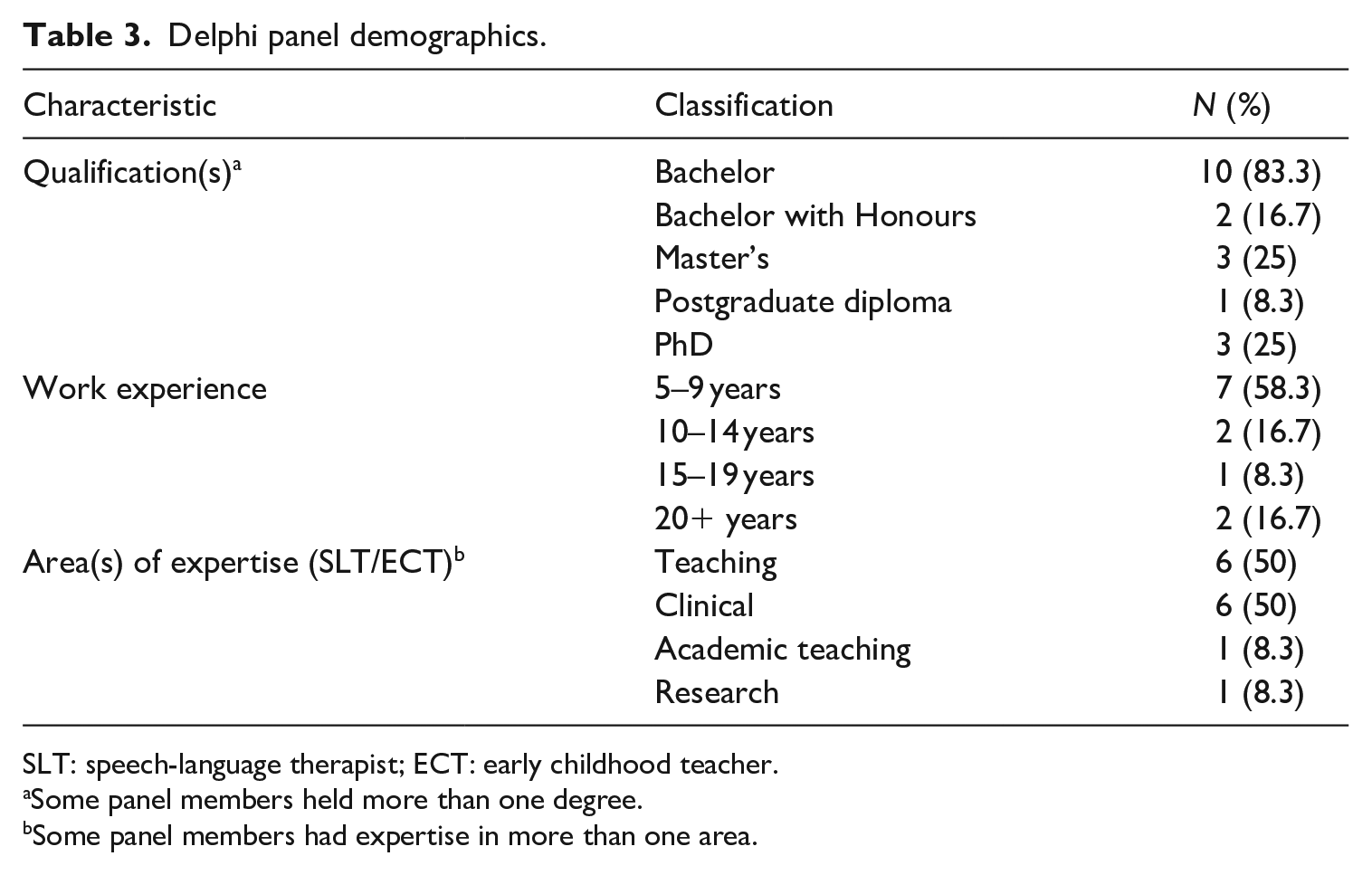

Using the same inclusion criteria as in Phase 1, a new panel of six ECTs and six SLTs was established for the modified Delphi process. There is no agreed number of panel members for a Delphi study. Linstone (1978) recommended a minimum panel size of 7, and larger panels allow for more diversity of participant experience (Keeney et al., 2011). Overly large panels, however, can make consensus harder to reach (Keeney et al., 2011). A panel of 12 was therefore selected to allow breadth of representation while optimising the likelihood of reaching some level of consensus by the end of the third round. This sample size was also in line with previous studies examining the face validity of social science tools using a Delphi process (e.g. Brian et al., 2016; Hernan et al., 2016). All panel members were recruited through social media platforms, including Twitter and closed Facebook groups. Equal numbers of SLTs and ECTs were again included to maximise representativeness. Panel members were drawn from two Australian states, and all met the inclusion criteria. Table 3 displays the characteristics of the Delphi panel respondents at the time of data collection.

Table 3. Delphi panel demographics.

SLT: speech-language therapist; ECT: early childhood teacher.

Some panel members held more than one degree.

Some panel members had expertise in more than one area.

Method for determining face validity

Participants responded anonymously to all three rounds of the Delphi process using questionnaires hosted on Qualtrics XM (Qualtrics, 2020). Questionnaire 1 was piloted with one SLT and one ECT, who made some minor suggestions about wording and formatting. Each question included a 5-point Likert-type scale, ranging from 1 = strongly agree to 5 = strongly disagree. Participants could also add a comment to each response to provide specific feedback or additional suggestions on any of the ELLECCT items. Anonymity was assured to maximise the likelihood of participants responding candidly, to reduce the impact of any potentially dominant individuals and to minimise issues associated with group dynamics or the influence of some participants’ opinions on those of others (Linstone & Turoff, 2002; McKenna, 1994). Anonymity also meant that participants were not aware of each other’s discipline membership.

Each Delphi round was open for 4 weeks or until responses had been received from all 12 participants. Email reminders were sent to all participants in the week before each questionnaire closed. There is no widely accepted benchmark for an adequate level of consensus within a Delphi process (Biondo et al., 2008). However, a threshold of 70% (the combined proportion of ‘agree’ and ‘strongly agree’ responses) is commonly reported (e.g. Stewart et al., 2017) and was adopted for this study. The research team met between Delphi rounds to discuss the findings (e.g. suggestions for improving ELLECCT items) and to develop the questions for the next questionnaire. For a suggestion to be adopted in the next round of the Delphi process, it had to (1) be a measure of oral language and emergent literacy during shared book reading and (2) relate to preschoolers. The study authors consulted with one another before each questionnaire was sent to the panel. The final version of the ELLECCT was confirmed after the reliability analysis (described later in the article) and is available in the Supplementary Material.
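The consensus rule above can be expressed as a short calculation. The sketch below is illustrative only and assumes that responses are stored as integers on the 5-point scale used in the questionnaires (1 = strongly agree, 2 = agree); the function names are hypothetical and are not part of the study's procedure.

```python
from typing import Sequence

# Assumed coding of the 5-point scale used in the questionnaires:
# 1 = strongly agree, 2 = agree, 3-5 = neutral to strongly disagree.
AGREE_CODES = {1, 2}
CONSENSUS_THRESHOLD = 0.70  # 70% summative agreement


def summative_agreement(ratings: Sequence[int]) -> float:
    """Proportion of panel members who chose 'strongly agree' or 'agree'."""
    if not ratings:
        raise ValueError("no responses recorded for this question")
    if any(r not in range(1, 6) for r in ratings):
        raise ValueError("ratings must be integers from 1 to 5")
    return sum(r in AGREE_CODES for r in ratings) / len(ratings)


def reached_consensus(ratings: Sequence[int],
                      threshold: float = CONSENSUS_THRESHOLD) -> bool:
    """True when summative agreement meets or exceeds the threshold."""
    return summative_agreement(ratings) >= threshold


# With 12 respondents, 9 must agree (9/12 = 0.75); 8/12 (about 0.67) falls short.
print(reached_consensus([1] * 5 + [2] * 4 + [3] * 3))  # True
print(reached_consensus([1] * 4 + [2] * 4 + [4] * 4))  # False
```

With the 11 respondents of a smaller round, 8 agreements are sufficient (8/11 is about 0.73), so the number of agreements needed depends on how many panel members respond.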

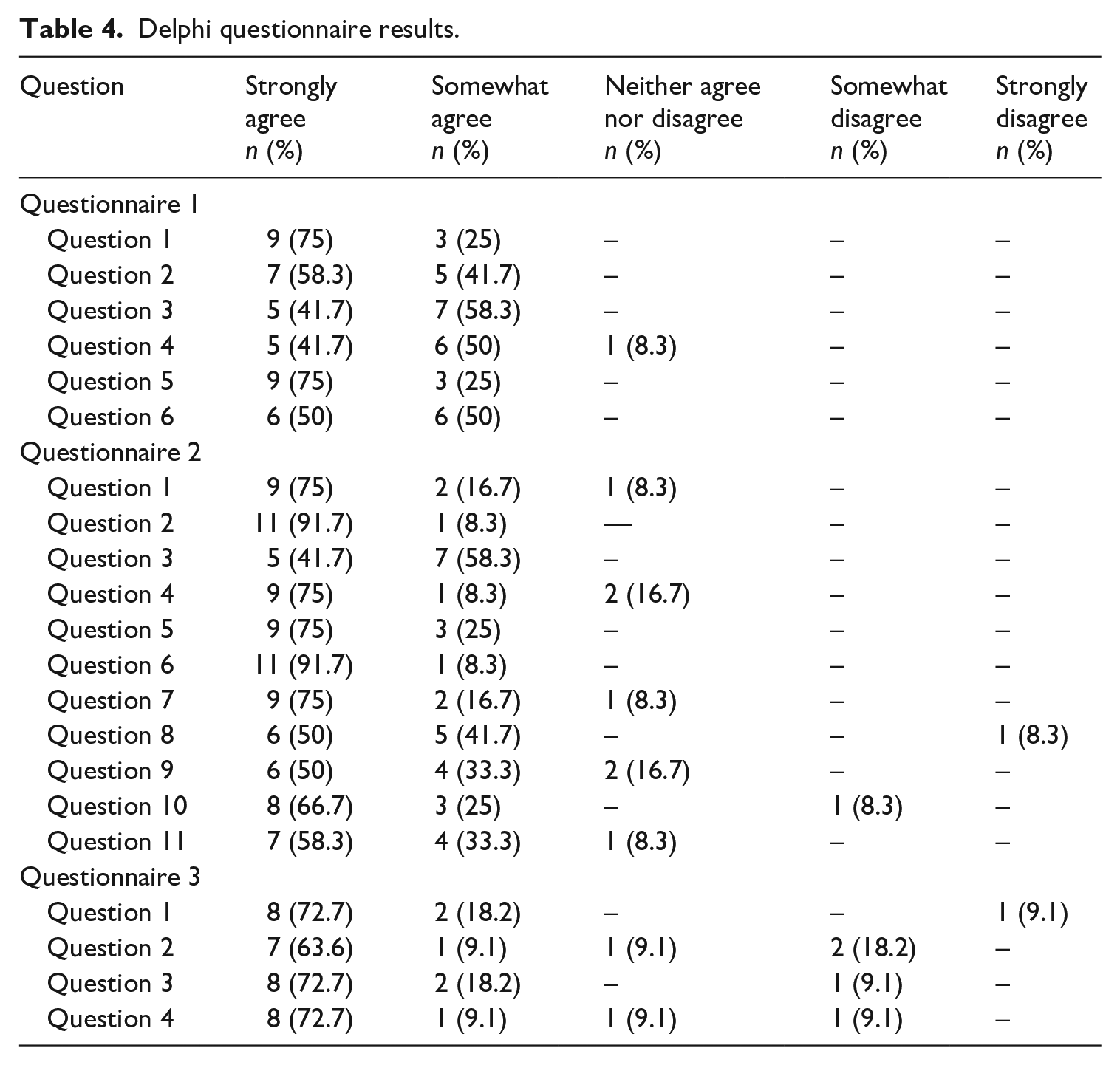

Face validity results

The three-round Delphi process resulted in a high level of consensus on each questionnaire. All questionnaires are included in the Supplementary Material, and the results are displayed in Table 4. The results from the first questionnaire indicated that the panel agreed the ELLECCT items had face validity and that the overall structure, scoring and language of the ELLECCT items and checklist were appropriate. Participants’ additional comments suggested adding items and making minor modifications to item wording and examples; however, these comments did not result in any changes to the ELLECCT after round 1.

Table 4. Delphi questionnaire results.

All 12 panel members responded to the second Delphi questionnaire. Consensus was reached to add examples to the ‘vocabulary promotion’ items, to add an ‘additional observations’ section to the bottom of the tool and to add a ‘sound awareness’ item. The ‘sound awareness’ item, which had previously been endorsed as having content validity in Phase 1, was reinstated to the tool. Agreement on the remaining questions resulted in wording changes to the scoring section, item descriptors or examples.

The third and final questionnaire contained four questions and was completed by 11 participants. Summative agreement reached the consensus threshold, resulting in minor changes to item descriptions and examples. We decided not to adopt one panel member’s suggestion to alter the ‘explain’ item to include an example relating the meaning of the word to the context of the child’s life. This example was considered too similar to the purpose of the ‘relate’ item, in which a word’s meaning is related to the child’s life.

Taken together, these results provide strong support for the validity of the ELLECCT: 25 of the 29 final items were rated as having content validity, and all items were rated as having face validity.

Phase 3 and Phase 4: intra-rater and inter-rater reliability

Shared book reading observation data

Shared book reading observation data were collected from ECTs to determine the intra-rater and inter-rater reliability of the ELLECCT. A total of 32 ECTs working in 26 government-funded preschools across metropolitan (

A single session of each ECT reading a book of their choice to the preschoolers in their class was videorecorded. The mean time that ECTs spent reading a story to the children was 6.94 minutes (SD: 2.91, median: 6.35, range: 2.33–15.93). To capture ‘business-as-usual’ practices, the books were chosen by the ECTs rather than by the researchers. Most of the observations involved whole-class shared book reading, although class size varied. Observations were transcribed verbatim by the first author before undergoing reliability analysis. All extratextual utterances were classified by the first author using the ELLECCT. These included extratextual utterances spoken by the ECT throughout the story as well as book-related discussion before and after the reading. Reading the story text aloud was not counted as an utterance.

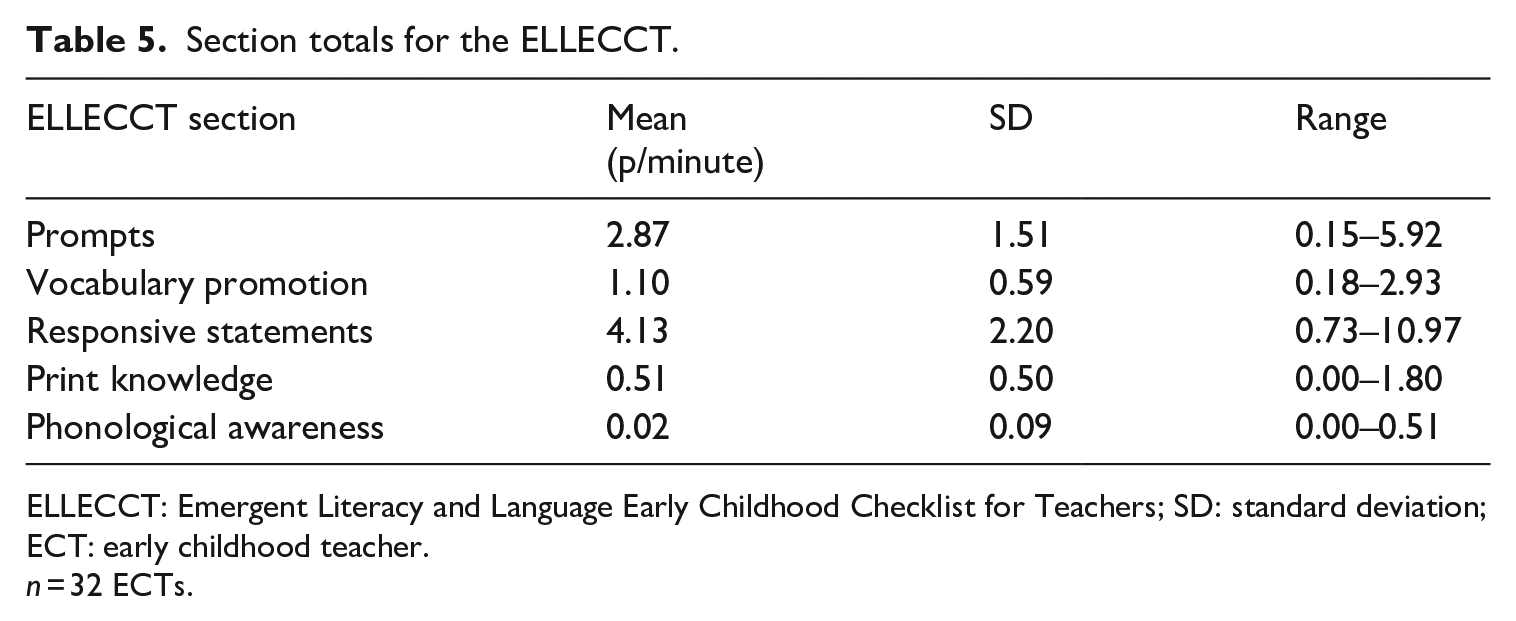

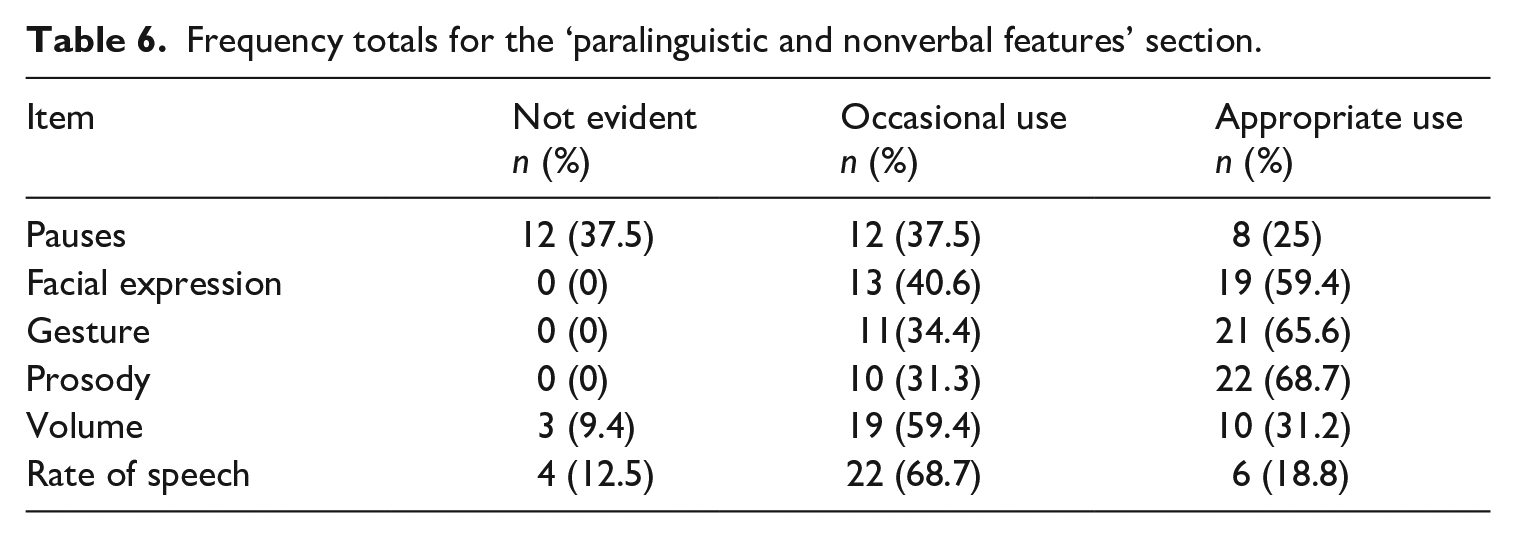

Data for each section of the ELLECCT were obtained from the shared book reading observation videos. Raw counts were converted to rates per minute to account for differences in the time ECTs spent reading to children during the observations. The ELLECCT section totals for all 32 shared book reading observations are shown in Table 5. Paralinguistic and nonverbal feature item frequencies are presented in Table 6.
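The per-minute conversion is a simple division of each raw section count by session length. The following is a minimal sketch, assuming each session is stored with its reading time in minutes; the `Observation` structure, IDs and counts are hypothetical and are not study data.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class Observation:
    """One videorecorded session; the IDs and numbers used below are hypothetical."""
    ect_id: str
    minutes: float                  # time spent reading, in minutes
    section_counts: Dict[str, int]  # raw frequency count per ELLECCT section


def per_minute(obs: Observation) -> Dict[str, float]:
    """Convert raw section counts into rates per minute of reading."""
    if obs.minutes <= 0:
        raise ValueError("session length must be positive")
    return {section: count / obs.minutes
            for section, count in obs.section_counts.items()}


short = Observation("ECT-A", 2.5, {"prompts": 5})
long_ = Observation("ECT-B", 10.0, {"prompts": 20})
print(per_minute(short), per_minute(long_))  # {'prompts': 2.0} {'prompts': 2.0}
```

The two hypothetical sessions differ fourfold in raw count but have identical rates, which is the comparison the normalisation is intended to make possible across sessions of different lengths.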

Table 5. Section totals for the ELLECCT.

ELLECCT: Emergent Literacy and Language Early Childhood Checklist for Teachers; SD: standard deviation; ECT: early childhood teacher.

Table 6. Frequency totals for the ‘paralinguistic and nonverbal features’ section.

Reliability scoring method

Reliability measures were completed using the transcripts and videorecorded observations. The written transcripts were used to measure reliability for extratextual utterances, as they allowed in-depth analysis of the strategies used by ECTs. The video recordings were required to assess reliability for all nonverbal items (e.g. nonverbal print references), as these could not be captured in written transcripts. Likewise, paralinguistic features were rated from the video recordings because audio was required to capture changes in the ECTs’ volume, prosody and rate of speech. All utterances were coded using the final, validated version of the ELLECCT. A single utterance could be coded under more than one ELLECCT item (e.g. both ‘WH prompt’ and ‘open-ended prompt’). Intra-rater and inter-rater reliability were computed for each section of the ELLECCT (e.g. ‘prompts’) rather than for individual items: the frequency counts for all items within a section were summed to give a section score. Reliability was reported at the section level because many items received a frequency count of zero in the reliability sample; this also prevented inflated reliability scores for items with very low frequency counts. Cohen’s kappa was used to determine intra-rater and inter-rater reliability for the ‘paralinguistic and nonverbal features’ section because this section yields categorical data.
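Summing item codes into section scores, with utterances allowed to carry more than one code, can be sketched as follows. The item-to-section mapping is a hypothetical, partial illustration (the full list of 29 items is in the Supplementary Material), and the assignment of ‘explain’ and ‘relate’ to ‘vocabulary promotion’ is an assumption.

```python
from collections import Counter
from typing import Dict, List

# Hypothetical, partial item-to-section mapping for illustration only.
ITEM_SECTION: Dict[str, str] = {
    "WH prompt": "prompts",
    "open-ended prompt": "prompts",
    "explain": "vocabulary promotion",
    "relate": "vocabulary promotion",
    "select and stress a word": "vocabulary promotion",
}


def section_scores(coded_utterances: List[List[str]]) -> Counter:
    """Sum item codes into section scores.

    Each inner list holds the item codes given to one utterance; an utterance
    with two codes contributes one point to each code's section.
    """
    scores: Counter = Counter()
    for codes in coded_utterances:
        for item in codes:
            scores[ITEM_SECTION[item]] += 1
    return scores


transcript = [["WH prompt", "open-ended prompt"], ["explain"], ["WH prompt"]]
scores = section_scores(transcript)
print(scores["prompts"], scores["vocabulary promotion"], scores["print knowledge"])  # 3 1 0
```

Using a `Counter` means sections that were never coded, such as ‘print knowledge’ in this toy transcript, simply read as zero.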

Reliability method

Intra-rater and inter-rater reliability were assessed on a random sample of 25% (n = 8) of the shared book reading observational transcripts. For intra-rater reliability, the first author completed two sets of ratings 21–28 days apart to minimise recall bias. Videos were reassessed in random order to minimise recall of outcomes from examination in a fixed order (Lucas et al., 2010). Inter-rater reliability was assessed between the first author and an independent coder, an experienced paediatric SLT. Before inter-rater reliability testing, the first author trained the independent coder in using the ELLECCT and coding videos. After the initial training session, the first author and the independent coder each rated one video sample using the ELLECCT as practice. This provided an opportunity to discuss minor discrepancies and provide further training; discrepancies that could not be resolved were discussed with the other members of the research team. The independent coder then classified two additional videos as part of the practice reliability check. The first author and the independent coder met afterwards to discuss discrepancies, and the first author provided additional training if required. An additional transcript was completed for the ‘paralinguistic and nonverbal features’ section, as the initial reliability comparison for this section was lower than for the other ELLECCT sections.
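A minimal sketch of the sampling steps described above: drawing 25% of the 32 observations without replacement and reshuffling them for the second rating pass. The observation labels and seeds are hypothetical; the article does not state how the random selection was performed.

```python
import random
from typing import List, Optional, Sequence


def reliability_sample(observation_ids: Sequence[str], proportion: float = 0.25,
                       seed: Optional[int] = None) -> List[str]:
    """Draw a simple random sample (without replacement) of observations."""
    k = round(len(observation_ids) * proportion)
    return random.Random(seed).sample(list(observation_ids), k)


def rerating_order(sample: Sequence[str], seed: Optional[int] = None) -> List[str]:
    """Return a freshly shuffled copy of the sample for the second rating pass."""
    order = list(sample)
    random.Random(seed).shuffle(order)
    return order


ids = [f"obs{i:02d}" for i in range(1, 33)]  # hypothetical labels for 32 videos
sample = reliability_sample(ids, seed=2021)
second_pass = rerating_order(sample, seed=7)
print(len(sample), sorted(second_pass) == sorted(sample))  # 8 True
```

Recording the seeds would make the selection and re-rating order reproducible, which can help when a reliability subsample has to be reconstructed later.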

Intra-rater and inter-rater reliability results

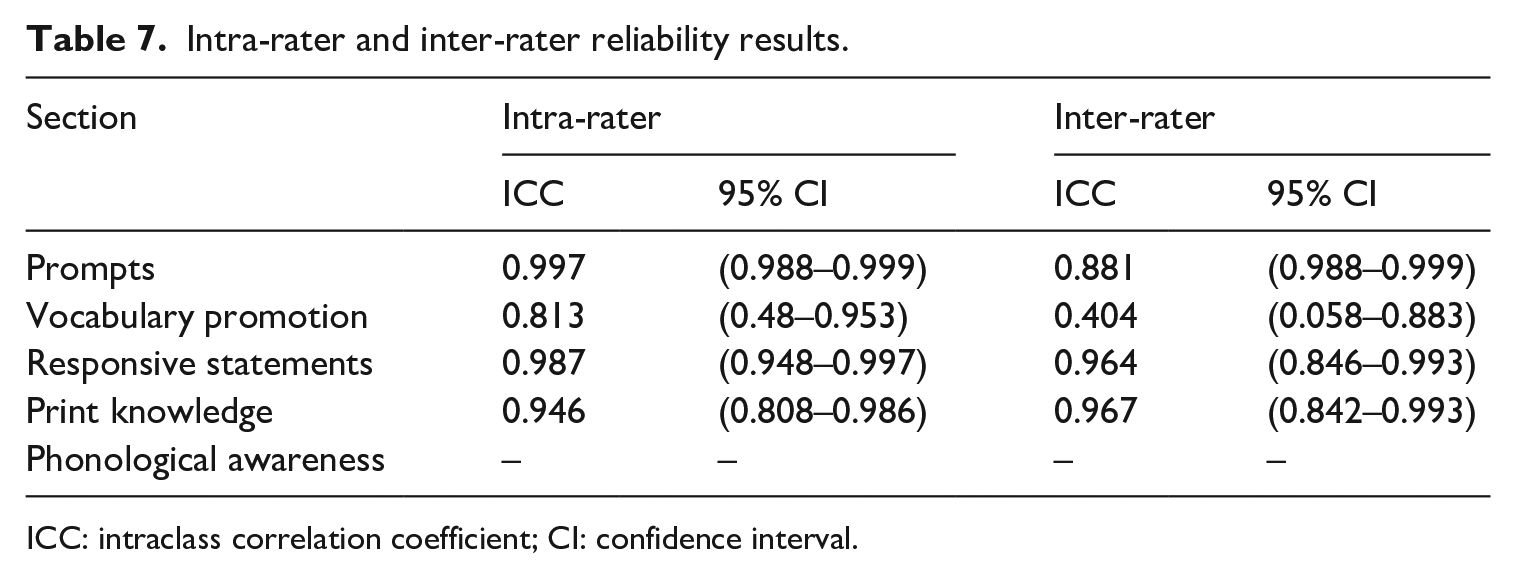

Intra-rater and inter-rater reliability were assessed using the intraclass correlation coefficient (ICC; Shrout & Fleiss, 1979). ICC scores and 95% confidence intervals were calculated using a two-way random-effects model with absolute agreement for both reliability measures (Shrout & Fleiss, 1979). Intra-rater reliability was defined as absolute agreement between two ratings by the same rater of the eight randomly selected observation videos. For inter-rater reliability, absolute agreement between two different raters (the first author and the independent coder) on the eight randomly selected observation videos was appraised. All ICC measurements were completed using Stata Core 16.1 (StataCorp, 2019). The ICCs and confidence intervals for intra-rater and inter-rater reliability are displayed in Table 7.
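For readers who want to see the arithmetic behind a two-way random-effects, absolute-agreement ICC, the sketch below computes the single-measures form, ICC(2,1), from the ANOVA mean squares. The article reports using Stata and does not state whether single- or average-measures ICCs were reported; the single-measures form is an assumption here, and confidence intervals are not computed.

```python
from typing import Sequence


def icc_2_1(ratings: Sequence[Sequence[float]]) -> float:
    """ICC(2,1): two-way random effects, absolute agreement, single measures.

    ratings[i][j] is the score for target i (e.g. one observation's section
    total) from rater or rating occasion j.
    """
    n = len(ratings)
    if n < 2:
        raise ValueError("need at least two targets")
    k = len(ratings[0])
    if k < 2 or any(len(row) != k for row in ratings):
        raise ValueError("need a complete table with at least two raters")

    grand = sum(sum(row) for row in ratings) / (n * k)
    row_means = [sum(row) / k for row in ratings]
    col_means = [sum(row[j] for row in ratings) / n for j in range(k)]

    ss_rows = k * sum((m - grand) ** 2 for m in row_means)   # between targets
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)   # between raters
    ss_total = sum((x - grand) ** 2 for row in ratings for x in row)
    ss_error = ss_total - ss_rows - ss_cols                  # residual

    ms_rows = ss_rows / (n - 1)
    ms_cols = ss_cols / (k - 1)
    ms_error = ss_error / ((n - 1) * (k - 1))

    denom = ms_rows + (k - 1) * ms_error + k * (ms_cols - ms_error) / n
    if denom == 0:
        # e.g. every score is zero, as for a section no ECT used
        raise ZeroDivisionError("ICC is undefined when the ratings have no variance")
    return (ms_rows - ms_error) / denom


# Worked example table from Shrout and Fleiss (1979): 6 targets, 4 judges.
shrout_fleiss_example = [
    [9, 2, 5, 8],
    [6, 1, 3, 2],
    [8, 4, 6, 8],
    [7, 1, 2, 6],
    [10, 5, 6, 9],
    [6, 2, 4, 7],
]
print(round(icc_2_1(shrout_fleiss_example), 2))  # 0.29
```

The zero-variance guard mirrors a practical point in the results: when no rater codes any item in a section, every score is zero and the ICC cannot be computed.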

Table 7. Intra-rater and inter-rater reliability results.

ICC: intraclass correlation coefficient; CI: confidence interval.

Excellent intra-rater reliability was obtained across the first four ELLECCT sections (Cicchetti, 1994). For inter-rater reliability, excellent agreement was obtained for ‘prompts’, ‘responsive statements’ and ‘print knowledge’. Neither intra-rater nor inter-rater reliability could be calculated for ‘phonological awareness’ because the ECTs did not use any of these strategies in the sample data, so no items in this section were coded by either rater. A ‘fair’ ICC score (Cicchetti, 1994) was obtained for ‘vocabulary promotion’; however, the wide 95% confidence interval, with a lower limit near zero, indicates considerable variability between the two raters. The largest discrepancy between raters was found for the ‘select and stress a word’ item. When agreement between the raters was measured on all other items within this section, 84% agreement was achieved.
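The interpretive labels used above follow Cicchetti’s (1994) guidelines: below 0.40 poor, 0.40–0.59 fair, 0.60–0.74 good and 0.75–1.00 excellent. The sketch below applies those bands to an estimate and to both confidence limits, which makes a wide interval easy to see. The example values are hypothetical and are not the values in Table 7.

```python
def cicchetti_label(value: float) -> str:
    """Cicchetti (1994) guideline for interpreting ICC values."""
    if value < 0.40:
        return "poor"
    if value < 0.60:
        return "fair"
    if value < 0.75:
        return "good"
    return "excellent"


def describe_icc(point: float, lower: float, upper: float) -> str:
    """Label the estimate and both 95% CI limits, so a wide interval is visible."""
    return (f"ICC {point:.2f} ({cicchetti_label(point)}); "
            f"95% CI {lower:.2f} ({cicchetti_label(lower)}) to "
            f"{upper:.2f} ({cicchetti_label(upper)})")


print(describe_icc(0.50, 0.02, 0.86))
# ICC 0.50 (fair); 95% CI 0.02 (poor) to 0.86 (excellent)
```

When the two limits fall in different bands, as in this hypothetical case, the point estimate alone understates how uncertain the section’s reliability is.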

Cohen’s kappa (Cohen, 1960) was calculated for the ‘paralinguistic and nonverbal features’ section because this section was scored using categorical data. Analyses were completed using IBM SPSS Statistics for Mac, Version 26.0.0.0. A Cohen’s kappa of 0.64 (substantial agreement) was achieved for intra-rater reliability and 0.54 (moderate agreement) for inter-rater reliability (Landis & Koch, 1977).
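Cohen’s kappa compares observed agreement with the agreement expected by chance from each rater’s marginal proportions. The following is a minimal sketch of unweighted kappa with the Landis and Koch (1977) benchmarks (0.00–0.20 slight, 0.21–0.40 fair, 0.41–0.60 moderate, 0.61–0.80 substantial, 0.81–1.00 almost perfect). The numeric coding of the 3-point global ratings and the example ratings are hypothetical, and whether the analysis used weighted or unweighted kappa is not stated in the article.

```python
from collections import Counter
from typing import Hashable, Sequence


def cohens_kappa(rater_a: Sequence[Hashable], rater_b: Sequence[Hashable]) -> float:
    """Unweighted Cohen's kappa for two sets of categorical ratings."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("raters must score the same, non-empty set of items")
    n = len(rater_a)
    p_observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    # Chance agreement: product of the raters' marginal proportions per category.
    p_expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)
    if p_expected == 1:
        raise ZeroDivisionError("kappa is undefined when both raters use one identical category")
    return (p_observed - p_expected) / (1 - p_expected)


def landis_koch_label(kappa: float) -> str:
    """Landis and Koch (1977) benchmarks for kappa."""
    if kappa < 0:
        return "poor"
    for upper, label in ((0.20, "slight"), (0.40, "fair"), (0.60, "moderate"),
                         (0.80, "substantial")):
        if kappa <= upper:
            return label
    return "almost perfect"


first = [3, 2, 2, 1, 3, 2]   # hypothetical 3-point ratings for the six features
second = [3, 2, 1, 1, 3, 3]
k = cohens_kappa(first, second)
print(round(k, 2), landis_koch_label(k))  # 0.52 moderate
```

Here the raters agree on four of six features (0.67), but chance agreement is 11/36, so kappa is noticeably lower than raw agreement.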

ELLECCT final sections and items

The validity and reliability process resulted in a final version of the ELLECCT comprising two main parts: a classification system and a checklist (see Supplementary Material). The classification system is divided into five sections targeting oral language and emergent literacy strategies during shared book reading: Prompts, Responsive Statements, Vocabulary Promotion, Print Knowledge and Phonological Awareness. It also includes a section targeting ECTs’ use of paralinguistic and nonverbal features when reading to preschoolers. The ELLECCT contains 29 items across these six sections. The second part of the ELLECCT contains the observation checklist and scoring system. The first five sections use frequency count data, whereby each observation of an item is awarded one point, and a single utterance may earn multiple points. The ‘paralinguistic and nonverbal features’ section uses a 3-point global rating scale to classify ECTs’ use of pausing, facial expression, gesture, prosody, volume and rate of speech.
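The two scoring formats could be held in a single record per observation. The sketch below is a hypothetical data structure, not part of the published tool: it assumes the 3-point global scale is coded 1–3 and uses the section and feature names listed above, together with the free-text ‘additional observations’ section added during the Delphi process.

```python
from dataclasses import dataclass, field
from typing import Dict

FREQUENCY_SECTIONS = ("prompts", "responsive statements", "vocabulary promotion",
                      "print knowledge", "phonological awareness")
GLOBAL_FEATURES = ("pausing", "facial expression", "gesture", "prosody",
                   "volume", "rate of speech")
RATING_POINTS = (1, 2, 3)  # assumed numeric coding of the 3-point global scale


@dataclass
class ChecklistRecord:
    """Hypothetical container for one completed ELLECCT observation."""
    counts: Dict[str, int] = field(
        default_factory=lambda: {s: 0 for s in FREQUENCY_SECTIONS})
    global_ratings: Dict[str, int] = field(default_factory=dict)
    additional_observations: str = ""

    def add_point(self, section: str) -> None:
        """Award one point each time an item in a frequency section is observed."""
        if section not in FREQUENCY_SECTIONS:
            raise KeyError(f"unknown frequency section: {section}")
        self.counts[section] += 1

    def rate(self, feature: str, rating: int) -> None:
        """Record one global rating per paralinguistic or nonverbal feature."""
        if feature not in GLOBAL_FEATURES:
            raise KeyError(f"unknown feature: {feature}")
        if rating not in RATING_POINTS:
            raise ValueError("global ratings use a 3-point scale")
        self.global_ratings[feature] = rating

    def is_complete(self) -> bool:
        """True once every feature has its own global rating."""
        return set(self.global_ratings) == set(GLOBAL_FEATURES)


record = ChecklistRecord()
record.add_point("prompts")
record.add_point("prompts")
for feature in GLOBAL_FEATURES:
    record.rate(feature, 2)
print(record.counts["prompts"], record.is_complete())  # 2 True
```

Keeping one rating per feature, rather than a single overall score, preserves the item-level detail that the global scale is intended to capture.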

Discussion

This study describes the development of the ELLECCT, an observation tool for documenting ECTs’ shared book reading with preschoolers, and provides initial evidence of its psychometric robustness. The results indicate strong evidence of validity and moderate evidence of reliability. Although these preliminary psychometric findings are positive, additional work is required to determine the ELLECCT’s effectiveness as a training tool for ECTs.

Using the CVI (Lynn, 1986), we have shown that ECTs and SLTs with relevant disciplinary knowledge agreed that the ELLECCT has promising content validity and is representative of shared book reading constructs that have been shown to support preschoolers’ oral language and emergent literacy skills. The face validity analysis shows that ECTs and SLTs consider the ELLECCT to have an appropriate layout and scoring system and to be user-friendly, all of which are important considerations in designing a quality tool. These validation results support the use of the ELLECCT, which may be applicable to research, professional development, coaching and/or training. For example, following training, the ELLECCT could be used as a coaching tool to enhance ECTs’ use of extratextual utterances and their ability to engage children during shared book reading. We suggest that our validation method was strengthened by recruiting ECTs, the target users of the ELLECCT, as this may increase their interest in using the tool. However, research and piloting are required to determine its efficacy as a training or coaching tool.

An important first step in promoting uptake of the ELLECCT may be to partner SLTs with ECTs who work in community settings to provide shared book reading coaching. Evidence supports the importance of professional development and coaching in enhancing ECTs’ shared book reading skills (Hindman & Wasik, 2012; Powell et al., 2010) and the use of observational assessment to profile teachers’ strengths and weaknesses and to document change for professional development purposes (Pianta & Hamre, 2009). Furthermore, professional learning for ECTs delivered by SLTs has been found to be effective in the context of shared book reading (Milburn et al., 2014; Rezzonico et al., 2015). The ELLECCT was designed to identify ECTs’ oral language and emergent literacy extratextual utterances; however, the ECTs in this study had not received any prior training. This is a limitation of the current study because it resulted in frequency counts of zero for some items. Consistent with previous research, these ECTs did not frequently use strategies to support children’s emergent literacy skills during shared book reading (Gettinger & Stoiber, 2014; Zucker et al., 2009). Future research should examine the psychometric properties of the ELLECCT with a larger, more varied sample that includes ECTs who have undergone explicit training and may therefore use a broader range of extratextual utterances. The results from the current study provide a basis for such investigation, and further application of the ELLECCT would allow the current validation evidence to be extended and strengthened. The next step in investigating the validity of the ELLECCT would be to compare it with other available shared book reading tools to evaluate construct validity.

Intra-rater reliability was high, whereas inter-rater reliability varied across sections. Inter-rater reliability scores for most ELLECCT sections are comparable to those of other shared book reading tools (Pentimonti et al., 2021). Despite these positive results, inter-rater reliability in this study was assessed with only one additional rater. In future studies, it would be useful to compare reliability across multiple trained raters. Furthermore, reliability testing in this study was completed for the sections of the ELLECCT rather than item by item. A larger sample would allow a deeper examination of individual item reliability and of other reliability measures, such as the tool’s internal consistency. A significant limitation of the ELLECCT’s inter-rater reliability is the low ‘vocabulary promotion’ score. The ‘select and stress a word’ item reduced the overall reliability of this section because of its subjective nature; an item-by-item analysis of inter-rater reliability within this section would therefore have been beneficial. ECTs, SLTs and researchers should be aware of this limitation of the ELLECCT, and future work is required to improve the inter-rater reliability of this section.

The reliability results for the ‘paralinguistic and nonverbal features’ section were lower than for other sections of the tool. This section of the ELLECCT uses a global rating scale, which is more time-efficient but can be less reliable because global scales are not tied to unitary behaviours (Crawford et al., 2013; Pianta & Hamre, 2009). The ELLECCT uses a 3-point global rating scale to help capture ECTs’ use of paralinguistic and nonverbal features more reliably. A disadvantage of some global ratings is that a single rating spans several instructional components (Crawford et al., 2013). We attempted to address this concern by giving each of the six items in the ‘paralinguistic and nonverbal features’ section its own global rating, so that specific instructional features, such as the use of pausing or gesture, are captured individually. Future versions of the ELLECCT should examine ways to improve the reliability of this section. Because this section captures data on less-investigated shared book reading strategies, more research is also needed on the impact these strategies have on children’s engagement and oral language skills.

Limitations of the shared book reading observations also warrant consideration. In an attempt to capture ‘business-as-usual’ practices, ECTs were observed on one occasion only and were invited to read a book of their choice. Given what is known about the relationship between ECTs’ extratextual utterances and contextual and book-related factors, future research needs to give more consideration to shared book reading conditions. The amount of extratextual talk used by ECTs in the current study may have been influenced by print-salient features of the text (Zucker et al., 2009) or the type of text read (Price et al., 2012). The number of children present during the shared reading session may also have affected extratextual talk and engagement (Powell et al., 2008; Wasik, 2008). Future research involving the ELLECCT should endeavour to control for these factors and allow for repeated readings of the same text to assess the stability of measures. Given the low frequency of some behaviours, future research using the ELLECCT should also include longer observations. The length of the shared book reading sessions varied, and although this was addressed by calculating extratextual utterances per minute, the observation time may have been inadequate compared with the 15 minutes recommended for shared reading sessions (Dickinson & Tabors, 2001).

Conclusion

Shared book reading in preschool settings provides an important opportunity for ECTs to build children’s oral language and emergent literacy skills. The ELLECCT is a comprehensive tool designed to document ECTs’ shared book reading practices. We have reported on the development and psychometric properties of the ELLECCT. The results indicate promising support for the use of the ELLECCT in capturing and quantifying the extratextual utterances commonly used by ECTs when reading to preschoolers. The ELLECCT may be used as a tool to identify potential gaps in ECTs’ book reading practices and support training. Future research is required to address the limitations identified and further evaluate the potential of the ELLECCT as a training and coaching tool.

Supplemental Material

sj-docx-1-fla-10.1177_01427237211056735 – Supplemental material for The development and psychometric properties of a shared book reading observational tool: The Emergent Literacy and Language Early Childhood Checklist for Teachers (ELLECCT)

Supplemental material, sj-docx-1-fla-10.1177_01427237211056735 for The development and psychometric properties of a shared book reading observational tool: The Emergent Literacy and Language Early Childhood Checklist for Teachers (ELLECCT) by Tessa Weadman, Tanya Serry and Pamela C. Snow in First Language

Supplemental material, sj-docx-2-fla-10.1177_01427237211056735 for The development and psychometric properties of a shared book reading observational tool: The Emergent Literacy and Language Early Childhood Checklist for Teachers (ELLECCT) by Tessa Weadman, Tanya Serry and Pamela C. Snow in First Language

Supplemental material, sj-docx-3-fla-10.1177_01427237211056735 for The development and psychometric properties of a shared book reading observational tool: The Emergent Literacy and Language Early Childhood Checklist for Teachers (ELLECCT) by Tessa Weadman, Tanya Serry and Pamela C. Snow in First Language

Supplemental material, sj-docx-4-fla-10.1177_01427237211056735 for The development and psychometric properties of a shared book reading observational tool: The Emergent Literacy and Language Early Childhood Checklist for Teachers (ELLECCT) by Tessa Weadman, Tanya Serry and Pamela C. Snow in First Language

Footnotes

Acknowledgements

The authors acknowledge all of the early childhood teachers and speech-language therapists who participated in this study. They express their sincere thanks to Emily Greaves for assisting with reliability testing. They also thank Dr. Don Vicendese for his contributions to the statistical analyses for the reliability testing.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Ethical approval

Ethics approval for this study was received from the Victorian Department of Education and Training and the La Trobe University Human Ethics board.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Supplemental material

Supplemental material for this article is available online.

References
