Abstract

In this paper, a generic multi-sensor fusion framework is developed for the localization of intelligent vehicles and mobile robots. The localization framework is based on moving horizon estimation (MHE). Unlike the commonly used probabilistic filtering algorithms – for example, extended Kalman filter (EKF) and unscented Kalman filter (UKF) – MHE relies on solving successive least squares optimization problems over the innovation of multiple sensors’ measurements and a specific estimation horizon. In this paper, we present an efficient and generic multi-sensor fusion scheme, based on MHE. The proposed multi-sensor fusion scheme is capable of operating with different sensors’ rates, missing measurements, and outliers. Moreover, the proposed scheme is based on a multi-threading architecture to reduce its computational cost, making it more feasible for practical applications. The MHE fusion method is tested using simulated data as well as real experimental data sequences from an intelligent vehicle and a mobile robot combining measurements from different sensors to get accurate localization results. The performance of MHE is compared against that of UKF, where the MHE estimation results show superior performance.

Introduction

Localization is one of the main modules of autonomous systems such as vehicles and mobile robots. It acts as the basis for decision making and navigation control. Research effort on developing more reliable and accurate localization methods has been increasing in the past few years in both academia and industry. This problem can be addressed by the use of highly accurate sensors such as the differential global positioning system (D-GPS). However, such sensors are usually costly and their accuracy degrades in remote areas or in GPS-denied environments. An alternative solution is to use several low-cost sensors of lower accuracy and to apply a sensor fusion technique (Khaleghi et al., 2013) in order to get a better pose estimate of the autonomous system (Al-Kaff et al., 2018; Duan et al., 2014; Kelly and Sukhatme, 2011; Lu et al., 2017; Luo and Chang, 2012; Magrin and Todt, 2016; Osman et al., 2019a; Urmson et al., 2008).

The localization problem can be addressed by two different approaches: recursive filtering (through linearizing the nonlinear system models) and optimization-based approaches (without the need for linearization). Recursive filtering methods are based on Bayesian estimation methods such as the extended Kalman filter (EKF) and the unscented Kalman filter (UKF) (Julier and Uhlmann, 1997, 2004), which combine the sensor measurements with a motion model that provides the prior distribution of the pose in order to estimate the posterior probability of the pose (Thrun et al., 2005). Optimization-based techniques directly solve the maximum a posteriori (MAP) optimization problem without any linearization. The optimization problem aims at maximizing the posterior probability over the whole estimated trajectory; this is achieved by solving a least squares optimization problem over the innovations of the measurements of the different sensors (Cadena et al., 2016).
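The link between the MAP problem and least squares can be made explicit under the common assumption of zero-mean Gaussian process and measurement noise and a Gaussian prior on the initial state. The symbols used here ($f$, $h$, $Q$, $R$, $x_k$, $z_k$, $\bar{x}_0$, $P_0$) are assumed for illustration only and are not taken from the formulation given later in the paper; control inputs are omitted for brevity:

```latex
\begin{aligned}
\hat{x}_{0:T}
  &= \arg\max_{x_{0:T}} \; p(x_{0:T} \mid z_{0:T}) \\
  &= \arg\max_{x_{0:T}} \; p(x_0) \prod_{k=0}^{T-1} p(x_{k+1} \mid x_k) \prod_{k=0}^{T} p(z_k \mid x_k) \\
  &= \arg\min_{x_{0:T}} \; \|x_0 - \bar{x}_0\|^2_{P_0^{-1}}
     + \sum_{k=0}^{T-1} \|x_{k+1} - f(x_k)\|^2_{Q^{-1}}
     + \sum_{k=0}^{T} \|z_k - h(x_k)\|^2_{R^{-1}},
\qquad \|e\|^2_{W} = e^{\top} W e .
\end{aligned}
```

The second line uses Bayes' rule together with the Markov property of the motion model; the third takes the negative logarithm of the Gaussian densities and drops terms and factors that do not depend on the states.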

Solving the optimization problem over the whole history of states is commonly referred to as full information estimation (Rao et al., 2003). Full information estimation becomes intractable for long durations of operation, due to the growth in the number of optimization variables (unknown poses in the case of localization). In practice, the influence of old states on future ones decreases with time. This means that optimizing over the whole history of states may become unnecessary and computationally expensive. Moving horizon estimation (MHE) can deal directly with nonlinear system and measurement models. The computational cost of MHE can be controlled by choosing the size of the estimation horizon.

In order to solve the intractability problem of full information estimation, MHE solves a similar estimation problem with the difference being that it includes a fixed number of the most recent measurements, while older measurements are marginalized in order to keep the computational time nearly constant (Ali and Tahir, 2020; Flaus and Boillereaux, 1997; Zou et al., 2020).
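The fixed-window idea can be illustrated with a deliberately small toy problem. The sketch below assumes a scalar random-walk state with direct noisy measurements and, unlike the scheme described in this paper, simply drops old measurements instead of marginalizing them into an arrival cost. The class name, the weights `q` and `r`, and the tridiagonal solver are all hypothetical choices made for this illustration.

```python
from collections import deque


def solve_tridiagonal(lower, diag, upper, rhs):
    """Solve a tridiagonal linear system with the Thomas algorithm."""
    n = len(diag)
    c = [0.0] * n
    d = [0.0] * n
    c[0] = upper[0] / diag[0]
    d[0] = rhs[0] / diag[0]
    for i in range(1, n):
        denom = diag[i] - lower[i] * c[i - 1]
        c[i] = upper[i] / denom
        d[i] = (rhs[i] - lower[i] * d[i - 1]) / denom
    x = [0.0] * n
    x[-1] = d[-1]
    for i in range(n - 2, -1, -1):
        x[i] = d[i] - c[i] * x[i + 1]
    return x


def estimate_window(measurements, q, r):
    """Minimise sum (z_k - x_k)^2 / r + sum (x_{k+1} - x_k)^2 / q over the window."""
    z = list(measurements)
    n = len(z)
    # Normal equations of the quadratic cost form a tridiagonal system.
    lower = [0.0] + [-1.0 / q] * (n - 1)
    upper = [-1.0 / q] * (n - 1) + [0.0]
    diag = [
        1.0 / r + (1.0 / q if k > 0 else 0.0) + (1.0 / q if k < n - 1 else 0.0)
        for k in range(n)
    ]
    rhs = [zk / r for zk in z]
    return solve_tridiagonal(lower, diag, upper, rhs)


class ToyMovingHorizonEstimator:
    """Keeps only the most recent `horizon` measurements (no arrival cost)."""

    def __init__(self, horizon, q=0.01, r=1.0):
        self.window = deque(maxlen=horizon)  # old measurements fall out automatically
        self.q = q
        self.r = r

    def update(self, z):
        self.window.append(z)
        # The last entry of the smoothed window is the current state estimate.
        return estimate_window(self.window, self.q, self.r)[-1]
```

Because the window length is capped, the size of the linear system solved in `update` stays bounded no matter how long the estimator runs, which is the property that keeps the computational time nearly constant.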

In this paper, a generic multi-sensor fusion framework is developed for the localization of mobile platforms using MHE. The main contributions of this paper can be summarized as follows:

The development of a generic multi-sensor fusion scheme based on MHE for the localization of autonomous systems, which is capable of handling different sensor rates as well as missing measurements and outliers.

Incorporation of a multi-threading framework into the MHE algorithm to reduce its computational cost to become more feasible for real-time applications.

In summary, given the historical reputation of MHE as computationally expensive and suitable only for certain applications and configurations, the paper proposes a proof-of-concept design of a generic and practical MHE-based multi-sensor fusion scheme for the localization of autonomous platforms.

The MHE fusion scheme is tested using both numerically simulated data as well as experimental data sequences. The MHE estimation output is compared with that of a UKF. Finally, the implementation of the proposed estimation scheme is made available as an open-source package for the scientific community.

The remainder of this paper is organized as follows. An overview of the related work is presented in Section 2. The MHE problem is formulated in Section 3. The generic fusion scheme developed for fusing different odometries using MHE is presented in Section 4. Section 5 presents the numerical simulation and experimental sequences used for validating the proposed scheme. In Section 6, the MHE localization results are compared with those of the UKF multi-sensor fusion algorithm. Finally, concluding remarks and future work are presented in Section 7.

Related work

In Chen et al. (2012), the authors introduced an EKF-based localization algorithm for autonomous mobile robots, which uses a map of landmarks consisting of corner features. In this work, based on the assumption of Gaussian white noise and a Markov stochastic process, the location of the robot is estimated using the odometry information and exteroceptive observations. The exteroceptive observations are then compared with the stored map of the landmarks in order to estimate the robot location. In Marín et al. (2013), an event-based Kalman filter was presented for multi-sensor fusion on robots with limited computational resources, targeting indoor-outdoor localization of mobile robots. In Lynen et al. (2013), a robust generic sensor fusion algorithm for the localization of mobile robots based on the EKF was proposed, which is able to process delayed, relative, and absolute measurements from a theoretically unlimited number of different sensors and sensor types.

In Al Hage et al. (2017), a method for rejecting outliers in sensor measurements was presented using the Kullback-Leibler divergence (KLD). The authors integrated the proposed method into an information filter and applied it to the localization of multi-robot systems for validation.

In Moore and Stouch (2016), a generic multi-sensor fusion scheme was developed for localization using filtering methods (EKF and UKF), which can take an arbitrary number of heterogeneous sensor measurements.

In Yousuf and Kadri (2018), an information fusion scheme for GPS, Inertial Navigation System, and odometry sensors is proposed to improve the accuracy of the localization of autonomous vehicles. Additionally, the authors use an artificial neural network as a pseudo-sensor to predict the measurement of the GPS in case the GPS signal is lost. Furthermore, in Yousuf and Kadri (2020), the authors also add a fuzzy logic system in order to reduce the wheel slippage errors that can result in an increased error in the position estimate from the odometer.

In Kim and Lee (2018), an EKF fusion scheme is implemented that fuses the measurements of a GPS and cameras along with the odometers. The scheme uses the odometers in order to perform the prediction step of the filter while the GPS and the cameras are used for the measurement step.

UKF-based localization is discussed in Giannitrapani et al. (2011), where it was compared with EKF-based localization for a spacecraft. It was shown that the UKF performs better in terms of the average localization accuracy and consistency of the estimates. This is because the UKF approximation, which uses the unscented transform of the prior and measurement distributions, is better at approximating the original distribution than the EKF, which is based on a linear approximation.

In Nada et al. (2018), a UKF is used for estimating the position of a wheelchair in indoor environments. The fusion scheme is based on fusing the data from two odometers, a magnetometer, and an accelerometer. The work compares two different fusion architectures, namely, state vector fusion and measurement fusion. The paper concludes that measurement fusion gives better accuracy. Here, we use the measurement fusion architecture to develop our fusion scheme.

In Jain and Roy (2020), a navigation system is proposed based on UKF for pose estimation of the vehicle. The UKF is used to fuse the measurements of a GPS, an IMU, and wheel encoders while using a Stanley controller for navigation control.

In Liu et al. (2020), a multi-innovation UKF is used to fuse the measurements from different sensors in order to estimate the pose and velocity of skid-steer mobile robots. Therein, a dual-antenna GNSS, two encoders, and an IMU are used to localize the robot while estimating the slip error components.

MHE was used in several studies for localization: Mehrez et al. (2014) used nonlinear MHE for the relative localization of a multi-robot system. An efficient algorithm based on a real-time iteration scheme was used to improve the computational efficiency. In Simonetto et al. (2011), a distributed MHE scheme for a mobile robot localization problem was proposed using sensor networks. The stability of the estimator and the approximation of the arrival costs were discussed.

Wang et al. (2014) combined the EKF with the MHE for the localization of an underwater vehicle in three dimensions. It was claimed that the algorithm achieves a compromise between better accuracy and lower computational cost. In Kimura et al. (2014), MHE was used to fuse laser range measurements and the odometry information of a vehicle to perform localization that is more robust to outliers in the laser measurements. In Qayyum et al. (2019), a receding horizon state observer for linear time-varying systems was proposed considering known deterministic inputs.

Liu et al. (2017) proposed a multi-rate MHE for the localization of mobile robots and provided a stability analysis of the estimator. The proposed MHE scheme uses an inertial sensor and a camera with data sampled at different rates. A binary switching sequence was introduced to model the multi-rate sampling process and the input-to-state stability was investigated assuming bounded disturbances and noises. Similarly, in Dubois et al. (2018), a multi-rate MHE sensor fusion algorithm in the presence of time-delayed and missing measurements was proposed. Additionally, a computationally efficient implementation of linear MHE was introduced. The proposed approach is only applicable when an analytical solution of the linear MHE problem can be found.

In most of the aforementioned studies, the proposed algorithms either used different filtering techniques or were based on linear measurement and system models to avoid the high computational load required for the implementation of the MHE. Furthermore, most of the solutions proposed are specific to each case and cannot be generalized to an arbitrary number of sensor measurements.

In this paper, a generic multi-sensor fusion scheme based on nonlinear MHE is proposed. The proposed fusion scheme is generic in the sense that it can take care of multiple sample rates (Lin and Sun, 2019), missing measurements, outlier rejection, and real-time requirements.

Notations

MHE

In this section, the mathematical formulation of the MHE problem is presented following the formulation in Rawlings and Bakshi (2006). We consider the following (disturbed) discrete nonlinear dynamics

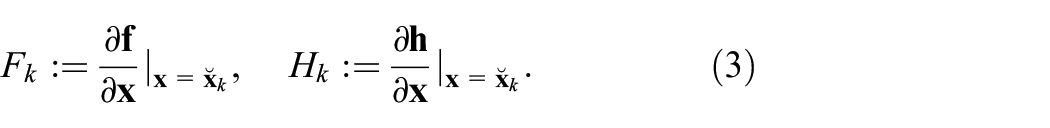

where

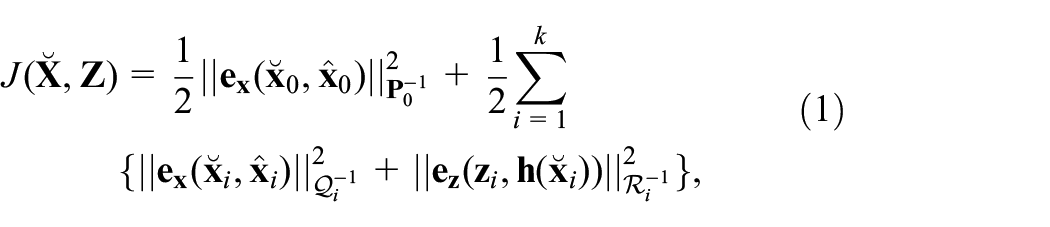

A full information MAP estimation approach solves a least squares optimization problem over the history of states by minimizing the cost function

where (.) denotes the estimated states of the system (optimization variables),

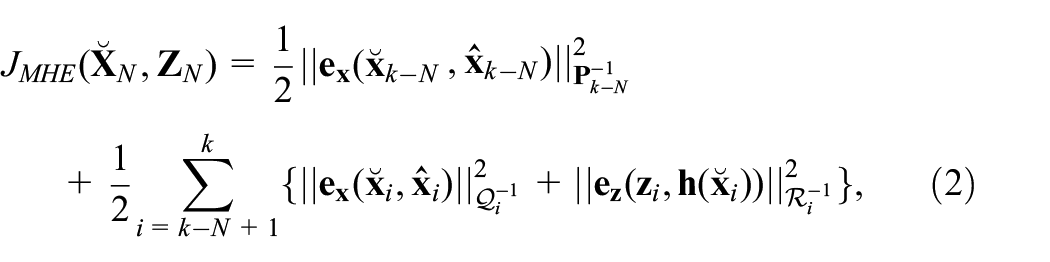

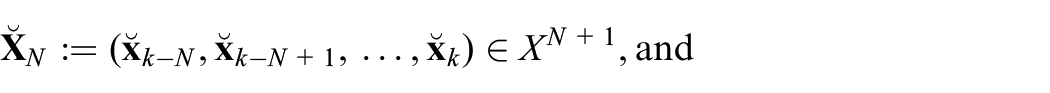

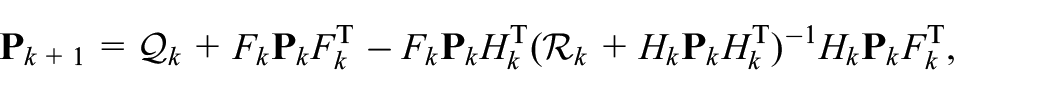

Minimizing the cost function stated in (1) becomes, in general, intractable for long durations of operation due to the growth of the number of optimization variables. Moreover, the effect of older states on current states decreases with time. Instead, an MHE strategy can be adopted as an alternative to full information MAP estimation. To this end, we define the estimation horizon length

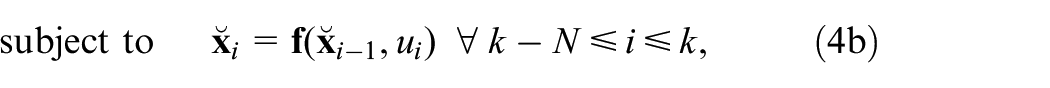

where

are the history of the estimated robot states and measurements over the estimation horizon,

where

The moving horizon optimization problem can now be stated as

We remark that, in general, the MHE problem can be defined for any noise distribution, that is,

Multi-sensor fusion using MHE for localization

In this section, we present the main components of the proposed multi-sensor fusion scheme. The generic motion model used for fusion is presented first; secondly, the measurement model and the specific (measurement) data handling and processing methodologies are stated. Finally, the proposed multi-threading fusion algorithm is presented.

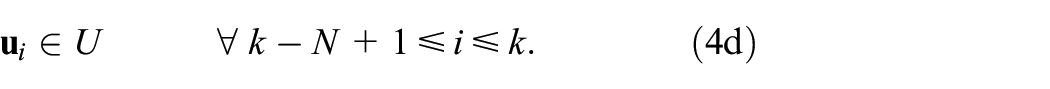

Motion model

The states of general mobile robots can be defined as

where

For

where

Measurements model

The localization of a mobile robotic platform can be accomplished through odometry computation (odometry-based), for example, LiDAR, visual, or wheel odometry; through map-based localization, for example, landmark-based and grid-based localization (Thrun et al., 2005); or by exteroceptive sensors such as a GPS or a magnetometer.

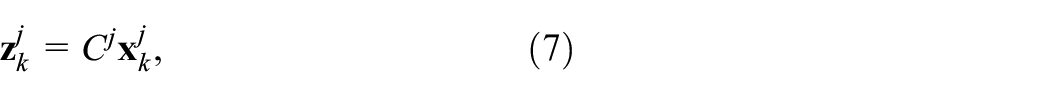

In this study, we assume that each of the robot’s on-board sensors provides a measurement for a subset of the states

where

Assuming linear measurement models is acceptable, because most of the sensors used in the localization of autonomous platforms, in general, provide a subset of the vehicle states. For example, a visual or LiDAR odometry of a ground mobile robot provides a measurement of

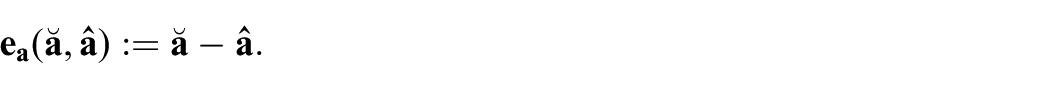

Error definition

As explained in Section 3, the MHE cost function (2) embodies the error in the model and the measurement. The error for the position, velocity, angular velocity, and acceleration in the Cartesian space is defined as

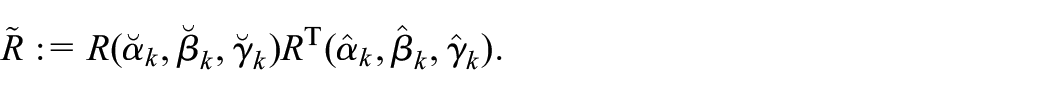

As for the orientation, we employ the formulation of the rotation error given by Campa and De La Torre (2009)

where

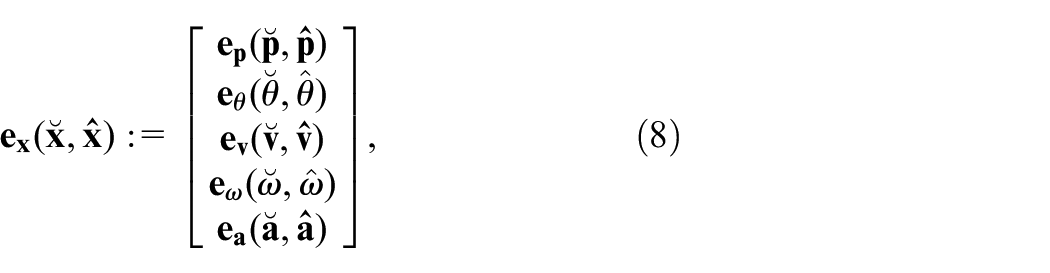

Finally, the error on the states

and the error on the measurements of each sensor

where the ∘ operator denotes the function composition.
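The reason orientation needs its own error definition is that angles wrap around, so a plain subtraction can report a large error for two nearly identical headings. The sketch below shows this for the planar (yaw-only) case; it is a simplified stand-in chosen for illustration and is not the rotation error formulation of Campa and De La Torre (2009). The function name is hypothetical.

```python
import math


def yaw_error(estimated, measured):
    """Signed difference measured - estimated, wrapped to [-pi, pi]."""
    diff = measured - estimated
    # atan2 of (sin, cos) maps any angle to its equivalent in [-pi, pi].
    return math.atan2(math.sin(diff), math.cos(diff))
```

For example, headings of `-pi + 0.1` and `pi - 0.1` differ by only 0.2 rad on the circle, whereas naive subtraction would give almost `2 * pi`.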

Multi-sensor fusion scheme

In order to develop a generic framework for the MHE localization, the following considerations need to be addressed:

Different measurement rates of the sensors as well as the missed inter-sample values need to be properly handled.

The localization algorithm needs to be computationally efficient so that it can be implemented in real-time.

The algorithm needs to be adjustable to different kinematic constraints depending on the platform type; for example, holonomic, non-holonomic.

Outliers need to be properly handled to prevent the inclusion of spurious measurements in the optimization because they can significantly deteriorate the estimation performance.

Each of the aforementioned considerations is addressed through the proposed multi-sensor fusion scheme as follows.

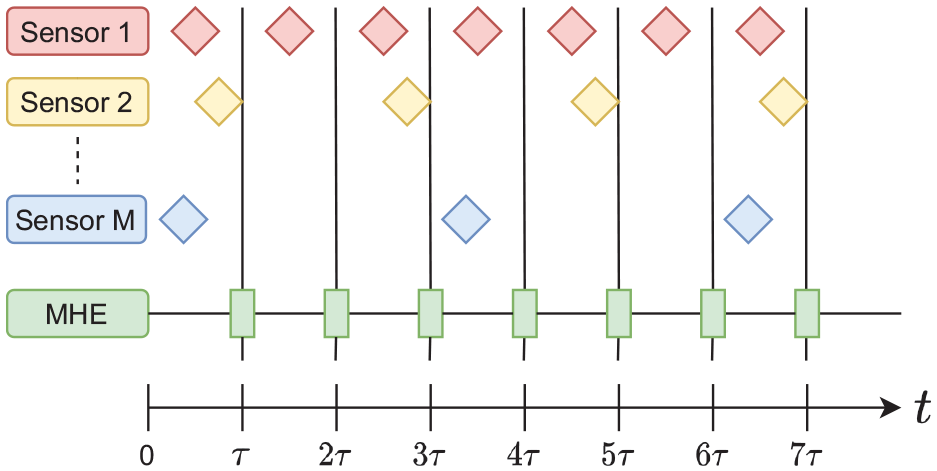

Multi-rate sensor fusion

The proposed localization algorithm does not impose any limits on the update rates of the sensors being fused. Here, the localization scheme relies on solving the optimization problem (4) using a fixed but configurable sampling rate. The different sensor measurements are received at different times, that is, no synchronization is required, and depending on the measurement received during the sampling time duration, a new iteration of the MHE problem is formulated using the available data (see Figure 1 for an illustration of the idea). The different queues of the data over the estimation horizon are updated with the new measurements; then, the measurements older than the estimation horizon are discarded. The cost function (2) is then updated by the new available measurements.
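The queue handling described above can be sketched with one time-stamped buffer per sensor. This is a minimal illustration under stated assumptions: measurements of each sensor arrive in time order, the horizon is expressed in seconds, and the names `SensorBuffer` and `collect_window` are hypothetical rather than taken from the released package.

```python
from collections import deque


class SensorBuffer:
    """Time-ordered queue of (stamp, value) pairs for a single sensor."""

    def __init__(self):
        self._items = deque()

    def push(self, stamp, value):
        self._items.append((stamp, value))

    def prune(self, now, horizon):
        # Discard measurements older than the estimation horizon.
        while self._items and self._items[0][0] < now - horizon:
            self._items.popleft()

    def window(self):
        return list(self._items)


def collect_window(buffers, now, horizon):
    """Gather whatever each sensor delivered inside the horizon.

    Sensors with no measurement in the window are simply left out, so the
    cost function is built only from the data that is actually available.
    """
    available = {}
    for name, buffer in buffers.items():
        buffer.prune(now, horizon)
        items = buffer.window()
        if items:
            available[name] = items
    return available
```

No synchronization between sensors is needed: each buffer is filled at its own rate, and the estimator calls `collect_window` once per sampling period.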

Multi-rate MHE-based localization. Note: Diamond shapes represent the available measurements produced from each sensor.

Notice that the multi-rate sensor fusion approach, combined with an MHE scheme that relies not only on the previous state and measurements but on a window of them, adds robustness to the localization scheme. This will be highlighted in Section 6. Although the performance and accuracy of filtering-based schemes can deteriorate if measurements are missing even for one time-step, MHE can be robust to missing measurements as long as there are some measurements in the estimation horizon

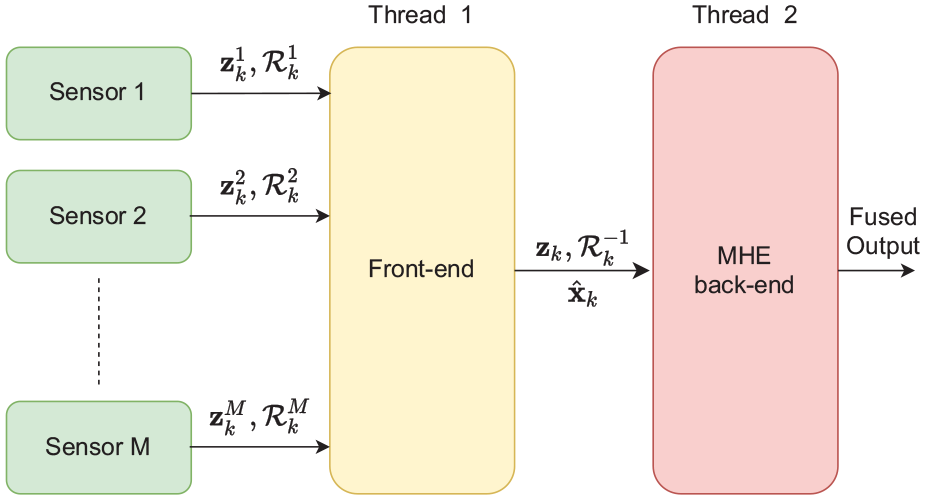

Multi-threading architecture

The computational cost of solving the MHE problem is highly dependent on the estimation horizon length

The multi-threading architecture of the MHE localization scheme.

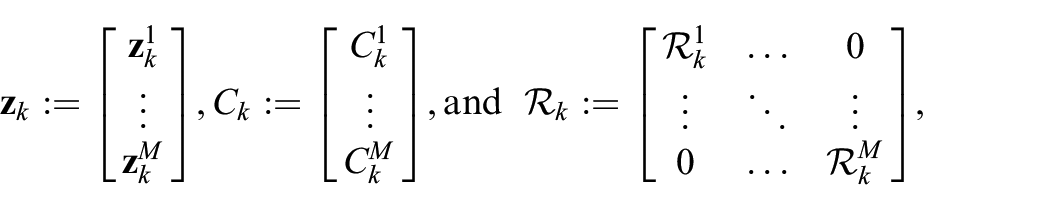

Thread 1 is responsible for computing the information matrices for each sensor, building the measurement matrices, that is

as well as propagating the motion model through the next time-step to compute the posterior covariance matrix. The measurement vector

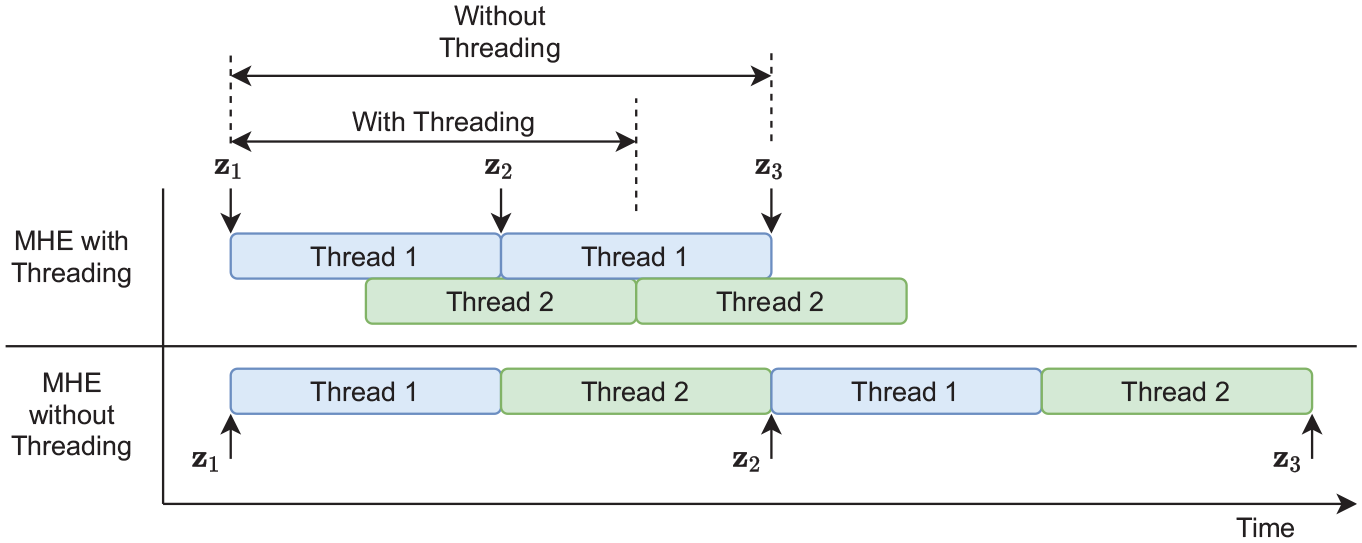

This architecture reduces the computational cost of the MHE by dividing the required computations between two threads, which can work in parallel. The first thread can receive the measurements, compute the new prediction, invert the covariance matrices, and form the measurement vector while the other thread is solving the previous optimization problem. This results in a reduction in the overall computational time of the MHE and relaxes the lower bound on the sampling time, as shown in Figure 3.
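The two-thread split can be sketched as a producer-consumer pipeline. The code below is only a structural illustration: `prepare_problem` and `solve_problem` are hypothetical placeholders (a scalar information computation and a one-step weighted least squares against an assumed zero prior with unit information), not the actual preprocessing or optimizer of the proposed scheme.

```python
import queue
import threading


def prepare_problem(sample):
    """Thread 1 work: turn a (measurement, variance) pair into solver input."""
    z, variance = sample
    return {"z": z, "information": 1.0 / variance}


def solve_problem(problem):
    """Thread 2 work: placeholder solve against a zero prior with unit information."""
    info = problem["information"]
    return info * problem["z"] / (info + 1.0)


def run_pipeline(samples):
    problems = queue.Queue(maxsize=1)  # at most one prepared problem waits
    results = []

    def preprocessing_thread():
        for sample in samples:
            problems.put(prepare_problem(sample))
        problems.put(None)  # sentinel: no more problems

    def solver_thread():
        while True:
            problem = problems.get()
            if problem is None:
                break
            results.append(solve_problem(problem))

    workers = [
        threading.Thread(target=preprocessing_thread),
        threading.Thread(target=solver_thread),
    ]
    for worker in workers:
        worker.start()
    for worker in workers:
        worker.join()
    return results
```

While the solver thread is busy with one problem, the preprocessing thread can already prepare the next one. Note that in CPython the global interpreter lock limits true parallelism for pure-Python CPU-bound work, so this sketch shows the structure of the pipeline rather than its speed-up.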

Visualization of the computational time for the MHE scheme in the multi-threading case as well as the unthreaded case. Note: The computational time decreases in the multi-threading case as new measurements can be received and processed before the previous optimization problem is solved.

Compensation for the vehicle type

The proposed MHE localization scheme uses a generic omni-directional motion model (equation (6)) for state prediction. Therefore, when solving the MHE optimization problem, certain kinematic constraints are additionally imposed on the optimization problem to account for the vehicle type. For example, a differential-drive mobile robot has a constraint on lateral motion, that is,

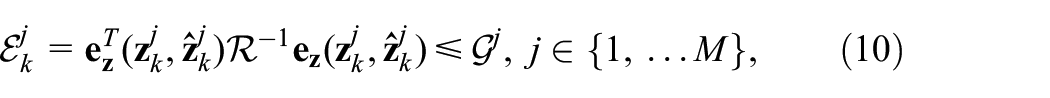
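The lateral-motion constraint for a differential-drive platform can be illustrated as follows. The sketch assumes, for illustration only, a planar state whose linear velocity is expressed in a fixed world frame; if the velocity is already expressed in the body frame, the constraint reduces to setting the lateral component to zero. The function names are hypothetical.

```python
import math


def body_frame_velocity(vx_world, vy_world, yaw):
    """Rotate a planar world-frame velocity into the robot body frame."""
    forward = math.cos(yaw) * vx_world + math.sin(yaw) * vy_world
    lateral = -math.sin(yaw) * vx_world + math.cos(yaw) * vy_world
    return forward, lateral


def lateral_constraint_residual(vx_world, vy_world, yaw):
    """Value that a non-holonomic constraint would force to zero."""
    return body_frame_velocity(vx_world, vy_world, yaw)[1]
```

In an optimization problem, this residual would be constrained to zero (or tightly bounded) at every step of the horizon for a platform that cannot move sideways, and left free for an omni-directional one.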

Outlier rejection

Measurement outliers are detected and removed using the Mahalanobis’ distance thresholding method (Mahalanobis, 1936) as follows

where

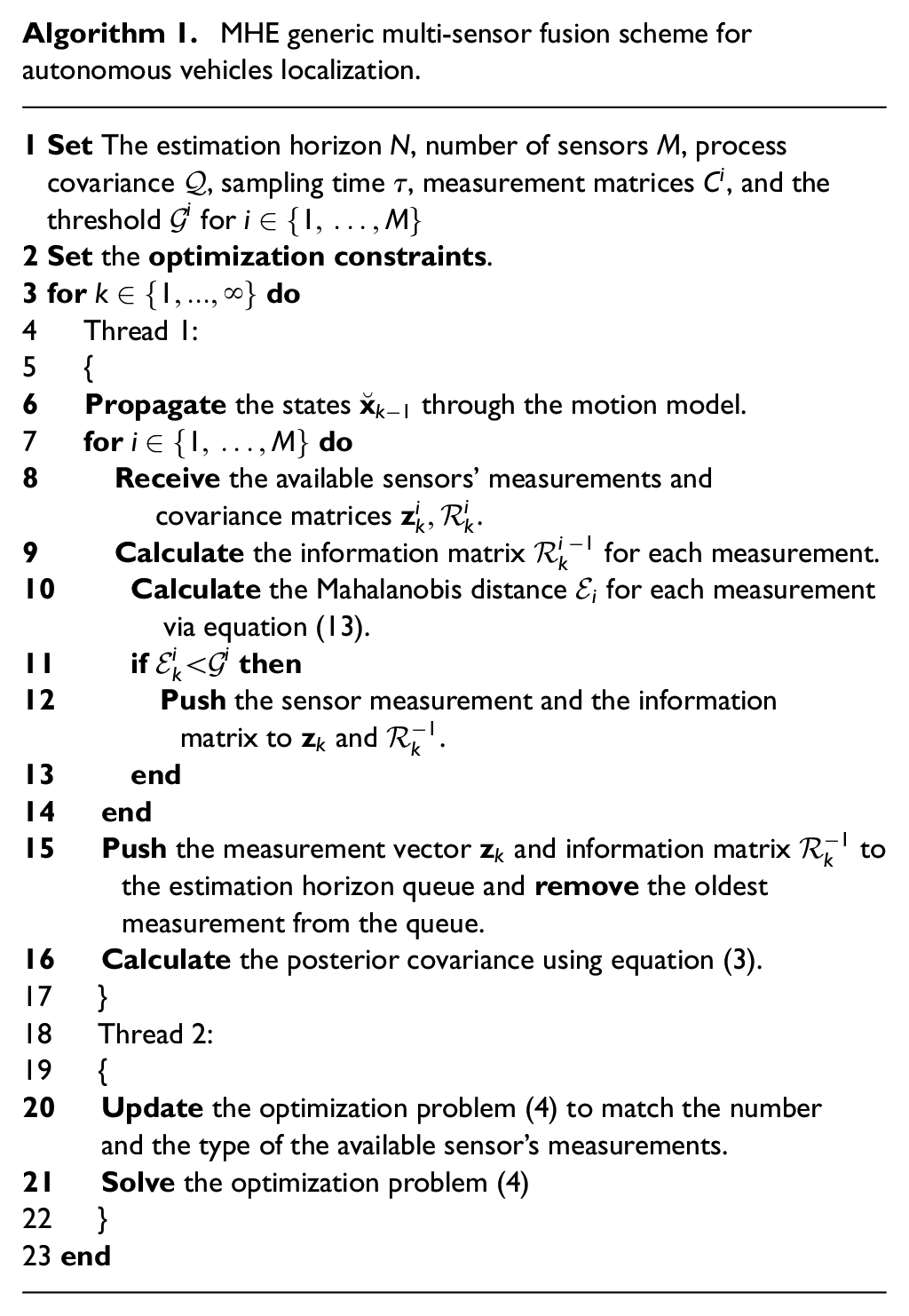

MHE generic multi-sensor fusion scheme for autonomous vehicles localization.
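The Mahalanobis distance test used for outlier rejection can be sketched in a few lines. The version below assumes that a predicted measurement and an innovation covariance are available for each incoming measurement; the threshold value, the function names, and the small Gaussian-elimination solver are illustrative assumptions rather than the settings used in the experiments.

```python
import math


def solve_linear(a, b):
    """Solve a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [list(row) + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("covariance matrix is singular")
        m[col], m[pivot] = m[pivot], m[col]
        for row in range(col + 1, n):
            factor = m[row][col] / m[col][col]
            for k in range(col, n + 1):
                m[row][k] -= factor * m[col][k]
    x = [0.0] * n
    for i in reversed(range(n)):
        tail = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - tail) / m[i][i]
    return x


def mahalanobis_distance(z, z_pred, cov):
    """sqrt((z - z_pred)^T cov^{-1} (z - z_pred)) without forming the inverse."""
    residual = [zi - pi for zi, pi in zip(z, z_pred)]
    weighted = solve_linear(cov, residual)
    return math.sqrt(sum(r * w for r, w in zip(residual, weighted)))


def is_outlier(z, z_pred, cov, threshold=3.0):
    """Flag a measurement whose distance exceeds the (assumed) threshold."""
    return mahalanobis_distance(z, z_pred, cov) > threshold
```

A measurement flagged by `is_outlier` would be left out of the cost function for that iteration, in the same way as a missing measurement.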

Data sets used for simulations and experiments

The proposed localization scheme (Algorithm 1) was developed using ROS (Quigley et al., 2009). Here, the optimization problem (4) is formulated symbolically using CasADi: an open-source software tool for numerical optimization (Andersson et al., 2019). The optimization problem is then solved using the interior point method (Helmberg et al., 1996).

In order to validate the MHE localization scheme, several numerical and experimental data sets are used: (1) simulated data of the 3D motion of an aerial vehicle; (2) several scenarios from the EU long-term dataset for autonomous driving (Yan et al., 2019); and (3) experimental sequences generated using the Summit-XL Steel omni-directional mobile robot, see Figure 4. All the experiments and dataset runs are performed using a computer with an Intel i7-8850H 6-core processor running at 2.60 GHz and 16 GB of RAM. The computer is running Ubuntu version 16.04 and ROS Kinetic.

The omni-directional mobile robot used for validating the MHE multi-sensor fusion.

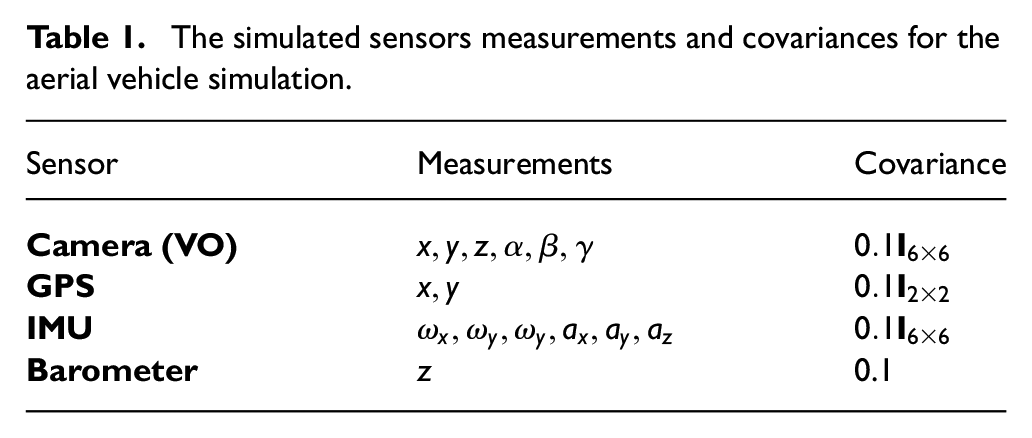

Aerial vehicle simulation

A simulated aerial vehicle is used for the validation of the proposed MHE localization scheme. The simulated vehicle is commanded to perform a spiral motion and is assumed to be equipped with a camera running a visual odometry (data are simulated), a GPS sensor, a barometer to measure the altitude of the vehicle, and an inertial measurement unit (IMU). Each of the sensors is assumed to have additive Gaussian noise with zero mean; Table 1 shows the measurements of each of the sensors as well as their covariance matrices. The simulated motion is generated using the same omni-directional model stated in equation (6) with the constant velocities

The simulated sensors measurements and covariances for the aerial vehicle simulation.

EU long-term dataset

The EU long-term dataset is an open-source dataset for autonomous driving, which contains sensor data from multiple heterogeneous sensors. The data were collected using the University of Technology of Belfort-Montbéliard (UTBM) vehicle in human driving mode. The vehicle is driven in the downtown of Montbéliard in France (Yan et al., 2019). For the long-term data, the driving distance is about 5.0 km per session driven in

The ground-truth for this dataset is generated by a GPS/RTK sensor that can achieve very accurate position measurements.

Therefore, an artificial Gaussian noise

Summit-XL Steel omnidirectional robot

Summit XL Steel is a ground mobile robot with mecanum wheels manufactured by Robotnik Inc. The robot is equipped with a Velodyne LiDAR sensor, an Astra RGB-D Camera, a Pix-hawk 3 auto-pilot used as an IMU, and wheel encoders. Several experiments were conducted using the robot to validate the proposed MHE localization algorithm while using a VICON motion capture system to generate the ground-truth data. The VICON system used here consists of 12 cameras. The VICON bridge package was used to couple VICON with ROS. The sensor fusion was performed using measurements from the IMU, wheel odometry, and 2D LiDAR odometry (Censi, 2008).

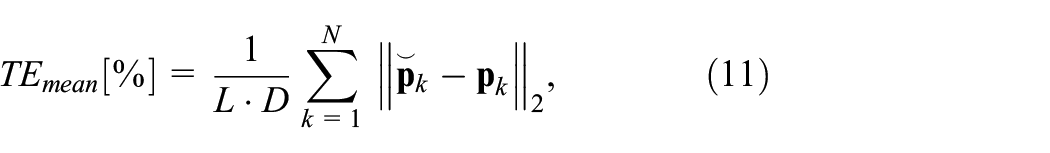

Evaluation metrics

The performance of the proposed MHE localization algorithm is compared against a UKF-based localization scheme implemented in Moore and Stouch (2014). Both schemes are evaluated by, first, the mean and the maximum error percentages of the translation, calculated as shown in equation (11) and equation (12), respectively

where

The performance of the orientation is evaluated by the mean and the maximum of the orientation error divided by

where

UKF

The UKF is a nonlinear extension of the linear Kalman filter algorithm (Julier and Uhlmann, 1997, 2004). Unlike the EKF, the UKF deals with the nonlinear system directly without the need for linearization, which leads to increased accuracy of the state estimation. The UKF uses the unscented transform to propagate the error through the nonlinear system directly; however, both the prior prediction and the observation are still assumed to be Gaussian random variables.

The UKF scheme used for comparison in this paper uses the same parameters used in the MHE. However, as the UKF uses the unscented transform for noise propagation, some extra parameters are present, such as the number of sigma points and their weights. Notice that these parameters were defined exactly as stated in the original paper (Wan and Van Der Merwe, 2000).
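To make the role of the sigma points and weights concrete, the sketch below applies the scaled unscented transform to a scalar Gaussian. The weight formulas follow the standard scaled form; the default values of `alpha`, `beta`, and `kappa` are illustrative assumptions and are not the values used in the experiments of this paper.

```python
import math


def unscented_transform_1d(mean, var, f, alpha=1.0, beta=2.0, kappa=2.0):
    """Propagate a scalar Gaussian (mean, var) through f using 3 sigma points."""
    n = 1  # state dimension
    lam = alpha ** 2 * (n + kappa) - n
    spread = math.sqrt((n + lam) * var)

    # 2n + 1 sigma points: the mean and one point on each side of it.
    points = [mean, mean + spread, mean - spread]

    # Weights for the mean and for the covariance.
    w_mean = [lam / (n + lam), 0.5 / (n + lam), 0.5 / (n + lam)]
    w_cov = [w_mean[0] + (1.0 - alpha ** 2 + beta), w_mean[1], w_mean[2]]

    transformed = [f(p) for p in points]
    y_mean = sum(w * y for w, y in zip(w_mean, transformed))
    y_var = sum(w * (y - y_mean) ** 2 for w, y in zip(w_cov, transformed))
    return y_mean, y_var
```

For a linear function the transform reproduces the exact mean and variance, and for a nonlinear one such as squaring it captures the shift in the mean that a first-order linearization around the mean would miss.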

Process noise and measurement covariances

To achieve good localization accuracy using MHE (and many other sensor fusion techniques), the process noise covariance needs to be chosen carefully. Although the determination of the process noise covariance

As for the measurement covariance of the sensors, there are several methods of determining them: (i) The characteristics and specifications of the sensors could contain the variances directly; (ii) Through tuning based on multiple experiments using the sensor; (iii) The sensor itself could provide its measurements along with the covariance; (iv) Through using a covariance estimation algorithm such as Osman et al. (2019c) and Osman et al. (2018).

Results and discussion

In this section, the results of implementing Algorithm 1 are presented for the simulated aerial vehicle, the UTBM dataset, and the Summit-XL Steel omnidirectional robot.

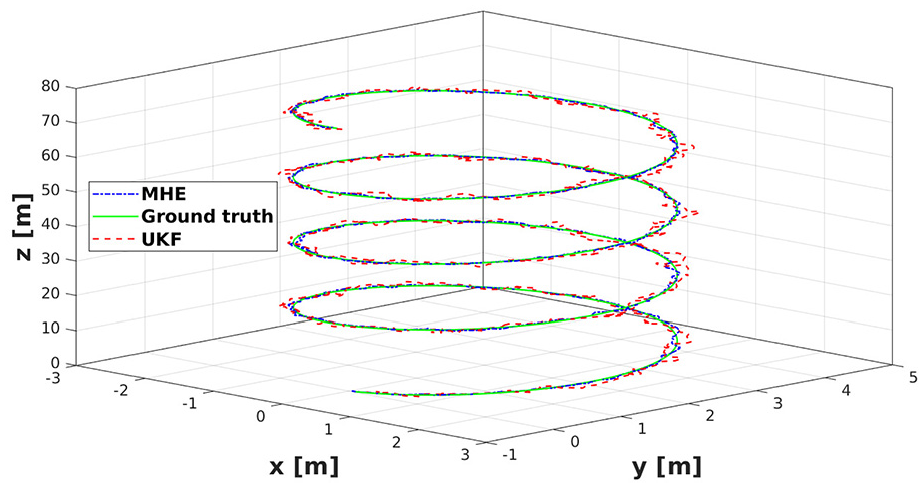

Simulated aerial vehicle

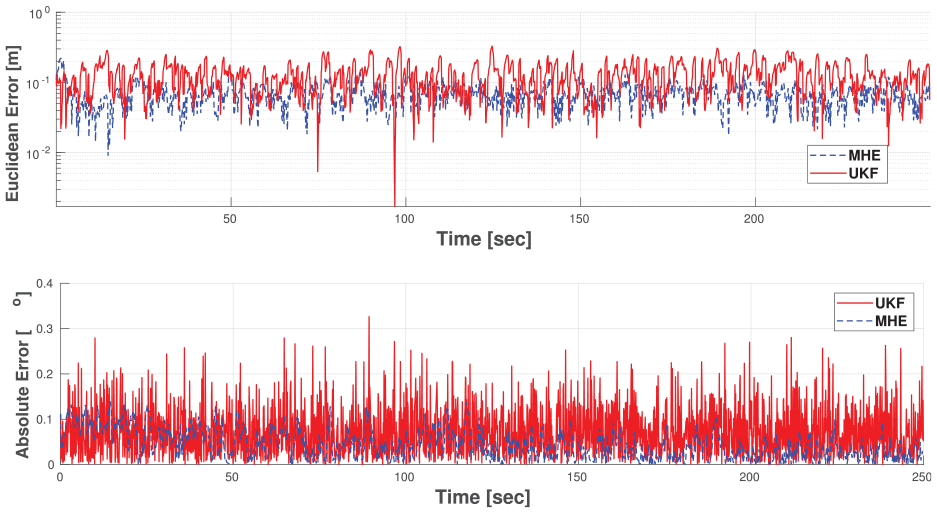

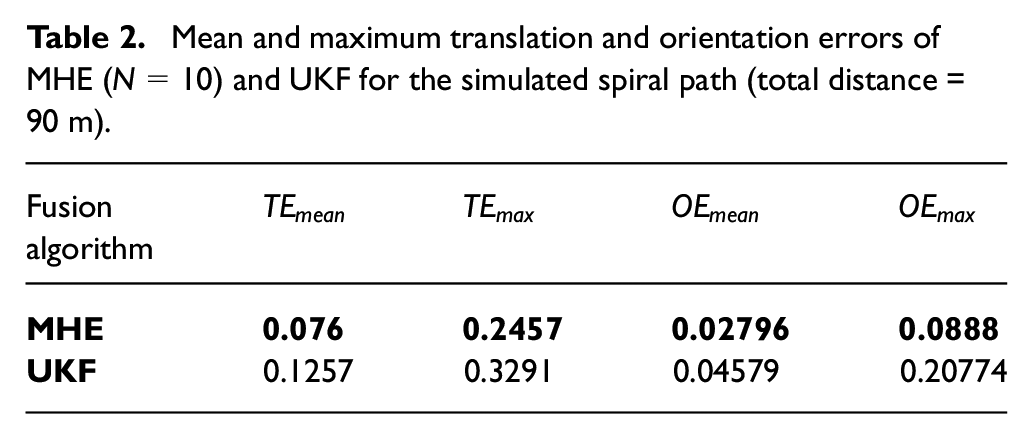

As mentioned earlier, a spiral motion of an aerial vehicle was simulated for validating the MHE localization scheme. Figure 5 shows the 3D path taken by the simulated drone as well as the MHE and UKF estimation outputs. It can be noticed that the MHE estimate is smoother and more accurate than that of the UKF. Figure 6 shows the estimation errors of the MHE and UKF. The figure demonstrates the superior performance of the MHE compared with the UKF for both translation and orientation estimation. Table 2 shows the quantitative results of the aerial vehicle simulation. The MHE estimate is almost twice as accurate as that of the UKF. The results of this simulation indicate that the MHE localization algorithm outperforms the UKF.

The estimated trajectory by MHE and UKF as well as the ground truth of the simulated aerial vehicle spiral motion.

The translation and orientation errors in MHE and UKF for the simulated aerial vehicle spiral motion.

Mean and maximum translation and orientation errors of MHE (

In order to further validate these results with real experimental data, the following two sections show the results of the fusion on 2D sequences from the UTBM platform as well as the omnidirectional Summit-XL Steel robot. In order to show the feasibility of the algorithm for practical purposes, the computation time of the algorithm is also studied using one of the UTBM sequences.

EU long-term dataset

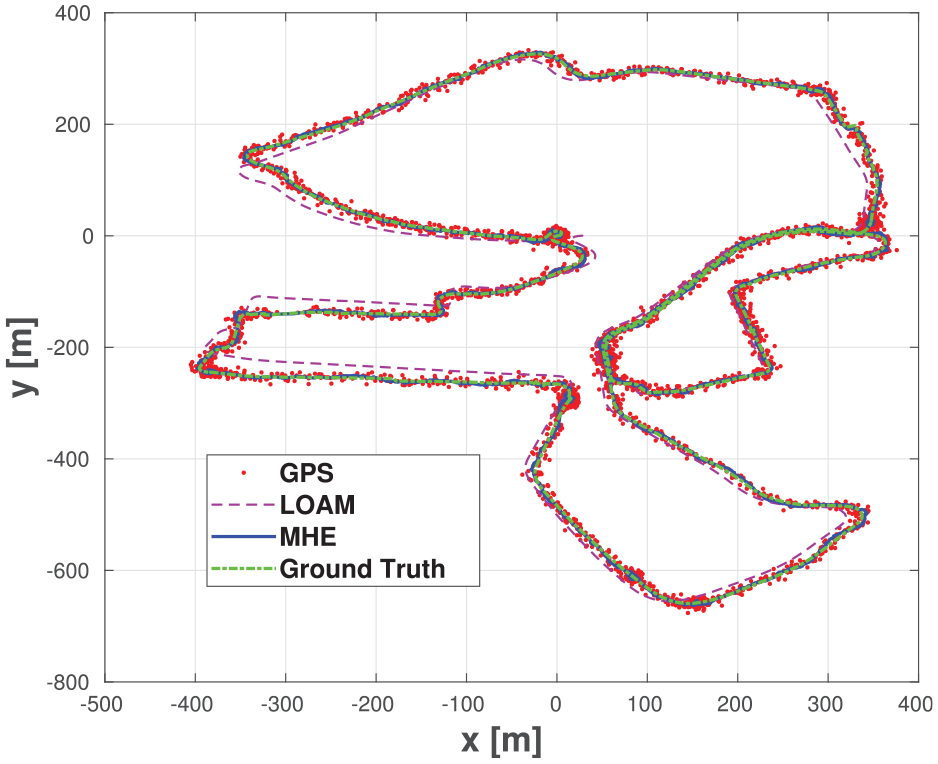

In Figure 7, the MHE output as well as the measurements from the GPS and LOAM are presented. The MHE achieves a very accurate pose estimate as well as a smooth trajectory despite the jumpy and discontinuous GPS measurements. As for the LOAM measurements, in order to limit the drift caused by the integration of motion increments, the MHE or UKF output was used as corrective feedback when fusing the odometry measurements. This correction, along with the Mahalanobis thresholding outlier rejection, led to better fusion results by mitigating the effect of drift from the LiDAR odometry on the fusion output. Moreover, throughout these sequences, the lateral velocity

The estimated trajectory by MHE plotted with the GPS and the LOAM measurements (without correction) as well as the ground truth data for sequence 2018-05-02 (1) from EU long-term dataset.

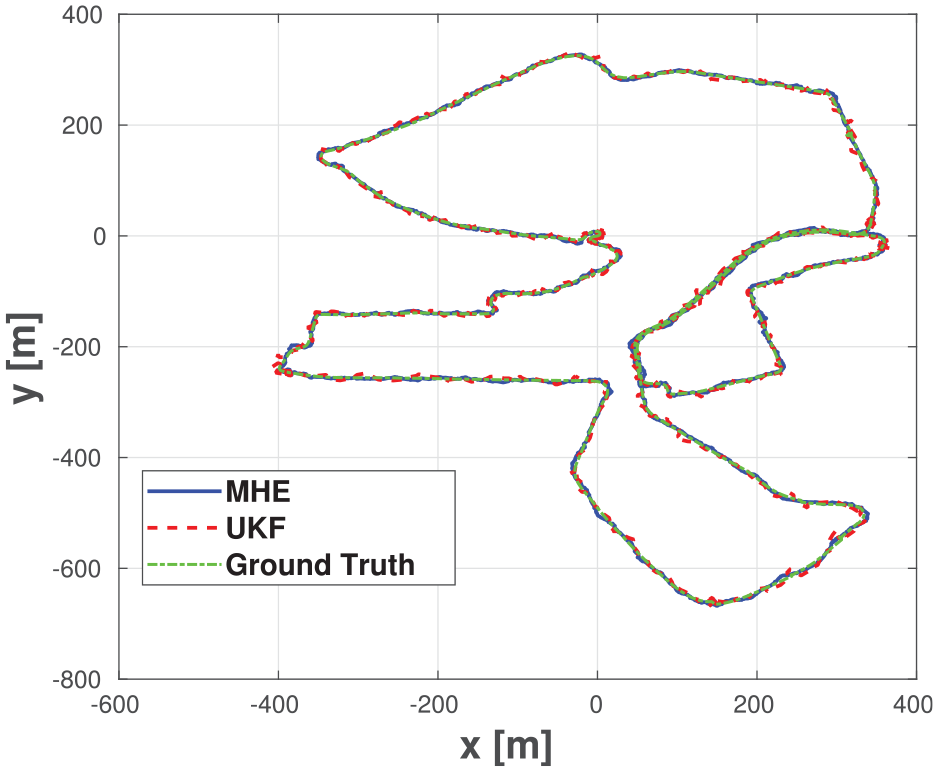

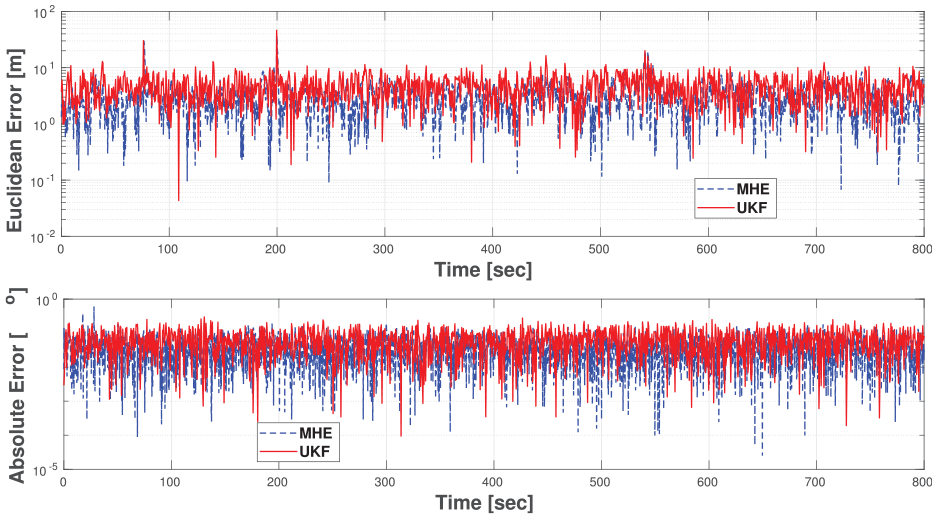

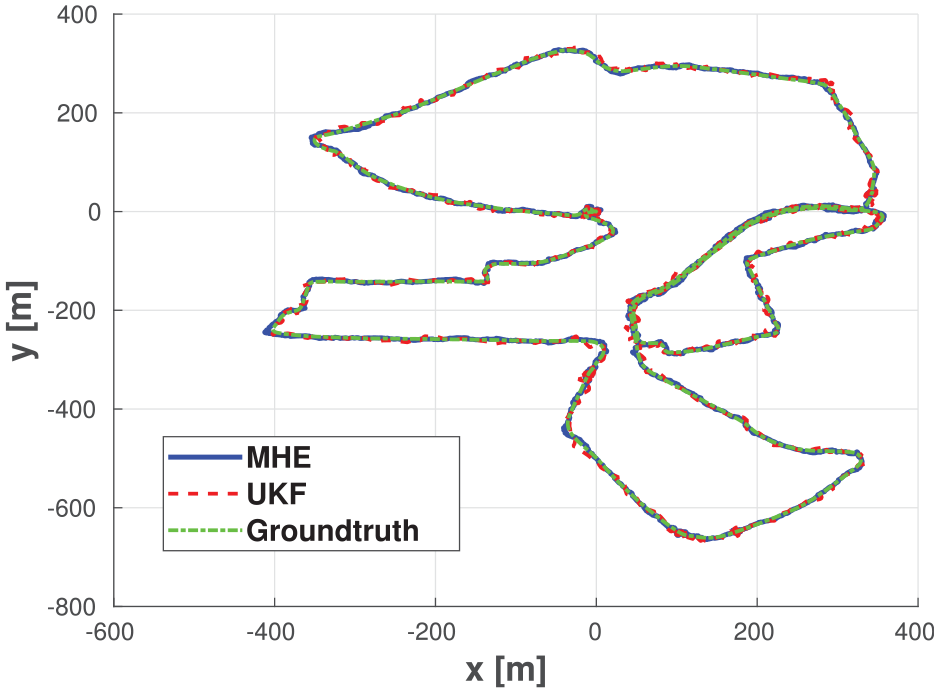

Figure 8 and Figure 9 show the estimation output from the MHE and UKF as well as the translation and orientation errors along the path of the sequence 2018-05-02 (2). The MHE output is smoother and more accurate when compared with the output of the UKF (except for one instance where the maximum error of the path in the MHE case is worse than that of the UKF). Figure 10 and Figure 11 confirm that the MHE outperforms the UKF in both translation and orientation errors. They also confirm that the MHE is able to produce smoother paths.

The estimated trajectory by MHE and UKF as well as the ground truth for sequence 2018-05-02 (2) from EU long-term dataset.

Translation and orientation errors in MHE and UKF pose estimation for sequence 2018-05-02 (2) from EU long-term dataset.

The estimated trajectory by MHE and UKF as well as the ground truth for sequence 2018-07-19 from EU long-term dataset.

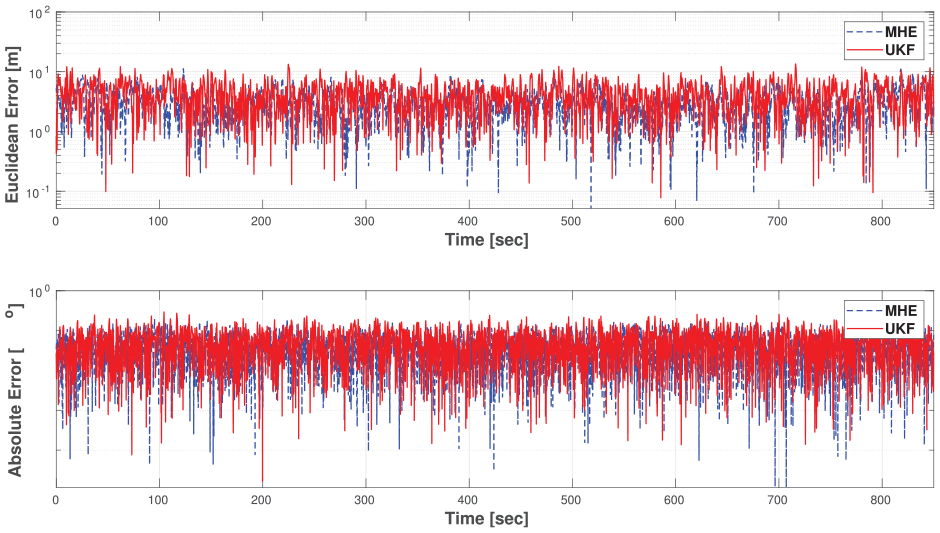

Translation and orientation errors in MHE and UKF pose estimation for sequence 2018-07-19 from EU long-term dataset.

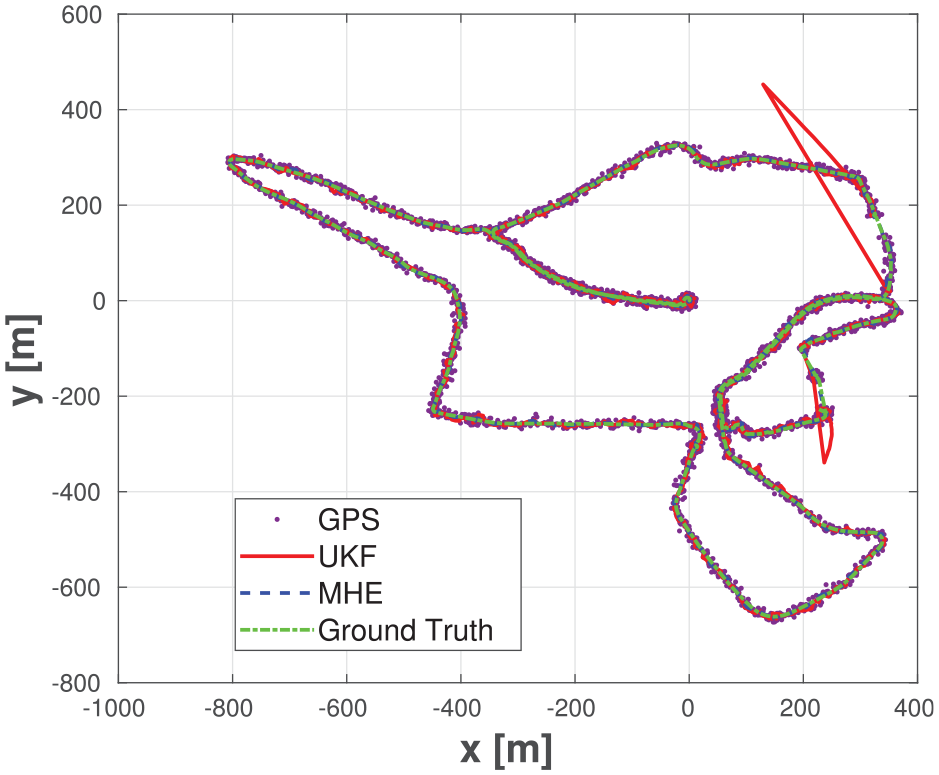

Figure 12 shows the MHE and UKF estimated paths for sequence 2019-01-31, which is slightly different and longer than the rest of the sequences. Note that during this sequence, there are missing GPS measurements as shown in the figure. At these instances, the MHE scheme managed to estimate the path correctly with minimal error while the UKF output diverged until the GPS measurements became available again. This is expected because the UKF algorithm depends on the latest measurements and prediction in order to estimate the pose while the MHE uses a window of old measurements for estimation (

The estimated trajectory by MHE and UKF as well as the ground truth and GPS measurements for sequence 2019-01-31 from EU long-term dataset.

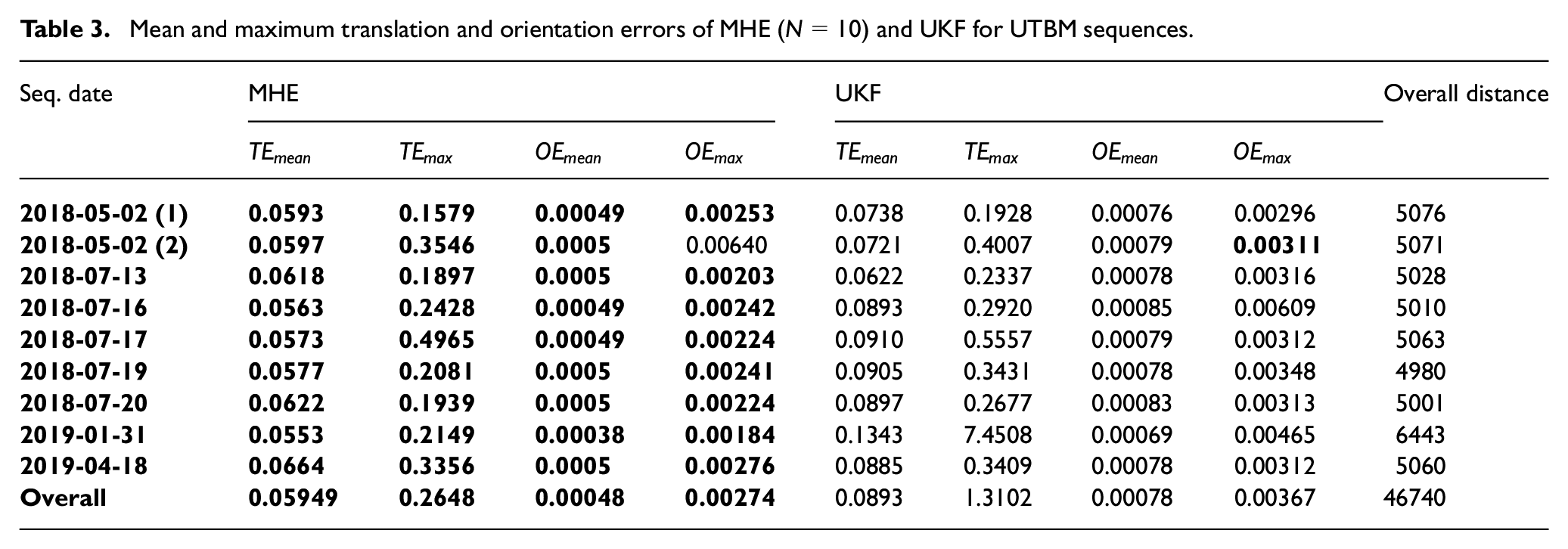

The overall quantitative results of the MHE fusion are shown in Table 3. The results show that the MHE fusion outperforms UKF fusion in every driving sequence of the EU Long-term dataset (except for the maximum orientation error of sequence 2018-05-02 (2)). The overall percentage error over the nine sequences is

Mean and maximum translation and orientation errors of MHE (

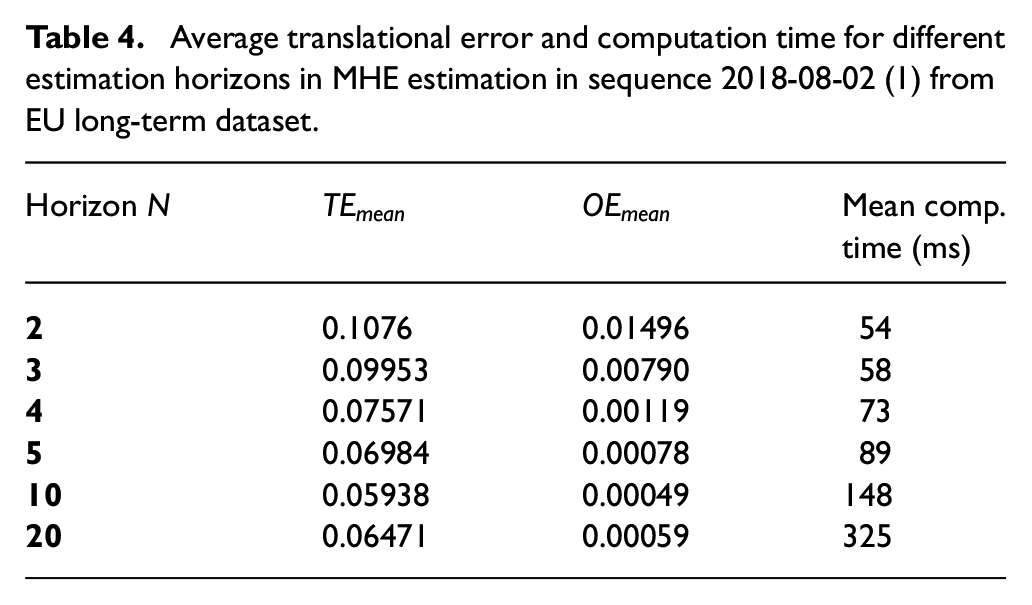

Such an enhancement in the pose estimation comes at the cost of increased computation time due to solving a constrained optimization problem at every time-step. Table 4 shows the mean translation and orientation errors for different estimation horizon sizes as well as the average computation time for each time-step. Notice that the estimation accuracy increases with the estimation horizon size. However, this in turn increases the computational cost of the algorithm. The results stated above, in Table 3, were gathered while both algorithms (UKF and MHE) were running with a frequency of

Average translational error and computation time for different estimation horizons in MHE estimation in sequence 2018-08-02 (1) from EU long-term dataset.

Although theoretically speaking, increasing the estimation horizon

Furthermore, another factor that affects the accuracy of the MHE scheme is the relation between the number of sensors used and the computational cost of the optimization problem. As the number of sensors used increases, the computational cost of the scheme increases, which might affect the performance of the MHE. Consequently, with the increase of the number of sensors, the estimation horizon

Summit-XL steel omnidirectional robot

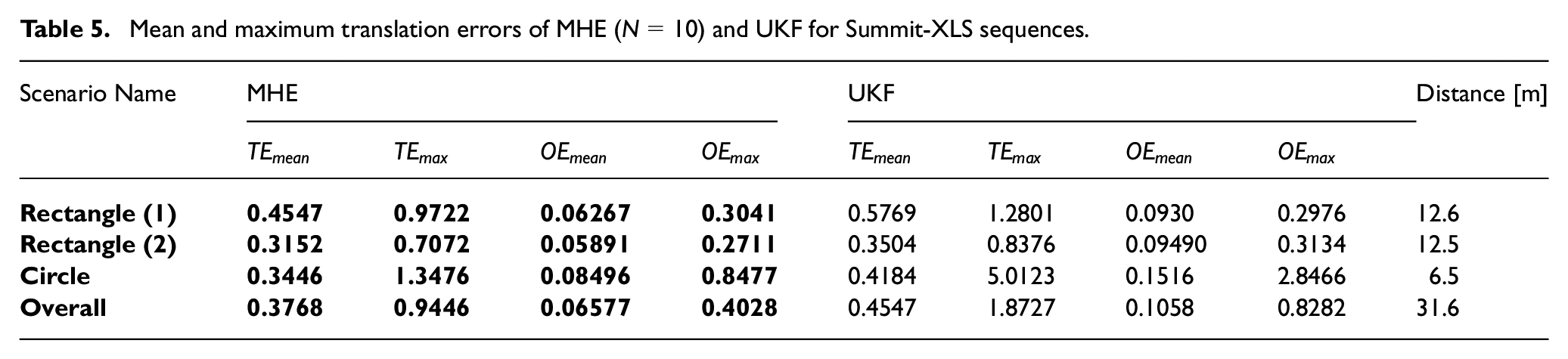

Using the Summit-XL Steel robot shown in Figure 4, three sequences of data were generated for validating the MHE localization algorithm; the generated data are from executing two rectangular paths and one circular path.

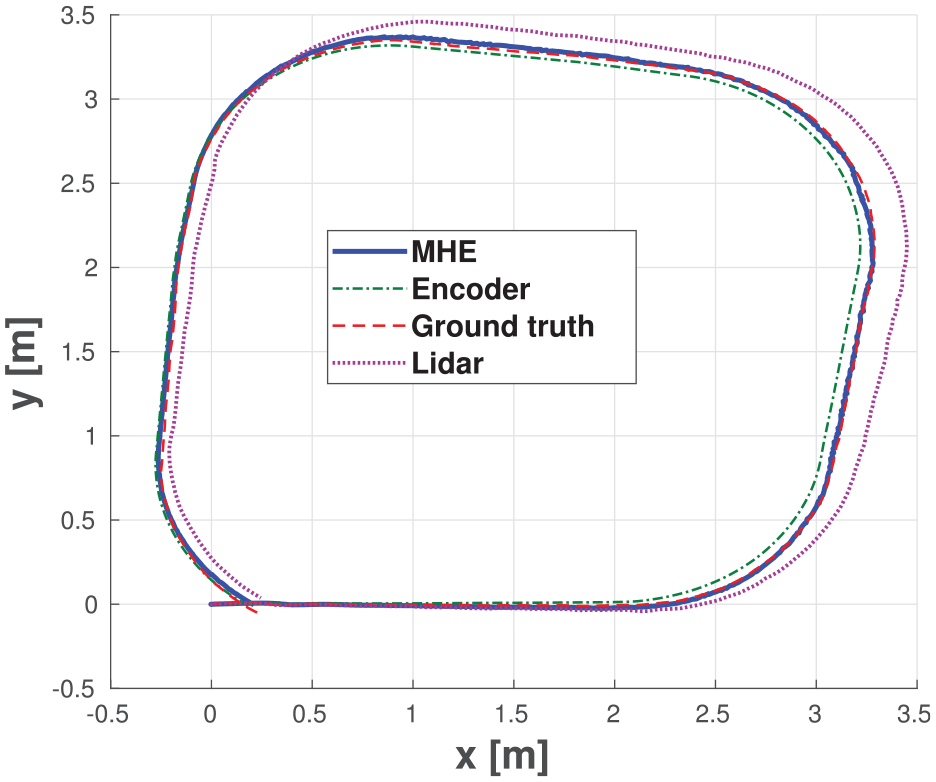

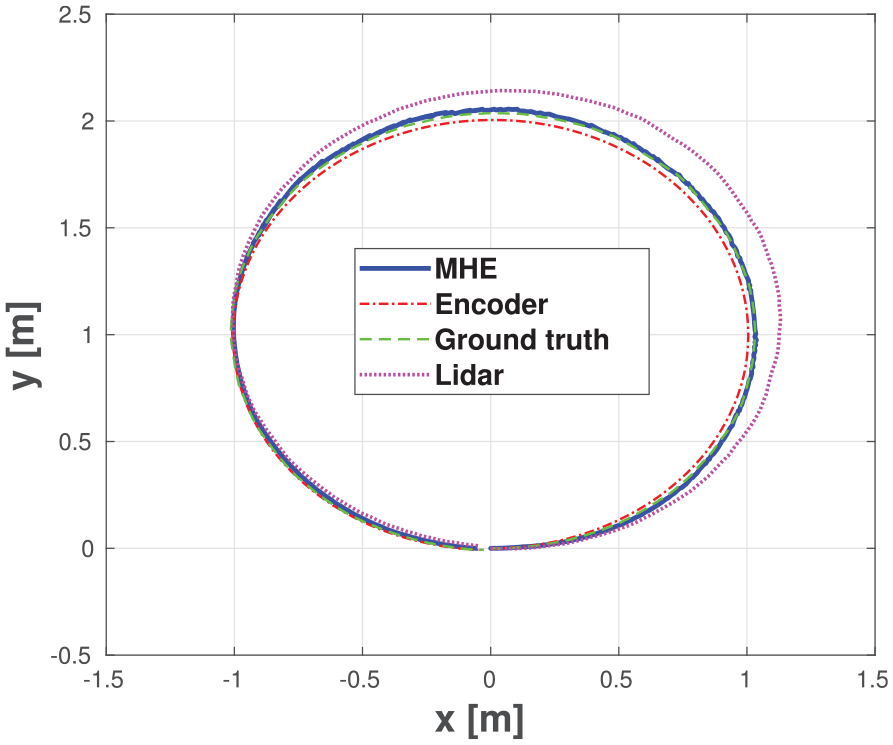

In Figure 13, the ground-truth taken by the VICON is plotted as well as each individual sensor measurement and the pose estimation using the proposed localization scheme. As can be seen, the MHE output is more accurate compared with the different odometries. Furthermore, the output of the estimation is smooth enough, which makes it suitable for navigation and control purposes.

The estimated trajectory by MHE as well as the sensors measurement and the ground truth for rectangle sequence (2) executed by Summit-XLS omnidirectional robot.

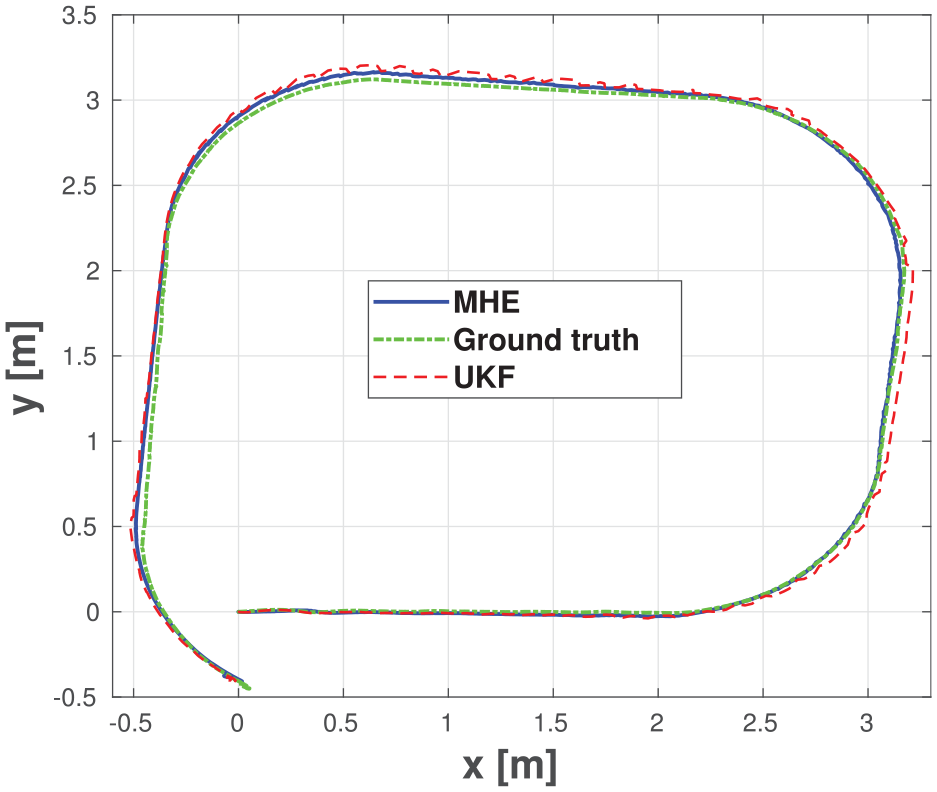

In Figure 14, another rectangular path is plotted using the ground-truth data and the estimation outputs of the MHE and UKF algorithms. The figure shows that the accuracy of the MHE algorithm is higher than that of the UKF. Furthermore, the UKF estimate oscillates along the path, while the path estimated by MHE is much smoother.

The trajectories estimated by MHE and UKF, and the ground truth, for rectangle sequence (1) executed by the Summit-XLS omnidirectional robot.

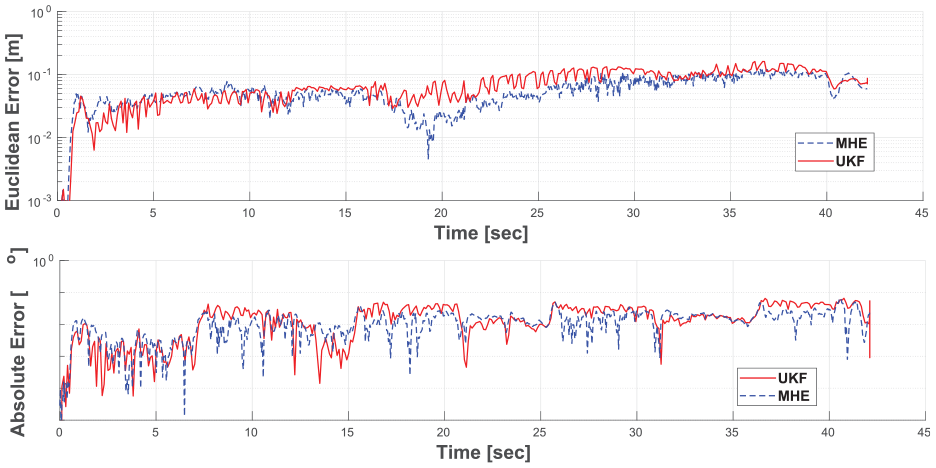

In Figure 15, the translation and orientation errors for the rectangular path (1) are reported. As can be seen, the performance of MHE is better than that of UKF. Although at some instances the estimation error of UKF appears lower than that of MHE, this can be attributed to the oscillations in the UKF output, which occasionally shift the estimate closer to the ground truth. Such oscillations, however, indicate the lower stability and robustness of the UKF output.
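The error measures discussed here can be illustrated with a minimal sketch. It assumes planar poses stored as (x, y, theta) rows that are already time-aligned with the ground truth; the function names `wrap_angle`, `pose_errors`, and `summarize` are hypothetical and are not taken from the authors' implementation. The orientation difference is wrapped before taking its absolute value so that headings near ±π are not penalized by a spurious 2π jump.

```python
import numpy as np


def wrap_angle(theta):
    """Wrap angles in radians to the interval [-pi, pi)."""
    return (np.asarray(theta, dtype=float) + np.pi) % (2.0 * np.pi) - np.pi


def pose_errors(est, gt):
    """Per-sample Euclidean translation error and absolute orientation error.

    est, gt: array-likes of shape (N, 3) with columns (x, y, theta).
    Assumption: both sequences are sampled at the same time instants.
    """
    est = np.asarray(est, dtype=float)
    gt = np.asarray(gt, dtype=float)
    trans_err = np.linalg.norm(est[:, :2] - gt[:, :2], axis=1)
    orient_err = np.abs(wrap_angle(est[:, 2] - gt[:, 2]))
    return trans_err, orient_err


def summarize(err):
    """Mean and maximum of an error sequence."""
    return {"mean": float(np.mean(err)), "max": float(np.max(err))}


# Synthetic poses for illustration only (not data from the experiments).
gt = [[0.0, 0.0, 3.1], [1.0, 0.0, -3.1]]
est = [[0.3, 0.4, -3.1], [1.0, 0.0, -3.1]]
trans_err, orient_err = pose_errors(est, gt)
print(summarize(trans_err), summarize(orient_err))
```

In the first synthetic sample the headings 3.1 rad and -3.1 rad differ by only about 0.083 rad once wrapped, whereas a naive subtraction would report 6.2 rad.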

Euclidean error (top) and absolute orientation error (bottom) in MHE and UKF pose estimation for rectangle sequence (1) executed by the Summit-XLS robot.

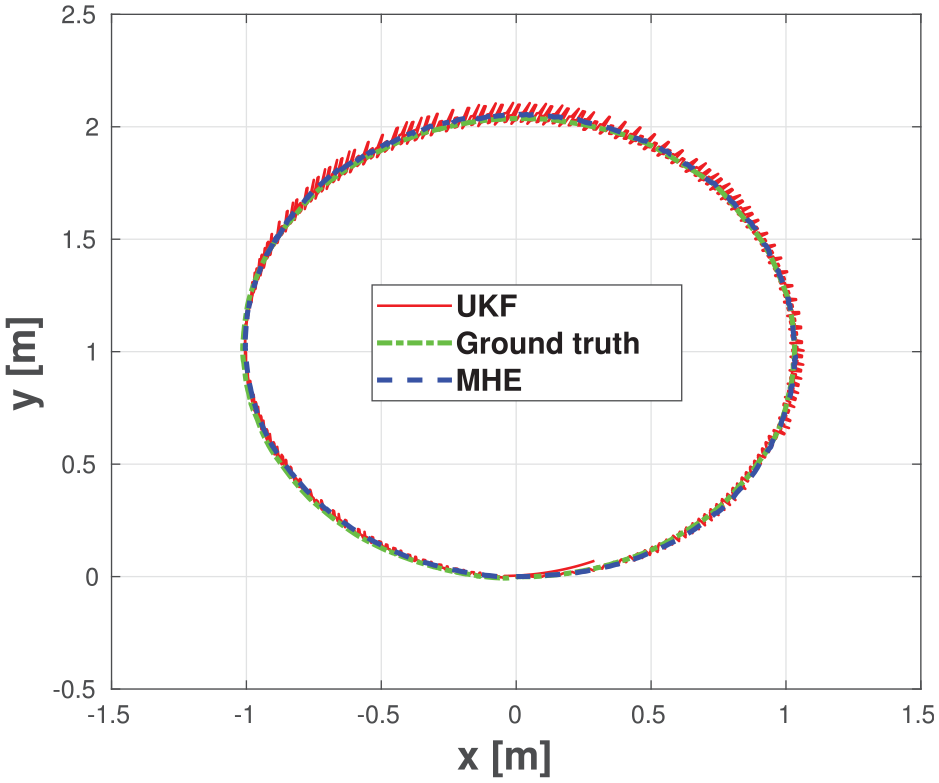

For further validation of the MHE algorithm in a different scenario, a circular path was executed using the robot. As can be seen in Figure 16 and Figure 17, the MHE estimation output is more accurate than the individual sensor odometries as well as the output of the UKF fusion algorithm. Furthermore, in this scenario the stability of the MHE algorithm is more evident: the UKF output suffered from a large number of oscillations, whereas the MHE output did not show any considerable oscillations.

The trajectory estimated by MHE, the sensor measurements, and the ground truth for a circle sequence executed by the Summit-XLS omnidirectional robot.

Finally, Table 5 shows the quantitative results from the Summit-XL Steel robot sequences. The translation and orientation errors of both the MHE and UKF estimation outputs are reported for the three scenarios. It can be seen that MHE outperforms UKF in all cases and for all evaluation metrics.

Mean and maximum translation errors of MHE (

Conclusion

In this paper, a generic multi-sensor fusion scheme using MHE was proposed for the localization of autonomous vehicles. The proposed scheme accommodates sensors with different update rates, missing measurements, and outliers; supports different vehicle types; and is applicable in real time. The proposed strategy was tested using data generated numerically and experimentally; that is, autonomous driving sequences from the EU Long-term dataset as well as experimental sequences using an omnidirectional mobile robot. The MHE localization output was compared against that of the UKF. The MHE results showed superior accuracy, better stability, and robustness in all cases.

While the proposed localization scheme meets the computational requirements up to a certain level, future work aims at further reducing the computational demand of the proposed algorithm. This will be done by investigating improved code-generation techniques to reduce the solution time of the optimization problem. Furthermore, the stability of the estimator will be studied based on the findings of Rao and Rawlings (2000).

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: We acknowledge the support of the Natural Sciences and Engineering Research Council of Canada (NSERC), [funding reference numbers PDF-532957-2019 (M.W. Mehrez), STPGP 506987 (S. Jeon)].