Abstract

Can discrete emotions be leveraged to combat privacy powerlessness and motivate privacy protection? Extending theorizing and research that exclusively focus on cognitive processes underlying online privacy decision-making, this study builds on gain/loss framing research and the emotions-as-frames model to understand the effectiveness of emotional appeals (i.e., hope and fear) in generating attitudinal and behavioral change in online privacy. Two online experiments that differed in message topics (Study 1: changing social media privacy settings; Study 2: rejecting website cookies) were conducted with demographically-stratified samples of U.S. adults. Results showed that gain-frame-induced hope consistently led to reduced privacy powerlessness, which was associated with increased privacy protection intention across both topics, whereas loss-frame-induced fear only led to increased protection intention in the social media context. This study advances theorizing on the role that discrete emotion plays in online privacy management from a communication perspective and highlights the novel effect of hope in motivating attitudinal and behavioral change. Findings also help to answer recent calls for remedies to privacy powerlessness and inform message-based intervention designs for consumer empowerment.

Introduction

Despite the fact that a large majority of adults in the U.S. express significant concern about companies’ data collection practices and desire more control over their personal data, few people avail themselves of existing privacy protection mechanisms, such as reading privacy policies or using secure messaging services (McClain et al., 2023). This is unfortunate because, unlike many other highly developed countries, including those in the European Union where privacy regulation is more robust, privacy protection responsibilities in most of the U.S. are largely delegated to individual users. This makes it especially important for users to be proactive in mitigating potential privacy risks online, such as identity theft, scamming, and phishing.

Of central concern to privacy scholars and advocates is thus how to motivate individual privacy protection behaviors. Previous intervention approaches have addressed this issue from a “knowledge gap” perspective, assuming that people lack awareness of or knowledge about online privacy protection. Consequently, these studies have aimed at, for example, cultivating user awareness about privacy risks, increasing technical knowledge and literacy, and teaching effective privacy-protective strategies (Boerman et al., 2024; Strycharz et al., 2019, 2021).

Contrary to the knowledge gap assumption, however, mounting evidence suggests that people do not engage in privacy protection because they develop perceptions of resignation, powerlessness, or apathy, and consider privacy protection futile in the face of corporate dataveillance practices (e.g., Draper & Turow, 2019; Hargittai & Marwick, 2016; Hoffmann et al., 2016; Lutz et al., 2020). Indeed, recent data show that 61% of U.S. adults are skeptical that anything they do will make a difference to their online privacy (McClain et al., 2023). Moreover, such perceptions of resignation and powerlessness are even more pronounced among privacy-knowledgeable individuals (Hargittai & Marwick, 2016; Hoffmann et al., 2016; Turow et al., 2015), indicating that “having more knowledge is not the protective feature that academics have suggested” (Turow et al., 2015, p. 17). And literacy training is often expensive and requires high levels of user commitment. Given these factors, it is not surprising that knowledge-based interventions have yielded mixed evidence in terms of their effectiveness in promoting privacy protection among online consumers (e.g., Strycharz et al., 2019, 2021).

Alternatively, emotional appeals that leverage theoretically-informed message design (e.g., gain/loss framing, fear appeals) to induce discrete emotions (e.g., hope and fear) and achieve persuasive outcomes offer a promising way to motivate attitudinal and behavioral change (e.g., Nabi et al., 2018), though this approach has yet to be theoretically integrated or empirically tested in the privacy scholarship. Indeed, people’s evaluations of the online environment vis-à-vis their information safety are likely to trigger various emotions, which may have implications for their subsequent attitudes (e.g., privacy powerlessness) and behaviors (e.g., self-disclosure and privacy protection; Cho et al., 2020). Nonetheless, current theoretical and intervention approaches in the online privacy literature have overwhelmingly focused on the cognitive processes underlying privacy decision-making (e.g., risk-benefit analysis, Dienlin & Metzger, 2016) and rarely consider the role of discrete emotions.

To fill this gap, we draw from research on gain/loss framing (Kahneman & Tversky, 1984) and the emotions-as-frames model (EFM; Nabi, 2003, 2007) to investigate whether gain-/loss-framed efficacy messages about online privacy protection can help combat privacy powerlessness and motivate privacy protection intention, and whether frame-induced emotions, fear and hope in particular, can explain any observed persuasive effects. We also account for the context-dependent nature of online privacy (Masur, 2019; Nissenbaum, 2010) by exploring how message effectiveness compares between two behavioral contexts where privacy risks are prevalent: adjusting social media privacy settings and rejecting cookies. Findings from this research make several important theoretical and practical contributions to online privacy scholarship by: (1) advancing the role of emotion in the theorizing of communicative privacy management, (2) demonstrating the effect of discrete emotions in addressing privacy powerlessness as a pressing yet understudied attitudinal barrier to privacy protection, and (3) highlighting the context-dependent nature of online privacy across message contexts. We begin with a review of theoretical and intervention approaches to online privacy protection. Then we demonstrate how the well-established literatures on gain/loss framing and the EFM can be leveraged to inform message-based interventions on combating privacy powerlessness and motivating privacy self-protection.

Theoretical and Intervention Approaches to Online Privacy Protection

Relevant Theoretical Perspectives

Seminal theoretical perspectives in the online privacy literature have an overwhelming focus on cognition. For example, the privacy calculus model (Laufer & Wolfe, 1977) and its later extensions (Dienlin & Metzger, 2016) posit that privacy decisions are guided by three cognitive factors: perceived benefits of information sharing, perceived costs to privacy, and privacy self-efficacy. When people perceive greater benefits of information sharing over privacy concerns, they tend to self-disclose (Dienlin & Metzger, 2016). However, people do not always engage in cost-benefit considerations to decide the extent to which they may disclose personal information. Rather, they may draw from other cues to help inform privacy decisions. One such cue that is under-theorized in the privacy literature is emotions, which are defined as short-lived internal mental states that characterize evaluative, valenced responses to events or objects (Ortony et al., 1988). Discrete emotions that arise from people’s appraisal of the online environment vis-à-vis their information safety may indeed serve as cues to guide privacy attitudes and behaviors. The privacy calculus model, however, does not account for such affect-based processes.

Although more recent work has applied an ostensibly emotion-oriented persuasion model—the protection motivation theory (PMT, Rogers, 1975, 1983)—to privacy decision-making (e.g., Boerman et al., 2021; van Ooijen et al., 2024; Wang et al., 2025), PMT in fact has an overwhelming emphasis on cognition. PMT was initially developed to understand how people respond to fear appeal messages, which include a threat component that aims to create a sense of severity and vulnerability and an efficacy component that highlights a viable approach to conquer the threat (Rogers, 1975). PMT predicts that people go through two sets of cognitive appraisals in response to a fear appeal: threat appraisal (i.e., perceived severity, perceived vulnerability, and perceived rewards of engaging in a maladaptive response) and coping appraisal (e.g., response efficacy, self-efficacy, and response cost; Rogers, 1983). When threat and coping appraisals are high, people are motivated to engage in the recommended behavior. Although in his later refinement of the theory, Rogers (1983) briefly acknowledged that fear could impact attitudes and behaviors indirectly through threat appraisals, he contended that PMT’s emphasis is on the cognitive processes and protection motivation rather than the emotion of fear as a visceral, physiological response. Therefore, while PMT connects communication to cognition and persuasion, and acknowledges the potential role of fear, its consideration of fear specifically is limited, and it does not address the potential role of other discrete emotions in persuasion outcomes.

More recently, Cho et al. (2020) built upon cognitive appraisal theories of discrete emotion (e.g., Izard, 1993; Lazarus, 1991) to advance a coping model of online privacy. According to these theories, discrete emotions that arise from appraisal patterns can impact the allocation of physical and mental resources and action readiness. Each discrete emotion, such as hope and fear, has a core relational theme that captures the person-environment relationship based on appraisals (e.g., for hope, fearing the worst but yearning for better; for fear, imminent harm). Cho et al. (2020) claimed that a person’s threat and coping appraisals of the online environment should engender a set of negative emotions (i.e., anger, frustration, regret, anxiety, and fear). Each discrete emotion varies in its associated goals, motivations, and action readiness that guide people’s coping strategies in response to online privacy threats (i.e., problem-focused, emotion-focused, and communication strategies). The primary contribution of the coping model of online privacy is recognizing the cognition-emotion-coping link in the online privacy context and specifying a range of negative emotions and their associated coping strategies. However, this model does not consider the role of communication in inducing discrete emotions; that is, a message is not required to trigger the appraisal process. For example, users may perceive high privacy threats and thus feel fear and anxiety simply due to a lack of legal privacy protection. This model also does not address the role of positive emotions such as hope in guiding privacy decisions.

In sum, current theoretical perspectives in online privacy scholarship have been foundational in guiding an understanding of how users cognitively assess online privacy risks and their coping potentials. Although emotions have been referenced, these perspectives tend to prioritize the cognitions related to emotions rather than the affective states themselves. And they consider the role of contextual, environmental factors rather than communication in these processes. Recognizing these limitations, our study aims to fill this theoretical gap in privacy research by drawing attention to the communication-emotion-attitude/behavior link.

Intervention Approaches to Online Privacy Protection

Guided by theoretical models that emphasize cognition (e.g., PMT), communication-based interventions in privacy research have consequently targeted cognitive appraisals through knowledge- and framing-based strategies. However, findings show mixed evidence in terms of their effectiveness.

Knowledge Interventions

Knowledge interventions tend to aim at cultivating user privacy awareness and educating on privacy protection strategies to achieve attitudinal and behavioral change. For example, using PMT, Strycharz et al. (2021) tested intervention messages designed to provide either technical or legal knowledge about consumer privacy against personalized advertising. Contrary to their expectations, exposure to neither the technical nor the legal message led to increased cookie rejection motivation. Message exposure also did not indirectly motivate cookie rejection through perceived severity, perceived vulnerability, self-efficacy, or response efficacy. Similarly, Strycharz et al. (2019) found that exposure to a video on technical knowledge about Google’s personalized advertising practices did not motivate opt-out behavior either directly or indirectly through perceived vulnerability, privacy concern, self-efficacy, and response efficacy. In addition, Boerman et al. (2024) tested the immediate and longitudinal effects of three intervention messages that aimed to (1) heighten threat appraisals, (2) boost coping appraisals, and (3) combat feelings of privacy fatigue, respectively, to motivate subsequent privacy protection behaviors. Their findings showed that, for example, the coping message increased privacy self-efficacy and response efficacy of blocking behavioral tracking immediately, and the fatigue message increased actual cookie rejection behavior. However, neither the threat nor the fatigue message lowered threat appraisals or privacy fatigue in either the short or the long term.

Gain/Loss Framing

Gain/loss framing has similarly received substantial attention in the privacy scholarship but has shown mixed evidence. As a message-based approach, gain/loss framing is a type of equivalence framing that presents the same piece of information in different ways (Kahneman & Tversky, 1984). Gain-framed messages tend to emphasize potential benefits and positive outcomes of engaging in a recommended action (e.g., benefits to privacy by rejecting website cookies), and loss-framed messages tend to address possible costs and negative consequences of not performing such an action (e.g., costs to privacy by not rejecting website cookies).

Empirically, Seo and Park (2019) found that gain framing was more effective at convincing users to change their password for an online service among those who perceive higher psychological ownership of the service (i.e., spend more time on and feel more attached to an online social network), whereas loss framing was more effective for those who perceive lower psychological ownership. Plachkinova and Menard (2022) demonstrated that loss-framed (vs. gain-framed) messages were more effective at increasing internet security concerns among those with low baseline concerns. Conversely, Ghaiumy Anaraky et al. (2024) found that gain/loss framing did not impact users’ adoption of a smart device network inspector. The mixed evidence is consistent with meta-analytic findings in other contexts showing that gain/loss framing has limited direct persuasion effects, and that neither type of framing is fundamentally more effective than the other (O’Keefe & Jensen, 2007, 2009). This suggests the need to investigate possible mediating and moderating contextual factors (Ghaiumy Anaraky et al., 2024; Nabi et al., 2018).

Taken together, intervention research across these communication-based approaches indicates limited effectiveness of exclusively focusing on cognition in intervention designs and underscores the need to explore alternative explanatory mechanisms. Interestingly, though much of this prior research draws from fear appeal theorizing, the actual role of discrete emotions in motivating attitudinal and behavioral change has not been empirically tested in current message-based privacy interventions. We thus turn to the emotions-as-frames model (Nabi, 2003, 2007) that allows us to theoretically integrate message design and emotion into online privacy research.

Emotions as Frames

The emotions-as-frames model (EFM; Nabi, 2003, 2007) provides a theoretical integration of communication, emotion, and persuasion. The EFM predicts that discrete emotions, once elicited by message content that is consistent with their core relational theme, can serve as frames that privilege certain information in terms of accessibility and processing, thus influencing subsequent attitudes and behaviors in pursuit of emotionally-consistent goals (Nabi, 2003, 2007). In other words, the EFM suggests that message-induced emotions mediate the effects of gain/loss framing on subsequent persuasive outcomes. Indeed, mounting evidence, including findings from a meta-analysis (Nabi et al., 2020), supports the mediating role of emotions in explaining the persuasive effects of gain/loss framing in various contexts, such as climate change and health (e.g., Bilandzic et al., 2017; Nabi et al., 2018, 2024), though the EFM has yet to be tested in the online privacy context.

Still, consistent with the EFM, direct relationships between discrete emotions and privacy attitudes and behaviors have been documented. For example, Cho et al. (2020) demonstrated that negative emotions, such as frustration, regret, anxiety, and fear, are correlated with increased privacy protection behaviors. Boss et al. (2015) found that fear induced by reading a virus warning led to higher intentions to, and more actual use of, privacy-protective strategies, such as data backup and using anti-malware software. Zhu et al. (2018) found a positive correlation between fear and mobile app users’ intention to adopt security protection measures. And Li et al. (2011) found that while joy positively predicted privacy self-efficacy and negatively predicted privacy risk belief, fear positively predicted risk belief. These studies provide evidence for direct relationships between emotions and privacy outcomes; however, it remains unclear, from a message design standpoint, how emotions can be evoked by well-constructed persuasive messages (e.g., gain/loss framing, fear appeals) to combat privacy powerlessness and motivate privacy protection.

Emotional Framing of Fear Appeals

PMT is clear that the threat component of a fear appeal message (i.e., severity and vulnerability) can generate fear, but it is less clear about how people emotionally respond to the efficacy component (i.e., response- and self-efficacy). In fact, the efficacy component that describes the viability of a recommended approach can be gain-/loss-framed to induce different emotions (Nabi, 2003, 2007). Specifically, gain framing’s emphasis on achieving possible benefits by enacting a recommended behavior likely aligns with the core relational themes of positive emotions, such as hope (i.e., fearing the worst yet yearning for better), whereas loss framing’s focus on avoiding potential loss as a result of action or inaction may capture core relational themes of negative emotions, such as fear (i.e., imminent threat). This speculation was indeed validated by Nabi et al. (2018), who found that in an environmental context, gain-framed efficacy messages induced hope, whereas loss-framed efficacy messages induced fear. However, we are not aware of existing studies that incorporate a full fear appeals approach, coupled with gain/loss framing, to study how discrete emotions can explain message effects on attitudinal and behavioral changes in online privacy contexts. The first goal of this study is therefore to validate framing effects on emotions in the online privacy domain. We thus predict:

Combating Privacy Powerlessness

Once discrete emotions are elicited, the EFM predicts that these emotions will serve as key drivers of attitudinal and behavioral change. This is especially important as privacy scholars have recently called for potential remedies to perceptions of privacy cynicism, powerlessness, and resignation, which broadly encompass (low) efficacy beliefs that individuals do not have control over privacy and thus represent a key attitudinal barrier to behavioral change that intervention approaches should target (Lutz et al., 2020; Ranzini et al., 2023). We directly respond to Ranzini et al.’s (2023) call by arguing that fear appeal messages designed to boost efficacy in privacy protection and induce emotions should help to combat feelings of powerlessness. Specifically, we posit that the action tendencies of hope (i.e., to move toward the desired outcome) and fear (i.e., to avoid danger) should help overcome feelings of powerlessness and consequently motivate privacy protection.

Indeed, though much privacy theorizing assumes user agency and control over online privacy (e.g., the privacy calculus model, communication privacy management theory), burgeoning research on privacy powerlessness reveals that people increasingly perceive online privacy risks to be inevitable and privacy protection to be ineffective (e.g., Cho, 2022; Draper & Turow, 2019; Hargittai & Marwick, 2016; Lutz et al., 2020). Various constructs have been developed to describe user disempowerment in privacy protection, such as digital resignation, privacy fatigue, privacy cynicism, and privacy helplessness (see Draper et al., 2024 for a review). Privacy cynicism, for example, is conceptualized as a coping mechanism through which people who lack privacy protection, despite high concerns, perceive privacy protection to be ineffective as a means to reduce dissonance (Hoffmann et al., 2016). Privacy cynicism includes four dimensions: powerlessness, resignation, mistrust, and uncertainty (Lutz et al., 2020). Although this research is still developing, findings show that privacy resignation (Lutz et al., 2020), privacy fatigue (Choi et al., 2018), and privacy helplessness (Cho, 2022) were negatively related to privacy protection behavior. Privacy cynicism was also found to attenuate the positive relationship between perceived vulnerability of online data breaches and privacy protection behavior (van Ooijen et al., 2024).

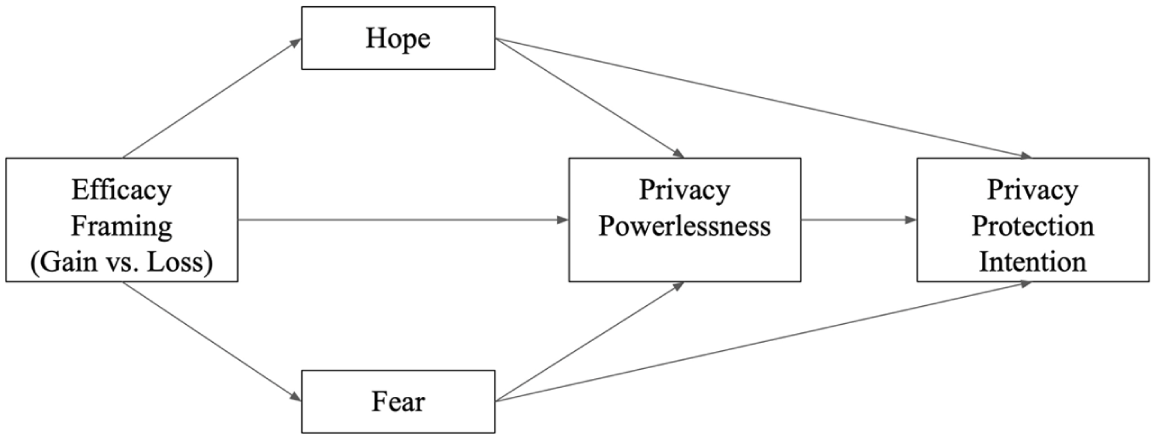

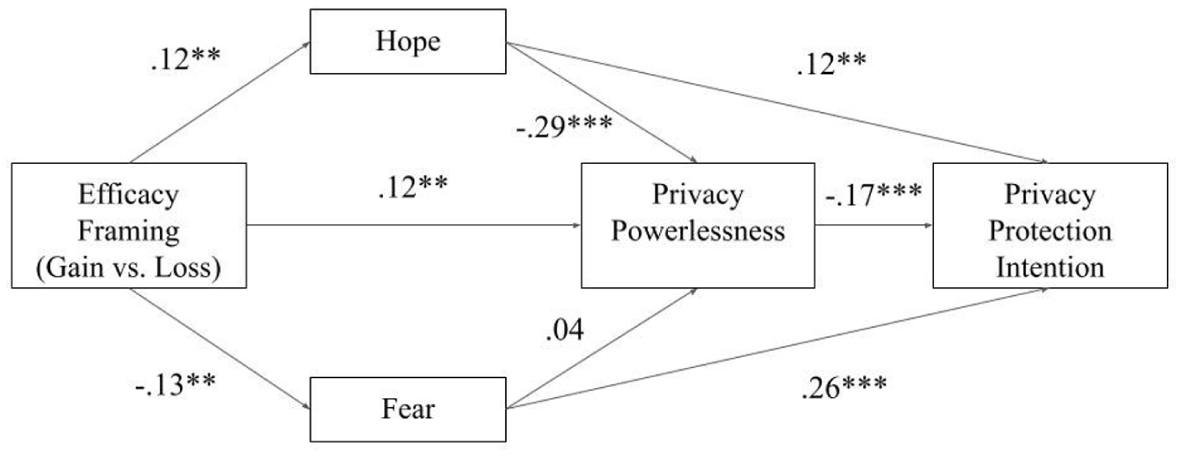

Given the mixed evidence on the effectiveness of cognition-based knowledge training (e.g., Strycharz et al., 2019, 2021), intervention approaches that target perceptions of disempowerment are especially timely (see Boerman et al., 2024). Our study focuses on privacy powerlessness specifically, which was found to be the most prevalent subdimension of privacy cynicism (Lutz et al., 2020). As theorized by the EFM, gain-/loss-framed efficacy messages should respectively induce hope and fear, which then reduce privacy powerlessness and increase privacy protection intention. Therefore, we predict the following (see Figure 1 for a conceptual diagram):

Figure 1. Hypothesized path mediation model.

Because previous research has found mixed evidence for the direct relationships between privacy powerlessness (as well as other closely related constructs) and privacy protection behaviors (e.g., Cho, 2022; Choi et al., 2018; Lutz et al., 2020), we next ask:

Finally, as an exploratory feature of this study, we account for the context-dependent nature of online privacy (Masur, 2019; Nissenbaum, 2010) by testing the proposed relationships across two different online privacy contexts. Nissenbaum’s (2010) framework of contextual integrity argues that privacy assessments are governed by four key parameters: contexts (i.e., the backdrops that inform the norm of information flow), actors (i.e., entities involved in the information flow; senders share information about the subjects to the recipients), information attributes (i.e., characteristics or nature of information being shared), and transmission principles (i.e., rules that facilitate or constrain information flow). Masur’s (2019) situational perspective similarly recognizes that various internal (e.g., situational needs and motives), interpersonal (e.g., social norms, reciprocity), and environmental (e.g., regulatory frameworks) factors combine to impact privacy and self-disclosure decisions online.

Effective fear appeal messages should therefore acknowledge these contextual factors (e.g., specific actors that pose privacy threats to the types of personal information in a given context) to better elicit emotional, attitudinal, and behavioral responses to privacy risks. Our study thus explores whether the effectiveness of emotional appeals replicates across two behavioral contexts—adjusting social media privacy settings and rejecting website cookies—that differ in various contextual factors. For example, the potential audiences for personal information on social media may include both social actors (e.g., friends, family members, scammers) and institutional actors (e.g., social media companies, advertisers), whereas the actors involved in the collection and use of website cookies are primarily institutional (Bazarova & Masur, 2020). In terms of the types of information being collected and the associated privacy risks, social media disclosures often involve personally-identifiable or relational content, and thus may elicit perceived risks such as social judgment and interpersonal conflict. On the other hand, website cookies primarily capture behavioral traces such as browsing patterns, device identifiers, and location data, which may raise concerns about institutional surveillance and algorithmic profiling. The prevalence and scale of institutional data collection may also elicit a heightened level of perceived privacy threats and a lowered sense of control (Lutz et al., 2020). Specifying these contextual differences therefore allows us to better understand how our hypothesized persuasive mechanisms may function similarly or differently across contexts and inform more nuanced message design in online privacy interventions. Therefore, we ask:

Method

Procedure and Participants

Upon IRB approval, two single-factor, posttest-only, between-subjects online experiments were conducted in August 2024 on Qualtrics. Demographically-stratified samples of U.S. adults based on sex, age, and ethnicity were recruited from Prolific (see online Supplemental Material [OSM] Section 1 for how our sample distributions compare to those of the U.S. census data). Participants were compensated based on Prolific’s guidelines. The two studies differed by message topic and the advocated behavioral change (Study 1: changing social media privacy settings; Study 2: rejecting website cookies). For both studies, participants were randomly assigned to one of three conditions (i.e., gain-framed efficacy message condition, loss-framed efficacy message condition, and no-message condition). Upon consent, participants in either the gain- or loss-framed message condition were first instructed to read a stimulus message purported to be a recent news article about online privacy. After reading the message, they answered a battery of questions that measured their emotions, privacy powerlessness, privacy protection intention, and various control variables. Those in the no-message condition, which was included to assess the persuasiveness of the constructed messages relative to no intervention, were simply instructed to fill out a questionnaire about online privacy that measured all the variables of interest. Those who already frequently protected their online privacy were screened out prior to participation to avoid ceiling effects, as they are not in the target audience for these types of persuasive appeals.

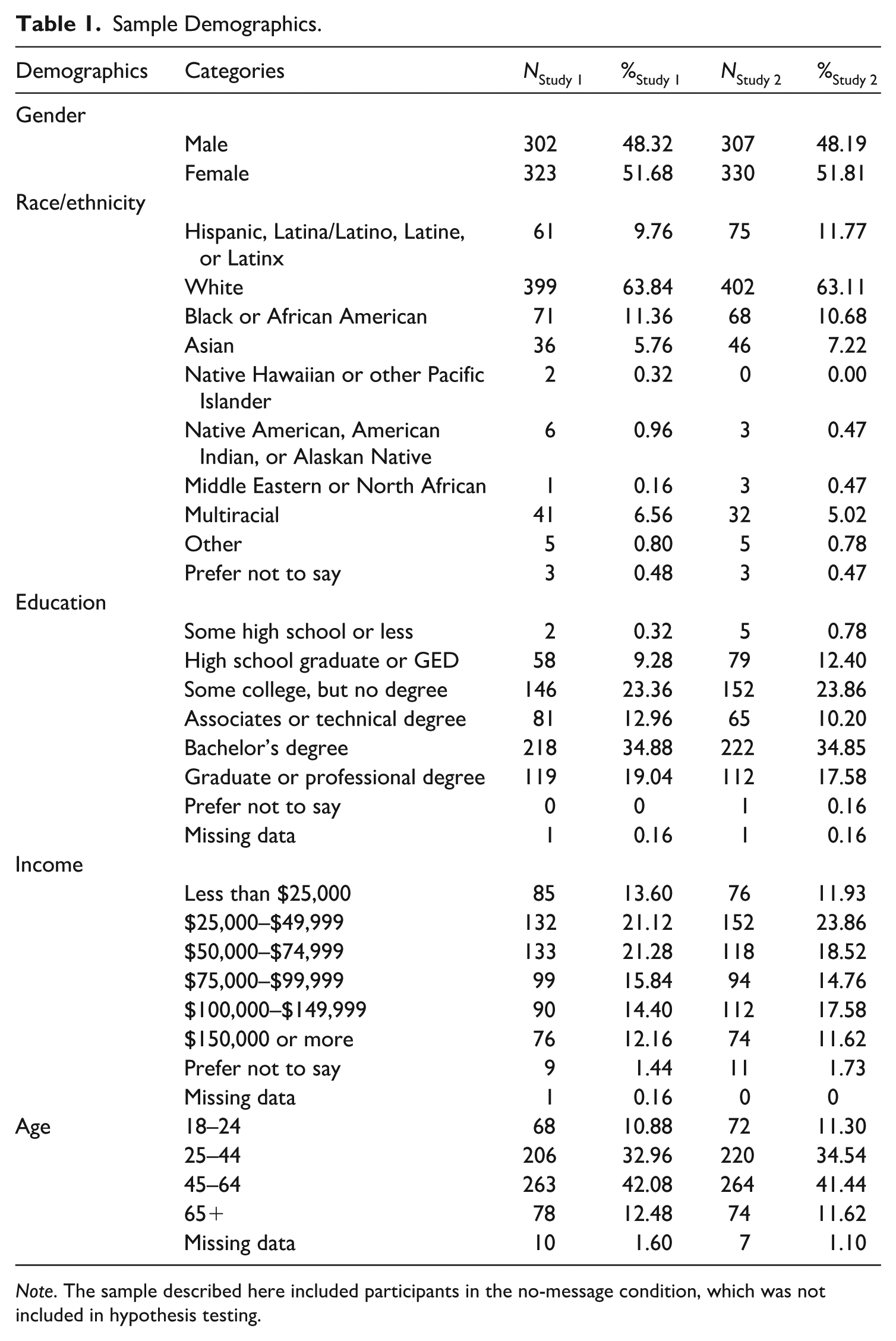

A sample size estimation based on the recommendations of Jackson (2003) and Kline (2023) suggested that a total of 260 participants would be needed to test our hypothesized path model. To account for potential low-quality data, an initial total sample of 735 participants was recruited for Study 1, and 722 participants for Study 2. Following methodological guidelines and previous research (McPhee et al., 2022; Nabi et al., 2018), data quality checks were implemented such that those who did not complete the study (n = 66), spent less than 1/3 of median completion time on the study (n = 4), failed the attention checks or admitted not paying attention to the questions (n = 82), or spent less than 10 s reading the stimuli (n = 43) were excluded from the data (exclusion counts are combined across the two studies). This left 625 participants for Study 1 (gain-frame n = 269, loss-frame n = 263, no-message n = 93) and 637 participants for Study 2 (gain-frame n = 270, loss-frame n = 272, no-message n = 95; see Table 1 for sample demographics). Given that this research is focused on the effects of variation in persuasive message structure, the no-message group was recruited simply to assess whether the constructed messages were appropriately persuasive and was thus excluded from hypothesis testing, leaving 532 participants for Study 1 and 542 participants for Study 2.
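The screening rules described above can be read as a sequential filter. The following is a minimal sketch under stated assumptions, not the authors' actual procedure: the record fields (`completed`, `duration_s`, `passed_attention`, `reading_s`) are hypothetical names, the toy records are invented, and treating a missing reading time as exempt (for no-message participants, who saw no stimulus) is an assumption. The final lines check the bookkeeping using only the counts reported in the text.

```python
from statistics import median

def screen(records, min_reading_s=10.0):
    """Apply the four exclusion rules in order; return (kept, counts)."""
    counts = {"incomplete": 0, "too_fast": 0, "inattentive": 0, "short_read": 0}
    # Assumption: the median completion time is taken over completers only.
    cutoff = median(r["duration_s"] for r in records if r["completed"]) / 3
    kept = []
    for r in records:
        if not r["completed"]:
            counts["incomplete"] += 1
        elif r["duration_s"] < cutoff:
            counts["too_fast"] += 1
        elif not r["passed_attention"]:
            counts["inattentive"] += 1
        elif r["reading_s"] is not None and r["reading_s"] < min_reading_s:
            counts["short_read"] += 1
        else:
            kept.append(r)
    return kept, counts

# Toy records, invented for illustration only.
toy = [
    {"completed": True, "duration_s": 600, "passed_attention": True, "reading_s": 45},
    {"completed": True, "duration_s": 630, "passed_attention": True, "reading_s": None},
    {"completed": False, "duration_s": 90, "passed_attention": True, "reading_s": 5},
    {"completed": True, "duration_s": 100, "passed_attention": True, "reading_s": 30},
    {"completed": True, "duration_s": 580, "passed_attention": False, "reading_s": 40},
    {"completed": True, "duration_s": 610, "passed_attention": True, "reading_s": 4},
]
kept, counts = screen(toy)

# Bookkeeping check with the counts reported in the text.
recruited = 735 + 722                             # Study 1 + Study 2
excluded = 66 + 4 + 82 + 43                       # four exclusion rules
retained = (269 + 263 + 93) + (270 + 272 + 95)    # all conditions, both studies
message_only = (269 + 263, 270 + 272)             # gain + loss conditions per study
```

Applying the rules in a fixed order means each participant is counted under at most one exclusion reason, which is what allows the four reported counts to be summed.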

Table 1. Sample Demographics.

Note. The sample described here included participants in the no-message condition, which was not included in hypothesis testing.

Stimuli Design

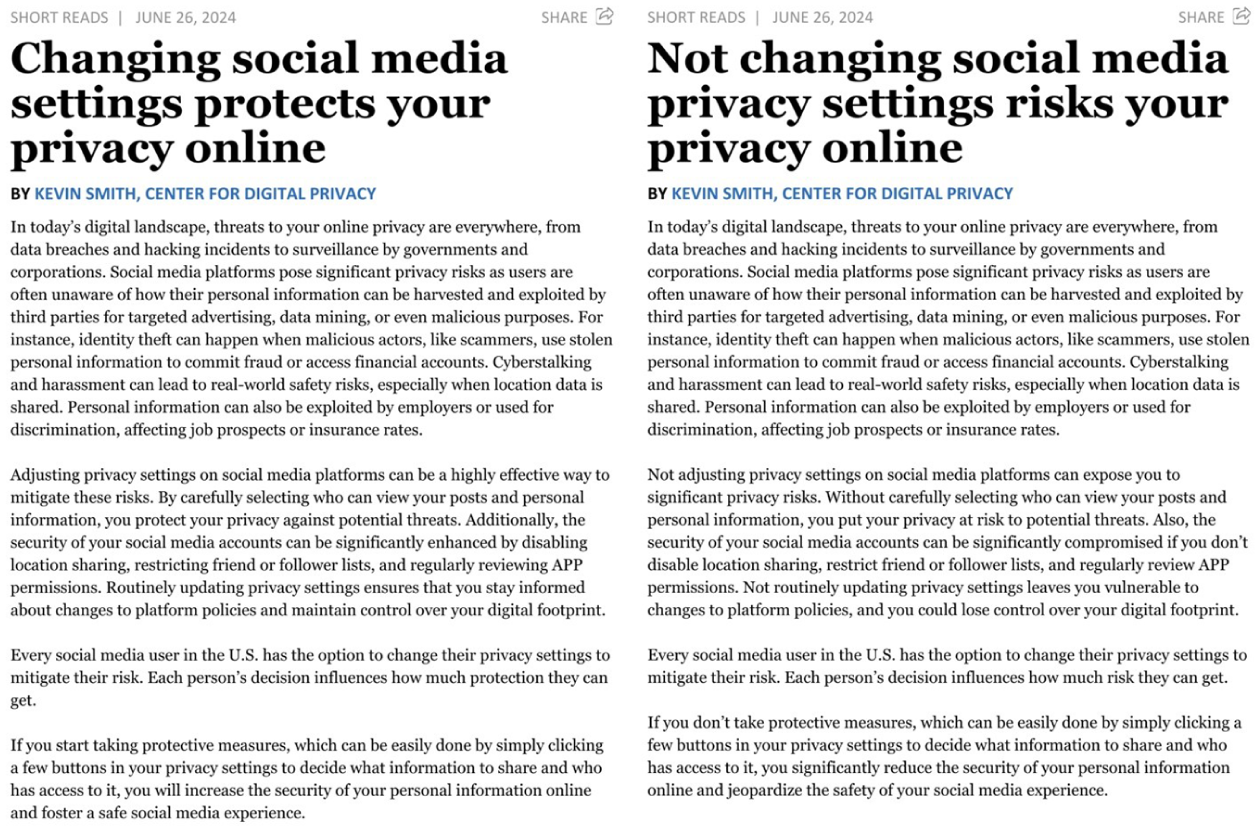

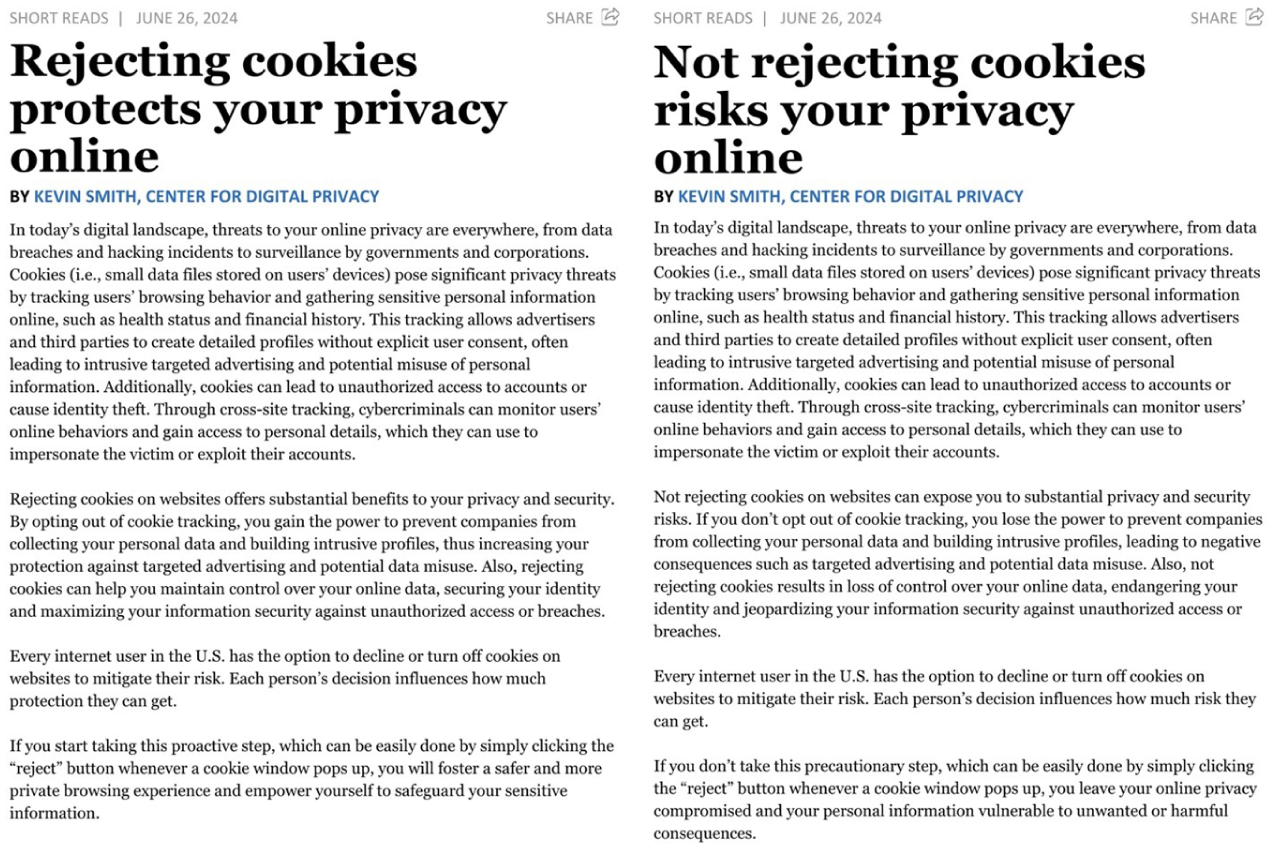

Guided by fear appeal research (Rogers, 1983; Witte, 1994), the messages were designed to start with a threat component, followed by an efficacy component (see Figures 2 and 3 for the stimuli). The threat component (i.e., paragraph 1 in the stimuli) described either the pervasive privacy risks on social media or on the Internet that could lead to severe privacy consequences, such as identity theft and cyberstalking, and remained constant for its respective topic. The efficacy component (i.e., paragraphs 2–4 in the stimuli) described how adjusting social media privacy settings or rejecting cookies could be an effective approach to mitigate such privacy threats (i.e., response efficacy) and reassured participants about their ability to protect their online privacy (i.e., self-efficacy). The gain/loss framing manipulation was incorporated into the efficacy message component, such that the gain-framed efficacy component emphasized heightened privacy protection as a result of adopting the advocated strategies (e.g., “Rejecting cookies on websites offers substantial benefits to your privacy and security”), whereas the loss-framed efficacy component addressed the loss of control over personal information as a result of not adopting the strategies (e.g., “Not rejecting cookies on websites can expose you to substantial privacy and security risks”). In other words, fear appeals served as the message context for our gain/loss framing manipulation, and consistent with equivalence framing research (Kahneman & Tversky, 1984), participants in both efficacy conditions received the same informational content that was framed differently.

Figure 2. Experimental stimuli for Study 1 (changing social media privacy settings).

Figure 3. Experimental stimuli for Study 2 (rejecting website cookies).

These messages also accounted for the key parameters of contextual integrity (Nissenbaum, 2010) by specifying, for example, the context (i.e., social media vs. the Internet/websites), actors involved (e.g., social media platforms, malicious actors, and scammers vs. advertisers and third parties), and information type (e.g., financial information and location data vs. behavioral data and automated user profiles).

Prior to the main study, we conducted a pilot study on Prolific with 312 U.S. adults. We tested each of the message components separately to ensure they met their respective theoretical assumptions (i.e., threat components induced fear, gain-/loss-framed components generated perceptions of potential gain/loss, and all messages were perceived as realistic). Findings confirmed that all assumptions were met and the manipulation was successful (see OSM Section 2 for details).

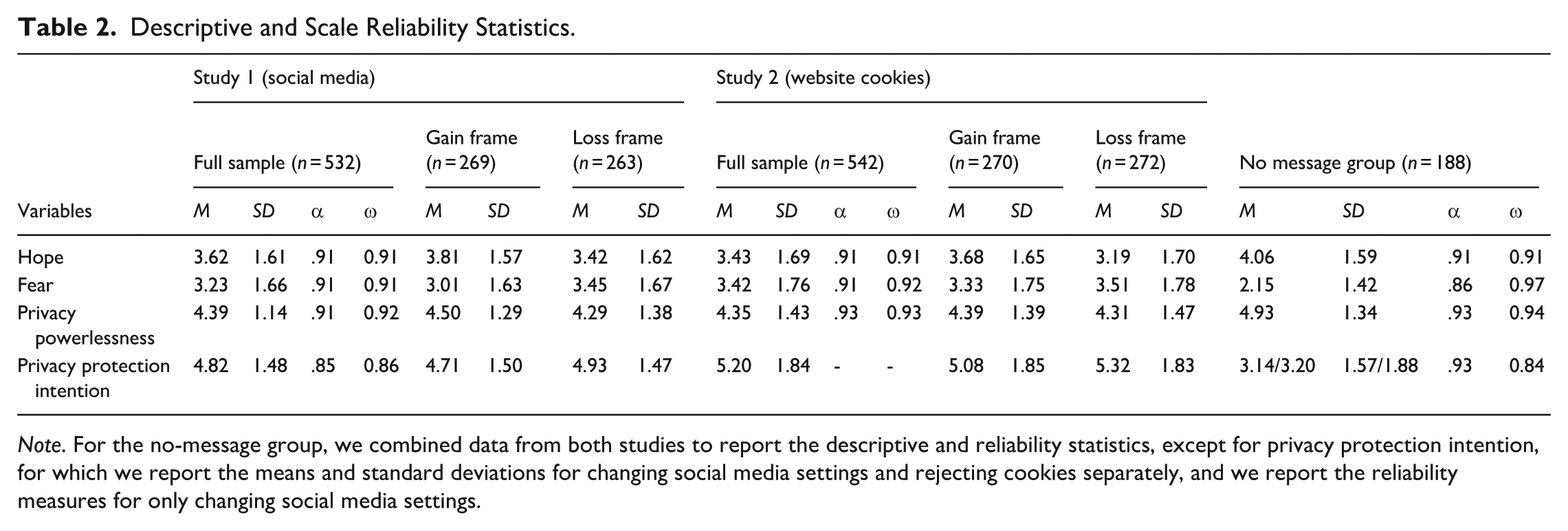

Measures

Key variables were measured based on established scales. See Table 2 for a summary of descriptive and scale reliability statistics. A complete list of survey items is available in the OSM Section 3. To measure emotions, participants reported on a 7-point scale (1 = not at all, 7 = very much) how much hope (hopeful, encouraged, optimistic) and fear (anxious, scared, worried) they experienced after reading the article (Nabi et al., 2018). Privacy powerlessness was measured by adopting the powerlessness dimension of the privacy cynicism scale (Lutz et al., 2020). On a 7-point scale (1 = strongly disagree, 7 = strongly agree), participants indicated how much they agreed with five statements, such as “Even if I try to protect my data, I can’t prevent others from accessing them.” To measure privacy protection intention, participants indicated on a 7-point scale (1 = extremely unlikely, 7 = extremely likely) their likelihood of using a battery of privacy-protective behaviors, of which five items measured changing privacy settings (i.e., “Change social media privacy settings from default,” “Restrict access to your social media profile,” “Disable location sharing on social media,” “Use an exclusive friend list for certain posts,” and “Review social media privacy policies”) and one item measured rejecting cookies (i.e., “Reject, turn off, or delete cookies or other tracking on websites”). These measures were adapted from previous research (e.g., Boerman et al., 2021; Wang & Metzger, 2023) and were specifically chosen to reflect the privacy behaviors advocated in the messages. Of note, while there are many behavioral options for adjusting social media privacy settings, the option for rejecting cookies is limited to one action (i.e., clicking the “reject” button).
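As an illustration of how multi-item measures like these are typically scored, the sketch below averages a respondent's item ratings into a scale score and computes Cronbach's alpha as one common reliability statistic. It is a hedged example: the ratings are invented, the function names are hypothetical, and the text does not state which reliability coefficient the authors report in Table 2.

```python
from statistics import mean, variance

def composite(item_scores):
    """Average one respondent's item ratings into a single scale score."""
    return mean(item_scores)

def cronbach_alpha(rows):
    """rows: one list of item ratings per respondent, items in the same order."""
    k = len(rows[0])
    items = list(zip(*rows))                       # transpose to per-item columns
    item_var = sum(variance(col) for col in items)
    total_var = variance([sum(r) for r in rows])   # variance of summed scores
    return k / (k - 1) * (1 - item_var / total_var)

# Invented 7-point ratings for the three hope items
# (hopeful, encouraged, optimistic); five hypothetical respondents.
hope_items = [
    [6, 5, 6],
    [3, 3, 2],
    [7, 6, 7],
    [4, 4, 5],
    [2, 3, 2],
]
hope_scores = [composite(r) for r in hope_items]
alpha = cronbach_alpha(hope_items)
```

The single cookie-rejection item has no internal-consistency estimate, which is consistent with the table note reporting reliability only for the social media settings items.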

Table 2. Descriptive and Scale Reliability Statistics.

Note. For the no-message group, we combined data from both studies to report the descriptive and reliability statistics, except for privacy protection intention, for which we report the means and standard deviations for changing social media settings and rejecting cookies separately, and we report the reliability measures for only changing social media settings.

Data Screening and Analysis

To test

Before running the path analysis, we checked the assumptions of multivariate and univariate normality using Mardia’s multivariate test, Henze-Zirkler’s multivariate test, Anderson-Darling’s univariate test, and Chi-Square Q-Q plots. Results showed that, for both topics, our data violated the assumption of multivariate normality, and a few individual variables violated the assumption of univariate normality, although we did not see any notable issues with outliers or missing data. To account for these violations, we used 5,000 bootstrap samples to construct 95% confidence intervals (CI) and obtained robust standard errors in the analyses.
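As a rough illustration of the resampling logic, the Python sketch below computes a percentile bootstrap 95% CI from 5,000 resamples for a single bivariate association. It is a simplified stand-in, not the path-analytic procedure used in the study: the simulated data, the variable names `hope` and `powerlessness`, and the functions `pearson_r` and `bootstrap_ci` are all hypothetical, and the percentile method is an assumption because the text does not state which bootstrap interval was requested.

```python
import random
import statistics


def pearson_r(x, y):
    """Pearson correlation between two equal-length sequences."""
    mx, my = statistics.fmean(x), statistics.fmean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / (sxx * syy) ** 0.5


def bootstrap_ci(x, y, n_boot=5000, level=0.95, seed=0):
    """Percentile bootstrap CI for the correlation between x and y."""
    rng = random.Random(seed)
    n = len(x)
    estimates = []
    for _ in range(n_boot):
        # Resample participants (rows) with replacement
        idx = [rng.randrange(n) for _ in range(n)]
        estimates.append(pearson_r([x[i] for i in idx], [y[i] for i in idx]))
    estimates.sort()
    alpha = 1 - level
    lower = estimates[int((alpha / 2) * n_boot)]
    upper = estimates[int((1 - alpha / 2) * n_boot) - 1]
    return lower, upper


# Simulated (hypothetical) scores with a negative hope-powerlessness association
rng = random.Random(42)
hope = [rng.gauss(4.0, 1.2) for _ in range(200)]
powerlessness = [5.0 - 0.4 * h + rng.gauss(0.0, 1.0) for h in hope]

low, high = bootstrap_ci(hope, powerlessness)
```

Because the interval is read directly from the empirical distribution of resampled estimates, it does not rely on the normality assumption that the data violated.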

We also validated our underlying theoretical assumption that the persuasive messages we created did, in fact, generate changes in behavioral intention. That is, for both the privacy settings topic, F(1, 623) = 89.16, p < .001, and the cookies topic, F(1, 635) = 106, p < .001, receiving a persuasive message led to higher privacy protection intention than not receiving a message (see Table 2).

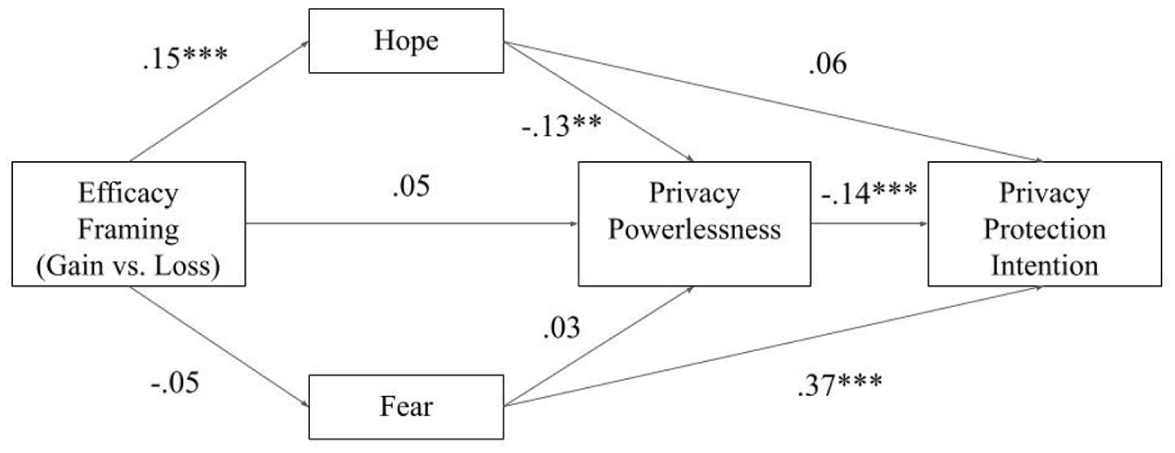

Results

We considered multiple criteria to assess model fit. A well-fitting model should have a non-significant Chi-Square test of model fit (although this test is sensitive to sample size), a Comparative Fit Index (CFI) ≥ 0.95, a root mean square error of approximation (RMSEA) ≤ 0.05 (although an RMSEA ≤ 0.08 indicates reasonable fit), and a standardized root mean square residual (SRMR) ≤ 0.05 (Browne & Cudeck, 1993; Hu & Bentler, 1999). The final path models overall demonstrated acceptable fit for both Study 1 (χ² (2) = 6.20, p = .05, CFI = 0.97, RMSEA [90% CI] = 0.06, [0.01, 0.12], SRMR = 0.03) and Study 2 (χ² (2) = 10.20, p = .01, CFI = 0.93, RMSEA [90% CI] = 0.09, [0.04, 0.14], SRMR = 0.04). Figures 4 and 5 show standardized path coefficients for the social media and the cookies topics, respectively.
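To make the comparison against these cutoffs explicit, the following Python sketch checks each reported index against the thresholds listed above. The function name `assess_fit` and its dictionary keys are hypothetical; the index values are the rounded figures reported in the text, so borderline cases (such as p = .05) depend on rounding.

```python
def assess_fit(cfi, rmsea, srmr, chi2_p):
    """Compare fit indices with the cutoffs cited in the text."""
    return {
        "chi2_nonsignificant": chi2_p >= 0.05,  # p is rounded in the text
        "cfi_ok": cfi >= 0.95,
        "rmsea_close": rmsea <= 0.05,
        "rmsea_reasonable": rmsea <= 0.08,
        "srmr_ok": srmr <= 0.05,
    }


# Point estimates as reported for the two final path models
study1 = assess_fit(cfi=0.97, rmsea=0.06, srmr=0.03, chi2_p=0.05)
study2 = assess_fit(cfi=0.93, rmsea=0.09, srmr=0.04, chi2_p=0.01)

for name, result in (("Study 1", study1), ("Study 2", study2)):
    met = [criterion for criterion, ok in result.items() if ok]
    print(name, "meets:", ", ".join(met))
```

Reporting each criterion separately, rather than a single pass/fail verdict, reflects the practice of weighing several indices together when judging overall fit.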

Figure 4. Results of path analysis for Study 1 (social media topic).

Figure 5. Results of path analysis for Study 2 (cookies topic).

Effects of Efficacy Framing on Emotion

The Mediating Role of Emotion

In testing

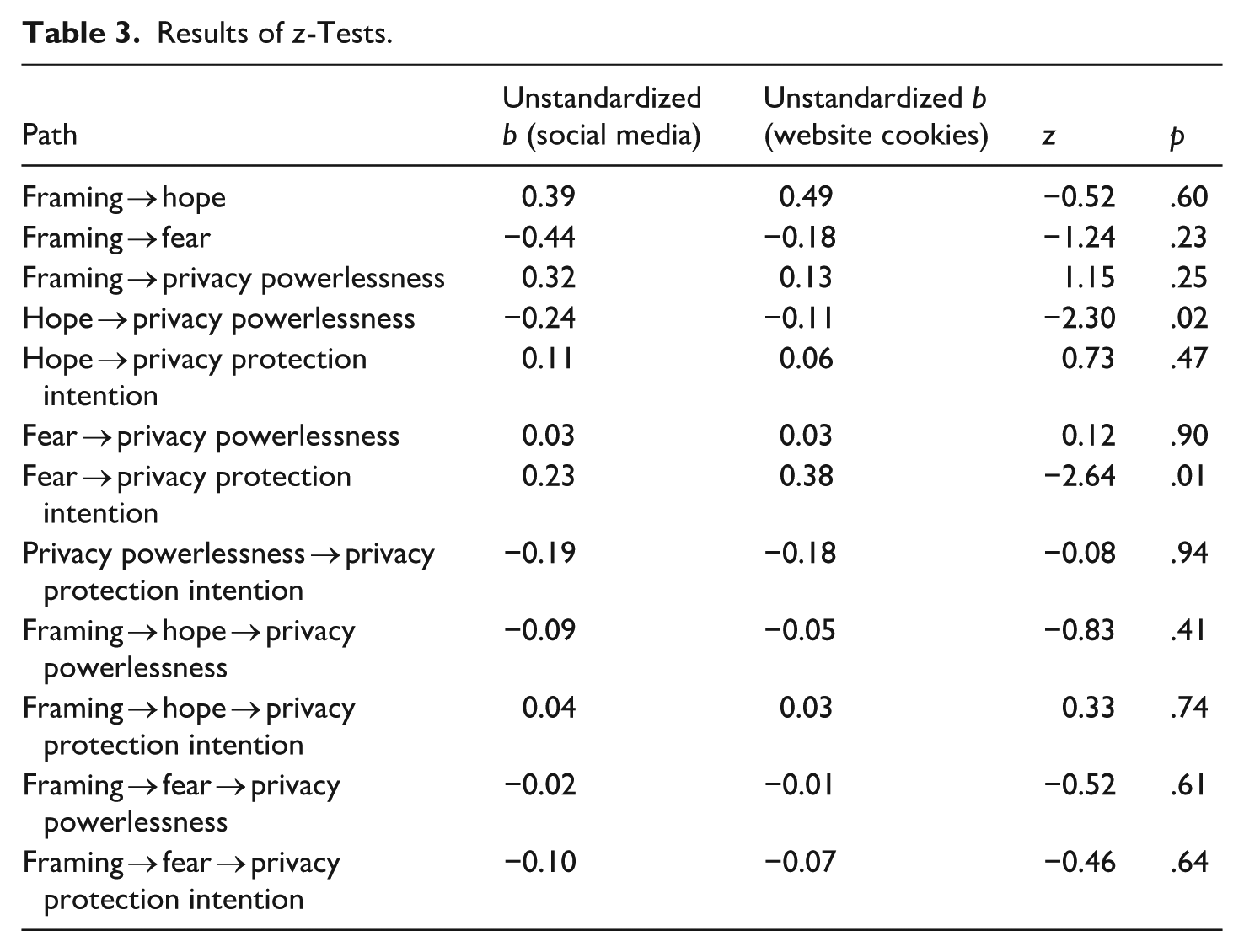

Answering

Finally,

Results of z-Tests.
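One common way to compare the same path across two independent samples is a z-test on the difference between the two coefficients. The Python sketch below shows that calculation under the assumption that the estimates come from independent samples; the exact test used in this study is not described here, and the function name `z_test_paths` and the coefficient values in the usage example are hypothetical.

```python
import math


def z_test_paths(b1, se1, b2, se2):
    """z-test for the difference between two independent path coefficients."""
    z = (b1 - b2) / math.sqrt(se1 ** 2 + se2 ** 2)
    # Two-tailed p-value from the standard normal distribution
    p = math.erfc(abs(z) / math.sqrt(2))
    return z, p


# Hypothetical coefficients and standard errors for the same path in two studies
z, p = z_test_paths(b1=-0.30, se1=0.05, b2=-0.10, se2=0.05)
```

A significant z indicates that the strength of the path differs between the two contexts, which is the kind of comparison summarized in the table of z-test results.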

Discussion

Privacy scholars and practitioners have raised concerns about the limited privacy protection strategies online users tend to adopt, despite significant risks to their personal information (e.g., McClain et al., 2023). Heightened perceptions of privacy cynicism, powerlessness, and fatigue (Choi et al., 2018; Lutz et al., 2020) further discourage users’ privacy protection motivation, thus highlighting a need for intervention approaches that specifically target such feelings of disempowerment (Ranzini et al., 2023). While the dominant cognitive focus in extant privacy theorizing (e.g., Rogers, 1983) and intervention approaches (e.g., Strycharz et al., 2019, 2021) has been fruitful, we know relatively little about the role of message-induced emotions—as a complementary yet promising theoretical mechanism—in motivating attitudinal and behavioral change in online privacy self-protection. To fill this gap in the privacy literature, we tested the effectiveness of gain-/loss-framed efficacy messages in combating privacy powerlessness and motivating privacy protection, and demonstrated the mediating role of emotions—hope and fear specifically—in explaining message effectiveness. We also showed that such processes differed between two behavioral contexts: adjusting social media privacy settings and rejecting cookies.

Findings lend considerable support to our EFM-based prediction that discrete emotions, once elicited by messages that are consistent with their core relational themes, can serve as frames to impact subsequent privacy attitudes and behaviors (e.g., Nabi, 2003; Nabi et al., 2020). Specifically, gain framing created higher hope, which was associated with lower privacy powerlessness in both contexts, and higher protection intention in the social media context. Loss framing led to higher fear in the social media context, which was associated with higher privacy protection intention. These findings provide evidence for the effectiveness of emotional appeal messages in online privacy, thus drawing attention to message-induced emotions, which have previously received scant attention in privacy scholarship.

Importantly, while both hope and fear have the potential to directly motivate behavioral changes in protection intention, only hope consistently reduced privacy powerlessness across contexts. Given that privacy powerlessness includes efficacy beliefs that privacy protection is futile, hope generated by gain-framed efficacy messages successfully reduced feelings of powerlessness, which in turn related to increased protection intention, demonstrating a message-emotion-attitude-behavior link in online privacy. On the other hand, while fear and its danger-avoidance action tendency drove people to adjust privacy settings, it did not serve to counter the lack of efficacy beliefs in privacy protection. The finding that only hope has the potential to combat attitudinal barriers is consistent with Nabi et al. (2018), who found that while gain-frame-induced hope generated greater pro-environmental attitudes and subsequently increased interest in advocacy behaviors, loss-frame-induced fear only led to an increase in advocacy behaviors. These findings have important implications. By definition, emotions are short-lived, whereas attitudes are relatively enduring (Lazarus, 1991; Rokeach, 1968). Thus, while privacy protection intention driven by fear will likely weaken over time as emotion fades, hope-induced attitudinal changes in privacy powerlessness will likely have more enduring effects. In other words, compared to fear, hope has greater potential for combating the attitudinal barrier to privacy protection and producing more sustained persuasion effects. Overall, these results show that emotion-based theoretical and intervention approaches provide a valuable addition to the privacy literature where cognition-based models dominate, and demonstrate the novel and promising role of hope in particular in generating attitudinal and behavioral change across online privacy contexts.

Finally, as an exploratory aspect of our study, we found that while some message-processing patterns replicated across contexts, others were context-specific. Generally, message effects were less salient in the website cookies context than in the social media context. In particular, fear did not differ as a function of framing in the cookies context. It is possible that the threat component of the fear appeal message (though not part of the manipulation) had already elicited high levels of fear, thus limiting any additional fear induced by the efficacy component, regardless of its framing. This might have produced a ceiling effect specifically for the cookies topic, as participants might generally feel fearful vis-à-vis institutional practices of continuous automated data collection and processing for marketing or surveillance purposes over which they have little control (Büchi et al., 2022).

Similarly, although gain framing induced higher hope in the cookies context, hope itself was not potent enough to drive changes in privacy protection intention. This may again be explained by the overarching feeling of lack of control in the website context, compared to the social media environment where privacy-related affordances and interface designs can better facilitate users’ feelings of agency. These speculations are corroborated by the z-test findings, which showed that the negative correlation between hope and privacy powerlessness was stronger in the social media context. In other words, hope had a stronger effect on reducing powerlessness in the agentic social media environment where privacy management is more possible. Interestingly, however, the positive relationship between fear and privacy protection intention was stronger in the cookies context. This suggests that although fear may not differ by framing per se, felt fear may still drive behavioral change in this context.

Overall, these findings speak to the context-dependent nature of online privacy (Masur, 2019; Nissenbaum, 2010). Future research should empirically test, perhaps by adopting a factorial vignette approach, how each of the contextual factors contributes to the effectiveness of emotional appeal messages in motivating privacy attitudinal and behavioral change. Research should also replicate our findings in other online contexts, such as e-commerce and eHealth, to further understand which messages, in which context, are more or less effective.

Limitations and Future Research

This study has a few limitations worth highlighting. We only tested two discrete emotions. This was necessary given that the core relational themes of hope and fear conceptually map onto the gain/loss frames in the message design (see Nabi, 2003). However, this limits our ability to speak to the persuasive effects of a broader range of discrete emotions. Future research should continue to explore other message-induced emotions in online privacy. For example, a message describing companies’ dataveillance practices as a threat to users’ freedom to use the internet may trigger users’ resistance and anger, which may also motivate protection intention.

Similarly, we only measured adjusting privacy settings and rejecting cookies as the two behavioral outcomes because they were advocated in the messages. Future research should continue to explore other privacy protection behaviors that can be motivated by emotions, such as self-inhibition (e.g., quitting the app to avoid future tracking; Büchi et al., 2022). Relatedly, we measured behavioral intentions rather than actual privacy protection behavior. Future research should conduct longitudinal experiments (e.g., Boerman et al., 2024) to validate message effectiveness in generating actual behavioral changes.

Finally, although we tested the message effects across two demographically-stratified samples in the U.S., results did not account for how people from particular sociodemographic backgrounds or different cultural contexts may respond differently to privacy risks described in the messages, as highlighted by recent research (e.g., Wang & Metzger, 2024). This warrants future research from a persuasion perspective, as intervention messages should be sensitive to audiences’ attitudes toward and knowledge about online privacy. Qualitative and community-based research will be instrumental in guiding more tailored intervention message design.

Contributions

With these limitations in mind, the findings make several important contributions. Theoretically, this study sheds light on the important role of message-induced discrete emotions in combating privacy powerlessness and motivating privacy protection, which is a theoretical process that traditional cognitive models of privacy do not account for. Indeed, while people often engage in cost-benefit calculus to inform their privacy decisions, it is important to recognize that emotional and physiological responses arising from people’s appraisals of their information security also guide their attitudes and behaviors (e.g., Cho et al., 2020).

Findings further contribute to the growing attention to positive emotions, such as hope, which have been overlooked in past persuasion research generally, and in the fear appeal literature specifically (Nabi & Myrick, 2019). Indeed, while fear was only a mediator for privacy protection in the social media context, the consistent mediating effects of hope across both topics suggest that in the domain of online privacy where individuals often feel helpless, positive emotions like hope can be leveraged to uplift privacy protection intentions. This is important because previous privacy interventions that draw from PMT and fear appeals tend to focus exclusively on fear (Boss et al., 2015). This finding therefore challenges the exclusive reliance on fear-based approaches and suggests that positive emotions may play a more promising role in the online privacy context. Practically, this finding can help guide message design for public education campaigns and interventions concerning online privacy.

Our theorizing also aligns with increasing scholarly interest in the affective and emotional aspects of people’s responses—particularly feelings of disempowerment—to online privacy risks. For example, Choi et al. (2018) proposed that when users face high demands in privacy protection yet feel inefficacious about meeting such demands, they may develop affective responses such as fatigue and emotional exhaustion. Cho (2022) similarly conceptualized privacy helplessness as a multidimensional construct encompassing cognitive, emotional, and motivational deficits in privacy protection. Designing emotion-focused interventions is thus important because, to the extent that privacy-fatigued individuals tend to minimize cognitive resources in response to relentless privacy risks (Choi et al., 2018), cognition-driven intervention approaches aiming to increase awareness and knowledge will likely be less appreciated or carefully processed by the audience. Future research may thus test whether emotions intentionally evoked can combat the affective aspect of privacy disempowerment, in addition to cognition.

Our findings also demonstrate the efficacy of message-based remedies for privacy powerlessness (Ranzini et al., 2023). To our knowledge, only Boerman et al. (2024) have tested message effects on combating privacy fatigue and motivating privacy protection. Their fatigue message focused on acknowledging feelings of privacy fatigue and boosting response efficacy, though the message did not produce the expected effects. Our study goes further by adopting a full fear appeals approach (i.e., including both threat and efficacy) and testing emotions as an explanatory mechanism. Nonetheless, we focused only on privacy powerlessness, so future research should continue to test message effectiveness on other related constructs. For example, can emotional appeals help to reduce other subdimensions of privacy cynicism, such as mistrust and uncertainty (Lutz et al., 2020)? Ultimately, our hope is that this study inspires a fruitful line of future intervention research that focuses on careful applications of persuasion theory to design effective intervention messages to motivate online privacy protection in the age of pervasive privacy risks.

Supplemental Material

Supplemental material, sj-docx-1-crx-10.1177_00936502261415680 for The Pulse of Privacy: The Role of Efficacy Framing and Discrete Emotion in Combating Privacy Powerlessness and Motivating Online Privacy Protection by Laurent H. Wang, Lindsay B. Miller, Miriam J. Metzger and Robin L. Nabi in Communication Research

Footnotes

Acknowledgements

The authors would like to thank Dr. Jiaying Liu for her advice on the data analysis and the three anonymous reviewers for their helpful feedback that made this paper stronger.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the National Science Foundation under Grant No. 2151340.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

The data reported in this study is available upon request from the corresponding author.

Supplemental Material

Supplemental material for this article is available online.
