Abstract

Widespread concerns about the pervasiveness of misinformation have propelled one antidote to the center of scholarly attention: the journalistic fact check. Yet, fact checks often do not work as intended. While most fact checks are text only, a compelling theoretical argument can be made for using a video format instead. In this pre-registered experiment conducted in Germany (N = 1,093), we investigated whether using video versus text can improve fact checks’ ability to correct misperceptions about transgender women, cannabis consumption, migration, and climate change. Video fact checks outperformed text fact checks, with those holding false or uncertain pre-existing beliefs benefiting the most. We contribute to motivated reasoning theory the idea that visual information can override directional reasoning better than textual information, and that processing fluency is the mechanism by which this occurs. Our findings paint an optimistic picture for the ability of fact checks to debunk misinformation, especially for those holding misperceptions.

The importance of an informed citizenry for a thriving democracy has been widely recognized (Galston, 2001), as has the inherent difficulty in supplying citizens with accurate information (Kaiser et al., 2022; Tandoc et al., 2019). Given the ubiquity of misinformation (that is, false or misleading claims presented as true), fears run high that we are not far from a future in which people will find it difficult to agree even on basic facts (Dan, Paris et al., 2021; Kuklinski et al., 2000; Pennycook et al., 2021).

As one antidote to the spread of misinformation, journalists created the “fact check” – published assessments of the validity of claims (Walter et al., 2020). Fast on the heels of these fact checks came studies to determine their effectiveness. The findings have been mixed. Some studies find that fact checks correct false beliefs (Bode & Vraga, 2015; Hameleers et al., 2020; Kotz et al., 2023); others find they do not (Garrett & Weeks, 2013; Jarman, 2014; Nyhan & Reifler, 2010). Recent meta-analyses are similarly mixed—Chan and Albarracín (2023) concluded that various forms of corrections, not just journalistic fact checks, are mostly unsuccessful in correcting science-relevant misinformation, while Walter et al. (2020) found that journalistic fact checks did have an overall positive effect on belief correction of political misinformation, but the effect was small. Both meta-analyses identified one key explanation for different findings—message presentation, defined as the way the fact check is displayed, for example, whether it is short or long, simple or complex, text-only or accompanied by visuals.

This study takes up the challenge of improving fact checks’ effectiveness via message presentation. While most fact checks are text only, a compelling theoretical argument can be made for using a video format instead. Hence, we tested the idea that video fact checks are more effective than text fact checks. Also, we examined if motivated reasoning, operationalized as pre-existing beliefs, moderates the impact of fact checks (Walter et al., 2020; Wang, 2021), and if processing fluency is a causal mechanism.

As a primary contribution, we present evidence that video fact checks outperform text fact checks in correcting beliefs and that those holding false or uncertain pre-existing beliefs benefit most. Thus, this study contributes to our theoretical understanding of motivated reasoning processes, by clarifying that the video format can override directional goals. These findings paint a more optimistic picture than many recent writings, suggesting that videos are particularly apt to debunk misinformation.

As a second contribution, we present evidence that processing fluency may be the causal mechanism behind this effect, thus acting as a mediator of belief correction via the video format. The study of processing fluency as a potential mediator is important as it helps us explain why video fact checks are more successful in correcting false beliefs than text fact checks. According to theory and evidence, information that is easier to process (that is, fluent) is judged as more accurate (Schwarz, 2004). Videos are generally more fluent than text (Dan, 2018a). Thus, we hypothesize that video fact checks will be easier to cognitively process, which in turn leads people to believe they are accurate and thus enhances belief correction.

In addition to the theoretical contributions outlined above, this study is important for at least two reasons. First, it takes up the challenge of improving fact checks’ persuasiveness in an effort to create better informed citizens, focusing on polarized topics. Polarized topics are those on which citizens are divided, in the sense that their views on the “right” thing to do (and thus ultimately policy preferences) differ tremendously. While these views might still differ when people can agree on basic facts on the issue at hand, they are likely to differ widely when the public is misinformed. The latter case threatens the common knowledge base normally acting as a social glue. For this reason, focusing efforts on correcting false beliefs about polarized topics is an endeavor worth pursuing (see Dan & Arendt, 2021, 2024; Dan & Dixon, 2021). Yet, false beliefs about polarized topics are known to be particularly difficult to debunk because they drive people to engage in directionally motivated reasoning to protect their political identity (Chan & Albarracín, 2023). Our study takes motivated reasoning into account by measuring pre-existing beliefs, as a meta-analysis showed that corrections are less effective at correcting beliefs when they contradict a person’s pre-existing attitudes (Rathje et al., 2023); thus, it is particularly important to find ways to correct these kinds of misbeliefs.

Second, this research matters because it studies fact checks in a country other than the U.S., thus allowing scholarship to make more generalizable claims. While 53 countries around the world currently have fact-checking organizations, the scientific literature focuses predominantly on one (Walter et al., 2020). Germany has a plethora of fact checks, which are sorely needed considering a large portion of citizens are prone to belief in misinformation—as many as a third believe in conspiracy theories (Deutsche Welle, 2020). Studying other countries is also important because differences exist between fact checks in the U.S. and other nations (Ferracioli et al., 2022).

Literature Review

Visuals and Moving Images

While most fact checks appear to be text based, video fact checks in Europe constitute “a handful attached to successful broadcasters” (Graves & Cherubini, 2016, p. 8) such as the German broadcaster ARD and Spain’s Maldita.es. PolitiFact (2021) in the US also offers video fact checks on YouTube. This paper makes a case for news organizations to incorporate more video fact checks. Research has consistently shown that visuals (still photographs and moving images) are processed differently than text (Barry, 1997; Graber, 1990; for a recent review, see Dan, 2018a, 2018b). Dual Coding Theory (DCT; Paivio, 1986) especially speaks to this phenomenon by specifying two separate processing systems, one for language and one for nonverbal information. It theorizes that information coded in both systems has advantages, including being better remembered. This bears on video fact checks because their use of both verbal and visual information engages both processing systems, improving judgment. The key to this dual-processing model is its integration of the affective system, with its automatic response via emotions, and the deliberate efforts of the cognitive system. Theoretically, the effect of video fact checks is predicted by DCT, which posits that when information that uses both visual and verbal systems is pooled, an additive effect produces better results than when only one system is used (Paivio, 1979).

DCT has been supported with evidence that visuals attract and hold news users’ attention more than text, are processed faster and prior to words, are better remembered and aid understanding (Dan, 2018a, 2018b; Graber, 1990). They garner attention (McCombs et al., 1988) and more are processed – 75% of images in contrast with 25% of text (Garcia & Stark, 1991). People learn more from a story with pictures (Graber, 1990). Images are valuable framing devices (Coleman, 2010; Dan & Dimitrova, 2022) and can have an agenda-setting effect, leading people to believe that issues with bigger pictures are more important (Wanta, 1988). They also make information more touching, generate emotions (Graber, 1990), and can even improve moral reasoning (Coleman, 2006). This is not to ascribe special power to images, for many such claims are oversimplistic (Domke et al., 2002). Instead, images interact with words and our pre-existing ideas of the world (Domke et al., 2002), which is the process proposed and tested in this study via inclusion of a moderator representing pre-existing beliefs.

The format of information affects how people perceive it (Sundar et al., 2021). Moving images are especially believable, even to the point of being able to replace existing memories (Murphy & Flynn, 2021). Video has been shown to be better believed than text or audio, a phenomenon known as the realism heuristic or “seeing is believing” (Dan & Rauter, 2023; Sundar et al., 2021, p. 301; Wittenberg et al., 2021). Because videos appear to record reality, they tend to be processed more superficially (Sundar et al., 2021), with their accuracy often taken for granted (Dan, 2018a). Also, videos make use of both audio and visual channels; seeing what is said being reflected on screen turns the message into one that is easy to process cognitively (Paivio, 1979; see Dan et al., 2020). Finally, video fact checks do not require audiences to read, as a narrator speaks most of the information; this too can increase processing fluency (Ten Brinke & Weisbuch, 2020). Sundar et al. (2021) showed that viewers believed fake news more when it was presented in video than in text or audio.

These findings bolster the theoretical grounding for how and why video would foster greater responsiveness to fact-checking messages, yet they have rarely been addressed in research on fact checks’ effectiveness. More interest has been in visuals as misinformation (Dan, Paris et al., 2021; Weikmann & Lecheler, 2023) than in visuals as corrective information. A conceptual piece argued in favor of using video fact checks (Dan, 2021) and an interview-based study with German fact checkers revealed their openness toward this format (Dan, 2022; see also Dan & Rauter, 2023). To our knowledge, only one study has tested the effect of video format on belief correction, finding that it corrected beliefs better than text fact checks (Young et al., 2018). While that study clearly contributed to our knowledge, the lessons that can be learned from it are limited as the stimulus focused on a single topic. Single messages in experiments are problematic because there may be something unique to the message selected that is influencing the results, whereas other messages might elicit different results. Treatments are designed to be broadly applicable, so evidence of their effectiveness should be demonstrated across a variety of messages. Additionally, a number of factors are confounded in any message, but these can be controlled by randomizing the extraneous factors across a range of stimulus messages (Jackson, 1992). Thus, we test multiple polarized topics. Our first hypothesis proposes a main effect of video format on belief correction:

H1: Fact checks in video format will be significantly better at correcting misinformed beliefs than fact checks in text format.

Motivated Reasoning

To expect that merely changing the format of a fact check from text to video will result in belief correction would be to oversimplify the context in which fact checks operate. It has been widely established that false political beliefs are quite persistent. People holding them might be motivated to process the correction provided in a fact check in a biased manner, and, accordingly, opt to hold on to false beliefs. Misinformation is particularly difficult to debunk when topics are polarized (Chan & Albarracín, 2023; see Dan & Dixon, 2021). Motivated reasoning theory (Kunda, 1990) explains this phenomenon. Indeed, one powerful way we understand the world is via our tendency to confirm our pre-existing beliefs (Flynn et al., 2017; Kunda, 1990; Zaller, 1992). To illustrate, one recent study found belief correction to be more likely among uninformed individuals than among those who were misinformed (Li & Wagner, 2020). Evidence suggests that those holding false (Kotz et al., 2023) or uncertain pre-existing beliefs (Wang, 2021) are harder to convince to update their views following exposure to a fact check (Walter et al., 2020). Thus, it is important to distinguish between the different types of “informedness” to enhance the effects of corrections (Li & Wagner, 2020, p. 666). Exposing individuals holding false pre-existing beliefs to fact checks containing counter-attitudinal information may prompt them to pursue directional goals, where they try to confirm their pre-existing beliefs (Lewandowsky et al., 2012), instead of accuracy goals, where they try to be correct. This line of argument makes intuitive sense, but it is grim, as those holding false or uncertain beliefs are the ones most in need of belief correction.

As plausible as this argument may seem, the opposite may also be true: Moving the needle on belief correction may be more likely among those holding false or uncertain beliefs, as they have more room for improvement than those whose views are already aligned with the fact check. Another plausible explanation is found in one study where ingroup bias was overridden by an interest to watch out for problems in one’s own group’s claims (Knobloch-Westerwick et al., 2020).

Compelling theoretical arguments can be made as to why videos should be able to facilitate more belief correction than text, thus potentially overriding directionally motivated reasoning. This includes evidence of a “tipping point” where encountering ever more discrepant information forces people to give up their false beliefs (Redlawsk et al., 2010). These authors even calculate it takes about 13% of incongruent information for a person’s evaluations to become more correct. We theorize that the fluency of video fact checks may speed up this process by virtue of fluent information being perceived as more accurate.

There also is conflicting evidence on whether rational thought causes people to update the accuracy of their beliefs or dig into their pre-existing ones. Motivated System 2 Reasoning theory says that careful deliberation makes people more likely to engage in directional reasoning (Kahan, 2013), whereas other work in the Classical Reasoning Account theory has found that deliberation can override pre-existing beliefs (Pennycook & Rand, 2019). However, this requires these individuals to pay attention to the information presented, suggesting that attention-getting message presentation could play an important part. For the reasons above, we believe videos should be able to accomplish this better than text. While this has never been tested, Hameleers et al.’s (2020) findings are encouraging: Using a fact check with still images resulted in more belief correction for those holding false pre-existing beliefs. That study used a still image with a tweet, not a video. The present study tests whether the video format can overcome motivated reasoning.

Taken together, previous research has acknowledged the importance of pre-existing beliefs for the study of fact checks’ effects. But the specific nature of their role—whether those holding correct, uncertain, or false pre-existing beliefs benefit most—remains unclear. We see merit in the theory and evidence of the characteristics of video outlined above and propose:

H2: The effect of fact check format will be moderated by pre-existing beliefs; that is, people who hold uncertain or false pre-existing beliefs will have greater belief correction from video fact checks.

Processing Fluency

One theoretical reason why video fact checks should be more effective is because they are easier to cognitively process than text (Ten Brinke & Weisbuch, 2020)—what is termed “processing fluency.” Processing fluency is defined as “the subjective experience of ease or difficulty associated with completing a mental task” (Oppenheimer, 2008, p. 237). When processing requires less effort, the message is more likely to be judged as accurate (Schwarz, 2004). Fluency can be induced by the audiovisual format, among others (Meppelink et al., 2015).

In this study, we test if videos make corrective information fluent, thus easier to process, which leads people to superficial rather than deep processing (Sundar et al., 2021). That is, people may be lazy rather than biased (Pennycook & Rand, 2019). This ease of processing acts as a heuristic that tells people the information is accurate (Schwarz, 2004). Fluency is also associated with positive affect – it is remarkably consistent in eliciting positive evaluations, thought to be because of its ability to simplify complex tasks of judgment (Lick & Johnson, 2015). It is prominent in studies of judgment correction (Wegener & Petty, 1995), which we find to be particularly appropriate for video fact checks, yet fluency has been understudied in this area. Fluency could be one causal mechanism for findings that show people believe fake news more in video than text or audio format (Sundar et al., 2021); that fact checks with still images result in more belief correction for those holding false pre-existing beliefs (Hameleers et al., 2020); that video corrects beliefs better than text fact checks (Young et al., 2018); and that videos improve abilities to tell truthful from false videos (Bhargava et al., 2023). However, none of these studies looked at fluency as a causal mechanism.

We expand on this to suggest that videos will induce fluency, leading audiences to conclude the information is more accurate. Where others showed that the fluency of misinformation enables the formation of misperceptions (Sundar et al., 2021), we test whether elevating the fluency of correct information increases belief correction:

H3: The effect of fact check format on belief correction will be mediated by processing fluency, such that video fact checks will be perceived as more fluent, leading to greater belief correction.

We also examine fluency as it is moderated by motivated reasoning. It is not just that people are either motivated reasoners or influenced by fluency; both can be true: people can be lazy, but they can also be biased. No single explanation is likely to account for why diverse individuals update their beliefs; thus, we are interested in how these two concepts interact. People can engage in both heuristic thinking and motivated reasoning, so we study both.

We theorize that people’s pre-existing beliefs that are contradicted by a fact check will be harder to correct but will still be correctable, and this is facilitated by fluency. Relevant to our study are findings showing that plausibility of information may help overcome directional motivated reasoning (Pennycook & Rand, 2019). We see overlap in plausibility and the accuracy assessments generated by fluency and posit that this assessment of accuracy and plausibility overrides biased reasoning. We posit an interaction of fluency and motivated reasoning:

H4: Pre-existing beliefs will moderate the effect of video fact checks, mediated by processing fluency, leading to significantly more belief correction for those with false or uncertain pre-existing beliefs.

Method

This pre-registered experiment used a single-factor between-subjects factorial design with a control group in a moderation/mediation model. The factor was the fact check’s format (video, text). The mediator was processing fluency. The moderator was motivated reasoning. We used a multiple-message design with stimuli on four topics to increase validity and rule out unique stimulus effects (Reeves et al., 2016). Stimuli were created with the help of a video company and professional actors and can be accessed on OSF (https://osf.io/pbjkt/).

Participants were randomly assigned to one of the two conditions or a control group and then saw two topics (i.e., interventions) within that condition. We did this to avoid fatigue, rather than exposing each person to all four fact checks. This allowed for a test of robustness and generalizability to assess whether effects were topic dependent.

Sample

German adults were recruited from the database of Simple Opinions and offered small incentives. A G*Power analysis using effect sizes from previous research (Walter et al., 2020; Young et al., 2018) indicated that 995 participants would yield a power level of 0.95. A total of 1,359 completed the study. We removed 266 participants using pre-registered criteria, leaving a total of 1,093 across the three groups: video, n = 486; text, n = 487; control, n = 120. As explained above, each participant was exposed to two interventions. The interventions were analyzed separately because the samples were not independent; the same people participated in intervention 1 and intervention 2, to which they were exposed one after another. When control groups are used only to demonstrate that the treatment is better than no treatment, it is a more efficient use of resources for them to not be as large as the treatment groups; in this case, control groups can have fewer participants (Bausell, 1994; White, 2018).

Participants were diverse in terms of age (M = 44.89, SD = 15.36), gender (51.4% female, 48% male, 0.5% other), education (69.26% had a high school diploma or less, 30.74% had at least some college), and political ideology (M = 3.91, SD = 0.95; on a scale anchored 1 = Extremely left and 7 = Extremely right).

A randomization check showed participants were equivalently distributed across the format factor, Wilks’ Lambda F(5, 966) = 0.72, p = .611, on age, gender, education, political ideology, and pre-existing beliefs.

Quota Sampling of Pre-Existing Beliefs

We used the following pre-registered strategy for quota building. People entering the study were asked about their pre-existing beliefs on the stimuli topics on 7-point scales (1 = extremely certain it is false; 2 = moderately certain it is false; 3 = slightly certain it is false; 4 = not certain it is false or true; 5 = slightly certain it is true; 6 = moderately certain it is true; 7 = extremely certain it is true; Li & Wagner, 2020). The four statements were: “Transgender women are stronger than cis gender women” (true); “Not eating meat helps reduce climate change” (true); “Cannabis is more harmful than alcohol” (false); and “Sea rescues create an incentive for migrants to come to Europe” (false). Scores for false claims were reversed so that higher scores indicated more accurate beliefs. These verdicts agree with those of actual fact checks published on these topics. See the stimuli on OSF for the scientific sources backing up these claims (https://osf.io/pbjkt/).

The survey company used these items to recruit nearly equal numbers of participants in three groups: holding correct pre-existing beliefs, holding uncertain pre-existing beliefs, holding false pre-existing beliefs. The following pre-registered strategy was used to assign participants into groups based on their pre-existing beliefs on the four topics—and thus on the likelihood of them engaging in directionally motivated reasoning. If respondents endorsed the false claim (or rejected the correct claim) on at least three of the four topics, they were assigned to the group that holds false beliefs; if they endorsed the correct claim (or rejected the false claim) on three topics, they were assigned to correct beliefs. In the case of ties, the type of belief that got endorsed the most determined the category to which the participant was assigned. For instance, a person who believed two false claims but got one or no claims correct and was uncertain about one or two was assigned to the “false beliefs” group; a person who got two claims correct, was uncertain about one or two, and believed one or no false claims was assigned to the “correct beliefs” category. The uncertain-beliefs group included those who either: selected “not certain it is false or true” on at least three claims; were correct on two topics and incorrect on two; or were correct on one topic, incorrect on one, and uncertain on two topics. Cutoff points were: false (1–3), uncertain (4), and correct (5–7), giving us three groups at about 33% each. This strategy was implemented for quota-building purposes only. For the analyses, a precise measure was used, with a new variable created to determine prior belief versus post belief for each item separately.
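The assignment rules above can be illustrated with a short sketch. This is not the survey company's actual implementation; the function names are hypothetical, and the sketch assumes that each response has already been reverse-scored where needed, so that higher values always indicate more accurate beliefs.

```python
from collections import Counter


def categorize_item(score):
    """Map one reverse-scored 7-point response to a belief category.

    Cutoffs follow the text: 1-3 = false, 4 = uncertain, 5-7 = correct.
    """
    if not 1 <= score <= 7:
        raise ValueError("score must be on the 1-7 scale")
    if score <= 3:
        return "false"
    if score == 4:
        return "uncertain"
    return "correct"


def assign_quota_group(scores):
    """Assign a participant to a quota group (hypothetical helper).

    `scores` holds one reverse-scored response per topic (four topics).
    """
    if len(scores) != 4:
        raise ValueError("expected one score per topic (four topics)")
    counts = Counter(categorize_item(s) for s in scores)
    n_false, n_correct = counts["false"], counts["correct"]
    # Three or more false items, or two false items that outnumber the
    # correct ones, place the participant in the false-beliefs group.
    if n_false >= 2 and n_false > n_correct:
        return "false"
    # The mirror-image rule applies to the correct-beliefs group.
    if n_correct >= 2 and n_correct > n_false:
        return "correct"
    # Everything else: three or more uncertain items, a 2-2 split, or
    # one correct, one false, and two uncertain items.
    return "uncertain"


if __name__ == "__main__":
    print(assign_quota_group([1, 2, 3, 7]))  # three false items
    print(assign_quota_group([1, 2, 6, 7]))  # two false, two correct
```

Expressing the tie rules as two symmetric comparisons reproduces every case listed in the paragraph above, including the two examples given for the "false beliefs" and "correct beliefs" groups.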

Experimental Manipulation

Groups

We used three experimental conditions: video, text, and control. Participants randomly allocated to a treatment condition saw two fact checks attributed to a fictitious fact-checking organization named Faktenchecker. Those in the video condition saw two fact checks that used a video format, and those randomly assigned to the text condition saw two text fact checks that were essentially a transcript of the videos used in the video condition. Participants in the control condition saw two unrelated videos of a stand-up comedian performing a routine. Young et al. (2018) showed no differences between comedy video fact checks and non-comedy video fact checks.

Stimuli

We modeled the fact checks after those produced by #Faktenfinder, the fact-checking initiative of the German public service broadcaster ARD, to imitate a credible, nonpartisan fact-checking brand. Pew Research Center (2018) research shows ARD is trusted by 8 out of 10 people in Germany. Also, the ARD is the most trusted news brand and the one that is used most offline and online (Hölig, 2023). We created the text fact checks using one of the authors’ experience as a professional journalist, opting for an uncluttered layout, showing the text as it would be on a mobile device, thus with just a few words per line and split on multiple screens. A professional video company created the video fact checks based on our script, contracting professional actors. All stimuli, including the full videos, can be found on OSF (https://osf.io/pbjkt/; see also Supplemental Appendix Tables 1 and 2).

We selected topics of misinformation in Germany that met Garrett and Weeks’s (2013) definition as “contentious and highly polarized” (p. 631) at the time of the research (ARD, 2023). Unlike most fact-check studies, which use only claims that are false, this study uses two claims that are true and two that are false. While most fact checks debunk misinformation that is commonly believed by conservatives (Walter et al., 2020), this study includes one misperception held by the left wing in Germany: that trans and cis gender women are about the same in strength; they are not.

In creating these fact checks, we did not repeat the misinformation, because repetition can inadvertently strengthen it and make it resistant to correction (Garrett et al., 2013). We included well-argued, detailed, new, and credible information, as well as transparent statements about the verification methods, sources, and organization. In the videos, we did not use stereotypical images such as people in traditional Muslim garb (Garrett et al., 2013).

We minimized the number of camera changes and the amount of new information to aid fluency and avoid cognitive and information overload (Lang et al., 2013). Each fact check also contained one sentence with base rate information (e.g., “Trans women are 21% faster than cis women before feminizing hormone therapy and 12% faster after that”). In the videos, this information was shown as text on screen while the journalist-narrator spoke it. Additionally, the video fact checks used visuals redundant with the spoken information to facilitate processing (Dan, 2018a). For example, when the journalist-narrator said, “In summary, trans women are stronger than cis women. There is a scientific consensus on this. That alone is not enough for success in top-class sport, because endurance, lean body mass and coordination also play an important role. This is why trans women were allowed into the women’s competition at the Olympics. But scientists agree that trans women are stronger than cis women,” the video showed Laurel Hubbard, a trans woman weightlifter at the 2021 Olympics.

Professional actors were used for the journalist-narrator. Men were used because 70% to 75% of journalists in Germany are men (Janson, 2019). For the text version, a man’s byline was used (i.e., “Martin Behrens”).

The wording of each fact check was nearly identical across video and text, with the text fact checks being almost a transcript of the videos, altered only to conform to news writing style. All videos were about 2 minutes long because that is the length viewers tend to like best (Chen et al., 2015) and approximates the length of ARD video fact checks. Text versions were 400 to 450 words.

The fact checks began by briefly stating that the purpose of the video or story was to examine widely circulated claims with an open mind, ensuring that the verdict was objectively drawn. The video and text story used information from scientific sources such as academic journals, government research, and databases, cited accordingly (e.g., “ ‘Combined effect of alcohol and cannabis,’ Psychopharmacology, 2021”). Each fact check contained three scientific sources debunking the misinformation and endorsing the correct claim. All studies referenced a scientific consensus. Audiences were told what was done to fact-check the claim at hand such as reaching out to those posting the original claim on social media to get their sources, combing through scientific databases, and talking to scientists.

Manipulation Check

A manipulation check was conducted (N = 75) to test the finished fact checks. Details can be found on OSF (https://osf.io/pbjkt/). The manipulation check confirmed that the fact-checked topics were perceived as having the intended political lean: Using ANOVA with Bonferroni tests, the right-leaning transgender story was rated as significantly more favorable to the right than the left-leaning climate change (p < .001), cannabis (p < .001), and migration (p < .001) stories; there was no difference between the left-wing stories (climate vs. cannabis, p = .339; climate vs. migration, p = .676; cannabis vs. migration, p = 1.00). As intended, the video fact checks were rated as highly fluent on 7-point scales (M = 6.00, SD = 1.03), and verbally and visually redundant (M = 4.96, SD = 1.46). The fact checks were perceived to be realistic (M = 4.53, SD = 1.47), and showed no significant differences in realism between text and video, F(3, 225) = 1.85, p = .138. There were no differences between story topics on realism, F(3, 225) = 1.60, p = .191, fluency, F(3, 225) = 0.269, p = .847, or redundancy, F(3, 225) = 2.410, p = .118.

Measures

The full questionnaire can be found on OSF (https://osf.io/pbjkt/).

Motivated Reasoning

Motivated reasoning, the moderator, was measured using a 7-point scale with the items recording pre-existing beliefs related to the four topics reported above (Transgender M = 3.50, SD = 1.43; Climate change M = 4.26, SD = 1.82; Cannabis M = 4.49, SD = 1.73; Migration M = 3.65, SD = 1.72). False claims were reversed; higher values represent more correct views.

Belief Correction

Post-exposure beliefs were assessed after participants saw each fact check, using the same items as for motivated reasoning. We created a difference score by subtracting the pre-treatment belief score from the post-treatment score for each claim. We matched the pre- with the post-exposure belief on each specific story each person got. For instance, someone who got the transgender story whose pre-existing belief about transgender women was 3 (false) and had a post-exposure transgender belief of 7 (true), received a belief correction score of 4 (7 – 3 = 4). This person’s beliefs about transgender women were corrected by 4 points on a 7-point scale. A score of zero means there was no belief correction. This was repeated for each person with the second fact-checking intervention each person received (Belief correction: Transgender M = 2.10, SD = 2.01, n = 485; Climate change M = 1.17, SD = 1.71, n = 487; Cannabis M = 1.08, SD = 1.96, n = 486; Migration M = 1.39, SD = 1.89, n = 488). Participants were exposed to two topics, so the total N across topics is 1,946—double the 973 participants in treatment groups. Higher scores indicate more belief correction.
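The difference score described above can be sketched as follows. This is an illustrative sketch rather than the authors' analysis code: the topic labels and function names are hypothetical, and the sketch assumes raw responses on the 1 to 7 scale, with reverse scoring applied to the two claims that the study rated as false.

```python
# Hypothetical topic labels; the study rated these two claims as false.
FALSE_CLAIMS = {"cannabis", "migration"}


def accuracy_score(topic, raw_score):
    """Return a 1-7 score where higher always means a more accurate belief.

    Responses to false claims are reversed (8 - raw), as described in the
    quota-sampling section; responses to true claims are kept as they are.
    """
    if not 1 <= raw_score <= 7:
        raise ValueError("raw_score must be on the 1-7 scale")
    return 8 - raw_score if topic in FALSE_CLAIMS else raw_score


def belief_correction(topic, raw_pre, raw_post):
    """Post-exposure accuracy minus pre-exposure accuracy for one topic."""
    return accuracy_score(topic, raw_post) - accuracy_score(topic, raw_pre)


if __name__ == "__main__":
    # Worked example from the text: pre = 3, post = 7 on the transgender item.
    print(belief_correction("transgender", 3, 7))  # 4

    # Sample-size check from the text: two topics per treated participant.
    topic_ns = {"transgender": 485, "climate": 487,
                "cannabis": 486, "migration": 488}
    print(sum(topic_ns.values()) == 2 * (486 + 487))  # True
```

Under this scoring, a positive value means the belief moved toward the fact check's verdict, zero means no change, and a negative value means the belief moved away from it.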

Processing Fluency

Processing fluency, the mediator, was measured with 7-point semantic differentials on difficult/easy, unclear/clear, effortful/effortless, and incomprehensible/comprehensible (Cronbach’s α First intervention = 0.841; Second intervention = 0.913). We arrived at these measures following Graf et al.’s (2018) exhaustive study designed to determine the most psychometrically valid and reliable way to measure fluency. They concluded that the two best measures were a five-item scale with one measure from each of the five dimensions of fluency effects, and a one-item scale. We used four of the five, eliminating the disfluent/fluent item because it did not have a German translation. Graf et al. (2018) also showed that one item – difficult/easy – was “sufficient to capture the fluency experience” (p. 394) and recommended use of the single-item measure because of its parsimony. We reasoned that eliminating one of the five items, while including the recommended single item, would not compromise the scale. As reported above, this measure showed good reliability. Means and standard deviations were: First intervention (M = 5.54, SD = 1.33); Second intervention (M = 5.68, SD = 1.37). Higher scores indicate higher processing fluency.
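For readers less familiar with the reliability coefficient, the sketch below shows one standard way to compute Cronbach's α from item-level responses. The ratings are hypothetical and serve only to illustrate the formula; they are not the study's data, and the function name is an assumption.

```python
from statistics import variance

def cronbach_alpha(responses):
    """Cronbach's alpha for a list of respondents, each a list of k item scores."""
    k = len(responses[0])
    items = list(zip(*responses))  # one tuple of scores per item
    sum_item_variances = sum(variance(item) for item in items)
    total_variance = variance([sum(r) for r in responses])
    return (k / (k - 1)) * (1 - sum_item_variances / total_variance)

# Hypothetical ratings on the four semantic differentials (illustration only).
ratings = [
    [6, 6, 5, 6],
    [4, 5, 4, 4],
    [7, 7, 6, 7],
    [3, 3, 4, 3],
    [5, 6, 5, 5],
]
alpha = cronbach_alpha(ratings)
```

The coefficient approaches 1 when respondents rate all four items similarly, which is the pattern the reported values of 0.841 and 0.913 suggest.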

Procedure

Upon providing informed consent, participants were first asked their pre-existing beliefs concerning all four topics of our stimuli. Participants were randomly exposed to fact checks on two of the four topics, with questions about the other two topics serving as masking questions. Immediately after exposure to each fact check, participants were asked the same questions on their beliefs, used to determine belief correction, and about processing fluency. Demographics were collected at the end. Participants randomly assigned to the control group watched two control videos and answered the same questions on their beliefs.

Ethics & Pre-Registration Statement

The IRB of the University of Texas at Austin approved this study (ID: 00002988; dated September 20, 2022). This study was pre-registered on OSF (https://osf.io/pbjkt/).

Results

Our first task was to determine whether fact checks corrected false beliefs. We compared the belief correction scores in the treatment groups to those in the control group. Both video (M = 0.64, SD = 0.73) and text fact checks (M = 0.46, SD = 0.68) were better at correcting beliefs than the control (M = 0.09, SD = 0.48), F(2, 1090) = 32.68, p < .001, η2 = 0.057. Having established that fact checks were able to correct beliefs, we then focused on comparing treatment groups.
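As a quick consistency check, the effect size can be recovered from the F statistic and its degrees of freedom, because in a one-way design η² equals F·df_effect / (F·df_effect + df_error). The function name below is an illustrative assumption; the inputs are the values reported above.

```python
def eta_squared_from_f(f_value: float, df_effect: int, df_error: int) -> float:
    """(Partial) eta squared recovered from an F statistic and its degrees of freedom."""
    return (f_value * df_effect) / (f_value * df_effect + df_error)

# One-way comparison of video, text, and control reported above.
omnibus = eta_squared_from_f(32.68, 2, 1090)
print(round(omnibus, 3))
```

In factorial designs the same identity yields partial η². Applied to the two-way ANOVAs in the Hypothesis Testing section (for example, F(1, 967) = 13.77), it gives values that agree with the reported effect sizes to three decimals, which suggests those are partial η².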

Hypothesis Testing

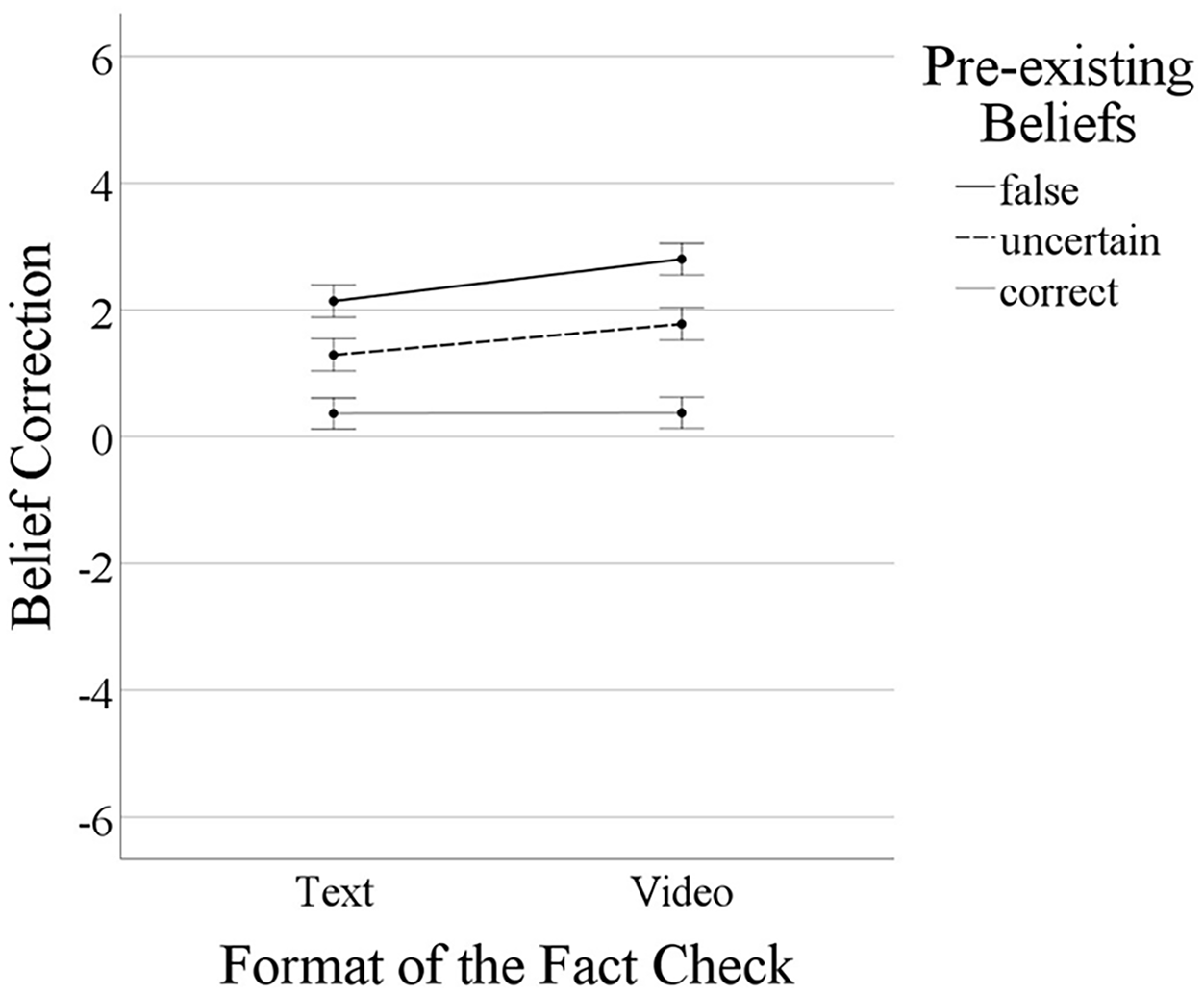

In the first intervention, there were main effects for format, F(1, 967) = 13.77, p < .001, η² = 0.014, and for pre-existing beliefs, F(2, 967) = 138.50, p < .001, η² = 0.223. Also, there was a significant interaction, F(2, 967) = 3.61, p = .027, η² = 0.007. All six groups showed means with confidence intervals excluding zero, indicating that all fact-checking interventions corrected beliefs (see Figure 1). The most beneficial effect was elicited in those who originally held false or uncertain pre-existing beliefs, with videos prompting even higher belief correction than text.

Figure 1. Strength of the effect of video versus text fact checks on belief correction depending on pre-existing beliefs.

This is supported by additional bivariate t-tests, assessing the strength of the difference between text and video fact checks: In those with correct pre-existing beliefs, videos (M = 0.38, SD = 1.43) corrected beliefs about the same as text fact checks (M = 0.37, SD = 1.33), t(338) = 0.06, ∆M = 0.01, Cohen’s d = 0.01, p = .954. Yet, in those holding uncertain pre-existing beliefs and in those with false pre-existing beliefs, videos outperformed text fact checks. Specifically, for those with uncertain pre-existing beliefs, videos (M = 1.78, SD = 1.39) worked considerably better than text fact checks (M = 1.29, SD = 1.45), t(311) = 3.04, ∆M = 0.49, Cohen’s d = 0.34, p = .003. In those with false pre-existing beliefs, videos (M = 2.80, SD = 2.05) outperformed text fact checks once again (M = 2.14, SD = 1.96), t(318) = 2.96, ∆M = 0.66, Cohen’s d = 0.33, p = .003. Note that the unstandardized mean difference (∆M) between the groups is even stronger in those with false pre-existing beliefs (∆M = 0.66) compared to those with uncertain pre-existing beliefs (∆M = 0.49). However, due to the larger variability in the data of those with false pre-existing beliefs compared to those with uncertain pre-existing beliefs, as indicated by the higher standard deviations reported above, the standardized mean difference—as expressed in the effect size estimate Cohen’s d—between these two groups appeared to be approximately the same.
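The relation between the unstandardized and standardized differences can be illustrated from the reported means and standard deviations. This is a rough sketch that assumes equal group sizes within each comparison (the per-cell n is not given here), so the results only approximate the reported Cohen's d values; the function name is hypothetical.

```python
from math import sqrt

def cohens_d(mean_a, sd_a, mean_b, sd_b):
    """Cohen's d using the root mean square of two SDs (assumes equal group sizes)."""
    pooled_sd = sqrt((sd_a ** 2 + sd_b ** 2) / 2)
    return (mean_a - mean_b) / pooled_sd

# (video M, video SD, text M, text SD) as reported above.
groups = {
    "correct": (0.38, 1.43, 0.37, 1.33),
    "uncertain": (1.78, 1.39, 1.29, 1.45),
    "false": (2.80, 2.05, 2.14, 1.96),
}
effect_sizes = {name: cohens_d(*stats) for name, stats in groups.items()}
```

Because the pooled standard deviation is about 2.0 in the false-belief group but about 1.4 in the uncertain group, the larger raw difference of 0.66 shrinks to roughly the same standardized size as the raw difference of 0.49.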

In a test of robustness, the analysis of the second intervention that participants received generally replicated the findings reported above. An ANOVA indicated main effects both for format, F(1, 967) = 14.75, p < .001, η² = 0.015, and for pre-existing beliefs, F(2, 967) = 153.00, p < .001, η² = 0.240, in addition to a significant interaction effect, F(2, 967) = 3.22, p = .040, η² = 0.007, also supporting H2. The findings were thus robust across both interventions.

We investigated the generalizability of findings by testing whether the size of the predicted interaction effect depended on the specific topic of the fact check. To do so, we re-ran both ANOVAs reported above, this time including story topic as an additional factor. The format × pre-existing beliefs × topic interaction term was not significant, either for the first story participants received, F(6, 949) = 0.66, p = .686, η² = 0.004, or for the second story, F(6, 949) = 0.36, p = .903, η² = 0.002. Thus, the moderation effect predicted in H2 did not depend on topic, increasing confidence in the generalizability of our findings.

For the first intervention, video fact checks increased processing fluency, B = 0.53, 95% CI [0.37, 0.69], SE = 0.83, p < .001, and fluency increased belief correction, B = 0.22, 95% CI [0.14, 0.31], SE = 0.45, p < .001. There was a significant direct effect of format on belief correction, B = 0.27, 95% CI [0.04, 0.50], SE = 0.12, p = .022.

The same pattern was observed for the second intervention: Video fact checks increased processing fluency, B = 0.28, 95% CI [0.11, 0.45], SE = 0.09, p = .001, which in turn increased belief correction, B = 0.27, 95% CI [0.18, 0.36], SE = 0.05, p < .001. There was a significant direct effect of format on belief correction, B = 0.40, 95% CI [0.15, 0.65], SE = 0.13, p = .002.
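In a linear mediation model, the indirect effect is conventionally estimated as the product of the two paths (format → fluency, fluency → belief correction). The sketch below only illustrates that arithmetic with the coefficients reported above; the products are not figures reported in this passage, and judging their significance would require a bootstrap confidence interval computed from the raw data. The function name and dictionary layout are assumptions.

```python
def indirect_effect(a: float, b: float) -> float:
    """Product-of-coefficients estimate of the indirect effect (a path times b path)."""
    return a * b

# (a: format -> fluency, b: fluency -> belief correction, c': direct effect), as reported above.
paths = {
    "first intervention": (0.53, 0.22, 0.27),
    "second intervention": (0.28, 0.27, 0.40),
}
for label, (a, b, c_prime) in paths.items():
    ab = indirect_effect(a, b)
    # In a linear model without interactions, total effect = direct + indirect.
    print(f"{label}: indirect = {ab:.3f}, total = {c_prime + ab:.3f}")
```

A significant direct effect alongside a non-zero indirect effect, as in both interventions here, is the pattern usually described as partial mediation.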

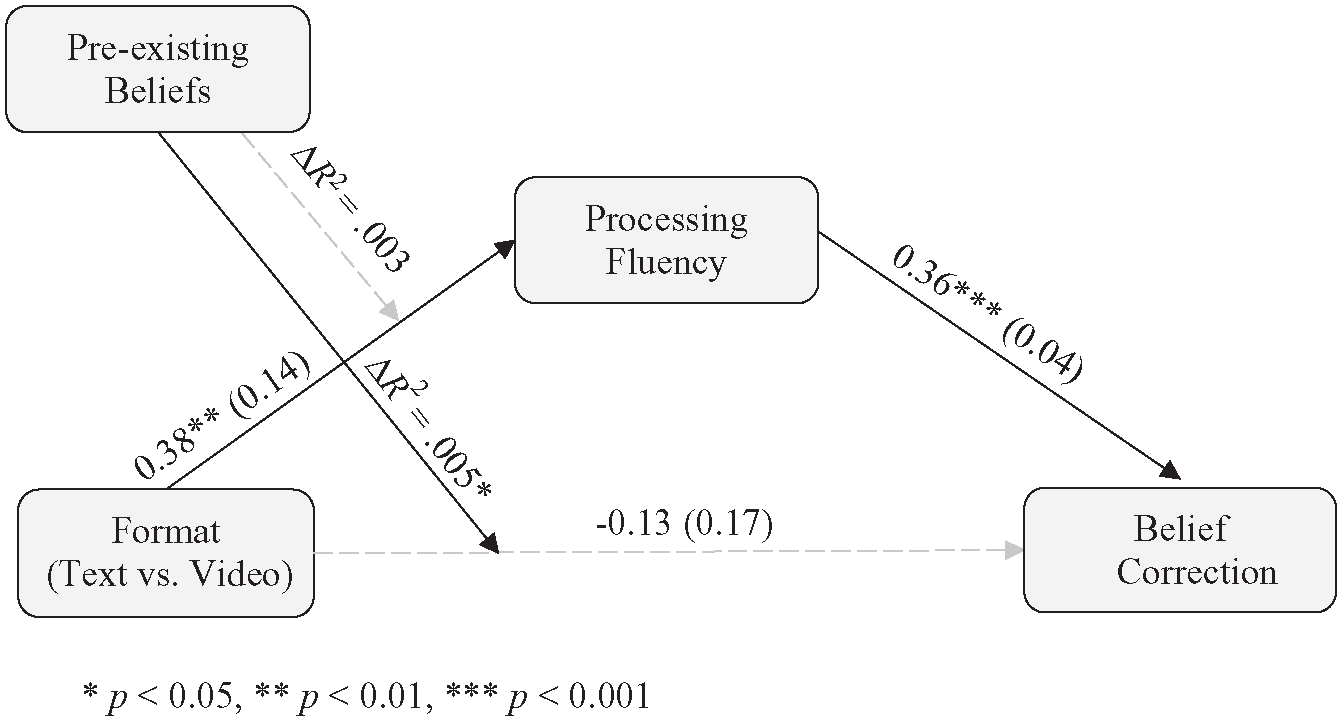

Figure 2. The effect of video versus text fact checks on belief correction mediated by processing fluency and moderated by pre-existing beliefs.

For the first intervention, we found evidence for mediation: Video fact checks increased processing fluency, B = 0.38, 95% CI [0.11, 0.65], SE = 0.14, p = .006, which in turn increased belief correction, B = 0.36, 95% CI [0.28, 0.43], SE = 0.04, p < .001. There was not a significant direct effect of format on belief correction, B = −0.13, 95% CI [−0.46, 0.21], SE = 0.17, p = .456, but there was the expected unconditional interaction format × pre-existing beliefs related to the direct effect path (format → belief correction), F(2, 966) = 3.14, ∆R2 = 0.005, p = .044.

However, pre-existing beliefs did not moderate the effect of format on processing fluency on the indirect effect path (format → fluency → belief correction), F(2, 967) = 1.47, ∆R2 = 0.003, p = .230. Format elicited an indirect effect (format → fluency → belief correction) on belief correction on all levels of pre-existing beliefs: correct beliefs, indirect effect = 0.13, 95% CI [0.05, 0.24], uncertain beliefs, indirect effect = 0.26, 95% CI [0.15, 0.37], and false beliefs, indirect effect = 0.18, 95% CI [0.07, 0.32].

Testing for robustness, the second intervention partly replicated the effects above: While video fact checks did not significantly increase processing fluency, B = 0.25, 95% CI [−0.02, 0.52], SE = 0.14, p = .066, processing fluency did increase belief correction, B = 0.38, 95% CI [0.30, 0.46], SE = 0.04, p < .001, providing partial support for the mediation hypothesized in H4. We say “partial” because the indirect effect on belief correction via processing fluency was not statistically significant in all three pre-existing beliefs groups for the second intervention: Although processing fluency mediated the effect of format on belief correction for the correct, Coeff = 0.10, 95% CI [0.01, 0.19], and the uncertain groups, Coeff = 0.13, 95% CI [0.01, 0.27], this was not evident in the false group, Coeff = 0.10, 95% CI [–0.02, 0.23]. Consistent with the findings reported above, pre-existing beliefs moderated the direct effect path (format → belief correction), F(2, 966) = 3.75, ∆R2 = 0.005, p = .024, but not the indirect path (format → fluency → belief correction), F(2, 967) = 0.13, ∆R2 < 0.001, p = .880.
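The decision rule behind the word "partial" is that an indirect effect counts as supported only when its confidence interval excludes zero. A minimal sketch of that rule, applied to the second-intervention intervals reported above (the function and variable names are illustrative assumptions):

```python
def excludes_zero(lower: float, upper: float) -> bool:
    """True when a confidence interval lies entirely above or entirely below zero."""
    return lower > 0 or upper < 0

# Conditional indirect effects for the second intervention, as reported above.
second_intervention = {
    "correct": (0.01, 0.19),
    "uncertain": (0.01, 0.27),
    "false": (-0.02, 0.23),
}
supported = {group: excludes_zero(*ci) for group, ci in second_intervention.items()}
```

Only the interval for the false-belief group spans zero, which is why mediation is described as supported in two of the three groups.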

Taken together, conditional process analysis provided tentative evidence that the effect of format on belief correction was mediated by processing fluency, although the results were not fully consistent across interventions (see the test for robustness above). Specifically, in the first intervention, the indirect effect of format on belief correction via processing fluency did not depend on motivated reasoning: it was observed for those with correct, uncertain, and false pre-existing beliefs. In the second intervention, however, a significant indirect effect was observed for the correct and uncertain groups, but not for the false group. Put simply, the analyses are consistent with the idea that the video advantage was partly due to videos being easier to process than text.

More pre-registered analyses can be found on OSF (https://osf.io/pbjkt/).

Discussion

In light of concerns about significant portions of the population believing information that is demonstrably false, this study set out to test one way to enhance fact-check effects. Using a pre-registered experiment, we assessed fact checks’ ability to correct beliefs when using different formats (text vs. video). In addition, we investigated the role of directionally motivated reasoning and the causal mechanism of processing fluency.

The primary contribution to the literature is to provide evidence that video fact checks outperformed text fact checks. This updates a recent meta-analysis (Walter et al., 2020), which had to rely on just eight studies testing the effect of fact checks supported by visuals. Of those studies, seven incorporated still images in their stimuli (e.g., photographs), and only one used video stimuli (Young et al., 2018). Not included in the meta-analysis is another study of TikTok video corrections. While these were not fact checks and the study did not examine belief correction, it did show that videos improved people’s ability to distinguish between subsequent fake and truthful videos (Bhargava et al., 2023). The other studies of still images showed mixed effects. Whereas fact checks with still images may or may not help with belief correction more than text, videos do seem to elicit a stronger beneficial effect. This insight is particularly important given the increasing popularity of video-based social media platforms.

The take-away for the journalism profession is that the realistic and attention-getting moving images in video play an important role in correcting audiences’ false beliefs. This happens because such videos are more realistic, and thus more believable, and also because they are easy to process. It is therefore important that professionals create videos with this in mind: minimize the number of camera changes, use images that say the same thing as the words being spoken, and use text on screen for especially difficult information such as that from scientific studies.

We find these results to be entirely consistent with the theory about moving images that led us to test this idea. Primarily, we are guided by Dual Coding Theory, which says that when information makes use of both verbal and nonverbal processing channels, as video does, all manner of outcomes are better than when only one channel is used, as with text (see Dan, 2018a, 2018b; Paivio, 1979). Additionally, the realism heuristic (Sundar et al., 2021, p. 301; Wittenberg et al., 2021) proposes that people believe moving images more because they are realistic. We reason that when information is believed, that should encourage people to incorporate it into their pre-existing attitudes, updating them when the new information conflicts with the old.

We theorize that this use of dual processing channels and the greater sense of realism that videos provide should lead to information that is processed fluently, which is the theoretical mechanism that this study investigated. We proposed that participants who watched and listened to the video fact checks will find them easier to comprehend than those who read the text versions. The key linkage of fluency to belief correction is that fluent information is perceived as more accurate (Schwarz, 2004). This should be especially influential in encouraging people to change their prior beliefs to be in line with the truth. We found support for the idea that the mechanism for this belief correction by video fact checks was cognitive processing fluency. Although there were some imperfections in results, findings from conditional process analyses were largely consistent.

All of this, we proposed, is moderated by people’s pre-existing beliefs, which was confirmed in this study. Whereas some studies found that those holding false beliefs cannot be brought back on track (Kotz et al., 2023; Wang, 2021), others have shown that they can (Pennycook & Rand, 2019; Redlawsk et al., 2010). Our analyses aligned with those showing that directionally motivated reasoners can be persuaded to correct their misbeliefs. In this study, those holding false or uncertain pre-existing beliefs experienced the most belief correction, a finding consistent with Hameleers et al. (2020). We showed even larger effects using videos compared to text accompanied by still images.

It has been proposed that people are not necessarily motivated reasoners but are lazy and don’t reason at all (Pennycook & Rand, 2019). The interaction of pre-existing beliefs, representing motivated reasoning, with fluency in this study shows that both can coexist. The linkage here is that information that has greater plausibility has been shown to overcome motivated reasoning (Pennycook & Rand, 2019). More plausible information is also more accurate and believable information, which is a characteristic of moving images (Dan, 2018a; Paivio, 1979; Schwarz, 2004). Perhaps the ease of processing of the video format may help people engage in analytical thinking. Another explanation could be that the greater accuracy, believability, and plausibility of information in video format operates heuristically to update beliefs. We do not find these to be inconsistent, as people use both analytical and heuristic thinking. We propose that future research should use analytical thinking as an effect moderator and that people’s reticence to invest cognitive resources could be bypassed—and the pursuit of accuracy goals (Kunda, 1990) facilitated—when a video format is used.

Our findings are consistent with the evidence provided by Young et al. (2018), but we go beyond that. In addition to testing fluency as the causal mechanism, to the best of our knowledge, this is the first study to show the beneficial effects of video fact checks on correcting misperceptions on multiple polarized topics, thus adding to the validity of the findings. This has vast relevance as views on more than one issue divide societies and are exceptionally difficult to debunk (Chan & Albarracín, 2023; see Dan & Dixon, 2021). The four different topics of misinformation give us more confidence that this effect is not unique to a particular issue. This contributes to our theoretical understanding of the role modality plays in facilitating belief correction.

Limitations

As with any research, this study has limitations. One concern might be the inability to generalize our findings outside of Germany. We recommend that future research test whether our findings hold true elsewhere. Nevertheless, this study extends knowledge of fact checks beyond U.S. borders, where most of the research is conducted (Chan & Albarracín, 2023; Walter et al., 2020). We relied on a large and diverse sample, and our stimuli dealt with four different topics, aiming to produce generalizable knowledge.

Second, we point out that the mediator in this study is correlational, not causal. Future work must manipulate processing fluency to establish a cause-and-effect relationship. This does not invalidate our study, however, as mediators are psychological processes that must be measured to be identified and can then be manipulated in a second study (Wu & Zumbo, 2008). While our measure of processing fluency considered the ongoing debate on how to best measure this concept, alternative measures are available (see Graf et al., 2018). Future studies attempting to replicate our findings could use more unobtrusive measures that go beyond self-reports. As self-reports have their limits when it comes to measuring mental processes, future studies may reveal even stronger mediation effects than could be shown here. However, we want to point out that we used a measure shown to be reliable and valid (Graf et al., 2018).

Third, future work is advised to delve deeper into the differences between people with confident false beliefs and people with uncertain beliefs. Indeed, previous research suggests that these two groups can be very different (e.g., Li & Wagner, 2020). In this study, the interaction was driven by the effect in those holding uncertain beliefs and those holding false beliefs on the one hand, versus those holding correct beliefs on the other hand.

Finally, studying fact checks that involve controversial claims can be challenging. While we attempted to select issues that were provable as true or false, one of the topics of our fact checks involves a claim on which even scientific studies reach different conclusions, leading fact checks to come to different conclusions as well (Burnett, 2021; Valverde, 2021). The verdict that transgender women athletes are stronger than cisgender women athletes may be oversimplified in the fact check, as across-the-board statements are difficult to make. Despite this limitation, our analyses indicated story topic did not interact with format or pre-existing beliefs, and robustness tests with the transgender women item deleted reached the same conclusions, giving us more confidence that this did not affect the results.

Conclusion

This study contributes the theoretical statement that audiovisuals that are realistic, engage both processing channels by presenting redundant information in both visual and verbal format, and are easy to cognitively process will better correct misinformation than text (Dan, 2021). Video fact checks that are a direct depiction of reality, with a narrator speaking the information, thus eliminating the need for reading, will be processed faster than those requiring more effort. Because they require less effort to process than written fact checks, people should judge them to be more accurate and truthful, leading them to be more likely to correct pre-existing misperceptions about controversial claims. Furthermore, this process can interact with and affect motivated reasoning, overcoming pre-existing biases. In this study, people whose pre-existing beliefs aligned with the incorrect claim not only corrected their beliefs but benefited most. This insight is also relevant to other situations and contexts where directional motivated reasoning may come into play—besides the fight against misinformation with fact checks. In those other situations and contexts, overcoming directional motivated reasoning may also be achieved by using multimodal messages that are easy to process.

This study has added to visual communication theories such as DCT by integrating insights from motivated reasoning to test whether moving images that are easily processed can override the tendency to engage in directional reasoning. We find that they can and theorize that the fluency of video fact checks may speed up this process because fluent information is perceived as accurate and better liked. For the purpose of correcting misbeliefs, video holds possibilities beyond rational argument by calling upon emotions and subconscious processes.

Scholarly investigations of misinformation and ways to combat it often end on a rather pessimistic note. Not this study. Our findings provide a considerably more optimistic outlook. Having found strong indication of video fact checks’ ability to correct beliefs, we believe that these can be an important pillar in the fight against misinformation. Thus, while misinformation is thriving online, the democratic ideal of an informed citizenry is not necessarily jeopardized as long as high-quality journalistic fact checks that employ moving images are widely available and people attend to them. Our study indicates that journalistic fact checks can help citizens find common ground on basic facts, contributing to the functioning of democracy.

Supplemental Material

Supplemental material, sj-docx-1-crx-10.1177_00936502241287870 for “I’ll Change My Beliefs When I See It”: Video Fact Checks Outperform Text Fact Checks in Correcting Misperceptions Among Those Holding False or Uncertain Pre-Existing Beliefs by Viorela Dan and Renita Coleman in Communication Research

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was supported by the LMU Munich.

Supplemental Material

Supplemental material for this article is available online.
