Abstract

This paper provides a philosophical discussion of moderators and person-specific differences (referred to as “hedges”) in research on media effects. It is shown that while, historically, the reliance on hedges has been regarded as a sign of theoretical sophistication (the “hedges-as-progress-perspective”), it has left the field in a maze of epistemological problems. The paper therefore urges a reinterpretation of the role of hedges as a sign of theoretical resilience instead of sophistication (the “hedges-as-protection-perspective”). This shift is shown to have substantive implications for how one describes and evaluates media effects research: not just its history, but also its current state and its ambitions going into the future.

Research on the effects of mediated communication has had a long and contrived history. Over roughly the last century, social scientists have been debating the implications of media use, often diverging in opinion about foundational issues such as the meaning and testability of media effects (e.g., Potter, 2011), the distinction between successful and unsuccessful media effects theories (e.g., Cacciatore et al., 2016; Potter, 2014), the scientific progress achieved by the domain (e.g., A. Lang, 2013; Perloff, 2013), and the generalization of scientific findings to everyday life (e.g., Ferguson, 2015). Despite these controversies, one key message appears to have been internalized by scholars in the field: their object of study is complicated, and effects should, to the extent that they occur, be highly volatile phenomena, contingent on context and subject (e.g., Rains et al., 2018).

The literature has embraced this mantra wholeheartedly, with many theoretical frameworks and models focusing on the hedges of media effects—that is, the conditions demarcating when and why effects may or may not occur (Roberts, 2004). A prominent example of this is the Differential Susceptibility to Media Effects Model (DSMM). The DSMM holds as its primary postulate that “media effects are conditional” (Proposition 1; p. 226), meaning that effects will occur only by the grace of an applicable set of dispositional, developmental, and social hedges (Valkenburg & Peter, 2013a, 2013b). The implication of this widely cited proposition seems obvious: if we aim for a proper scientific understanding of media effects, we require a proper understanding of their hedges (e.g., Arendt & Matthes, 2017; Slater & Gleason, 2012; Valkenburg & Peter, 2013a, 2013b). This perspective is widely shared, and it has recently evolved into a rather extreme stance: research on so-called individual-level susceptibility has been advocating a redefinition of media effects as “intra-individual changes [due to] media use that may differ from person to person” (Pouwels et al., 2021, p. 1; emphasis added). Such a definition effectively pushes the focus on hedges into one of the highest possible levels of detail: effects are different for all people, which means that research should aim to carve out effects at the level of particular individuals.

The preoccupation with hedges in media effects research has generally been portrayed as a good thing, that is, as a sign that the field has been making theoretical progress (e.g., Perloff, 2013). In what follows, I will refer to this interpretation as the hedges-as-progress-perspective. The hedges-as-progress-perspective is intuitive: social reality is complex, and models containing more hedges seem capable of reflecting such complexity with more nuance. However, as I will argue, this optimism is largely unjustified (see also Healy, 2017, for a more general criticism of nuance in sociological theorizing). When considering the history of media effects research, it appears that the reliance on hedges has never actually been a marker of theoretical progress per se. Rather, it seems that the search for hedges has been a continuous presence, one that, unfortunately, has also caused severe epistemological tensions in the field. The focus on hedges has been clashing head-on with the scientific values held dear by quantitative scientists, and it has muddled the notion of causality that lies at the heart of research on “effects.” Metaphorically speaking, one could say that by allowing hedges all around, media effects research has gradually trapped itself in a theoretical maze.

To contextualize this problem, I will introduce the hedges-as-protection-perspective on media effects. The hedges-as-protection-perspective interprets the focus on hedges not as a logical outcome of disciplinary progress but, rather, as a strategy to ensure the field’s survival (which may then, perhaps, lead to progress, but does not guarantee it). This conceptual shift is not just a play on words; as I try to show throughout the paper, it has substantive implications for how one describes and evaluates media effects research: not just its history, but also its current state and its ambitions going into the future.

Before proceeding with the main argument, it seems useful to offer some clarifications regarding the paper’s intended scope and terminology. The goal of the paper is to contextualize the epistemological role of hedges in media effects research, which means that the scope throughout will remain rather high-level and philosophical. While this makes the paper complementary to existing methodological critiques regarding the use of hedges (e.g., Johannes et al., 2021; Rohrer et al., 2022; Vuorre et al., 2022), some readers may wonder how such a high-level argument translates into particular studies or specific strands of effects research. This question is reasonable, and I believe it merits focused discussions on a case-by-case basis. However, in order to even enable such focused discussions it seems necessary to first develop an overarching problem statement regarding the use of hedges in general. My main hope for this paper is that it provides exactly that, or at least that it underlines why reflections about the use of hedges in the field are needed.

Another aspect I wish to clarify has to do with the paper’s terminology. In particular, the use of the term hedges may sound unnecessarily dismissive, in the sense of implying that researchers introducing moderators or individual-level effects are “hedging their bets” and “try to avoid giving an answer” (Cambridge, n.d.). While I believe this is a valid way of characterizing the (intentionally) degenerative use of hedges, it is not the only way in which “hedge” can be defined. In this paper, I employ a value-neutral definition of “hedge” as “a means of protection, control, or limitation” (Cambridge, n.d.). This encompasses the idea that hedges serve a protective function (which is also why the term “hedge” is of conceptual value here compared to just using “condition” or “moderator”), but it does not imply that implementing hedges is inherently bad. To make it very clear, this is not the message I wish to convey. Rather, the big picture argument is that while hedges have often been valorized as evidence of disciplinary progress, a focus on hedges wholesale may actually be inhibiting progress.

Hedges as Markers of Sophistication: A History of the Hedges-as-Progress-Perspective

The idea that hedges represent theoretical progress is carved deeply into our historical understanding of effects research. This becomes clear when we consider how the field’s internal history is typically described. The most common narrative, often referred to as the received history, argues that media effects research has gone through several paradigmatic stages (McQuail, 2014). All of these paradigms are said to have made fundamental changes to the assumptions and claims of prior paradigms, and all of those changes were, presumably, aimed at unraveling the layers of complexity surrounding media effects.

The received history generally pinpoints the start of the first paradigm somewhere around the interbellum era. At the time, “[t]he media were feared as agents of social control that could be used by demagogues to shape the behavior of the masses” (Lowery & DeFleur, 1983, p. 366), which led scientists to search for strong, direct, and across-the-board effects of mass media on people’s behavior and cognitions. Cantril’s (1940) Invasion from Mars Study, the Motion Picture and Youth (Payne Fund) Studies (Charters, 1933), and Hovland et al.’s (1953) work on persuasion are often cited as archetypes of this era, which coincided with the heyday of positivism in the social sciences (Alvesson & Sköldberg, 2009).

It did not take long for these early studies to be criticized. The main source of contention simply was that research findings were unconvincing. To the extent that media effects were ever found, it seemed that they were extremely volatile and depended on a host of other factors. As Berelson (1948) summarized, “[s]ome kinds of communication on some kinds of issues, brought to the attention of some kinds of people under some kinds of conditions, have some kinds of effects” (p. 172). This realization segued media effects research into what the received history calls the second paradigm—the paradigm of minimal media effects. Not coincidentally, the years of the second paradigm were also those in which the interactions between person and environment—and the resulting construction of social reality—became the center point of social-scientific theorizing (Alvesson & Sköldberg, 2009; Sherry, 2004). This did not result in one commonly shared stance on media effects research, however. In fact, the second paradigm appears to have spawned two radically different conclusions about the field’s viability.

The first conclusion sounded highly pessimistic, and it entailed a flat-out rejection of media effects research and its positivistic foundations. These critics, often from strong constructivist schools, argued that effects scholars had “unsociological intellectual coordinates” (Pooley & Katz, 2008, p. 796). Gitlin (1978), for instance, predicted that traditional effects research was doomed to fail because it did not account for the very building blocks of social reality, that is, the individual and their social context:

It has looked to “effects” of broadcast programming in a specifically behaviorist fashion, defining “effects” so narrowly, microscopically, and directly as to make it very likely that survey studies could show only slight effects at most. [. . .] It has tended to seek “hard data,” often enough with results so mixed as to satisfy anyone and no one, when it might have more fruitfully sought hard questions. [. . .] Is it any wonder, then, that thirty years of methodical research on “effects” of mass media have produced little theory and few coherent findings? The main result, in marvelous paradox, is the beginning of the decomposition of the going paradigm itself (p. 206).

Several voices in the field sympathized with Gitlin’s (1978) conclusion that “the media are not very important in the formation of public opinion” (p. 207). As Katz, Haas, and Gurevitch (1973) argued, it seemed that “people bend the media to their needs more readily than the media overpower them” (pp. 164–165). This shift in perspective from effect to use gained widespread traction and came to form the foundation of theorizing on Uses and Gratifications (Katz, Blumler, & Gurevitch, 1973) and selective exposure (Klapper, 1960).

The second conclusion drawn during the minimal effects era sounded more optimistic. This perspective discerned a “Brink of Hope” (Klapper, 1957, p. 453) for effects studies if they were to identify the “nexus of mediating [i.e., moderating] influences” (p. 457) underlying media effects. Clarke and Kline (1974), for example, described the existence of moderators as “antidotes to [the] no-media-effects theme” (p. 225), as they brought about “curiosity [. . .] to reexamine null results about media effects” (p. 224). Effectively, this line of reasoning tried to redirect the attention of media effects research from effects per se to the hedges of those effects. This shift in theorizing was far from exclusive to the field of media effects—or even communication science, for that matter. In psychology, classic debates around the context-dependent properties of social-psychological phenomena occurred around the same time (Thorngate, 1976), as did Bandura’s (1977) gradual extension of social learning theory into social-cognitive theory (which was heavily influenced by social constructivist thought: Bandura, 2001).

The flirtation with social constructivism during the second paradigm, and the resulting focus on hedges, appears to have played a pivotal role in shaping the historical trajectory of effects research: scholars of the so-called third paradigm also dismissed the pessimistic reading of the second paradigm and preferred to theorize about “Not-So-Minimal-Consequences” of media use, delineated by hedges (Iyengar et al., 1982, p. 848). Theoretical frameworks developed during this era were plentiful (e.g., agenda-setting, cultivation, framing, spiral of silence), but all of them had roots in constructivist and critical theories (Sherry, 2004). They also all carried a common theme: media effects are “long-term, indirect, and accumulative” (Lowery & DeFleur, 1983, p. 372). Media effects exist, but they will only be identified if studies consider time-dependent individual and societal hedges.

The focus on hedges in media effects studies appears to have become even more pronounced since the turn of the 21st century. The introduction of new media technologies brought theories about media selection even more to the forefront, and it also ramped up theorizing about the compartmentalization, and even individualization, of effects. McQuail (2014) referred to this fourth, and latest, stage of effects research as the paradigm of negotiated media effects. He recognized that this paradigm effectively transitioned effects research into the realms of social constructivism: it embraces the circularity of selection and effects, and it underlines the continuous, dynamic interactions between people and their media-laden environment (see also Sherry, 2004).

Comparable to what happened during the second paradigm, the message of the fourth paradigm has been interpreted in two radically different ways. McQuail (2014) himself interpreted it as meaning that effects studies would require “a shift from quantitative and behaviorist methods toward qualitative, deeper, and ethnographic methods.” The dominant interpretation has been very much the opposite. That is to say, quantitative effects studies have not been abandoned in favor of qualitative designs; quite the reverse, actually. Over the past decade, the existence of hedges has been mentioned time and time again as an argument for more, rather than less, quantitative “research into conditional, indirect, transactional, and self-generated media effects” (Valkenburg & Peter, 2013b, p. 208). Once again, it seems, hedges are being put forward as “antidotes to [the] no-media-effects theme” (Clarke & Kline, 1974, p. 225), paving the way to progress. According to Neuman (2018), for instance, “our paradigm would be strengthened if we recognized that media effects are neither characteristically strong nor are they characteristically minimal: they are characteristically highly variable” (p. 370). Kross et al. (2021) similarly argued that the “strategy now should be to study the different psychological processes that explain how and why social media impact well-being differently, and why these effects may vary for different people in different cultures” (p. 62). Likewise, Rodriguez et al. (2021) stated that “we must examine the differential impact that social media have on our well-being, and how their effects may differ depending on our demographic characteristics, personality traits, and cultural backgrounds” (p. 131). Such calls for hedges have been translated into the published literature: a content analysis by Holbert et al. (2020) estimated that roughly one-fourth of effects studies use moderation analyses to test for contingencies, often without a clear theoretical argument.

In general, the concern with hedges in effects research has been interpreted as a positive sign, namely as an indication that the field has made theoretical progress. As Perloff (2013) put it, “scientific progress can be assessed by examining sophistication of theories, and our theories have become more complex and conceptually nuanced over the past 6 decades” (p. 320). Hedges, according to this narrative, represent “theoretical refinements” (Neuman & Guggenheim, 2011, p. 177) that paint a more realistic picture of the complex social situation in which media effects occur (see also Sherry, 2004). This positive attitude is also voiced by editorial boards of communication journals, who say they consider the modeling of moderators an explicit sign of research quality (Goyanes, 2020).

Given their shared optimism, it is not surprising that scholars studying media effects seem eager to continue exploring hedges in greater depth. A notable evolution in this regard is research on so-called person-specific or individual-level susceptibility (Beyens et al., 2020, 2024; Valkenburg, 2022; Valkenburg et al., 2021a; Valkenburg et al., 2022). According to the individual susceptibility perspective, media effects should be defined as “intra-individual changes due to (social) media use” (Valkenburg et al., 2021b, p. 4). This definition has a fundamental implication: if the occurrence, size, and sign of media effects vary from person to person, traditional group-level statistics cannot properly represent media effects. This also means that if media effects are to be investigated, they need to be assessed at the level of the person. Such person-specific analyses are said to hold great promise for the field, not least because they may shed light on the conflicting findings that have historically characterized media effects research:

[a] person-specific media effects paradigm may not only help academics resolve controversies between optimistic and pessimistic interpretations of aggregate-level effect sizes, but it may also help us understand when, why, and for whom [social media use] can lead to positive or negative effects on mental health (Valkenburg et al., 2022, p. 66).

In light of these promises it may come as no surprise that effects researchers have been quick to embrace individual susceptibility, citing it as an “important and a logical extension of prior work” (Masur et al., 2022, p. 192; see also Parry et al., 2022).
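The statistical intuition behind the individual susceptibility argument can be illustrated with a toy calculation. The sketch below is not taken from any of the cited studies: the function name, the sample of 1,000 hypothetical persons, and the effect sizes of +0.3 and -0.3 are assumptions chosen purely for illustration. It constructs a case in which every person has a nonzero person-specific effect, yet the group-level average is zero.

```python
from statistics import mean


def hypothetical_person_effects(n_persons=1000, effect=0.3):
    """Build illustrative person-specific effects (assumed values, not data).

    Half of the persons get +effect, the other half get -effect.
    """
    n_positive = n_persons // 2
    n_negative = n_persons - n_positive
    return [effect] * n_positive + [-effect] * n_negative


effects = hypothetical_person_effects()

# Aggregate-level summary: the average effect across all persons.
group_level_effect = mean(effects)

# Person-level summaries: how many persons show any effect, and how large.
share_with_effect = sum(1 for e in effects if e != 0) / len(effects)
mean_absolute_effect = mean(abs(e) for e in effects)

print(f"group-level effect:   {group_level_effect:.2f}")
print(f"share with an effect: {share_with_effect:.2f}")
print(f"mean absolute effect: {mean_absolute_effect:.2f}")
```

Under these assumed values, the opposing effects cancel out in the aggregate. The same zero average would, however, also be produced by a sample in which nobody is affected at all, so the group-level statistic alone cannot distinguish between the two scenarios.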

Interestingly, it is not just effects researchers themselves who voice their optimism toward the ever-increasing focus on hedges. Scholars from critical and constructivist epistemologies that were, historically, averse to the positivistic notion of “effect” also find value in its increased contextualization. One example can be found in Ramasubramanian and Banjo’s (2020) critical media effects theory, which, as the name implies, has roots in critical scholarship. In the authors’ words, critical media effects theory “urges media effects scholars to interrogate claims of the universal applicability, generalizability, and relevance of all media effects theories, concepts, and model [. . .] across subpopulations and cultural contexts to better identify their boundaries, generalizability, robustness, applicability, and falsifiability” (p. 391). In essence, this argument does not deviate all that much from the arguments in the effects literature: media effects exist, but we can only understand them if we map out the set of relevant personal, cultural, and social hedges. Thus, it seems that the search for hedges may actually provide a portal between the historically conflicting qualitative-idiographic (constructionist/critical) and quantitative-nomothetic (post-positivist) traditions. This fact has not gone unnoticed among effects researchers either. As Valkenburg et al. (2022) contend, one of the main advantages of the individual-level susceptibility perspective is that it may do exactly that:

There used to be a time that researchers had “to make a choice” between a nomothetic or an idiographic method. [. . .] Luckily, we can now creatively combine the strengths of idiographic designs with those of nomothetic designs (p. 10).

In sum, it seems that the history of media effects research has been marked by a recurring sense of optimism about the modeling of hedges. It is considered to have provided the theoretical nuance and sophistication that is required to tackle an inherently complicated phenomenon, and it has even been interpreted as a way to integrate media research from historically conflicting epistemologies. In what follows, I will refer to this dominant viewpoint as the hedges-as-progress-perspective on media effects.

Problems With the Hedges-as-Progress-Perspective

Despite the optimism that the hedges-as-progress-perspective has generated, scholars have frequently questioned whether media effects research has made any historical progress at all. Already in the 1980s, Lowery and DeFleur (1983) criticized the field for providing an “almost hopeless jungle of contradictory claims about how it all works” (p. 359). A similar sentiment was voiced roughly a decade later by Katz (1992), who concluded that media effects researchers needed “to perform some crucial experiments and [. . .] agree on appropriate research methods rather than just storing a treasury of contradictory bibliographical references in [their] memory banks” (p. 85, as cited in Livingstone, 2006, p. 246). Two decades after this, A. Lang (2013) still observed “remarkably little progress” in the field: “[w]e have [only] identified a number of small effects [. . .] and we have demonstrated that they occur over and over and over again, in various situations and with various groups” (p. 14). In A. Lang’s (2013, p. 10) view, this lack of progress meant that media effects research was essentially a “paradigm in crisis” that needed to be abandoned.

Each time, these conclusions may have sounded harsh, but perhaps they were never entirely unfounded. When we take a historical look at the focus on hedges in modern media effects frameworks, it should be clear that, fundamentally, their arguments do not differ all that much from those made decades ago. As we have seen, the minimal effects scholars had already put the hedges of media effects at the forefront of scientific debate, and even during the formative years of the first paradigm some scholars had underlined the contingent nature of media effects. Charters’s (1933) summary of May and Shuttleworth’s Payne Fund Study is exemplary of this:

That the movies exert an influence there can be no doubt. But it is our opinion that this influence is specific for a given child and a given movie. The same picture may influence different children in distinctly opposite directions. Thus in a general survey [. . .] the net effect appears small. (p. 16).

So, at least to some extent, it seems that the field has been invoking the same guiding principle ever since its inception: media effects exist, but they are hedged.

Of course, it is not necessarily a problem that hedges have been of continuous interest in effects research. It does, however, have two critical implications. First, it shows that we might want to use the terms “paradigm” and “paradigm shift” more sparingly when we characterize theoretical proposals revolving around hedges, such as differential and individual-level susceptibility (e.g., Piotrowski & Valkenburg, 2015; Valkenburg et al., 2022). Characterizing these as distinctive “paradigms” is common and may seem to add philosophical depth to their arguments, but it does not really fit the bill. In Kuhn’s (1970) original conceptualization, paradigm shifts were the result of full-fledged revolutions from one scientific paradigm to another. That is, paradigms were considered to be incommensurable (incompatible and incomparable) in their core methods and assumptions, hence the need for a revolution. This does not seem to fit media effects research at all; rather, the preoccupation with hedges has provided a “considerable continuity” throughout the history of the field (G. E. Lang & Lang, 1981, p. 662). It therefore seems much more fitting to characterize all proposals about hedges as natural outgrowths of the field’s history rather than as new paradigms in their own right, a point to which I will return at length below.

A second implication of characterizing hedges as a historical continuity is that it brings up a critical question: if media effects studies have always revolved around the identification of hedges, then can we actually interpret the increased focus on hedges as progress (as the hedges-as-progress-perspective suggests)? The answer to this, I argue, is “no.” This may sound counterintuitive. Social reality, after all, is obviously complex, so it seems that effects could not possibly exist without hosts of interrelations and interactions with other variables (see also Healy, 2017). But this is not the point. The main problem with hedges is not ontological: of course effects are heterogeneous and dynamic. The problem is that using hedges as a primary focus of research puts the field on shoddy epistemological grounds. To show why this is the case, it is instructive to note that media effects research is traditionally said to be (post-)positivist in nature, the point being that it is, much like the natural sciences, concerned with quantitative analyses of (causal) relationships between variables (e.g., Krcmar et al., 2016; Ramasubramanian & Banjo, 2020). Unsurprisingly, then, the epistemic values said to be lauded by communication scientists also coincide with those valued by the natural sciences, and they are mainly aimed at providing intelligible tests of quantitative hypotheses.

To illustrate, let us consider DeAndrea and Holbert’s (2017) revised version of Chaffee and Berger’s (1987) criteria for theory evaluation in communication science. Table 1 organizes these criteria according to whether they have, in fact, been supported or weakened by the field’s focus on hedges. A simple head count already suggests a 4-to-3 result (meaning that most criteria have been weakened), but that is hardly the most important observation. It is more important to point out that (1) there are severe tensions between the different criteria for theory evaluation, and (2) these tensions have come at the expense of the criteria that are, arguably, most important for an aspiring quantitative science.

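Table 1. An Overview of DeAndrea and Holbert’s (2017) Criteria for Theory Evaluation, Categorized According to Whether They Have Been Strengthened or Weakened by the Reliance on Hedges in Media Effects Research.

Strengthened: heuristic provocativeness, organizing power, explanatory power.
Weakened: predictive power, falsifiability, parsimony, internal consistency.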

To flesh out this argument, let us first consider the values that have been respected, or even supported, by the field’s focus on hedges: heuristic provocativeness, organizing power, and explanatory power. Heuristic provocativeness refers to the extent to which theories are able to stimulate new research ideas. It is relatively obvious that the attention to hedges provides much more theoretical leeway, in the sense that it allows researchers to think beyond pre-existing conceptual boundaries of theories. The longstanding attempts at using moderators to extend existing media effects frameworks are exemplary of this: cultivation, priming, agenda-setting, and framing have all been augmented with moderating variables (mostly from psychological processing theories) to generate new sets of hypotheses (e.g., Cacciatore et al., 2016; Shrum et al., 2011). The plethora of so-called conditional process models in the literature also provides a case in point (e.g., Holbert et al., 2020; Rohrer et al., 2022). In essence, all kinds of media effects can be reinvestigated by proposing new sets of hedges, which goes to show that the search for hedges is, indeed, heuristically provocative.

Arguably, the reliance on hedges has also provided the literature with more organizing power. This can be seen in two ways. First, when we put hedges at the forefront of research on media effects, all studies in the field essentially operate under the same umbrella, and all of them come to share a common goal: to map out the set of relevant hedges of media effects. This is made especially explicit in the DSMM and the individual-level susceptibility perspectives, both of which claim to offer a general framework for research on hedged media effects. Second, as we have seen earlier, the focus on hedges also reorganizes the arguments of traditionally “positivistic” effects scholars in such a way that they become aligned with the arguments of their “constructivist” and “critical” colleagues (Pooley & Katz, 2008; Ramasubramanian & Banjo, 2020; Valkenburg et al., 2022). This, indeed, appears to suggest a fundamental increase in organizing power.

The last way in which the reliance on hedges has added to research on media effects is through its increase of explanatory power. Basically, hedges allow us to explain any pattern of observations in terms of media effects: if we find effects, we may say that we have corroborated the effects hypothesis; if we do not find effects, we may attribute it to a lack of understanding of the appropriate hedges (e.g., Valkenburg, 2015; Valkenburg et al., 2022). Hedges thus provide a philosophical device to reinterpret nearly all research findings in terms of media effects, and in doing so they provide the field with near-endless explanatory power.

Critical readers may already realize that the latter aspect also jeopardizes the field’s credibility: if all findings can be explained in terms of hedges of media effects, this means that there is nothing left to predict about such effects. After all, in order to make a meaningful prediction, some observations would need to be admissible as counterevidence; otherwise, any prediction will be a priori correct, which leaves us with nothing to test or, in other words, no predictive power. The lack of predictive power is highly problematic, because it renders much of empirical media effects research into a tautology: effects scholars can only ever predict and find what they already assumed, namely that media effects occur only for the “right” sets of people under the “right” sets of circumstances (cf. Berelson, 1948).

This struggle with predictive power boils over into problems with another criterion for theory evaluation, falsifiability (Berger et al., 2010; Krcmar et al., 2016; Valkenburg & Oliver, 2020). Popper (1963), who coined the term, saw falsifiability as providing a rational distinction between science and pseudoscience. Pseudoscience only ever tries to establish the truth of hypotheses (by focusing on “confirmatory evidence”), whereas science actively pursues the possibility that hypotheses are wrong (focusing on “falsifying counterevidence”). Hence, according to Popper (1963), all scientific hypotheses should be falsifiable by a given pattern of findings. Unfortunately, the reliance on hedges in media effects research has made attempts at falsification largely impossible: any set of findings can be reinterpreted in terms of moderators or individual variability, and even absence of evidence corroborates the proposition that effects are hedged. This is not just a hypothetical problem. In fact, the existence of hedges has already been referenced to dismiss the limited evidence for media effects in the published literature (see also Valkenburg, 2015): “[b]y focusing on group-level moderator effects, meta-analyses (and the studies on which they are based) invariably gloss over more subtle individual differences between people” (Valkenburg et al., 2022, p. 61). Similar lines of reasoning are applied to motivate the importance of research into individual susceptibility:

What has often been ignored in such debates is that the effect sizes are just what they are: statistics observed at the aggregate level. Such statistics are typically derived from heterogeneous samples of adolescents who may differ greatly in their susceptibilities to the effects of environmental influences in general [. . .] and media influences in particular. (Valkenburg et al., 2022, p. 66)

The issue with this argument is that it is, in fact, a slippery slope: it implies that measures of statistical evidence should be taken as evidence only to the extent that they reflect media effects. If media effects are not found, or if they are only unconvincingly found, this is taken to show merely that media effects manifest themselves only when the right sets of hedges are taken into consideration. If we are to take seriously that “theoretical progress can only occur if certain claims of theories that do not hold are formally falsified” (Valkenburg & Oliver, 2020, p. 28), this state of affairs seems highly problematic. Indeed, it leaves little to be falsified at all.

Aside from creating empirical problems in terms of predictive value and falsifiability, the reliance on hedges in media effects research has also generated issues at a theoretical level. For one, identifying media effects at more and more specific levels makes arguments about media effects gradually more complex (and, perhaps, intuitively more “valid”) but less parsimonious. The principle of parsimony, or Occam’s Razor, holds that simple explanations are to be preferred over complex explanations, at least if the latter are not clearly superior. As we have just argued, the mere addition of hedges has not added much in terms of predictive power (it has, arguably, taken away from it), but it has made explanations of media effects more complicated by introducing hosts of hedges in the form of moderators and individual-level variability. This, indeed, clashes head-on with the requirement of parsimony.

Arguably, the most fundamental theoretical problems caused by hedges are to be identified at the level of internal consistency. In essence, internal consistency means that the propositions of a theory should not logically conflict with one another. It is not hard to show that the addition of hedges causes considerable problems in this regard. First of all, the integration of hedges into media effects frameworks is known to have complicated the meaning of the effects those frameworks were initially trying to explain. This problem has surfaced in discussions surrounding the fuzzy definitions of framing (Cacciatore et al., 2016), priming (Roskos-Ewoldsen et al., 2009), cultivation (Potter, 2014), and persuasion effects (O’Keefe, 2016). Under pressure of the field’s search for hedges, all of these concepts had their definitions stretched and amended over the years and, as a result, have come to overlap very heavily with one another (see also Coenen & Van Den Bulck, 2018). This problem is well known, but the problem and its solutions have often been identified at the level of specific frameworks. If some particular type of media effect is considered to have become unclear or ambiguous, the conclusion is that the field needs to adopt a purified definition (e.g., Cacciatore et al., 2016) or abandon the concept altogether (Ewoldsen, 2017). However, based on the argument delivered in this paper, it might be more useful to trace these problems back to a much more general dynamic, namely the disciplinary preoccupation with modeling hedges.

A second way in which hedges hamper internal consistency is perhaps even more fundamental: at some point, the rampant modeling of hedges will muddle the notion of causality which underpins the concept of “media effect”. The turn toward “individual” susceptibility, in particular, opens the gates to some deep philosophical issues: implicitly, a definition of “media effect” as a person-specific phenomenon shifts the underlying definition of causality from what philosophers call the type-level to the token-level (for a detailed discussion, see Pearl, 2009, pp. 309–330). Type-level causal claims are those that communication scientists are generally familiar with, and they are made in reference to types of events or entities in a given population. An example of such a statement could be that “Instagram use causes depressive feelings among adolescents,” which resembles a traditional hypothesis in media effects research. The individual-level susceptibility perspective, in contrast, requires a shift of claims about media effects from the type- to the token-level. Claims at the token-level do not operate at the level of types of events or entities but at the level of particular events and entities. An example of a token-level claim about media effects could be that “Sophie’s Instagram use caused her to feel depressed.” This sleight of hand is not trivial. In fact, the existence of causation at the token-level (“singular causation”) and its relation to type-level causation (“general causation”) has long been a topic of philosophical debate (Danks, 2017; Hausman, 2005; Pearl, 2009). While it has been accepted that singular causation may exist without a general cause (Sophie’s depression can, in theory, be caused by her Instagram use even if nobody else has ever been influenced by their Instagram use), this does not mean that there is an easy way to establish scientific knowledge about the existence of singular causes without referring to general, type-level principles.

To illustrate, let us consider how one would go about establishing the token-level claim that Sophie’s depressive feelings are caused by her Instagram use. Suppose that we use an intensive longitudinal n-of-1 design and find that her depression scores correlate very strongly with her social media use. Does this corroborate the claim that Instagram use caused her to feel depressed? Maybe, maybe not. The answer to this question depends on the following counterfactual: had Sophie used Instagram to a lesser extent than she did, would she have been less depressed (Lewis, 1973)? If so, her Instagram use can be identified as a cause; otherwise, it cannot. The obvious problem with evaluating this counterfactual is that it cannot be directly probed, because, despite the intensive data at our disposal, it exists in a world to which we have no access (the world in which Sophie did not use Instagram as much as she actually did). In an attempt to address this so-called “fundamental problem of causal inference,” one may turn to specialized methods, such as the potential outcomes framework (Holland, 1986) or Structural Causal Models (Pearl, 2009). In principle, these types of methods allow for an evaluation of token-level claims, but doing so will inevitably invoke type-level information along the way (Danks, 2017; Hausman, 2005; Pearl, 2009; Wright, 2011). In the methodological language of Structural Causal Models, for instance, the only discernible difference between a type- and token-level causal model is the amount of circumstantial, scenario-specific information added to the directed graph from causes to effects (Pearl, 2009, p. 256). A token-level causal graph is thus constructed by implementing all changes that are known to occur to the type-level graph once the token-level circumstances apply. Clearly, however, this means that the token-level judgment “requires knowledge of (at least) both the general causation that applies in cases of this type [i.e., the structural model], as well as details about this particular case [i.e., the token]” (Danks, 2017, p. 202). Type-level causal knowledge thus remains “an epistemically crucial backdrop to investigations into actual [token-level] causation” (Hausman, 2005, p. 51). Returning to our example of Sophie, establishing her Instagram use to be the cause of her depression first requires evidence that effects of Instagram use manifest themselves under the very circumstances and characteristics that define her. If such effects cannot occur, or are not known to occur, under said circumstances, then it remains dubious to interpret the particular findings for Sophie as evidence of a token-level causal effect.

The individual-level susceptibility perspective appears to ignore the distinction between type- and token-level causality, as becomes apparent when it interprets differences in person-specific coefficients as different manifestations of media effects. For example, in the study of Beyens et al. (2024) it is concluded that “45% of adolescents experienced no changes in well-being due to any of the three types of social media use, 28% only experienced declines in well-being, and 26% only experienced increases in well-being” (p. 1). The problem with this conclusion is subtle: in order to identify 28% (26%) of adolescents in the sample as experiencing negative (positive) effects, we need to know that the trajectories of those single individuals were, in fact, exemplars of a media effect; that is, for all individual cases we need to establish singular causation. But this creates a circularity: in order to establish singular causation in every particular case, we first need to invoke the situational applicability of a general cause as part of the evidence. The interpretation that 28% (26%) of individuals are negatively (positively) affected by media use thus hinges on the assumption that there are applicable type-level media effects, which means that claims of individual-level susceptibility assume the very phenomenon they are said to bypass. This is logically inconsistent, and it corroborates Johannes et al.’s (2021) plea to reinstate the focus of the field on type-level media effects (see also Vuorre et al., 2022).

In sum, I believe I have established that media effects research has suffered considerably from a (somewhat blind) emphasis on hedges: it has built its research efforts upon a potentially intractable foundation of contingencies, and this has led to a violation of its self-proclaimed criteria of scientific explanation. Metaphorically speaking, then, it seems that by building up hedges all around, the field risks trapping itself in an epistemological maze.

Of course, one could argue that this conclusion is tendentious, in the sense that it attributes more importance to the problems with some criteria and disregards the criteria that were notably strengthened by the reliance on hedges. Indeed, there is no definitive reason for preferring one set of criteria over another, but philosophers of science generally do accept that good scientific theories should, at the very least, be intelligible (De Regt, 2017). Intelligibility, here, refers to a theory’s (1) empirical adequacy and (2) logical consistency (De Regt, 2017, p. 38), which are exactly those criteria on which media effects research appears to fall short. As a result, it seems useful, and even necessary, to consider how the field could adopt a more constructive attitude toward hedges.

Hedges as Markers of Theoretical Resilience: The Hedges-as-Protection-Perspective

If we are to shift our attitude toward hedges, it seems important to first establish a shared understanding of how they may, in general, serve or impede scientific progress. As we have seen, the classical interpretation is to consider the modeling of hedges as progress in and of itself (see also Healy, 2017), but this perspective seems clearly misguided, if only because it has problematized, rather than improved, scientific theorizing on media effects. In what follows I will argue that the search for hedges may still be fruitful, but that it should be interpreted very differently; namely, as a sign of scientific resilience. That is, hedges have not been a vehicle for scientific progress in media effects research, but they have offered the field a strategic argument to continue despite a poor empirical success rate. A similar interpretation has been given by Vorderer et al. (2020, p. 11):

What [the evolution of media effects research] indicates, we believe, is this: In the background of the fact that [. . .] effect sizes found for many dependent measures have been rather small, many scholars have tried to overcome this disappointing situation by either differentiating, specifying, and subsequently integrating the various relations between causes and effects in media use even further.

I will refer to this interpretation as the hedges-as-protection-perspective. The difference between the hedges-as-protection-perspective and the hedges-as-progress-perspective is not just a matter of semantics. The difference is fundamental: for the hedges-as-progress-perspective, hedges are markers of theoretical sophistication and therefore inherently valuable; for the hedges-as-protection-perspective, hedges may be valuable and lead to progress, but they do not imply progress. The requirement, then, is simply to be more conservative: the addition of hedges should be considered progressive only if it leads to new findings and theoretical insights without violating the virtues of quantitative scientific explanation discussed earlier.

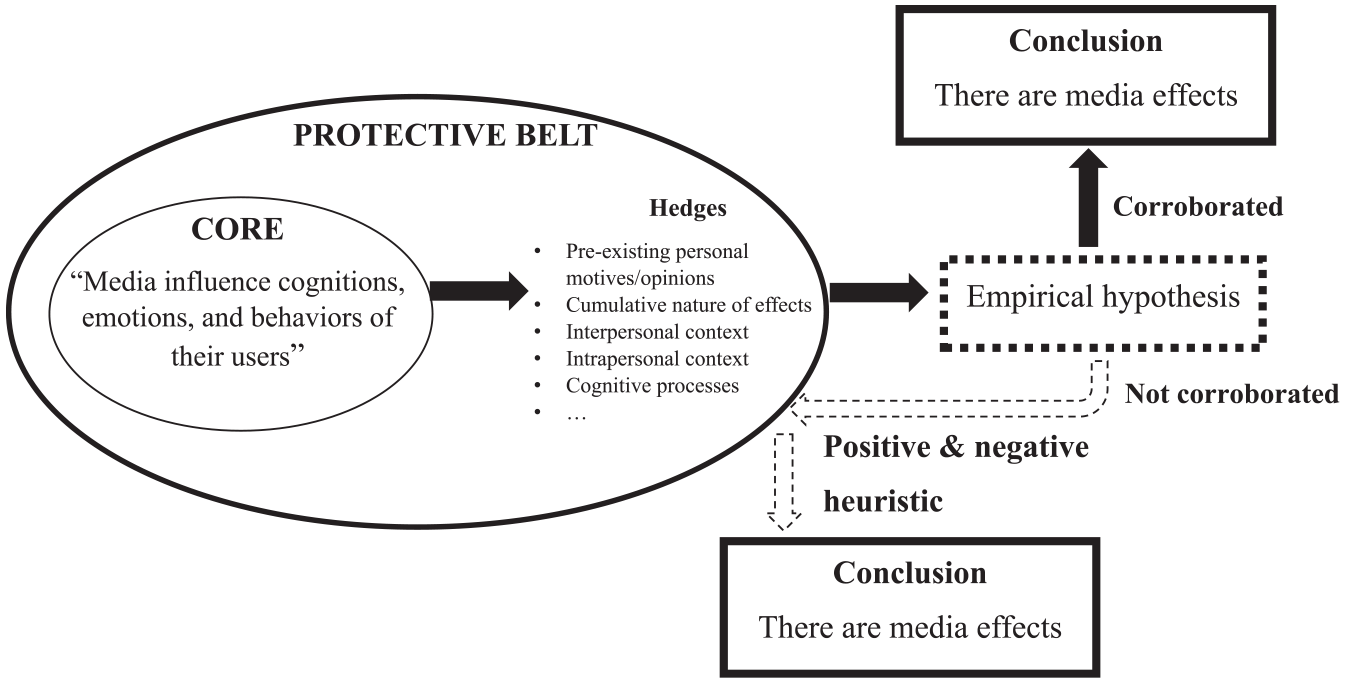

The shift in attitude required by the hedges-as-protection-perspective can be contextualized very well by Lakatos’ (1978a, 1978b, 1978c) work in the philosophy of science. According to Lakatos, all scientific fields (or, as he called them, research programmes) consist of two metaphorical layers. The first layer is called the hard core, and it is made up of the theoretical chain defining the essence of a discipline: its laws, commonly shared facts, and key theoretical postulates. In the research programme of media effects, the core postulate is quite obvious: “media have effects” (see Figure 1). We might not be sure when and why those effects occur, but we still agree that, somehow, there are effects. This proposition is the heart of the research programme. It simply is what defines the field, and without it media effects research could not possibly exist. This is also why, as Lakatos noted, it is rarely a good idea to get rid of a core hypothesis in its entirety. There are many possible reasons why research does not pan out (think of improper measurement, bad luck, or a hidden moderator), so refuting the core based on weak findings may throw out the baby with the bathwater. Lakatos therefore characterized the core of a scientific research programme as “unfalsifiable by fiat”, meaning that it should never be refuted at all. This effectively offers working scientists a decision heuristic (“do not falsify the core!”), which Lakatos referred to as the negative heuristic of a research programme.

Figure 1. A schematic representation of Lakatos’ argument in the context of media effects research.

Because of the negative heuristic, scientists who are confronted with unconvincing evidence for their hypotheses need to shift their attention away from the core toward “something else”. This “something else” is what Lakatos called the protective belt of the research programme. As Figure 1 shows, the protective belt can be envisioned as a second layer of (auxiliary) hypotheses enclosing the core, and it literally exists to protect the core against falsification: when research findings are unpalatable, scientists use protective auxiliaries to argue, for instance, that the research situation was not adequately controlled, that statistical power was too low to detect the media effect of interest, or that the study did not adequately take into account the hedges of effects. These lines of reasoning, shown on the right side of Figure 1, constitute what Lakatos called the positive heuristic of a research programme.

The positive heuristic offers “a problem-solving, anomaly-digesting technique” (Motterlini, 1999, p. 106) that allows scientists to defend their failing hypotheses, and it is what has made Lakatos’ account such a compelling description of scientific practice. It suffices to consider the standard discussion section of a research paper to see the positive heuristic at play but, more importantly, the positive heuristic can also be argued to underlie much of the historical reliance on hedges in media effects research. This realization is useful for two main reasons, one historical and one normative.

Historically, the positive heuristic offers a simple but useful narrative device to rationalize the trajectory of media effects research. From a Lakatosian viewpoint, the search for hedges of media effects has not been primarily useful because it made theories in the field more sophisticated or realistic (as the hedges-as-progress-perspective suggests); rather, it has been primarily useful because it has built up the protective belt and allowed the field to continue despite its dubious empirical successes. This reinterpretation is helpful, because it embeds our prior analysis and can even be used to extend other historical overviews of effects studies. To illustrate, consider Neuman and Guggenheim’s (2011) cumulative, six-stage evolution of the history of effects scholarship. All stages in Neuman and Guggenheim’s (2011) model consist of a historical cluster of media effects theories, with each cluster adding “an increasingly sophisticated set of social, cultural, and structural conditions and cognitive mechanisms that help explain when mass-mediated messages do and do not affect the beliefs and opinions of audience members” (Neuman & Guggenheim, 2011, p. 171). In the terminology of the current paper, one could rephrase this by saying that every single stage in the history of media effects research activated the field’s positive heuristic and added a particular cluster of hedges to the field’s protective belt. The protective belt thus took the form of pre-existing motivations and opinions of media users (“active audience theories”), the interpersonal context (“social context theories”), the social and cumulative nature of effects (“societal and media theories”), the wide variety of mental processes involved in a media effect (“interpretive effects theories”), and the complicated continuous interactions between exposure and effect in modern media environments (“new media theories”).

The fact that the Lakatosian argument embeds and underlines Neuman and Guggenheim’s (2011) historical overview shows that media effects research has been functioning exactly as one would expect from a scientific research programme. This also suggests that it might be unfair to say that media effects research has been pre-scientific (Potter et al., 1993) or in a state of paradigmatic crisis (A. Lang, 2013), both of which refer to scientific fields that lack a common strategy, or a shared positive heuristic. It also underlines, yet again, that the history of media effects research has not been marked by any Kuhnian paradigm shifts in the strict sense of the word. Its history has been continuous, not discontinuous, and it has been characterized by a recurring reliance on its positive heuristic.

Notwithstanding the value of the positive heuristic for descriptive purposes, it does raise a normative question: if it is reasonable for scientists to protect their core hypothesis from falsification, then is there still some kind of standard to which they should be held when doing so? Lakatos, too, was well aware of the epistemological dangers in the positive heuristic. As he stressed throughout his writing, relying on the positive heuristic may be a very rational thing to do, but it can also be dangerous if done at will. He therefore made the epistemic function of the positive heuristic very clear: it could only ever be used to the extent that the evolutions arising from it are empirically and theoretically progressive.

Theoretical progressiveness means that the positive heuristic can be used only if the proposed additions to the protective belt are based on a preconceived theoretical idea (Lakatos, 1978c, p. 149) and if they contain excess empirical content, that is, if they entail new, and falsifiable, empirical predictions (p. 167). Thus, theoretical progressiveness parallels the criteria for establishing intelligible theory discussed earlier (i.e., predictive power, falsifiability, logical consistency, and parsimony). Empirical progressiveness, in turn, means that all predictions arising from the positive heuristic have to be convincingly tested and corroborated by new studies. When these criteria are fulfilled, the changes to the protective belt can be considered of value: they have led to an increase in theoretical knowledge about how the scientific phenomenon operates, so it is reasonable to conclude that the research programme has made progress. However, if the positive heuristic only manages to explain away unsatisfying research results without making new or logically consistent predictions, or if those new predictions are barely corroborated, the use of the positive heuristic has had no value at all. When this happens time and time again without ever forcing theoretical and empirical progress, Lakatos considered the research programme to be degenerating. Unfortunately, as our earlier analysis has shown, it appears that the focus on hedges in media effects research has been theoretically degenerative rather than progressive. So, echoing A. Lang (2013), we need to ask: “where do we go from here” (p. 23)?

Lakatos (1978b) made it seem obvious that long-lasting failures to make significant progress would require a debate about the viability of the research programme and, in extreme cases, a literal intervention of scientific law and order:

One may rationally stick to a degenerating programme until it is overtaken by a rival and even after. What one must not do is to deny its poor public record. It is perfectly rational to play a risky game: what is irrational is to deceive oneself about the risk. [. . .] Editors of scientific journals should refuse to publish [these] papers which will, in general, contain either solemn reassertions of their position or absorption of counterevidence (or even of rival programmes) by ad hoc, linguistic adjustments. (Lakatos, 1978b, p. 117)

I do not think we need to go as far as giving up on media effects (as did, for instance, A. Lang, 2013). However, it seems clear that we need to rethink how our discipline deals with its positive heuristic. A useful starting point here is to remove hedges from their theoretical pedestal. This does not mean that hedges have no role to play, but they should be used only in a reasonably progressive fashion. There is no simple blueprint for what this should exactly look like, but part of the litmus test is obvious: we should always compare hedges against the criteria for intelligible theory mentioned before (i.e., predictive power, falsifiability, parsimony, and logical consistency). In the last section of this paper I will briefly summarize some philosophical starting points for moving in that direction.

A Quick Start Guide to Dealing With Hedges

A first starting point is to avoid falling prey to an intuitive fallacy concerning hedges: as social scientists, we know that our phenomena of interest are highly complicated and intricate (ontologically speaking). This might, in and of itself, already seem enough to require, rather than abandon, the modeling of hedges, but that would defeat the whole point of the earlier argument (see also Healy, 2017). To avoid this line of thought, it seems important to underline a key (but perhaps unintuitive) point: the problem with statements about the existence of hedges is not that they are not true. The problem is that they are, in a sense, too obviously true to be meaningful. In Lakatosian terms, saying that media effects are indirect, conditional, and person-specific is essentially like saying that there is a protective belt surrounding the core hypothesis of media effects. Of course this is true. It is trivial.

For this reason, it seems useful to reconsider the role of hedges within the context of the media effects research programme. As a starting point, we should accept that hedges are always there. The existence of hedges is what determines the sample space of possibly observable effects and, as such, their existence is axiomatic rather than part of our empirical hypotheses. This reasoning is nothing revolutionary. In a treatise on the philosophy of the social sciences, Roberts (2004) argued that social scientists will never be able to discover “true” laws in the sense of the natural sciences; at best, they will only ever find ceteris paribus or, indeed, hedged regularities. Hedged regularities are regularities in social phenomena, qualified by our knowledge that such regularities will always have their exceptions. Adopting a definition of media effects as hedged regularities is helpful, because it underlines the a priori truth-value of the propositions about hedges: there will always be moderators and individual-level variability. There will always be hedges. Their existence is, indeed, axiomatic.

The practical implication of this hedged definition may not be immediately obvious, but it is fundamental. It means that the field should not find value in researchers choosing a given topic of interest (say, sexualized media, media violence, or political media effects) and identifying a particular set of hedges. This practice of choosing and applying hedges at will may have seemed to be allowed, and in fact even encouraged, by the hedges-as-progress-perspective, but it cannot, in and of itself, add anything to the body of knowledge about media effects (see also Rohrer et al., 2022). Indeed, if we define media effects to be hedged (i.e., if we consider the existence of hedges as part of the axioms), then the conclusion that a given media effect is hedged is, in and of itself, tautological.

To avoid confusion, I do not intend to say that there is nothing to be learnt from assessing hedges at all. We simply need to be aware of their role within the context of our research programme: the existence of hedges makes up the protective belt, and any addition of a hedge enacts the positive heuristic. This starting point implies that we should not just blindly accept additional hedges as valuable scientific contributions, which may hopefully put an end to the field’s excessive “shot-gun” (Johannes et al., 2021, p. 11) approach to modeling moderators and effect heterogeneity. The only way in which the investigation of hedges may still be helpful is when it is done “in a disciplined, theory-driven, and accurate way” (Johannes et al., 2021, p. 11). More concretely, this means that studies introducing (a class of) hedges need to shift their focus from evaluating an empirical existence-question (“is it possible to identify hedges?”, the answer to which is always “yes”) to establishing the theoretical necessity of the (class of) hedges (“are hedges X, Y, and Z truly essential for our understanding of the effect?”). In order to evaluate such necessity, one should answer four questions rooted in the scientific values discussed earlier:

Is the addition of this particular hedge (or class of hedges) truly essential for our understanding of the media effect under investigation? That is, does the hypothetical gain in theoretical knowledge compensate for the certain decrease in theoretical parsimony? If not, we should favor the simpler model, without hedges.

Is the addition of this particular hedge (or class of hedges) logically consistent with other theoretical propositions adopted about the media effect under investigation? If not, we should change those or favor a model without hedges.

Does the addition of this particular hedge (or class of hedges) add to the predictive value of the media effect under investigation? That is, is it clear how the hedge(s) determine and/or impact the hypothesis specifically, or do we only know that they might make some kind of difference (as is often the case; see Holbert & Park, 2019)? In the latter case, we should prefer the model without hedges.

Does the addition of this particular hedge still leave room for falsifying observations? If not, or if it is unclear what the falsifying observations would look like, we should prefer the model without hedges.

If all of these are answered affirmatively, the addition of hedges can be considered theoretically progressive. In this case it also makes sense to put the hedges under empirical scrutiny. In contrast to common practice, however, empirical scrutiny should not just be thought of as evaluating a single statistical test. It requires a systematic, and collective, effort aimed at reproducing and replicating the role of the proposed hedges across studies, a practice that currently seems to be mostly lacking. It may be expected that the bulk of proposals will not survive this kind of scrutiny, but if they do survive we may actually have something of value in our hands: a theoretical breakthrough or, in Lakatos’ (1978a) words, a progressive problem shift. These shifts are what drive a science forward, but they are not to be taken lightly either: once there is consensus about the importance of a given set of hedges, they are no longer optional when the hedge applies. This added complexity is something the field should be willing to accept; if not, it seems that a model without hedges is still to be preferred.

Footnotes

Declaration of Conflicting Interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Part of the preparatory work for this paper was done during a postdoctoral fellowship supported by the Research Foundation – Flanders under Grant 12J7619N.