Abstract

Hate speech can increase stereotyped thinking and social distancing in a society. However, the social groups under study still lack variety, and research into possible solutions to the problem is scarce. Thus, our aim is (1) to study the effects of hate speech against Chinese people and transgender people and (2) to investigate whether counter speech can offset the detrimental effects of hate speech. We conducted a pre-registered online experiment with a 2 × 3 between-subject design, varying the attacked group (Chinese people/transgender people) and the type of comments (neutral/hate speech/hate speech and counter speech) for an Austrian sample (N = 1285). Findings reveal no effect of hate speech on the dependent variables, indicating that citizens might not be as vulnerable to hate speech after all. However, counter speech has a polarizing effect: attitudinal gaps and differences for social distancing increase between left-wing and right-wing participants if hate speech is countered.

Comment sections on news websites or in social media environments have great deliberative potential. They provide inclusive spaces for communicative processes and opinion formation based on the exchange of rational and tolerant contributions of those concerned with an issue of public interest (Barber, 1984; Rowe, 2015). However, instead of bringing citizens closer together, user comments have raised great concern due to the vast share of offensive language found in online discussions. In extreme cases, comments even contain hate speech, which refers to verbal aggressions directed at groups or individuals because of social categories such as gender, race or sexual orientation (Erjavec & Kovačič, 2012). Cross-national studies have shown that 43% of all respondents (Hawdon et al., 2017) and even 71% of the age group between 18 and 25 years (Reichelmann et al., 2021) reported having seen hateful or degrading online speech, attacking groups or individuals, in the previous 3 months. This demonstrates that hate speech is a widespread problem of the digital era.

Due to the frequent contact of online users with hateful content in user comments, it is highly relevant to investigate effects that might result from these encounters. Previous findings show that the repetition of stereotypes in hateful comments starts processes of priming and desensitization and changes social norms, making people more open to explicit (Hsueh et al., 2015) and implicit (Weber et al., 2020) stereotyped attitudes, especially if they already held negative attitudes toward the attacked group (Schäfer et al., 2022). Moreover, encounters with hateful comments not only change how people think about social groups but also increase social distancing (Soral et al., 2018). This threatens cohesion in a society.

In light of these severe consequences of hate speech, there is an ongoing debate about measures which might help to reduce harm evoked by hate speech. User interventions are considered an effective way to publicly demonstrate a counter-position and to support attacked groups, without harming freedom of speech or spending endless resources on professional community managers (Friess et al., 2021; Porten-Cheé et al., 2020; Ziegele et al., 2020). Such counter speech can be defined as responses by users to hateful comments that adhere to deliberative norms by providing facts that contradict hateful claims and side with those attacked in the comments (Ziegele et al., 2020). However, even though many scholars emphasize the importance of counter speech as an intervention for hate speech (Kümpel & Rieger, 2019; Kunst et al., 2021; Obermaier et al., 2023), experiments testing its effects in online discussions are scarce and have not investigated effects of counter speech on the perception of social groups. As such, it is unknown if counter speech is able to overcome negative effects of hate speech on stereotyped attitudes. The current study seeks to fill this research gap.

In sum, the aim of the current study is, first, to replicate and extend previous findings by investigating effects of hateful comments for explicit and implicit stereotypes, as well as social distancing. We test whether effects that have been reported for Muslims (Schäfer et al., 2022) and refugees (Soral et al., 2018) can be replicated for other social groups which have been overlooked in hate speech experiments: Chinese and transgender people. Second, our study is the first to test whether effects of hateful comments change if they are answered with counter speech. This way, we can further explore the democratic value of counter speech as a measure to diminish the harm done by hate speech.

The findings of our experimental study revealed that the effects of hate speech on the perception of social groups cannot be replicated for Chinese and transgender people. This indicates that they might depend on the specific group that is attacked. Furthermore, we find that counter speech can have a polarizing effect: depending on the political orientation of the participants, attitudes toward social groups tend to become more extreme—a development which might threaten social cohesion.

Hate Speech in Online Discussions

Even though comment sections offer great opportunities for interactions between users of different societal groups, the debate about online discussions is heavily focused on the high share of offensive language that can be found in these settings (Keipi et al., 2017; Paasch-Colberg et al., 2021). Most frequently, comments in this category are labeled with the term incivility, which describes “an unnecessarily disrespectful tone toward the discussion forum, its participants, or its topic” (Coe et al., 2014, p. 660). Incivility is a term that encompasses various forms of norm violations in both online and offline social interactions (Bormann et al., 2022). Among these forms, one particular type has been identified by Bormann et al. (2022) as anti-democratic incivility, which is similar to the concept of intolerance coined by Rossini (2022). This kind of incivility poses a threat to democracy as it targets social groups based on traits such as race, gender, or sexual orientation (Bormann et al., 2022), thereby impeding inclusive and respectful social discourse (Coe et al., 2014). A different term for this type of communication is hate speech, which can be defined as the attacking of social groups because of the social categories they belong to, such as gender, race or sexual orientation. Following the approach by Paasch-Colberg et al. (2021, p. 173), hate speech has three distinct elements: (1) it contains negative stereotypes, (2) it dehumanizes social groups, which means members of these groups are labeled as things (e.g., “pack”), animals (e.g., “locusts”), or inhuman beings (e.g., “demons”), and/or (3) it contains expressions of violence, harm, or killing.

When it comes to the extent of intolerance or hate speech in user comments, we can differentiate between the prevalence of hate speech measured in online discussions and personal experiences with and perceptions of hate speech that Internet users report. Concerning the first, findings very much depend on the definition of hate speech, the topic that is discussed and the platform that is investigated. A content analysis of 5,031 user comments from a mix of German news sites, Facebook pages, YouTube channels and a right-wing blog found that 52% of the analyzed comments discussing the topic immigration and refugees contained negative judgments of which 25% were identified as hate speech (Paasch-Colberg et al., 2021). Similarly, a different study investigating user comments in fringe communities connected to the alt-right movement shows that in these communities, 24% of all comments contained hate speech (Rieger et al., 2021). When interpreting these numbers, it has to be considered that the study by Paasch-Colberg et al. (2021) investigates hate speech in the context of migration while the study by Rieger et al. (2021) looks into the communication of alt-right communities. That means the share of hateful comments is probably much lower in other contexts. Looking into findings of uncivil comments in the US, a large-scale automated content analysis of 42 news outlets on Facebook shows that if incivility occurs, it is usually directed at social groups instead of single individuals. Even if extreme cases of impersonal incivility are not the majority of the investigated comments, they occur rather frequently, especially in local news outlets during specific news events like terrorist attacks (Su et al., 2018).
When it comes to the exact amount of uncivil comments, a content analysis of seven German news media outlets found that in total 7% contained anti-democratic incivility with 4% detected as racist comments and 3% as dehumanizing content (Jost & Ziegele, 2022). That demonstrates that even though hateful user comments exist, they make up only a minority of user comments and highly depend on the context, for example the topic, the outlet or the social media site that is investigated.

When it comes to hate speech perceptions, a four-nation survey study found that the share of people who indicated having seen hate speech in the last 3 months ranges between 53% (US) and 31% (Germany) (Hawdon et al., 2017). This share is much higher among young people: a survey study in six countries with people between 18 and 25 found that in total 71% reported having been exposed to hate speech in the last 3 months, with the highest share (79%) in Finland and the lowest (65%) in France (Reichelmann et al., 2021). That shows that even though hate speech comments are only a small proportion of all comments, they seem to be very visible, probably because their norm-violating nature provokes a lot of interaction and makes them memorable to those who have been exposed to them.

In this study, we focus explicitly on the impact of hate speech directed toward two specific minority groups in Austria: Chinese people and transgender people. These groups are particularly noteworthy for two reasons. Firstly, they represent relatively small minorities within Austria who often encounter hostility within the broader society. According to the latest data from Statistik Austria (2022), there are approximately 13,000 people with a migration background from China residing in Austria, making it the country’s 10th largest group of migrants. Moreover, a recent large-scale survey conducted by the Pew Research Center indicates that Western Europeans’ perception of China is predominantly negative, and this attitude has worsened significantly since the onset of the COVID-19 pandemic (Silver et al., 2022). As a result, Chinese people living in Austria have reported a surge in harassment incidents, both online and offline (Pinwinkler, 2020). When it comes to transgender people, there are between 400 and 500 people in Austria who are known to experience gender incongruence (Kirnbauer, 2019). That means they feel a discrepancy between the sex they were assigned at birth and their gender identity. Transphobia is rather prevalent: a survey for the European Union including Austria found that 54% of the interviewed transgender people reported experiencing discrimination or harassment (European Union Agency for Fundamental Rights, 2014). Moreover, the extreme right-wing party FPÖ, which is represented in the Austrian parliament, openly rejects the transgender rights movement and goes so far as to suggest that it poses risks for children. This stance serves as a stark illustration of the prevailing transphobia within the country.
Secondly, we chose these groups since previous experimental studies investigated effects for larger minorities and disadvantaged groups, including Muslims and refugees (Schäfer et al., 2022; Soral et al., 2018; Ziegele et al., 2018), homeless people (Ziegele et al., 2018) and LGBTQ+ people (Schäfer et al., 2022). As a result, we do not know if effects also occur for groups which are less visible in a society. Even though there are more Chinese people living in Austria than transgender people, they are both rather small minorities in Austria who have to face harassment in society. Thus, we anticipate that hate speech may have comparable effects on the perception of these groups. As such, we consider these groups as replication conditions and hypothesize that any effects observed will be consistent across both groups.

Effects of Hate Speech for Social Group Perceptions

Because hate speech per definition targets social groups, it likely affects the way social groups are perceived. Concerning the perception of social groups, stereotypes play an important role. As such, the perception of social groups can be differentiated between explicit stereotypes and implicit stereotypes. Explicit stereotypes can be defined as “beliefs about the characteristics, attributes, and behaviors of members of certain groups” (Hilton & von Hippel, 1996). These stereotypes are overtly expressed and people are aware of this kind of thinking about social groups. Implicit stereotypes, on the other hand, refer to mental associations between group concepts and certain attributes which are automatically activated in response to external stimuli (Greenwald et al., 1998). For example, if people read a user comment in which immigrants are accused of being criminals, the concept of the social group (immigrants) and the attribute (criminal) are simultaneously activated and their association becomes stronger. Usually, implicit stereotypes are measured with an implicit association test (Greenwald et al., 1998, 2003). For this test, participants have to combine different target groups and attributes. The time necessary to make these combinations is considered an indicator for the closeness of the concepts of the target and the attribute in mental representation.
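To make the logic of latency-based scoring concrete, the following minimal sketch computes a simplified D score in the spirit of Greenwald et al. (2003): the difference between the mean response latencies of the two pairing blocks, divided by the standard deviation of all latencies from both blocks. It is an illustration only and not the scoring procedure used in this study; it omits the trial exclusions and error penalties of the full algorithm, and the function name, variable names, and latencies are hypothetical.

```python
from statistics import mean, stdev


def iat_d_score(compatible_ms, incompatible_ms):
    """Simplified IAT D score (illustrative; no trial trimming or error penalty).

    compatible_ms:   latencies (ms) when the group shares a key with the
                     stereotype-consistent attribute.
    incompatible_ms: latencies (ms) when the group shares a key with the
                     stereotype-inconsistent attribute.
    A positive score means faster responses in the stereotype-consistent
    pairing, i.e. a closer mental association between group and attribute.
    """
    if len(compatible_ms) < 2 or len(incompatible_ms) < 2:
        raise ValueError("need at least two latencies per block")
    # Standard deviation over all trials of both blocks taken together.
    pooled_sd = stdev(list(compatible_ms) + list(incompatible_ms))
    if pooled_sd == 0:
        raise ValueError("latencies have no variance")
    return (mean(incompatible_ms) - mean(compatible_ms)) / pooled_sd


# Hypothetical latencies in milliseconds (not data from the study).
compatible = [620, 580, 640, 600, 610]
incompatible = [760, 720, 800, 740, 780]
d = iat_d_score(compatible, incompatible)
print(round(d, 2))
```

Scaling the latency difference by each participant's own variability is what makes scores comparable across participants who respond at different overall speeds.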

Plenty of studies show that media depictions play a crucial role for the formation of stereotyped attitudes (Power et al., 1996; Schemer, 2012; Scheufele & Tewksbury, 2007; Ward & Grower, 2020). This also applies to the confrontation with hateful user comments. Several studies found that the confrontation with hate speech increased citizens’ agreement with negative explicit stereotypes about the group under attack (Hsueh et al., 2015; Schäfer et al., 2022; Soral et al., 2018; Weber et al., 2020). Also for implicit stereotypes, findings by Weber et al. (2020) show that hateful comments lead to more negative implicit stereotypes toward refugees compared to neutral comments.

There are several theoretical approaches which explain why stereotyped media content, and specifically hateful user comments, can increase stereotypes toward social groups. First, according to the idea of media priming, the exposure to stereotypes in the media is followed by the mental activation of similar kinds of thoughts, which influences subsequent information processing, thinking and behaviors (Ramasubramanian, 2007). Being confronted with negative depictions of a social group makes the connection between the social group and the negative stereotypes more salient until it becomes a common association (Higgins, 1996). If this knowledge is considered relevant and appropriate, it can be incorporated into judgments and thinking, leading citizens to hold negative stereotypes (Lee et al., 2017). Second, stereotyped media content can alter social norms. Social norms describe typical and socially accepted behaviors in a social group (Cialdini et al., 1990). These norms are inferred from behaviors of other group members (Blanchard et al., 1994; Lapinski & Rimal, 2005). Applied to the context of stereotypes, an experimental study by Blanchard et al. (1994) showed that hearing someone rejecting racism increased antiracist positions compared to a condition where no other positions were present. This indicates that people not only infer social norms based on the behavior of others, but they tend to mirror in their own acting what they perceive to be the social norm. And finally, as argued by Soral et al. (2018), frequent exposure to hateful comments starts processes of desensitization, meaning that arousal elicited by hateful comments declined when users were repeatedly confronted with this kind of comments. Desensitization, in turn, made people more likely to agree with negative stereotypes (Soral et al., 2018).

While some studies report a direct effect of hateful comments on stereotyped attitudes (Soral et al., 2018; Weber et al., 2020), others point out that pre-existing attitudes have to be taken into account. An experimental study by Schäfer et al. (2022) found that hateful comments increased stereotyped attitudes, but only among those who had already indicated a negative perception of the attacked social group before the stimulus presentation. Other studies investigating persuasive effects of user comments show that user comments increase attitude polarization (Anderson et al., 2014; Lee et al., 2017), meaning that instead of a general attitude shift, predispositions serve as a heuristic for information processing which causes a reinforcing effect (Anderson et al., 2014). This might be explained by processes of motivated reasoning (Taber & Lodge, 2006). According to this approach, different motivations play a role in attitude formation. Among these motivations is the desire to confirm pre-existing attitudes: information that contradicts prior attitudes is often met with more scrutiny than information that confirms them (Kunda, 1990). This disconfirmation bias causes the strengthening of prior beliefs which contributes to polarization (Taber & Lodge, 2006). Stereotypes can be activated (or not) depending on citizens’ motivations (Kunda & Sinclair, 1999) and reasoning can be motivated through identification with social identities (Boyer, Lecheler, & Aaldering, 2022; Feldman & Huddy, 2018). Applied to the context of hate speech, this would mean that those with negative pre-existing attitudes toward the attacked group are more strongly motivated to accept and apply harmful stereotypes to these groups. We assume:

H1: Exposure to hate speech comments leads to stronger explicit stereotypes toward the attacked group compared to neutral comments. This effect is moderated by pre-existing attitudes: it increases with more negative pre-existing attitudes.

H2: Exposure to hate speech comments leads to stronger implicit stereotypes toward the attacked group compared to neutral comments. This effect is moderated by pre-existing attitudes: it increases with more negative pre-existing attitudes.

When it comes to behavior, findings also indicate that hate speech can change how users treat social groups. For example, studies show that hate speech has a negative effect on prosocial behavior. Other studies found that participants even started attacking the groups in the comments themselves after being exposed to hateful comments (Hsueh et al., 2015).

In our study, we are interested in the effects of hate speech on the tendency to avoid the social group. This goes beyond stereotyped attitudes, since it describes the willingness of citizens to accept members of the outgroup as part of society and their everyday life. Soral et al. (2018) found that the confrontation with hate speech increased social distancing. That is, people were less willing to accept members of the attacked outgroup as part of their family, neighbors or colleagues. Here, we also assume an effect of pre-existing attitudes:

H3: Exposure to hate speech comments leads to stronger social distancing compared to neutral comments. This effect is moderated by pre-existing attitudes: it increases with more negative pre-existing attitudes.

The Interfering Role of Counter Speech

Due to the severe consequences of hate speech for those attacked, but also for society as a whole, there is an ongoing discussion about ways to counter hate speech. First, this issue is addressed legally, for example with the introduction of “the law for the protection of users on communication platforms on the Internet” in Austria (Republik Österreich, 2020). However, legal regulations assign the responsibilities to take action against hate speech predominantly to the communication platforms. The usual procedure is that after a comment was flagged by a user, the platform has a certain amount of time to check whether the comment or post needs to be removed. This comes with the risk that freedom of speech is endangered since commercial platforms decide which content is deleted, rather than democratic rule-of-law (Alkiviadou, 2019; Howard, 2019). A second possibility to fight hate speech is the moderation of hate speech. Here, the author of the original post, usually a journalist, is responsible for the management of responses (Bakker, 2014). However, the increasing number of user comments makes an effective handling of user comments difficult. Moreover, it can be an emotional burden for the moderators in charge of the community (Frischlich et al., 2019). In light of these disadvantages, a third promising strategy against hate speech is for users to engage in counter speech. Counter speech can be defined as “communication that directly responds to the creation and dissemination of hate speech with the goal of reducing harmful effects” (Bahador, 2021, p. 509). There are various forms of counter speech (Kalch & Naab, 2017). In this study, we are interested in counter speech that consists of a user reply to hateful comments that adheres to deliberative norms by providing facts, respectfully contradicting the hateful comment and/or taking side with those attacked (Ziegele et al., 2020).

Previous studies on counter speech predominantly investigate motives of Internet users to engage in counter speech (Kalch & Naab, 2017; Kunst et al., 2021; Leonhard et al., 2018; Obermaier et al., 2023; Ziegele et al., 2020). So far, it has not been investigated if counter speech also changes the effects of hate speech with regard to increased negative stereotyped attitudes and social distancing. Looking into the theoretical explanations for the effects of hate speech, it has been argued that media priming plays a role. Counter speech cannot prevent the social groups and the negative attributes expressed in hateful comments from being primed together. However, counter speech also primes the social groups with more positive attributes by taking side with those attacked. This might interfere with the effects of hate speech. Moreover, studies point out that counter speech is able to change social norms in an online discussion (Obermaier et al., 2023; Schieb & Preuss, 2018). By adding different points of view to a discussion and backing up those attacked, the perception of the dominating opinion in the discussion should change which might also shift the perception of social norms compared to a discussion in which hate prevails. And finally, processes of desensitization should be lowered since other users counter the expressed hatred toward social groups and thus de-normalize hate against the group under attack.

In sum, the mechanisms that explain effects of hate speech for the formation of stereotyped attitudes and the avoidance of social groups could be overridden by the counter-voices of other users. Again, we assume that pre-existing attitudes moderate this process due to processes described in motivated reasoning: in contrast to hate speech, those who already think positively about a social group are more likely to reject negative stereotypes (Kunda & Sinclair, 1999) and be affected by counter speech. We assume:

H4: Exposure to hate speech along with counter speech will decrease explicit stereotyped attitudes compared to hate speech alone. This effect is moderated by pre-existing attitudes: it increases with more positive pre-existing attitudes.

H5: Exposure to hate speech along with counter speech will decrease implicit stereotyped attitudes compared to hate speech alone. This effect is moderated by pre-existing attitudes: it increases with more positive pre-existing attitudes.

H6: Exposure to hate speech along with counter speech will decrease social distancing compared to hate speech alone. This effect is moderated by pre-existing attitudes: it increases with more positive pre-existing attitudes.

Method

Procedure and Participants

To investigate our hypotheses and research question, we pre-registered and conducted an online experiment with a 3 × 2 between-subject design. Participants of the study were exposed to a mock social media feed containing two news posts. Below these posts were online discussions that varied with regard to the tone of the comments (neutral/hate speech/hate speech and counter speech) and the group that was mentioned in the comments (Chinese people/transgender people). Before conducting the study, we pre-tested if participants recognized the difference between the experimental conditions. We pre-registered the hypotheses, the stimulus material and procedures of the data analysis before collecting the data in September 2021. 1 Moreover, the study was approved by the institutional review board of the department where the study was conducted. Due to ethical considerations of exposing participants to hate speech directed at a group they are part of, we excluded 16 respondents who identified as transgender. They were thanked for their willingness to participate and paid like the rest of the sample. There were no Chinese participants in our sample.

As part of the pre-registration, we conducted a power analysis using G*Power (Faul et al., 2007) to ensure that our study was able to detect even small effect sizes (f = 0.15). The analysis indicated that we needed a sample size of N = 1072 participants. A sample that was representative of the Austrian population regarding age and gender was collected through the market research company Dynata. As usual in online panels, higher educated citizens are slightly overrepresented (Dynata, 2022).

After the data collection and data cleaning, the final sample size was N = 1285. This is slightly above the required number of participants. However, since we did not have valid answers for every participant, especially for the IAT, we decided to collect about 10% more than necessary. The final sample consisted of 51% women and 49% men with a mean age of 47.43 (SD = 16.13). Moreover, 36% had a low, 42% a medium and 22% a high level of education.

Stimulus

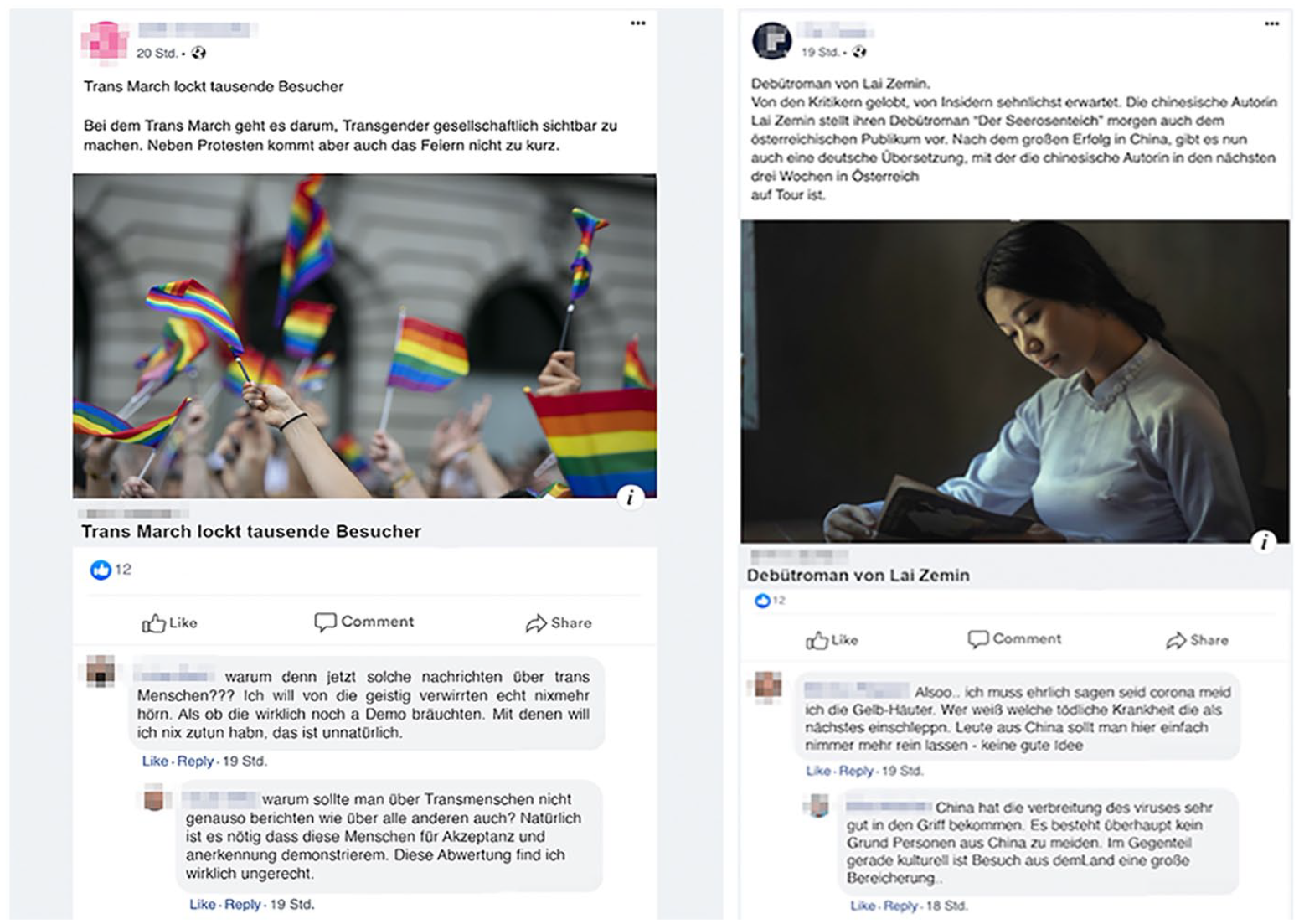

As stimulus material for this study, we created six Facebook news feeds using the graphic design software Adobe Photoshop. Each news feed contained six posts, of which four were distractors (advertising, humor posts) and two were posts of news sites which were relevant for the manipulation. These posts mentioned a festival (Chinese people: flower expo in Shanghai, Transgender people: Transmarch) and a book release (by a Chinese or a transgender author), respectively. The comments under these posts were either neutral, contained hate speech, or contained hate speech and counter speech. In the neutral version, commenters did not take any side for or against the social group in the news posts (example: “Books are so soo great! I have three lying around here. Somehow I understand. I just need to take some time for them ☺”). In the hate speech version, we followed the definition by Paasch-Colberg et al. (2021) and created comments that brought up negative stereotypes, dehumanized the social group of the news post or contained threats of violence or harm (example Chinese people: “Yesterday, I saw that a Chinese woman was spit on on the street. Somehow I understand that. What they brought us really makes me sick.”; example transgender people: “sooo. . . I have to admit that I avoid these colorful creatures. Who knows what kind of perverted tendencies they have. They should not be allowed to preach in public book stores – not a good idea.”). In the hate speech and counter speech condition, we used the same comments as in the hate speech condition, but here the comments were followed by replies that contradicted the hateful comments, provided facts and sided with the attacked group (counter speech answering the comments mentioned above: “This woman had absolutely nothing to do with corona and doesn’t deserve to be treated like that. We all had a tough time but venting of frustration on Chinese people is just wrong”; “Being transgender and the sexual orientation are two very different things. There is not at all to assume transgender people have deviant sexual preferences. Especially since we don’t know a lot about transgender people, I think such a book is a great enrichment.”). In doing so, we followed the definition of counter speech by Ziegele et al. (2020). The stimulus material including an English translation is available in the Online Appendix available under https://osf.io/ud5xa/ (to get a higher resolution, the files need to be downloaded). An excerpt of the stimulus can be found in Figure 1.

Excerpts of the stimulus used for the hate speech and counter speech conditions for transgender people (left) and Chinese people (right). Translation of the comments for transgender people: “Why news about transgender people??? I don’t want to hear anything about these mentally confused people. As if what they need is a demo. I don’t want to have anything to do with them, this is unnatural [hate speech]; Why should transgender people not be covered like everyone else? Of course it is necessary that these people demonstrate for acceptance and equal rights. This depreciation is really unfair. [counter speech]”, Chinese people: “sooo. . . I have to admit that since COVID I avoid the yellow-skinned. Who knows what kind of deadly diseases they import next. People from China should just not be allowed to come in here –not a good idea. [hate speech]; China has handled the spread of the virus very well. There is no reason to avoid people from China. The opposite, especially in terms of culture the country is a great enrichment. [counter speech]”

To ensure that participants recognized the differences between the experimental conditions, we tested the manipulation (a) in a pretest with 94 students of a large European university (74% female, Mage = 25, SD = 7.52) and (b) with the final sample in the main experiment. Participants recognized that there was more hate speech in the two experimental conditions than in the control condition in the pretest (HS/Transgender People: F(2) = 420.00, p < .001, HS/Chinese people: F(2) = 369.00, p < .001; CS/Transgender People: F(2) = 210.5, p < .01, CS/Chinese people: F(2) = 155.1, p < .001) as well as in the final experiment (HS/Transgender People: F(2) = 88.98, p < .001, HS/Chinese people: F(2) = 52.88, p < .001; CS/Transgender People: F(2) = 37.72, p < .001, CS/Chinese people: F(2) = 33.01, p < .001). The manipulations seem to have worked. 2

Measures

Pre-Existing Attitudes

For the measurement of pre-existing attitudes, we asked the participants at the beginning of the survey about their perception of four different social groups. 3 Among them were Chinese people and transgender people together with soldiers and politicians as two groups which served as distractors. Participants could indicate their perception of the groups on a scale from −5 “very negative” to +5 “very positive”, which is reported here on a recoded range from 1 to 11 with 6 as the neutral midpoint (MChinese = 6.42, SD = 2.07; MTransgender = 6.62, SD = 2.27). With this kind of measurement, we followed the example of previous studies that also measured attitudes toward social groups with a single item (Küpper et al., 2017; Schäfer et al., 2022). After answering this question, two distractor questions consisting of 10 and 7 items on an unrelated topic followed, before the stimulus was presented to the participants. That means we tried to distract participants and reduce priming effects of pre-existing attitudes by placing longer item batteries between the question of pre-existing attitudes and the stimulus presentation.

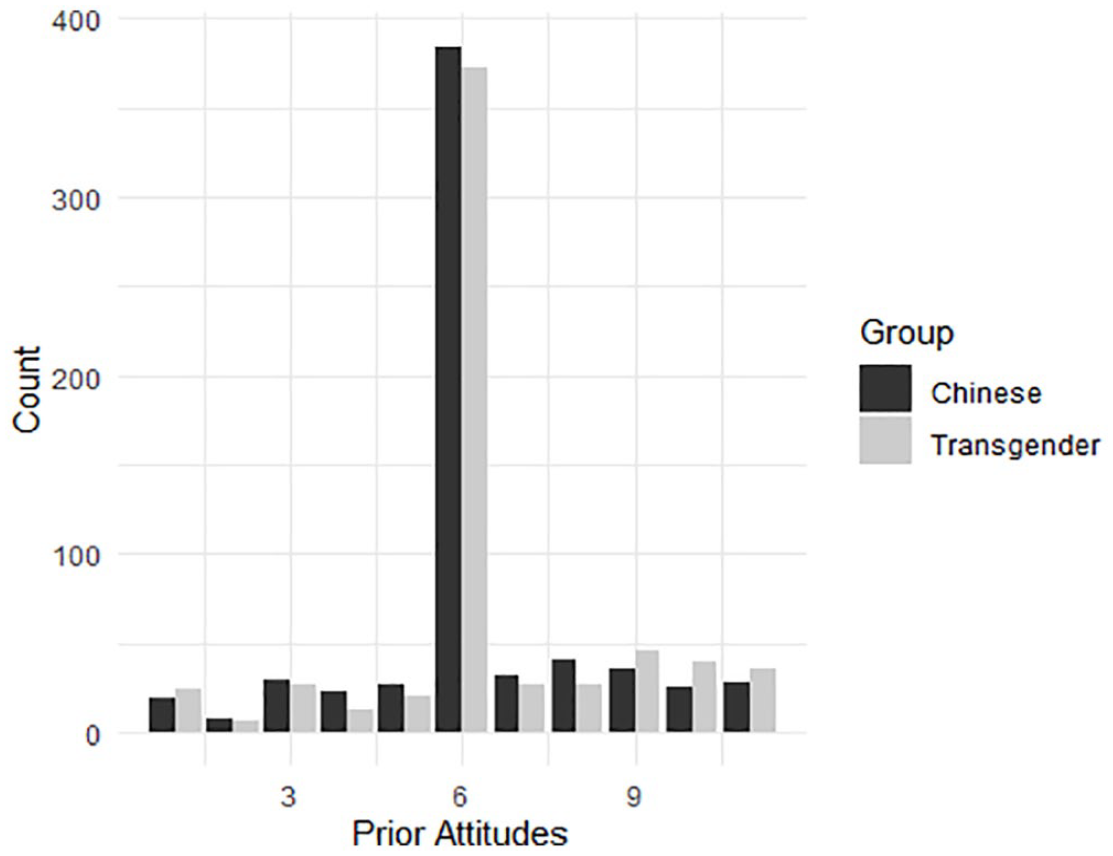

Looking at the distribution, we find that this variable has very little variance for both groups (see Figure 2). For Chinese and transgender people, 58.9% indicated having a neutral perception of the groups and hardly any participants indicated having negative perceptions of the groups. Still, as described in the pre-registration of this study, we created three groups along the distribution of pre-existing attitudes for both attitudes toward Chinese people and transgender people. We created the groups by categorizing responses below 6 (neutral) as low and responses above 6 as high. Responses at 6 were categorized as medium. Given the distribution of the two measures of pre-existing attitudes, this approach ensured that we had sufficient respondents in each group, although it did not yield similar group sizes. Pre-existing attitudes were distributed as follows: about 15% of respondents had negative (low), 59% neutral (medium), and 26% positive (high) attitudes toward the respective group pre-treatment. The distributions of pre-existing attitudes concerning the two groups are essentially identical (differences are in the decimals).
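
The grouping rule described above can be illustrated with a short Python sketch. It is not the analysis code of this study. The function names and example ratings are hypothetical, and the shift by 6 is an assumption based on the statement that a value of 6 corresponds to a neutral rating on the −5 to +5 response scale.

```python
def recode_attitude(raw: int) -> int:
    """Shift a rating from the -5..+5 response scale to 1..11 (assumed recoding)."""
    if not -5 <= raw <= 5:
        raise ValueError("rating must lie between -5 and +5")
    return raw + 6  # a neutral rating of 0 becomes 6


def attitude_group(score: int) -> str:
    """Categorize a recoded score: below 6 is low, exactly 6 is medium, above 6 is high."""
    if score < 6:
        return "low"
    if score > 6:
        return "high"
    return "medium"


# Hypothetical raw ratings from six participants.
raw_ratings = [-3, 0, 0, 2, 5, 0]
groups = [attitude_group(recode_attitude(r)) for r in raw_ratings]
print(groups)
```

Because the neutral midpoint forms its own category, the resulting group sizes mirror the response distribution rather than being balanced.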

Histogram of prior attitudes toward Chinese and transgender people. The measure ranges from −5 to +5; a value of 6 in the graph means that people feel neutral, that is, neither positive nor negative toward the respective group.

Explicit Stereotypes

To measure stereotyped attitudes, we could not rely on an established scale to measure explicit stereotypes about Chinese people since the COVID-19 pandemic came with new prejudices that were not reflected in previous measures. Moreover, existing scales were either not comparable to the corresponding measures for transgender people or mentioned stereotypes which only make sense if Chinese people are a larger minority in the country that is investigated. Since this is not the case, we relied on an item of the well-established blatant prejudice scale to measure stereotypes (Pettigrew & Meertens, 1995; “Chinese and Austrians can never be really comfortable with each other, even if they are close friends”), three items that could be adapted from the transphobia scale (Nagoshi et al., 2008; e.g., “I think something is wrong with Chinese people”) and three items of stereotypes related to Chinese culture which also contained an accusation of being responsible for COVID-19 (e.g., “Chinese are responsible for the worldwide COVID-19 pandemic.”). For these items, like for the other items of this survey, people could indicate their answers on a scale from 1 “completely disagree” to 7 “completely agree” (α = .89, M = 2.41, SD = 1.27). A full list of items can be found in the Online Appendix available under https://osf.io/ud5xa/ (document: Questionnaire_translated_usedvariables).

To measure explicit stereotypes about transgender people, we relied on the transphobia scale (Nagoshi et al., 2008; e.g., “I don’t like if someone is flirting with me and I can’t tell whether it’s a man or a woman”; “I believe that a person can never change their gender”). This measure suited the purpose of the study very well, since it has already been used to compare explicit and implicit stereotypes about transgender people (Axt et al., 2021). All items were combined to an index (α = .90, M = 3.42, SD = 1.51).
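
To make the reported reliability coefficients easier to interpret, the following sketch computes an internal consistency coefficient for a set of items. It assumes that α denotes Cronbach's alpha, which the text does not state explicitly. The function name and the response data are hypothetical and do not come from this study.

```python
from statistics import variance


def cronbach_alpha(responses):
    """Cronbach's alpha for a list of participants, each a list of item ratings."""
    k = len(responses[0])  # number of items in the scale
    if k < 2 or len(responses) < 2:
        raise ValueError("need at least two items and two participants")
    # Sample variance of each single item across participants.
    item_variances = [variance([row[i] for row in responses]) for i in range(k)]
    # Sample variance of the summed score across participants.
    total_variance = variance([sum(row) for row in responses])
    return k / (k - 1) * (1 - sum(item_variances) / total_variance)


# Hypothetical ratings of four participants on three items (1 to 7 scale).
example = [[2, 3, 2], [5, 5, 6], [1, 2, 1], [6, 7, 6]]
print(round(cronbach_alpha(example), 2))
```

Values close to 1 indicate that the items vary together across participants, which is the usual justification for combining them into a single index.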

Implicit Stereotypes

To measure implicit stereotypes, we conducted an implicit association test (IAT) (Greenwald et al., 1998) which we adapted for Chinese people and transgender people. More precisely, we used the R package “iatgen” (Carpenter et al., 2019). The test consists of seven sets of trials. In each trial, participants are presented with either pictures or words which they have to sort into the matching category, for instance the word “love” into the category “positive,” or a picture of a Chinese person into the category “Chinese,” using the keys “e” and “i” on their keyboards. They are also presented with combined trials, where, for example, one key is used to sort a picture or word into “positive” or “Chinese,” and the other into “negative” or “European.” The basic assumption is that this sorting is easier and thus faster if it matches existing associations. That is, if the connection between Chinese people and positive attributes is stronger, people should be faster when assigning them to the same side than when “Chinese” and “negative” are presented on the same side. The R package “iatgen” by Carpenter et al. (2019) also allowed us to combine these trials to compute implicit attitude scores, so-called D-scores, for each participant. In this score, values around zero indicate no bias, a positive value shows a bias against Chinese or transgender people, and negative D-values indicate a bias in favor of Chinese or transgender people (Mchinese = 0.54, SD = 0.36; Mtransgender = 0.60, SD = 0.46).
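
The logic behind a D-score can be illustrated with a simplified Python sketch. It is not the “iatgen” implementation: it uses a single pair of combined blocks, omits error penalties and participant-level exclusions, and the 10,000 ms cutoff, the function name and the latencies are assumptions chosen for illustration.

```python
from statistics import mean, stdev


def d_score(group_negative_ms, group_positive_ms, max_latency_ms=10_000):
    """Simplified IAT D-score from two lists of response latencies (milliseconds).

    group_negative_ms: trials where the target group shares a key with "negative".
    group_positive_ms: trials where the target group shares a key with "positive".
    Positive values mean slower responses in the group+positive pairing,
    i.e. a bias against the target group.
    """
    neg = [t for t in group_negative_ms if t <= max_latency_ms]
    pos = [t for t in group_positive_ms if t <= max_latency_ms]
    # Standard deviation across all retained trials of both blocks.
    inclusive_sd = stdev(neg + pos)
    return (mean(pos) - mean(neg)) / inclusive_sd


# Hypothetical latencies for one participant.
fast_block = [600, 650, 700, 620]
slow_block = [800, 850, 900, 780]
print(round(d_score(fast_block, slow_block), 2))
```

Dividing by the standard deviation expresses the latency difference relative to the participant's own response variability, which makes scores comparable between generally fast and generally slow respondents.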

Social Distancing

To measure social distancing, we followed the example by Soral et al. (2018) which is similar to the procedure by Bogardus (1925). Participants indicated whether they were willing to accept a Chinese person/a transgender person as a part of their family by marriage, as a close friend, as a neighbor on the same street or as a co-worker. For each of these statements, people gave their answers on a scale from 1 “doesn’t apply at all” to 7 “totally applies.” All four items were combined to an index and the value reversed. That means higher values indicate stronger social distancing (Mchinese = 1.95, SD = 1.38, α = .96; Mtransgender = 2.26, SD = 1.53, α = .94).
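
The construction of the reversed index can be sketched as follows. The sketch assumes that the index is the mean of the four items and that reversal on a 1 to 7 scale means subtracting the mean from 8; neither detail is spelled out in the text, and the function name and example answers are hypothetical.

```python
from statistics import mean


def social_distancing_index(items, scale_min=1, scale_max=7):
    """Average the acceptance items and reverse the result.

    High acceptance (values near scale_max) yields low social distancing.
    """
    if any(not scale_min <= x <= scale_max for x in items):
        raise ValueError("item outside the response scale")
    return (scale_min + scale_max) - mean(items)


# Hypothetical answers: family by marriage, close friend, neighbor, co-worker.
print(social_distancing_index([7, 6, 6, 5]))
```

With this coding, a participant who fully accepts the group in all four roles receives the minimum score of 1, and one who rejects all four roles receives the maximum of 7.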

Results

Findings for Explicit Stereotypes

To investigate effects of hate speech (H1) and counter speech (H4) on explicit stereotypes and the moderating role of pre-existing attitudes for these effects, we calculated an ANOVA that considered pre-existing attitudes as well as the conditions of the user comments (neutral, hate speech, hate speech and counter speech), and their interaction term as independent variables. As the dependent variable, we pooled the answers for explicit stereotypes against Chinese people and transgender people. 4 Findings show that only pre-existing attitudes have an effect (F(2) = 127.55, p < .001). As expected, participants with more negative pre-existing attitudes showed higher levels of negative stereotypes about Chinese people and transgender people compared to participants with more positive pre-existing attitudes. Neither the different comment conditions (F(2) = 1.74, p = .18) nor the interaction (F(4) = 0.18, p = .95) affected explicit stereotypes. Thus, H1 and H4 have to be rejected since neither hate speech nor counter speech has an impact on explicit stereotypes, even if the moderating role of pre-existing attitudes is considered.
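
The structure of such a two-factor ANOVA with an interaction term can be illustrated with a minimal Python sketch. It covers only the balanced case with equal cell sizes and returns F statistics without p-values, so it is a teaching example rather than the procedure used here; the actual data are unbalanced, and the function name and scores are hypothetical.

```python
from statistics import mean


def balanced_two_way_anova(cells):
    """F statistics for a fully crossed two-factor design with equal cell sizes.

    cells maps (level_a, level_b) to a list of scores. Each factor needs at
    least two levels and each cell at least two scores with some variation.
    Returns {effect: (F, df_effect, df_error)}.
    """
    a_levels = sorted({a for a, _ in cells})
    b_levels = sorted({b for _, b in cells})
    sizes = {len(v) for v in cells.values()}
    if len(cells) != len(a_levels) * len(b_levels) or len(sizes) != 1:
        raise ValueError("design must be fully crossed and balanced")
    n = sizes.pop()
    if n < 2 or len(a_levels) < 2 or len(b_levels) < 2:
        raise ValueError("need two or more levels per factor and scores per cell")

    cell_mean = {key: mean(v) for key, v in cells.items()}
    grand = mean(list(cell_mean.values()))  # equals the overall mean when balanced
    a_mean = {a: mean([cell_mean[(a, b)] for b in b_levels]) for a in a_levels}
    b_mean = {b: mean([cell_mean[(a, b)] for a in a_levels]) for b in b_levels}

    # Sums of squares for both main effects, the interaction and the error term.
    ss_a = n * len(b_levels) * sum((a_mean[a] - grand) ** 2 for a in a_levels)
    ss_b = n * len(a_levels) * sum((b_mean[b] - grand) ** 2 for b in b_levels)
    ss_ab = n * sum(
        (cell_mean[(a, b)] - a_mean[a] - b_mean[b] + grand) ** 2
        for a in a_levels
        for b in b_levels
    )
    ss_error = sum((x - cell_mean[key]) ** 2 for key, v in cells.items() for x in v)

    df_a, df_b = len(a_levels) - 1, len(b_levels) - 1
    df_ab = df_a * df_b
    df_error = len(cells) * (n - 1)
    ms_error = ss_error / df_error
    return {
        "A": (ss_a / df_a / ms_error, df_a, df_error),
        "B": (ss_b / df_b / ms_error, df_b, df_error),
        "A x B": (ss_ab / df_ab / ms_error, df_ab, df_error),
    }


# Hypothetical stereotype scores: factor A = prior attitude, factor B = comment condition.
conditions = ["neutral", "hate", "hate+counter"]
demo_cells = {("low", c): [4.0, 5.0] for c in conditions}
demo_cells.update({("high", c): [2.0, 3.0] for c in conditions})
demo_result = balanced_two_way_anova(demo_cells)
print(demo_result)
```

In the hypothetical data only prior attitude differs between cells, so the F statistic for factor A is large while those for the comment condition and the interaction are zero, which mirrors the qualitative pattern reported above.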

Findings for Implicit Stereotypes

For implicit stereotypes, we assumed an effect of hate speech (H2) and counter speech (H5) which we expected to be moderated by pre-existing attitudes. Results show that participants with more negative pre-existing attitudes had higher levels of bias against the two social groups compared to participants with more positive pre-existing attitudes (F(2) = 4.46, p = .01), while the comment condition (F(2) = 1.00, p = .37) and the interaction do not have an effect (F(4) = 1.34, p = .15). In sum, H2 and H5 have to be rejected.

Findings for Social Distancing

For H3 and H6, we investigated effects for social distancing. Looking at the main effects, we do not find an effect of the comment condition (F(2) = 2.17, p = .11) while prior attitudes have a significant effect (F(2) = 91.37, p < .01). Moreover, we do not find an interaction effect between the comment conditions and pre-existing attitudes (F(4) = 0.69, p = .60). As a result, H3 and H6 have to be rejected since neither the comment condition nor the interaction between the comment condition and pre-existing attitudes makes a difference for avoiding Chinese people or transgender people.

Exploratory Analysis

In the theoretical part of our paper, we explained that we assume that comments containing hate speech and/or counter speech can alter the perception of social groups based on processes described in media priming, social norms and desensitization. Further, we argued that effects might depend on pre-existing attitudes toward the social groups. For example, if a person already has a negative perception of a social group, he or she might be more open to accept stereotyped thinking expressed in user comments compared to a person who has a more positive perception of the social group. This can be explained with motivated reasoning and is a result of the general desire to confirm already existing beliefs and attitudes (Taber & Lodge, 2006). However, the findings of this study cannot confirm these assumptions: hate speech or counter speech did not have any influence on stereotyped attitudes or social distancing, even if pre-existing attitudes were considered. While a possible interpretation of these findings could be that hateful comments and counter speech do not play a role for the perception of social groups, a different explanation could be the flawed measurement of pre-existing attitudes. In our survey, participants were asked about their general attitudes about several social groups which they had to indicate on a scale from very negative to very positive. That means, we asked about pre-existing attitudes in a very explicit way which might have caused biased answering patterns due to social desirability. Findings show that more than half of the sample had a neutral perception of the social groups. Since we know that transphobia and also resentments toward Chinese immigrants exist in Austria (European Union Agency for Fundamental Rights, 2014; Silver et al., 2022), this might indicate that our measure is not a valid indicator for pre-existing attitudes.

As part of the control variables, we asked participants about their political ideology. Self-identification on a left-right-wing spectrum can be considered as an indicator for political conservatism which is closely related to social group perception (Chambers et al., 2013). Indeed, several findings indicate that conservatives compared to liberals have less favorable attitudes towards social minorities and higher levels of prejudice towards, for example, members of the LGBTQ+ community (Gillig et al., 2018; Hoyt et al., 2019; Prusaczyk & Hodson, 2020) and ethnic minorities (Chambers et al., 2013; Sears & Henry, 2003). One explanation for what Chambers et al. (2013) call the prejudice gap can be found in the system-justification theory (Kay & Jost, 2003). The theory posits that conservatives have a higher motivation to keep and defend the status quo in a society. As a result, they perceive social groups potentially claiming social change, like members of the LGBTQ+ community or immigrants, as potential threats which explains negative attitudes toward these groups. Further, liberals endorse a different set of values (Sears & Henry, 2003) which stresses the importance of tolerance, pluralism and equality in a society (Chambers et al., 2013). What is more, social groups are increasingly sorted in society, such that high-status and majority group members support conservative parties and ideologies, while lower-status and minority members support progressive parties and ideologies (Mason, 2015, 2018). This means that hate speech and counter speech may activate broader political identities (Mason & Wronski, 2018). In this study, members of the attacked groups are excluded from analysis for ethical reasons. 
However, since majority group members are more likely to reason from political motivations than minority group members (Boyer, Aaldering, & Lecheler, 2022), it is likely that political identities motivate reasoning relatively strongly in this experiment.

Against this background, it can be argued that political ideology can be considered as a highly relevant proxy for group perceptions in this experiment. Based on previous findings, conservatives show higher levels of transphobia (Gillig et al., 2018; Prusaczyk & Hodson, 2020) and have less favorable attitudes toward migrants (Mieriņa & Koroļeva, 2015). As a result, it can be argued that liberals and conservatives also differ with regard to attitudes toward transgender people and Chinese people in our sample. As an exploratory analysis, we test whether political ideology is an effective moderator of the effects of hate speech and/or counter speech on stereotyped attitudes. Since it can be assumed that participants on the political right have less favorable attitudes toward the groups we study compared to participants on the political left, we assume that political motivations make conservative participants more open to accept stereotypes expressed in hate speech comments and liberal participants more open to side with the attacked groups when they observe such support in the counter speech condition. Similar to the pre-registered analyses, we will test these moderated assumptions for explicit stereotypes, implicit stereotypes and social distancing in the following.

In our preregistration, we chose not to factor in political ideology when designing the study for two primary reasons. Firstly, previous research examining the impact of hate speech and counter speech often considered general attitudes toward targeted groups as moderators (Anderson et al., 2014; Lee et al., 2017; Schäfer et al., 2022). Our study aimed to replicate these findings, focusing on the moderated effects of hate speech and counter speech on social group perception. To achieve this, we adapted the most commonly used moderators from prior studies. Secondly, as explained in this section, even if we were to consider political ideology as a potential moderator, the varying attitudes toward minority groups would likely be the primary driver behind the differing effects of user comments on conservatives and liberals. Therefore, our assumption was that existing attitudes would sufficiently account for the main cause of these distinct effects. However, recognizing that political ideology is a broader concept and allows for the examination of various attitudes towards social groups in a less explicit manner compared to measuring pre-existing attitudes, we conducted an exploratory analysis to test the potential moderating effect.

In our study, political orientation was measured on a scale from 0 “left” to 10 “right” (M = 4.92; SD = 2.15). We split the sample along the median of political orientation which was 5. Participants having values from 0 to 5 were considered left-wing and people from 6 to 10 as right-wing in the analyses.
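
The split described above can be expressed as a short Python sketch. The function name and the example scores are hypothetical; the rule that values at the median count as left-wing follows the description in the text.

```python
from statistics import median


def ideology_group(score, cut):
    """Classify a 0 (left) to 10 (right) self-placement relative to a cut point."""
    if not 0 <= score <= 10:
        raise ValueError("score must lie between 0 and 10")
    return "left" if score <= cut else "right"


# Hypothetical self-placements of seven participants.
scores = [2, 5, 5, 7, 3, 8, 5]
cut = median(scores)
labels = [ideology_group(s, cut) for s in scores]
print(cut, labels)
```

Because many respondents choose the scale midpoint, assigning the median value to one side makes the two groups unequal in size, and centrist participants are grouped with the left.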

Results: Hate Speech, Counter Speech, and Ideology

Findings for Explicit Stereotypes

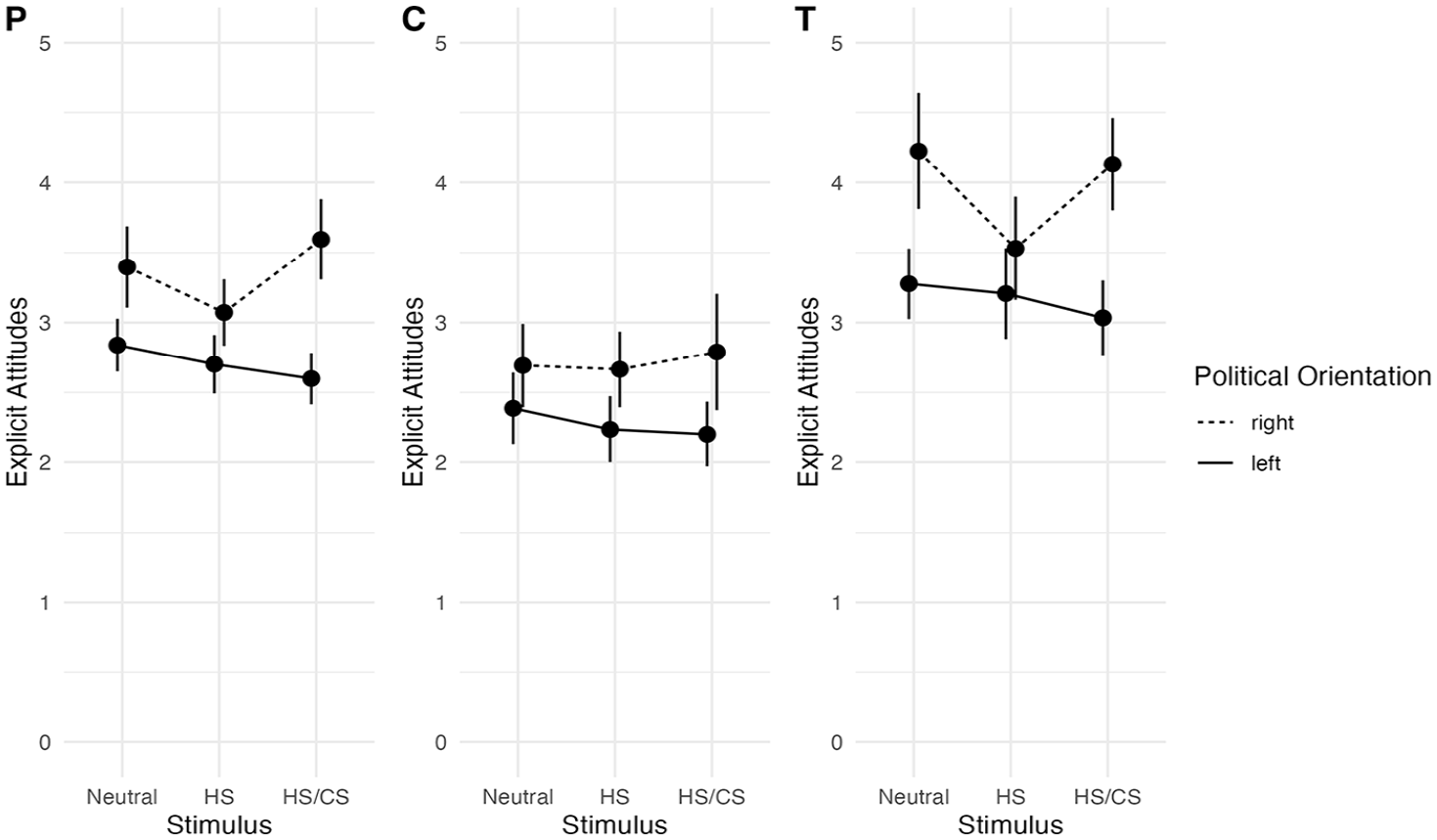

To investigate effects of hate speech and counter speech on explicit stereotypes and the moderating role of political orientation for these effects, we calculated an ANOVA that considered political orientation as well as the conditions of the user comments (neutral, hate speech, hate speech and counter speech), and their interaction term as independent variables. Again, we pooled the data for conditions of the two social groups. 5 Findings confirm that the comment condition does not have an effect (F(2) = 1.54, p = .22), while political ideology has a main effect (F(1) = 47.55, p < .001). Right-wing citizens held more negative stereotypes against the social groups than left-wing citizens (see Figure 3). Further, the interaction of comment conditions and political ideology only reaches marginal statistical significance (F(2) = 2.42, p = .09). However, the interaction plot shows that seeing hate speech reduces differences between left-wing and right-wing citizens, while adding counter speech to hate speech leads to a polarizing effect by increasing stereotypes for right-wing citizens and decreasing stereotypes for left-wing citizens.

Interaction plot for moderated effects of the comment conditions on explicit stereotypes with political ideology as a moderator. P shows the findings for the pooled data, C for comments about Chinese people, T for transgender people.

Findings for Implicit Stereotypes

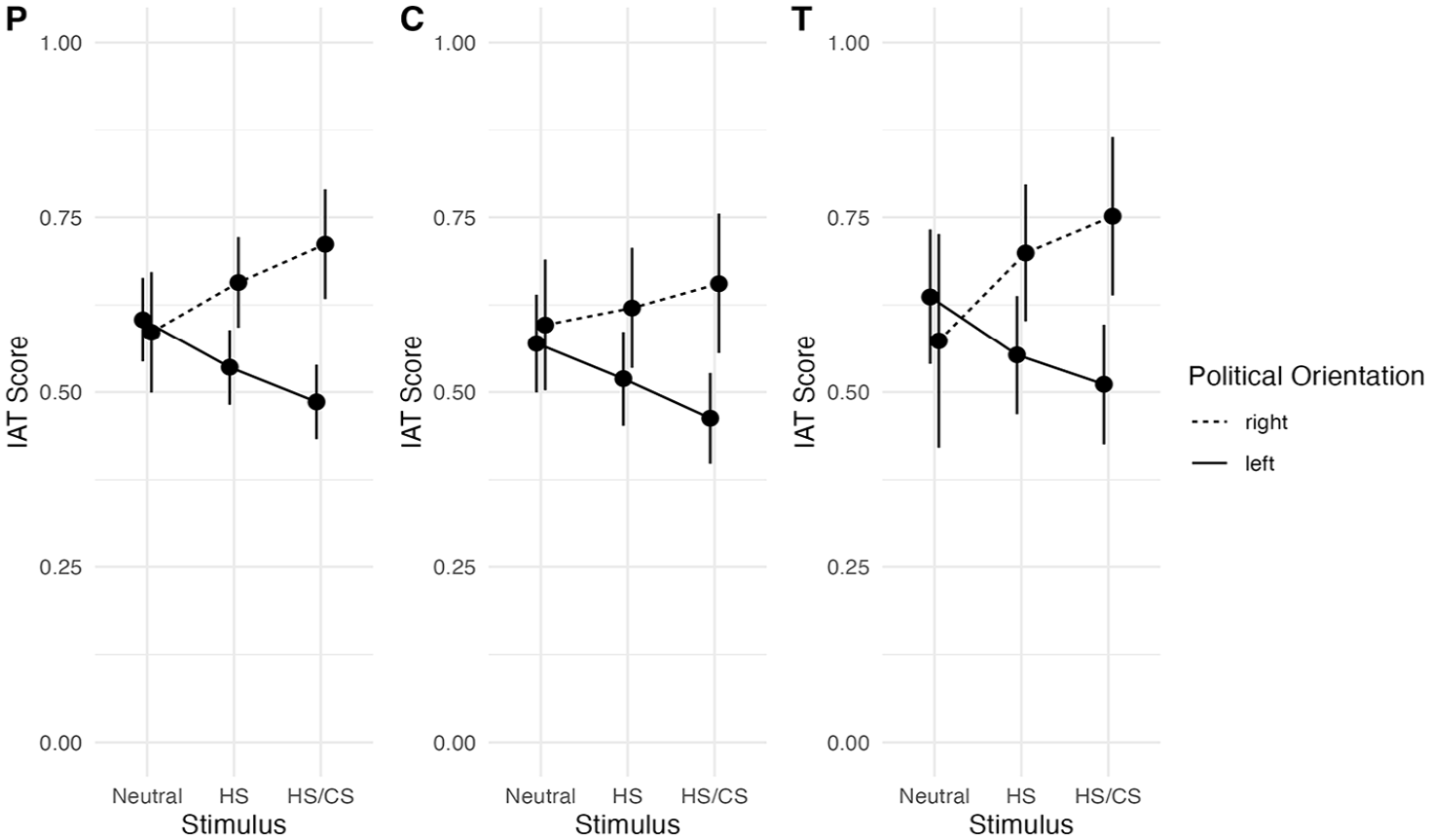

For implicit stereotypes, we assumed an effect of hate speech (H2) and counter speech (H5) which we expected to be moderated by pre-existing attitudes. Results for the condition about Chinese people show that right-wing citizens had more bias against Chinese people than left-wing citizens (F(1) = 9.1, p < .01), while the comment condition (F(2) = 1.49, p = .23) and the interaction do not have an effect (F(2) = 0.17, p = .17; see Figure 4). Similarly, for transgender people, we find that right-wing citizens had more bias against transgender people than left-wing citizens (F(1) = 7.07, p < .01), but the comment condition is not significant (F(2) = 0.11, p = .9). However, as expected we find a significant interaction effect between the comment conditions and political orientation (F(2) = 3.88, p = .02). The interaction plot (Figure 4) shows for both Chinese people and transgender people that the neutral condition and the hate speech condition do not differ. Hate speech does not make a difference for implicit stereotypes. However, looking at the differences between the hate speech condition and the hate speech and counter speech condition, we find that counter speech compared to hate speech has a polarizing effect. As expected, people on the political right increase their stereotyped thinking while people on the political left tend to lower their implicit stereotypes. This causes a significant difference in implicit stereotypes between people on the political left and right in the counter speech condition for both Chinese and transgender people.

Interaction plot for moderated effects of the comment conditions on implicit stereotypes with political ideology as a moderator. P shows the findings for the pooled data, C for comments about Chinese people, T for transgender people.

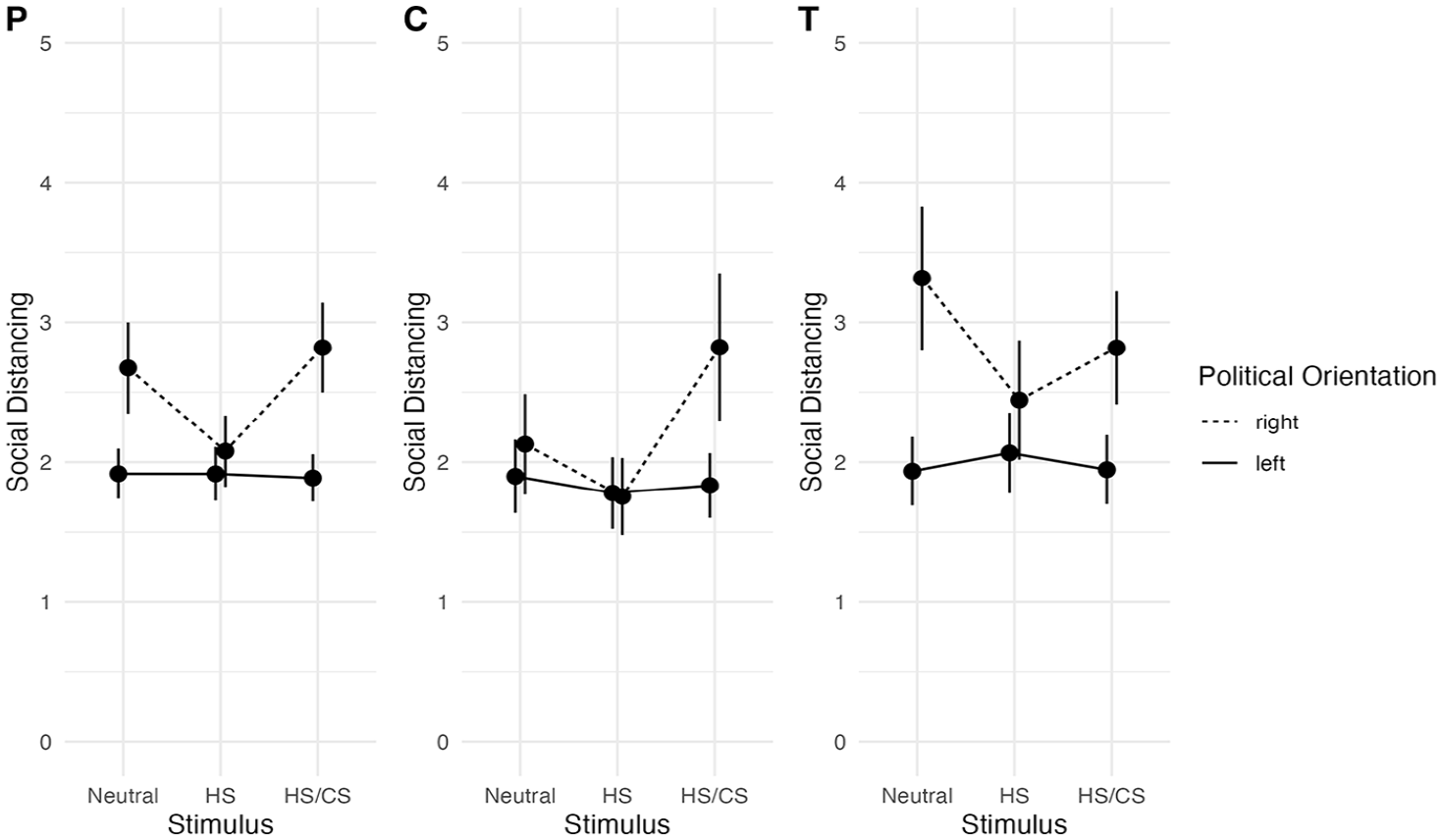

Findings for Social Distancing

Further, we investigated effects for social distancing. For the group that saw comments about Chinese people, we do not find an effect of the comment condition (F(2) = 2.28, p = .10) while political orientation has a significant effect (F(1) = 7.46, p < .01). Moreover, we find a significant interaction effect between the comment conditions and political orientation (F(2) = 5.13, p < .01). For the group that saw comments about transgender people, we find an effect of political orientation (F(1) = 36.41, p < .001), no effect of the comment condition (F(2) = 0.44, p = .65) and a significant interaction between the comment condition and political orientation (F(2) = 3.85, p = .02) for social distancing. Figure 5 shows the interaction plot. Comparing the neutral condition and the hate speech condition, we find that hate speech either has no effect on social distancing (for comments about Chinese people) or even lowers social distancing for right-wing participants (for comments about transgender people). Further, we find that counter speech has the effect we expected only in part: compared to hate speech alone, it increases social distancing for people on the political right, while people on the political left remain at the same level across the two conditions. That means, the effect does increase with more positive pre-existing attitudes.

Interaction plot for moderated effects of the comment conditions on social distancing scores with political ideology as a moderator. P shows the findings for the pooled data, C for comments about Chinese people, T for transgender people.
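
The polarization pattern visible in the interaction plots can be quantified as the change in the left-right gap between two comment conditions. The following Python sketch shows this calculation on hypothetical scores; the numbers, names and cell labels are invented for illustration and are not taken from the data of this study.

```python
from statistics import mean


def ideology_gap(scores_by_cell, condition):
    """Mean score of right-wing minus mean score of left-wing participants."""
    right = mean(scores_by_cell[(condition, "right")])
    left = mean(scores_by_cell[(condition, "left")])
    return right - left


# Hypothetical social distancing scores per (condition, ideology) cell.
demo_scores = {
    ("hate", "left"): [2.0, 2.5],
    ("hate", "right"): [2.5, 3.0],
    ("hate+counter", "left"): [1.5, 2.0],
    ("hate+counter", "right"): [3.0, 3.5],
}

gap_hate = ideology_gap(demo_scores, "hate")
gap_counter = ideology_gap(demo_scores, "hate+counter")
# A positive change means the gap widens once counter speech is added.
gap_change = gap_counter - gap_hate
print(gap_hate, gap_counter, gap_change)
```

This difference of differences is the descriptive quantity behind an interaction between comment condition and political orientation; whether it is statistically reliable is what the interaction test in the ANOVA addresses.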

Discussion

Hate speech has become a serious challenge of digital environments. Previous experimental studies have shown that the exposure to hateful comments does not only harm those attacked, but also comes with a shift of attitudes towards stronger stereotyped thinking (Hsueh et al., 2015; Schäfer et al., 2022; Weber et al., 2020) and even stronger tendencies to avoid attacked groups (Soral et al., 2018).

In light of the severity of these findings, we presented an experimental study that aimed (1) to replicate previous findings for groups that have hardly been investigated so far in the context of hate speech: Chinese and transgender people. Further, (2) this study is the first to investigate if counter speech, meaning the response to hateful comments in order to reduce harmful effects (Bahador, 2021), can diminish the effects of hate speech.

In our main analysis, we did not find any differences between the comment conditions for stereotyped attitudes or social distancing, even if pre-existing attitudes were considered as a moderator. Our measure for pre-existing attitudes hardly showed variation, for both Chinese people and transgender people. However, there is most likely variation in the population (European Union Agency for Fundamental Rights, 2014; Silver et al., 2022). Therefore, the chance is high that these null findings are a result of a flawed measurement of pre-existing attitudes. In the exploratory part of the analysis, we used political ideology as a moderator. Previous findings indicate that depending on the ideology, people differ with regard to their attitudes toward migrants and transgender people since people on the right-wing spectrum show higher resentments toward these groups (Chambers et al., 2013; Gillig et al., 2018). Political ideology is thus a reasonable proxy for pre-existing attitudes. Previous research has shown that high-status group members’ reasoning tends to be motivated through political identity (Boyer, Aaldering, & Lecheler, 2022). Since our sample excluded transgender people and Chinese people, political ideology should thus be a good predictor of motivated reasoning.

The findings with political ideology as a moderator do not show any effect of hate speech for group perceptions or social distancing in comparison to neutral comments. For both social groups we considered, we did not find any harmful effects of hate speech. That means, even in this exploratory analysis, we were not able to replicate previous findings which show that hate speech increases stereotyped attitudes toward Muslims (Schäfer et al., 2022), has an effect on implicit stereotypes toward refugees (Weber et al., 2020) and increases social distancing for several minority groups including Muslims and members of the LGBT community (Soral et al., 2018). An explanation why our findings differ from previous results could be related to the social groups we investigated. Maybe, the effects are not as universal as we thought, but rather depend on the prevalence of these groups in society and the political debate surrounding those groups. Compared to the social groups of previous studies, transgender people and Chinese migrants are very small minorities in Austria which are hardly visible in the private life of the participants. Moreover, at the time of data collection, neither group was at the center of political debate. As a result, hate speech might not be polarizing per se; rather, its effects might depend on specific characteristics of the social groups and their status in society. What exactly determines these effects, for example when it comes to the role of public visibility or personal experience with the groups, has to be determined in future studies.

In the theoretical part of this study, we argued that counter speech is a promising means to combat hate speech since it does not involve legal regulations, resources for professional content moderators or conflicts with freedom of speech. Still, the motivation of counter speakers is to reduce hate speech and its negative consequences (Bahador, 2021). However, the comparison of the group that saw hate speech and the group that saw hate speech and counter speech revealed another effect at play. For both groups, we find that if participants saw counter speech, right-wing participants increased their explicit and implicit stereotyped thinking and showed higher levels of social distancing, while for left-wing participants, effects tended to be the opposite. These findings were detected for comments about Chinese people and transgender people. Consistent with motivated reasoning theory, arguing against hate speech was accepted by those that likely already feel negatively about hate speech. In contrast, for people who are likely to already hold negative stereotypes toward the attacked groups, we witness a “boomerang effect”: they become more negative about the attacked groups (Hart & Nisbet, 2012). Another explanation of this stereotype polarization is that the clear group conflict between those who attack or support a social group might emphasize the difference between left-wing and right-wing citizens causing stronger political identification, and therefore increased politically motivated reasoning (Slothuus & de Vreese, 2010). This might suggest that showing hate toward, or support for, minorities is strongly incorporated in citizens’ political identities. This is consistent with social sorting results found in the US (Mason, 2015) but indicates the need for exploration in multi-party European settings.

This means that counter speech has a polarizing effect which can cause negative consequences for social dynamics in a society. Therefore, it can be concluded that counter speech comes with a price. If hate is countered, a societal conflict becomes visible for other users which seems to push them to take a side. If people already think negatively about the social group that is attacked, they might feel critiqued by the comments of counter speakers since they also take position against their personal views. This might lead to a “boomerang effect” through motivated reasoning (Hart & Nisbet, 2012). For people who sympathize with the attacked social group, counter speech causes a confirming persuasive effect which seems to strengthen them in their views. Moreover, the clear group conflict between those who attack or support a social group might emphasize the difference between left-wing and right-wing citizens causing stronger political identification, and therefore increased politically motivated reasoning (Slothuus & de Vreese, 2010). In sum, motivated reasoning could explain the polarizing effects.

Naturally, our experiment comes with limitations that should not remain unmentioned. First, we designed the comment conditions in a way that there was a clear difference between them. That means that neutral comments were exclusively neutral, the hate speech comment condition had a high share of hateful comments and in the counter speech condition, all counter speech comments argued in direct response to the topic of the hate speech comment following a similar structure of argumentation. Even though this procedure increased internal validity of our experiment, this does not reflect comment sections in real life. There, comments greatly vary with regard to the share of hateful and civil comments and replies. Thus, our experimental conditions certainly come with restrictions for external validity which have to be considered.

Second, another limitation is the measurement of pre-existing attitudes. In line with other studies, we measured this variable with a single item that asked very broadly about the perception of social groups (negative/positive). It may be a result of social desirability that participants tended to report either a neutral or a positive opinion about the social groups that were part of this question. Further, we asked for pre-existing attitudes before the stimulus presentation. Even though we tried to distract participants by adding two questions on attitudes about two other groups to the question and by placing two longer item batteries between the question on pre-existing attitudes and the stimulus presentation, we cannot rule out priming effects. As a result, this might have biased our findings to some degree. Future studies investigating effects of hate speech and/or counter speech can learn from this by employing measures consisting of several items asking for (subtle) stereotyped perceptions of social groups to be able to test reinforcing effects.

In sum, it can be concluded that polarizing effects of hate speech depend on the specific group that is attacked. Based on our findings, it can be assumed that harmful effects for social group perceptions are less likely to occur for small minorities which are less visible in a society, but more research is required to confirm this claim. For counter speech, it can be concluded that it might not be as promising as assumed. Since a public conflict between hateful commenters and counter speakers seems to widen gaps in society and therefore reinforces polarizing dynamics in a society, deleting this kind of content might be a better solution for obvious cases of discriminatory comments. However, since this process also poses risks for freedom of speech, clear legal standards have to guide this process. Also, even though we found that counter speech may not be a way to reduce the harm done to non-members of the attacked group, it might still mitigate the negative effects of hate speech on members of the attacked group themselves. Therefore, it is important that social groups are backed up in online discussions: to show members of the attacked group that others take their side. This is especially the case for comments containing mild forms of hate speech which do not meet the criteria for deletion but still should not remain unanswered.

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This study was funded by the Research Award of the Department of Communication, University of Vienna.