Abstract

Most adolescents and young adults frequently encounter hate speech online. Although online bystander intervention is essential to combating such hate, young bystanders may need support with initiating interventions online. Thus, to illuminate the factors of young bystanders’ intervention, we conducted a nationwide, quota-based, quantitative online survey of 1180 young adults in Germany. Among the results, perceived personal responsibility for combating online hate speech positively predicted online bystanders’ direct and indirect intervention. Moreover, frequent exposure to online hate speech was positively associated with bystander intervention, whereas a perceived threat or low self-efficacy reduced the likelihood of intervention. In addition, a greater acceptance of negative consequences and being educated about online hate speech through peers or campaigns positively predicted some direct and indirect forms of online bystander intervention.

Most of today’s Internet users have already witnessed online hate speech. Unlike statements attacking individuals personally as in cyberbullying, online hate speech disparages others based on their “ethnicity, gender, sexual orientation, national origin, or some other characteristic that defines a group” (Hawdon et al., 2017: 254). In Europe and the United States, adolescents and young adults observe this emerging form of uncivil communication more frequently than any other group (Reichelmann et al., 2021). Recent representative surveys in Germany, for example, show that 40% of the population has witnessed online hate speech, whereas 73% of young adults aged 18–24 years have encountered such abuse (Geschke et al., 2019). A majority of young bystanders do not respond to these incidents; for many young people, this may be due to a lack of appropriate competencies, such as not knowing when hateful speech is illegal or what actions they can take against it (UK Safer Internet Centre, 2016).

This is problematic, because youth may mistakenly believe that standalone online hate speech is appropriate and socially acceptable (Wachs et al., 2019; Wright and Li, 2013), as their evolving moral judgment is largely influenced by their surroundings on social media (Hart and Carlo, 2005; Moreno and Uhls, 2019). Notably, while hate speech can distress targets in ways similar to traumatic events (Leets, 2002), young witnesses can also suffer long-lasting effects, including deteriorated well-being (Keipi et al., 2017). The factors and experiences of youth who do (not) intervene, about which little is known so far (Wachs et al., 2019), therefore need to be identified, not least to inform intervention programs that empower young bystanders to deal with online hate speech as a prerequisite for digital citizenship (Jones and Mitchell, 2016).

Therefore, the aim of this study is to explain young bystander intervention against online hate speech, which we view as a form of digital civil courage due to its potential negative social consequences (Greitemeyer et al., 2006). For one, while the relevance of psychological components suggested in Latané and Darley’s (1970) bystander intervention model (here referred to as BIM) has often been addressed for offline interventions (Dovidio et al., 2006), evidence about the relevance of these components for intervention against online hate speech is still limited (Kunst et al., 2021; Leonhard et al., 2018; Ziegele et al., 2020). This is especially true for young bystanders, as the associations found for adults might not fully translate to youth, whose moral decision-making abilities are still developing (Hart and Carlo, 2005). Moreover, because online hate speech publicly disparages social groups (Wachs et al., 2021), young bystanders are faced with incidents of greater social scope than cyberbullying, which can particularly harm society as a whole (Kteily and Bruneau, 2017). It remains open whether youth feel more need to defend social groups than they would with cyberbullying victims they may know personally (Bastiaensens et al., 2016); despite some common features, this suggests that the manner in which young bystanders deal with these incidents could fundamentally differ from cyberbullying. Thus, it is worth investigating the situational BIM components as factors of young bystander intervention against online hate speech. Given that bystander behavior is also known to depend on more stable factors, we will add social, media-related, and political factors, represented by social skills, digital media literacy, and political literacy, to the canon of situational factors that may favor young bystander intervention (Jenkins and Nickerson, 2019).

For another, we will expand upon the literature by comparing various direct (e.g. counter-speech) and indirect (e.g. reporting) interventions employed by young bystanders against online hate speech (Jost et al., 2020). We will also distinguish constructive and destructive responses, since the latter can promote hostile discourse (Chen and Lu, 2017).

To understand how and why young bystanders intervene against hate speech online in light of socio-political and psychological factors, we conducted an online survey among young people aged 16–25 years (N = 1180) who were socio-demographically representative of this age group in the German population. Based on that, this study contributes to the literature by explaining young people’s acts of intervention against online hate speech and by expanding the application of Latané and Darley’s (1970) model.

Bystander intervention against online hate speech as digital civil courage

As a form of uncivil communication in digital media (Schwertberger and Rieger, 2021), online hate speech refers to oral or written statements of “hatred or degrading attitudes toward a collective” (Hawdon et al., 2017: 254). For comparison, whereas cyberbullying is an incivility primarily targeting individuals that occurs repeatedly over long periods, online hate speech usually occurs publicly in one-off incidents (Wachs et al., 2021).

We view online bystander intervention as a form of prosocial behavior aimed at benefiting others (Bierhoff, 2002). Aside from involving forms of help intended as favors, bystander intervention in online hate speech can be viewed as a form of digital civil courage. Emerging in a perpetrator–victim–bystander triad, offline civil courage is characterized by the expectation among interveners of sustaining negative social consequences (e.g. being insulted or attacked) and often involves a public commitment to the democratic values of civil society (Greitemeyer et al., 2006; Nunner-Winkler, 2002). Such acts can include direct intervention (e.g. repelling an attacker) or indirect intervention (e.g. calling the police; Labuhn et al., 2004), and depending on the type, the degree of negative social sanctions expected may vary.

Even so, we consider direct and indirect forms of bystander intervention against online hate speech as forms of digital civil courage (Atzmüller et al., 2019). Because the online context encompasses private and public settings, both direct and indirect forms of intervention can have private and public reach, differing in the degree of visibility to other (uninvolved) users (Obermaier et al., 2015). For instance, witnesses of online hate speech can intervene directly by supporting targets in a private message (i.e. direct private intervention) or by uttering counter-speech (i.e. direct public intervention). Such counterarguing, which may seek to convince other bystanders and/or offenders and/or support victims, can be regarded as a “crowd-sourced response” (Bartlett and Krasodomski-Jones, 2015: 5). Counterarguing has been identified as essential to combating hate speech (Benesch et al., 2017), largely because it is visible to other social media users and demonstrates that such speech is unacceptable (Kümpel and Rieger, 2019). Of the various approaches, constructive counter-speech (e.g. citing facts) can foster a deliberative discussion atmosphere (Ziegele and Jost, 2020), whereas destructive counter-speech (e.g. hating back) can promote hostile discourse (Chen and Lu, 2017). Meanwhile, indirect public forms of online bystander intervention include flagging online hate speech (Naab et al., 2018) and using popularity cues to approve past counter-speech (Jost et al., 2020). An indirect private form of online intervention, by contrast, is reporting online hate speech on platforms, which can be done without other users’ noticing (Citron and Norton, 2011).

Along these lines, as with offline civil courage, direct (and public) interventions are more likely to result in negative social consequences than indirect (and private) interventions because they are more open to attack and more visible to other users. Moreover, direct forms likely require more (e.g. cognitive and temporal) effort from bystanders (e.g. counterarguing in user comments) than indirect forms (e.g. Liking past counter-speech) (Jost et al., 2020; Porten-Cheé et al., 2020). Regarding their prevalence, there are only indications that, for example, in Germany, indirect reporting is more widespread among youth (46%) than direct counter-speech (21%) (Steppat, 2021).

Mechanisms of bystander intervention against online hate speech

Latané and Darley’s (1970) five-step BIM suggests the psychological components bystanders pass through in critical situations to intervene: They have to (a) notice the incident, (b) perceive the situation to be threatening, (c) feel personally responsible for intervening, (d) determine how they can best help, and (e) implement the decision to intervene. 1 If any of those components does not favor engagement, then intervention is less likely to occur. In the literature, indications for that decision-making process have already emerged for bystander intervention in online incivilities, including cyberbullying (Obermaier et al., 2016), and also online hate speech (Leonhard et al., 2018; Ziegele et al., 2020). Young bystander intervention, however, has primarily been studied in cyberbullying (Domínguez-Hernández et al., 2018).

Moral decision-making abilities are still developing in youth (Hart and Carlo, 2005), and online hate speech differs from cyberbullying with regard to features possibly related to intervention, such as greater social scope (Wachs et al., 2021). For this reason, we will investigate whether the BIM components, which represent perceptions of critical situations, are associated with forms of intervention in a similar manner for young bystanders of online hate speech. Since various personal characteristics can be important factors to explain intervention (Domínguez-Hernández et al., 2018; Latané and Darley, 1970), we will integrate social skills and political and digital media literacy, which may determine how young bystanders take action against online hate speech. This allows us to distinguish between the relative importance of the situational components of the BIM and these more stable social, political, and media-related factors for young people’s intervention against online hate speech (Ziegele et al., 2020).

Psychological components of young bystander intervention against online hate speech

Evidence suggests that the psychological components of the BIM are directly associated with online intervention. First, Jost et al. (2020) found that frequently using social media for political information, used as a proxy for noticing critical incidents, was positively associated with direct counter-speech. Similarly, the less familiar young bystanders of cyberbullying are with critical situations, the likelier they are to refrain from intervening (Van Cleemput et al., 2014). Frequent exposure to online hate speech, however, can engender desensitization among youth, as it does for cyberbullying, such that witnesses show fewer prosocial responses (Pabian et al., 2016). In fact, there are indications that young people in particular who frequently witness online hate speech tend to overlook such statements or pay little attention to them (Schmid et al., 2022). Frequent exposure to online hate speech can therefore negatively predict young bystander intervention.

Second, as experiments and surveys have shown, the perceived severity of uncivil statements seldom affects adult bystanders’ willingness to intervene directly (Leonhard et al., 2018; Ziegele et al., 2020). Studies on cyberbullying suggest, however, that adolescents are the most likely to intervene in serious incidents, perhaps given their increased certainty that the utterances are socially unacceptable and present a threat for targets (Macháčková et al., 2013). This could be especially evident for young people’s intervention against online hate speech, because these incidents often implicitly derogate groups and may be difficult for youth to evaluate as emergencies (Wachs et al., 2021). Thus, amid limited data representing youth, we presume in line with the BIM and cyberbullying research that a high perceived threat of online hate speech for targets can positively predict young bystander intervention.

Third, perceived responsibility for intervening has proven integral in promoting intervention by online bystanders in one way or another (Jost et al., 2020; Leonhard et al., 2018). Likewise, youth who did not hold themselves responsible for intervening in cyberbullying remained silent (DeSmet et al., 2016). Although the association may be somewhat weak for young bystanders, whose awareness of their responsibilities in civic society is only emerging (Hart and Carlo, 2005), we assumed that the described dynamic does apply to their intervention against online hate speech. Thus, we hypothesized that:

H1: (a) The frequency of exposure to online hate speech is negatively, (b) the perception of threat, and (c) the perception of personal responsibility for intervening are positively associated with general online intervention among young bystanders.

Findings on how these components explain different kinds of intervention in online hate speech are scarce, especially among youth. Because direct and indirect (public and private) digital civil courage can differ in the possibility of negative reactions by other users and the effort for young bystanders, the BIM components may relate to the various forms in different ways. Correspondingly, given that higher exposure can foster desensitization among young people (Schmid et al., 2022), youth who are frequently exposed to online hate speech may prefer to react indirectly, if at all. The attacks may then seem so commonplace that they want to react in ways that entail the lowest possible risk of negative reactions and the least effort (Pabian et al., 2016).

Notably, as in the offline realm, more severe hate speech (e.g. threats of violence) may predict direct constructive or destructive intervention in public, despite the more likely negative social sanctions and the effort, as young bystanders see more urgency to limit the threat of harm to targets instantly (Fischer et al., 2006). Yet, the most serious cases, such as those that severely threaten targets and/or are illegal, may result in indirect, private reporting, because then deletion by platform operators and subsequent legal action can seem necessary (Wilhelm et al., 2020). As a central component of various forms of online bystander intervention, a young person’s perceived responsibility could positively predict direct and indirect intervention in private or public alike, as shown by previous research (Jost et al., 2020). Thus, we inquired:

RQ1a: How are the components proposed in H1 associated with direct and indirect forms of online intervention among young bystanders?

In considering the type of intervention—that is, the fourth step of the BIM—the effectiveness and consequences of certain forms of (not) intervening may be weighed among other things (Dovidio et al., 2006; Latané and Darley, 1970). 2 On that topic, literature has included various indicators. Evidence suggests that seeing a chance of making a difference in effect via joint intervention predicts counter-speech (Ziegele et al., 2020). Moreover, following evidence concerning offline civil courage, self-blame as an anticipated cost of remaining silent should also positively predict online bystander intervention, whereas evaluation apprehension is likely negatively associated (Greitemeyer et al., 2006). DeSmet et al. (2016) asserted that the fear of negative sanctions also restrains adolescent cyberbullying interventions; because online hate speech may contain threats of violence (Döring and Mohseni, 2020), this connection could emerge among youth. Thus, we hypothesized that:

H2: (a) The perceived self-efficacy of online intervention and (b) the anticipated self-blame are positively associated with general online bystander intervention among youth, whereas (c) evaluation apprehension is negatively associated with it.

By extension, we suspect that the perceived self-efficacy of an online hate speech intervention is related to constructive counter-speech, because young people may deem it worthwhile to put themselves at risk by engaging in heated discussion (Ziegele et al., 2020) and investing time and mental effort (Porten-Cheé et al., 2020). Anticipated self-blame for not reacting, however, may be more likely to involve indirect intervention, because it may be primarily a matter of keeping the costs of non-intervention low; in cases of high evaluation apprehension, bystanders seem most inclined to indirectly intervene to protect themselves from negative reactions of other users (Dovidio et al., 2006). Hence, we asked:

RQ1b: How are the components proposed in H2 associated with direct and indirect forms of online intervention among young bystanders?

Social, media-related, and political factors of young bystander intervention against online hate speech

Besides the situational components related to online hate speech incidents, particular social, media-related, and political factors representing skills and competencies associated with acceptable coexistence may explain intervention (cf. Gresham et al., 2011). This dynamic may be especially relevant for young bystanders, whose social skills as well as media and political literacy are still developing, which may make them less confident in their intervention (Schmitt et al., 2018; UK Safer Internet Centre, 2016).

Regarding social skills, empathy and a tendency of assertive non-cooperation have been pinpointed as favoring bullying intervention; young people with these skills are then more likely to want to help targets and to take social risks to do so (Jenkins and Nickerson, 2019). Similarly, a general tendency to show empathy and a general acceptance of negative social consequences have been shown to be important factors of offline civil courage (Greitemeyer et al., 2006; Kastenmüller et al., 2007), and adolescent bystanders who generally empathize with targets are likely to intervene against cyberbullying (Barlińska et al., 2018). Thus, we hypothesized that:

H3: (a) Empathy with targets and (b) a general acceptance of negative social sanctions are positively associated with general online bystander intervention among youth.

It is conceivable that a higher level of empathy may predict direct and indirect interventions that comfort the targets, such as public constructive counter-speech and private messages (Van Cleemput et al., 2014; Wang, 2021). Moreover, a greater overall tendency to take social risks by protecting others may result in direct, public interventions in online hate speech, both constructive and destructive, because these individuals generally take less account of possible negative social sanctions in their actions (Greitemeyer et al., 2006; Jenkins and Nickerson, 2019). We thus inquired:

RQ2: How are the social skills proposed in H3 associated with direct and indirect forms of online intervention among young bystanders?

Studies on offline bystander intervention have revealed that the more knowledge people presume to have about ways of intervening (e.g. medical training), the more likely they are to help (Pantin and Carver, 1982). Likewise, similar self-efficacy in social situations promotes adolescents’ cyberbullying intervention (Barlińska et al., 2018), as supposed for media and political literacy with respect to counterarguing against online hate speech (Kümpel and Rieger, 2019).

Media literacy, referring to the ability to access, understand, evaluate, and produce communication (Livingstone, 2004), is developed via lifelong learning (Potter, 2010). As a subtype of such literacy, critical digital media literacy can be conceptualized as consisting of three steps that can shape online intervention: Awareness comprises the skills to recognize and classify online content, reflection encompasses its critical evaluation, and empowerment means knowing how to actively engage with different forms of content (Schmitt et al., 2018). Thus, being knowledgeable of various online content may be valuable, insofar as bystanders need to know how to distinguish online hate speech from civil content. Added to that, individuals should be able to evaluate online content to rate the degree of incivility and to adequately intervene. Therefore, critical digital media literacy may positively predict young online intervention.

As part of that, young bystanders have to have sufficient knowledge about key characteristics of online hate speech (Schmitt et al., 2018), which could promote intervention (Atzmüller et al., 2019). Youth can acquire such knowledge in various settings, in which family, peers, schools, and civic intervention campaigns play a central role (Geschke et al., 2019; Wachs et al., 2021). Moreover, the ability to make judgments and act in relation to political issues marks an essential political competency (Reinemann et al., 2019). Therefore, although this did not emerge for adults (Ziegele et al., 2020), political self-efficacy could make a considerable difference in whether young bystanders initiate online intervention.

Correspondingly, young people with advanced levels of critical digital media literacy, hate speech-related knowledge, and/or political self-efficacy might prefer to engage in public, constructive counter-speech since they are able to choose the appropriate verbal strategy and to discuss controversial issues with a reasonable amount of effort (Kümpel and Rieger, 2019). Critical digital media literacy could also promote reporting online hate, which requires a familiarity with the functions of various social media platforms. Thus, we asked:

RQ3: How are critical digital media literacy, (sources of) hate speech-related knowledge, and political self-efficacy associated with general and direct and indirect forms of online intervention among young bystanders?

Method

A quota-based quantitative online survey of adolescents and young adults aged 16–25 years in Germany was conducted in November and December 2020. 3 Quota sampling and data collection were performed by the survey company respondi. All respondents consented to participate, were warned of the potentially distressing topic, and were told that they could withdraw from the study at any time for any reason. Afterwards, respondents were given detailed information about the study’s purpose, contact points for targets of online hate speech, and financial compensation. The quota sample represented the age group in the total population in terms of gender (i.e. approximately 50% women), age (i.e. approximately 10% per year of age), and, approximately, level of education (i.e. 60% with or pursuing higher education).

Sample

Of the 1339 participants who completed the survey (51% women, age: M = 21 years, SD = 2.80, 59% with or pursuing higher education), the 159 participants (12%) who completed the questionnaire faster than 1 SD below the median dwell time of all respondents were excluded. After data cleansing, 1180 participants remained in the sample (54% women, age: M = 21 years, SD = 2.84, 61% with or pursuing higher education).
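The exclusion rule for fast completers can be illustrated with a short sketch. It is not the procedure actually used for the study: the function name `exclude_speeders` and the completion times are hypothetical, and the rule is read here as dropping every respondent whose dwell time falls below the median minus one sample standard deviation.

```python
from statistics import median, stdev

def exclude_speeders(dwell_times):
    """Return (kept, cutoff) for a list of completion times.

    Assumption: "faster than 1 SD below the median" is read as
    dwell_time < median - SD, with SD the sample standard deviation.
    """
    cutoff = median(dwell_times) - stdev(dwell_times)
    kept = [t for t in dwell_times if t >= cutoff]
    return kept, cutoff

# Hypothetical completion times in seconds (not study data).
times = [120, 610, 640, 655, 700, 720, 750, 790, 820, 900]
kept, cutoff = exclude_speeders(times)
print(f"cutoff = {cutoff:.1f} s, kept {len(kept)} of {len(times)} respondents")
```

Anchoring the cutoff on the median rather than the mean keeps a few very slow respondents from shifting the threshold upward.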

Because the study examined past online bystander intervention only, participants who had already witnessed online hate speech at least once were asked about bystander intervention in such instances. 4 These participants (n = 1092) closely resembled the entire sample in composition (55% women, age: M = 21 years, SD = 2.83, 62% with or pursuing higher education).

Measures

All measures were rated on 5-point scales, ranging from 1 (do not agree at all) to 5 (fully agree) unless indicated otherwise. Online bystander intervention was defined as reactions to online hate speech “during the last month” to ensure that the participants remembered their experiences as well as possible. Because the time window was therefore rather narrow (Hawdon et al., 2017), participants who reported not witnessing online hate speech in the past month were asked about how they have “generally reacted” to such statements (1 = never, 5 = very frequently; Jost et al., 2020). 5 We asked whether they “Did not react” (reverse-coded, M = 2.22, SD = 1.31), intervened both indirectly—that is, “Flagged the statements in a comment as ‘hate speech’” (M = 2.02, SD = 1.32), “Reported the comments” (M = 2.90, SD = 1.45), and “Liked a comment that contradicted the utterances” (M = 2.90, SD = 1.56)—and directly—that is, “Wrote a comment that factually contradicted the statements,” “Refuted the statements with facts,” “Wrote a comment that supported the affected group” (i.e. constructive counter-speech; α = .88, M = 2.04, SD = 1.16), “Wrote a comment that offended the author,” “Wrote a comment that insulted the author” (i.e. destructive counter-speech, r = .78***, M = 1.54, SD = 0.97), “Confronted the author in a private message” (M = 1.58, SD = 1.05), and “Supported the affected in a private message” (M = 1.84, SD = 1.24).

The statistics for the following measures apply to the full sample. Empathy for targets was measured with “I can easily understand how people feel when they are treated unfairly” and “I can well relate to the situation of people who are exposed to violence” (r = .54***, M = 3.64, SD = 0.98; Greitemeyer et al., 2006). General acceptance of negative social sanctions was measured with “I do not allow injustice, even if I have to accept disadvantages as a result,” “Even if I have disadvantages, I take action in the case of violence against weaker people,” and “I intervene in cases of injustice, even if this has negative consequences for me” (α = .82, M = 3.25, SD = 0.95; Kastenmüller et al., 2007; Greitemeyer et al., 2006).

Critical digital media literacy was measured with “I always find the information I am looking for online,” “I find it easy to assess the accuracy of content on the Internet,” “I am well informed about how to protect my privacy on social media,” and “I think about what I post on the Internet and what impact it could have on others” (α = .68, M = 3.72, SD = 0.72; Nienierza et al., 2021).

Hate speech-related knowledge was assessed by participants’ agreement that online hate speech “Expresses a feeling of hatred,” “Intends to hurt other people,” “Contains insults,” and “Insults other people because they belong to a certain group” (Hawdon et al., 2017). After dummy-coding the items based on a median split (median = 5), we formed a 5-point sum index (0–4 characteristics, M = 2.10, SD = 1.47; Schmitt et al., 2018). Moreover, we asked whether this knowledge was developed “Among family and friends” (M = 2.15, SD = 1.12), “At school, training, university or work” (M = 2.56, SD = 1.25), and “In political campaigns or groups on social media” (M = 2.63, SD = 1.28; Reinemann et al., 2019).
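The construction of the knowledge index can be sketched as follows. This is a minimal illustration with invented ratings: the item labels in `ITEMS` and the function name `knowledge_index` are hypothetical, and the direction of the split (ratings at or above the item median count as 1) is an assumption, since the text only states that a median split with a median of 5 was used.

```python
from statistics import median

# Hypothetical short labels for the four hate speech characteristics.
ITEMS = ["hatred", "intent_to_hurt", "insults", "group_based"]

def knowledge_index(responses):
    """Dummy-code each item by a median split and sum the dummies (0-4).

    Assumption: a rating at or above the item's sample median is coded 1.
    """
    medians = {item: median(r[item] for r in responses) for item in ITEMS}
    return [
        sum(int(r[item] >= medians[item]) for item in ITEMS)
        for r in responses
    ]

# Invented 5-point agreement ratings for five respondents (not study data).
responses = [
    {"hatred": 5, "intent_to_hurt": 5, "insults": 5, "group_based": 5},
    {"hatred": 5, "intent_to_hurt": 4, "insults": 5, "group_based": 3},
    {"hatred": 4, "intent_to_hurt": 5, "insults": 5, "group_based": 5},
    {"hatred": 5, "intent_to_hurt": 5, "insults": 2, "group_based": 5},
    {"hatred": 3, "intent_to_hurt": 2, "insults": 4, "group_based": 1},
]
index = knowledge_index(responses)
print(index)
```

With an item median of 5 on a 5-point scale, this coding credits a characteristic only when a respondent fully agrees with it.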

Political self-efficacy was measured with “I can understand and assess important political issues well,” “I have the confidence to actively participate in a discussion about political issues,” and “I know politics well enough to get involved in politics” (α = .84, M = 3.07, SD = 1.03; Beierlein et al., 2012).
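For readers unfamiliar with the reliability coefficient reported for the multi-item scales, Cronbach’s α can be computed as k / (k − 1) × (1 − Σ item variances / variance of the sum score), where k is the number of items. The sketch below applies this standard formula to invented ratings; the function name `cronbach_alpha` and the data are illustrative and do not reproduce the values reported here.

```python
from statistics import variance

def cronbach_alpha(rows):
    """Cronbach's alpha for complete cases.

    rows: one list of item ratings per respondent, all of equal length.
    """
    k = len(rows[0])
    # Sample variance of each item across respondents.
    item_vars = [variance([row[i] for row in rows]) for i in range(k)]
    # Sample variance of the respondents' sum scores.
    total_var = variance([sum(row) for row in rows])
    return k / (k - 1) * (1 - sum(item_vars) / total_var)

# Invented ratings on three 5-point items for six respondents (not study data).
ratings = [
    [4, 4, 5],
    [2, 3, 2],
    [3, 3, 4],
    [5, 4, 5],
    [1, 2, 2],
    [3, 4, 3],
]
alpha = cronbach_alpha(ratings)
print(f"alpha = {alpha:.2f}")
```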

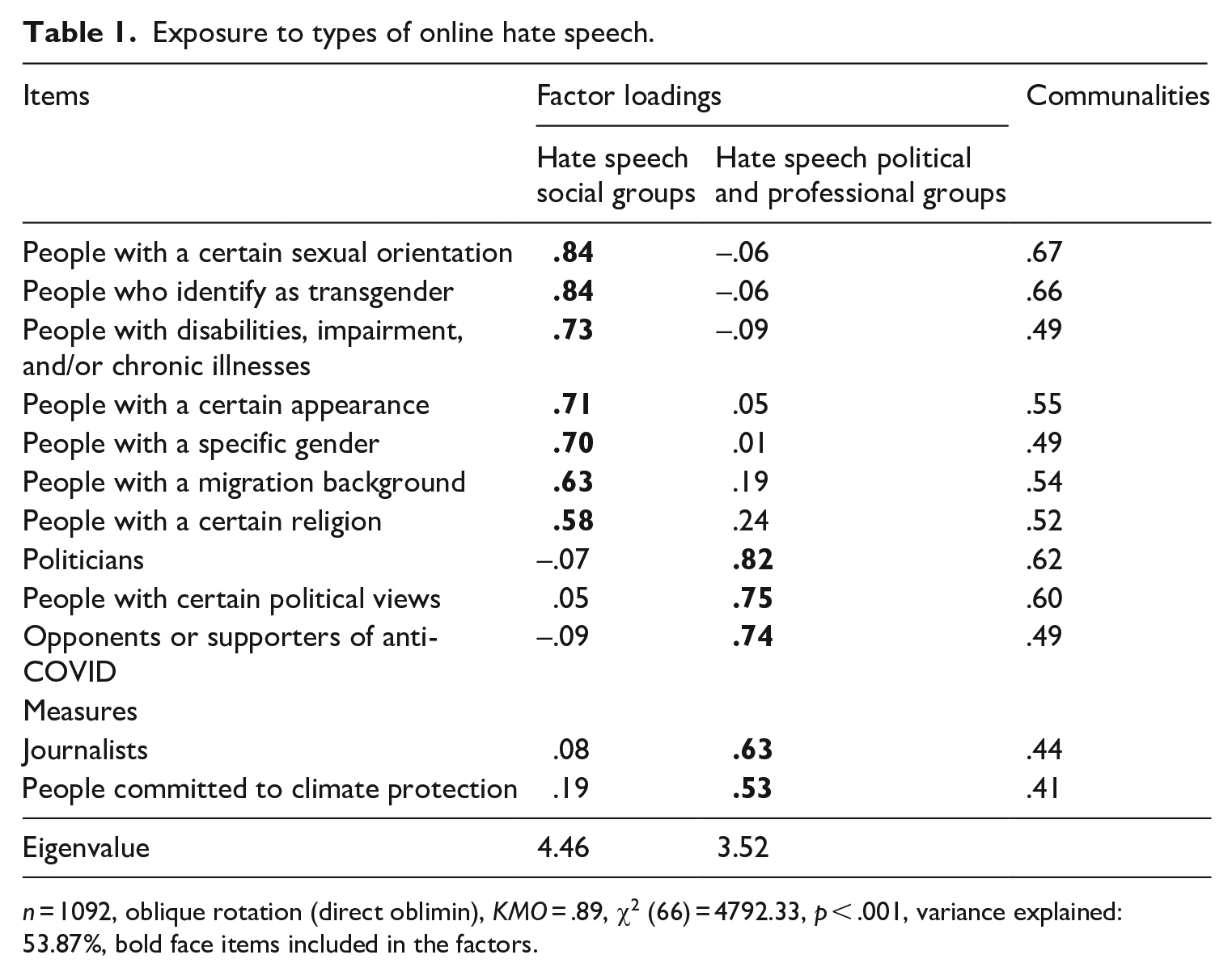

We captured the frequency of exposure (1 = never, 5 = very frequently) to online hate speech against social (α = .86, M = 3.25, SD = 0.90) and political/professional groups (α = .76, M = 3.19, SD = 0.82; Geschke et al., 2019), 6 as well as the exposure to online hate speech “in general” (M = 3.12, SD = 1.14) and “during the last month” (M = 2.93, SD = 1.27).

Referring to their past experiences with online hate speech, participants assessed its perceived threat in terms of “Discriminatory,” “Derogatory,” “Insulting,” and “Threatening” (α = .85, M = 4.19, SD = 0.79; Leonhard et al., 2018; Obermaier et al., 2021), whereas perceived personal responsibility was measured with “I feel it is my personal responsibility to intervene,” “I feel it is my personal responsibility to take action against the statements,” and “I feel personally obliged to contradict the statements” (α = .89, M = 2.47, SD = 1.05; Obermaier et al., 2016). Perceived self-efficacy in intervention was assessed with “I cannot help those affected” (reverse-coded, M = 2.90, SD = 1.28), self-blame apprehension with “I feel complicit if I do nothing” and “If I don’t intervene, I feel guilty” (r = .68***, M = 2.53, SD = 1.11; Kastenmüller et al., 2007; Ziegele et al., 2020), and evaluation apprehension with “I am insulted myself,” “I am physically attacked,” and “I have to justify my opinion” (α = .67, M = 2.79, SD = 1.07; Neubaum and Krämer, 2018).

Socio-demographics and the use of Instagram (1 = never, 5 = daily, M = 4.34, SD = 1.29), WhatsApp (M = 4.88, SD = 0.51), and YouTube (M = 4.37, SD = 0.88) were controlled for.

Results

Forms of intervention against online hate speech among young bystanders

We first examined exposure to online hate speech as a prerequisite for intervention. In line with Geschke et al.’s (2019) findings, 84% of our participants had encountered online hate speech during the previous month and 93% in general at least rarely. Among other results, 42% of the participants who reported encountering hate speech stated that they (very) frequently witnessed such utterances against social groups (3% never), disparaging migrant background, sexual orientation, or appearance (very) often (52–57%, never: 7–8%). By comparison, 34% (very) frequently witnessed online hate speech against political/professional groups (3% never), while 51–58% (very) often encountered online hate speech against politicians, political groups, and opponents or supporters of anti-COVID measures (never: 5–7%).

Overall, 18% of those participants (very) frequently chose to react to those incidents (42% never). Approximately 40% (very) often engaged in indirect forms of intervention (42% supporting, never: 32%; 38% reporting, never: 27%; 17% flagging, never: 56%). Direct forms of bystander intervention were (very) often performed by fewer than 15% (13% constructive counter-speech, never: 47%; 13% contacting targets, never: 62%), with destructive forms being even less common (8% destructive counter-speech, never: 74%; 8% contacting perpetrator, never: 72%).

Factors of forms of intervention against online hate speech among young bystanders

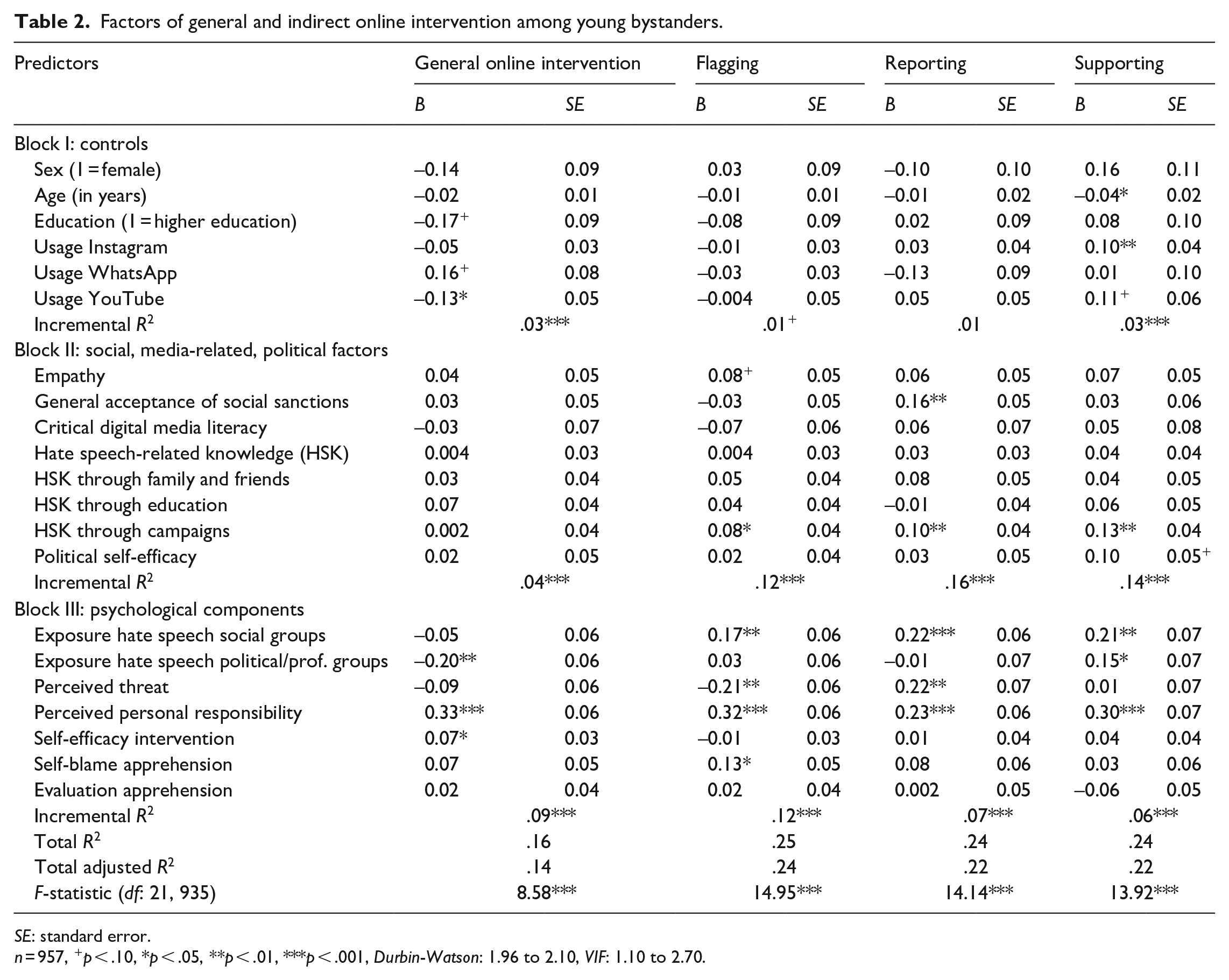

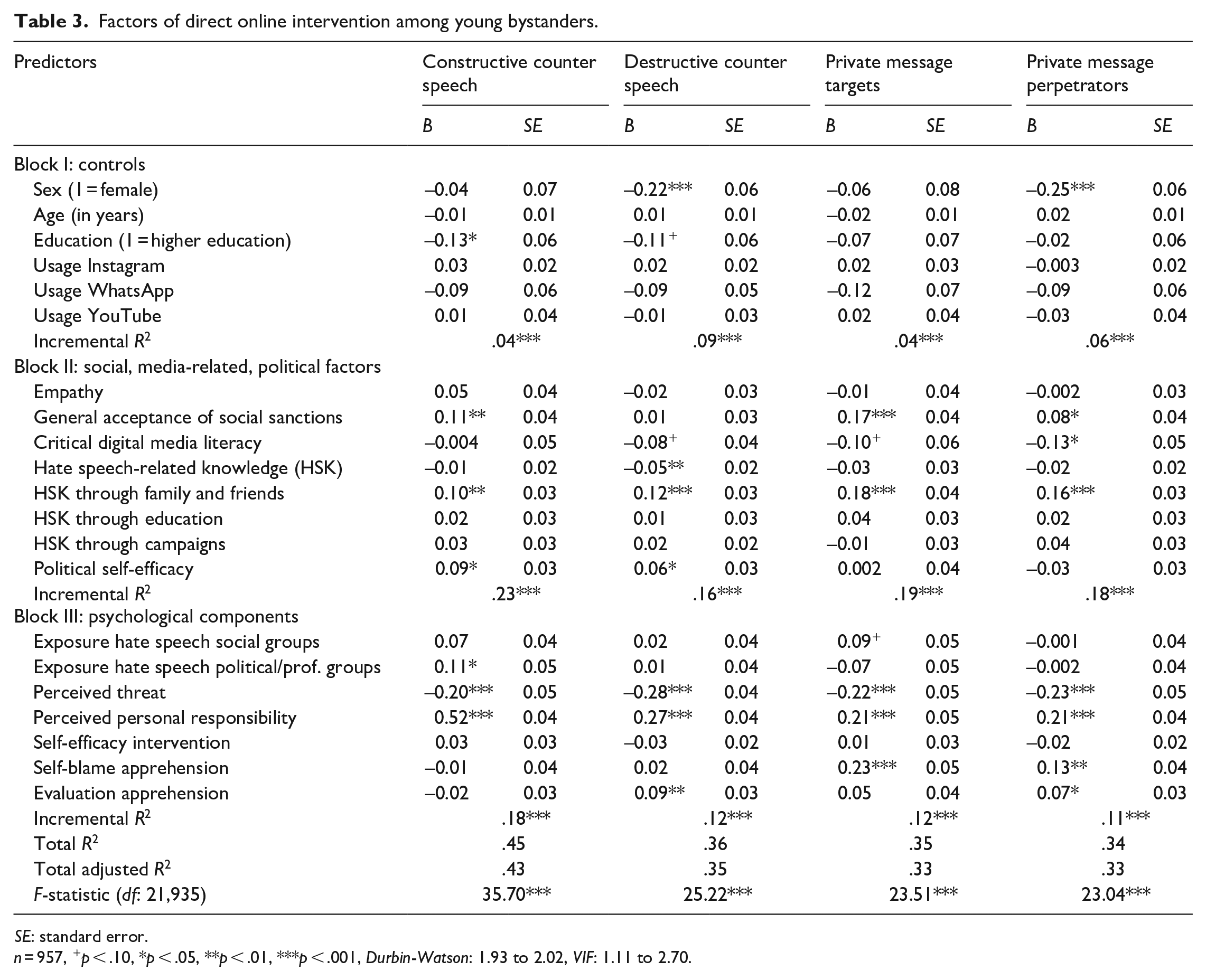

To test the hypotheses and answer the research questions, we conducted linear ordinary least squares (OLS) regressions. Social, media-related, and political factors and BIM components were used as predictors and (different forms of) online intervention as dependent variable(s) (Tables 2 and 3).
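To make the estimation step concrete, the following sketch fits an OLS model and returns unstandardized coefficients (B) with their standard errors (SE), the quantities reported in the results. It is a plain-Python illustration under stated assumptions: the helper names `ols` and `invert`, the two predictors, and all ratings are invented, and nothing here indicates which software was used for the analyses.

```python
import math

def invert(m):
    """Invert a small square matrix by Gauss-Jordan elimination with partial pivoting."""
    n = len(m)
    a = [list(map(float, row)) + [float(i == j) for j in range(n)]
         for i, row in enumerate(m)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(a[r][col]))
        if abs(a[pivot][col]) < 1e-12:
            raise ValueError("matrix is singular (collinear predictors?)")
        a[col], a[pivot] = a[pivot], a[col]
        pv = a[col][col]
        a[col] = [v / pv for v in a[col]]
        for r in range(n):
            if r != col:
                f = a[r][col]
                a[r] = [vr - f * vc for vr, vc in zip(a[r], a[col])]
    return [row[n:] for row in a]

def ols(X, y):
    """Fit y = b0 + b1*x1 + ... by ordinary least squares.

    X: predictor rows without an intercept column; y: outcomes.
    Returns (coefficients, standard_errors), intercept first.
    """
    rows = [[1.0] + [float(v) for v in r] for r in X]  # prepend intercept
    n, p = len(rows), len(rows[0])
    # Normal equations: (X'X) b = X'y
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(p)] for i in range(p)]
    xty = [sum(r[i] * yi for r, yi in zip(rows, y)) for i in range(p)]
    inv = invert(xtx)
    b = [sum(inv[i][j] * xty[j] for j in range(p)) for i in range(p)]
    resid = [yi - sum(bj * rj for bj, rj in zip(b, r)) for r, yi in zip(rows, y)]
    sigma2 = sum(e * e for e in resid) / (n - p)  # residual variance
    se = [math.sqrt(sigma2 * inv[i][i]) for i in range(p)]
    return b, se

# Invented 5-point ratings: [perceived responsibility, exposure] per respondent.
X = [[2, 3], [3, 4], [4, 2], [5, 5], [1, 3], [4, 4], [2, 1], [3, 5]]
y = [2, 3, 3, 4, 1, 3, 2, 2]  # invented frequency of reacting
coefs, errors = ols(X, y)
for name, b_j, se_j in zip(["intercept", "responsibility", "exposure"], coefs, errors):
    print(f"{name}: B = {b_j:.2f}, SE = {se_j:.2f}")
```

In practice an established statistics package would be preferable, since it also provides diagnostics such as the Durbin-Watson statistic and variance inflation factors.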

Exposure to types of online hate speech.

n = 1092, oblique rotation (direct oblimin), KMO = .89, χ2 (66) = 4792.33, p < .001, variance explained: 53.87%, bold face items included in the factors.

Factors of general and indirect online intervention among young bystanders.

SE: standard error.

n = 957, +p < .10, *p < .05, **p < .01, ***p < .001, Durbin-Watson: 1.96 to 2.10, VIF: 1.10 to 2.70.

Table 3. Factors of direct online intervention among young bystanders.

Note. SE: standard error. n = 957, +p < .10, *p < .05, **p < .01, ***p < .001, Durbin-Watson: 1.93 to 2.02, VIF: 1.11 to 2.70.
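The table notes report two regression diagnostics. The Durbin-Watson statistic summarizes first-order autocorrelation in the residuals, with values near 2 indicating little autocorrelation, and the variance inflation factor (VIF) for a predictor equals 1 / (1 − R²), where R² comes from regressing that predictor on all other predictors. The sketch below shows both definitions; the function names and the residuals are hypothetical and do not reproduce the study's computations.

```python
def durbin_watson(residuals):
    """Sum of squared successive residual differences over the residual sum of squares."""
    numerator = sum(
        (residuals[t] - residuals[t - 1]) ** 2 for t in range(1, len(residuals))
    )
    denominator = sum(e ** 2 for e in residuals)
    return numerator / denominator

def vif(r_squared):
    """VIF of a predictor, given the R^2 from regressing it on the other predictors."""
    return 1.0 / (1.0 - r_squared)

def r_squared_from_vif(vif_value):
    """Invert the VIF definition to recover the auxiliary R^2."""
    return 1.0 - 1.0 / vif_value

# Hypothetical residuals, invented for illustration only.
example_residuals = [0.4, -0.3, 0.1, -0.2, 0.5, -0.5]
print(f"Durbin-Watson = {durbin_watson(example_residuals):.2f}")

# The largest VIF in the table notes (2.70) implies this auxiliary R^2.
print(f"R^2 implied by VIF 2.70 = {r_squared_from_vif(2.70):.2f}")
```

Read this way, the highest reported VIF of 2.70 corresponds to a predictor sharing roughly 63% of its variance with the remaining predictors.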

Hypothesis 1 proposed a negative association between frequent exposure to online hate speech and general online intervention (H1a) as well as positive associations of perceived threat (H1b) and personal responsibility (H1c) with general online intervention. Frequent exposure to political online hate speech predicted bystanders’ silence (B = –0.20, SE = 0.06, p = .001), meaning that H1a was supported. Although perceived threat was unrelated to general intervention, felt personal responsibility for intervening positively predicted reactions to online hate speech (B = 0.33, SE = 0.06, p < .001), meaning that H1b was rejected but H1c was supported. Next, H2 presumed positive relations between general intervention and components reflecting its costs and benefits. Along those lines, self-efficacy in online intervention was positively related to reacting (B = 0.07, SE = 0.03, p = .04), which supported H2a. Nevertheless, neither anticipated self-blame nor evaluation apprehension was associated with intervening, justifying the rejection of H2b and H2c.
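To illustrate how a reported B and SE relate to a p value, the sketch below divides the coefficient by its standard error and converts the resulting test statistic into a two-sided p value. It assumes a standard normal reference distribution, which is a close approximation to the t distribution at n = 957 but not necessarily the exact procedure used in the study. Because the published B and SE are rounded, recomputed p values only approximate the reported ones. The function name is hypothetical.

```python
import math

def two_sided_p(b, se):
    """Two-sided p value for the ratio b / se under a standard normal approximation."""
    z = b / se
    # erfc(|z| / sqrt(2)) equals 2 * (1 - Phi(|z|)) for the standard normal CDF Phi.
    return math.erfc(abs(z) / math.sqrt(2))

# Rounded coefficients as reported for general online intervention.
reported = {
    "exposure to political hate speech": (-0.20, 0.06),
    "personal responsibility": (0.33, 0.06),
    "self-efficacy": (0.07, 0.03),
}
for label, (b, se) in reported.items():
    print(f"{label}: z = {b / se:.2f}, p = {two_sided_p(b, se):.4f}")
```

The ratio for exposure to political hate speech is about –3.33, which yields a p value close to the reported .001.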

Of the various forms of online intervention (RQ1), all indirect forms were positively related to exposure to online hate speech against social groups. As for direct online intervention, witnessing online hate speech against political groups promoted constructive counterarguing (B = 0.11, SE = 0.05, p = .02). Although the perception of threat reduced the likelihood of flagging and of all forms of direct intervention, the more threatening the incidents seemed, the more often participants reported them (B = 0.22, SE = 0.07, p = .001). In addition, perceived personal responsibility was positively associated with all forms of online bystander intervention, while anticipated self-blame positively predicted flagging (B = 0.13, SE = 0.05, p = .01) and privately contacting targets (B = 0.23, SE = 0.05, p < .001) and offenders (B = 0.13, SE = 0.04, p = .001). Evaluation apprehension positively predicted destructive counterarguing (B = 0.09, SE = 0.03, p = .001) and privately contacting perpetrators (B = 0.07, SE = 0.03, p = .01).

H3 proposed that being empathic and accepting negative consequences would predict intervening. However, reacting to online hate speech in general was not associated with either factor, hence our rejection of H3a and H3b. Nevertheless, regarding different forms of intervention (RQ2), the tendency to take social risks to protect others positively predicted reporting (B = 0.16, SE = 0.05, p = .002), constructive counter-speech (B = 0.11, SE = 0.04, p = .004), comforting targets (B = 0.17, SE = 0.04, p < .001), and confronting offenders in private (B = 0.08, SE = 0.04, p = .02).

Last, concerning digital media literacy and political self-efficacy (RQ3), the former negatively predicted confronting offenders (B = –0.13, SE = 0.05, p = .01), and hate speech-related knowledge was negatively related to destructive counter-speech (B = –0.05, SE = 0.02, p = .01). Such knowledge gained from family and friends was positively related to all direct forms of intervention, while knowledge gained from campaigns was positively associated with all indirect forms. In addition, political self-efficacy positively predicted destructive (B = 0.06, SE = 0.03, p = .03) and constructive counterarguing (B = 0.09, SE = 0.03, p = .01).

Discussion

Our findings afford essential insights into how psychological components in the BIM, social skills, and political and digital media literacy affect how young bystanders intervene against online hate speech. In line with published findings, our results revealed that reacting in any way was associated with higher felt responsibility for intervening and higher self-efficacy in intervening, that is, a sense of being able to help the targets. Moreover, increased exposure to online hate speech against political groups corresponded with not reacting. Thus, high levels of contact could have led young bystanders to ignore the incidents because they felt discouraged about making a difference and/or because of desensitization and the view that hate speech is not serious (Pabian et al., 2016; Schmid et al., 2022).

All forms of online intervention by young bystanders were most strongly predicted by feeling personally responsible for intervening, similar to findings on adults (Jost et al., 2020). Even so, the young bystanders’ sense of personal responsibility was rather low, with a mean score below the scale’s midpoint (Atzmüller et al., 2019), possibly since they are still learning about their responsibilities in civil society (Hart and Carlo, 2005). Therefore, subsequent studies should focus on which characteristics promote or inhibit a sense of responsibility among youth for intervening against online hate speech. Beyond that, efforts to promote digital civil courage should aim at raising awareness of online hate speech among youth to enhance their felt responsibility for combating it.

The more threatening youth considered online hate speech to be, the less they intervened directly. That dynamic could result from the fact that, for them, the negative social consequences of intervening (e.g. getting attacked) far outweigh its benefits for the targets or the discourse. By contrast, research has shown that more threatening emergencies offline promote intervention by heightening empathetic arousal and, in turn, the acceptance of more harm to oneself when intervening (Fischer et al., 2006). Taken together, the fact that online hate speech does not pose immediate physical danger but does have potentially large audiences could explain the negative association. Along those lines, studies have shown that bystanders have to evaluate incidents as threatening to feel personally responsible and intend to intervene (Leonhard et al., 2018). Follow-up studies should therefore test those indirect relationships for young online bystanders in experimental or longitudinal designs.

Upon confronting more threatening online hate speech, young bystanders indicated a greater willingness to report the incidents (Wilhelm et al., 2020). Because reporting hate speech could thus serve to mitigate the risk for interveners, pathways on online platforms should be created for bystanders to intervene with less risk of harming themselves.

Among other results, fearing a negative evaluation by others positively predicted destructive counter-speech (e.g. hating back) and private confrontation. However, because no causal relationships could be tested in the cross-sectional data, it is conceivable that young bystanders who have had negative experiences with destructive interventions exhibited high levels of evaluation apprehension. Higher levels of anticipated self-blame, by contrast, predicted low-risk engagement; presumably, making a difference can motivate young bystanders to take action (Ziegele et al., 2020). In view of that, longitudinal studies should seek to identify the costs and rewards of (not) reacting that youth consider in situ and the long-term consequences of intervening.

Social skills as well as media and political literacy also explained forms of online bystander intervention, direct actions in particular, although to a lesser extent than the BIM components. Since generally accepting social sanctions was primarily accompanied by direct forms of intervention, digital civil courage against online hate speech seems to depend somewhat on an affinity for assertive behavior (Jenkins and Nickerson, 2019). Knowledge of the key characteristics of hate speech was related to low levels of destructive interventions, whereas critical digital media literacy was only marginally so. Participants who indicated gaining hate speech-related knowledge from family and peers showed relatively high levels of direct intervention in constructive but also destructive ways, whereas learning about online hate speech from campaigns predicted indirect intervention. Political self-efficacy was positively related to constructive but also destructive counter-speech, possibly due to feeling capable of partaking in controversial discussions. Thus, follow-up studies should examine which dimensions of digital media literacy and political competencies, also in reference to online hate speech, prime forms of online intervention among young bystanders (Reinemann et al., 2019). Also, subsequent studies should investigate exactly what content about online hate speech youth receive from agents of socialization and its effects on intervention.

Altogether, though young bystanders showed higher levels of indirect than direct intervention, perceived responsibility and, to a lesser extent, perceived self-efficacy in intervening enhanced all forms of digital civil courage (Jost et al., 2020). Although direct public intervention may be most effective in convincing others in some cases (Schieb and Preuss, 2016), indirect public or private intervention may sometimes be superior, for instance, to prevent directing further attention to perpetrators, to signal social support by Liking counter-speech, or to foster (automatic) content moderation and/or legal action by reporting. Thus, the finding that young bystanders hesitated to intervene in threatening incidents suggests that platforms should provide access to ways to address those incidents indirectly. As the willingness to take on social risks for others and political self-efficacy can also favor destructive counter-speech, it is also important to foster hate speech-specific knowledge among youth and reflection on the consequences of different intervention strategies. As for research, follow-up studies should investigate the long-term direct and indirect effects of the BIM’s components as well as of social, media-related, and political factors on online bystander intervention.

Limitations and future research directions

These results have some limitations. First, because no causal relationships could be detected due to the data’s cross-sectional nature, the associations should be further investigated using panel or experimental studies able to account for different forms of online bystander intervention. This way, potential interrelations between situational BIM components and more stable social, political, and media-related factors of young bystanders can also be elicited. Despite that limitation, our study marked an important first step toward illuminating the factors of intervening among younger bystanders.

Second, we relied solely on self-reports of how the young bystanders had dealt with online hate speech. Although the findings may have been biased by socially desirable response behavior, they align with published findings about online bystander intervention and with representative data for Germany regarding exposure to online hate speech. In any case, the study was one of the first to differentiate several indirect and direct forms of online bystander intervention (Jost et al., 2020) and to explain them among young bystanders (Atzmüller et al., 2019). Follow-up studies should therefore examine which sorts of young bystanders respond to which forms of online hate speech by, for instance, using data mining combined with content analyses and/or experiments involving multiple exposures.

Third, because we initially considered associations between several predictors and different forms of online bystander intervention, some constructs could not be interrogated in their entirety. Therefore, future studies should include the dimensions of political competence and digital media literacy in analyzing online bystander intervention to pinpoint which aspects should be promoted. Added to that, though our focal population was young adults, the findings for younger age groups could differ, and future studies should thus address the bystander behavior of younger adolescents as well. Altogether, the forms and consequences of young bystanders’ digital civil courage should be more precisely identified, and experiments should test the effects of intervention measures to raise awareness of online hate speech.

Even with those limitations, our study has marked an important step toward understanding the mechanisms of bystander intervention against online hate speech and what can best enhance digital civil courage representing democratic values and aimed at creating more respectful interactions in digital spaces. Promoting the behavior among youth is particularly desirable, because being committed to others is a cornerstone of integration in multicultural civic society. Feeling such a commitment could encourage young online bystanders to show more digital civil courage and be less on standby.

Footnotes

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Supplemental material

Supplemental material for this article is available online.