Abstract

This study is the first to simultaneously investigate country-level and platform-related context factors of toxic outrage, that is, destructive incivility, in online discussions. It compares user comments on the public role of religion and secularism from 2015/16 in four democracies (Australia, United States, Germany, Switzerland) and four discussion arenas on three platforms (News websites, Facebook, Twitter). A novel automated content analysis (N = 1,236,551) combines LIWC dictionaries with machine learning. The level of toxic outrage is higher in majoritarian than in consensus-oriented democracies and in arenas that afford plural, issue-driven rather than like-minded, preference-driven debates. Yet, toxic outrage is lower in forums that tend to separate public and private conversations than in those that collapse varying contexts. This suggests that user-generated discussions flourish in environments that incentivize actors to strive for compromise, put relevant issues center stage and make room for public debate at a relative distance from purely social conversation.

Digital spaces provide an infrastructure for abusive discourse to spread more quickly and broadly than ever (Coe et al., 2014). Up to 40% of the contributions in online discussions can contain uncivil elements (Ziegele et al., 2018). Civility as an expression of mutual respect has long played a central role in online public sphere research and is a key normative dimension for assessing the democratic quality of online discussions (Friess & Eilders, 2015). This is rooted either in deliberative theory, which postulates that mutual respect increases the openness to opposing arguments (Kies, 2010), or in liberal notions of communicative restraint that aim to prevent social conflicts from escalating (Ackerman, 1989). Yet, uncivil language can also serve minority groups in public discussions who are otherwise not heard at all (Jamieson et al., 2017). Should normative conceptions of democratic public discourse thus allow incivility as a generally acceptable component of online speech in a liberal-individualist fashion (Freelon, 2015)?

The danger of this “anything goes” position is that normative analysis of online speech would lose its bite exactly at a time when democracy is increasingly imperiled. Even relaxed standards for online communication need to draw a line between constructive and less constructive debate contributions (Bächtiger et al., 2010). This study argues that what distinguishes normatively acceptable from unacceptable forms of incivility is that the latter generate a fundamental insensitivity toward other perspectives. It investigates toxic outrage as a rhetorical strategy that fosters such insensitivity by aiming at provoking negative emotional reactions in the audience, thus promoting closed-mindedness toward political opponents.

To limit its expansion, it is key to understand which context factors drive toxic outrage online and inhibit the more constructive styles of user-generated debate needed in healthy democracies. While studies increasingly investigate the impact of socio-technical affordances (Nagy & Neff, 2015) on debate quality (e.g., Esau et al., 2017; Freelon, 2015; Rowe, 2015b), country-level structural influences are rarely explored (Ruiz et al., 2011). In considering national political and platform-related antecedents of toxic outrage in online debates together, this study is one of the first to examine the phenomenon in its multi-layered environment.

In an automated content analysis combining dictionary-based analysis with machine learning, we investigate how the political system of a country (Lijphart, 2012), the degree of context collapse in a discussion arena (Boyd, 2011) and the arena’s primary use function (Maia & Rezende, 2016) condition toxic outrage online. Specifically, we study user posts on the public role of religion and secularism in society from August 2015 to July 2016 in two majoritarian and two consensus-oriented democracies, namely Australia, the United States, Germany and Switzerland. We compare user comments from four discussion arenas on three platforms, namely from (a) mainstream news media’s website comment sections and (b) their Facebook pages, from the (c) Facebook pages of partisan collective actors and alternative media and from (d) Twitter.

Theory

Incivility Across Democratic Theories

Holmes (1988) advocates the strictest civility norm. From his liberal perspective, rules of omission can be constructive for democratic debates because “by tying our tongues about a sensitive question, we can secure forms of cooperation and fellowship otherwise beyond reach” (p. 19). Even in softer forms, this conversational restraint norm fundamentally aims at preventing conflicts from escalating: If a topic is controversial, we should “simply say nothing at all about this disagreement and put the moral ideals that divide us off the conversational agenda” (Ackerman, 1989, p. 16) so that respectful political collaboration can continue (Rawls, 1987). Liberal theorists remind us that social situations themselves often incentivize self-restraint: Do we not often find ourselves in debates with acquaintances where we end up unable to constructively debate the topic and decide to drop it (Ackerman, 1989; Holmes, 1988)? This also used to be the case for traditional mass media. Prior to today’s audience polarization, “when programing choices were based on garnering the largest possible number of viewers from the mass audience, the goal was to offend the fewest [and] to program the least objectionable content” (Berry & Sobieraj, 2014, p. 17). Thus, conversational restraint is often not the result of enforced rules of conduct, but of the social context and economic incentives in communication.

For deliberative theorists, liberal conversational restraint is too restrictive, as deep disagreement cannot be processed by focusing on what we agree on, but only by opening up to and assessing the weight of opposing arguments (Gutman & Thompson, 2009). Yet, such openness necessitates principles of accommodation (Gutman & Thompson, 2009), meaning that mutual respect needs to be fostered for opposing positions, which requires a moral economy. Because attacks lead the insulted to close themselves off from arguments and desensitize the attackers to the positions of the insulted, condemning opponents should be avoided. However, strict civility rules can maintain inequalities, as powerful groups are able to ignore civil pleas for justice and condemn uncivil ones (Huspek, 2007). Still, instead of discarding civility norms, Estlund (2008) advocates deliberative standards as a “breakdown theory”: If deliberative equilibrium is broken (e.g., by power imbalances), deviations from such standards (e.g., through incivility) should be allowed, provided they serve to restore the balance. In this understanding, civility norms serve to prevent closed-mindedness and should be relaxed only to the extent that they do not serve this purpose.

Finally, the tradition of agonistic pluralism is often portrayed as laissez-faire toward incivility, but this only applies conditionally: While passionate, impolite and disruptive speech should be appreciated from this perspective (Mouffe, 2013), agonistic pluralism aims to foster agonistic respect, that is, a “reciprocal commitment to inject generosity and forbearance into public negotiations between parties who acknowledge that the deepest wellsprings of human inspiration are to date susceptible to multiple interpretations” (Connolly, 2005, p. 125). Thus, while agonistic theorists warn us not to be “fainthearted” in relation to uncivil talk (Laclau, 2007, p. 250), analyzing and criticizing the exclusionary effects of certain types of incivility is key.

What unites normative theories’ concern for civility, then, is the telos of preventing what Medina (2013) refers to as blindness or insensitivity for the perspective of others: Certain speech acts impede constructive democratic debate because they carry a disregard for the positions of fellow debaters based on the presumption that their experiences are irrelevant or untrustworthy. Not all normative traditions would expect public debaters to understand each other, nor public debates to be frictionless, polite or friendly. But we should expect an appreciation for both common ground and differences and, conversely, be interested in how certain forms of incivility and impoliteness, which we refer to as toxic outrage, prevent such open-mindedness.

What is Toxic Outrage?

Several studies have disentangled incivility further: Papacharissi (2004) distinguishes between impoliteness and incivility. The former denotes speech acts that are characterized by inappropriate manners such as name-calling or vulgarity, whereas the latter violates democratic principles, for example by stereotyping or calling to remove other people’s rights. Muddiman (2017) refers to this as personal- versus public-level incivility. Rossini (2020), in turn, separates incivility from intolerance, with the former being a violation of common politeness norms and the latter expressing a fundamentally discriminatory intent toward people or groups based on their personal characteristics, preferences or social status. While impoliteness can also be a constructive component of online discussions, both Papacharissi (2004) and Rossini (2020) argue that democratic (public-level) incivility or intolerance is detrimental to democratic debate.

Expanding on these conceptual distinctions, what separates unacceptable from constructive forms of incivility is that the former carry and create a fundamental insensitivity toward other perspectives. Toxic outrage is a rhetorical strategy that aims to foster such disregard by provoking negative emotional reactions in the audience against political opponents (Sobieraj & Berry, 2011). It contains elements of both impoliteness and democratic incivility/intolerance (Papacharissi, 2004; Rossini, 2020), but only those that are detrimental to public debate. What distinguishes toxic outrage from other forms of incivility and impoliteness are “the elements of malfeasant inaccuracy and [the strategic] intent to diminish” (Sobieraj & Berry, 2011, p. 20). While impolite and even some forms of uncivil rhetoric sometimes benefit the discussion, toxic outrage further polarizes debates (Anderson et al., 2014) by increasing closed-mindedness in the audience through moral indignation (Hwang et al., 2018). Thus, toxic “outrage is incivility writ large. It is by definition uncivil, but not all incivility is outrage” (Sobieraj & Berry, 2011, p. 20).

In an increasingly diverse digital media environment, some actors decidedly support outrageous debate by creating discussion spaces that attract this rhetoric. A widespread toxic “outrage industry” (Berry & Sobieraj, 2014) has emerged that deliberately enrages different parts of the public against each other. In this context, it has been argued that the spread of toxic outrage is linked to national political and platform-related factors (Sobieraj & Berry, 2011). By systematically analyzing toxic outrage online in majoritarian versus consensus-oriented democracies, in arenas that separate versus collapse public and private contexts and in forums that are used primarily for issue- rather than preference-driven debates, the present study sets out to test this assumption. In comparing, for each explanatory factor, two cases in which the factor is present with two cases in which it is absent, the research relies on the inferential “method of difference” (Mill, 1843). Looking at two cases per group facilitates the interpretation of structural influences rather than the exploration of individual country- or arena-related idiosyncrasies. The rationale for our hypotheses is set out below.

Toxic Outrage Across Democratic Political Systems

This study relies on Lijphart’s (2012) distinction of majoritarian versus consensus-oriented democracies to explain national differences in the level of toxic outrage online. While more disaggregated multi-index conceptions of democracy like Varieties of Democracy would facilitate the consideration of gradual variations in democratic performance, Lijphart’s general typology is particularly useful for our research because it “provide[s] a rough empirical estimate of a complex and multivalent concept” (Coppedge et al., 2011, p. 252). Abstracting the tangled political architectures of the studied countries allows us to investigate cross-national structural influences alongside platform-related antecedents of toxic outrage online in our unique endeavor to examine these explanatory factors together. Furthermore, as the following rationale will show, the distinction of majoritarian versus consensus-oriented democracies is closely related to the polarization versus moderation of public debates and thus tightly linked to our research question. The limitations of Lijphart’s typology will be reflected upon in the discussion section.

While majoritarian democracies are dominated by two competing party blocs and concentrate executive power with the majority party, consensus-oriented democracies share governing authority among multiple parties and thus focus on political compromise (Lijphart, 2012). Accordingly, in public debates, political actors strive to accommodate different perspectives in consensus-oriented democratic systems, whereas they clearly dissociate from each other in majoritarian democracies (Steiner et al., 2004). This habit to dissociate in two-party systems has further intensified in recent years, as political parties increasingly polarize by moving toward ideological extremes (Levendusky, 2009) and party elites fuel a strong “us versus them” dichotomy in public communication (McCoy & Somer, 2019). As majority parties increasingly divide into opposed camps, “they also increasingly perceive politics as a zero-sum competition, in which a win for one side is inherently a loss for the other” (Mason, 2018, p. 60).

The political system patterns of accommodation and dissociation in the different types of democracy are transferred to the electorate predominantly via the media (Levendusky, 2009). Specifically, as the media report on political issues in majoritarian democracies, they “communicate to the public the degree to which politicians are polarized along party lines” (Arceneaux & Johnson, 2015, p. 309), which, in turn, causes citizens to align more clearly with party ideologies, a process referred to as “partisan sorting” (Levendusky, 2009). While consensus-oriented democracies tend to have comparatively regulated democratic corporatist media systems, majoritarian democracies usually have rather liberal media systems (Hallin & Mancini, 2004) whose market-oriented structures further facilitate the depiction of “politics as a struggle between irreconcilably opposed parties” (Tucker et al., 2018, p. 40). As they follow the polarization trend within the political system by catering to increasingly divided audiences, these systems can become rather polarized liberal media systems (Nechushtai, 2018) that facilitate a rise in toxic outrage spurred by (partisan) media (Berry & Sobieraj, 2014). Still, in addition to their mediated dissemination, elite polarization cues can also spread through other channels such as interpersonal encounters (Druckman et al., 2018) or social movements (Levendusky, 2009).

Indeed, studying twenty democracies, Gidron et al. (2020) show that citizens who identify with a specific party “in countries with majoritarian, single-winner voting systems tend to dislike opposition parties more intensely . . . than do partisans in countries with proportional voting systems” (p. 10). Notably, this affective polarization is not necessarily accompanied by more extreme policy positions. Mason (2015) finds that behavioral and issue position polarization are rather distinct—and that while partisan sorting contributes strongly to the former, it does not increase issue position extremity to the same extent. Political polarization in the electorate is thus primarily based on partisan identities and group membership, taking the form of an uncivil agreement, as part of which citizens “agree on most issues but are nevertheless growing increasingly biased, active and angry” (Mason, 2013, p. 141). Based on the aforementioned theoretical and empirical insights, we assume that citizens’ anger and affective polarization manifest in user-generated discussions and therefore hypothesize:

H1: The level of toxic outrage in online user comments is higher in majoritarian than in consensus-oriented democracies.

Empirically, Lijphart (2012) distinguishes majoritarian and consensus-oriented democracies along an executives-parties and a federal-unitary dimension, consisting of five indicators each. The former considers the effective number of parliamentary parties, the concentration of power in cabinet, the dominance of the executive vis-à-vis the legislative, the disproportionality and type of the electoral system and interest group pluralism in a democracy. The latter maps indicators of “[power] diffusion by means of institutional separation” (Lijphart, 2012, p. 4) like the dispersion of power on different government levels or bicameralism. Our country selection focused strongly on the executives-parties dimension while keeping the federal-unitary dimension rather constant. The previous theoretical considerations showed that the distinction of one-party majority versus multiparty coalition governments, that is, the concentration versus sharing of executive power, and the related tendency for dissociation versus accommodation in public debates, is particularly consequential for toxic outrage online. Coincidingly, the distinction of one-party majority versus multiparty coalition governments constitutes the “most important and typical difference between the two models of democracy” (Lijphart, 2012, p. 60) as well as the empirically most defining factor of the executives-parties dimension. While aiming to include nations from several continents, we also sought to keep the number of languages low to mitigate their influence in the automated analysis. This resulted in selecting the majoritarian democracies of Australia and the United States and the consensus-oriented democracies of Germany and Switzerland (where we focus on German-language debates). While all four countries have attenuated in their degree of majority- versus consensus-orientation over the last decades, they continuously belong to the respective general types. 
Supplemental Appendix A depicts the countries on Lijphart’s (2012) two-dimensional map of democracy and shows their performance on the sub-dimensions of the executives-parties dimension.
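One indicator of the executives-parties dimension, the effective number of parliamentary parties, is conventionally computed with the Laakso-Taagepera index, N = 1 / Σ sᵢ², where sᵢ is the seat share of party i. The following Python sketch only illustrates that formula. The function name and the seat distributions are hypothetical and are not taken from Lijphart's data or from Supplemental Appendix A.

```python
def effective_number_of_parties(seats):
    """Laakso-Taagepera index: 1 divided by the sum of squared seat shares."""
    total = sum(seats)
    if total <= 0:
        raise ValueError("seat counts must sum to a positive number")
    shares = [s / total for s in seats]
    return 1.0 / sum(p * p for p in shares)


# HYPOTHETICAL seat distributions, chosen only to contrast the two patterns.
two_bloc_parliament = [55, 45]                # two dominant blocs
multi_party_parliament = [30, 25, 20, 15, 10]  # fragmented, coalition-prone

print(round(effective_number_of_parties(two_bloc_parliament), 2))
print(round(effective_number_of_parties(multi_party_parliament), 2))
```

A value near 2 corresponds to the two-bloc competition described for majoritarian systems, whereas larger values indicate the multiparty constellations typical of consensus-oriented systems.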

Toxic Outrage Across Online Discussion Arenas

On the platform level, specific socio-technical affordances (Marwick, 2018) shape the debates in different online discussion forums. In accordance with a platform’s technical design, users have distinct “perceptions of what actions are available to them [in these arenas]” (Nagy & Neff, 2015, p. 5), which frame how audiences predominantly use a certain communication space. We suggest that the perceived degree of context collapse in and the primary use function of a discussion arena may be particularly consequential for the level of toxic outrage in user debates.

Context collapse

Democratic discourse is often conceptualized as a semi-autonomous civic sphere that is decoupled from private, essentially sociable conversations to facilitate substantive contestation (Schudson, 1997). In online forums, however, a user’s varying audiences often integrate into one indistinguishable collective (Vitak, 2012), with public and private contexts increasingly blurring. As discussion spaces connect family, friends, co-workers, and other acquaintances (Boyd, 2011), it can be difficult for users to determine which style of communication is socially appropriate (Rowe, 2015b). While this is of course also shaped by individual conduct, overall, the literature suggests that certain platforms afford a much stronger degree of context collapse to their users than others. On Facebook, public and private spheres mix rather strongly, as users perceive a “high salience of invisible audiences and collapsed contexts” (Rowe, 2015b, p. 543) in this environment. Even when users post in seemingly public arenas, such as on the pages of media outlets or political groups, where comments are directed primarily to unknown co-debaters, these posts are at least potentially visible to the poster’s entire friend network (Hughes et al., 2012). Twitter and the website comment sections of mainstream news media, in contrast, are more public in nature. Both connect individuals to strangers more often than Facebook, “focus[ing] less on ‘who you are’ and more on what you have to say” (Hughes et al., 2012, p. 562; Rossini, 2020). The perceived degree of context collapse is thus weaker in these forums.

Online arenas that separate public from private contexts tend to be characterized by lower levels of identifiability. This reduces the risk of being held accountable for one’s statements and encourages various forms of incivility (Santana, 2014). Rowe (2015a), for example, found that user posts contain more impoliteness and democratic incivility in the Washington Post’s website comment section than on the paper’s public Facebook page. Similarly, user comments on the more de-individuated YouTube channel of the White House have been found to be more impolite than those on the government’s Facebook page (Halpern & Gibbs, 2013). We thus hypothesize:

H2: The level of toxic outrage in online user comments is higher in arenas that separate public and private contexts more clearly than in those that mix the two.

Primary use function

Another core idea of democratic theory is that public contestation should take place across lines of difference (Gutman & Thompson, 2009). However, instead of engaging with diverse views, online political discussions are often rather polarized (Yarchi et al., 2021). The primary use function of a discussion arena indicates whether this forum is used by individuals primarily for issue-driven debates that evolve pluralistically around a contested issue or rather to conduct preference-driven discussions that bring together like-minded people.

In the political context, Twitter mostly affords rather preference-driven debates with ingroup-oriented structures (Freelon, 2015; Yarchi et al., 2021), in which individuals engage with contents (Himelboim et al., 2013) and users (Vaccari et al., 2016) of similar political preferences. While hashtags could potentially bring together individuals with different views, in reality, they primarily integrate those with similar positions. Likewise, research suggests that the Facebook pages of partisan collective actors and alternative media are used primarily for discussions among like-minded people (Maia & Rezende, 2016; Maia et al., 2021). In contrast, the website comment sections and Facebook pages of mainstream news media assemble a readership base that is connected by an interest in the topic of the original article (Freelon, 2015) and whose political views have been shown to be rather diverse (Nelson & Webster, 2017). By investigating two different kinds of discussion arenas on Facebook, we account for the fact that the platform’s socio-technical affordances encourage different primary use functions.

Research shows that heterogeneous discussion spaces are more prone than homogeneous forums to foster disrespectful behavior (Maia & Rezende, 2016). Insults, for example, were found to be much more common in user comments on news websites than in debates on Twitter, which include more ingroup-oriented elements (Freelon, 2015). We thus hypothesize:

H3: The level of toxic outrage in online user comments is higher in arenas that are used primarily for issue-driven debates with plural opinions than in forums that afford rather preference-driven, like-minded discussions.

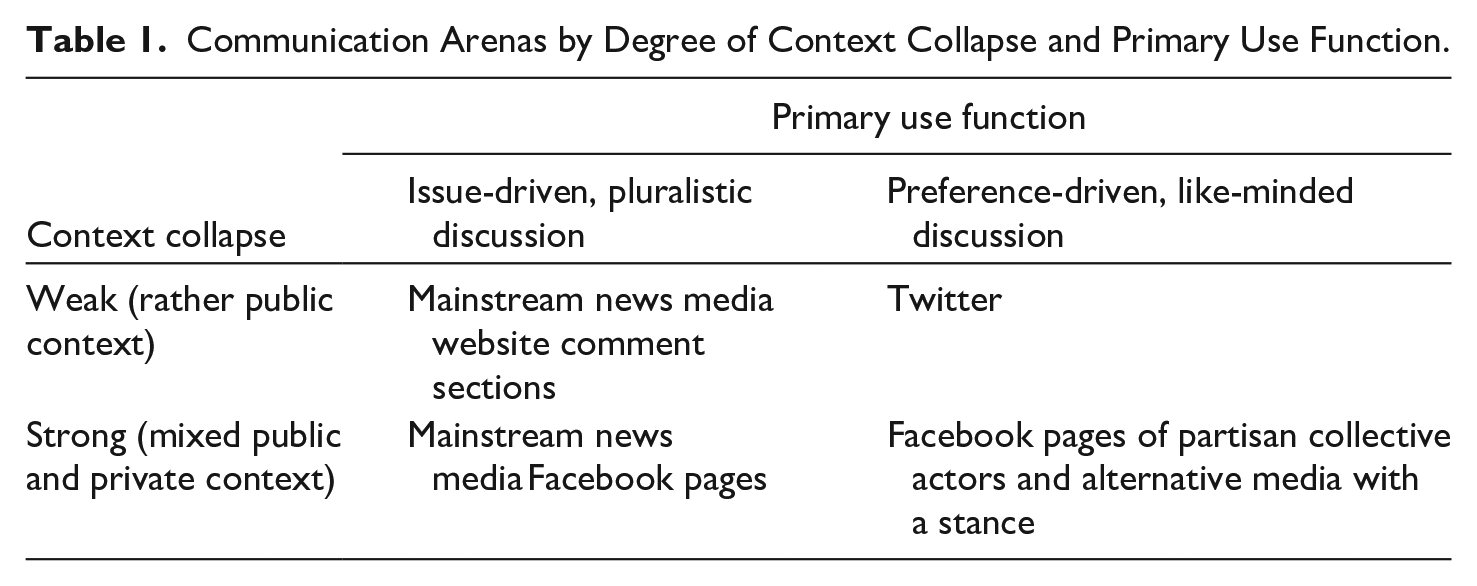

Table 1 classifies the communication arenas analyzed in this study according to their degree of context collapse and primary use function.

Communication Arenas by Degree of Context Collapse and Primary Use Function.

Methodology

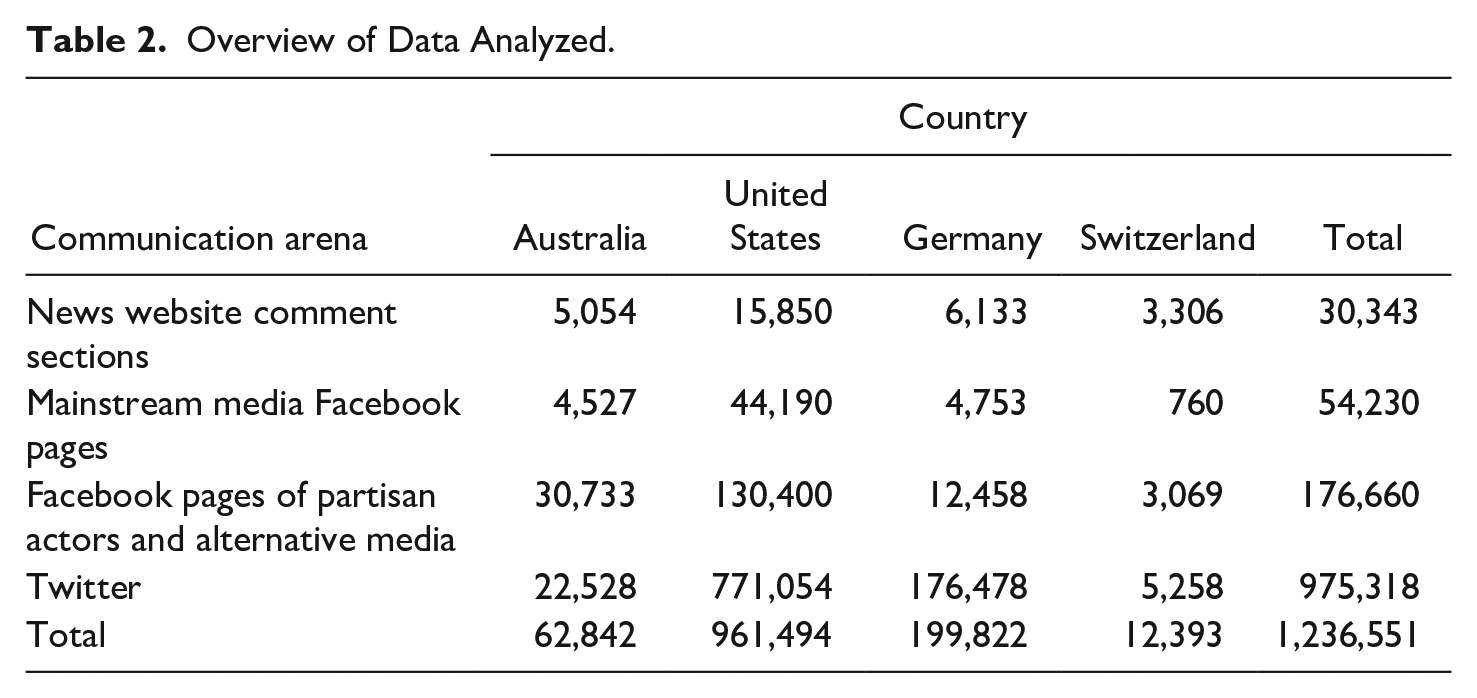

In an automated content analysis, this study investigated 1,236,551 user contributions on the public role of religion and secularism in society, published from August 2015 to July 2016. Table 2 shows the data analyzed per discussion arena per country.

Overview of Data Analyzed.

Case Study

Debates on the public role of religion and secularism in society are a hard case for civil contestation because religiously grounded value systems can exhibit elements of fundamentalism that make public discussions comparatively closed and apodictic. In religiously charged debates, some speakers might more readily construe their opponents as enemies and denigrate their views. In the run-up to the 2016 Australian and US elections and at the height of the European refugee movement of 2015/2016, the public role of religion and secularism in society was hotly debated in all four countries. Amid rising skepticism about the immigration of religious minorities into Western democracies, the material we study mirrors quarrels on the ensuing expectations for cultural adaptation, such as the wearing of headscarves in public, as well as longstanding issues on which religious and secular camps are divided, such as abortion or same-sex marriage.

Data Collection

Data collection took place in a carefully validated multi-step process designed to ensure data comparability. We systematically collected material of users commenting on similar issues and positions in all four communication arenas and all four countries. This collection of user comments used a diligently selected pool of news articles and blog posts as its starting point and branched out into four data collection paths, one for each discussion arena. Accordingly, data collection started with a dataset of 1,127 news articles and blog posts on the subject of interest, issued from August 2015 to July 2016 by leading print newspapers, news websites and political blogs (Supplemental Appendix B) in the four countries. The studied outlets are the leading outlets of record in each category in the respective societies, according to 16 or more academic experts we surveyed in each country. Likewise, to build this corpus, an expert survey was conducted among 76 communication and religious studies scholars in the societies, who named relevant debates on the public role of religion and secularism in the respective society and a list of keywords associated with each debate. Based on these keywords, the articles and blog posts were selected in a novel, expert-informed topic modeling process (for detailed information see Rinke et al., 2021). The base corpus and the expert survey results then guided the following four data collection paths:

For contributions from mainstream news media websites (Supplemental Appendix B/B-1), we identified all news website articles from the base corpus featuring a user comment section (115 out of the total of 400 news website articles in the base corpus) and collected the posts therein.

For comments from mainstream news media’s Facebook pages (Supplemental Appendix B/B-2), we identified all news website articles from the base corpus that had been posted on the respective media outlet’s Facebook page (76 out of the total of 400 news website articles in the base corpus) and collected all comments below these.

To collect contributions from the Facebook pages of partisan collective actors and alternative media, we first identified relevant pages by drawing on a list of all actors mentioned in the base corpus. Those collective actors and alternative media with a particular interest in the public role of religion and secularism in society and an active Facebook page in the period of investigation (e.g., the Secular Coalition for America and Christianity Today) were chosen for analysis. As these actors were referred to by the leading print newspapers, news websites and political blogs in the studied countries, they can also be regarded as among the most relevant of their kind in each of these societies, which makes a country comparison possible. Facebook’s “similar page” function, desktop research and consulting selected academic experts all served to expand and substantiate the selection. In total, 76 Facebook pages of partisan collective actors and 41 Facebook pages of alternative media were selected for analysis (Supplemental Appendix B/B-3). We collected all entries posted by these pages in the period of investigation and scored them for subject relevance with topic models that were built from extensive text corpora (Rinke et al., 2021) and that relied on the expert survey keywords. A cut-off for relevance was defined with gold standards of n = 300 comments in each country, each of which was scored by two trained coders with Krippendorff’s α (nominal) = .78. This resulted in 4,899 relevant Facebook seed posts from partisan collective actors and alternative media for which all user comments were collected.

To identify tweets, we researched all available Twitter profiles of the partisan collective actors and alternative media identified in the previous step and created a list of their 5,000 most frequently mentioned hashtags from August 2015 to July 2016. Inspired by this list, we selected 64 Twitter debate hashtags (Supplemental Appendix B/B-4) that strongly related to at least one of the debates on the public role of religion and secularism in society named in the expert survey. In the United States, for instance, the debate on religious opposition to same-sex marriage was prominently referred to in the survey, which led to the selection of #kimdavis. All tweets that featured at least one of the hashtags in the period of investigation were collected.
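The hashtag ranking described above can be sketched in a few lines of Python. This is an illustration only: the tooling actually used is not reported, and the function name, the regular expression and the toy tweets are assumptions made for the example.

```python
import re
from collections import Counter

# ASSUMPTION: a hashtag is "#" followed by word characters; Twitter's own
# tokenization rules are more detailed than this.
HASHTAG_RE = re.compile(r"#\w+")


def top_hashtags(tweets, k):
    """Return the k most frequent hashtags (case-insensitive) with their counts."""
    counts = Counter()
    for text in tweets:
        counts.update(tag.lower() for tag in HASHTAG_RE.findall(text))
    return counts.most_common(k)


# Toy, invented tweets; only the hashtag #kimdavis is mentioned in the text.
toy_tweets = [
    "#KimDavis ruling today",
    "More on #kimdavis and #scotus",
    "#SCOTUS #kimdavis",
]
ranking = top_hashtags(toy_tweets, k=2)
print(ranking)
```

In the study, k would be 5,000, and the resulting list served as input for the manual selection of the 64 debate hashtags rather than as an automatic filter.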

The data was collected for a large-scale research program that examines the democratic quality of user-generated debates comparatively. A subsample of the data analyzed in this study was therefore also used in a previously published study on the integrative complexity of online user comments across different types of democracy and discussion arenas (Jakob et al., 2021). However, each of these investigations focuses on a distinct dimension of debate quality, and thus makes a unique contribution to different lines of research. While this study centers on toxic outrage as a violation of civility norms in online debates, that is, on a rather sentiment-based construct, the study on integrative complexity concentrates on the argumentative quality of user comments online, that is, on a more substantive, content-related dimension of debate quality.

Automated Content Analysis

We combined an off-the-shelf dictionary with machine learning to measure toxic outrage in the collected posts—a novel automated method suggested by Dobbrick et al. (2021). This leveraged the knowledge incorporated in the word list and tailored it to our needs, thus reducing the amount of hand-coded data required for stand-alone machine learning. Aligning with CRISP-DM (Shearer, 2000), our approach followed five steps: Generating a gold standard, pre-processing it by applying LIWC, then training, evaluating and deploying the machine learning model. Since our instrument cannot classify visual material, we focused on the text of the posts.

Generating the gold standard

Following Sobieraj and Berry (2011), toxic outrage as an effort to cause a negative emotional reaction in the audience may be elicited by 13 rhetorical means, including “insulting language, name calling, emotional display, emotional language, verbal fighting/sparring, character assassination, misrepresentative exaggeration, mockery, conflagration, ideologically extremizing language, slippery slope, belittling, and obscene language” (p. 26). Based on the authors’ category descriptions (Sobieraj & Berry, 2011), two individuals were trained to code the gold standard. The unit of analysis was the comment. Importantly, rather than coding individual forms of incivility, to facilitate the automated measurement, toxic outrage was coded as a binary variable in this study, that is, as either present or absent in a comment. Toxic outrage was coded as present if at least one of the 13 modes of outrage occurred in a post. In a pretest on 320 randomly selected user comments (20 per arena per country), Krippendorff’s α (nominal) was .80. In the main coding, 200 posts from each arena in each country were scored, that is, 3,200 comments. Each item was assessed by both coders, with disagreements resolved consensually.
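The binary coding rule and the reliability check can be illustrated with a short Python sketch. It shows the standard Krippendorff's α for nominal data in the special case of two coders and no missing values. The function names and the toy codings are hypothetical, and the sketch is not the software the authors used.

```python
from collections import Counter
from itertools import permutations

# The 13 modes quoted from Sobieraj and Berry (2011, p. 26).
OUTRAGE_MODES = frozenset({
    "insulting language", "name calling", "emotional display",
    "emotional language", "verbal fighting/sparring", "character assassination",
    "misrepresentative exaggeration", "mockery", "conflagration",
    "ideologically extremizing language", "slippery slope", "belittling",
    "obscene language",
})


def toxic_outrage_present(coded_modes):
    """Binary rule from the text: 1 if at least one of the 13 modes was coded."""
    modes = set(coded_modes)
    if modes - OUTRAGE_MODES:
        raise ValueError(f"unknown modes: {sorted(modes - OUTRAGE_MODES)}")
    return int(bool(modes))


def krippendorff_alpha_nominal(coder_a, coder_b):
    """Krippendorff's alpha, nominal metric, two coders, no missing data."""
    if len(coder_a) != len(coder_b):
        raise ValueError("both coders must rate the same units")
    coincidences = Counter()
    for a, b in zip(coder_a, coder_b):
        coincidences[(a, b)] += 1  # each unit enters the coincidence
        coincidences[(b, a)] += 1  # matrix once in each direction
    n = 2 * len(coder_a)
    totals = Counter()
    for (value, _), count in coincidences.items():
        totals[value] += count
    observed = sum(c for (a, b), c in coincidences.items() if a != b) / n
    expected = sum(totals[a] * totals[b]
                   for a, b in permutations(totals, 2)) / (n * (n - 1))
    if expected == 0:
        raise ValueError("alpha is undefined when only one category occurs")
    return 1 - observed / expected


# Toy codings (invented): the two coders disagree on one of ten comments.
coder_a = [1, 1, 0, 0, 1, 0, 1, 0, 0, 0]
coder_b = [1, 1, 0, 0, 1, 0, 0, 0, 0, 0]
alpha = krippendorff_alpha_nominal(coder_a, coder_b)
print(round(alpha, 3))
```

Because α corrects for chance agreement, a single disagreement in ten units already lowers it well below the raw agreement of 90%.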

By using this pre-defined concept of toxic outrage, this study focused on investigating “researcher-defined uncivil content” (Van Duyn & Muddiman, 2020, p. 12). Based on the gold standard, the automated classifier identified a set of English and German words, respectively, that best predict toxic outrage across all discussion arenas in the majoritarian versus consensus-oriented democracies. The advantage of this ex-ante conceptualization is that it enables a highly systematic comparison of the prevalence of toxic outrage in different types of democracy and arenas. The analysis cannot, however, provide insights into how users perceive this incivility, which can likewise vary across individuals, social contexts, and countries (Kenski et al., 2020).

Pre-processing with off-the-shelf dictionary

The gold standard was pre-processed by applying all LIWC2015/DE-LIWC2015 categories (Pennebaker et al., 2015) to the English and German posts, respectively. This includes linguistic and formal features like word count or the share of words longer than six letters.
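As a rough illustration of this kind of dictionary-based pre-processing, the following Python sketch turns a comment into a small feature vector. LIWC itself is proprietary, so the word lists here are a hypothetical toy stand-in, and the tokenizer and the percentage scaling are assumptions rather than the LIWC implementation.

```python
import re

# Hypothetical toy categories; the real LIWC2015 word lists are not reproduced here.
TOY_CATEGORIES = {
    "anger": {"hate", "idiot", "stupid"},
    "negate": {"no", "not", "never"},
}


def tokenize(text):
    """Lowercase the text and keep runs of letters (including German umlauts)."""
    return re.findall(r"[a-zäöüß']+", text.lower())


def dictionary_features(text, categories=TOY_CATEGORIES):
    """Return word count, share of long words, and category shares in percent."""
    tokens = tokenize(text)
    n_tokens = len(tokens)
    features = {"word_count": n_tokens}
    if n_tokens == 0:
        features["six_letter_share"] = 0.0
        features.update({name: 0.0 for name in categories})
        return features
    # Share of words longer than six letters, as mentioned in the text.
    features["six_letter_share"] = 100 * sum(len(t) > 6 for t in tokens) / n_tokens
    for name, words in categories.items():
        features[name] = 100 * sum(t in words for t in tokens) / n_tokens
    return features


example = dictionary_features("That argument is not stupid")
```

Each comment thus becomes one row of numeric features that a learning algorithm can consume, regardless of the language-specific word lists behind the categories.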

Training the machine learning model

An M5P machine learning algorithm (Quinlan, 1992; Wang & Witten, 1996) was then trained on the gold standard. The model combines a decision tree with regression analysis. It splits the data into subsets so that the variance of the target variable across the instances in each subset is minimized. Splitting stops when the number of data points in a subset, or their variance, falls below a certain threshold. Finally, a linear regression model is fit to predict the outcome in each subset. The tree-based M5P automatically deals with variable selection, variable importance, missing values, normalization, and variable interactions (Song & Lu, 2015). It thus works well with LIWC-generated features, as many dictionary categories are combinations of others, and hence highly correlated, and they vary between the English and the German LIWC. Because M5P predicts continuous values, its output needs to be discretized for binary measurement, in our case with the split point generated automatically using the gold standard.
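The core idea of a model tree can be sketched in a few lines of Python. This is a deliberately simplified illustration with one feature and a single split, not the M5P implementation used in the study: it omits the pruning and smoothing steps of the full algorithm, and the fixed cut point of 0.5 is an assumption, whereas the study derived its split point from the gold standard.

```python
from statistics import mean, pvariance


def best_split(xs, ys, min_leaf=2):
    """Return the threshold on x that minimizes the size-weighted variance of y."""
    pairs = sorted(zip(xs, ys))
    best_threshold, best_score = None, float("inf")
    for i in range(min_leaf, len(pairs) - min_leaf + 1):
        if pairs[i - 1][0] == pairs[i][0]:
            continue  # cannot split between identical feature values
        left = [y for _, y in pairs[:i]]
        right = [y for _, y in pairs[i:]]
        score = len(left) * pvariance(left) + len(right) * pvariance(right)
        if score < best_score:
            best_threshold = (pairs[i - 1][0] + pairs[i][0]) / 2
            best_score = score
    return best_threshold


def fit_line(xs, ys):
    """Ordinary least squares with one predictor; returns (intercept, slope)."""
    x_bar, y_bar = mean(xs), mean(ys)
    sxx = sum((x - x_bar) ** 2 for x in xs)
    if sxx == 0:
        return y_bar, 0.0
    slope = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys)) / sxx
    return y_bar - slope * x_bar, slope


def fit_stump(xs, ys):
    """One split plus a linear model per leaf (assumes a valid split exists)."""
    threshold = best_split(xs, ys)
    left = [(x, y) for x, y in zip(xs, ys) if x <= threshold]
    right = [(x, y) for x, y in zip(xs, ys) if x > threshold]
    return threshold, fit_line(*zip(*left)), fit_line(*zip(*right))


def predict(model, x):
    """Route x to its leaf and evaluate that leaf's linear model."""
    threshold, left_line, right_line = model
    intercept, slope = left_line if x <= threshold else right_line
    return intercept + slope * x


def discretize(score, cut=0.5):
    """Turn the continuous prediction into a binary label (cut is an assumption)."""
    return int(score >= cut)


# Hypothetical toy data: one feature value per comment and its binary gold code.
feature = [1, 2, 3, 4, 10, 11, 12, 13]
gold = [0, 0, 0, 0, 1, 1, 1, 1]
model = fit_stump(feature, gold)
```

A full model tree applies the same split search recursively over all features, which is what lets it cope with many correlated dictionary categories.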

Evaluation

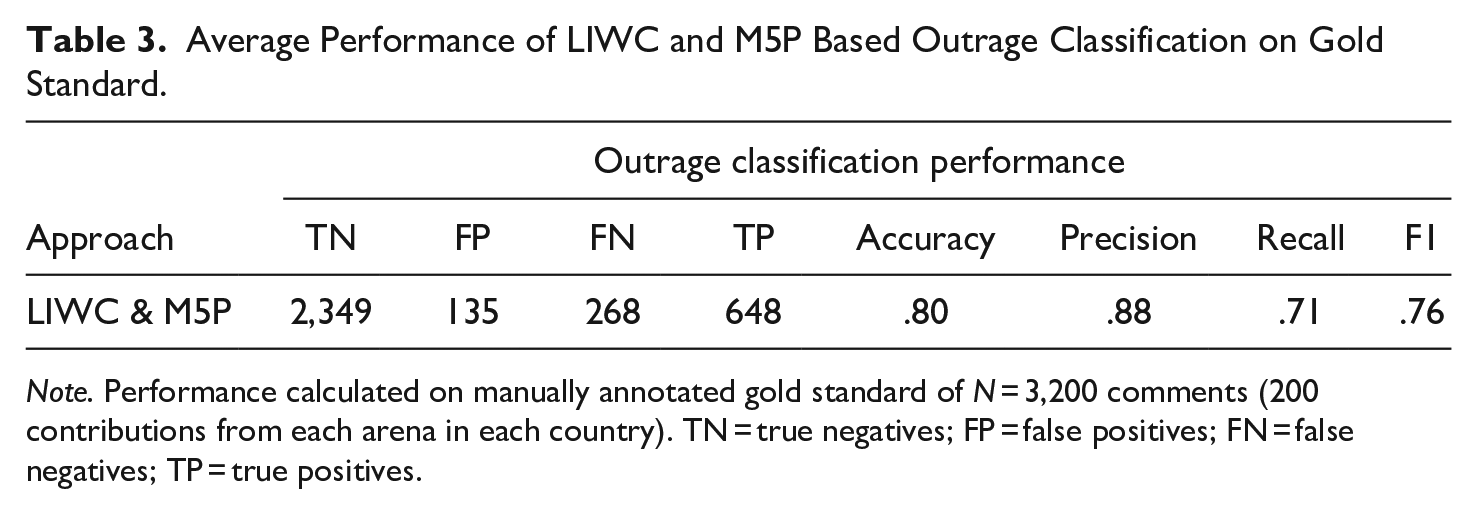

We assessed the performance of our approach with a 10-fold cross-validation on the gold standard. This trains the model on nine equal folds of data and holds one out for evaluation. The process is repeated ten times, so every subsample is used as the validation set once. The performance is then averaged over the ten runs. Table 3 shows the performance metrics, indicating that our instrument works comparatively well (see Supplemental Appendix C/Table 1 for performance per arena and country).
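The evaluation procedure can be sketched as follows in Python. The fold assignment is a simple interleaved split without shuffling, and the summary metrics computed from the confusion counts are common choices picked for illustration; the source does not list which metrics Table 3 reports beyond TN, FP, FN, and TP.

```python
def k_fold_indices(n_items, k=10):
    """Yield (train, test) index lists; each item is held out exactly once."""
    indices = list(range(n_items))
    folds = [indices[i::k] for i in range(k)]
    for held_out in range(k):
        test = folds[held_out]
        train = [i for f, fold in enumerate(folds) if f != held_out for i in fold]
        yield train, test


def confusion_counts(truth, predicted):
    """Count true/false negatives and positives for binary labels."""
    pairs = list(zip(truth, predicted))
    return {
        "TN": sum(t == 0 and p == 0 for t, p in pairs),
        "FP": sum(t == 0 and p == 1 for t, p in pairs),
        "FN": sum(t == 1 and p == 0 for t, p in pairs),
        "TP": sum(t == 1 and p == 1 for t, p in pairs),
    }


def performance(counts):
    """Derive accuracy, precision, recall, and F1 from the confusion counts."""
    tn, fp, fn, tp = (counts[key] for key in ("TN", "FP", "FN", "TP"))
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {
        "accuracy": (tp + tn) / (tn + fp + fn + tp),
        "precision": precision,
        "recall": recall,
        "f1": f1,
    }


# With the gold standard of 3,200 comments, each fold holds out 320 comments.
splits = list(k_fold_indices(3200, k=10))
```

In each of the ten runs, a model would be trained on the 2,880 training comments and scored on the 320 held-out ones, and the resulting metrics averaged.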

Table 3. Average Performance of LIWC and M5P Based Outrage Classification on Gold Standard.

Note. Performance calculated on manually annotated gold standard of N = 3,200 comments (200 contributions from each arena in each country). TN = true negatives; FP = false positives; FN = false negatives; TP = true positives.

Applying the model to the corpus

Finally, we pre-processed the full data corpus in the same way as the gold standard, applied the trained M5P model to predict toxic outrage for each comment in the set and discretized the score for statistical analysis. The workflow is available in Supplemental Appendix C.

Results

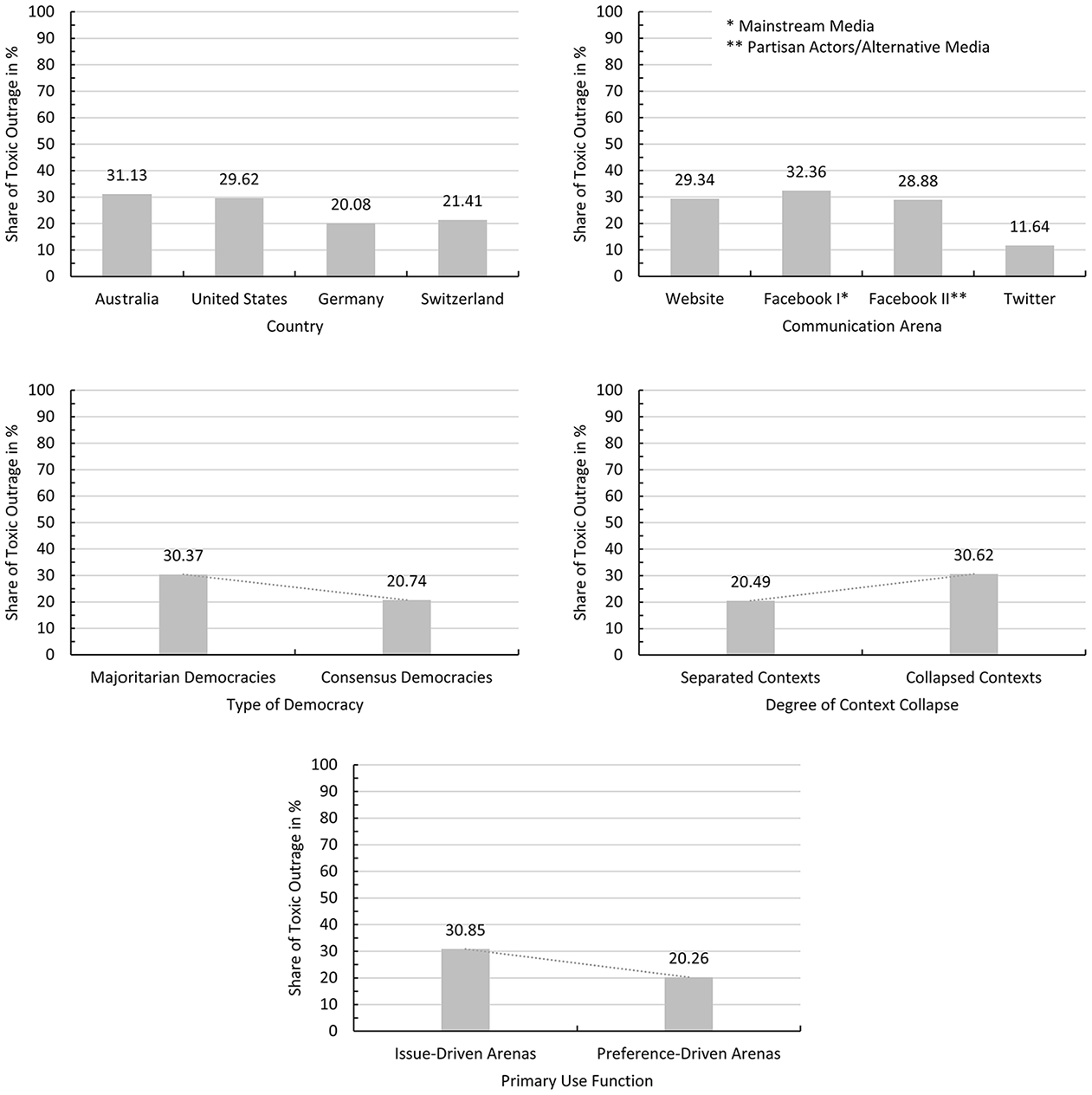

Overall, 17.67% of the user contributions in our dataset of N = 1,236,551 contained toxic outrage. Figure 1 features the share of toxic outrage per country and communication arena. It shows that toxic outrage was more frequent in some countries and forums than others, with patterns that lend initial support for the formulated hypotheses.

Figure 1. Share of user comments that contain toxic outrage in percent (N = 1,236,551).

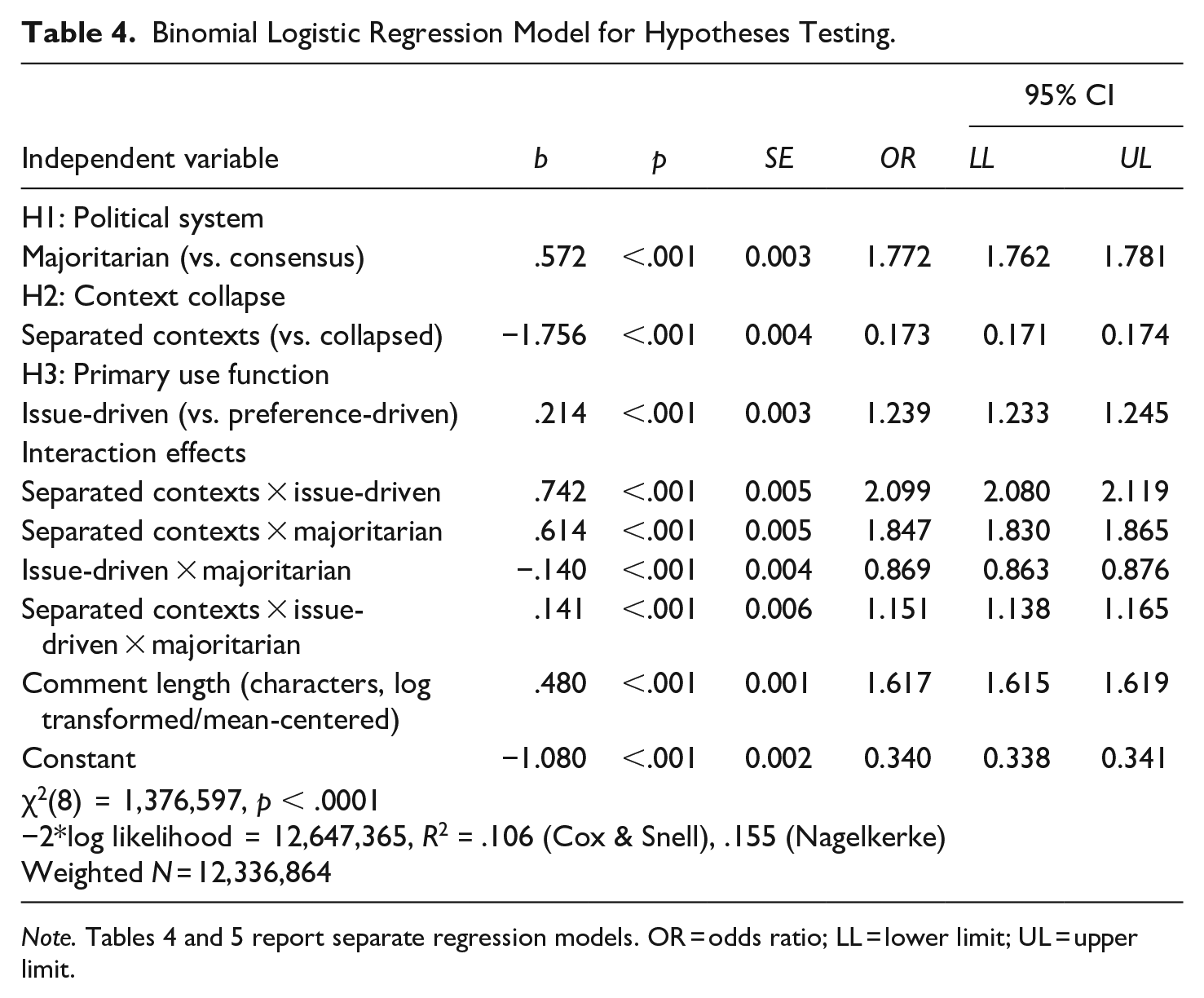

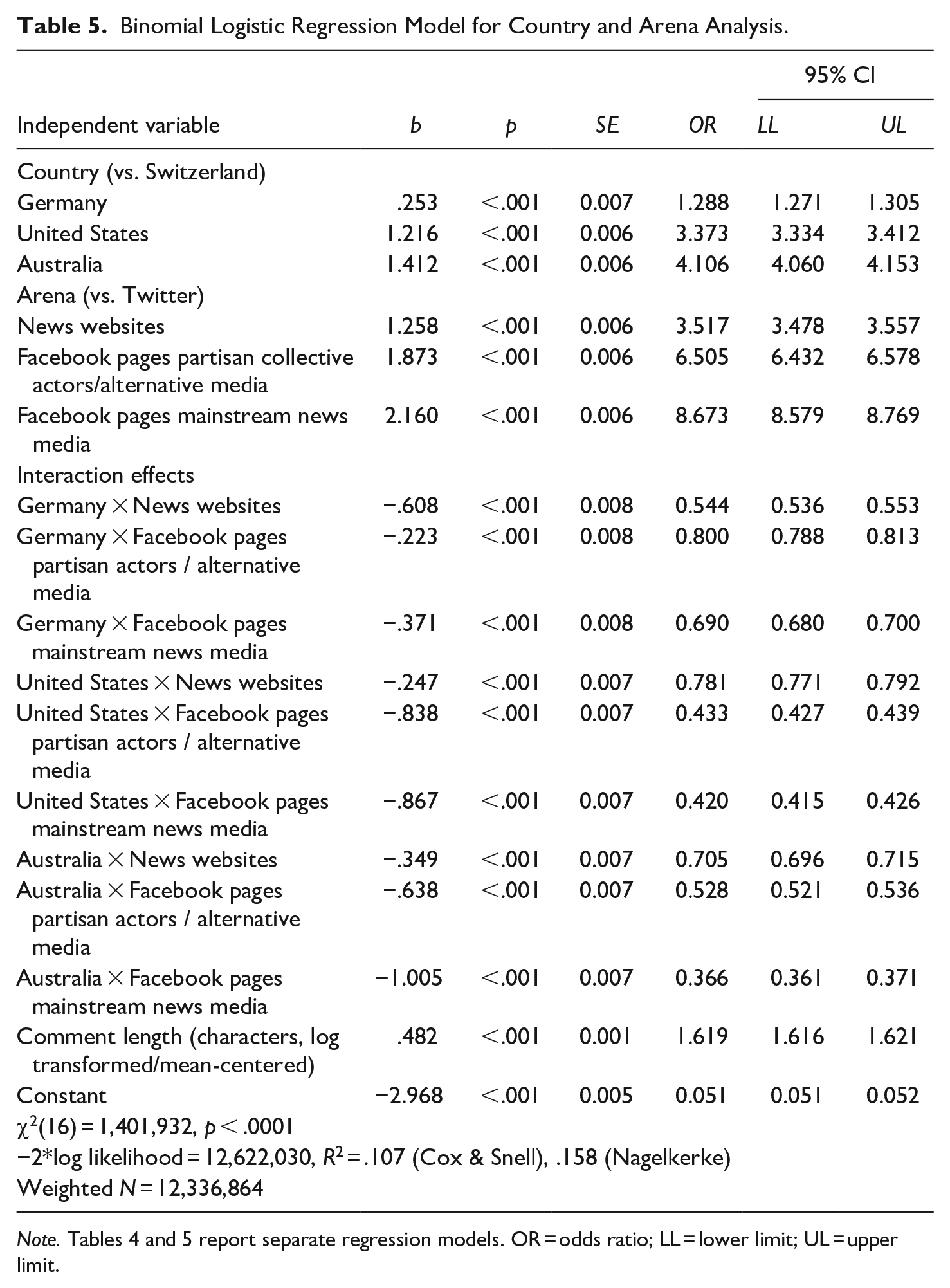

To test these hypotheses, we ran binomial logistic regression analyses. Logistic regression estimates the logit transformation of the probability of an event, that is, the log odds that toxic outrage is present in a comment. Specifically, the presented models estimate the log odds of toxic outrage occurring in a post in a specific group relative to the log odds of it occurring in a post in the reference group (i.e., in majoritarian vs. consensus-oriented democracies, under separated vs. collapsed contexts, in issue-driven vs. preference-driven arenas). To avoid dominant effects of individual countries or arenas that stem from the varying amounts of collected comments, the data was balanced by overweighting the arenas with lower case numbers in all countries according to the size of the arena in which the largest number of comments was collected (Supplemental Appendix D/D-1). A robustness check showed that the hypothesized main effects for the democratic system, context collapse and primary use function (H1–H3) are substantively the same when tested on the weighted sample versus the hand-coded, stratified randomly sampled gold standard, and that the effect sizes fall within the confidence intervals of a bootstrapped regression analysis on a stratified undersampled dataset (Supplemental Appendix D/D-2). This indicates that the weighting did not affect our general findings. Since longer posts are more likely to contain toxic outrage, the regression analysis controlled for comment length. To ease interpretation, this covariate was log-transformed to reduce skewness and mean-centered, so that the intercept refers to a comment of mean (log) length rather than of length zero. Tables 4 and 5 report the coefficients of two separate regression models that were computed to test the hypotheses and to conduct a more detailed country and arena comparison.
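The data preparation steps around the regression can be illustrated with a short Python sketch. The weighting rule below (largest arena size divided by the arena's own size) is one plausible reading of the balancing described above, not the exact scheme from Supplemental Appendix D/D-1; the natural logarithm, the arena names, and the arena sizes are likewise assumptions.

```python
import math


def balancing_weights(arena_sizes):
    """Weight each arena so that its weighted size matches the largest arena."""
    largest = max(arena_sizes.values())
    return {arena: largest / size for arena, size in arena_sizes.items()}


def log_and_center(lengths):
    """Log-transform positive comment lengths, then subtract the mean."""
    logged = [math.log(length) for length in lengths]
    center = sum(logged) / len(logged)
    return [value - center for value in logged]


def inverse_logit(log_odds):
    """Convert log odds from a logistic model back into a probability."""
    return 1 / (1 + math.exp(-log_odds))


# Hypothetical arena sizes within one country.
weights = balancing_weights({"twitter": 1000, "news_site": 250})
centered = log_and_center([1.0, math.exp(2.0)])
```

After centering, a covariate value of zero stands for a comment of average log length, so applying `inverse_logit` to the model intercept gives the predicted probability for such a comment in the reference group.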

Table 4. Binomial Logistic Regression Model for Hypotheses Testing.

Table 5. Binomial Logistic Regression Model for Country and Arena Analysis.

Table 4 shows that the data backed our first hypothesis, which stated that the level of toxic outrage in online user comments is higher in majoritarian than in consensus-oriented democracies. The predicted probability that a comment contains toxic outrage rose from 15.9% in consensus-oriented political systems (SE < 0.001, p < .001, 95% CI [.159, .159]) to 30.5% in majoritarian democracies (SE < 0.001, p < .001, 95% CI [.305, .306]). Yet, this probability also differed slightly within each system type: between Switzerland (16.2%, SE < 0.001, p < .001, 95% CI [.161, .162]) and Germany (15.5%, SE < 0.001, p < .001, 95% CI [.155, .156]) among the consensus-oriented democracies, and between Australia (32.5%, SE < 0.001, p < .001, 95% CI [.324, .326]) and the United States (28.5%, SE < 0.001, p < .001, 95% CI [.285, .286]) among the majoritarian democracies (see also Table 5).

However, contrary to our assumption, the level of toxic outrage in online user comments was lower in arenas that separate public and private contexts more clearly (Twitter and news website comment sections) than in those mixing the two (Facebook), as shown in Table 4. The regression predicted that while a user comment has a 14.6% chance of carrying toxic outrage under separated contexts (SE < 0.001, p < .001, 95% CI [.146, .146]), this chance is 32.7% under collapsed contexts (SE < 0.001, p < .001, 95% CI [.327, .327]). Our second hypothesis was thus rejected based on an effect in the opposite direction.

Our third hypothesis, again, was supported. As Table 4 shows, the level of toxic outrage in online user comments was higher in arenas that are used primarily for issue-driven debates with plural opinions than in forums that afford rather preference-driven, like-minded discussions. The probability that a user post contains toxic outrage was predicted at 27.5% (SE < 0.001, p < .001, 95% CI [.275, .276]) and 18.0% (SE < 0.001, p < .001, 95% CI [.179, .180]), respectively. In a more detailed breakdown, Table 5 shows that in relation to Twitter, toxic outrage was increasingly more likely in news website comment sections, on the Facebook pages of partisan collective actors and alternative media and on the Facebook pages of mainstream news media, with the chance of a user comment to contain toxic outrage at 9.6% (SE < 0.001, p < .001, 95% CI [.095, .096]), 21.6% (SE < 0.001, p < .001, 95% CI [.215, .216]), 31.0% (SE < 0.001, p < .001, 95% CI [.310, .311]), and 34.3% (SE < 0.001, p < .001, 95% CI [.343, .344]), respectively.

Apart from the hypothesized main effects, as Tables 4 and 5 show, there were significant interaction effects between the three predictor variables. These mainly stemmed from the fact that relative to the discovered country and platform effects, the chance of a user comment to contain toxic outrage in the news website comment sections of mainstream media was comparatively higher in the United States than in the other three countries and comparatively lower in Germany. Similarly, the predicted probability of a user post to carry toxic outrage on the Facebook pages of partisan actors and alternative media was comparatively higher in Australia than in the other countries (see Supplemental Appendix D/D-4 for a graphical display of the interactions).

Discussion

This study was the first to investigate national political and platform-related context factors of toxic outrage, that is, destructive incivility, in online discussions together. It analyzed user comments from Australia, the United States, Germany and Switzerland, comparing posts from the website comment sections and Facebook pages of mainstream news media, the Facebook pages of partisan collective actors and alternative media as well as from Twitter.

The level of toxic outrage in online user comments was higher in majoritarian than in consensus-oriented democracies. This suggests that the civil “spirit of accommodation” (Lijphart, 1975, p. 103) typical for consensus-oriented democracies and the tendency for dissociation and polarization in majoritarian democracies translate from elite discourse into user-generated debates, where they facilitate more or less constructive engagement. Political system characteristics may thus not only serve as incentive structures for political actors in public debates but also as modeling patterns for citizens. This supports deliberative democrats’ case for the advantages of consensus-oriented democracies, which, they argue, can incentivize social learning across political camps and thus help mitigate deep societal conflicts (Dryzek, 2005).

However, the level of toxic outrage in online user comments differed slightly between countries of the same democratic system. In explaining these nuances, this study is constrained by the limits of Lijphart’s (2012) general distinction between majoritarian and consensus-oriented democracies. While this typology allowed us to study cross-national influences alongside platform-related antecedents of toxic outrage online, it prevents us from pinpointing exactly which (combination of) characteristics of majoritarian and consensus-oriented democratic systems elicit the difference in destructive incivility (Coppedge et al., 2011). The type and strength of majoritarianism or consensus-orientation could be of interest in this respect. For instance, when minority parties gain the parliamentary majority in disproportional majoritarian political systems (as the Republican party did in the United States), this could drive political polarization even further (McCoy & Somer, 2019). Similarly, while we theorize the process of how the political system influences citizen-to-citizen interactions online, our study does not directly observe the intervening stages of this process. In addition, Lijphart’s typology may suffer from multicollinearity, with confounding factors like “cultural norms, historical pathways and [other] contextual circumstances” (Bormann, 2010, p. 6) also at play. The regulations that governments issue to mitigate uncivil conduct online or the degree to which the legislature sanctions this behavior could, for example, be especially relevant in this regard. Future research should focus more explicitly on explaining country-level differences in toxic outrage online by relying on a larger number of countries and more fine-grained empirical notions of democracy to disentangle the various factors at play.

Contrary to our expectation, the level of toxic outrage in online user comments was lower in arenas that separate public and private contexts more clearly than in those that collapse varying audiences into one group. Thus, after all, online debates were more likely to be constructive when they were conducted in semi-autonomous spheres of democratic engagement that are decoupled from private life more distinctly (Schudson, 1997). While this contrasts with prior research showing that lower identifiability encourages incivility online (Halpern & Gibbs, 2013; Rowe, 2015a; Santana, 2014), it mirrors a study by Rossini (2019). Comparing comments on news media websites and Facebook pages in Brazil, she, too, finds that the identifiability of users and the ensuing social constraints on Facebook do not prevent citizens from being uncivil. The platform’s “users may be interacting with others outside of their networks when commenting on news stories and therefore might not feel as constrained by their social ties to adopt uncivil rhetoric” (Rossini, 2019, p. 236). The attitude with which citizens enter discussions in different arenas may be an additional explanation for this. When debating under separated contexts, users seek these exchanges rather actively (Springer et al., 2015). Under collapsed contexts on Facebook, in contrast, they may react more spontaneously to public content that appears on their timeline and appeals to them emotionally. Individuals discussing under separated contexts might thus be better prepared to control their behavior and refrain from toxic outrage. Theoretically, this finding overlaps with the liberal democratic understanding that the separation of public and private spheres mitigates democratic conflict. If individuals bracket their private convictions and relationships from public debates, liberal theory argues, they are more readily able to respect opposing views within the public sphere (Ackerman, 1989; Holmes, 1988).

In relation to the primary use function, we found that the level of toxic outrage in online user comments was higher in arenas that are used primarily for issue-driven debates with plural opinions than in forums that afford rather preference-driven, like-minded discussions. As hypothesized, this supports research “indicat[ing] that people are more motivated to use foul language when interacting with those who hold different views” (Maia & Rezende, 2016, p. 129). This is an important reminder that the mere confrontation with opposing positions in online discussions does not automatically lead to more constructive engagement but may in fact fuel hostility and opinion polarization. Likely, hearing different perspectives only fosters more civil debate when it is coupled with “apophatic listening” (Dobson, 2014) that is aimed at a clear understanding of what the other wants to say. To some degree, this counters agonistic theorists’ implicit assumption that public contestation as such, through the mere exposure to opposing views, can foster agonistic respect (Mouffe, 2013). At the same time, agonistic theorists would remind us to supplement this finding in future research by studying constructive siblings of toxic outrage, that is, passionate, sometimes impolite rhetoric which could make a beneficial contribution to online discussions (Jamieson et al., 2017).

Relative to the discovered country and platform effects, toxic outrage was comparatively more likely in news website comment sections in the United States and comparatively less likely in news website comment sections in Germany. This could be due to different content moderation styles in these countries, which may either reduce or further encourage online incivility (Ziegele et al., 2018). Moreover, the chance of a user comment containing toxic outrage on the Facebook pages of partisan actors and alternative media was comparatively higher in Australia than in the other three countries, which may indicate that these actors provide discussion spaces for particularly radicalized individuals in this country. Again, these findings set the stage for a more in-depth investigation of country-related idiosyncrasies going forward.

On the platform level, this study is limited by the fact that by focusing on two particularly consequential socio-technical affordances, it necessarily disregards others. For example, as many platform providers have recently changed their policies and infrastructures for detecting and handling hate speech, their own role in fostering a more constructive online debate culture could be a future research focus. Furthermore, substantiating the above findings by investigating additional platforms is important. This is also true more generally for the topical contexts in which online discussions take place. In addition, to the benefit of measuring toxic outrage automatically on a large scale, this study did not zoom in on the different forms of toxic outrage online (Sobieraj & Berry, 2011). A more fine-grained analysis could generate more insights into which of the rhetorical strategies involved dominate over others in the digital sphere.

Ultimately, research into toxic outrage is concerned with the factors that foster constructive and respectful democratic engagements online. Our study suggests that user-generated debate flourishes in political environments that incentivize actors to strive for compromise, put relevant issues center stage and make room for public debate at a relative distance from purely social conversation. As interactive moderation (Esau et al., 2017; Ziegele et al., 2018) may be a promising way to deal with toxic outrage online, research and practice should focus specifically on developing civic technologies that can foster more constructive online engagements across the board, beyond the simple deletion of undesired posts.

Footnotes

Data Availability

The data underlying this article can be shared upon reasonable request.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was funded by the Deutsche Forschungsgemeinschaft (DFG, German Research Foundation) under grant number 260291564.