Abstract

Multiple-choice questions (MCQs) are widely used in large introductory courses. Recent research focuses on MCQ reliability and validity and overlooks questions of accessibility. Yet, access to the norms of academic discourse embedded in MCQs differs between groups of first-year students. We theorize these norms as part of the institutionalized cultural symbols that reproduce social and cultural exclusion for linguistically diverse students. A sociological focus on linguistic diversity is necessary as the percentage of students who use English as an additional language (EAL), rather than English as a native language (ENL), has grown. Drawing on sociology as pedagogy, we problematize MCQs as a medium shaping linguistically diverse students’ ability to demonstrate disciplinary knowledge. Our multimethod research uses two-stage randomized exams and focus groups with EAL and ENL students to assess the effects of a modification in instructors’ MCQ writing practices in sociology and psychology courses. Findings show that students are more likely to answer a modified MCQ correctly, with greater improvement for EAL students.

Multiple-choice questions (MCQs) are widely used in large introductory courses. Recent research focuses on constructing MCQs that are reliable and valid (see Abdulghani et al. 2017; D’Sa and Visbal-Dionaldo 2017; Tarrant and Ware 2012) and overlooks MCQ accessibility. A sociological focus on linguistic diversity in MCQs is essential as the percentage of postsecondary students who are multilingual and use English as an additional language (EAL) has grown across North America. International students—of which there are more than one million in the United States alone (Institute of International Education 2019)—drive this trend, along with first-generation immigrants and students raised in homes where English is not the primary language.

As we will discuss, research shows that the linguistic structure of MCQs contributes to performance gaps between students who use English as a native language (ENL) and those who use EAL (Riccardi et al. 2020). Yet MCQ use is likely to persist (or increase) given growing enrollments in higher education (Marginson 2016) resulting in larger class sizes, increased pressure on faculty (Fairweather 2002), and more contingent teaching positions (Padilla and Thompson 2016). Even prior to COVID-19, the shift to blended and online learning increased the use of MCQs, particularly due to testing through learning management systems such as Canvas and Blackboard, where MCQs are the most frequently used assessment (Boitshwarelo, Reedy, and Billany 2017). With increased online learning, we anticipate that MCQs will remain a fixture of teaching platforms in the COVID-19 era. As such, instructors ought to think critically about how best to develop, use, and implement MCQs.

While MCQs may not be the best measure of student learning, and the standardization of assessment in prepackaged textbook supplements may be seen as part of the McDonaldization of education (Hayes and Wynyard 2002; Ritzer 1996), the purpose of this article is not to assess the utility of MCQs for measuring learning outcomes (e.g., Bjork, Soderstrom, and Little 2015; Smith 2017). Instead, we acknowledge the persistent use of MCQs and present evidence of the need to construct MCQs in a more accessible way to enable linguistically diverse students to demonstrate what they know.

Specifically, we outline the linguistic barriers in MCQs and provide evidence of an intervention instructors can implement in writing MCQs. We discuss whether this intervention is useful only for teaching linguistically diverse classes or whether it is also relevant for instructors whose students primarily use ENL. Our multimethod research uses two-stage randomized exams and focus groups with EAL and ENL students to assess the effects of the modification in instructors’ MCQ writing practices in first-year sociology and psychology courses. The exam data address our first two research questions, and the focus group data address our third research question:

1. Does the linguistic structure of MCQs limit EAL students’ ability to demonstrate their disciplinary knowledge?

2. Is the effect of modifying MCQs of relevance exclusively for EAL students?

3. How do EAL and ENL students perceive differences between packed and unpacked MCQs?

Norms of Academic Discourse

During their initial year at university, students undergo socialization into academic discourse norms alongside course content. These norms are part of the institutionalized cultural symbols that maintain and reproduce social and cultural exclusion (Lamont and Lareau 1988:156). Bourdieu and Passeron (1990:495) highlight how these norms are embedded within educational evaluation used to classify students. Research on students’ linguistic engagement with academic discourse has focused on students’ ability to interpret longer texts and the development of complexity in their own writing while overlooking students’ experiences of closed-ended forms of assessment, such as MCQs (Miller, Mitchell, and Pessoa 2016; for exceptions, see Haladyna, Downing, and Rodriguez 2002; McCoubrie 2004). In researching implicit assumptions underlying MCQs, we address Lareau and Weininger’s (2003:588) assertion that “studies of cultural capital in school settings must identify the particular expectations . . . by means of which school personnel appraise students.” MCQs are an example of what Bourdieu (1984, 1991) refers to as cultural capital—different groups of students bring different capacities to decode MCQs.

Bourdieu’s approach shares much with Basil Bernstein’s (1973, 1975, 1996) work on social and cultural reproduction. Bourdieu and Bernstein emphasize the “centrality of social position in language and consciousness” (Collins 2000:66), which Bourdieu and Passeron develop through cultural or linguistic capital and linguistic habitus and which Bernstein describes through language codes. Both arrive at the assumption that children are taught different ways of speaking at home (Lamont and Lareau 1988; Lareau 2011). Some children arrive at school with language that is more attuned to academic discourse, whereas others have to adapt to a new set of linguistic codes. As a result, students (and their parents) are not positioned in the same way in relation to academic norms, potentially impeding their “ability to conform to institutionalized expectations” (Lareau and Weininger 2003:588).

Reading and writing in academia are linguistically layered (Biber and Gray 2010; McCabe and Gallagher 2008; Staples et al. 2016), requiring students to engage with implicit principles governing symbolic communication (Bourdieu, Passeron, and de Saint Martin 1994). Like many forms of communication, academic texts contain linguistic codes (Bernstein 1971). We refer to these codes as “packed” and “unpacked” (see example later). Authors employ unpacked codes by making meanings and norms explicit when engaging readers who may not have a common orientation or understanding of the text. When shared norms are taken for granted, packed codes function to make communication more efficient by concisely conveying more information through dense language. Academic discourse, particularly in written form, operates primarily through packed codes. Note that in distinguishing between packed and unpacked codes, we are not suggesting that certain linguistic styles are superior. Rather, we acknowledge that schooling prefers a particular code style.

We interrogate the norms of academic discourse, specifically the operation of packed codes, within MCQs. The sociology and psychology instructors in this study collaborated with academic English instructors to identify linguistic features in their MCQ assessments that may act as barriers to students’ demonstration of knowledge. The identification process was grounded in both their experience instructing students who use EAL and the literature on functional grammar and functional linguistics (Halliday and Matthiessen 2014). Two linguistic features of MCQs were modified: nominalization and vocabulary.

Packed MCQ stem: The form of emotion management characterized by the commodification of people’s deep acting inducing a sense of alienation is . . .

Unpacked MCQ stem: There are different forms of emotion management. In one form, people’s deep acting is commodified. This deep acting causes people to feel alienated. What is the name of this form of emotion management?

The packed MCQ stem consists of one highly dense nominal group, in which a head noun carries premodification and extensive postmodification. In the unpacked MCQ stem, we expanded the nominal group into three distinct and more accessible statements, followed by a direct question. For a full linguistic breakdown of an MCQ stem, see Riccardi et al. (2020:3).

Our second MCQ modification involves vocabulary. We replaced infrequently used vocabulary that is not discipline specific (i.e., not a course concept). The team used the Corpus of Contemporary American English (2019) to identify the relative frequency of specific words in written English. Infrequent words were replaced with more frequently occurring synonyms. The assumption is that frequently used words are more easily recognized and comprehended. In the previous MCQ example, the infrequently occurring word

It is important to consider the linguistic accessibility of MCQs given that students are likely to encounter this form of assessment early in their postsecondary education when they are undergoing socialization into the norms of academic discourse. Furthermore, educators do not tend to teach or make explicit the sentence-level norms of dense noun structures common to MCQ or academic writing. While sociologists have published articles in

Linguistics scholars have documented connections between the complexity of test items and cognitive burden for EAL students, potentially contributing to a performance gap between EAL and ENL students (Abedi and Gandara 2006; Abedi, Hofstetter, and Lord 2004; Abedi and Lord 2001; Parkes and Zimmaro 2016). Riccardi et al. (2020) demonstrate the importance of MCQ linguistic accessibility. Moreover, within the field of measurement and assessment, linguistic complexity is important to consider as a part of the validity and reliability of item/test writing to ensure clarity and prevent construct-irrelevant variance, or the presence of extraneous variables that affect the meaningfulness and accuracy of assessments (Abedi 2016). In our study, the confusion caused by linguistic complexity in MCQs can be considered a form of construct-irrelevant variance. What is unique in our context is the possibility to actually investigate and mitigate construct-irrelevant variance through the comparison of student performance on both packed and unpacked MCQs. We see this study as a way to meet the growing demand to investigate response processes of assessments as put forward by scholars like Zumbo and Hubley (2017).

Institutional Context

This research was conducted at a large North American research university with a focus on internationalization. International students constitute 27 percent of the student body (University of British Columbia 2021). In 2014, the university launched a pathway program for first-year international students who use EAL. Pathway students meet all university entrance requirements other than English language competency (assessed based on TOEFL, IELTS, CAEL, or IB English scores). Pathway students spend one year in the program taking first-year university courses while receiving embedded English support. Upon successful pathway completion, students are admitted to their second year of study at the university.

The instructors in the study are sociologists and psychologists who taught in both the pathway program and their home department (Sociology or Psychology). Each instructor taught multiple sections of either Introduction to Sociology or Introduction to Psychology, with at least one of their sections offered exclusively for the pathway program. Instructors kept their course content and exams the same across all sections, enabling us to investigate the research questions.

Method

Research Questions

We pursued three research questions. We address Questions 1 and 2 through two-stage randomized MCQ exams in an experimental design, and we pursue Question 3 through inductive focus groups with a subset of the students.

1. Does the linguistic structure of MCQs limit EAL students’ ability to demonstrate their disciplinary knowledge?

2. Is the effect of modifying MCQs of relevance exclusively for EAL students?

3. How do EAL and ENL students perceive differences between packed and unpacked MCQs?

We hypothesized that EAL students would be more likely to answer an MCQ correctly when it was unpacked (without changing the content or difficulty of the course material tested). We also hypothesized that all students would be more likely to answer an unpacked MCQ correctly but with greater improvement for EAL students.

Two-Stage Randomized Exams: Overview

Our goal was to contrast student performance on packed and unpacked MCQs by randomizing these two types of MCQs, and the order in which they were presented to students, in introductory sociology and psychology tests. We administered the questions on multiple exams to control for learning effects or novelty artifacts. We drew on students with differing levels of language proficiency to test the impact of MCQ design on students’ likelihood of answering a question correctly. Specifically, we draw upon a sample of EAL international students enrolled in first-year sociology and psychology courses in the pathway program, alongside a linguistically diverse group of students at the same university who took the same courses and tests with the same instructors but were not in this transition program (referred to as “direct entry”). Data were collected across two terms with three instructors. We analyzed data from three psychology course sections and three sociology course sections in term 1 and three psychology course sections and two sociology course sections in term 2.

Two-Stage Randomized Exams: Sample and Procedures

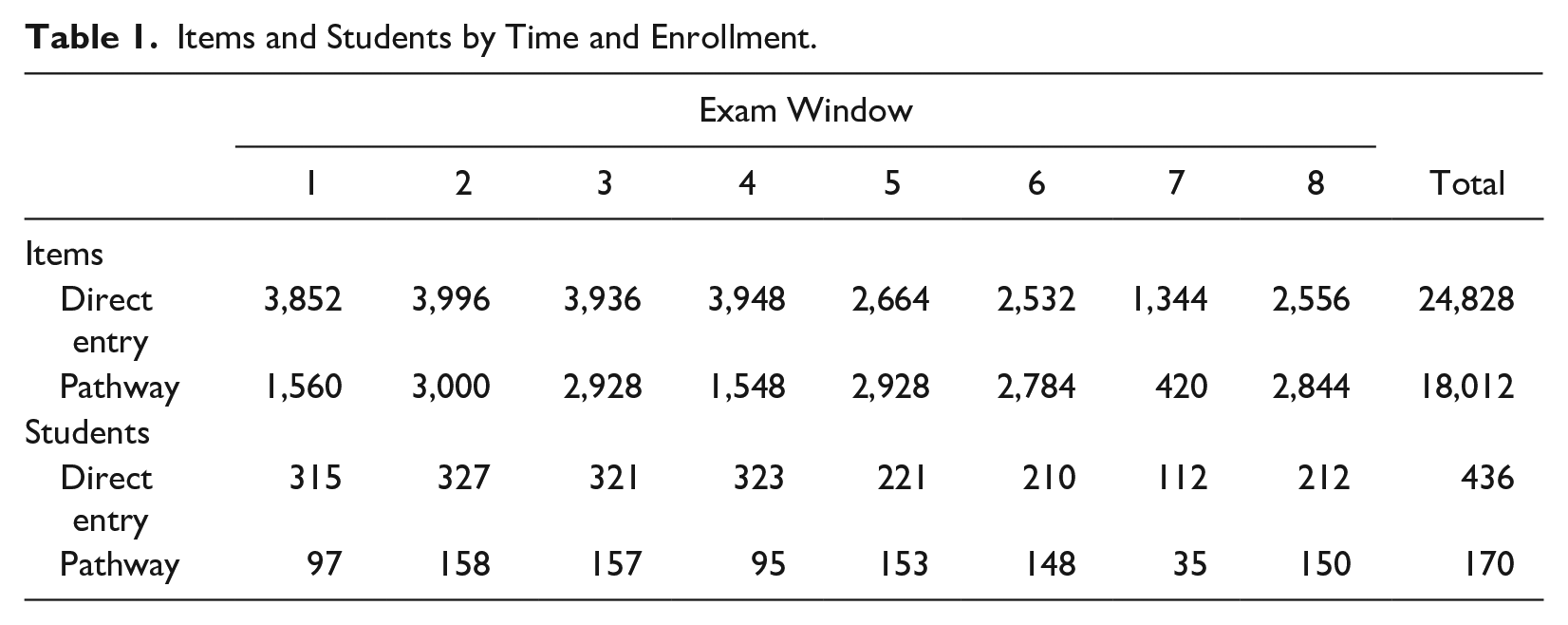

Our sample consists of 606 unique students: 170 pathway students, which is roughly 85 percent of the pathway cohort, and 436 direct entry students (see Table 1). We did not collect demographic information from our study participants as it is not customary (and often discouraged) in the case of pedagogical research at our institution unless there is strong justification. Instead, we present here general demographic data of the student body. Students in the pathway and direct-entry programs had the same admission requirements, including high school grades and prerequisite courses, and were academically comparable, with the exception of English language test scores: Students in the pathway had lower English language scores upon admission to the university. Pathway students have diverse citizenship, coming from over 50 countries, with 73 percent from China the year of the study. In the broader university, the student body consists of 26 percent international students, with China being the top country of citizenship (Planning and Institutional Research Office 2021). Across the university, 56 percent of undergraduate students are women.

Table 1. Items and Students by Time and Enrollment.

Students were recruited from 11 different classes in the 2017-2018 academic year: In term 1, two direct-entry sociology courses, one direct-entry psychology course, one pathway sociology course, and two pathway psychology courses were used. In term 2, one direct-entry sociology course, one direct-entry psychology course, one pathway sociology course, and two pathway psychology courses were used. The courses were taught by three sociology and psychology faculty. As noted earlier, each faculty member taught the same course, with the same exam, in both the direct-entry and pathway programs.

Two-Stage Randomized Exams: MCQ Assessment

We tested the effect of unpacking MCQs on a student’s ability to answer the question correctly across eight possible exam windows throughout the year. The unit of analysis is the MCQ item (question), not the student. In the first exam window, there were 3,852 exam questions asked in direct-entry courses compared with 1,560 in the pathway. Table 1 provides a summary of the number of items and students included for each exam window.

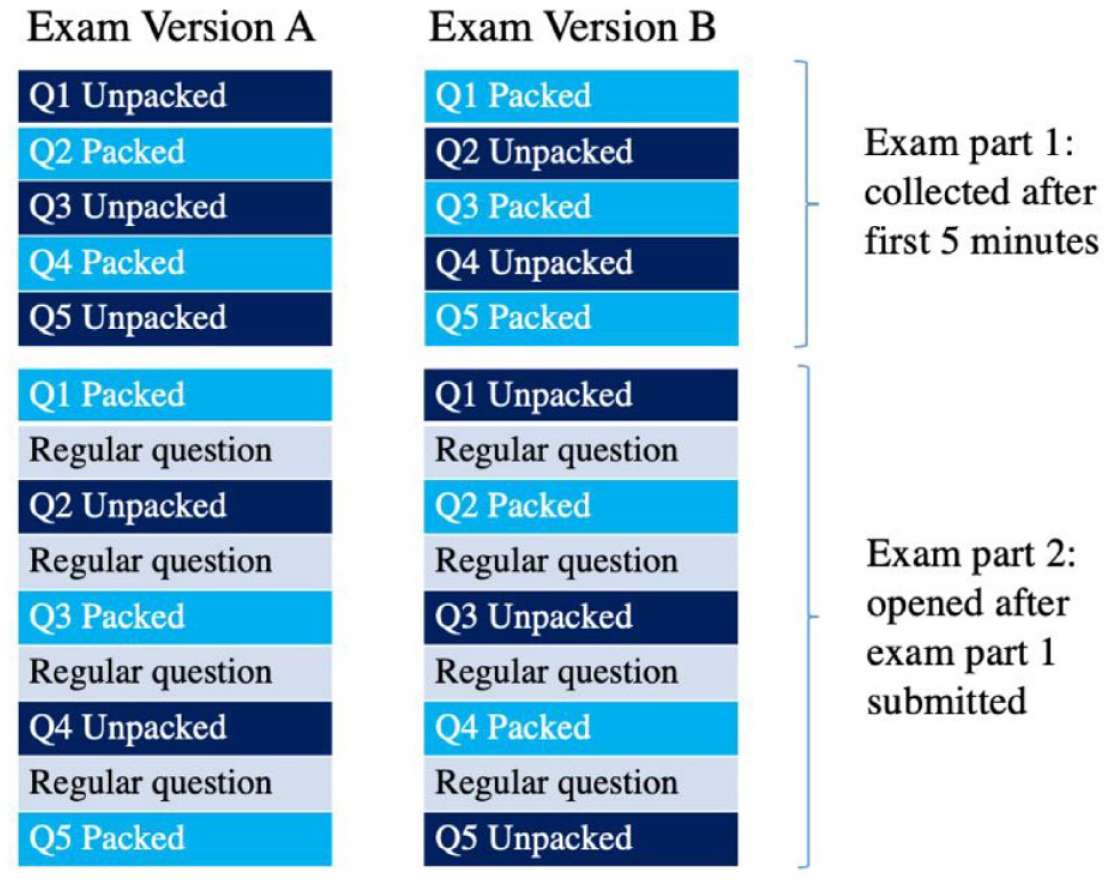

Instructors were free to administer (or not) an exam in each testing window for a maximum of eight exams. We use the exam window in our analysis to control for learning effects. For each exam, there are a total of 10 or 12 items under investigation: five or six questions, each with both a packed and an unpacked version. Selected questions were unpacked by English for academic purposes (EAP) instructors after the course instructors had drafted their exams. All students completed both the packed and unpacked versions of the items using a randomized, in-person, two-stage paper exam. One half of students received two or three of the packed questions first, together with two or three unpacked questions relating to different content. They returned exam part 1 and were then administered the remaining complementary set of questions along with the other portion of the exam (exam part 2). This ensured there was no “learning” or bias from some students receiving one set of questions prior to seeing its complement. Likewise, two different versions of the exam were randomly administered, with the order of packed and unpacked questions reversed between exam versions A and B. Students could not review or revise part 1 of their exam after returning it. All questions counted towards students’ final exam score; however, only study questions were analyzed.
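The counterbalancing described above can be sketched in a few lines of Python. This is an illustrative reconstruction, not the procedure the instructors actually used: the function names, the question labels, the even split of students between versions, and the random seed are all assumptions introduced here, and the sketch covers only the study items rather than the full exam.

```python
import random


def build_exam_versions(question_ids):
    """Return study-item layouts for hypothetical exam versions A and B.

    Each question appears once per exam part: packed in one part and
    unpacked in the other. Version B reverses the order used in version A.
    """
    half = len(question_ids) // 2
    first_set, second_set = question_ids[:half], question_ids[half:]
    # One part shows the first set packed and the second set unpacked.
    part_x = [(q, "packed") for q in first_set] + [(q, "unpacked") for q in second_set]
    # The complementary part shows the opposite form of every question.
    part_y = [(q, "unpacked") for q in first_set] + [(q, "packed") for q in second_set]
    return {
        "A": {"part1": part_x, "part2": part_y},
        "B": {"part1": part_y, "part2": part_x},
    }


def assign_versions(student_ids, seed=2018):
    """Randomly split students into two (near-)equal groups for versions A and B."""
    rng = random.Random(seed)  # fixed seed only so the sketch is reproducible
    shuffled = list(student_ids)
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    return {s: ("A" if i < half else "B") for i, s in enumerate(shuffled)}


# Hypothetical exam with five study questions and ten students.
versions = build_exam_versions(["Q1", "Q2", "Q3", "Q4", "Q5"])
assignment = assign_versions([f"student_{n}" for n in range(10)])
```

With five study questions, part 1 of version A holds two packed and three unpacked items, matching the "two or three" split in the text. Every student meets each question in both forms, but never both forms within the same exam part.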

Students were required to complete the exams as a part of their course grade; however, they were given the option to include their data in the study. To reduce coercion, a research assistant visited the classes at the beginning of the term (before any exams had occurred), managed consent, and had no responsibility for marking. While all students completed the exams, data were analyzed only for those who had provided consent in the first month of the given term. Course instructors were not privy to which students consented until final course grades had been submitted. The study was approved as minimal risk by the behavioral research ethics board.

Focus Groups

Focus groups were conducted to document how students experience MCQ sentence structure. Data were collected during three 90-minute focus groups in March 2018. All students in the five courses running that term (two direct entry and three pathway) were invited during an in-class visit by a research assistant after the instructor left the room.

Focus groups were designed inductively as little existing work investigates students’ perceptions of MCQ sentence structure. Participants were advised that the qualitative research framework positioned them as “experts” on their testing experiences. Participants individually ranked a series of MCQs based on perceived difficulty, after which they discussed their rankings collectively. The purposeful interaction created opportunities for participants not only to articulate their perception of particular MCQs but to contrast their perception with that of their peers (Kitzinger 1994; McLafferty 2004; Morgan 1997). Question prompts were open-ended, and facilitators who were not teaching the students guided the sessions. Students adopted pseudonyms and were told no identifying information would appear in final reports.

Separate focus groups were held for direct-entry and pathway students to reduce discomfort EAL students in the pathway might feel. Given the power dynamics surrounding oral language use, similarities in language competence between participants may have increased participant ease and willingness to share (Morgan 1997). Two focus groups ran for participants in the pathway program (with four and five participants, respectively), and one ran for direct-entry students, which contained four participants. Sessions were audio recorded and transcribed.

Analysis

Quantitative analysis: Two-stage randomized in-person paper exams

To investigate the role of unpacking MCQs on students’ ability to demonstrate their domain knowledge, we used multilevel logistic regression modeling while controlling for (nested by) student across exam window. Both unpacked and packed MCQs were assumed to have a specific, albeit unknown, level of difficulty based upon content and student response processes. This is why it was inappropriate to use students as the level of analysis, which would assume all questions are equally difficult.

Given the limited testing windows (eight maximum), and that not all instructors had data for every window, we do not attempt longitudinal analyses. However, recognizing that the program structure of the pathway is specifically targeted to improve students’ academic English, we deemed it necessary to account for time in the nesting of our model using random effects. Random effects of students are also included to account for the inherent variability in student ability and motivation. As an initial analysis, we also investigated whether there was any difference in exam format (exam version A or B) using means testing. No detectable difference was observed, which is consistent with our expectation. As such, we did not control for the exam type in our model given our already limited power.

We included three categorical variables in our analysis: an indication of student participation in the pathway (coded 1 if enrolled in the pathway and 0 otherwise) and two fixed-effect variables to account for instructor-level effects (instructor A and instructor B, coded 1 if students had that instructor and 0 otherwise), with reference to a randomly selected third instructor. For the sake of our analysis, we are not interested in different instructor pedagogies, although we acknowledge some inherent differences. However, given our sample, we are statistically unable to separate instructor-level from disciplinary effects; as such, instructor variables are included as statistical controls and are not intended to be used to assess teaching.
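One way to write down a model consistent with this description is given below. It is a sketch under stated assumptions rather than the authors' reported specification: the symbols, the indexing (item $i$, exam window $t$, student $j$), and the choice of random intercepts are introduced here for illustration.

$$
\operatorname{logit}\,\Pr(Y_{itj}=1) = \beta_0 + \beta_1\,\mathrm{Unpacked}_{itj} + \beta_2\,\mathrm{Pathway}_{j} + \beta_3\,\mathrm{InstructorA}_{tj} + \beta_4\,\mathrm{InstructorB}_{tj} + v_{tj} + u_{j},
$$

$$
u_{j} \sim \mathcal{N}(0, \sigma_u^2), \qquad v_{tj} \sim \mathcal{N}(0, \sigma_v^2).
$$

Here $Y_{itj}=1$ if student $j$ answers item $i$ correctly in exam window $t$, $u_j$ is a student-level random intercept, and $v_{tj}$ is a random intercept for exam window nested within student. Under this formulation, the odds ratio for unpacking is $\exp(\beta_1)$.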

Logistic regression coefficients are in log-odds (logit) units, although we report and focus our interpretation on odds ratios. Odds ratios can range from 0 to positive infinity, where a value of 1 corresponds to “no effect,” meaning a student is equally likely to score as well on an unpacked item as on the packed item, and an odds ratio of 1.5 means the odds of a correct answer are 1.5 times greater (i.e., 50 percent higher odds). The main effect of unpacking the items was assessed with a

Visualization of two-stage randomized in-person paper exams.
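To make the odds-ratio scale used in this subsection concrete, the following Python sketch converts a baseline probability of a correct answer and an odds ratio into the implied probability. The function name and the baseline probabilities are hypothetical and are not taken from the study data. The point is that the same odds ratio implies different changes in probability depending on where students start.

```python
def probability_after_odds_ratio(baseline_probability, odds_ratio):
    """Apply an odds ratio to a baseline probability and return the new probability."""
    if not 0 < baseline_probability < 1:
        raise ValueError("baseline_probability must be strictly between 0 and 1")
    if odds_ratio < 0:
        raise ValueError("odds_ratio must be non-negative")
    # Convert probability to odds, scale the odds, then convert back.
    baseline_odds = baseline_probability / (1 - baseline_probability)
    new_odds = baseline_odds * odds_ratio
    return new_odds / (1 + new_odds)


# An odds ratio of 1.5 at a hypothetical 50 percent baseline:
print(round(probability_after_odds_ratio(0.5, 1.5), 3))  # 0.6
# The same odds ratio at a hypothetical 80 percent baseline:
print(round(probability_after_odds_ratio(0.8, 1.5), 3))  # 0.857
```

At a 50 percent baseline, an odds ratio of 1.5 raises the probability of a correct answer by 10 percentage points. At an 80 percent baseline, the same odds ratio raises it by under 6 percentage points, which is one reason groups with high baseline performance may show smaller visible gains.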

Qualitative analysis: Focus groups

Focus group audio recordings were transcribed by one assistant. Participants’ names were replaced with pseudonyms. The first author coded the transcripts through inductive thematic analysis (Maxwell 2012:105), beginning with a series of unstructured research memos (Groenewald 2008; Miles and Huberman 1994) written through multiple independent readings of the transcripts, followed by memo comparison. Transcripts were reviewed line by line, and inductive empirical codes were applied (Gibson and Brown 2009:132–33).

Results

Multilevel Model

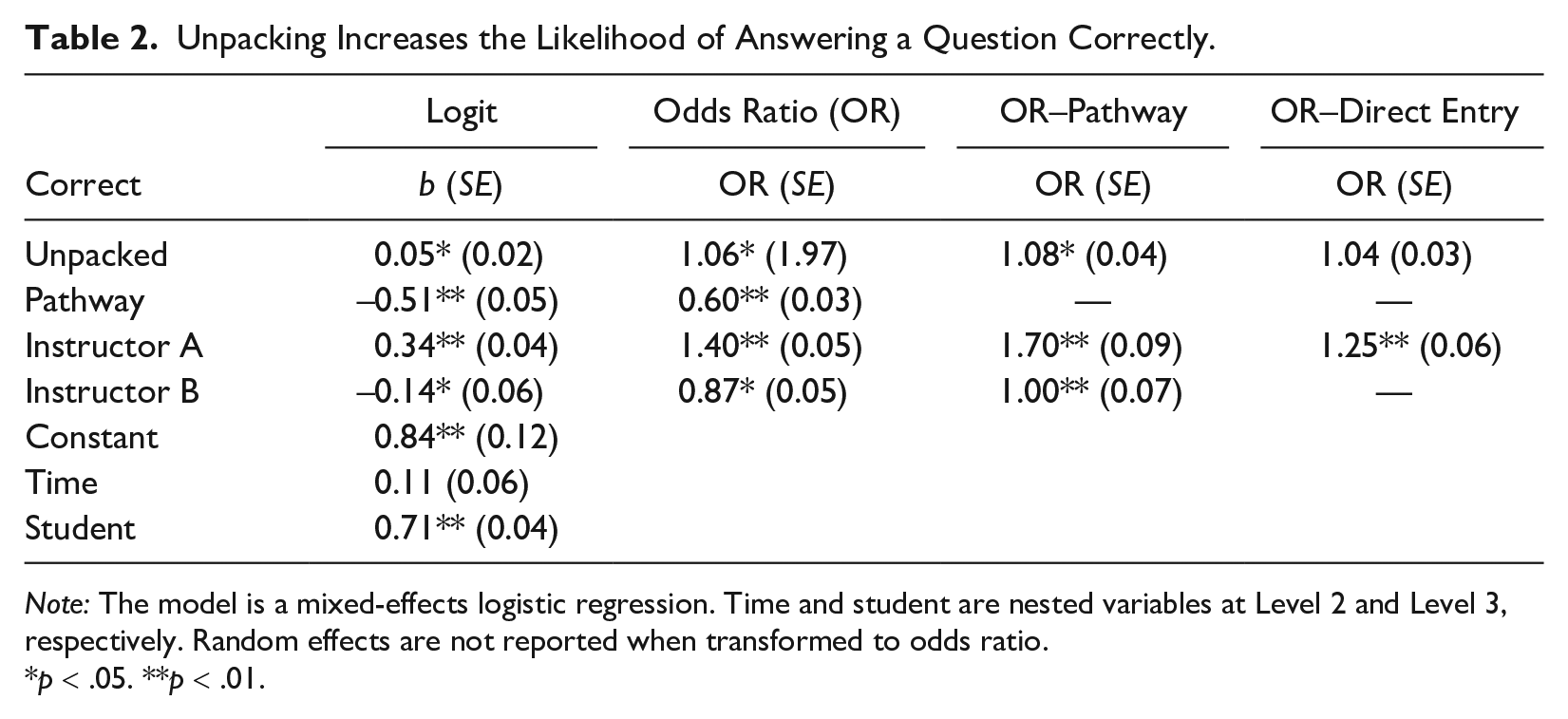

Table 2 provides the results from our multilevel logistic regression models. Results suggest that unpacking increases all students’ ability to demonstrate their knowledge by answering more MCQs correctly, but the effect is mostly driven by pathway students. On average, all students were 6 percent more likely to answer an unpacked question correctly than a packed question. Considering pathway and direct-entry students separately, results support our initial hypothesis that students in the pathway benefit more from reduced complexity of MCQs than direct-entry students.

Table 2. Unpacking Increases the Likelihood of Answering a Question Correctly.

Note: The model is a mixed-effects logistic regression. Time and student are nested variables at Level 2 and Level 3, respectively. Random effects are not reported when transformed to odds ratios.

The coefficient for unpacked questions is significant for the pathway (odds ratio of 1.08) and nonsignificant for direct-entry students (odds ratio of 1.04). Pathway students were 8 percentage points more likely to answer unpacked items correctly. This effect is notable given the smaller sample size of the pathway. Students in the pathway program answer fewer questions correctly compared with direct-entry students. As such, there could be ceiling effects for direct-entry students on their ability to demonstrate increased proficiency with unpacked questions. However, the claim that students in the pathway benefit more than direct-entry students is not supported when we include an interaction term of the unpacked item by participation in the pathway, which was nonsignificant in the model (results not reported), contrary to our initial hypothesis. It could be that we lack enough statistical power to include additional variables in an already saturated model. We examined MCQ questions by time period, packed/unpacked, program, and discipline and performed

Student Perceptions of MCQs

Students perceived that unpacked MCQs contained more information than packed MCQs. Yet, the additional words required for this unpacking were a burden for pathway students. Pathway students also identified the need to practice engaging with MCQs prior to an assessment. Last, all participants struggled to isolate MCQ sentence structure in relation to other elements of difficulty in MCQ question stems.

Finding 1: Students perceived that unpacked MCQs contain more information

Focus group participants were asked to compare a set of MCQs in both their packed and unpacked forms. The main area of comparison they identified was the number of words in the question stem. Participants noted that the unpacked MCQ versions had more words and also perceived that these words provided more information and context:

The multiple-choice questions have more details . . . when asking the question, so that is really helpful, more details . . .

And when you say include lots of details, what could be an example?

. . . Kind of develop the question. Just, not having a very, like, a five-word question for example. (pathway)

Here Andrea equates more words with more details to interpret the meaning of the MCQ stem, even though the unpacked MCQs parallel the packed questions in content. This suggests that simplifying the MCQ stem may help shift the question from relying on packed codes to unpacked codes, perceived by students to be more accessible (Bernstein 1971). This finding was consistent across pathway and direct-entry students.

Finding 2: Unpacked MCQs take longer to read

The inclusion of additional words in an MCQ stem requires more reading time. When asked to compare a packed and unpacked version of the same question, pathway student Jung explained he would prefer the packed MCQ due to time pressure:

I preferred the first one. Because the second one is really long. And, I have time limitations, so, I don’t want to waste time reading the question, this long question. (pathway)

Direct-entry participants noted a similar relationship between number of words and time to comprehend the question yet did not find this to be an issue:

You spend more time reading the question and I think, if time is something that plays an important part in test writing, then wasting the extra 5 to 10 seconds reading the question could screw you over for the next two questions.

Do you often feel rushed when you’re doing MCQ?

No. (direct entry)

Although direct-entry students expressed an awareness of MCQ reading time, they did not feel it was a challenge. This finding suggests that the positive effects of unpacking MCQs may be increased if EAL students had more time. It also indicates that instructors must be selective about which content to unpack, potentially keeping less important content nominalized to avoid unnecessarily long questions. In addition, instructors who do choose to unpack their MCQ stems into more clauses (and sentences) might need to recalculate how long their students will need.

Finding 3: EAL students attached importance to practice MCQs

Pathway students stressed the significance of practicing MCQs and of reviewing each MCQ after the test, suggesting that they perceived exposure to this form of assessment as important for their success. Dean, for example, argued that students should . . . study more on how to interpret the questions and highlight important parts of questions that actually can help to solve, or understand what is the question asking for them. (pathway)

This need for practice and repetition was echoed by other pathway students but not by direct-entry students. Some pathway students, when asked how instructors should use their resources to improve students’ ability to answer MCQs, felt that changing the question was less important than giving students opportunities to work with practice questions and to review the MCQs posttest. Students felt that it was beneficial to study the material in the same format as the assessment. This raises questions about whether it is MCQ structure that should be modified in introductory-level assessments or whether an alternative or complementary intervention would be to provide more embedded support for students on how to engage with this form of assessment (Goldenberg 2008; Janzen 2008).

Finding 4: Students struggled to isolate MCQ linguistic structure

Overall, students in all groups spent little time explicitly reflecting on MCQ sentence structure, even when prompted. Focus group questions were organized around a worksheet with MCQs that students ranked on difficulty, yet students tended to revert to discussion about how instructors and students approach these kinds of tests in general:

[For instructors to make these MCQs] more understandable, is there anything they could do?

I think teachers are trying their best to make it understandable for most of the students. And, it is quite understandable that sometimes, eh, professors [are] trying to trick the students. . . . However, [the] student also has to be ready to identify the trick that has been hidden in plain sight. Most of the misunderstanding comes from students, it’s not totally grasping the main idea of the topic, so it all boils down to study hard, study smart. (pathway)

In his response, pathway student Dean articulates that while instructors try to make questions accessible, they are sometimes trying to mislead students into selecting an incorrect response. Although Dean does not clarify whether the root of this MCQ “trick that has been hidden in plain sight” is conceptual or linguistic in nature, he places the responsibility on students to enhance their preparation. The advice to “study hard, study smart” does not distinguish between a student’s effort to understand and retain course content and their ability to decipher what is being asked in the MCQ. Like Dean, participants consistently paid more attention to study strategies and techniques for identifying the right MCQ response, overlooking possible issues in the structure. This may be indicative of a blind spot in students’ perceptions of and preparation for MCQ assessment, suggesting a greater need for MCQ literacy.

Discussion and Conclusion

This article draws upon sociological insights about language and the reproduction of inequality (Bourdieu and Passeron 1990) to explore how MCQs mediate linguistically diverse students’ ability to demonstrate disciplinary knowledge. We find a sociology-as-pedagogy (Halasz and Kaufman 2008) approach useful for considering MCQs, the standardized nature of which is often equated with objectivity in comparison with more open-ended assessment forms. Yet, as Bourdieu (1991) cautions, social inequalities can operate more effectively when they are perceived to have been alleviated.

Large introductory courses (where MCQs tend to be widely used) are the first form of gatekeeping for first-year students. By improving assessment tools so they measure what instructors think they are measuring, educators can support students in demonstrating their knowledge of course material rather than their ability to navigate implicit norms of academic discourse. The 8-percentage-point benefit we found for unpacked MCQs is particularly useful in the high-stakes pathway program, where future fields of study are, for some students, restricted by grades. Even if MCQs were worth only 50 percent of a student’s exam grade, an 8-point gain on the MCQ portion would add 4 points to the overall grade, which could easily mean attaining 72 percent rather than 68 percent. By considering the benefits of reduced linguistic complexity of MCQs in a university pathway program to support EAL students jointly with direct-entry university students, we are able to better understand item bias. Likewise, by considering the broader ecological setting and background of students in our investigation of MCQs, we contribute to a more robust ecological model of item response (Roberson and Zumbo 2019; Zumbo et al. 2015).

The findings have a number of implications. The quantitative data indicate that there are small benefits of MCQ unpacking for both EAL and ENL students: Both groups are more likely to answer an MCQ correctly after it has been unpacked. The benefits are likely greater for EAL students, though this requires further study. These data show that inclusive MCQ assessment practices are relevant not only for educators teaching EAL students but also for those teaching a linguistically heterogeneous student body. Instructors may follow much of our unpacking method by checking word frequency in the Corpus of Contemporary American English (2019) and by breaking up dense sentences. Peer review of MCQs is also recommended (Tarrant and Ware 2012). Colleges can offer MCQ writing workshops and incorporate broader discussion of linguistic diversity in assessment (Piller 2016).
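As one illustration of the two checks named above (word frequency and dense sentences), an instructor might run a draft MCQ stem through a short script before peer review. The following is only a minimal sketch and not the procedure used in this study: the rank table, the `max_rank` cutoff, and the `max_words` cutoff are hypothetical placeholders, and the ranks are invented for the example rather than taken from the Corpus of Contemporary American English.

```python
import re

# Hypothetical frequency ranks (1 = most common). These numbers are
# illustrative placeholders only, NOT values taken from COCA.
EXAMPLE_RANKS = {
    "the": 1, "of": 4, "is": 8, "which": 58, "best": 300, "group": 350,
    "describes": 2500, "norms": 9000, "hegemonic": 40000,
}


def flag_rare_words(stem, ranks, max_rank=5000):
    """Return words in the stem that are missing from the rank table
    or ranked rarer than max_rank (an arbitrary, assumed cutoff)."""
    words = re.findall(r"[a-z']+", stem.lower())
    flagged = []
    for word in words:
        rank = ranks.get(word)
        if (rank is None or rank > max_rank) and word not in flagged:
            flagged.append(word)
    return flagged


def flag_long_sentences(stem, max_words=20):
    """Return sentences in the stem longer than max_words words
    (an arbitrary, assumed cutoff for a 'dense' sentence)."""
    sentences = [s.strip() for s in re.split(r"(?<=[.?!])\s+", stem) if s.strip()]
    return [s for s in sentences if len(s.split()) > max_words]


if __name__ == "__main__":
    draft = "Which of the norms is hegemonic?"
    print(flag_rare_words(draft, EXAMPLE_RANKS))
    print(flag_long_sentences(draft))
```

A flagged word or sentence is a prompt for the instructor's judgment, not an automatic rewrite: disciplinary terms that students are expected to know should remain, while low-frequency words that are incidental to the concept being tested are candidates for unpacking.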

Beyond the quantitative data, the focus group findings add nuance to how MCQ assessment procedures can be adopted classwide to benefit EAL students. Unpacked MCQs were perceived by students to contain more content, thus shifting from a packed to an unpacked code. Yet, unpacking lengthened the MCQ stem, presenting challenges for EAL students who lacked time. Instructors are encouraged to think carefully about which components of the question require unpacking and which do not. A related consideration is the amount of time allowed for MCQ tests. Though both EAL and ENL students are aware of exam time pressure, only the EAL participants reported needing more time to read what the MCQ was asking. As such, instructors may build additional comprehension time into MCQ assessments with a linguistically diverse student body. Time is also relevant to inclusive assessment interventions. In one intervention, Abedi et al. (2004) encouraged providing English dictionaries to reduce the burden of English comprehension. However, using a dictionary undoubtedly cuts into valuable test-writing time.

The focus groups demonstrated that EAL students desired more practice with MCQs to become familiar with their linguistic structure. This finding suggests that pretest practice MCQs may offer linguistic benefits in addition to content retention (Thomas et al. 2020). To increase MCQ literacy, instructors may also give students access to review their exams after grades are returned.

Future research on inclusive MCQ assessments should investigate which elements of MCQs support students’ ability to demonstrate their knowledge. Likewise, we know that students in direct-entry courses can be highly heterogeneous, including in linguistic background. Future data collection would benefit from a more precise understanding of student demographics to investigate interaction effects and to determine when and how MCQ unpacking can reduce construct-irrelevant variance in assessment writing.

This research required sociology and psychology instructors to critically engage with linguistics in the process of unpacking MCQs. Through this process we learned that linguistic complexity and barriers are organized differently within written and verbal communication. While verbal communication is rooted in greater clausal complexity, with spoken language typically consisting of shorter clauses with more emphasis on the verb group (or action), written texts, and especially academic discourse, tend to focus more on abstract ideas, which require greater nominal complexity (Halliday 2007; Riccardi et al. 2020). Distinctions of this nature are of interest to sociologists, who have tended to explore, for example, class-based differences in interaction without distinguishing between the contexts in which these interactions are textually or verbally mediated (e.g., Lareau 2011). Future research may bridge sociology and linguistics to explore the complexity related to assessments and the reproduction of inequalities.

Footnotes

Editor’s Note

Reviewers for this manuscript were, in alphabetical order, Anne F. Eisenberg, Daphne Pedersen, and Jerrod Yarosh.

Funding

We are grateful for support from the SoTL SEED grants at the University of British Columbia Center for Teaching, Learning, and Technology for partially funding this work.