Nationwide, a majority of first-time students in community colleges are placed in developmental coursework in math, English, or both, and few of these students successfully navigate the curriculum to complete college-level courses in these subjects (Bailey, 2009). However, evidence indicates that a meaningful percentage of these students could achieve passing grades in college-level courses despite being assessed as needing developmental instruction, with the result that they are underplaced in the curriculum (Belfield & Crosta, 2012; Scott-Clayton, 2012). Consequently, researchers, policy makers, and college administrators increasingly are focusing their attention on improving assessment and placement processes to more accurately determine students’ capabilities and avoid unnecessary skill remediation with its corresponding risk of premature departure from college (Scott-Clayton, Crosta, & Belfield, 2014).

A number of studies have examined the use of alternative or supplementary information to more accurately place community college students in math and English. These studies generally indicate that high school achievement provides predictions of course outcomes in math and English that are superior to predictions based solely on placement exam scores (Bahr, 2016; Ngo & Kwon, 2015; Scott-Clayton et al., 2014). Thus far, however, research on this subject has shed little light on the particular measures of high school achievement that are the best predictors of course outcomes, how these predictors differ between math and English, and how they vary across the levels of skill represented in the coursework that composes the curriculum of each subject (e.g., in mathematics, statistics vs. college algebra vs. precalculus). Moreover, prior research provides only limited information about how these measures should be applied when placing students in math and English courses, particularly the thresholds on the measures above which achievement of a passing grade in a particular course would be expected.

This study uses a data mining technique known as decision tree analysis to investigate these issues, drawing on statewide high school and community college data from California. From a range of high school achievement measures, cumulative high school grade point average (GPA) emerges as the most consistently useful measure for identifying students who are likely to pass a given math or English course, sometimes supplemented by specific indicators of progress in the high school math or English curriculum. Building on the results of the analyses, placement recommendations are derived that reduce the risk of student underplacement relative to standardized placement exams and that are easily applied by community colleges in a variety of assessment and placement systems. These recommendations were validated with two separate testing samples.

Background

Access Versus Success

Increasing access to higher education has been a foundational principle of community colleges from their beginning over 100 years ago. To that end, most community colleges exercise relatively nonselective admissions, welcoming nearly all students who apply (Bahr & Gross, 2016). This open-door policy has created a contradiction for these institutions, however, because the majority of students who enroll are deemed to be underprepared for college-level coursework or have other circumstances that increase their likelihood of leaving college without achieving their educational goals (K. L. Hughes & Scott-Clayton, 2011; Juszkiewicz, 2014; Parker, Bustillos, & Behringer, 2010). It perhaps is not surprising then that the rates at which community college students complete postsecondary credentials or transfer to 4-year institutions tend to be low, compared with the graduation rates of 4-year institutions.

In recent years, scholars have questioned whether a promise of access to higher education, when combined with a comparatively small probability of completing college, can be considered access at all (Bahr & Gross, 2016; Shulock & Moore, 2007). This shift in discourse concerning community colleges has resulted in a sizable effort by scholars, administrators, policy makers, foundations, and other stakeholders to identify the institutional policies and practices that contribute to the loss of so many students along the path to a college degree. Because of this effort, it is now clear that many students become mired in developmental curricula in math, reading, or writing and never reach college-level coursework in these subjects, foreclosing educational opportunities in many fields of study.

Developmental Education

Developmental education, sometimes referred to as remedial or basic skills education, has been the most common response of community colleges to the perceived shortfalls in academic skills among newly admitted students (Scott-Clayton et al., 2014). Nationally, over two thirds of community college students enroll in at least one developmental course (Bahr & Gross, 2016), but an even larger percentage of community college students are placed into developmental coursework, with some students electing not to enroll (Bailey, Jeong, & Cho, 2010). Thus, the considerable majority of community college students are affected by developmental education, either through the time and cost of taking developmental courses or by being prevented from enrolling, or advised not to enroll, in college-level coursework because they have not completed the prerequisite developmental courses.

Assessed needs for developmental education vary greatly across community college students. Some students are placed just one level below college readiness in math, reading, or writing and need only complete one developmental course before advancing into college-level coursework in that subject. Other students are placed two or more levels below college readiness in one, two, or all three subjects and may require a year or more to complete the developmental sequences. Developmental courses usually are presented by colleges as credit bearing (i.e., students earn credits in the courses), but these credits typically do not count toward a postsecondary credential. Thus, a student may spend considerable time and money in developmental education without making progress toward a degree.

Moreover, although developmental education is intended to prepare students with the reading, writing, and mathematics skills deemed necessary to pass college-level courses, evidence regarding the effectiveness of remediation is mixed at best (Attewell, Lavin, Domina, & Levey, 2006; Bahr, 2008; Bettinger & Long, 2005). Long developmental sequences, in particular, present a substantial obstacle to students’ progress and attainment, hindering rather than helping some students (Bahr, 2012; Hayward & Willett, 2014). Thus, it is clear that developmental education, a primary means through which the promise of access to higher education is intended to be fulfilled, is not always helping students in proportion to the direct and opportunity costs that it presents (Burdman, 2012). More research is needed on the ways in which developmental education deters, rather than encourages, access and success.

In Search of a Solution

Efforts to improve developmental education and thereby increase community college students’ chances of completing college-level coursework and graduating have taken several forms. Among these, stakeholders have sought to redesign developmental curricula to accelerate the rate at which students who are directed to remedial coursework are able to advance into college-level coursework (Bailey et al., 2016; Burdman, 2012; Hayward & Willett, 2014; Kalamkarian, Raufman, & Edgecombe, 2015; Pearson, 2014).

Nodine, Dadgar, Venezia, and Bracco (2013) describe three models of acceleration. The first is corequisite remediation, sometimes referred to as mainstreaming with supplemental support (Bailey et al., 2016), in which students who are assessed as needing remediation are placed directly into college-level coursework but with supplementary support courses. Evidence indicates that corequisite remediation can improve the rate at which students complete college-level coursework in a subject (e.g., Cho, Kopko, Jenkins, & Jaggars, 2012; Jenkins, Speroni, Belfield, Jaggars, & Edgecombe, 2010). The second is developmental compression in which remedial curricula are modified to reduce the number of courses required to reach college-level coursework (Edgecombe, Jaggars, Xu, & Barragan, 2014). In math, this often is accomplished by differentiating a curricular pathway to degrees in the sciences and engineering, requiring advanced mathematics, from a pathway leading to other programs of study. The last is developmental modularization in which traditional remedial courses are replaced with “discrete learning units” (Pearson, 2014, p. 5) intended to strengthen particular competencies (Bickerstaff, Fay, & Trimble, 2016).

While it is unquestionably important to improve developmental curricula, it also is incumbent on colleges to ensure that assessment and placement processes are as accurate as possible. Of particular importance is minimizing the number of students who are deemed unprepared for college-level courses despite having the ability to achieve passing grades in these courses. Inaccurate placement of this sort is an obstacle in students’ paths to credentials, elevates their risk of premature departure from college, and contributes to the sometimes-unfulfilled promise of opportunity offered by community colleges (Burdman, 2012). In other words, even minimal remediation, when unnecessary, is problematic. Hence, the decision to place a student in developmental coursework should not be taken lightly and should be based on a thorough assessment of students’ preparation and readiness for college-level coursework.

To assess students’ readiness, community colleges in many states rely heavily on standardized placement exams, such as ACCUPLACER® (Burdman, 2012; Fields & Parsad, 2012; K. L. Hughes & Scott-Clayton, 2011). Placement exams have noteworthy strengths and weaknesses. On one hand, they can be largely automated, making them relatively inexpensive to administer and requiring few support personnel (Jaggars, Hodara, & Stacey, 2013; Scott-Clayton et al., 2014; Sedlacek, 2004). Placement exams also have the appearance of consistency and even-handedness, which reinforces the perceived legitimacy of the placement process (Sedlacek, 2004).

On the other hand, placement exams provide only a narrow view of the complex concept of college readiness (Burdman, 2012). Evidence indicates that their capacity to predict performance in coursework is modest at best (Bahr, 2016), and that placement exams are more likely to underestimate students’ capabilities than to overestimate them (Scott-Clayton, 2012). As a result, some students who have the capacity to succeed in college-level courses instead are placed in developmental coursework, increasing their risk of departure from college.

The systematic underplacement of students may be driven in part by the fact that students frequently are unaware of the consequences of their placement exam scores until after they have taken the exams and, thus, do not prepare adequately (Fay, Bickerstaff, & Hodara, 2013; Hodara, Jaggars, & Karp, 2012; Nodine et al., 2013). Unfortunately, evidence indicates that simply notifying students of the consequential nature of the placement exams is not sufficient to improve performance (Moss, Bahr, Arsenault, & Oster, 2018).

Recognizing these weaknesses, community colleges across the country are exploring the use of alternative and supplemental measures of students’ readiness for college-level coursework (Bailey et al., 2016; Burdman, 2012; Education Commission of the States, 2015). Among these measures, high school GPA has been found to be a strong predictor of performance in college-level courses in math and English (Bahr, 2016; Scott-Clayton et al., 2014), a finding confirmed in 4-year institutions as well (Geiser & Santelices, 2007; Hiss & Franks, 2014). In addition, Ngo and Kwon (2015) found math achievement in high school (e.g., highest math course completed and grade achieved) to be a useful supplement to standardized exams for placing students in the math curriculum. J. Hughes and Petscher (2016) mention a number of other measures of high school achievement that might be examined in future research, such as credits completed in math or English, and when particular math or English courses were taken, among others.

The value of high school achievement for predicting performance in college courses likely stems from the fact that measures of high school achievement are based on information collected over a lengthy period of time by many instructors in a variety of learning contexts. Moreover, they reflect many means by which students may demonstrate their academic capabilities (e.g., exams, papers, oral reports), and they also capture a range of behaviors and skills that are necessary for success in school (e.g., timeliness, organization, diligence, planning). In contrast, placement exams are completed in a single, comparatively brief sitting and are largely unidimensional, reflecting a single mode of academic capability.

Measures of high school achievement are not without potential limitations, of course. Their applicability to older students who have been out of high school for a number of years, students who did not graduate from high school, students who were home-schooled, and students who did not attend a U.S. high school is unclear. Nevertheless, evidence indicates that high school achievement can be used to accurately place a large fraction of first-time community college students in the math and English curricula, reducing systematic underplacement resulting from reliance on standardized placement exams.

This Study

The body of research regarding the use of high school achievement measures to place students in the community college curriculum is growing, but several critical questions remain to be addressed. First, it is not clear which measures of high school achievement are the most useful for predicting performance in college-level coursework in math and English. For example, are broad indicators of academic achievement (e.g., overall high school GPA, 12th grade GPA) stronger or weaker predictors of performance in college-level math than are narrower measures of academic performance (e.g., highest successfully completed math course in high school, grade achieved in the most recent high school math course)? Moreover, do the indicators that are most useful for predicting performance in college-level math differ from those that are most useful for predicting performance in college-level English?

Second, the dichotomy of college-level versus developmental coursework belies the range of skill levels represented in the curriculum that composes these categories. Developmental math, for example, can be composed of four or more sequential courses, from arithmetic through intermediate algebra. College-level math includes college algebra, statistics, general education math, trigonometry, precalculus, calculus, and so on, and these courses differ meaningfully in the mathematical competencies demanded of students. Currently, research on the use of high school achievement measures to place community college students cannot speak conclusively to how consistent the predictors of performance are across the range of courses within a given subject. To illustrate with college-level math, the most useful predictors of performance in statistics may differ from the most useful predictors of performance in college algebra, which, in turn, may differ from the most useful predictors of performance in precalculus, and so on. Prior research has offered only limited insights into distinctions between college-level math courses (Bahr, 2016; Scott-Clayton, 2012).

Finally, once the most useful predictors of performance across the math and English curricula are identified, the thresholds of achievement on these predictors above which success in a given course is likely still must be determined. Phrased as a question, how may these predictors be applied across the range of courses in a given subject to maximize students’ chances of successfully completing a college-level course? For example, if high school GPA is determined to be an important predictor of performance in transfer-level English, how high must a student’s high school GPA be before achievement of a passing grade in transfer-level English is likely?

To summarize, continuing to advance research, policy, and practice concerning the use of high school achievement measures to place community college students in the math and English curricula requires answers to at least two major questions, which are as follows:

What are the useful predictors of performance in developmental and college-level math and English coursework, and to what extent do these predictors vary across the levels of skill represented in the curriculum in each subject?

What are the appropriate thresholds of achievement to apply when placing students in the math and English curricula?

This study addresses these questions using a data mining technique known as decision tree analysis and drawing on high school and community college data from California.

California Policy Context

The California Community College (CCC) system is composed of 113 community colleges and serves approximately 2.1 million students per year, accounting for about one fifth of all community college students in the United States (California Community Colleges Chancellor’s Office [CCCCO], 2017). Approximately 80% of newly admitted students in the CCC system are referred to developmental education (Mejia, Rodriguez, & Johnson, 2016). Latino/a and African American students are overrepresented, with 87% of new students in these groups enrolling in developmental education as compared with 70% of White students (Mejia et al., 2016). Low-income students, too, are overrepresented, with 86% enrolling in developmental education (Mejia et al., 2016).

Evidence indicates that the majority of CCC students who enroll in developmental math or writing ultimately do not complete a college-level course in the subject (Bahr, 2012). In a recent study, just over one quarter (27%) of developmental math students completed a college-level math course successfully, whereas less than one half (44%) of developmental English students completed a college-level English course successfully (Mejia et al., 2016).

The decision in Romero-Frias et al. v. Mertes et al. (1988) prohibited California’s community colleges from relying solely on standardized exams for student assessment and placement. Instead, the colleges must consider additional information about student academic readiness, referred to as “multiple measures” in the state guidelines (CCCCO, 2011, p. 4). Interpretations of this requirement and the resulting placement practices vary widely across colleges, but standardized placement exams remain central and frequently dominant in the placement process (WestEd, 2011). In fact, in a statewide survey, all of the responding colleges indicated that they rely at least in part on placement exams in math and English (Rodriguez, Mejia, & Johnson, 2016).

In addition to placement exams, colleges reported using an average of two other measures of readiness in math and English. Roughly three in five colleges supplement the results of placement exams with a student’s grade in his or her last high school math class, and two fifths consider grade in last high school English class. About one third of colleges use high school GPA to assess math and English readiness. Other common measures include the recommendation of an instructor or counselor at the college and results from the state’s Early Assessment Program (EAP), which is built largely around standardized testing in 11th grade. These were reported by approximately one third and three fifths of responding colleges, respectively. A closer look at the nine community colleges of the Los Angeles County Community College District confirmed both the variability in practices observed in the statewide survey and the centrality of standardized placement exams in the assessment of student readiness (Melguizo, Kosiewicz, Prather, & Bos, 2014; Ngo & Kwon, 2015).

It is important to note, however, that the additional assessment measures observed in these studies were not necessarily applied to all new students (Rodriguez et al., 2016). For example, some colleges confined the use of additional measures to recent high school graduates, to students who specifically requested that they be used, or to students who challenged their initial placement recommendation. Thus, the level of variation in assessment practices across the colleges of the CCC system may not be reflected to the same degree across the student body of the CCC system, with perhaps a majority of students not benefiting from a multiple-measures assessment approach.

There are few state guidelines to assist California’s community colleges in implementing multiple-measures assessment and placement processes, which perhaps is unsurprising in light of the evident variability in placement practices. Most of the existing requirements and guidelines concern the validation of placement tests (CCCCO, 2001), while colleges currently are not required to validate their multiple measure policies (CCCCO, 2011, pp. 2.4-2.5). Nevertheless, the Academic Senate for California Community Colleges (ASCCC) recently reaffirmed the importance of multiple-measures placement practices (ASCCC, 2013), and an ASCCC task force concluded that “the inclusion of multiple measures in our assessment processes is an important step toward the goal of improving the accuracy of assessments” (Grimes-Hillman, Holcroft, Fulks, Lee, & Smith, 2014, p. 7).

Data and Measures

Data Source

Data for this project were drawn from Cal-PASS Plus (CPP), a project of the CCCCO. CPP is a voluntary, intersegmental data repository that includes educational and workplace outcome data on California residents. CPP was initiated in the mid-2000s, and many public K-12 schools in California, all of the state’s community colleges, and some public 4-year institutions now contribute data to CPP. Data for the community colleges were provided by the CCCCO and are available from Summer 1992 to nearly present day. Participation in CPP by K-12 schools initially was low but has grown over time, with 57% of public high school districts and 79% of public high school students accounted for in the 2014-2015 academic year.

Sample

The theoretical universe for this study is composed of all CCC students who enrolled in a developmental or college-level math or English course between Summer 1992 and Spring 2015. For the purposes of this study, we define developmental math as including arithmetic, pre-algebra, beginning algebra, and intermediate algebra, whereas college-level math includes general education math, statistics, college algebra, trigonometry, precalculus, and calculus I (Bahr, 2012; Bahr, Jackson, McNaughtan, Oster, & Gross, 2017). Like developmental math, developmental English includes four levels of coursework, but college-level English is limited to one category, namely, college composition and writing. We analyze each level of developmental math and English separately, just as we analyze each category of college-level math and English separately.

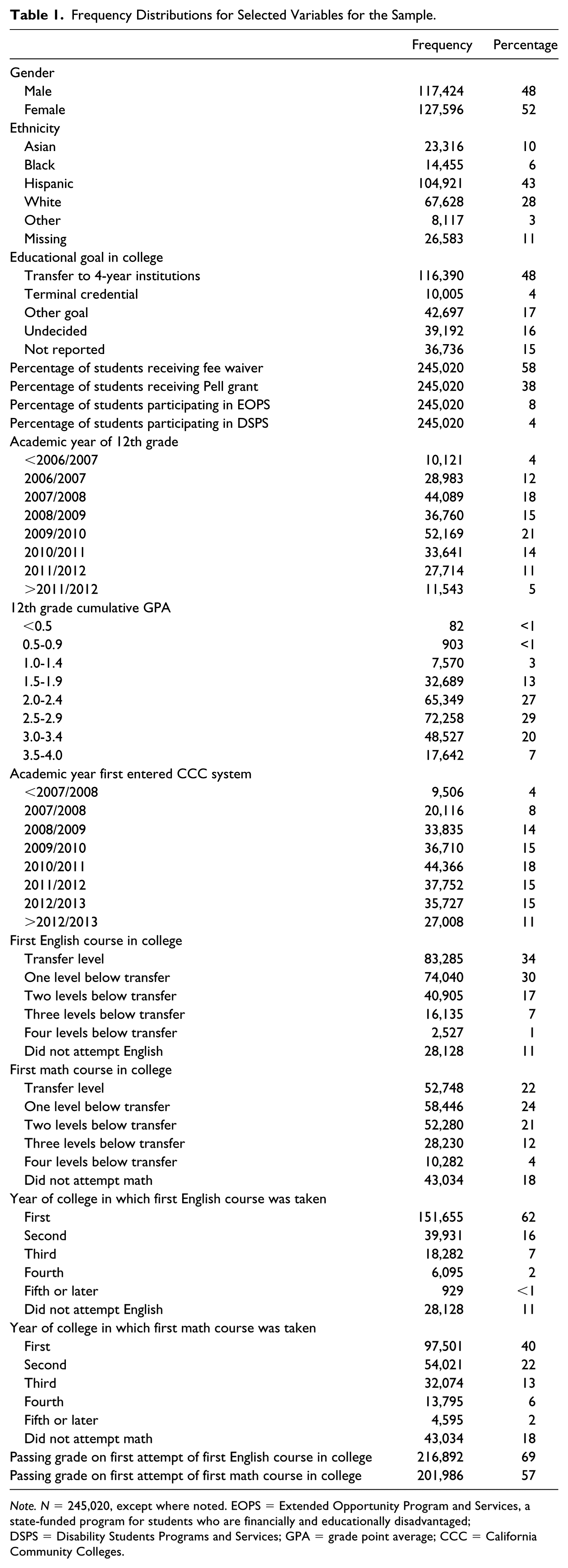

The primary limitation imposed on the analytical sample is that students have a valid academic year GPA for the 9th, 10th, 11th, and 12th grades. Because K-12 districts varied in the amount of historical data that they provided to CPP, the analytical sample is limited to 245,020 students, with most (96%) of these having entered the CCC system between Fall 2007 and Summer 2014.

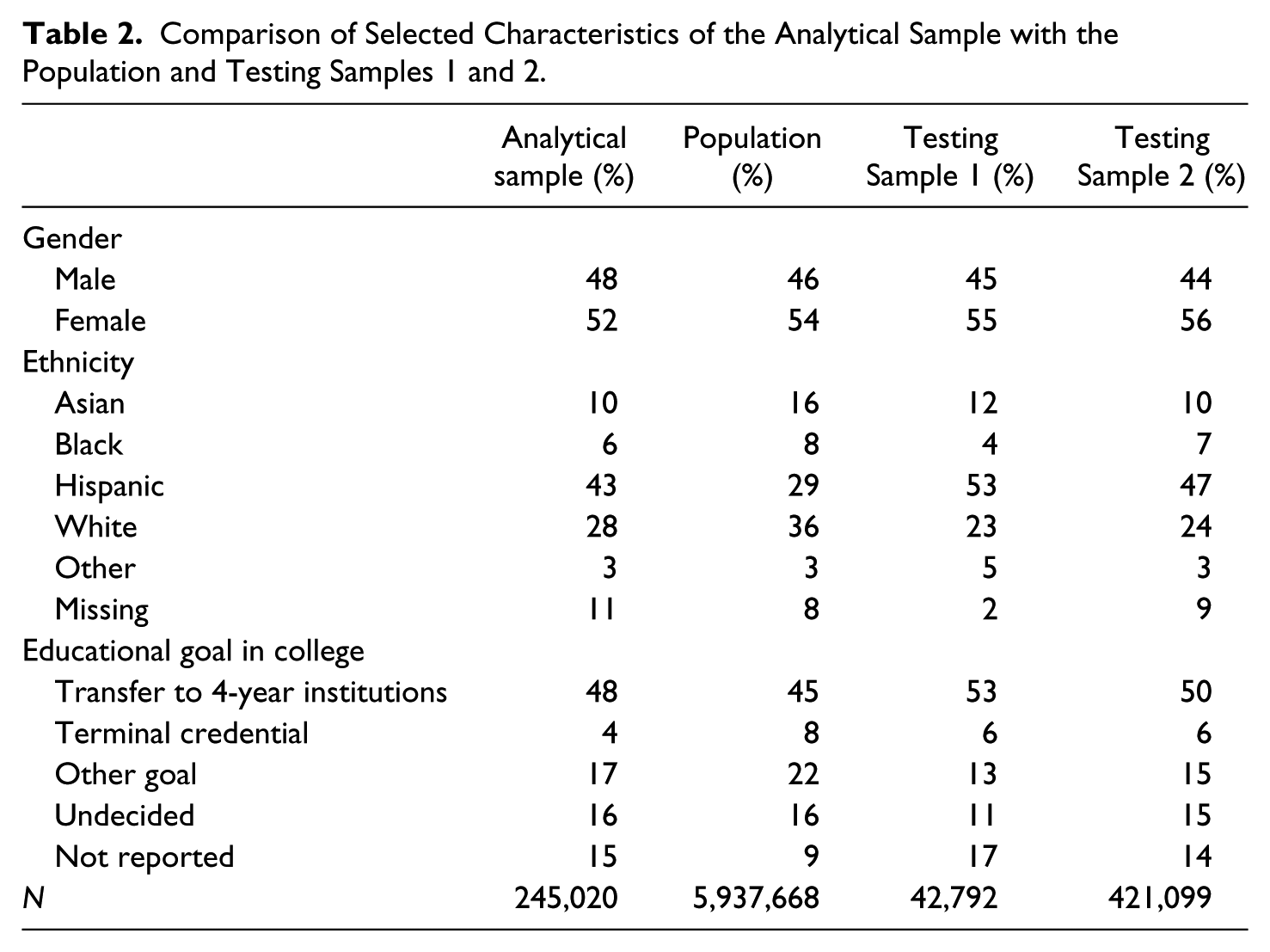

Of this sample, 216,892 students enrolled in a developmental or college-level English course, and 201,986 students enrolled in a developmental or college-level math course, as defined in this study. Note that the sum of these figures is greater than the total number of students in the sample because 173,858 students enrolled in both math and English. Note also that we did not impose on the sample the requirement that these math or English enrollments occur in a student’s first year of college. Nevertheless, 70% of the students in our sample who enrolled in a developmental or college-level English course did so in their first year, as did 48% of students who enrolled in a developmental or college-level math course. Characteristics of the students in the sample are presented in Table 1. In addition, a comparison of selected demographic characteristics for the analytical sample, the population of all CCC students who enrolled in a developmental or college-level math or English course between Summer 1992 and Spring 2015, and Testing Samples 1 and 2 is provided in Table 2. We note that the analytical sample is reasonably similar to the larger population except that the sample has a greater share of Hispanic students and a smaller share of White and Asian students, likely reflecting shifting state demographics combined with the fact that most students in the sample entered college in the past decade (between Fall 2007 and Summer 2014).
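The overlap between the math and English enrollment counts can be checked by inclusion-exclusion. In the worked equation below, the symbols E and M are introduced here only for illustration and denote the sets of students with an English enrollment and a math enrollment, respectively.

```latex
\[
|E \cup M| = |E| + |M| - |E \cap M| = 216{,}892 + 201{,}986 - 173{,}858 = 245{,}020
\]
```

The result matches the size of the analytical sample reported above.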

Table 1. Frequency Distributions for Selected Variables for the Sample.

Note. N = 245,020, except where noted. EOPS = Extended Opportunity Programs and Services, a state-funded program for students who are financially and educationally disadvantaged; DSPS = Disabled Students Programs and Services; GPA = grade point average; CCC = California Community Colleges.

Table 2. Comparison of Selected Characteristics of the Analytical Sample with the Population and Testing Samples 1 and 2.

Measures

The primary outcome of interest for each analytical sample was a dichotomous indicator of the achievement of a passing grade on the first attempt of a student’s first math or first English course, focusing on the developmental and college-level math and English courses described previously. A passing grade is defined as a grade of A, B, C, Credit, or Pass, while a non-passing grade is defined as a grade of D, F, No Credit, No Pass, or Withdrawal. Incomplete grades (e.g., IA, IB, IC, ID, IF) were recoded to their default value.
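The outcome coding described above might be sketched as follows. This is an illustration only, not the code used in the study: the function name `is_passing` and the grade strings are hypothetical, and the sketch assumes that the default value of an incomplete grade such as IB is the letter that follows the I.

```python
from typing import Optional

# Grade categories as described in the text (upper-cased for matching).
PASSING = {"A", "B", "C", "CREDIT", "PASS"}
NON_PASSING = {"D", "F", "NO CREDIT", "NO PASS", "WITHDRAWAL"}


def is_passing(grade: str) -> Optional[bool]:
    """Return True for a passing grade, False for a non-passing grade,
    and None for a grade string this sketch does not recognize."""
    g = grade.strip().upper()
    # Assumption: incomplete grades IA, IB, IC, ID, IF default to the
    # letter grade that follows the leading "I".
    if len(g) == 2 and g[0] == "I" and g[1] in "ABCDF":
        g = g[1]
    if g in PASSING:
        return True
    if g in NON_PASSING:
        return False
    return None
```

Returning `None` for unrecognized strings, rather than guessing, keeps ambiguous records out of the binary outcome until they are reviewed.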

To test whether results differ between students who transition directly from high school to college with no delay (i.e., direct matriculants, hereafter DMs) and students who delay starting college by one or more years after the end of high school (nondirect matriculants, hereafter NDMs), we created two sets of models. The DM models use data only through 11th grade because DM students are still enrolled in high school when placement decisions are made, making 12th grade academic performance unobserved with respect to placement decision-making by colleges. More specifically, at many of the colleges of the CCC system, application and assessment of students for the Fall term begins in January, with colleges often working closely with local high schools in the spring term. The school year for high schools typically does not end until mid-June, and grades frequently are not finalized until July, when registration at the community colleges already is underway. Hence, 12th grade transcripts often are unavailable for students who matriculate directly to community college after high school, which is the reason why we exclude 12th grade academic performance from the DM models. In contrast, the NDM models use all data through 12th grade under the presumption that any delay in matriculating to college allows time for 12th grade transcripts to be finalized and become available for use in placement decisions. Despite this difference between the DM and NDM models, the analytical samples for the two sets of models were the same.

The independent variables used to predict achievement of a passing grade in the student’s first math course for the DM analyses included cumulative high school GPA (measured on a 4-point scale) at the end of 11th grade; grade points achieved in math in each of 9th grade, 10th grade, and 11th grade (again measured on a 4-point scale); and dichotomous indicators of whether a student ever attempted and, if so, whether they achieved a grade of B or higher, B–, C+, or C in each of the following courses: Algebra 1, Algebra 2, geometry, trigonometry, precalculus, calculus, statistics, or any Advanced Placement (AP) math.

For the NDM math analyses, the independent variables differed only in that 12th grade coursework and grade points also were included, and cumulative high school GPA as of the end of 12th grade was substituted for 11th grade cumulative GPA. In addition, we included a measure of delay of math, which we operationalized as the number of primary terms (excluding summers) between completion of the last high school math course and enrollment in the first community college math course.

The independent variables for the DM English analyses were similar and included cumulative high school GPA as of the end of 11th grade; grade points achieved in English in each of 9th grade, 10th grade, and 11th grade; and dichotomous indicators of whether a student ever attempted and, if so, whether they achieved a grade of B or higher, B–, C+, or C in each of the following courses: English as a Second Language (ESL), any Expository English, a California State University (CSU) designed Expository Reading and Writing Course (ERWC), developmental English, or AP English. The independent variables for the NDM analyses differed in the substitution of cumulative GPA at the end of 12th grade for 11th grade cumulative GPA, the inclusion of grade points achieved in English in 12th grade, and a measure of delay between last high school English course and first community college English course.

Method of Analysis

Decision Trees

We use decision tree methods (Breiman, Friedman, Olshen, & Stone, 1984; Dietterich, 2000; Murthy, 1998; Quinlan, 1986) to analyze the relationships between the measures of high school achievement and successful completion of students’ first math and English courses in community college. Decision trees were developed as a machine learning and data mining tool but are finding use in education research as well (Ahmed & Elaraby, 2014; Willett, Hayward, & Dahlstrom, 2008). Decision trees draw on a set of researcher-specified variables to classify observations iteratively into groups of progressively smaller size. At each step of the classification process, the algorithm selects the one variable and, further, the threshold of values on that variable that best sort the observations into two groups, seeking to maximize the homogeneity of a specified outcome within each group. Information measures such as the Gini–Simpson index are commonly used to determine within-group homogeneity. The algorithm stops classifying observations when the tree reaches a specified complexity parameter, chosen to minimize cross-validation error.
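To make the split-selection step concrete, the sketch below searches a single predictor (for example, cumulative high school GPA) for the threshold that minimizes the size-weighted Gini impurity of the two resulting groups. For a binary outcome with pass rate p, the Gini–Simpson index reduces to 2p(1 − p). The function names are hypothetical, and this is a simplified illustration rather than the rpart algorithm, which also searches across all predictors, handles missing values, and prunes by a complexity parameter.

```python
from typing import List, Optional, Tuple


def gini(outcomes: List[int]) -> float:
    """Gini impurity of binary outcomes (1 = pass, 0 = non-pass)."""
    if not outcomes:
        return 0.0
    p = sum(outcomes) / len(outcomes)
    return 2.0 * p * (1.0 - p)


def best_threshold(
    values: List[float], outcomes: List[int]
) -> Tuple[Optional[float], float]:
    """Find the cut point c (left: value < c, right: value >= c) that
    minimizes the size-weighted Gini impurity of the two groups.
    Returns (None, parent impurity) if no cut improves on the parent."""
    n = len(values)
    best_cut: Optional[float] = None
    best_score = gini(outcomes)
    distinct = sorted(set(values))
    # Candidate cuts are midpoints between adjacent distinct values.
    for lo, hi in zip(distinct, distinct[1:]):
        cut = (lo + hi) / 2.0
        left = [y for x, y in zip(values, outcomes) if x < cut]
        right = [y for x, y in zip(values, outcomes) if x >= cut]
        score = (len(left) * gini(left) + len(right) * gini(right)) / n
        if score < best_score:
            best_cut, best_score = cut, score
    return best_cut, best_score
```

With made-up GPAs `[2.0, 2.5, 3.0, 3.5]` and outcomes `[0, 0, 1, 1]`, `best_threshold` returns a cut of 2.75 with a weighted impurity of 0.0, because that cut separates the two groups perfectly.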

Decision tree methods offer several advantages over traditional regression methods for this study. First, decision trees are relatively assumption-free; they do not have implicit distributional or relational assumptions, reducing challenges to the potential fit of the data or models. Second, decision tree methods are less restrictive in dealing with missing data than are regression methods (Ding & Simonoff, 2010). Third, decision trees support the analysis of nonlinear and nonadditive relationships without variable transformations or the specification of interactions. Finally, decision trees categorize students into discrete groups according to their likelihood of a particular outcome using simple if-then logical statements. These statements can be presented visually in a manner that is intuitive and relatively easy to understand for lay audiences. This last aspect of decision tree methods is especially important for this study because it facilitates the communication of results to college administrators, educators, and practitioners. In turn, clear communication helps to garner support for changes to institutional placement practices that may result from bringing research findings to bear. All decision trees in this study were produced using R with the rpart package (Therneau, Atkinson, & Ripley, 2015).

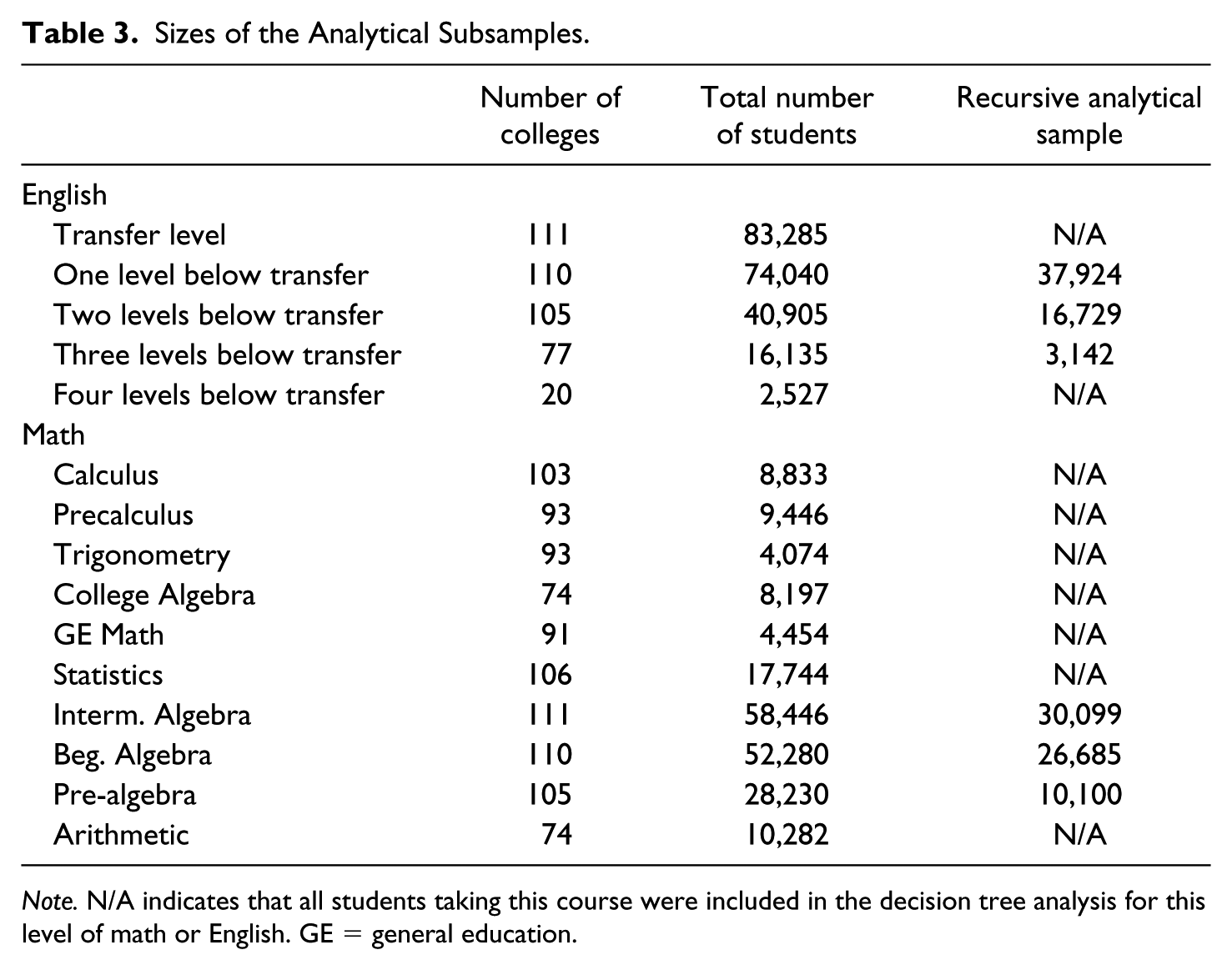

We developed decision trees predicting achievement of a passing grade in each of the nine levels of math coursework and four levels of English coursework, separately for DMs and NDMs. We did not develop decision trees for the lowest level of math and English because these courses often are open access without readiness requirements. We thus developed a total of 18 decision trees for math and eight decision trees for English.

Recursive Process

Given that our outcomes were binary indicators of successful completion of particular courses, each reflecting a level of skill in a curricular hierarchy, we developed a classification tree for each course using a recursive approach. In particular, we estimated models starting with the college-level courses in each subject, using subsamples of our larger analytical sample composed of all students whose first math or English course was one of these college-level courses. For example, the students whose first math course was Calculus I (first-semester calculus) constituted the subsample used for the Calculus I decision tree, whereas the students whose first math course was precalculus constituted the subsample used for the precalculus decision tree, and so on.

Then, once placement rules were determined for all college-level courses in a subject, students who met the placement rules for one or more of these college-level courses were excluded from the analytical subsample used to develop the decision tree for the highest developmental course in the subject, as well as the subsamples used for all lower level courses. 1 This exclusion occurred regardless of the actual skill level of a student’s first math/English course. For example, a student whose first math course was intermediate algebra (the highest skill developmental math course) was included in the analytical subsample used to develop the intermediate algebra decision tree only if the student’s first math course was intermediate algebra and the student’s high school achievement would not have qualified him or her to enroll in one or more of the college-level math courses, given the decision trees for the college-level math courses and their corresponding placement rules. This recursive process was continued for the next highest developmental course in the subject (e.g., beginning algebra in mathematics), with students meeting placement rules for the highest developmental course or one or more college-level courses being excluded from the analytical subsample.

Our recursive approach prevents the artificial inflation of expected pass rates in the decision tree output, as students placing into higher level courses were excluded from lower level trees. Table 3 provides the number of students who attempted each math and English course as their first course in the subject, as well as the size of each analytical subsample resulting from our recursive approach.
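The exclusion logic of the recursive process can be sketched as follows. This is a simplified illustration under stated assumptions: the record fields (`first_course`, `hs_gpa`), the helper `analytical_subsample`, and the single stand-in rule `meets_college_rule` are all hypothetical, and the actual placement rules come from the fitted trees rather than from a fixed GPA cutoff.

```python
from typing import Callable, Dict, List

Student = Dict[str, object]
Rule = Callable[[Student], bool]


def analytical_subsample(
    students: List[Student], course: str, higher_rules: List[Rule]
) -> List[Student]:
    """Students whose first course is `course` and who meet no higher-level rule."""
    return [
        s
        for s in students
        if s["first_course"] == course
        and not any(rule(s) for rule in higher_rules)
    ]


# Hypothetical rule standing in for one college-level placement rule.
def meets_college_rule(s: Student) -> bool:
    return float(s["hs_gpa"]) >= 3.0


# Hypothetical student records.
students = [
    {"id": 1, "first_course": "intermediate algebra", "hs_gpa": 3.4},
    {"id": 2, "first_course": "intermediate algebra", "hs_gpa": 2.5},
    {"id": 3, "first_course": "statistics", "hs_gpa": 2.7},
]

# College-level trees use every student who started in that course.
stats_sample = analytical_subsample(students, "statistics", [])

# Developmental trees drop students who would have qualified for a higher course.
int_alg_sample = analytical_subsample(
    students, "intermediate algebra", [meets_college_rule]
)
```

For each successively lower course, the list of higher-level rules would grow by the rules derived from the tree just fitted.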

Table 3. Sizes of the Analytical Subsamples.

Note. N/A indicates that all students taking this course were included in the decision tree analysis for this level of math or English. GE = general education.

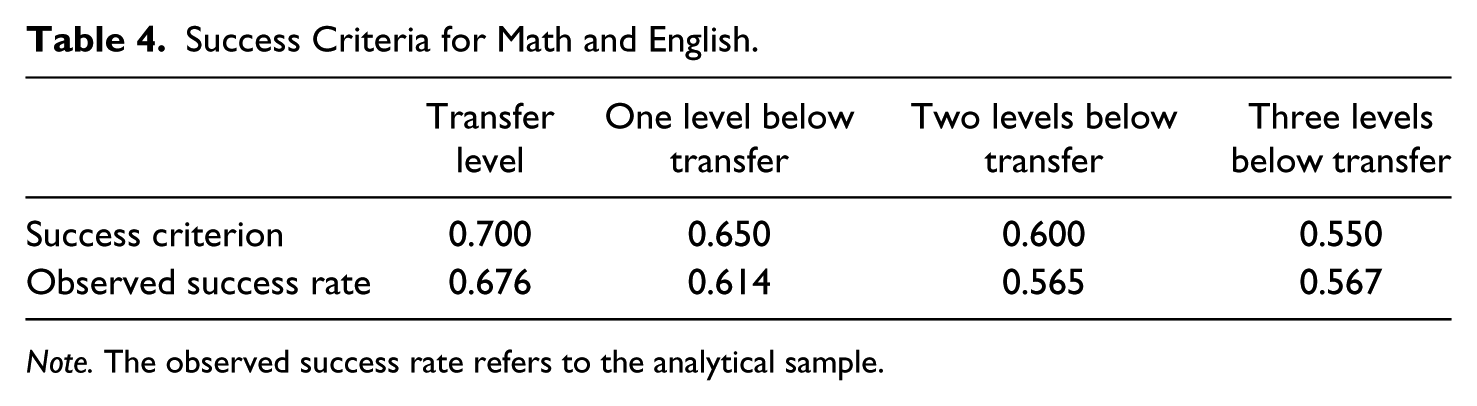

Success Criteria

The decision tree for each course (whether math or English) creates distinct groups of students, referred to as terminal nodes, each of which has a predicted success rate―the proportion of students in a given group who are predicted to achieve a passing grade on their first attempt of the focal course. Determining which terminal nodes define the curricular placement rules for a course requires an a priori success criterion, constituting the minimum expected course success rate. Nodes with a predicted success rate at or above this criterion then define the placement rules for the course. In other words, the groups of students identified as eligible to be placed at a specific level of coursework have a predicted success rate equal to or higher than the criterion.

Success criteria for this study were selected conservatively to reflect historic pass rates in the courses, to ensure that students placed under rules developed from the decision trees were likely to succeed in these courses, and that the application of such placement rules would not negatively impact overall course pass rates. Table 4 summarizes the success criteria selected for this study, as well as the actual (observed) rate of success in each course for students in the sample.

Table 4. Success Criteria for Math and English.

Note. The observed success rate refers to the analytical sample.

Deriving Placement Recommendations From Decision Tree Analyses

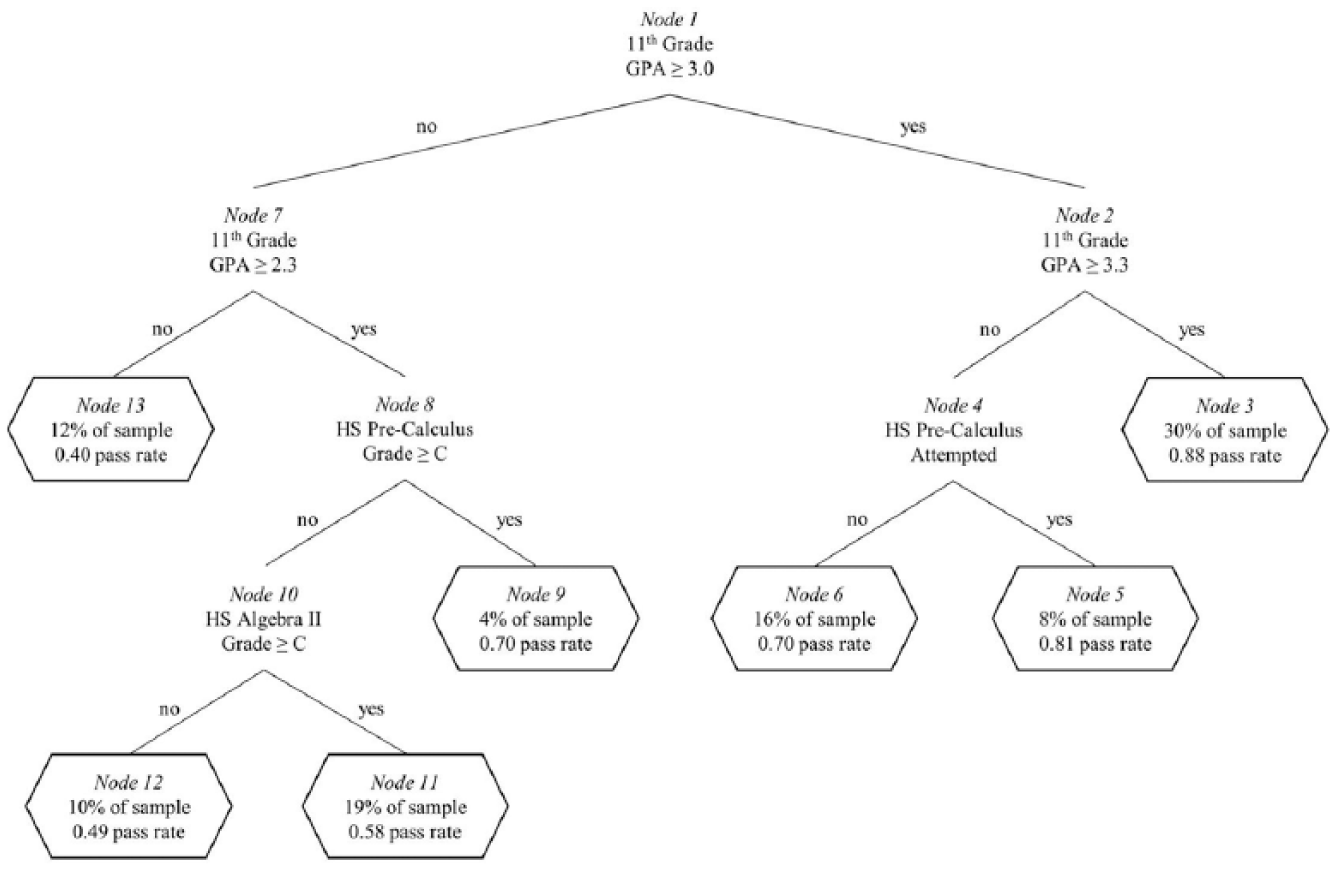

In Figure 1, we present a notated version of the decision tree that resulted from our analysis of performance (i.e., achieving a passing grade or not) in college statistics as a first math course. This decision tree is for DMs, which, as explained earlier, assumes that 12th grade transcript information is unavailable in the assessment process. The sample on which this decision tree is based includes all students who enrolled in statistics as their first community college math course and who had valid data on the key predictors of performance, described earlier. In the remainder of this section, we provide an interpretation of this decision tree, and then we explain the relationship between the results of this particular decision tree analysis and the development of placement recommendations for college-level statistics.

Figure 1. Decision tree for college-level statistics for students matriculating directly from high school.

The root node (Node 1) in Figure 1 splits the total analytical sample by cumulative high school GPA as of the end of 11th grade. The right-hand branch includes all students who have cumulative high school GPAs equal to or greater than 3.0, while the left-hand branch includes the remaining students, all of whom have cumulative high school GPAs of less than 3.0. The appearance of cumulative GPA in the root node, at the highest level of the decision tree, indicates that it is a key predictor of achieving a passing grade in college statistics.

Reading down the right-hand side of the tree, the first internal node (Node 2) splits the subsample of students with cumulative high school GPAs at or above 3.0 into a subgroup with GPAs at or above 3.3 (the right-hand branch) and a subgroup with GPAs between 3.0 and 3.2 (the left-hand branch). To the right is a terminal node (Node 3). Node 3 contains 30% of the overall DM sample for statistics and has a predicted probability of successfully passing statistics of 0.88. In other words, 88% of community college DMs with cumulative high school GPAs of 3.3 or higher are predicted to achieve a passing grade in college statistics as their first math course. Of note, this terminal node combined with the other terminal nodes in Figure 1 accounts for 100% of the sample that was used to generate this decision tree.

In the left-hand branch from Node 2 are students with cumulative high school GPAs between 3.0 and 3.2. In Node 4, these students are split by whether they enrolled in precalculus in high school, a simple dichotomous variable assigned a value of 1 if the student attempted precalculus prior to the 12th grade and 0 otherwise. Students who did so, and who had cumulative high school GPAs between 3.0 and 3.2, are found in Terminal Node 5. They account for 8% of the DM sample and have a predicted 0.81 probability of achieving a passing grade in statistics as a first math course. Those who did not take precalculus are found in Terminal Node 6, containing 16% of the sample with an estimated 0.70 probability of achieving a passing grade in statistics as a first math course.

As noted earlier, an a priori success criterion is necessary to select the terminal nodes that will be used to define the placement rules for a given course. The success criterion for transfer-level courses is 0.70 (Table 4, discussed earlier). Terminal Nodes 3, 5, and 6 all meet or exceed this criterion and, thus, contribute information to the placement rules for statistics.
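As a minimal sketch of how the success criterion filters terminal nodes, the following example uses the predicted probabilities reported above for Terminal Nodes 3, 5, and 6. The node numbered 99 and its probability are hypothetical and are included only to show a node that falls below the criterion; the function name is likewise an illustrative assumption.

```python
from typing import Dict, List

SUCCESS_CRITERION = 0.70  # transfer-level criterion stated in the text

# Predicted pass probabilities by terminal node.
# Nodes 3, 5, and 6 use the values reported in the text.
# Node 99 is hypothetical and is added only for contrast.
terminal_nodes: Dict[int, float] = {3: 0.88, 5: 0.81, 6: 0.70, 99: 0.55}


def qualifying_nodes(nodes: Dict[int, float], criterion: float) -> List[int]:
    """Return the node numbers whose predicted pass rate meets the criterion."""
    return sorted(n for n, p in nodes.items() if p >= criterion)


selected = qualifying_nodes(terminal_nodes, SUCCESS_CRITERION)
```

The paths leading to the selected nodes are then read off the tree and combined into the placement rule for the course.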

Returning to Node 1, the left-hand branch contains students with cumulative high school GPAs of less than 3.0. Tracing this and subsequent branches to the terminal nodes, we find only one additional terminal node (Node 9) with a predicted probability of success of 0.70 or greater, making it relevant to the placement rules. This node contains students with cumulative high school GPAs between 2.3 and 2.9, who also completed high school precalculus with a grade of C or better.

Drawing on the terminal nodes in Figure 1 that meet or exceed the success criterion (0.70), DMs with cumulative high school GPAs of 3.0 or greater may be placed in statistics. In addition, DMs with cumulative high school GPAs between 2.3 and 2.9 may be placed in statistics if they also completed high school precalculus with a grade of C or better. Students who do not fit either of these descriptions would be recommended for a lower level math course.
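The resulting rule for DMs can be written as simple if-then logic. The sketch below is illustrative only: the function name and argument names are hypothetical, and it assumes that precalculus grades are recorded as plain letters without plus or minus modifiers and that a GPA "between 2.3 and 2.9" means at least 2.3 and below 3.0.

```python
from typing import Optional


def statistics_placement_dm(gpa_11: float, precalc_grade: Optional[str]) -> bool:
    """Return True if a DM may be placed in college statistics.

    gpa_11: cumulative high school GPA as of the end of 11th grade.
    precalc_grade: letter grade in high school precalculus, or None if not taken.
    """
    passing_grades = {"A", "B", "C"}  # assumption: no plus/minus modifiers
    if gpa_11 >= 3.0:
        return True  # first path: GPA alone is sufficient
    if 2.3 <= gpa_11 < 3.0 and precalc_grade in passing_grades:
        return True  # second path: lower GPA plus precalculus with C or better
    return False  # otherwise, a lower level math course is recommended
```

For example, `statistics_placement_dm(2.5, "C")` returns `True`, whereas `statistics_placement_dm(2.5, None)` returns `False`.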

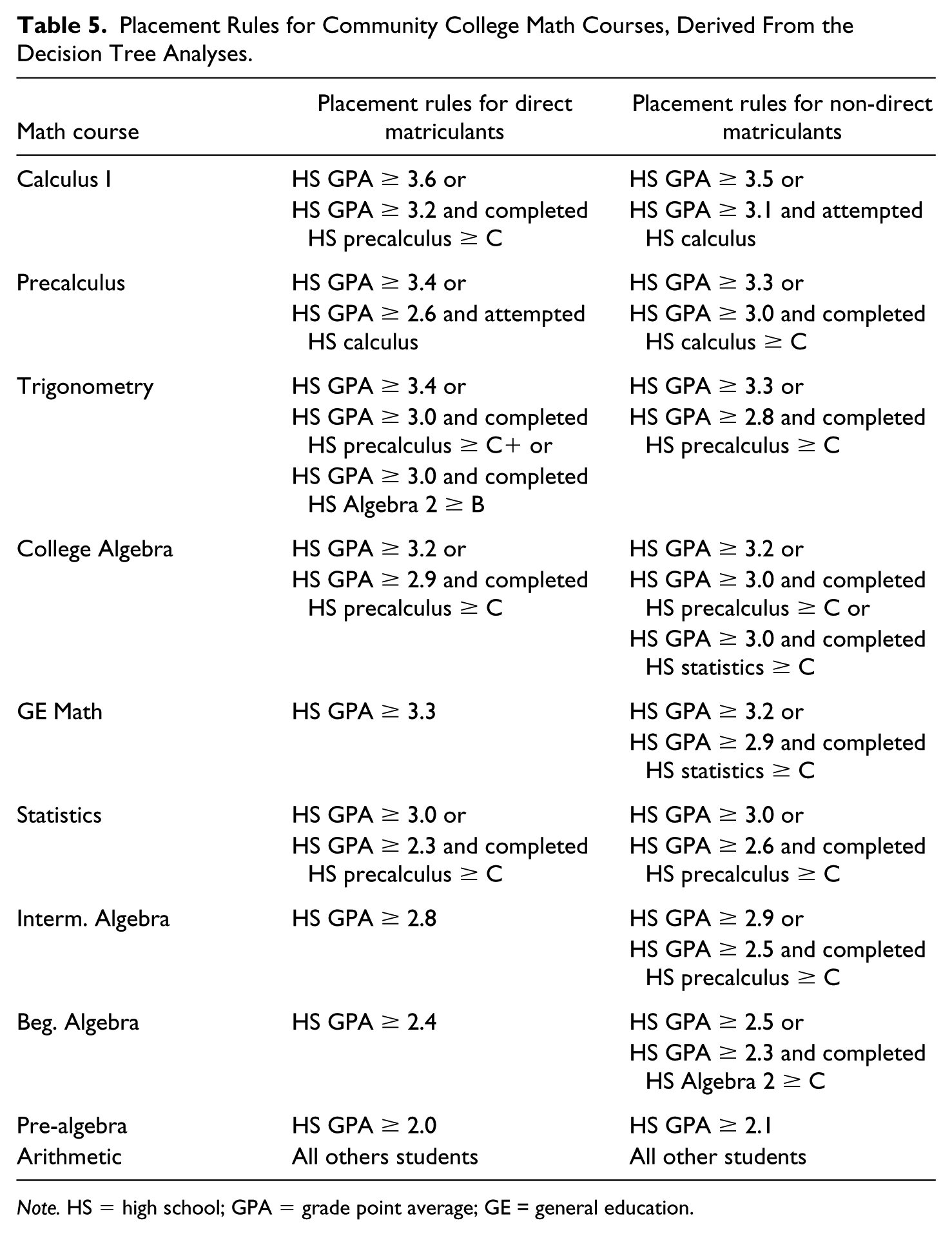

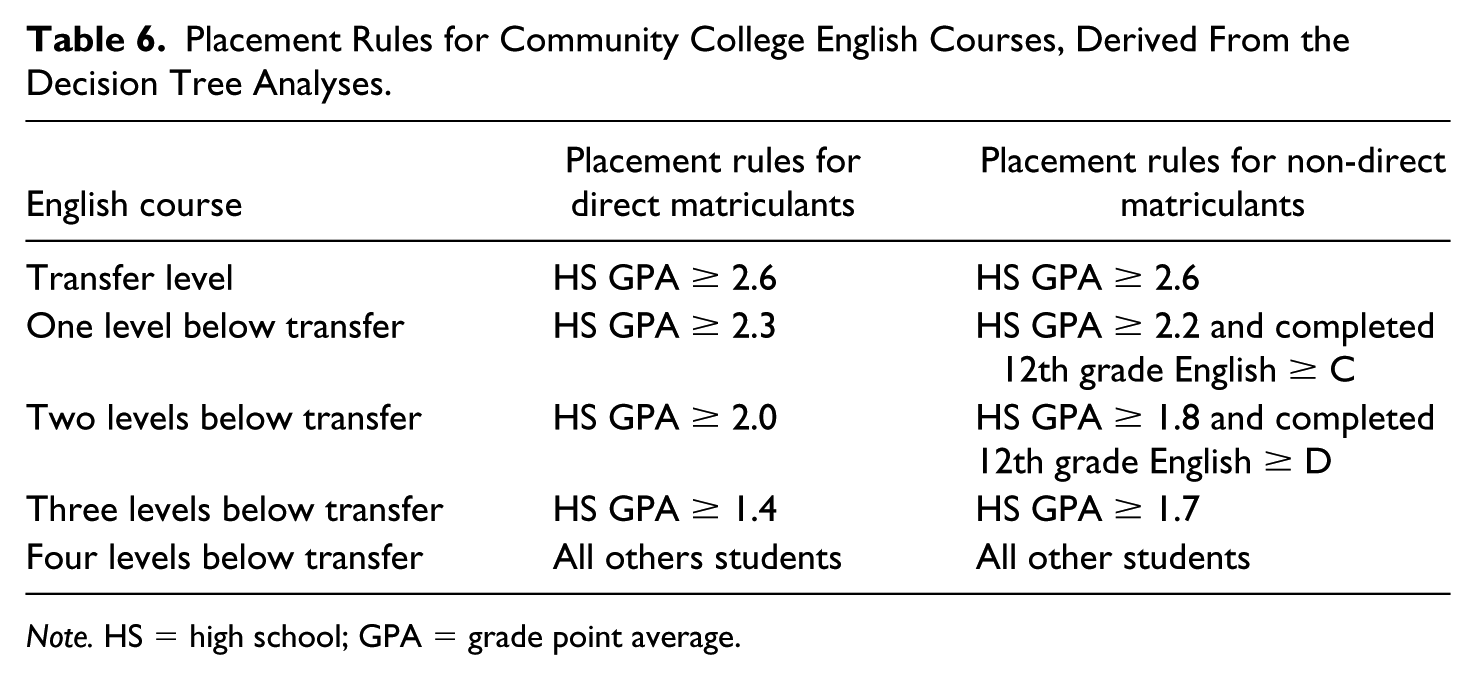

This set of placement recommendations is presented in Table 5 alongside the recommendations for all other levels of math and for both DMs and NDMs. Each set of placement recommendations was derived from a decision tree analysis in the same manner as were the recommendations for statistics. Table 6 presents the parallel placement recommendations for English.

Table 5. Placement Rules for Community College Math Courses, Derived From the Decision Tree Analyses.

Note. HS = high school; GPA = grade point average; GE = general education.

Table 6. Placement Rules for Community College English Courses, Derived From the Decision Tree Analyses.

Note. HS = high school; GPA = grade point average.

Findings

Useful Predictors of Performance in Math and English

This study posed two questions with the goal of advancing research, policy, and practice on the use of high school achievement measures to place community college students in the math and English curricula. The first of these was, “What are the useful predictors of performance in developmental and college-level math and English coursework, and to what extent do these predictors vary across the levels of skill represented in the curriculum in each subject?”

Tables 5 and 6 demonstrate that cumulative high school GPA is a key predictor of passing math and English courses at all levels of skill. Every math course has an associated threshold of high school GPA at or above which success in that course is expected, even in the absence of specific indication of progress in the high school math curriculum (i.e., even without evidence that a student passed a particular high school math course). This holds true both for students who enter college immediately after high school (DMs) and for those who delay (NDMs). The same is observed for all four of the English courses among DMs and two of the four English courses among NDMs.

Two differences between math and English are noteworthy. First, consistent with prior work (Bahr, 2016), the minimum high school GPA above which success is likely in college-level math is somewhat higher than the minimum high school GPA for college-level English. The minimum high school GPA to access college-level math for DMs is 3.0 (see the row for statistics in Table 5), assuming that precalculus was not completed in high school. The corresponding minimum high school GPA for college-level English is 2.6 (see the row for transfer-level English in Table 6).

Second, there frequently are two categories of students who are likely to succeed in a given math course: (a) students who have stronger cumulative high school GPAs, for whom GPA alone usually is a sufficient indicator of readiness, and (b) students who have somewhat lower high school GPAs who advanced further or performed better in math specifically. For example, DMs who are likely to be successful in college algebra comprise students with GPAs of 3.2 or greater (the first path to success) and students with GPAs of 2.9 or greater who completed high school precalculus with a C or higher (the second path to success). The second path to success is especially common among NDMs, occurring in eight of nine math courses among NDMs but just five math courses among DMs. A parallel pattern is observed in two of four English courses among NDMs but not observed in any English course among DMs.

The higher GPA threshold for success in math relative to English, and the more frequent occurrence of the second path to success in math relative to its occurrence in English, may be accounted for by a single explanation. In particular, it may be that the reading and writing skills addressed in the college English curriculum are invoked across a greater range of high school courses than are the quantitative skills addressed in the college math curriculum. If that is the case, cumulative high school GPA would be more reflective of English competency than of math competency in the absence of specific indication of progress in the high school math or English curriculum. Hence, a higher GPA would be necessary to signal a given level of math competency than is necessary to signal the corresponding level of English competency. That is precisely what is observed here.

Furthermore, if quantitative skills are invoked across a narrower range of courses in high school than are reading and writing skills, then a specific indication of progress in the high school curriculum (e.g., passing a given level of high school math) in combination with high school GPA would be useful more frequently for signaling competency across the levels of skills represented in the math curriculum than across the levels of skills represented in the English curriculum. Again, that is precisely what is observed here, with the second path to success occurring much more frequently with math than with English.

Thresholds of Achievement to Apply When Placing Students

The second question posed in this study was, “What are the appropriate thresholds of achievement to apply when placing students in the math and English curricula?” Tables 5 and 6 present specific recommendations in that regard. Unsurprisingly, the recommendations indicate that higher levels of high school achievement are necessary to ensure a strong likelihood of success in math and English courses of greater skill. For example, in the absence of a specific indication of progress in the high school math curriculum, a cumulative high school GPA of 3.6 is needed for Calculus I as a first college math course, but a GPA of 3.2 is sufficient for college algebra. That said, the minimum levels of high school achievement for recommendations into general education math, college algebra, trigonometry, and precalculus are all reasonably similar to one another. Statistics has the lowest minimum expected achievement of the college-level math courses, and Calculus I has the highest. Minimum achievement for recommendations into developmental math or English courses also is lower than that of college-level math or English, and this is a logical consequence of the recursive process applied in the analyses.

In addition, the placement recommendations for DMs and NDMs are very similar in most cases. However, as noted, the second path to course success―a minimum high school GPA in combination with a minimum subject-specific demonstration of competency―occurs more frequently in the placement recommendations for NDMs than it does for DMs. To make sense of this finding, keep in mind that the analytical samples for the DM and NDM analyses are the same. The difference between the two analyses lies in the fact that the former incorporates high school achievement information through the end of 11th grade, whereas the latter includes achievement information for all 4 years of high school. Thus, what is seen here is evidence that students’ achievement of subject-specific skill milestones late in their high school careers can be especially important indicators of college readiness even in the absence of a strong cumulative GPA. Hence, it should be a priority for high schools to provide, and community colleges to utilize in their placement processes, the most up-to-date transcript information available for incoming college students.

Model Validation

There are two important questions to ask regarding the validity of these results. First, does assessing student readiness with multiple measures of high school achievement improve the rate of successful completion of the target course, relative to assessing students with placement tests? Second, are the results replicable in other samples?

To answer these questions, two separate testing samples were developed. Concerning the first of these testing samples, because CPP is a voluntary and ongoing data sharing system, additional data became available while the work of this study was underway. These new data addressed the 2015-2016 academic year. The same sample selection criteria of valid information on 9th, 10th, 11th, and 12th grade GPA were applied to these data to create a new sample referred to as Testing Sample 1.

Regarding the second testing sample, a number of students were excluded from the analytical sample or from Testing Sample 1 because they were missing valid information on high school GPA in one or more of the grade levels. However, some of these students had sufficient information on high school achievement to reach a placement recommendation, given the placement rules derived from the decision tree analyses. These students with partially missing but sufficient information for placement recommendations were included in Testing Sample 2.

In Table 2, presented earlier, selected characteristics of the analytical sample were compared with the two testing samples. Overall, the three samples are reasonably similar to one another with the exception that students in Testing Sample 1 are more likely than are students in the analytical sample to be Hispanic and less likely to be missing data on race or ethnicity. They also are more likely to report an educational goal of transferring to a 4-year institution and less likely to report being undecided.

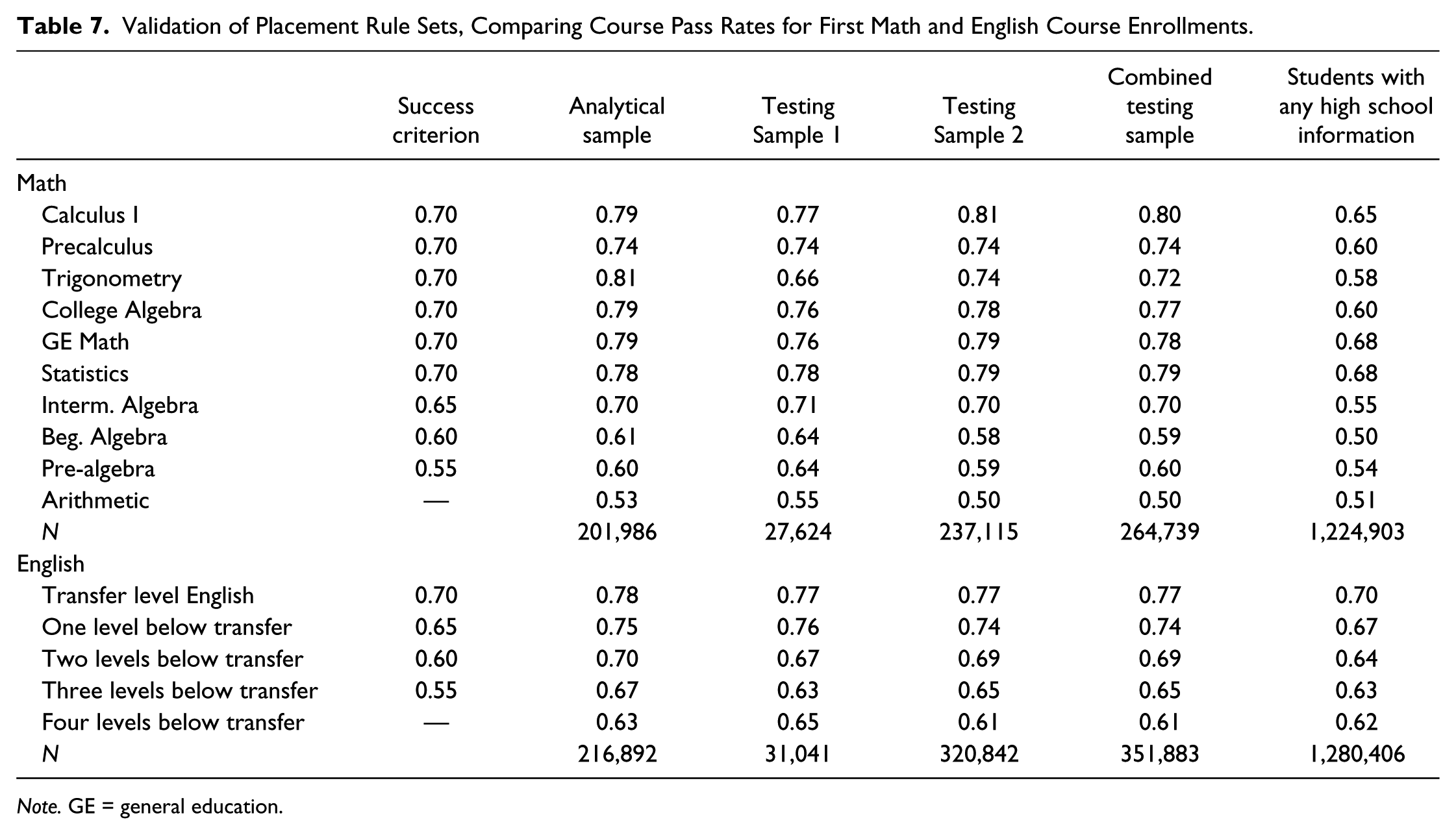

To validate the placement rules, the rules were applied to the analytical sample and to the two testing samples, with the result that the highest recommended placement level for each student in math and English could be determined. Focusing on students whose highest placement matched their actual first course in the subject, the pass rate for each first math and first English course was calculated in each sample. Then, these pass rates were compared with (a) the specified success criteria and (b) the corresponding pass rates for students who had any high school information, disregarding the placement rules developed in this study. The results are presented in Table 7.
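The core validation calculation can be sketched as follows, assuming each student record carries the actual first course, the highest recommended placement under the rules, and a pass indicator. The field names, the function name, and the toy records are hypothetical and are not taken from the study's samples.

```python
from typing import Dict, List, Optional

Student = Dict[str, object]


def matched_pass_rate(students: List[Student], course: str) -> Optional[float]:
    """Pass rate among students whose recommended placement equals their first course.

    Returns None if no student in `course` was recommended for that course.
    """
    matched = [
        s
        for s in students
        if s["first_course"] == course and s["recommended"] == course
    ]
    if not matched:
        return None
    return sum(1 for s in matched if s["passed"]) / len(matched)


# Hypothetical records for illustration.
students = [
    {"first_course": "statistics", "recommended": "statistics", "passed": True},
    {"first_course": "statistics", "recommended": "statistics", "passed": True},
    {"first_course": "statistics", "recommended": "statistics", "passed": True},
    {"first_course": "statistics", "recommended": "statistics", "passed": False},
    {"first_course": "statistics", "recommended": "intermediate algebra", "passed": False},
]

rate = matched_pass_rate(students, "statistics")
meets_criterion = rate is not None and rate >= 0.70  # transfer-level criterion
```

The same function would be applied separately to the analytical sample and to each testing sample, and the resulting rates compared with the success criteria and with the pass rates of the comparison group.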

Table 7. Validation of Placement Rule Sets, Comparing Course Pass Rates for First Math and English Course Enrollments.

Note. GE = general education.

The results in Table 7 support the validity of the placement rules derived from the decision trees. In particular, with only one exception, the observed pass rates for the analytical sample and two testing samples exceed those of students with any high school information, most of whom presumably were placed based in large part on their placement test scores. The one exception is in developmental English three levels below college level, where Testing Sample 1 has an observed pass rate equal to that of students with any high school information.

In addition, pass rates of the analytical sample and testing samples all exceed the success criteria, save for one. In Testing Sample 2, the 0.58 pass rate in beginning algebra is slightly lower than the corresponding success criterion of 0.60.

Finally, pass rates generally are very stable across the analytical sample and two testing samples. Only trigonometry varies meaningfully, with students in the analytical sample having a pass rate of 0.81, while pass rates of 0.66 and 0.74 are observed in Testing Samples 1 and 2, respectively.

Discussion and Conclusion

The open admissions policies of community colleges not only guarantee access to postsecondary education but also ensure a student population of highly varied educational experiences and readiness for college-level coursework. In the interest of helping students to identify and remediate any skill shortfalls, community colleges employ various assessment tools and then sort students into coursework appropriate to their levels of readiness. Among these assessment tools, standardized placement exams have become a mainstay of community colleges. Yet, recent studies have shown that students’ potential to achieve a passing grade in math and English coursework often is underestimated by placement tests, with many students being placed into remedial courses despite being likely to pass college-level courses (Bahr, 2016; Scott-Clayton et al., 2014). In turn, being placed in remedial courses increases students’ risk of leaving college without a postsecondary credential (Burdman, 2012). Thus, the means by which many community colleges strive to increase students’ chances of completing college can prove ultimately to be an obstacle to their success.

From the perspective of community college instructors, however, the occurrence of student underplacement is largely invisible because the counterfactual of the same student taking a higher level course is unobserved. More apparent to instructors is overplacement resulting in students struggling in a course, but evidence indicates that overplacement is less common with placement tests than is underplacement (Scott-Clayton et al., 2014). The difference in the visibility of these two phenomena, underplacement and overplacement, can lead to inaccurate assumptions about the effectiveness of institutional placement policies. For example, some community colleges adopt more stringent placement policies than do 4-year institutions in equivalent courses (Fields & Parsad, 2012). More importantly, the relative invisibility of underplacement has resulted in the problem remaining largely unaddressed in most states.

The limited research to date has indicated that measures of high school achievement can be used to generate placement recommendations that are more accurate, on average, than are placement recommendations based on placement tests, resulting in fewer students being under- or overplaced in math and English coursework (Bahr, 2016; Scott-Clayton et al., 2014). The results of this study bear out that conclusion and indicate that cumulative high school GPA is the most consistently useful predictor of performance across levels of math and English coursework. This study also demonstrates that a higher GPA is necessary to signal readiness for college-level coursework in math than is necessary to signal readiness for college-level coursework in English, which is consistent with prior work (Bahr, 2016). In addition, the combination of cumulative GPA with specific indications of progress in the high school curriculum, such as course completion, is frequently useful for predicting performance in math among DMs and for predicting performance in both math and English among NDMs.

Drawing on the relationships observed between high school achievement and students’ likelihood of passing each math and English course, placement rules were developed and then validated with two testing samples. In contrast to prior research, which has been limited by the need to collapse developmental and college-level coursework into monolithic categories, the approach applied here and the large size of the sample allowed for placement rules that distinguish between levels of developmental coursework and between levels of college coursework, which is an important advancement in this area.

Implications and Future Research

Given the large and diverse sample employed in this study, a question that might be asked is why analyses were not conducted separately for particular subgroups of students to derive subgroup-specific placement rules. The central goal of the research was to develop placement recommendations that colleges could implement directly in their assessment and placement processes. Colleges could not reasonably apply different placement rules to men versus women, White students versus Latino/a students, students graduating from one high school versus another, and so on, without facing charges of discrimination. In addition, subgroup-specific placement recommendations would not be helpful to high schools seeking to strengthen the preparation of students for college, nor would they be helpful to colleges seeking to clearly articulate to high school students the expectations for college readiness.

However, future research on the use of high school achievement to place community college students should endeavor to estimate the effect of such policy changes on the rate of direct enrollment in college-level coursework and should disaggregate this effect by student sociodemographic characteristics, such as socioeconomic status, gender, and race or ethnicity. In addition to the measures used in this study, an investigation of this sort will require data addressing either college students’ placement test scores or the level of coursework to which students were directed at college entry. Unfortunately, neither of these was consistently available in the data used for this study.

Evidence from one prior study in an urban setting suggests that placing students based on high school achievement would alter the racial or ethnic composition of college-level math and English courses, increasing the fraction of Hispanic students but decreasing the fraction of Black and Asian students (Scott-Clayton & Stacey, 2015). However, in that study, the authors’ projections held constant the overall rate at which students are directed to college-level coursework, which would not be the case in reality if, as the evidence indicates, underplacement is more common than is overplacement. Instead, increased enrollment in college-level coursework would be expected across the spectrum of student backgrounds and perhaps most among students of lower socioeconomic status who may receive less counsel about the importance of preparing for placement tests.

This increase in the number of students placed in college-level coursework when using measures of high school achievement raises important considerations for institutions. On one hand, administrators and faculty will need to be prepared to accommodate greater demand for college-level coursework, likely from a student population that is more heterogeneous with respect to academic backgrounds. On the other hand, administrators and faculty will need to be prepared for declining enrollment in developmental courses as well as a possible homogenization of the skills of students in developmental courses, as students who previously would have been underplaced are directed upward to courses that more accurately match their readiness. These changes will have consequences both for the faculty who teach college-level and developmental math and English courses and for the nature of instruction in these courses. These consequences should be investigated in future research.

Another important implication of substituting measures of high school achievement for college-administered placement tests is that colleges will be incentivized to work more closely with local high schools to prepare students for college, rather than simply critiquing the preparation provided by high schools. Future research should investigate how this change alters the dialogue between community colleges and local high schools, as well as the discourse among college faculty and administrators about the quality of preparation provided by local high schools.

In addition to the aforementioned lines of inquiry, three aspects of the work require further investigation in future research. First, this study drew a distinction between the information that would be available to colleges about students who matriculate directly from high school and those who delay matriculation. More research is needed on the relationship between the length of delay between high school graduation and college enrollment and the extent to which measures of high school achievement can be used to predict performance in math and English coursework. Specifically, does the utility of high school achievement measures for predicting performance fade over time, remain relatively constant, or possibly grow, relative to placement tests or other measures?

Second, as noted, this study investigated cumulative GPA as reported by high schools and found it to be a key predictor of performance in math and English coursework. It may be worthwhile, though, for future research to consider more nuanced variations of GPA such as subject-specific GPA (e.g., cumulative GPA in math courses, cumulative GPA in English courses).

Finally, this study benefited from access to information about students that was reported directly by high schools. Yet, many community colleges and system offices currently do not have access to high school transcripts. It will be important for future research to investigate the viability of students’ self-reported information about high school achievement in place of information reported directly by high schools.

Communicating Results to Stakeholders

This study was conducted as part of the California Multiple Measures Assessment Project (MMAP), one of the first statewide initiatives to examine empirically the use of high school achievement to place community college students in math and English coursework. As other states pursue similar investigations, it will be useful to reflect on lessons learned from MMAP. In particular, in communicating this research and the placement recommendations to faculty, administrators, and policy makers in California, an important strength of the decision tree methods has been the interpretability of the results. The connection between the analyses and the placement rules generally is transparent even to stakeholders who are not well versed in quantitative research, which increases confidence in the empirical grounding of the recommendations.

At the same time, however, the prominence of high school GPA in the results has led some stakeholders to question whether the placement rule sets actually reflect the multiple-measures assessment that the research team was charged to develop. This is an understandable misinterpretation of the results because, unlike regression methods, decision trees do not display coefficients for all included variables regardless of their relative weight in predicting the outcome of interest. Consequently, it has been important to clearly articulate to stakeholders (a) the large number of high school achievement measures that were included in the analyses, and (b) the logic by which information on one or two measures of high school achievement (e.g., GPA, highest math or English course) can render largely superfluous information on additional measures of high school achievement.

Another challenge with acceptance of the rule sets has been concern by some colleges that using high school achievement may result in excessive rates of overplacement, rather than a reduction in the largely unobserved and unacknowledged problem of underplacement. Important in allaying this concern has been sharing the results experienced by colleges that have piloted the placement recommendations (e.g., Cooper, 2016; Huang, Hsieh, & Lopez, 2016). Early findings indicate that using measures of high school achievement to place students increased the number of students in college-level coursework while still maintaining historic pass rates in these courses. In other words, on average, students placed via placement tests did not differ in their rate of course success from students placed via high school achievement, but, when using high school achievement, more students qualified to take college-level coursework.

Furthermore, to ease acceptance of the math rule sets in particular, supplementary minimum high school course completion requirements were added to the placement recommendations. For placement in calculus or precalculus, new students must meet the placement rules and have completed high school precalculus, trigonometry, or a higher-level course. For placement in trigonometry or college algebra, students must meet the placement rules and have completed high school Algebra 2 or a higher-level course. For placement in general education math, statistics, or intermediate algebra, students must meet the placement rules and have completed at least high school Algebra 1. Placement in beginning algebra, pre-algebra, or arithmetic depends on the placement rule sets only and requires no minimum level of high school coursework. Empirically speaking, these additional requirements have very little effect on the number of students placed in a given level of math because the large majority of students who met the placement rules for that level of math also met the additional high school coursework requirement. Still, the additional requirements helped to ease concerns about the possibility of student overplacement in math.
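For illustration, the supplementary coursework requirements described above can be expressed as a simple lookup. The following is a minimal sketch rather than the MMAP implementation: the names `HSMath`, `MIN_HS_COURSE`, and `qualifies` are hypothetical, the strict ordering of high school courses is a simplifying assumption, and whether a student meets the decision tree placement rules is treated as a given input.

```python
from enum import IntEnum


class HSMath(IntEnum):
    """Highest high school math course completed (ordering is an assumption)."""
    NONE = 0
    ALGEBRA_1 = 1
    ALGEBRA_2 = 2
    PRECALC_OR_TRIG = 3  # precalculus, trigonometry, or a higher-level course


# Minimum high school coursework for each college math course, as described
# in the text. Dictionary keys are illustrative labels, not official titles.
MIN_HS_COURSE = {
    "calculus": HSMath.PRECALC_OR_TRIG,
    "precalculus": HSMath.PRECALC_OR_TRIG,
    "trigonometry": HSMath.ALGEBRA_2,
    "college algebra": HSMath.ALGEBRA_2,
    "general education math": HSMath.ALGEBRA_1,
    "statistics": HSMath.ALGEBRA_1,
    "intermediate algebra": HSMath.ALGEBRA_1,
    "beginning algebra": HSMath.NONE,
    "pre-algebra": HSMath.NONE,
    "arithmetic": HSMath.NONE,
}


def qualifies(course: str, meets_placement_rules: bool, highest_hs_math: HSMath) -> bool:
    """Return True only if the student meets both the placement rules
    (supplied by the caller) and the minimum coursework requirement."""
    return meets_placement_rules and highest_hs_math >= MIN_HS_COURSE[course]


# A student who meets the calculus placement rules but completed only
# Algebra 2 in high school would not qualify for calculus under this sketch.
print(qualifies("calculus", True, HSMath.ALGEBRA_2))
print(qualifies("college algebra", True, HSMath.ALGEBRA_2))
```

Because the coursework check is applied in addition to the placement rules, it can only narrow the set of qualifying students, never widen it, which is consistent with the observation that the added requirements changed few placements.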

Evolving Policy Context

While this manuscript was under review, the State of California passed Assembly Bill 705 (AB 705; Seymour-Campbell Student Success Act of 2012, 2017). Central to the bill is the provision that community colleges must “maximize the probability that the student will enter and complete transfer-level coursework in English and mathematics within a one-year timeframe, and use, in the placement of students into English and mathematics courses in order to achieve this goal, one or more of the following: high school coursework, high school grades, and high school grade point average” (Para. 3).

This bill was informed in part by the research described here, as well as research from the Community College Research Center, the Public Policy Institute of California, and others. In combination with the MMAP team’s work to build information technology infrastructure to support the systematic sharing of high school transcript information with colleges, this research played a key role in demonstrating that using high school achievement to assess and place students in the math and English curricula is both strongly justified by the evidence and practically feasible for colleges.

Of note, this study employed a conservative threshold for course success when defining placement recommendations. In effect, the recommendations suggest placement in a given course only when a student is very likely to succeed in that course, defined for transfer-level courses as a .70 or greater probability of success, roughly the average statewide success rate in transfer-level English. AB 705 goes further, however, prohibiting colleges from requiring students to enroll in “remedial English or mathematics coursework that lengthens their time to complete a degree unless placement research that includes consideration of high school grade point average and coursework shows that those students are highly unlikely to succeed in transfer-level coursework in English and mathematics” (Para. 4; italics added for emphasis).

That is, AB 705 shifts the balance of colleges’ efforts toward curtailing the risk of underplacement even at the potential cost of somewhat lower overall pass rates in college-level courses. As a result, the work of MMAP already is being adapted to provide guidance to colleges using the new requirements of AB 705, namely that students be placed into developmental courses only if highly unlikely to succeed in a transfer-level course in that discipline and that such placement maximizes students’ likelihood of successful completion of a transfer-level course in that discipline within 1 year of college entry. One possible result of this adjustment is a revised set of placement recommendations that direct most students who graduated from high school and matriculated to a community college within a reasonable timeframe to transfer-level coursework, but that recommend varying levels of supplementary support depending upon students’ level of high school achievement.

More broadly, the passage of AB 705 in California speaks to a reconsideration in the national discourse of the role that developmental education plays in community colleges. Practically speaking, assigning students to developmental coursework has been the default in many colleges. This practice has been driven at least in part by the implicit assumption that any risks posed to students’ chances of graduating by unnecessarily assigning them to developmental coursework were minor in comparison with the risks of assigning academically underprepared students to college-level coursework. Now, that is known to be untrue. Assigning students to developmental coursework is far from a risk-free proposition, and, further, placement tests as the preferred assessment instruments are more likely to underplace students than to overplace them. Hence, states such as Florida, Tennessee, and now California are beginning to test or implement curricular models that deemphasize the role of developmental coursework and default to assigning students to college-level coursework. Needless to say, there will be much to learn from these efforts in the years to come.

Acknowledgements

The authors gratefully acknowledge contributions and feedback on this work from Alyssa Nguyen and Nathan Pellegrin.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The research discussed here is a result of a collaboration of the California Community Colleges Chancellor’s Office, California Community Colleges Common Assessment Initiative, the Research and Planning Group for California Community Colleges, and Educational Results Partnership. It received funding from the Butte-Glenn Community College District.