Abstract

This study examines question-order effects in measuring satisfaction with democracy (SWD). Particularly, the authors are interested in whether the relative position of the question regarding satisfaction with the state of the economy (SWE) in the questionnaire affects responses to the SWD item. The authors conducted three independent split-ballot experiments in Hungary between March 2021 and May 2022. They report a significant and substantial negative priming effect that possibly leads to a systematic underestimation of SWD. Importantly, the authors find no question-order effect in the measurement of SWE. The analysis further reveals a contrast effect: when the SWD question is primed, the difference between SWE and SWD means increases. The authors’ final recommendation is that researchers either put the SWD question before the SWE item to avoid question-order bias or randomize question order. These findings should assist future data collection efforts (comparative or single-country studies) in developing and integrating a battery of satisfaction items into questionnaires and help users assess data quality.

The study of attitudes toward democracy and democratic performance has gained momentum with the gradual degradation of democracies worldwide (Bermeo 2016). During what some call the third wave of autocratization (Lührmann and Lindberg 2019; Waldner and Lust 2018), we see the halt of liberal democracy projects in Eastern European countries such as Hungary, Poland, and the Czech Republic (Bustikova and Guasti 2017; Sitter and Bakke 2019; Vachudova 2020). Arguably, citizen satisfaction with democracy (SWD) plays a role in the future of liberal democracy, in that it has the potential to stabilize democracy and prevent radical changes (Bernauer and Vatter 2012). Behavioral consequences of democratic dissatisfaction include support for antidemocratic parties, participation in illegal political action, and decreased willingness to vote (Anderson et al. 2005; André and Depauw 2017; Bengtsson and Christensen 2016).

It is no surprise that SWD has been the focus of many studies. At the same time, the SWD survey item has been criticized because of inter- and intracountry variation that prohibits the meaningful comparison of individuals and countries (Canache, Mondak, and Seligson 2001; Clarke, Dutt, and Kornberg 1993) and because it is highly sensitive to institutional context (Linde and Ekman 2003). Thus, SWD is demonstrably not a suitable indicator of political support for democracy. However, it is generally considered a good measure of citizens’ subjective assessment of the quality of democracy as they perceive it (Cutler, Nuesser, and Nyblade 2013). Recent evidence puts researchers on guard by demonstrating that different cross-national survey projects report varying levels, trends, and determinants of SWD, raising further questions about measurement validity (Valgarðsson and Devine 2022). At the same time, these survey projects differ in terms of the wording and scale of the SWD item, as well as the general context of the questionnaire, which further hampers comparative efforts. In this research note we focus on how survey context affects responses to the SWD question.

Our research question is informed by the European Social Survey’s (ESS) core questionnaire. The ESS is a popular source for the study of SWD (e.g., Christmann 2018; Harteveld et al. 2021; Magalhães 2016; Nemčok and Wass 2021; Stecker and Tausendpfund 2016; Vlachová 2019; Zilinsky 2019). It offers high-quality, wide-ranging comparative and longitudinal data on people’s social and political attitudes. In the ESS, the SWD measure (STFDEM) “On the whole, how satisfied are you with the way democracy works in your country?” (ESS Round 10: European Social Survey 2022) follows several satisfaction measures: “All things considered, how satisfied are you with your life as a whole nowadays?” (STFLIFE), “On the whole, how satisfied are you with the present state of the economy in [country]?” (STFECO), and “Now thinking about the [country] government, how satisfied are you with the way it is doing its job?” (STFGOV). All items are measured on a scale ranging from 0 to 10, with “extremely dissatisfied” (0) and “extremely satisfied” (10) at the two extremes. These survey items are not visually presented as a battery, but because of their joint focus (i.e., satisfaction), respondents may think that they are clustered together for a reason. Therefore, this series of connected questions may be subject to question-order bias, and the order of questions may affect the level of SWD as well as its determinants. Of the three preceding satisfaction items, we zoom in on satisfaction with the state of the economy (SWE) and how its relative position in the questionnaire affects SWD responses.

We carried out three independent split-ballot experiments in Hungary between March 2021 and May 2022. We conclude that if the SWE question is asked first (i.e., the SWD question is primed by SWE), the mean value of SWD is significantly and meaningfully lower than when the SWD question is not primed. Importantly, question order affects SWD but not SWE. Our results are relevant to (1) the creators of the ESS for the further development of the core questionnaire; (2) the creators of other large, comparative studies that have yet to include a full satisfaction battery; (3) researchers designing smaller studies who may consider including several satisfaction items; and (4) users of such studies looking for additional information on data quality.

Question-Order Effects

A question-order effect, or question-order bias, occurs when the order in which questions are presented in a survey influences respondents’ answers (Schuman and Presser 1983). This can affect not only the distribution of responses but also the relationships between variables. The question-order effect is well documented, with studies on such effects appearing as early as the 1930s and 1940s (Cantril 1944; Sayre 1939).

To understand question-order effects, the literature points to the complicated process of answering survey questions. Respondents must first interpret the question, access relevant information in their memory, weigh information according to its relevance, formulate information into a judgment, and finally translate this judgment into a scale (Thau et al. 2021; Tourangeau, Rips, and Rasinski 2000; Zaller 1992). However, according to the principle of cognitive accessibility, people do not retrieve all relevant information in their memory, only the information that is most accessible. The recency principle dictates that the most accessible information is information most recently thought about (Wyer 1980; Wyer and Srull 1986). The information contained or activated in the previous question is thus used to answer the subsequent question, creating a priming effect (Förster, Liberman, and Friedman 2007; Higgins, Rholes, and Jones 1977; Janiszewski and Wyer 2014; Strack 1992). Respondents may or may not be aware of the priming episode (Strack 1992). The activation function of priming is an automatic process and happens subconsciously. The information function of priming, however, requires respondents to be aware of the priming episode: on the basis of the previous question, they have to infer the intended meaning of the subsequent question and that the previous and subsequent questions are grouped together for a reason (Strack 1992). Two forms of the priming effect are commonly discussed in the literature: assimilation (or carryover) and contrast (or backfire) effects (DeMoranville and Bienstock 2003; Sigelman 1981; Strack 1992; Sudman, Bradburn, and Schwarz 1996; Tourangeau et al. 2000).

Assimilation versus Contrast Effects

An assimilation effect occurs when respondents answer the subsequent question in a similar way as they answered the previous question, strengthening the correlation between the two questions. This may happen either consciously (i.e., the respondent is aware of the priming episode and infers the intended meaning of the questions [information function of priming]) or subconsciously (i.e., the respondent is not aware of the priming episode [activation function]). Alternatively, the information function of priming, when respondents are aware of the priming episode, may lead to a contrast effect and a gap between responses to the previous and subsequent questions (Strack 1992). The maxim of quantity encourages respondents to be as informative as possible and avoid redundancy. Therefore, when two questions overlap in their content, respondents typically aim to provide new information (see DeMoranville and Bienstock 2003). This leads to a weaker relationship between the two items.

General versus Specific Questions

The scholarship often discusses question-order bias in the context of general versus specific (or whole versus part) questions (Schwarz, Strack, and Mai 1991; Strack 1992; Strack, Martin, and Schwarz 1988). Most literature recommends that if items are in a general versus specific relationship, the general question should precede the specific one (McClendon and O’Brien 1988). The reason again lies in the recency principle. Because general questions are difficult to answer and responses are subject to different interpretations (Schuman and Presser 1983), respondents will retrieve the most recently activated information. If the specific question comes first, the information activated by the question will influence interpretation of the subsequent general question (i.e., the intended meaning of the general item), and therefore the correlation between the two questions will be stronger than if the questions were in the opposite order (McClendon and O’Brien 1988). An example of general and specific questions is a question on overall life satisfaction versus questions on satisfaction with various subareas of life, such as marriage, work, or social life. If satisfaction with, for instance, marriage comes first, respondents will use this information to interpret the more general life satisfaction question. In other words, the respondent thinks the author of the questionnaire must have intended that satisfaction with one’s marriage is an integral part of life satisfaction. However, if the general question comes first, the life satisfaction question’s intended meaning remains open to many interpretations (i.e., besides marriage), and the relationship between life satisfaction and satisfaction with one’s marriage will be weaker (McClendon and O’Brien 1988).

SWE and SWD

General questions are notoriously difficult to answer. They can activate a lot of information, the intended meaning of the question has a lot of potential interpretations, and respondents must make a strong cognitive effort to answer (Schuman and Presser 1983). The more ambiguous the content of the question, the more likely that respondents will use primed information (McFarland 1981; Thau et al. 2021; Van De Walle and Van Ryzin 2011). General questions are thus very likely to encounter question-order bias.

The SWD survey item is a typical general question. The definition of democracy itself is open to interpretation (Quaranta 2018). Therefore, respondents will likely reach back to previous questions for information on the intended purpose of the item. Our study focuses on the priming effect of SWE on SWD. Research on SWD reveals a relationship between satisfaction and all sorts of government outputs (e.g., government effectiveness, corruption, and quality of public administration; Ariely 2013; Dahlberg and Holmberg 2014; Wagner, Schneider, and Halla 2009), including economic performance (Christmann 2018; Daoust and Nadeau 2021; Magalhães 2016). Although there is much ambiguity about whether democracy and economic development are causally connected (Doucouliagos and Ulubaşoğlu 2008; Gerring et al. 2005), data from wave 5 (2005–2007) of the World Values Survey (Inglehart et al. 2018) indicate that an overwhelming majority of respondents think a “prospering economy” is an essential characteristic of democracy. This puts the two items into a part (economy) versus whole (democracy) dynamic.

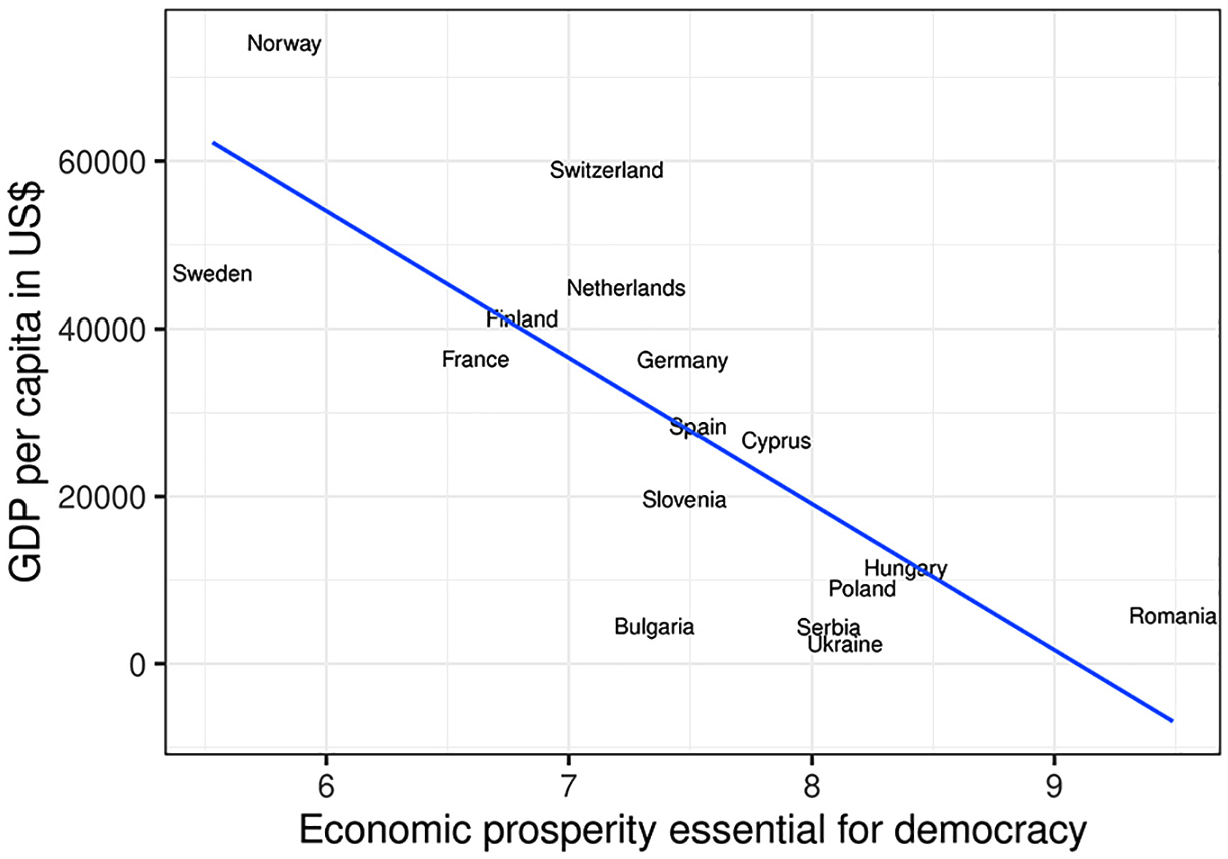

Figure 1 plots, for European countries only, the mean rating of a prospering economy as an essential characteristic of democracy (measured on a scale ranging from 1 to 10) against gross domestic product (GDP) per capita. The data show that the perceived importance of economic prosperity for democracy is negatively correlated with GDP per capita. We also see that in younger democracies, economic performance is an important feature of democracy. Hence, and especially in such countries, because of the information activated by the SWE item and respondents’ inferences regarding the intended meaning of the general question (SWD), we expect a significant priming effect on SWD.

Figure 1. Economic prosperity and democracy by gross domestic product (GDP) per capita (U.S. dollars) across Europe, 2006.

For our empirical exercise, we select Hungary as a case. Its comparatively poor economic performance and Hungarians’ materialistic perception of democracy (see Figure 1) make it an ideal candidate to demonstrate how the order of SWE and SWD items influences democratic satisfaction in the context of a whole versus part design.

Study Design

We conducted three studies in March 2021 (study 1), March 2022 (study 2), and May 2022 (study 3) as part of larger surveys. We refer to study 1 as the main study and studies 2 and 3 as replications. In studies 1 and 2, the contracted companies randomly selected the samples from their carefully maintained online panel. These samples are representative of the Hungarian population with Internet access, 18 to 65 years of age, by gender (male and female), age group (18–29, 30–39, 40–49, 50–59, and ≥60 years), and level of education (low, middle, and high). Online survey samples generally do not represent the whole population, but this technique of collecting data is much less resource demanding, is widely used, and is appropriate for conducting survey experiments (Auspurg and Hinz 2014). Studies 1 and 2 perform well against population indicators such as age, gender, and education. Respondents in study 3 were recruited on Facebook, with no representativeness criteria. In this study, men and individuals with higher education are overrepresented.

We coded two versions of the questionnaire with different question orders: (1) SWD followed by SWE (control group; not primed) and (2) SWE followed by SWD (treatment group; primed). The key survey items (SWD and SWE) appeared on separate pages, and a “Go back” button was provided. The questionnaire directed respondents to the two versions of the survey via simple randomization. The randomization procedure resulted in groups of roughly the same size in all three studies (study 1, n = 502 [control] and n = 498 [treatment]; study 2, n = 591 and n = 609; study 3, n = 461 and n = 459). The groups are balanced across various covariates (see Appendix A in the online supplement), suggesting that randomization was successfully performed. Appendix D in the online supplement presents a test of the randomization.
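The assignment step described above can be sketched in a few lines. This is a minimal illustration only, assuming that "simple randomization" means an independent fair coin flip per respondent. The function name `assign_ballots`, the `QUESTION_ORDER` mapping, and the seed are hypothetical and are not taken from the survey software used in the studies.

```python
import random

# Question order per experimental arm, as described in the text.
QUESTION_ORDER = {
    "control": ["SWD", "SWE"],    # SWD asked first (not primed)
    "treatment": ["SWE", "SWD"],  # SWE asked first (SWD primed)
}

def assign_ballots(n_respondents, seed=None):
    """Assign each respondent to an arm by an independent fair coin flip."""
    rng = random.Random(seed)
    return [rng.choice(["control", "treatment"]) for _ in range(n_respondents)]

# The seed is arbitrary and only makes the illustration reproducible.
arms = assign_ballots(1000, seed=2021)
counts = {arm: arms.count(arm) for arm in QUESTION_ORDER}
print(counts)
```

Because each respondent is assigned independently, group sizes are only approximately equal, which is in line with the slightly unequal group sizes reported for the three studies.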

Results

Negative Priming Effect

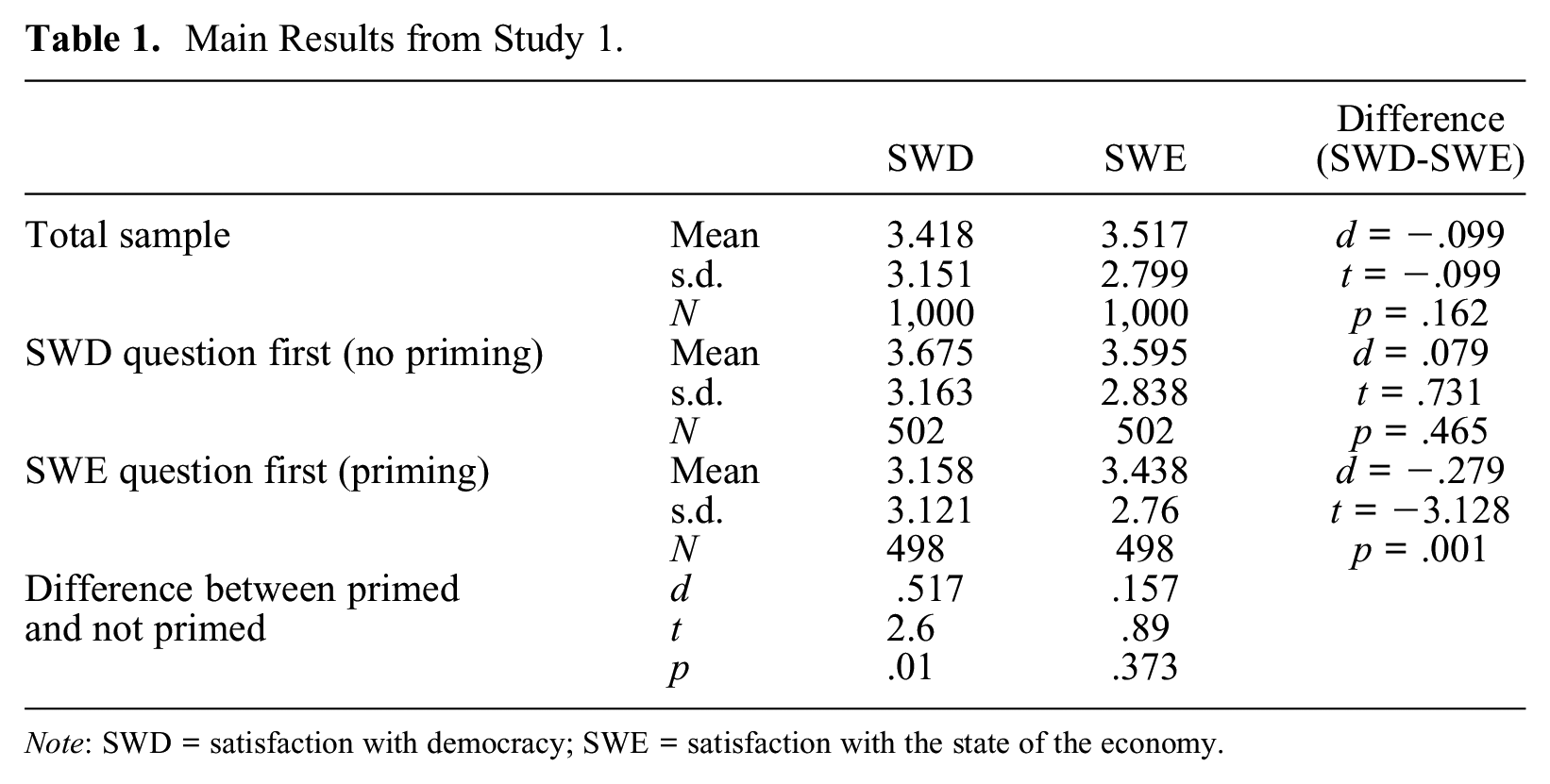

Table 1 shows the main results from study 1. The difference in the SWD mean across the not primed (3.675) and primed (3.159) groups is substantial: when the SWE question is asked first, the mean of SWD falls by 0.516 points. More substantively, in the control group with no priming, 56.8 percent of respondents were not satisfied with democracy (SWD < 5), and this figure increases to 66.3 percent in the treatment group. The change amounts to a 9.5 percentage point increase (χ² = 9.51, p = .002) in the share of dissatisfied individuals. To compare the effect size of priming to that of other important covariates, we calculated Cohen’s d, which measures the standardized difference between two group means (Cohen 1988). Cohen’s d of priming (0.16, 95 percent confidence interval = 0.04 to 0.29) is as large as in the case of political interest (0.17, 95 percent confidence interval = 0.04 to 0.31) but smaller than for party preference (−1.85, 95 percent confidence interval = −2.03 to −1.68), which is the strongest covariate. In summary, our results show the SWE question has a statistically significant negative priming effect on the SWD item, and this effect is meaningful in size. Importantly, question order affects the measurement of SWD but not that of SWE.

Table 1. Main Results from Study 1.

Note: SWD = satisfaction with democracy; SWE = satisfaction with the state of the economy.
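The two statistics reported above can be illustrated with a short sketch. It rests on assumptions: the cell counts are reconstructed by rounding the reported shares (56.8 and 66.3 percent) against the reported group sizes (502 and 498), which ignores any item nonresponse, and the chi-square test is computed without a continuity correction. The pooled-standard-deviation form of Cohen's d is the textbook definition and may differ from the exact estimator used in the study. The function names are hypothetical.

```python
import math
from statistics import mean, variance

def chi_square_2x2(a, b, c, d):
    """Pearson chi-square (no continuity correction) for the table [[a, b], [c, d]]."""
    n = a + b + c + d
    rows, cols = (a + b, c + d), (a + c, b + d)
    observed = ((a, b), (c, d))
    chi2 = 0.0
    for i in range(2):
        for j in range(2):
            expected = rows[i] * cols[j] / n
            chi2 += (observed[i][j] - expected) ** 2 / expected
    # Upper tail of a chi-square with 1 degree of freedom: erfc(sqrt(x / 2)).
    p_value = math.erfc(math.sqrt(chi2 / 2))
    return chi2, p_value

def cohens_d(group_a, group_b):
    """Standardized mean difference using the pooled sample standard deviation."""
    n_a, n_b = len(group_a), len(group_b)
    pooled_var = ((n_a - 1) * variance(group_a)
                  + (n_b - 1) * variance(group_b)) / (n_a + n_b - 2)
    return (mean(group_a) - mean(group_b)) / math.sqrt(pooled_var)

# ASSUMPTION: counts reconstructed from the reported shares and group sizes.
control_n, treatment_n = 502, 498
control_dissat = round(control_n * 0.568)
treatment_dissat = round(treatment_n * 0.663)

chi2, p = chi_square_2x2(
    control_dissat, control_n - control_dissat,
    treatment_dissat, treatment_n - treatment_dissat,
)
print(f"chi2 = {chi2:.2f}, p = {p:.3f}")
```

Under these assumptions the statistic should land close to the reported χ² = 9.51. `cohens_d` needs respondent-level answers, which are not reproduced here, so it is shown only as a definition.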

Contrast Effect

In the case of priming (i.e., when the SWE question is asked first), the difference between SWD and SWE means becomes significant. The mean value of SWD (3.159) is lower than the mean value of SWE (3.438). Conversely, in the group with no priming, we find no significant difference. This is potential evidence for a contrast effect: the distance between the two indicators increases in the primed group.
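In the primed group, the reported gap is 3.438 − 3.159 = 0.279 scale points. The text does not state which test was used to compare the two means, so the following is only one plausible approach: because both items are answered by the same respondents, a paired comparison of within-respondent differences is a natural choice. The data below are hypothetical and the function name `paired_t` is an assumption.

```python
import math
from statistics import mean, stdev

def paired_t(first, second):
    """Mean within-respondent difference (second - first) and its t statistic."""
    diffs = [s - f for f, s in zip(first, second)]
    se = stdev(diffs) / math.sqrt(len(diffs))
    return mean(diffs), mean(diffs) / se

# HYPOTHETICAL 0-10 answers from five primed respondents (not the study's data).
swd = [3, 2, 4, 3, 1]
swe = [4, 3, 4, 5, 2]
gap, t_stat = paired_t(swd, swe)
print(f"mean SWE - SWD gap = {gap:.2f}, t = {t_stat:.2f}")
```

Running the same comparison separately in the primed and not primed groups would show whether the gap between the two items appears only under priming.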

Replication

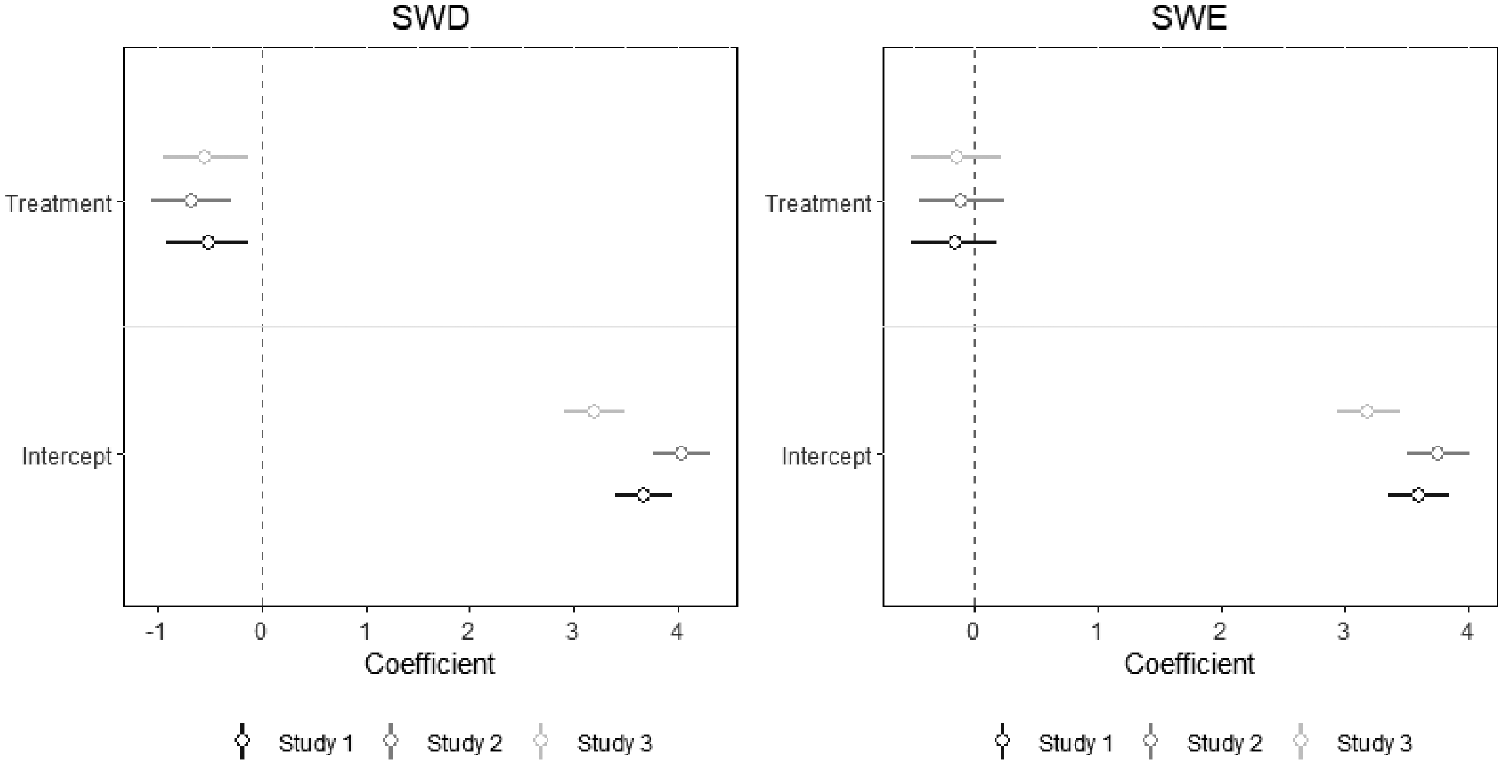

We replicated our study in March 2022 and May 2022 on samples with 1,200 and 920 respondents, respectively. As indicated in Figure 2, both studies 2 and 3 confirm the results of study 1. In both studies, we find that priming has a substantial negative effect on the mean value of SWD. We also find evidence for a contrast effect. Similar to study 1, question order does not affect SWE (see Appendix B in the online supplement). The fact that we were able to replicate the main findings of study 1 on a sample that is not representative of the population and that was collected using a different data collection technique (see study 3) strengthens the robustness of our results.

Figure 2. The effect of treatment group membership on satisfaction with democracy (SWD) and satisfaction with the state of the economy (SWE).

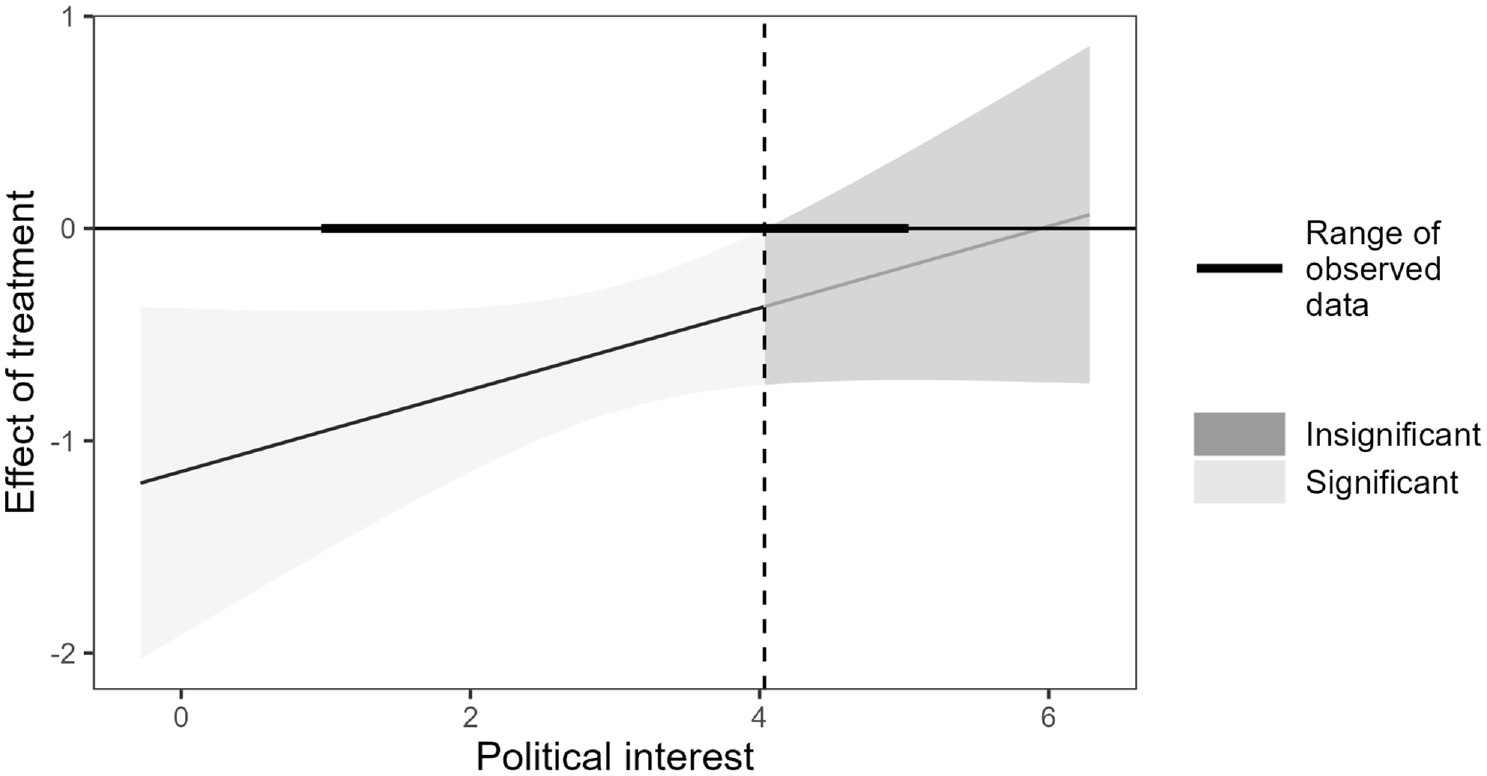

Subgroup Treatment Effects

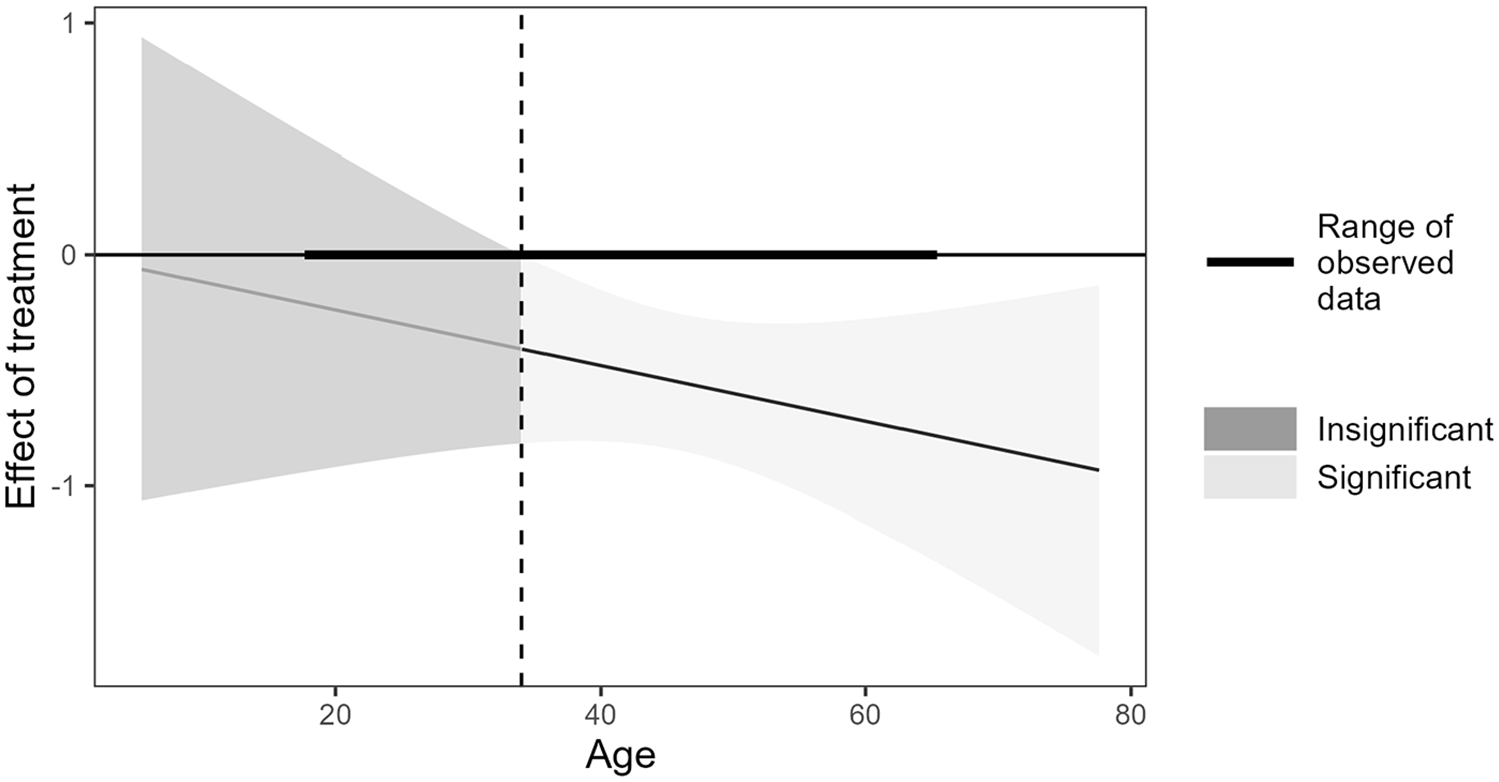

To further investigate the robustness of our results, we searched for heterogeneous treatment effects. First, we built a simple linear regression model explaining SWD with question order (treatment) and respondent features such as age, gender, level of education, place of residence, party preference, and political interest. Second, we interacted question order with each covariate, one at a time. In these models, a significant interaction term means the effect of the treatment varies across specific groups of respondents. We found no statistically significant effects for gender, level of education, place of residence, or party preference. For age and political interest, the Johnson-Neyman plots (Brambor, Clark, and Golder 2006) for study 1 reveal that, first, the effect of treatment does not change for the youngest group, but it slightly increases as respondents get older (see Figure 3), and second, for people with stronger political interest we see a small decrease in treatment effect, which eventually stabilizes after interest level 4 (out of 6; see Figure 4). However, these subgroup effects are inconsistent across the three studies: studies 2 and 3 show the effect of the priming event decreases (in contrast to study 1, in which it increases) with increasing age, and the effect becomes stronger with higher levels of political interest. Although the effect of the treatment only slightly changes with age and political interest, the potential heterogeneity of the effect further complicates the puzzle and undermines the comparison of individuals even within the same country.

Figure 3. Johnson-Neyman plot of treatment and age.

Figure 4. Johnson-Neyman plot of treatment and political interest.
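The logic behind such plots can be sketched as follows. In a model with a treatment-by-moderator interaction, the treatment effect at moderator value z is the treatment coefficient plus z times the interaction coefficient, and its standard error combines both variances and their covariance. The coefficients and (co)variances below are hypothetical placeholders, not estimates from the studies, and the function name is an assumption.

```python
import math

def conditional_effect(b_treat, b_inter, var_treat, var_inter, cov, z):
    """Treatment effect and its standard error at moderator value z.

    Model: SWD = b0 + b_treat*T + b_mod*Z + b_inter*T*Z + error.
    """
    effect = b_treat + b_inter * z
    se = math.sqrt(var_treat + z ** 2 * var_inter + 2 * z * cov)
    return effect, se

# HYPOTHETICAL coefficients and (co)variances, for illustration only.
est = dict(b_treat=-0.2, b_inter=-0.01, var_treat=0.04, var_inter=0.0001, cov=-0.001)

for age in (20, 35, 50, 65):
    effect, se = conditional_effect(z=age, **est)
    low, high = effect - 1.96 * se, effect + 1.96 * se
    flag = "CI excludes 0" if low > 0 or high < 0 else "CI includes 0"
    print(f"age {age}: effect = {effect:.2f} [{low:.2f}, {high:.2f}] ({flag})")
```

A Johnson-Neyman plot draws this conditional effect and its confidence band over the full range of the moderator and marks the region where the band excludes zero.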

Conclusions

This study focused on question-order effects in measuring SWD. Specifically, we examined whether the relative position of the SWE question affects responses regarding SWD. Our question was informed by the ESS’s core questionnaire, in which the SWD question is embedded into a battery of satisfaction measures. We conducted three independent split-ballot experiments in Hungary.

On the basis of our analysis, we draw four conclusions. First, we find a significant and substantial negative priming effect that possibly leads to a systematic underestimation of SWD. Second, we find no question-order effect in the measurement of SWE. Third, the analysis reveals a contrast effect: when the SWD question is primed, the difference between SWE and SWD means increases. Prior scholarship explains this with the maxim-of-quantity principle, which posits that if two questions overlap to some extent, respondents try to provide new information to avoid redundancy. Our data are not suited to confirm this mechanism in our samples, but we find this hypothesis appealing, and future research could zoom in on the psychological processes that establish the contrast effect in the context of measuring satisfaction. Fourth, we find indications of heterogeneous treatment effects in our data. This means question-order bias may complicate comparison of individuals’ SWD responses.

On the basis of our findings, we have two recommendations regarding questionnaire design. First, in countries similar to Hungary concerning the materialistic understanding of democracy, which puts SWD and SWE in a whole versus part dynamic, we recommend that the SWD question precede the SWE item. Second, in countries where we do not know if there is a question-order bias, the order of satisfaction items should be randomized. Randomization also offers a great opportunity to reveal the presence of such bias. Further research should identify other sources of question-order bias in the satisfaction battery. Satisfaction with the government is a strong candidate here. We already know a great deal about how party preference affects SWD through winning and losing elections (Bernauer and Vatter 2012; Blais and Gélineau 2007; Blais, Morin-Chassé, and Singh 2017; Curini, Jou, and Memoli 2012; Singh 2014). The item on satisfaction with the government asked before the SWD question may very well activate the feeling of winning/losing and consequently widen the winner-loser gap in SWD. We believe that our recommendations will assist ongoing and future data collection efforts (comparative or single-country studies) in developing and integrating a battery of satisfaction items into questionnaires.
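The second recommendation could be implemented along the following lines. This is a minimal sketch, assuming a survey platform that lets the designer set item order per respondent and store it with the response. The item codes are the ESS variable names quoted earlier, while the function names and the seeding scheme are hypothetical.

```python
import random

# ESS variable names for the four satisfaction items quoted earlier.
ITEMS = ["STFLIFE", "STFECO", "STFGOV", "STFDEM"]

def randomized_order(respondent_id, seed=0):
    """Return a reproducible, respondent-specific ordering of the items."""
    rng = random.Random(f"{seed}-{respondent_id}")
    order = ITEMS[:]
    rng.shuffle(order)
    return order

def swd_primed_by_swe(order):
    """True if the economy item is shown before the democracy item."""
    return order.index("STFECO") < order.index("STFDEM")

order = randomized_order(respondent_id=17)
print(order, swd_primed_by_swe(order))
```

Storing the realized order (or an indicator such as `swd_primed_by_swe`) alongside the answers is what allows analysts to test for question-order bias after fieldwork.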

Although it was outside the scope of our study, our results hint at a potential measurement validity concern regarding SWD. We urge authors to be aware of and evaluate the magnitude of this problem in the context of their research. If authors are interested in the level (or mean) of SWD, the priming effect presents a significant problem. At the same time, in the case of multivariate models, the gravity of the problem depends on the aim of the modeling exercise. Importantly, we recommend that authors still rely on the rich and high-quality data the ESS offers. We hope our study helps researchers use the data more comprehensively.

Finally, a few words on the generalizability of the results are in order. Our case selection rests on the observation that Hungarians’ understanding of democracy is demonstrably materialistic (see Figure 1). In countries where respondents are better able to differentiate between democracy and the state of the economy, and hence the overlap between the SWD and SWE items is smaller, the priming effect may be less prevalent. However, we suspect our findings travel well to countries where citizens’ attitudes toward democracy are similar to those of Hungarians, including new democracies. Furthermore, we presented a case of overall dissatisfaction: on average, respondents were rather dissatisfied with the state of both the economy and democracy. The dissatisfaction with the economy sets satisfaction on a downward spiral that leads to even more dissatisfaction with democracy. However, the question of whether there is a similar substantial bias in the case of more satisfied nations remains open. Should our findings not travel to other political contexts, we are presented with an even bigger puzzle. If the priming effect varies across countries, it may substantially hamper cross-country comparisons. Together with problems of intracountry comparison revealed by the heterogeneous treatment effects, this should put researchers on guard when designing questionnaires and using comparative data.

Supplemental Material

Supplemental material, sj-docx-1-smx-10.1177_00811750241254363, for Question-Order Effect in the Study of Satisfaction with Democracy: Lessons from Three Split-Ballot Experiments by Zsófia Papp, Pál Susánszky, and Andrea Szabó in Sociological Methodology

Footnotes

Acknowledgements

We thank Levente Littvay for useful and constructive feedback.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Zsófia Papp is a recipient of the János Bolyai Research Scholarship and the Bolyai+ Scholarship. This work was supported by the National Research, Development and Innovation Fund of Hungary (FK131569, FK135274, and K119603).

Supplemental Material

Supplemental material for this article is available online.
