Abstract

In mixed methods approaches, statistical models are used to identify “nested” cases for intensive, small-

Keywords

The literature on “mixed methods” in political science continues to expand and has developed a growing practical tool kit for combining research on the “large-

This article shows that due to this tension, standard mixed methods approaches promise more in terms of identifying the “right” cases than they can deliver. This is largely due to the discrepancy between the random components of large-

These broader inferential challenges are illustrated through a discussion of standard case choice prescriptions for the investigation of causal mechanisms. We notably show that recommendations to choose “typical” or “onlier” cases do not guarantee representativeness in terms of causal effects. The potential inferential mistakes resulting from this include false negatives (or type II errors), when a causal process is absent or weak in a specific case, a finding that is then wrongly generalized to the wider population. Especially when a case study does not test specific ex ante hypotheses, it can also generate false positives (or type I errors), when a causal mechanism is (correctly) identified in the case at hand, but its presence is wrongly attributed to the wider population.

Contrary to established practice, we show that choosing reinforcing outlier cases, where an outcome is stronger than predicted in the LNA, is appropriate for testing preexisting hypotheses on causal mechanisms, as it reduces (though does not eliminate) the risk of false negatives. We also argue that when causal mechanisms are investigated inductively, researchers face a choice between “onlier” and reinforcing outlier cases that represents a trade-off between false negatives and false positives. We demonstrate that the inferential power of nested research designs can be substantially increased through paired comparisons of nested cases, especially if our theory about the causal process allows us to choose contrasting outliers.

We also show that similar rules apply for research designs aiming to identify mechanisms that account for reverse causality; these, however, should focus on “attenuating” outliers, that is, cases with weaker than predicted outcomes. Finally, while existing prescriptions to use outlier cases to investigate measurement error and (nonconfounding) omitted variables are basically correct, conclusions generalized from single case studies on such issues need to be treated with caution. The same applies to the choice of “high leverage” cases for the identification of unobserved confounders.

This article first reviews standard prescriptions for model-led case choice in the mixed methods literature. It then investigates the implicit assumptions about causal effects in individual cases that this literature makes. Reanalyzing statistical models from published studies as well as from Monte Carlo simulations, it shows that LNA research allows no reliable conclusions about causal effects in individual cases. It outlines rules for minimizing the inferential risks this uncertainty creates and shows that its arguments and rules work for a variety of LNA modeling approaches and are relevant for a wide range of inferential objectives in case study research.

Literature Review

While the immediate function of case study research is the production of internally valid inferences, much of the social sciences continues to use case studies for the investigation of generalizable patterns (Elman, Gerring, and Mahoney 2016; Herron and Quinn 2016; Lieberman 2005). The grounds for choosing cases for generalization in the older methods literature are relatively hazy, focusing on qualitative judgments of specific cases as “least likely” or “most likely” environments for testing a given hypothesis (Eckstein 1975). During the last two decades or so, methodologists have developed more formal, quantitative methods for choosing cases for potential generalizability. The nesting of a small number of case studies within large-

The purposes underlying formalized, nested case choice include the detection of measurement error, investigation of causal heterogeneity and scope conditions, identification of omitted variables, and sometimes, ambitiously, the estimation of causal effect sizes (Gerring and Cojocaru 2016; Herron and Quinn 2016; Seawright 2016b). Finally, most prominently, formal case choice is used to identify cases best suited for the investigation of causal mechanisms that underlie the effects established in large-

Selection rules differ by purpose: Deviant cases (i.e., ones badly explained by the large-

Onliers or “typical” cases, by contrast, are occasionally recommended for estimating causal effect sizes (Herron and Quinn 2016), but mostly to investigate causal mechanisms, usually through some variant of within-case “process tracing” (Brady and Collier 2010; George and Bennett 2005; Lieberman 2005). 1 For such typical case choice, we are also usually advised to pick cases in which the model expects an independent variable to produce a particularly strong effect (Barnes and Weller 2014; Lieberman 2005; Seawright 2016a). Although the literature typically uses examples from observational LNA, the logic of nested case choice can also be applied to experimental methods (Seawright 2016b).

Seawright (2016b) has recently argued, against existing literature, that choosing deviant or outlier cases can also make the detection of a causal process linking already observed causal and outcome variables more likely. His argument, related to but distinct from the ones in this article, will be discussed in more detail below.

Existing Critiques of Nested Research Designs

Mixed methods and nested research designs more specifically have been criticized from several perspectives. Some scholars have argued that there is a fundamental incompatibility between large-

Another critique of nested research is closer to the arguments in this article: That biased or incomplete specification of the LNA model can lead to the incorrect identification of individual cases as onliers or outliers, leading to an SNA with a wrong and potentially misleading focus (Rohlfing 2008). To this, we would add that there is an unresolved discussion over what makes a “good” model (Achen 2005). At a minimum, which factors should be included in an LNA model will depend on the changing state of our theories.

This uncertainty over specifying the right model is compounded by an issue discussed in the following section: The fact that even if we have a model correctly describing the average causal effects in a given sample, we cannot be sure that in all

Rethinking Case Study-Based Generalization under Causal Heterogeneity

Most statistical models in political science cannot make reliable point predictions about causal effects in individual cases, even if the model itself is estimated with a high degree of precision, that is, when the standard errors of its coefficients are small. Experimental research designs acknowledge this by explicitly focusing on the average treatment effect (ATE), that is, without inferring the impact that a treatment has had on individual observations, the nontreatment counterfactual of which is always unobservable (Holland 1986). Experimentalists can, at best, identify group-level heterogeneity of treatment effects (Imai and Ratkovic 2013). The same is, of course, true of observational studies (Rubin 2005) but not sufficiently recognized in much of the mixed methods literature (see Seawright 2016a, for an exception). 2

The difference between group-level average effects and individual effects is captured intuitively by the distinction between a statistical model’s confidence interval and its prediction interval. Even models with tight confidence intervals usually have wide prediction intervals, meaning they cannot predict outcomes for individual observations with any precision. We illustrate this point in Online Appendix Section 1 (which can be found at http://smr.sagepub.com/supplemental/) with Monte Carlo data and by replicating two prominent pieces of research. If effects for individual observations vary, however, then the underlying mechanisms producing these effects can be assumed to vary too (see the next section for a more detailed discussion of the link between effects and mechanisms).
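The gap between the two intervals can be illustrated with a minimal Python sketch. It is not the analysis from the Online Appendix: the data generation process (intercept 1, slope 0.5, unit-variance noise), the sample size, the evaluation point, and the seed are all assumptions chosen for illustration, and a normal approximation replaces the exact t quantile.

```python
import numpy as np

rng = np.random.default_rng(0)  # arbitrary seed

# Hypothetical data: y = 1 + 0.5 * x + noise with unit standard deviation.
n = 10_000
x = rng.normal(size=n)
y = 1.0 + 0.5 * x + rng.normal(size=n)

# Ordinary least squares computed by hand.
X = np.column_stack([np.ones(n), x])
beta, *_ = np.linalg.lstsq(X, y, rcond=None)
resid = y - X @ beta
sigma2 = resid @ resid / (n - 2)            # residual variance estimate
XtX_inv = np.linalg.inv(X.T @ X)

# Evaluate both intervals at one point, x = 1.
x0 = np.array([1.0, 1.0])                   # [intercept term, x value]
leverage = x0 @ XtX_inv @ x0
se_mean = np.sqrt(sigma2 * leverage)          # uncertainty about the average outcome
se_pred = np.sqrt(sigma2 * (1.0 + leverage))  # uncertainty about one individual outcome

z = 1.96  # normal approximation to the 95 percent quantile, adequate for large n
ci_width = 2 * z * se_mean
pi_width = 2 * z * se_pred
print(f"95% confidence interval width: {ci_width:.3f}")
print(f"95% prediction interval width: {pi_width:.3f}")
```

The confidence interval shrinks as the sample grows, while the prediction interval is bounded below by the residual variance, so adding observations does not make individual outcomes more predictable.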

Advocates of “nested” research designs have a reply to this: That when choosing individual cases from a larger population, we do typically know their individual outcome score and the error/residual. We do

In this interpretation, the error term captures the unobserved variables and stochastic forces that work to “push” a case off the regression surface. The remaining difference to the population mean is explained with the systematic factors captured through independent variables and their coefficients. Critically, however, a given independent variable’s effect on the outcome in an individual case is inferred from the model, which estimates the slope of the particular

This is not a problem if we assume that causal effects of our independent variables are invariant across

We can call this the assumption of perfect causal homogeneity. It is distinct from the typical assumption of causal homogeneity, which merely posits that the same causal regularities are at work across a given sample, without implying that they will always work out at the same predicted effect sizes

As an example, let us consider Collier and Hoeffler’s (2004) oft-cited regression model predicting civil war onset as a function of various structural variables, including commodity exports, population size, and gross domestic product (GDP) growth (replicated in Online Appendix Section 1, which can be found at http://smr.sagepub.com/supplemental/). Perfect causal homogeneity would mean that in

Perfect causal homogeneity cannot be directly proven. It has an interesting implication for methods of “nested” case choice, however: It is not clear how choosing “onlier” cases with a small error term helps in identifying “typical” cases, since we assume, after all, that within our case universe, causal effects are constant and homogeneous. Cases with large residuals simply are subject to larger residual error processes that have nothing to do with the causal effect we are interested in, which should still be at work with the same force as in all other cases. As a result, we can in principle observe the same underlying causal processes across all cases. To go back to the Collier and Hoeffler example, perhaps prudent leadership was an idiosyncratic contributor to the error term that saved a given country with negative GDP growth rates from civil war, but war was still objectively more likely to a certain, fixed extent because of the economic shock.

Some authors do indeed discount the recommendation to select “onliers” and simply choose cases where the independent variable of interest is likely to have a particularly large impact according to the LNA model. Teorell (2010) in his study of global democratization takes this approach and explicitly pays no heed to his cases’ residual (p. 184). Barnes and Weller (2014) similarly recommend focusing on cases where the addition of a given independent variable in a statistical model leads to a maximum reduction (but not a minimization) of the residual. But is this a defensible approach?

What If Causal Effects Vary from Case to Case?

Under perfect causal homogeneity, residuals do not matter. By focusing on onliers rather than simply high-impact cases, authors like Gerring and Lieberman seem to implicitly assume that we cannot take such homogeneity for granted. This article will argue that this is a reasonable, conservative position, based on standard assumptions of the potential outcomes framework.

Both Gerring (2007a:147) and Lieberman (2005:448) are aware that statistically representative cases are not necessarily theoretically representative and that their small residual can be caused by unobserved factors (be they unobserved variables or simple stochastic fluctuation). This implies that the residual measured in the statistical model does not capture all case-specific idiosyncrasies. This however can only be the case if the causal effects estimated in the rest of the model themselves vary: If they were constant and deterministic across all cases, the residual would always pick up

The implicit assumption that causal effects are not homogenous across cases seems intuitively plausible if we consider how we think about case-level causal mechanisms in research practice. Take the relationship between years of schooling and countries’ economic growth as an example (Barro 2001). It is obvious that perfect causal homogeneity is unlikely.

We can easily imagine unsystematic, case-specific ways in which the impact of schooling on economic activity itself could vary and which no general LNA model can capture: Perhaps a country has strong and productive traditions of artisanship that are undermined through academic training; or perhaps important parts of society—such as ethnic minorities—might perceive state policies to increase years of schooling as unwelcome intervention, resulting in longer but ineffective schooling. More generally, the effect of years of schooling is likely to just be subject to irreducible stochastic variation across cases.

As important, there are likely to be

If unobserved factors that modulate treatment effects are not systematically correlated with any of the observed independent variables, this does not necessarily bias the LNA model, as their effects average out. They

This means that at least some of the case residuals in a model might be explained by case-level variation in the causal effects themselves rather than by unrelated errors. This is indeed a foundational assumption of the potential outcomes framework, where treatment effects are assumed to vary across individual cases and causal heterogeneity can be modeled at best for larger groups of cases (Holland 1986; Imai and Ratkovic 2013).

To capture this issue theoretically, we propose a distinction between

In the language of the potential outcomes framework, the CPE captures treatment heterogeneity across individual observations. In biomedical statistics, the phenomenon is also known as “subject-treatment interaction” (Poulson, Gadbury, and Allison 2012), which the fundamental problem of causal inference prevents us from measuring directly. The background error, in turn, is best thought of as a combination of the effects of unobserved variables and of pure stochastic noise on the outcome variable. It operates separately from the core causal effect we are interested in.

To go back to the example on education and growth, there could be a negative CPE that reduces the direct effect of education on growth in one particular case because formal education leads to a decline of productive artisanal traditions in that country. A background error could be any other unrelated (and unmeasured) case-specific factor pushing growth up or down that is independent of education, perhaps the quality of leadership or weather conditions.

How does this relate to causal mechanisms? The background error does not affect the operation of any causal mechanism between

Allowing for CPEs means that case-study-based generalization about broader causal patterns and mechanisms is difficult

We should mention that this interpretation of causal mechanisms remains in a frequentist, stochastic framework that corresponds to a linear, additive model of causality in which many variables and all probabilities are measured in continuous terms and in which there are unexplained residuals. This is not easily compatible with a prominent approach to qualitative case research in which causal relationships are expressed in terms of necessary and sufficient conditions, in which causal accounts are meant to be exhaustive and in which, given a set of causal conditions, there is no clear place for different effect sizes (Goertz and Mahoney 2012; Schneider and Rohlfing 2013).

This is a tension that all nested research designs that depart from a statistical model face. The nature of case study research and process tracing in such nested designs will therefore have to be different from qualitative approaches focused on exhaustive constellations of causes: Instead of aiming at a complete account of the causal pattern that led to a specific outcome, it will primarily aim to identify one pathway that

In the approach discussed here, the statistical model comes first and we allow for unexplained (and potentially unexplainable) treatment heterogeneity of individual observations in the shape of CPEs. This has a key implication for nested research designs: Unlike the assumption of perfect causal homogeneity, the presence of CPEs does in fact allow us to justify the choice of onliers as typical cases. Consider that the background error by definition is not correlated with the CPE, and an observation’s residual is a combination of the two. They can offset each other, which will typically result in a smaller residual, or can add up, which will tend to generate a larger residual. The further away a case lies from the regression surface, the more likely it is to have large causal process and background errors that work in the same direction. This means that for such cases, CPEs will on average be biased in one direction and, critically, be larger than for onlier cases, where their sample average is zero and their individual values (positive or negative) will be smaller.
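This offsetting logic can be written out explicitly under an added assumption that is ours rather than the text's: that the CPE $c_i$ and the background error $b_i$ are independent, mean-zero, normally distributed variables with variances $\sigma_c^2$ and $\sigma_b^2$, and that the residual $r_i$ is their sum. The symbols are introduced here for illustration only. Standard results for jointly normal variables then give:

```latex
\begin{align*}
r_i &= c_i + b_i, \qquad c_i \sim N(0, \sigma_c^2), \quad b_i \sim N(0, \sigma_b^2), \quad c_i \perp b_i \\
\mathbb{E}[c_i \mid r_i] &= \frac{\sigma_c^2}{\sigma_c^2 + \sigma_b^2}\, r_i \\
\operatorname{Var}(c_i \mid r_i) &= \frac{\sigma_c^2 \, \sigma_b^2}{\sigma_c^2 + \sigma_b^2}
\end{align*}
```

With equal variances, the expected CPE is half the residual and the conditional variance is half the CPE's unconditional variance. The expected CPE therefore grows in absolute size with the residual, while a case lying exactly on the regression surface still has a nonzero spread of possible CPEs. As $\sigma_c^2$ approaches zero, the residual carries no information about the causal effect, which corresponds to the case of perfect causal homogeneity.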

In the education-growth example, cases with a “typical” growth outcome are more likely to have both a smaller CPE of the education effect and a smaller background error, therefore making it more likely that the case-level effect of education on growth is closer to what the model predicts. For an “attenuating” outlier with a smaller than predicted growth outcome, by contrast, it is likely that unrelated background error processes have helped to push growth down, but the size of the education effect itself is also more likely to be below average and thereby unrepresentative.

In this sense, onlier cases are indeed more likely to be “typical,” that is, to be subject to individual CPEs that are smaller and cluster around zero. By choosing onliers, we can reduce the risk of picking idiosyncratic cases in which the causal effect of interest is unusually weak, strong, or absent. But how good an insurance is this? We use a simple Monte Carlo simulation of a bivariate correlation to test this.

We first investigate the absolute CPEs for onliers as compared to outliers of different magnitudes. We then specifically assess the types of cases where

A Simulation of Causal Process Errors

The data generation model chosen is arbitrary but helps to illustrate the measurement issues at hand, namely, the range of causal process and background errors that can result from different case choice rules. We generate 10,000 observations on the basis of the formula

The error/residual results from adding two individual, uncorrelated, smaller error terms; one for the causal process and one for the background error. Each of the two has a standard deviation of

Note that the point here is the conceptual illustration of the problem; in practice, CPEs are unobservable in quantitative models. Their importance in a data generation process could be anything from quite small to accounting for most of the error structure; in the latter case, strong individual-level causal heterogeneity makes generalization even more difficult.
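A data generation process of this kind might be sketched in Python as follows. This is a minimal illustration, not the original simulation code: the intercept of 0, the slope of 1, the standard normal distribution of the causal variable, and the seed are assumptions, while the sample size of 10,000 and the standard deviation of 0.71 for each error component follow the text.

```python
import numpy as np

rng = np.random.default_rng(42)   # arbitrary seed
n = 10_000
sd = 0.71                         # standard deviation of each error component

# Assumed for illustration only: intercept 0, slope 1, standard normal x.
x = rng.normal(size=n)
cpe = rng.normal(scale=sd, size=n)          # causal process error
background = rng.normal(scale=sd, size=n)   # background error
y = 0.0 + 1.0 * x + cpe + background

# Fit the bivariate regression; the fitted residual mixes both components.
slope, intercept = np.polyfit(x, y, deg=1)
resid = y - (intercept + slope * x)

print(f"estimated slope: {slope:.3f}")
print(f"residual sd: {resid.std(ddof=2):.3f}")
print(f"corr(residual, CPE): {np.corrcoef(resid, cpe)[0, 1]:.3f}")
```

In the simulation the CPE is stored as a separate variable, which is what allows it to be compared with the residual; in real data only the residual is available.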

For the time being, we assume that there are distinct causal effects at both extreme ends of the causal variable, an implicit assumption shared by much of the nested case choice literature. For the education-growth example, this would imply (reasonably) that both low and high education levels will have observable effects on growth, created by identifiable causal mechanisms. For an alternative scenario where causal effects are simply absent at one end of the causal variable’s value range, see the section on cross-case comparisons below.

Using the above simulation data, what happens if we follow standard rules for identifying “typical” cases for in-depth study of the causal process linking

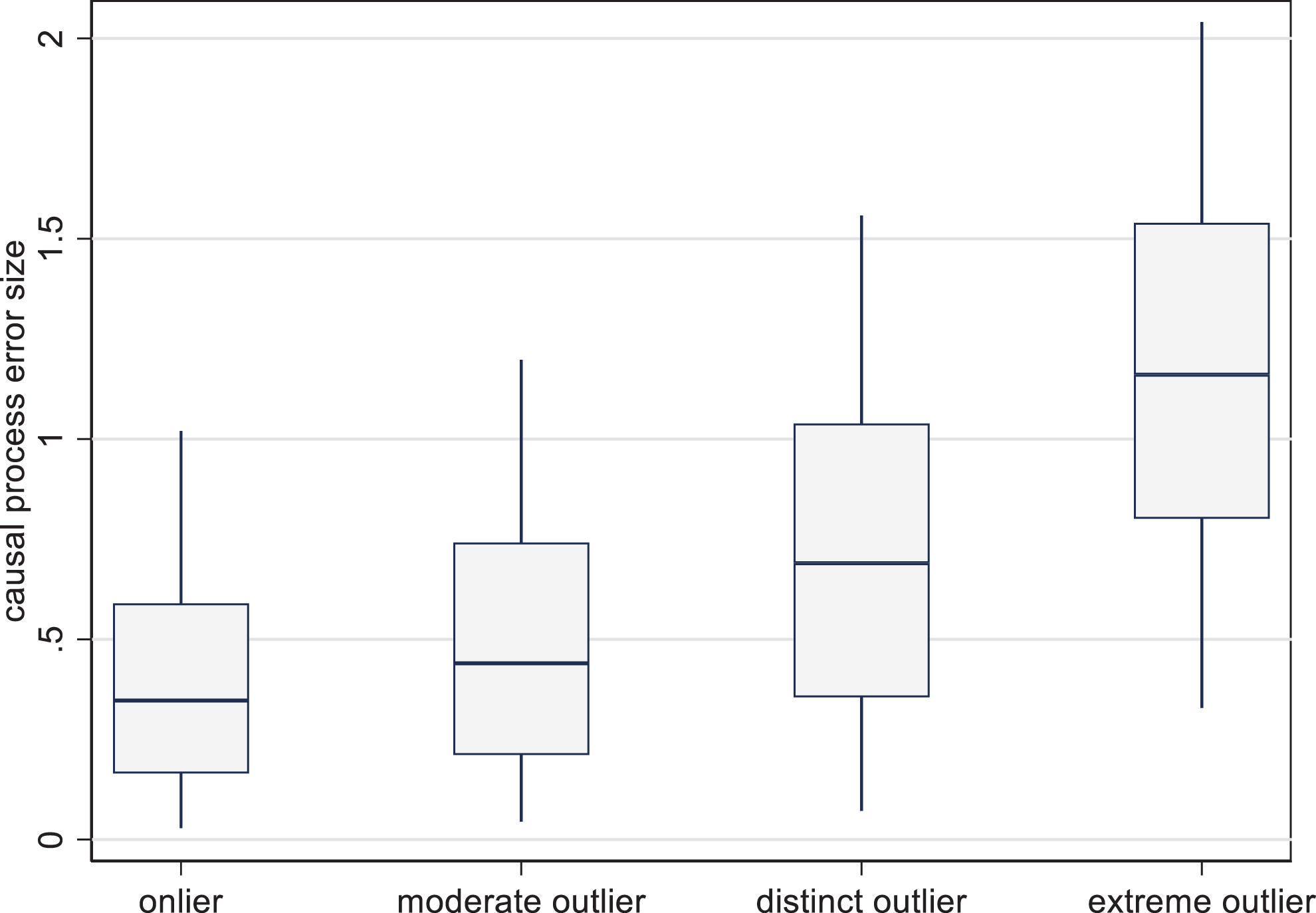

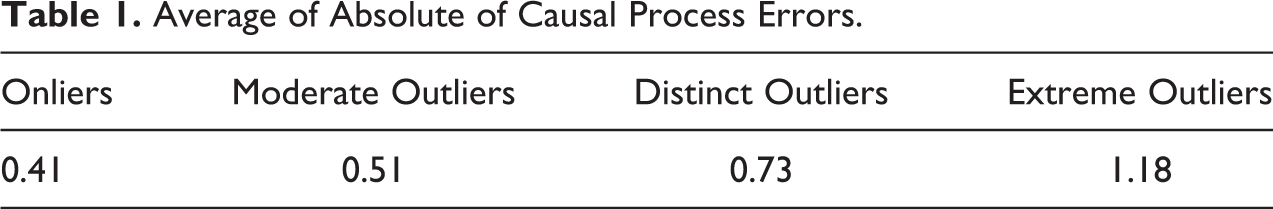

To assess the utility of choosing onlier cases, we compare the average size of the absolute of CPEs for cases chosen according to four different selection criteria: onliers, where the absolute of the case residual is less than half of its standard deviation in the full sample (39 percent of cases); moderate outliers, where the absolute of the case residual lies between half and a full standard deviation (30 percent of cases); distinct outliers, where it lies between 1 and 2 standard deviations (27 percent of cases); extreme outliers, where it lies above 2 standard deviations (4 percent of cases); (due to the large

The box plots in Figure 1 show the distribution of CPEs for different case categories (the boxes contain the central two quartiles of CPE values, while the whiskers contain 95 percent). As we would expect, onliers do indeed have the smallest average CPEs and the most compact distribution of CPEs. Yet their advantage is small: Table 1 shows that the average CPE for onliers lies at 0.41, compared to a sample average of 0.71 (which is

Figure 1. Simulated absolute causal process errors by outlier status.

Table 1. Average Absolute Causal Process Errors.
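A comparison of this kind can be sketched in Python by sorting simulated cases into the four residual categories and averaging the absolute CPE within each. The sketch is an illustration under stated assumptions, not a reproduction of the published table: slope 1, intercept 0, a standard normal causal variable, and the seed are hypothetical choices, and the category labels are shorthand for the definitions in the text.

```python
import numpy as np

rng = np.random.default_rng(42)             # arbitrary seed
n, sd = 10_000, 0.71
x = rng.normal(size=n)                      # assumed distribution of x
cpe = rng.normal(scale=sd, size=n)          # causal process error
background = rng.normal(scale=sd, size=n)   # background error
y = x + cpe + background                    # assumed slope 1, intercept 0

slope, intercept = np.polyfit(x, y, deg=1)
resid = y - (intercept + slope * x)
z = np.abs(resid) / resid.std(ddof=2)       # absolute residual in sd units

categories = {
    "onliers (< 0.5 sd)": z < 0.5,
    "moderate outliers (0.5-1 sd)": (z >= 0.5) & (z < 1.0),
    "distinct outliers (1-2 sd)": (z >= 1.0) & (z < 2.0),
    "extreme outliers (> 2 sd)": z >= 2.0,
}

mean_abs = {}
for name, mask in categories.items():
    mean_abs[name] = float(np.abs(cpe[mask]).mean())
    print(f"{name:30s} share={mask.mean():.2f}  mean |CPE|={mean_abs[name]:.2f}")
```

Exact values depend on the seed and on the assumed parameters, so the output should be read for its ordering across categories rather than for specific numbers.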

More important, however, is that onliers also have substantial average CPEs. To recall, the linear effects of

Conditions for False Negatives

We have so far worried about positive and negative causal errors. What if our concern is not unrepresentative causal effects in general, but only

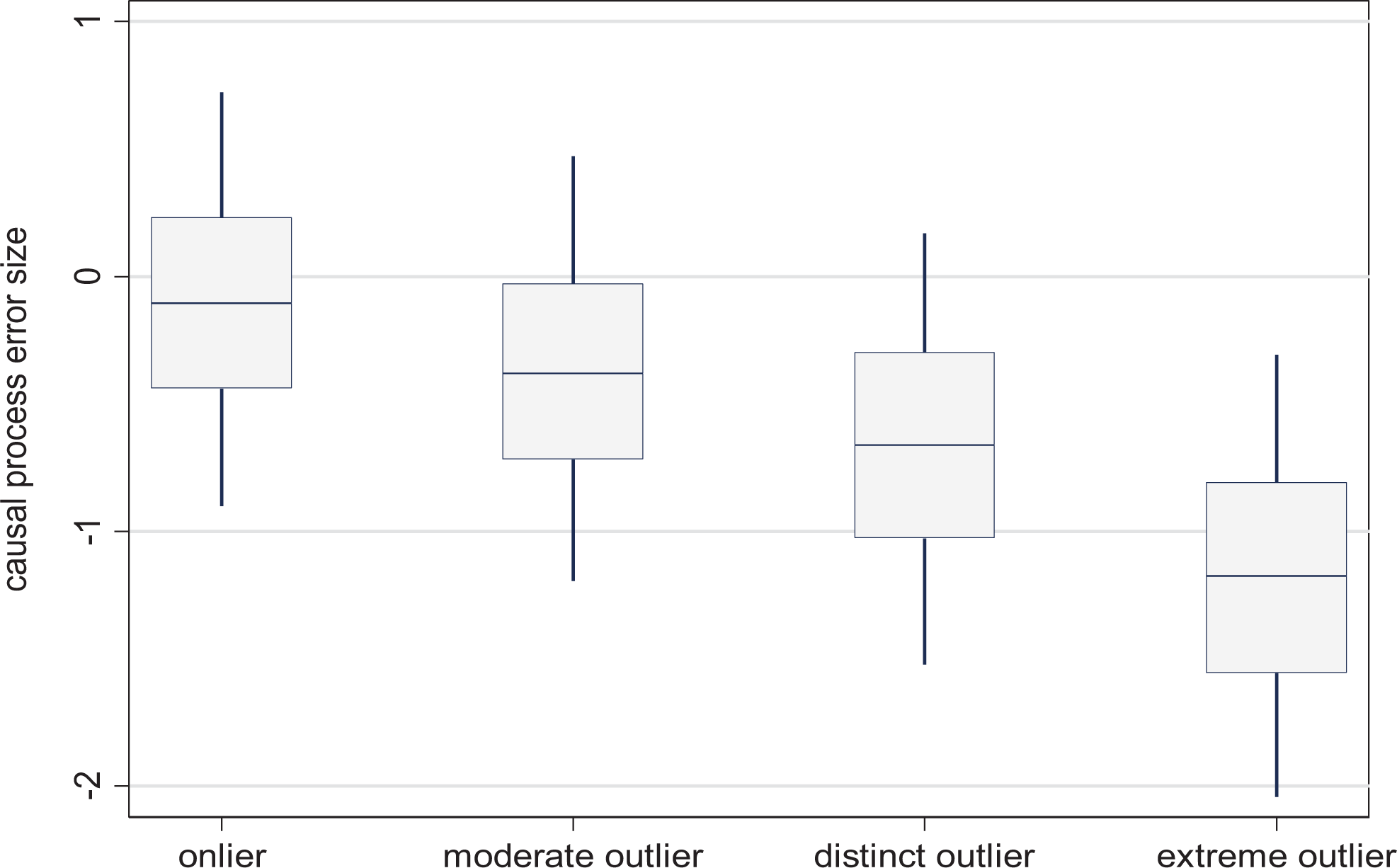

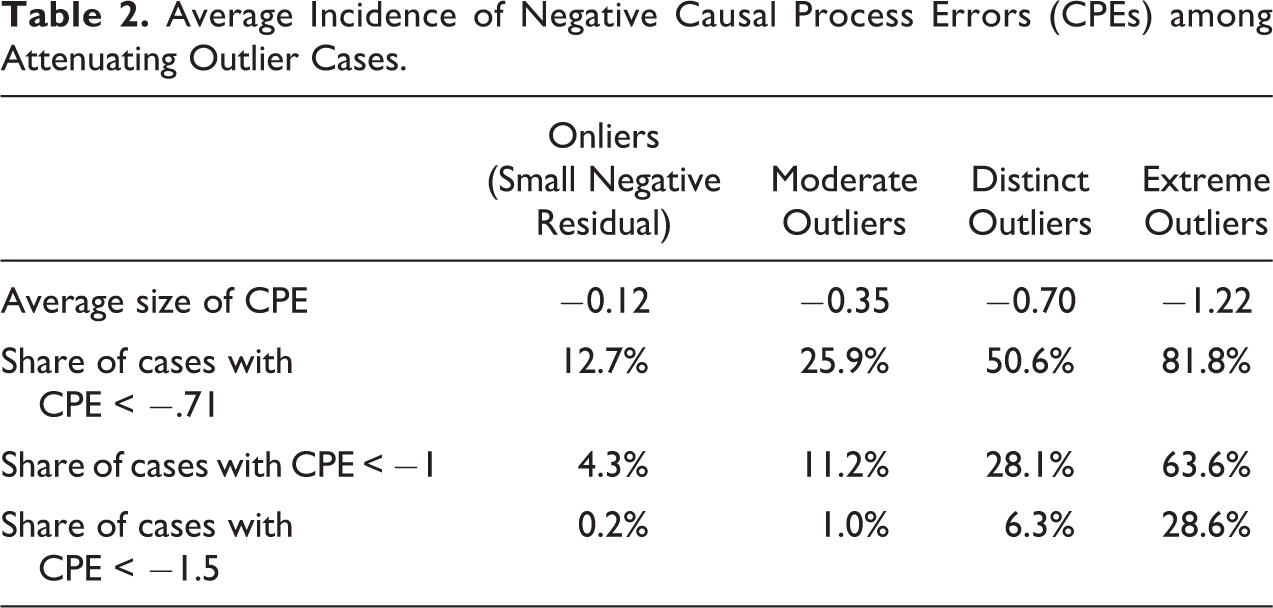

Attenuated effects occur in cases where CPEs

Simulated causal process errors by attenuating outlier status.

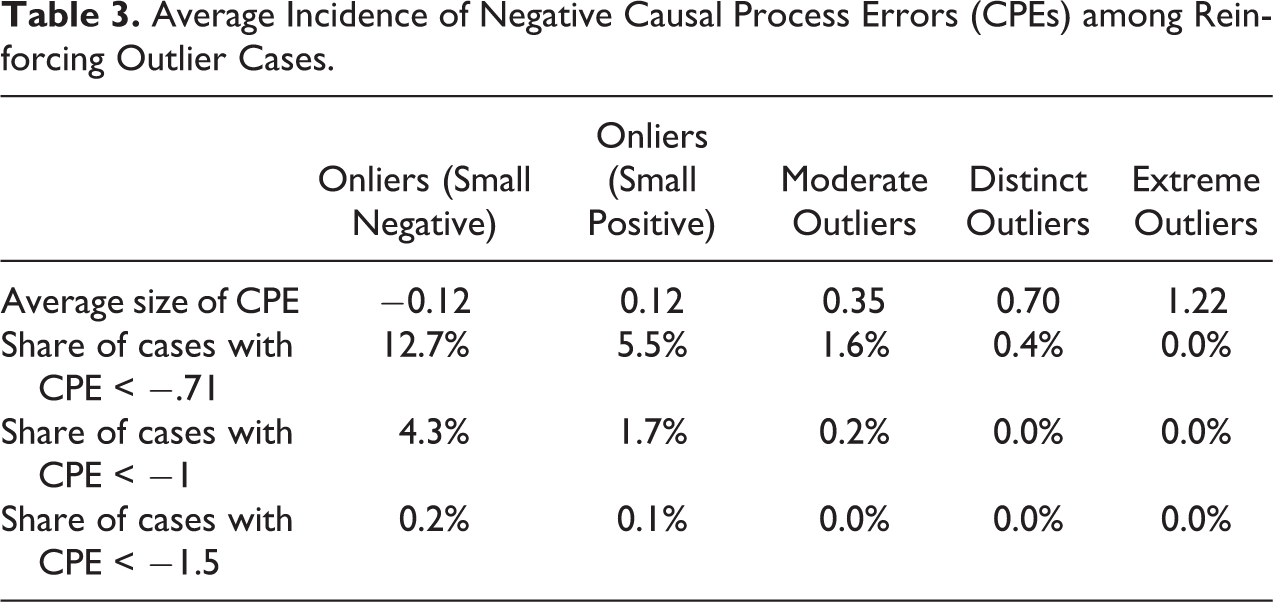

Unsurprisingly, for onliers with small negative residuals between zero and half of a standard deviation, there is little systematic bias in the CPEs, whose average, despite considerable dispersion, is −0.12. The systematic bias becomes much larger for stronger outliers and converges on the average of the absolute of CPEs for extreme outliers from Figure 1, as attenuating CPEs constitute virtually all of the CPEs in these cases.

How frequent are cases in which CPEs substantially reduce causal effects? The bottom three rows of Table 2 show the shares of cases in our four case categories that have CPEs below −0.71 (the CPEs’ standard deviation), −1, and −1.5. With a CPE of −0.71, even a case with an extreme

Table 2. Average Incidence of Negative Causal Process Errors (CPEs) among Attenuating Outlier Cases.

Under our assumptions, 12.7 percent of “high leverage” onlier cases would lose almost half or more of their causal effect due to CPEs, 4.3 percent would lose at least two-thirds, and 0.2 percent would lose their causal effects entirely and typically experience a reverse effect.
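The incidence of strongly negative CPEs among attenuating cases can be approximated with a short Python sketch. It treats cases with negative residuals as attenuating and ignores leverage, which does not affect the shares as long as CPEs are independent of the causal variable, as assumed here. Slope, intercept, the distribution of the causal variable, and the seed are hypothetical; the thresholds are those named in the text.

```python
import numpy as np

rng = np.random.default_rng(42)             # arbitrary seed
n, sd = 10_000, 0.71
x = rng.normal(size=n)                      # assumed distribution of x
cpe = rng.normal(scale=sd, size=n)          # causal process error
background = rng.normal(scale=sd, size=n)   # background error
y = x + cpe + background                    # assumed slope 1, intercept 0

slope, intercept = np.polyfit(x, y, deg=1)
resid = y - (intercept + slope * x)
z = resid / resid.std(ddof=2)               # signed residual in sd units

# Attenuating cases: outcome lower than predicted (negative residual).
bins = {
    "onliers": (z < 0) & (z >= -0.5),
    "moderate outliers": (z < -0.5) & (z >= -1.0),
    "distinct outliers": (z < -1.0) & (z >= -2.0),
    "extreme outliers": z < -2.0,
}
thresholds = (-0.71, -1.0, -1.5)

mean_cpe, share_below = {}, {}
for name, mask in bins.items():
    mean_cpe[name] = float(cpe[mask].mean())
    share_below[name] = [float((cpe[mask] < t).mean()) for t in thresholds]
    shares = ", ".join(f"{s:.3f}" for s in share_below[name])
    print(f"{name:18s} mean CPE={mean_cpe[name]:+.2f}  shares below thresholds: {shares}")
```

Each row reports the mean CPE in a category and the shares of its cases with CPEs below each threshold, so the pattern to look for is how quickly those shares rise as residuals become more negative.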

While we saw above that choosing onliers does not protect much against CPEs per se, compared to attenuating outliers they provide some insurance against large CPEs that substantially reduce or eliminate the

If we flatten the slope of the data generation process to

There are further reasons to believe that false negatives could be more frequent in research practice than in the above “clean” example: In smaller samples, there will often be no convenient onlier case available that offers maximum leverage, creating a trade-off between onlier status and leverage. Model misspecification and mismeasurement further increase the risk of false negatives, as we might pick apparent onliers that are not onliers under a better model. We have also assumed the same standard deviation of CPEs for all values of

In sum, the onlier rule provides reasonable but weak guidance in identifying typical cases and does not guard against false negatives if our LNA model is weak. Substantively, one can think of many reasons why a causal process might not work or even produce the opposite of the expected outcome in specific cases. In the Collier and Hoeffler model, for example, primary commodity exports might in fact reduce the risk of conflict in a case where revenues are judiciously used for patronage and cooptation, a mechanism producing a pacifying effect. At the same time, other factors picked up by the background error, for example, militarization of society or high levels of organized crime, might in turn push the country toward a higher probability of war and hence back onto the regression surface, making it an onlier that is in fact not representative of general causal processes.

Our choice to make the CPE on average as large as the background error is arbitrary. This article’s basic arguments cover all ranges of CPEs, however: If we assume a smaller causal error, we are closer to a world of perfect causal homogeneity, which, as we have seen, offers no justification at all for onlier choices. For larger CPEs than assumed here, the onlier choice remains justified, but any observation—including onliers—will tend to be less representative, making generalization universally difficult. 4 This is equivalent to what happens when a model’s general explanatory fit is lowered as in the above example.

There is no obvious method for identifying the importance of CPEs relative to background errors in a model’s error structure. A researcher can only speculate how sensitive a hypothesized causal mechanism could be to idiosyncratic context factors and stochastic noise that we cannot model. More complex, higher level mechanisms might be more susceptible to context conditions: Macro-level processes like those linking education to growth or natural resources to conflict are more likely to be on the heterogeneous side. Qualitative cross-case comparisons might help researchers acquire a better, though not conclusive, sense of a mechanism’s dependence on idiosyncratic context.

While CPEs can always modulate case-level effect sizes, we have seen that under some conditions, the choice of onlier case studies makes it more likely that we will at least detect the

Above we have looked at attenuating outliers where CPEs are likely to reduce the force of the causal process at hand. The flipside of this finding is that if we choose reinforcing outliers, their CPEs on average are likely to strengthen the causal process at hand. Ceteris paribus, a stronger reinforcing CPE should make process tracing easier due to the larger effect size.

Table 3 shows the incidence of substantial attenuating CPEs for different types of outliers (see Online Appendix Section 2, Figure A.11, which can be found at http://smr.sagepub.com/supplemental/, for the corresponding figure). The results are clear: Even choosing only moderate reinforcing outliers strongly reduces the risks of CPEs that substantially weaken a causal process. Larger attenuating CPEs become unlikely if we choose reinforcing outlier cases. If the aim is to avoid the risk of false negatives, it pays to choose reinforcing outliers. In the education-growth example, this would mean choosing a country with somewhat higher than expected growth, thereby making it more likely that the education effect contributing to this growth is present and visible (if, on average, somewhat stronger than in the general case population).
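The contrast between reinforcing and attenuating cases can be illustrated with a Python sketch that compares, band by band, the share of cases whose CPE falls below the threshold of −0.71. As before, this is a sketch under assumptions rather than the original analysis: slope 1, intercept 0, a standard normal causal variable, and the seed are hypothetical, and the helper `share_attenuated` is introduced here for illustration.

```python
import numpy as np

rng = np.random.default_rng(42)             # arbitrary seed
n, sd = 10_000, 0.71
x = rng.normal(size=n)                      # assumed distribution of x
cpe = rng.normal(scale=sd, size=n)          # causal process error
background = rng.normal(scale=sd, size=n)   # background error
y = x + cpe + background                    # assumed slope 1, intercept 0

slope, intercept = np.polyfit(x, y, deg=1)
resid = y - (intercept + slope * x)
z = resid / resid.std(ddof=2)               # signed residual in sd units


def share_attenuated(mask, threshold=-0.71):
    """Share of cases in `mask` whose CPE falls below `threshold`."""
    return float((cpe[mask] < threshold).mean())


bands = {
    "onlier band (0-0.5 sd)": (0.0, 0.5),
    "moderate (0.5-1 sd)": (0.5, 1.0),
    "distinct (1-2 sd)": (1.0, 2.0),
}

results = {}
for name, (lo, hi) in bands.items():
    reinforcing = (z >= lo) & (z < hi)      # outcome higher than predicted
    attenuating = (z <= -lo) & (z > -hi)    # outcome lower than predicted
    results[name] = (share_attenuated(reinforcing), share_attenuated(attenuating))
    print(f"{name:24s} reinforcing={results[name][0]:.3f}  attenuating={results[name][1]:.3f}")
```

Because the expected CPE has the same sign as the residual, strongly negative CPEs should be rarer on the reinforcing side of every band, which is the asymmetry the case choice rule relies on.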

Table 3. Average Incidence of Negative Causal Process Errors (CPEs) among Reinforcing Outlier Cases.

Lieberman discusses the assessment we need to make when a nested case study does not bear out a hypothesis (Lieberman 2005:448): Is it just an idiosyncratic case subject to specific circumstances or measurement error? Or is something wrong with the hypothesis and perhaps the LNA used to choose the case? Any such discretionary judgment call will be open to potential criticism. That said, idiosyncrasies that suppress causal processes are less likely for reinforcing outliers: If we do not find a hypothesized process there, it is unlikely to exist elsewhere. Reinforcing outliers are also less vulnerable to measurement errors that can undermine the detection of a causal process: If

Conditions for False Positives

Choosing reinforcing outliers makes case-specific effect sizes likely to be somewhat larger than typical. But as noted above, the purpose of case studies is seldom to make point estimates of effect sizes—and in any case, the average

In our definition, the CPE captures case-specific factors that can boost or reduce a causal effect. If these are substantive rather than just stochastic, could a reinforcing outlier lead to false generalizations about the nature of the causal process at hand—a

CPEs with a positive (observable) impact on the outcome are more likely for reinforcing outliers, however, just like attenuating CPEs are more likely for attenuating outliers in Table 2. Processes that create reinforcing CPEs are the ones we would be more likely to wrongly incorporate in our generalizing conclusions about the causal mechanisms at hand. In the case of natural resources and conflict, we might, for example, find that reliance on commodity rents allowed a country’s leadership to disengage from its social constituencies, in turn increasing the likelihood of conflict—but fail to realize that this process was case-specific. We might still be able to identify causal channels linking rents and conflict that are more general, but the risk of identifying idiosyncratic causal channels is higher for reinforcing outliers. We should at a minimum proceed with caution in assessing whether what we have found might “travel” to other cases.

Inductive research on causal mechanisms about a given

The situation is different for case studies that test preexisting hypotheses about a causal process: Unless these hypotheses have been generated by the case at hand, any case will be a reasonable test of their generalizability. It is unlikely that a preexisting hypothesis perchance only applies in one case, that is, that we find a false positive. 7 It is at the very least likely to also apply in some other cases, although we cannot conclude that it is universal or estimate its frequency, which is the domain of large-

Under these circumstances of hypothesis testing, it is most appropriate to choose reinforcing outliers as argued above, as in such cases, general causal processes are more likely to be strong and visible. Reinforcing outliers are also useful for falsification, as they effectively constitute “most likely” cases—if a hypothesis does not apply there, it is less likely to apply elsewhere.

Onliers and attenuating outliers, by contrast, run a substantial risk of yielding a false negative in research designs that investigate existing hypotheses, for reasons identified above. If a hypothesized causal process is absent or substantially weak in such cases, we will not know whether this is due to the case’s idiosyncrasies or because the causal process hypothesis is wrong. 8

In practice, even deductive research designs can create new causal process hypotheses, or new nuances to existing hypotheses, in the process of case research. But if we identify such new dimensions, we should be careful about generalizing from them, as they might constitute part of a reinforcing CPE. As with general inductive research, such theoretical adjustments should ideally be subjected to further tests on other cases or, if possible, a modified LNA. In the context of primary resources and civil war, for example, we might investigate a resource exporting country in which high levels of corruption in the distribution of rents have contributed to the greed and disaffection leading to war. This could lead us to add an interaction between a corruption measure and resource exports in the LNA model.

If what we find appears intuitively plausible for a larger share of cases, the question of whether the case at hand was an onlier should be secondary when deciding on LNA model revisions. If the new specification provides no leverage in the revised LNA, this suggests that the additional nuances we have detected were case-specific. If a revised causal process hypothesis cannot be tested in the LNA, the best way of assessing its generalizability is to study at least one more case for which it then becomes an ex ante hypothesis. In this case, as per the above discussion, a reinforcing outlier should be chosen.

The above discussion highlights the critical importance of a clear research protocol, that is, of establishing and documenting at which stage hypotheses are generated and when they are tested. This is critical for deciding which type of case to choose in nested research designs. Nonexperimental political science still does not pay much attention to documenting research designs ex ante (Humphreys, de la Sierra, and van der Windt 2013).

The above guidelines are quite different from standard rules of case choice in the mixed methods literature. The one author who suggests the deliberate choice of outliers is Seawright. He rightly, if fairly briefly, points out the value of deviant cases for investigating causal pathways between known

For the sake of exposition, the randomly generated data in the above discussion were based on a simple bivariate regression model. The basic logic of our arguments holds under a wide range of assumptions and model variations; see Online Appendix Section 3, which can be found at http://smr.sagepub.com/supplemental/, for a discussion of multivariate and discrete outcome models, models with nonlinear effects, and case studies that investigate several independent variables at once.
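To make the case categories concrete, the following minimal sketch fits a bivariate regression to synthetic data and labels each case as an onlier, a reinforcing outlier, or an attenuating outlier. The one-standard-deviation cutoff for "outlier" and the sign rule used for "reinforcing" are assumptions chosen for illustration; the article's own simulations may use different thresholds.

```python
import numpy as np

rng = np.random.default_rng(1)
n = 200

# Synthetic bivariate data with an assumed true slope of 1.
x = rng.normal(0, 1, n)
y = 1.0 * x + rng.normal(0, 1, n)

# np.polyfit with degree 1 returns (slope, intercept).
slope, intercept = np.polyfit(x, y, 1)
resid = y - (intercept + slope * x)

# Assumed cutoff: residuals within one standard deviation count as onliers.
threshold = resid.std()

# Direction in which x is expected to push y away from its mean.
direction = np.sign(slope * (x - x.mean()))

# Reinforcing: the residual points the same way as the expected effect of x,
# so the outcome is "stronger than predicted". Attenuating: the opposite.
labels = np.where(
    np.abs(resid) <= threshold,
    "onlier",
    np.where(np.sign(resid) == direction, "reinforcing", "attenuating"),
)

for name in ("onlier", "reinforcing", "attenuating"):
    print(name, int(np.sum(labels == name)))
```

Under this rule, a case with a high value on the independent variable and an outcome well above the regression line is reinforcing, as is a case with a low value and an outcome well below the line.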

Causal Process Errors and Other Case Selection Objectives

Our interpretation of causal heterogeneity also has implications for other purposes of case selection beyond the investigation of mechanisms. First, if we allow for the possibility of CPEs, using case studies for estimating general effect sizes for larger populations—a relatively unusual approach yet one advocated in recent literature (Herron and Quinn 2016)—is a highly unreliable enterprise.

When it comes to diagnostic case studies for identifying measurement error on the outcome variable, unobserved (nonconfounder) variables, and systematic causal heterogeneity, existing prescriptions to use deviant cases (Seawright 2016a) continue to make sense. Yet we need to allow for the possibility that deviance is just an outcome of unsystematic causal heterogeneity, including particularly weak or powerful versions of a general, already known causal mechanism. A single case study is not necessarily dispositive in identifying any of the above factors.

To the extent that case studies are used to test for endogeneity in the shape of reverse causality, we can use analogous rules as for the detection of conventional causal mechanisms: A reverse mechanism linking the outcome variable to the (assumed) causal variable will again on average be more visible for reinforcing outliers. But as the

Finally, if the objective is to detect omitted variables that act as confounders (i.e., are related to both the independent and dependent variable in our model), the choice of outliers is of no particular use. Confounders are not systematically stronger for outliers: As confounders affect both

A Defense of Paired Case Comparisons

So far, our discussion has looked at the investigation of individual cases, similar to the “pathway case” approach recommended by Gerring (2007b). How helpful is it to investigate more than one case for the process tracing of mechanisms, as recommended by Lieberman (2005:441)? What is the impact on the reliability of our findings under the CPE framework? It turns out that this framework provides a new and strong justification for traditional approaches to paired comparison (Slater and Ziblatt 2013; Tarrow 2010), especially “method of difference”-type setups with contrasting outcomes across cases. This is significant because the value added of such comparisons has been in doubt ever since Lieberson’s (1991) trenchant criticism of the use of Mill’s methods of comparison in social science.

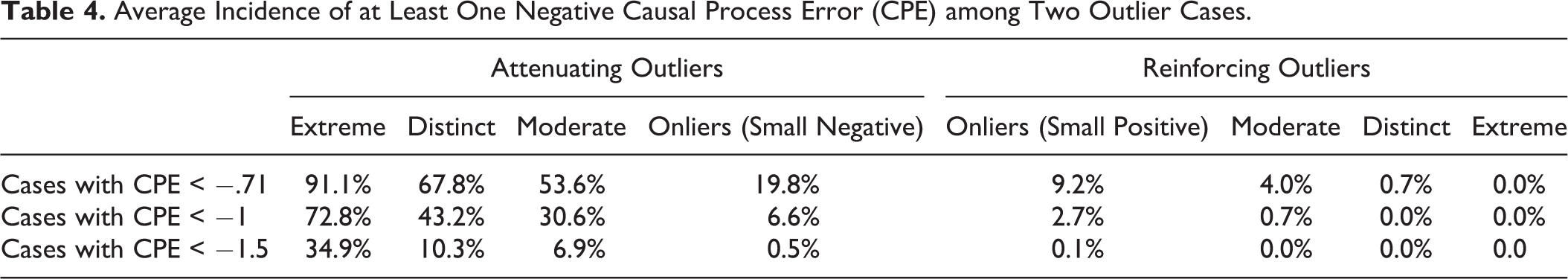

To be sure, if we investigate two cases, causal heterogeneity means that the probability of finding at least one false negative increases significantly compared to a single case study (see Table 4 compared to Tables 2 and 3; for a graphical representation of attenuating CPEs in paired comparisons, see Figure A.12 in Online Appendix Section 2, which can be found at http://smr.sagepub.com/supplemental/). The risk that in one of two countries under study education has an unusual effect on growth is higher than in a single case study.

Average Incidence of at Least One Negative Causal Process Error (CPE) among Two Outlier Cases.
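The arithmetic behind this increased risk can be sketched as follows, under the simplifying assumptions that each case has the same probability of a false negative and that the cases' errors are independent. The probability used in the example is purely illustrative and is not taken from the article's tables.

```python
def at_least_one_false_negative(p_single: float, n_cases: int = 2) -> float:
    """Probability that at least one of n independent cases yields a false negative."""
    return 1.0 - (1.0 - p_single) ** n_cases


# Illustrative value only: a 20% single-case risk.
p = 0.2
print(at_least_one_false_negative(p))      # two cases: roughly 0.36
print(at_least_one_false_negative(p, 1))   # single case: 0.2
```

With any single-case risk strictly between 0 and 1, the two-case probability exceeds the single-case one, which is why treating two case studies as separate investigations raises the chance of a mixed finding.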

If we are testing preexisting hypotheses, a mixed finding with one false negative might lead us to conclude that the process at hand is likely to apply in some cases but not others and that we are dealing with an issue of group-level causal heterogeneity—while in fact, net of case-specific, idiosyncratic errors, the causal process identified in the other case is general. If we arrive at mixed findings inductively, the (wrong) suspicion that what we have found is idiosyncratic and not generalizable will be even stronger.

The above might seem dispiriting for comparative scholars. But it applies only if the two case studies are dealt with as separate investigations. In this context, we expect their outcome scores to be implicitly compared to the population mean, net of statistical controls. In a properly executed paired comparison, however, we compare outcomes

In this context, we should look at the comparison as one integrated research design. We need to consider the probability that we will find the same or similar causal processes (or absence thereof) in two cases if they have been chosen with contrasting leverage on the independent variable of interest. Identifying cases this way extends Seawright’s (2016b) recommendation to choose extreme values on the independent variable from the choice of single cases to the choice of case pairs.

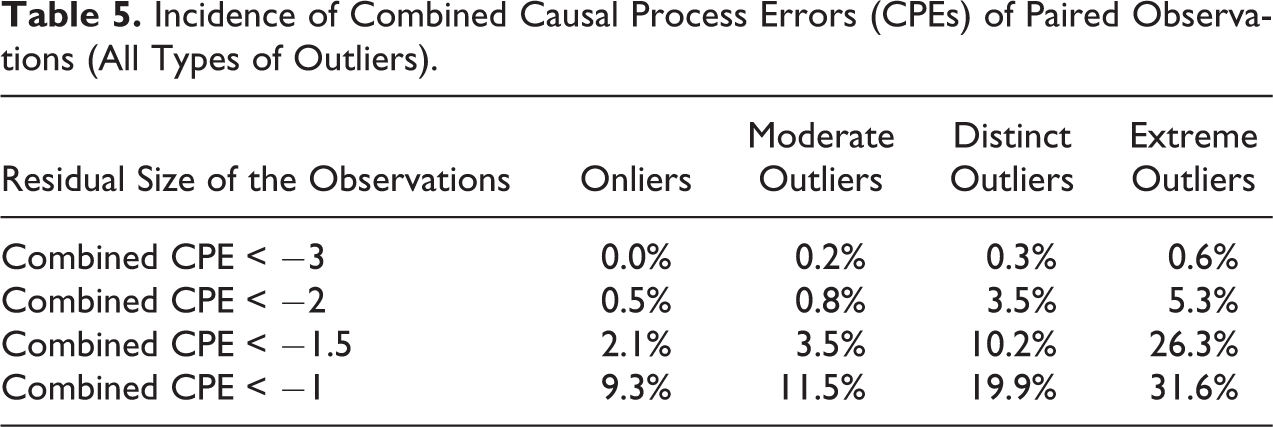

We consider pairs of cases chosen at opposite ends of the

Table 5 indicates that the combined attenuating effect of two cases’ CPEs very rarely is strong enough to obliterate the whole difference in causal effects across the two cases. The linear effects of

Incidence of Combined Causal Process Errors (CPEs) of Paired Observations (All Types of Outliers).

The risk of a false negative becomes larger if we choose case pairs in which the individual cases have larger, randomly chosen residuals. It grows also if we choose case pairs whose

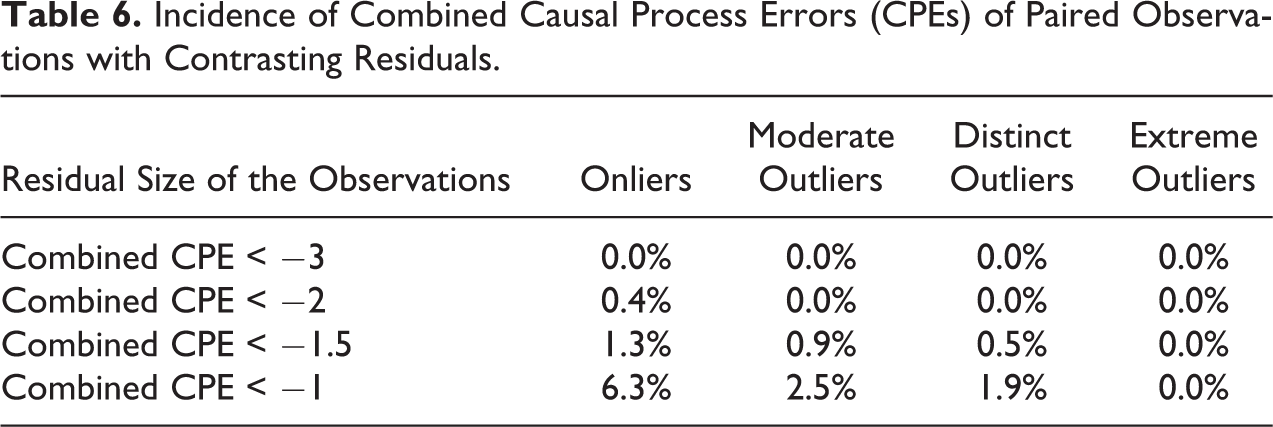

Choosing onliers is better than random choice of outliers. But what about choosing outliers strategically? Similar to our strategy of choosing reinforcing outliers in a single case study, we now choose cases whose outcomes lie

Incidence of Combined Causal Process Errors (CPEs) of Paired Observations with Contrasting Residuals.

To return to the education-growth example: If we compare a case with low educational attainment and particularly low growth outcomes with a case with high educational attainment and particularly high growth outcomes, it is unlikely that the difference in education levels has not contributed in at least some way to the difference in growth across the two cases through observable mechanisms.
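The intuition can be illustrated with a small simulation. The sketch below is not the article's simulation: it assumes that each case's residual is the sum of a causal process error attached to the independent variable and unrelated noise of equal variance, uses top and bottom deciles as "contrasting" values, and counts a combined false negative whenever the two cases' CPEs cancel the whole expected difference. All of these choices are assumptions made for illustration.

```python
import numpy as np

rng = np.random.default_rng(2)
n, beta = 2000, 0.5

x = rng.normal(0, 1, n)        # e.g. educational attainment (standardized)
cpe = rng.normal(0, 1, n)      # assumed case-specific deviation in x's contribution
noise = rng.normal(0, 1, n)    # assumed residual variation unrelated to x
y = beta * x + cpe + noise     # e.g. growth outcome

slope, intercept = np.polyfit(x, y, 1)
resid = y - (intercept + slope * x)

# Cases at opposite ends of the independent variable (assumed decile cutoffs).
low = x <= np.quantile(x, 0.1)
high = x >= np.quantile(x, 0.9)


def false_negative_share(low_mask, high_mask):
    """Share of (low, high) pairs whose combined CPEs cancel the expected difference."""
    expected_gap = beta * (x[high_mask][:, None] - x[low_mask][None, :])
    cpe_gap = cpe[high_mask][:, None] - cpe[low_mask][None, :]
    return float(np.mean(expected_gap + cpe_gap <= 0))


# Pairs chosen on x alone, regardless of residuals.
random_pairs = false_negative_share(low, high)

# Contrasting outliers: low-x cases below the line, high-x cases above it.
contrasting_pairs = false_negative_share(low & (resid < 0), high & (resid > 0))

print("false-negative share, any residuals:", random_pairs)
print("false-negative share, contrasting residuals:", contrasting_pairs)
```

Because the residual is correlated with the CPE under these assumptions, selecting on contrasting residuals tends to select cases whose CPEs widen rather than narrow the gap between the pair, which lowers the share of combined false negatives.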

The qualitative methods literature argues that investigating a contrasting case helps to understand one’s core case better (Tarrow 2010:17) and that comparisons should capture the full variation of outcomes (Slater and Ziblatt 2013). Our framework provides a formal justification for these intuitions.

Unlike in single-case nested research, in comparisons the same essential logic applies to deductive and inductive process tracing. The main difference is that the inferential payoff from comparisons is larger for inductive research. The choice of contrasting outliers not only lowers the risk of attenuated effects and makes it more likely that contrasting causal processes will be visible; to the extent that the two cases at hand show variations of the same process, this process is also much more likely to be generalizable. This means that comparisons are particularly advisable for inductive research on causal processes.

The analysis of paired case studies also helps with identifying cases for exploratory research that serve to identify new independent variables which could be added to an LNA model (“deviant” cases in Gerring’s terminology and “model-building” case studies in Lieberman’s). Both Lieberman and Gerring counsel the

The only qualification to the rule of choosing contrasting outliers obtains if cases with a low value of the

Whether we can think of a causal factor as absent when it takes low values is easier to decide in a deductive research design based on a specific theory of how that factor brings about the outcome. It can be harder in inductive research designs without hypotheses about the causal mechanism. In case of doubt, one should err on the side of caution by choosing two reinforcing outliers. Investigating two cases with similar values on the independent variable instead of just one does increase the risk of at least one false negative, but the baseline that these cases are contrasted with is the complete absence of the independent variable. If we choose two cases with an

The above discussion has important implications for single case studies too: If a causal variable is theorized as simply absent at its lower boundary, with no specific expectation of observable mechanisms, the visibility of effects at the upper range of the variable in a single case is potentially larger. This is because, as above, we are not comparing our observations to the population mean as in our above discussion of causal variables that we expect to have contrasting effects at both ends of their value range. We instead implicitly compare a mechanism at the high end of the causal variable’s range with its (theoretical) absence at the opposite end of the range. How much of an advantage this simpler setup constitutes will in practice depend on the strength of a causal process and its error structure. In any case, the same rules for choosing reinforcing outliers apply as for other single case studies; see Online Appendix Section 4, which can be found at http://smr.sagepub.com/supplemental/, for additional simulations of causal effects and CPEs that converge toward zero at one end of the causal variable’s range.

While it might appear counterintuitive to large-

Discussion: Implications for Research Practice

Imagine we build a statistical model explaining voter attitudes based on survey data. The model is then used to identify one individual respondent who is chosen for a case study on how and why wealth impacts citizens’ political self-identification because (a) she is very wealthy and (b) her score on the outcome variable of left-right placement produces a small residual in the statistical model. Further imagine that with superhuman finesse, we are able to explain the factors that shaped her political position through a detailed biographical investigation. Having accounted for all other systematic and case-specific context factors in this case, we then isolate the impact that wealth has had and the mechanisms involved. We finally conclude that thanks to the small residual, the mechanisms identified are likely to capture how wealth influences political positions in the voting population in general.

This story is absurdly heroic, yet it summarizes what nested research designs often ask us to do. We should be all the more skeptical about standard case identification procedures in such research designs if they involve more complex and more variegated units of analysis such as social groups, organizations, or states.

As long as we believe that we live in a stochastic world, any generalization from case studies—whether embedded in large-

Mario Small has argued that we can never reach statistical representativeness in small-

This article is not quite as pessimistic as Small. We cannot use small-

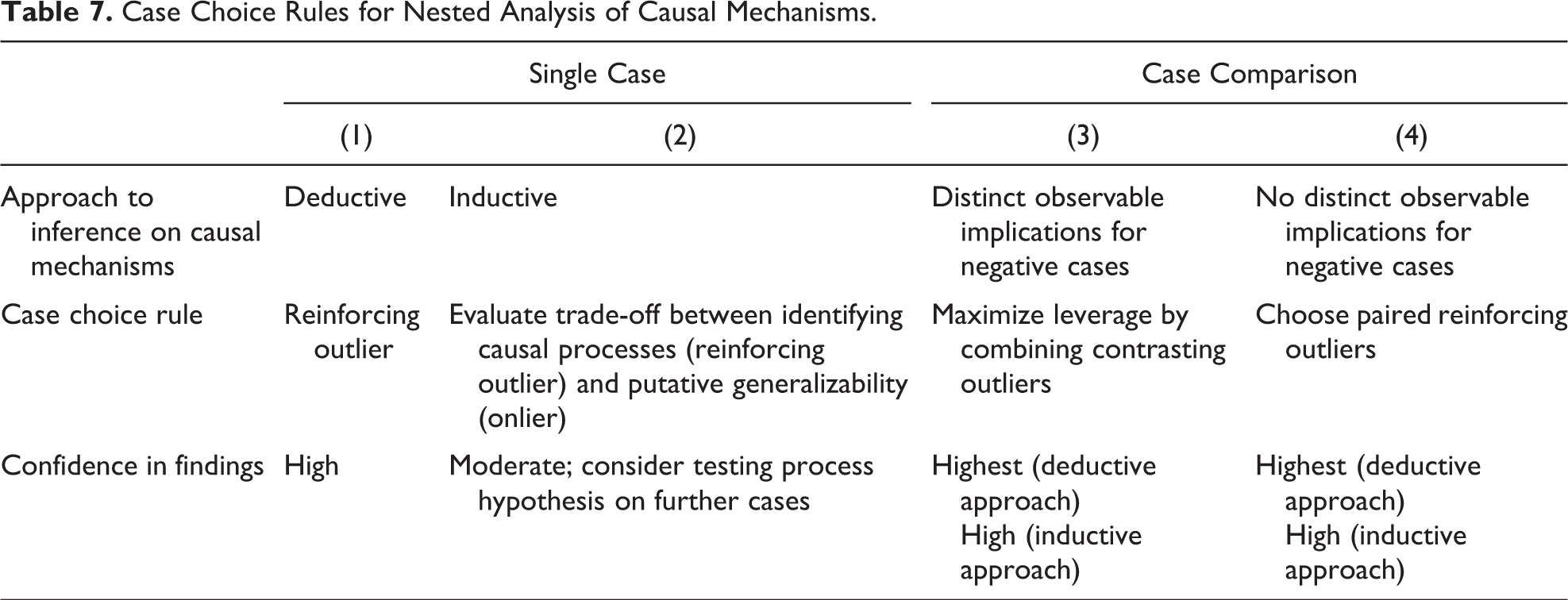

In its analysis of nested case choice, this article has shown that the size of causal effects is likely to fluctuate in both onlier and outlier cases. But estimates of effect size are not the primary concern of most case study research. Instead, in many instances, we want to establish whether a causal process exists and investigate its nature. Table 7 gives an overview of the rules for doing so that emerge from our discussion. Critically, our case selection strategy should take into account our state of knowledge: If we have existing hypotheses about the process(es) at hand, in a single case study, it is most advisable to investigate reinforcing outliers, as this makes the presence, strength, and visibility of a given causal process more likely, facilitating process tracing (column 1 in Table 7). Reinforcing outliers have a smaller risk of false negatives, while false positives in hypothesis-testing research are unlikely.

Case Choice Rules for Nested Analysis of Causal Mechanisms.

If our objective is to inductively identify causal mechanisms linking a given independent variable with an outcome (column 2), reinforcing outliers make it more likely that general causal patterns are more visible. If we want to generalize about these processes, however, reinforcing outliers also increase the chance that the identified causal processes, or aspects thereof, are idiosyncratic. In some cases, it will be theoretically or intuitively obvious whether a specific process is likely to apply to a wider population, but in others, it will not be. Choosing onlier cases for the inductive identification of causal processes makes false positives less likely but increases the risk of false negatives. As onliers can also be subject to causal process errors, inductive generalization about causal mechanisms on the basis of individual case studies is generally tenuous. Such generalizing inferences can be every bit as problematic as using case studies to generalize about causal effects, a problem that is more clearly recognized in the mixed methods literature (Gerring 2004:348; Lieberman 2005:441). Inductive research about causal processes hence faces a trade-off between false negatives and false positives. How this is resolved will depend on the purpose and context of the project at hand, but the trade-off should in any case be made explicit.

We have seen that increasing the number of cases under study can work as a partial corrective to case-specific errors. This is especially the case if we can pair cases in a joint research design that maximizes the contrast in the causal variable at hand, thereby minimizing the risk of false negatives. Ceteris paribus, this procedure is considerably more robust than single “pathway” case studies. It only works, however, if there are distinct observable implications for contrasting values on the independent variable (column 3).

If that is not the case—if one end of the contrast only implies absence of a causal process—it is more advisable to choose two reinforcing outlier cases with a strong expected impact on

This article has also used its framework to refine case selection rules for other research purposes such as identifying new causal variables, confounders, measurement error, and reverse causality. It mostly supports preexisting selection approaches in these cases, but with a note of caution about generalizability. It provides a new rule for selecting attenuating outliers for identifying mechanisms that underlie potential reverse causality.

If this article’s arguments seem complex, then this is because the use of large-

Case studies continue to be very important in their own right for producing internally valid accounts of what happens in individual cases, and they arguably remain the primary tool of hypothesis generation for large-

On the most general level, researchers using statistical methods need to take these methods’ assumptions about how causality works seriously and, to be consistent, carry them into their case studies. This means that case-level causal effects are, ex ante, unknowable and mechanisms, when detected, potentially unrepresentative. If we want to reliably generalize with small-

Supplemental Material

Supplemental Material, sj-pdf-1-smr-10.1177_0049124120986206 for Taking Causal Heterogeneity Seriously: Implications for Case Choice and Case Study-Based Generalizations by Steffen Hertog in Sociological Methods & Research

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.
