Abstract

This pilot study investigates the potential of a voice-based chatbot (EnMIA) to support speaking fluency, motivation, and engagement among undergraduate Korean language learners at a single U.S. Midwestern university. The chatbot aims to provide interactive, real-world speaking tasks accessible across multiple platforms, supporting seamless learning. Data were collected over one academic semester through pre- and post-speaking assessments and surveys. In a single-group pre-post design, speaking performance data indicated an improvement in fluency, whereas accuracy and complexity remained unchanged, suggesting that short-term practice may strengthen learners’ ability to speak more smoothly and confidently. However, without a control group, the gains cannot be attributed solely to the tool. Survey results showed high ratings of learning support, design, and usability, and interactive tasks correlated with motivation. The findings suggest that chatbot-supported interaction can potentially enhance motivation and fluency, while pointing to the need for extended practice and targeted design to affect accuracy and complexity.

The primary goal of second language (L2) programs in higher education is to foster students’ communicative competence and linguistic proficiency, enabling effective real-world interactions (Littlemore & Low, 2006; Schulz, 2006). This communicative emphasis, encompassing fluent meaning conveyance and interpretation, aligns with broader second language acquisition (SLA) goals that prioritize meaningful language use in diverse contexts alongside grammatical accuracy (Kessler & Bikowski, 2010). As globalized societies demand interculturally adaptable multilingual communicators (Jackson, 2020), instructors must provide ample authentic practice opportunities to build fluency and confidence. While the broader literature on L2 communicative competence applies globally, this pilot study is scoped to undergraduate Korean language learners in a U.S. university context using EnMIA over one semester.

Theoretical frameworks underscore interaction and production as key acquisition drivers: negotiating meaning in conversations refines output and comprehension (Loewen & Sato, 2018), while meaningful contexts strengthen fluency and internalization of structures (Shehadeh, 2022). Thus, curricula should prioritize real-world tasks like dialogues and role-plays (Ellis et al., 2020). Still, traditional L2 classroom settings often constrain opportunities for oral practice due to limited instructional time, large class sizes, and diverse learner proficiency levels (Blake, 2016; Zhao & Lai, 2023). Consequently, educators are increasingly turning to technology to create scalable, interactive environments that support communicative practice.

Educational technology advancements have transformed L2 pedagogy by offering less-intimidating environments that reduce anxiety and encourage risk-taking (Dickinson et al., 2008). Computer-assisted language learning (CALL) and mobile-assisted language learning (MALL) have introduced dynamic platforms, such as virtual reality (VR) applications (Nicolaidou et al., 2023), artificial intelligence (AI) dialogue systems (Zhai & Wibowo, 2023), and AI-generated courseware (Schroeder et al., 2022), which facilitate personalized and immersive learning experiences. These tools align with contemporary CALL frameworks, which advocate for technology that supports task-appropriate language use and feedback (Ziegler & González-Lloret, 2022). For instance, VR enables contextualized practice, AI simulates real-time conversation (Godwin-Jones, 2019; F. Li & Li, 2023), and MALL leverages mobile devices for flexible, autonomous learning (Kukulska-Hulme, 2018; F. Li & Li, 2023).

Among these technologies, voice-based AI chatbots have emerged as particularly promising for L2 speaking practice. Unlike text-based systems, voice-based chatbots support oral proficiency by enabling learners to engage in spoken dialogues, receive pronunciation feedback, and practice conversational turn-taking (Jeon, 2023). These capabilities address a critical gap in traditional L2 instruction, where opportunities for oral practice are often limited (Bibauw et al., 2022). Chatbots also align with motivational theories, as interactive tasks foster engagement and intrinsic motivation (Boo et al., 2015; Oh & Song, 2021). The effectiveness of chatbots, however, depends on their design, requiring authentic tasks, intuitive usability, and accessibility to prevent excessive cognitive overload in complex interfaces (Kalyuga & Singh, 2016; Wang et al., 2025).

This study investigates the role of a voice-based chatbot in providing L2 conversation practice within an interactive learning environment. It aims to develop a framework for designing communicative tasks that leverage technology to potentially enhance speaking proficiency. Specifically, the study addresses two objectives: (1) to explore how communicative tasks suitable for an interactive learning environment can be crafted, focusing on task types such as interactive speaking and assessments, and (2) to evaluate the impact of these tasks on students’ perceived motivation, engagement, and language proficiency. By examining the voice-based chatbot's support for learning, design principles, and usability, the study contributes to the CALL and MALL literature, addressing gaps in voice-based chatbot applications and task-specific effects (Plonsky & Ziegler, 2024). The findings aim to inform L2 educators on integrating technology to foster communicative competence, preparing students for effective L2 communication in diverse, intercultural contexts.

Recent debates in CALL/MALL (e.g., Burston et al., 2024; R. Li, 2024) also highlight challenges in attributing gains to AI tools in quasi-experimental designs (e.g., pre-post without controls), where maturation or instructional effects may confound results. Moreover, while meta-analyses show moderate effects of chatbots on speaking skills (Lyu et al., 2025), voice-based systems remain underexplored in formal settings.

Literature Review

Second language acquisition (SLA) research has increasingly focused on leveraging technology to enhance learner motivation, interaction, and usability in language education. The advent of computer-assisted language learning (CALL) and mobile-assisted language learning (MALL) has introduced innovative tools, such as conversational agents or chatbots, to support scalable, interactive practice in various language learning tasks (Bibauw et al., 2022; Jeon, 2023; Song et al., 2017).

Task-Based Language Teaching

Task-based language teaching (TBLT) is a learner-centered approach emphasizing authentic, real-world tasks to foster meaningful use in second language (L2) acquisition. Tasks, designed by instructors and often negotiated with learners, engage students in activities that prioritize communication, pragmatic processing, and non-linguistic outcomes, such as problem-solving or collaboration (F. Li & Li, 2023). TBLT has gained widespread adoption in L2 education globally, valued for its ability to integrate language skills with practical application (Ellis et al., 2020). Learning outcomes in TBLT emerge from dynamic interactions among tasks, learners, and contexts, making task design critical to effective language development (Shehadeh, 2022).

TBLT's efficacy lies in its focus on tasks that mirror real-life communication, encouraging meaning negotiation and contextual language production (González-Lloret, 2022). For instance, tasks such as role-plays or dialogues prompt learners to use language purposefully, enhancing fluency and engagement. Recent studies underscore TBLT's compatibility with technology-mediated environments, where digital tools can simulate authentic scenarios and provide immediate feedback (Ziegler & González-Lloret, 2022). Voice-based chatbots could align with TBLT by offering interactive platforms for learners to practice conversational tasks, receive pronunciation corrections, and engage in meaning-focused exchanges, addressing the need for scalable oral practice (Shehadeh, 2022).

Technology-enhanced TBLT also supports sociocultural learning outcomes, fostering multiliteracies that connect individual language use with broader social contexts (Plonsky & Ziegler, 2024). Chatbots facilitate this by providing platforms for collaborative, task-based interactions, where learners can negotiate meaning with the system or peers, enhancing both linguistic and intercultural competence. However, effective task design in digital environments requires balancing authenticity with usability, ensuring tasks are engaging yet accessible (F. Li & Li, 2023). This study leverages TBLT principles to design conversational tasks, investigating their impact on motivation, engagement, and proficiency in L2 speaking practice.

Interaction in Second Language Learning

Interaction is a cornerstone of TBLT, facilitating meaningful language use and learner engagement in second language (L2) acquisition (Robinson, 2011). TBLT emphasizes three key principles in fostering interaction. First, meaning-focused tasks are critical for promoting interaction, as they engage learners in authentic, communicative activities. Tasks such as collaborative dialogues or problem-solving scenarios encourage learners to produce language in context, enhancing fluency and comprehension (Shehadeh, 2022). For example, collaborative tasks foster negotiation of meaning, improving the quality and complexity of learners’ speech (Loewen & Sato, 2018). Instructional design should prioritize tasks that integrate authentic materials and real-world contexts to maximize meaningful interaction, supporting L2 development (González-Lloret, 2022). Second, interaction opportunities in TBLT enable active participation, often scaffolded by technology. Loewen and Sato (2018) identify two interaction types: (1) problem-solving interactions, where learners address communication breakdowns, and (2) form-focused interactions, where tasks prompt attention to linguistic accuracy alongside meaning. Technology, such as voice-based chatbots, enhances these interactions by simulating conversational partners, offering real-time feedback, and reducing speaking anxiety, which can hinder performance (Gregersen et al., 2014). Last, TBLT's learner-centered approach shifts instructors’ roles to facilitators, empowering learners to take active roles in task engagement. Learners collaborate, evaluate resources, and discuss outcomes, fostering autonomy and interaction (Shehadeh, 2022). Technology-mediated tasks support this by offering flexible, accessible platforms for collaborative practice (Plonsky & Ziegler, 2024). However, research on interactive chatbots in TBLT remains limited, particularly regarding how task design leverages mobile affordances to enhance interaction.

Despite TBLT's proven effectiveness, gaps persist in understanding technology's role in supporting interaction, especially for speaking proficiency. Many L2 learners face limited exposure to oral practice, and traditional settings often exacerbate anxiety (Gregersen et al., 2014). Richards (2006) highlights the need for innovative interventions, such as using emerging technology, to provide ample speaking opportunities.

Technology-Enabled Language Learning

Technology-enabled language learning has transformed language education by facilitating flexible, context-integrated learning experiences. It supports structured content delivery and assessment in formal settings and informal learning activities that extend beyond the classroom, promoting seamless learning across diverse contexts (Kukulska-Hulme, 2018).

Seamless learning integrates formal and informal L2 experiences, connecting classroom instruction with real-world practice through physical and digital environments (Burston, 2014; Wong & Looi, 2011). It leverages mobile devices to support synchronous and asynchronous activities, fostering learner communities and enabling continuous engagement (F. Li & Li, 2023). For instance, Nicolaidou et al. (2023) compared traditional classroom instruction, mobile app use in contextual settings (e.g., a zoo), and extended mobile use at home, finding that extended mobile access significantly improved vocabulary acquisition. This suggests mobile technologies extend learning opportunities, allowing learners to practice L2 skills in varied contexts without relying solely on classroom time (Kukulska-Hulme, 2018).

Despite their potential, the use of technology in L2 education faces challenges that impact effectiveness. Technical issues, such as connectivity problems or unintuitive interfaces, can disrupt learning, particularly for diverse learner populations (Plonsky & Ziegler, 2024). González-Lloret (2022) notes that poorly designed technology-mediated tasks may increase cognitive load, deterring engagement. Usability, defined as the extent to which a tool enables effective, efficient, and satisfactory use in specific contexts (Nielsen, 2012), is thus critical. Usability evaluations must consider instructional design, learner interactions, and accessibility to accommodate diverse needs (Fennelly-Atkinson et al., 2023; Kalyuga & Singh, 2016). For example, complex interfaces requiring technical expertise can hinder L2 learners, emphasizing the need for intuitive, user-friendly designs (F. Li & Li, 2023).

Effective technology tools require design principles that balance pedagogical goals with technological affordances. Seamless learning designs should incorporate personalized tasks, support transitions between learning activities, and align with sociocultural models of L2 acquisition, which emphasize integrative motivation and community engagement (Burston, 2014). For voice-based tools, usability principles are paramount to ensure learners can focus on speaking practice without technical barriers (Kalyuga & Singh, 2016). This study also addresses these needs by developing design principles for interactive chatbots, informed by usability frameworks, to support interactive, accessible L2 speaking tasks.

The evolution of technology-based L2 education has shifted from basic digital drills to sophisticated, AI-driven platforms that support communicative and personalized learning (Bui et al., 2025; Ziegler & González-Lloret, 2022). Recent CALL research emphasizes adaptive systems that adjust content to learners’ proficiency levels, enhancing engagement and efficacy (Godwin-Jones, 2019). Plonsky and Ziegler (2024) highlight that CALL's strength lies in its ability to provide interactive, feedback-rich environments, enabling learners to practice language skills in context. For instance, AI-driven tools can simulate conversational scenarios, offering opportunities for speaking and listening practice that align with SLA goals (Levy & Stockwell, 2013). These advancements position CALL as a robust framework for integrating chatbots, which can deliver real-time, adaptive interactions to support L2 development (Stockwell, 2013).

Chatbots have gained prominence in SLA for their ability to simulate human-like dialogue, offering scalable speaking practice (Bibauw et al., 2022). Voice-based chatbots, in particular, enable learners to develop oral proficiency by engaging in conversational exchanges and receiving pronunciation feedback (Jeon, 2023). For example, Oh and Song (2021) developed and tested a voice-based mobile application for Korean EFL learners, reporting improved speaking confidence and engagement in a university setting, which is similar to the context of the present EnMIA pilot. Fryer et al. (2019) found that chatbots increase engagement through immediate, personalized responses, supporting learners’ communicative needs. Bibauw et al.'s (2022) meta-analysis reported moderate to large effect sizes for chatbots on vocabulary and speaking skills, though motivational impacts vary. Shehadeh (2022) notes that chatbot efficacy depends on task design, with interactive, authentic tasks (e.g., role-plays) yielding stronger outcomes than repetitive exercises. Voice-based chatbots can address the challenge of providing oral practice in settings with limited access to instructors or native speakers (Blake, 2013), but their success hinges on aligning tasks with learners’ proficiency and goals.

Research Gap

Despite the promise of chatbots, several research gaps persist. First, while Bibauw et al. (2022) and Jeon (2023) demonstrate chatbots’ efficacy for speaking skills, studies on voice-based chatbots in formal classroom settings are limited. Second, the role of task type (e.g., interactive vs. static speaking) in driving motivation and outcomes remains underexplored (Stockwell, 2013). Third, usability challenges, such as learning curves and technical errors, are frequently reported but rarely addressed through iterative design (Fryer et al., 2019). Fourth, the interplay of accessibility, motivation, and usability in chatbot-assisted learning requires further investigation, particularly in diverse learner populations (Kukulska-Hulme, 2018).

This study addresses these gaps by examining the impact of the proposed app, EnMIA (Enhanced Mobile Interactive Application), on L2 learning, focusing on its support for motivation, design principles, usability, and task-specific effects in L2 classes. It draws on recent SLA, CALL/MALL, and usability research to hypothesize that EnMIA enhances engagement, motivation, and proficiency through interactive, accessible, and user-friendly features.

In summary, recent literature underscores the potential of voice-based chatbots to enhance L2 learning by providing motivating, accessible, and interactive practice opportunities. Contemporary SLA motivation theories highlight the importance of autonomy and engagement, which CALL and MALL platforms can operationalize (Boo et al., 2015; Ziegler & González-Lloret, 2022). Chatbots, particularly voice-based ones, offer scalable solutions for speaking practice, aligning with these principles (Bibauw et al., 2022; Jeon, 2023; Wang et al., 2025). However, their success depends on effective task design, usability, and accessibility (Kalyuga & Singh, 2016; Kukulska-Hulme, 2018). This study, as a pilot, builds on these insights to investigate EnMIA's role in L2 classes, contributing to the CALL and MALL literature by addressing gaps in task-specific effects and usability considerations.

This Study

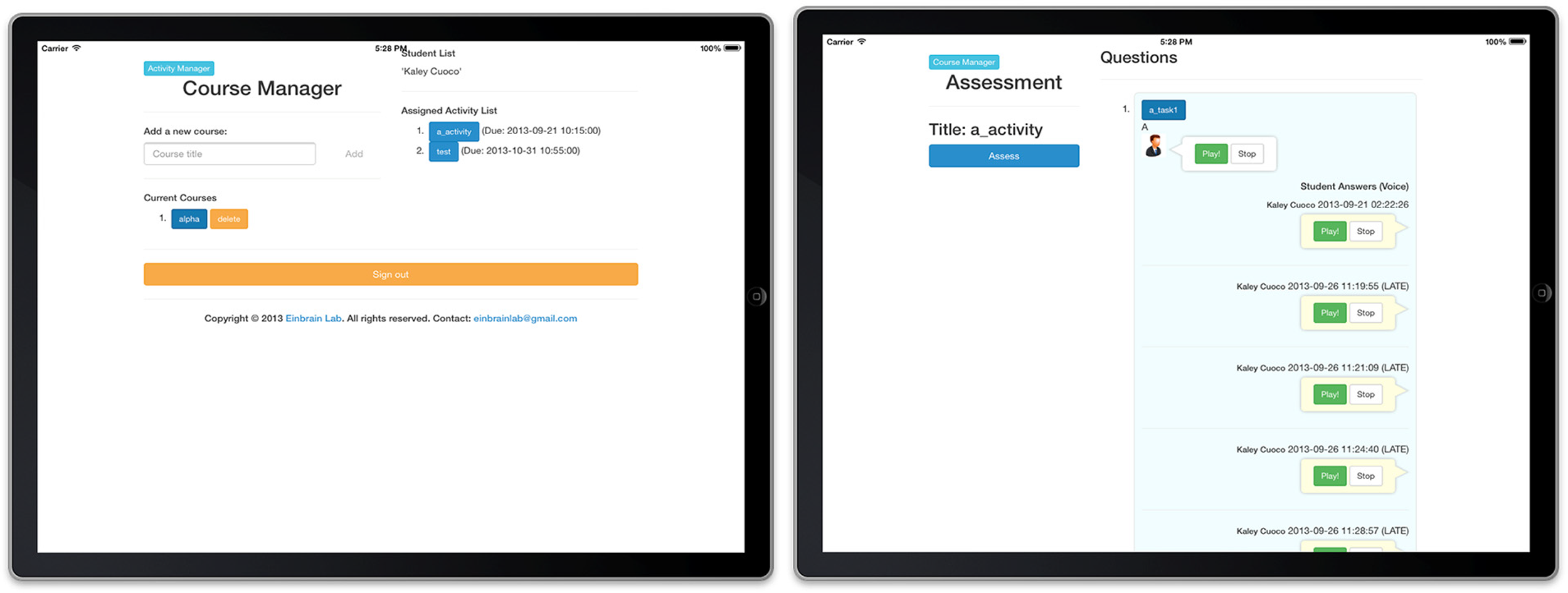

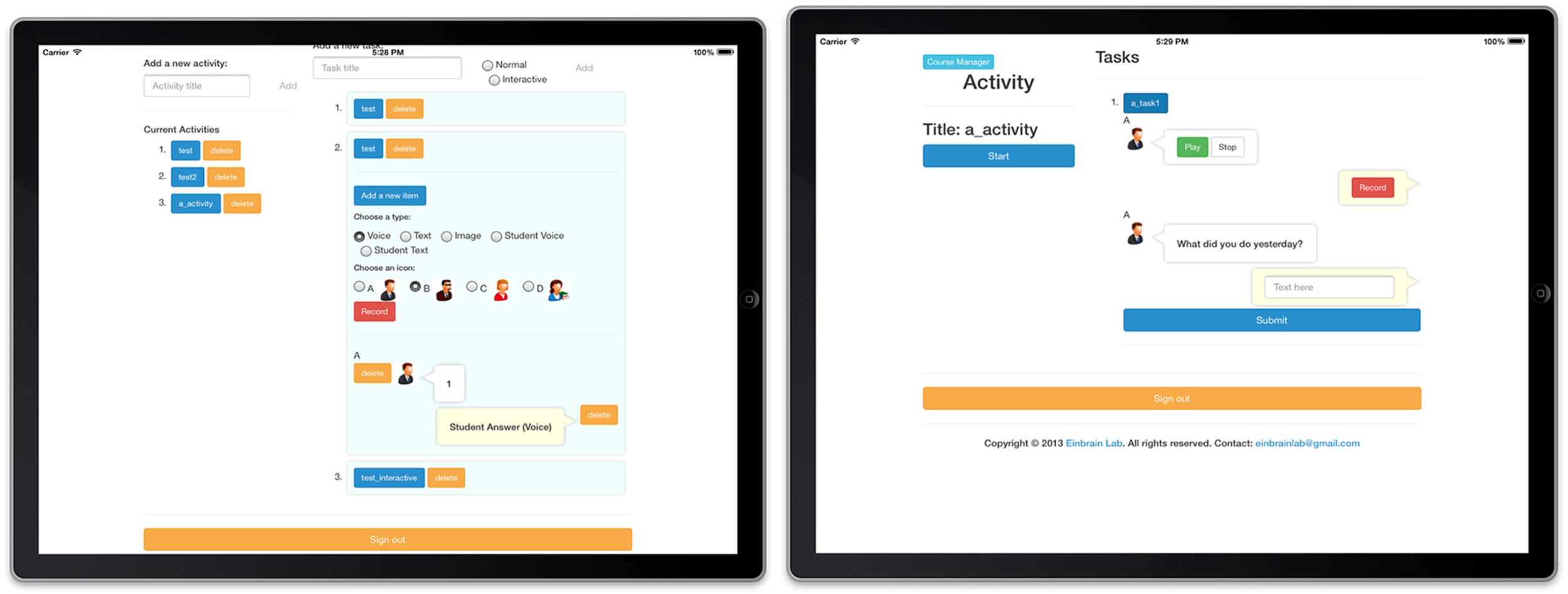

This study investigates the efficacy of EnMIA, a voice-based chatbot designed to enhance L2 speaking proficiency, motivation, and engagement in Korean language courses in the U.S. EnMIA leverages TBLT principles, mobile technology, and usability-focused design to support L2 speaking practice, particularly fluency. These design principles are proposed based on literature and iterative development but require empirical validation through comparative studies. By simulating real-world conversations and offering immediate feedback, EnMIA aims to foster seamless learning across formal and informal settings, reducing barriers such as speaking anxiety and logistical constraints. Before reporting the study, we describe the technology design principles and task design of EnMIA (see Figures 1 and 2). This pilot is limited to Korean language courses at one U.S. institution.

Figure 1. Screenshots from EnMIA: instructors’ activity management view (L) and assessment view (R).

Figure 2. Screenshots from EnMIA: students’ activity view.

Language Learning Tool Development—A Voice-Based Chatbot

The development of language learning tools like voice-based chatbots requires a robust theoretical foundation to guide effective instructional design. Theory-driven design provides structured heuristics that enhance tool functionality and learner engagement (Kalyuga & Singh, 2016). This study adopts a motivation-based framework, drawing on contemporary L2 motivation research to address engagement challenges in language courses (Boo et al., 2015). Additionally, usability principles are integrated to optimize the learner experience, ensuring the chatbot supports accessible, interactive speaking practice.

Tool Design. In this study, the primary design principle for the chatbot is to facilitate goal-directed, contextually relevant language learning activities. This principle is supported by five sub-principles: (1) fostering interactive tasks that encourage conversation and collaboration, (2) enabling real-world language use, (3) supporting learner autonomy, (4) providing immediate feedback, and (5) ensuring cross-context accessibility. Boo et al. (2015) highlight that limited opportunities for authentic practice and non-interactive tasks reduce learner motivation, issues EnMIA addresses through voice-based, mobile-accessible activities. MALL technologies enable learners to engage in diverse, real-world contexts, enhancing integrative motivation and skill development (F. Li & Li, 2023).

Usability is critical to technology-supported learning environments, ensuring intuitive interfaces that minimize technical barriers (Kukulska-Hulme, 2018). Complex or unresponsive designs can disengage learners, particularly in mobile settings where flexibility is paramount (Kalyuga & Singh, 2016). Our tool's voice-based interface supports synchronous and asynchronous practice, accommodating varied schedules and reducing logistical constraints. By aligning with motivation theories and MALL principles, our design promotes active participation and seamless learning across classroom and informal settings (F. Li & Li, 2023). This study investigates these principles, aiming to establish a framework for voice-based tools that enhance L2 speaking proficiency, particularly for learners with limited access to model speakers or practice opportunities.

Task Design. Motivation is a pivotal factor in SLA, influencing learners’ persistence and engagement. Boo et al. (2015) provide a comprehensive review of L2 motivation, emphasizing the role of dynamic, context-sensitive motivational strategies in sustaining learner effort. Their framework suggests that technology-mediated tasks, which offer autonomy and relevance, enhance intrinsic motivation, aligning with the needs of diverse L2 learners. Ryan and Deci's (2016) self-determination theory further posits that tasks supporting autonomy, competence, and relatedness foster intrinsic motivation. For example, technology that allows learners to choose tasks or receive tailored feedback can enhance their sense of competence (Fryer et al., 2019). These theories underscore the potential of chatbots to deliver engaging, motivating tasks, such as interactive dialogues, that sustain learner investment in L2 practice (Richards, 2006). However, poorly designed tasks, such as those lacking clear goals, can diminish motivation, necessitating careful design considerations (Dörnyei, 2007).

Research Questions

This study aims to contribute to language teaching and learning literature by providing empirical insights into technology-mediated speaking practice and informing the development of accessible language learning tools. The goal of this research is to evaluate EnMIA's impact on language learners’ speaking outcomes and to establish design principles for voice-based language learning tools. Conducted in a university setting, the study examines how EnMIA's interactive tasks influence learners’ communicative abilities and engagement, particularly for those with limited access to model speakers. The research is guided by the following questions:

Method

This study evaluated the EnMIA voice-based chatbot's impact on second language (L2) speaking proficiency, motivation, engagement, and usability in two undergraduate Korean language courses. The study addressed an instructor's concerns about students’ low participation and limited opportunities for oral practice in non-native environments, aiming to enhance speaking skills through interactive, technology-mediated tasks.

Participants

Participants included one primary instructor, two associate instructors, and 38 undergraduate students enrolled in four sections of two Korean language courses (two sections per course) at a Midwestern U.S. university. This single-group pre-post design, while practical for pilot evaluation, limits causal attribution. The courses, representing different proficiency levels, were selected based on the instructor's willingness to participate and the research team's availability, constituting a convenience sample at a single institution. All students had access to mobile devices, enabling EnMIA use. Students were informed about the study, and ethical consent was obtained following institutional review board approval. One student's survey response was excluded due to incomplete data, resulting in 37 valid responses for analysis.

Procedures

The study was guided by five design principles derived from L2 motivation frameworks and technology-assisted language learning principles, emphasizing interactive, contextually relevant tasks. EnMIA's development integrated rapid prototyping, aligning system design with these principles through iterative collaboration among the software development team, language instructors, and educational technology experts. The system architecture utilized cross-platform software development tools, audio processing modules, user behavior controllers, and server-side data processing engines. EnMIA was deployed as a web application for desktop/laptop access and converted to native mobile apps (iOS and Android), ensuring cross-platform accessibility.

Pilot Testing and Revision

Initial usability testing focused on suitability, learnability, and error rates, conducted early to refine the design. Walkthrough testing with an undergraduate student and feedback from instructors led to iterative refinements. EnMIA's final specifications included: (1) course registration, allowing instructors to create courses and students to enroll, (2) activity management, enabling instructors to assign speaking tasks and students to participate, and (3) review and assessment, facilitating instructor feedback on student performance.

Data Collection

Data were collected over one semester via pre- and post-speaking assessments and intervention surveys.

Learners completed a pre- and post-test speaking task. Each task consisted of a three-minute role-play conversation with prompts designed to elicit spontaneous speech. Performances were audio-recorded and rated by two trained raters on three dimensions: Fluency, Accuracy, and Complexity, each scored on a 1–5 scale. A composite score was also computed as the mean of the three subscales. Inter-rater reliability was strong (Cohen's κ = .81). Scores from both raters were averaged for analysis.
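The scoring steps described above can be illustrated with a minimal sketch. It assumes an unweighted Cohen's κ (the source does not state whether a weighted variant was used for the ordinal 1–5 scale), and the ratings and function names below are hypothetical, not the study's data or code.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Unweighted Cohen's kappa for two raters scoring the same recordings."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("Both raters must score the same non-empty set of items.")
    n = len(rater_a)
    # Observed agreement: share of recordings given identical scores.
    p_observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement, from each rater's marginal score distribution.
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    p_expected = sum(counts_a[k] * counts_b[k] for k in counts_a) / (n * n)
    return (p_observed - p_expected) / (1 - p_expected)


def composite_score(fluency, accuracy, complexity):
    """Composite score: mean of the three subscale scores."""
    return (fluency + accuracy + complexity) / 3


def averaged_rating(score_a, score_b):
    """Average of the two raters' scores, as used for analysis."""
    return (score_a + score_b) / 2


# Hypothetical fluency ratings (1-5) from two raters for eight recordings.
ratings_a = [3, 4, 2, 5, 3, 4, 1, 2]
ratings_b = [3, 4, 2, 4, 3, 4, 1, 3]
kappa = cohens_kappa(ratings_a, ratings_b)
print(f"kappa = {kappa:.2f}")
```

For ordinal rubrics, a weighted κ that penalizes near-misses less than distant disagreements is a common alternative; whether it would change the reported value cannot be determined from the text.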

Surveys, administered online during the final class, used a 5-point Likert scale (1 = Strongly Disagree, 5 = Strongly Agree) to evaluate (1) Learning Support, (2) Tool Design Principles, (3) Usability, (4) Motivation, and (5) Perceived Learning Improvement. All participants consented to data collection.

Data Analysis

Paired-samples t-tests were used to compare pre- and post-test performance scores for the single participant group. Effect sizes were reported using Cohen's d. Survey data, comprising Likert-scale ratings (1–5) on learning support, design principles, and usability, were analyzed using descriptive statistics to summarize learner perceptions. Independent samples t-tests compared mean ratings between courses to assess EnMIA's impact on motivation and engagement, with a significance level of α = .05. Effect sizes (Cohen's d) were calculated to quantify differences, ensuring robust interpretation of EnMIA's effectiveness. In addition, correlations were calculated between the number of tasks per type (weighted by participant counts) and mean survey ratings to explore task influence.
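As an illustration of the pre-post comparison, the following sketch computes the paired-samples t statistic and Cohen's d, with d defined as the mean difference divided by the standard deviation of the differences. The scores and the function name are hypothetical and serve only to show the arithmetic.

```python
import math
import statistics


def paired_t_and_d(pre, post):
    """Return (t, df, d) for a paired-samples comparison of post vs. pre."""
    if len(pre) != len(post) or len(pre) < 2:
        raise ValueError("Need at least two matched pre/post scores.")
    diffs = [after - before for before, after in zip(pre, post)]
    n = len(diffs)
    mean_diff = statistics.mean(diffs)
    sd_diff = statistics.stdev(diffs)  # sample SD (n - 1 denominator)
    t = mean_diff / (sd_diff / math.sqrt(n))
    d = mean_diff / sd_diff  # Cohen's d for paired data
    return t, n - 1, d


# Hypothetical rater-averaged fluency scores for six learners.
pre_scores = [2.0, 2.5, 3.0, 3.5, 2.5, 3.0]
post_scores = [2.5, 3.5, 3.0, 4.0, 3.5, 3.0]
t_stat, df, d = paired_t_and_d(pre_scores, post_scores)
print(f"t({df}) = {t_stat:.2f}, d = {d:.2f}")
```

Under this definition the two statistics are linked by t = d × √n, so either can be recovered from the other when n is known. Obtaining the p-value additionally requires the t distribution, for which a statistics package (for example, `scipy.stats.ttest_rel`) would typically be used.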

Results

Participation

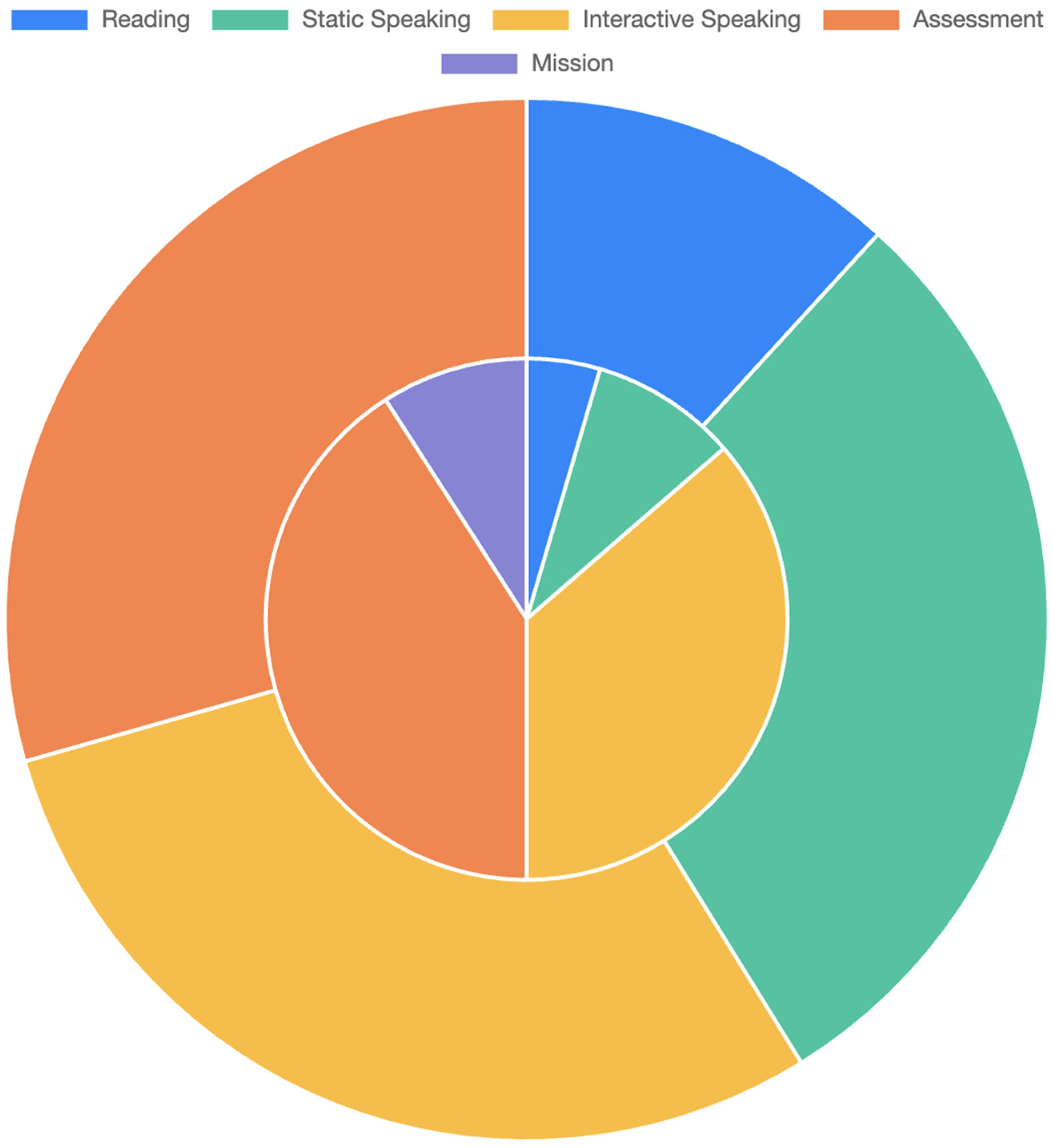

During implementation, the 23 tasks that the instructor created and assigned to the students using EnMIA fell into five categories: (1) Reading, which provides opportunities to read sentences, conversations, or paragraphs; (2) Static Speaking, which provides opportunities to use the language in a situated context without interactive activities; (3) Interactive Speaking, which provides interactive opportunities to use the language in a situated context; (4) Mission, which provides opportunities to use the language in a situated context; and (5) Assessment, which assesses and evaluates students’ knowledge, skills, and performance in the language.

The total number of activities was five for Course 1 and seven for Course 2. The total number of tasks was 13 for Course 1 and 19 for Course 2. There were 3 graded activities (9 tasks) in Course 1 and 4 (14 tasks) in Course 2, and 2 non-graded activities (4 tasks) in Course 1 and 3 (5 tasks) in Course 2. Participation was high on graded activities (100%) and intermediate on non-graded activities (90.0% for Course 1 and 71.8% for Course 2).

The students participated in the activities described above, and the instructor assessed how much they learned through the tool. The graded tasks went as expected, with a 100% participation rate. The instructor judged that most of the instructional goals had been accomplished, with the exception of the Mission task type, which had a 23.0% participation rate. There was one Mission activity with 2 tasks (e.g., “Find a native speaker of the language and have a chat with them for 2 min about their traditional food.”).

Speaking Performance

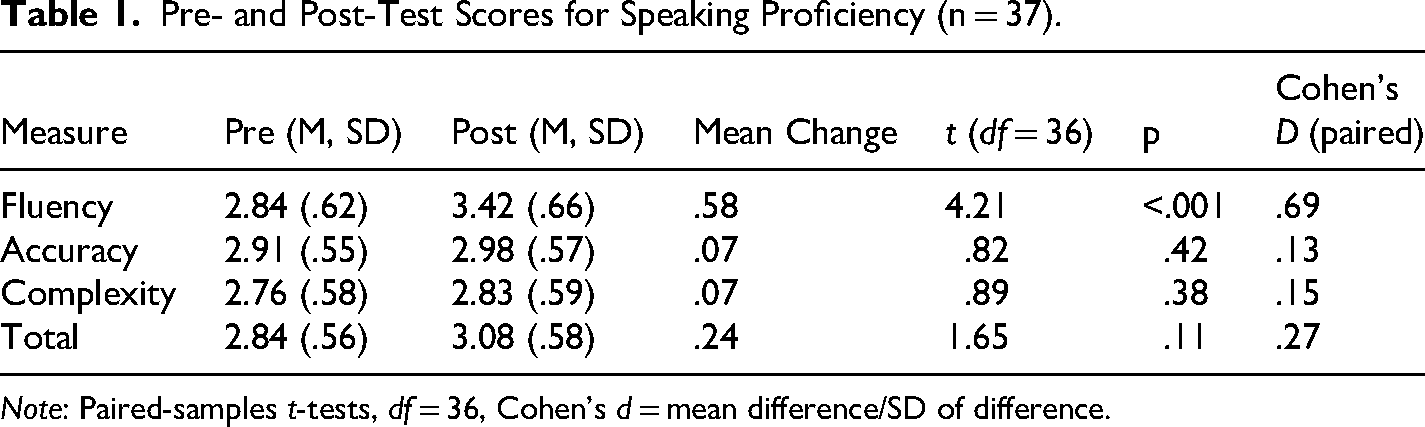

Participants in the EnMIA condition (n = 37) completed a 3-min oral task at the beginning (pre) and end (post) of the semester. Each recording was rated by two independent raters on Fluency, Accuracy, and Complexity (1–5 scale). Inter-rater agreement was high (Cohen's κ = .81). A composite score (mean of the three) was also computed. Paired-samples t-tests examined changes from pre to post. Results are summarized in Table 1.

Table 1. Pre- and Post-Test Scores for Speaking Proficiency (n = 37).

Note: Paired-samples t-tests, df = 36, Cohen's d = mean difference/SD of difference.

Students showed a statistically significant gain in fluency (p < .001, medium effect size, d = .69), but not in accuracy, complexity, or composite scores. This suggests that short-term practice with EnMIA may have primarily enhanced learners’ ability to speak more smoothly and confidently, while structural accuracy and syntactic complexity did not change significantly over a single semester.

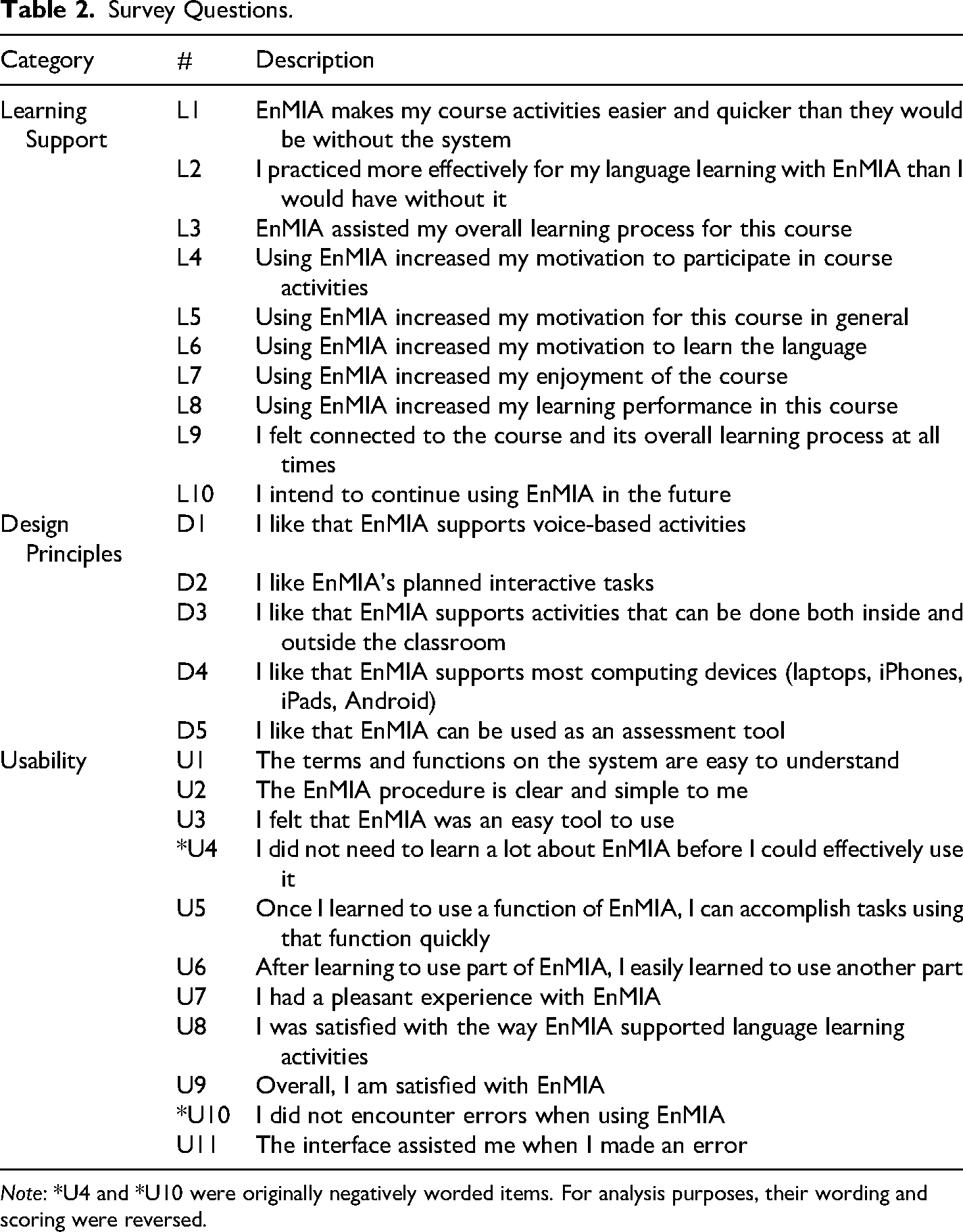

Students’ Perceived Levels

The evaluation questions for the perceived aspects (i.e., learning support, design principles, and usability) are shown in Table 2.

Table 2. Survey Questions.

Note: *U4 and *U10 were originally negatively worded items. For analysis purposes, their wording and scoring were reversed.
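Reverse scoring of a negatively worded Likert item can be sketched as follows. This is a generic illustration of the standard transformation on a 1–5 scale; the function name is hypothetical and the study's actual recoding procedure is not described beyond the note above.

```python
def reverse_score(score, scale_min=1, scale_max=5):
    """Reverse a Likert response so that higher values are always favorable."""
    if not scale_min <= score <= scale_max:
        raise ValueError("Score lies outside the scale range.")
    return scale_min + scale_max - score


# On a 5-point scale: 1 <-> 5, 2 <-> 4, and 3 stays 3.
raw_responses = [1, 2, 3, 4, 5]
recoded = [reverse_score(r) for r in raw_responses]
print(recoded)  # [5, 4, 3, 2, 1]
```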

Descriptive Statistics

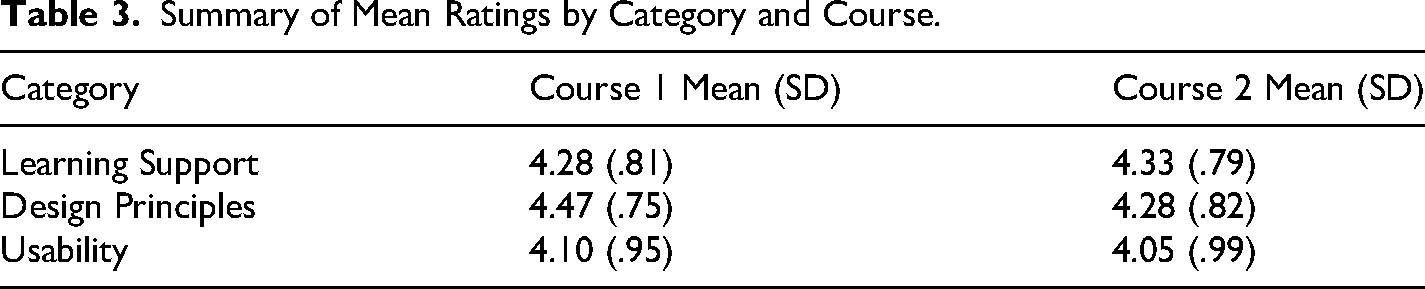

As shown in Table 3, mean ratings and standard deviations (SD) were calculated for each survey category and question, aggregated by course. The overall mean ratings across categories were: (1) Learning Support: Course 1: 4.28 (SD = .81) and Course 2: 4.33 (SD = .79), (2) Design Principles: Course 1: 4.47 (SD = .75) and Course 2: 4.28 (SD = .82), and (3) Usability: Course 1: 4.10 (SD = .95) and Course 2: 4.05 (SD = .99).

Table 3. Summary of Mean Ratings by Category and Course.

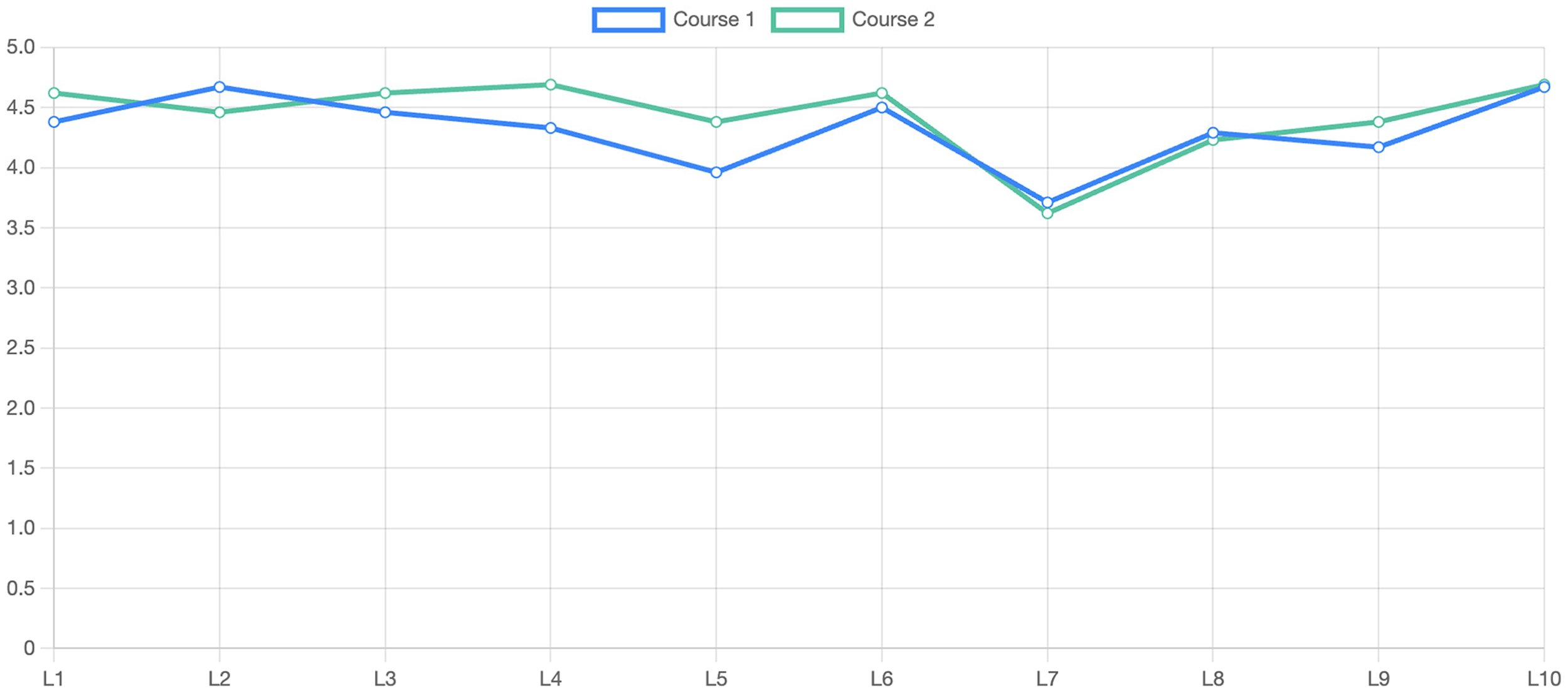

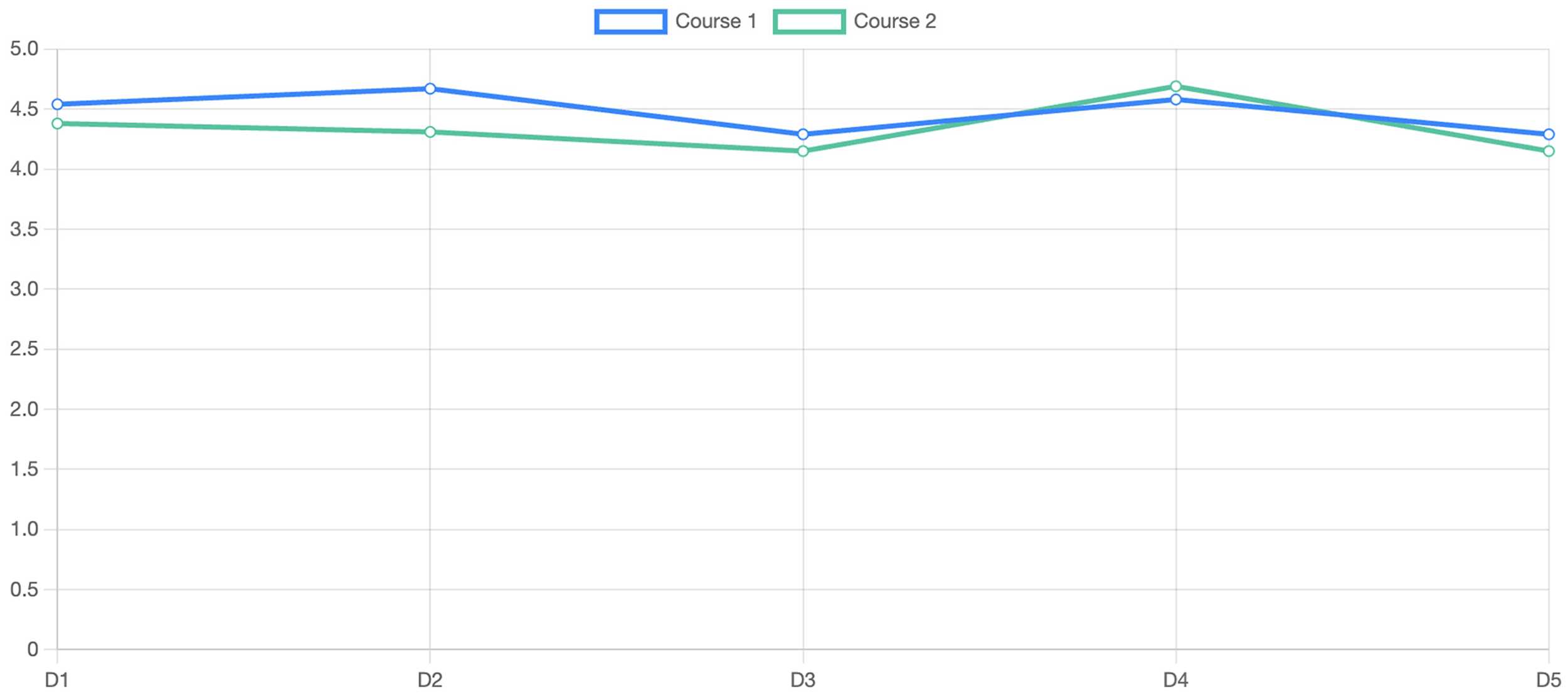

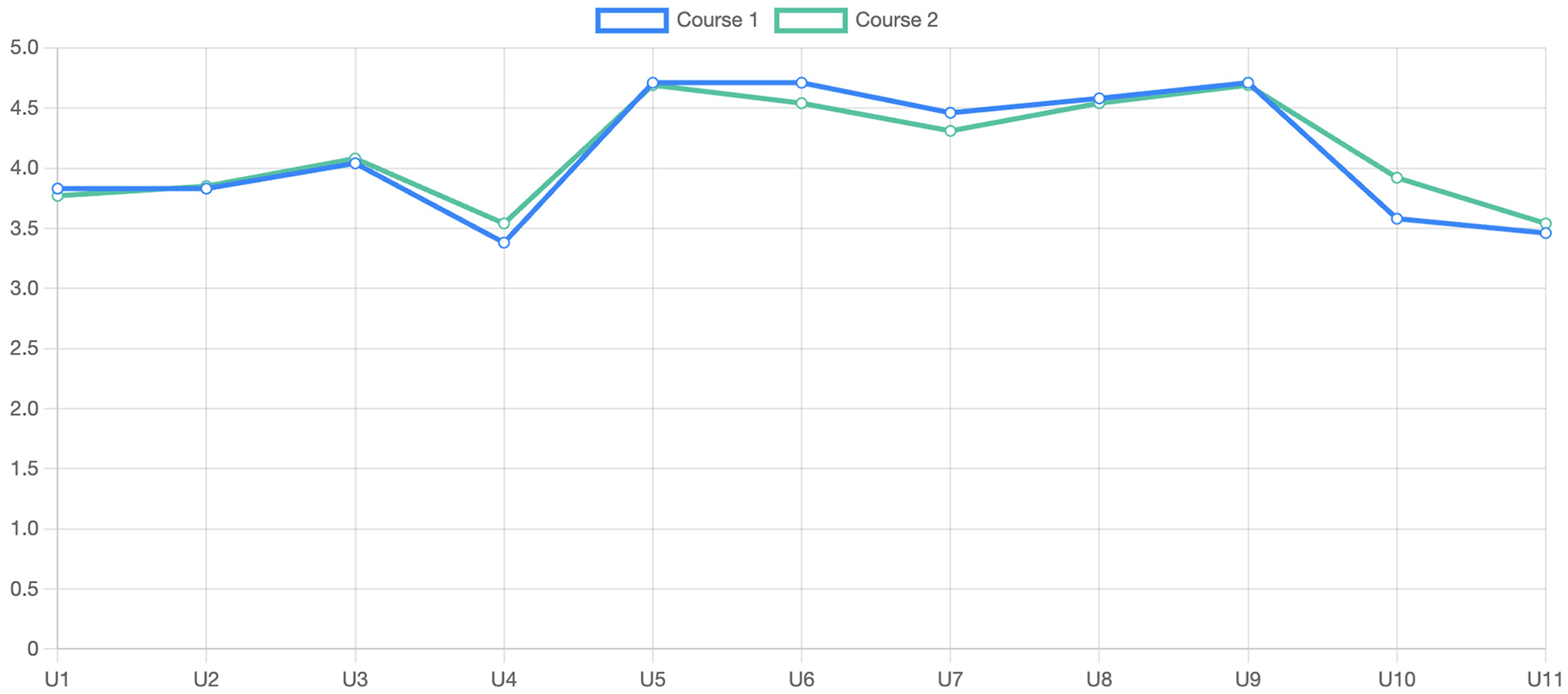

The following are some notable descriptive statistics, which can be seen in Figures 3–5.

Learning Support: Both courses rated EnMIA highly, with means above 4.0 for most questions. Course 1's highest-rated item was L2 (“I practiced more effectively for my language learning with EnMIA than I would have without it,” M = 4.67, SD = .56), reflecting enhanced practice quality. Course 2's highest was L4 (“Using EnMIA increased my motivation to participate in course activities,” M = 4.69, SD = .48), suggesting strong engagement. The lowest-rated item was L7 (“Using EnMIA increased my enjoyment of the course”) in both courses (Course 1: M = 3.71, SD = .95; Course 2: M = 3.62, SD = 1.12), indicating moderate enjoyment relative to other benefits.

Design Principles: Students valued EnMIA's design, particularly D4 (“I like that EnMIA supports most computing devices”), with Course 1 at 4.58 (SD = .58) and Course 2 at 4.69 (SD = .48). Course 1 rated D1 (“I like that EnMIA supports voice-based activities”) higher (M = 4.54, SD = .66) than Course 2 (M = 4.38, SD = .77), likely due to more Static Speaking tasks in Course 1. The lowest-rated item was D5 (“I like that EnMIA can be used as an assessment tool”) in Course 2 (M = 4.15, SD = .90).

Usability: Usability ratings were positive but more variable. U8 (“I was satisfied with the way EnMIA supported language learning activities”) scored highest (Course 1: M = 4.58, SD = .58; Course 2: M = 4.54, SD = .66). U4 (“I did not need to learn a lot about EnMIA before I could effectively use it”) was the lowest (Course 1: M = 3.38, SD = 1.24; Course 2: M = 3.54, SD = 1.13), indicating a perceived learning curve. U10 (“I did not encounter errors when using EnMIA”) also scored lower (Course 1: M = 3.58, SD = 1.44; Course 2: M = 3.92, SD = 1.32), suggesting occasional errors.

Figure 3. Learning support ratings by question.

Figure 4. Design principles ratings by question.

Figure 5. Usability ratings by question.

Comparisons between Courses

Independent samples t-tests were conducted to compare ratings between courses for each question, using a Bonferroni-corrected alpha of .002 (.05/26) to account for multiple comparisons. No significant differences were found (all p > .002), so there was no evidence that perceptions differed between courses. Three non-significant trends are nonetheless worth noting.

First, U4 (“I did not need to learn a lot about EnMIA before I could effectively use it”): Course 1 (M = 3.38, SD = 1.24) vs. Course 2 (M = 3.54, SD = 1.13), t(35) = −.39, p = .70. Course 1 students perceived a slightly steeper learning curve, possibly due to diverse task types.

Second, L2 (“I practiced more effectively for my language learning with EnMIA than I would have without it”): Course 1 (M = 4.67, SD = .56) vs. Course 2 (M = 4.46, SD = .52), t(35) = 1.12, p = .27, suggesting Course 1 students felt EnMIA enhanced practice more.

Last, U10 (“I did not encounter errors when using EnMIA”): Course 1 (M = 3.58, SD = 1.44) vs. Course 2 (M = 3.92, SD = 1.32), t(35) = −.71, p = .48, indicating Course 1 students reported more errors.
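The three comparisons above can be re-derived from the reported means and standard deviations. The sketch below is a minimal illustration, not the authors' analysis script: it assumes a pooled-variance (Student's) t-test and group sizes of 24 (Course 1) and 13 (Course 2), which are inferred from the reported df = 35 and the participant counts given for each course rather than stated alongside the tests. The function name `pooled_t` is hypothetical.

```python
import math


def pooled_t(m1, sd1, n1, m2, sd2, n2):
    """Student's independent-samples t statistic from summary statistics."""
    df = n1 + n2 - 2
    # Pooled variance weights each group's variance by its degrees of freedom.
    pooled_var = ((n1 - 1) * sd1 ** 2 + (n2 - 1) * sd2 ** 2) / df
    se = math.sqrt(pooled_var * (1 / n1 + 1 / n2))
    return (m1 - m2) / se, df


# ASSUMPTION: group sizes are inferred from df = 35 (24 + 13 - 2), not reported
# directly with the t-tests.
N_COURSE_1, N_COURSE_2 = 24, 13

# (Course 1 mean, Course 1 SD, Course 2 mean, Course 2 SD) as reported above.
items = {
    "U4": (3.38, 1.24, 3.54, 1.13),
    "L2": (4.67, 0.56, 4.46, 0.52),
    "U10": (3.58, 1.44, 3.92, 1.32),
}

# Bonferroni correction: family-wise alpha divided by the 26 survey items.
alpha = 0.05 / 26

results = {}
for name, (m1, sd1, m2, sd2) in items.items():
    t, df = pooled_t(m1, sd1, N_COURSE_1, m2, sd2, N_COURSE_2)
    results[name] = (round(t, 2), df)
    print(f"{name}: t({df}) = {t:.2f}")

print(f"Bonferroni-corrected alpha = {alpha:.4f}")
```

Under these assumed group sizes, the recomputed statistics can be checked against the values reported in the text. Obtaining p-values would additionally require the t distribution, for example from a statistics library.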

Task Types

Correlation

Pearson correlations were calculated between the number of tasks per type (weighted by participant counts) and mean survey ratings to explore how task type related to student perceptions. The key findings follow.

Interactive Speaking: In Course 1 (5 tasks, 23–25 participants), Interactive Speaking correlated strongly with L4 (“Using EnMIA increased my motivation to participate in course activities,” r = .62, p < .05) and U8 (“I was satisfied with the way EnMIA supported language learning activities,” r = .58, p < .05). In Course 2 (8 tasks, 13 participants), the correlation with L4 (“Using EnMIA increased my motivation to participate in course activities”) was stronger (r = .71, p < .01), suggesting a potential association between Interactive Speaking tasks and higher motivation ratings, though based on small subsamples and requiring caution.

Assessment: Course 1 (5 tasks) and Course 2 (9 tasks) showed a positive correlation with D5 (“I like that EnMIA can be used as an assessment tool,” r = .65, p < .05), indicating students valued EnMIA's assessment capabilities in courses with more assessment tasks.

Static Speaking and Reading: No significant correlations were found, likely due to fewer tasks (Course 1: 5 Static Speaking, 2 Reading; Course 2: 2 Static Speaking, 1 Reading).

Mission: In Course 2 (2 tasks, 3 participants), Mission tasks negatively correlated with U10 (“I did not encounter errors when using EnMIA,” r = −.55, p < .05), suggesting potential errors in less-structured tasks.
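Because these correlations rest on small subsamples, it can help to see how the significance of a Pearson r depends on the number of observations. The sketch below uses the standard conversion t = r·sqrt(n − 2) / sqrt(1 − r²) with df = n − 2. It is an illustration only: the text does not state the unit of analysis or the n behind each correlation, so the value n = 13 (one observation per Course 2 participant) is an assumption, and the function name `t_from_r` is hypothetical.

```python
import math


def t_from_r(r, n):
    """t statistic (df = n - 2) for testing a Pearson correlation against zero."""
    if n < 3:
        raise ValueError("at least 3 observations are needed")
    return r * math.sqrt(n - 2) / math.sqrt(1 - r ** 2)


# ASSUMPTION: n = 13 treats each Course 2 participant as one observation;
# the source does not report the n used for this correlation.
r_l4_course2 = 0.71
n_assumed = 13

t_value = t_from_r(r_l4_course2, n_assumed)
print(f"r = {r_l4_course2}, n = {n_assumed}: t({n_assumed - 2}) = {t_value:.2f}")
```

The same r yields a smaller t statistic as n shrinks, which is one reason correlations from very small groups (such as the three Mission-task participants) call for caution.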

Usability

An unexpected finding was the low rating for U4 (“I did not need to learn a lot about EnMIA before I could effectively use it”) in both courses (Course 1: M = 3.38; Course 2: M = 3.54), indicating a moderate learning curve. The rating was slightly lower in Course 1, although the difference was not statistically significant; the gap may reflect that course's diverse task types (i.e., Reading, Static Speaking, Interactive Speaking, Assessment), shown in Figure 6, which may have required more effort to master EnMIA's features. This contrasts with high ratings for U3 (“I felt that EnMIA was an easy tool to use,” Course 1: M = 4.04; Course 2: M = 4.08), suggesting that while EnMIA was user-friendly overall, initial learning posed a challenge, perhaps more so for Course 1 students.

Figure 6. Task type distribution by course.

Motivation

Course 2 students reported slightly higher motivation (L4, “Using EnMIA increased my motivation to participate in course activities,” M = 4.69) and connection to the course (L9, “I felt connected to the course and its overall learning process at all times,” M = 4.38), possibly because of the larger number of Interactive Speaking tasks (8 vs. 5 in Course 1), which correlated strongly with motivation ratings in that course (r = .71, p < .01).

Design Principles

Design principles were rated highly, particularly device compatibility (D4, “I like that EnMIA supports most computing devices”), though Course 1 students valued voice-based activities (D1, “I like that EnMIA supports voice-based activities”) more, possibly due to more Static Speaking tasks. In addition, the correlation between Assessment tasks and D5 (“I like that EnMIA can be used as an assessment tool,” r = .65, p < .05) underscores EnMIA's value as an assessment tool. The task distribution (see Figure 6) highlights Course 2's emphasis on Interactive Speaking and Assessment.

Discussion

The present study highlights one way that a voice-based activity tool could be employed to support second language (L2) teaching and learning. Learners in L2 courses juggle a variety of commitments and responsibilities, such as acquiring and practicing language content and skills, developing fluency, and, most importantly, passing their courses. Prior L2 research has sought to understand these demands and to provide theoretical support for reducing learners’ burden. Against this background, a voice-based language learning activity tool was developed and implemented in this study to strengthen learners’ motivation. The tool aims to encourage active participation in language learning activities both in and outside the classroom. The study focused on how such a tool can be harnessed to provide theory-based support to L2 learners; a second purpose was to produce new knowledge on both design methods and theory. The findings provide preliminary evidence that EnMIA shows promise as a supplementary tool for L2 teaching and learning, as reflected in the mean ratings across learning support, design principles, and usability. These results align with prior research on conversational agents in language education (e.g., Bibauw et al., 2022; Jeon, 2023). The results of this study have the following implications.

First, the performance findings refine the perception data. While learners reported feeling motivated and supported, measurable gains were observed only in fluency. This pattern suggests that EnMIA's voice-based interactive tasks potentially promote spontaneous production and conversational ease, consistent with interactionist SLA theories emphasizing practice in real-time speech (Long, 1983). However, accuracy and complexity, which are often linked to longer-term instruction and feedback cycles, may require extended use or more explicit form-focused tasks to improve. Thus, EnMIA's immediate value may lie in boosting fluency and lowering barriers to oral participation, with accuracy and complexity gains potentially emerging in longitudinal applications. Still, these fluency-focused findings also highlight debates in educational technology: pre-post designs often overestimate intervention effects due to confounding factors (e.g., semester-long instruction). Recent studies on voice-AI chatbots emphasize similar fluency gains but stress the need for controls to isolate tool impacts (e.g., Lyu et al., 2025).

Second, EnMIA's high ratings for items of learning effectiveness and motivation reflect its possible effectiveness in fostering skill development and engagement, aligning with second language acquisition (SLA) principles articulated by Krashen's (1982) input hypothesis and Long's (1981, 1983) interaction hypothesis. These theories emphasize comprehensible input and meaningful output, which EnMIA facilitates through its voice-based, interactive tasks. This study extends these frameworks by showing how technology-mediated interaction can replicate communicative dynamics traditionally found in face-to-face settings.

Third, the significant correlation between interactive speaking tasks and perceived motivation highlights the role of task design in sustaining engagement. The course with more interactive speaking tasks reported higher motivation. This aligns with Dörnyei's (2001) framework, which emphasizes authentic, interactive tasks for intrinsic motivation. Interactive speaking tasks, which involve real-time dialogues, are consistent with VanPatten's (2017) focus on meaningful communication for proficiency. The higher motivation in Course 2 may also stem from its graded tasks, which introduce performance incentives as described in Ryan and Deci's (2016) self-determination theory. In addition, the correlation with satisfaction with EnMIA's support (U8) suggests that interactive speaking tasks may boost both motivation and perceived efficacy, as noted by Stockwell (2013). Still, correlations with task types are exploratory and limited by uneven participation, and self-reports may reflect novelty effects.

Fourth, the high ratings for design principles, particularly for support of most computing devices (D4), underscore the role of accessibility; as Kukulska-Hulme (2018) notes, device compatibility reduces barriers in mobile learning environments. In addition, the higher rating for voice-based activities (D1) in Course 1 may reflect that course's larger number of static speaking tasks, aligning with Chapelle's (1998) task-specific computer-supported language learning principles. The correlation between assessment tasks and the assessment-related design item (D5) indicates value in formative feedback (Godwin-Jones, 2019). EnMIA's dual role as a practice and assessment tool could support integrated learning (Levy & Stockwell, 2013). Contributions to design principles are descriptive; future work should test alternatives empirically.

Fifth, despite overall positive usability ratings, lower scores for the items on the need to learn the tool before use (U4) and on tool errors (U10) suggest a moderate learning curve and potential technical issues. This contrasts with the high ratings for ease of use (U3), aligning with Nielsen's (2012) usability heuristics on minimizing learning effort. Course 1's lower scores may reflect its diverse task types, which may increase cognitive load (Sweller, 2011). The negative correlation between the tool-error item and mission tasks suggests errors arising from unclear instructions or glitches, which are common in chatbots (Fryer et al., 2019).

Last, the overall findings regarding design principles and their relationship with task type suggest that EnMIA's interactive and voice-based features might enhance motivation and learning outcomes, with task type and frequency shaping student experiences. Future improvements could focus on reducing the initial learning curve and error rates to optimize usability.

Limitations and Further Research

There are certain limitations that researchers and educators should be aware of. First, speaking assessments showed significant fluency gains but no changes in accuracy or complexity, which may reflect the short timeframe and the tool's focus on interactive rather than form-focused practice. The speaking task was brief and rubric-based, and the brief task and coarse rating scale may have lacked the sensitivity to detect changes in accuracy and complexity; questions about longer-term or authentic communicative gains therefore remain open. Second, learners’ motivation and engagement were evaluated through questionnaires. Questionnaires have been criticized as static instruments that capture perceived levels rather than more transient respondent characteristics, and self-reports may differ from actual behavior. Third, this study was conducted in specific second language learning courses at one university. Course settings vary across contextual aspects such as institution size, geographic location, and K-12 versus higher education. Although there is little reason to think the participants are highly exceptional, the small sample size makes it impossible to know how and to what extent the findings generalize to other second language learning courses. The findings are thus specific to this university Korean language context and are not intended for broad generalization without replication. Fourth, because this study was part of the design and development process, a strict comparison could not be conducted. The absence of a control group is a major limitation: fluency gains cannot be attributed solely to EnMIA, given possible confounds such as regular coursework and maturation. Last, the lack of significant differences between courses may itself reflect the small sample size and the similar task designs, and the study had a short-term focus.

Future studies can be designed to address the limitations described above. The results of the present study also suggest several areas for future research. Because this study did not demonstrate the direct effectiveness of the tool or an improvement in students’ performance attributable to it, further research is needed. Future research can employ experimental designs (e.g., comparing an EnMIA group with a control group) to gather information about the effectiveness of the tool in terms of attitude, integrativeness, motivation, and learning performance (i.e., through L2 performance comparison). The total complex of integrativeness, attitudes toward the learning situation, and motivation can be seen as integrative motivation (Masgoret & Gardner, 2003), which is referred to as “a complex of attitudinal, goal-directed, and motivational attributes” (Gardner, 2001, p. 9). More empirical studies are needed to extend the findings of this research to other settings, courses, and subjects. Further, future research could use qualitative methods to explore interactive speaking tasks (Dörnyei, 2007), longitudinal studies of proficiency gains (Jeon, 2023), extensive usability testing (Nielsen, 2012), comparative analyses (Bibauw et al., 2022), and investigations of individual differences (Benson, 2011) and technology integration (Godwin-Jones, 2019).

Conclusion

This pilot and feasibility study examined the design and implementation of EnMIA, a voice-based chatbot for second language learners. Results showed high student satisfaction and motivation ratings, with interactive tasks strongly linked to motivation. Importantly, pre- and post-speaking assessments revealed preliminary fluency gains in a pilot context, potentially indicating that chatbot-supported practice can improve oral performance in the short term. However, accuracy and complexity did not change, suggesting that while EnMIA may lower barriers to speaking, targeted design and extended use are required for more comprehensive proficiency development. As Gardner (2001) argued, language learning theories do not directly ensure success in second language acquisition but indirectly support learners and instructors by exploring relationships among key variables. Thus, we argue that instructional designers, educational technologists, instructors, and learners should collaborate to optimize the use of voice-based technologies for learning. In conclusion, this feasibility study illustrates the potential of voice-based tools such as EnMIA for supplementing fluency practice and warrants rigorous controlled research.

Studies in Humans and Animals

The research was approved as exempt by the Institutional Review Board.

Footnotes

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability

Data sharing is not applicable to this article.