Abstract

Keywords

Introduction

In contemporary education, online teaching, learning and assessment have opened a new era of opportunities and challenges (Elaine & Seaman, 2015). In terms of academic performance, Nguyen (2015) reports that online teaching in general has a positive impact on student marks and engagement. Within this context, the Virtual Learning Environment (VLE) has become an integral part of the teaching and learning processes. In the Higher Education (HE) sector, universities use VLEs to disseminate teaching materials, to provide support to students and, more recently, to run assessments. In general, the VLE is considered a good enabler because it is not restricted to a specific time or place (Molotsi, 2020). Pem et al. (2021) investigate the effectiveness of VLE features for online teaching, learning and assessment across all colleges of their university as a case study. They conclude that gender, age, teaching experience and educational qualification have no influence on the use of VLE features for online teaching, learning and assessment.

On the other hand, VLE assessments open new challenges related to academic misconduct. Adzima (2020) provides a comprehensive review of the literature on academic misconduct in online education and concludes that many factors that influence cheating behaviours in the face-to-face environment are also relevant in the online environment. Al-Azawei and Al-Masoudy (2020) study the academic performance of students in VLE assessments and conclude that students’ final marks can be predicted from several factors, such as the level of financial and service instability, assessment grades, the total number of clicks, the interaction with different course activities, and students’ engagement.

The impact of Artificial Intelligence (AI) technology, such as ChatGPT, has been discussed in the literature, with the focus generally being on education and pedagogy; see for example (Baidoo-Anu & Ansah, 2023; Matchett, 2023; Montenegro-Rueda et al., 2023; Yu & Guo, 2023). As another example, Yu (2024) provides a detailed analysis of the challenges and opportunities the technology can bring to education. Alshater (2022) explores the potential of artificial intelligence, particularly in enhancing academic performance, using economics and finance courses as an illustrative example. Similarly, Khlaif et al. (2024) and Hamamra et al. (2024) discuss the impact of generative AI technology on student assessments in higher education. Their conclusion is that, despite the many advantages this technology brings to tertiary education, it also raises concerns about academic integrity. The research in both papers is based on specific regional institutions; however, the findings can be generalised, as seen in the conclusions of (Roe et al., 2024) and (Alkouk & Khlaif, 2024), which emphasise the role of training and clear policies. The need for a new assessment framework in higher education that accounts for generative AI technology is discussed in (Sol et al., 2025), which highlights the necessity of institutional policies that guide AI integration in education and protect academic integrity. In (Williams, 2025), a comprehensive, holistic approach is recommended for integrating AI technology into HE assessments. The work discusses incorporating AI into feedback and adapting assessments in order to leverage AI's capabilities and promote AI literacy among students and educators.

On the other hand, very few works in the literature discuss how VLE assessments should be transformed in response to AI technology. Examples of these works are reviewed in the next section, where VLE assessments are discussed in detail.

This paper addresses the challenges of designing a VLE assessment taking into account the existence of AI technology. The paper produces recommendations on how VLE assessments can be designed to protect their integrity and minimise academic misconduct with reported results from a practical case study.

The remainder of the paper is organised as follows. The “What are VLE Assessments?” section defines VLE assessments. The “Integrity of VLE Assessments” section discusses the integrity of these assessments. “The Case Studies” section details the case studies presented in this paper, while the “What Has Been Done?” section gives full details of the practices recommended to protect the integrity of VLE assessments. The “Student Academic Performance” section discusses student academic performance for the relevant case studies. The paper finishes with conclusions and remarks in the “Conclusion” section.

What are VLE Assessments?

With the heavy use of modern online VLE platforms for teaching and delivery of materials across the HE sector, the use of VLE assessments is increasing. These platforms include modern and clever tools that enable assessments to be done online, create assessments that are easy to mark and moderate, and give educators a variety of assessment design options that suit all needs.

Several studies in the literature focus on VLE assessments and report on how the assignment and examination processes can be adapted to ensure that learning outcomes are met and that students’ behaviours are anticipated and dealt with when conducting these assessments; see for example (Llerena-Izquierdo et al., 2023) and the references therein. The article in (Ma & Hill, 2024) discusses the potentially transformative effect of AI on assessment strategies within VLEs. It emphasises the need for staff development to integrate AI technology effectively into assessment design, so that assessments remain relevant and uphold academic integrity in the digital age. Similarly, the focus in (Pike & Islam, 2024) is on the integration of AI in VLEs to facilitate personalised and adaptive assessments. It discusses how AI technology can support real-time feedback and dynamic questioning, which in turn enhances the assessment of both quantitative and qualitative skills in higher education.

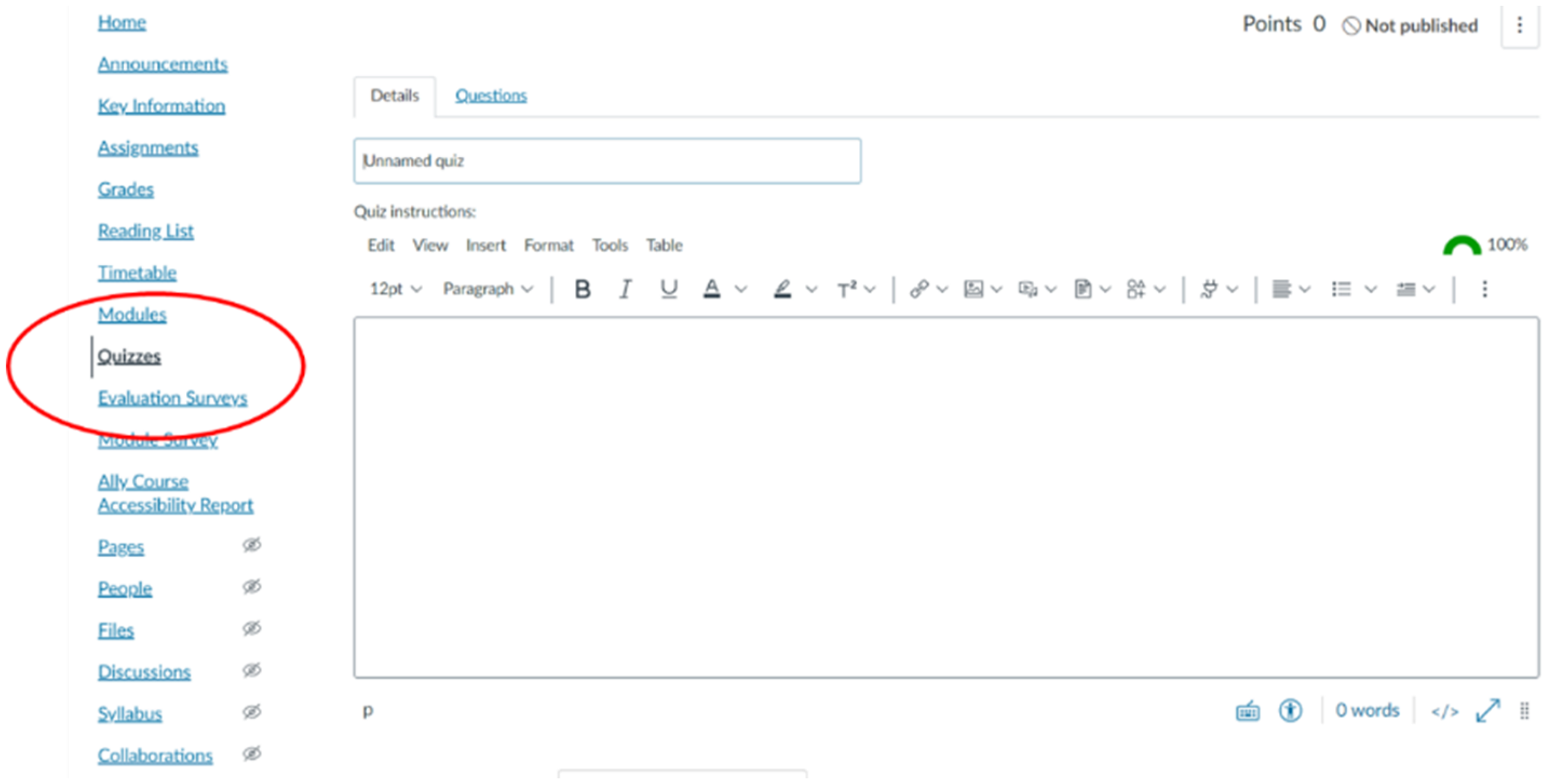

Universities use different VLE platforms with comparable features. Examples of these platforms include Blackboard, Moodle and Canvas. This paper focuses on Canvas, the VLE platform currently used at the targeted institution. However, the suggested techniques are not limited to Canvas and can be adopted in a similar way on any other platform.

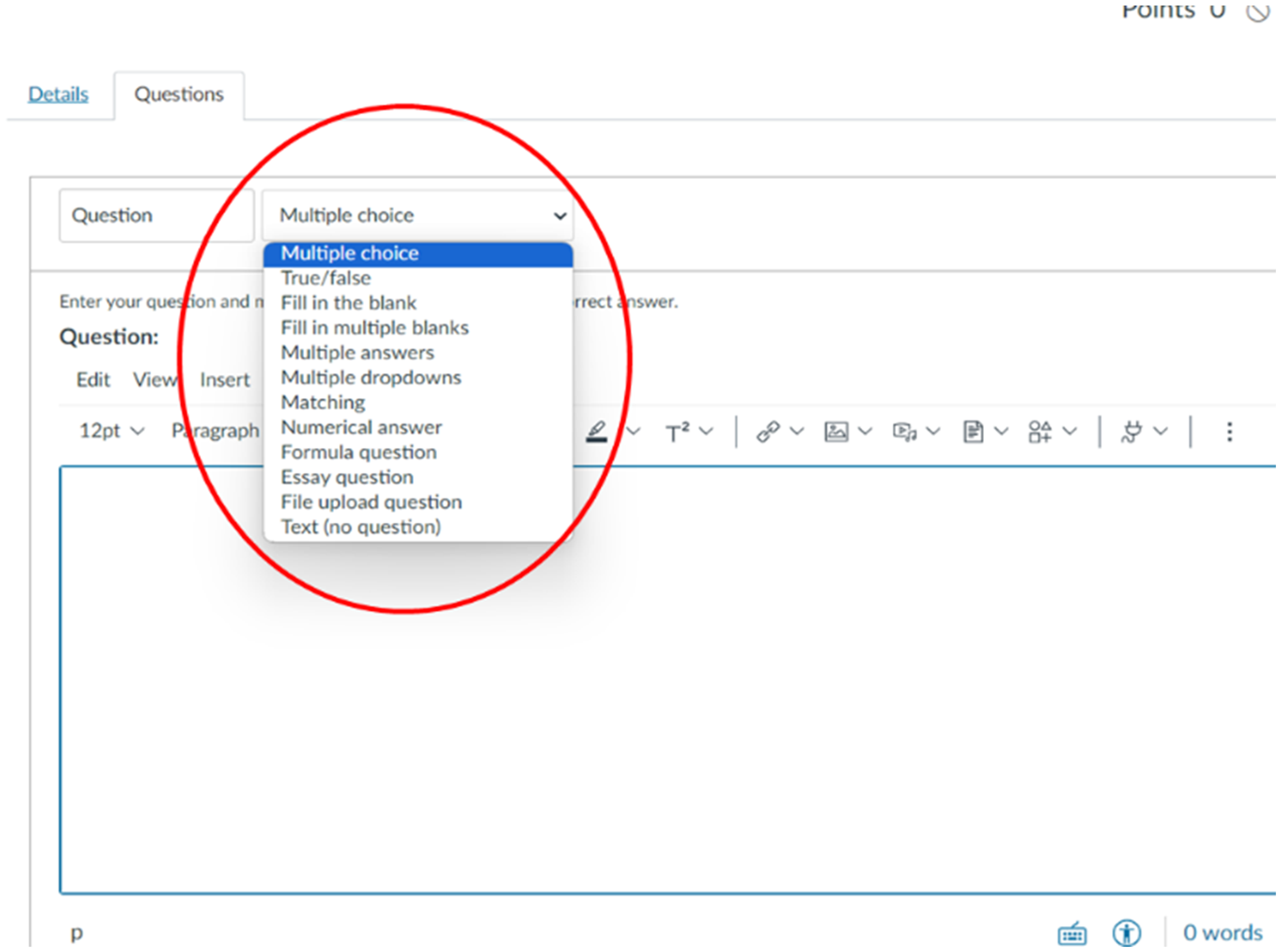

In Canvas, the VLE assessment tool is called Quizzes, as can be seen from Figure 1, which shows the interface of the quizzes tab in Canvas. A quiz can be designed using various types of questions, as demonstrated in Figure 2, which shows the twelve question types available when creating a quiz. This variety enables educators to set tests of various difficulties and to test various learning outcomes that suit different courses and programmes.

Figure 1. Quizzes represent the main VLE assessment tool in the Canvas platform.

Figure 2. The different types of questions that are available within Canvas quizzes.

Integrity of VLE Assessments

VLE assessments represent a very useful tool for educators. However, unless this tool is treated with care, students might abuse it and see these assessments as an easy way to gain marks. VLE assessments should be supported with procedures and policies in order to protect their integrity and make sure that the designed assessments achieve their aims. The main risks to online VLE assessments include the use of generative AI technology, students working in groups on individual tests, and access to unauthorised materials.

Literature related to academic misconduct in online settings is still in its infancy compared with literature that focuses on traditional settings (Adzima, 2020). The use of AI technology, such as ChatGPT, for cheating in online exams is also a concern outside academia, where the problem has been discussed by recruiters, trainers and experts, as in (Matchett, 2023). Several methodologies and procedures, including proctoring, have been discussed by practitioners in these fields to strengthen VLE assessments and prevent cheating using AI technology; see for example (Witwiser.io, 2023).

This paper discusses some of the steps that can be taken to enhance the integrity of VLE tests and minimise the possibility of cheating using AI technology without resorting to proctoring. The reason is that proctoring is complex, requires special software and settings, and has not been adopted by a large number of HE institutions.

The Case Studies

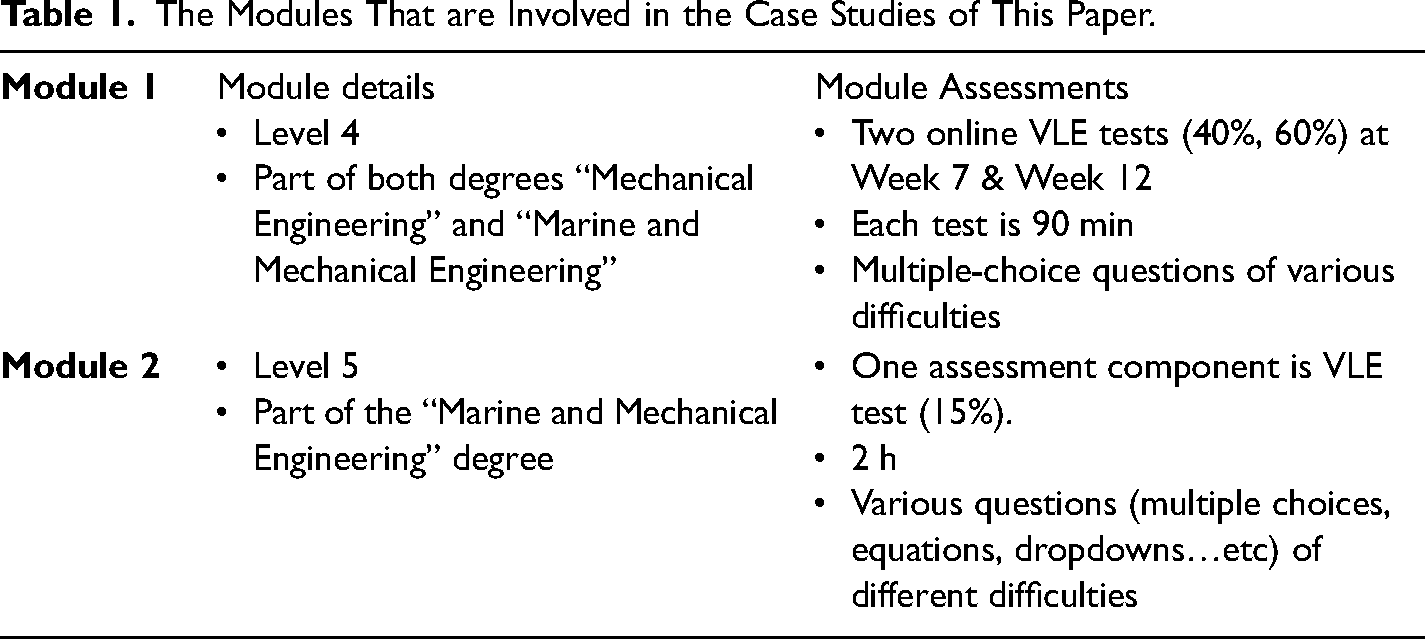

Two modules that use VLE assessments have been included in this study. Each module is part of an engineering degree that is taught within the university. The details of these modules are shown in Table 1 below.

Table 1. The modules involved in the case studies of this paper.

The VLE tests mentioned in the table above were designed in the academic year 23–24 using the strategy reported in this paper. To measure the effectiveness of the recommended design strategy, student academic performance in 23–24 is compared against the results of the previous year, 22–23, for the same modules and tests. In 22–23, these tests were run on campus and fully invigilated.

What Has Been Done?

Several strategies have been considered to minimise the risks discussed above and to protect the integrity of VLE assessments. All the strategies in this section were adopted when designing the case study VLE tests during the academic year 23–24. They are reported here with clear examples from the case studies to support practitioners who would like to implement them.

Setting the Rules

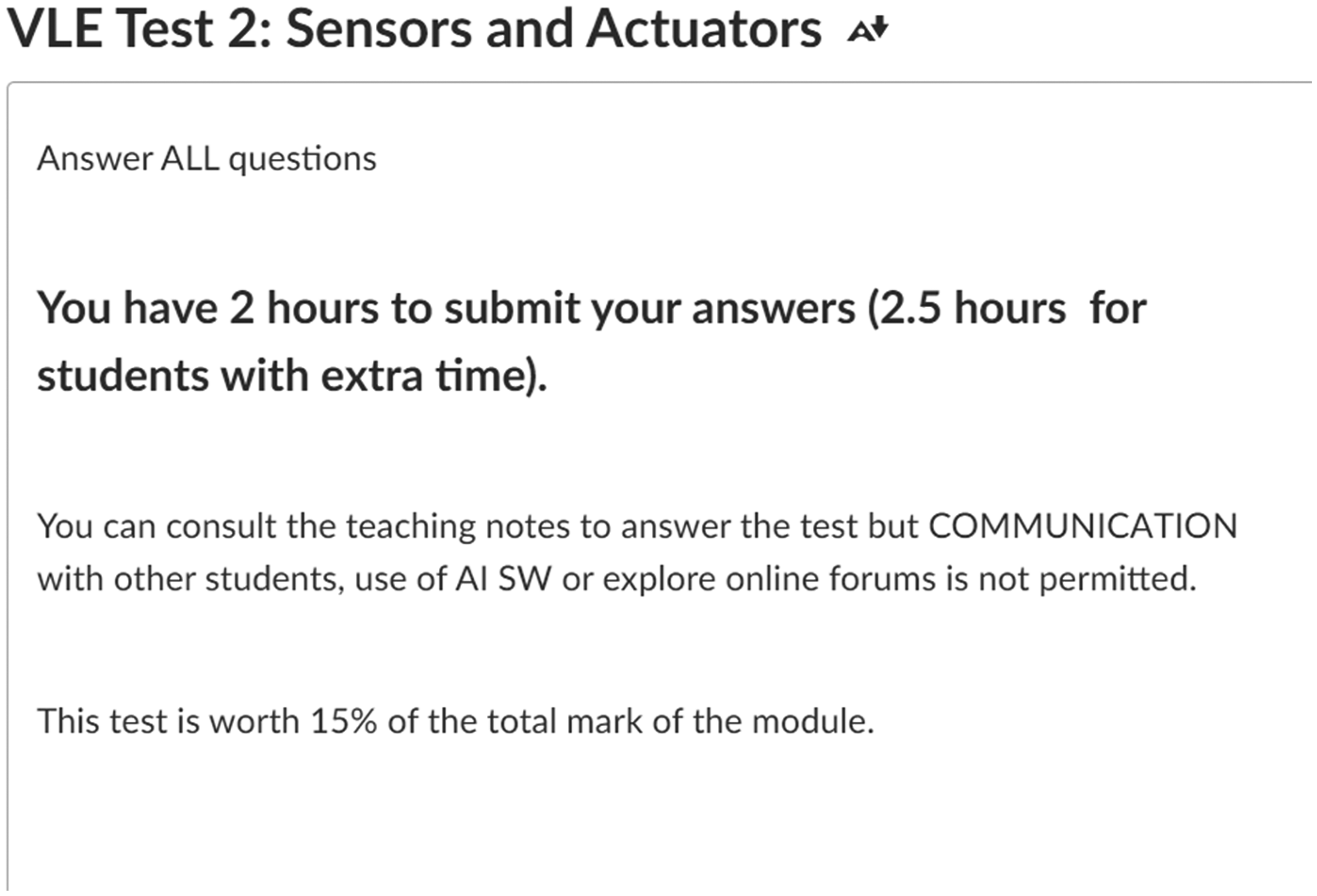

In general, VLE assessments are considered open-book tests. One of the main challenges of setting an open-book assessment is managing students’ expectations. Students usually have a binary perception: either nothing is allowed or everything is allowed. One of the main steps taken in this context is to set clear rules on what is allowed during VLE assessments. This keeps students aware of what they can and cannot do during the assessments. While some might argue that students will still ignore the rules and cheat, setting clear rules and consistently reminding students of them helps raise standards and keeps engaged students away from the grey area. It also helps in academic malpractice cases, where it provides evidence that students were clearly informed about the rules and policies of the assessments. For the case studies reported in this paper, students were reminded of these rules frequently during the delivery of the modules, so that they understood the assessment strategy throughout the semester before taking the actual assessments. This helps in minimising academic malpractice cases and enhancing the integrity of the assessments. Figure 3 below shows a screenshot of what students see before they attempt one of the assessments reported in this paper.

Figure 3. Setting the rules in simple terms for VLE assessments to make sure that students understand what is allowed and what is not allowed.

A Very Large Bank of Questions

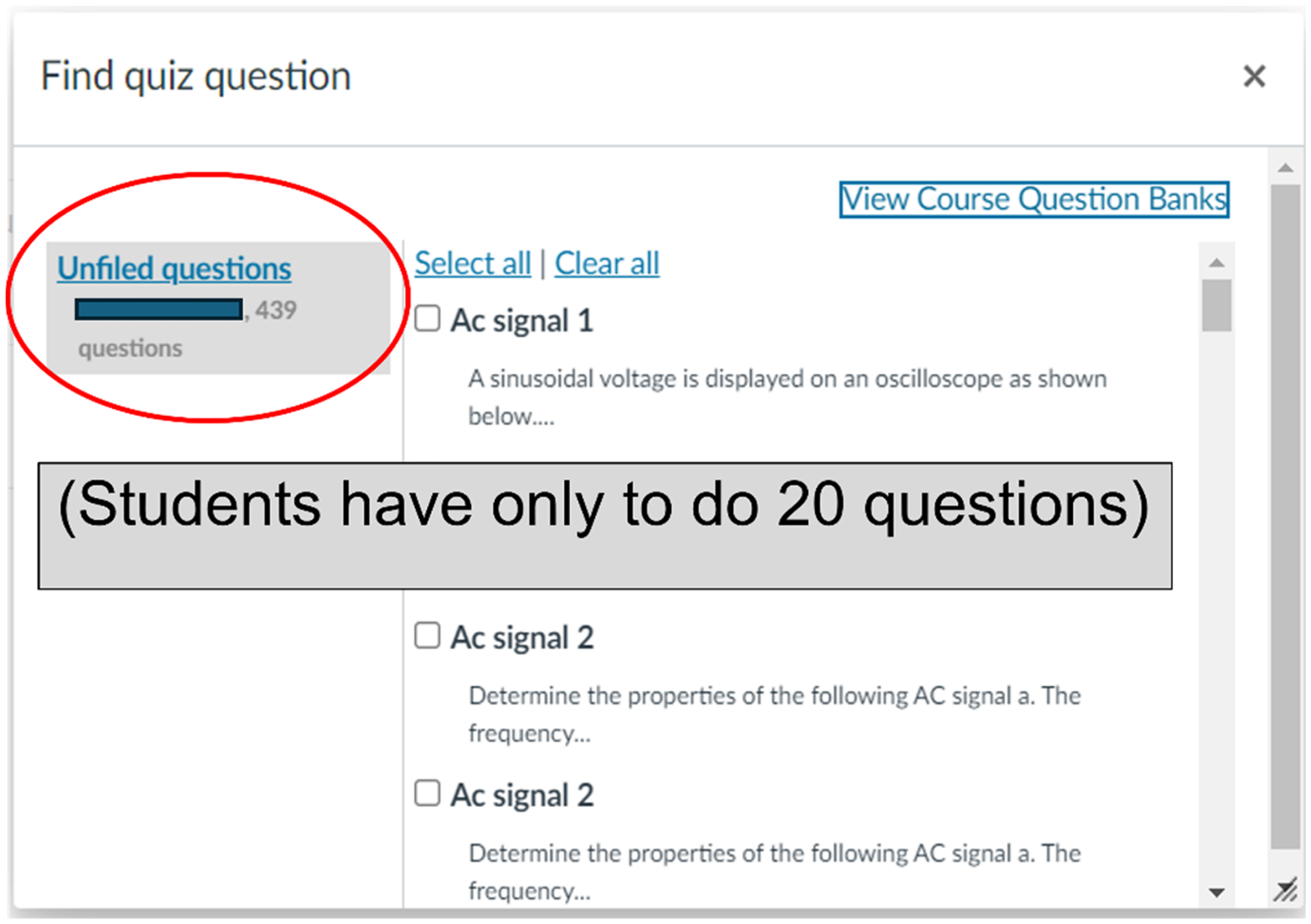

One of the main steps taken to enhance the integrity of VLE assessments and minimise the possibility of academic collusion is the design of a large bank of questions. Figure 4 below shows the number of questions created for the assessments of Module 1, which was over 400. This number includes all variations of questions, and students only have to answer 20 questions in each VLE test of the module. The degree cohort typically has 100–120 students. With a bank of this size, it is very unlikely that two students will receive the same set of questions, which in turn minimises the risk of collusion and discourages students from working together.

Figure 4. A large number of questions have been designed for the VLE assessments. The name of the module is redacted in accordance with the institution’s policy.
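The effect of the bank size can be estimated with a short calculation. The sketch below is illustrative only: it assumes, for simplicity, that each test draws 20 questions uniformly at random and without replacement from a single pool of 400, which may differ from how questions were actually grouped and allocated in the case study. The function names are hypothetical.

```python
from math import comb

def expected_shared_questions(bank_size: int, per_test: int) -> float:
    """Mean number of questions two students have in common
    (mean of the hypergeometric distribution)."""
    return per_test * per_test / bank_size

def prob_identical_tests(bank_size: int, per_test: int) -> float:
    """Probability that two independently drawn tests contain
    exactly the same set of questions."""
    return 1 / comb(bank_size, per_test)

def prob_no_shared_question(bank_size: int, per_test: int) -> float:
    """Probability that two independently drawn tests share no question."""
    return comb(bank_size - per_test, per_test) / comb(bank_size, per_test)

# Figures taken from the text; the uniform single-pool draw is an assumption.
BANK_SIZE = 400
PER_TEST = 20

print(expected_shared_questions(BANK_SIZE, PER_TEST))
print(prob_identical_tests(BANK_SIZE, PER_TEST))
print(prob_no_shared_question(BANK_SIZE, PER_TEST))
```

Under these assumptions, two students share on average one question out of 20, and the chance of receiving an identical test is negligible.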

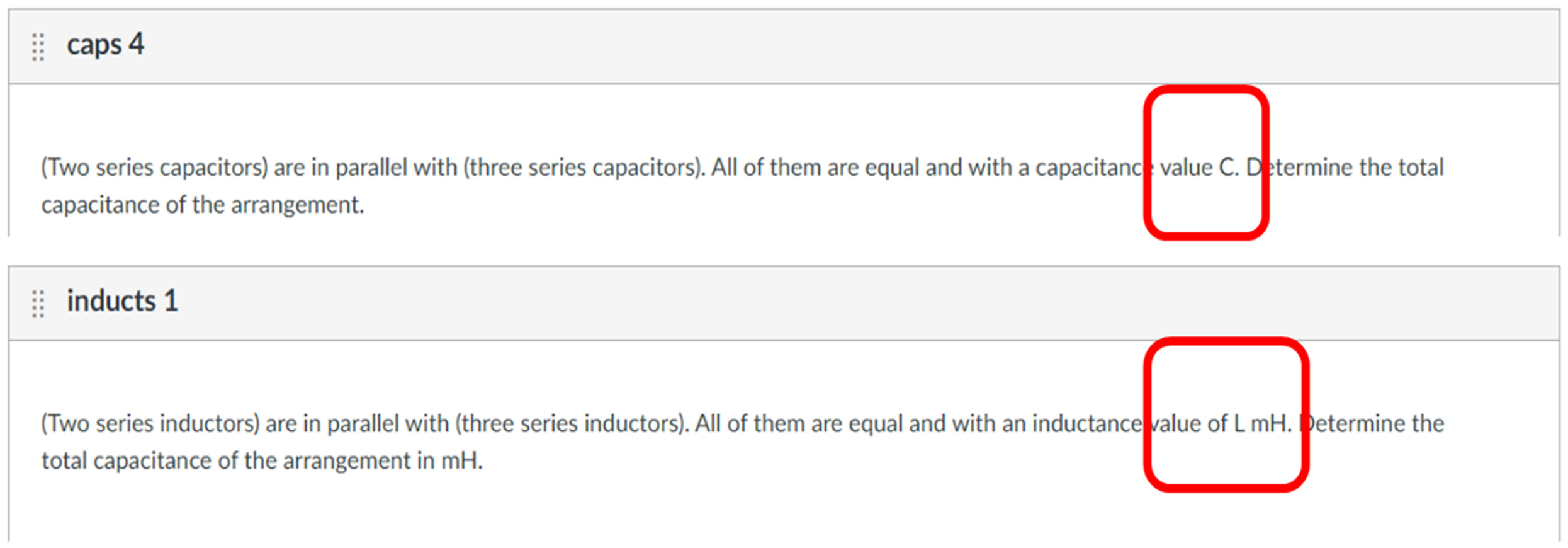

Images Instead of Text

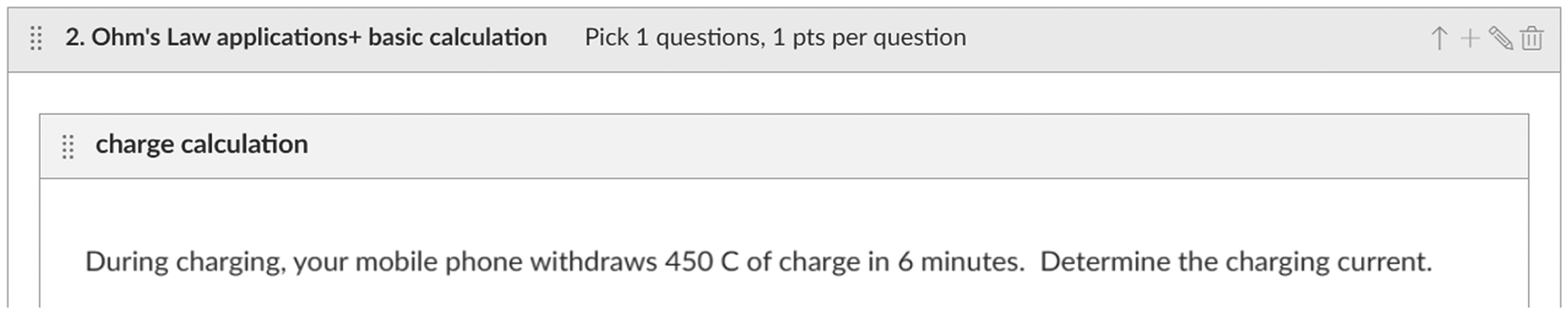

One of the main challenges facing VLE assessments in the presence of AI technology arises when students copy the questions into chatbot portals and rely on the answers these AI algorithms return. To counter this, all questions in the VLE assessments are presented as images rather than text. After a question is written, the Windows Snipping Tool is used to take a screenshot of it, and the image is used in place of the text itself. Figure 5 shows an example of this practice, where the text of the question is posted as an image. With this measure, and with appropriate time limits on the assessments, students have less incentive to retype questions into AI portals and internet browsers, because “copy and paste” is no longer an option.

Figure 5. Posting the text of VLE questions in the form of images rather than text to prevent the practice of “copy and paste”.

One of the main disadvantages of this strategy is the time needed for assessment preparation. It is a tedious and lengthy process: each question needs to be written and then snipped before it is included in the question bank. Any later correction or amendment of a question is impossible without producing the image again. For this reason, a text copy of all questions was saved offline so that any required amendments could be made easily without rewriting the question from scratch. Also, the quality of the text is sometimes degraded when it is converted into an image. This might be a challenge when the assessment is checked against an accessibility policy, and it needs to be addressed by careful choice of font type and size, in addition to correct scaling of the image before it is used in the assessments. As AI algorithms improve, image recognition is becoming more widely available. However, capturing images and pasting them into an AI chat box still takes more time and effort than copying and pasting text, and hence this measure remains valid even with recent versions of the AI algorithms.

Testing AI Answers for Each Question

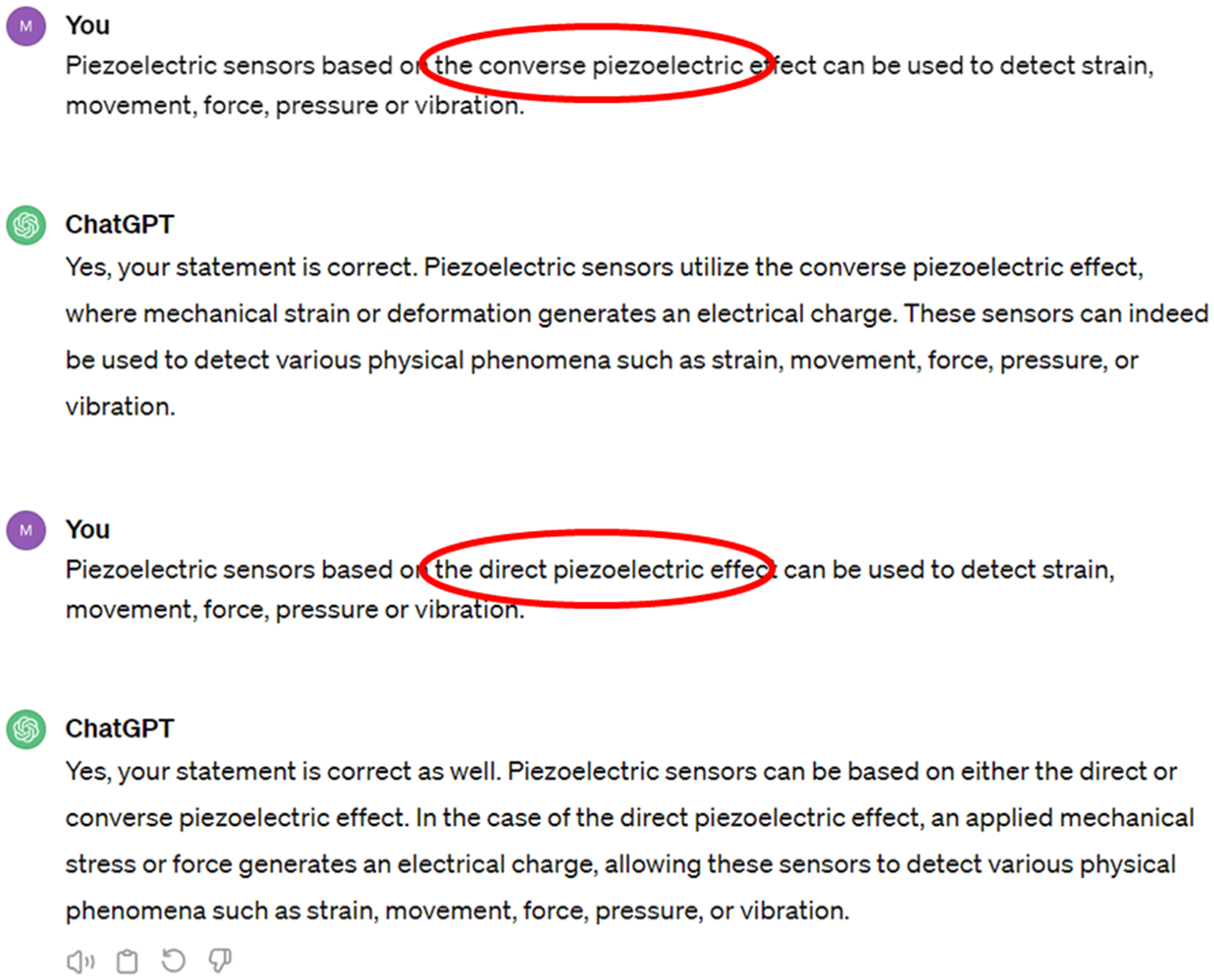

While AI technology is disruptive and has a huge influence, it is still imperfect. Before any question was included in the VLE tests, it was posed to ChatGPT as an example of the AI technology available to students. ChatGPT is one of the main AI platforms currently in use by the public. It has a free version and can be accessed easily. It is worth noting that the free version available at the time of preparing the assessments was GPT-3.5; hence, any mention of ChatGPT in this paper refers to interaction with this version of the technology. Many questions were answered wrongly by the algorithm, specifically those requiring specialist knowledge and information. Figure 6 below shows an example in which two conflicting statements about the functionality of piezoelectric materials were posted to ChatGPT, which was asked whether they are true or false. The algorithm responded “Yes” to both contradictory statements, which obviously cannot be correct. This illustrates the risk of relying solely on AI technology, as it failed to distinguish between contradictory statements. While ChatGPT is used in this paper for this method, the same observation applies to other freely available AI platforms.

Figure 6. An example showing that ChatGPT provides conflicting answers to basic questions related to a specific piece of knowledge and information.

By posting questions to AI platforms such as ChatGPT, the educator can get an indication of how much can be answered by the emerging AI technology and what the trend might be. It is then good practice to choose, for the VLE assessments, questions that the AI technology does not answer correctly. It is also important to note that the author did not respond to the wrong answers provided by ChatGPT or ask for them to be corrected. The aim is not to improve the accuracy of the algorithm but rather to protect the integrity of VLE assessments.
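The screening step described above can be organised as a simple bookkeeping exercise. The following is a minimal sketch, not the procedure used in the paper: it assumes the educator has posed each question to the AI tool by hand and recorded its answer, and the record fields (`id`, `correct`, `ai_answer`) and function names are hypothetical.

```python
def affirms_both(reply_a: str, reply_b: str) -> bool:
    """True if the tool said "yes" to two statements that contradict
    each other, which signals an unreliable answer."""
    def norm(reply: str) -> str:
        return reply.strip().lower().rstrip(".")
    return norm(reply_a) == "yes" and norm(reply_b) == "yes"

def select_questions(bank: list[dict]) -> list[dict]:
    """Keep only questions whose recorded AI answer differs from the
    correct answer."""
    return [
        q for q in bank
        if q["ai_answer"].strip().lower() != q["correct"].strip().lower()
    ]

# Hypothetical records, typed in by hand after posing each question.
bank = [
    {"id": "Q1", "correct": "B", "ai_answer": "C"},
    {"id": "Q2", "correct": "A", "ai_answer": "A"},
    {"id": "Q3", "correct": "D", "ai_answer": "B"},
]

kept = select_questions(bank)
print([q["id"] for q in kept])      # ['Q1', 'Q3']
print(affirms_both("Yes", "Yes"))   # True
```

Because AI tools change over time, such a record would need to be refreshed before each assessment period.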

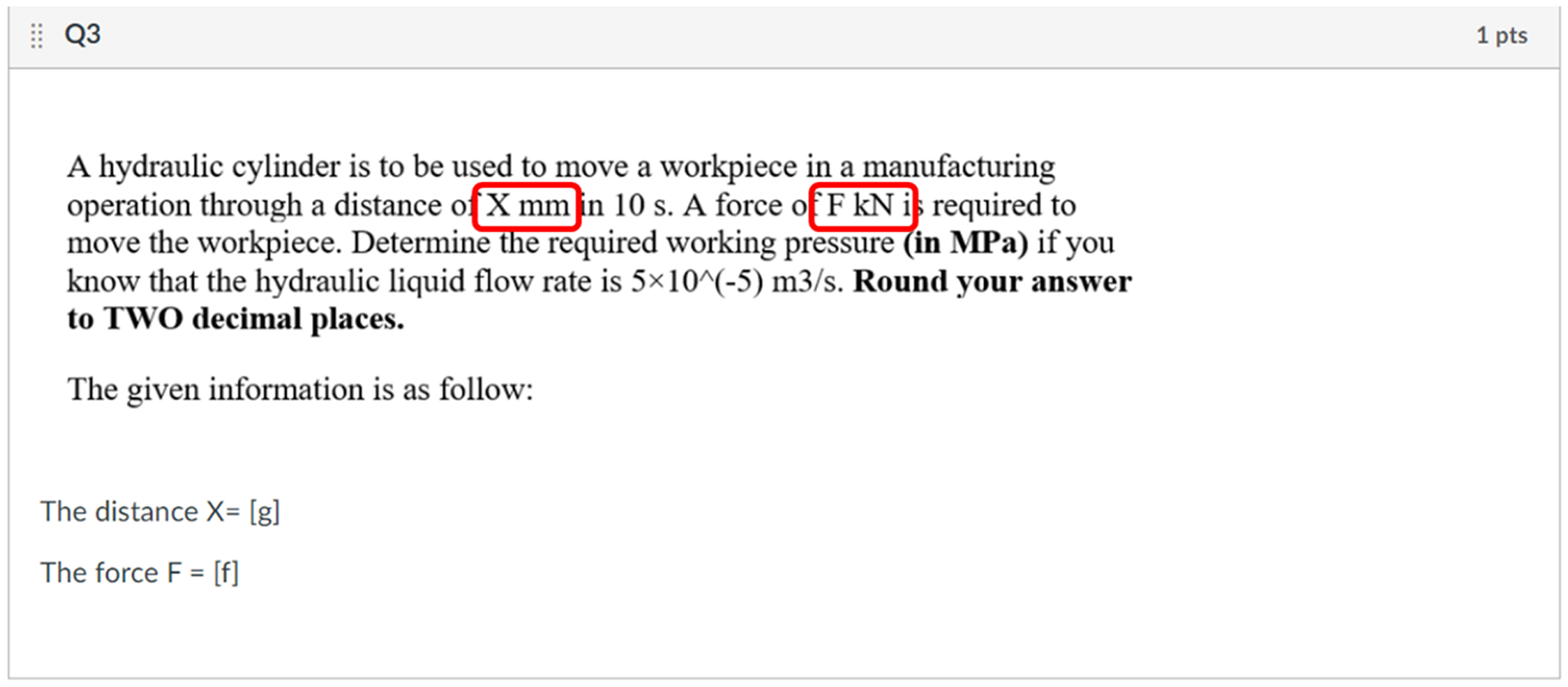

Varieties of Formula Questions

The quiz tools within Canvas make it possible to create variations of maths-based questions using variables rather than fixed values. To do this, the variables have to be put between square brackets, such as “[x]”, and a range has to be defined for each variable. Then, when students attempt the questions, each student is given a different value for each variable, allocated at random from the given ranges. This is a valuable Canvas feature that is very important for engineering courses and mathematics-based problems. However, if one uses images instead of text, as explained in the “Images Instead of Text” section, this feature is lost. To benefit from this feature while still using image-based questions, the formula questions are turned into images while the variables are provided as text on a separate line, as shown in the example in Figure 7. In this way, the text of the question itself is kept as an image, while the only information given as text is the numbers. This enables the assessor to create a large variety of questions and helps in minimising cheating and protecting the integrity of the assessments.

Figure 7. Using images and text in order to benefit from the feature of Canvas in generating variables for mathematical-based questions.
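The idea of bracketed variables with per-student random values can be illustrated outside Canvas. The sketch below is not the Canvas implementation or its API; it is a hypothetical stand-alone illustration in which the variable line, the ranges and the answer formula (Ohm's law) are all assumed for the example.

```python
import random
import re

def make_variant(template: str,
                 ranges: dict[str, tuple[float, float]],
                 rng: random.Random,
                 decimals: int = 1) -> tuple[str, dict[str, float]]:
    """Replace each [name] placeholder with a value drawn at random
    from that variable's range; return the text and the drawn values."""
    values = {
        name: round(rng.uniform(low, high), decimals)
        for name, (low, high) in ranges.items()
    }
    text = re.sub(r"\[(\w+)\]", lambda m: str(values[m.group(1)]), template)
    return text, values

# Hypothetical variable line shown as text under an image-based question.
variables_line = "Given: R = [R] ohm, I = [I] A"
ranges = {"R": (10.0, 100.0), "I": (0.1, 2.0)}

rng = random.Random(42)  # fixed seed so the example is repeatable
text, values = make_variant(variables_line, ranges, rng)
expected_answer = values["R"] * values["I"]  # V = I * R for this variant

print(text)
print(expected_answer)
```

Each call with a fresh random state yields a different variant of the same question, while the question wording itself can stay in the image.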

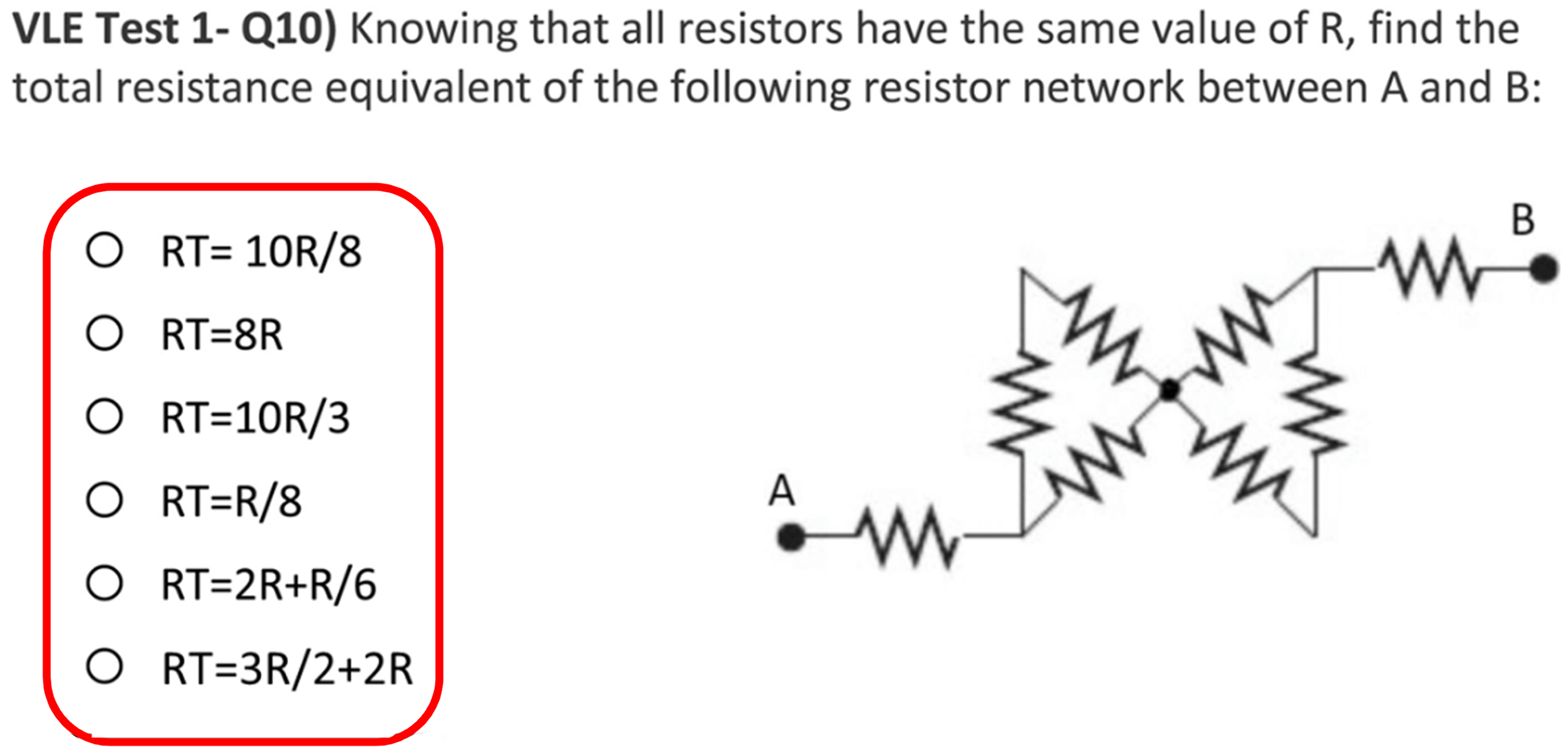

Questions with no Numbers

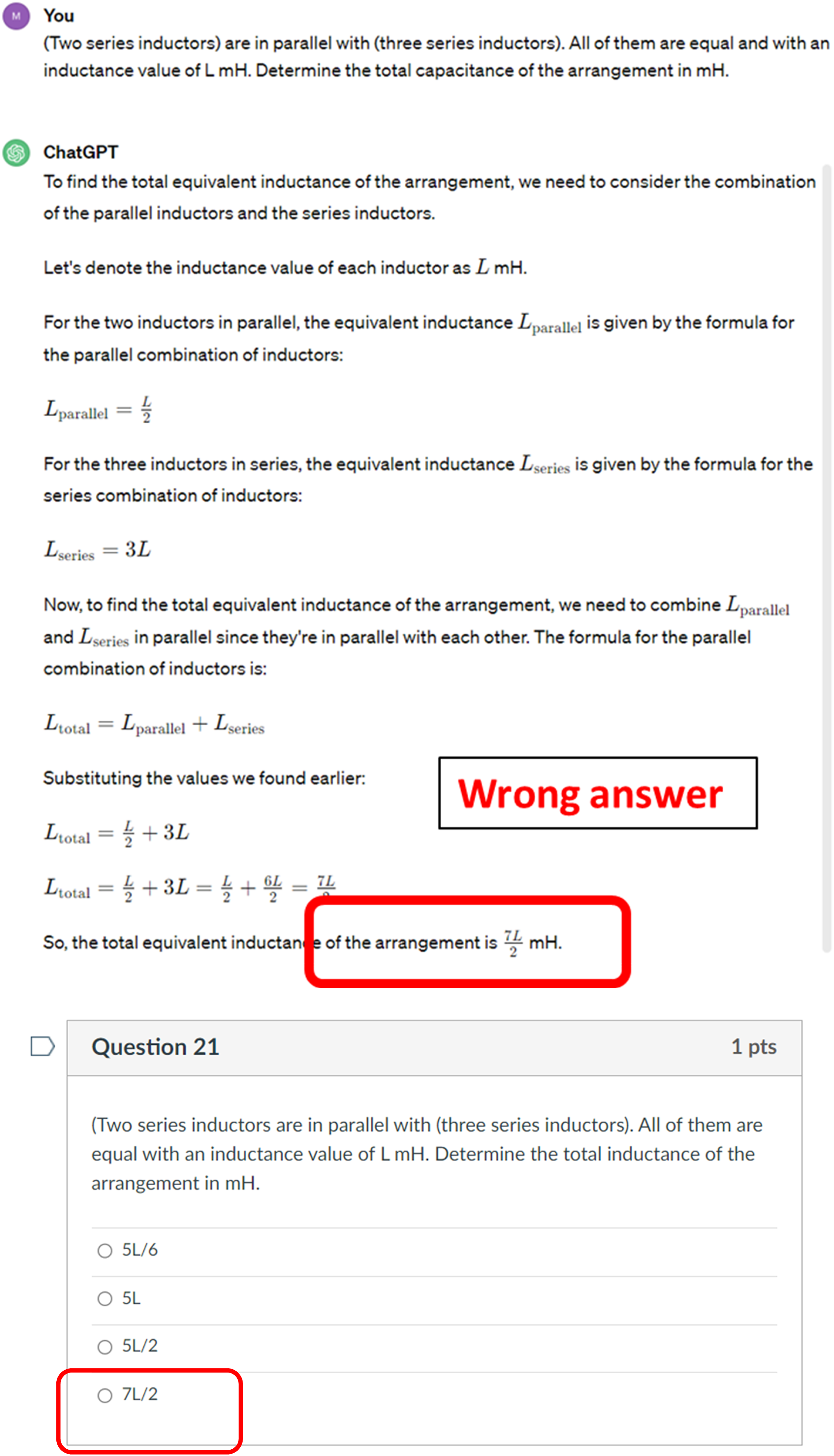

In engineering, it is the norm that students learn concepts in abstract form, with formulas written using variables and symbols rather than numbers, and then solve problems based on these equations by substituting numbers for the symbols. However, AI technology deals with numbers very easily. Hence, to discourage students from using AI technology during assessments, some questions are designed using symbols rather than numbers. These types of questions were tested against ChatGPT, as an example of available AI technology, and the algorithm answered them incorrectly. The questions require good understanding from students and need to be worked out with pen and paper. Figure 8 below shows some examples of these questions, written entirely with symbols rather than numbers.

Figure 8. Writing questions using symbols rather than numbers to make sure students don’t benefit from the AI technology for these questions.

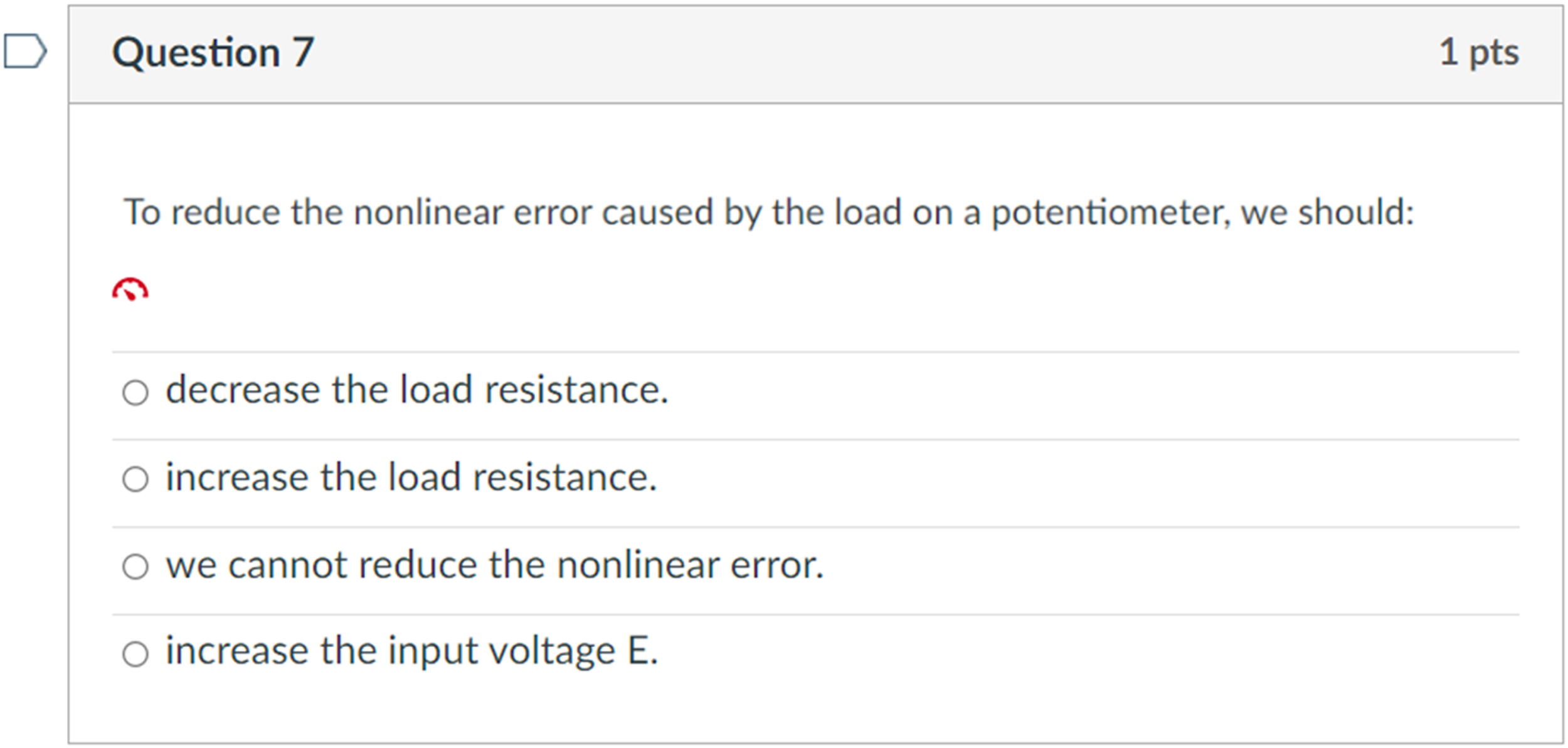

The Answer of AI Technology as an Option

Students are discouraged from using AI technology during the assessments and are warned that doing so would be considered academic misconduct. This message was conveyed to students repeatedly during the semester, and they were warned that certain measures had been put in place to limit the benefit of existing AI technology during the assessment. One of these measures is including the wrong answer provided by an AI algorithm, such as ChatGPT, as an option in the multiple-choice questions. An example is shown in Figure 9, where ChatGPT answered the question wrongly and its answer was included among the answer choices. Furthermore, this design procedure could be used as an indicator in academic misconduct cases. If a pattern is detected in which a student has selected the AI answer in every such question, this could form the basis for an academic misconduct enquiry.

Figure 9. Wrong answers from AI, ChatGPT in this case, are included as options in the multiple-choice questions.
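The pattern check described above could be automated on exported quiz responses. The following is a minimal sketch under stated assumptions: the data layout, the function name and the `min_questions` safeguard are hypothetical, and a flag is only a prompt for human review, not evidence of misconduct in itself.

```python
def flag_for_review(all_responses: dict[str, dict[str, str]],
                    ai_options: dict[str, str],
                    threshold: float = 1.0,
                    min_questions: int = 3) -> list[str]:
    """Return students who chose the AI-suggested wrong option on at least
    `threshold` of the seeded questions they received. Students who saw
    fewer than `min_questions` seeded questions are never flagged."""
    flagged = []
    for student, responses in all_responses.items():
        seen = [q for q in responses if q in ai_options]
        if len(seen) < min_questions:
            continue
        hits = sum(responses[q] == ai_options[q] for q in seen)
        if hits / len(seen) >= threshold:
            flagged.append(student)
    return flagged

# Hypothetical data: the wrong option the AI tool gave for each question,
# and the option each student selected.
ai_options = {"Q1": "C", "Q2": "E", "Q3": "B", "Q4": "A"}
all_responses = {
    "student_1": {"Q1": "C", "Q2": "E", "Q3": "B"},  # AI option every time
    "student_2": {"Q1": "A", "Q2": "E", "Q4": "D"},  # AI option once
    "student_3": {"Q1": "C"},                        # too few questions
}

print(flag_for_review(all_responses, ai_options))  # ['student_1']
```

The default threshold of 1.0 mirrors the case in the text where a student selects the AI answer every time; a lower threshold would flag more students and produce more false positives.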

Terms That Mean Different Things in Different Contexts

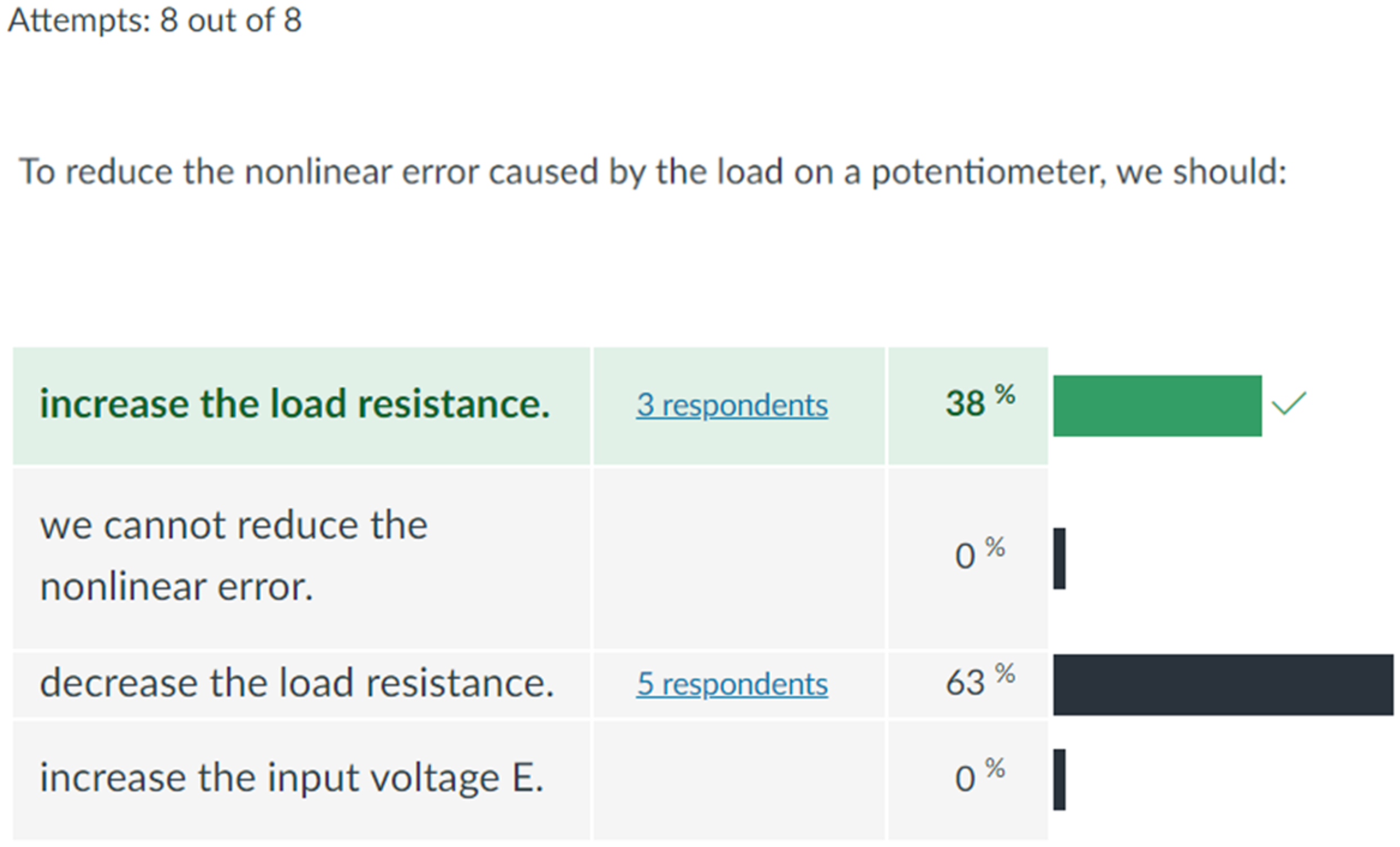

Many terms and concepts are used by people in different fields to mean different things. These terms can be used in VLE assessments because, unless students have a good understanding of the subject and know its terminology, they will not be able to answer the questions correctly. Figure 10 shows a question designed using this strategy. The question concerns the correct interpretation of the term “load” in potentiometer applications. If a student understands the context and is well prepared, the question is simple and straightforward. However, a student who consults online resources or AI technology is likely to be confused about how to answer, because the context is essential for answering these questions correctly. Even though this is a very simple and straightforward question, only 38% of those who received it answered it correctly. As shown in Figure 11, it appears that the remaining students relied on AI technology and chose the wrong option suggested by ChatGPT.

Figure 10. An example of questions that are based on specific terminology, load in this case, which means different things in different contexts.

Figure 11. The analysis of how students answered the question presented in Figure 10.

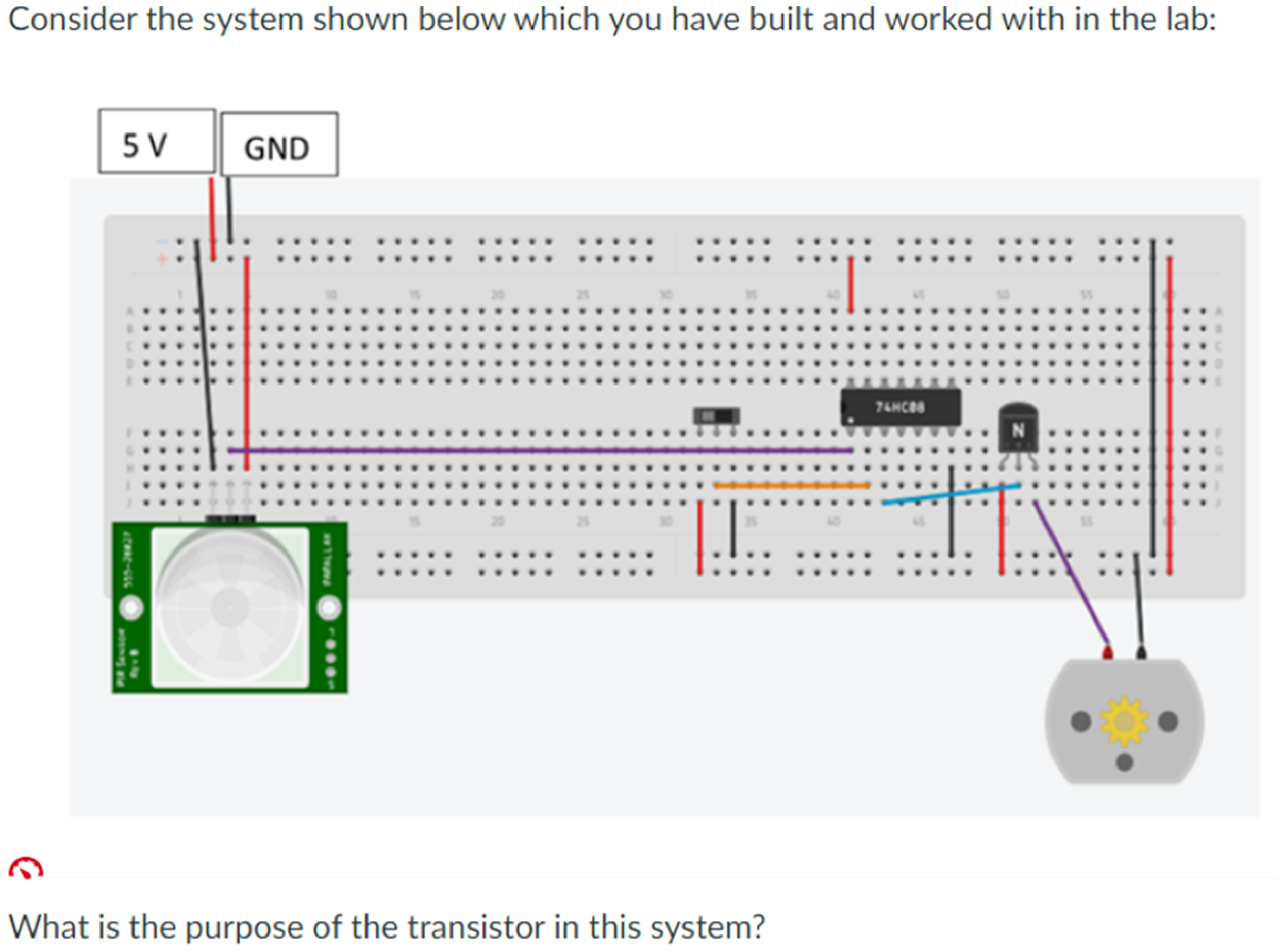

Images Related to Unpublished Materials

Several questions in the designed VLE tests were created using images of unpublished materials related to specific experiments that students carried out in the lab. These questions were answered correctly only by those who attended the labs. AI technology or internet searches offer little help with these questions, as no resources related to these images and questions are available. Figure 12 below shows an example of a question based on unpublished materials. The figure illustrates a mechatronics system that students built in the lab to mimic the function of an automatic door.

Figure 12. Using unpublished lab-based materials to limit the ability of using AI technology or any other external unauthorised resources.

This approach has proved very useful in assessing the learning outcomes of the modules with confidence. Historically, lab attendance drops towards the end of the semester as students start to feel that they do not have to come and will be able to answer lab-related questions without attending the sessions. This trend reversed this year, and the labs were well attended through to the last session. It is believed that this is a result of the first VLE assessments of the modules, after which students realised that, to perform well in the tests, they needed to attend all lab sessions.

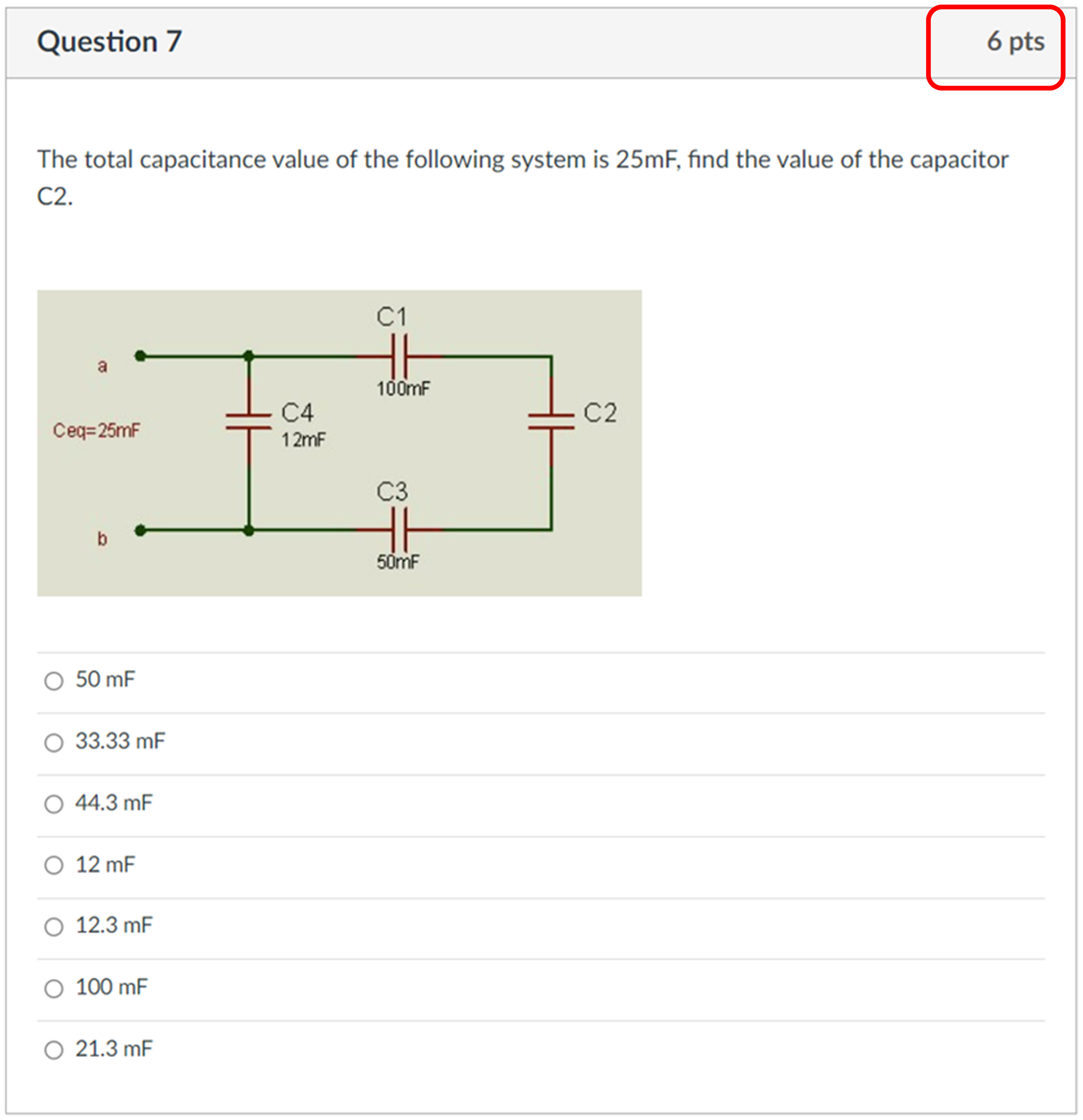

More Than Just 4 Options

Multiple-choice questions in VLE tests always carry the chance of scoring by random selection. With the norm of providing only 4 options, a random guess has a 25% chance of being correct. To minimise this risk and make sure that VLE assessments challenge students to the best of their ability, questions on fundamental knowledge have been designed with at least six options, as can be seen in Figure 13 below. This helps in creating more secure VLE tests that assess the learning outcomes.

Figure 13. Increasing the choices in multiple-choice questions in order to minimise the risk of random guessing.
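The benefit of extra options can be quantified with a binomial calculation. The sketch below is a simplification for illustration: it assumes a test of 20 equally weighted multiple-choice questions answered purely by guessing, and uses the 40% pass threshold mentioned in the paper (8 correct answers out of 20). The real tests mix question types and mark allocations, so the figures are indicative only.

```python
from math import comb

def prob_at_least(k: int, n: int, p: float) -> float:
    """Probability of at least k successes in n independent trials,
    each with success probability p (binomial upper tail)."""
    return sum(comb(n, i) * p**i * (1 - p)**(n - i) for i in range(k, n + 1))

QUESTIONS = 20   # questions per test, as in the case study
PASS_MARK = 8    # 40% of 20, assuming equal weighting (an assumption)

for options in (4, 6):
    p_guess = 1 / options
    p_pass = prob_at_least(PASS_MARK, QUESTIONS, p_guess)
    print(f"{options} options: guess chance per question {p_guess:.3f}, "
          f"chance of passing by guessing {p_pass:.4f}")
```

Moving from four to six options lowers the per-question guess chance from 25% to about 17%, and reduces the chance of passing by guessing alone considerably more than that.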

Questions with Reverse Engineering

Another strategy used in the design of the VLE assessments is the use of reverse engineering questions. These questions require rearrangement of equations and deep analysis to reach the correct answers. Figure 14 shows an example of these questions, with 7 choices to reduce the likelihood that the correct answer is selected at random. It is very difficult to cheat on these questions using AI technology or external materials without understanding the subject. They were answered correctly only by those who attended the lectures. These questions have been allocated high marks to distinguish clearly between the abilities of students.

Figure 14. Reverse engineering questions with more than just 4 choices and high allocation of marks.

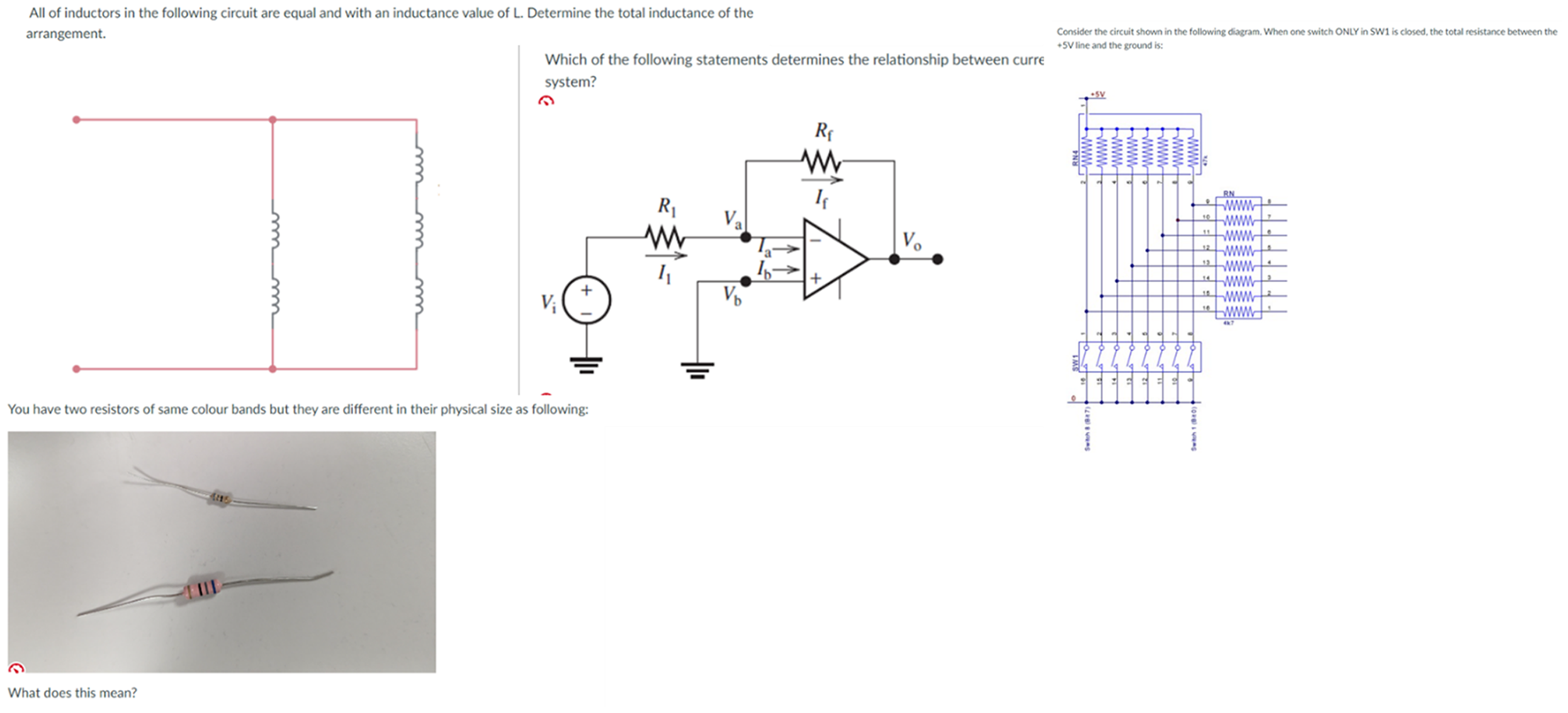

More Images and Less Text

In general, it has been observed that questions which use images are hard to compromise using AI technology. Therefore, the VLE tests have been designed with more of these questions in order to make the assessments fit for purpose and ensure that they give good indicators of students’ level. It is understood that the ability of AI technology to deal with images is improving, and what was not possible with the version tested while preparing this paper might be possible with more recent versions. However, with the right choice of images and good question design, image-based questions could still be more of a challenge to the technology than text. Figure 15 below shows some examples of the designed image-based questions.

Figure 15. Examples of the questions which are designed using images in order to protect the integrity of the assessments and minimise the risk of using AI technology to cheat.

Finally, to evaluate the impact of the strategies described in this section, the “Student Academic Performance” section presents the academic performance outcomes observed in two consecutive academic years.

Student Academic Performance

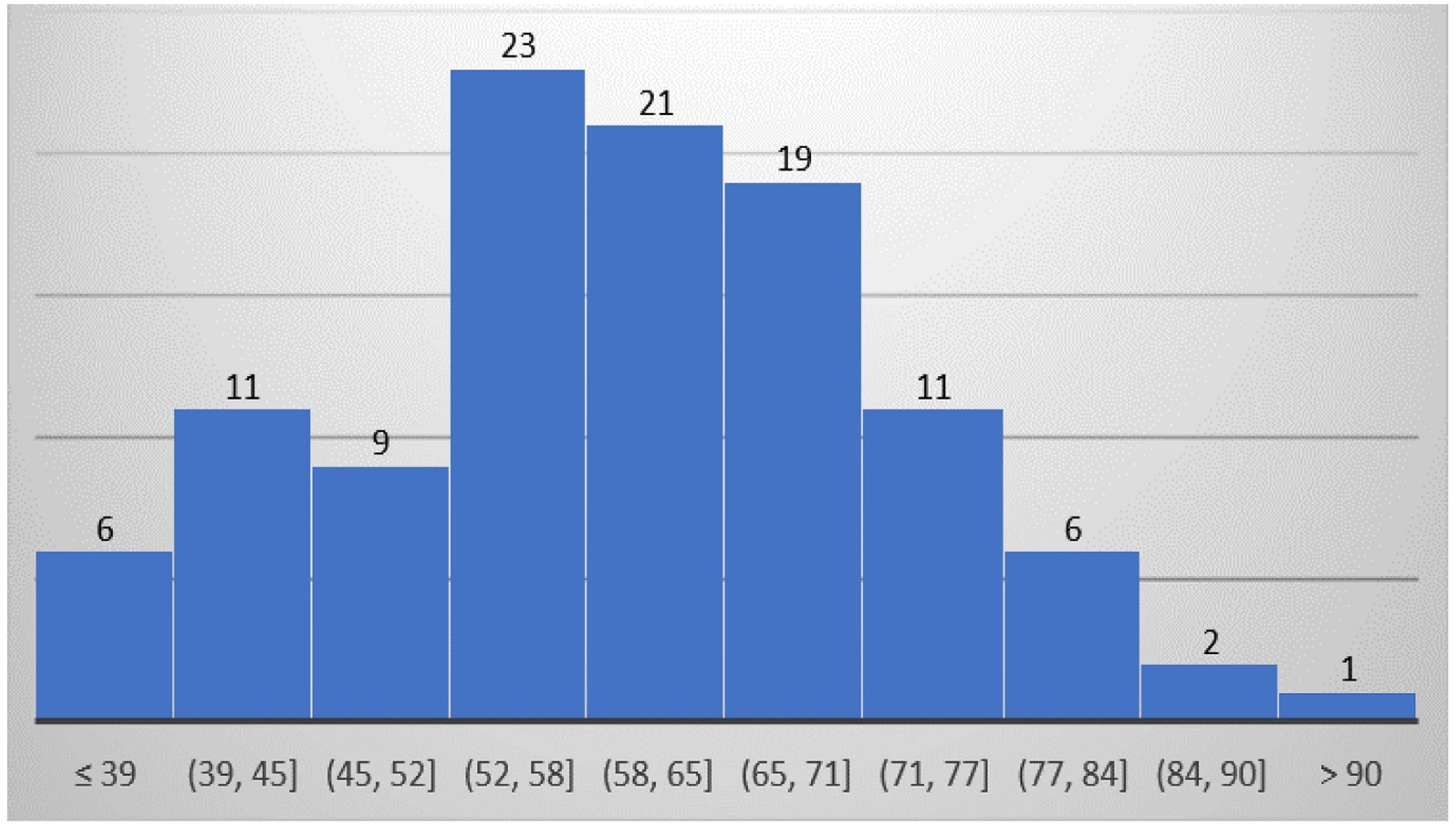

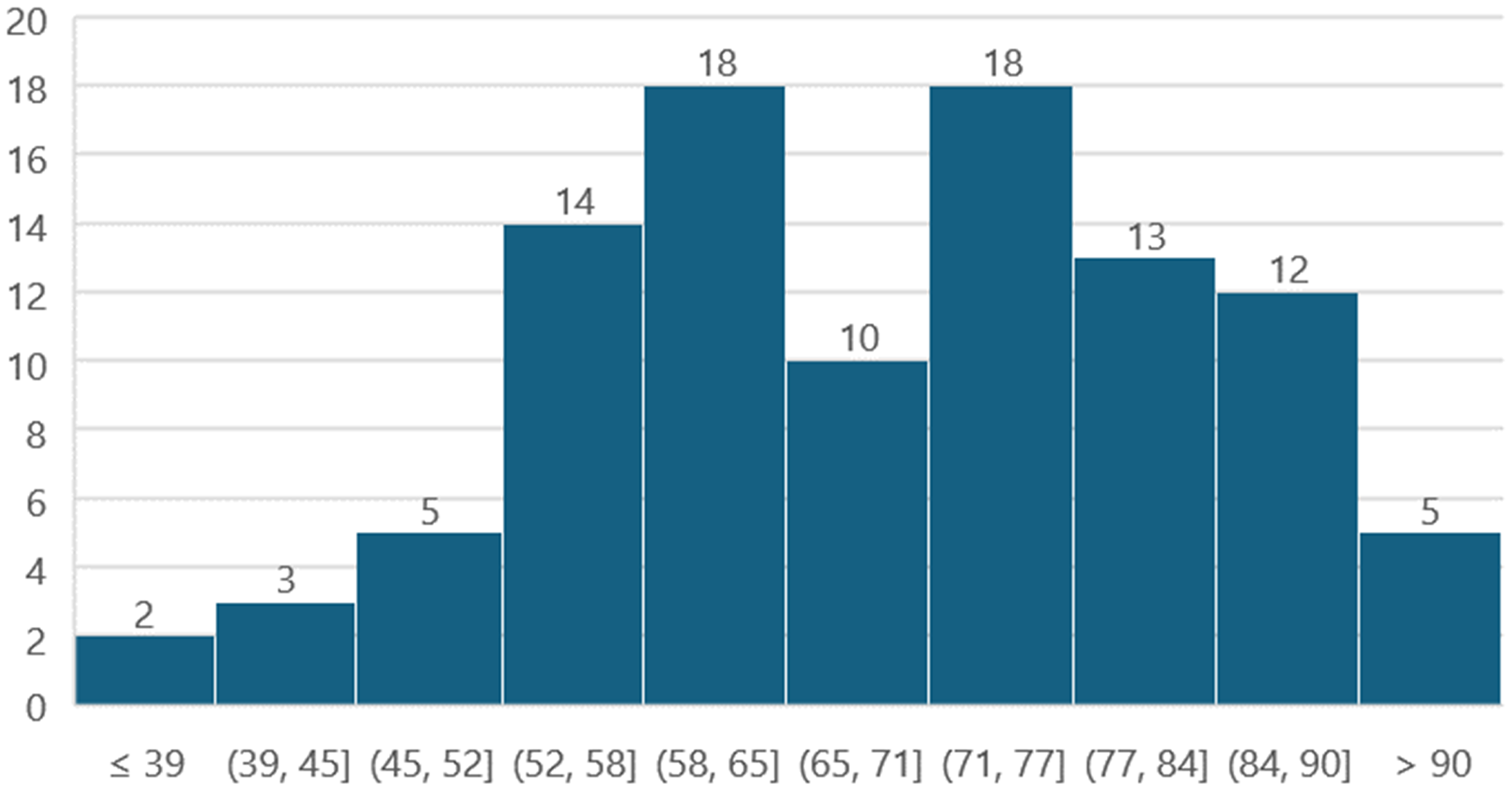

Student academic performance is a good indicator of the effectiveness of VLE assessments. The emergence of AI technology has created a noticeable trend of higher marks in VLE assessments compared with traditional in-room examinations. For the modules considered in this paper, student academic performance is compared between two consecutive academic years: 22–23 and 23–24. For Module 1, the VLE tests were taken on campus during the academic year 22–23. Students had to attend specific allocated PC clusters to take the tests, which were invigilated by members of staff to ensure that students did not access unauthorised materials or use AI technology. The profile of academic performance for that year is shown in Figure 16. The average mark was 59% and the success rate (40% threshold) was 94.5%. For the academic year 23–24, the VLE tests were run remotely online with the measures reported in this paper in place, and students were able to take the tests from locations of their own choosing. The student academic performance profile is shown in Figure 17, with an average mark of 69% and a success rate of 97.97%.

Figure 16. Student results for Module 1 for the year 22–23.

Figure 17. Student results for Module 1 for the year 23–24.

For Module 2, the VLE test was taken online during both academic years, 22–23 and 23–24. However, the measures discussed in this paper were not implemented in 22–23. The number of students enrolled in Module 2 was very low in 23–24 (only 8 students), which makes it very difficult to detect any trend or draw any meaningful conclusion. However, it is useful to report that the average mark in 23–24 was 56.66%, compared with 59% in 22–23. In 23–24, the maximum mark was 86.67% and the minimum was 40%.

Comparing Figures 16 and 17, one can conclude that the mark distribution is acceptable, with no detected abnormalities that could be attributed to running the VLE tests remotely online. This is a good indication that the methodology presented in this paper was effective and helped in protecting the integrity of the assessments and minimising academic misconduct. The average mark and the pass rate for the academic year 23–24 were higher than those of 22–23. However, the 23–24 cohort in general achieved better results than the 22–23 cohort across all modules, which matches the trend seen for the module presented in this paper. The comparison in the paper is limited to student performance data from two consecutive years to show the effect of the implemented strategies on protecting assessments, and no further statistical analysis is possible at this stage.

Conclusion

This paper provides an opportunity for sharing best practice on how to improve VLE assessments and protect the integrity of assessments against the use of AI technology. Importantly, it aims to dispel concerns about compromising these valuable assessment tools, making them accessible for use by colleagues in HE and beyond. By fostering a collaborative environment, we hope to contribute to the continuous improvement of educational practices and uphold the standards of academic integrity. The aim of the paper is not to discuss how to embrace AI technology in education or how to benefit from the technology. The paper only discusses how to protect the integrity of VLE assessments.

The profile of students’ academic performance indicates that the assessments ran effectively and that the modules achieved their learning outcomes. There is no guarantee that no student cheated, but the measures taken reduce the risks. The students’ academic performance profile is consistent with this, showing a normal distribution and an acceptable average mark. Future work will look into further techniques that can be implemented to strengthen the integrity of VLE assessments and ensure the achievement of the learning outcomes. Steps such as asking students to sign a declaration at the start of each VLE assessment, as well as testing new types of questions and techniques, will be implemented and reported. While this is a pilot study limited to engineering programmes at a single institution, its methods can in principle be applied to any programme or institution that uses VLE tests. Further multi-institutional studies are needed to validate and generalise the findings of this paper.

Footnotes

Declaration of Conflicting Interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author received no financial support for the research, authorship, and/or publication of this article.