Abstract

We investigate perceptions of AI among university students and staff, focusing on sociodemographic predictors of AI use, attitudes and literacy. We follow an explanatory mixed-methods approach: an online survey of 269 students and staff capturing self-reported AI use, attitudes and literacy, followed by 24 semi-structured online interviews exploring barriers to acceptance in higher education and research. Quantitative data reveal a gap between perceived and self-reported AI use: both students and staff overestimate how often others use AI. Males report higher use, more positive attitudes, and greater AI literacy than females. Higher socioeconomic status predicts more frequent use, and older age predicts lower AI literacy. Qualitative findings highlight concerns about the academic repercussions of AI use and threats to jobs. Participants also report a lack of guidance and a need for support to promote responsible use. Universities should increase engagement and provide unambiguous guidance to tackle misperceptions of how others use AI and to address the staff and student fears that impede AI acceptance and adoption.

Keywords

Background

The use of artificial intelligence (AI) in higher education and research has increased significantly in recent years (Alqahtani et al., 2023; Crompton & Burke, 2023; Laupichler et al., 2022; Zawacki-Richter et al., 2019), driven by tools like ChatGPT, which already impact various aspects of academia (Atlas, 2023; Neumann et al., 2023; Strzelecki, 2024). In this study, we use the term AcademAI to refer to the presence and application of AI within higher education institutions for educational and research purposes, as engaged with by both students and academic staff. From a teaching perspective, AI can assist in a range of tasks, including delivering course content, curating learning materials, conducting automated grading, and predicting learner performance and satisfaction (Ouyang et al., 2022; Zawacki-Richter et al., 2019). From a learner's perspective, AI can help to transform education through increased personalised learning and adaptive content creation (Alqahtani et al., 2023). A key application of AI tools in higher education involves generating and editing text (Atlas, 2023). AI can also assist researchers at multiple phases of research, including generating ideas, conducting literature reviews, analysing and interpreting data, and formatting and editing manuscripts (Alqahtani et al., 2023; Ivanov, 2023). From a university perspective, AI has the potential to enhance education and research. However, there are valid concerns about academic integrity, plagiarism, education quality, algorithmic biases, as well as fair and equitable access to emerging technologies (Ivanov, 2023; Neumann et al., 2023). At present, one of the most common concerns is student use of generative AI to produce written work that is submitted as their own (Ivanov, 2023). This has the potential to increase cheating and plagiarism, as well as to undermine the quality of academic submissions (Chan, 2023). 
A recent survey of US college students found that 60% had used ChatGPT for more than half of their assignments, 75% of whom felt that using ChatGPT to cheat on their assignments was wrong, but did it anyway (Intelligent, 2023). Similarly, concerns have been raised regarding the use of AI for research. AI can produce factually incorrect responses, or ‘hallucinations’, and an overreliance on AI could lead to a decline in academic innovation and critical thinking (Ivanov, 2023). Despite these concerns, AI can, if managed effectively by higher education institutions, be a transformative resource in the future of education and research.

Researchers have called on universities to embrace and proactively manage the introduction of AI (Lim et al., 2023). Higher education institutions have differed in their initial responses to generative AI, as popularised by ChatGPT. Whilst some responded by banning the use of AI for assignments and graded written work, others sought to incorporate AI into their assessment practices (Ivanov, 2023). For example, attempts to integrate AI tools into higher education may require students to critique AI-generated responses to user prompts on a given topic (University of British Columbia, 2023), or to ask AI tools to provide feedback on specific aspects of their writing (Duke University, 2023). In 2023, the Russell Group of universities in the UK published a guidance note on the use of generative AI in higher education, arguing that universities should support staff and students to become AI literate and promote equal access to these emerging technologies (Russell Group, 2023; Tobin, 2023). However, successful policies for implementing AI require an understanding of the factors underpinning use and adoption. In a review of over 500 articles on educational technology research, Hew et al. (2019) found that 40% of studies were devoid of any theoretical framework. Psychological theories provide insights into the factors that influence technology-related behaviours. Early research into AI adoption has used the Unified Theory of Acceptance and Use of Technology (UTAUT) (Venkatesh et al., 2012) to examine the application of AI tools in higher education and research (Kelly et al., 2023; Strzelecki, 2024). The framework aims to demonstrate how factors such as ‘Performance expectancy’, ‘Social influence’, ‘Effort expectancy’, and ‘Facilitating conditions’ influence the behavioural intention to use, and subsequent adoption of, different technologies (Venkatesh et al., 2003, 2012). 
The factor ‘Facilitating conditions’ is of central importance to the role that higher education institutions play in the acceptance of AI. In this context, facilitating conditions may refer to staff and student experiences of institutional policies and levels of support, as well as the impact that socioeconomic factors can have on university life.

The degree to which people feel they are sufficiently literate to engage with technology can be a key factor in acceptance and adoption, as has been shown for computer and digital literacy (Nikou et al., 2022; Yeşilyurt & Vezne, 2023). Research into AI literacy remains in its infancy, though it is believed to be a key ingredient in preparing people for increased future collaboration with AI in work and education (Laupichler et al., 2022; Ng, Su, et al., 2024). AI literacy is defined as, “a set of competencies that enables individuals to critically evaluate AI technologies, communicate and collaborate effectively with AI, and use AI as a tool online, at home, and in the workplace” (Long & Magerko, 2020, p. 2). Being AI literate does not require an in-depth understanding of the theory and mechanics of the underlying technology, but involves being able to competently navigate AI products for their intended use, and to evaluate the accuracy of the resulting output (Wang et al., 2023). This area of research must adapt quickly to changes in research and educational practice by adopting up-to-date measures. Many popular measures of attitudes towards, and acceptance of, technology were created prior to the popularisation of generative AI (Davis, 1989; Rosen et al., 2013; Venkatesh et al., 2012) and therefore may not be valid in this context. Using concise and innovative measures tailored to capture AI-related constructs will facilitate research evaluating perceptions of AI and help to inform future policies for education and research (Grassini, 2023). For example, Wang et al. (2023) recently devised a measure of AI literacy capturing awareness, use, evaluation, and ethical practices. Additionally, Grassini (2023) recently devised a concise measure of public attitudes towards AI. It is important to measure people's attitudes towards AI in order to determine individual differences in acceptance and use (Ng, Wu, et al., 2024). 
Given the recent emergence of AI technologies, there is still limited knowledge regarding the levels of usage, attitudes, and AI literacy among students and staff in higher education and research (Strzelecki, 2024).

The Joint Information Systems Committee (JISC) have raised concerns about digital inequality regarding the implementation of AI tools in higher education, in particular with respect to the potential for current and future costs of AI technologies to act as a barrier to access (JISC, 2023). The digital divide refers to disparities in access and usage associated with digital technologies, and is impacted by sociodemographic factors like income, education and access to support (Lythreatis et al., 2022). Early research suggests that the digital divide plays a critical role in influencing AI literacy, as people with greater access to digital technologies are typically more AI literate (Celik, 2023). AI literacy can provide a competitive advantage in education and research by increasing efficiency and output (Neumann et al., 2023). Therefore, individuals who have limited access to digital technologies may be at a disadvantage. In much the same way that access to digital technology can impact skills underpinning AI literacy, there may also be inequalities as a consequence of institutions’ approaches to AI. For instance, if an institution fails to adequately integrate AI and offer sufficient support, its students may fall behind in AI literacy, putting them at a disadvantage compared to those at institutions that fully embrace AI (Ivanov, 2023).

A number of individual characteristics have been consistently associated with differences in the acceptance and use of digital technologies, though research is needed to determine whether these characteristics are the same, or different, in the context of AI in higher education and research. For example, males are broadly more positive towards the adoption of new digital technologies (Cai et al., 2017) and there is a persistent negative association between age and acceptance of various digital technologies (Hauk et al., 2018). From the emerging research on the use of AI, males report greater ChatGPT use than females, and attitudes towards AI are most positive amongst young people and individuals of higher socio-economic status (Méndez-Suárez et al., 2023). A recent meta-analysis of studies of children and adult learners showed a large, positive effect size for the impact of AI tools on learning achievement, suggesting that those who adopt AI tools are likely to have an educational advantage over those who do not (Zheng et al., 2023). One of the biggest concerns about the adoption of AI in an academic context is the potential for it to widen existing, or create new, gaps in educational attainment (Taylor, 2024). Therefore, research is needed to capture individual differences in attitudes towards AI in the emerging context of AI in higher education and research. This will help to better understand the digital divide in a university context. To create equal opportunities in education and research, access and use of AI tools must be fairly supported by the policies of higher education institutions.

The aim of this study is to investigate student and staff perceptions of AI through a theoretical framework of technology acceptance. The findings will help inform universities of the drivers of AI attitudes and behaviours, including key barriers and facilitators to AI adoption in higher education and research. Capturing individual differences in usage, attitudes, and AI literacy will provide insight to guide interventions designed to address rising digital inequalities (Lutz, 2019) and inform inclusive university policies aimed at encouraging the fair adoption and use of technology so that no individual is left behind in the age of AI.

The primary research questions are as follows:

1) How, and to what extent, is AI commonly used by students and academic staff in higher education and research?
2) Do individual characteristics predict usage, attitudes, and AI literacy in higher education and research?
3) What are the perceived barriers and facilitators to AI adoption in higher education and research?

Method

This study was approved by the Department of Psychology Ethics Committee at Northumbria University (ref. 6661). The study adopted an explanatory mixed-methods approach, preregistered with the Open Science Framework (OSF) [osf.io/dgwna/]. First, we conducted an online survey of student and academic staff perspectives on AI at Northumbria University, Newcastle, United Kingdom. From this sample, we recruited participants with varying attitudes towards AI, as determined by their scores on an AI attitude scale, to ensure a diverse range of perspectives. We then conducted individual semi-structured online interviews to investigate perceived barriers and facilitators to the acceptance and use of AI tools in higher education and research.

Quantitative Survey

We first conducted a quantitative, correlational online survey study using a Qualtrics questionnaire. Pilot testing was conducted on an opportunity sample of 20 participants from the Psychology Department at Northumbria University, to assist in developing the survey.

Sample and Recruitment

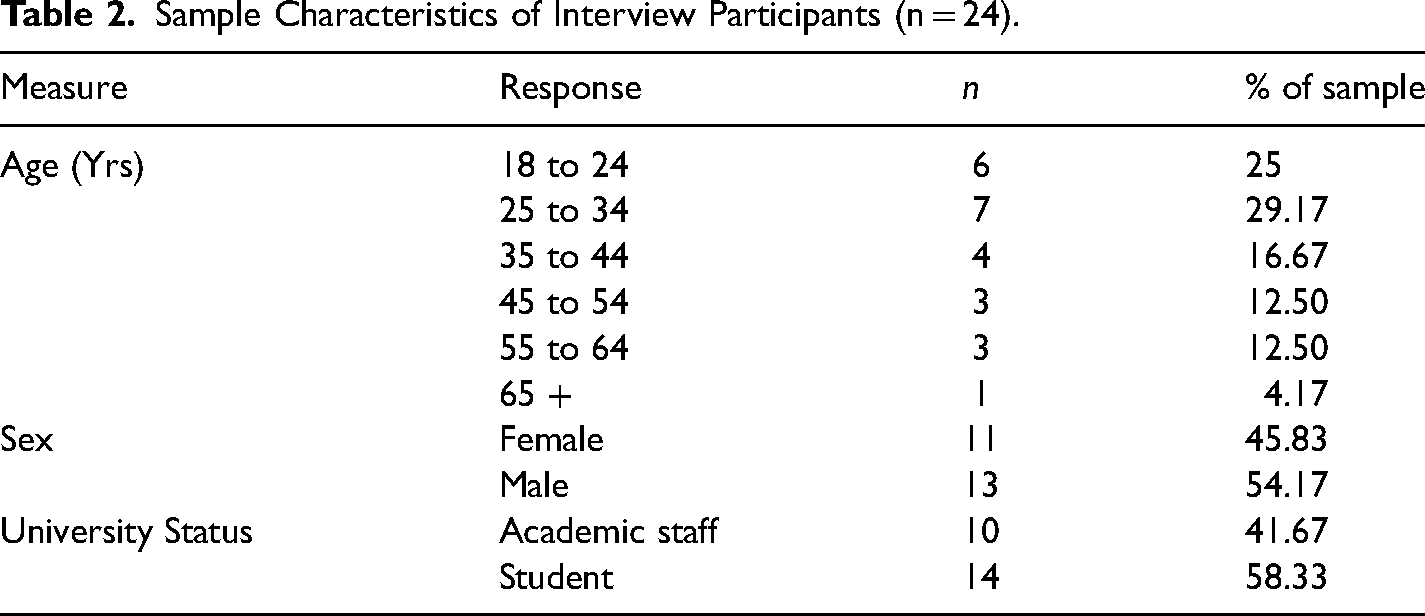

An opportunity sample of students and academic staff from Northumbria University was recruited via email and study posters located on campus. To ensure participant responses were representative of multiple academic disciplines, we advertised the study through programme leads and departmental representatives from each of the University's 17 academic departments. Participants were offered the chance to enter a prize draw for online shopping vouchers as thanks for their contribution to the study. Participants were university students or academic staff aged between 18 and 66 years (Mean age = 30.77 years, SD = 11.65 years). Of the 269 participants, 157 were female, 106 were male and six chose not to report their sex. For full details of the sample characteristics for the quantitative survey, see Table 1.

Table 1. Sample Characteristics of Survey Participants (n = 269).

Materials

The full survey questionnaire is registered with the OSF [osf.io/dgwna/] and included the measures described below. To encourage a consistent interpretation of AI throughout the survey responses, participants were told that, “we are referring to publicly available generative AI tools that typically respond to user prompts to produce a desired output, including generating text, image, audio and video. Popular examples of AI include text-based tools like OpenAI's ChatGPT, Google Bard, and Microsoft Copilot; and image-based tools such as Microsoft Designer and OpenAI's DALL-E.” Participants were specifically asked to answer questions about AI in the context of educational and/or research activities.

Personal Information

The survey asked participants for their age, sex, educational or professional status at the University, academic department in which they were enrolled or employed and their score for the MacArthur Scale of Subjective Socioeconomic Status (Adler et al., 2000).

Use of AI

Participants reported how frequently they use AI for education or research, and how frequently they believe the typical student and academic staff member at their university uses AI. Responses were provided on a seven-point Likert scale from “Never” to “Multiple times daily”. Participants were also asked to indicate how they use AI by selecting multiple items from the options presented (e.g., “generating text”, “explaining ideas”, “data analysis”); and the purpose(s) for which they use AI (e.g., “completing graded assignments”, “preparing teaching materials”, and “conducting research activities”).

Attitudes Towards AI

Using the validated four-item AI Attitude Scale (AIAS-4) (Grassini, 2023), participants were asked to indicate the extent to which they agree with four statements regarding their beliefs about whether AI will improve their life and work in the future, their intended future use of AI, and the benefits of AI for humanity. Responses were given on a seven-point Likert scale from “Strongly disagree” to “Strongly agree”.

AI Literacy

Using the validated 12-item AI Literacy Scale (AILS) (Wang et al., 2023), participants indicated the extent to which they agree with 12 statements regarding their knowledge, ease of use, ability, and ethical use of AI. Responses were given on a seven-point Likert scale from “Strongly disagree” to “Strongly agree”.

Quantitative Survey Analysis

All statistical analyses were performed using R (R Core Team, 2021). The following packages were used for data processing, analysis and visualisation: apaTables (Hope, 2022; Revelle, 2021; Stanley, 2021; Wickham, 2016; Wickham et al., 2020). Our full R script is available on the OSF (osf.io/dgwna). Ordinal logistic regression was used to investigate individual characteristics that predict frequency of AI use (measured as an ordinal variable). Multiple linear regression was used to identify individual characteristics that predict attitudes towards AI (using summed scores for the AIAS-4; Grassini, 2023) and AI literacy (using summed scores for the AILS; Wang et al., 2023). Cronbach's alpha showed high internal consistency for both scales: AIAS-4, α = .90; AILS, α = .85 (Taber, 2018).
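To make the scale scoring and reliability check concrete, the sketch below computes Cronbach's alpha from raw item responses, α = k/(k − 1) × (1 − Σ item variances / variance of total scores), together with per-respondent scale scores. It is a Python illustration only (the analysis itself was run in R), and the response matrix is invented. The scale values reported later (for instance, an interview-sample minimum of 1.25 on the four-item AIAS-4) are consistent with the summed score divided by the number of items, so the sketch returns both forms; dividing an outcome by a constant rescales regression coefficients but leaves their test statistics unchanged.

```python
from statistics import mean, variance


def cronbach_alpha(responses):
    """Cronbach's alpha for a list of respondents, each a list of k item scores.

    Sample variances (n - 1 denominator) are used throughout; the choice
    cancels in the ratio as long as it is applied consistently.
    """
    k = len(responses[0])
    if k < 2 or any(len(r) != k for r in responses):
        raise ValueError("need at least two items and the same number per respondent")
    items = list(zip(*responses))  # transpose: one tuple of scores per item
    item_variance_sum = sum(variance(item) for item in items)
    total_variance = variance([sum(r) for r in responses])
    return k / (k - 1) * (1 - item_variance_sum / total_variance)


def scale_scores(responses):
    """Summed score and mean-item score (summed score / number of items) per respondent."""
    return [(sum(r), mean(r)) for r in responses]


# Hypothetical 1-7 responses from five respondents to a four-item scale.
demo = [
    [2, 3, 2, 1],
    [5, 5, 6, 5],
    [7, 6, 7, 7],
    [4, 4, 3, 4],
    [6, 5, 6, 6],
]
print(round(cronbach_alpha(demo), 3))
print(scale_scores(demo))
```

When every item moves in lockstep across respondents, the item variances sum to a quarter of the total-score variance for four items and alpha reaches 1; real scales such as the AIAS-4 (α = .90) fall below that ceiling.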

Qualitative Interviews

Following the survey, we conducted semi-structured qualitative interviews to further capture attitudes towards AI and to investigate perceived barriers and facilitators to the acceptance and use of AI tools in higher education and research.

Sample and Recruitment

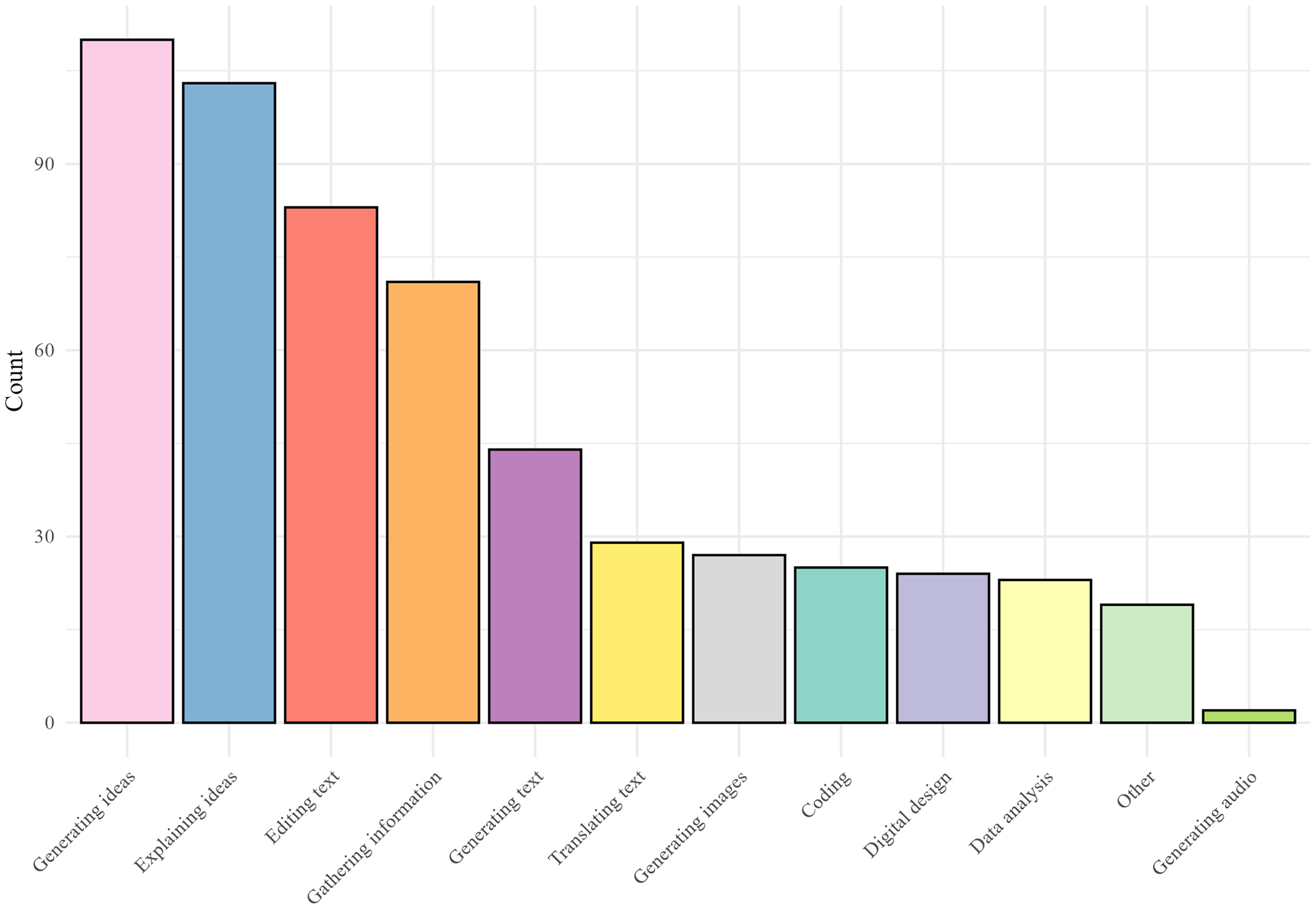

Interviewees were recruited from the online sample of survey participants. During the survey, participants were invited to take part in an interview to discuss the use of AI in higher education and research. Willing participants provided their email addresses to be contacted for scheduling an interview. Guidance for determining sample sizes for qualitative research suggests that multiples of 10–12 participants per group can be optimally informative and that samples approaching 30 participants can become unwieldy to administer and analyse (Boddy, 2016). Therefore, we recruited 24 interview participants: 14 students and 10 members of academic staff. Participants were selected by examining their AIAS-4 scores, iteratively returning to these scores throughout participant selection to ensure a broad range of attitudes towards AI. Attitude scores (AIAS-4) among interview participants ranged from 1.25 to 7 (Mean = 4.96 out of 7, SD = 1.46). Table 2 provides a full description of the sample characteristics.

Table 2. Sample Characteristics of Interview Participants (n = 24).

Qualitative Interview Protocol

Semi-structured interviews were conducted online via Microsoft Teams to avoid participants needing to download or install additional software. Interviews lasted between 19 and 50 min (average 37 min). An interview schedule (available on the OSF [osf.io/dgwna/]) guided each interview and included the following sections: 1) introduction, 2) use of AI, 3) attitudes towards AI, 4) perceived barriers to effective use, 5) perceived facilitators to effective use, 6) the role of the university in managing the use of AI, and 7) rounding up. Participants were encouraged to freely discuss their opinions about AI and the role of the university in inhibiting or facilitating the use of AI tools. Interviews were digitally recorded and transcribed verbatim. All identifying information was pseudonymised, and participants are referred to by participant number.

Qualitative Interview Analysis

Reflexive Thematic Analysis (Braun & Clarke, 2019) was used to analyse the qualitative interview data. In our study, one researcher examined and analysed transcripts using NVivo 12 to create an initial thematic structure. The analysis and coding were guided by the three research questions, indicating a partly deductive approach. However, the iterative and reflective nature of theme development also incorporated inductive reasoning as new themes were drawn from the data. Themes were revisited and reviewed between phases by all members of the research team, until a consensus was reached, and the final thematic framework was produced collaboratively. We chose to analyse student and staff perceptions together, rather than as two distinct groups, as this acknowledges the combined influence of student and staff perspectives, reflecting the interconnected nature of these roles and their collective impact on AI integration in academic environments. In doing so, our results provide a holistic understanding of attitudes towards AI in educational and research contexts.

Results

Quantitative Survey

Participants were aged 18–66 years, with a mean age of 30.77 years (SD = 11.65 years, n = 269 total). The mean student age was 24.58 years (SD = 6.48 years, range = 18–53 years) and the mean staff age was 42.28 years (SD = 10.35 years, range = 24–66 years). Of the 269 participants, 157 were female (100 students, 57 staff), 106 were male (72 students, 34 staff) and six chose not to report their sex (three students, three staff). The mean score for subjective socioeconomic status (SSES) was 6.00 overall (SD = 1.52, range = 2–10), 5.83 for students (SD = 1.53, range = 2–10) and 6.32 for staff (SD = 1.45, range = 3–9). A summary of the overall survey sample demographics and characteristics is provided in Table 1.

Use of AI

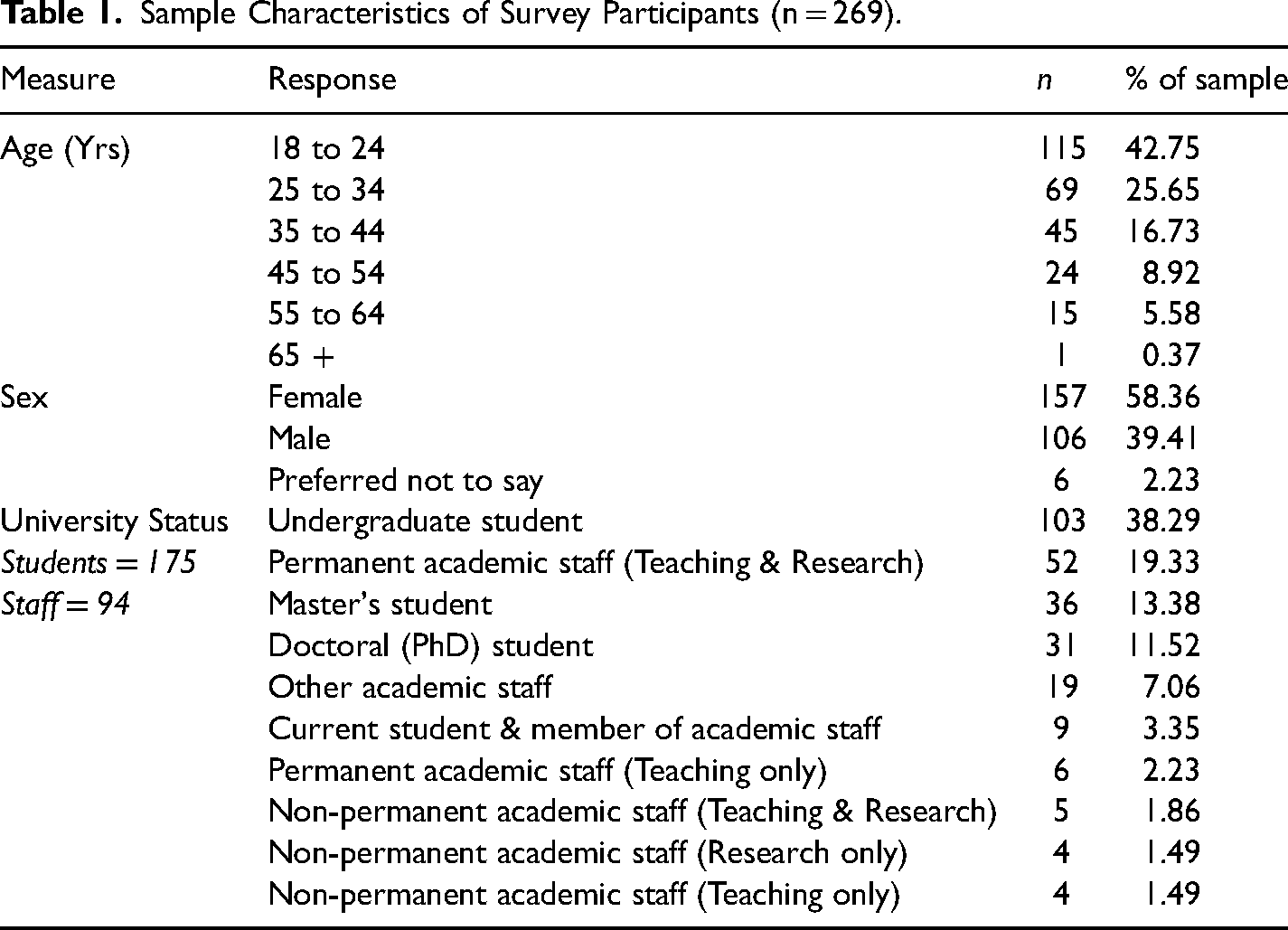

The most commonly described uses of AI were the same for students and staff: generating ideas (41% of the sample), explaining ideas (38%) and editing text (31%; see Figure 1).

Figure 1. Bar plot showing self-reported use of AI tools by students and academic staff (n = 269).

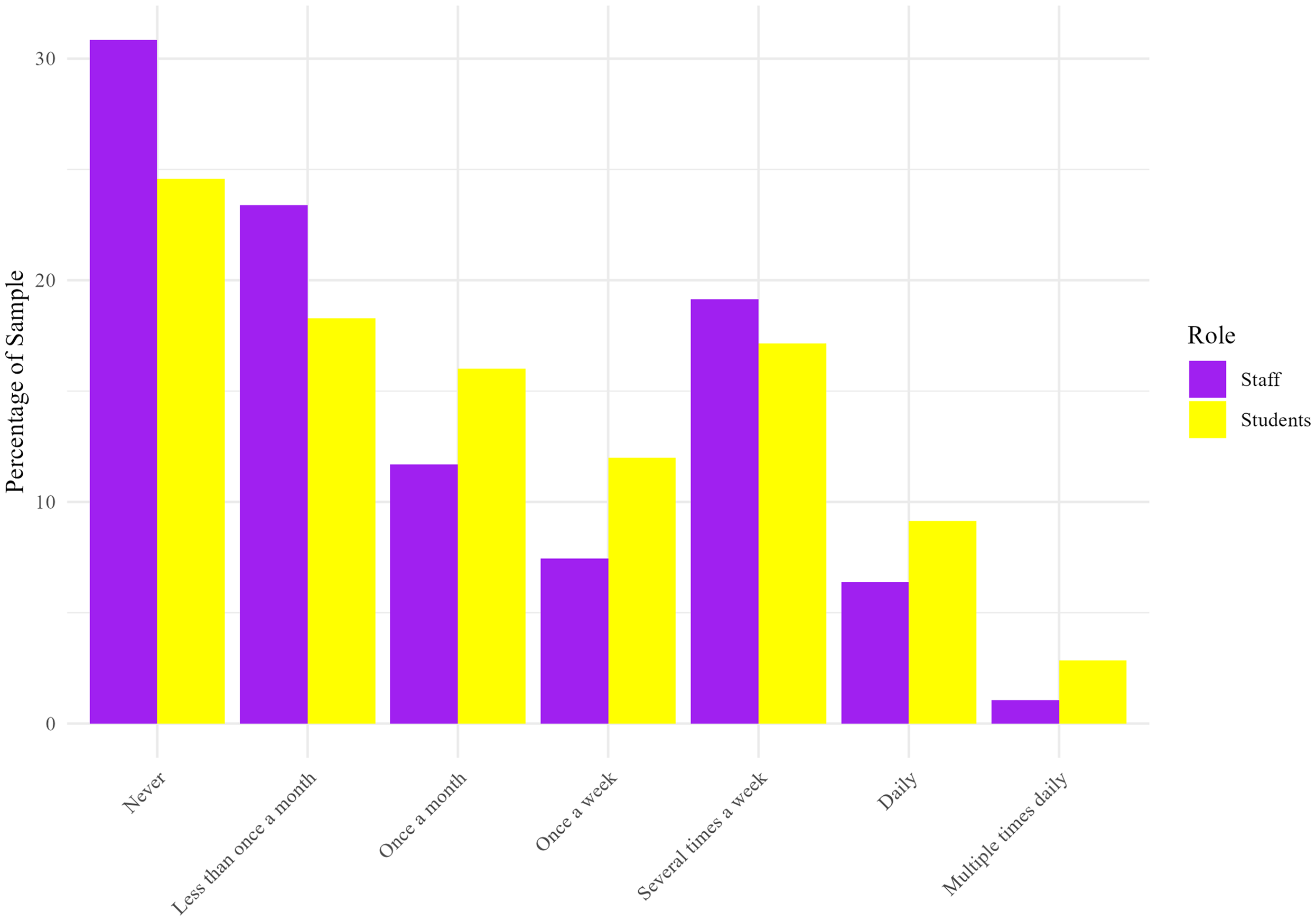

Interestingly, 25% of students and 31% of academic staff reported having never used a generative AI tool, whereas 29% of students and 27% of staff reported using AI tools several times a week, daily or even multiple times a day (see Figure 2). A Mann-Whitney U-Test indicated that there was no statistically significant difference in the self-reported frequency of AI use between students and academic staff (W = 9108.5, p = 0.139).
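The W values reported here follow the convention of R's `wilcox.test`, where W is the rank sum of the first sample minus n1(n1 + 1)/2 (equivalently, the Mann-Whitney U for that sample). Because seven-point frequency responses contain many ties, the sketch below uses midranks and the tie-corrected normal approximation with a continuity correction. It is a Python illustration under those assumptions, not the authors' script, and the integer response codes are hypothetical (the text names only the scale endpoints, "Never" and "Multiple times daily").

```python
from collections import Counter
from math import sqrt
from statistics import NormalDist


def midranks(values):
    """Map each distinct value to its average rank; tied values share a midrank."""
    ranks, position = {}, 1
    for value, count in sorted(Counter(values).items()):
        ranks[value] = position + (count - 1) / 2  # mean of ranks position..position+count-1
        position += count
    return ranks


def mann_whitney_w(x, y):
    """Return (W, two-sided p) for samples x and y.

    W is the rank sum of x minus n1(n1 + 1)/2. The p-value uses the normal
    approximation with tie and continuity corrections.
    """
    n1, n2 = len(x), len(y)
    pooled = list(x) + list(y)
    ranks = midranks(pooled)
    w = sum(ranks[v] for v in x) - n1 * (n1 + 1) / 2
    n = n1 + n2
    tie_term = sum(t ** 3 - t for t in Counter(pooled).values())
    sigma = sqrt(n1 * n2 / 12 * ((n + 1) - tie_term / (n * (n - 1))))
    if sigma == 0:
        raise ValueError("all observations are tied; the test is undefined")
    diff = w - n1 * n2 / 2
    correction = 0.5 if diff > 0 else -0.5 if diff < 0 else 0.0
    z = (diff - correction) / sigma
    p = 2 * NormalDist().cdf(-abs(z))
    return w, min(p, 1.0)


# Hypothetical frequency-of-use codes (1 = "Never" ... 7 = "Multiple times daily").
students = [1, 1, 2, 4, 5, 6, 7, 3, 2, 5]
staff = [1, 1, 1, 2, 3, 4, 5, 2]
print(mann_whitney_w(students, staff))
```

W depends on which group is passed first (swapping the groups gives n1 × n2 − W), so a reported W is only interpretable alongside the argument order. With both survey groups larger than 50 (175 students and 94 staff), R's `wilcox.test` also defaults to this normal approximation rather than an exact p-value.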

Figure 2. Bar plot showing frequency of AI use by students and academic staff (n = 269).

Academic staff significantly overestimated the frequency of AI use by students compared with students’ own reports (W = 5265, p < 0.001), and also overestimated the use of AI by fellow academic staff (W = 4465, p < 0.001). Similarly, students significantly overestimated the frequency of AI use by staff compared with staff self-reports (W = 6316, p = 0.0014), and also overestimated AI use by other students compared with student self-reports (W = 15400, p < 0.001). These results suggest a disparity between perceptions of the extent to which others (in both staff and student groups) use AI and self-reported use of AI tools.

Ordinal logistic regression was conducted to examine the relationship between the frequency of AI use and various demographic predictors: age, sex, SSES, and role (staff or student). Sex predicted frequency of use, with males more likely to report higher levels of AI use than females (β = 0.748, SE = 0.228, t = 3.286). Higher SSES also predicted greater frequency of AI use (β = 0.286, SE = 0.113, t = 2.531). Age (β = 0.061, SE = 0.158, t = 0.389) and university status (staff vs student; β = 0.473, SE = 0.333, t = 1.418) did not predict self-reported frequency of AI use (for full regression results, see supplement Table S1).
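The coefficients above are on the log-odds scale, so exponentiating them gives odds ratios: exp(0.748) ≈ 2.11 for sex and exp(0.286) ≈ 1.33 per unit of SSES as entered in the model. The sketch below illustrates what a proportional-odds (cumulative logit) model implies, assuming the parameterisation used by R's `MASS::polr` (whose summary reports t values, as above); the text does not name the fitting function, and the six threshold values are hypothetical because fitted cut-points are not reported.

```python
from math import exp


def logistic(t):
    return 1 / (1 + exp(-t))


def category_probs(thresholds, eta):
    """Category probabilities under a proportional-odds (cumulative logit) model.

    Assumes the MASS::polr parameterisation: P(Y <= j) = logistic(zeta_j - eta),
    where eta is the linear predictor (sum of beta * x) and the thresholds
    zeta_1 < ... < zeta_{K-1} separate K ordered categories.
    """
    if any(a >= b for a, b in zip(thresholds, thresholds[1:])):
        raise ValueError("thresholds must be strictly increasing")
    cumulative = [logistic(z - eta) for z in thresholds] + [1.0]
    probs, previous = [], 0.0
    for c in cumulative:
        probs.append(c - previous)  # P(Y = j) = P(Y <= j) - P(Y <= j - 1)
        previous = c
    return probs


# Odds ratios implied by the reported coefficients.
print(round(exp(0.748), 2))  # male vs female: prints 2.11
print(round(exp(0.286), 2))  # one unit of SSES as entered: prints 1.33

# HYPOTHETICAL cut-points for a seven-point frequency scale (six thresholds).
zeta = [-1.0, -0.3, 0.4, 1.0, 1.7, 2.5]
female = category_probs(zeta, eta=0.0)
male = category_probs(zeta, eta=0.748)  # other predictors held fixed
print([round(q, 3) for q in female])
print([round(q, 3) for q in male])
```

Under this model the odds of reporting use above any given frequency category are multiplied by the same factor (about 2.11 for males relative to females, other predictors held fixed) at every cut-point; that constancy is the proportional-odds assumption.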

Attitudes Towards AI

The mean score for the AIAS-4 was 4.66 (SD = 1.39, range = 1–7) indicating that the sample were broadly more positive than negative about AI. In a linear regression model, significant predictors of attitudes towards AI (AIAS) included sex (β = 0.494, p = 0.004) and SSES (β = 0.169, p = 0.046). Males tended to have higher AIAS scores compared to females, and higher SSES was associated with more positive attitudes towards AI (for full regression results, see supplement Table S2).

AI Literacy

The mean score for self-reported AI literacy was 4.81 (SD = 0.92, range = 2.08–7), suggesting that the sample broadly perceived themselves to be reasonably AI literate. In a linear regression model, significant predictors of AI literacy included age (β = -0.240, p = 0.003), and sex (β = 0.501, p < 0.001). These results indicate a negative relationship between age and self-perceived AI literacy, and that males have greater self-perceived AI literacy than females (for full regression results, see supplement Table S3).
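For reference, each linear model can be written as below, fitted separately with the AIAS-4 or the AILS score as the outcome Y. This is a sketch assuming the same four predictors as the ordinal model (age, sex, SSES and role) entered as main effects; Male and Staff are hypothetical 0/1 indicator names, and the text does not state how participants who did not report their sex were handled or whether predictors were standardised.

```latex
Y_i = \beta_0 + \beta_1\,\mathrm{Age}_i + \beta_2\,\mathrm{Male}_i + \beta_3\,\mathrm{SSES}_i + \beta_4\,\mathrm{Staff}_i + \varepsilon_i,
\qquad \varepsilon_i \sim \mathcal{N}(0, \sigma^2)
```

Under this reading, β2 = 0.494 (attitudes) and β2 = 0.501 (literacy) are the expected male-female differences in the analysed outcome at fixed age, SSES and role, and β1 = -0.240 for literacy is the change per unit of age as entered in the model.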

Qualitative Interviews

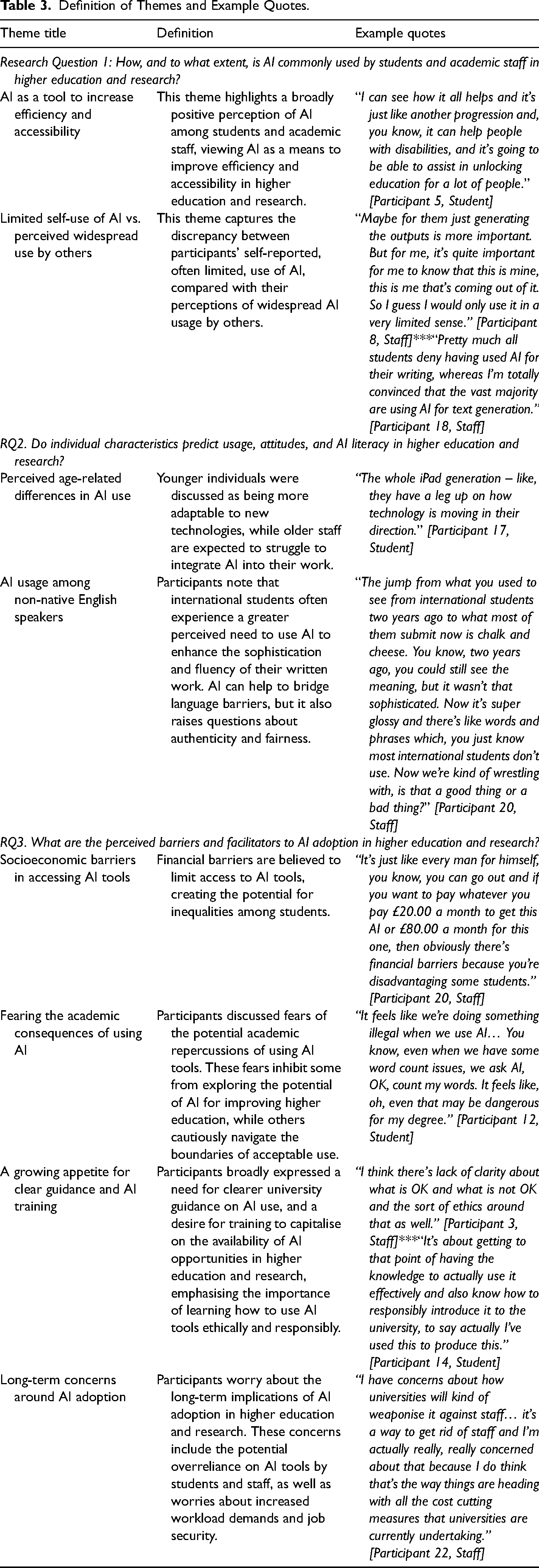

We report the qualitative findings from our mixed-methods approach by presenting the themes that were highlighted by our analysis as best expressing the overall narrative of the interview transcripts. These themes are divided by research question to clearly present how the findings of this research address the directives of the study. Table 2 provides the sample characteristics for our interview participants. Table 3 provides an overview of each theme, accompanied by a representative example quotation from the transcripts. Each theme is then presented, defined and explored in further detail below.

Table 3. Definition of Themes and Example Quotes.

Research Question 1

Participants discussed AI tools, such as ChatGPT, as offering the potential to increase efficiency and accessibility, particularly for writing and idea generation. However, there was a marked contrast between the cautious, ethical self-reported use by individuals and the belief that AI is being used more extensively and less responsibly by others in the academic community.

AI as a Tool to Increase Efficiency and Accessibility

Participants broadly discussed exploring the use of generative AI tools as a positive means to save time and increase efficiency. Though four participants discussed the use of AI for assisting with coding and data analysis, the focus of all interviews was on the use of AI (primarily ChatGPT) for writing tasks and generating ideas. When discussing the potential for AI to assist with written work, 11 of the 24 participants discussed how AI could be used as a general assistive tool that could improve efficiency in existing tasks. Participants often saw this as a ‘next step’ in the development of technology, akin to previous advancements in assistive tools.

“I’m kind of broadly optimistic about it. I’m not scared of it. I think great, here we go, you know - this is something else which is going to make us be able to do things better basically.” [Participant 20, Staff]

“It is an incredibly useful tool if it's used correctly, appropriately, and that you should be encouraged because it can help us to do tasks more effectively, more clearly, and it can work as a tool in the same way as we have used, I guess a phone or similar.” [Participant 7, Staff]

“I can see how it all helps and it's just like another progression and, you know, it can help people with disabilities, and it's going to be able to assist in unlocking education for a lot of people.” [Participant 5, Student]

Limited Self-use of AI vs. Perceived Widespread use by Others

There was a clear division between participants’ self-reported use of AI tools and their perceptions of the use of AI by others. Participants typically described their own use of AI tools as cautious, limited, and ethically responsible. In contrast, most participants (19/24) speculated that others are using AI to a far greater extent than they are themselves. Participants also often perceived the use of AI by others as more cavalier and ethically dubious. This was true for descriptions of both student and staff use of AI.

All 14 students in our interview sample suggested they had adopted AI in a measured way, using it in a limited scope.

“I’ve used it a wee bit for paraphrasing, so if I can’t quite get like a sentence to kind of be in an academic writing kind of style, I want to enhance like the words used that I just put it in there and then bring out some words that is giving me and then kind of do a blend of that paraphrasing to all my own work.” [Participant 15, Student]

“The only times I’ve found myself really using AI has been to act as like a supplementary tool to my understanding. Sometimes I struggle to engage with textbooks. I find them quite inaccessible, as someone that has attention span issues. So having something that can generate like a quick summary is quite cool. It's quite helpful.” [Participant 17, Student]

“I’ll use them occasionally to be like. If I have no idea where to start, I’ll say OK like. I don’t know. Ask. OK, what are some topics I can explore under this? It will give me something like that I could then look into… just as a jumping off point. I don’t really use it very often. But it has helped a lot in the past, yeah.” [Participant 2, Student]

“Other students use it when they just can’t be bothered to do the work themselves. There are all kinds of people that do use AI, I don’t ask people about it a lot, so I’m not sure who exactly is paying for it or what models they’re using. It's like a lot of people, though.” [Participant 1, Student]

“I think the reality is certain, undergraduates will be using it an awful lot! I think it's getting to that question of actually is this acceptable?” [Participant 14, Student]

“Pretty much all students deny having used AI for their writing, whereas I’m totally convinced that the vast majority are using AI for text generation.” [Participant 18, Staff]

“Maybe for them just generating the outputs is more important. But for me, it's quite important for me to know that this is mine, this is me that's coming out of it. So I guess I would only use it in a very limited sense.” [Participant 8, Staff]

“I would not write my research papers manuscripts using AI because, yet again, I think I’ve got too strong ethics and opinions about my own work.” [Participant 7, Staff]

“I mean I’ve heard anecdotally of people in other disciplines doing it.” [Participant 13, Staff]

“I do think some researchers are gonna use it to write papers and to speed up work that way… I mean, we’ve all seen examples of AI papers and people have accidentally left their ChatGPT prompts in, like it's happening already.” [Participant 22, Staff]

Research Question 2

Key findings suggest that participants believe younger individuals adapt more easily to AI, and that older staff are likely to struggle, or be reluctant, to integrate AI into their work. Participants also highlighted that non-native English speakers’ reliance on AI to enhance their academic writing raises concerns about the integrity of university degrees.

Perceived Age-Related Differences in AI use

One third of participants (8/24) reported age as being a key characteristic that would likely influence the adoption, use, and ability to navigate AI tools for higher education and research. Younger individuals (both students and staff) were believed to be more able to adapt to new technologies, while older staff were expected to struggle to integrate AI into their work.

“I’m able to adapt to these technologies quite quickly. This probably goes, you know, even further for people who are younger than me, like that the whole iPad generation like they have a leg up on how technology is moving in their direction.” [Participant 17, Student]

“Age is gonna be a big one. I know a lot of staff members and like, PhD students that you know, older than myself, who still do all their referencing by hand and they’re just like, ‘this is what I’ve always done’… So if these people aren’t necessarily comfortable using a reference software or spreadsheet, they probably aren’t gonna be awfully comfortable with ChatGPT.” [Participant 19, Student]

“I’m on quite a female heavy course… I think males are more likely to use AI and they’re more about - I think risk taking behaviour. I know that females do use it, but I’ve had more discussions with females about – like, they don’t agree with it, and they think it's cheating. But guys tend to think it's fine.” [Participant 15, Student]

AI Usage Among Non-Native English Speakers

Several participants (7/24) highlighted that being a non-native speaker of English is likely to affect the use of AI in higher education. Participants suggested that international students may perceive a greater need to use AI to enhance the sophistication and fluency of their written work, given the difficulty of communicating complex academic ideas in a second language.

“The jump from what you used to see from international students two years ago to what most of them submit now is chalk and cheese. You know, two years ago, you could still see the meaning, but it wasn’t that sophisticated. Now it's super glossy and there's like words and phrases which, you just know most international students don’t use. Now we’re kind of wrestling with, is that a good thing or a bad thing?” [Participant 20, Staff]

“I think people who don’t have English as their main language have it more difficult to like, write essays in English, and they tend to use AI more … I know people who write something in their own words but give it to AI, you know, to give it a bit more pizzazz - because they feel that the English isn’t good enough for university standards.” [Participant 24, Student]

“If someone has specifically gone to another country to have a degree from England, there is this inherent assumption that we must have a working knowledge of English to have achieved that degree, so it becomes quite tricky.” [Participant 22, Staff]

“If you get a degree from a British university, does that not come with this expectation that you’ve got a good working knowledge of academic English. What does leaving university with that qualification mean anymore? What is it supposed to say about you?” [Participant 20, Staff]

Research Question 3

Participants discussed a range of barriers to the effective use of AI, primarily the potential for socioeconomic factors to limit equitable access to AI tools. Both students and staff suggested that universities' approach to academic misconduct may create a sense of fear among students, which could prevent them from benefiting from new technologies. They also reported a lack of clarity in university guidance on how AI should be used for education and research. This lack of clarity led to a desire for AI training to help staff and students capitalise on the opportunities offered by new technologies. Finally, broader concerns around the long-term implications of AI for higher education and research created a sense of unease among some participants, which could affect the willingness of university staff to integrate AI into their work. These barriers and facilitators are discussed in turn below.

Socioeconomic Barriers in Accessing AI Tools

Half of participants (12/24) reported potential financial barriers to fair access to AI tools and were concerned that this would disadvantage some staff and students.

“It's just like every man for himself, you know, you can go out and if you want to pay whatever you pay £20.00 a month to get this AI or £80.00 a month for this one, then obviously there's financial barriers because you’re disadvantaging some students.” [Participant 20, Staff]

“If one person is more financially able to, he could get more out of AI than the other student. So yeah, that's also another factor that's not fair.” [Participant 24, Student]

Fearing the Academic Consequences of Using AI

Two thirds of participants (16/24) discussed fears of potential academic repercussions from using AI tools for educational purposes. These fears were not raised in the context of research, but were reported to deter some participants from exploring the potential of AI to improve higher education, and to lead others to navigate the boundaries of acceptable use cautiously.

“It feels like we’re doing something illegal when we use AI… You know, even when we have some word count issues, we ask AI, OK, count my words. It feels like, oh, even that may be dangerous for my degree.” [Participant 12, Student]

“So I’m a quite wary of how much I use it or what I use it for. Some people are like, ‘oh, the uni can’t enforce that, you can’t get done for using AI!’, so they use it to the full. I’m a bit sceptical on whether to believe that person or not.” [Participant 15, Student]

“So it's my first year in the uni and I was very, you know, conscious about doing everything correct and by the book. So definitely my first few essays I was like, well, I’m just going to completely ignore AI rather than even potentially risking being in a grey area or not, just avoid it altogether.” [Participant 21, Student]

“There's of course the fear of getting penalised and you know the way our messages are put out, we really justify that fear! So that's not good because it holds students back.” [Participant 18, Staff]

A Growing Appetite for Clear Guidance and AI Training

Just under one third of participants (7/24) perceived a lack of clarity in university guidance on AI ethics and usage, highlighting uncertainty among both students and staff about when and how AI tools should be applied appropriately. This suggests a need for clearer, more consistent communication of practical and ethical guidelines for integrating AI into education and research.

“I think a lot of my friends and peers are kind of like similar - we don't know when to use it.” [Participant 15, Student]

“I think there's lack of clarity about what is OK and what is not OK and the sort of ethics around that as well.” [Participant 3, Staff]

“The people who are coming up with the guidance, what's OK and what's not OK, I think it's probably quite clear in their head, but I think disseminating that between however many staff there are in the university to make sure it's consistent, you know… I think the university probably think they know theoretically what the right thing to do is, but I don't think that's being communicated.” [Participant 20, Staff]

“It's about getting to that point of having the knowledge to actually use it effectively and also know how to responsibly introduce it to the university, to say actually I’ve used this to produce this.” [Participant 14, Student]

“I’m always a bit kind of nervous using new platforms. So I think the training element would be important for me.” [Participant 16, Staff]

“We have to teach the standard of what's the appropriate way to use these things to say this is the university way. If you get something from this university, they will know how to use AI and they will know how to do it ethically.” [Participant 4, Staff]

Long-Term Concerns Around AI Adoption

Participants raised concerns about the long-term implications of AI adoption in higher education and research. These concerns included potential overreliance on AI tools by students and staff, as well as worries about increased workload demands and job security.

One third of participants (8/24) discussed concerns about the harms of relying too heavily on AI.

“So, one of my concerns is that of an intellectual crisis if students don’t learn the skills anymore. Because of reliance on, say, software and so on.” [Participant 18, Staff]

“It has become like a sort of distraction when writing assignments, because I know like when I’m writing my assignment, I don’t want to depend on AI when writing like the assignment and stuff, but sometimes it becomes too easy like … I just think like, I could just give it to AI.” [Participant 24, Student]

Other participants raised concerns about job security and about how institutions might use AI to reduce staffing.

“I have concerns about how universities will kind of weaponise it against staff… It's a way to get rid of staff and I’m actually really, really concerned about that because I do think that's the way things are heading with all the cost cutting measures that universities are currently undertaking.” [Participant 22, Staff]

“The main concern I want to keep my job. So most of the CEOs and Chairmans and stuff are encouraging people to adopt this technology. But people have back in their mind, if I don’t adopt, if I don’t cooperate, I will lose my job.” [Participant 11, Student]

“My concern would be if now that AI is able to do all this, will it actually be a good cheaper substitute for actual employers like for the data analysis that I do?” [Participant 24, Student]

Discussion

The Use of AI by Students and Academic Staff

A wide array of AI uses was reported in both the quantitative and qualitative phases of our project. Survey participants most commonly cited the use of AI for generating ideas. Both quantitative and qualitative findings indicated prevalent use of AI for text generation and editing, as well as for coding and data analysis. These findings highlight increasing engagement with AI by students and university staff to support educational and research activities. Despite the breadth of reported uses, the quantitative data showed that the frequency of AI use varied notably between individuals, and interviewees likewise perceived that patterns of use differ from person to person. Notably, ‘never’ was the most commonly reported frequency of AI use among both students and academic staff. This suggests that, despite the significant rise in AI adoption in higher education and research, a considerable proportion of students and staff report not yet having engaged with AI. This points to the need to explore and understand the factors that influence differing levels of AI acceptance and adoption.

Significant disparities were evident between individuals’ perceptions of their own and others’ engagement with AI. Students and staff alike tended to overestimate AI usage by both groups, relative to self-reported levels. Qualitative findings further revealed a belief among both students and staff that students use AI for assessed work to a far greater extent than our self-report data suggest. It is possible that some students and staff underreported their own use of AI, in part due to widely discussed fears of punishment in an academic context, as well as potentially stigmatising views that associate AI use with laziness or cheating. Other studies in higher education have reported greater use of AI by students; for example, a recent survey of US college students found that 60% used ChatGPT for over half of their assignments (Intelligent, 2023). It is essential that higher education institutions accurately capture the extent to which students and staff are using AI tools, to ensure that future policies are not based on misconceptions. This suggests an urgent need for greater dialogue between students and staff to clarify how AI is being used and to establish acceptable practices moving forward.

Individual Differences in Usage, Attitudes, and AI Literacy

Our quantitative survey findings suggest that being male and having a higher SSES predict more frequent use of AI and more positive attitudes towards it. Additionally, being male and being younger were associated with greater self-perceived AI literacy. These findings align with previous literature, which has reported more frequent use of ChatGPT by males and more positive attitudes towards AI among those of higher socioeconomic status (Méndez-Suárez et al., 2023). A review of studies on AI adoption highlighted sex differences as a significant and consistent influence throughout the literature (Ismatullaev & Kim, 2024). Our quantitative findings support the important role that sex differences can play in AI adoption, though it is noteworthy that, when asked about individual differences likely to affect AI attitudes and adoption, only one of our 24 interviewees discussed sex and gender. A failure by higher education institutions to recognise and understand individual differences in AI adoption could limit equal opportunities in education and research. Overall, our findings highlight age, sex, socioeconomic status and being a non-native speaker of English as critical factors in the digital divide in AI use in higher education and research. Ensuring equitable opportunities, and the perceived freedom to engage with AI tools, is crucial to avoid exacerbating existing inequalities in educational opportunities (JISC, 2023).

Barriers and Facilitators to AI Adoption in Higher Education and Research

In addition to potential socioeconomic barriers to adoption, our qualitative findings highlighted specific fears among both staff and students that could deter individuals from making the most of the opportunities offered by AI. Firstly, students expressed concerns about potential academic repercussions from using AI tools. These fears explicitly deterred some from considering AI's potential to enhance their education. Additionally, many staff members voiced concerns about the long-term implications of AI adoption in higher education and research. These worries include fears that universities may expect staff to significantly increase their output, in both teaching and research, because of predicted efficiency gains from AI. There is also concern that the enhanced efficiency provided by AI could threaten job security in the future. Overall, these fears could foster distrust towards the introduction of AI by universities, potentially acting as an additional barrier to its adoption by some staff members. The UTAUT framework highlights the importance of ‘social influence’ and ‘facilitating conditions’ in shaping the behavioural intention to use, and the subsequent adoption of, different technologies (Venkatesh et al., 2012). This framework, combined with our findings, suggests that universities should engage more with the social narratives and discussions within the institution that are likely to foment fears and concerns about the consequences of AI adoption among both students and staff. Recent research has emphasised the pivotal role of the UTAUT dimension ‘social influence’ in shaping student intention to use ChatGPT, suggesting that responsible use of AI can be promoted by encouraging staff and fellow students who already use AI effectively to advocate for its use among more reluctant peers (Strzelecki & ElArabawy, 2024).

Additionally, universities should facilitate a greater understanding of the responsible use of AI in education and research through supported training activities. Researchers have called on universities to embrace and proactively manage the introduction of AI (Lim et al., 2023). During interviews, both students and staff reported a lack of clarity in university guidance on the practical and ethical use of AI for education and research. This is consistent with student concerns reported by JISC, which suggest that a lack of clear guidance from universities could lead to increased misuse of AI tools (JISC, 2024). Our qualitative findings showed that students and staff broadly expressed a strong desire for training to capitalise on AI opportunities in higher education and research, emphasising the importance of learning how to use AI tools ethically and responsibly. This reinforces recent findings highlighting students’ calls for higher education to be transformed to prepare them effectively for employment in an AI-powered society (Chiu, 2024).

Limitations

Although our quantitative and qualitative samples came from a broad range of academic disciplines, our limited sample size prevented us from statistically comparing student and staff perceptions across academic departments. Some research has suggested that Science, Technology, Engineering, and Mathematics (STEM) subjects and non-STEM subjects may differ in how best to facilitate the effective use of AI in higher education (Khadivi, 2023; Wu & Zhang, 2024). Future research should investigate departmental differences in usage, attitudes, and AI literacy within higher education institutions to help identify further institutional barriers to the acceptance of AI.

Another limitation of our study is the potential for self-report data to underrepresent the full extent to which AI is being used in higher education and research. Participants reported fearing the academic repercussions of being found to have used AI in their work and studies. Although the study emphasised the anonymity of all responses, the research team consisted of university staff. Therefore, some participants may have underreported their own use of AI due to concerns about the integrity of their own work. To reduce potential self-report bias, future studies of higher education institutions could incorporate objective measures of the extent to which AI is used in academia (e.g., by capturing the extent to which AI tools are accessed via the institution's own servers). Future studies could also consider using researchers who are external to the institution being studied, potentially reducing participants’ concerns that their responses could be traced back to their own AI use and affect their academic or professional standing.

Conclusion

The study found a broad range of AI uses among students and staff, including generating and understanding ideas, editing text, coding, and data analysis. Despite this, a notable proportion of participants reported never using AI, indicating substantial variation in reported adoption levels. Discrepancies between self-reported and perceived AI use suggest the need for better understanding and communication of AI practices across higher education and research. Individual factors such as sex, age and socioeconomic status were significantly associated with AI use, attitudes and literacy, emphasising the importance of addressing the digital divide. However, these individual drivers of AI behaviour are not always apparent to students and staff themselves, suggesting the need for further dialogue around individual differences in AI adoption. Both students and staff voiced concerns about the implications of AI, including academic repercussions, increased work demands and job security, and reported a general lack of clarity in university guidance on AI, highlighting further barriers to adoption.

AcademAI, and the growing presence of AI, is likely to continue to dominate discussions around higher education and research in the years to come. The current climate of fear and uncertainty among students and staff could be counterproductive to the equitable and effective use of AI, as well as to accurate data collection on current trends. To address these challenges, institutions should not only enhance training but also work towards changing the culture around AI. This includes fostering a more positive and informed approach to AI adoption, which could help mitigate the anxieties surrounding its use and encourage a more balanced and comprehensive understanding of its implications.

Supplemental Material

Supplemental material, sj-docx-1-ets-10.1177_00472395251347304 for AcademAI: Investigating AI Usage, Attitudes, and Literacy in Higher Education and Research by Richard Brown, Elizabeth Sillence and Dawn Branley-Bell in Journal of Educational Technology Systems

Footnotes

Acknowledgements

We would like to acknowledge the contribution and support offered by Northumbria University staff and students throughout the project.

Authors’ Contributions

RB designed the survey instrument, conducted data collection and analysis, and wrote the first draft of the manuscript. ES and DBB co-designed the survey instrument and interview schedule, contributed to qualitative data analysis and manuscript editing, and supervised the research project. All authors co-wrote and approved the final manuscript.

Data Availability

The quantitative datasets generated during the current study are available on the OSF [osf.io/dgwna/] upon publication of this manuscript. Qualitative transcripts are not publicly available to protect the identity of study participants.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Ethical Approval

This study was approved by the Department of Psychology Ethics Committee at Northumbria University (ref. 6661).

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by Northumbria University (Internal Funding Scheme 23/24).

Author Biographies

References
