Abstract

Learning to read is an essential skill for later academic success, positive self-esteem, and gainful employment. Students who display reading difficulties/disabilities at the end of third grade are less likely to succeed in content areas and graduate from high school. Recent data suggest that many students in today’s schools do not become skilled readers, and the reading gap widens during summer months due to skill regression. Regression of reading skills is greater for students from low socioeconomic status (SES) families and for students with disabilities. This study examined the effects of two computer-assisted reading programs on the reading skills of 21 students at-risk for reading failure during a summer break. All students were tested before and after 8 weeks of intervention. Furthermore, tutors’ and students’ perceptions regarding the effectiveness and desirability of the programs were measured. The computer programs, results, implications, and limitations of the study are discussed.

Introduction

Reading competency involves mastering the essential skills of word recognition and comprehension. Successfully mastering basic literacy skills in the earlier grades is fundamental for later academic success, positive self-esteem, and employment opportunities (Hammond, 2012; Mather et al., 2001; Slavin et al., 2009; Pindiprolu & Forbush, 2021). However, the National Assessment of Educational Progress (NAEP) data from the past decade suggest that a large percentage of students in today's schools do not become skilled readers. For example, only 37% of fourth-grade students and 36% of eighth-grade students performed at or above the “proficient” level in reading according to the 2017 NAEP data (National Center for Education Statistics, 2018). Lyon (2001) estimated that 70% of the students identified as at-risk for reading failure at the start of first grade would continue to experience reading difficulties in their adult lives. Thus, the prevention of reading failure in the earlier grades is fundamental to overcoming reading skill deficits and a subsequent decrease in motivation to read.

Students who struggle in reading in their early years tend to make fewer gains across grades than students who do not (Sandberg-Patton & Reschly, 2013). Parallel to these weaker gains in reading is skill loss during the summer breaks. According to Sandberg-Patton and Reschly's (2013) research, students in the lower elementary grades (i.e., second and third grades) demonstrated greater summer learning loss in oral reading fluency than students in the upper elementary grades. The researchers suggested that this discrepancy is because students in earlier elementary grades do not have the essential skills to read independently during the summer break. Furthermore, the study results indicated that the learning loss was greater for students receiving special education services and/or for students from lower socioeconomic backgrounds (Sandberg-Patton & Reschly, 2013). Hence, it is essential to support the development of basic literacy skills in the early grades because the lack of basic reading skills can create large skill deficits over the years. For example, the loss in reading skills when compounded over multiple years creates a 2–3 year reading deficit in children (Allington & McGill-Franzen, 2003).

Computer-assisted reading programs (CRPs) offer an alternative for supporting the literacy instructional needs of students at risk for reading failure during summer break. CRPs offer multiple advantages over print-based reading programs. First, they require minimal training. Second, they can provide highly specialized reading instruction with high fidelity and at relatively low cost (Torgesen et al., 2010). Third, the use of games, graphics, and multimodal presentations can increase the motivation of students who struggle to read (Regtvoort & van der Leij, 2007). Fourth, recent literature suggests that CRPs are effective in facilitating basic early literacy skills.

Literature on CRPs

In 2002, Blok et al. examined the literature to evaluate the effectiveness of computer-assisted programs in facilitating beginning reading instruction. Based on their analysis of the literature, the authors concluded that computer-assisted programs had a positive effect on early literacy skills. The overall effect size of the computer-assisted programs was small but positive (Blok et al., 2002). Given the poor-quality design of many of the studies, the authors called for a cautious interpretation of the findings. In 2011, Chambers et al. evaluated the effectiveness of a small-group Tier II computer-assisted intervention on the reading skills of struggling readers. The study was conducted in 33 high-poverty Success for All (SFA) schools over a 1-year period. The schools were randomly assigned to experimental and control conditions. The intervention, Team Alphie, consisted of computer-assisted intervention, embedded multimedia, cooperative learning, and tutoring. The intervention was implemented four times a week. The control group students received individualized tutoring conducted in SFA schools. The results of the study indicated that the experimental group students in the first grade had higher reading achievement than students in the control group. The reading achievement for second graders was the same for students in the experimental and control groups (Chambers et al., 2011).

In 2008, Macaruso and Walker examined the effects of a phonics-based computer-assisted program, Early Reading, on the preliteracy skills of kindergartners. Intervention sessions lasted for 15–20 min, and 2–3 sessions were conducted weekly over a 6-month period. Both the intervention and control group students also received daily reading instruction. The results indicated that the students in the computer-assisted program group significantly outperformed students in the control group on the phonological awareness skill measure. The authors concluded that treatment group students, especially the low-performing students, greatly benefited from the Early Reading program when implemented as a supplemental program to the daily reading instruction (Macaruso & Walker, 2008). In 2011, Macaruso and Rodman conducted two studies to evaluate the effectiveness of computer-assisted instruction (CAI). In study 1, they examined the effectiveness of the Early Reading program with pre-schoolers. In study 2, the authors examined the effectiveness of Early Reading and Primary Reading with kindergartners. In study 1, the experimental group (CAI) students performed significantly better on the phonological awareness measure, whereas the control group made greater gains on naming uppercase letters. In the second study with kindergartners, the results indicated that the students in the experimental group (CAI) made significantly greater gains on the Total Test scores and on the Word Reading subtest. Based on the findings, the authors concluded that the CRPs provided intensive practice and benefits for pre-schoolers and low-performing kindergartners (Macaruso & Rodman, 2011).

Watson and Hempenstall (2008) examined the effects of the Funnix CRP on the reading skills of kindergarten (KG) and first-grade students. The results indicated that KG students in the Funnix group had significant gains on phonemic awareness, letter-sound fluency, oral reading fluency, and non-word decoding measures. For first-grade students, participants in the Funnix group achieved significant pre–post gains on letter-name knowledge, letter-sound fluency, oral reading fluency, and non-word decoding measures. The researchers concluded that KG students made the most gains and recommended that future studies examine the effectiveness of Funnix with at-risk beginning readers to measure the extent to which they benefit from the program. Similarly, Huffstetter et al. (2010) examined the effects of the Headsprout program on the basic literacy skills of at-risk pre-school children. The program was implemented for 30 min every day for 8 weeks during the school year. Based on the results, the authors concluded that the Headsprout CRP was effective in facilitating early reading skills of at-risk pre-school children. Social validity data indicated that the teachers and teacher assistants were positive regarding program effectiveness, with one teacher reporting “feeling burned out” due to the daily implementation of the program. The authors called for additional studies, with a literacy program condition, to evaluate the effectiveness of the Headsprout program.

In 2011, Saine et al. conducted a study in Finland to examine the effectiveness of computer-assisted intervention on the letter knowledge, reading accuracy, fluency, and spelling of children at-risk for reading disabilities. The 7-year-olds were assigned to either a regular remedial reading intervention program, a computer-assisted reading program, or a mainstream reading program. The results indicated that the computer-assisted reading group made greater gains on the letter knowledge, decoding, accuracy, fluency, and spelling measures than the students in the regular remedial program or the mainstream reading program (Saine et al., 2011). Furthermore, the students in the computer-assisted program maintained their gains at 12- and 16-month maintenance checks after the intervention. The authors concluded that computer-assisted remedial reading intervention programs are highly beneficial for at-risk students.

In 2014, Regan et al. examined the effects of a computer-assisted instruction program, Lexia Strategies for Older Students (SOS)™ CRP, on the word recognition skills of four elementary students with mild disabilities. Almost all the study participants required additional direct instruction to master some of their target skills (Regan et al., 2014). Based on the results, the authors concluded that Lexia SOS CRP with additional direct instruction could facilitate word recognition skills of upper elementary-grade students. In 2018, Messer and Nash evaluated the effectiveness of a computer-assisted reading intervention, Trainertext, on at-risk students’ performance on six reading-related measures in six UK schools. Each tutorial session lasted 10–15 min and was implemented two to three times a week. Initially, the intervention was provided to the students in the experimental group, and the students in the control group received the intervention after post-test 1. The results of post-test 1 indicated that students in the experimental group had significantly higher standard scores than the students in the control group on decoding, phonological awareness, naming speed, phonological short-term memory, and executive working memory measures and maintained the gains at post-test 2 (Messer & Nash, 2018). The students in the control group showed improvements on most of the measures on post-test 2. Based on the results, the authors concluded that computer-assisted intervention had a significant effect on the reading-related abilities of English-speaking children and that longer intervention duration resulted in higher gains for the students. In 2018, Rogowsky et al. examined the effects of computer-assisted instruction on the reading and math skills of pre-school children. Children in the computer-assisted group engaged with the program for 10 min each day for 11 consecutive weeks. Results indicated that students in the computer-assisted group showed significantly greater improvement in literacy skill scores than students in the comparison group. Based on the results, the authors concluded that computer-assisted learning may enhance the literacy skills of pre-schoolers (Rogowsky et al., 2018).

Given the debilitating consequences of early reading failure, there is a need to study the effectiveness of educational technology in ameliorating the reading deficits of at-risk students in early grades (Agodini et al., 2003; Pindiprolu & Forbush, 2021). Furthermore, there is a significant dearth of rigorous evaluations of the effectiveness of CRPs (Rouse & Krueger, 2004) and of their effectiveness in reducing summer learning loss. It is in this context that the present study was undertaken. The purpose of the study was to evaluate the effectiveness of two CRPs, when implemented by university students as tutors during a summer break, on the reading skills of elementary-grade students with reading difficulties. Furthermore, the tutors’ perceptions regarding the effectiveness, ease of implementation, and desirability of the programs were assessed, along with the students’ perceptions of the effectiveness and desirability of the programs.

Method

Participants and Setting

Participants for the study consisted of students with reading difficulties in grades KG through second grade. To recruit students, the following steps were undertaken. First, focus group meetings were conducted with district supervisors of a school district located in Northern Utah. Participants reviewed the objectives of the study and offered feedback on the procedures, measures, and computer-based programs to be used in the study. Second

CRPs

Two CRPs, Funnix and Headsprout, were chosen for the study based on the following three criteria. First, they addressed the critical literacy skills of phonemic awareness, phonics, fluency, vocabulary, and reading comprehension. Second, they were designed for use with students in kindergarten to second grade. Third, they required similar lengths of time to complete a lesson/episode.

Dependent Measures

Results

Four tutors implemented the CRPs with 21 KG through second-grade students. Of the 21 students, 11 were assigned to the Headsprout (HS) group and 10 were assigned to the Funnix group. Six of the 11 students in the HS group were also classified as English as a Second Language (ESL) students, and three of the 10 students in the Funnix group were classified as ESL students. For the HS group, five students were in kindergarten, five were in first grade, and one was in second grade. Of the 10 students in the Funnix group, three were in kindergarten, four were in first grade, and three were in second grade. Approximately 55% of the students in the HS program and 70% of the students in the Funnix program completed 30 or more episodes/lessons. The average number of episodes completed by students in the HS group was 28, and the range was 20–34 episodes. The average number of lessons completed by the students in the Funnix group was 32, and the range was 6–34 lessons.

Interobserver Agreement

During the course of the study, interobserver agreement (IOA) on the DIBELS measures was assessed for at least 20% of the pre- and post-tests. IOA was calculated using the item-by-item agreement method. The average pre-test IOA was 99.3% on the LNF measure, 99% on the ORF measure, 97% on the ISF measure, 95.5% on the RF measure, 95.8% on the NWF measure, 91% on the WUF measure, and 89.3% on the PSF measure. The average post-test IOA was 100% on the ISF measure, 97% on the LNF measure, 90.4% on the PSF measure, 89.8% on the ORF measure, 85% on the NWF measure, 81.3% on the WUF measure, and 79.45% on the RF measure.
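Item-by-item agreement is conventionally computed as the number of items both observers scored identically, divided by the total number of items, multiplied by 100. The following is a minimal sketch under that assumption; the function name and the scores are hypothetical and are not taken from the study's data.

```python
def item_by_item_ioa(observer_a, observer_b):
    """Percent agreement: items scored identically / total items * 100."""
    if len(observer_a) != len(observer_b):
        raise ValueError("Both observers must score the same items.")
    if not observer_a:
        raise ValueError("At least one item is required.")
    agreements = sum(a == b for a, b in zip(observer_a, observer_b))
    return 100.0 * agreements / len(observer_a)


# Hypothetical scoring of a 10-item probe (1 = correct, 0 = incorrect).
primary = [1, 1, 0, 1, 1, 1, 0, 1, 1, 1]
secondary = [1, 1, 0, 1, 0, 1, 0, 1, 1, 1]

ioa_percent = item_by_item_ioa(primary, secondary)
print(f"IOA = {ioa_percent:.1f}%")  # the observers disagree on one of ten items
```

Averaging this percentage across the double-scored probes for a given measure would yield a per-measure mean of the kind reported above.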

Treatment Fidelity

Tutors were observed a minimum of two times during their program's implementation. A treatment fidelity form with instructions was developed for each computer-assisted program. Project coordinators collected data using a treatment fidelity recording form. For Funnix, the observations on the implementation of the CRP included recording the number of opportunities available and tutors’ correct responses to those opportunities on five components: (a) pausing the program after the child made an error; (b) modeling a correct response; (c) prompting the student to repeat incorrect sounds and words; (d) doing entire exercises “over” if frequent errors occurred; and (e) praising correct student responses during the lesson and workbook activities. Approximately 265 feedback and/or error correction opportunities occurred during the six observation sessions for tutors implementing the Funnix program. The students correctly responded to 98% of those response opportunities and received positive feedback/praise. The average correct response rate for tutors was 100% for repeating an exercise, 86.6% for pausing the program and prompting the student to repeat incorrect sounds, and 66.6% for modeling correct responses. The average praise rate was two praises per minute. For Headsprout, the observations on the implementation of the CRP included the number of opportunities tutors had and the correct responses of the tutors on the following two components: (a) modeling correct responses when the student mispronounced a word while reading or responding to stimuli and (b) prompting the student to repeat incorrect sounds and words. The average correct response rate across tutoring sessions was 70% for modeling correct responses and 45% for prompting students to repeat incorrect sounds and the lesson. The average praise rate was 2.1 praises per minute.

CRP Effectiveness

To evaluate the effects of the programs on the basic early reading skills of the students, two types of analyses were conducted. First, a one-way analysis of covariance was used to compare the relative effectiveness of the two CRPs on the seven DIBELS measures. The one-way analysis of covariance was conducted with the computer-assisted program as the independent variable, post-tests as the dependent variable, and the pre-tests as the covariate. Second, paired-sample t-tests were undertaken to measure the pre–post gains on the DIBELS probes for each group. All null hypotheses were tested at the .05 significance level (two-tailed tests).
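To illustrate what the covariate adjustment does, the sketch below computes covariate-adjusted post-test means for a one-way ANCOVA with a single covariate, assuming the standard textbook formula: each group's post-test mean is shifted by the pooled within-group regression slope times the distance between that group's pre-test mean and the grand pre-test mean. The function name, group labels, and scores are hypothetical; this is not the authors' analysis code, and it omits the F test itself.

```python
from statistics import mean


def adjusted_means(groups):
    """groups maps a group label to (pre_scores, post_scores).

    Returns the pooled within-group slope and the covariate-adjusted
    post-test mean for each group.
    """
    all_pre = [x for pre, _ in groups.values() for x in pre]
    grand_pre_mean = mean(all_pre)

    sxy = 0.0  # pooled within-group sum of cross-products (pre x post)
    sxx = 0.0  # pooled within-group sum of squares of the pre-test
    for pre, post in groups.values():
        pre_mean, post_mean = mean(pre), mean(post)
        sxy += sum((x - pre_mean) * (y - post_mean) for x, y in zip(pre, post))
        sxx += sum((x - pre_mean) ** 2 for x in pre)
    slope = sxy / sxx

    adjusted = {
        label: mean(post) - slope * (mean(pre) - grand_pre_mean)
        for label, (pre, post) in groups.items()
    }
    return slope, adjusted


# Hypothetical pre/post scores for two programs (not the study's data).
example = {
    "program_a": ([1, 2, 3], [2, 4, 6]),
    "program_b": ([3, 4, 5], [5, 7, 9]),
}
slope, adj = adjusted_means(example)
print(slope, adj)
```

In this made-up example, program_b has the higher raw post-test mean (7 versus 4), but after adjusting for its higher pre-test scores, program_a has the higher adjusted mean. This is why adjusted means rather than raw post-test means are compared when groups differ at pre-test.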

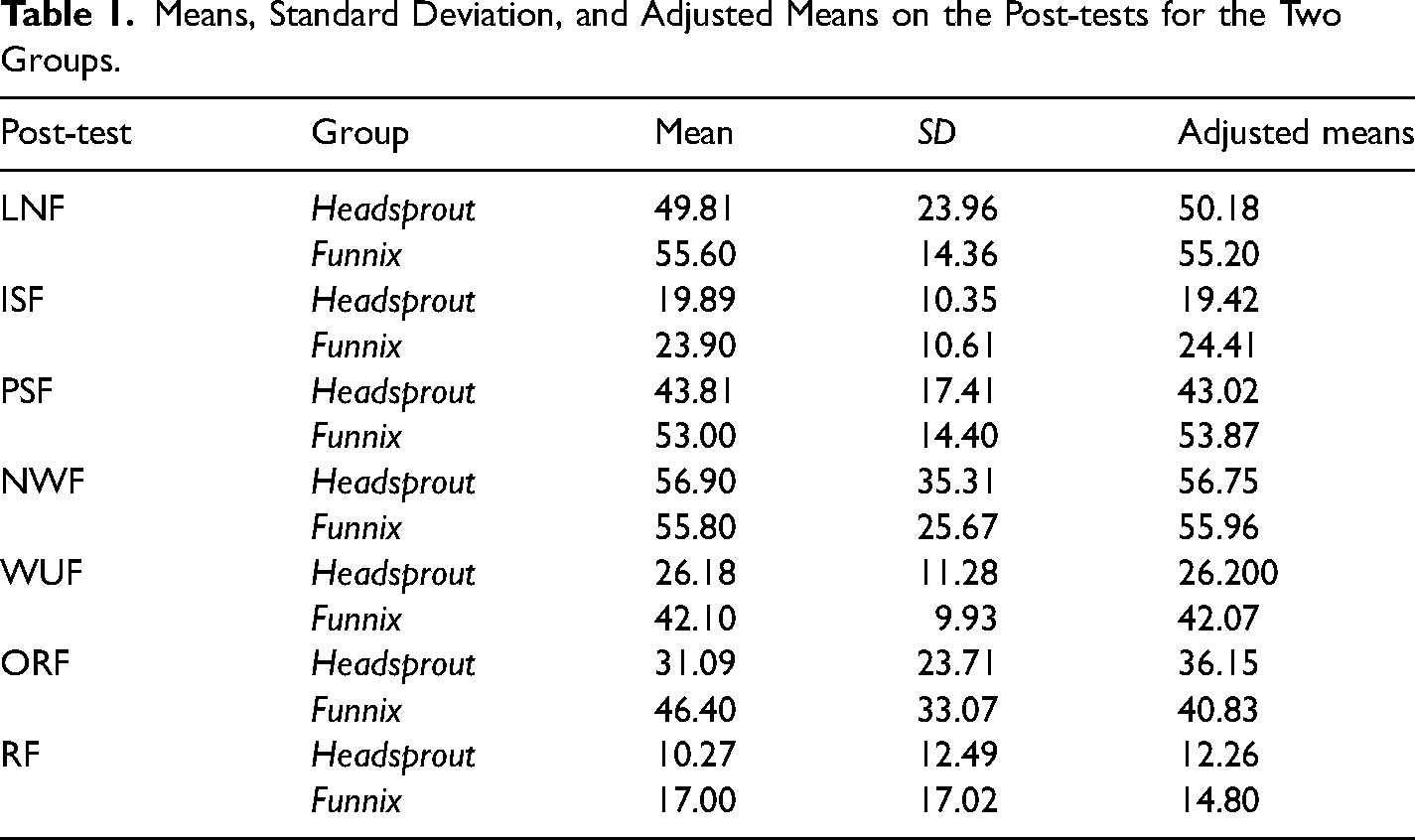

Before conducting the one-way analysis of covariance (ANCOVA) on the seven DIBELS measures, the homogeneity of slopes assumption was evaluated. The null hypothesis for the homogeneity of slopes test was rejected (i.e., the homogeneity of slopes assumption was not met) for the ORF measure, F(1, 17) = 7.6, p = .01. Furthermore, the partial eta squared was large, .31, indicating that any significant ANCOVA finding on the ORF measure should be re-examined using other statistical procedures. In addition, although the homogeneity of slopes test for the LNF measure was not significant, F(1, 17) = 3.76, p = .07, the partial eta squared was large, .18, indicating that any significant ANCOVA finding on the LNF measure should also be re-examined using other statistical procedures. The main ANCOVA analyses indicated a statistically significant difference only on the WUF measure, F(1, 18) = 10.83, p

Means, Standard Deviations, and Adjusted Means on the Post-tests for the Two Groups.
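The partial eta squared values reported for the homogeneity of slopes tests can be recovered from the F statistics and their degrees of freedom using the standard identity, partial eta squared = (F × df_effect) / (F × df_effect + df_error). The short sketch below applies that identity to the two F values reported for the ORF and LNF measures; the function name is an illustrative assumption.

```python
def partial_eta_squared(f_value, df_effect, df_error):
    """Partial eta squared recovered from an F ratio and its degrees of freedom."""
    return (f_value * df_effect) / (f_value * df_effect + df_error)


# F values and degrees of freedom as reported for the homogeneity of slopes tests.
orf = partial_eta_squared(7.6, 1, 17)
lnf = partial_eta_squared(3.76, 1, 17)
print(round(orf, 2), round(lnf, 2))  # 0.31 0.18
```

Both results match the reported values of .31 and .18.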

A paired-sample t-test was conducted for each group on each of the seven DIBELS measures to evaluate pre–post gains. For the Funnix group, a statistically significant difference was found between the pre- and post-test scores on the WUF measure, t(9) = 3.24, p = .01. The difference on the PSF measure did not reach significance, t(9) = 2.02, p = .07. The Funnix group had higher post-test than pre-test mean scores on the PSF, NWF, WUF, and RF measures. The Headsprout group had a higher post-test mean score only on the NWF measure. As the sample size was small, the standardized effect size index d was computed. For the Funnix group, the effect size was large for the WUF measure (1.03), medium for the PSF measure (.64), and small for the RF measure (.38). No significant positive effect sizes were found for the HS group.
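The source does not state which formula was used for d. One common convention for paired data is the mean of the pre–post differences divided by the standard deviation of those differences, which implies d = t / √n. Under that assumption, the reported t values with n = 10 give 3.24 / √10 ≈ 1.02 and 2.02 / √10 ≈ .64, close to the reported 1.03 and .64, which is consistent with (but does not confirm) this convention. A minimal sketch with hypothetical scores:

```python
from math import sqrt
from statistics import mean, stdev


def paired_t_and_d(pre, post):
    """Paired-sample t statistic, effect size d, and degrees of freedom.

    d is defined here as mean difference / SD of differences (an assumption;
    other conventions for paired designs exist).
    """
    diffs = [after - before for before, after in zip(pre, post)]
    n = len(diffs)
    mean_diff = mean(diffs)
    sd_diff = stdev(diffs)  # sample SD, n - 1 in the denominator
    d = mean_diff / sd_diff
    t = mean_diff / (sd_diff / sqrt(n))
    return t, d, n - 1


# Hypothetical pre- and post-test scores for five students (not study data).
pre_scores = [10, 12, 9, 14, 11]
post_scores = [13, 14, 10, 18, 13]

t_stat, effect_d, df = paired_t_and_d(pre_scores, post_scores)
print(f"t({df}) = {t_stat:.2f}, d = {effect_d:.2f}")
```

Because d does not grow with sample size the way t does, it gives a more comparable index of gain when groups are as small as those in this study.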

Tutors’ Perceptions

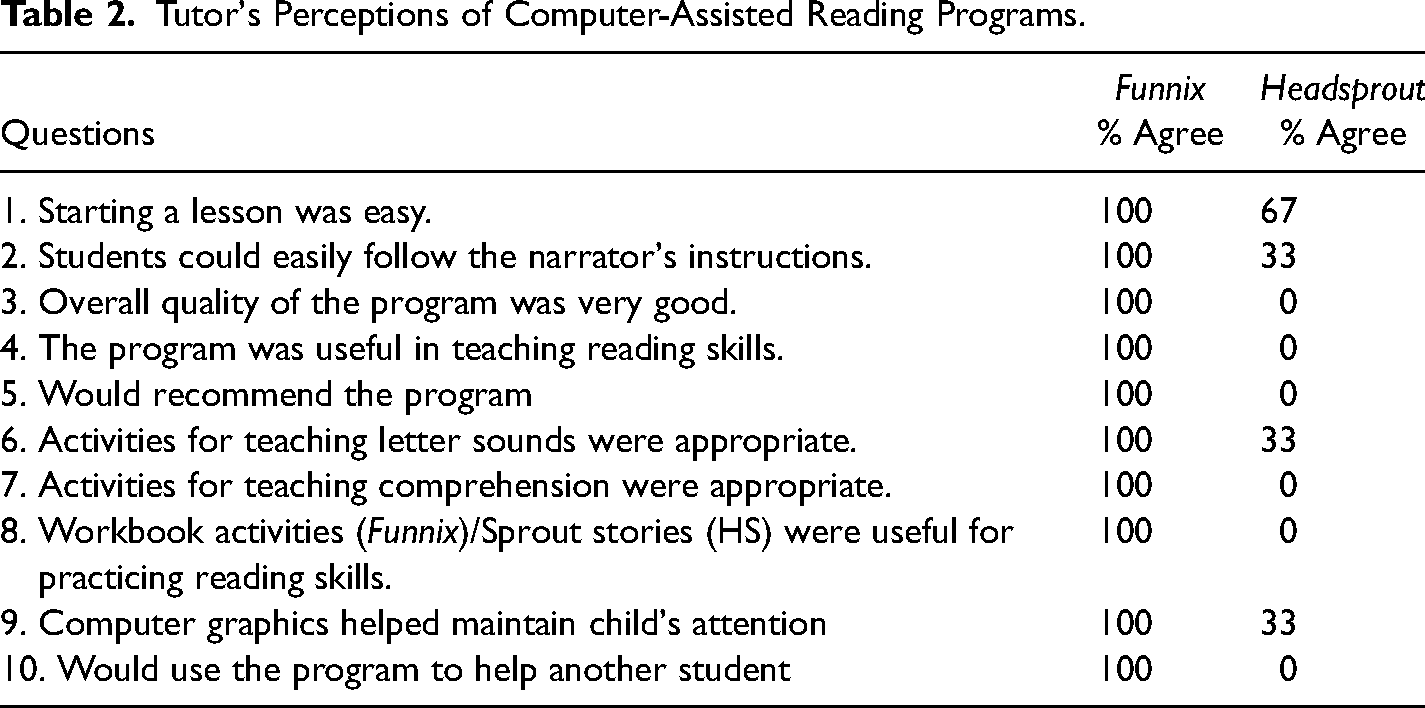

The results from the social validity questionnaires administered to the tutors indicated that 100% of the tutors (n = 3) implementing the HS program and 100% of the tutors (n = 2) implementing the Funnix program had previous experience implementing reading instruction. Further, 100% of the tutors in the Funnix program indicated that they would recommend the program to others helping students with reading and would use the program to help other students. None of the tutors in the HS program indicated that they would recommend the program or that they would use the program to help another student. Regarding the ease of using the Funnix program, 100% of the tutors in the Funnix program agreed that starting the lesson, moving between exercises, repeating the lesson, and pausing the program were easy. Similarly, 100% of the Funnix tutors agreed that (a) students easily followed the instructions of the narrator; (b) they were comfortable using the program; (c) the program was useful in teaching reading skills to students; and (d) Funnix was a good program. Regarding the ease of using the HS program, 67% of the tutors in the program agreed that starting the lesson was easy and 33% agreed that students could easily follow the instructions. On the question of students’ comfort using the HS program, 33% disagreed (67% were neutral). All tutors responded “neutral” to the statements regarding (a) the usefulness of the HS program in teaching reading skills to students and (b) that HS was a good program.

Regarding the appropriateness of teaching activities in the Funnix program, 100% of the Funnix tutors agreed that activities for teaching sounds of letters and comprehension were appropriate, and 50% indicated that activities for teaching words were appropriate. Similarly, 100% of the Funnix tutors agreed that (a) computer graphics in the program helped maintain students’ attention; (b) workbook activities for practicing reading skills were useful; (c) their feedback helped their students’ learning of reading skills; and (d) the instructional time was sufficient for teaching reading skills (see Table 2). Regarding the appropriateness of teaching activities in the Headsprout program, 33% of the HS tutors agreed that activities for teaching sounds of letters and words were appropriate. Similarly, 33% of the HS tutors agreed that (a) computer graphics in the program helped maintain students’ attention and (b) their feedback helped their students’ learning of reading skills. On the usefulness of the Sprout stories, sufficiency of instructional time, and appropriateness of the comprehension activities, 67% of the HS tutors were neutral (33% disagreed).

Tutors’ Perceptions of Computer-Assisted Reading Programs.

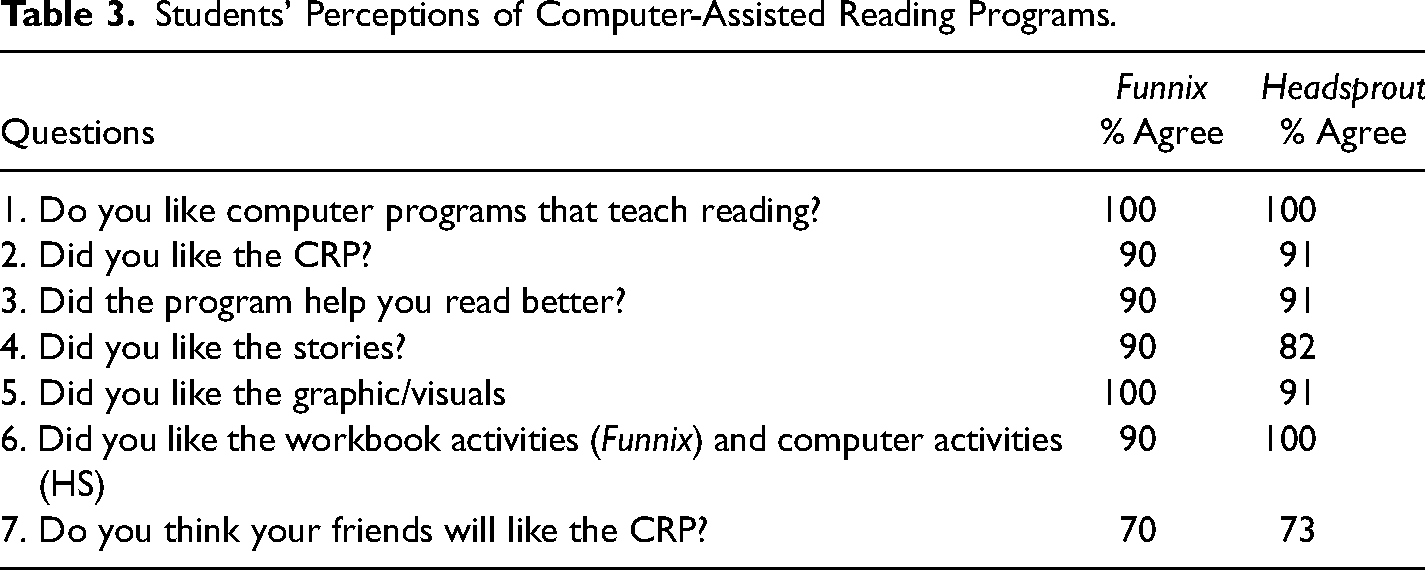

Students’ Perceptions

The results from the social validity questionnaire administered to the students in the Funnix group (n = 10) indicated that (a) 100% of the students liked having an adult help them learn to read, (b) 90% (n = 9) of the students liked the Funnix program, (c) 100% of the students liked the visuals in the program, (d) 90% liked the workbook activities, (e) 90% liked the stories, (f) 90% of the students indicated that Funnix helped them learn to read (10% indicated “I don’t know”), and (g) 70% indicated that their friends might like the program (20% indicated that they did not know). The results from the social validity questionnaire administered to the students in the Headsprout group (n = 11) indicated that (a) 91% liked the Headsprout program, (b) 73% liked having an adult help them read, (c) 91% liked the visuals, (d) all students (100%) liked the activities/games, (e) 82% liked the stories, (f) 91% of the students indicated that HS helped them learn to read, and (g) 73% indicated that their friends might like the program (18% indicated that they did not know or did not answer; see Table 3).

Students’ Perceptions of Computer-Assisted Reading Programs.

Discussion

This study examined the impact of a summer implementation of two CRPs on the basic early literacy skills of KG through second-grade at-risk students. The results indicated that (a) there was a statistically significant difference between the two groups on the WUF measure in favor of the Funnix program and (b) the Funnix group showed pre–post gains on four of the seven measures (PSF, WUF, NWF, and RF). The HS group showed gains (higher post-test scores) only on the NWF measure. The social validity data indicated that (a) the tutors who implemented the Funnix program were more positive regarding the effectiveness, ease of use, and appropriateness of the activities than the tutors who implemented the HS program and (b) the students in both programs were equally positive regarding the program activities and effectiveness.

A significant finding of this study is that the Funnix CRP facilitated gains in the areas of phonemic awareness (as measured by PSF), phonics (as measured by NWF), vocabulary (as measured by WUF), and comprehension (as measured by RF), which are critical early reading skills. Of these four, the effects were significant on three of the measures (i.e., the effect size was large for the WUF, medium for the PSF, and small for the RF). These findings support Watson and Hempenstall's (2008) findings that the Funnix program facilitates the growth of basic early literacy skills of elementary-grade students and extend those findings to the at-risk student population. The results from the study provide additional evidence that the Funnix program can be used to provide focused, explicit intervention in the areas of phonemic awareness, phonics, and fluency during the summer break and thereby prevent the compounding of reading deficits across years.

The results from the study indicated that the students in the HS group did not show any significant gains and in fact exhibited lower post-test scores on five of the DIBELS measures. The decreases were significant (medium to small effect sizes) on the LNF, ISF, PSF, and WUF measures. There could be multiple reasons, or a confluence of factors, for the lack of skill growth among students in the HS group. First, (a) the summer intervention lasted for over a month (the other month was spent on pre- and post-testing) and (b) all students in the HS group started from episode 1 in the program irrespective of their abilities, as there was no program pre-assessment to determine entry points for students based on their abilities. The HS program is designed this way because it is a performance guarantee program that promises mastery of basic literacy skills at the completion of the program, which is 80 episodes. The combination of the duration of the intervention and the program placement structure could have prevented the students from learning new skills that were appropriate to their skill gaps but were targeted later in the program. For example, Huffstetter et al. (2010) also hypothesized that students completing 40 or fewer episodes might not have been exposed to the skills measured by a standardized test. Second, fewer students in the HS program than in the Funnix program completed 30 or more episodes/lessons. The average number of episodes completed by students in the HS group was lower than the average number of lessons completed by students in the Funnix group. This could have influenced the results. The average number of episodes completed by the HS students was 28, which was fewer than the episodes completed by the students in the Huffstetter et al. (2010) study and could have been a factor in the lack of positive findings for this group in this study. Third, six of the 11 students in the HS group were also classified as ESL students compared to three students in the Funnix group, and this could have differentially influenced the results. Fourth, nearly half of the students in the HS group (five of 11) were KG students, and the Funnix group had a relatively higher number of students in the second grade. In addition, the placement of students in Funnix based on the results of the program pre-assessments could have resulted in greater gains on the post-tests.

The results from the social validity assessment also indicated that none of the tutors in the HS program would recommend the program or use the program to help another student. Similarly, the tutors’ responses were not positive on statements regarding students’ comfort using the HS program, the usefulness of the HS program in teaching reading skills, and whether HS was a good program. This lack of affirmative ratings for the HS program could be due to the tutors’ perceptions that the tasks in the beginning episodes were not appropriate for their students. Furthermore, the fact that HS is a student-centered program that requires students, including ESL students, to understand the directions and make choices could have influenced the tutors’ perceptions. It should be noted that only one of the three HS tutors agreed that students were able to follow directions easily. The factors discussed above (starting every student from episode 1, the ability of the ESL students to follow directions, and the short duration of the intervention) did not allow tutors complete exposure to the activities and skills taught in the program and could have contributed to the lack of positive tutor perceptions.

Regarding the appropriateness of teaching activities, there were more positive responses from tutors regarding Funnix than HS. This could be due to students being placed at their instructional level. This appropriate placement, despite the short duration of the intervention, could have also influenced the positive responses from both tutors that Funnix was useful in teaching reading skills to the students and that it was a good program. Furthermore, the facts that Funnix is an adult-navigated program, that the tutors were comfortable using the program, and that fewer ESL students were in the Funnix group could have influenced the tutors’ positive responses. Also, all tutors agreed that students were able to easily follow the instructions of the narrator, and this, along with the above factors, could have influenced their positive perception of the program and their recommendation of the program to others attempting to help students with reading difficulties.

Limitations

There are multiple limitations to this summer study that need to be considered when examining the results. These include (a) the absence of a control group, (b) a small sample size, and (c) the short duration of the intervention. Each limitation is discussed below.

Significant Contributions of the Study

It is essential to support the development of basic literacy skills in the early grades, as the failure to do so has debilitating life consequences for an individual. Research suggests that reading interventions with at-risk students and students with reading disabilities in early grades are essential because the loss of reading skills in early grades, compounded over multiple years, can create large skill deficits. Hence, interventions during summer breaks are essential to prevent reading loss and to promote reading skill acquisition. This study adds to the small literature base on the effectiveness of computer-assisted reading programs in promoting early literacy skills of at-risk readers when implemented by tutors during a summer break. Evaluation of CRPs is indispensable, as they can provide support, help overcome providers’ limited knowledge of early literacy instruction, and are very cost-effective. More importantly, they can provide explicit instruction, which is essential for improving the literacy skills of students (Kim et al., 2011), and can be implemented with high fidelity. The present study also measured the perceptions of tutors and students regarding ease of use, likeability, and effectiveness of the programs. Information on ease of use and desirability is essential for understanding the sustainability of program implementation in practice. Such information facilitates the translation of research to practice by providing teachers, parents, and other practitioners with a complete picture of the programs, aiding in their selection, and supporting the effective use of CRPs.

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The research was supported by Grant H327A040103 from the Office of Special Education Programs of the U.S. Department of Education. We thank the families and children who participated in the study.