Abstract

The rapid development and expansion of educational technology has removed various barriers that traditional approaches were incapable of overcoming. However, a field that still attracts a great deal of interest and needs to be strengthened concerns the practices that surround mathematics education. Digital learning tools can provide a partial solution to this problem. In this study, we introduce a curriculum-driven digital practicing tool which accommodates diverse learning styles and educational needs. The representative sample of the pilot included 135 third-grade primary school students, randomly split into two groups, who participated in the 8-week experiment. The findings suggest that the digital practicing path can facilitate the development of subject mastery and increase the accuracy of arithmetic fact calculations. In addition, the use of learning analytics tools can facilitate the knowledge acquisition process and prevent learners from developing misconceptions about the learning subject.

Mathematics is an essential skill to learn (Kazanidis & Pellas, 2019). In addition to providing the necessary tools for everyday life, it also forms the base on which many other subjects and skills are built (Kelly, 2006; Roy et al., 2017). Educational research in mathematics places the role of the teacher, the design of the task, and the learning environment among the most influential factors that determine learners’ success (Geiger et al., 2010). However, despite the efforts that teachers and instructors put into making the subject less dry and more attractive, students still face serious problems and require a significant amount of support to overcome the obstacles they face (Doabler & Fien, 2013). An example of a problem that pupils usually encounter concerns the number of exercises they solve correctly (Chinn, 2013). In the traditional context, however, the teacher can provide only a limited amount of instruction or support to students, whereas the time gap between practicing and provision of feedback is usually long (Cunha et al., 2018). As a result, students usually find themselves in a position that prevents them from iterating their tasks in full prior to progressing to the next topic (Welder, 2006).

The benefits that the integration of information and communications technology (ICT) brings to the educational context are numerous, but the most important lies in its potential to bridge the gap between the interacting stakeholders. In greater detail, digital learning environments enable teachers to monitor students’ progress and, therefore, provide them with immediate feedback and/or assistance as needed (Brečko et al., 2014; Pérez-Sanagustín et al., 2017). At the same time, students can regulate their own learning strategies and needs in accordance with their intrinsic motivation (Acedo & Hughes, 2014; da Silva Figueira-Sampaio et al., 2009). Nevertheless, a common problem in digital education concerns the lack of software solutions that are explicitly designed and developed in accordance with the needs of the particular curriculum of each educational institution (Kucirkova, 2014).

The framework for mathematics learning that Reinhold et al. (2020) propose describes the fundamental dimensions that should be taken into account when designing interactive learning environments. The parameters, briefly mentioned here, are (a) the integration of the particular

The Present Study

Motivated by the aforementioned recommendation, in this work, we investigate the educational potential of a digital learning tool, which has been fundamentally designed and developed under the notion of the presented framework, in the context of the elementary school mathematics curriculum. In addition, we compare the skills and the competencies that students develop during the intervention and explore the elements that make such a platform successful. The former is realized through the use of the built-in learning analytics (LA) tool that captures and records learners’ interactions with the platform and the involved stakeholders (i.e., instructors, other students).

The aforementioned objectives guide the focus of our investigation and are used to provide preliminary answers to the following research question: To what extent does curriculum-driven educational software improve pupils’ mathematical mastery and arithmetic fluency, compared with the traditional didactic approach?

Background and Related Work

The accelerating pace at which ICT has advanced over the past few decades has affected many aspects of our society with almost immediate effects (Grabe & Grabe, 2007). Nevertheless, educational policies are still adapting at a rather slow pace, while the traditional instructional approaches still prevail in classrooms (Albert & Kim, 2013; Ayinde, 2014). The reasons that underpin the aforementioned issues are manifold. Hiebert and Grouws (2007) identify the root of the problem in the lack of robust theories related to classroom teaching. Others link the traditional, old-fashioned, teacher-dependent techniques, which are still dominant in many educational systems, with the inadequacy of the curriculum to facilitate the development of subject mastery and literacy in a coherent, consistent, and progressive manner (Contreras, 2014; Daniel, 2017; Maaß & Artigue, 2013; Ozdamli et al., 2013). Another inadequacy concerns the lack of personalized learning strategies and, therefore, of the provision of support to those individuals who are in need. Despite the fact that personalized learning is regarded as a highly contributing factor toward learners’ educational advancement (Deed et al., 2014; Johnson, 2004), the difficulty of implementing this model in practice makes the current situation even more problematic (Courcier, 2007). A proposed solution to mitigate the aforementioned issues considers the embedding of ICT applications in the traditional classroom (Drigas et al., 2014; Ghavifekr et al., 2012).

Traditional Education and ICT

The evolutionary emergence of ICT sparked researchers’ interest to explore, assess, and address how technology influences, enhances, or enriches learning (Cheung & Slavin, 2012; Erdogdu & Erdogdu, 2015; Ghavifekr et al., 2014; Luu & Freeman, 2011; Yuen & Hew, 2018). Nevertheless, the less prominent outcomes have divided the opinion of the scientific community as to whether (educational) technology genuinely contributes to the advancement of knowledge. To this end, the supporters of the technology-enhanced learning approach point to the dynamic and multidimensional nature of the available e-Learning tools to sustain motivation (Hussain et al., 2011), improve the teaching and learning quality (Bingimlas, 2009; Hamidi et al., 2011), and facilitate the attainment of distinct learning outcomes (Bower, 2017). On the other hand, the more skeptical adopters proclaim that such technologies have the potential to bring better learning outcomes only if the interventions are designed and developed under the guidelines of established learning theories and models (Mayes & de Freitas, 2007). This claim is aligned with the conclusions drawn by other researchers who suggest that, despite the increase in digital educational tools, there is no significant impact on learners’ experience or advancement (Geiger et al., 2010; Lameras & Moumoutzis, 2015).

The debate, however, does not concern only the contradictory results. As Postholm (2007) advocates, studies related to educational technology usually report empirical observations and fail to disclose the theories that have been utilized to conceptualize the research context or the frameworks that informed the methodological techniques. As a result, a portion of researchers has subjected the outcomes of such studies to strict critique (Bennett & Oliver, 2011; Bulfin et al., 2014; Jones & Czerniewicz, 2011; Markauskaite & Reimann, 2014). Theorized and evidence-based research is, indeed, essential to understand the elements and the variables that contribute toward students’ learning growth. Accomplishing this goal will enable educators to track students’ needs and policy makers to transform the curriculum planning (Hiebert & Grouws, 2007).

Nevertheless, for these objectives to be achieved, a deeper and more thorough understanding of students’ personal learning styles, needs, preferences, and misconceptions is required. In that view, however, the traditional classroom setting does not provide fertile ground for the collection of broad-scale data due to the practical limitations that such practices involve. An approach to deal with this challenge is to utilize digital learning tools that have been fundamentally developed under the principles of well-established learning theories and advanced in accordance with the guidelines of enacted instructional design frameworks and models (Kucirkova, 2014; Pepin et al., 2017).

Digital Instructional Design for Mathematics

Research related to mathematics education demonstrates a supportive stance toward the augmentation of the traditional practices through the use of digital tools (Bray & Tangney, 2017; Bussi et al., 2000). To this end, researchers (e.g., Artigue, 2002; Oates, 2011; Olive et al., 2009) distinguish the added value of the digital learning approach into two broad categories: (a) the

Nevertheless, in order for such tools to be effective, special attention should be paid to the design elements of the digital learning environment. Not surprisingly, different studies highlight different variables. According to Rodríguez-Aflecht et al. (2018), the technology should be flexible enough to account for the different approaches that learners may take toward finding a solution and capable of facilitating the reflection process upon completion (Heirdsfield, 2011). Others (e.g., Arnab et al., 2015; Mulligan & Mitchelmore, 2013; Threlfall, 2009; Verschaffel et al., 2009) focus on the practical elements that are responsible for the mental and cognitive engagement of learners (e.g., the utilization of gamification techniques to integrate the curriculum, the visual representation of the exercises, the escalation of the difficulty level). In any case, researchers and educators share the same opinion as far as the aim of such technologies is concerned by emphasizing the need for change from the

Self-Regulated Learning

Enabling learners to monitor and regulate their learning is a critical factor for educational success (Panadero & Järvelä, 2015). As part of the 21st century skills movement, self-regulated learning is thought to be among the most important competences that students need to develop (Shute, 2011; Sottilare et al., 2014; Zimmerman, 2008). In the literature, self-regulated learning is described as an active and goal-oriented process driven by learners’ thoughts, feelings, and strategic actions (Panadero & Järvelä, 2015). To this end, students who regulate their learning needs and carefully plan their learning tasks are considered to be more successful and confident than others (Zimmerman & Schunk, 2011). However, this is not the norm, as students are not generally successful at self-regulation (Azevedo & Aleven, 2013).

In computer-based learning environments, learners’ behaviors can be traced and monitored in an unobtrusive fashion (Greene & Azevedo, 2010). These

An approach to overcome these obstacles considers the employment of students’ online learning traces (e.g., information selection, learning strategies, task planning) as the means to make inferences about their learning needs and patterns (e.g., Azevedo & Aleven, 2013; Walker et al., 2014). These traces are, subsequently, used to predict students’ self-regulatory processes and, thus, academic success (Molenaar et al., 2019).

Automated Assessment

Examining students’ knowledge and skills is a core element of the educational system (Pellegrino, 2014). Assessments, as a process to aid learning, can take various forms (e.g., formative, informative, summative) and levels of complexity (e.g., correctness of the exercise, style of the proposed solution, authenticity of the content; Black & Wiliam, 1998; Joy et al., 2005). However, the practical issues that surround this process (e.g., repetition of the task, extensive use of human resources) have led to its (partial) automation (Biggam, 2010). The benefits of automatic assessment are numerous (e.g., continuous diagnostic information, accelerated results, better use of human resources; Barana & Marchisio, 2016) but so are the drawbacks (e.g., lack of dynamic analysis, low accuracy or precision of correctness in problem-solving exercises; Bey et al., 2018). With that in mind, the full potential of this approach—at least for the time being—is realized mainly in the context of practicing exercises (Pelkola et al., 2018).

Automated Feedback

Educators often combine multiple approaches to construct their feedback (e.g., explanatory, guided, corrective, suggestive, epistemic), which is then distributed to students during the different phases of the learning process (e.g., task planning, progression check, task completion; Alvarez et al., 2012; Guasch et al., 2013). Nevertheless, according to Irons (2007), the effectiveness of feedback on learning decreases as the response time increases. Automatically generated feedback can mitigate this issue by offering learners the opportunity to monitor and regulate their performance. In doing so, students’ intrinsic motivation to complete the task increases, and their confidence to progress is boosted (Schaap, 2011). Nevertheless, the field of automated feedback is still relatively unexplored, especially when it comes to the typologies or the strategies that are utilized to identify individuals’ needs (Sedrakyan et al., 2020). Therefore, additional research in this direction is needed to maximize the potential of targeted feedback and ensure that learners’ personal goals and objectives are fulfilled.

Learning Analytics

Researchers have attempted to define LA from different perspectives and angles. Some consider it an innovative approach to collect student-generated data, which can be subsequently utilized to provide personalized learning experiences (Junco & Clem, 2015; Xing et al., 2015), while others focus on the patterns that can be developed, in accordance with students’ learning behaviors, so as to inform the future developmental decisions of the learning environment (Drachsler & Kalz, 2016; Rubel & Jones, 2016). Long and Siemens (2011) propose a definition that covers this topic from both perspectives. The authors describe LA as a

Research Design

The Digital Learning Environment

The LA team at the University of Turku, in collaboration with Finnish school teachers, has created for the online platform

Teachers’ Dashboard for Task Assigning With Adjustable Difficulty Levels.

Figure 2 illustrates a sample of the different instructional design elements that have been used for this experiment, each of which is briefly explained below.

Example Exercises of the Digital Learning Path for Mathematics.

The forest (top-left snap): a traditional exercise where the students have to fill in the missing number. A total of 10 questions, with varied difficulty levels, are presented to students, and the answers are checked by the system automatically. In cases where an incorrect submission has been made, the correct value is presented before moving to the next set of questions.

The kingdom (top-right snap): a problem-solving exercise, which combines numbers and symbols, where the students have to deduce which number each of the symbols represents. The aim of this exercise is to train and evaluate pupils’ analytical thinking. A total of 25 exercises are available, the difficulty of which is increasing gradually. To prevent guessing, different symbols may or may not have the same value.

The riddle (middle snap): At the end of each learning subject, a complex mathematical challenge becomes available. In order for these bonus exercises to be unlocked, the student should have a cumulative score of 95% on all the previous tasks (school/homework). As in the previous cases, the difficulty of these exercises increases incrementally.

The clock (bottom-left snap): a

The racer (bottom-right snap): a gamified exercise that is used to train students’ fluency in mathematic calculations. Each round has 20 questions, and a total of 5 mistakes (virtual lives) are allowed. The speed of the virtual car is increasing progressively, but students can accelerate or decelerate it without, however, being able to immobilize it completely.
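The rules described above for the forest, riddle, and racer exercises can be illustrated with a minimal Python sketch. It is not the platform's implementation: the function names and data layout are hypothetical, the 95% requirement is read here as earned points over available points, and a racer round is assumed to end as soon as the fifth life is lost.

```python
def check_forest_round(questions, submitted):
    """Automatically check one fill-in round.

    `questions` is a list of (prompt, correct_answer) pairs (hypothetical layout).
    Returns the number of correct answers and the corrections to display.
    """
    score = 0
    corrections = []
    for (prompt, correct), given in zip(questions, submitted):
        if given == correct:
            score += 1
        else:
            # The correct value is shown to the student after a wrong submission.
            corrections.append((prompt, correct))
    return score, corrections


def riddle_unlocked(task_results, threshold_percent=95):
    """Decide whether the bonus riddle is available.

    `task_results` is a list of (points_earned, points_available) pairs.
    Assumption: the threshold applies to the pooled ratio over all tasks.
    Integer arithmetic avoids floating-point edge cases at exactly 95%.
    """
    earned = sum(e for e, _ in task_results)
    available = sum(a for _, a in task_results)
    if available == 0:
        return False
    return earned * 100 >= available * threshold_percent


def play_racer_round(answers_correct, max_questions=20, lives=5):
    """Simulate one racer round from a sequence of True/False outcomes.

    Assumption: the round stops when the last life is lost.
    """
    answered = 0
    correct = 0
    for is_correct in answers_correct[:max_questions]:
        answered += 1
        if is_correct:
            correct += 1
        else:
            lives -= 1
            if lives == 0:
                break
    return {"answered": answered, "correct": correct, "lives_left": lives}


# Illustrative data only; none of these values come from the study.
forest = [("7 + _ = 12", 5), ("_ - 4 = 9", 13)]
forest_score, forest_corrections = check_forest_round(forest, [5, 12])
```

Keeping each rule in its own small function makes the assumptions easy to swap, for example if the threshold were applied per task rather than to the pooled score.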

In addition, the platform presents a comprehensive LA feature that allows teachers to collect, in real-time, important information about their students’ actions. The performance statistical data—such as the number of completed exercises, the response times, the scores—are presented in a visualized way so as to facilitate the monitoring process of students’ progression in a timely and effortless manner (Figure 3, bottom frame).
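As a rough illustration of the kind of per-student statistics mentioned above (completed exercises, response times, scores), the following sketch aggregates a list of interaction events. The event layout, field names, and values are assumptions made for this example and do not describe the platform's actual data model.

```python
from collections import defaultdict
from statistics import mean


def summarize_events(events):
    """Aggregate (student_id, response_seconds, score) events per student."""
    per_student = defaultdict(list)
    for student_id, seconds, score in events:
        per_student[student_id].append((seconds, score))

    summary = {}
    for student_id, rows in per_student.items():
        summary[student_id] = {
            "completed": len(rows),                        # number of exercises
            "mean_response_s": mean(r[0] for r in rows),   # average response time
            "mean_score": mean(r[1] for r in rows),        # average scaled score
        }
    return summary


# Hypothetical events; scores are on a 0-1 scale.
events = [("A", 4.0, 1.0), ("A", 6.0, 0.0), ("B", 3.0, 1.0)]
summary = summarize_events(events)
```

A dashboard would typically recompute such a summary as new events arrive and pass it to a plotting layer, which is outside the scope of this sketch.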

Dashboard for Monitoring Students’ Progress and Misconceptions.

Moreover, the embedded artificial intelligence engine detects and highlights students’ misconceptions in different mathematical topics and enables educators to provide additional mentoring or support to those students who are in need (Figure 3, top frame). The real-time detection of misconceptions has been compared with the Finnish standardized test in mathematics, and the results showed that it can detect them equally reliably (Lokkila et al., 2015).

Research Context

The present study was conducted in the context of a joint effort between the University of Turku, Finland and the Ministry of Education of the United Arab Emirates. The research team of the Centre for LA coordinated the conduct of the intervention in different ways (e.g., by providing training and technical support to the local schoolteachers, overseeing the experiment process) and further performed the data collection and interpretation.

For the needs of this experiment, two elementary schools in Dubai volunteered to participate with their third-grade pupils. Each school joined the initiative with two classes, and each class was randomly assigned a different group role (i.e., control and treatment). Students who were in the

Research Method

To contextualize the study from different viewpoints and perspectives, students’ competence in mathematics was examined prior to the start and after the completion of the experiment using two identical mathematical tests. The knowledge-testing exercises were designed by the researchers of the University of Turku, in collaboration with the participating teachers, prior to the initiation of the intervention. The experiment commenced right after the completion of the pretests and concluded 8 weeks later with the distribution of the posttests. All the tests were answered using pen and paper to avoid handing an advantage to the treatment group. Students were also requested to disclose their names so that requests for withdrawal could be satisfied. Figure 4 provides an overview of the experimental process.

Overview of the Experiment Process.

Research Material

The

Results Presentation and Discussion

In total, 160 students presented parental consent to participate in the study. However, only those pupils who answered both the pre- and the postintervention test were considered in the data analysis process, and thus, the sample of the study was reduced to 135. Of these, 70 represented the control group and the rest (65) the treatment group (Figure 5).
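The inclusion rule described above amounts to a complete-case filter: a pupil is kept only if both a pretest and a posttest score exist. The sketch below shows that rule on made-up identifiers and scores; the dictionary layout is an assumption for illustration, not the study's data format.

```python
def complete_cases(pre_scores, post_scores):
    """Return the IDs of pupils who have both a pretest and a posttest score."""
    return sorted(pre_scores.keys() & post_scores.keys())


# Hypothetical pupils: s02 missed the posttest, s04 missed the pretest.
pre = {"s01": 0.4, "s02": 0.7, "s03": 0.5}
post = {"s01": 0.6, "s03": 0.8, "s04": 0.9}
kept = complete_cases(pre, post)

# Consistency check on the group sizes reported in the text.
control_n, treatment_n = 70, 65
total_n = control_n + treatment_n
```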

The Sample of the Study.

To preserve the experimental validity and check that the student cohorts were comparable in terms of prior knowledge in mathematics, the preintervention scores were examined using Levene’s test for homogeneity of variance. The test did not reach statistical significance, and thus, additional tests for statistical comparison could be performed. Prior to proceeding, it should be noted that the test scores have been scaled (minimum: 0, maximum: 1) so as to ease the presentation and discussion process.
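For readers unfamiliar with these two steps, the following sketch shows one way to rescale raw scores to the 0-1 range and to compute the Levene statistic W for two or more groups. It is an illustration under stated assumptions rather than the authors' analysis script: the scaling is assumed to use the lowest and highest attainable scores, the mean-centred form of Levene's test is used, and the example data are invented. Obtaining a p-value additionally requires the F distribution with (k - 1, N - k) degrees of freedom, which is left to a statistics library.

```python
from statistics import mean


def min_max_scale(scores, lowest, highest):
    """Rescale raw scores so that `lowest` maps to 0 and `highest` maps to 1."""
    if highest <= lowest:
        raise ValueError("highest must be greater than lowest")
    return [(s - lowest) / (highest - lowest) for s in scores]


def levene_w(*groups):
    """Levene's W (mean-centred) for k groups of numeric scores.

    Assumes at least two groups and some within-group spread;
    otherwise the ratio below is undefined.
    """
    k = len(groups)
    n_total = sum(len(g) for g in groups)

    # Absolute deviations of each score from its own group mean.
    z = []
    for g in groups:
        g_mean = mean(g)
        z.append([abs(y - g_mean) for y in g])

    z_means = [mean(zi) for zi in z]
    z_grand = sum(sum(zi) for zi in z) / n_total

    between = sum(len(zi) * (zm - z_grand) ** 2 for zi, zm in zip(z, z_means))
    within = sum((v - zm) ** 2 for zi, zm in zip(z, z_means) for v in zi)
    return (n_total - k) / (k - 1) * between / within


# Invented example: raw scores out of 10 for two small groups.
scaled = min_max_scale([0, 5, 10], lowest=0, highest=10)
w = levene_w([1, 2, 3], [2, 4, 6])
```

A non-significant W, as reported in the text, means there is no evidence that the group variances differ, which is the usual precondition checked before a pooled-variance comparison of means.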

Pupils’ Prior and Background Knowledge

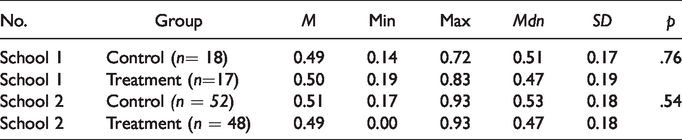

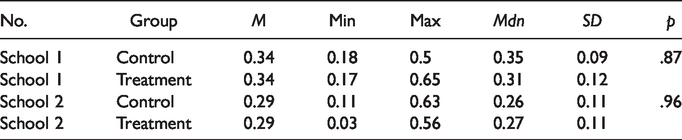

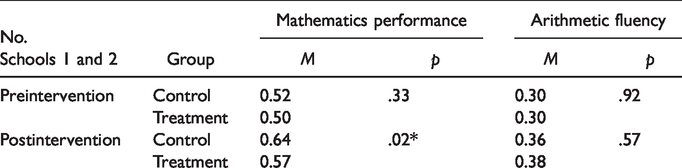

Both student cohorts of each school showed similar capabilities in terms of mathematic competence (Table 1) and arithmetic fluency (Table 2). However, in order for this observation to be validated, a two-tailed independent-samples

Preintervention Test Results of Pupils’ Mathematic Performance.

Preintervention Test Results of Pupils’ Arithmetic Fluency.

Regarding the mathematics tests per se, the relatively small distribution of students’ scores and the mean values indicate that the majority of the pupils were average-versed. Not surprisingly, though, both schools had both high (School 1: 17%, School 2: 19%,

As with the mathematics mastery test, a similar picture is drawn with regard to pupils’ capacity in performing arithmetic calculations. In this case, however, the scores are considerably lower (below average). Nevertheless, this outcome can be attributed only partially to students’ skills, as the difficulty of completing the test is also a decisive factor (see Research Material section).

Pupils’ Constructed Knowledge

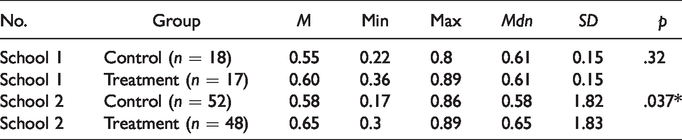

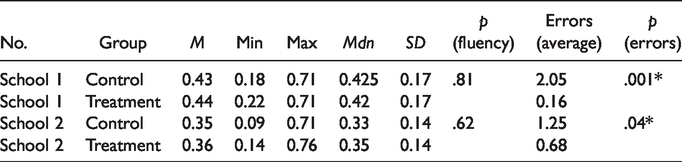

Upon completion of the intervention, pupils’ mathematical and arithmetic fluency skills were reevaluated. As expected, an overall positive difference in the performance of all the groups is observed with the treatment group having the leading edge in both tests (Tables 3 and 4). However, when comparing the impact of the conventional teaching method to the digital learning approach, the postintervention

Postintervention Test Results of Pupils’ Mathematic Performance.

Postintervention Test Results of Pupils’ Arithmetic Fluency.

As far as the arithmetic fluency test results are concerned, special attention should also be given to the difference in the number of incorrect answers between the cohorts. As Table 4 indicates, the treatment groups of both schools made considerably, and statistically significantly, fewer mistakes than their fellow students. As a result, a positive impact of this approach can be observed, at least on the accuracy and precision of the calculations.

To this end, in an effort to increase the statistical power and, therefore, the validity of this experiment, we combine the scores of the individual cohorts from both schools and treat them homogeneously (Table 5). Under this consideration, the impact of the intervention on pupils’ mathematical knowledge development is further validated, while the added value of this tool on students’ arithmetic skills remains limited or, at best, inconclusive.

Pretest and Posttest Results Combined.

Given the short duration of the intervention, a direct comparison related to pupils’ knowledge acquisition and skill enhancement was deemed noteworthy. The treatment groups showed greater advancement during the course of the experiment. Consequently, it can be speculated that a greater, and possibly statistically significant, improvement may well be observed in the case of arithmetic fluency development. This assumption is further grounded after considering the findings from a similar study which was conducted in the context of three schools in Lithuania (Kurvinen et al., 2019).

Conclusions and Future Work Direction

In the present study, we contrasted the educational potential of a curriculum-driven digital tool with the learning outcomes that students in the traditional classroom context achieved. From the presented evidence, it can be concluded that the construction of mathematical knowledge and understanding, as with any other subject, is not a privilege for the few

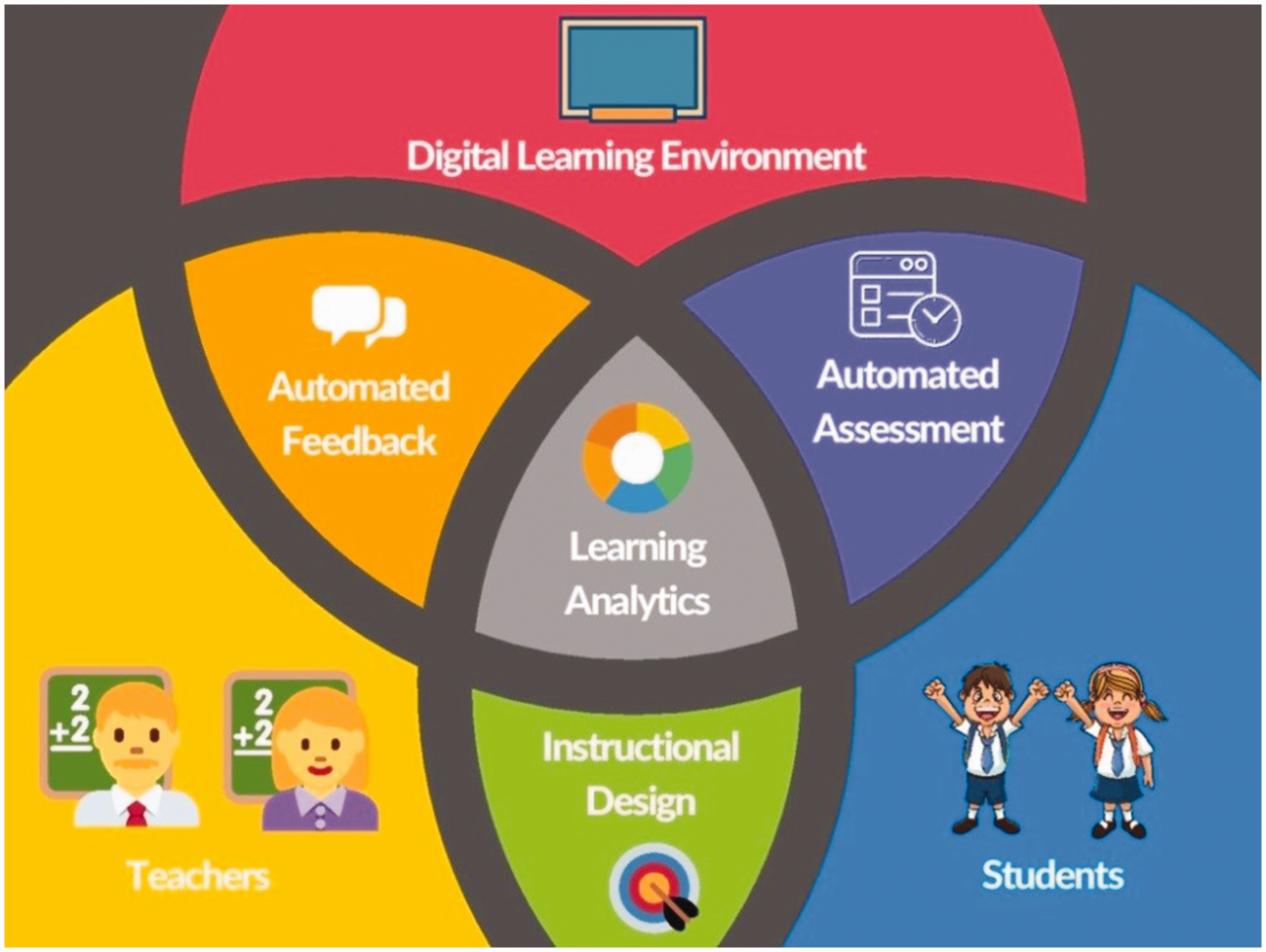

Eduten Playground brings together various elements that can promote learners’ self-efficacy and regulation of learning, increase motivation, and strengthen performance (Figure 6). In addition, the freedom offered to teachers to create and share their own exercises—tailored to their needs and teaching approach—differentiates the aforementioned platform from other available solutions that are more restrictive when it comes to content customization and personalization. To this end, the inclusion of an LA dashboard further enables teachers to identify students who are not making adequate progress and, thus, are in need of additional support.

Overview of the Eduten Playground Features and the Links Between the Interacting Stakeholders.

Notwithstanding the foregoing, the significantly improved learning gains that students demonstrated, despite the short duration of the intervention, make it apparent that, for such a tool to reach its full potential, a longer time investment is needed so as to further complement students’ effort and, thus, educational achievements. The takeaway message from this work is that the inclusion of such a tool, from as early as the primary school level, can greatly help to prevent the development of misconceptions and set the foundations for continuous and sustainable knowledge development.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Fire Fellowship Grant provided by KAUTE Foundation (Finland) for the needs of the project: Adaptive Electronic Study Path in Mathematics.