Abstract

Generative artificial intelligence (genAI) tools are transforming workflows, with growing interest in their potential applications in qualitative research. While the use of genAI in facilitating the systematic review process has been explored, its application in the quality appraisal of qualitative research remains to be understood. This pilot study aims to evaluate the degree to which ChatGPT appraises qualitative research using popular appraisal tools compared to human assessments. Two reviewers applied the Critical Appraisal Skills Program (CASP) and Joanna Briggs Institute (JBI) checklists for qualitative research to studies identified through a previously published review (n = 21). Next, iteratively developed prompts along with a copy of each study were uploaded to ChatGPT to instruct it to appraise each article. Interrater reliability measures and crude agreements were conducted to estimate the level of agreement between human and genAI assessments. Interrater reliability assessments between human and ChatGPT (GPT-5) revealed no agreement to moderate agreement for CASP checklist items (kappa: <.00-.46; crude agreement: 23.8%-100%) and from none to substantial for JBI items (kappa: <.00-.83; crude agreement: 4.8%-95.2%). Agreement was highest for reporting-based elements such as study aims, ethics approval, value of research (CASP), and participant voices and conclusions (JBI). Disagreements were greatest for interpretive and context-dependent items such as research design, researcher–participant relationships, and worldview–methodology congruity. Findings demonstrate that ChatGPT (GPT-5) can reliably identify objective components yet performs inconsistently when assessing items requiring nuance and contextual understanding across both checklists. Currently, any adoption of genAI for quality appraisal of qualitative research must be carefully applied only alongside human assessments and uphold principles of transparency and data privacy.

Keywords

Evaluates ChatGPT’s capacity to conduct qualitative quality appraisal by comparing AI-generated CASP and JBI assessments with human consensus ratings across 21 studies.

Finds strong alignment on clearly reported, descriptive items (e.g., study aims, ethics, participant voices, value of research), demonstrating AI’s reliability with objective checklist criteria.

Identifies substantial inconsistencies on interpretive and context-dependent items, such as research design, researcher–participant relationship, and methodological congruity, where human judgment remains essential.

Reveals wide variability in agreement metrics, with kappa values ranging from none to moderate for CASP and none to substantial for JBI, underscoring tool-based differences in AI performance.

Concludes that genAI cannot replace human appraisal in qualitative research.

Introduction

The integration of generative artificial intelligence (genAI) tools continues to reshape workflows across various domains, including psychology, 1 healthcare, 2 education, 3 and research. 4 While there are concerns regarding the potential risks associated with reliance on genAI technologies, such as inaccuracies and hallucinations,5,6 their broad applicability gives rise to notable advantages, such as enhanced productivity and cost-effectiveness. 7 GenAI tools employ advanced computational techniques such as machine learning (ML), deep learning (DL), and natural language processing to analyze large volumes of data and generate outputs such as text, images, videos, and music in response to user prompts. 8 For instance, OpenAI’s ChatGPT, a variant of the GPT language model, is a tool trained to produce human-like responses in conversational contexts. 8

GenAI offers significant potential in streamlining research processes 9 and has been explored for its application in qualitative research synthesis, including evidence review 10 and thematic analysis.11-13 A qualitative study assessing the comprehension capabilities of 6 large language models (LLMs) of medical research papers using the STROBE checklist found that LLMs, while varying in their ability to understand medical papers and provide summaries of the different sections of the paper, have the potential to enhance the efficiency of qualitative synthesis and facilitate evidence-based decision-making. 14

Central to assessing research quality is quality appraisal. While its transferability to qualitative research remains to be of debate due to a lack of consensus in how criteria can be developed to assess quality in qualitative research,15,16 some studies suggest that a systematic quality appraisal can ensure the rigor of qualitative studies.17,18 In addition, an important element of a systematic review is the appraisal of methodological quality of the primary studies to decide whether its results are trustworthy and the extent to which these studies should contribute to the overall evidence synthesis. 19 This is particularly important in fields such as public health, where the validity and reliability of findings inform decision-making. 20 A variety of tools exist to appraise the quality of a study, with some using reporting guidelines to serve as a proxy for quality appraisal. Quality appraisal tools range in their assessment of research quality, including rigor, credibility, trustworthiness, and relevance of results, offering guidance for identifying a study’s strengths and weaknesses and informing the credibility of its findings. 18 The most widely adopted are the Joanna Briggs Institute (JBI) Checklist 21 and Critical Appraisal Skills Program (CASP) Checklist, 22 which contain elements on design, recruitment strategy, data collection, reflexivity, analysis, ethics, reporting, and value of findings. The main purpose of these tools is to serve as an appraisal in qualitative syntheses and help ensure the findings are based on trustworthy evidence.

Preliminary searches found no existing studies exploring the use of genAI for conducting quality appraisals of qualitative studies using established quality appraisal tools. Notably, Nguyen-Trung et al 10 explored the application of genAI in rapid reviews and acknowledged the potential of AI for quality appraisal as an area warranting future exploration. Addressing this gap will provide insight for guiding the appropriate use and development of AI-assisted tools in quality appraisal in qualitative research.

Therefore, this pilot study aims to evaluate ChatGPT’s appraisals of qualitative research by assessing areas of alignment and divergence with human reviewers and evaluating the potential applications and limitations of genAI in qualitative quality appraisal. The objectives of this research are to:

Develop a systematic process for using ChatGPT to conduct quality appraisal using the CASP and JBI qualitative checklists; and

Assess the level of agreement between AI and human-conducted quality appraisals.

Methods

Twenty-one peer-reviewed qualitative studies were identified through a previously published review by Fazakarley et al, 23 which synthesized qualitative research on stakeholder beliefs around the use of AI in healthcare. The qualitative synthesis identified 19 open-access articles, and 2 articles with restricted access. This review was selected as our base for quality appraisals because the studies contained within it varied in geography, participants, data collection methods, and analytical approaches used. Next, the CASP and JBI qualitative checklists were applied to all studies, and the following information was noted: presence of item (ie, yes, no, or unclear), a brief justification, and location where the item is found in the study.

The CASP checklist, a quality appraisal tool endorsed by the Cochrane Qualitative and Implementation Methods, comprises 10 questions guiding the assessment of the validity, results, and local relevance of results presented in qualitative research. 17 The JBI checklist also includes 10 questions on the congruity of elements within the study (e.g. congruity between method and results), reflexivity, participant voices, ethics, and coherence of conclusions. 15 We selected these 2 checklists because they are the most widely adopted quality appraisal tools used in qualitative syntheses and are known for their ease of use.15,17 More information about these tools can be accessed by visiting the respective websites.21,22

ChatGPT was selected for this pilot study given its widespread availability, integration into academic and professional workflows, and prior evidence of use in tasks such as literature review and evidence synthesis.24,25 Its conversational interface also facilitates transparent prompt construction, making it suitable for a feasibility assessment of quality appraisal.

Prior to data analysis, a pretest was conducted to: (i) critically appraise 3 studies via a human reviewer (HS) and (ii) assess genAI’s ability to perform comparable quality appraisals on the same studies. This pretesting guided the iterative development of prompts provided to ChatGPT 4o in June 2025 (Supplemental File 1). Next, 2 independent reviewers (HS, MM, and AT) assessed each study by applying the CASP and JBI qualitative checklist. Following independent review, the reviewers discussed any conflicts and generated a consensus across the 21 studies (Supplemental Files 2 and 3). Consensus ratings served as a reference standard for comparison with genAI’s appraisals of the 2 qualitative checklists across 21 studies.

For the CASP checklist, the following prompt and an attached copy of the article were provided to ChatGPT (GPT-5) in a new ‘chat’ for each study:

I would like you to critically appraise the attached qualitative study using the CASP Qualitative Research Checklist.

1. Carefully review the full content of the study.

Then, assess each of the ten questions in the CASP checklist, and provide your assessment in a table format with the following 4 columns:

1. Title of the study

2. A brief answer to the question in the checklist (Yes, No, Can’t tell)

3. A 1 sentence justification based on explicit evidence from the text

4. The location in the study where the relevant information is found

Use the following criteria to guide your judgement:

Answer ‘Yes’ if the criterion is clearly addressed in the text, with adequate detail and justification.

Answer ‘No’ if criterion is completely absent.

Answer ‘Can’t tell’ if the criterion is partially met, unclear, or lacks sufficient detail to judge confidently.

When forming a response, take note of the following considerations for each checklist item (exact descriptions of each item from the tool are included in the prompt; see Supplemental 1 for the full prompt, including exact item descriptions from the checklist).

For the JBI checklist, the following prompt and an attached copy of the article were provided to ChatGPT (GPT-5) in a new ‘chat’ for each study:

I would like you to critically appraise the attached qualitative study using the JBI Critical Appraisal Checklist for Qualitative Research Carefully review the full content of the study. Then, assess each of the ten questions in the JBI critical appraisal tool for qualitative research, and provide your assessment in a table format with the following 4 columns:

1. Title of the study

2. A brief answer to the question in the checklist (Yes, No, Unsure)

3. A 1 sentence justification based on explicit evidence from the text

4. The location in the study where the relevant information is found

Use the following criteria to guide your judgement:

Answer ‘Yes’ if the criterion is clearly addressed in the text, with adequate detail and justification.

Answer ‘No’ if criterion is completely absent.

Answer ‘Unsure’ if the criterion is partially met, unclear, or lacks sufficient detail to judge confidently.

When forming a response, take note of the following considerations for each checklist item (exact descriptions of each item from the tool are included in the prompt; see Supplemental 2 for the full prompt, including exact item descriptions from the checklist).

The raw appraised outputs from the ChatGPT (GPT-5) are provided in an open database: https://osf.io/cyfsm/. Subsequently, interrater reliability between human and ChatGPT (GPT-5) assessments was assessed using the ‘irr’ package in RStudio (version 2025.05.0 running R 4.4.0). Crude percent agreement, although a less rigorous metric, 26 is also presented because Cohen’s kappa could not be calculated for some checklist items due to homogeneous responses. This crude measure reflects the proportion of results that agree between the human reviewer and ChatGPT (GPT-5) but does not account for agreement due to chance. Despite its limitations, we report on this metric alongside Cohen’s kappa to highlight the crude level of agreement to provide additional context where kappa could not be calculated.

Results

Summary of Included Studies

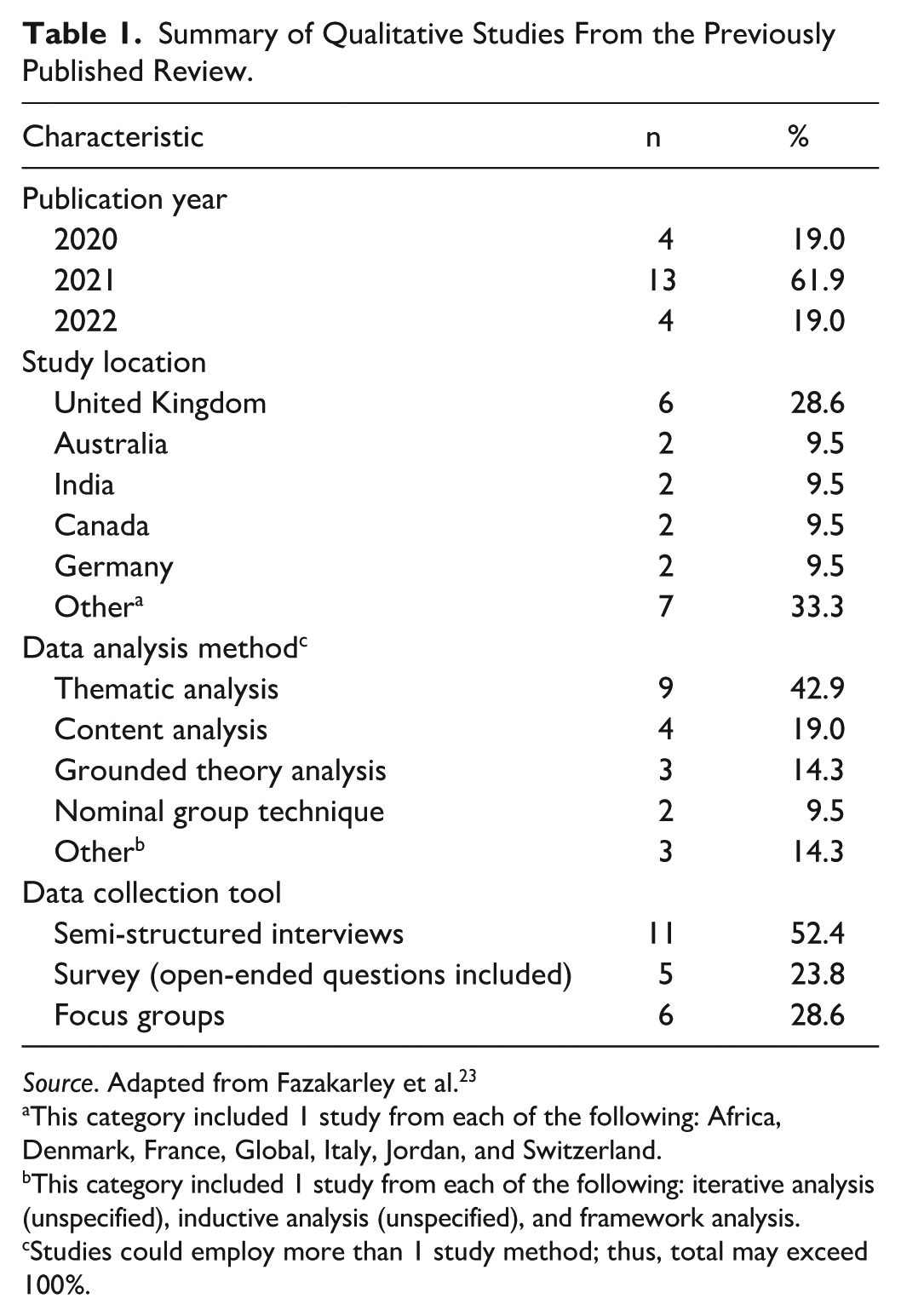

The 21 articles included in this pilot study, drawn from a published review of qualitative research, were published between 2020 and 2022, and had a median number of participants of 28 (range: 12-475). Participants included students, researchers, industry experts, healthcare providers, industry stakeholders, and regulators. Most studies used semi-structured interviews, thematic analysis, and were conducted in the United Kingdom (Table 1).

Summary of Qualitative Studies From the Previously Published Review.

Source. Adapted from Fazakarley et al. 23

This category included 1 study from each of the following: Africa, Denmark, France, Global, Italy, Jordan, and Switzerland.

This category included 1 study from each of the following: iterative analysis (unspecified), inductive analysis (unspecified), and framework analysis.

Studies could employ more than 1 study method; thus, total may exceed 100%.

Human and ChatGPT (GPT-5) Responses to CASP Checklist Items

When comparing human and ChatGPT (GPT-5) responses for each CASP checklist item, the highest alignment in ‘Yes’ responses occurred when assessing the value of research, statement of aims, and ethics (Table 2). In contrast, the greatest difference was observed in items assessing the appropriateness of research design and the researcher–participant relationship. Responses selected as ‘No’ showed limited alignment overall, with notable divergence for the researcher–participant relationship. For ‘Unclear’ responses, the strongest alignment was observed for rigorous data analysis, whereas the lowest alignment again appeared for the assessment of appropriate research design. See Supplemental 3 for the consolidated CASP quality appraisal results for all 21 included qualitative studies, showing side-by-side human and ChatGPT (GPT-5) responses for each checklist item (Q1–Q10).

Comparing the Responses Using the CASP Checklist Between Human and ChatGPT (GPT-5) Assessments of 21 Qualitative Studies.

Human and ChatGPT (GPT-5) Responses to JBI Checklist Items

When comparing results for JBI checklist items, the greatest alignment in ‘Yes’ responses was observed for participant voices represented, ethical approval, and the relationship of conclusions to analysis (Table 3). Objective–method congruity and design–data collection congruity also showed high agreement. In contrast, the greatest differences occurred for worldview–methodology congruity and design–analysis congruity, where ChatGPT (GPT-5) was more likely to classify items as ‘Yes’. For ‘No’ responses, partial alignment was observed for researcher orientation and the influence of the researcher on the research, although ChatGPT (GPT-5) more often shifted these assessments to ‘Unclear’. See Supplemental 4 for the consolidated JBI quality appraisal results for all 21 included qualitative studies, showing side-by-side human and ChatGPT (GPT-5) responses for each checklist item (Q1–Q10).

Comparing the Responses Using the JBI Checklist Between Human and ChatGPT (GPT-5) Assessments of 21 Qualitative Studies.

Agreement Between Human and ChatGPT (GPT-5) Assessments

Cohen’s kappa coefficients indicated levels of agreement between ChatGPT (GPT-5) and the human reviewers ranging from none to moderate (<0.00-0.46) for CASP items and none to substantial (<0.00-0.83) for JBI items (Table 4). To address checklist items where little within-reviewer variability prevented kappa calculation, crude percent agreement was also reported, spanning from as low as 23.8% to as high as 100% across CASP items, and from 4.8% to 95.2% across JBI items. Highest crude agreement was observed for CASP item 10 (value of research, 100%) and JBI items 3 (Design and data collection method congruity) and 8 (Participant voices represented, 95.2%), while the lowest was seen for CASP item 3 (Appropriate research design, 23.8%) and JBI item 1 (Worldview-methodology congruity, 4.8%).

Level of Agreement Between Human and Generative AI Assessments, Demonstrated Through Cohen’s Kappa Coefficients and Crude Percent Agreement (%).

Some interrater reliability measures could not be calculated due to homogeneous responses across studies.

Discussion

The potential applications of genAI are rapidly expanding, with diverse sectors integrating these tools to enhance workflow efficiency. In research, tools such as RobotReviewer have been explored for their ability to automate risk of bias assessment in randomized controlled trials, 27 while software like NVivo has embedded auto-coding features for theme and sentiment identification. 28 In particular, the rapid integration of genAI tools and their contributions to the systematic review process have highlighted the success of genAI tools in assisting research processes.29,30 Notably, systematic review platforms such as DistillerSR and Nested Knowledge have incorporated AI-based tools to assist with early phases of reviews, such as data extraction, searching, and screening. 31 In particular, DistillerSR, a reference management software, integrates AI into their screening and data extraction capabilities, 32 with promise shown for screening efficiency. 33 However, the application of genAI in more interpretative processes, such as quality appraisal, remains uncertain. In this pilot study, ChatGPT (GPT-5) was used to evaluate how well it performed quality appraisal of qualitative studies, and results were compared to those of human reviewers. Future research should compare ChatGPT with other large language models to assess performance patterns.

Our findings demonstrate that ChatGPT (GPT-5) makes sound decisions when identifying simpler, descriptive components. For instance, there was favorable crude agreement for study aims and value of research (CASP), and for participant voices, ethical approval, and conclusions (JBI). These findings suggest genAI tools may best support researchers in identifying key study components that are primarily reporting-based and require less subjectivity. Notably, the efficiency with which these assessments are performed may also provide significant advantages for streamlining the reporting checklist process and ensuring researchers are reporting key elements of qualitative research. A scoping review exploring resource use during systematic reviews identified quality appraisal as a highly resource-intensive task. 34 Studies have investigated the use of quality assessments in systematic reviews and found an increase in the adoption of such assessments in qualitative and quantitative evidence syntheses despite the number and subjectivity of appraisal tools available.35-37 However, there continue to be concerns around the sufficient integration of quality assessments within the overall synthesis, especially inherent challenges with more interpretative methods such as meta-ethnography.35,37 Thus, there is a need to revisit the use of genAI to conduct quality appraisal and further explore its use and effectiveness in conducting reporting checklists for qualitative studies.

Our findings also highlighted differences between the 2 appraisal tools. CASP items more often targeted clear reporting elements (e.g., study aims, ethics, value of research), where ChatGPT (GPT-5) achieved higher alignment with human reviewers. In contrast, the JBI checklist places greater emphasis on methodological congruity (e.g., worldview–methodology alignment, design–analysis coherence), which are inherently more interpretive. This distinction is consistent with prior comparative analyses of appraisal tools, such as Hannes et al, 38 who observed that CASP is less sensitive to deeper aspects of validity, while JBI’s emphasis on congruity provides greater coherence in assessing interpretive and theoretical dimensions. These distinctions may help explain why agreement ranged from none to moderate for CASP, but from none to substantial for JBI. Appraisal tools differ not only in structure but also in the types of validity they emphasize, 38 with our findings suggesting that genAI tools are more effective in supporting reporting-based assessments but remain limited in making judgments that require contextual or nuanced interpretation. Human reviewers remain essential for addressing the more interpretive dimensions of qualitative appraisal.

Recognizing that quality appraisals often require nuanced judgment and contextual understanding, we identified widespread limitations with the application of genAI for quality appraisal using both CASP and JBI qualitative checklists. Even when provided with the full explanatory notes from the CASP and JBI checklists, ChatGPT (GPT-5) remained inconsistent, with only minor changes observed in item-level outputs. This suggests that limitations are not solely prompt-dependent but instead reflect challenges with applying nuanced, interpretive judgment. When encountered with interpretative and context-dependent components, most notably appropriate research design and the researcher–participant relationship (CASP), and worldview–methodology congruity and design–analysis congruity (JBI), ChatGPT (GPT-5) showed poor agreement with human reviewers. In these areas, ChatGPT (GPT-5) often shifted responses toward ‘Yes’ or ‘Unclear’, diverging from human reviewers. The tool may have struggled with such elements due to multiple reasons. First, the subjective nature of the CASP and JBI checklists 17 may have presented challenges for the tool. For instance, the fixed response options of the CASP tool often lack the flexibility needed to accommodate nuanced interpretation, 17 which may have made it difficult for genAI to exercise contextual judgment when appraising the qualitative studies. Second, the limitations in the reasoning depth of genAI may also pose challenges for interpreting nuanced items. 39 A study comparing the concordance and speed of information extracted by ChatGPT-3.5 Turbo against human extraction methods found that the model was effective in extracting simple information, but researcher involvement was required for complex tasks. 40 Similarly, although a study evaluating the comprehension of 6 LLMs in summarizing medical papers found that ChatGPT-3.5 excelled in identifying funding information, which the authors note requires a nuanced understanding of elements embedded at the end of the papers, it also observed that LLMs demonstrated weaker performance on items requiring larger methodological interpretation. 14 We did not analyze the AI-generated justifications, which may provide further insights into model reasoning and limitations in nuanced judgment.

Based on our findings, the research team developed the following recommendations through consensus discussions, drawing on our expertise in evidence synthesis and in the governance and application of genAI in public health. These recommendations are intended to inform future work on the use of genAI in the quality appraisal of qualitative research:

Recommendation 1: Human Oversight and Additional Evaluation Are Necessary

Human oversight remains critical to the application of genAI in quality appraisal. Studies have repeatedly demonstrated the importance of human oversight in research processes, including systematic reviews 30 and in the creation of plain language summaries 41 to ensure the validity of genAI outputs. Our study adds to this knowledge base and stresses the importance of human oversight when adopting genAI tools, particularly in qualitative research, where interpretative and context-dependent components are highly prevalent. In particular, our analysis highlights the limitations of genAI when faced with subjective context. Additional testing and evaluation are needed to examine how such tools perform across a wider variety of review topics and contexts. Based on this pilot study, genAI-assisted assessments are too inconsistent to be reliable for adoption in qualitative reviews. If used, they should be subjected to human oversight to ensure accuracy and contextual appropriateness. Human oversight should be undertaken by researchers with the appropriate expertise to critically assess the output and its relevance to the intended use. At this stage, the greatest utility of genAI quality assessment would likely be in rapid review contexts where resources and timelines might normally allow only 1 human reviewer. While our analysis focused on agreement across checklist items, we did not conduct a qualitative coding of the 1-sentence justifications generated by ChatGPT (GPT-5). Future research should systematically analyze these justifications for accuracy, relevance, and depth, as this may provide richer insights into model reasoning. Ultimately, genAI tools should complement the researcher’s efforts.

Recommendation 2: Transparent Reporting Remains Essential for Rigor and Trust in Qualitative Research

Transparent reporting indicating where and how genAI is applied in the qualitative synthesis process ensures the rigor of qualitative research. This is particularly important given the skepticism surrounding genAI adoption, largely due to unseen algorithmic activity that produces outcomes without clear explanation.42,43 In addition, maintaining transparency ensures rigor and trust, aligning with best practices in qualitative research. 44

Recommendation 3: Caution Should be Exercised With Regard to Data Privacy

Caution is warranted when adopting genAI tools in qualitative research, as uploading non-open-access or unpublished full-text studies may compromise privacy and pose ethical risks. Oftentimes, AI platforms process and store user data, and using copyrighted or subscription-based material may breach ethical or legal guidelines. 45 Therefore, to mitigate these concerns, researchers should avoid inputting restricted content into public AI platforms and consult AI data policies and institutional guidelines.

Recommendation 4: The Development of a GenAI-Compatible Quality Appraisal Tool May Enhance Integration of GenAI in Qualitative Quality Appraisal

Current quality appraisal frameworks were not designed to accommodate the application of genAI. In particular, this study demonstrates the constraints of applying genAI to quality appraisal, which requires nuance and contextual understanding. The protocol guiding the development of QUADAS-AI, an AI-specific extension of QUADAS, acknowledges that addressing the concerns of AI is central to developing tools that can effectively perform the desired task. 46 Therefore, we recommend exploring the development of validated quality appraisal tools for rapid reviews as a first step that prioritizes AI compatibility. These tools should address the limitations of current AI systems and incorporate features that reduce ambiguity in interpretation. Addressing these limitations can enhance the efficiency, accuracy, and reliability of AI-assisted quality appraisal.

Conclusions

This pilot study reveals that genAI may not be an appropriate standalone replacement for quality appraisal of qualitative research using existing qualitative checklists. However, its integration can be considered alongside human oversight. Our study demonstrated low human-genAI reliability to effectively apply the checklists across nuanced and context-dependent items. However, ChatGPT (GPT-5) showed promising results in applying the checklist for items that were reporting-based and required little depth such as study aims. Future research is needed to explore the capacity of genAI tools to apply reporting checklists, and to evaluate the capacity of existing genAI tools to critically appraise qualitative studies across broader contexts and settings. This will further our understanding of the strengths and limitations of genAI in the quality appraisal process, which will be critical for developing and refining tools that can effectively support evidence synthesis.

Supplemental Material

sj-pdf-1-inq-10.1177_00469580251399374 – Supplemental material for A Pilot Study on Generative Artificial Intelligence’s Reliability in Qualitative Research Quality Appraisal Using CASP and JBI Checklists

Supplemental material, sj-pdf-1-inq-10.1177_00469580251399374 for A Pilot Study on Generative Artificial Intelligence’s Reliability in Qualitative Research Quality Appraisal Using CASP and JBI Checklists by Hisba Shereefdeen, Abhinand Thaivalappil, Ian Young and Melissa MacKay in INQUIRY: The Journal of Health Care Organization, Provision, and Financing

Footnotes

Ethical Considerations

There are no human participants in this article and informed consent is not required.

Author Contributions

Conceptualization (MM and AT), Methodology (HS, AT, IY, and MM), Formal analysis (AT), Investigation (HS, AT, and MM), Writing – original draft (HS), Writing – review & editing (HS, AT, IY, and MM), Resources (MM), Supervision (AT and MM), and Validation (AT, IY, and MM). All authors have read and approved the final manuscript.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article. One author is employed at the Public Health Agency of Canada (PHAC). The work was not undertaken under the auspices of PHAC as part of their employment responsibilities. Any views expressed therein are not those of PHAC.

Data Availability Statement

Supplemental Material

Supplemental material for this article is available online.

References

Supplementary Material

Please find the following supplemental material available below.

For Open Access articles published under a Creative Commons License, all supplemental material carries the same license as the article it is associated with.

For non-Open Access articles published, all supplemental material carries a non-exclusive license, and permission requests for re-use of supplemental material or any part of supplemental material shall be sent directly to the copyright owner as specified in the copyright notice associated with the article.