Abstract

Community participation is an important area of rehabilitation research. However, accurately capturing individual experiences with participation is difficult using conventional survey approaches. Biases in self-report and lack of contextual information are leading threats to understanding participation and how individual circumstances influence outcomes. Ecological Momentary Assessment (EMA) addresses weaknesses in conventional data-collection approaches and could enhance participation research, but it presents the potential for high participant burden and low compliance, which threaten data quality. The purpose of this paper is to present findings from a proof-of-concept EMA study focused on academic engagement as a form of community participation in a sample of college students enriched for disability. We report initial feasibility and validation results comparing momentary data with conventional measures of academic engagement. Finally, we discuss potential applications of this method in participation outcome research and rehabilitation practice.

Keywords

Rehabilitation researchers, like other behavioral scientists, are interested in questions regarding experiences and behaviors in real-life contexts. However, efforts to understand community participation outcomes are significantly hindered by research approaches that do not capture data within an individual’s daily life. Instead, insights are often limited to summary and retrospective reports on how an individual typically feels or behaves without the benefit of environmental context. The value of understanding environmental context for rehabilitation research is referred to as a foundational principle (Dunn et al., 2016), with prominent rehabilitation scholars centering the importance of environment, climate, and interactions in their work (Dunn et al., 2016; Engel, 1977). Reflecting ecological validity and environmental context in participation research remains challenging.

The International Classification of Functioning (ICF) model introduced by the World Health Organization in 2001 has gained significant attention in rehabilitation research, particularly its emphasis on the need to understand activities and community participation as the expression of how and when individuals can perform activities that are important to them in their daily lives. Participation is defined most simply as “involvement in a life situation” (World Health Organization, 2001, p. 16). Environmental and contextual factors can serve as barriers and facilitators to an individual’s behavior and resulting participation outcomes (Badley, 2008). The ICF taxonomy delineates possible life areas to be assessed and understood in participation research, including (a) learning and applying knowledge, (b) communication, (c) mobility, (d) self-care, (e) domestic life, (f) interpersonal relationships, and (g) community, social, and civic life (Resnik & Plow, 2009). These life areas are highly valued, and they are areas where individuals with disabilities experience reduced participation or even exclusion. Participation outcomes are highlighted as crucial to individuals with disabilities and their family members, with significant implications for quality of life (Resnik & Plow, 2009).

Defining, operationalizing, and measuring participation in rehabilitation research has been a perennial challenge (Dijkers, 2010; Imms et al., 2016; Mallinson & Hammel, 2010; Whiteneck & Dijkers, 2009). Despite these challenges, participation remains critically important as an outcome of rehabilitation interventions (Imms et al., 2016; Kossi et al., 2024; Seekins et al., 2012). Researchers have emphasized the importance of clarity, of both subjective and objective viewpoints, and of the interaction of person and environment in recording participation data. Participation data are collected through survey instruments, either self-report or observer report, providing a point-in-time estimate of a person’s daily or typical routines and interactions. Several instruments are available across multiple life domains (mobility, domestic life, social interactions, and community life; Tate et al., 2013), with most demonstrating moderate to good reliability (Magasi & Post, 2010). Subjective measures are typically limited to asking respondents to indicate their level of difficulty performing activities, barriers and facilitators to participation, and general frequency and satisfaction with activities and participation (Resnik & Plow, 2009). The objective measurement tools typically ask for individual or observer ratings of engagement and independence with a set of participation activities (Chang et al., 2013; Magasi & Post, 2010). Both approaches lack precision regarding frequency of activities and comparison to a group norm (Chang et al., 2013). In addition, these instruments do not illuminate how a person behaves in or experiences their natural environment, and they rely on participants to recall and quantify what has been typical for them over a period ranging from weeks to months (Chang et al., 2013).

Evidence suggests that data collected using measures that rely on retrospective reports are fraught with inaccuracy and bias (Shiffman et al., 2008). Questions that ask individuals to recall their experiences or behavior over a time period (e.g., 7 days, 4 weeks) are difficult to answer and force respondents to use a variety of estimation strategies, with answers often biased by their current state or more recent events (Schwarz, 2007). It is particularly difficult to recall the details of behaviors and experiences that are routine, providing researchers with flawed data despite the best intentions and efforts of respondents. Respondents may also overestimate or underestimate their engagement with target behaviors (e.g., exercising; Nicolson et al., 2018) or experience of pain or symptoms (Van den Bergh & Walentynowicz, 2016).

Relying on retrospective data collected through surveys that ask participants about their behavior or experiences “limits our ability to accurately characterize, understand, and change behavior in real-world settings, and misses the dynamics of life as it is lived, day-to-day, hour by hour” (Shiffman et al., 2008, p. 3). Behaviors and experiences within a regular day and an individual’s real-life context are where participation is best captured and understood. While information on an individual’s satisfaction, or on how much difficulty they have or assistance they need in performing these life activities, can be helpful, more objective and accurate data on how often, when, and under what conditions they perform desired activities are needed. Shifting to models that allow for within-person analysis of participation patterns, including individual reports of function and affect and contextual influences that appear to hinder or facilitate participation behavior, provides significant opportunities for research and intervention development.

Value of EMA for Rehabilitation Research and Understanding Participation

Ecological Momentary Assessment (EMA) is a data-collection method that allows capture of behaviors and subjective experiences through experience sampling over the course of a typical day or other designated time period (de Vries et al., 2021; Stone et al., 2007). Experience sampling provides a method of systematic evaluation of subjective experiences and behaviors that improves our ability to understand participation (Dijkers, 2010). A variety of data-collection strategies can be encompassed under EMA, including daily diary, behavioral observation, self-monitoring, experience sampling, and other monitoring methods. These methods have existed for nearly a century, with daily diary studies dating back to the 1920s (de Vries et al., 2021). Strategies range from very high-tech, such as continuous blood pressure or other physiological monitoring, to very low-tech, where an individual is called at the end of the day to answer a few questions or asked to take notes on index cards a few times a day to report activities of interest. The common features of EMA include repeated assessments very close in time to the behavior or event of interest, collected over a period of time, within the individual’s real-world context (Stone et al., 2007).

EMA addresses known limitations in research on community participation outcomes, particularly those related to poor recall or biased responses from participants (Shiffman et al., 2008) and a lack of ecological validity of measures (Schwarz, 2007; Trull & Ebner-Priemer, 2009). EMA can improve data quality by capitalizing on distributed, high-frequency assessment designs (e.g., measurement burst design studies; Nesselroade, 1991). Capturing responses over time allows for understanding of patterns of behavior rather than a simple point-in-time assessment that may or may not be representative of a person’s actual state. While EMA still relies on participant self-report, asking an individual to report their current state, or whether an event or experience occurred within the last few hours, reduces the potential for bias and difficulty with recall. However, EMA is not common in disability or rehabilitation research, despite demonstrated usefulness in many disability populations (Gromatsky et al., 2020; McKeon et al., 2018; Porras-Segovia et al., 2020). Drawbacks of EMA include the potential for high participant burden (i.e., requests to respond to prompts multiple times per day for several days) and threats to data quality if compliance is low (de Vries et al., 2021). Best practices suggest that brief assessments and efforts to limit the steps needed to complete assessments can reduce these risks (Smyth et al., 2017).

EMA also opens the door for improved intervention deployment and monitoring in rehabilitation counseling settings. Ecological momentary interventions (EMIs) are considered to be an extension of EMA, allowing the observations drawn from within-day responses to inform practitioners of what kind of intervention may be needed, and when it would be most likely to have a positive impact (Bell et al., 2017). EMA and EMI have been used clinically, for example, to monitor symptoms of individuals post-concussion and their compliance with treatment (Elbin et al., 2025), cravings and their impact on relapse among people in recovery (Moore et al., 2014), and in treatment of mental health disorders (Bell et al., 2017).

The ENGAGE Study of Academic Engagement

ENGAGE is an EMA study of college students focused on the everyday factors surrounding academic engagement. Academic engagement is an important community participation outcome to capture, as it represents a necessary component of fulfilling a social role as a student (Chang et al., 2013; Whiteneck & Dijkers, 2009). Engagement behaviors are defined as “energized, directed, and sustained actions” toward learning activities (Skinner et al., 2009, p. 225), and behaviorally defined as the “amount and quality” of time spent in learning activities, sustaining attention, and avoiding distraction and disruption in academic spaces (Hoi, 2024, p. 2).

Studies on academic engagement are an important step to understanding persistence and academic performance among college students with disabilities. Students with disabilities represent approximately 20% of the college student population (National Center for Education Statistics, 2023), but this figure is certainly an underestimate, as only about 37% of disabled students disclose their disability to their academic institution (National Center for Education Statistics, 2022). Data regarding academic performance and completion rates of students with disabilities are mixed (Kutscher & Tuckwiller, 2019), suggesting that there is significant variation among this population in their post-secondary outcomes. From an economic and social standpoint, completing a post-secondary degree provides individuals with greater employment stability and earning potential (Bureau of Labor Statistics [BLS], 2023, 2024). Post-secondary education also provides significant opportunities for friendships and engaging with leisure and recreational activities. However, students vary in their engagement with the academic and social environment, and the degree to which students engage can influence persistence and performance (Casanova et al., 2024; Hoi, 2024; Kutscher & Tuckwiller, 2019; Mamiseishvili & Koch, 2011). While grades are the most common metric associated with academic outcomes, long-term academic success is realized through daily engagement in attending class and studying (Hoi, 2024). The ENGAGE study utilized a measurement burst design to monitor college students’ academic engagement behaviors (i.e., studying, attending class) over a typical week during a semester.

The ENGAGE study provides proof-of-concept, leveraging EMA to address questions related to behavioral engagement in meaningful life activities and participation and contextual factors that support or inhibit individual performance. However, EMA introduces complexities to studies (de Vries et al., 2021), and important questions remain about the utility of EMA in participation research. The following questions were explored:

Methods

Participants

Participants were recruited from university list-servs in the Penn State College of Education and Student Disability Resources. Undergraduate students who were currently enrolled in courses at Penn State University, >18 years of age, were included in the study. Participants were excluded if they were unable to independently complete the EMA surveys or not fluent in English. A total of N = 108 students were enrolled in the study (see Results section for demographic information). All participants provided written informed consent and received compensation for their participation. All experimental procedures were approved by Pennsylvania State University’s Institutional Review Board for the ethical treatment of human participants.

Materials and Procedures

The study protocol involved two phases: (a) an initial in-person consent and study onboarding visit, where participants were given an overview of the study, were acquainted with the study materials, and completed full-length surveys on a study tablet; and (b) a 7-day EMA measurement burst, completed on study-provided smartphones following the in-person session.

EMA Protocol

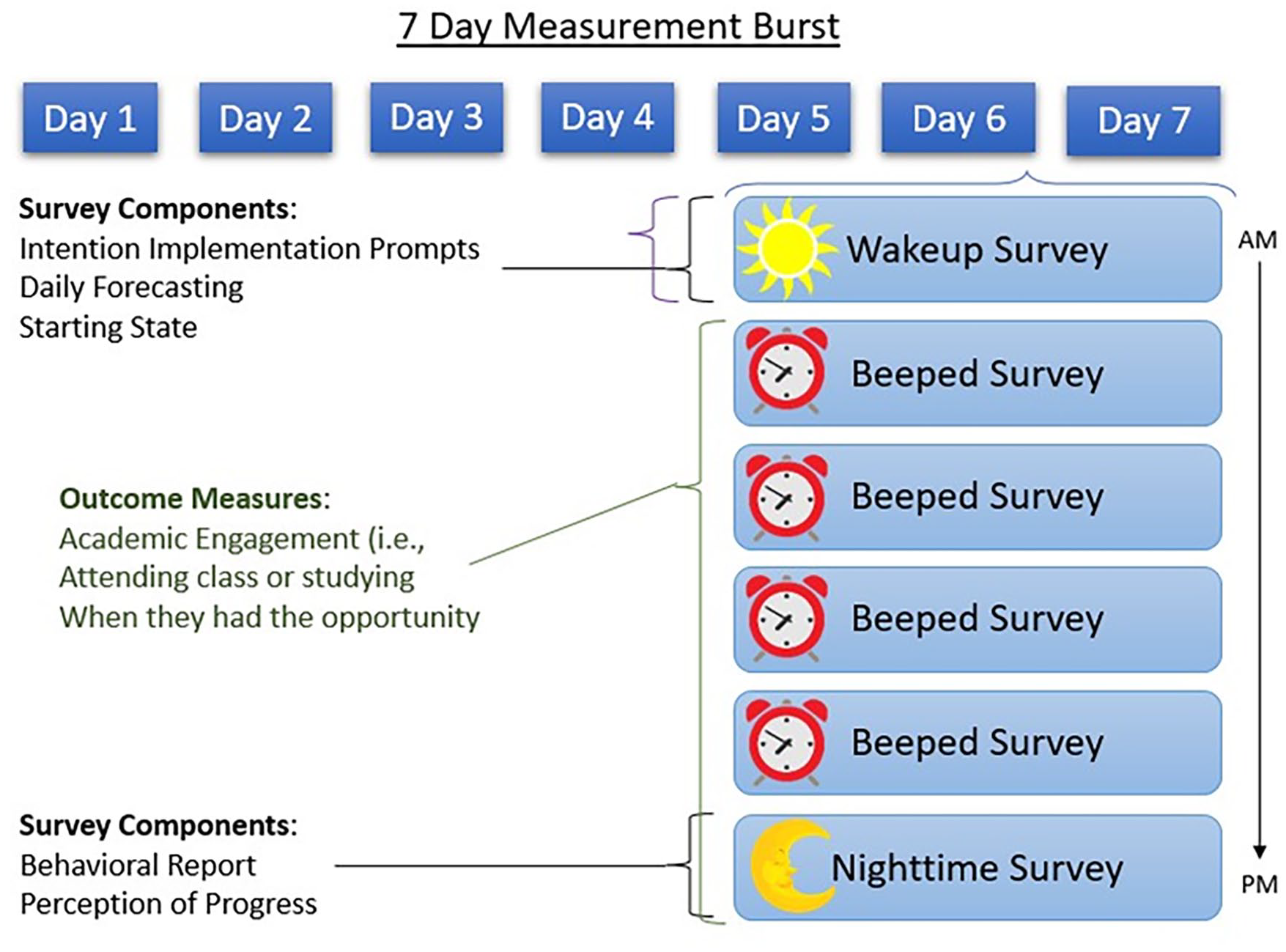

Data for the ENGAGE study were collected on investigator-provided phones. The phones were configured into “kiosk” mode, limiting participant access to phone features to the study application only. A custom Android EMA mobile application was loaded onto the investigator-provided phones and used to administer the EMA protocol. The EMA protocol was conducted over 7 days in a measurement burst design (Smyth et al., 2017). The 7 days included 5 weekdays and 2 weekend days. Each day in the measurement burst involved one self-initiated morning survey, followed by four pseudo-randomly signaled “beeped” surveys, followed by one self-initiated nighttime survey (see Figure 1 for a visual depiction of the protocol). At the start of the study, participants provided their typical waking time. From this information, the EMA protocol was programmed for each participant so that their first beeped survey would be delivered at a random time within 2 hours of their self-reported typical morning waking time. Subsequent notifications were separated by an average of 3.75 hours and pseudo-randomly jittered around this interval by up to 30 minutes. The notification schedule was designed to provide relatively even coverage of a day with small variations in survey notifications to avoid a predictable schedule. The nighttime survey was to be completed as close to the end of the person’s day as possible, but before midnight. Academic engagement was assessed in the beeped surveys and the nighttime survey by asking students to report on academic engagement behaviors since the last survey. The primary analysis, focused on academic engagement behaviors, made use of the four beeped surveys and daily nighttime surveys. The morning surveys were not used because engagement behavior data were not collected.

Figure 1. Visual Depiction of the EMA Protocol
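The notification schedule described above can be sketched in a few lines of Python. This is a minimal illustration, not the study's Android application: the function name `beep_schedule`, the use of uniform random draws, the reading of "within 2 hours of" as "up to 2 hours after" waking, and the application of the 30-minute jitter to each gap are all assumptions made for the example.

```python
import random
from datetime import datetime, timedelta

BEEPS_PER_DAY = 4            # four signaled ("beeped") surveys per day
FIRST_BEEP_WINDOW_MIN = 120  # first beep within 2 hours of typical waking time
MEAN_GAP_MIN = 225           # 3.75 hours between notifications, on average
JITTER_MIN = 30              # each gap is jittered by up to 30 minutes


def beep_schedule(wake_time, rng):
    """Return one day's beep times for a participant (hypothetical helper).

    Assumption: draws are uniform; the source only says "pseudo-randomly".
    """
    first = wake_time + timedelta(minutes=rng.uniform(0, FIRST_BEEP_WINDOW_MIN))
    times = [first]
    for _ in range(BEEPS_PER_DAY - 1):
        gap = MEAN_GAP_MIN + rng.uniform(-JITTER_MIN, JITTER_MIN)
        times.append(times[-1] + timedelta(minutes=gap))
    return times


if __name__ == "__main__":
    # Hypothetical participant who typically wakes at 8:00 a.m.
    wake = datetime(2024, 1, 8, 8, 0)
    for t in beep_schedule(wake, random.Random(2024)):
        print(t.strftime("%H:%M"))
```

Passing a seeded `random.Random` instance keeps a participant's schedule reproducible, which is convenient when checking that a generated schedule covers the day without becoming predictable.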

Measures

Demographics

Participants were asked to identify their gender, race and ethnicity, age, year in school, and disability status and description.

Academic Engagement

Engagement was measured using a full-length inventory administered on the study tablet during the onboarding session and with eight items included in the EMA protocol (see below).

Student Course Engagement Questionnaire

The SCEQ was used to measure academic engagement (Handelsman et al., 2005). The SCEQ includes 23 items and is multidimensional, with four factors of engagement: Skills (nine items), emotional (five items), participation/interaction (six items), and performance (three items). Respondents are asked to consider how the statements relate to their general approach to coursework. Items are rated on a scale of 1 (not at all like me) to 5 (very much like me). Sample items include, “I make sure to study on a regular basis,” “I ask questions when I do not understand the instructor,” and “I am organized.” A full-scale score was calculated for each participant. Internal consistency in our sample was α = .89.

EMA of Academic Engagement

EMA of academic engagement included eight items focused on behaviors associated with attending class and studying. Participants were asked during each beeped and nighttime survey whether they had an opportunity to attend class (“Since the last survey, have you had class?”) or study (“Since the last survey, have you had time to study?”) during the period of time leading up to the current survey. A “Yes” response to either question branched to three engagement follow-up questions related to the specific behavior (class attendance/studying episode). A “No” response to either question branched to balancing questions about time spent socializing, engaging in self-care, and resting. Class engagement follow-up questions included: In class, “I was attentive,” “I participated (for example, by taking notes, asking questions, or adding to discussions),” and “I was distracted (for example, phone, laptop, daydreaming, talking).” Study engagement questions included “I prepared myself for upcoming classes,” “I organized myself to stay on top of schoolwork,” and “I reviewed, studied, or read for class.” Engagement behaviors were rated on a 100-point visual analog slider scale of 0 (not at all) to 100 (extremely). These indicators of engagement mirrored those found on cross-sectional measures of engagement such as the SCEQ described earlier.

End of Day Satisfaction Ratings

The nighttime survey included a daily wrap-up, asking participants to rate their perceptions of their day “overall.” A single item related to satisfaction with progress toward academic goals was included: “I am happy with the amount of schoolwork I got done.” This item was rated on a 100-point visual analog slider scale of 0 (not at all) to 100 (extremely).

Computed Variables

Composite academic engagement variables for studying and class were computed by averaging the three items that participants answered about their engagement in studying and class. For studying engagement, we added their ratings for being organized, prepared, and up to date and divided by three. For the class engagement composite, we added participant ratings of attentiveness, participation, and the inverse of distraction and divided by three. A total academic engagement composite score was created by averaging the class and studying composite scores.
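The composite scoring can be illustrated with a short Python sketch. The function names are hypothetical, and one detail is an assumption: "the inverse of distraction" is read here as reverse-scoring on the 0 to 100 slider (100 minus the distraction rating), which the text does not state explicitly.

```python
from statistics import mean

SLIDER_MAX = 100  # ratings use a 0 (not at all) to 100 (extremely) slider


def class_composite(attentive, participated, distracted):
    """Average of attentiveness, participation, and reverse-scored distraction.

    Assumption: "inverse of distraction" means SLIDER_MAX - distracted.
    """
    return mean([attentive, participated, SLIDER_MAX - distracted])


def study_composite(prepared, organized, reviewed):
    """Average of the three study-engagement ratings."""
    return mean([prepared, organized, reviewed])


def total_composite(class_score, study_score):
    """Average of the class and studying composite scores."""
    return mean([class_score, study_score])


if __name__ == "__main__":
    # Hypothetical ratings, not study data.
    c = class_composite(attentive=80, participated=60, distracted=40)
    s = study_composite(prepared=60, organized=70, reviewed=80)
    print(round(c, 1), round(s, 1), round(total_composite(c, s), 1))
```

Reverse-scoring keeps all three class items pointing in the same direction, so a higher composite always indicates more engagement.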

Statistical Analysis

Prior to analysis, data were examined for completeness and quality. All data management, descriptives, and analyses were conducted using R version 4.3.2 (R Core Team, 2023) and RStudio version 2023.12.0 Build 369 (RStudio Team, 2023). Random effects, intercept-only, multilevel models were fit using the nlme package (Pinheiro et al., 2023). Multilevel reliability estimates were generated using the multilevel.reliability function, part of the psych package (Revelle, 2023).

Results

Descriptive Statistics

Participant Demographics and Compliance With the EMA Protocol

A total of N = 108 participants were enrolled in the study. Of these, seven participants provided a response to <50% of expected surveys and were excluded from subsequent analyses. Participants in the analysis sample (n = 101) were on average 21 years of age (SD = 3.41; range = 18–44 years), and 28% were male. In terms of race and ethnicity, 74% identified as White, 4% as Asian or Pacific Islander, 5% as Black or African American, 3% as Latinx, and 14% as more than one race. Students were distributed across academic levels: 12.7% were freshmen, 23.5% were sophomores, 35.3% were juniors, and 26.5% were seniors. Eighty students (79.2%) reported a disability; the remaining 20.8% indicated that they did not have a disability. Self-reported disabilities were later categorized into the following types: learning/attention (27.7%), mental health (20.8%), chronic physical health (9.9%), sensory (3.0%), traumatic brain injury/concussion (3.0%), and mobility (3.0%). Multiple disabilities were reported by 10.9% of participants.

Participants in the analysis sample (n = 101) provided, on average, 29 beeped and nighttime surveys (SD = 4.95, Min = 18, Max = 40), and the overall mean survey completion rate was 84% of expected surveys. Beeped and nighttime surveys were uniformly completed across days in the measurement burst such that, on average, participants responded to at least one beeped and nighttime survey during 6.80 of the 7 study days (SD = 0.55, Min = 4, Max = 7).
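As a rough illustration of the compliance screen, the sketch below applies the 50% threshold to per-participant survey counts. It assumes 35 expected surveys per participant (four beeped plus one nighttime survey on each of 7 days, following the protocol description); the exact denominator used in the study is not stated here, and the participant IDs and counts are hypothetical.

```python
EXPECTED_PER_DAY = 5   # four beeped surveys + one nighttime survey
STUDY_DAYS = 7
MIN_RATE = 0.50        # participants below 50% of expected surveys are excluded

# Assumed denominator: 5 surveys x 7 days = 35 expected surveys.
EXPECTED_TOTAL = EXPECTED_PER_DAY * STUDY_DAYS


def completion_rate(completed, expected=EXPECTED_TOTAL):
    """Proportion of expected surveys a participant completed."""
    return completed / expected


def analysis_sample(counts):
    """Keep participants whose completion rate meets the threshold."""
    return {pid: n for pid, n in counts.items()
            if completion_rate(n) >= MIN_RATE}


if __name__ == "__main__":
    # Hypothetical counts of completed beeped and nighttime surveys.
    counts = {"p01": 29, "p02": 17, "p03": 18}
    kept = analysis_sample(counts)
    for pid, n in kept.items():
        print(pid, n, f"{completion_rate(n):.0%}")
```

Under this assumed denominator, 18 completed surveys is the smallest count that clears the threshold, while 17 falls just below it.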

Academic Engagement, Satisfaction With Academic Progress, and GPA

The average rating of academic engagement on the SCEQ was M = 3.7 (SD = 0.5, Min = 1, Max = 5). During the EMA measurement burst, participants reported attending class on M = 23.3% (SD = 10.3) of occasions and studying on M = 27.8% (SD = 19.5) of occasions. Quality ratings of academic engagement during class attendance showed that participants, on average, gave high ratings of their class participation (M = 69.5, SD = 15.5) and attention (M = 68.1, SD = 15.0) and lower ratings of distraction (M = 42.0, SD = 16.0). Quality ratings of academic engagement related to studying showed that participants, on average, gave high ratings of preparation for class (M = 64.7, SD = 18.6), being organized (M = 65.3, SD = 18.9), and up to date on class materials (M = 69.1, SD = 19.3). Participants’ average end-of-day satisfaction with schoolwork rating was M = 46.5 (SD = 17.7). The average self-reported GPA for the sample was M = 3.18 (SD = 0.5).

Associations Between SCEQ, EMA Academic Engagement, GPA, and Satisfaction

We conducted a correlation analysis to examine associations between EMA-based measures of academic engagement and the full-length SCEQ inventory, as well as criterion associations with overall GPA and end-of-day ratings of satisfaction with academic progress. The SCEQ does not distinguish between facets of academic engagement during class and study. Therefore, we used the EMA composite total academic engagement score for these analyses. A significant, but weak, positive correlation was observed between the SCEQ and the EMA composite total academic engagement score (r = 0.32, p < .01).

Criterion associations between the SCEQ, EMA composite total academic engagement score, GPA, and end-of-day satisfaction ratings indicated that the SCEQ was more strongly associated with overall self-reported GPA (r = 0.41, p < .01) than the EMA composite (r = 0.12, p = .23). However, the EMA composite was more strongly associated with end-of-day satisfaction ratings (r = 0.46, p < .01) than the SCEQ (r = 0.27, p < .01).

Reliability of EMA Academic Engagement

Results of random effects, intercept-only, multilevel models suggested that approximately 74% of the variance observed in momentary reports of class-based academic engagement (intraclass correlation coefficient [ICC] = 0.26) and 52% of the variance in momentary reports of study-based academic engagement (ICC = 0.48) were within persons, over time. We conducted multilevel reliability analysis, based on Generalizability Theory (Cranford et al., 2006), to determine both the between-person and within-person reliability of these measures. For the class-based academic engagement composite, we observed between-person reliability of RBP = .96 and a within-person reliability of change estimate of RWP = .76. For the study-based academic engagement composite, we observed between-person reliability of RBP = .98 and a within-person reliability of change estimate of RWP = .66.
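The link between the ICC and the within-person share of variance is simple arithmetic: the within-person share is 1 minus the ICC (1 − 0.26 = 0.74 for class; 1 − 0.48 = 0.52 for study). The sketch below illustrates the idea with a one-way ANOVA estimator of the ICC for balanced data. This is a swapped-in, simplified technique for illustration only; it is not the random-intercept multilevel model fit with nlme in the study, and the function name and toy scores are hypothetical.

```python
from statistics import mean


def icc_oneway(scores_by_person):
    """One-way ANOVA ICC for balanced data (same number of scores per person).

    ICC = (MSB - MSW) / (MSB + (k - 1) * MSW), where MSB and MSW are the
    between-person and within-person mean squares and k is scores per person.
    """
    k = len(scores_by_person[0])
    n = len(scores_by_person)
    if n < 2 or k < 2 or any(len(s) != k for s in scores_by_person):
        raise ValueError("need >= 2 persons with the same number (>= 2) of scores")

    grand = mean(x for s in scores_by_person for x in s)
    person_means = [mean(s) for s in scores_by_person]

    msb = k * sum((m - grand) ** 2 for m in person_means) / (n - 1)
    msw = sum((x - m) ** 2
              for s, m in zip(scores_by_person, person_means)
              for x in s) / (n * (k - 1))
    return (msb - msw) / (msb + (k - 1) * msw)


if __name__ == "__main__":
    # Hypothetical momentary engagement ratings for three people.
    toy = [[60, 75, 50, 80], [40, 65, 55, 30], [85, 70, 90, 60]]
    icc = icc_oneway(toy)
    print(f"ICC = {icc:.2f}, within-person share = {1 - icc:.2f}")
```

A low ICC, as reported for class-based engagement, means most of the variation lies between occasions for the same person rather than between people, which is the kind of fluctuation a single retrospective survey cannot capture.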

Discussion

The ENGAGE study of academic engagement presents proof-of-concept for use of EMA to improve capture of participation experiences and behaviors by examining: (a) cross-sectional and ambulatory methods of understanding academic engagement, (b) engagement behavior data compared with static indicators of academic progress, and (c) the within-person variability of engagement behavior during a typical week. The following paragraphs will outline our findings as they pertain to our three research questions, and then we will discuss applications of the EMA method to rehabilitation practice, future research, and our study limitations.

We were able to recruit a sample that included a high proportion of students who identify as disabled, with diversity across academic status. Of the 108 enrolled, only seven were not considered compliant with the protocol (completed fewer than 50% of expected surveys). This degree of participant non-compliance is not uncommon in EMA research, and our results aligned with previous reports from EMA studies (de Vries et al., 2021; Williams et al., 2021). Among the remaining analysis sample, we saw excellent overall compliance with the protocol, suggesting that this approach may be feasible for studying participation in samples with equivalent time constraints and demands (e.g., school or work demands).

We found relatively weak correlations between ambulatory and cross-sectional measures of engagement. Correlations with GPA were stronger for cross-sectional measures of academic engagement than for ambulatory measures, whereas ambulatory measures of engagement had stronger relationships with end-of-day perceptions of progress than cross-sectional measures did. Taken together, it appears that more global, cross-sectional measures of engagement may reflect a student’s “career history” as a student (e.g., aligned with their GPA), and the momentary assessment of engagement is more reflective of effort put forth in a given day or even a part of a day. These findings suggest that different aspects of engagement are captured by applying the two methods. Academic engagement is observed to impact persistence and performance as early as the first semester (Hoi, 2024), with evidence suggesting that engagement in the first semester of college predicts performance in future semesters (Casanova et al., 2024). Waiting for end-of-term grades or retrospective accounts of engagement may be too late for students who are struggling academically, with potential consequences for their persistence. Particularly for students receiving academic-support interventions, understanding whether the students are using the delivered strategies and seeing improvements in the short term is valuable. The information obtained via momentary assessment may be more useful for monitoring students post-intervention and for documenting adherence and engagement in target behaviors (Dao et al., 2021). This more intensive view of students within their context is likely to be more timely and instructive than waiting for end-of-term grades or other outcome measures that might otherwise be used to assess intervention impact.

Multilevel estimates of reliability demonstrated stability of the ambulatory measures in our data. One advantage of EMA is the ability to repeatedly sample, which can result in highly sensitive data with high between-person reliability due to averaging. For both class-based and study-based academic engagement, we observed very high between-person reliability of these measures. Each exhibited somewhat lower within-person reliability (i.e., reliability of within-person change). This is common in EMA research and unsurprising (Nezlek, 2017), given that within-person reliability concerns the sensitivity of single assessments to changes that occur throughout the burst protocol. Within-person reliability, in this case, reflects both the effect size of change anticipated at the timescale measured (i.e., how much variation is expected on a survey-to-survey basis) and the precision of scores generated from the three individual items collected at each survey. On balance, because the study protocol involves many assessments (five assessments per day over seven days), lower estimates of within-person reliability are statistically tolerable (Nezlek, 2017).

Implications for Rehabilitation Research and Practice

Benefits of EMA to Rehabilitation Practice and Interventions

Capturing information outside of clinical settings, in the person’s natural environment, makes information available that may inform treatments and interventions (Dijkers, 2010; McKeon et al., 2018; Whiteneck & Dijkers, 2009). Criticisms of rehabilitation interventions include difficulty transferring learning to the settings and situations where it is needed most. The benefit of EMA to intervention development for disabled populations is articulated well by McKeon et al. (2018):

Assessment methods delivered through a more contextually relevant approach, in the real world, at the moment when difficulties are naturally experienced, and arguably, where assistance is most needed, may more accurately reflect the experiences and the needs of individuals with CID [Chronic Illness and Disability] (p. 975).

Understanding what experiences are, within the days and moments of daily life, helps us to garner a better picture of participation and what, if anything, could support more satisfying outcomes for individuals.

EMA has been used extensively with disability populations for clinical purposes outside of rehabilitation counseling (Elbin et al., 2025; Juengst et al., 2021; Porras-Segovia et al., 2020). Findings on the feasibility and acceptability of EMA with disability populations have been promising. For example, Porras-Segovia et al. (2020) retained individuals experiencing significant mental health symptoms (e.g., suicidal ideation) at rates that compared favorably with those of participants without mental health concerns. Elbin et al. (2025) observed good compliance and acceptability in their sample of adolescents post-concussion, who reported on their symptoms and adherence to practitioner recommendations. The ability of clients to report on their experiences, symptoms, and activities during a regular day or week provides rich data for counselors to use in assessment and treatment planning. For those delivering interventions, EMA can be leveraged to assess adherence and uptake of the intervention as an important fidelity measure.

Benefits of EMA to Participation Research

EMA provides an important opportunity for rehabilitation researchers and has been underutilized (McKeon et al., 2018). A known limitation of capturing information on participation outcomes are the broad nature of what participation is and how it is defined (Chang et al., 2013; Resnik & Plow, 2009; Whiteneck & Dijkers, 2009). Further, it is impossible to quantify information at the group level to be able to estimate whether observed participation is “good” or “bad,” or “adequate” or “inadequate” when the person’s context and typical behaviors are not taken into account or understood (Dijkers, 2010; Whiteneck & Dijkers, 2009). Leveraging our example from the ENGAGE study, we do not know the “right” amount of studying or class attendance for any participant. An innumerable number of personal and situational factors determine how much time spent engaging in class and studying will result in the optimal result for an individual. Engagement in academic tasks must also be considered within the context of the rest of a person’s life, and competes with social and self-care time, work, and maintaining other social roles such as friend or family member. Studies that rely on sample means assume that individuals are part of a homogeneous group and ignore individual differences in engagement behavior because of the underlying assumption that students are the same (Fisher et al., 2018). Person-oriented studies, capturing individual behavior including the day-to-day fluctuations in putting effort into attending class, paying attention and participating, and studying and preparing for class is where we are able to get a sense for what students do, when, and under what conditions (Fisher et al., 2018; Hoi, 2024). 
Using EMA, information on how time is spent, engagement in target behaviors, and contextual factors can all be recorded and considered within and between individuals, allowing for greater understanding of influences on performance of the target behavior over the course of the observation period.
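To illustrate what considering data within and between individuals can look like in practice, the following minimal sketch separates each momentary report into a person-level average (the between-person part) and a deviation from that average (the within-person part). The data, the function name `decompose`, and the use of study minutes as the outcome are hypothetical and are not drawn from the ENGAGE protocol or its analyses.

```python
from statistics import mean


def decompose(records):
    """Split momentary reports into between- and within-person parts.

    records: list of (participant_id, value) pairs, one per completed survey.
    Returns (person_means, deviations), where person_means maps each
    participant to their own average and deviations lists each report as
    (participant_id, value minus that participant's average).
    """
    by_person = {}
    for pid, value in records:
        by_person.setdefault(pid, []).append(value)

    # Between-person component: each participant's typical level.
    person_means = {pid: mean(values) for pid, values in by_person.items()}

    # Within-person component: how each moment departs from that typical level.
    deviations = [(pid, value - person_means[pid]) for pid, value in records]
    return person_means, deviations


# Hypothetical study minutes reported at each prompt by two participants.
records = [("A", 20.0), ("A", 40.0), ("B", 0.0), ("B", 10.0), ("B", 20.0)]
person_means, deviations = decompose(records)
print(person_means)
print(deviations)
```

In this framing, the person means describe differences between students, and the deviations describe when a given student is doing more or less than is typical for them, which is the information that sample means alone discard.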

Rehabilitation researchers applying EMA methods to participation research will need to consider the target behavior in question, the sample characteristics, and context when developing EMA studies. Questions to be addressed in study design include the estimated number of days of observation needed to ensure that the target behavior is observed with sufficient frequency, the number of assessments per day needed to ensure sufficient coverage, and how to balance coverage with concerns about participant burden and compliance. An additional consideration is the development of items and surveys that align with the capture of experiences and behaviors. This may mean modifications to existing measures, or generation of new items where appropriate. Items written to capture general trends or perceptions must shift to the EMA style, in which participants are asked to report concrete observations of what has occurred or how they feel at the moment. In the ENGAGE example, we consulted definitions of engagement and conventional measures of engagement to determine how to assess engagement behaviors throughout a day (Handelsman et al., 2005; Hoi, 2024). Modifications were made to shift the frame of reference from what a participant generally does to what they did in the last few hours. For example, we modified questions on general study habits (Handelsman et al., 2005) to ask whether a study session occurred in a given time frame and to elicit reflections on session quality. These shifts are minimal and allow for documentation of activity during a set time window, reducing the risk of biased responses based on typical behavior or self-perception of how a participant performs as a student (Shiffman et al., 2008).
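The trade-off among days of observation, assessments per day, and compliance can be explored with simple arithmetic before a protocol is finalized. The sketch below is a rough planning aid under stated assumptions, not the procedure used in ENGAGE: it assumes that each prompt is answered independently with a fixed compliance rate and that the target behavior occurs in a fixed proportion of answered prompts. The function names and all example values are hypothetical.

```python
import math


def expected_observations(days, prompts_per_day, compliance, behavior_rate):
    """Expected number of answered prompts in which the target behavior is reported.

    compliance: assumed proportion of prompts answered (0 to 1).
    behavior_rate: assumed proportion of answered prompts in which the
    target behavior occurred (0 to 1).
    """
    return days * prompts_per_day * compliance * behavior_rate


def days_needed(target_observations, prompts_per_day, compliance, behavior_rate):
    """Smallest whole number of days expected to yield the target count."""
    per_day = prompts_per_day * compliance * behavior_rate
    if per_day <= 0:
        raise ValueError("Expected yield per day must be positive.")
    return math.ceil(target_observations / per_day)


# Hypothetical design: 4 prompts per day, 75% compliance, behavior reported
# in 25% of answered prompts, and a goal of 10 observed occurrences per person.
n_days = days_needed(10, prompts_per_day=4, compliance=0.75, behavior_rate=0.25)
yield_at_n_days = expected_observations(n_days, 4, 0.75, 0.25)
print(n_days, yield_at_n_days)
```

Varying the number of prompts per day in such a calculation shows how quickly burden rises relative to the gain in expected observations, which can inform the balance between coverage and compliance.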

Limitations

These findings must be considered within the following limitations. Our volunteer sample may not generalize to the broader population of college students with disabilities. Specifically, our sample had a higher proportion of White students (74%) than university enrollment data (62%) for the most recent enrollment year (Pennsylvania State University, 2024). Future research may consider enhanced recruitment strategies to enroll a more racially and ethnically diverse sample that mirrors university demographics. All data were self-reported and were not verified by objective observation or other information. We recruited students during the academic semester (Weeks 2–14) and assumed that behavior captured during this time was representative of typical habits and experiences, but we may have observed participants during an atypical time. Due to the nature of EMA studies and the need for brief measures, we selected a few items to represent global concepts of engagement and thus may not have captured all forms of engagement. In our protocol, students were able to report only one academic engagement event per beeped survey. If students reported that they were in class, they were not asked about studying. Therefore, the actual number of study periods may have been higher than captured in our data. Despite these limitations, our study has implications for application of EMA methodology to rehabilitation research on community participation.

The ENGAGE study used a mobile application, installed on lab-owned phones, to collect information from users. This approach requires intensive contact with research team members and coordination with student schedules to collect the data needed. Although not experienced in our study, risks such as loss of phones or chargers, damage to phones, and missed appointments can threaten the efficiency of data collection. Future research need not rely so heavily on individual contacts with the research team, thanks to innovations in ambulatory applications that are available for download to a user’s own phone, allowing for broad deployment of studies to a larger pool of potential participants. We did not have reports of difficulty with accessibility, but we did have to constrain participation to those who could use the smartphone features without assistance. Allowing users to leverage their own technology is likely to increase accessibility because users have already configured their devices and settings to support their own use. Particularly for samples of individuals with disabilities, this expansion should help ensure more representative samples.

Conclusions

Improving the capture of individuals’ participation in desired community activities that support valued social roles is a high priority in rehabilitation research (Imms et al., 2016; Kossi et al., 2024; Mallinson & Hammel, 2010), and most conventional tools have known limitations because of their reliance on participant recall and the lack of ecological validity of their measures (Schwarz, 2007; Shiffman et al., 2008; Trull & Ebner-Priemer, 2009). The ENGAGE study of academic engagement provides a proof of concept for the EMA method in participation research. Compliance with the protocol was excellent, data showed high reliability, and EMA data provided information on engagement that was distinct from that provided by conventional measures. EMA is underutilized in rehabilitation research and provides significant opportunity for observational research and intervention development because of its utility in capturing objective and accurate data on how often, when, and under what conditions individuals perform desired activities representing meaningful community participation.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

This study was supported by the National Institute on Aging of the National Institutes of Health under award numbers K99AG056670 and R00AG056670.