Abstract

Objective:

The Centers for Disease Control and Prevention’s (CDC’s) Evaluation Fellowship Program is a 2-year fellowship that includes training, placement with a CDC program, and professional development funds. We evaluated whether the program contributed to CDC’s evaluation capacity, prepared fellows for evaluation work, and contributed to their career advancement during its first 10 years.

Methods:

We used a mixed-methods approach, including conducting an online survey and telephone interviews. External evaluators sent surveys to all 152 alumni and all 123 mentors who participated in the program from 2011 through 2020 (first 8 cohorts) and interviewed 9 mentors and 15 alumni.

Results:

A total of 110 alumni (72.4%) and 44 mentors (35.8%) completed surveys. Of 44 mentors, most agreed their fellow(s) contributed to their program’s overall evaluation capacity (90.9%) and its ability to do more evaluation (88.6%). Most (84.2%-88.1%) alumni agreed that the Evaluation Fellowship Program prepared them to apply the 6 skill sets that aligned with CDC’s Framework for Program Evaluation in Public Health. Support from the Fellowship office was significantly and positively correlated with performing evaluation tasks (β = 0.25; P = .004) and alumni obtaining their first job (β = 0.36; P < .001). Host program mentoring was significantly correlated with performing evaluation tasks (β = 0.27; P = .02) and alumni obtaining their first job (β = 0.34; P = .007).

Conclusion:

CDC’s Evaluation Fellowship Program has made progress toward building CDC’s evaluation capacity and preparing a public health workforce to use evaluation skills in various settings. A service-learning model that provides training and applied experiences could prepare a workforce to build evaluation capacity.

Recent federal initiatives have emphasized the importance of evaluation in advancing public health strategies. The US Government Accountability Office defines evaluation as “an assessment using systematic data collection and analysis of one or more programs, policies, and organizations intended to assess their effectiveness and efficiency” 1 ; evaluation includes a range of activities within research and practice contexts, such as efficacy and effectiveness studies, program monitoring and improvement, and economic analyses. The Foundations for Evidence-Based Policymaking Act of 2018 (hereinafter, Evidence Act) 2 positions evaluation as a core function for all federal agencies and points to its critical role in helping agencies demonstrate their impact and improve their strategies. Public Health 3.0 3 calls for increasing “actionable data” and metrics to advance complex initiatives and emphasizes workforce training and leadership as key approaches to achieving related Healthy People 2030 goals. 4 Hence, an opportunity exists to develop a public health workforce to promote ongoing evaluation of public health strategies and build organizational capacity. 3

Evaluation capacity building typically includes a multipronged approach intended to influence organizational structures, processes, and workforce capabilities. 5-7 In particular, evaluation capacity building focuses on “intentional work to continuously create and sustain overall organizational processes that make quality evaluation and its uses routine.” 6 As the nation’s public health protection agency, the Centers for Disease Control and Prevention (CDC) has an important role in building evaluation capacity in public health through its funding, technical assistance, and workforce development.

Program

Evaluation Fellowship Program

In 2010, CDC established its chief evaluation officer as a leadership position, which was followed by development of evaluator position descriptions, implementation of requirements in funding announcements, creation of a CDC Evaluation Day and evaluation awards, and establishment of evaluation consultation services within its Program Performance and Evaluation Office (PPEO). 8

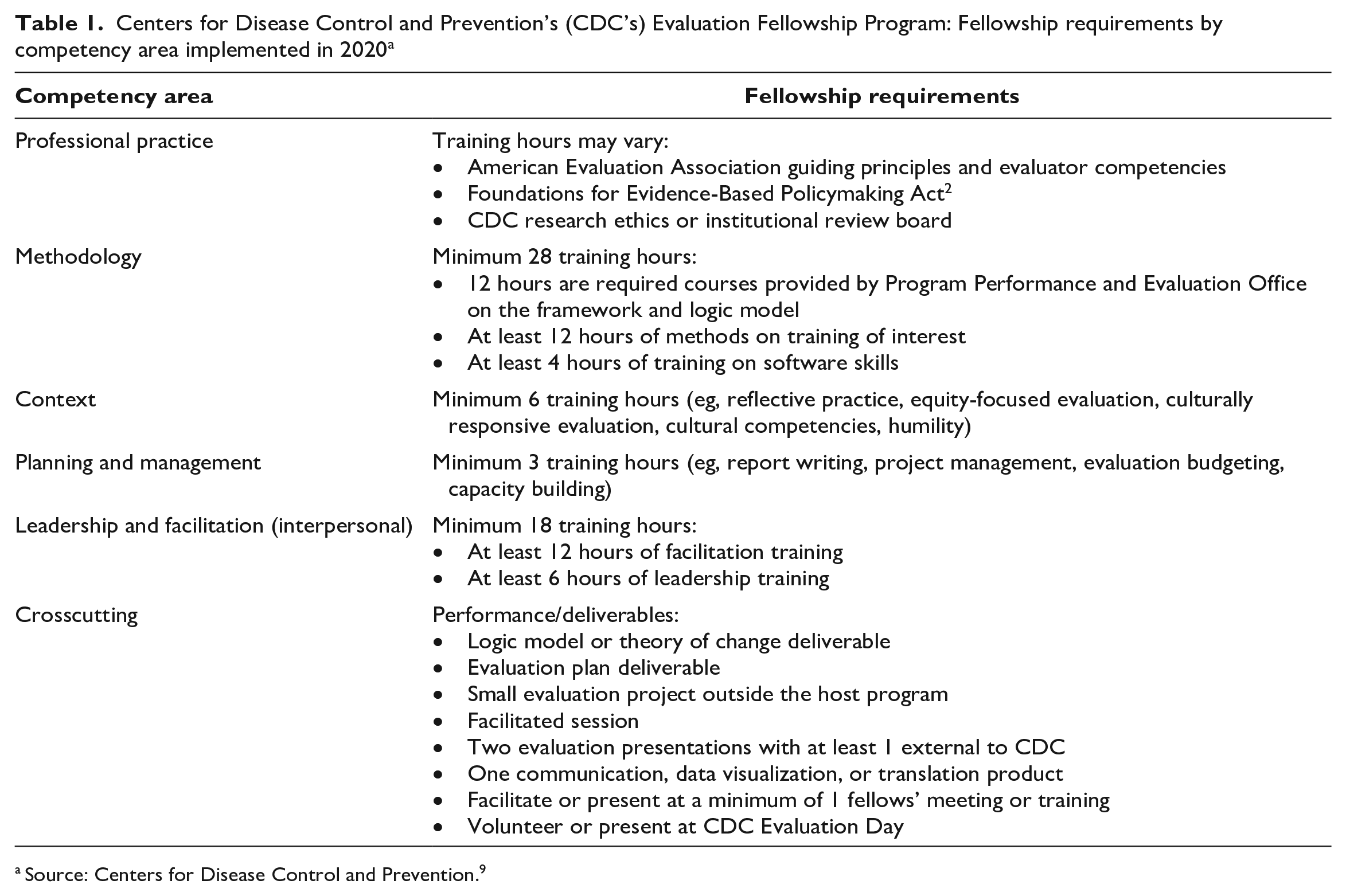

In 2011, CDC started the Evaluation Fellowship Program (hereinafter, Fellowship) to build capacity to conduct high-quality evaluations within the agency and to train a workforce with the skills to improve evaluation practices within public health more broadly. PPEO administers the program, recruits host programs, provides mentorship to fellows, hosts separate fellow and mentor meetings, and coordinates some training activities. As the Fellowship has evolved, it has maintained its core elements, including a 2-year placement with a CDC host program, professional development funds, 20% time for training and service-learning opportunities, completion of an evaluation project in a CDC office outside the host program, Fellowship-sponsored trainings, and public service activities (Table 1). The Fellowship also recently integrated 5 competencies (professional practice, methodology, context, planning and management, and leadership and facilitation) adapted from the American Evaluation Association’s competencies for evaluators. 10 The Fellowship regularly collects information for program monitoring and improvement, including midyear and evaluation project surveys completed by fellows and mentors, a fellows’ departure survey, and feedback collected during check-in calls and meetings.

Centers for Disease Control and Prevention’s (CDC’s) Evaluation Fellowship Program: Fellowship requirements by competency area implemented in 2020 a

Source: Centers for Disease Control and Prevention. 9

Evaluation Purpose and Questions

The evaluation team (the authors of this article), which comprised 2 external and 3 CDC evaluators, assessed the Fellowship’s progress toward its long-term goals. We addressed 3 questions: (1) To what extent did fellows perceive that the Fellowship prepared them to do evaluation work? (2) How did fellows view Fellowship supports contributing to their career advancement? and (3) In what ways did mentors perceive the Fellowship contributed to increasing CDC’s evaluation capacity?

Methods

Participants and Procedure

The 2 external evaluators (G.K. and J.Z.) collected all data from November 2020 through April 2021 and shared only deidentified data with CDC. We invited all past fellows (alumni) from the 2011 through 2018 Fellowship cohorts (n = 152) to participate in the alumni survey (n = 85 currently at CDC, n = 67 no longer at CDC). Of 152 alumni, 110 (72.4%) completed surveys: 62 (56.4%) from 2011 through 2015 cohorts (early cohorts) and 47 (42.7%) from 2016 through 2018 cohorts (recent cohorts). One participant did not report the cohort year. Because of changes made by the Fellowship during its first decade for program improvement, the potential for recall bias, and the alumni’s varying time in the workforce postgraduation, we examined differences between the early and recent cohorts.

We also invited all mentors currently at CDC (n = 123) to participate in the mentor survey. Mentors who were no longer at CDC were not included because the program does not track mentors once they leave the agency. However, we included 2 individuals who had served as mentors for multiple fellows and for whom the program had recent contact information. Of 123 mentors, 44 (35.8%) completed surveys. During the COVID-19 pandemic, many mentors served on CDC’s COVID-19 emergency response, which may have impeded their ability to participate in the surveys or interviews. However, 32 (72.7%) respondents had served as mentors for ≥2 fellows, representing mentorship during multiple cohorts, and 6 were also alumni of the Fellowship. Most mentors served from 2014 through 2017 (n = 29, 65.9%); 8 (18.2%) served as mentors from 2011 through 2013, and 7 (15.9%) began in 2018.
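The response rates reported above follow directly from the invited and completed counts. The short Python sketch below reproduces that arithmetic; the function name `response_rate` is an illustrative choice, not part of the evaluation's materials.

```python
def response_rate(completed: int, invited: int) -> float:
    """Return the response rate as a percentage rounded to 1 decimal place."""
    if invited <= 0:
        raise ValueError("invited must be positive")
    return round(100 * completed / invited, 1)


# Counts taken from the text: 110 of 152 alumni and 44 of 123 mentors.
alumni_rate = response_rate(110, 152)
mentor_rate = response_rate(44, 123)

# Share of alumni respondents from early (62) and recent (47) cohorts.
early_share = response_rate(62, 110)
recent_share = response_rate(47, 110)

print(alumni_rate, mentor_rate, early_share, recent_share)
```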

After survey administration, we invited survey respondents to participate in individual interviews to provide further insight into their experiences with the Fellowship. One evaluator (J.Z.) conducted all 24 interviews: 9 with mentors and 15 with alumni. Mentors reported having served as primary mentors for 1 to 10 fellows. Alumni participants began their fellowships anywhere from 2012 through 2018.

The evaluation protocol was reviewed through CDC’s institutional review board process to determine if human subjects research applied, and the evaluation was determined to be exempt from human subjects review because it was nonresearch program evaluation.

Survey Measures

We developed a 31-item mentor survey (including 2 open-ended items) and a 28-item alumni survey (including 4 open-ended items). We used the following 7 mentor survey items to assess fellows’ contribution to their host program’s evaluation capacity: evaluation skills, practices, products, structures, abilities, quantity, and 1 item that asked about improving “overall evaluation capacity.” We used 7-point Likert scales (1 = strongly disagree to 7 = strongly agree) for the mentor survey response categories and considered responses >4 (neutral) as endorsing each contribution.
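The endorsement rule described above (a response above the neutral midpoint of 4 counts as agreement) can be sketched in a few lines of Python. The response list is hypothetical and was constructed only to illustrate the rule; it is not the study data, and the function name `endorsement` is an assumption.

```python
def endorsement(responses, neutral=4):
    """Count responses above the neutral midpoint; return (count, percent)."""
    valid = [r for r in responses if r is not None]  # skip unanswered items
    agree = sum(1 for r in valid if r > neutral)
    return agree, round(100 * agree / len(valid), 1)


# Hypothetical responses from 44 mentors to a single 7-point item.
hypothetical_item = [7] * 20 + [6] * 12 + [5] * 8 + [4] * 2 + [3] + [2]

count, percent = endorsement(hypothetical_item)
print(f"{count}/{len(hypothetical_item)} endorsed ({percent}%)")
```

Treating only responses strictly greater than 4 as endorsement means that neutral answers count against agreement, which makes the reported percentages conservative.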

We included 6 items in the alumni survey to measure the extent to which the Fellowship prepared alumni with skills related to each of the 6 steps of the CDC Framework for Program Evaluation in Public Health 11,12 (hereinafter, CDC Evaluation Framework): Engage Stakeholders, Describe the Program, Focus Evaluation Design, Gather Credible Evidence, Justify Conclusions, and Ensure Use and Share Lessons. Response options for these items ranged from 1 = not at all to 7 = very much. In addition to examining responses to these items individually, we also averaged responses across the 6 items to create a composite measure, which we labeled “prepared to perform evaluation tasks” (Cronbach α = 0.94). We also measured “perceptions of the Fellowship” among alumni using 2 items that asked whether the Fellowship helped them obtain a first job and perform in their first job after the Fellowship and 4 items that asked about mentoring and support they received from PPEO and their host program. We used 7-point Likert scales (1 = strongly disagree to 7 = strongly agree) for the response categories. We used the 6-item composite measure and the 6 items on alumni “perceptions of the Fellowship” to examine the contribution of the Fellowship to alumni career advancement.
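As an illustration of how a composite measure and its internal consistency can be computed, the sketch below averages 6 item responses per respondent and applies the standard Cronbach α formula. The rows are hypothetical, not the alumni data, and the function names are illustrative assumptions.

```python
from statistics import mean, variance


def composite_scores(rows):
    """Average each respondent's item responses into one composite score."""
    return [mean(row) for row in rows]


def cronbach_alpha(rows):
    """Cronbach alpha = k/(k-1) * (1 - sum of item variances / variance of totals)."""
    k = len(rows[0])                       # number of items
    items = list(zip(*rows))               # one tuple of responses per item
    item_var_sum = sum(variance(col) for col in items)
    total_var = variance([sum(row) for row in rows])
    return k / (k - 1) * (1 - item_var_sum / total_var)


# Hypothetical 7-point responses: one row per respondent, one column per item.
hypothetical_rows = [
    [7, 7, 6, 7, 6, 7],
    [5, 6, 5, 5, 6, 5],
    [3, 4, 3, 4, 3, 3],
    [6, 6, 7, 6, 6, 6],
    [4, 4, 4, 5, 4, 4],
]

print(composite_scores(hypothetical_rows))
print(round(cronbach_alpha(hypothetical_rows), 2))
```

Values of α close to 1 indicate that the items move together closely enough to be summarized by a single average.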

Interview Questions and Data Collection

The team designed interview questions to solicit details and context on survey items. For mentors, we included questions that asked about the ways fellows have contributed to evaluation capacity in their host program, CDC, and the public health field. For alumni, we asked how the Fellowship contributed to their skills and career and how specific Fellowship supports (eg, mentors, Fellowship office, training experiences) contributed to their skills and career.

One external evaluator (J.Z.) recruited interview participants from the pool of survey respondents who indicated at the end of their survey that they would be willing to be interviewed and then conducted the interviews. To preserve survey confidentiality, those who responded yes were directed to a separate form. The evaluators used purposive sampling to ensure representation across Fellowship years and contacted additional mentors from cohorts that were not represented in the first pool of survey respondents.

Analysis

We used an explanatory sequential mixed-methods design 13 to analyze the data, using qualitative interview findings to provide context for understanding the quantitative survey responses from alumni and mentors. Primary analysis of the quantitative data involved calculating frequencies of responses to survey items. We also used independent-samples t tests to compare (1) mean responses in the alumni survey from recent cohorts (2016 through 2018) with responses from early cohorts (2011 through 2015) and (2) mean responses of mentors who had worked with ≥2 fellows with responses of those who had served as a mentor for 1 fellow. Few of these tests exceeded the critical values of t₁₀₆ = 1.98 for alumni data or t₄₂ = 2.02 for mentor data (where the subscript represents df), suggesting that responses were consistent across key subgroups of participants.
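The subgroup comparison can be sketched as a pooled-variance independent-samples t test whose statistic is compared with a critical value. The sketch assumes the pooled (Student) form because the reported df match n₁ + n₂ − 2; the text does not state which variant was used. The example groups are hypothetical, and the function names are illustrative.

```python
from math import sqrt
from statistics import mean, variance


def pooled_t_test(group_a, group_b):
    """Return (t statistic, df) for a pooled-variance independent-samples t test."""
    n_a, n_b = len(group_a), len(group_b)
    df = n_a + n_b - 2
    pooled_var = ((n_a - 1) * variance(group_a) + (n_b - 1) * variance(group_b)) / df
    standard_error = sqrt(pooled_var * (1 / n_a + 1 / n_b))
    return (mean(group_a) - mean(group_b)) / standard_error, df


def exceeds_critical(t_stat, critical_value):
    """Two-tailed check: is |t| larger than the critical value?"""
    return abs(t_stat) > critical_value


# Hypothetical 7-point responses for two small subgroups (not the study data).
early = [1, 2, 3, 4, 5]
recent = [2, 3, 4, 5, 6]

t_stat, df = pooled_t_test(early, recent)
# 1.98 is the critical value the text reports for the alumni comparison;
# the correct critical value depends on the df of the data at hand.
print(t_stat, df, exceeds_critical(t_stat, 1.98))
```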

We conducted linear multiple regression analyses to assess the independent contributions of Fellowship support to each of the 3 indicators of skills and job performance. We controlled for the year in which alumni entered the Fellowship to account for differences that could be attributed to the evolution of the program over time, recall bias, and time in the workforce after graduation from the Fellowship. We assessed regression coefficients as significant at P < .05, 2-tailed, when corresponding t values (110 df) exceeded the critical value of 1.98 and at P < .01 when corresponding t values (110 df) exceeded the critical value of 2.62.
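One way to obtain standardized β coefficients while controlling for a covariate such as start year is to solve the normal equations on the correlation scale, β = R⁻¹r, where R holds the correlations among predictors and r holds each predictor's correlation with the outcome. The sketch below is a minimal standard-library illustration under that formulation, not the authors' analysis code. All variable names and values are hypothetical.

```python
from math import sqrt
from statistics import mean


def pearson_r(x, y):
    """Pearson correlation between two equal-length sequences."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


def solve_linear(matrix, rhs):
    """Solve matrix @ x = rhs by Gaussian elimination with partial pivoting."""
    n = len(rhs)
    aug = [list(row) + [value] for row, value in zip(matrix, rhs)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for row in range(col + 1, n):
            factor = aug[row][col] / aug[col][col]
            for k in range(col, n + 1):
                aug[row][k] -= factor * aug[col][k]
    solution = [0.0] * n
    for row in range(n - 1, -1, -1):
        known = sum(aug[row][k] * solution[k] for k in range(row + 1, n))
        solution[row] = (aug[row][n] - known) / aug[row][row]
    return solution


def standardized_betas(predictors, outcome):
    """Return one standardized beta per predictor column."""
    r_xx = [[pearson_r(a, b) for b in predictors] for a in predictors]
    r_xy = [pearson_r(a, outcome) for a in predictors]
    return solve_linear(r_xx, r_xy)


# Hypothetical data: one support rating, the control variable, and an outcome.
ppeo_support = [6, 7, 5, 4, 6, 3, 7, 5]
start_year = [2011, 2012, 2013, 2014, 2015, 2016, 2017, 2018]
prepared = [5.5, 6.8, 5.0, 4.2, 6.0, 3.5, 6.5, 5.2]

betas = standardized_betas([ppeo_support, start_year], prepared)
print(dict(zip(["ppeo_support", "start_year"], betas)))
```

Because every variable is placed on the same scale, the resulting coefficients can be compared with one another in absolute value, which is the comparison the analysis relies on.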

We used the standardized β coefficient to compare the strength of the effect of each support with each area of preparation for evaluation tasks. The higher the absolute value of the β coefficient, the stronger the effect. Given the moderate to strong correlations among the indicators of Fellowship support (r = 0.34-0.73), we examined tolerance statistics and found them to be within an acceptable range (>0.10).
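Tolerance for a predictor is 1 − R², where R² comes from regressing that predictor on the other predictors; values near 0 signal collinearity, and a common rule of thumb treats values above 0.10 as acceptable. As a simplified illustration, the sketch below computes the two-predictor case, in which R² reduces to the squared pairwise correlation. With more than two predictors, as in the models described here, the actual tolerance can be lower than this pairwise figure. The function names are illustrative.

```python
def pairwise_tolerance(r: float) -> float:
    """Tolerance of a predictor when there is exactly one other predictor."""
    return 1 - r ** 2


def variance_inflation_factor(tolerance: float) -> float:
    """VIF is the reciprocal of tolerance."""
    return 1 / tolerance


# Endpoints of the range of correlations reported among the support indicators.
for r in (0.34, 0.73):
    tol = pairwise_tolerance(r)
    print(f"r = {r}: tolerance = {tol:.4f}, VIF = {variance_inflation_factor(tol):.2f}")
```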

Results

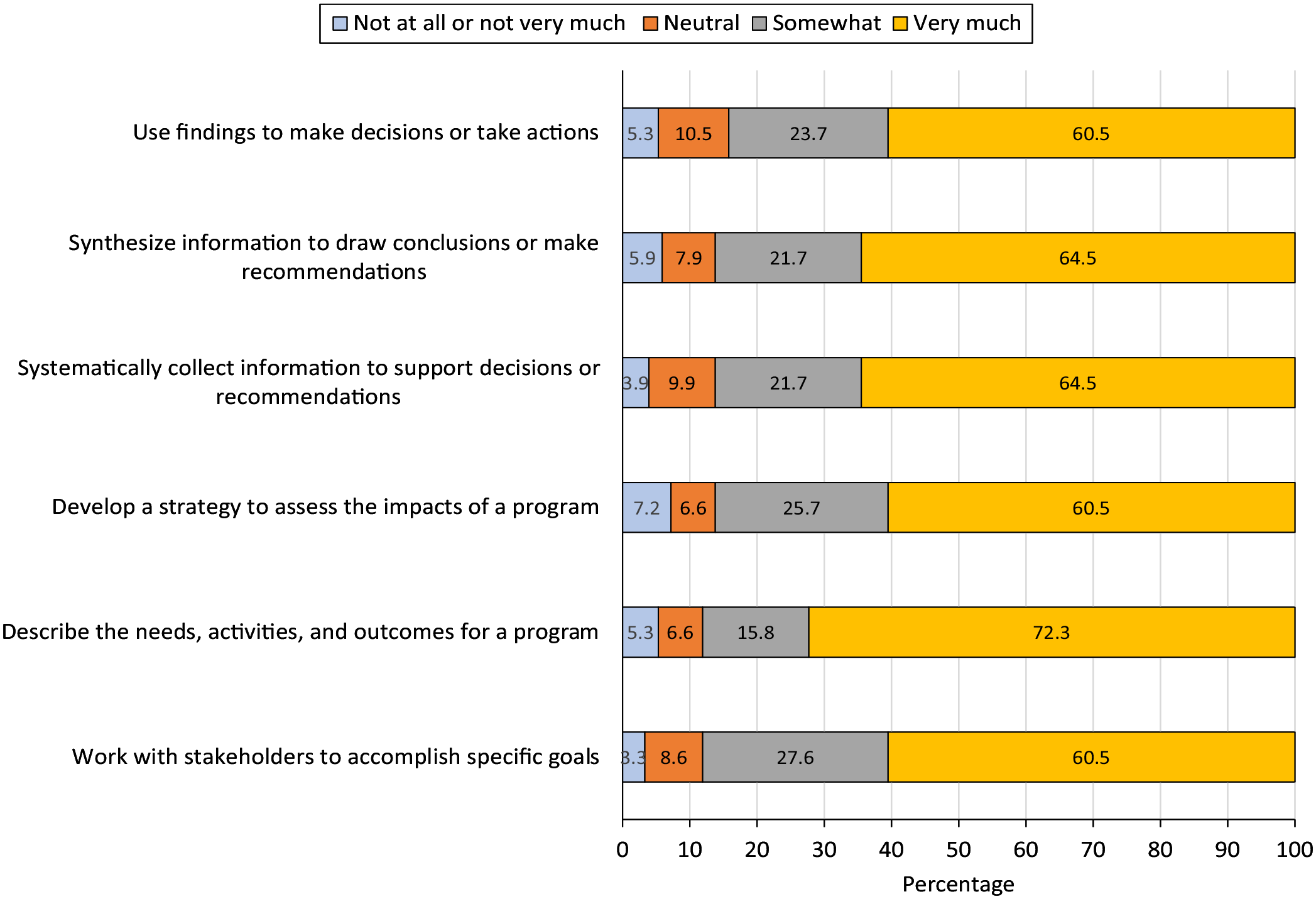

Contribution of the Fellowship to Prepare Alumni for Evaluation Work

For questions that examined the extent to which the Fellowship prepared alumni to apply the 6 skill sets that aligned with the CDC Evaluation Framework (Figure 1), most alumni reported that the Fellowship prepared them “very much.” In addition, most alumni (121/152, 79.6%) responded that the Fellowship helped them to obtain their first post-Fellowship position (mean [SD] response = 5.7 [1.1]) and to perform effectively in their first post-Fellowship position (125/152, 82.2%; mean [SD] response = 5.7 [1.5]) (Table 2).

Reports of contribution of the Centers for Disease Control and Prevention’s Evaluation Fellowship Program to the professional skills of Fellowship alumni from cohorts 2011-2020 (n = 152).

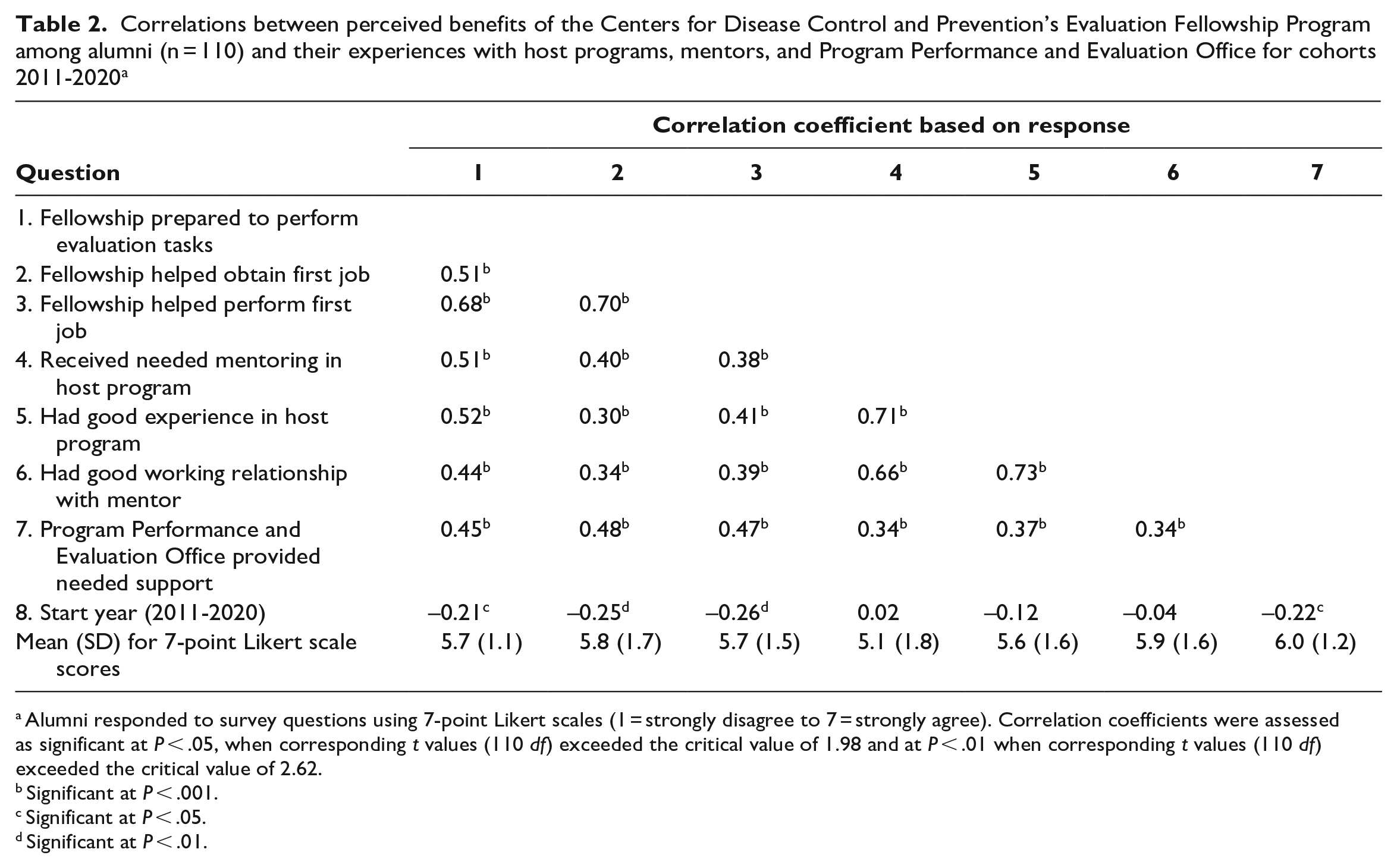

Correlations between perceived benefits of the Centers for Disease Control and Prevention’s Evaluation Fellowship Program among alumni (n = 110) and their experiences with host programs, mentors, and Program Performance and Evaluation Office for cohorts 2011-2020 a

Alumni responded to survey questions using 7-point Likert scales (1 = strongly disagree to 7 = strongly agree). Correlation coefficients were assessed as significant at P < .05, when corresponding t values (110 df) exceeded the critical value of 1.98 and at P < .01 when corresponding t values (110 df) exceeded the critical value of 2.62.

Significant at P < .001.

Significant at P < .05.

Significant at P < .01.

Many alumni described how the service-learning structure of the Fellowship contributed to “on-the-job learning. I had access to subject matter experts. I also learned how cooperative projects are done.” A few alumni explained how they benefited from opportunities beyond learning technical evaluation skills. One alumnus explained, “I learned skills that I still use today: leading groups, capacity building, getting buy-in from others regarding the importance of evaluation . . . and how to influence the agenda when you are not a leader.”

Contribution of the Fellowship to Skills and Career Advancement in Fellows

Alumni reported positive perceptions that they (1) received needed mentoring in their host program (mean [SD] response = 5.1 [1.8]), (2) had good experiences in their host program (mean [SD] response = 5.6 [1.6]), (3) had a good working relationship with their assigned mentor (mean [SD] response = 5.9 [1.6]), and (4) received needed support from PPEO (mean [SD] response = 6.0 [1.2]). These 4 indicators of Fellowship supports correlated positively with each of 3 indicators of skills and job performance: preparation to perform evaluation tasks (composite measure; r = 0.44-0.52), perceptions that the Fellowship helped alumni obtain their first job (r = 0.30-0.48), and perceptions that the Fellowship helped alumni perform their first job effectively (r = 0.38-0.47) (Table 2).

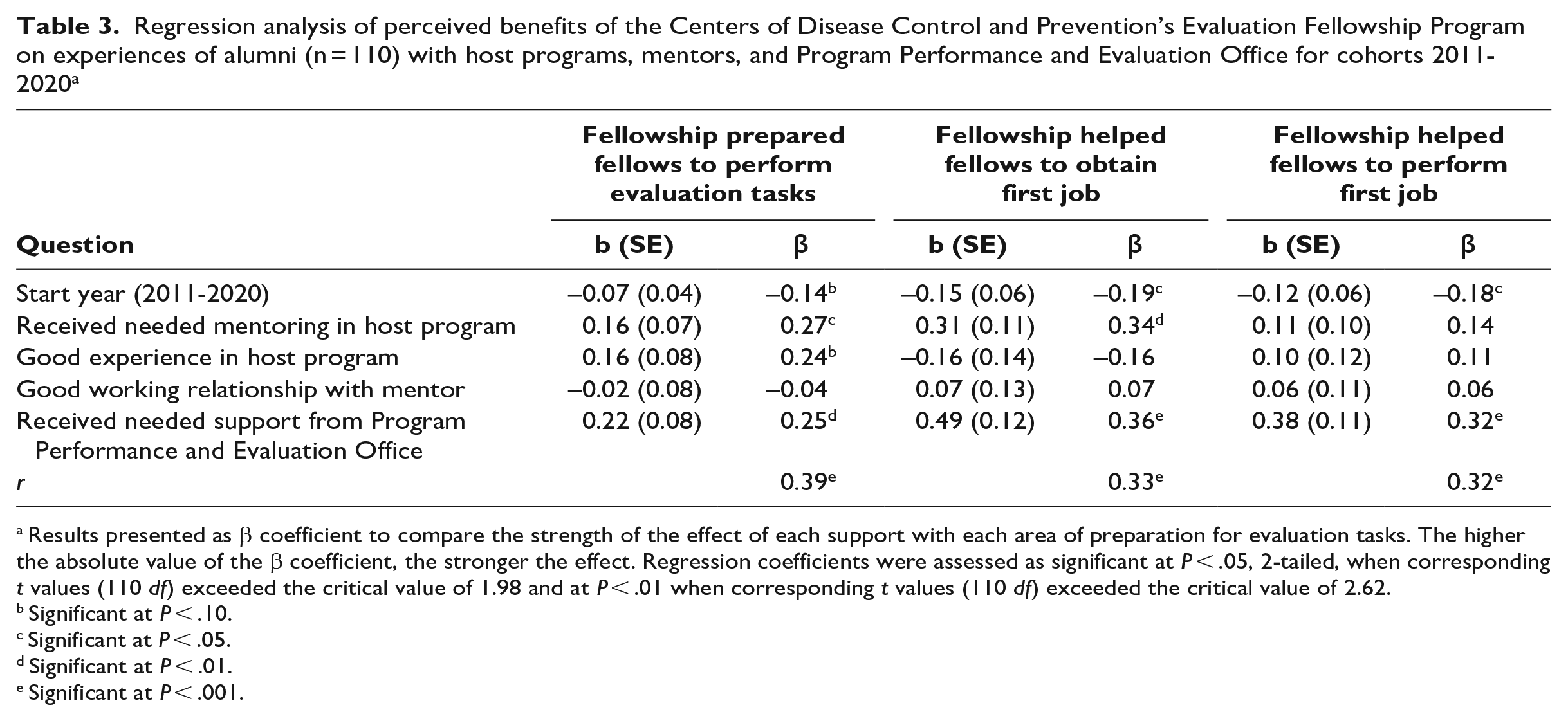

After controlling for start year, we found that perceptions of having received needed supports from PPEO contributed significantly and positively to preparation to perform evaluation tasks (β = 0.25; P = .004), perceptions that the Fellowship helped alumni obtain their first job (β = 0.36; P < .001), and perceptions that the Fellowship helped alumni perform their first job effectively (β = 0.32; P < .001) (Table 3). In addition, perceptions of mentoring received from the host program contributed significantly and positively to preparation to perform evaluation tasks (β = 0.27; P = .02) and perceptions that the Fellowship helped alumni obtain their first job (β = 0.34; P = .007). Alumni perceptions of having had a good experience in the host program and of having had a good working relationship with their mentor were not independently related to any of the 3 indicators of skills and job performance.

Regression analysis of perceived benefits of the Centers for Disease Control and Prevention’s Evaluation Fellowship Program on experiences of alumni (n = 110) with host programs, mentors, and Program Performance and Evaluation Office for cohorts 2011-2020 a

Results presented as β coefficient to compare the strength of the effect of each support with each area of preparation for evaluation tasks. The higher the absolute value of the β coefficient, the stronger the effect. Regression coefficients were assessed as significant at P < .05, 2-tailed, when corresponding t values (110 df) exceeded the critical value of 1.98 and at P < .01 when corresponding t values (110 df) exceeded the critical value of 2.62.

Significant at P < .10.

Significant at P < .05.

Significant at P < .01.

Significant at P < .001.

Alumni indicated a number of opportunities that contributed to their learning and skills building. Formal training, which was facilitated by having ample professional development funds, was commonly cited by alumni as a way they learned specific skills, such as methods and data visualization. One alumnus explained how working at host programs was helpful: “I had a champion within the program. I had very specific projects laid out and I got very direct and applicable feedback.” Another alumnus reported, “I got hands-on experience with planning and implementation.”

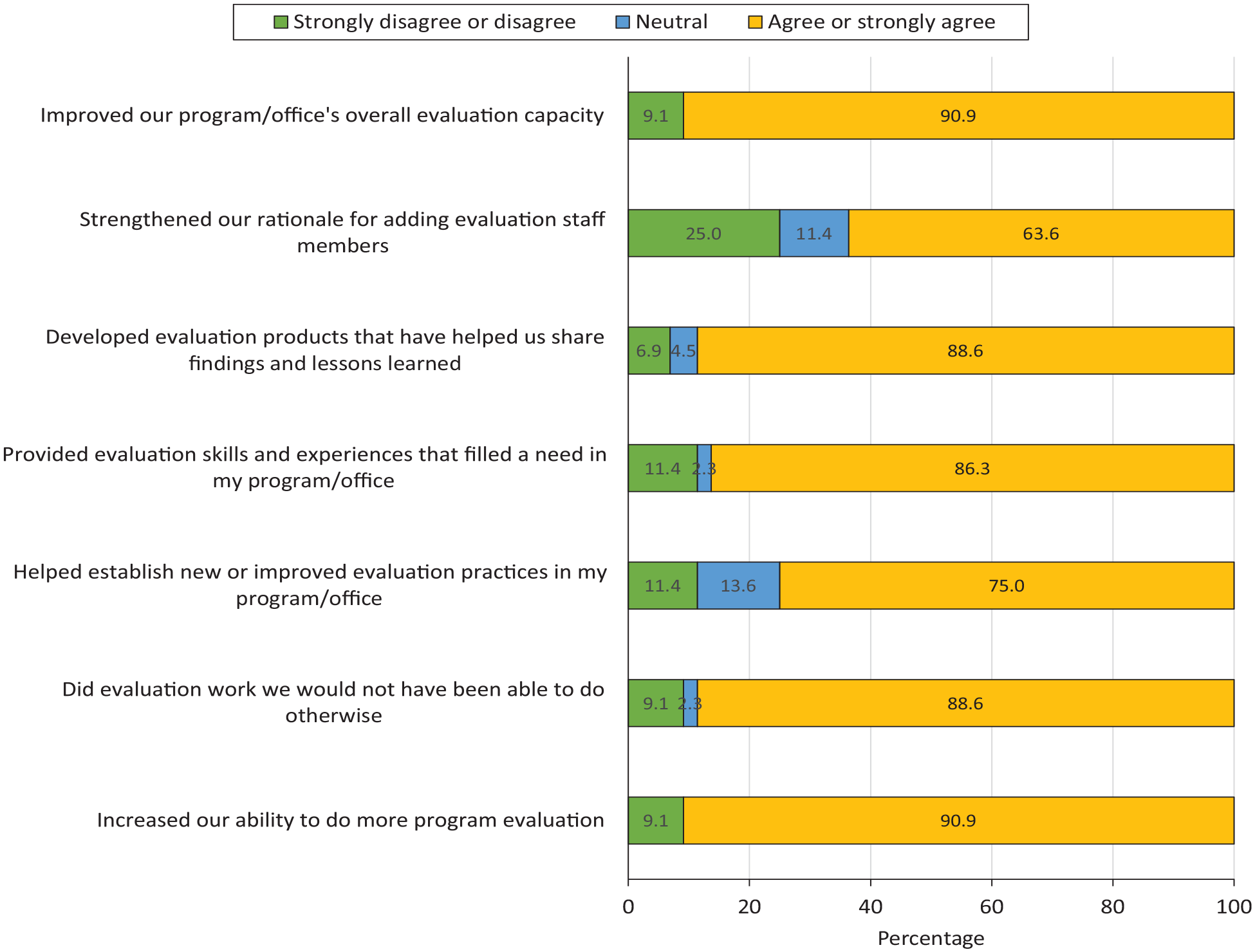

Contribution of the Fellowship to Increased Evaluation Capacity at CDC

Almost all mentors agreed that their fellows contributed to their program’s overall evaluation capacity (40/44, 90.9%), its ability to do more evaluation (39/44, 88.6%), and its ability to do work it would not otherwise have been able to do (39/44, 88.6%) (Figure 2). Most mentors also agreed that their fellows helped establish new practices (33/44, 75.0%), provided evaluation skills and experiences to their program (39/44, 88.6%), and developed products that helped share findings (39/44, 88.6%). Most mentors (28/44, 63.6%) reported that fellows strengthened their rationale for adding evaluation staff.

Level of agreement among mentors (n = 44) on how fellows in the Centers for Disease Control and Prevention’s Evaluation Fellowship Program contributed to their current or most recent program fellowship for cohorts 2011-2020.

In interviews, mentors discussed how fellows contributed to CDC’s evaluation capacity overall. One mentor explained how fellows have contributed to the agency’s capacity through their reach into agency programs: “Fellows go into places that have no evaluation. They have expanded the consciousness of the agency in terms of the importance of evaluation.” Another mentor explained, “Many [fellows] take on leadership positions, they continue to network with CDC [after the Fellowship], with other evaluators, and with public health professionals.”

Several mentors discussed how fellows contribute to CDC’s evaluation capacity by working in programs with few evaluation resources, bringing new ideas from trainings, and “[expanding] the pool of resources that CDC has to address evaluation.” One mentor explained, for example, that “by working on small projects outside of their primary projects, they are increasing the overall evaluation capacity of the agency.”

Mentors identified skill sets that fellows brought to their programs that filled specific needs and built evaluation capacity. Some mentors described how fellows had skills in data management and analysis and “brought expertise we didn’t have that they gained from the trainings, such as data visualization.” Mentors also explained how fellows contributed to their programs. Some fellows contributed to programs by presenting evaluation findings and publishing articles, which resulted in “visibility to [the] program by showcasing the work they had done.” Another way fellows contributed was by filling evaluation staffing needs, which increased their programs’ ability to do more evaluation work. For example, fellows could “take on projects” within their own program and “had time to work on small projects [outside their program] when no one else did.”

Challenges Faced During the Fellowship Experience

Although most participants reported positive experiences with the Fellowship, some alumni and mentors reported challenges. Two alumni expressed frustration that they were not hired as permanent employees after the Fellowship. From 2014 through 2020, 50% to 60% of Fellowship graduates remained at CDC as civil servants or contractors or through other mechanisms; however, the ability to hire within programs can vary from year to year based on budget and hiring guidelines. A few mentors also believed that some programs continuously hired fellows in lieu of permanent staff and found this problematic. Another issue for some alumni was their fit and relationship with their mentors. In addition, a few mentors reported that some fellows start the program needing more guidance and “hand holding” than others because of varying evaluation skills and professional experience.

Lessons Learned

Evaluation is a critical public health function, 2 and workforce development is essential to building evaluation capacity. 3 Our findings support that, overall, alumni perceive that the Fellowship contributed to their skills and experiences to conduct evaluation activities reflected in the CDC Evaluation Framework. Mentors pointed to numerous ways that fellows have contributed to CDC’s evaluation capacity, a long-term goal of the Fellowship. These activities include working with programs and partners, applying methodological and analytical skills, and using data for critical public health actions. Mentors reported that fellows contributed to their program’s capacity by improving both the amount of evaluation work that they were able to do and the quality of their work through bringing key skill sets, establishing practices, and developing products. Importantly, most mentors agreed that fellows helped their program and CDC do work they would otherwise not have been able to do. In addition, the Fellowship has served as a pipeline to bring evaluators to CDC programs and other public health organizations.

The Fellowship’s service-learning model, 14 which provides training and applied experiences, may be beneficial in preparing workforce capacity for evaluation. Alumni explained that, beyond technical evaluation skills, opportunities to learn management skills, facilitation, and informal leadership roles were experiences that transferred to future professional roles. Other alumni described how being able to apply training concepts to their evaluation work helped build their skills and open leadership opportunities. Mentors commonly reported that fellows brought new and different skills from training to strengthen their program. Some alumni reported that they had challenging placements and mentor relationships. However, responses from fellows on their relationships with mentors and host programs were not significantly associated with their skills and job performance post-Fellowship. This finding may have been due in part to varied opportunities provided both within and outside host programs, multiple mentors, and supports from PPEO.

This evaluation had several limitations. First, because participants included alumni from 8 cohorts, their perspectives may vary by events that occurred during their particular time (a cohort effect), and their responses may be subject to recall bias. We found, however, that for most measures, differences between early (2011-2015) and recent (2016-2018) cohorts were not significant. Second, the mentor survey response rate was relatively low (35.8%). Although most respondents had been mentors across multiple cohorts, our findings may not capture the views of one-time mentors. Finally, our measures of evaluation capacity relied on mentors’ reports of their perceptions. Although mentors may be well positioned to observe contributions of fellows, having measures of actual products, practices, and structures that result from contributions of fellows would provide stronger support in future evaluations.

Despite these limitations, the evaluation findings support the investment in workforce training as a potential strategy to build evaluation capacity. Fellowship graduates can bring together their formal training and applied experience within an organization to provide evaluation leadership and build networks of professionals prepared to meet current and future public health challenges. Future evaluation of the Fellowship could include additional agency perspectives on fellows’ contributions to CDC’s evaluation capacity, as well as documentation of fellows’ contributions through evaluation products, practices, and structures.

Footnotes

Disclaimer

The findings and conclusions in this article are those of the authors and do not necessarily represent the views of the Centers for Disease Control and Prevention.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.