Abstract

Objectives

The Pregnancy Risk Assessment Monitoring System (PRAMS), conducted by the Centers for Disease Control and Prevention in collaboration with state health departments, is the largest state-level surveillance system that includes a question on the intention status of pregnancies leading to live birth. In 2012, the question was changed to include an additional response option describing uncertainty before the pregnancy about the desire for pregnancy. This analysis investigated how this additional response option affected women’s responses.

Methods

We used the change in the pregnancy intention question in 2012 as a natural experiment, taking advantage of relatively stable distributions of pregnancy intentions within states over short periods. Using PRAMS data from 2009-2014 (N = 222 781), we applied a regression discontinuity-in-time design to test for differences in the proportion of women choosing each response option in the periods before and after the question change.

Results

During 2012-2014, 13%-15% of women chose the new response option, “I wasn’t sure what I wanted.” The addition of the new response option substantially affected distributions of pregnancy intentions, drawing responses away from all answer choices except “I wanted to be pregnant then.” Effects were not uniform across age, parity, or race/ethnicity or across states.

Conclusions

These effects could influence estimated levels and trends of the proportion of births that are characterized as intended, mistimed, or unwanted, as well as estimates of differences between demographic groups. These findings will help to inform new strategies for measuring pregnancy and childbearing desires among women.

Virtually all population-based surveys of individual-level fertility experiences include retrospective questions to measure intentions for past pregnancies. These questions ask women to recall how they had felt, before the pregnancy, about becoming pregnant or having a baby.

A growing body of research suggests that measures of pregnancy intentions should allow for a wider and more realistic range of responses than has been available to date, particularly the ability to characterize prior feelings as ambivalent or unformed.1-9 To address this need, some surveys have added response options to the pregnancy intention measure.10,11 This strategy was adopted in 2012 by the Pregnancy Risk Assessment Monitoring System (PRAMS) surveys conducted annually by the Centers for Disease Control and Prevention in collaboration with state health departments.

Data on pregnancy intentions on the PRAMS questionnaire are obtained by asking respondents to think back to the time just before their recent pregnancy resulting in birth and to recall how they felt about becoming pregnant at that time. The response options did not change for more than a decade (from 2000 to 2011):

Thinking back to just before you got pregnant with your new baby, how did you feel about becoming pregnant? (Check

I wanted to be pregnant sooner.

I wanted to be pregnant later.

I wanted to be pregnant then.

I didn’t want to be pregnant then or at any time in the future.

Traditionally, researchers refer to pregnancies for which women selected “I wanted to be pregnant sooner” or “I wanted to be pregnant then” as “intended.” “Mistimed” pregnancies are those for which women had wanted the pregnancy to occur later, and “unwanted” pregnancies are those for which women responded that they did not want to become pregnant then or at any time in the future. Because the mistimed and unwanted categories imply that the pregnancy was not desired at the time it occurred or at all, these 2 groups are often combined and termed “unintended” pregnancies.

Each state participating in PRAMS administers a set of core questions (uniform across states), among which the pregnancy intention question has always been included. Beginning with the 2012 version of the survey (Phase 7), PRAMS added a fifth response option to the core pregnancy intention question: “I wasn’t sure what I wanted.” This option refers to uncertainty in the respondent’s feelings immediately before the pregnancy, not at the time of the survey. The order of response categories was also changed slightly, with “I wanted to be pregnant later” moving from the second to the first category.

The addition of the fifth answer option in PRAMS expands the traditionally constructed measure of pregnancy intentions to include women who had not formed clear desires or intentions before the pregnancy or who had had mixed feelings about the pregnancy. Previous surveys that included this option found that substantial proportions of women chose it.10,11
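The traditional grouping of response options described above can be sketched as a simple lookup. This is an illustration only: the dictionary and function names are hypothetical, the response strings use straight apostrophes, and "unsure" is an assumed label for the option added in 2012, which the traditional intended/unintended scheme does not classify.

```python
# Response text -> traditional category, following the definitions in the text.
# "unsure" is a hypothetical label for the fifth option added in 2012.
CATEGORY = {
    "I wanted to be pregnant sooner.": "intended",
    "I wanted to be pregnant then.": "intended",
    "I wanted to be pregnant later.": "mistimed",
    "I didn't want to be pregnant then or at any time in the future.": "unwanted",
    "I wasn't sure what I wanted.": "unsure",
}

# Mistimed and unwanted pregnancies are often combined as "unintended".
UNINTENDED = {"mistimed", "unwanted"}


def classify(response: str) -> str:
    """Return the category for one response option."""
    return CATEGORY[response]


def is_unintended(response: str) -> bool:
    """True only for mistimed or unwanted; 'unsure' falls in neither group here."""
    return classify(response) in UNINTENDED
```

In this sketch, a response of "I wasn't sure what I wanted." is counted as neither intended nor unintended; how to treat it in a combined measure is an analytic choice that the traditional scheme does not settle.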

When a question has 5 answer options rather than 4, the proportion choosing each of the original options will tend to be smaller. What is unknown, however, is how the additional response category will affect the relative proportions of women choosing the previously existing response categories. For example, women who would have responded that they “wanted to be pregnant later” if they had been presented with only 4 answer choices may be more likely to choose the new option (“I wasn’t sure what I wanted”) than women who would have responded that they “wanted to be pregnant then.” Understanding the effects of the change in response options is critical because changes in the proportion of women who select each response category could affect the comparability of estimates of the proportion of births that are identified as intended, mistimed, or unwanted across time in each state. This comparability of estimates is of special concern given the wide use of these surveys in tracking statistics over time12-15 and in investigating associations between pregnancy intention and other maternal health behaviors and infant outcomes, which often involve pooling data across years.16-19 Even if the relative proportions selecting each response category did not shift in the overall population, proportions may have shifted differentially across population groups; if women with certain demographic characteristics were more likely to select the “I wasn’t sure” option, this selection could affect patterns of group differentials and reported associations with other behaviors.

The objective of this study was to investigate how the change in response options affected reporting of pregnancy intentions in PRAMS, with a particular focus on comparability over time. More broadly, we explored how the addition of a response option that allows women to report having been uncertain before their pregnancy may affect measurement of pregnancy intentions.

Methods

In this analysis, we used the change in the PRAMS pregnancy intention question in 2012 as a natural experiment, taking advantage of the fact that, within states, the distribution of pregnancy intentions among women having live births has been fairly stable during short periods. After describing overall changes in the proportion of women reporting each response category, we used a regression discontinuity-in-time design to formally test for differences between the periods immediately before and after the question change. We then examined whether the change had differential effects across demographic groups.

Data

The PRAMS questionnaire is mailed to women who had a recent live birth (usually within 2 to 6 months after delivery). Each state’s sample is drawn from vital records, and some populations are oversampled to create annual, representative data at the state level of all women delivering during that year. 20 In 2018, forty-seven states, New York City, Puerto Rico, the District of Columbia, and the Great Plains Tribal Chairmen’s Health Board participated in PRAMS, covering approximately 83% of all live births in the United States. 21

We limited our analytical sample to jurisdictions that had data for at least 1 year during 2009-2014 and response rates ≥65% in a given year. Our sample comprised 222 781 women from 36 states and New York City. Not all states contributed data in each of the 6 years. We performed sensitivity tests by using data only from states that had data for all 6 years; these analyses had a sample size of 168 462 women from 16 states. We conducted all analyses in Stata version 15.1, 22 with weighted data and standard errors adjusted to take into account the complex sample design of PRAMS. The Guttmacher Institute Institutional Review Board determined that this analysis was exempt from institutional review board review.

Measures

We examined pregnancy intention in relation to several measures in the PRAMS surveys: year of interview (2009-2014), age at which the woman gave birth (≤17, 18-19, 20-24, 25-29, 30-34, 35-39, 40-44), her number of previous live births (0, 1, ≥2), and her race/ethnicity (non-Hispanic white, non-Hispanic black, Hispanic, and non-Hispanic other; the latter includes all non-Hispanic women who reported races other than white or black or multiple races). We focused on 3 core demographic measures—age, parity, and race/ethnicity—because pregnancy intentions vary widely by these characteristics, and the extent to which the effect of the question change varies by these 3 core demographic measures is sufficient to demonstrate the need for careful consideration of any future analyses using these data. Although other measures are also related to pregnancy intention, a comprehensive investigation was beyond the scope of this analysis.

Analysis

We first produced descriptive statistics using logistic regressions predicting the proportion of women selecting each of the response categories in each year. For these descriptive statistics, we included “missing” as a valid response to examine item nonresponse rates before and after the question change. Because some differences could be driven by which states fielded surveys in which year (the proportion of women reporting each response category varies substantially by state), we included state fixed effects in each regression to control for differences in sets of states contributing data.

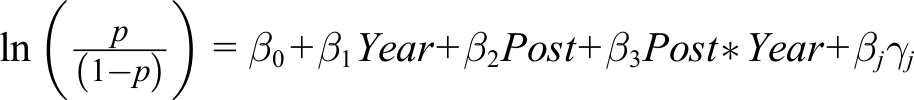

We then used a regression discontinuity-in-time design 23 to estimate the effect of the question change on the odds of women selecting each response option, net of trends in the years before (2009-2011) and after (2012-2014) the question change. The log odds of selecting each response option were predicted by using an equation of the form

where Year represents year of the survey minus 2011 (to center the values of the variable on the year before the question change), 24 Post is a variable indicating whether the respondent was asked the intention question before or after the question change, and state dummy variables adjust for variation across states.
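The displayed equation does not appear in this text. As a sketch only, one specification consistent with the definitions given here and with the Table (which reports separate year coefficients for 2009-2011 and 2012-2014 and notes that all models include state dummy variables) could be written as follows. The coefficient symbols, the interaction term, and the state-indicator notation are assumptions for illustration, not the authors' notation.

```latex
\log\!\left(\frac{p_i}{1 - p_i}\right)
  = \beta_0
  + \beta_1\,\mathrm{Year}_i
  + \beta_2\,\mathrm{Post}_i
  + \beta_3\,(\mathrm{Year}_i \times \mathrm{Post}_i)
  + \sum_{s} \gamma_s\,\mathrm{State}_{is}
```

Under this parameterization, $p_i$ is the probability that respondent $i$ selects a given response option, $\beta_1$ is the linear trend during 2009-2011, $\beta_1 + \beta_3$ is the trend during 2012-2014, and $\beta_2$ is the discontinuity associated with the question change, evaluated at Year = 0.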

A major assumption of regression discontinuity-in-time designs is that the functional form of the assignment variable (in our analysis, year of the survey) is correctly specified. In other words, to compare 2009-2011 with 2012-2014, we need to have correctly modeled time trends in both these periods to compare the mean response net of those trends. We opted to use a linear functional form based on visual inspection of the trends across years; we also tested the addition of higher-order terms, but none improved model fit.
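To make the linear functional form concrete, the following sketch builds the time-related terms for one respondent and evaluates a linear predictor on the log-odds scale. It assumes a separate linear slope on each side of the question change (via a Year × Post interaction); that interaction, along with all function and field names, is an assumption for illustration rather than the authors' code, and state dummy variables are omitted for brevity.

```python
from dataclasses import dataclass

CUTOFF_YEAR = 2011  # Year is centered on the year before the question change


@dataclass(frozen=True)
class RdTerms:
    year: int         # survey year minus 2011
    post: int         # 1 if asked the revised question (2012 or later), else 0
    year_x_post: int  # lets the linear trend differ after the change


def rd_terms(survey_year: int) -> RdTerms:
    """Build the time terms for one survey year."""
    year = survey_year - CUTOFF_YEAR
    post = 1 if survey_year > CUTOFF_YEAR else 0
    return RdTerms(year=year, post=post, year_x_post=year * post)


def linear_predictor(terms: RdTerms, b0: float, b_year: float,
                     b_post: float, b_interaction: float) -> float:
    """Log odds of choosing a response option (state effects omitted)."""
    return (b0
            + b_year * terms.year
            + b_post * terms.post
            + b_interaction * terms.year_x_post)
```

With this coding, respondents in 2009-2011 have Post = 0 and contribute only to the pre-change trend, so the coefficient on Post captures the shift in log odds net of the trends on either side.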

The model estimated an average treatment effect: the effect of the question change averaged across all respondents. It is possible, however, that the addition of a response category affected different groups of women in different ways (heterogeneity of treatment effects across population groups). We tested for this possibility by running models with interaction terms between the treatment assignment variable and demographic covariates. We ran separate models testing interactions by age, race/ethnicity, parity, and state of residence. Because age and parity are highly correlated, we controlled for age in models testing interactions by parity. In models testing interactions with state of residence, we included controls for both age and race/ethnicity to account for variation across states in demographic composition. In these latter models, joint hypothesis tests were used to evaluate the state-indicator interaction terms as a block, to test if any of the state interaction terms were significantly different from zero. In addition, in the models estimating interactions by state, we limited our sample to states contributing data for all 6 years (2009-2014).

Finally, given the large sample size of the pooled PRAMS surveys, we used a conservative α level of .001. We also tested an alternate specification in which we restricted our sample to states with data for all 6 years for each analysis; results were substantively unchanged. All analytic code is available at https://osf.io/x8r7v.

Results

Predicted Proportion of Each Response Category, by Year of Survey

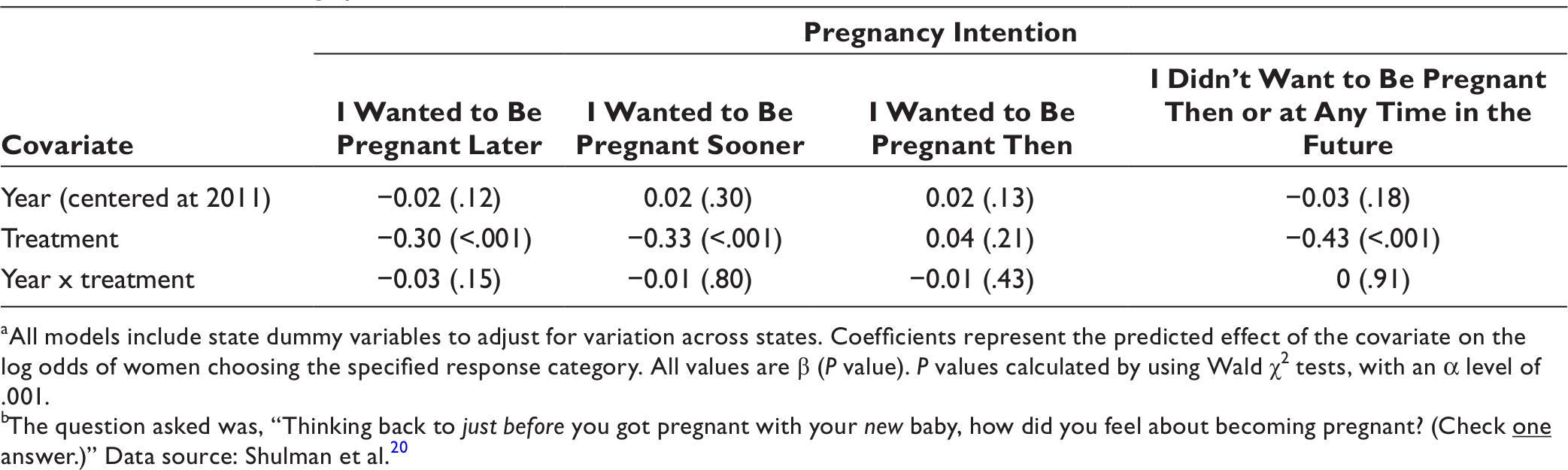

Responses to the pregnancy intention question were stable during 2009-2011; each response category changed by no more than 1 percentage point during the 3-year period (Figure 1). Almost one-third (31%-32%) of women reported that they had wanted the pregnancy to occur later, 17%-18% said they had wanted it to occur sooner, 39%-40% said they had wanted it to occur then, and 9%-10% said they did not want to be pregnant then or at any time in the future. About 2% of women skipped the question; this proportion remained stable during the entire study period.

Figure 1. Percentage distribution of responses among women to the pregnancy intention question for each year of the Pregnancy Risk Assessment Monitoring System (PRAMS) survey, 2009-2014. The question asked was, “Thinking back to just before you got pregnant with your new baby, how did you feel about becoming pregnant? (Check

After the introduction of the additional response category in 2012, 13%-15% of women selected it in 2012-2014 (Figure 1). The additional response category drew responses away from all categories except “I wanted to be pregnant then,” which remained stable. From 2011 to 2012, the proportion responding that they had wanted the pregnancy to occur later dropped 7 percentage points (from 31% to 24%), the proportion responding that they had wanted the pregnancy to occur sooner dropped 5 percentage points (from 18% to 13%), and the proportion stating that they did not want the pregnancy then or at any time in the future dropped 4 percentage points (from 10% to 6%). The proportion of respondents selecting each category remained largely stable in the years 2012-2014, varying by no more than 1 or 2 percentage points during the 3-year period.

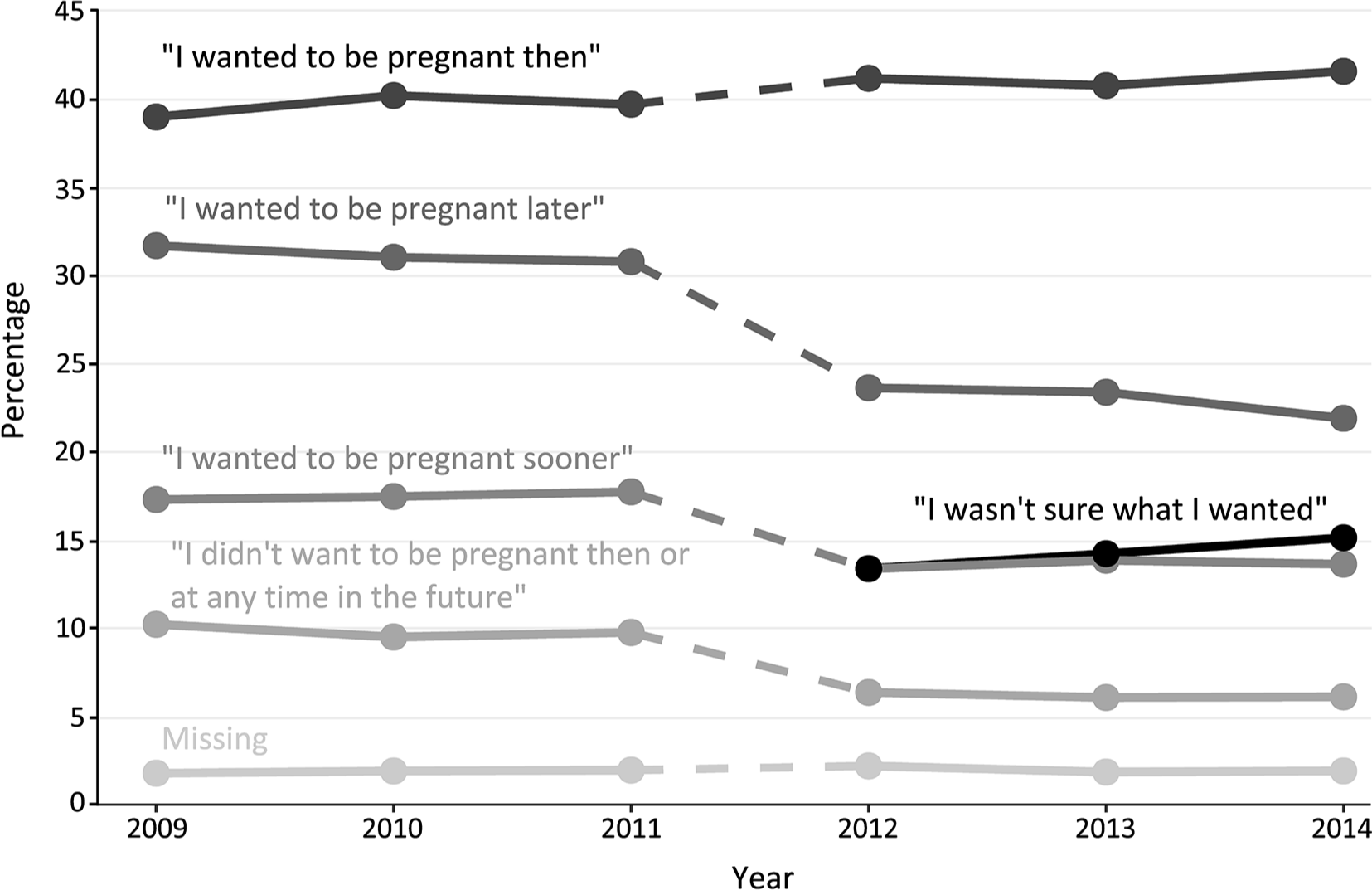

Selection of “Not Sure” Category, by Characteristics of Women

We found substantial differences across demographic groups in which women were most likely to select the response “I wasn’t sure what I wanted” (Figure 2A–C). Women in the 3 youngest age groups (≤17, 18-19, and 20-24) were significantly more likely than women aged 25-29 to select the “I wasn’t sure” category to describe their intention at the time of the pregnancy (Figure 2A). Women in the oldest age category (≥40) were also significantly more likely than women aged 25-29 to respond “I wasn’t sure”; the responses of women aged 30-34 and 35-39 were not significantly different from the responses of women aged 25-29.

Figure 2. Percentages of women reporting “I wasn’t sure what I wanted,” adjusted for state and year of survey, by maternal age (A), by maternal race/ethnicity (B), and by number of previous live births (C), Pregnancy Risk Assessment Monitoring System survey, 2012-2014. The question asked was, “Thinking back to just before you got pregnant with your new baby, how did you feel about becoming pregnant? (Check

Non-Hispanic black women and non-Hispanic women of races other than black or white were significantly more likely to respond “I wasn’t sure” than non-Hispanic white women (Figure 2B). We found no significant differences between non-Hispanic white women and Hispanic women. Women with ≥2 previous live births were more likely to select “I wasn’t sure” than women with no previous live births or 1 previous live birth (Figure 2C).

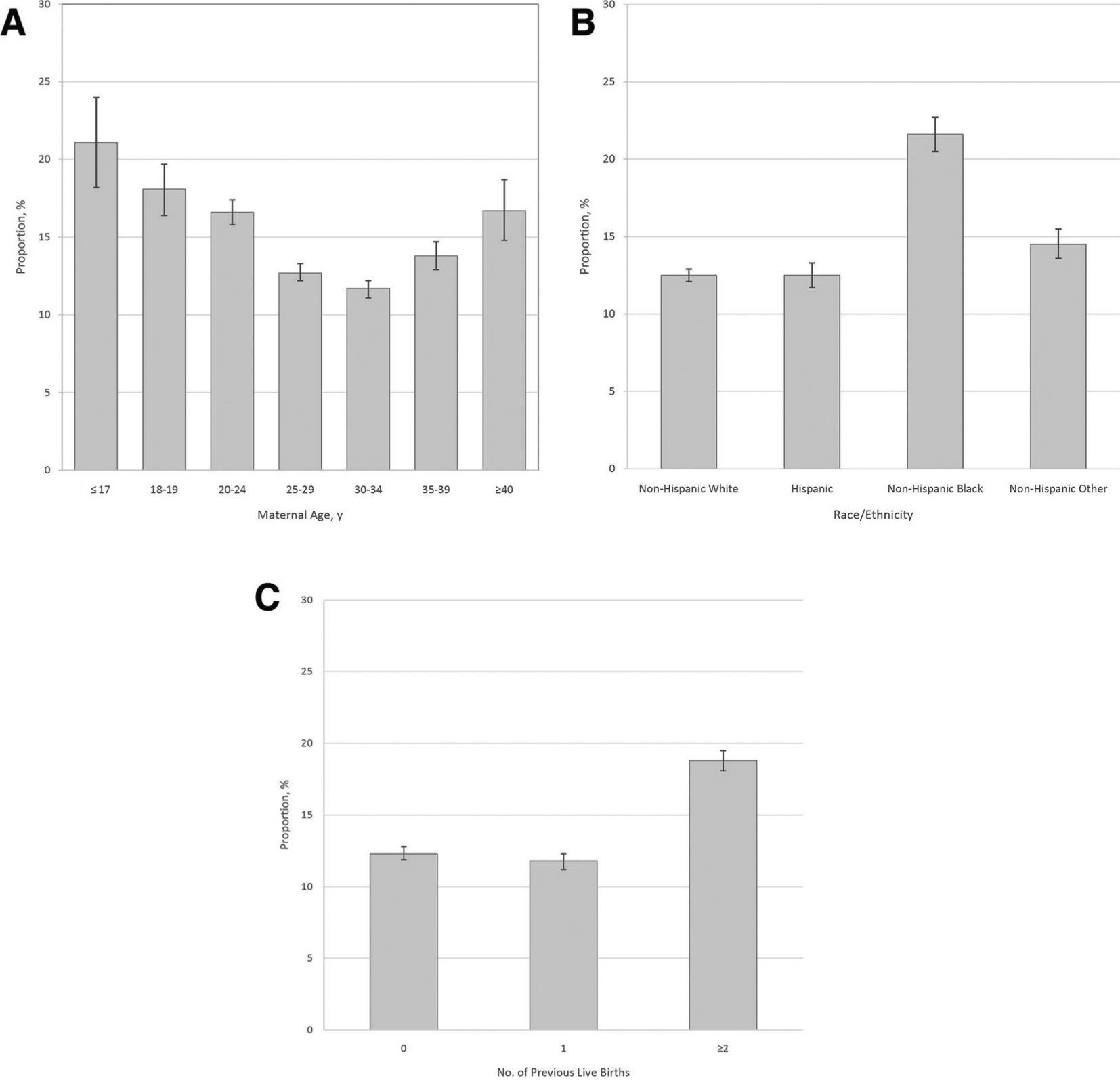

Overall Effects of Question Change

According to the regression discontinuity models, women were significantly less likely after the question change in 2012 than before 2012 to respond that they wanted to become pregnant “later” (β = –0.30) or “sooner” (β = –0.33) (Table). They also were significantly less likely after the question change than before the question change to respond that they “didn’t want to be pregnant then or at any time in the future” (β = –0.43). We found no significant change in the likelihood of selecting the option “I wanted to be pregnant then.” Linear time trends were not significant for any of the response categories, suggesting the proportions of women choosing each category within the periods before and after the question change did not change. Coefficients for year ranged from –0.03 to 0.02 during 2009-2011 and from –0.05 to 0.01 during 2012-2014.
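Because the reported coefficients are on the log-odds scale, it can help to translate them into odds ratios and approximate proportions. The sketch below does this with standard formulas; the function names are hypothetical, and the starting proportion of 0.31 (the share answering “later” in 2011, from Figure 1) is used only as a rough illustration that ignores time trends, state effects, and survey weights.

```python
import math


def odds_ratio(beta: float) -> float:
    """Convert a log-odds coefficient to an odds ratio."""
    return math.exp(beta)


def shifted_probability(p_before: float, beta: float) -> float:
    """Apply a log-odds shift to a starting proportion."""
    odds_after = p_before / (1.0 - p_before) * math.exp(beta)
    return odds_after / (1.0 + odds_after)


# Coefficients reported in the text for the three affected options.
reported = {"later": -0.30, "sooner": -0.33, "unwanted": -0.43}

for option, beta in reported.items():
    print(f"{option}: odds ratio = {odds_ratio(beta):.2f}")

# Rough illustration: a 0.31 starting share with a shift of -0.30 in log odds.
print(f"later, after shift: {shifted_probability(0.31, -0.30):.3f}")
```

For example, β = –0.30 corresponds to an odds ratio of about 0.74, meaning the odds of answering “later” after the question change were roughly three-quarters of the odds before it.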

(a) All models include state dummy variables to adjust for variation across states. Coefficients represent the predicted effect of the covariate on the log odds of women choosing the specified response category. All values are β (P value). P values were calculated by using Wald χ² tests, with an α level of .001.

(b) The question asked was, “Thinking back to just before you got pregnant with your new baby, how did you feel about becoming pregnant? (Check

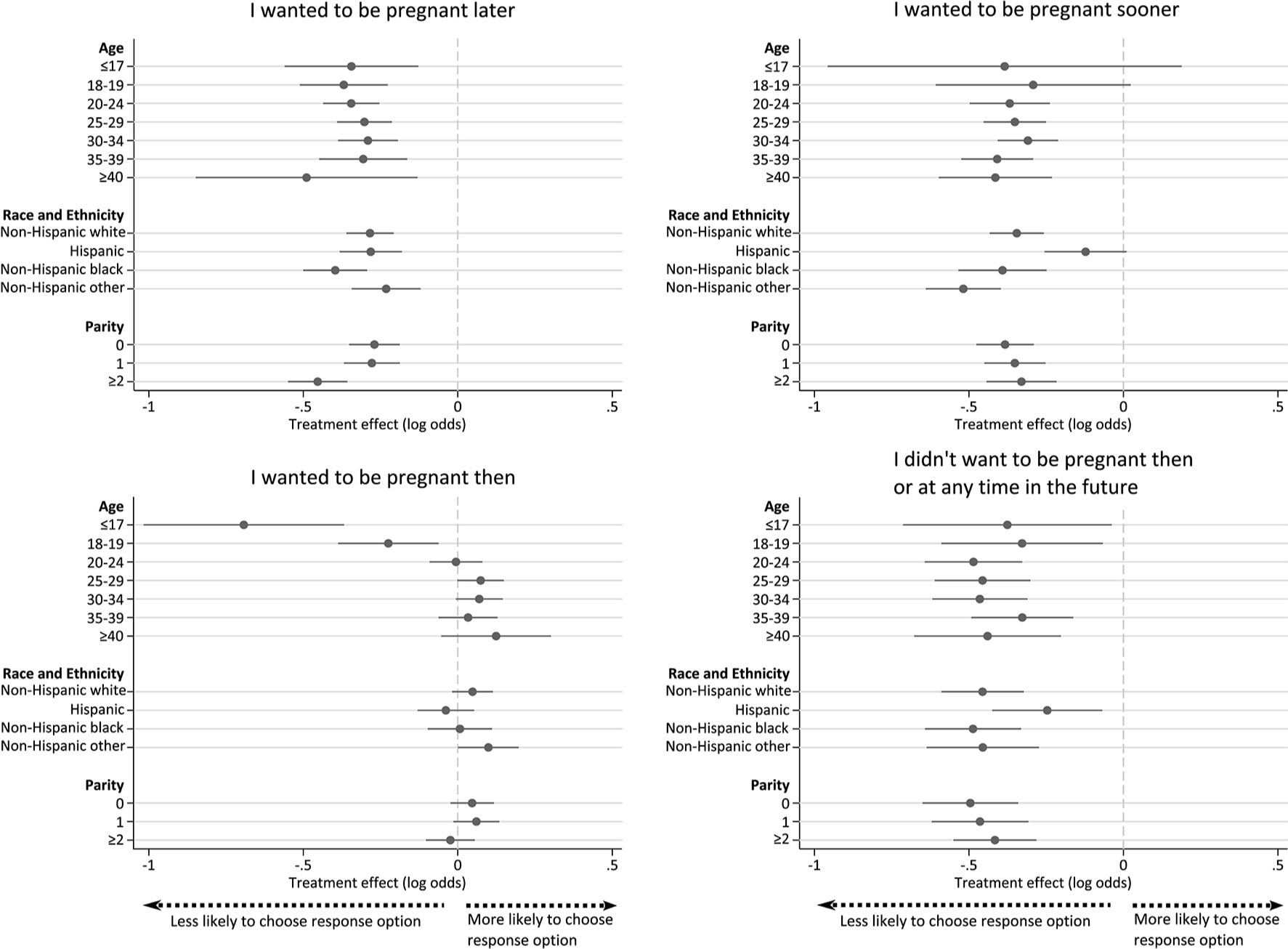

Effects of Question Change, by Age, Race/Ethnicity, and Parity

We found no significant interactions between treatment and age for any intention category except “I wanted to be pregnant then” (Figure 3). Women aged ≤17 and 18-19 had significantly larger treatment effects than women aged 25-29. These younger women were less likely to select “I wanted to be pregnant then” after the question change than before the question change (with changes in log odds of –0.69 for women aged ≤17 and –0.22 for women aged 18-19). We found no changes among women in other age groups.

Figure 3. Predicted treatment effects by age, race/ethnicity, and parity, Pregnancy Risk Assessment Monitoring System (PRAMS) survey, 2012-2014. The question asked was, “Thinking back to just before you got pregnant with your new baby, how did you feel about becoming pregnant? (Check

Similarly, we found no significant interactions between treatment and race/ethnicity for any response category except “I wanted to be pregnant sooner” (Figure 3). Hispanic women had significantly smaller treatment effects than non-Hispanic white women. The question change had a significantly negative effect among all non-Hispanic women (of any race) on the odds of selecting this category. Among Hispanic women, the effect of the question change was statistically indistinguishable from zero.

We found significant interactions between treatment and parity, even after adjusting for mother’s age (Figure 3). Declines in the odds of choosing “I wanted to be pregnant later” after the question change were steeper among women with ≥2 previous births (β = –0.45) than among women with no previous births (β = –0.27) or 1 previous birth (β = –0.28). We found no significant differences in estimated treatment effects on the other response categories.

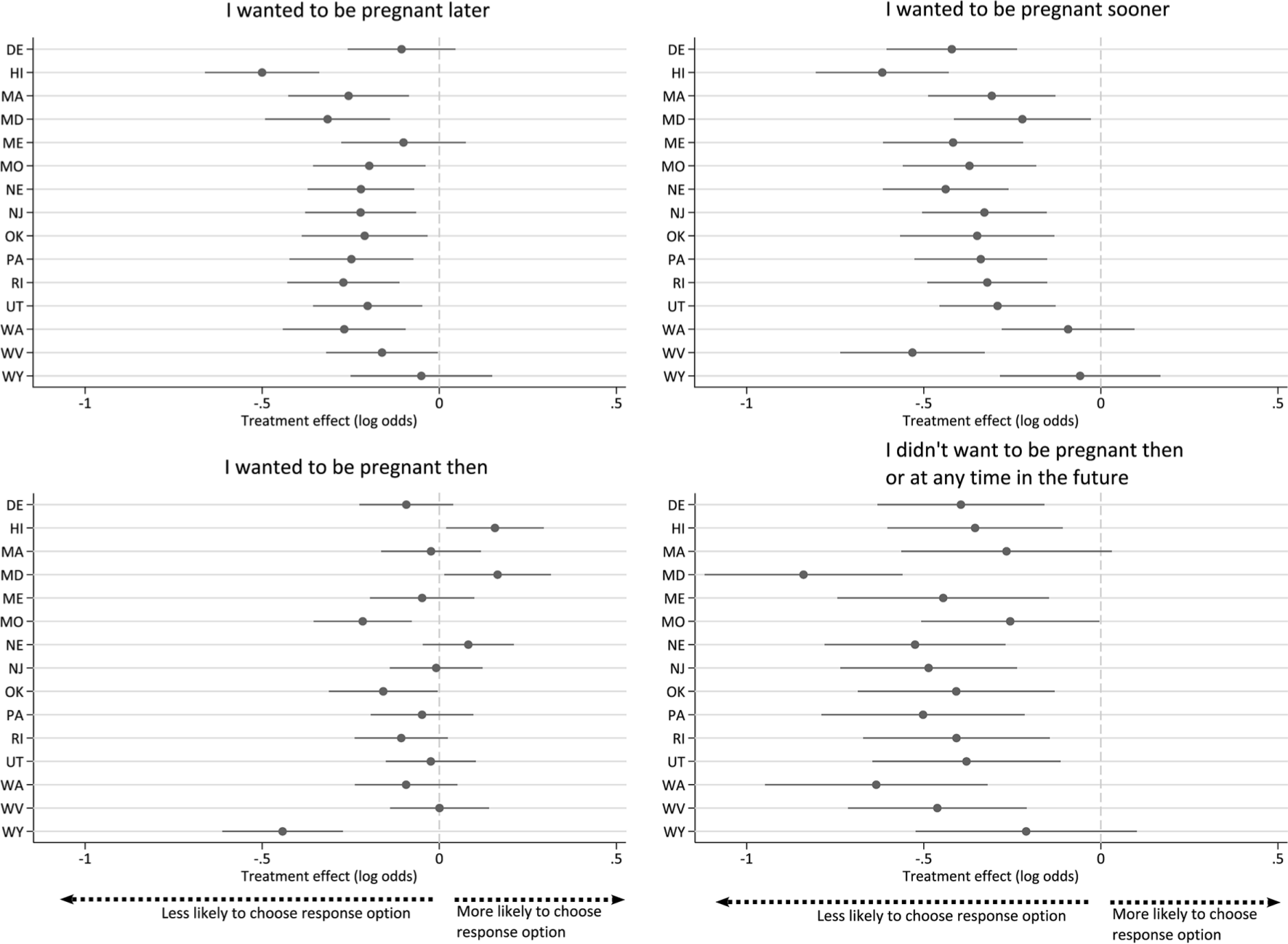

Effects of Question Change, by State

When we estimated the effects of the question change on the proportion of women choosing each response category by state, we found significant heterogeneity by state in each response category except “I didn’t want to be pregnant then or at any time in the future” (Figure 4).

Figure 4. Predicted treatment effect among states with data for all years of the study period, Pregnancy Risk Assessment Monitoring System (PRAMS) survey, 2012-2014. The question asked was, “Thinking back to just before you got pregnant with your new baby, how did you feel about becoming pregnant? (Check

Discussion

Expanding the measure of pregnancy intentions in the PRAMS surveys to include a response option for women who recall having been unsure of their desire for pregnancy before their most recent pregnancy appears to offer a more salient answer category for a significant proportion of women than the more limited options available in previous surveys. In 2012-2014, 13%-15% of women whose pregnancy led to a live birth chose the new answer option, “I wasn’t sure what I wanted.”

It was inevitable that the addition of another response option would affect the proportions of respondents choosing the existing options. However, the addition did not affect the likelihood of women selecting all other options equally. Instead, it drew responses away from all answer choices except “I wanted to be pregnant then.” The pre-2012 construct of the pregnancy intention question may have been constraining women’s responses by failing to recognize uncertainty as a valid state of mind before pregnancy, thereby forcing respondents to choose among answer choices that may not have accurately represented how they recalled their attitudes toward pregnancy.

The effect of the question change on the proportion of women who said “I wanted to be pregnant sooner” suggests that this group may be heterogeneous in their pregnancy desires. Past research has paid little attention to this group or characterized them as women who were having difficulty conceiving 9 or had been trying to get pregnant and conceived later than they preferred. These pregnancies are often interpreted as unambiguously positive and wanted. The substantial shift from this category when the “not sure” option is included suggests that not all women who chose “I wanted to be pregnant sooner” had this viewpoint. For example, some women who responded that they had wanted to become pregnant sooner may have been reflecting on a better time in their life for that pregnancy rather than indicating a strong desire for pregnancy. In addition, not all women have clear preferences or plans for the timing of their pregnancies. 25 Other research suggests that uncertainty and changes in pregnancy intentions across the life course are common.26-28

Differential effects of the question change by age, race/ethnicity, and parity also suggest that the change may affect estimates of trends in differences between demographic groups if researchers compare estimates before and after 2012. Research examining the relationship between pregnancy intentions and other measures may also be affected. We investigated only 3 demographic characteristics in this analysis, but treatment effects of the question change across other characteristics are likely to differ as well; consequently, estimates of the association between respondent characteristics and pregnancy intention may be affected in unpredictable ways. We recommend that researchers not pool PRAMS survey data across the year in which the question change occurred if the measure of pregnancy intention is used in analyses.

The question change is likely to have substantial effects on state-level estimates of the percentage of births from pregnancies categorized as unintended (ie, mistimed and unwanted). 29 In particular, estimates of unintended pregnancy from the 2012 surveys and later are likely to be lower than estimates from previous years. In many states, the proportion of births resulting from unintended pregnancies may appear to have declined from 2011 to 2012, but the decrease may be entirely attributable to the addition of the new answer option. That the effect of the question change varied substantially by state of residence further complicates this problem. For example, if one were to recalculate unintended pregnancy rates for 2011 and substitute the proportion of births in each response category for 2012, unintended pregnancy rates in 2011 would be 16% lower, on average; percentage declines would range from 3% (in Maine) to close to 30% (in Georgia). Thus, any ongoing surveillance of pregnancy intentions at the state level should not consider estimates before 2012 to be comparable with estimates after 2012.

Limitations

This study attempted to infer causal effects from observational data. Some important and unobserved factors may not be adequately accounted for in our analyses, although regression discontinuity-in-time designs are generally robust to omission of unobservable data as long as the functional form is correctly specified. 23

In particular, if there were events that occurred at the beginning of 2012 (coinciding with the question change in PRAMS) that affected how women in the country, or women in a particular state, might respond to questions characterizing their desires before pregnancy, then our results could be confounded with that change. However, we know of no national or state policy changes that went into effect at the beginning of 2012 that would have substantially affected women’s responses.

There was a slight reordering of the answer categories in 2012, but we assumed its impact was minimal: the reordering affected only the first and second response options, and respondents were able to see all options simultaneously. However, because both changes occurred simultaneously—an additional response option and reordering—we have no way of distinguishing the relative effect of each change.

Finally, given the number of interactions examined, some of our significant results may have been due to chance. We did not explicitly adjust for multiple comparisons; instead, we presented all comparisons tested, with an acknowledgment of the possibility of type I error rates higher than our nominal level of 0.1%. Our α level of .001 approximates a simple Bonferroni correction to a conventional α of .05: including all tested levels of the 3 interactions plus the treatment covariate and across models for each of the 4 response options, we conducted 48 substantive hypothesis tests; .05/48 ≈ .001.
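The arithmetic behind the count of 48 tests can be checked with a short calculation. The breakdown below is one reading of the text, not a statement of the authors' bookkeeping: it assumes that each interaction contributes one test per non-reference level (7 age groups, 4 race/ethnicity groups, and 3 parity groups, each with one reference group), plus one test for the treatment covariate, repeated across the 4 response options.

```python
# Assumed breakdown: non-reference levels per interaction term.
age_levels = 7 - 1             # 7 age groups, 1 reference group
race_ethnicity_levels = 4 - 1  # 4 groups, 1 reference group
parity_levels = 3 - 1          # 3 groups, 1 reference group
treatment_tests = 1            # the treatment covariate itself

tests_per_option = (age_levels + race_ethnicity_levels
                    + parity_levels + treatment_tests)
response_options = 4           # the 4 options present before and after 2012

n_tests = tests_per_option * response_options
bonferroni_alpha = 0.05 / n_tests

print(n_tests)                     # total hypothesis tests under this reading
print(round(bonferroni_alpha, 5))  # Bonferroni-adjusted alpha
```

Under this reading, the total comes to 48 tests, and .05 divided by 48 is slightly above .001, so the α level used is marginally stricter than the Bonferroni threshold.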

Conclusions

The effects of the PRAMS question change in 2012 identified in this analysis underscore the need for further work on how best to characterize women’s childbearing desires. Our results do not suggest that research using the “new” pregnancy intention question in PRAMS is less valid than previous research; in fact, the substantial proportions of women selecting “I wasn’t sure what I wanted” imply latent demand for an option describing uncertainty about fertility intentions before pregnancy. The addition of the response option in PRAMS to capture uncertainty related to pregnancy desires has likely improved the measure. In this analysis, we found that the question change led to insights about how response option selection may change when additional response options are offered and how this movement may alter our interpretations of the existing response options. Researchers using PRAMS data should be aware of the effect of the question change in future studies.

Footnotes

Acknowledgments

The authors thank Sarah Cowan, Liza Fuentes, Sarah Hayford, Megan Kavanaugh, and Shivani Kochhar for their comments on this article. We also gratefully acknowledge the PRAMS Working Group and the Centers for Disease Control and Prevention for collection and provision of the data used in our analyses.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors declared the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Susan Thompson Buffett Foundation (grant number 576.04).