Abstract

Research indicates that teachers’ theories of intelligence (incremental vs. entity) are likely to affect their teaching practices, and that some teachers hold lower expectations for students with learning disabilities. This study explored the relationships between college instructors’ theories of intelligence and the feedback they provided on a student’s writing sample under two conditions: the student’s dyslexia was mentioned versus not mentioned. One hundred and one college instructors completed a survey. Results of path analysis indicated that instructors who endorsed the incremental theory of intelligence gave significantly more encouraging comments than those who endorsed the entity theory. Instructors’ theories of intelligence did not predict the grade assigned, the number of weaknesses pointed out, or the number of suggestions provided. Instructors informed of the student’s dyslexia gave significantly higher grades than those not informed, but their feedback did not differ. No significant interaction between instructors’ theories of intelligence and awareness of student dyslexia was found.

Keywords

Introduction

Research indicates that teachers’ perceptions of students’ learning potential are likely to be influenced by teachers’ theories of intelligence (intelligence being malleable vs. fixed) (Rattan et al., 2012; Webb, 2015) and students’ learning disability labels (Klehm, 2014; Osterholm et al., 2007). These findings suggest that the ways teachers assign grades and provide feedback on students’ coursework are likely to be affected not only by students’ performance per se, but also by teachers’ theories of intelligence and their awareness of students’ learning disabilities. However, not much research has directly examined the effect of college instructors’ theories of intelligence and awareness of students’ learning disabilities on the grade and feedback they provide for their students. College students with learning disabilities consider their interactions with instructors as a major factor contributing to their educational achievement and believe that they benefit from instructors who convey clear expectations and challenge students in the learning process (Madaus et al., 2003). Yet, it is not clear whether such student-instructor interactions and instructional practices are experienced regularly by college students with learning disabilities. It is also unclear how these practices are related to instructors’ theories of intelligence and/or their awareness of students’ learning disabilities.

To address the knowledge gaps described above, this study sought to explore potential relationships between two predictors (college instructors’ theories of intelligence and awareness of a student’s dyslexia) and the grade and feedback college instructors provided based on a piece of student writing. Since previous studies have indicated that people’s malleable vs. fixed implicit theories are associated with their tendency to form stereotypes (Levy & Dweck, 1999; Rydell et al., 2007) and can change in different contexts (Leith et al., 2014), we further investigated whether the relationship between college instructors’ theories of intelligence and the grade and feedback they provided varied with their awareness of the student’s dyslexia. Dyslexia is a learning disability characterized by inaccurate word reading, spelling, and difficulty in understanding the meaning of what is read (American Psychiatric Association, 2013). Students with dyslexia often find it difficult to process various sounds within words, to spell words correctly, to comprehend what they are reading, and/or to express their ideas in writing (Cortiella & Horowitz, 2014). Dyslexia was the disability focused on in our study because reading and writing constitute an important part of academic learning (Edgecombe et al., 2014).

Instructors’ Theories of Intelligence and Their Instructional Practices

People’s theories of intelligence can be categorized into two types: incremental and entity. The incremental theory refers to the belief that intelligence is malleable; the entity theory refers to the belief that intelligence is fixed (Dweck, 2000; Dweck & Leggett, 1988). Compared to the entity theory, the incremental theory of intelligence has been associated with healthier motivational patterns (Davis et al., 2011; Dinger & Dickhäuser, 2013), more positive learning behaviors (Rickert et al., 2014), higher achievement (Blackwell et al., 2007), greater performance (Dar-Nimrod & Heine, 2006), more productive ways of handling failures (Nussbaum & Dweck, 2008), and better adaptability when facing novel and/or uncertain situations (Martin et al., 2013).

Although many studies have indicated students’ incremental theory of intelligence leads to positive effects on motivation and learning, very few studies have examined the effects of teachers’ theories of intelligence on their teaching practices. The existing evidence suggests that teachers’ theories of intelligence are likely to affect their evaluations of student work, the feedback provided to their students (Rissanen et al., 2018) and their students’ course interest and expectations of academic success (LaCosse et al., 2020). Rattan et al. (2012) surveyed 41 graduate student instructors at a university, asking them to imagine meeting with a student who failed the first test of the academic year. Their findings indicated that the participants holding an entity theory were more likely to attribute the student’s failing grade to a “lack of math intelligence,” to hold lower expectations for the student’s future achievement, and to adopt “comforting and potentially unhelpful practices” (p. 734). In this study, comforting practices referred to providing feedback with the intention to console the imagined student for his/her lack of math intelligence (e.g., “Explain that not everyone has math talent-some people are ‘math people’ and some people aren’t.”) (p. 733). Unhelpful practices referred to making suggestions that would lower the student’s engagement (e.g., “Assign less math homework.”) (p. 733). Further, their surveys of 54 university students revealed that the students who received such “comfort feedback” reported less motivation to study and lower expectations for their final grades.

The review of previous research suggests that teachers’ theories of intelligence may implicitly affect their evaluations of student performance and their feedback provided to students, which potentially influence students’ motivation, effort, and achievement. However, these studies did not include students with learning disabilities as participants. Furthermore, in Rattan and colleagues’ study (2012), the participants were asked to respond to an imaginary scenario instead of generating their own feedback based on formal evaluations of students’ actual work. Thus, it remains unclear whether a similar instructional pattern exists when instructors are informed that their students have a learning disability or when they are provided with a student’s sample work for evaluation. Therefore, it is imperative to examine the influence of instructors’ theories of intelligence by analyzing their feedback to an actual assignment written by a student with a learning disability.

Students with Learning Disabilities and Teacher Beliefs/Practices

The potential implications of the aforementioned findings related to the education of postsecondary students with learning disabilities merit special attention. Students with learning disabilities who attend postsecondary institutions are protected by Section 504 of the Rehabilitation Act of 1973 and the Americans with Disabilities Act Amendments Act of 2008. Section 104.44 in subpart E of Section 504 specifically stipulates that proper modifications and/or accommodations should be made to enable students with disabilities to demonstrate their mastery of the essential academic requirements for a course or program. In the last decades, the number of students with learning disabilities entering postsecondary education institutions has increased significantly (Newman et al., 2010). Given the increased number of students with learning disabilities enrolled in higher education, it is important to examine whether the educational practices in postsecondary institutions meet the needs of students with learning disabilities as intended by the above-mentioned laws.

As of 2016, approximately 19% of undergraduate students in the United States reported having a disability (National Center for Education Statistics, 2018). However, among those students with disabilities who received special education services in high school, only one-fourth of them continued to receive special accommodations and supports in college (Horowitz et al., 2017). This suggests that the learning experience of the majority of college students with learning disabilities is under the direct influence of their instructors. Therefore, it is crucial to understand the ways college instructors teach and support students with learning disabilities.

Learning disabilities are defined as conditions that “arise from neurological differences in brain structure and function and affect a person’s ability to receive, store, process, retrieve or communicate information” (Cortiella & Horowitz, 2014, p. 3). Learning disabilities are not directly related to intelligence, vision, or hearing issues (Horowitz et al., 2017). Compared to their peers without disabilities, students with learning disabilities often need more time to comprehend a new concept or learn a new skill (Cortiella & Horowitz, 2014), and tend to obtain lower scores on achievement tests (Sparks & Lovett, 2009). Previous research indicates that students with learning disabilities tend to believe in the entity theory of intelligence and hold relatively low academic self-efficacy (Baird et al., 2009; DuPaul et al., 2017; May & Stone, 2010). In a study conducted by Davis and colleagues (2011), university students who endorsed the entity theory experienced stronger feelings of helplessness and lower self-efficacy than students who endorsed the incremental theory when they were led to believe they would compete against academically competitive students on a math test. Since students with learning disabilities tend to hold relatively low academic self-efficacy, believing in the entity theory would be particularly detrimental to their motivation to learn and may negatively impact their academic achievement. Therefore, it is especially important for students with learning disabilities to receive encouraging comments and useful feedback from their instructors to maximize their learning potential (cf. Truax, 2018; Wingate, 2010).

Given that instructors’ constructive feedback serves a crucial role in students’ learning, it is imperative to examine the relationship between college instructors’ awareness of students’ learning disabilities and their feedback to students. Some studies suggest that teachers might have lower expectations for the academic performance of students with learning disabilities. Klehm (2014) found that middle school general education teachers were less likely than special education teachers to believe that students with disabilities were capable of benefiting from instruction. After reviewing 34 studies related to learning disability labels, Osterholm et al. (2007) indicated that such labels led both K-12 teachers and college instructors to lower their expectations. However, Osterholm et al.’s review also noted that teachers’ grading of a student’s writing and the support they offered to the student did not vary with their awareness of the student’s learning disabilities. Based on this research, it is likely that college instructors provide instructional feedback and assign grades to students fairly, without being biased by learning disability labels.

Previous research has not yielded consistent results with regard to the changeability of theories of intelligence across contexts. Research suggests that people’s theories of intelligence may differ across academic domains (e.g., holding a fixed view of intelligence in the arts and math, but an incremental view of intelligence in the humanities; Patterson et al., 2016; Shively & Ryan, 2013) or across contexts (e.g., adopting an incremental view in order to forgive favored political candidates’ mistakes vs. a fixed view in order to confirm that past mistakes were caused by political opponents; Leith et al., 2014). However, with regard to learning disabilities, Gutshall (2013) compared K-12 teachers’ theories of intelligence between two hypothetical scenarios, one where the student was depicted as having a learning disability and the other where the student was depicted as having no disability. The study found no difference in teachers’ theories of intelligence between the two scenarios. Since Gutshall’s study did not focus on college instructors, it is important to understand whether the influence of college instructors’ theories of intelligence on their instructional practices depends on their awareness of students’ learning disabilities.

Present Study

Taken together, the existing literature suggests that teachers’ views toward students with learning disabilities may be influenced by their theories of intelligence, and such views may be associated with their instructional practices. To our knowledge, however, no studies have examined how college instructors’ theories of intelligence relate to their instructional practices for students with and without learning disabilities.

To address the research gap, this study aimed to explore the relationship between college instructors’ theories of intelligence and the instructional feedback they provided based on a piece of student writing under two conditions: the instructor was informed of the student’s dyslexia versus no disability was mentioned. The instructors were randomly assigned into these two conditions, while the other predictor (instructors’ theories of intelligence) was based on the participants’ self-reports.

Specifically, this study addressed the following research questions. (a) Based on the same piece of student writing, did college instructors’ theories of intelligence predict: the grade they assigned, the number of encouraging comments they made, the number of weaknesses they pointed out, and the number of specific suggestions they provided? (b) Based on the same piece of student writing, did college instructors’ awareness of the student’s dyslexia predict: the grade they assigned, the number of encouraging comments they made, the number of weaknesses they pointed out, and the number of specific suggestions they provided? (c) Did the relationship between the instructors’ theories of intelligence and the grade/feedback provided depend on the experimental condition (student’s dyslexia mentioned vs. not mentioned)?

Based on the nature of theories of intelligence and the pattern revealed in Rattan et al.’s study (2012), we hypothesized that college instructors who embrace the incremental theory would make more encouraging comments and provide more specific suggestions to help the student improve. Due to lack of previous studies that addressed associations of instructors’ theories of intelligence with the grade they assigned and the number of weaknesses they pointed out, we did not formulate specific hypotheses for these two dependent variables. Previous research suggests that teachers’ feedback and grading might not be biased by their awareness of students’ learning disabilities (Osterholm et al., 2007). Therefore, we hypothesized that the number of encouraging comments and specific suggestions provided, the number of weaknesses pointed out, and the assigned grade would not vary with instructors’ awareness of the student’s dyslexia (knowing vs not knowing). Previous research suggests that K-12 teachers’ theories of intelligence did not vary with students’ disabilities (Gutshall, 2013). Although Gutshall’s study did not focus on college settings, we hypothesized that the effect of college instructors’ theories of intelligence on the grade/feedback they provide would not change due to their awareness of the student’s dyslexia. Therefore, there would be no interaction between the instructors’ theories of intelligence and their awareness of the student’s dyslexia.

Method

Participants

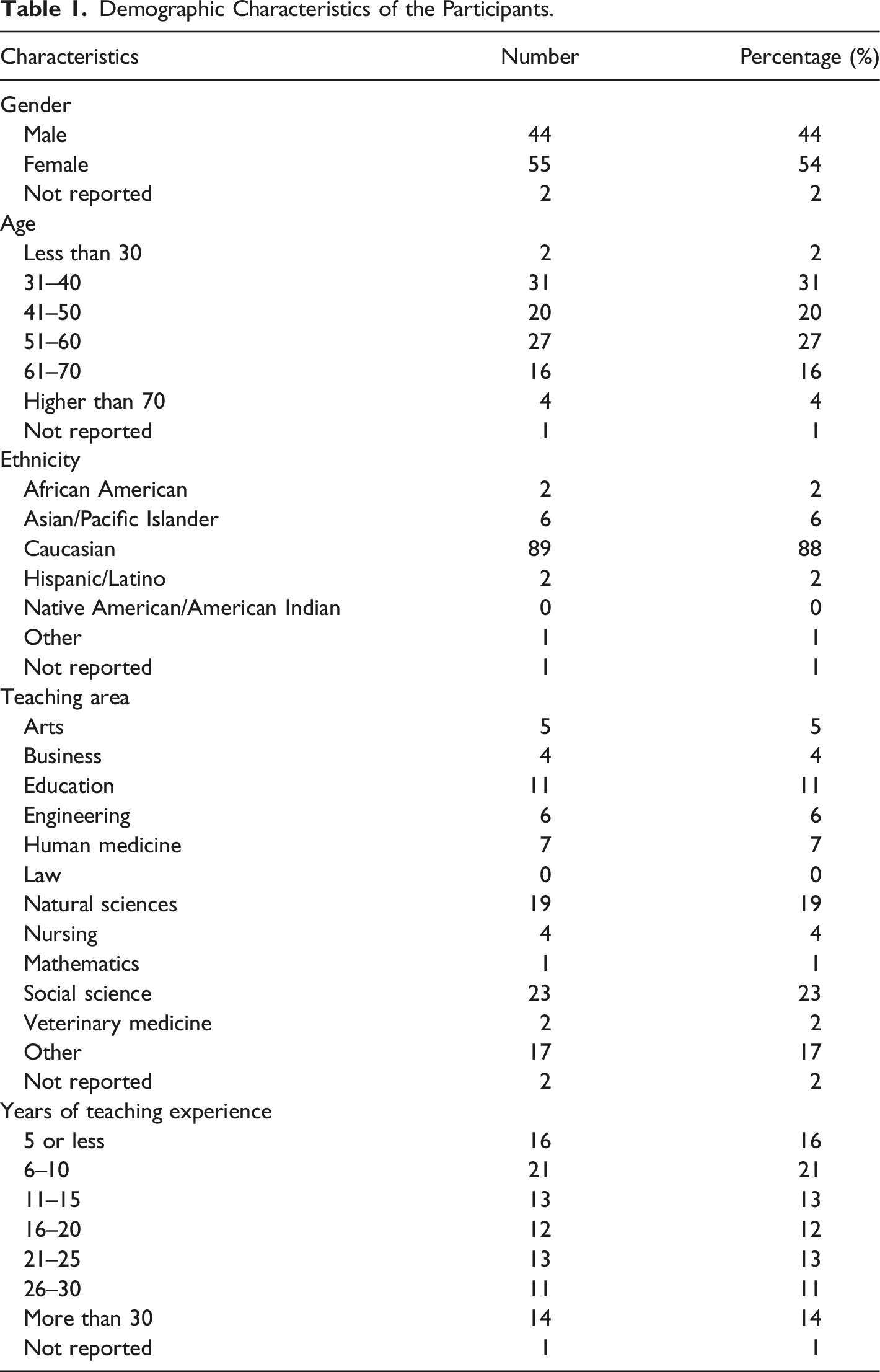

Demographic Characteristics of the Participants.

Procedure

After receiving Institutional Review Board (IRB) approval, an email was sent to all instructors in a large Midwestern public research university in the United States to invite them to complete an online survey via Qualtrics. The email included a description of the topic of the study (college instructors’ theories of intelligence and feedback provided for students with or without dyslexia), the participation process (completing an online survey that would take approximately 15 minutes), and the content of the survey (five questions related to basic demographic information, two questions related to theories of intelligence, and a task to grade a paragraph of a student’s writing sample and provide feedback for the student). Also included were the potential participant’s right to voluntarily participate in the study or withdraw from the study without penalty, the proper contact information if the participant had concerns or questions about this study or about his or her role and rights as a research participant, and a link to the online survey. Each potential participant was invited to indicate his or her voluntary agreement to participate in this study by completing the online survey. Approximately 7 weeks later, another email was sent to all instructors who had not participated in this study to invite them one more time. The participants were randomly assigned by Qualtrics to one of two experimental conditions: (a) being informed that the piece of writing was from a student with dyslexia, and (b) no mention of any disability. The participants were not aware of this manipulation and were not debriefed about it after they completed the survey. This design imitated the natural condition where college instructors may or may not know whether a certain student has a learning disability, since a large percentage of college students with learning disabilities do not report it (Horowitz et al., 2017).
We did not set a time limit for each participant to complete the grading/feedback task, because instructors normally have the freedom to decide how much time they spend on grading and providing feedback for a student. The data collection ended 2 weeks after the second invitation was sent. Results of chi-square tests indicated that the two groups were similar in all demographic characteristics (i.e., gender, age, ethnicity, teaching area, and years of teaching experience).

Measure

The survey contained five items for demographic information (gender, age, ethnicity, teaching area, and years of teaching experience), and two Likert-scale items taken from Dweck’s (2000) work to assess the participant’s theory of intelligence. The participants were asked to choose the response that showed how much they agreed with each of two statements: (1) “To be honest, you can’t really change how intelligent you are.” (2) “You can learn new things, but you can’t really change your basic intelligence.” According to Dweck (2000), each of these items can be used on its own. The possible score of each item ranged from 1 (most entity) to 6 (most incremental).

After responding to the two items that assessed theories of intelligence, the participants were asked to provide a letter grade for a student writing sample and give feedback for the student. One version of the survey described the student as having dyslexia; the other version did not mention any disability. The writing sample was the introductory paragraph of a paper written by a student with dyslexia for one of her upper level (300-level) undergraduate course assignments. It contained minor grammar and sentence-structure errors of the kind students with dyslexia are likely to make because of their tendency to mix up sounds and/or letters and to omit words. We used a writing sample that should be comprehensible to college instructors from all disciplines. The writing sample was collected and used with permission. The instructions to the participants for this task and the entire writing sample are in the appendix.

Data Coding

The scores from the two items related to theories of intelligence were added together to represent the theory-of-intelligence score. The Cronbach’s alpha of the theory-of-intelligence scale in this study was 0.96, indicating a relatively high internal consistency. The experimental condition with no disability mentioned was coded as “0”; the other condition with dyslexia mentioned was coded as “1.” The letter grade each participant assigned was converted into a scale of A = 4, A- = 3.67, B+ = 3.33, B = 3, B- = 2.67, C+ = 2.33, C = 2, C- = 1.67, D+ = 1.33, D = 1, D- = 0.5, and E = 0.
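The coding rules in this paragraph can be illustrated with a minimal sketch. The grade-point values are copied from the scale listed above; the function name `code_response` and the dictionary keys are hypothetical and are not taken from the study's materials.

```python
# Grade-point scale exactly as listed in the Data Coding section.
GRADE_POINTS = {
    "A": 4.0, "A-": 3.67, "B+": 3.33, "B": 3.0, "B-": 2.67,
    "C+": 2.33, "C": 2.0, "C-": 1.67, "D+": 1.33, "D": 1.0,
    "D-": 0.5, "E": 0.0,
}


def code_response(item1, item2, letter_grade, dyslexia_mentioned):
    """Return the coded variables for one participant (hypothetical helper)."""
    for item in (item1, item2):
        if not 1 <= item <= 6:
            raise ValueError("each item is scored from 1 (entity) to 6 (incremental)")
    return {
        # Sum of the two items, so the possible range is 2 to 12.
        "toi_score": item1 + item2,
        # 0 = no disability mentioned, 1 = dyslexia mentioned.
        "condition": 1 if dyslexia_mentioned else 0,
        "grade": GRADE_POINTS[letter_grade.strip().upper()],
    }
```

Under these assumptions, a participant in the dyslexia condition who answered 5 and 6 on the two items and assigned a B+ would be coded with a theory-of-intelligence score of 11, a condition of 1, and a grade of 3.33.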

Each participant’s comments were coded into three categories: encouraging comments made, weaknesses pointed out, and specific suggestions provided. The coding was done by two observers with the following rules. (a) If a sentence pointed out a positive aspect of the writing or directly praised the student (e.g., “The content conveys some helpful ideas.” or “You’ve made a good start!”), it was coded as an “encouraging comment.” (b) If a sentence pointed out a negative aspect of the writing without a specified direction for improvement (e.g., “Disjointed sentences.”), it was coded as a “weakness.” (c) If a sentence provided a suggestion for an action to take or pointed out a specific area that needed to be improved (e.g., “Please see me to make a plan to get extra writing help.” or “I’d suggest that you focus on avoiding generalizations by working closely with evidence and sources to identify specific examples.”), it was coded as a “suggestion.” (d) If a sentence contained two or more ideas more or less “along the same line” (e.g., “The writer has many grammatical errors that make the reading difficult to understand.”), it was coded as one incident in the related category (e.g., “weakness”). (e) If a sentence contained two ideas belonging to two categories in the coding system (e.g., “The ideas presented here are well laid out, but the grammar could use some improvement.”), it was coded as one incident in each category (e.g., an “encouraging comment” and a “suggestion”).

Both observers first coded all the feedback independently. The inter-observer agreements for the number of encouraging comments made, number of weaknesses pointed out, and number of suggestions provided were assessed with Cohen’s kappa, at 0.83, 0.68, and 0.67, respectively, which reached/exceeded the range of substantial agreement (kappa between 0.61 and 0.80; Vierra & Garrett, 2005). Additionally, correlations between the two independent coders for the number of encouraging comments made, number of weaknesses pointed out, and number of suggestions provided were high, at 0.94, 0.90, and 0.96, respectively. Each disagreement was resolved by a discussion that led to a consensus between the two data coders.
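For readers unfamiliar with Cohen's kappa, the following is a minimal sketch of the statistic. It assumes that each participant's count from each coder is treated as a nominal category, which the text does not specify; the function name and the example data are hypothetical.

```python
from collections import Counter


def cohens_kappa(coder_a, coder_b):
    """Cohen's kappa for two coders rating the same participants."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("both coders must rate the same non-empty set of participants")
    n = len(coder_a)
    # Proportion of participants on which the coders agree exactly.
    observed = sum(a == b for a, b in zip(coder_a, coder_b)) / n
    # Agreement expected by chance, from each coder's marginal frequencies.
    freq_a = Counter(coder_a)
    freq_b = Counter(coder_b)
    expected = sum(freq_a[k] * freq_b[k] for k in freq_a) / (n * n)
    if expected == 1:
        raise ValueError("kappa is undefined when chance agreement equals 1")
    return (observed - expected) / (1 - expected)


# Hypothetical counts of encouraging comments assigned by two coders.
coder_1 = [0, 1, 2, 1, 0, 3, 1, 2]
coder_2 = [0, 1, 2, 2, 0, 3, 1, 1]
kappa = cohens_kappa(coder_1, coder_2)
```

Because kappa corrects raw agreement for chance agreement, it can be noticeably lower than the correlation between the two coders' counts, which is consistent with the pattern reported above.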

Data Analysis

We used Muthén and Muthén’s (1998–2012) Mplus 7.0 to conduct path analysis for examining whether the four outcome variables (i.e., grade assigned, number of encouraging comments made, number of weaknesses pointed out, and number of specific suggestions provided) were predicted by theory-of-intelligence score, experimental condition (0 = no disability mentioned; 1 = dyslexia mentioned), and the interaction between the two predictors for the following reasons: (a) The grade and the three types of instructor feedback were four outcome variables that were considered as being interdependent within subjects; (b) path analysis allows the estimation of the associations of the four outcome variables with the independent variables to be executed in one model, which can account for interdependence of these outcome variables within subjects and examine the overall effect of the independent variables on all the outcome variables.

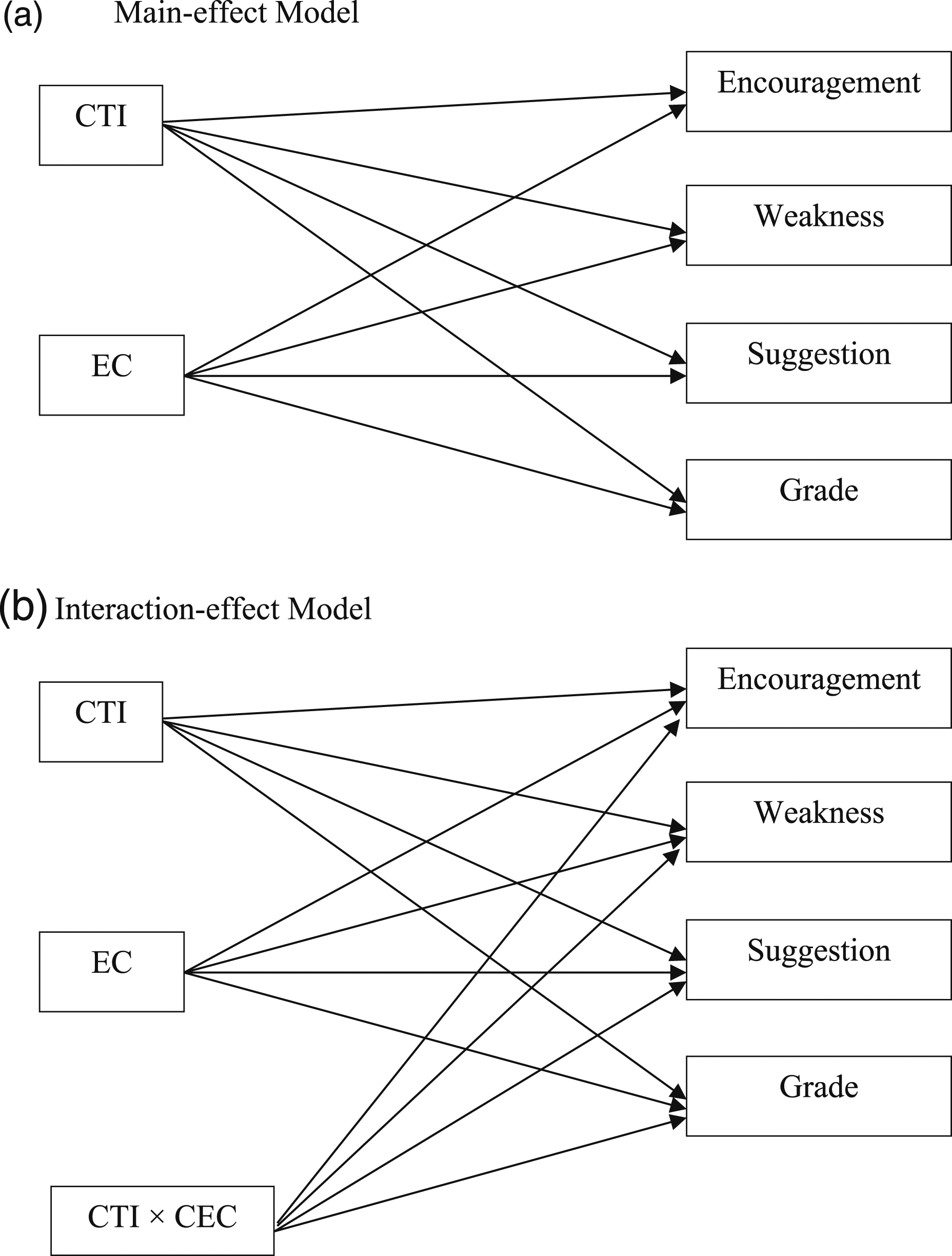

Two path-analysis models were tested. The first was the main-effect model: each outcome variable was predicted by centered theory-of-intelligence score and experimental condition (Figure 1(a)). The theory-of-intelligence scores were centered at their mean so that the effect of experimental condition could be interpreted meaningfully, as the effect of experimental condition when the theory-of-intelligence score was at its mean value (Field, 2013). The second was the interaction-effect model: each dependent variable was predicted by centered theory-of-intelligence score, experimental condition, and centered theory-of-intelligence score × centered experimental condition (Figure 1(b)). The experimental condition scores were centered in the interaction term to avoid multicollinearity (Field, 2013).

Conceptual Map of Path-analysis Models. CTI = Centered Theory of Intelligence; EC = Experimental Condition (awareness/unawareness of student dyslexia); CEC = Centered Experimental Condition.
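The construction of the predictors for the two models can be sketched as follows. This is an illustration only: the function name, the dictionary keys, and the example values are hypothetical, and the actual models were estimated in Mplus rather than in Python.

```python
from statistics import mean


def build_predictors(toi_scores, conditions):
    """Build CTI, EC, and the CTI x CEC interaction term for each participant."""
    if len(toi_scores) != len(conditions) or not toi_scores:
        raise ValueError("inputs must be non-empty and of equal length")
    toi_mean = mean(toi_scores)
    cond_mean = mean(conditions)
    rows = []
    for toi, cond in zip(toi_scores, conditions):
        cti = toi - toi_mean    # centered theory-of-intelligence score
        cec = cond - cond_mean  # centered experimental condition
        rows.append({
            "CTI": cti,
            "EC": cond,             # uncentered 0/1 code used for the main effect
            "CTIxCEC": cti * cec,   # used only in the interaction-effect model
        })
    return rows


# Hypothetical data: four participants, two per condition.
rows = build_predictors([4, 8, 10, 6], [0, 1, 0, 1])
```

Centering both variables before multiplying them reduces the correlation between the product term and its components, which is the multicollinearity concern mentioned above.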

According to Huck (2004), a variable can be considered normally distributed if −1 < skewness < 1 and −1 < kurtosis < 2. The grade and the number of weaknesses pointed out met these criteria (skewness coefficients = −0.72 and 0.85; kurtosis coefficients = 0.22 and −0.44, respectively), whereas the distributions of the number of encouraging comments made and the number of specific suggestions provided were highly positively skewed (skewness coefficients = 1.66 and 1.69; kurtosis coefficients = 3.21 and 3.78, respectively). Additionally, the three types of instructor feedback (i.e., numbers of encouraging comments made, weaknesses pointed out, and specific suggestions provided) were coded as non-negative integer values (count outcomes). According to Long and Freese (2001), Poisson or negative binomial regression is appropriate for regressing count outcomes with positive skewness. Although the number of weaknesses pointed out was not highly skewed, its skewness coefficient was positive and it was a count outcome. Accordingly, we ran preliminary Poisson and negative binomial regression analyses separately for each type of instructor feedback, regressing it on the independent variables, to determine which of the two was more appropriate. For all three types of instructor feedback, the goodness-of-fit chi-squared tests for negative binomial regression were not statistically significant, while the tests for Poisson regression were. Given that a non-significant goodness-of-fit chi-squared test suggests a good model fit (Long & Freese, 2001), negative binomial regressions were used for all three types of instructor feedback in both models. Ordinary least squares (OLS) regression was used for the outcome variable “grade” in both models since “grade” was a continuous variable and normally distributed, as mentioned above.
In Mplus 7.0, by default, when negative binomial regression is integrated into a path-analysis model, the restricted maximum likelihood (REML) technique is used for estimating all parameters, which yields robust standard errors (Muthén & Muthén, 1998–2012). Also, because negative binomial regressions were used in both path-analysis models, conventional model fit indexes (e.g., CFI and RMSEA) could not be computed (Muthén & Muthén, 1998–2012). As suggested by Long and Freese (2001), for each model, a Wald chi-square test was conducted to test whether the overall effects of the two predictor variables on the outcome variables were significant. A Wald chi-square test is an omnibus test in which a significant result indicates that the model as a whole is better than the null model (i.e., a model in which all the coefficients of the effects of the predictor variables on the outcome variables are set to zero).
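The normality screening attributed to Huck (2004) above can be expressed as a short check. The sketch below assumes simple moment-based skewness and excess kurtosis; statistical packages often apply small-sample corrections, and the text does not say which formula was used. Function names are hypothetical.

```python
def moment_skew_kurtosis(values):
    """Moment-based skewness and excess kurtosis (no small-sample correction)."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two observations")
    center = sum(values) / n
    m2 = sum((v - center) ** 2 for v in values) / n
    if m2 == 0:
        raise ValueError("all values are identical")
    m3 = sum((v - center) ** 3 for v in values) / n
    m4 = sum((v - center) ** 4 for v in values) / n
    return m3 / m2 ** 1.5, m4 / m2 ** 2 - 3.0


def within_huck_range(skewness, kurtosis):
    """Rule cited in the text: -1 < skewness < 1 and -1 < kurtosis < 2."""
    return -1 < skewness < 1 and -1 < kurtosis < 2


# Coefficients reported in the text for the four outcome variables.
reported = {
    "grade": (-0.72, 0.22),
    "weaknesses": (0.85, -0.44),
    "encouraging comments": (1.66, 3.21),
    "suggestions": (1.69, 3.78),
}
screening = {name: within_huck_range(s, k) for name, (s, k) in reported.items()}
```

Applied to the reported coefficients, the rule passes the grade and the number of weaknesses and flags the other two outcomes, matching the description in the text.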

For the main-effect model, as shown in Figure 1(a), we tested whether the effect of each predictor on each outcome variable was significantly different from zero, which resulted in eight tests. For the interaction-effect model, as shown in Figure 1(b), we conducted 12 major tests of whether the effect of each predictor on each outcome variable was significantly different from zero. To control the experiment-wise (familywise) error rate across the eight tests for the main-effect model and the 12 tests for the interaction-effect model, we used the false discovery rate (FDR) procedure (Benjamini & Hochberg, 1995), because this approach provides better familywise error control than no multiplicity control and more statistical power than strict familywise error controlling methods (e.g., the Bonferroni procedure) (Cribbie, 2007). Following Benjamini and Hochberg (1995), the FDR procedure was conducted as follows. For the main-effect model, we ranked the p values of the parameter estimates of the eight effects from largest to smallest (p8–p1). Next, starting with p8, each p value was compared with its corresponding FDR α; once a p value was smaller than its FDR α, that effect and all effects with smaller p values were declared significant.
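The Benjamini and Hochberg (1995) step-up procedure referred to in this section can be sketched as follows. This is a generic illustration of the published procedure, not the authors' own script: the function name, the overall α of .05, and the example p values are assumptions.

```python
def benjamini_hochberg(p_values, alpha=0.05):
    """Return a list of booleans marking which tests are declared significant."""
    m = len(p_values)
    # Indices of the tests ordered from the largest p value to the smallest.
    order = sorted(range(m), key=lambda i: p_values[i], reverse=True)
    significant = [False] * m
    for position, idx in enumerate(order):
        rank = m - position           # the largest p value has rank m
        fdr_alpha = rank / m * alpha  # critical value for this rank
        if p_values[idx] <= fdr_alpha:
            # This test and every test with a smaller p value are significant.
            for j in order[position:]:
                significant[j] = True
            break
    return significant


# Hypothetical p values for eight tests (not the values from this study).
example = [0.001, 0.008, 0.039, 0.041, 0.042, 0.06, 0.074, 0.205]
flags = benjamini_hochberg(example)
```

In this hypothetical example only the two smallest p values fall at or below their critical values (.00625 and .0125), so only those two effects would be declared significant. Once a p value meets its critical value, the critical values for the remaining, smaller p values no longer need to be computed.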

Results

Descriptive Analysis

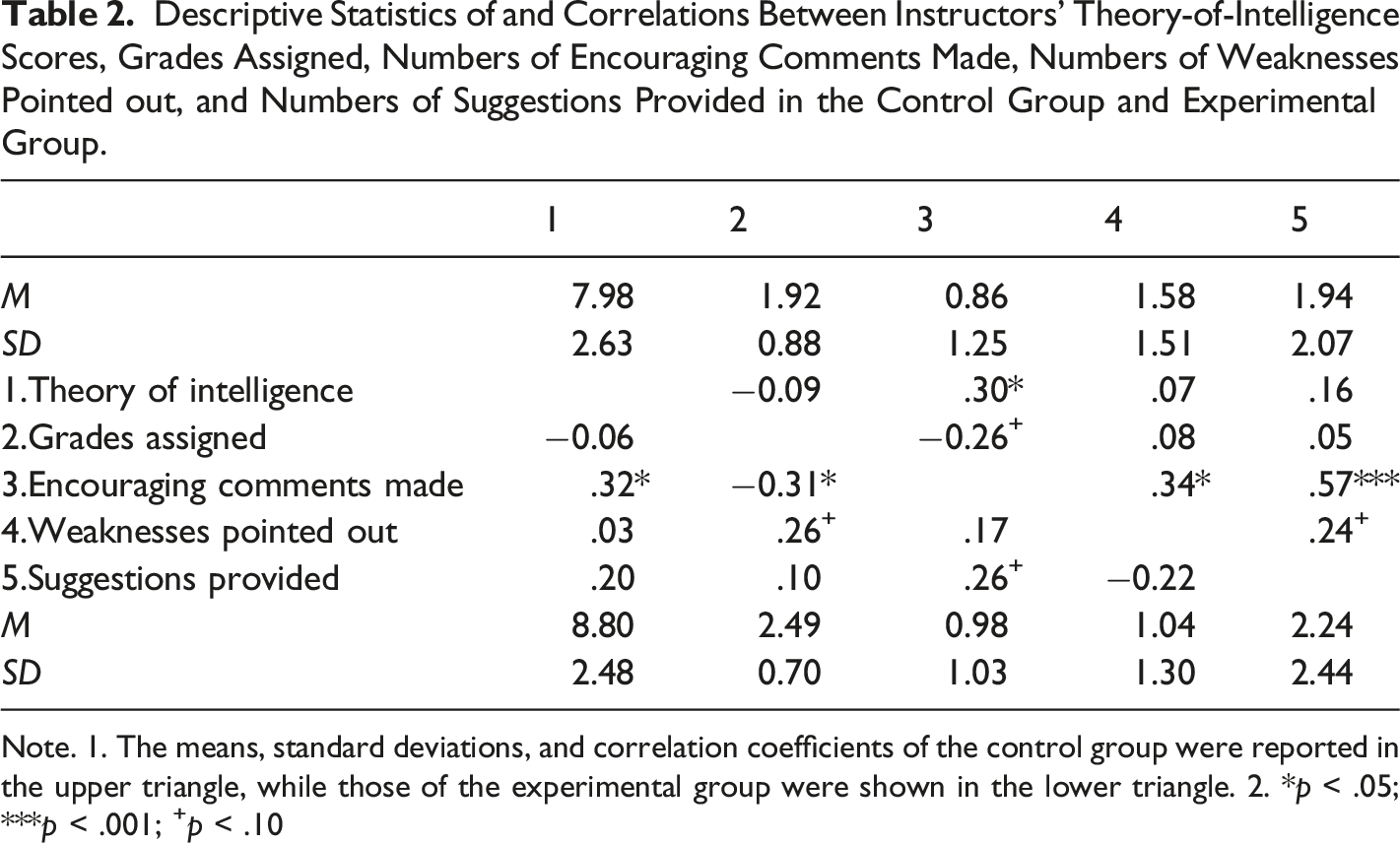

Descriptive Statistics of and Correlations Between Instructors’ Theory-of-Intelligence Scores, Grades Assigned, Numbers of Encouraging Comments Made, Numbers of Weaknesses Pointed out, and Numbers of Suggestions Provided in the Control Group and Experimental Group.

Note. 1. The means, standard deviations, and correlation coefficients of the control group were reported in the upper triangle, while those of the experimental group were shown in the lower triangle. 2. *p < .05; ***p < .001; +p < .10

Table 2 also shows the correlations between the above-mentioned variables in each group. In the control group, theory-of-intelligence scores were positively associated with the number of encouraging comments (r = 0.30, p = .032); the number of encouraging comments was significantly and positively associated with the number of weaknesses (r = 0.34, p = .017) and the number of suggestions (r = 0.57, p < .001), and marginally and negatively associated with grades (r = −0.26, p = .075); the number of weaknesses was marginally and positively associated with the number of suggestions (r = 0.24, p = .095). In the dyslexia group, theory-of-intelligence scores were positively associated with the number of encouraging comments (r = 0.32, p = .022); grades were significantly and negatively associated with the number of encouraging comments (r = −0.31, p = .031) and marginally and positively associated with the number of weaknesses (r = 0.26, p = .069); the number of encouraging comments was marginally and positively associated with the number of suggestions (r = 0.26, p = .069).

Path Analysis

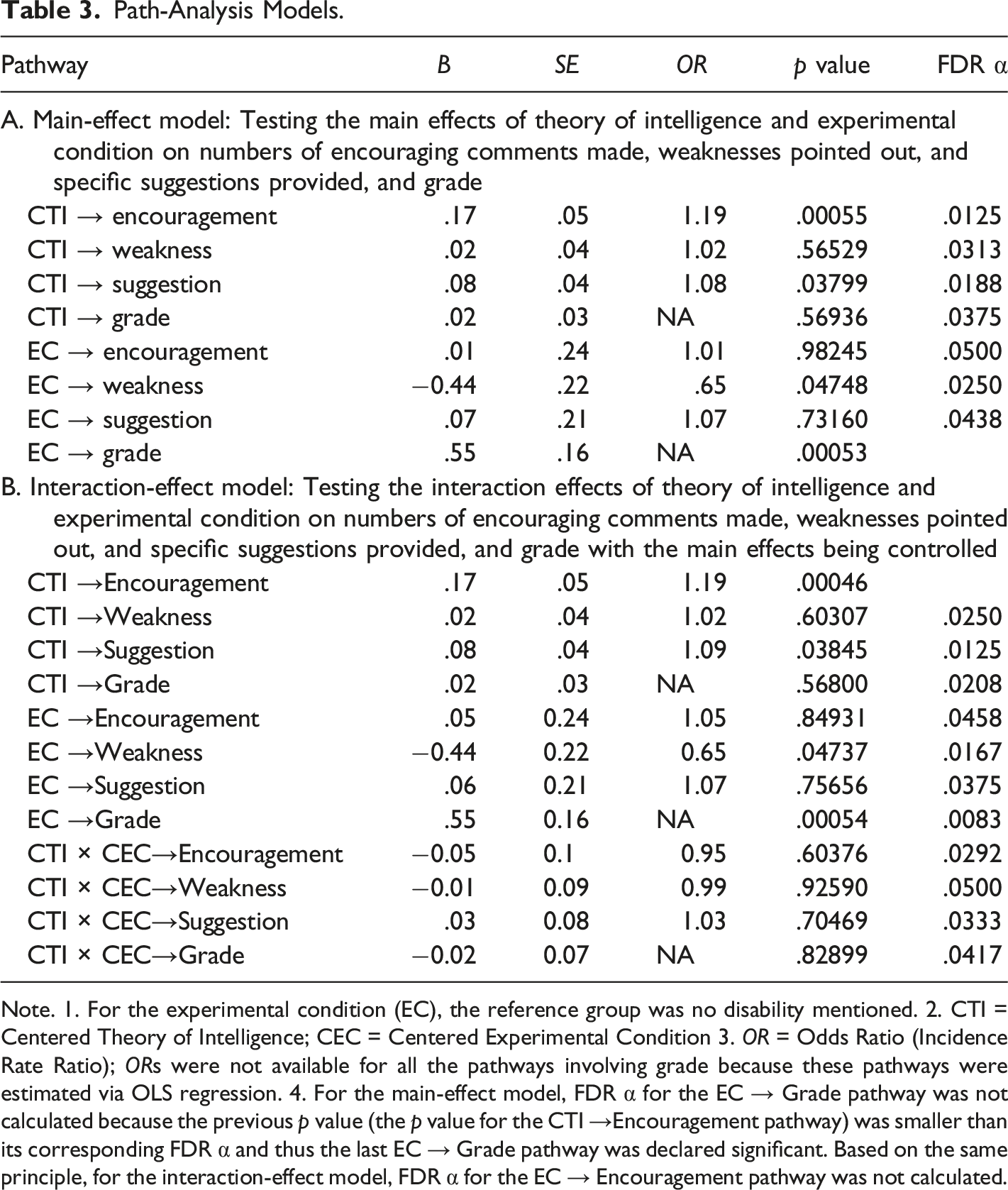

Path-Analysis Models.

Note. 1. For the experimental condition (EC), the reference group was no disability mentioned. 2. CTI = Centered Theory of Intelligence; CEC = Centered Experimental Condition. 3. OR = Odds Ratio (Incidence Rate Ratio); ORs were not available for the pathways involving grade because these pathways were estimated via OLS regression. 4. For the main-effect model, FDR α for the EC → Grade pathway was not calculated because the previous p value (the p value for the CTI → Encouragement pathway) was smaller than its corresponding FDR α and thus the last EC → Grade pathway was declared significant. Based on the same principle, for the interaction-effect model, FDR α for the EC → Encouragement pathway was not calculated.

For the interaction-effect model, the Wald chi-square test was significant, with χ²(12) = 36.08, p < .001. This indicates that the overall main and interaction effects of the predictors on the outcome variables were significant, suggesting that the model as a whole was better than the null model. As shown in Table 3B, the two significant main effects in the main-effect model remained significant in the interaction-effect model. None of the other main effects, and none of the interactions between instructors’ theories of intelligence and experimental condition, were significant for any outcome variable.

To test whether the main-effect or the interaction-effect model was better, we followed Muthén and Muthén (2005) and ran the Satorra-Bentler (SB) scaled chi-square difference test, since a REML technique was performed. The SB scaled chi-square difference between the two models was not significant (χ2(4) = 0.46, p = .977). A nonsignificant result suggests that the two models do not differ statistically in fit, so the more parsimonious model (i.e., the main-effect model) is preferred (Muthén & Muthén, 2005). In other words, adding interaction effects to the main-effect model did not explain additional variance in the outcome variables.
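As a consistency check on the reported test statistics, the p values can be recovered from the chi-square distribution. The sketch below is a minimal illustration, not the authors' analysis code. It only converts an already-scaled statistic into a p value and does not compute the SB scaling correction. The helper name `chi2_sf_even_df` is hypothetical, and the closed form it uses holds only for even degrees of freedom, which covers both tests reported here.

```python
import math


def chi2_sf_even_df(x, df):
    """Upper-tail probability P(X >= x) for a chi-square variable with even df.

    For df = 2k the survival function has the closed form
    exp(-x/2) * sum_{i=0}^{k-1} (x/2)^i / i!.
    """
    if df <= 0 or df % 2 != 0:
        raise ValueError("this closed form only holds for positive even df")
    half = x / 2.0
    total = sum(half ** i / math.factorial(i) for i in range(df // 2))
    return math.exp(-half) * total


# SB scaled chi-square difference test reported in the text: chi2(4) = 0.46
p_diff = chi2_sf_even_df(0.46, 4)
# Wald test for the interaction-effect model: chi2(12) = 36.08
p_wald = chi2_sf_even_df(36.08, 12)

print(round(p_diff, 3))   # 0.977
print(p_wald < 0.001)     # True
```

Both values agree with the reported p = .977 and p < .001.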

Discussion

This study expanded the literature by exploring whether college instructors’ theories of intelligence and awareness of a student’s dyslexia predicted the grade they assigned and the feedback they provided on a piece of student writing. It also explored whether any effect of the instructors’ theories of intelligence on grade and feedback depended on their awareness of the student’s dyslexia. Results indicated that the instructors endorsing the incremental theory offered significantly more encouraging comments than those endorsing the entity theory, but the instructors’ theories of intelligence did not predict the grade assigned, the number of weaknesses pointed out, or the number of specific suggestions made about the student’s writing. The instructors who were informed of the student’s dyslexia gave significantly higher grades, but did not differ from the instructors who were not informed in the numbers of encouraging comments made, weaknesses pointed out, or specific suggestions provided. No interaction between theories of intelligence and the experimental condition was found.

College Instructors’ Theories of Intelligence and Their Instructional Practices

The results of the study indicated that teachers’ theories of intelligence were significantly associated with the number of encouraging comments they made. This finding supported the notion that teachers’ theories of intelligence play an important role in their teaching practices (Rissanen et al., 2018). The instructors who endorsed the incremental theory offered more encouraging comments for the student to improve his/her writing, which supported our hypothesis. Although we did not find previous studies that directly assessed the effect of instructors’ theories of intelligence on the encouragement they offered to students, this finding is consistent with patterns found in previous studies. For example, Heslin et al. (2006) found that managers who believed in the incremental theory were reported to provide more coaching to help their employees. The finding is also consistent with the finding by Rattan et al. (2012) that teachers with an incremental mindset were less likely to provide unhelpful strategies (e.g., “dropping the class”) to students. Previous studies have also indicated that the extent to which students’ learning benefits from instructors’ feedback is related to the students’ engagement with the feedback (Wingate, 2010; Zimbardi et al., 2016). It is possible that instructors’ encouraging comments enhance students’ engagement with feedback, thereby improving learning outcomes. Our finding on the instructors’ encouraging comments underscores the importance of raising college instructors’ awareness of and sensitivity to the potential influence of their theories of intelligence on the feedback they provide for their students.

Our results indicated that teachers’ theories of intelligence were not significantly associated with the assigned grade or with feedback regarding weaknesses and suggestions. The finding that instructors who endorsed the incremental theory of intelligence did not provide more suggestions for the student to improve his/her writing was inconsistent with our hypothesis. This area is worth further investigation. Future studies can use in-depth interviews to understand instructors’ rationales for grading, providing suggestions, and pointing out weaknesses. Doing so could help explain why there is no association between instructors’ theories of intelligence and their grading and feedback regarding weaknesses and suggestions.

College Instructors’ Awareness of Student Disabilities and Their Instructional Practices

The instructors under the two experimental conditions (knowing vs. not knowing the student’s dyslexia) did not differ in the amounts of the three types of instructional feedback provided for the student (i.e., encouraging comments made, weaknesses pointed out, and specific suggestions provided). These findings were consistent with our hypotheses and previous research summarized by Osterholm et al. (2007). Our findings suggest that instructors’ awareness of a student’s dyslexia does not demotivate them from providing constructive feedback. The instructional practices of the instructors in the present study are aligned with the suggestion that teachers provide encouraging comments and useful feedback in order to maximize learning potential for students with learning disabilities (Gersten & Baker, 2001; Truax, 2018).

Despite providing similar levels of feedback in the two experimental conditions (informed vs. not informed of the student’s dyslexia), the instructors who were informed of the student’s dyslexia gave significantly higher grades than those who were not informed. This finding was not in line with our hypothesis. Osterholm et al.’s (2007) review noted that teachers’ grading of a student’s writing did not vary with their awareness of the student’s learning disabilities. Our findings revealed a different pattern of teacher expectation, reflected in significantly higher grades for the same piece of writing when the student was labeled with dyslexia. The intention of the law for teaching students with disabilities emphasizes the importance of providing accommodations (e.g., instructional support and suggestions for study skills) to students with disabilities without lowering grading standards (Scott & Gregg, 2000). This instructional principle ensures that these students are able to develop and demonstrate the competencies essential to the education they are pursuing. Our finding raises a concern about whether adopting lower standards (assigning a higher grade) can optimize learning opportunities and outcomes for students with learning disabilities in higher education.

Interactions between Instructors’ Theories of Intelligence and Awareness of Student Disabilities

We did not find significant interactions between instructors’ theories of intelligence and their awareness of student dyslexia in this sample. This finding suggests that the impact of the instructors’ theories of intelligence on grade or feedback did not depend on their awareness of the student’s dyslexia. This was consistent with our hypothesis and with Gutshall’s (2013) finding that K-12 teachers’ theories of intelligence were not influenced by hypothetical scenarios related to students’ disabilities. By contrast, other studies indicated that people’s theories of intelligence could vary across academic domains (e.g., a fixed view of intelligence in the arts and math, but an incremental view of intelligence in the humanities; Patterson et al., 2016; Shively & Ryan, 2013) or across goal-specific contexts (e.g., political preferences; Leith et al., 2014). It appears that individuals’ theories of intelligence may change in some cases, but not in others (e.g., a student’s dyslexia status).

The majority of college instructors do not have sufficient knowledge and training on instruction for students with disabilities (Cook et al., 2009; Hansen, 2013). This could have influenced the results of the present study. It is possible that the instructors did not have opportunities to reflect on their implicit theories of intelligence in relation to their teaching practices. If instructors are provided with opportunities to reflect on the effect of their own theories of intelligence on their teaching and are equipped with knowledge and training on teaching strategies for students with learning disabilities, they may be more likely to adjust their implicit theories of intelligence and provide helpful feedback to improve motivation and achievement of students with learning disabilities. Previous research has found that people’s theories of intelligence could change after being engaged in readings, videos, discussions, and/or reflections provided by educational interventions that were designed to teach the incremental view of intelligence (Blackwell et al., 2007; Rattan et al., 2012). Therefore, it is important for future studies to explore whether special education training and professional development opportunities that increase instructors’ awareness of the potential effect of their own theories of intelligence will affect their instructional practices with students with learning disabilities.

Limitations

Limitations of this study included the lack of a grading rubric attached to the survey, the lack of multiple opportunities for the participants to provide grading and feedback, a potential priming effect caused by the order of the survey items, and the risk of voluntary response bias. We designed the survey to solicit diverse responses with an open-ended response section and purposely did not include a rubric that might inadvertently limit instructors’ responses. However, some potential participants expressed a concern that they could not grade a piece of student writing without a rubric. We also realized afterwards that, without a rubric, the instructors’ individual biases and beliefs may have influenced their grading and feedback. Findings might be different if instructors were asked to assess a piece of student writing based on a more clearly defined rubric. Future studies can include a rubric and explore whether grading and feedback differ between instructors endorsing different theories of intelligence and between the two experimental conditions.

In this study, the participants were given a single writing sample to grade and comment on. This approach may have resulted in limited sampling of instructors’ grading and feedback. Future studies could include multiple writing samples across various topics and time points to improve the reliability of the findings.

In this study, the participants responded to the two items that assessed their theories of intelligence before they were asked to complete the grading/feedback task. It is possible that those items acted as a prime and influenced the participants to behave in ways that were consistent with their responses to those items, and in ways that they might not have behaved had the priming effect been absent. In future studies, the order of the survey items should be changed to avoid a potential priming effect.

Additionally, while a sample of 101 participants was not small, we obtained completed surveys from only 3.5% (101/2856) of the instructors invited to participate in this study. Although we received responses from participants with a wide range of ages, teaching areas, and lengths of teaching experience, voluntary response bias could still have affected the outcomes of this study. In the future, in order to obtain more representative results, college administrators could incorporate a similar survey into a college-wide assessment of the relationships between instructors’ theories of intelligence and instructional practices for students with and without learning disabilities.

Implications

The results of the study have important implications for educators and administrators who may have opportunities to work with college students with disabilities. Instructors with an incremental belief are more likely to provide encouraging feedback. As discussed, constructive feedback from instructors is crucial to student learning, and instructors’ instructional practices are associated with their beliefs. Therefore, college administrators may consider providing educational sessions for college instructors to reflect on their implicit beliefs and associated teaching practices. Raising grades on the writing assignments of students with learning disabilities (e.g., dyslexia), as the informed instructors in this study were more likely to do, is not an ideal practice. Thus, training or workshops designed to improve college instructors’ teaching practices for students with disabilities are much needed. These educational opportunities can raise instructors’ awareness of and sensitivity to how their teaching practices relate to their implicit beliefs about intelligence and to students with disabilities, which can ultimately benefit student learning and help students reach their full potential.

Appendix: Instructions for the Grading/Feedback Task and the Student Writing Sample

Imagine you asked your students in a class to do some research about the effects of technology on children and write a paper based on their findings. It was a 300-level class designed for juniors. Each student was expected to include an introduction paragraph that provides an overview of the paper. Please read the introduction paragraph written by a student (copied below).

The Effect of Technology on Children

Technology can be very help with multiple things to make life easier. It makes life in school a lot easier and even life at home. Now a person can look up information for home work and pay bills at the same time. Things that a person would have had to leave their house for is now located in one place, the computer. Technology may be good in some ways and bad in others. The problem that is associated with technology is the time it is being used. A child should not use technology as long as they do and increasing time can come with many problems. Children are becoming unable to stop with technology language when it is not needed, communication problems, obesity, and self-esteem, and physical body problems in the future. Now technology is being used in schools more and extending children’s time on the computers with in the day. Technology use for children should be monitored and scheduled for how long they can be on it.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.