Abstract

Although the concept of “AI governance” is frequently used in the debate, it is still rather undertheorized. Often it seems to refer to the mechanisms and structures needed to avoid “bad” outcomes and achieve “good” outcomes with regard to the ethical problems artificial intelligence is thought to actualize. In this article we argue that, although this outcome-focused view captures one important aspect of “good governance,” its emphasis on effects runs the risk of overlooking important procedural aspects of good AI governance. One of the most important properties of good AI governance is political legitimacy. Starting out from the assumptions that AI governance should be seen as global in scope and that political legitimacy requires at least a democratic minimum, this article has a twofold aim: to develop a theoretical framework for theorizing the political legitimacy of global AI governance, and to demonstrate how it can be used as a compass for critically assessing the legitimacy of actual instances of global AI governance. Elaborating on a distinction between “governance by AI” and “governance of AI” in relation to different kinds of authority and different kinds of decision-making leads us to the conclusions that much of the existing global AI governance lacks important properties necessary for political legitimacy, and that political legitimacy would be negatively impacted if we handed over certain forms of decision-making to artificial intelligence systems.

The study of the social and ethical impact of artificial intelligence (AI) is still in its infancy and contributions to the field endeavor to keep up with the continuous developments of the booming AI industry. It is hence not surprising that key concepts like “AI ethics” and “AI governance” are still rather vague and undertheorized. We suggest that the former can be defined as the field of applied ethics that is concerned with the ethical questions that arise in light of actual and conceivable AI systems. The study of “AI governance,” then, could be seen as a subdomain of “AI ethics,” guided by the assumption that we can collectively influence the development of AI. In the literature, “AI governance” often simply refers to the mechanisms and structures needed to avoid “bad” outcomes and achieve “good” outcomes with regard to the problems and issues already identified and formulated within AI ethics.

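In this article, we argue that although this outcome-focused view captures one important aspect of what “good AI governance” requires, its emphasis on the effects of governance mechanisms runs the risk of overlooking important procedural aspects of good AI governance.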

The structure of the article is straightforward. In the first section, we give a brief overview of how the concept of “governance” is applied in the literature on AI governance, to illustrate the predominant outcome-focused view and the worries that it raises (section “What is ‘Governance’ in AI Governance?”). Thereafter, we focus on the first part of our twofold aim, developing the theoretical framework constituted by some basic normative boundary conditions shaped in light of the distinction between “governance by AI” and “governance of AI” (section “The Political Legitimacy of AI Governance: A Theoretical Framework”). The third section focuses on the second part of the twofold aim, applying this theoretical framework to current AI governance to illuminate how it can be used as a critical compass for assessing the (lack of) legitimacy of actual instances of AI governance (section “Assessing the Political Legitimacy of Global AI Governance”). The final section concludes and addresses the ways in which the proposed approach may respond to the worries raised by the outcome-focused view of AI governance (section “Winding Up”).

What is “Governance” in AI Governance?

In discussions around AI, “governance” is currently a term much too broad for its own good. It is used to refer to everything from the plethora of “ethics guidelines” for AI development written by state- and non-state actors (Jobin et al., 2019), to the presence of human oversight in automated processes (AIHLEG, 2019: 16), and hypothetical international laws for preventing undesirable “race dynamics” among superpowers developing AI (Dafoe, 2018: 43–47). This imprecision is not surprising. First, there are a number of ways of defining AI, and the scope of what counts as AI governance hence depends on how AI is delineated to begin with. Second, both AI and its regulation are rapidly changing phenomena, and technological breakthroughs and suggestions for how to govern AI are constantly presented not only in academic journals, but also in blog posts, podcasts, and non-peer-reviewed papers posted online. Contributors to the AI governance debate are not only academics but also nongovernmental organizations (NGOs) and tech companies with a potential interest in shaping the field in accordance with their interests. Indeed, some have suggested that the vagueness of the concept of “governance” lends it flexibility, which in turn explains why it is so popular among scholars and policymakers (Peters, 2012: 19). We will argue, however, that the way in which AI governance is currently conceived risks creating a blind spot for the distinctively political nature of the relevant questions about the goals of AI development and deployment, and about what it would take for the answers to be democratically legitimate.

The simplest way to begin staking out what currently constitutes “AI governance” research, at least research relating to normative issues, is to conceive of it as a subdomain of the wider field of “AI ethics.” This field, in turn, could plausibly be defined by analogy to how other fields in applied ethics are delineated: just as “bioethics” is concerned with ethical issues arising in light of advances in the life sciences, AI ethics is the field engaged in answering ethical questions that arise in light of actual and conceivable AI systems. Several recent prominent handbooks and academic overviews of the ethics of AI, for instance, introduce the field as concerned with the ethical implications and use of AI applications, and define it by giving examples of issues it covers, including questions around AI-powered autonomous weapons, criminal sentencing, automated work processes, autonomous vehicles and sex robots, and what the moral status of AI agents is (Bostrom and Yudkowsky, 2014; Dubber et al., 2020; Liao, 2020; Müller, 2020). Furthermore, most would recognize that AI development is not a deterministic process, but rather a practice that can be steered toward or away from certain outcomes. It is commonplace, often in lofty and imprecise terms, to state that “society” or “humanity” can “guide” AI development toward certain goals. One major obstacle to this, it is assumed but rarely explained with analytical rigor, is how to ensure that AI systems “align” with “human values,” which often means roughly that the effects they produce should be in line with what “we” wish to see (Gabriel and Ghazavi, 2022).

We suggest that the most common current understanding of “AI governance” follows from combining the characterization of AI ethics as constituted by the list of questions and issues that AI actualizes with the assumption that we can collectively influence the development of AI. Hence, “AI governance” often refers to the mechanisms and structures needed to avoid “bad” outcomes and achieve “good” outcomes with regard to the problems and issues already identified and formulated within AI ethics.

We believe this is a straightforward way of understanding AI governance, and that it is only natural that a young and interdisciplinary field of study has coalesced in this way. Indeed, we find it difficult to think of more fruitful ways of defining the field. It is crucial, however, to recognize that this is a largely outcome-focused understanding of AI governance, and that it gives rise to three worries.

First, it means that research and policy proposals around AI governance become intimately tied to prior assumptions about AI ethics, and the issues that receive the most attention there come to dominate discussions of governance measures. For instance, AI safety, understood here as the problem of making sure that a potential future superintelligent artificial general intelligence does not harm humans or subjugate their interests, has historically received much less attention and funding than the technical AI research and development that could create such an AI system. It has nevertheless arguably grown to engage a significant part of the technical AI research community, and has captured the attention of many philosophers.


In this subfield, “AI governance” is often used to refer to the questions around how to treat and control a potential superintelligent AI as a

Second, if AI governance research draws too heavily on the list of issues identified in AI ethics, it risks overlooking institutions that do not pursue those aims narrowly construed, as well as the vast literature on (domestic and global) “governance” in political science and other fields, which investigates and theorizes governance arrangements more broadly understood (cf. Levi-Faur, 2012). As we will discuss below, many AI applications are already covered by existing legislation and regulation, and a large number of state and non-state actors are currently in the process of staking out positions and negotiating future regulations of AI development and deployment. For instance, although the new European Union (EU) regulation on AI technology is still in the making, the existing General Data Protection Regulation (GDPR) arguably covers at least some AI applications. The outcome-focused understanding of AI governance may lead us to remain focused on imagining idealized institutional responses to our list of issues, overlooking the way that existing, actual institutions influence how AI technology is built and implemented. As more such initiatives appear, they should ideally be both subjects of and an inspiration for AI governance research.

A third worry is that the outcome-focused understanding of AI governance naturally directs our attention toward the

So, while we do not believe that AI ethics and the existing AI governance literature are misguided, we have argued that the way the field is conceived risks leading us to systematically overlook the important values at stake in how governance mechanisms for AI work, as distinct from the outcomes they produce. It is worth noting that, unlike some of the problems identified in AI ethics, such as algorithmic bias or lack of transparency in AI-assisted decision-making, the problem we have identified cannot be solved by better algorithms or more data, or by mechanisms that induce more ethical reflection or more diversity among those who develop AI technology (cf. O’Neil and Gunn, 2020). Our point is that the very identification of the problems and potential of AI, the ranking of these, and the process of weighing them against other values are all prior tasks that require politics, and should be subsumed under a properly conceptualized notion of political legitimacy.

The Political Legitimacy of AI Governance: A Theoretical Framework

The prevailing outcome-oriented view of AI governance stresses one important aspect of “good governance”: a focus on solving specific issues in AI ethics, so as to avoid bad outcomes and achieve good ones, is crucial for improving the whole global governance structure of AI. Measuring good governance by the effects of governance mechanisms, however, runs the risk of overlooking important procedural aspects of good AI governance. One of the most important properties of good governance from the perspective of normative political theory is political legitimacy. In this section, we develop a theoretical framework for analyzing the political legitimacy of actual and hypothetical global AI governance institutions.

Indeed, since political legitimacy is a heavily disputed notion in both the empirical and normative literature, used to refer to everything from effectiveness to justice, the presumption that global governance must be at least minimally democratic to be legitimate is controversial. However, we assume for the sake of argument that it is sufficiently undisputed in the normative theoretical literature, at least among many political philosophers.


As stated in the introduction, our aim is not to defend a substantive first-order theory of global political legitimacy, but rather to spell out and defend some normative boundary conditions that any satisfactory account of the political legitimacy of AI governance must respect. Specifically, we will elaborate on the distinction between “governance by AI” and “governance of AI” in relation to different kinds of authority and different kinds of decision-making.

Global Political Legitimacy: Conceptual and Normative Assumptions

As noted in the previous section, a large part of AI governance is inherently global in scope and it is indeed a subset of global governance more generally. This suggests that we must apply a conceptual apparatus that is fitting for a global context to address the question of political legitimacy. For this purpose, the existing literature on AI governance would benefit from importing insights from the research on global governance conducted in political science and political theory. This section hence elaborates some general conceptual and normative assumptions made in the article about how political legitimacy and democracy must be understood in a global, as opposed to domestic, context.

Today, it is widely acknowledged among political scientists and normative theorists that political legitimacy is a desirable quality of global governance institutions more generally, and not only those regulating AI. Hence, even though there is little agreement on the specific regulative content of principles of political legitimacy, there is consensus on the value of political legitimacy in global governance. To theorize the political legitimacy of global governance, however, means to identify certain features of global political legitimacy which distinguish it from political legitimacy as it has been applied traditionally. In the philosophical literature, political legitimacy is typically described as a virtue of political arrangements and the rules (laws) that are made within them. It refers to the justification of coercive power, political power, or political authority, usually signifies the right to rule, and on most accounts also entails political obligations (Buchanan, 2002, 2004; Christiano, 1996; Wellman, 1996). Moreover, it is commonly assumed that principles of political legitimacy are supposed to regulate the relationship between what we may broadly call “rule-takers” and “rule-makers,” that is, between political entities (agents and institutions) that make, apply, and enforce rules and the subjects to whom these rules apply (Buchanan, 2002; Erman, 2020; Buchanan and Keohane, 2006; Valentini, 2012).

This concept of political legitimacy has been developed for a domestic context, however, and is therefore not fully fitting for the global domain and for the specific circumstances of global politics, such as the emergence of new forms of rule, new actors, and the exercise of unchecked powers (Bohman, 2004). To make the concept useful for normative purposes in the global domain, and allow it to successfully classify objects in that realm, two modifications are called for, which are acknowledged by key theorists in the literature on global political legitimacy and which still preserve the core meaning of political legitimacy as it has traditionally been understood.

The first important amendment, suggested by Allen Buchanan, is to abandon the strong idea of “the right to rule” presupposed by the traditional understanding, which presumes an exclusive right to use coercive force. This idea is ill-fitting for global politics, since no agent or institution in global governance rules or claims to rule coercively in this robust way. There is, in other words, no global entity that could, for instance, force AI developers to focus on certain applications or avoid others. Instead, a distinguishing property of global political legitimacy seems to be a weaker coercive element, where “being morally justified in exercising political power” implies issuing rules and ascribing benefits and costs for compliance or noninterference with the efforts to govern (Buchanan, 2010: 82–84).

The second modification concerns the commonsensical understanding of “the right to rule” and “rightful authority,” which is typically defined in terms of law and lawmaking and discussed in legal terms, such as in terms of the capacity to impose legal duties (Buchanan and Keohane, 2006; Christiano, 2013). Since most global governance arrangements and institutions are not lawmakers, they could exercise political power without being a proper object of global political legitimacy on this reading, and a satisfying account must recognize this. Rule-making in a global context hence ought to include not only lawmaking but also policymaking and other kinds of decision-making on political matters (i.e. matters of public concern). As we will return to below, this captures the way that certain entities currently exert influence over AI development.

In sum, on the proposed conceptual framework, the function of principles of global political legitimacy is to regulate the relationship between political entities and those over whom they exercise political power to determine under what conditions they have the right to make rules, that is, to make political decisions regarding laws and policies, and where benefits and costs are attached to, for example, compliance and noncompliance, noninterference and interference (Erman, 2020).

Moving from conceptual to normative presumptions, we assume in this article that global governance arrangements around AI, but also more generally, must at least be minimally democratic in order to fulfill the requirements of global political legitimacy. It is possible, of course, to claim that the promise or threat of AI is

Moreover, since we do not pursue a substantive argument offering a specific theory of the political legitimacy of global AI governance, but rather aim to defend certain normative conditions that should be met when developing such a theory, it is important to adopt a broad definition of democracy, to make it compatible with all main conceptions in democratic theory, ranging from voting-centered views to views focusing on deliberation and civic engagement. Democracy is here seen as a normative ideal rather than simply a decision method, which means that the principles offered as a minimal democratic threshold for global political legitimacy are seen as part of such an ideal. To treat democracy as a decision method is rather uninteresting in the present context, since that would entail that the answer to what a democratic threshold would be is determined solely by the normative ideal that motivated the choice of democracy (as method) to begin with. Instead, our broad understanding of democracy as an ideal alludes to “the rule by the people,” which is seen as a particular form of political self-determination or self-rule. On this view, a political entity (e.g. system, polity, or institution) is democratic if, and only if, those who are affected by its decisions have an opportunity to participate in their making as equals (Erman, 2020).

With these general conceptual and normative assumptions concerning global political legitimacy on the table, let us turn to the specific task of spelling out a number of normative boundary conditions that a satisfactory account of the political legitimacy of AI governance must respect.

Governance by AI and Governance of AI

In the literature on AI governance, focus is typically directed either at “governance

A concern about governance

Governance

The distinction between governance by AI and governance of AI is rarely mentioned in the literature, and above all, it is to our knowledge not problematized from the viewpoint of AI ethics.


This is unfortunate, since it risks hiding the fact that the debate around AI governance incorporates two sets of largely different phenomena, whose relationship ought to be theorized. The normative considerations regarding global political legitimacy that we have just presented suggest that governance by and governance of AI are, in fact, related. Assessing the political legitimacy of existing and conceivable AI governance will hence require particular attention being paid to

Normative Boundary Conditions for the Legitimacy of Global AI Governance

Large parts of the current global governance of AI consist of numerous standards, guidelines, policy frameworks, laws, and international policies, and political entities rely on governance by AI in more and more areas. In order for global AI governance to be legitimate, we will suggest, it needs to follow the principles that regulate the relationship between the political entities involved and those over whom they exercise political power. These principles determine under what conditions the governing entities have the right to make political decisions. Recall that according to the general understanding of democracy assumed here, the political entities (agents and institutions) making up the regulatory AI structure are democratic if, and only if, those who are affected by the decisions have an opportunity to participate in their making as equals.


Given that we assume that global political legitimacy requires a democratic minimum, the democratic quality of this regulatory structure will depend on (a) what kinds of

Broadly speaking, there are two kinds of authority involved in AI governance, which have fundamentally different normative status from a democratic standpoint: what we here call “authorized entities” and “mandated entities,” respectively. What we mean by

Apart from authorized entities, what we mean by

These two different kinds of authority are in turn tied to different kinds of decision-making, from the standpoint of democracy. To explain why, we must return to the idea of democracy as an ideal of political self-determination or self-rule. The normative core of this ideal is the idea that a person is obliged to comply only with rules she has had the opportunity to authorize. Thus, democracy as a form of political self-determination has to do with political “authorship,” according to which an agent may only be rightfully coerced by decision-making insofar as she has taken part in its authorization. This means that there is a fundamental difference between coercive decision-making and non-coercive decision-making from a democratic standpoint. In practice, coercive decision-making usually comes in the form of lawmaking, whereas non-coercive decision-making typically comes in the form of policymaking. Here we use “coercion” broadly, to make our argument neutral vis-à-vis specific theories of democracy and compatible with the permissive understanding of global political legitimacy sketched earlier. It refers not only to a physical aspect, which is often stressed in the literature (involving force, sanctions, or threat of disciplinary action), but also to an authoritative aspect, which has to do with being subjected to authoritative commands. In both cases, benefits and costs are usually tied to compliance and noncompliance.

Typically, laws are more fundamental and formal than policies, constituting a system of rules that sets out principles, procedures, and standards that proscribe, mandate, or permit certain relationships between people and institutions. Policies usually consist of statements setting out certain procedural and substantive goals of what should be accomplished in the near or remote future. Importantly, however, policies comply with laws and are formulated within a legal framework, even if they may aim to fundamentally change an existing law or identify a new law that is needed (Erman, 2020; Erman and Furendal, 2022). In our view, these two kinds of decision-making (coercive and non-coercive) are connected to the two kinds of authorities discussed above in the following way: it is

This means that a satisfactory account of the political legitimacy of AI governance should respect that there is a specific normative relationship between

Authorized entities in the AI space would be those entities which have the right to rule because they have been established through a democratic procedure in which those affected by AI (in one form or another) have had an opportunity to participate as equals in shaping the “control of the agenda” concerning AI, generally seen as a fundamental property of democracy (Dahl, 1989). This is why authorized entities necessarily must engage in governance

It also follows from the normative conditions defended here that authorized and mandated entities can create a legitimacy chain between those affected and the entities that create coercive law and non-coercive policy. This requires, however, that each authorization and delegation fulfills a requirement of

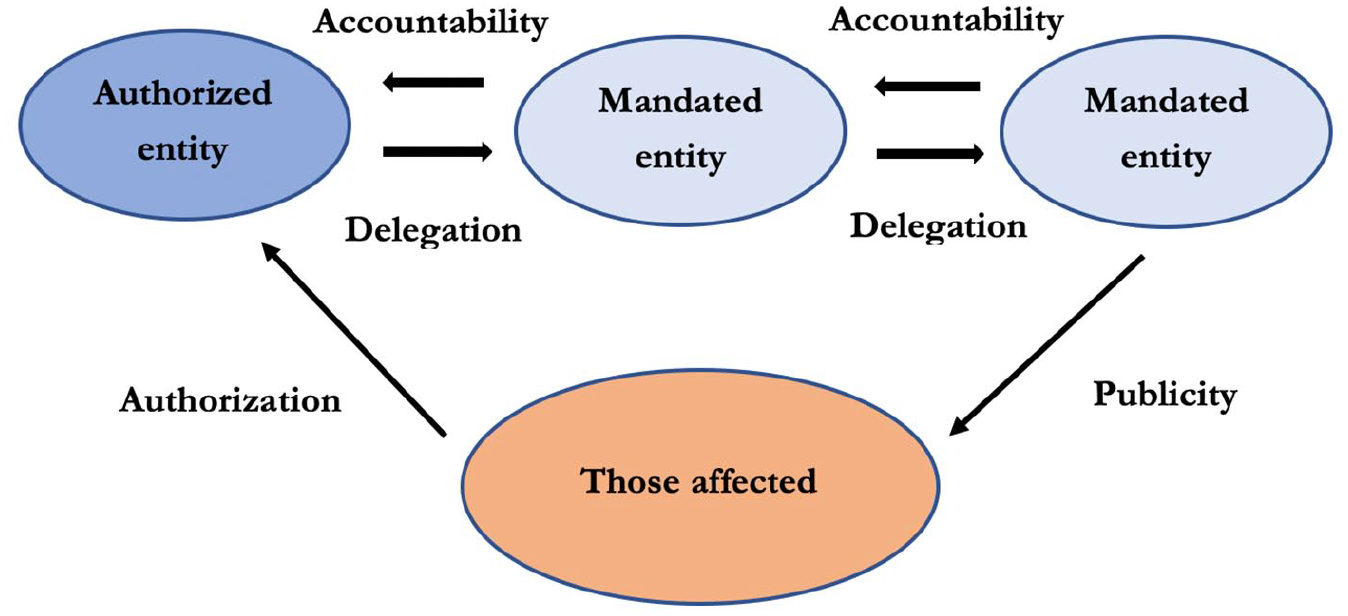

If we depict these normative boundary conditions as a (circular) chain of legitimacy (Figure 1), an authorized entity owes accountability to those who authorized it (those affected, according to some criterion of inclusion). If the people are dissatisfied (according to some standard of accountability), they may withdraw the political power. Similarly, every mandated entity owes accountability to the authorized entity which lent it authority through delegation. If the mandated entity does not live up to the appropriate accountability standard (which, again, depends on the democratic theory), its authority may be withdrawn. This is the case for every step of delegation in a legitimacy chain. Not only may authorized entities delegate authority to mandated entities; mandated entities may also delegate political power in a further step to an additional (often highly specialized) mandated entity, as long as the first step in the chain of delegations is from an authorized entity. However, the right to revocation is tied to every delegation in the chain: an entity

Figure 1. Normative boundary conditions as a (circular) chain of legitimacy.
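To make the structure of the chain in Figure 1 more concrete, the following is a toy model in Python, offered only as an illustration under our own simplifying assumptions and not as part of the framework itself. Entities are reduced to nodes that are either authorized (established directly by those affected) or mandated (holding authority delegated by another entity), and legitimacy is reduced to a yes/no property. The names `Entity`, `delegate`, `revoke`, `has_legitimate_chain`, and `accountable_to`, as well as the example entities, are hypothetical. The sketch encodes two conditions stated above: a chain of delegations must begin with an authorized entity, and every delegation can be revoked. It additionally assumes that revoking one link removes the authority of every entity downstream of it. It does not represent any particular criterion of inclusion or standard of accountability, which depend on the democratic theory one adopts.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional


@dataclass
class Entity:
    """A node in a hypothetical chain of legitimacy (illustrative only)."""

    name: str
    # True for an "authorized entity", established directly by those affected.
    authorized: bool
    # The entity that lent this one its authority; None for authorized
    # entities and for actors that hold no delegated authority at all.
    delegator: Optional[Entity] = None
    # Set when the delegating party withdraws the authority it granted.
    revoked: bool = False

    def accountable_to(self) -> str:
        """Whom this entity owes accountability to, in this toy model."""
        if self.authorized:
            return "those affected"
        if self.delegator is not None:
            return self.delegator.name
        return "no one (outside the chain)"


def has_legitimate_chain(entity: Entity) -> bool:
    """Walk back through the delegations: the chain is intact only if it
    reaches an authorized entity without passing through a revoked link."""
    current: Optional[Entity] = entity
    while current is not None:
        if current.revoked:
            return False
        if current.authorized:
            return True
        current = current.delegator
    # Reached the start of the chain without finding an authorized entity.
    return False


def delegate(parent: Entity, name: str) -> Entity:
    """Create a mandated entity; only entities with an intact chain may delegate."""
    if not has_legitimate_chain(parent):
        raise ValueError(f"{parent.name} holds no authority it can delegate")
    return Entity(name=name, authorized=False, delegator=parent)


def revoke(entity: Entity) -> None:
    """Withdraw the authority previously granted to `entity`."""
    entity.revoked = True


if __name__ == "__main__":
    # Hypothetical example entities, for illustration only.
    assembly = Entity("elected assembly", authorized=True)
    agency = delegate(assembly, "AI regulatory agency")
    standards_body = delegate(agency, "technical standards body")
    private_lab = Entity("private AI lab", authorized=False)

    print(has_legitimate_chain(standards_body))  # True
    print(has_legitimate_chain(private_lab))  # False
    print(standards_body.accountable_to())  # AI regulatory agency

    revoke(agency)
    print(has_legitimate_chain(standards_body))  # False
```

In this model, an actor that never received delegated authority, such as the hypothetical private lab, fails the check regardless of the content of the rules it issues, while a further-delegated entity such as the standards body remains legitimate only as long as every link back to an authorized entity stays intact.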

To sum up, in this section we have conducted rather abstract and broad-strokes theorizing to address the first (and main) part of our twofold aim. We believe that this is called for, since large parts of the debate on AI governance have been rather narrowly focused on specific instances of AI governance and have failed to recognize the normative significance of the distinction between governance of and governance by AI, and the outcome-focused contributions to the literature rarely ask what conditions are needed for governance arrangements to be politically legitimate. Our suggested theoretical framework takes a wider view, capturing on a general level some normative boundary conditions that we think a satisfactory account of the political legitimacy of global AI governance should respect. Needless to say, what it means to respect these conditions will depend heavily on what a specific substantive theory of legitimate AI governance aims to achieve, and what its principles are meant to regulate. For example, if the intention is to offer a non-ideal theory, “respecting the normative conditions” would presumably be interpreted in less demanding terms, with principles tied to several feasibility constraints, since such a theory aims to be fully realizable. If, on the contrary, the intention is to offer an ideal, or even an ideal and comprehensive, theory, not only would the conditions presumably be respected in a strict sense, without much concern for feasibility apart from the proviso “ought implies can,” but additional requirements would probably be added, concerning for instance distributive justice.

Assessing the Political Legitimacy of Global AI Governance

With this theoretical framework on the table, let us move to the second part of our twofold aim and apply the framework to current AI governance to illustrate how it may work as a compass for assessing the political legitimacy of instances of global AI governance. Since we have laid out some normative boundary conditions that a reasonable account of political legitimacy should respect, rather than developing a substantive theory, the framework cannot be used to fully evaluate all existing varieties of global AI governance, nor can it be used to specify in detail what the most politically legitimate AI governance regime would be like. Both tasks would require us to commit to specific democratic theories and their assumptions. Nevertheless, the more minimalist framework we have offered can be used as a compass to assess particular governance arrangements and indicate whether they are more or less legitimate. In light of what we have argued above, we can conceive of five possible kinds of politically legitimate AI governance in the global domain, and one common form of governance that seems to lack legitimacy.

First, regarding governance

Second, there could be

In addition, politically legitimate global governance of AI could also take the form of

We could also conceive of

Finally, there could be (e) mandated entities that engage in governance

Importantly, two interesting things follow from the framework presented. First, as we mentioned above, our view suggests that

Second, and equally important, many of the entities that are engaged in the global AI governance currently taking form do not fit into any of the five categories. Among the most prominent actors involved in current global AI governance, for instance, are non-state, private actors, which are neither mandated nor authorized. Many AI-developing companies and interest groups have recently authored various “soft law” documents, including ethics guidelines, standards, and codes of conduct set to guide their continued work in the sphere. Examples of entities that have presented or committed to such initiatives include industry organizations like the Institute of Electrical and Electronics Engineers (2021), tech giants like IBM (2021), AI labs like DeepMind, as well as individual AI researchers and developers (Future of Life Institute, 2018). In one sense, these documents could be seen as committed to core democratic values, since they are a form of self-regulation, where relevant stakeholders voluntarily commit to an agreement that guides their future actions in the AI sphere. Hence, they could arguably be seen as an instance of “corporate social responsibility” (CSR): initiatives that aim to promote societal and humanitarian goals by supporting ethically oriented business practices. Furthermore, one could argue that if we relaxed our strict understanding of authorization, people have indeed exercised influence, in their role as consumers in a market, and in some sense authorized this kind of governance. Such initiatives could hence be considered politically legitimate, both because they ultimately promote the good outcomes that many of those interested in AI governance care about, and because on this wider interpretation of authorization, people have indeed had an indirect say in them.

In response, we recognize that initiatives from non-mandated and non-authorized entities

Winding Up

We started this article by suggesting that “AI governance” typically refers to the structures and mechanisms needed to avoid bad outcomes and achieve good outcomes with regard to an already identified set of AI-related issues. We then raised a number of concerns with this predominant outcome-focused view of AI governance. Above all, we expressed the concern that a focus on the effects of governance mechanisms runs the risk of overlooking important procedural aspects of good AI governance. Against the backdrop of this lacuna in current research, together with the two presumptions that political legitimacy is one of the most important procedural properties of good governance and that political legitimacy must be at least minimally democratic, we have developed a theoretical framework consisting of a number of normative boundary conditions that any reasonable account of the global political legitimacy of AI governance should respect. This was done by elaborating on the distinction between “governance by AI” and “governance of AI” in relation to different kinds of authority and different kinds of decision-making. Finally, we demonstrated how this framework could be used as a critical compass for assessing the legitimacy of actual instances of global AI governance. Among other things, we pointed out that both the idea of outsourcing democratic decision-making to AI systems and the soft law documents and ethics guidelines produced by non-mandated private actors in AI governance have deficiencies with regard to political legitimacy.

Recall the three worries we initially identified when considering the outcome-focused character of current research on AI governance. The first two worries—which stressed that AI governance research may become too focused on the issues that receive the attention of AI ethicists, and overlook existing regulations and institutions—seem readily fixable by simply widening the field and welcoming alternative research strategies. AI ethics research will clearly continue to play a crucial role in understanding what is at stake when AI is being developed. The third worry, however, was that AI governance risks being reduced to the question of how to implement and enforce an already fixed agenda. We have tried to address this worry by offering normative considerations that enable a more profound and systematic understanding of global AI governance by problematizing the very assumptions upon which an outcome-focused view relies. The outcome-focused view investigates the best solutions to a list of already identified issues that AI actualizes. The values of political legitimacy and democracy, however, raise more fundamental questions about

Of course, our approach does not in any way undermine the outcome-focused view of AI governance. Rather, it complements it by directing our focus elsewhere, to the procedural aspects that should also be taken into consideration when theorizing good AI governance. At best, it offers a more comprehensive analysis of what good AI governance entails in the global domain. Obviously, this has to be investigated in much more detail, but we suspect that even the narrower outcome-focused view would benefit from being incorporated into a broader approach to AI governance, such as the one sketched here.

Footnotes

Acknowledgements

The authors owe special thanks to the editor and anonymous referees of

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Research projects funded by Marianne and Markus Wallenberg Foundation (MMW 2020.0044) and by the Swedish Research Council (2018-01549).