Abstract

Policing is increasingly being shaped by data collection and analysis. However, we still know little about the quality of the data police services acquire and utilize. Drawing on a survey of analysts from across Canada, this article examines several data collection, analysis, and quality issues. We argue that as we move towards an era of big data policing it is imperative that police services pay more attention to the quality of the data they collect. We conclude by discussing the implications of ignoring data quality issues and the need to develop a more robust research culture in policing.

Introduction

The collection of various types of data by police services has increasingly become a key aspect of everyday police work. Canadian police agencies have been moving towards data and intelligence driven approaches, including storing and using big data. This has partly stemmed from discussions around developing more effective and efficient police services in order to tackle increasingly complex crime and safety issues (Sanders et al., 2018). As a result, police officers are spending large amounts of their time documenting their work (e.g., report writing) and entering data into computer databases (Chan, 2001; Ericson and Haggerty, 1997). In addition, new technologies have broadened police data collection beyond crime and arrest data to include data from videos, pictures, social media posts, automated license-plate readers, biometrics, phone records, and facial-recognition software (Chan and Bennett Moses, 2016; Ferguson, 2017; Mackenzie, 2015; Sanders and Sheptycki, 2017; Walsh and O'Connor, 2018). Likewise, the rapid growth of the volume of this data has overtaken the ability of analysts to meaningfully process, organize, and analyse it (Burcher and Whelan, 2018; Sheptycki, 2004).

While the police continue to collect vast amounts of data, we know little about the quality of the data coming into police data management systems. Therefore, the purpose of this paper is to examine police data quality from the perspectives of the analysts working regularly with police data in Canada. To that end, this paper first examines the literature pertaining to policing approaches that are increasingly data-driven. Next, we examine the existing literature on data quality in policing before describing the survey methods used to collect analysts’ perspectives. Then, the findings highlight the main data quality themes emerging from the survey. Finally, in our discussion we pay particular attention to the implications of ignoring data quality in policing. We argue that as police services become more committed to the use of data to inform operations, it is imperative that they also consider the quality of their data. Failing to do so calls into question the validity and reliability of the analyses that drive police decision-making.

Data-driven policing

Advances in information technologies have substantially changed policing (Chan, 2001; Lum et al., 2017). Ericson and Haggerty (1997) go as far as to argue that the primary role of police in contemporary times has changed from being principally law enforcers and maintainers of social order to that of being information managers, communicators, and collectors of risk knowledge. This shift also comes at a time when the new harms police are asked to manage are ever-increasing (e.g., identity theft, cybercrime) (CCA, 2014). Additionally, police officers are increasingly spending more of their time dealing with non-criminal matters (e.g., mental health calls), engaging in proactive policing (e.g., community meetings), and documenting their work (CACP, 2015). Thus, much of policing work is unrelated to crime statistics/rates (Bass et al., 2014).

Despite this increasing complexity and demand, police are expected to do more with fewer resources as the costs of policing have become a growing concern for the public and governments (AMO, 2015; CACP, 2015; Huey, 2017; PSC, 2013; Taylor Griffiths et al., 2015). While tapering off since 2010/2011 after nearly a decade of steady increases in police budgets, policing still costs Canadians approximately 15.7 billion dollars a year (Conor et al., 2019). In part, the move towards more evidence-based policing is a response to cost concerns and is an effort by police services to show their value for public dollars spent (Cordner, 2020). Addressing contemporary harms in a cost-efficient manner has compelled policing to become more data-driven and data-focused. Policing has become increasingly reliant on the use of sophisticated information technologies such as crime mapping along with capitalizing on highly skilled law enforcement analysts who examine and transform the vast amount of data coming into police agencies into actionable intelligence (Cope, 2004).

Data-driven policing is relatively new (Brayne, 2017). The innovative development of Compstat (or computerized statistics), which originated at the New York City Police Department in the 1990s, is one of the first examples of police strategically utilizing data to inform their response to crime and disorder. The Compstat policing model was dual-purposed, as it involved the use of collected data to help police management measure performance and to identify where crime was geographically concentrated. Armed with this data, commanders within those precincts were expected to understand and address identified crime issues (Weisburd et al., 2003).

New technologies have benefitted police, but they have also increased the complexity of crime analyses (Chan, 2001; Sanders and Henderson, 2013). For example, social media users publicly post a wealth of data that can be analysed by the police (Albrechtshund, 2008; Schneider, 2016; Trottier, 2012). Similarly, the use of big data by police often involves the collection and analysis of quantitative data to help predict where crimes are most likely to occur, identify risky individuals, predict potential victims of crime, and – most controversially – shape preventative intervention. These types of analyses require significant amounts of data, advanced statistical analyses, and often the use of computer algorithms, data mining, and machine learning (Aradau and Blanke, 2017; Brayne, 2017; Ferguson, 2017; Joh, 2014; NASEM, 2018; Papachristos et al., 2013; Perry et al., 2013). Likewise, some scholars have argued that most policing data warehouses are inadequate to manage both structured and unstructured data and do not meet standards for advanced analytics (Sanders et al., 2018).

The increasing use of data in policing is an attempt to move policing away from its traditionally reactive tactics to more proactive techniques (Dencik et al., 2018) and to improve police decision-making. However, decision-making that is based on faulty data, or that fails to recognize the limitations of collected data, could result in the misallocation of valuable police resources, wasted public dollars, and even worse, possibly more crime and victimization. Misinterpreting data, whether accurate or not, could lead to similar results. These potential issues impacting how data is utilized in police decision-making are best understood through a lens of data quality.

Data quality in policing

Police services that adhere to data-driven and evidence-based policing approaches rely on not just the use of sophisticated technologies and analyses, but decision-making that is based on ‘good data’. For the purposes of this article, we consider ‘good’ or high-quality data to be valid and reliable (Mitar, 2004), which includes data being objective, transparent, reproducible, useful, relevant, timely, and accessible (Condelli et al., 2002; Howard et al., 2000). When these standards cannot be met, it is important to acknowledge the limitations of the data being reported on (e.g., arrest data can be influenced by officer discretion, see Porter et al. [2020]) so that these limitations can be factored into decision-making. Transparent data quality standards help support data literacy, particularly among law enforcement analysts whose work involves drawing conclusions based on the data, and increasingly so as analyses become more automated (Sanders et al., 2018). Implicit in these arguments is that rigorous standards are needed to improve data quality and access, particularly when data is sometimes stored in various data systems or entered in varied ways. Furthermore, Sanders et al. (2018) point out that in Canada, unlike elsewhere (e.g., United Kingdom), there is no overarching intelligence model that governs how data is collected, organized, and used.

With these concerns in mind, there are several examples that support worries over the quality of criminal justice data under current processes and standards. At a broad level, there are well-established concerns related to the underreporting of particular types of crime in official statistics, including sexual violence (Statistics Canada, 2015). Further, crime statistics have often been criticized as being better measures of what police do with their time than of actual criminal occurrence (Joh, 2015). More specifically, Hickman and Poore (2016) examined data collected by the Bureau of Justice Statistics in the United States (US) on police use of force and found that this data was of little reliability or validity. This data was noted as problematic because police agencies interpreted the data reporting requirements in different ways – some reported all citizen complaints rather than use of force complaints, some reported only complaints they followed up on for further investigation rather than all citizen complaints, and some used officers’ own reports of use of force rather than citizen complaints. Despite these issues with data quality, the US Department of Justice continues to refer to such data as a reliable and valid indicator of police use of force.

The consequences of poor data quality and a lack of clear standards can be amplified by recent technological advances (Sanders et al., 2018). For example, algorithms and predictive analytics are often assumed to be more impartial than traditional data collection and analysis methods because of the statistical sophistication and quantity of data utilized (Bennett Moses and Chan, 2018; Joh, 2015; Perry et al., 2013; Selbst, 2017), yet these technologies do not in themselves ensure data quality (Ferguson, 2017). That said, some ‘big data’ techniques are believed to be less sensitive to issues of validity and reliability because of a more ‘pure’ focus on association (Eubanks, 2017; Ferguson, 2017). These data quality issues become important when trying to interpret the analytics provided and to design policy based on them.

While traditional data collection by the police has been fairly basic and focused on crime statistics (e.g., the reduction of crime rates), new forms of data collection and analysis require police officers and organizations to acquire new skills, technologies, and adaptabilities. Some studies have examined how individual officers interact with and use information technologies (e.g., Chan, 2001; Lindsay et al., 2011, 2014; Meehan, 1998; Sanders, 2014; Sanders and Henderson, 2013; Singh, 2017), but few have examined the value that officers ascribe to the data they enter into these databases. As Lum et al. (2017: 156–157) note, ‘introducing technological innovations such as crime analysis and information technologies may not produce expected returns for new policing paradigms…unless officers see these alternative approaches as “real police work”’.

The little research that has been completed examining perspectives on data entry and collection has found that those entering the data (oftentimes this is frontline police officers but this can vary by location) see little benefit to their daily work while those at higher ranks and those designing and analysing the data collected perceive large benefits (Lum et al., 2017; Sanders and Henderson, 2013; Terpstra and Kort, 2017). One explanation for the lack of enthusiasm expressed by officers towards data has to do with the heavy and ever increasing paperwork burden they carry (Ericson and Haggerty, 1997). For example, Koziarski (2018), examining specialized mental health crisis intervention and co-response teams in Canada, found that in some jurisdictions a mental health call required several hours of, often complex, paperwork before officers could proceed to the next call. Essentially, police officers would much rather be doing police work than documenting it through paperwork and data entry (Terpstra and Kort, 2017; UK Home Office, 2016). This is similarly reflected in comments made by some analysts suggesting that police officers tend to want quick solutions instead of a thorough analysis simply because of the urgency of their work (Belur and Johnson, 2018). This may be in part due to a lack of understanding that some officers have about the work of analysts and the value that they bring to police agencies (Sanders et al., 2018). Furthermore, there is some evidence that officers can easily circumvent having to enter data into formal databases. Meehan (1993) found that police were able to keep crime rates at low levels by manipulating formal reporting. Instead of formally recording incidents, police kept informal internal records. How officers perceive the value of data and interact with it is crucial in contemporary policing where ‘real’ and ‘good’ police work is increasingly being associated with paperwork, data entry, and analysis.

The lack of buy-in from frontline personnel, who are mainly responsible for data entry, raises a significant question as to how committed police are to data-driven policing and the necessary data collection tasks. Having high quality data entered into police data management systems has profound implications for the subsequent data analyses and decisions police make based on analytical work. As Ferguson (2017: 5) notes regarding big data, ‘[t]he algorithmic correlations can be wrong. And if police act on that inaccurate data, lives and liberty can be lost’.

The quality of police data is one of the most prominent obstacles for law enforcement analysts (Sanders and Condon, 2017). Scholars have noted that analysts frequently report crucial details missing from intelligence reports, as well as incorrect data entry (Burcher and Whelan, 2018; Cope, 2004). In some instances, officers duplicate efforts by entering the same data across various data systems, which contributes to greater opportunity for error (Sanders et al., 2018). Software design can also impact data quality. For example, data entered manually into data platforms can lead to spelling errors which poses difficulties in obtaining accurate search results (Sanders et al., 2018). Further, while data entry is often standardized (e.g., through the use of drop down boxes), this still requires that people subjectively categorize incidents which can lead to inconsistent data entry (Sanders and Henderson, 2013). Standardized platforms for data entry also do not necessarily ensure that the data that are valuable to analysts are being collected. As Sanders and Condon (2017) found, analysts rarely provided input on platform design and acquisition.
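To make the search problem concrete, the following is a minimal sketch of how manually entered spelling variants can defeat an exact-match search and how approximate matching can recover some of them. It is an illustration only, not a method used in the studies cited above. The street names, the function names, and the 0.8 similarity cutoff are all hypothetical assumptions.

```python
import difflib

# Hypothetical free-text street names as they might appear after manual entry.
entries = ["Main Street", "Mian Street", "Main St", "Maple Avenue", "Main Steet"]


def exact_search(term, records):
    """Return records that match the search term exactly (case-insensitive)."""
    return [r for r in records if r.lower() == term.lower()]


def fuzzy_search(term, records, cutoff=0.8):
    """Return records whose similarity ratio to the term meets the cutoff.

    The cutoff is an illustrative assumption; a lower value recovers more
    variants but also returns more false matches.
    """
    term = term.lower()
    return [
        r for r in records
        if difflib.SequenceMatcher(None, term, r.lower()).ratio() >= cutoff
    ]


exact_hits = exact_search("Main Street", entries)
fuzzy_hits = fuzzy_search("Main Street", entries)
```

In this sketch the exact search returns only the correctly spelled entry, while the approximate search also recovers the two misspelled variants and still excludes "Maple Avenue". Approximate matching reduces, but does not remove, the need for consistent data entry.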

To correct this, analysts commonly engage in data cleaning to compensate for poor quality data (Belur and Johnson, 2018; Innes et al., 2005). In fact, one of the foundational texts for data analysts, Exploring Crime Analysis: Readings on Essential Skills (Bruce, 2017a), includes a discussion on data quality and illustrates various data cleaning techniques intended to minimize the problems associated with expected data quality issues. To the extent that analysts engage in such tasks along with routine administrative reporting, it may leave highly skilled analysts feeling underutilized or unequipped to conduct more sophisticated analyses (Sanders and Condon, 2017).

Poor data quality can have detrimental effects on the interpretation of data. For instance, Sheptycki (2004) highlights the possibility of linkage blindness, referring to the linkages between crimes that analysts fail to spot on account of inadequate or insufficient data at the original stages of incident reporting. There is also the possibility of noise, which refers to the value of processed information that circulates within intelligence systems. A police officer has a high level of discretion when determining which information from an incident is significant enough to record. This discretion has an undue influence on the distance between the reporting, the recording, and the interpretation of data (e.g., greater gaps result in greater noise) (Sheptycki, 2004). Taken together, there are several obstacles that can hinder the ability of analysts in police services to produce high quality analyses.

Despite an increasingly strong reliance on information and data by police, scholars have only started to uncover the potential data issues that exist for analysts in the field (Burcher and Whelan, 2018; Cope, 2004; Sheptycki, 2004). Overall, it is still unclear whether the data collected by police services is of a quality that can determine successful policing strategies (CCA, 2014) and whether the data are of sufficient quality to support more data-driven forms of policing than traditional policing techniques. The research conducted thus far suggests that much of the data collected by police services appear to be utilized to demonstrate productivity (e.g., number of arrests) and measure performance (e.g., reduced crime rates) (Muller, 2018; Schulenberg, 2016), with less attention towards using that data to evaluate or design interventions. More recently though, Sanders et al. (2018) conducted preliminary interviews with Canadian analysts, which highlighted that some Canadian agencies, attempting to be more intelligence and data-driven, are in fact changing how they utilize, organize, and manage data including incorporating methods to evaluate and improve data quality. Some agencies have gone so far as to create data quality assurance units. Despite a lack of overall intelligence framework to support consistent data quality, Canadian police agencies are required to follow Uniform Crime Reporting rules in order to report their incidents of crime (Statistics Canada, 2018) and there are mechanisms in place that ensure consistent and error free reporting practices (Fetter, 2009). Although this is a positive step, it is clear that the data collected and analysed by police are not just about crime or victimization.

Research has begun to shed light on data quality issues in policing, but given the complexity of the data collection and analytics enterprise more research is needed. This article adds to the literature on this topic by discussing data quality from the perspectives of analysts working with police data. Analysts are a key resource to assess a police agency’s data quality since their role involves sifting through various data sources, organizing, and attempting to make meaning of ‘noise’ in order to provide decision-makers with options to act on based on their findings (Bruce, 2017b). Indeed, in data-driven approaches, analysts are responsible for guiding the prioritization of police resources to address crime and disorder (Boba Santos and Taylor, 2014). Likewise, some scholars would argue that crime analysis plays a key role in effective policing practices (Boba Santos et al., 2014), including hot spots and problem solving policing (Lum, 2013). However, numerous scholars have demonstrated cultural and structural barriers to the integration of data driven analysis and analysts into policing (Belur and Johnson, 2018; Sanders and Condon, 2017; Sanders et al., 2015). Analysts are one of the main consumers of policing data and are therefore in a position to discuss its quality and usefulness.

Methods

Data were collected via an online survey distributed between June and August 2019. Our survey built on some of the rich qualitative work already completed by other researchers (e.g., Sanders and Condon, 2017) with a limited number of analysts at specific Canadian police services. That is, rather than focusing on a few select police services, the target sample for our survey was analysts working in police services across Canada. Our goal was to examine the breadth of potential data quality issues being experienced in a variety of police services across Canada. To this end, we sent a recruitment email to every English-speaking police service in Canada (n = 150) requesting that analysts complete the survey. The survey link was also posted on social media and distributed through the Canadian Society of Evidence Based Policing’s newsletter, which is sent to subscribers monthly.

Our survey was distributed using the survey software Qualtrics. This article is part of a larger research project examining analysts’ work in police services in Canada. The specific research question guiding this paper is: How do those tasked with analysing and managing data in police services view data quality? Given the lack of research on data quality, our survey questions were designed to be exploratory and gather a breadth of knowledge on the topic rather than be used to generalize to all analysts working in Canadian police services. The data collected from participants was anonymous and any open-ended answers to questions that were potentially identifying were anonymized. The findings from our survey are reported in aggregate form. As discussed above, data quality in this article is conceptualized as how reliable and valid the data is, but in keeping with the exploratory nature of this research, data quality was not defined for participants. Therefore, analysts’ responses are based on their subjective perceptions of data quality.

The overall goal of this paper is to present a clearer understanding of data quality in police services from analysts’ perspectives (the ones actually working with the data on a daily basis). The analysis presented in the upcoming findings section is meant to highlight data quality issues experienced by police services. To that end, the statistical software SPSS was used to analyse the quantitative survey results. A series of open-ended questions were also used to supplement the closed-ended survey questions. These open-ended questions were coded using NVivo software. Coding was done through an iterative process and responses underwent multiple readings (Charmaz, 2005; DeCuir-Gunby et al., 2011). More specifically, participant responses were first coded based around broad themes. A second reading of participants’ responses refined the initial coding scheme into more specific codes and ensured consistency across the coding framework. Finally, participants’ responses were read a third time, codes were again refined, and selective quotes were chosen to illustrate themes found in the data. These findings are used to support and provide context to the quantitative survey findings where applicable.

In total, 67 people began our survey, with 44 completing the majority of the survey, seven completing portions of the survey (which we have included in the findings), and 16 clicking through the survey but not providing any answers (where n’s below do not equal 51, participants either stated they preferred not to answer the question or did not provide a response). Unfortunately, Statistics Canada, the governmental body that regularly tracks police resources in Canada, has not yet examined the number of analysts working in policing. At best, we can estimate that around 28,422 civilians worked in policing in Canada in 2016 (approximately 29% of all police personnel), but this includes managers, IT professionals, and other types of civilians including analysts (Greenland and Lam, 2017). Therefore, we do not know how many analysts work at police services in Canada and thus were unable to examine how representative this number is of all analysts. However, it should be reemphasized that representativeness was not the goal of the survey; instead, the goal was to obtain a breadth of understandings pertaining to data quality. Of the 51 participants included in our analysis, the vast majority (n = 49, 96%) were civilians with only two sworn officers (4%) working as analysts. Those completing the survey had a range of analyst job titles including crime analyst, crime intelligence analyst, strategic planner, statistician, and research analyst. Internationally, there are no commonly recognized standards associated with these titles and many can have overlapping responsibilities; however, the main commonality is that each works with police data. Therefore, we refer to this range of job titles in this paper as analysts.

Participants had been working at their current police service as an analyst for an average (mean) 7.4 years (median = 6.0, range 6 months to 23 years) and had worked as an analyst for an average (mean) 11.25 years (median = 9.0, range 6 months to 30 years) over their careers. Most participants worked at police services with over 100 sworn officers (n = 41, 85%), with the remainder at services with 51–100 officers (n = 3, 6%), 10–50 officers (n = 3, 6%), and under 10 officers (n = 1, 2%). Participants most often worked at police services that employed over 10 analysts (n = 19, 40%) or only 1–2 analysts (n = 15, 31%), and to a lesser extent, 3–5 analysts (n = 8, 17%) or 6–10 analysts (n = 6, 13%). Overall, we were able to obtain responses from analysts across Canada (Atlantic region [n = 8, 19%]; Central region [n = 19, 45%]; Prairie region [n = 11, 26%]; West Coast [n = 4, 10%]) as well as from urban (n = 31, 71%), suburban (n = 12, 27%), and to a lesser extent rural (n = 1, 2%) areas.

In terms of demographics, participants ranged in age from 27 to 56 with an average (mean) age of 40 (median = 40, n = 39). Most participants identified as female (n = 27, 77%) in comparison to male (n = 8, 22%). Participants tended to have an undergraduate degree (n = 21, 49%) or graduate degree (n = 21, 49%) with a small minority having only some college/university (n = 1, 2%). Educational backgrounds encompassed a range of different fields including but not limited to criminology, psychology, geography, policing, sociology, computer science, communications, and political science. Most participants made over $100,000 a year in their positions (n = 23, 62%) with 27% (n = 10) making between $80,000–99,999 and 10% (n = 4) making less than $80,000. In terms of the racial/cultural groups analysts identified with, the majority stated they were White (n = 39, 87%) with the remaining stating they were Indigenous/Aboriginal (n = 2, 4%), South Asian (n = 2, 4%), Black (n = 1, 2%), and Latin American (n = 1, 2%).

Findings

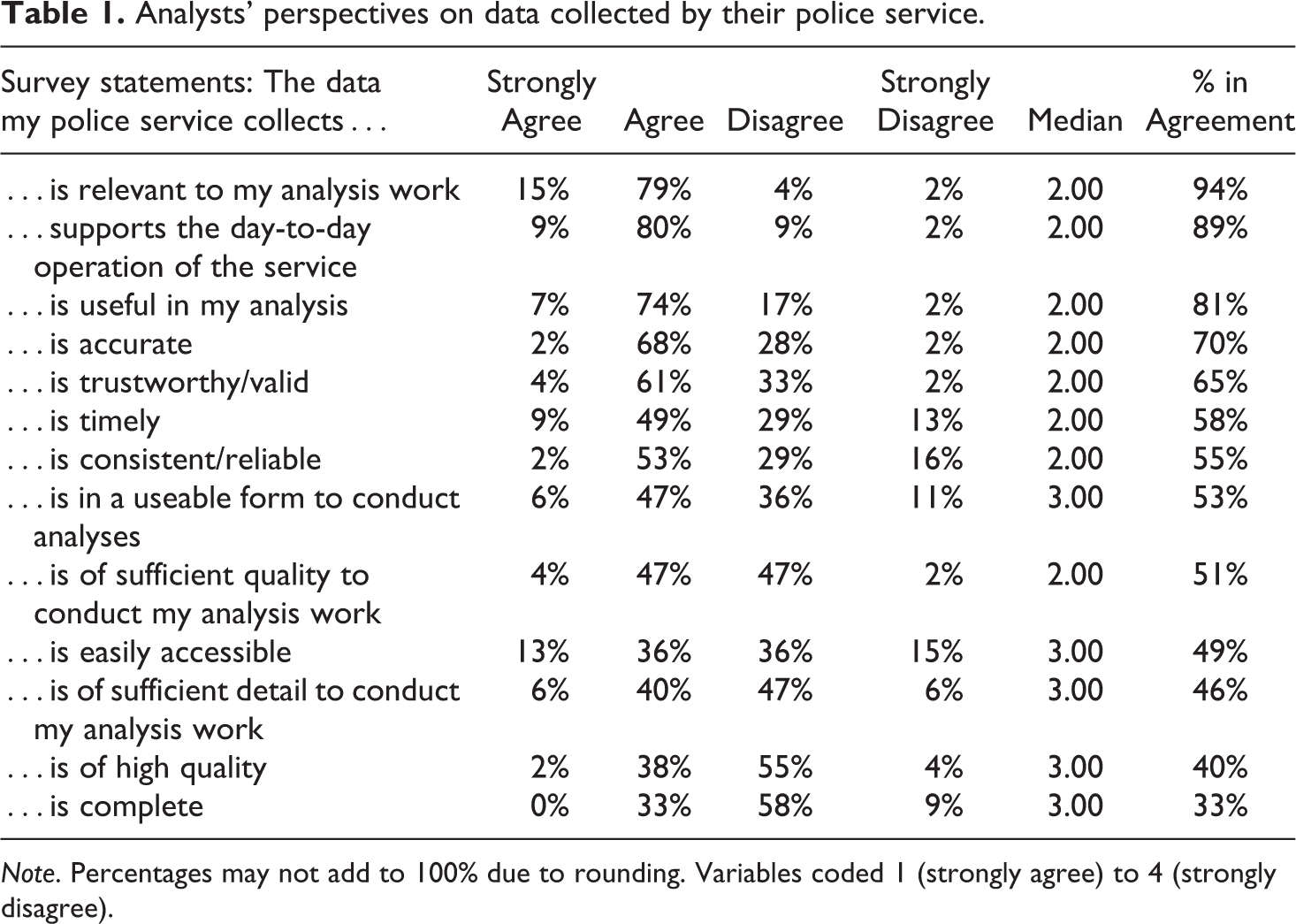

Participants were asked to state their level of agreement (i.e., strongly agree, agree, disagree, strongly disagree) with a series of statements about the data collected at their police services. The results are presented in Table 1. In what follows, we discuss the percentage that both strongly agree and agree compared to those who strongly disagree and disagree.

Table 1. Analysts’ perspectives on data collected by their police service.

Note. Percentages may not add to 100% due to rounding. Variables coded 1 (strongly agree) to 4 (strongly disagree).
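The way the four-point responses are collapsed into agreement and disagreement percentages can be sketched as follows. This is an illustrative reconstruction under stated assumptions, not the authors’ SPSS procedure. The response values are invented, the function name is hypothetical, and skipped answers are assumed to be excluded from the denominator, which is why the valid n can differ from item to item.

```python
from collections import Counter

# Hypothetical responses to one statement, coded as in the table note:
# 1 = strongly agree, 2 = agree, 3 = disagree, 4 = strongly disagree.
# None marks a skipped or "prefer not to answer" response.
responses = [1, 2, 2, 3, 2, 4, 1, 3, None, 2]


def collapse_agreement(coded):
    """Return (percent agreeing, percent disagreeing, valid n) for one item."""
    valid = [r for r in coded if r in (1, 2, 3, 4)]
    n = len(valid)
    if n == 0:
        # No valid answers: percentages are undefined.
        return None, None, 0
    counts = Counter(valid)
    agree = counts[1] + counts[2]        # strongly agree + agree
    disagree = counts[3] + counts[4]     # disagree + strongly disagree
    return round(100 * agree / n), round(100 * disagree / n), n


agree_pct, disagree_pct, n = collapse_agreement(responses)
```

Because each percentage is rounded separately, the two collapsed figures for an item will not always sum to exactly 100, consistent with the rounding caveat in the table note.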

The majority of analysts agreed that the data collected by their police service was relevant to their analysis work (94%), supported the day-to-day operation of the service (89%), and was useful in their analysis (81%). However, when it came to questions about data quality, confidence in the data began to decline. Seventy percent of the respondents felt the data was accurate and 65% felt it was trustworthy/valid. Smaller majorities agreed that the data collected by their police service was timely (58%), consistent/reliable (55%), in a useable form to conduct analyses (53%), and of sufficient quality to conduct their analysis work (51%). More concerning is that only a minority of analysts agreed that the data their police service collected was easily accessible (49%), of sufficient detail to conduct their analysis work (46%), of a high quality (40%), and complete (33%). Such data concerns affected how analysts felt about their work. While nearly half of analysts felt either very confident (13%) or confident (35%) that the conclusions drawn from their analyses were accurate, a slight majority stated that they were only somewhat confident (44%) or not at all confident (8%) in the accuracy of their analyses.

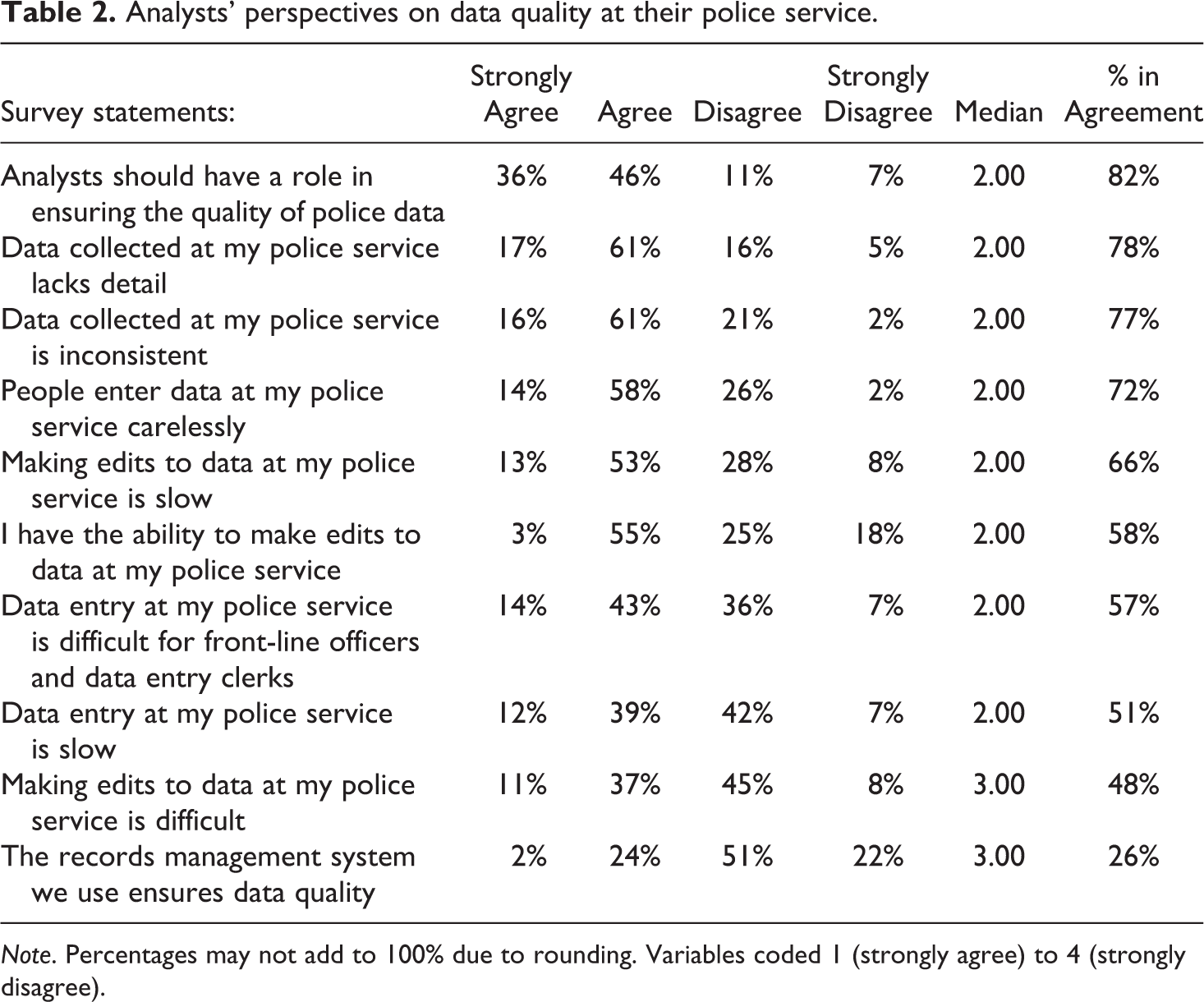

Asked directly about their level of agreement regarding a series of statements pertaining to data collection and entry (see Table 2), the majority of analysts agreed or strongly agreed that data collected by their police service lacked detail (78%), was inconsistent (77%), and that the people entering data did so carelessly (72%). Participants also strongly agreed or agreed that analysts should have a role in ensuring the quality of police data (82%). However, only 58% were in agreement that they had the ability to make edits to data collected by their police service and for some, making edits was identified as being difficult (48%) and slow (66%). Similarly, the majority of analysts were in agreement that data entry was difficult for front-line officers and data entry clerks (57%) and that data entry was slow (51%).

Table 2. Analysts’ perspectives on data quality at their police service.

Note. Percentages may not add to 100% due to rounding. Variables coded 1 (strongly agree) to 4 (strongly disagree).

One key area highlighted by analysts as being in need of improvement was the records management systems (RMS) used by police services. Interestingly, only 26% of analysts were in agreement that their records management system ensured data quality (see Table 2). In particular, only 16% of participants stated that the data fields in their RMS were very clear, whereas 70% rated them as somewhat clear and 14% as not very clear. Similarly, when participants were asked an open-ended question about the barriers they encountered ensuring data quality at their police service, RMS were noted as a key issue. As one analyst explained:

Our officers can put data into the RMS, but we can’t get it all out. The RMS was never built as an analytical tool. And of course, GIGO [garbage in, garbage out]. Members use their own acronyms and don’t use spell check, so to try and get data out of the system using wildcards still doesn’t ensure we get 100% of the data out of the system…We try to be as transparent as possible, yet I am always concerned that this lack of ‘perfection’ may be seen as a way of ‘hiding’ data.

In addition to the RMS, analysts noted several other specific barriers that limited data quality at their police services. The most common issue mentioned by participants was a lack of training and experience. In particular, data entry was mentioned as not being taken seriously due to the busyness of the police service, a lack of importance being placed on the issue by officers and/or leadership, and/or a lack of understanding as to how the data entered into databases is subsequently used and impacts officers’ work. For example:

Police officers are too busy to worry about ensuring data entry is done accurately, or they are not taught about the impact poor data quality has on crime stats and decision-making. [Officers] don’t always realize how much analytical value there is in completing the data correctly and in detail, so they just try to do the bare minimum as quickly as they can so they can move on to the next call.

Another barrier was a lack of data quality oversight from supervisors and leadership. For some, it was perceived that leadership did not value or understand the importance of data quality and the work of their analysts. For example:

We can’t get the number of bodies wrong. Yet, I am continually getting the wrong number of homicides and motor vehicle fatalities. These numbers are most often under 20 and my analysts can’t even get that right. There have been a few times where the count for one section has changed three times! For me, I blame management for not holding their analysts more accountable. Laughing at the mistakes, blaming it on a Monday, blaming their mistakes on not enough coffee is not ok at my level. Executive do not care about true facts, they only want data/analysis that states what they want and not facts. Analysts give executives the information they want and in return are treated better, sent on training/conferences, and given opportunities the rest of the analysts are not.

The final barrier to ensuring data quality noted by analysts was having unclear and underutilized data governing procedures. We also specifically asked participants an open-ended question about the procedures that govern data quality at their police service. Some participants stated that they had no procedures governing data quality while others stated that they had procedures, but they were not consistently followed. As one participant stated: We have Sergeants assigned to carry out quality assurance, however, they do not understand what some of the fields mean and are not very concerned about data accuracy – only that the file was investigated/closed properly. Members of the Records Department also carry out QA [quality assurance] with respect to some aspects of data entry, but their accuracy depends on their own interpretations of the rules.

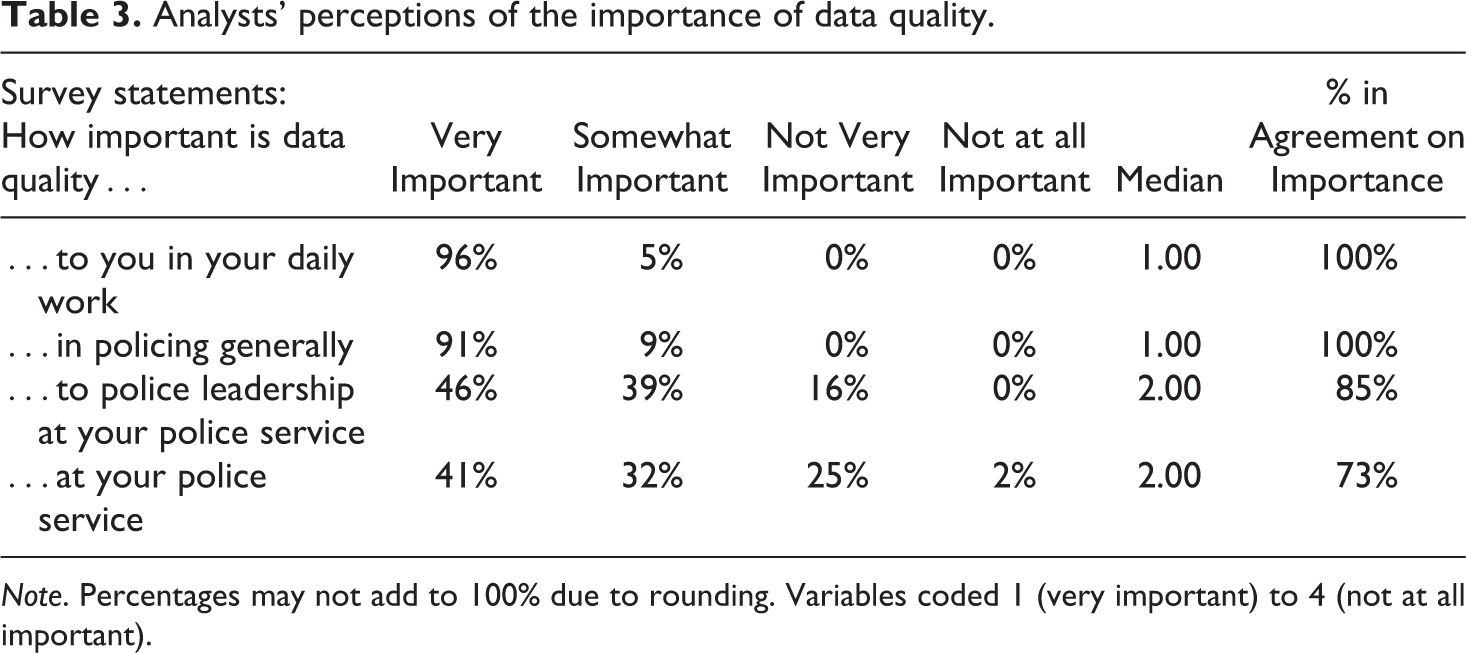

Analysts’ perceptions of the importance of data quality.

Note. Percentages may not add to 100% due to rounding. Variables coded 1 (very important) to 4 (not at all important).

Regardless of the barriers or successes associated with data quality at police services, the open-ended responses from participants noted important areas in need of improvement going forward. First, there was a need expressed for more coherent procedures governing data quality and attention to making sure those procedures are followed. This included making sure police services had dedicated people responsible for ensuring data quality, file reviews and regular audits, having one central database instead of multiple databases for reporting, having software that highlights errors and is easier to use (e.g., drop-down menus) for those entering data, consistency in definitions to properly code data, and making the completion of certain database fields mandatory (e.g., flagging incidents as gang-related). In part, this meant that analysts needed to be provided with more flexibility (e.g., to edit databases), consultation (e.g., in shaping policy changes and governance, data inputs, data management systems), and responsibility (e.g., setting future priorities, determining crime trends). One participant even suggested that there should be ‘civilian oversight of the entire analytical process, independent of sworn members’. Essentially, more accountability for data quality procedures and collaboration within police services were believed to be key ingredients to improving data quality at police services.

Second, analysts expressed that there was a need for more training related to the importance of data quality. In particular, analysts thought that it was important to instill in officers how the data they enter into databases is used and how subsequent analyses based on this data affect officers’ work and policing priorities. As one participant explained, ‘if there is no connection to [officers’] work or why [data] entry is important, it will be difficult to improve [data quality]’. More generally, it was also suggested that officers and civilians needed more (ongoing and refresher) training on how to enter data into their police service’s RMS. It was thought that this type of training would improve the accuracy and consistency of data inputs. One participant even suggested that ‘civilians should play a much larger role in data entry, taking this off the plate of officers who are more concerned with moving on to the next call’.

Finally, analysts noted that there was a need for supervisors and police leadership to take data quality more seriously. This could be accomplished through numerous means including having leaders champion data quality, more people being hired with the authority (i.e., rank) to ensure data quality procedures are followed (e.g., a quality assurance person), and having leaders hold existing personnel responsible for data errors and making sure those errors are fixed. Again, analysts perceived data quality improving as more accountability for data quality was formalized within the bureaucracy of police services. For some, this meant that a serious focus on data quality would require a change in the culture at police services towards one that was more data-informed/driven.

Discussion

The data collection and data quality issues identified by analysts are not new to policing. As discussed in the literature review, policing has often prioritized collecting data rather than collecting ‘good’ data (Hickman and Poore, 2016; Joh, 2015; Sanders et al., 2018). This penchant for collecting large volumes of data with little concern for data quality is a continuing issue, particularly as we move into an era of big data where the sheer volume of data collected is perceived to eliminate potential biases, an idea that has been critiqued (Bennett Moses and Chan, 2018; Brayne, 2017; Ferguson, 2017; Joh, 2015). Our research provides a unique perspective on this issue through an online survey with analysts themselves. Examining analysts’ perceptions of data quality in police services exposes several issues that must be addressed if police services are to truly consider themselves data-driven. Our survey findings noted several concerns from analysts as to the reliability and validity of the analyses and data that drive police decision-making in Canada. There appears to be a tension between wanting to be seen as a data-driven (or evidence-based) police service but at the same time being unable, or worse unwilling, to do the work needed to accomplish this in practice. In what follows, we argue that being data-driven requires more attention be paid to the quality of the data driving decision-making in police services. We also discuss the implications of not addressing data quality issues in police services.

The lack of attention to data quality is concerning. It raises questions about how much trust the community can place in the data the police collect and use to inform their work. That is, the analysts themselves noted that police services often struggled to meet even the basic requirements for ensuring their data was reliable and valid. For example, a majority of analysts perceived the data police services collected as incomplete, inconsistent, being entered carelessly into databases, of low quality, and lacking sufficient detail to conduct proper analyses. Yet, at the same time, this is the data that is being used to allocate resources, guide operations, and make knowledge claims about crime, victimization, and reducing harm (Ratcliffe, 2007; Sanders et al., 2018). Recently, there have been numerous protests and calls from the public to defund police services in Canada (and elsewhere) and reallocate resources to more proactive social services. While the long-term impacts of these protests on police budgets remain unknown, they raise big questions about the willingness and capacity of police services to invest time and resources in their data quality procedures at a time when they are confronting major existential questions. However, there is good reason to see data quality improvements as critical to reducing systemic racism and other forms of bias in policing, as we know that the processes through which data are collected, entered, and interpreted shape and are shaped by those systemic problems that result in certain groups being targeted and treated unfairly (Ferguson, 2017). As police agencies are under increasing scrutiny in their decision-making, resource allocation, and financial choices, using high quality research supported by high quality data will help enhance their legitimacy during crises (Mitchell and Lewis, 2017).

On the surface, the data quality issues identified by the analysts in this research seem relatively easy to remedy if the problem is treated as simply a procedural issue. For example, analysts noted that data quality in police services could be improved by handing over more of the data work to trained analysts, increasing training for those entering data (e.g., frontline officers), streamlining RMS programs and acquiring more user-friendly software for managing data, having leadership actually take data quality seriously (e.g., hold people accountable for entering and analysing data properly, reinforcing the importance of data quality), and instituting data quality checks and balances (e.g., data audits, people dedicated to quality assurance). We agree that these are all necessary improvements that need to be made if police services are to make reliable and valid claims about the data they possess. Indeed, analysts noted that some police services already had these in place and were working on improving data quality. Overall, the needed improvements noted by analysts would likely go a long way to ensuring the reliability and validity of police data.

However, all of these improvement suggestions assume that police services truly want to improve the quality of the data they collect and analyse. It is unclear if this is indeed the case. While some police services clearly aimed to improve their data quality, as some analysts noted, often the leaders responsible for ensuring data quality did not have the requisite skills and knowledge of research that would enable them to make needed changes. Similarly, most of the participants had concerns about how seriously data accuracy was being taken by officers. Even more concerning was the suggestion that leadership might reward analysts who could manipulate data to suit leadership’s agenda/arguments. Further, some police services had no procedures governing data quality or failed to follow the guidelines even when they were in place. Therefore, while some police services appear to have embraced data-driven approaches and their role as knowledge workers, our findings also point to much hesitancy in Canadian policing.

Therefore, it appears that there is a serious need to focus more attention on improving data quality within Canadian police services before they can claim to be data-driven and evidence-based institutions. Accomplishing this will require instituting the improvements noted by analysts above but also a greater acceptance of a research culture within policing. Doing so would require that police services shift away from a culture of image work where data is used primarily to promote a favourable image of police to the public (Mawby, 2002; O'Connor and Zaidi, 2020).

There is a need among policy makers and researchers to better understand and articulate what a research culture might look like and the best policies for creating one. Current suggestions include acknowledging failures, dedication to addressing data reliability and validity issues, objectivity (including the sharing of data and information with outsiders), and having an openness to critique (Howard et al., 2000; Mitar, 2004). This approach embraces the use of data to learn and improve rather than to manipulate and convince. From the analysts’ perspectives, it appears that police services speak in a language that superficially acknowledges the importance of good data but have often not instituted the requisite research culture/principles to guide their work at an organizational level to make that come to fruition. Data quality seems to have been treated as a ‘nice to have’ rather than a ‘must have’.

Police leaders looking to shift their agencies towards more data-driven and evidence-based approaches must not only develop a greater understanding of research (Huey et al., 2018) but also prioritize data quality and promote such values throughout their organizations, including during recruit training (Mitchell and Lewis, 2017). Police agencies that place value on their analysts must also support removing cultural and structural barriers that contribute to poor data quality. In an era of big data where data is assumed to be unbiased (which it is not), we would argue that data quality is a ‘must have’ and is long overdue.

Conclusion

While Canadian police services are increasingly positioning themselves as data-driven and evidence-based, the question remains as to why police services in Canada have not focused more concerted attention on the quality of the data they collect. They were long ago identified as knowledge workers dealing in information (see Ericson and Haggerty, 1997) but appear to have paid little attention to the quality of this information and, therefore, to its reliability and validity. Having said that, a limitation of our work is that we only spoke to analysts about this topic and our sample was not selected in a way that would make our results generalizable to all police services in Canada. Therefore, the findings from our study should be considered preliminary and much more research needs to be conducted on data quality in policing. Paying attention to this issue now is particularly important as most new technological tools being used by the police collect and deal in information (e.g., facial recognition, drones, big data), as police legitimacy and trust are being tested, and as police agencies face increasing demands for transparency and accountability. It is no longer sufficient for police services to claim that they are data-driven. Instead, policing must be guided by data that is reliable and valid and a research culture that adheres to ethical principles.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.