Abstract

In speech perception, listeners tend to hear real words rather than non-words on a physically balanced real word—non-word continuum (lexical bias effect). Bourguignon et al. found a similar effect in speech production: With spectral auditory feedback perturbations, they altered vowels toward other vowels thereby causing a shift in lexical status (from word to non-word or vice versa) or not. This study tests whether the lexical bias effect can be extended to the temporal domain in speech production. We perturbed the German vowels /a/ and /a:/ in real words toward the respective other phoneme using a real-time temporal auditory feedback adaptation paradigm. This manipulation pushed the percepts either toward another real word (lexical condition) or not (non-lexical condition). In both perturbation setups (stretching short /a/, or compressing long /a:/), speakers counteracted the perturbation with productions opposing the direction of perturbation. However, response magnitude was similar across conditions, that is, independent of a shift in lexical status. The results indicate that, in the temporal domain, speakers do not heavily rely on higher-level linguistic information, but rather are principally oriented toward maintaining phonemic identification. These findings further imply that temporal and spectral parameters in speech production and perception are governed by different processing strategies.

1 Introduction

Humans perform innumerable motor tasks every day. Speaking is one of the most complex uniquely-human motor tasks carried out on a daily basis. In speech, a large set of muscles of the speech apparatus is coordinated to form language-specific acoustic outcomes such as syllables or words. This coordination requires the precise interplay of neurological and motor systems to ensure precision in timing and sequencing, as well as in the spectral shape of the intended speech segments. In so doing, the system incorporates information from the auditory and tactile senses. In speech acquisition, mental representations of speech segments are built using information about how a speech segment should sound (

In auditory feedback perturbations, a parameter in the speech signal, such as a formant frequency of a vowel, is altered in real-time and sent back to the speaker via headphones, thus masking their naturally produced feedback. In response, speakers typically alter their ongoing productions with adjustments in the opposite direction to the applied shift—they

In determining the guiding forces behind such responses, several studies have indicated that speakers’ responses are sensitive to the linguistic and/or phonological system of their native language(s) and do not linearly mirror any frequency shift independent of phonological surroundings. Niziolek and Guenther (2013), for example, introduced formant shifts that either fell comfortably within the speaker’s vowel category (e.g., shifting /ε/ in /bεd/ toward another phonetic realization of /ε/) or fell near/across a phonemic boundary (e.g., shifting /ε/ in /bεd/ toward a version that sounded more like /bæd/). They found that the amount of compensation was three times larger when the shift fell near a phonemic boundary, indicating that the feedback-feedforward integration is sensitive to linguistic categories, with speakers aiming to maintain the intended category in production. Another study, by Mitsuya et al. (2011), examined the effect of phoneme boundaries in two different native speaker groups to test for the influence of the speakers’ native phonological systems. They examined responses to first formant (F1) shifts in English speakers producing the English /ε/, native Japanese speakers producing the Japanese /e/, and native Japanese learners of English producing the English /ε/. All vowels were embedded in mono-syllabic real words in the respective languages. While all three speaker groups showed similar response patterns to a

A review of the literature on adaptation to spectrally shifted auditory feedback shows that the majority of studies perturbed vowels in words toward other existing (and therefore phonotactically legal) words, with the vowel of interest being the distinctive element in the minimal pair (see, for example, MacDonald et al., 2010; Nault & Munhall, 2020; Purcell & Munhall, 2006a). However, phoneme boundaries do exist dissociated from lexical boundaries (i.e., it is possible to recognize vowels in isolation or in non-word contexts), and in most of the studies mentioned the contributions of phonemic and lexical identity remain confounded. To examine the connection between lexical and phonemic identification, Bourguignon et al. (2014) investigated the role of lexical status (e.g., shifting from a real word to a non-word) dissociated from phoneme boundaries. Their motivation arose from the bias of the speech production system (as revealed in slips of the tongue) to exchange phonemes in words more readily when the permutation results in another real word (Baars et al., 1975). This phenomenon resembles the

Similar to the study by Bourguignon et al. (2014), the dissertation by Frank (2011) investigated the effect of lexical biases on speech production in self-produced speech with auditory feedback perturbations. Frank (2011) used formant shifts of F1 to alter a real word (e.g., “bed” toward “bid”) with a special interest in whether the heard feedback is another real word (

Intriguingly, the majority of auditory feedback perturbation studies have focused on spectral properties of speech. Yet, the lexical bias effect also works with temporal properties of speech. Notably, the first study by Ganong (1980) assessed a phoneme contrast that is cued by duration (voice onset time [VOT]:

Responses to temporal feedback manipulations have not been completely consistent over the speech material and languages investigated to date. A variety of different factors that could have an impact on adaptation to temporal perturbations have been considered. Oschkinat and Hoole (2020) perturbed the German words “Pfannkuchen” (/pfankuːxən/,

While Karlin and Parrell (2022) provide first evidence that phonemic identification might play a different role in speech timing than it does for spectral information, their experimental paradigm leaves some issues unresolved. Previous temporal auditory feedback perturbation studies suggest that lengthening responses are more easily produced than shortening responses (Karlin & Parrell, 2022; Oschkinat & Hoole, 2020). Because the paradigm in Karlin and Parrell (2022) compared two conditions that used different perturbation directions, whereby one condition additionally delayed the target while the other did not, it might have biased their outcome and affected the comparability of the conditions. Furthermore, as noted previously for spectral perturbation studies, the crossing of a phonemic boundary was always closely tied to the crossing of a lexical boundary from one lexical identification to another. Hence, the influence of phonemic vs. lexical status remains unclear.

This study is concerned with the role of phonemic vs. lexical identity along the temporal dimension. We compare responses to phonemic shifts that do not cause a shift in lexical status (e.g., shifting a real word toward another real word) with responses to phonemic shifts that also cause a shift in lexical status (from a real word toward a non-word) using the same perturbation setup across the compared conditions. Although the collection of our data had already started prior to the publication of Karlin and Parrell (2022, see Section 2.1 for details), it complements this previous study well due to its different methodology.

In English, duration as a primary cue for establishing phoneme boundaries is not as ubiquitous as formant frequencies are for delineating vowels. In German, however, the vowels /a/ and /a:/ differ almost exclusively in duration without remarkable differences in spectral properties (Jessen, 1993; Pätzold & Simpson, 1997). Hence, it can be assumed that minimal pairs such as /ʃta:t/ and /ʃtat/ differ predominantly in vowel duration. Similar to the study by Bourguignon et al. (2014), the segmental perturbation of the vowels /a/ and /a:/ in this study pushed the vowel percepts near/across phoneme boundaries. The manipulation involved two perturbation conditions: one where the critical vowel was compressed and one where it was stretched. In addition, each condition comprised two target words: One where the perturbation led to a shift in lexical status from real word to non-word, and the other one without a shift in lexical status (from one real word to another real word). Consequently, the applied perturbation (stretching and compressing of segments) was balanced over the two lexical conditions.

If speakers respond similarly in both lexical conditions (i.e., lexical status does not matter), we may conclude that speakers attend to phonemic identification more than to lexical status. If speakers respond more strongly in the condition that shifts a real word toward another real word than a real word toward a non-word, it can be concluded that lexical status plays an additional role in the control of speech timing, further indicating that word productions on a word—non-word continuum are more flexible toward the non-word boundary (lexical bias effect). Accordingly, with this study, we aim to shed light on the mechanisms for controlling speech timing focusing on phonemic and lexical status.

2 Methods

In the following, we report the characteristics of the tested sample (Section 2.1) and the implementation of the experimental procedure (Sections 2.2 and 2.3). Section 2.4 reviews the reliability of the procedure, including an issue with a malfunction at a technical level that led to testing of additional participants before analyses. Section 2.5 outlines data preparation and exclusion; Section 2.6 then outlines the analytical strategy for the main analyses of the vowels (Section 3) and the additional analyses of the consonants (Section 4) and further gives a visual overview of the response data (Figure 4). We report how we determined the measures of interest along with the R version and R packages used for the reported analyses (Section 3). The study’s design and the analyses were not pre-registered. We used the STROBE cross-sectional reporting guidelines (Von Elm et al., 2007). This study was approved by the ethics committee of the medical faculty of the Ludwig Maximilian University of Munich on August 10, 2017.

2.1 Participants

Initially, 51 participants were tested in 2018/2019. After post-processing the data and verifying the fit of the perturbation to each participant’s speech, another set of 10 participants was tested with improved implementation of the perturbation procedure (see Section 2.4), resulting in a total number of 61 tested participants (51 females, 10 males). We considered the sample size adequate to large compared with previous auditory feedback perturbation studies, which commonly used 15 to 20 speakers per group (see Caudrelier & Rochet-Capellan, 2019 for an overview). The exact implementation as well as participant exclusion rules will be outlined further below. All participants were native speakers of German recruited in the Munich area, between 18 and 34 years of age (mean age 23 years), and none of the participants claimed to have any speech or hearing impairments. All participants gave informed written consent to participate in this study.

2.2 Experimental setup

Real-time temporal auditory feedback perturbation was implemented in Matlab using the time-warping function of Audapter (Cai et al., 2008, 2011) with similar setups as in Oschkinat and Hoole (2020, 2022). Participants spoke into a Beyerdynamic TG H74 headset microphone (Heilbronn, Germany) placed 3 cm from the corner of the mouth. The spoken signal was fed into the computer and sent back via E-A-RTone 3A in-ear earphones with foam eartips (3M, Saint Paul, MN, USA) which minimize airborne sound transmission. Feedback was sent back to the participant with a total delay of around 45 ms. This delay is the sum of the buffering and processing delays in the Audapter software plus the hardware delay from the MOTU Microbook II soundcard (estimated at 12–13 ms, cf. Kim et al., 2020). Note, however, that this delay was already present during the Baseline, and did not cause any visible trends or effects of delayed auditory feedback (DAF) during the Baseline. In real-time temporal auditory feedback perturbation, it is necessary to first stretch one part of the signal before a subsequent part can be compressed, otherwise the signal that should serve as feedback would not yet have been produced after the received compression. Therefore, in this study the stretched part in the signal always preceded the compressed part. The perturbation was implemented in two experimental setups focusing on the German vowel length contrast between the vowels /a/ and /a:/: One setup stretching the vowel /a/ (Vowel stretching setup) and one setup compressing the vowel /a:/ (Vowel compression setup), each of them comprising two words/conditions. In one condition the perturbation did not change lexical status (real word to another real word). This condition will be termed

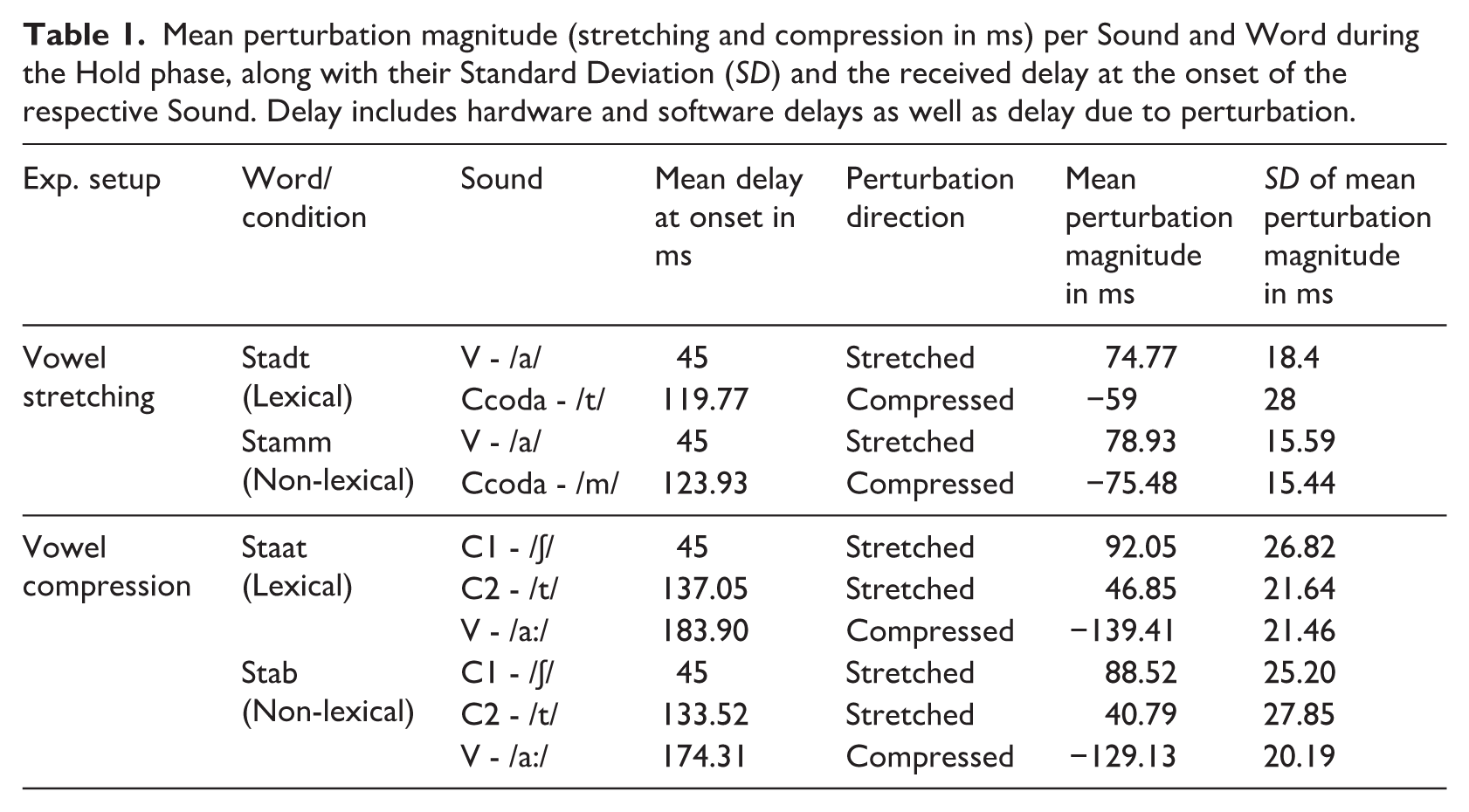

Mean perturbation magnitude (stretching and compression in ms) per Sound and Word during the Hold phase, along with their Standard Deviation (

Each participant performed either both conditions/target words of the Vowel stretching setup or both conditions/target words of the Vowel compression setup (23 participants performed the Vowel stretching setup

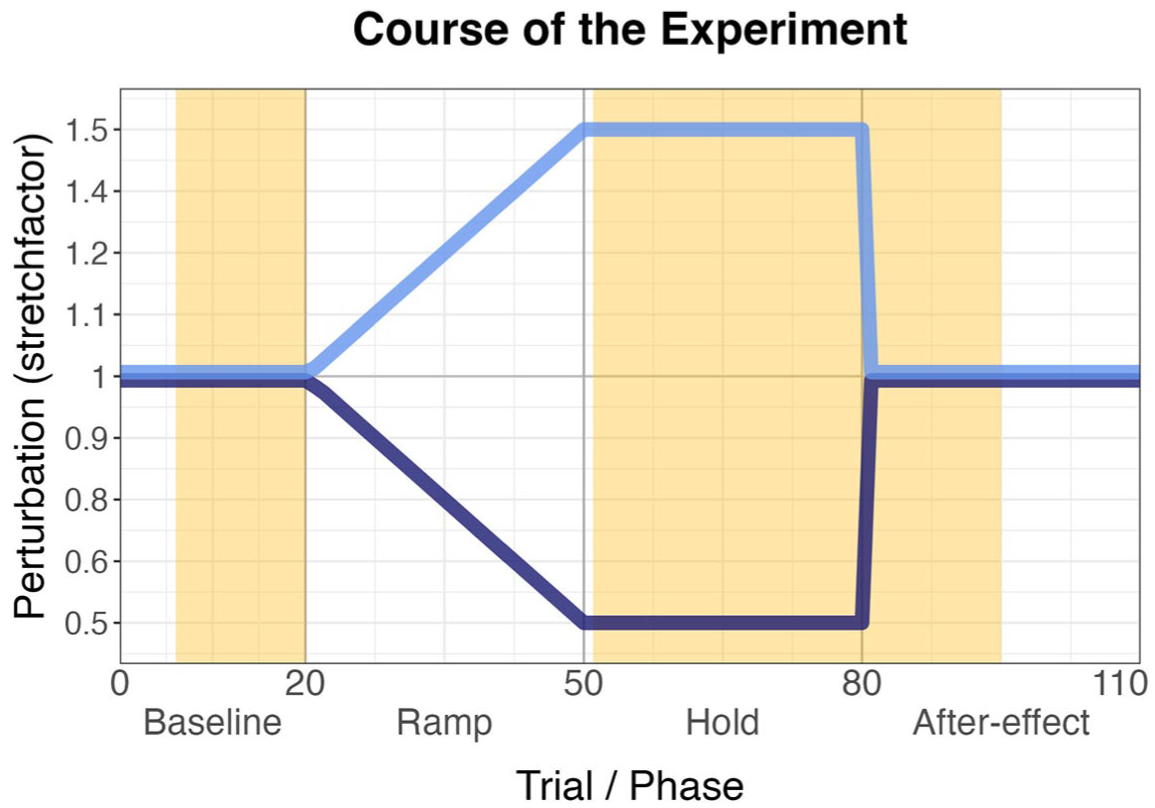

Course of the experiment with trial number/phase on the x-axis and the amount of perturbation (stretch factor) on the y-axis. A value of 1 indicates that the signal was played back as produced; the other values indicate the proportion of compression or stretching (stretching in light blue, compression in dark blue). The yellow part marks the trials used for statistical comparison between Baseline (Trials 6–20), Hold phase (Trials 51–80), and early After-effect phase (Trials 81–95).

For each of the tested target words, the participant performed a pretest which consisted of 10 to 15 trials of the experiment with no perturbation; this served to adjust the microphone level and to analyze individual duration patterns in the produced speech for implementation into the main testing protocol (see Section 2.3). It further served to get the participant used to the procedure and establish a stable speaking rate and volume.

2.3 Implementation

The implementation of the temporal real-time perturbation required two main parts: First, the start of the perturbation needed to be detected in the ongoing speech signal with Audapter’s online status tracking (OST), which was generically pre-defined for all participants per experimental setup (i.e., Vowel stretching vs. Vowel compression). Second, the OST had to be complemented with participant-specific information about the duration of the part in the signal that should be perturbed (perturbation section). The latter was established in the pretest and determined for each participant and word individually.

The OST is a part of the

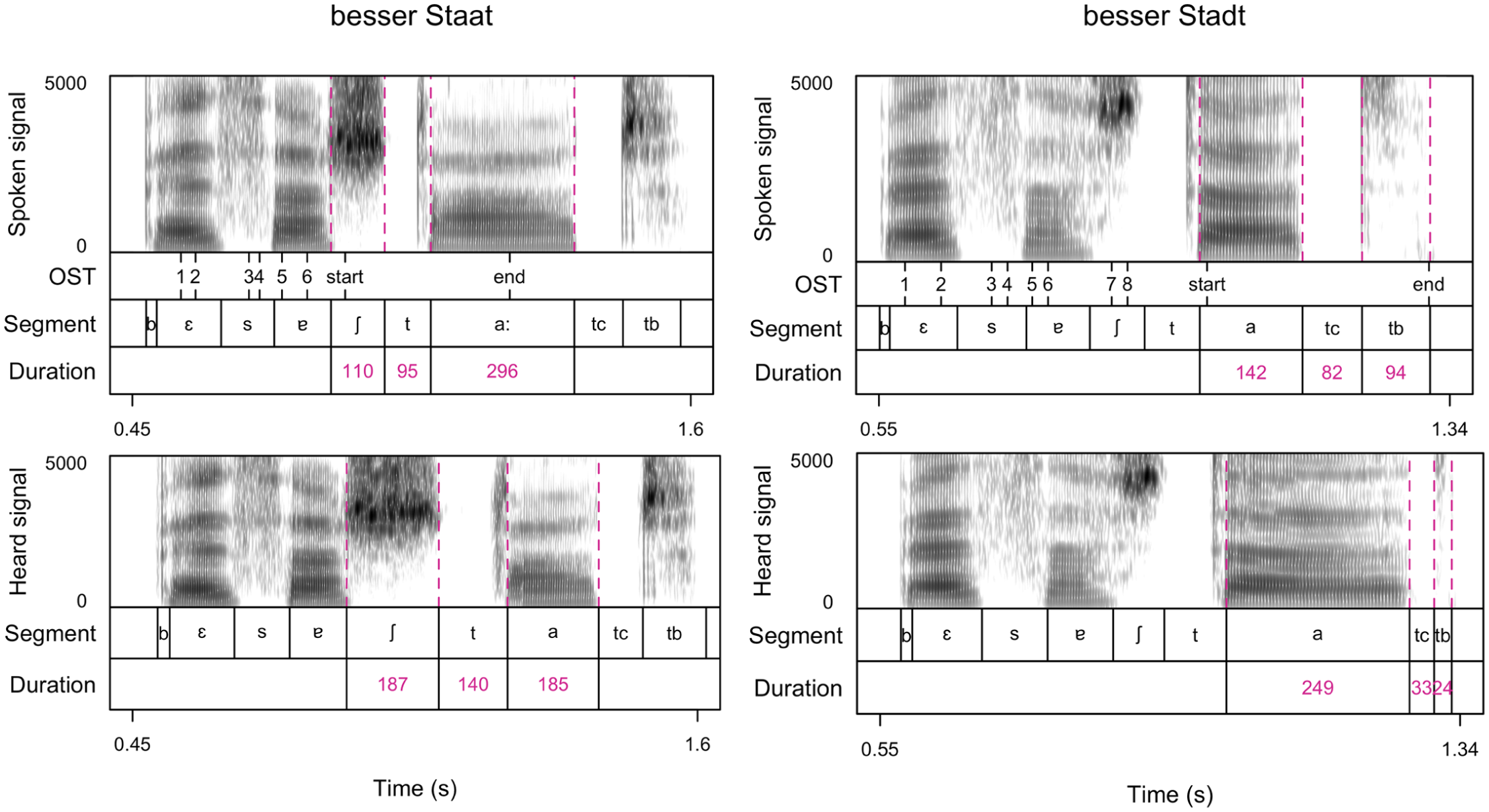

Visualizations (spectrograms and annotations) of the spoken signal (upper panels) and the heard/perturbed signal (lower panels) of one Hold phase trial for

The perturbation section was coded as two halves, whereby the first half was always stretched and the second half compressed. At maximum perturbation (during the Hold phase), the signal in the first half of the perturbation section was stretched by a factor of 1.5, whereas the second half was compressed by a factor of 0.5 (see Figure 1, light blue and dark blue lines, respectively). Due to this approach, the absolute duration of the perturbation section (and thus of the stretching and compression) varied between participants, ensuring a perturbation that is relative to the produced segments rather than an absolute amount in milliseconds. Given the shorter duration of /a/ compared with /a:/, the absolute amount of stretching for /a/ was smaller than the amount of compression for /a:/.
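The arithmetic of this two-half scheme can be illustrated with a minimal sketch. The function name and the section durations below are hypothetical (they are not values from the study), and the sketch assumes that the perturbation section is split into two equal halves and that the Hold-phase factors of 1.5 and 0.5 apply uniformly within each half.

```python
def heard_half_durations(section_ms, stretch=1.5, compress=0.5):
    """Return the heard durations (ms) of the stretched first half and
    the compressed second half of a perturbation section.

    Assumption: both halves have equal produced duration (section_ms / 2).
    """
    half = section_ms / 2.0
    return half * stretch, half * compress


# Hypothetical section durations for a faster and a slower speaker.
for section_ms in (160.0, 320.0):
    first, second = heard_half_durations(section_ms)
    added = first - section_ms / 2.0      # ms added by stretching
    removed = section_ms / 2.0 - second   # ms removed by compression
    print(section_ms, first, second, added, removed, first + second)
```

Under these assumptions, the milliseconds added in the first half equal the milliseconds removed in the second half, so the heard section ends in sync with the produced one, while the absolute size of the perturbation scales with each speaker's own section duration.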

2.4 Corrections to the initial implementation and additional testing of participants

In post-processing, every speaker’s data was scanned for the correct triggering of the intended perturbation section (onset + vowel in the Vowel compression setup or vowel + coda in the Vowel stretching setup). The implementation worked very reliably in the Vowel stretching setup (

Due to this malfunction in triggering the intended part in the utterance, 10 new participants were tested with the Vowel stretching setup and an

Figure 3 shows the vowel durations in the Baseline for both setups and indicates the OST implementation used in the Vowel compression setup (gray: new OST, black: old OST, participants with perturbed vowel /ɐ/ excluded). The figure indicates that the additionally tested participants for the Vowel compression setup had longer vowel durations (a slower speech rate) in the Baseline. However, the whole batch of tested participants forms a homogeneous group, and the difference in Baseline duration rather indicates that the new OST implementation worked better for speakers with a slower speech rate. This was confirmed by statistical modeling (see Appendix A). In summary, there was no systematic difference in response patterns of participants tested with the initial implementation but a

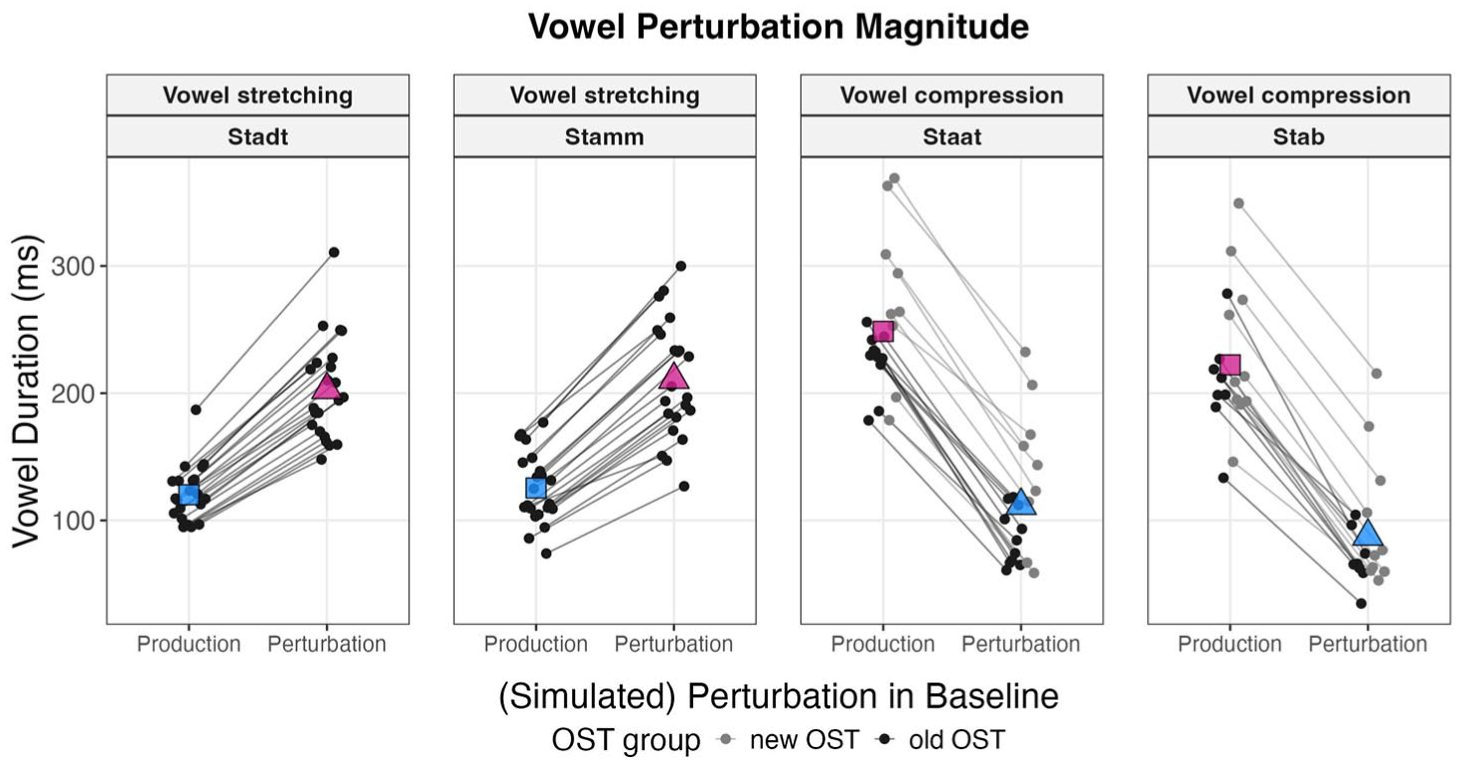

Mean vowel durations produced during the Baseline (Production) and simulated received perturbation (Perturbation). Dots mark single participants linked by lines (Staat:

2.5 Data preparation

For all participants (new and old OST tracking), single trials in which the perturbation did not work as intended were marked with an automated Matlab script. This script included rules about the minimal portion of stretching and compression that the intended segments should have received. Furthermore, incomplete trials or trials with yawning were removed from the data set. Participants with either fewer than 20 functioning Ramp phase trials or fewer than 20 functioning Hold phase trials, and fewer than nine Baseline or After-effect phase trials, were removed completely from further analyses for the respective target word, since adequate perturbation throughout the experiment could not be ensured. Based on these criteria, one participant was removed for

To summarize, based on these exclusion rules, for the analyses reported below data from 22 participants for

Figure 3 visualizes the perturbation magnitude as implemented, that is, how perturbation would be received without any corrective responses. The key aim of this figure is to indicate the durational region that the perturbation pushed into. For visualization, for each participant and condition, the maximum stretch factor (1.5 for vowel stretching/0.5 for vowel compression) was applied to the perturbation section covering the vowel in the baseline trials (excluding the first five trials) as a

2.6 Analytical strategy

For all subsequent analyses, segment durations were automatically determined by a Matlab script and then hand-corrected in

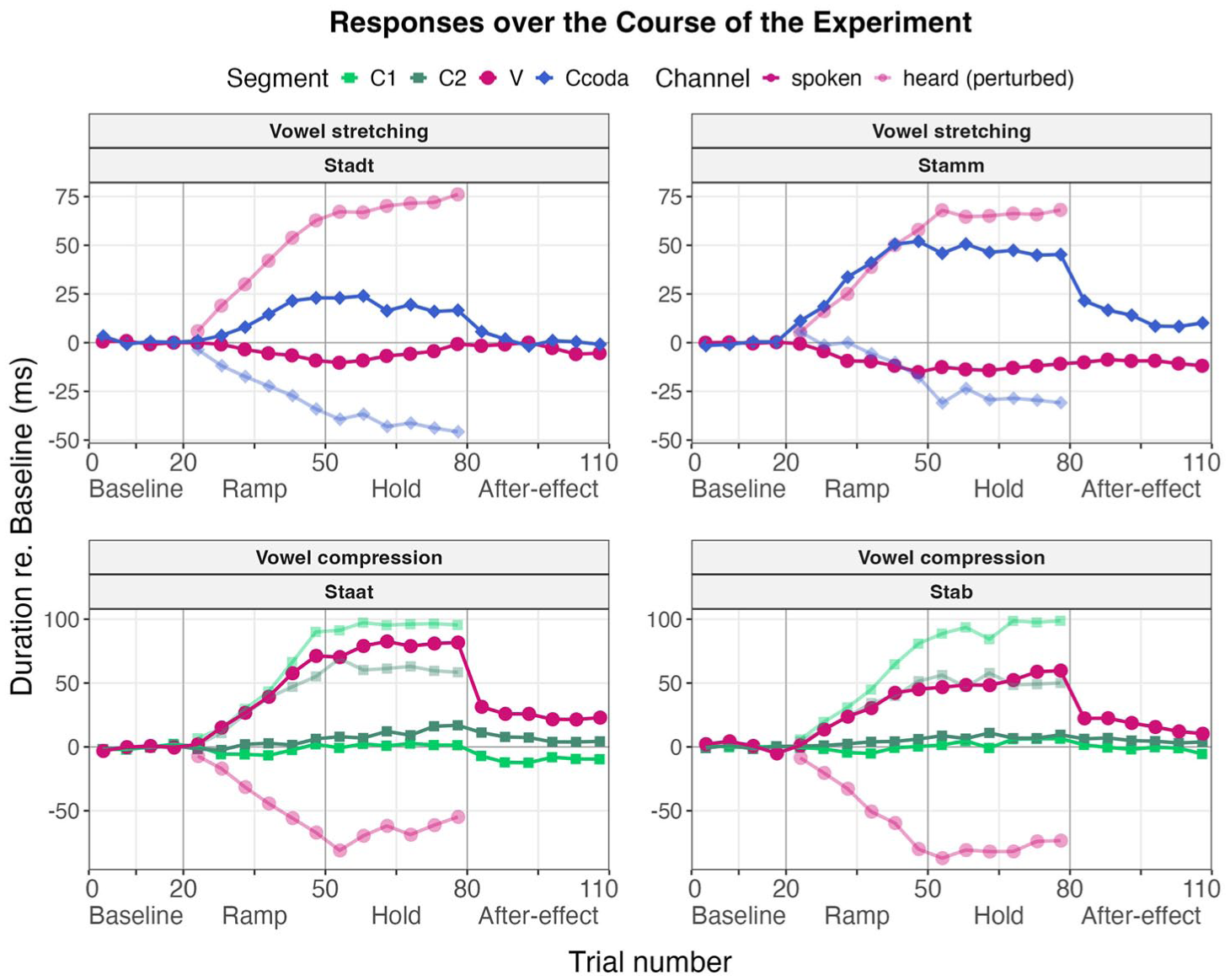

Mean durations binned over five trials of the perturbed segments for all participants over the course of the experiment. Spoken signal in solid dots/lines and heard/perturbed signal in transparent. Vowel (V) and the coda consonant (Ccoda, closure duration of /t/, or duration of /m/, respectively) of the Vowel stretching setup in the upper panels (magenta and blue, Stadt = 22 participants, Stamm = 22 participants) and the onset consonants (C1 - /ʃ/, C2 - /t/) and the vowel (V) of the Vowel compression setup in the lower panels (green and magenta, Staat = 20 participants, Stab = 18 participants). Lexical condition in the left panels, non-lexical condition in the right panels.

3 Main analyses: vowels

The primary focus of this study is to examine the responses to perturbations of /a:/ and /a/ that either shift or do not shift their target words toward another lexical identity. The following sections are organized into two main approaches, capturing first the temporal flexibility of the vowels (Section 3.1), and second the percentage of change relative to the amount of perturbation (Section 3.2). All analyses were carried out in RStudio (R version 4.2.2) using mostly packages of the

3.1 Temporal flexibility of vowels

In this section, we compare vowel durations between the Baseline, Hold phase, and After-effect phase. This analysis shows whether the vowels themselves are being flexibly adjusted in response to the applied perturbation. In general, we expect speakers to oppose the direction of perturbation during the Hold phase, thereby reducing the perceived auditory error. In the Vowel stretching setup (

In the Vowel compression setup (

The subsequent analyses aim at capturing responses during perturbation by comparing Baseline and Hold phase. In addition, the amount of adaptation is examined by comparing Baseline and After-effect phase productions (a significant difference would indicate learning effects, i.e., adaptation). Finally, to capture effects of reactive feedback control during the Hold phase, Hold and After-effect phase productions are compared. Reactive feedback control is indicated by significantly larger responses in the Hold than in the After-effect phase.

3.1.1 Model selection

To examine the effects of Phase (Baseline, Hold phase, After-effect phase), Experimental Setup (Vowel stretching or Vowel compression), and Lexical Condition (lexical, non-lexical) on vowel productions, we fit a linear mixed-effects model (LMM) using the lmerTest package in R (Kuznetsova et al., 2017). Although the dependent variable (vowel duration in milliseconds) was not strongly skewed, a log10 transformation was applied to improve the normality of the residuals in the final model. The model structure was built incrementally, beginning with a null model that included only a random intercept for participant. Fixed effects were introduced stepwise, and models were compared using likelihood ratio tests via analysis of variance to determine whether additional terms significantly improved model fit. The final model included main effects for Phase (Baseline, Hold, After-effect), Lexical Condition (lexical, non-lexical), and Experimental Setup (Vowel stretching, Vowel compression), as well as their two-way and three-way interactions. The random effects structure included random intercepts for participants and a by-participant random slope for Phase to account for individual variability in this within-participant factor. Model diagnostics confirmed model convergence, normality of residuals, and stable fixed-effect estimates.
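The stepwise comparison of nested models can be sketched as a likelihood ratio test. This is only an illustration: the log-likelihood values are invented, the function name is hypothetical, and the closed-form p-value used here holds only when the larger model adds exactly one parameter (df = 1). The study ran these comparisons in R on the fitted mixed models, which this standard-library sketch does not reproduce.

```python
import math


def lrt_one_df(loglik_reduced, loglik_full):
    """Likelihood ratio test for nested models differing by one parameter.

    Returns the test statistic and its p-value under a chi-square
    distribution with 1 degree of freedom, whose survival function
    is erfc(sqrt(x / 2)).
    """
    stat = max(0.0, 2.0 * (loglik_full - loglik_reduced))
    p_value = math.erfc(math.sqrt(stat / 2.0))
    return stat, p_value


# Invented log-likelihoods: the fuller model fits slightly better.
stat, p = lrt_one_df(loglik_reduced=-100.0, loglik_full=-98.0)
print(stat, p)
```

A small p-value would favor keeping the added term; with more than one added parameter, a general chi-square survival function would be needed instead of this shortcut.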

Marginal and conditional

3.1.2 Results: vowel responses during and after perturbation

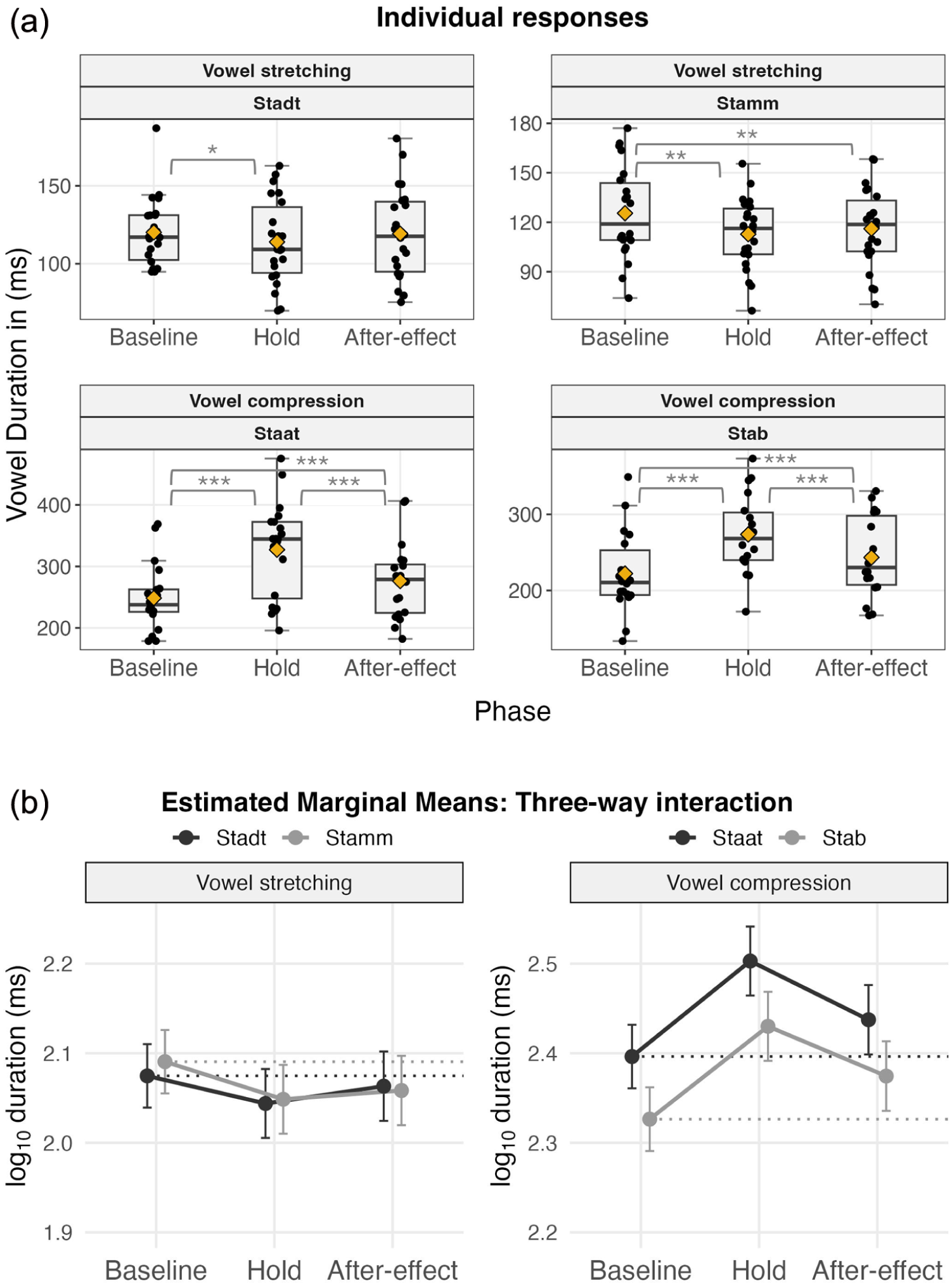

The analysis revealed a significant three-way interaction between Phase, Lexical Condition, and Experimental Setup,

(a) Individual response data (ms) per phase and experiment. Boxes span the interquartile range (25th–75th percentiles), and whiskers extend to 1.5 × IQR beyond the quartiles (or to the most extreme value, if inside this range). Means indicated by yellow diamonds, median displayed as a horizontal line. Individual participants marked by dots, points beyond whiskers are outliers. (b) Points show estimated marginal means (log-transformed ms) with 95% confidence intervals (error bars) for the interaction between Phase, Condition, and Experimental Setup. Lexical Condition in black, non-lexical condition in gray. Dotted lines mark the baseline mean per word for visual orientation.

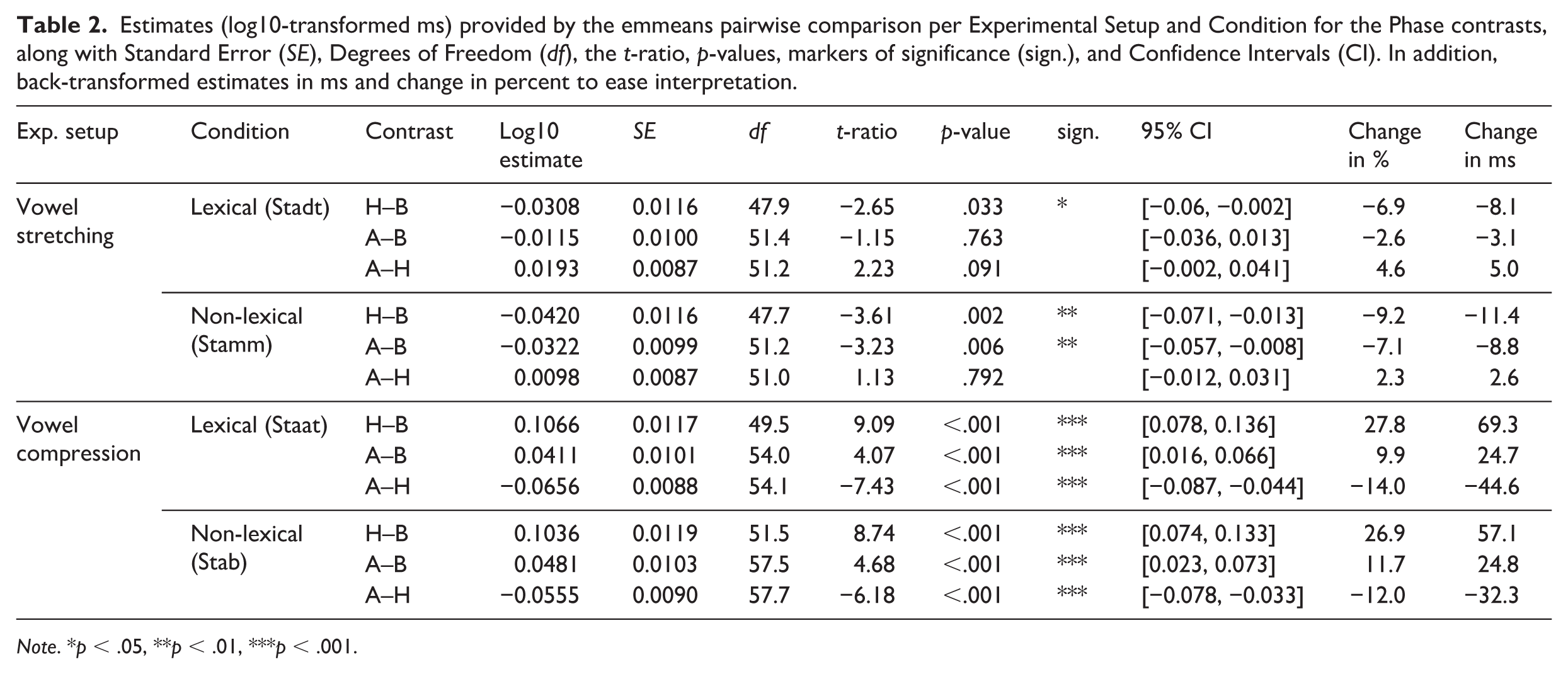

Given the significant three-way interaction, we conducted post hoc pairwise comparisons between Phases (Baseline, Hold, After-effect) using estimated marginal means (EMMs) with the emmeans package in R (Lenth, 2022). Comparisons were run separately for each combination of Experimental Setup (Vowel stretching, Vowel compression) and Lexical Condition (lexical, non-lexical), with Bonferroni-adjusted

As a reminder, the pairwise comparison of the Hold−Baseline contrast indicates whether and in what direction speakers responded during exposure to the perturbation (and the delay); the After-effect−Baseline comparison reflects the magnitude of any remaining adaptive change in production after the perturbation was removed; and the After-effect−Hold comparison tests whether there was a significant reactive feedback control response (due to the delayed signal caused by the prior stretch) during the Hold phase, as indicated by a significant change in duration toward the baseline during the After-effect phase. Estimates are reported on the log-transformed scale used in the model and are supplemented with back-transformed differences in milliseconds to aid interpretation. Statistical results include log10 estimates, standard errors, degrees of freedom,

Estimates (log10-transformed ms) provided by the emmeans pairwise comparison per Experimental Setup and Condition for the Phase contrasts, along with Standard Error (

Negative estimates denote shortening responses, whereas positive estimates indicate lengthening responses. Note, however, that in the Vowel stretching setup, opposing responses result in negative estimates (shortening), whereas in the Vowel compression setup, opposing responses result in positive estimates (lengthening). To ease interpretation, we briefly note that vowel durations in the Hold and After-effect phases (H−B, A−B contrasts) consistently shifted

In the Vowel stretching setup, vowel durations shortened significantly from Baseline to Hold for both the lexical condition

In the Vowel compression setup, durations increased significantly from Baseline to Hold for both the lexical condition

In summary, speakers opposed the direction of perturbation in both setups and both conditions. Shortening responses (negative estimates, Vowel stretching setup) were much less pronounced than vowel lengthening responses (positive estimates, see Figure 5(b)), which is in line with findings from previous studies (Karlin & Parrell, 2022; Oschkinat & Hoole, 2020).
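How a contrast on the log10 scale relates to a difference in milliseconds can be sketched as follows. This assumes that the model operates on log10(ms), so that a contrast estimate e corresponds to a duration ratio of 10^e. Converting that ratio into milliseconds requires a reference duration, taken here to be a hypothetical baseline mean. The function name and all numbers are invented for illustration, and the exact back-transformation used for the reported values may differ.

```python
def log10_contrast_to_ms(estimate_log10, reference_ms):
    """Convert a log10-scale contrast into a ratio and a ms difference.

    Assumption: the ms difference is expressed relative to reference_ms
    (e.g., a baseline mean duration).
    """
    ratio = 10.0 ** estimate_log10
    return ratio, reference_ms * (ratio - 1.0)


# Invented example: a negative estimate (shortening) and a positive
# estimate (lengthening), each relative to a hypothetical baseline.
print(log10_contrast_to_ms(-0.02, reference_ms=90.0))
print(log10_contrast_to_ms(0.05, reference_ms=180.0))
```

Because the log scale is multiplicative, the same estimate translates into a larger millisecond difference for a longer reference duration, which is worth keeping in mind when comparing /a/ and /a:/.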

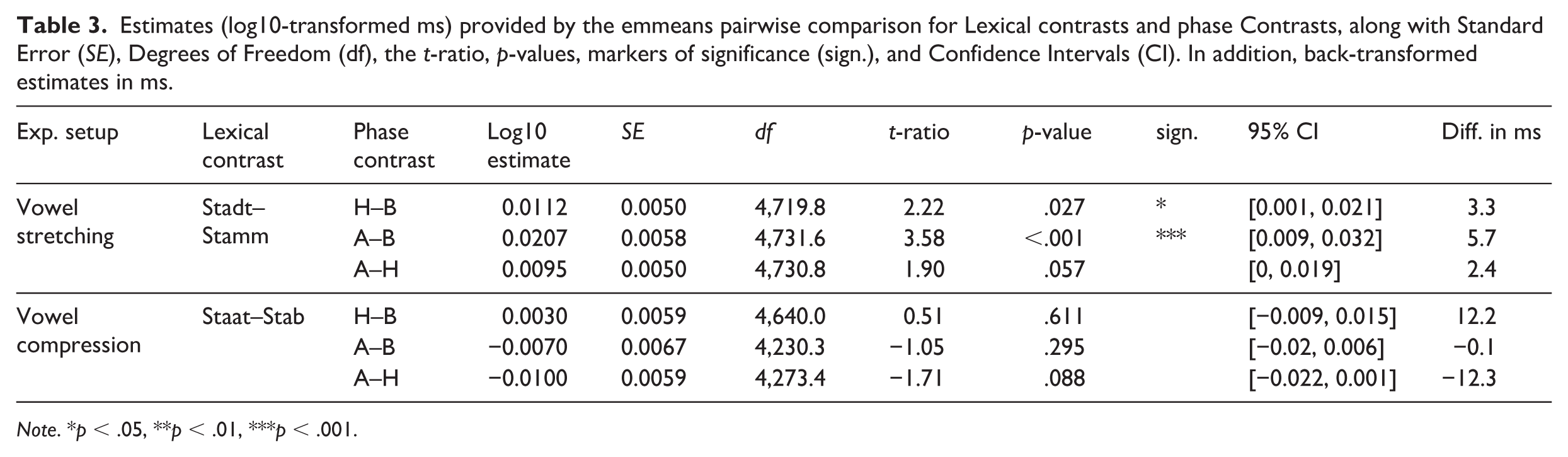

3.1.3 Results: lexical differences within setup

The analyses of second-order contrasts (summarized in Table 3) revealed that, in the Vowel stretching setup, the reduction in vowel duration from Baseline to Hold was significantly larger for the non-lexical condition (

Estimates (log10-transformed ms) provided by the emmeans pairwise comparison for Lexical contrasts and phase Contrasts, along with Standard Error (

3.1.4 Summary: vowel responses

Overall, the results demonstrate that speakers systematically opposed the direction of auditory perturbation for both the stretched vowel /a/ (Vowel stretching setup) and the compressed vowel /a:/ (Vowel compression setup). In the Vowel stretching setup (

Second-order contrasts revealed a significant lexicality effect in the Vowel stretching setup: shortening was greater for the non-lexical condition

3.2 Lexical effects across setups

To examine a more global impact of lexicality on the perception-production loop, we analyzed the effect of lexical condition (lexical shift or non-lexical shift) on response magnitude

For this purpose, we calculated a measure of response (in %) relative to the maximal applied perturbation in the Hold phase, for both the Hold and the After-effect phase. This measure accounts for different perturbation magnitudes between experimental setups and thus allows a direct comparison.

3.2.1 Adaptation relative to the perturbation

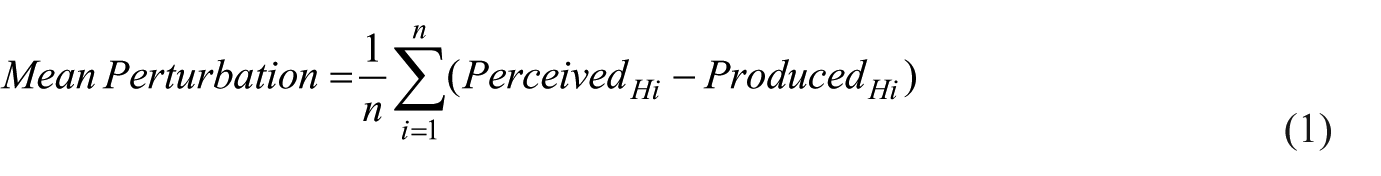

Adaptation as percent of perturbation was determined per speaker, word, and trial. This was achieved by first calculating the mean perturbation magnitude in the Hold phase as the mean duration of the perceived (perturbed) signal minus the spoken signal during the Hold phase (see Formula 1), where

Analogously to the procedure in Karlin and Parrell (2022),

With this calculation, we capture adaptation relative to the perturbation in the Hold phase, which technically includes both adaptive and reactive feedback control. In the After-effect phase, this measure captures only the adaptive component, but does so not in relation to the Baseline (as in Section 3.1), but in relation to the applied perturbation.
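A minimal sketch of such a relative measure is given below. The perturbation magnitude follows the description above (mean heard minus mean spoken duration in the Hold phase). The adaptation formula itself is an assumed form, since the exact formula is not reproduced in this text: the change from the baseline mean is divided by the perturbation and sign-flipped so that responses opposing the perturbation come out positive. All function names and durations are hypothetical.

```python
from statistics import mean


def hold_perturbation_ms(heard_hold_ms, spoken_hold_ms):
    """Mean perturbation magnitude: heard minus spoken duration (Hold phase)."""
    return mean(heard_hold_ms) - mean(spoken_hold_ms)


def adaptation_percent(trial_ms, baseline_mean_ms, perturbation_ms):
    """Assumed form: opposing responses yield positive percentages."""
    return -100.0 * (trial_ms - baseline_mean_ms) / perturbation_ms


# Invented stretching example: the vowel is heard longer than spoken.
pert = hold_perturbation_ms([135.0, 138.0, 132.0], [90.0, 92.0, 88.0])
print(pert, adaptation_percent(91.0, 100.0, pert))

# Invented compression example: negative perturbation, lengthened production.
print(adaptation_percent(110.0, 100.0, -40.0))
```

Dividing by the perturbation makes shortening responses in the Vowel stretching setup and lengthening responses in the Vowel compression setup comparable on one scale, which is the purpose of the relative measure.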

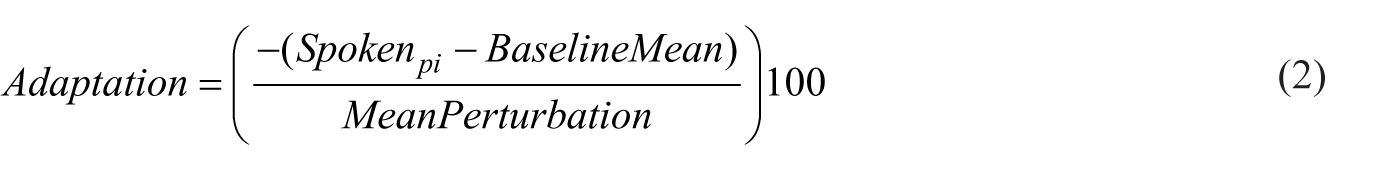

Visual examples of exemplary response patterns over the course of the experiment for a stretched segment. Titles indicate the amount of Adaptation in percent of perturbation (as calculated in formula 2) in the Hold phase (and no after-effects in the After-effect phase, for simplicity). The dark blue solid line marks the spoken signal, the light blue dashed line the heard/perturbed signal.

3.2.2 Model selection

Models were built with adaptation in % as the dependent variable, starting with a random-intercepts-only model. We then added fixed effects for Phase and Lexical Condition, followed by their interaction to test whether the Lexical Condition effect varied across Phases. Finally, Experimental Setup was included as a fixed effect to control for known baseline differences between experimental setups. Interaction terms involving Setup were not included, as our primary research question concerned Lexical Condition differences within phases, and we had no hypothesis that this effect would differ across Setups. Including Setup as a main effect allowed us to account for overall level differences without overfitting the model or introducing collinearity due to known overlap between Setup, Lexical Condition, and Word. The final LMM included fixed effects for Phase, Lexical Condition, their interaction, and Setup, along with by-subject random intercepts and random slopes for Phase. Visual inspection of residuals showed moderate deviations from normality, particularly in the tails of the Q–Q plot. Influence diagnostics indicated that several participants lay outside recommended boundaries; however, no single participant exerted a disproportionate influence on the fixed effects, including the critical Phase × Lexical Condition interaction. Attempts to transform the dependent variable (e.g., via signed square root) did not improve model fit or residual behavior. Given the large sample size, convergence of the full random effects structure, and the stability of key effects across influence analyses, we retained the untransformed outcome and reported results using standard inferential procedures. Robustness checks confirmed that the Phase × Lexical Condition interaction remained statistically significant and directionally consistent even when excluding the most influential participants.

3.2.3 Results: lexical effects across setups

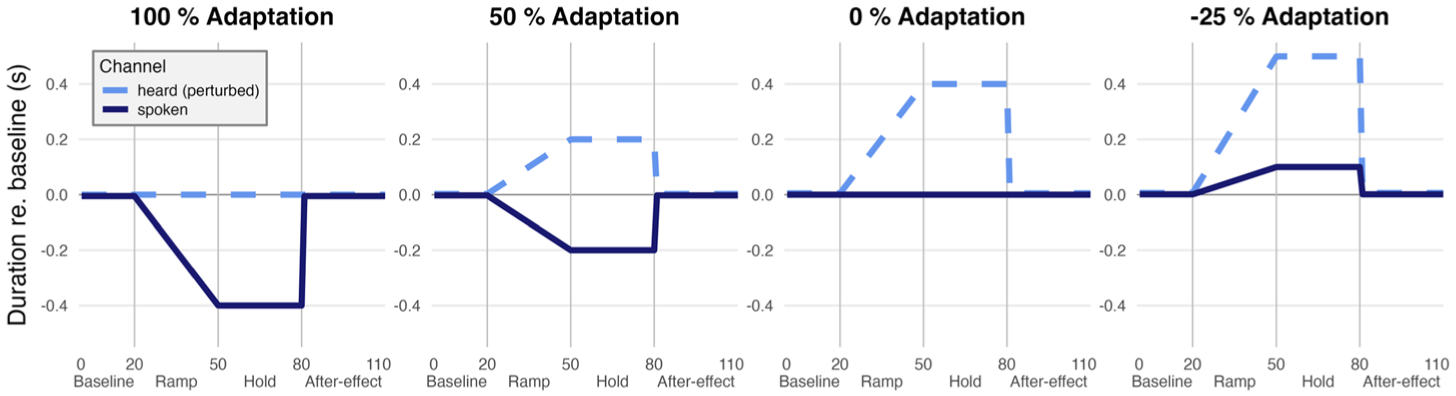

The model summary revealed a significant main effect of Phase,

In contrast, during the After-effect phase, adaptation was significantly larger for the non-lexical condition (

(a) Mean adaptation relative to perturbation throughout the Hold and After-effect phase by word, binned per 5 trials. Lexical condition in black (

3.2.4 Summary: lexical effect

In summary, although a significant main effect of Lexical Condition was observed, this effect appears to be driven primarily by the non-lexical condition

4 Other perturbed segments (consonants)

To achieve the desired manipulation of the vowels in our target words, the onset consonants preceding the compressed vowels in

4.1 Compressed coda consonants (in Stadt and Stamm)

For the compressed coda consonants in

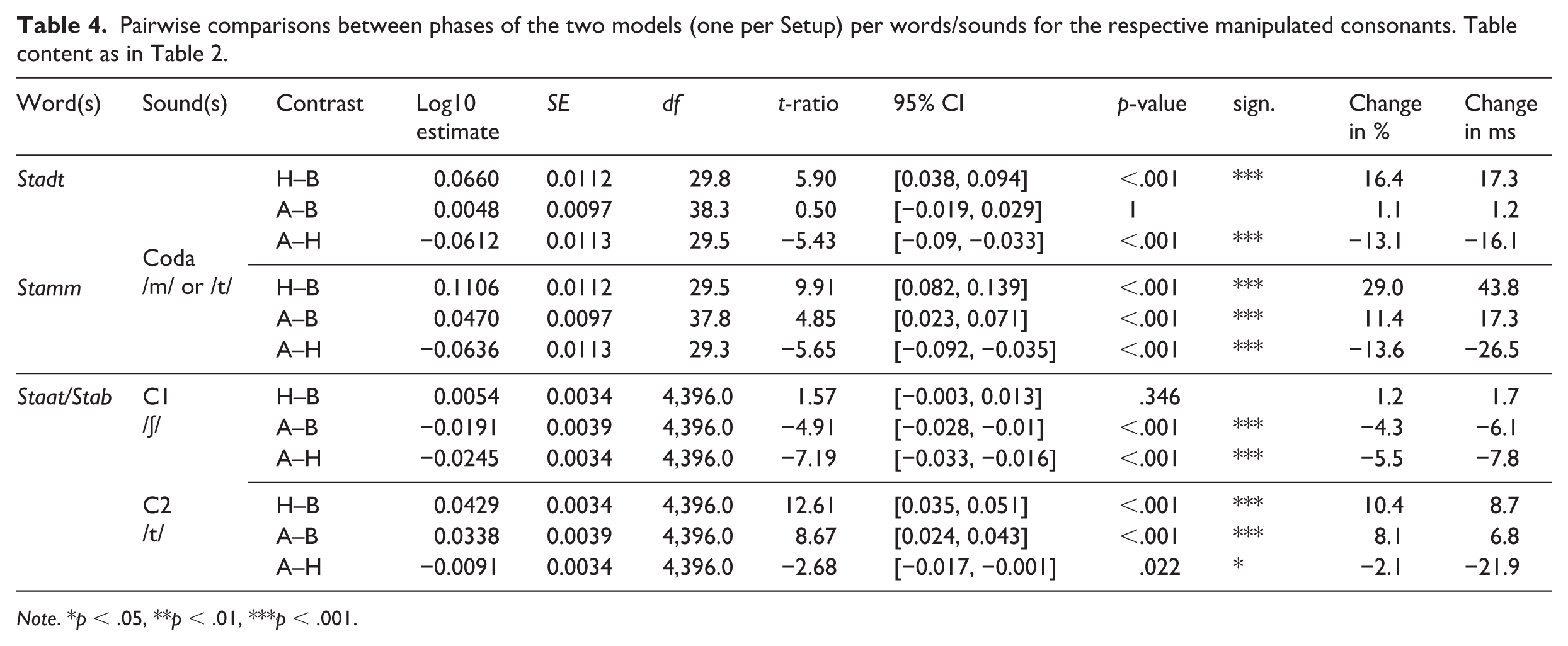

Pairwise comparisons between phases of the two models (one per Setup) per words/sounds for the respective manipulated consonants. Table content as in Table 2.

In

4.2 Stretched onset consonants (in Staat and Stab)

For the stretched onset consonants in

4.3 Summary: consonants

Taken together, the coda consonants /t/ (closure) and /m/ showed significant lengthening during perturbation (Hold phase), but only the /m/ in

5 Discussion

This study investigated the temporal control of speech segments with a special focus on lexical status in German. In a real-time temporal auditory feedback perturbation paradigm, we compressed and stretched vowels and consonants in real words. The vowel manipulations pushed toward a phonemic boundary, either causing a shift in lexical identity but not in lexical status (word to word, lexical condition) or a shift in lexical status but not in lexical identity (word to non-word, non-lexical condition). With this setup, we tested for a lexical bias effect in speakers’ production-perception loop. The two conditions were compared in absolute duration differences (in ms) within the same implementations (stretching the vowel /a/ or compressing the vowel /a:/) to ensure comparability and to examine the flexibility of the vowels to shorten or lengthen in production. In addition, the responses were compared across implementations with response measures relative to the applied perturbation.

5.1 Vowels

The main analyses of vowel durations (Section 3.1) showed that during perturbation (in the Hold phase), all vowels of interest were significantly adjusted in the direction opposite to the direction of perturbation. This opposing response was necessarily adaptive for the vowels in

Section 3.2 set the amount of response in relation to the applied perturbation during the Hold phase, and therefore allowed for a comparison of responses

5.2 Consonants

The analyses of the consonants in Section 4 supported this assumption, showing that both coda consonants (closure of /t/ in

Both onset consonants (C1 - /ʃ/ and C2 - /t/ in

5.3 Imbalance in shortening and lengthening responses

The data from both experimental setups indicate that lengthening responses are more pronounced than shortening responses. This asymmetry may be inherent, since short vowels can only be shortened to a certain extent before they disappear, whereas lengthening is in principle unbounded. The imbalance is presumably further enhanced by the fact that shortening responses most likely require higher cognitive effort due to the need to be “ahead of time” in planning and execution. In contrast, lengthening or slowing down in response to manipulated auditory feedback may simply be a generic response of taking more time to process the input. All compressed segments (the vowels in

5.4 Lexical effects

This study provides first insights into the control of phonemic quantity contrasts depending on whether or not an auditory shift in phonemic category jeopardizes lexical status. We found that for temporal properties in German, maintaining lexical status seems less important than maintaining phonemic identification or maintaining typical productions of the intended token. Overall, our data did not show strong lexical effects. This finding indicates that, in our data, lexical status is not the major force that drives adaptive and reactive responses and that the lexical bias effect may not operate in the temporal control of self-produced speech. Given our data, one possibility is that phonemic identification has higher priority than lexical status, since adaptation was found for all vowels and to a similar extent regardless of lexical condition. Alternatively, phonemic or lexical categories in general may not play a large role in temporal adaptations. This assumption is supported by Karlin and Parrell (2022), who concluded that the driving force of adaptive responses in their data was not a change in phonemic category but rather percepts that fell outside the typical distribution of productions of the intended segment. This assumption could further explain the large responses for /m/ in

Regarding the role of timing in accepting speech tokens as self-produced, the study by Lind et al. (2014) connected self-agency in speech motor control with semantic processing in perception and production. In an auditory feedback perturbation study going beyond the phonemic level, they fed back different words than those produced, challenging self-agency in semantic monitoring. In a Stroop task, participants had to name the color of an orthographically presented color word (such as the color name “red” written in the color blue) while hearing themselves over headphones. In some trials, the feedback was replaced by a color name that the participant had produced in a previous trial and that differed from the one produced in the current trial. More than two thirds of these exchanged tokens remained undetected by participants and were still experienced as self-produced, as long as they were ideally aligned in time with the produced word. Thus, the temporal alignment between feedback and production was found to be more important than spectral shape, or even lexical/semantic identification. These findings, in combination with the results from this study, suggest that higher-order linguistic function plays a minor role compared with the general temporal alignment of productions and perceived auditory feedback.

5.5 Outlook and limitations

To our knowledge, this study is the first to examine the planning and control of temporal phonemic cues with respect to their dependence on higher-order linguistic function in speech motor control. We tested for a lexical bias effect by perturbing toward a “heard competitor” (another real word) or not. In future studies, a valuable addition would be to perturb toward “spoken competitors”; this would involve stretching the /a:/ in Staat and compressing the /a/ in Stadt, whereby opposing responses would push toward the “spoken competitors,” analogously to the spectral perturbation study by Frank (2011). This perturbation would reduce the mismatch between the sensory channels but could help to indicate whether different categories in feedforward representations might hinder adaptation to erroneous auditory feedback. For future studies it would further be relevant to investigate languages with more pronounced quantity cues, for instance languages with geminates such as Italian or Finnish. Finnish, for example, has segmental quantity oppositions in both consonants and vowels. Furthermore, in Finnish, there is a complex relationship between syllable and segment duration in stressed syllables depending on the moraic pattern, syllable structure, and voicing (Suomi & Ylitalo, 2004). More broadly speaking, the planning and control of cues on different linguistic levels remains a fruitful area of investigation. While most studies investigate phonemes as the distinctive factors in real word identification, another appealing approach to phonemic identification would be to use spectral or temporal alterations that violate the phonological structure of the language; this could deepen our understanding of the connection between the phonemic and phonotactic systems of a language (i.e., which sounds exist and which sounds are allowed to occur next to each other).
For example, in Oschkinat and Hoole (2020), we found shortening responses to the stretching of the vowel /a/ in “

One limitation of the study is that, for some speakers, the perturbation may have pushed the percept closer to the phoneme boundary than for others, and may therefore have elicited greater responses. In future studies, the perceptual category boundaries and typically produced tokens of the vowels should be examined and monitored throughout the experiment. This approach should also take into account the broader context, such as speech rate and the typical context of sounds, as well as word representation in the sense of exemplar-based approaches.

Further limits on generality arise from the small number of studies on the topic to date. That is, the speech material in this study (as in every similar study) is limited, and to date real-time temporal auditory feedback perturbation studies have covered speech material in German and English only. Since it was concluded previously that findings from temporal perturbation studies depend on the phonological structure of a language, the investigation of further languages is a clear desideratum for greater generality. In addition, this study tested participants between the ages of 18 and 35 to eliminate possible effects of age-related hearing loss and to reduce variability within the tested group of participants. Future studies could focus on other age groups to close this gap in our knowledge. In summary, the investigation of online processing of temporal characteristics of auditory feedback is still at quite an early stage compared with the large body of work on the processing of spectral perturbations. Our results make it clear that results from the spectral domain cannot simply be extrapolated to the temporal domain, and much work remains to be done to achieve a detailed understanding of processing in the temporal domain.

6 Conclusion

This study rejected the hypothesis that speakers respond more to temporal auditory feedback errors when the lexical identity (word to word) is jeopardized. Instead, phonemic identification and sound class were shown to be factors that enhance responses. Hence, this finding does not support the assumption that the so-called lexical bias effect plays a role in self-monitoring of temporal dimensions of speech, further indicating that self-produced speech is processed differently from speech that is not self-produced. The data of this study should serve as a motivation for continuing research on sensorimotor integration in speech with respect to higher-order linguistic processing, and for developing linguistic and psycholinguistic models of speech perception that intertwine with existing models of speech motor control.

Appendix A

The following analysis examines whether the groups in the vowel compression setup (

Appendix B

In this appendix, the participant group of the Vowel compression setup for which the perturbation accidentally stretched the preceding vowel /ɐ/ in the carrier word /bεsɐ/ was analyzed in the same way as described in Section 3.1. This group comprised 10 participants for

Acknowledgements

We thank all our participants for taking part in our study and Rosa Hufschmidt, Sebastian Böhnke, and Sishi Liao for their help in running the tests.

We would further like to thank two anonymous reviewers for their thoughtful comments on an earlier version of this manuscript. All remaining errors are our own.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by DFG; Grant No. RE 3047/1-1 and Grant No. HO 3271/6-1.