Abstract

This study compared online and face-to-face (f2f) testing using the short Polish version of the LITMUS Sentence Repetition Task (SRep) with multilingual and monolingual Polish-speaking children. The shift to remote testing during the COVID-19 pandemic prompted questions about whether online methods yield results comparable with in-person testing for assessing multilingual children’s grammatical abilities. Reliable online testing could enhance access to underrepresented populations, enabling families from diverse backgrounds to participate from home. We tested 92 multilingual children (speaking Polish and English or German) and 55 monolingual Polish-speaking children aged 4;6–7;6. Each child completed the SRep task twice (online and f2f) in a counterbalanced order. Results showed better performance on f2f tasks for both groups. Multilingual children improved on their second attempt, regardless of format, while monolinguals consistently scored higher in the f2f condition. These findings indicate differences in performance across testing modalities and the need to adapt and norm the SRep task for both online and f2f administration separately.

Keywords

1 Introduction

The growing use of remote (online) data collection methods has reshaped how developmental language research is conducted (Kominsky et al., 2021; Rhodes et al., 2020; Scott & Schulz, 2017; Sheskin et al., 2020; Zaadnoordijk et al., 2021). As they become more widely used—due to increased accessibility, flexibility, and postpandemic normalization—questions arise about how results obtained in online contexts compare with those gathered through traditional face-to-face (f2f) procedures. Rather than assuming inferiority or superiority of either approach, it is crucial to examine their comparability: do children perform similarly across modalities? Are task outcomes—such as morphosyntactic accuracy in sentence repetition—affected by the mode of administration? Addressing these questions is essential for ensuring methodological consistency, interpreting developmental data accurately, and making informed decisions about research and clinical practice design in both monolingual and multilingual populations.

Online and f2f procedures each offer distinct advantages (McRoy et al., 2024; Shields et al., 2021; Sutherland et al., 2017). In-person testing allows for greater control over the testing environment, easier rapport-building with child participants (Campbell et al., 2024), and direct monitoring of task engagement and comprehension (Shields et al., 2021). Online procedures, by contrast, offer greater flexibility and scalability, reduce travel time and costs for both families and researchers, and allow access to geographically dispersed or underrepresented populations (McRoy et al., 2024).

These features make remote data collection particularly promising for studies involving multilingual children.

Children growing up with two or more languages often face a lack of access to clinical or research settings where both of their languages are spoken and understood. This challenge is particularly evident in countries where bilingual education and services are underdeveloped or where there is a shortage of professionals trained to work with multilingual populations (Castilla-Earls et al., 2022; Peña & Sutherland, 2022). In such contexts, online assessment (for diagnostic services) and language research (for empirical investigation) have emerged as promising tools to expand access. However, the comparability and reliability of online versus face-to-face (f2f) assessments remain open questions, especially when working with young children whose language skills are still developing.

Comparing f2f and online administration of the SRep task is theoretically grounded in Social Presence Theory (Gunawardena, 1995; Short et al., 1976). The absence of physical co-presence can affect an individual’s behavior and, consequently, performance. According to the theory, mediated communication lacks nonverbal cues, including body language, facial expressions, and eye contact, even when a video connection is established. This reduction of social cues can lead to a sense of psychological distance. For children, whose communication and emotion recognition skills are still developing, this may play a role in their performance. This is also related to sociocognitive and developmental perspectives, which stress that children’s language performance is not merely a reflection of internal competence but is shaped by features of the interactional context (Tomasello, 2005; Vygotsky, 1978). In in-person settings, the presence of a researcher can provide subtle forms of social anchoring, supporting attentional focus and reinforcing motivation, even if the task itself, like SRep, is noninteractive and based on prerecorded stimuli. Finally, from a measurement perspective, our comparison speaks to the issues of ecological validity and measurement invariance. While SRep is widely used in research and clinical assessment, it was designed and normed for in-person use. With the growing prevalence of online developmental testing (Castilla-Earls et al., 2022; Scott & Schulz, 2017), it is crucial to determine whether online implementations yield comparable data. Without such evidence, conclusions drawn from remote testing, particularly in populations such as multilingual children, may risk reduced validity (Buchanan & Smith, 1999).

Our study contributes to this discussion by comparing online and f2f versions of the Polish version of the LITMUS Sentence Repetition Task (SRep; Przygocka et al., 2021), a widely used tool for assessing morphosyntactic abilities. The question we address is whether the method of administration affects children’s performance.

1.1 Assessing grammatical competence with Sentence Repetition Tasks

Sentence repetition (SRep) tasks have been shown to be an effective tool for the diagnosis of language impairments in English and other languages (e.g., Conti-Ramsden et al., 2001; Guasti et al., 2021; Pham & Ebert, 2020) and, more broadly, SRep results may reliably indicate children’s morphosyntactic skills (Polišenská et al., 2015). In an SRep task, the child hears a sentence that has either been prerecorded or is said aloud by the experimenter and is asked to repeat it verbatim. The length of the presented sentences is designed to ensure that they are not simply memorized and repeated without understanding. The rationale behind the task is that to repeat the sentence correctly, the child must first decode and interpret its meaning, and then process and reproduce the sentence using long-term memory, which would not be possible without access to the grammatical structures represented in the given sentence (Marinis & Armon-Lotem, 2015). SRep tasks engage a wide range of language processing skills (Lombardi & Potter, 1992; Potter & Lombardi, 1990) and involve all levels of the language production system (Bock & Levelt, 1994). In other words, to reproduce a medium to long sentence, one needs to know not only the vocabulary but also the grammar (Komeili & Marshall, 2013). Such tasks allow researchers to determine which grammatical constructions the child has already mastered and which the child has not (Kidd et al., 2007). They may also reveal morphological deficits, which is why they are commonly used both in clinical practice and in research (Conti-Ramsden et al., 2001). SRep is relatively easy to administer and to adapt to online testing, which is an additional advantage when testing children in a computer-mediated format.

Recent studies (Aparici et al., 2024) stressed the necessity of focusing on the language development of multilingual children, particularly in online assessments (Yang et al., 2024). This emphasis was critical given the gap in our understanding of multilingual versus monolingual language development. For monolingual children, language growth and challenges are well-documented and understood, forming a robust foundation for accurate diagnosis and intervention.

1.1.1 Comparing online and f2f language assessment in children

Some language studies with children have shown that online methods can be comparable with f2f methods in terms of psychometric reliability, validity, and overall effectiveness. For example, Magimairaj et al. (2022) showed that the online version of the Test of Narrative Language–Second Edition (TNL-2) maintained similar psychometric properties to the in-person version, while Arnold et al. (2022) found that an established grammatical intervention was comparably effective when delivered via telepractice. In studies with adult participants, online testing has lowered barriers to research, enabling scientists to collect datasets from diverse populations quickly (Buhrmester et al., 2011; Feenstra et al., 2018; Paolacci et al., 2010; Shapiro et al., 2013). The shift to online learning during the COVID-19 pandemic sped up this trend, as shown by the increase in the use of telepractice among speech and language pathologists (Pratt et al., 2022). In behavioral research with children, several studies have suggested that, for language assessments of 4- to 9-year-olds, the levels of agreement between f2f and online assessments ranged from fair to high (Eriks-Brophy et al., 2008; Waite et al., 2006, 2012). Manning et al. (2020) showed that language samples collected from children online were comparable with samples collected f2f in terms of speech and language measures. During parent-child play sessions, the authors recorded child language samples either in the lab or via video calls at home. The quantity of acceptable samples and the proportion of utterances with clear audio were similar for both the f2f and online recordings. The features of the children’s speech and language, including average utterance length, type-token ratio, vocabulary diversity, grammatical precision, and clarity of speech, showed no significant differences across the two collection methods.
In addition, the consistency of transcriptions, evaluated on a subset of these samples, was high and did not vary between the f2f and online methods. Pratt and colleagues (2022) conducted a proof-of-concept study with 4- to 8-year-old children who were bilingual in Spanish and English. A battery of language tests was administered f2f and online, including sentence repetition, which measures morphosyntactic abilities. They found significant positive correlations (r = .95 for English morphosyntax; r = .82 for Spanish morphosyntax) between f2f and online performance on tasks measuring morphosyntactic abilities. Although the sample was small (n = 10), the study indicated that online and f2f testing yielded similar results.

The existing body of online research concerning multilingual children remains relatively limited (Peña & Sutherland, 2022). Castilla-Earls and colleagues (2022) conducted a study with bilingual children to assess the comparability of online and f2f methods in delivering language assessment services. This research aimed to determine whether the method of delivery influences receptive vocabulary scores in Spanish and English. Children were tested twice, initially in an f2f setting and subsequently online or vice versa, due to the logistical changes forced by the COVID-19 pandemic. The assessments used were standardized vocabulary tests: the Peabody Picture Vocabulary Test–Fourth Edition (PPVT-4) for English and its Spanish counterpart, the Test de Vocabulario en Imágenes Peabody (TVIP). The study included both bilingual children with typical language development and those with developmental language disorders (DLD).

The study reported no significant differences in receptive vocabulary scores between the two methods, indicating that telepractice can be as effective as f2f assessments. This result supports the feasibility of online sessions in environments where traditional f2f interactions are not possible, such as during lockdowns or in remote areas.

Castilla-Earls and colleagues (2022) suggest that online service is not only a viable alternative to f2f assessments in terms of reliability, but also a necessary tool in expanding access to speech-language pathology services. The authors stress the potential of the online modality in incorporating diverse and underserved populations into research and clinical practices, which is especially significant for bilingual communities.

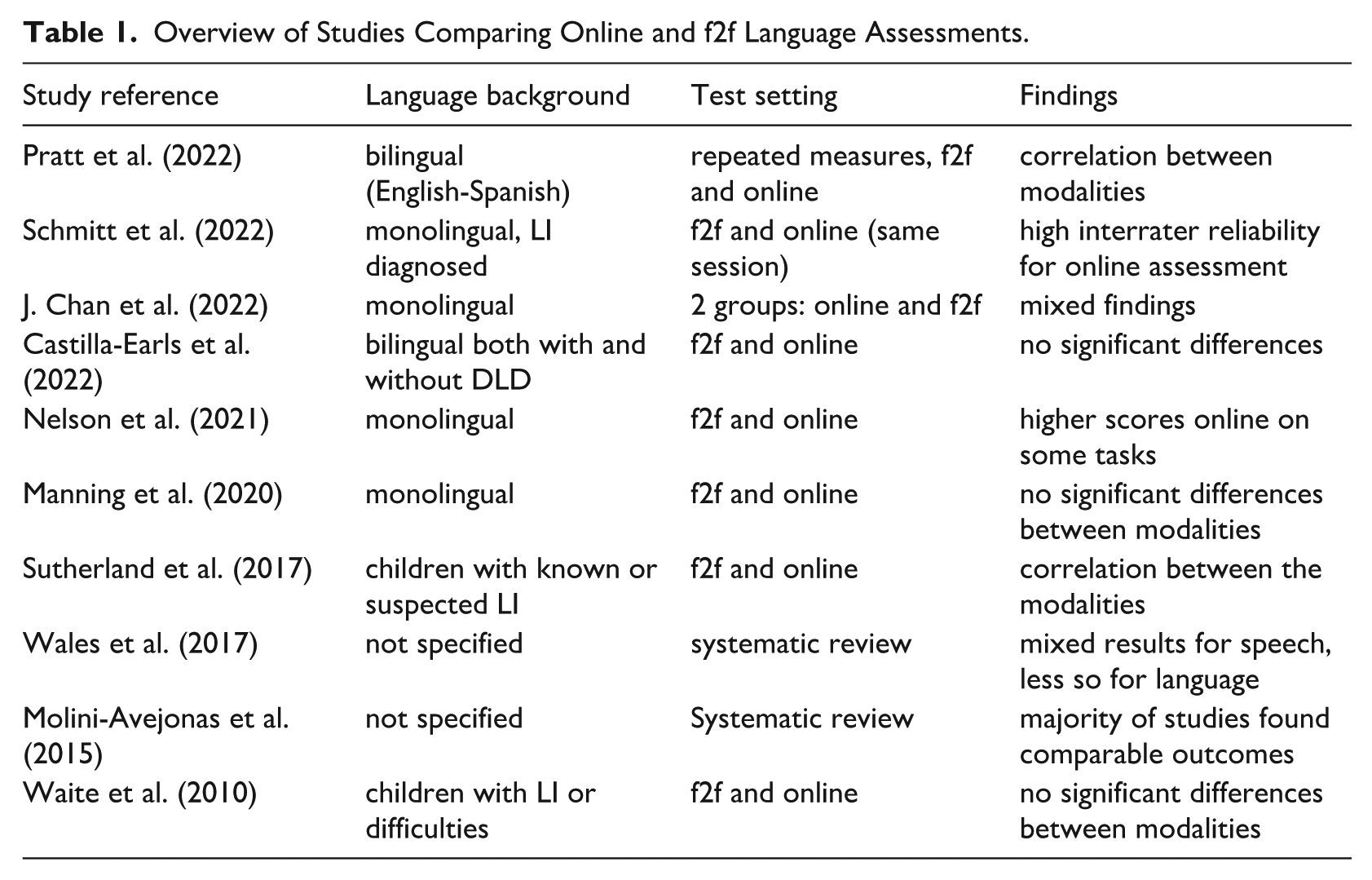

To provide an overview of previous research comparing online and f2f assessment methods in language research, Table 1 summarizes key studies on the topic. It presents the list of studies in descending order by year of publication. The criteria for inclusion were that the study focused on language research involving children and that the authors provided conclusions and recommendations related to online assessments. One of these studies used the Wechsler Intelligence Scale for Children (WISC); however, four of its subtests are language assessments (Vocabulary, Similarities, Comprehension, Information). A more detailed version of this table, including study methods, assessment types, findings, author interpretations, and limitations, is available in the Supplementary Material.

Table 1. Overview of Studies Comparing Online and f2f Language Assessments.

While the studies presented in the table largely suggest that online assessments are a valid alternative to f2f assessments, the results are not always consistent, and do not always allow for strong conclusions. For example, Nelson et al. (2021) reported higher scores online than in person in Information, Similarities, and Matrix Reasoning, but found no significant differences in other tasks. Despite this, the authors concluded that online assessments can effectively mirror f2f results, stressing the need for further research to clarify these discrepancies and assess the reliability of online testing across different tasks and populations.

Our aim was to verify whether remote testing yields similar results to those of the in-person testing of the grammatical abilities of multilingual and monolingual children. Answering this question could be significant for accessing understudied populations. In particular, Scott and Schulz (2017) concluded that many important questions about children’s development and learning mechanisms remain unanswered merely because of practical challenges in conducting studies that address them. Reliable online data collection could address these constraints by allowing families to participate in behavioral studies at their homes.

Testing language abilities in multilingual children, particularly through online tools, is crucial, perhaps even more so than for monolingual children. This is because multilingual children often reside in environments where the majority language is different from the one(s) spoken at home. While monolingual children can readily access language support and assessment services in their country of residence, multilingual children face unique challenges. Their exposure to their home language(s) might be limited to their family and immediate community, making it difficult to access specialists fluent in these languages locally. Online testing platforms offer a solution to this problem by providing these children with the opportunity to be assessed in all their languages, regardless of the local availability of language specialists. This is especially important for ensuring that any language development issues are accurately identified and addressed, taking into account the full scope of the child’s linguistic environment (Paradis, 2023; Wang et al., 2025).

2 The current study

The aim of the study was to empirically compare online with f2f testing using the short Polish version of the LITMUS Sentence Repetition Task (Przygocka et al., 2021) with multilingual children who speak Polish as one of their languages. Because of the limitations in and variability of previous studies, summarized in Table 1, we did not formulate specific hypotheses related to the existence and direction of the potential differences between the testing formats. Our main research question was whether SRep administered online yields results similar to those obtained by the same children in SRep administered face-to-face.

In other words, the study focused on determining how well the SRep, which was originally intended for f2f use, is applicable to online testing of multilingual children.

3 Method

3.1 Participants

We tested 92 children aged 4;6–7;6 (M = 5;11; SD = 0;7; age at first session) who were raised multilingually, either because various languages were present in their home and school environment or because at least one of their parents decided to talk to them in a language other than Polish even though the parent was not a native speaker of that language. The group was relatively gender-balanced, with 51.1% boys (n = 47) and 48.9% girls (n = 45). All children included in the analysis were within the typical range of nonverbal intelligence for their age according to their Raven’s Colored Progressive Matrices (RCPM) scores (from 5 to 10 sten; M = 7.5; SD = 1.4). According to parental report, none of the children had a formal diagnosis of any neurodevelopmental disorder. For all the children, one of the languages was Polish, and another was either English (n = 55), German (n = 22), or both (n = 16). In the whole group of multilingual children, 47.3% spoke more than two languages (apart from Polish, English, and German, these were mainly Spanish, French, Arabic, Hungarian, Russian, and Silesian), but Polish and English or German were the dominant languages. Exposure to each language differed: the age of first exposure averaged 3 months for Polish, 14 months for English, and 11 months for German. Length of exposure, calculated in months relative to the child’s age at the first session, averaged 69 months for Polish, 59 months for English, and 59 months for German. At the time of the study, 49.5% of children in this group lived in Poland (n = 46), 40.7% in Germany (n = 37), and 7% in the United Kingdom (n = 6). The remaining three children lived in the United States of America, New Zealand, and Belgium, and spoke Polish and English.
The group of multilingual children included 24 children (24.7% of the group) raised in intentional bilingualism, who lived in Poland but were regularly spoken to in English by at least one parent and/or attended a kindergarten with an English-speaking teacher. Most mothers of multilingual children had higher education (84.9%; n = 79) or unfinished higher education (7.5%; n = 7); only 5.4% of mothers (n = 5) had secondary education. Multilingual children scored high in the Polish Cross-Linguistic Lexical Tasks (CLT; Haman et al., 2015; M = 30.87, max. 32, SD = 2.12). Children who lived outside Poland were assessed f2f in their homes by members of our team who traveled to collect data or, in the case of three families, were tested in Poland while visiting relatives during the summer vacation.
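The years;months age notation and the exposure measures described above can be illustrated with a minimal sketch. It assumes that length of exposure equals the child's age at the first session minus the age of first exposure, both in months; this reading is consistent with the description above but is an assumption, and the function names and the example child are hypothetical.

```python
def age_to_months(age):
    """Convert an age in years;months notation (e.g. '5;11') to months."""
    years_part, months_part = age.split(";")
    years, months = int(years_part), int(months_part)
    if not 0 <= months < 12:
        raise ValueError(f"months part must be between 0 and 11, got {months}")
    return years * 12 + months


def length_of_exposure(age_at_session, first_exposure_months):
    """Months of exposure up to the first session (assumed formula, see lead-in)."""
    exposure = age_to_months(age_at_session) - first_exposure_months
    if exposure < 0:
        raise ValueError("first exposure cannot come after the session")
    return exposure


# Hypothetical child: aged 5;11 at the first session,
# first exposed to English at 14 months.
print(age_to_months("5;11"))           # 71
print(length_of_exposure("5;11", 14))  # 57
```

Keeping ages in months internally avoids arithmetic errors with the semicolon notation, for example when computing means across children.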

In addition, we tested 55 Polish monolingual children using the same tools. We collected data from 21 boys (38.2%) and 34 girls (61.8%) aged 5;0–7;0 (M = 5;11; SD = 0;7; age at first session). Children included in this group lived in Poland and spoke only one language. All children obtained results in the RCPM that were typical for their age (M = 7.8; SD = 1.3) and were not diagnosed with neurodevelopmental disorders. Monolingual children scored on average M = 31.78 (max. 32, SD = 0.53) in Polish CLT. The mothers of monolingual children mostly had higher education (87.3%; n = 48) or unfinished higher education (9.1%; n = 5); one had secondary education and one had primary education.

For each participating child, we obtained parental report through PABIQ (see the “Materials” section). Eighty-seven mothers and six fathers completed the questionnaire.

3.2 Materials

We used the Polish short version of the Sentence Repetition Task (Marinis & Armon-Lotem, 2015; Przygocka et al., 2021), which is described in detail below. The list of the 20 items is provided in Supplementary Materials, Table S7 (Przygocka et al., 2021). These sentences are included to document the stimuli analyzed here and do not constitute a publication of the instrument; administration and scoring should follow the complete LITMUS SRep procedure (Przygocka et al., 2021), with a step-by-step protocol to be reported in a separate methods paper (Author et al., in preparation). In addition, to control for vocabulary, we used the noun comprehension subtask of the computerized Cross-linguistic Lexical Task (CLT-PL-R; Haman et al., 2021). To control for nonverbal intelligence, we used the RCPM (Jaworowska & Szustrowa, 2011). The Parents of Bilingual Children Questionnaire (PABIQ) was used to obtain data on family demographics, language background, and some language practices (Tuller, 2015; Polish online adaptation by Mieszkowska et al., 2021).

3.3 Sentence Repetition Task

The short version of the LITMUS Polish Sentence Repetition Task (Przygocka et al., 2021) is based on a long version (Banasik et al., 2012) developed within the COST Action IS0804 “Language Impairment in Multilingual Society: Linguistic Patterns and the Road to Assessment” (Armon-Lotem et al., 2015). In the Sentence Repetition Task (SRep), the child hears a prerecorded sentence and is asked to repeat it. The task consists of 20 sentences of varying complexity and length. The syntactic structures selected for the short version of SRep represent three levels of complexity of Polish syntax. The first level consists of simple sentences with five types of constructions: (1) SVO with one auxiliary / SVO with one modal, (2) SVO with one auxiliary and negation / SVO with one modal and negation, (3) short actional passives, (4) wh-who/what questions, and (5) bi-clausal sentences (coordination or complement). This level is represented in the test by the first eight sentences. The second level comprises more complex SVO constructions and general complex sentences: (1) SVO with two auxiliaries or auxiliary + modal, (2) SVO with two auxiliaries and negation, (3) wh-object which-questions (two items) and an indirect object wh-question, and (4) bi-clausal sentences (complement clauses / adjunct clauses). The second level also contains eight sentences. The third and last level consists of complex sentences with the following structures: (1) SO relative clauses (center embedded), (2) sentences with nouns taking complements, and (3) object relatives with adverbial PPs. They are represented by the last four sentences of the task. Level 1 sentences are also shorter (5–7 words) than Level 2 and Level 3 sentences (7–8 words). The task is presented in PowerPoint (Marinis & Armon-Lotem, 2015), with an animation of a bear that moves toward a treasure with each sentence presented for repetition. The sentences have been prerecorded and are played automatically when the slide is changed.
There is no time limit for the task: the child can take as much time as needed to repeat the sentence that is played. When the child repeats the sentence or says “I don’t know,” the researcher proceeds to the next slide and the next sentence. The child hears each sentence only once, unless a disruption makes it impossible for the child to hear the sentence. All responses are audio-recorded and scored after the session. If the child produces a verbatim repetition of the stimulus sentence, the response is scored as correct (score = 1). Otherwise, it is scored as incorrect (score = 0). Phonological alterations to the target words (the words a child needs to accurately repeat in the same grammatical form as presented) that may be related to the child’s emerging pronunciation are not taken into account. The maximum score for this task is 20. This binary method is referred to as target-conformity coding. In addition to this approach, we also applied two alternative scoring schemes, inspired by Correia et al. (2025). In the structure coding system, responses were evaluated with respect to the accuracy of the syntactic structure, even if lexical substitutions or minor morphological deviations occurred. In the structure and grammaticality coding system, a response was credited only if both the target syntactic frame and overall grammatical well-formedness were preserved.
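The binary target-conformity rule can be illustrated with a minimal sketch. It is not the scoring procedure used in the study, where trained coders scored the transcripts: the code below only checks word-for-word identity after lowercasing and removing punctuation, and it cannot make the human judgment needed to disregard phonological alterations. The function names and the English example sentences are hypothetical and are not items from the task.

```python
import string


def normalize(utterance):
    """Lowercase, strip ASCII punctuation, and split into word tokens."""
    table = str.maketrans("", "", string.punctuation)
    return utterance.lower().translate(table).split()


def score_target_conformity(target, response):
    """Return 1 for a word-for-word repetition of the target, otherwise 0."""
    return int(normalize(target) == normalize(response))


def total_score(targets, responses):
    """Sum the binary item scores; with 20 items the maximum is 20."""
    if len(targets) != len(responses):
        raise ValueError("each target needs exactly one response")
    return sum(score_target_conformity(t, r) for t, r in zip(targets, responses))


# Hypothetical items and transcribed responses (not the actual stimuli).
targets = ["The boy has eaten the apple.", "Who did the girl see?"]
responses = ["the boy has eaten the apple", "who the girl see"]
print([score_target_conformity(t, r) for t, r in zip(targets, responses)])  # [1, 0]
print(total_score(targets, responses))  # 1
```

The structure coding and the structure and grammaticality coding schemes depend on syntactic judgments and are therefore not reducible to a string comparison of this kind.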

Sentence repetition tasks involving sentences of varying morphosyntactic complexity are effective screening measures for language impairment in children, as children with language impairment often struggle with complex structures (Polišenská et al., 2015).

3.4 Procedure

The data presented in this study were collected between July 16, 2022 and May 31, 2023.

Each of the children was tested twice: once during an f2f visit to the child’s home by a research assistant, and once during an online meeting, when the child was at home and the same research assistant conducted the study connecting remotely using a video conferencing application. Remote testing was conducted via video conferencing platforms that were familiar and convenient for the families (as indicated by them), most commonly MS Teams, Google Meet, or Zoom.

Data were collected over a period of time spanning between 2 and 4 weeks for each child. The time between the online and f2f testing was approximately 2.5 weeks (M = 18 days, SD = 6 days).

During the online session, the child performed only SRep, whereas during the f2f session, SRep, CLT, and RCPM were administered in a sequentially balanced order. Consequently, the sequence in the f2f session varied, with SRep not always being the first task administered, while in the online session SRep was the only task and was therefore never preceded by another one.

While doing the Sentence Repetition Task, the child was asked to wear headphones during both of the sessions.

Before starting the online study, the parent of the child was asked to verify the sound quality, and necessary steps were taken to adjust it if needed. The researcher shared both the screen and the sound with the child. The parent was asked to be present at the beginning of the session, when the sound and video were tested, but was free to leave when the child started the task. The shared presentation was the PowerPoint-based version of the Sentence Repetition Task, with the same visual and auditory presentation as that used in the f2f setting. Completing the task during the online session took about 5 min. The whole f2f session with the child took up to 30 min. During the first session, the researcher introduced herself and had a semi-structured conversation with the child to create a positive and comfortable environment. Following the child’s verbal consent to participate in the study, the researcher initiated the PowerPoint presentation (f2f session) or shared their screen with the visual and auditory presentation of the SRep (online session). After reading the standardized instructions to the child, the researcher started the prerecorded presentation. The child’s responses to SRep in both modalities (online and in-person) were audio-recorded and then transcribed by the researcher. The scoring took place after the administration. The study protocol card had a general rubric for noting any technical issues or any child behaviors or reactions that might indicate disruptions. The research assistants had been trained to indicate whether there were any disturbances during the administration of the tasks. In our study, we did not encounter significant technical issues that could hinder the online testing or compromise data quality.

There was no fixed division of research assistants by participant group: they worked with both monolingual and multilingual children. The researchers had received intensive training and verification before data collection, including detailed instruction in how to transcribe and score children’s responses.

Responses were audio-recorded and later transcribed and scored by the research assistants conducting the sessions. To ensure data reliability, all transcripts and scoring were reviewed as part of a quality control protocol implemented by the project leader and data steward. This process included a thorough review and feedback on each researcher’s initial transcriptions and scorings, followed by systematic auditing and—if necessary—correction of data by the core research team. All responses were scored according to three systems: target-conformity, structure coding, and structure and grammaticality coding (see the “Materials” section for details).

The study was conducted after the strict lockdown phases of the COVID-19 pandemic and we assume that certain tools and habits developed during the pandemic—such as caregivers’ increased familiarity with video conferencing—may have facilitated the implementation of the remote testing sessions.

Recruitment was generally challenging, though not necessarily more so than in our previous studies conducted in face-to-face settings. Based on our experience, the main difficulty lies in reaching families with children in a relatively narrow target age range, rather than in caregivers’ willingness to participate. That said, the online modality may have introduced some degree of self-selection: families who were more confident in their digital skills and accustomed to using computers might have been more likely to take part. Once recruited, however, retention in the study was high—many children appeared to enjoy the sessions, and some even asked when they would meet again with the research assistant.

3.5 Data analysis

The analyses were conducted in two steps. In the first step, an omnibus model was tested, incorporating participants’ language group (monolingual vs. multilingual), testing order (f2f or online first), “test type” (f2f vs. online), and their potential interactions. The objective of this step was to identify which effects reached statistical significance. In the second step, follow-up analyses were performed separately for monolingual and multilingual children. For each group, a mixed 2 × (2) design was employed. This approach allowed for the examination of a between-group main effect of testing sequence, a within-group main effect of “test type,” and their potential interaction. In all analyses, the dependent variable was the total score on the SRep task. These scores were calculated using three scoring schemes: target-conformity coding, structure coding, and structure and grammaticality coding. The latter two approaches were inspired by Correia et al. (2025) and allowed us to account for sentence structure accuracy and the combination of sentence structure with overall grammatical correctness, respectively.

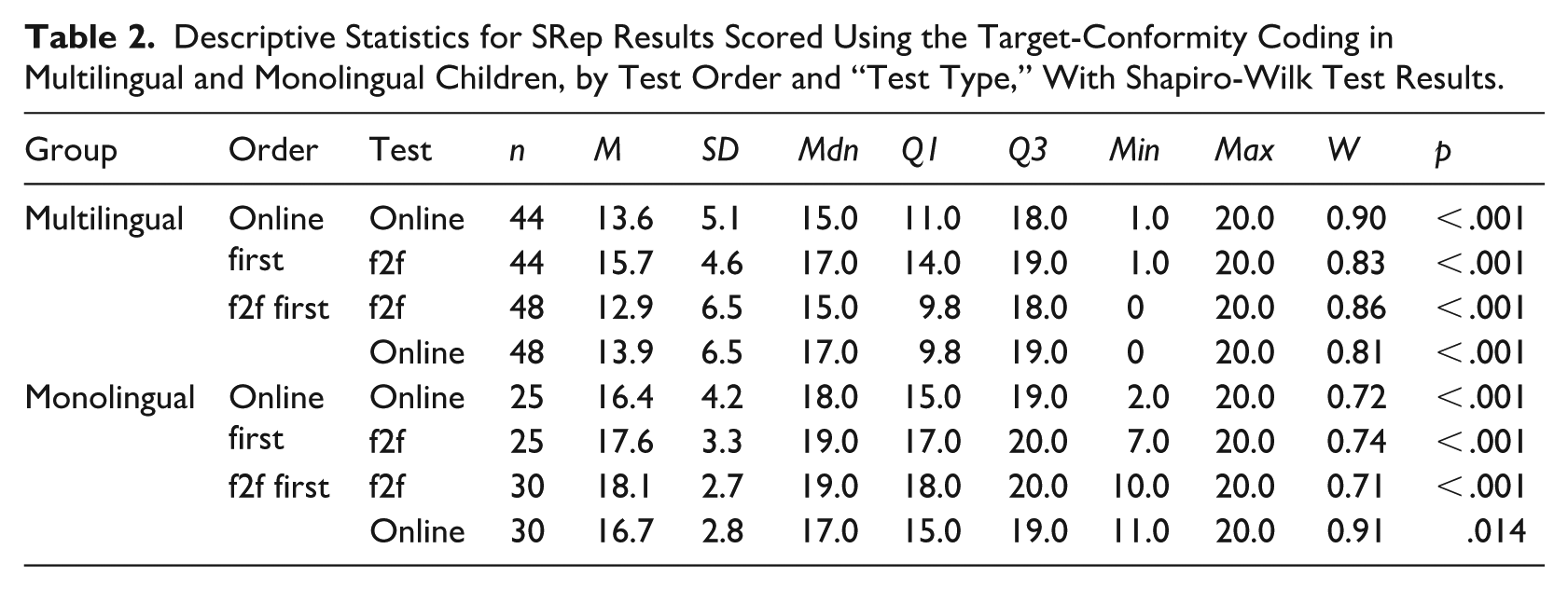

Given that the distributions of the outcome variables significantly deviated from normality (see Tables 2 to 4), nonparametric equivalents of mixed-model analysis of variance (ANOVA-type tests) were employed. Specifically, the F2-LD-F1 method was used for the omnibus model (Step 1), and the F1-LD-F1 method was applied in the follow-up analyses (Step 2), following Brunner et al. (2002). In these models, language group and testing sequence were treated as whole-plot (between-group) factors (F2/F1), while the “test type,” which stratified repeated measurements, served as the subplot (within-group) factor (F1; Noguchi et al., 2012). For both models, ANOVA-type statistics were calculated to assess main and interaction effects.
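The ANOVA-type statistics in this family of rank-based models are built on relative treatment effects, which describe how the scores in one cell of the design compare with the pooled sample. The sketch below illustrates only this building block, using the standard estimator (mean rank of the cell minus 0.5, divided by the total number of observations); it does not compute the test statistics and is not the nparLD implementation or the authors' code. The data and function names are hypothetical.

```python
from collections import defaultdict


def midranks(values):
    """Rank values from 1 to N, giving tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        mean_rank = (start + end) / 2 + 1  # mean of positions start+1 .. end+1
        for k in range(start, end + 1):
            ranks[order[k]] = mean_rank
        start = end + 1
    return ranks


def relative_effects(observations):
    """Map each cell label to (mean rank - 0.5) / N over the pooled sample."""
    scores = [score for _, score in observations]
    ranks = midranks(scores)
    by_cell = defaultdict(list)
    for (cell, _), rank in zip(observations, ranks):
        by_cell[cell].append(rank)
    n_total = len(scores)
    return {cell: (sum(r) / len(r) - 0.5) / n_total for cell, r in by_cell.items()}


# Hypothetical SRep totals for two cells of the design.
data = [("f2f", 18), ("f2f", 16), ("f2f", 15),
        ("online", 15), ("online", 12), ("online", 10)]
for cell, effect in relative_effects(data).items():
    print(cell, round(effect, 3))
```

A relative effect above 0.5 indicates that scores in that cell tend to be higher than a score drawn at random from the pooled sample, and a value below 0.5 indicates the opposite.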

Table 2. Descriptive Statistics for SRep Results Scored Using the Target-Conformity Coding Scheme in Multilingual and Monolingual Children, by Test Order and Test Type, With Shapiro–Wilk Test Results.

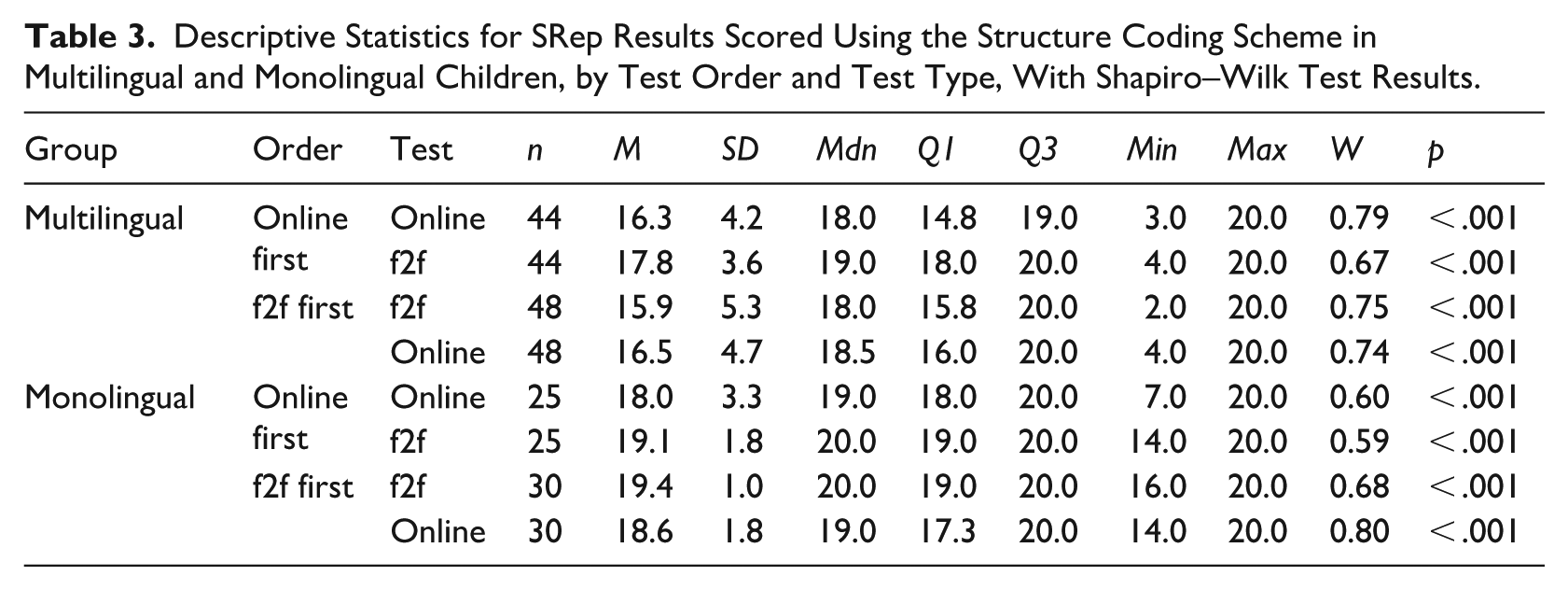

Descriptive Statistics for SRep Results Scored Using the Structure Coding Scheme in Multilingual and Monolingual Children, by Test Order and Test Type, With Shapiro–Wilk Test Results.

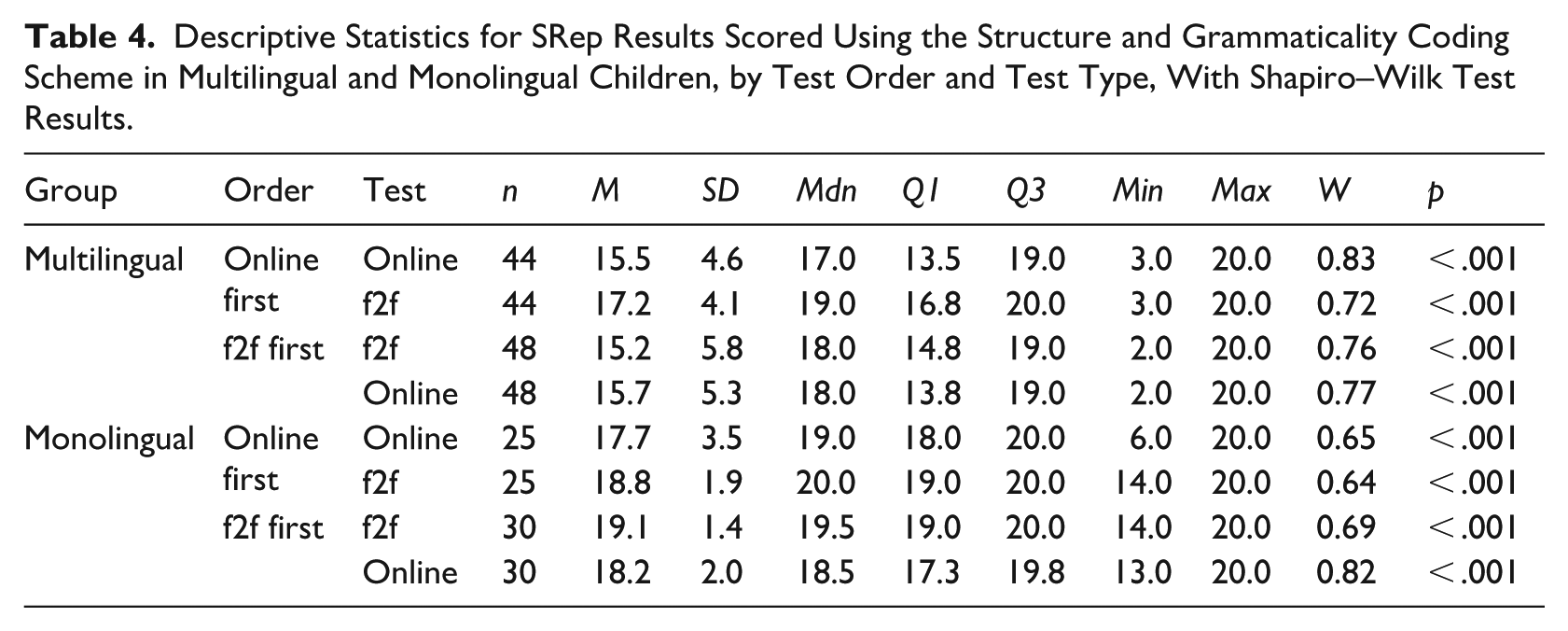

Descriptive Statistics for SRep Results Scored Using the Structure and Grammaticality Coding Scheme in Multilingual and Monolingual Children, by Test Order and Test Type, With Shapiro–Wilk Test Results.

As these models do not include built-in post hoc comparison procedures (such as Tukey’s HSD), pairwise tests were conducted manually. Within-group differences were examined using the nonparametric Wilcoxon signed-rank test, while between-group differences were assessed using the Mann–Whitney U test, both with Bonferroni correction. Statistical significance was set at a corrected threshold of p < .0125 (.05/4). Effect sizes were estimated using the rank biserial correlation, and 95% confidence intervals were calculated via bootstrapping (5,000 iterations).
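The effect-size step described above can be illustrated with a short sketch of the matched-pairs rank-biserial correlation and a percentile bootstrap interval. This is a Python illustration, not the R code used in the study. The function names, the toy scores, the sign convention (online minus f2f, so negative values indicate higher f2f scores), and the choice of a percentile bootstrap are all assumptions made here for illustration.

```python
import random


def signed_rank_sums(x, y):
    """Return (W_plus, W_minus) for paired samples; zero differences are dropped."""
    diffs = [a - b for a, b in zip(x, y) if a != b]
    order = sorted(range(len(diffs)), key=lambda i: abs(diffs[i]))
    ranks = [0.0] * len(diffs)
    i = 0
    while i < len(order):
        j = i
        # Extend j over a run of tied absolute differences.
        while j + 1 < len(order) and abs(diffs[order[j + 1]]) == abs(diffs[order[i]]):
            j += 1
        avg_rank = (i + j) / 2 + 1  # mean of the 1-based ranks i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = avg_rank
        i = j + 1
    w_plus = sum(r for r, d in zip(ranks, diffs) if d > 0)
    w_minus = sum(r for r, d in zip(ranks, diffs) if d < 0)
    return w_plus, w_minus


def rank_biserial(x, y):
    """Matched-pairs rank-biserial correlation: (W+ - W-) / (W+ + W-)."""
    w_plus, w_minus = signed_rank_sums(x, y)
    total = w_plus + w_minus
    return (w_plus - w_minus) / total if total else 0.0


def bootstrap_ci(x, y, n_boot=5000, level=0.95, seed=1):
    """Percentile bootstrap CI, resampling children (pairs) with replacement."""
    rng = random.Random(seed)
    n = len(x)
    stats = []
    for _ in range(n_boot):
        idx = [rng.randrange(n) for _ in range(n)]
        stats.append(rank_biserial([x[i] for i in idx], [y[i] for i in idx]))
    stats.sort()
    lower = stats[int((1 - level) / 2 * n_boot)]
    upper = stats[int((1 + level) / 2 * n_boot) - 1]
    return lower, upper


# Hypothetical scores for five children (not study data).
online_scores = [10, 12, 15, 9, 14]
f2f_scores = [12, 15, 15, 13, 13]
effect = rank_biserial(online_scores, f2f_scores)
```

In the toy data, one pair is tied and dropped. The remaining four differences give rank sums of 1 (positive) and 9 (negative), so the correlation is (1 − 9)/10 = −0.8.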

All analyses were conducted using R version 4.1.1 (R Core Team, 2021) on Windows 10 Pro 64 bit (build 19045), using the packages rio version 0.5.29 (C. Chan et al., 2021), purrr version 0.3.4 (Wickham, 2020), sjPlot version 2.8.14 (Lüdecke, 2023), report version 0.5.7 (Makowski et al., 2023), nparLD version 2.2 (Noguchi et al., 2012), ggstatsplot version 0.9.3 (Patil, 2021), patchwork version 1.1.2 (Pedersen, 2022), psych version 2.1.6 (Revelle, 2021), MASS version 7.3.57 (Venables & Ripley, 2002), ggplot2 version 3.4.0 (Wickham, 2016), readxl version 1.3.1 (Wickham & Bryan, 2023), and dplyr version 1.1.2 (Wickham et al., 2023).

4 Results

4.1 Descriptive statistics

Table 2 presents the descriptive statistics for the SRep results scored using the target-conformity coding scheme for monolingual (n = 55) and multilingual (n = 92) children, broken down by the order of testing (online first or f2f first) and the “test type” (online or f2f). The table also includes Shapiro–Wilk test results assessing the normality of the distributions.

An analysis of the distribution of the dependent variable (SRep) across each of the main factors revealed the following patterns. In the group of multilingual children, the mean score was M = 14.0 (Mdn = 16.0; SD = 5.8), whereas in the group of monolingual children, the mean was M = 17.2 (Mdn = 18.0; SD = 3.3). With respect to testing order, children in the online-first group obtained an average score of M = 15.5 (Mdn = 17.0; SD = 4.7), while those in the f2f-first group scored M = 14.9 (Mdn = 17.0; SD = 5.7). Regarding “test type,” the mean score for the face-to-face version was M = 15.6 (Mdn = 18.0; SD = 5.2), and for the online version, M = 14.8 (Mdn = 17.0; SD = 5.3).

In an analogous manner, the distributions of SRep scores obtained using alternative sentence evaluation methods were assessed: structure coding (focusing on sentence structure accuracy; Table 3), and structure and grammaticality coding (requiring both the correct structure and overall grammatical well-formedness; Table 4).

As shown in Tables 3 and 4, the application of alternative scoring schemes (structure coding and structure and grammaticality coding) led to systematic increases in SRep scores. Under structure coding (focusing on sentence structure accuracy), mean scores increased by approximately 2.2 points; under structure and grammaticality coding (requiring both the correct structure and overall grammatical well-formedness), the increase averaged 1.7 points. The mean difference between these two scoring approaches was 0.7 points. In addition, the lower standard deviations and narrower interquartile ranges observed under the alternative scoring schemes indicate reduced variability and a greater concentration of scores around the mean.

To determine which main and interaction effects were statistically significant, nonparametric ANOVA-type tests were employed. This approach was necessary due to the significant deviations from normality observed in the distributions of the dependent variable across the groups compared.

4.2 Omnibus model

The results of the ANOVA-type tests for the 2 × 2 × (2) design are summarized below; full statistics are provided in Supplementary Materials in Table S1. Two main effects were statistically significant across all three coding methods: language group, target-conformity: ATS(1; ∞) = 17.34; p < .001; structure: ATS(1; ∞) = 15.60; p < .001; structure-and-grammaticality: ATS(1; ∞) = 19.24; p < .001, and test type, target-conformity: ATS(1; ∞) = 26.13; p < .001; structure: ATS(1; ∞) = 20.76; p < .001; structure-and-grammaticality: ATS(1; ∞) = 22.47; p < .001. In contrast, the main effect of testing order (f2f first vs. online first) was nonsignificant across all scoring methods. The interaction between testing order and language group also failed to reach significance under any scoring approach. However, the interaction between testing order and “test type” was statistically significant in all three models, target-conformity: ATS(1; ∞) = 6.25; p = .012; structure: ATS(1; ∞) = 10.15; p = .001; structure-and-grammaticality: ATS(1; ∞) = 5.55; p = .019, suggesting that the effect of test type depended on the sequence in which the tests were administered. The interaction between language group and test type was significant only in the target-conformity coding model, ATS(1; ∞) = 7.71, p = .005. Notably, the three-way interaction among testing order, language group, and test type reached statistical significance across all scoring methods, target-conformity: ATS(1; ∞) = 16.64; p < .001; structure: ATS(1; ∞) = 5.25; p = .022; structure-and-grammaticality: ATS(1; ∞) = 6.17; p = .013. This finding suggests that the interaction between test type and testing order may differ depending on language group. Consequently, follow-up analyses were conducted separately for monolingual and multilingual children.
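The link between the reported ATS values and their p-values can be checked with a short sketch. It assumes the degrees of freedom are exactly (1; ∞) as reported, in which case the reference distribution reduces to a chi-square with 1 degree of freedom. The function name is hypothetical, and this is not the computation performed by the nparLD package itself.

```python
import math


def ats_p_value(ats):
    """Upper-tail p-value for an ATS with (1, inf) degrees of freedom.

    Assumption: F(1, inf) is chi-square with 1 df, whose survival
    function is erfc(sqrt(x / 2)).
    """
    return math.erfc(math.sqrt(ats / 2.0))


# Order x test type interaction under target-conformity coding.
p_interaction = ats_p_value(6.25)
```

For ATS = 6.25 this gives p ≈ .012, which agrees with the value reported for the order × test type interaction under target-conformity coding.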

4.3 The results for the multilingual group

The analysis followed an ANOVA-type mixed-model framework with a 2 × (2) design, in which “test type” (f2f vs. online) was treated as a within-subject factor and test order (f2f first vs. online first) as a between-subject factor. The results (Supplementary Materials, Table S2) are presented separately for each of the three scoring methods: target-conformity coding, structure coding, and structure and grammaticality coding.

Across all scoring methods, test order did not have a statistically significant effect on SRep performance. In contrast, the main effect of test type reached significance in all models, target-conformity: ATS(1; ∞) = 5.37; p = .021; structure: ATS(1; ∞) = 6.25; p = .012; structure-and-grammaticality: ATS(1; ∞) = 12.91; p < .001. Moreover, a robust and statistically significant interaction between test order and test type was found in each model, target-conformity: ATS(1; ∞) = 43.78; p < .001; structure: ATS(1; ∞) = 26.88; p < .001; structure-and-grammaticality: ATS(1; ∞) = 25.33; p < .001, indicating that the effect of test type varied depending on the order in which the assessments were administered.

The factor “order” (i.e., whether the f2f test or the online test was administered first) did not have a statistically significant effect on the test results. In contrast, the factor “test type” (f2f or online) did have a statistically significant effect, with children achieving higher scores during f2f sessions (M = 14.3; Mdn = 16.0; SD = 5.8) compared with online sessions (M = 13.7; Mdn = 15.5; SD = 5.9). Similar patterns were observed for the structure coding and the structure and grammaticality coding methods. Nevertheless, the statistically significant interaction effect indicates that the effect of “test type” varied by test administration order.

Further pairwise comparisons of the interaction effect were carried out manually, as ANOVA-type models do not have built-in procedures for post hoc comparisons. The nonparametric Wilcoxon test was used to gain insight into the direction of within-group differences. These analyses examined the differences between the “test types” (f2f vs. online) within each administration-order group (f2f-first and online-first). In addition, the nonparametric Mann–Whitney U test was used to analyze the differences between the administration-order groups (f2f-first vs. online-first) within each “test type” (f2f and online). These follow-up analyses were conducted separately for each of the three scoring methods: target-conformity coding, structure coding, and structure and grammaticality coding.

Pairwise comparisons revealed significant within-group differences in SRep performance according to the “test type” in the online-first group (n = 44). Across all three scoring methods, children performed significantly better in the f2f condition than in the online one. For the target-conformity coding method, the mean score in the f2f condition was M = 15.7 (Mdn = 17.0; SD = 4.6) compared with M = 13.5 (Mdn = 15.0; SD = 5.1) in the online condition, W = 719.00, p < .001. The rank biserial correlation, rrb = −0.94, represented a large effect size. The 95% confidence interval for that correlation, [−0.97, −0.89], did not include zero, further supporting the significance of the effect. Similarly, under the structure coding, scores were significantly higher in the f2f condition (M = 17.8; Mdn = 19.0; SD = 3.6) than in the online condition (M = 16.3; Mdn = 18.0; SD = 4.3), W = 510.00, p < .001, rrb = −0.82, CI95% [−0.90, −0.67]. For the structure and grammaticality coding method, children again scored higher in the f2f condition (M = 17.3; Mdn = 19.0; SD = 4.1) than online (M = 15.6; Mdn = 17.0; SD = 4.6), W = 561.00, p < .001, rrb = −0.89, CI95% [−0.94, −0.79]. These consistent findings across scoring methods confirm a robust effect of test type in the online-first group, with higher SRep performance observed in f2f sessions.

In the f2f-first group (n = 48), pairwise comparisons also revealed within-group differences between test types. However, the direction of the effect was reversed compared with the online-first group: children achieved higher scores in the online condition than in the f2f condition. Under the target-conformity coding method, the average score was M = 13.9 (Mdn = 17.0; SD = 6.5) in the online condition and M = 12.9 (Mdn = 15.0; SD = 6.5) in the f2f condition. The Wilcoxon test reached statistical significance, W = 112.00, p = .001. The rank-biserial correlation was rrb = 0.62, with a 95% confidence interval of [0.37, 0.79], indicating a moderate to large effect. A similar pattern emerged for the structure coding, with M = 16.5 (Mdn = 18.5; SD = 4.7) in the online condition and M = 15.9 (Mdn = 18.0; SD = 5.3) in the f2f condition. The result was again statistically significant (W = 104.00, p = .007). The corresponding effect size was rrb = 0.55, CI95% [0.29, 0.74]. For the structure and grammaticality coding method, the Wilcoxon test (W = 184.00, p = .049) did not reach statistical significance after Bonferroni correction (α corr. = .0125), suggesting comparable performance regardless of whether the test was administered f2f or online.

In contrast, between-group comparisons for each “test type” condition revealed no statistically significant differences based on the testing order after applying the Bonferroni-adjusted alpha level (α corr. = .0125). In the f2f condition, none of the comparisons reached the corrected threshold for statistical significance, regardless of the coding method: target-conformity (U = 788.00, p = .036), structure (U = 774.00, p = .023), and structure and grammaticality (U = 782.00, p = .029). A similar pattern was observed in the online condition, where the Mann–Whitney U tests also yielded nonsignificant results: target-conformity (U = 1220.00, p = .206), structure (U = 1190.00, p = .306), and structure and grammaticality (U = 1170, p = .366). These results indicate that task order did not significantly affect children’s performance in either test format, regardless of how responses were scored.
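For readers less familiar with the U statistic reported above, the following minimal sketch shows how it can be computed by counting pairs, together with the corresponding rank-biserial correlation for independent groups. It is written in Python for illustration only. The function names and toy scores are hypothetical, and the study does not state which of the two possible U values (one per group) was reported.

```python
def mann_whitney_u(x, y):
    """U for group x: each (x_i, y_j) pair scores 1 if x_i > y_j, 0.5 if tied."""
    u = 0.0
    for a in x:
        for b in y:
            if a > b:
                u += 1.0
            elif a == b:
                u += 0.5
    return u


def rank_biserial_independent(x, y):
    """Rank-biserial correlation for two independent groups: 2U / (n1 * n2) - 1."""
    return 2.0 * mann_whitney_u(x, y) / (len(x) * len(y)) - 1.0


# Hypothetical scores for two small groups (not study data).
group_a = [1, 2, 3]
group_b = [2, 4]
u_a = mann_whitney_u(group_a, group_b)
```

Because every pair contributes to exactly one group's count (or half to each when tied), the two U values always sum to n1 × n2. For the multilingual subgroups (n = 44 and n = 48), U therefore ranges from 0 to 2,112, with 1,056 corresponding to complete overlap.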

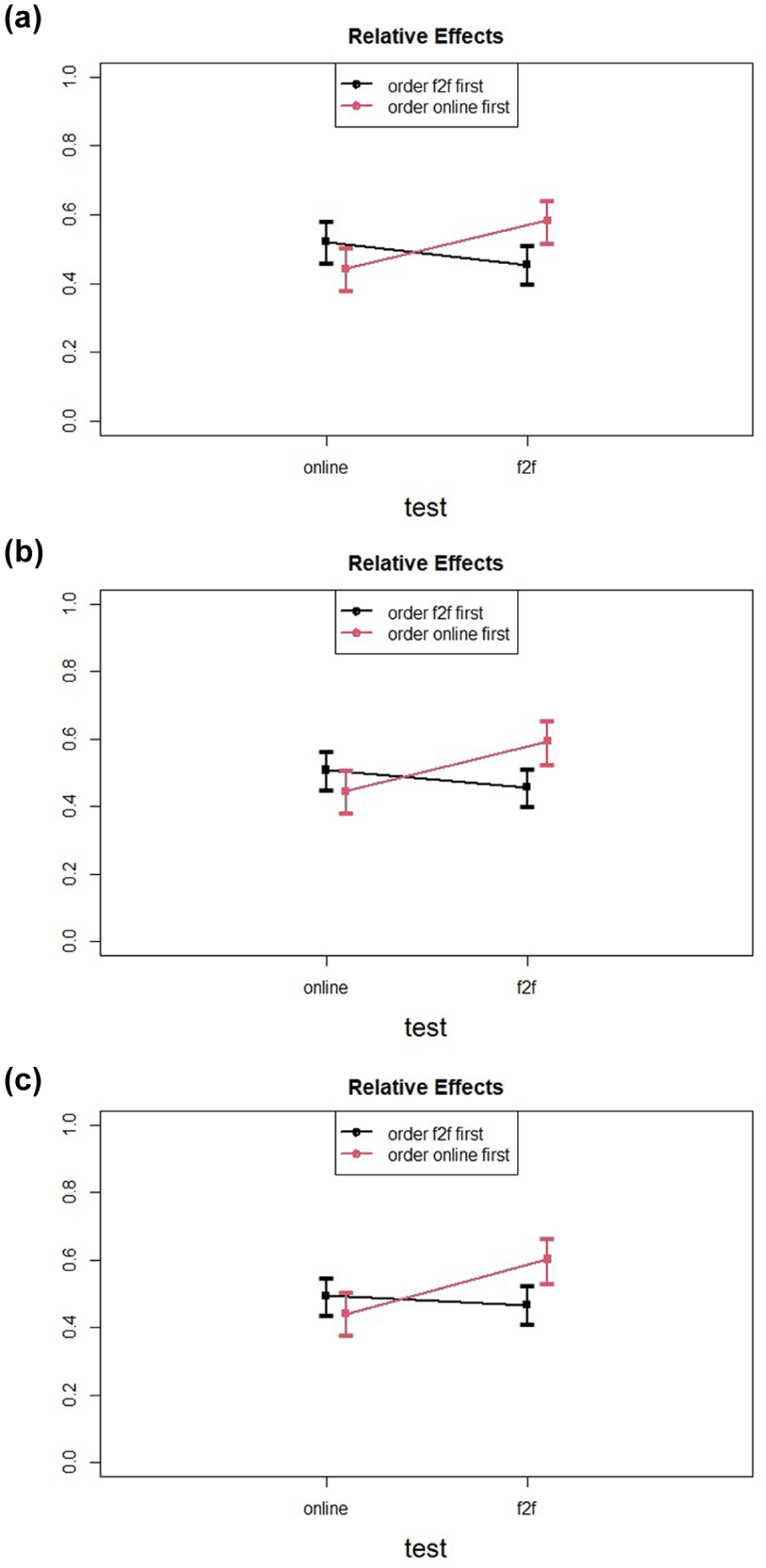

Figure 1 presents the interaction effects between test type (f2f vs. online) and testing order (f2f-first vs. online-first), shown separately for each of the three SRep coding methods: target-conformity, structure, and structure and grammaticality. The figure provides a summary of the simple main effects previously discussed, allowing for direct comparison across scoring approaches. In all coding methods, performance was consistently higher in the second testing session, regardless of whether it was conducted online or face-to-face. This pattern suggests a potential learning or adaptation effect over time, which emerged as a consistent feature in the multilingual group.

SRep performance in multilingual children by testing order (f2f-first vs. online-first) and “test type” (f2f vs. online), shown separately for each scoring method: (a) target-conformity coding, (b) structure coding, and (c) structure and grammaticality coding.

To further explore the observed results, the ANOVA-type model was extended by including an additional between-group factor: country of residence (Poland vs. abroad). The analyses followed a 2 × 2 × (2) design, incorporating test order, country, and “test type” as predictors. The results were analyzed separately for each of the three scoring methods: target-conformity coding, structure coding, and structure and grammaticality coding. Across all scoring approaches, only two effects reached statistical significance. The first was a main effect of country of residence, target-conformity: ATS(1; ∞) = 12.43; p < .001; structure: ATS(1; ∞) = 7.86; p = .005; structure-and-grammaticality: ATS(1; ∞) = 8.54; p = .003, indicating that multilingual children living in Poland scored higher on the SRep task than those living abroad, regardless of the other conditions of the study. Under the target-conformity coding method, the mean score for children in Poland was M = 15.8 (Mdn = 17.0; SD = 4.6), compared with M = 12.3 (Mdn = 14.0; SD = 6.4) for children living abroad. In the structure coding method, the group living in Poland achieved a mean score of M = 17.8 (Mdn = 19.0; SD = 3.4), whereas the group abroad scored M = 15.5 (Mdn = 17.5; SD = 5.2). Likewise, in the structure and grammaticality coding method, the respective mean scores were M = 17.3 (Mdn = 19.0; SD = 4.0) for children in Poland and M = 14.6 (Mdn = 17.0; SD = 5.6) for those living abroad.

A statistically significant interaction between test order and test type was again observed across all scoring methods—target-conformity: ATS(1; ∞) = 29.95; p < .001; structure: ATS(1; ∞) = 18.58; p < .001; structure-and-grammaticality: ATS(1; ∞) = 17.21; p < .001—replicating the previous findings and confirming that the effect of test type continued to depend on the order in which the test was administered.

In addition, a main effect of “test type” reached statistical significance only under the structure and grammaticality coding method, ATS(1; ∞) = 7.21; p = .007, indicating that, under this scoring scheme, performance differed reliably between the f2f and online test formats, with higher scores observed in the face-to-face condition.

Importantly, no significant interactions with country of residence were observed, suggesting that this variable did not alter the previously reported patterns of results. The observed effects were stable across subgroups defined by country of residence. Full statistics are provided in the Supplementary Materials (Table S3).

4.4 Test results for the monolingual group

The analyses for the monolingual group were also carried out using a mixed model of the ANOVA-type within a 2 × (2) design, which allowed the identification of both main effects (“test type” and test order) and interaction effects. In this framework, “test type” was treated as a within-subjects factor. The results are reported separately for each of the three scoring methods: target-conformity coding, structure coding, and structure and grammaticality coding.

Across all scoring methods, the main effect of “test type” was the only effect to reach statistical significance, target-conformity: ATS(1; ∞) = 21.29; p < .001; structure: ATS(1; ∞) = 13.43; p = .002; structure-and-grammaticality: ATS(1; ∞) = 10.69; p = .001. These results indicate that monolingual children performed better in the f2f condition than in the online one. Mean SRep scores in the f2f format ranged from 17.8 (target-conformity coding) to 19.2 (structure coding), while online scores ranged from 16.5 (target-conformity coding) to 18.3 (structure coding). Effect size estimates (rank biserial correlation) ranged from −0.61 to −0.76, indicating moderate to large effects.

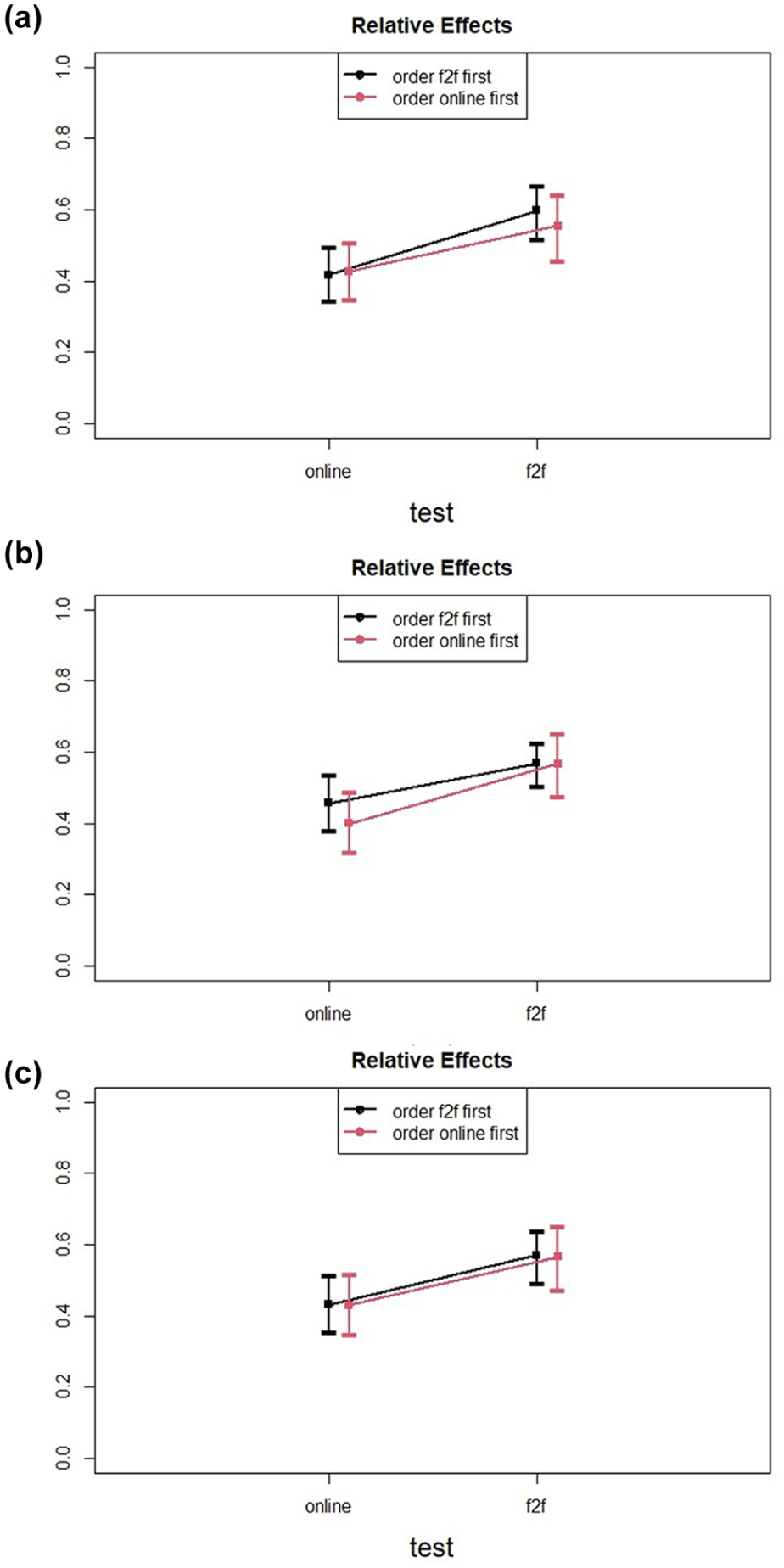

These findings suggest that the differences between testing formats were consistent and reliable across coding schemes. The nonsignificant interaction between test type and test order suggests that the format-related performance differences remained stable regardless of whether the child began with the f2f or online task (see Figure 2). Full statistics are available in the Supplementary Materials.

SRep performance in monolingual children by testing order (f2f-first vs. online-first) and “test type” (f2f vs. online), shown separately for each scoring method: (a) target-conformity coding, (b) structure coding, and (c) structure and grammaticality coding.

4.5 Structure-specific analysis

To understand whether certain types of grammatical structures may be more sensitive to testing modality (face-to-face vs. online), we conducted additional analyses focusing on individual sentence types used in the Polish Sentence Repetition Task (SRep). Although the global scores offer a general picture of grammatical performance, prior research suggests that sentence repetition performance may vary depending on the syntactic complexity of the structure being tested (Polišenská et al., 2015). Some structures may be more demanding in terms of processing and production, and thus more vulnerable to contextual factors such as test administration mode.

In the Polish SRep task, 20 sentence items were grouped into 11 structure types, each representing a particular grammatical construction. These included a range of syntactic forms of varying complexity, from simple declarative sentences to embedded clauses and relative constructions. The following structure categories were distinguished: Structure 1: SVO with Auxiliary or Modal (Items 2, 4), Structure 2: SVO with Auxiliary and Negation or Modal and Negation (Items 3, 8), Structure 3: Short Actional Passives (Item 5), Structure 4: WH-Who/What Questions (Items 1, 6), Structure 5: Bi-Clausal Sentences (Coordination or Complement) (Item 7), Structure 6: SVO with Two Auxiliaries or Auxiliary and Modal (Items 9, 15), Structure 7: Bi-Clausal Sentences (Complement or Adjunct Clauses) (Items 10, 11), Structure 8: WH-Object Which Questions and Indirect Object WH-Questions (Items 12, 14), Structure 9: SVO with Two Auxiliaries and Negation (Items 13, 17), Structure 10: Object Relatives with Adverbial PPs (Items 16, 20), and Structure 11: Sentences with Nouns and Complements (Items 18, 19).
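The item-to-structure grouping listed above can be written down as a simple lookup table, which also makes it easy to confirm that the 11 structure types cover all 20 items exactly once. The sketch below is illustrative Python. The names are hypothetical, and the scoring function assumes each item is scored 0 or 1, so that a structure's score is the number of its items repeated correctly (an assumption, not a description of the authors' scripts).

```python
# Structure number -> item numbers, copied from the grouping in the text.
STRUCTURE_ITEMS = {
    1: (2, 4),     # SVO with Auxiliary or Modal
    2: (3, 8),     # SVO with Auxiliary/Modal and Negation
    3: (5,),       # Short Actional Passives
    4: (1, 6),     # WH-Who/What Questions
    5: (7,),       # Bi-Clausal (Coordination or Complement)
    6: (9, 15),    # SVO with Two Auxiliaries or Auxiliary and Modal
    7: (10, 11),   # Bi-Clausal (Complement or Adjunct Clauses)
    8: (12, 14),   # WH-Object Which and Indirect Object WH-Questions
    9: (13, 17),   # SVO with Two Auxiliaries and Negation
    10: (16, 20),  # Object Relatives with Adverbial PPs
    11: (18, 19),  # Sentences with Nouns and Complements
}


def structure_scores(item_correct):
    """Sum 0/1 item scores within each structure type.

    item_correct maps item number (1-20) to 0 or 1 (assumed scoring).
    """
    return {
        structure: sum(item_correct[item] for item in items)
        for structure, items in STRUCTURE_ITEMS.items()
    }


# Hypothetical child who repeats every item correctly except Item 8.
example = {item: 1 for item in range(1, 21)}
example[8] = 0
example_scores = structure_scores(example)
```

Under this assumed scoring, Structures 3 and 5 have a maximum score of 1 and all other structures a maximum of 2.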

Each of these 11 structure types was analyzed separately in a 2 × (2) mixed-model ANOVA-type test, with testing order (f2f-first vs. online-first) as a between-subject factor and test type (f2f vs. online) as a within-subject factor. A Bonferroni correction was applied to adjust for multiple comparisons (α = .05/33 = .0015). The results for the multilingual and monolingual groups are presented below.
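The corrected threshold follows directly from the number of tests: 11 structures, each with three effects (two main effects and their interaction), give 33 tests. A minimal Python sketch of this arithmetic is shown below; the function names are hypothetical.

```python
def bonferroni_alpha(alpha, n_tests):
    """Per-test significance threshold under a Bonferroni correction."""
    return alpha / n_tests


def is_significant(p_value, alpha, n_tests):
    """True if p_value falls below the Bonferroni-corrected threshold."""
    return p_value < bonferroni_alpha(alpha, n_tests)


n_structures = 11
n_effects = 3  # order, test type, and their interaction
structure_level_alpha = bonferroni_alpha(0.05, n_structures * n_effects)
```

The same helper reproduces the threshold used for the pairwise comparisons (.05/4 = .0125), under which a result such as p = .049 does not count as significant.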

4.5.1 Multilingual group

To further explore the results obtained for the overall SRep score, additional analyses were conducted for each of the 11 linguistic structures that were assessed with the Polish Sentence Repetition Task questionnaire. An ANOVA-type test within a 2 × (2) mixed model was employed once more. To control for multiple tests across 11 structures and three effects, we applied a Bonferroni correction (α = .05/33 = .0015). Full per-structure statistics are reported in the Supplementary Materials (Table S5).

The ANOVA-type tests showed that among all the structures, only three exhibited statistically significant interaction effects between order (f2f-first vs. online-first) and “test type” (f2f vs. online). These were Structure 2: SVO with Auxiliary and Negation or Modal and Negation (ATS[1, ∞] = 10.64; p < .0015), Structure 6: SVO with Two Auxiliaries or Auxiliary and Modal (ATS[1, ∞] = 11.40; p < .0015), and Structure 8: WH-Object Which Questions and Indirect Object WH-Questions (ATS[1, ∞] = 13.02; p < .0015). To gain a clearer understanding of the interaction effects, post hoc analyses were conducted manually using nonparametric tests. Specifically, Wilcoxon signed-rank tests were employed to analyze within-group differences, while Mann–Whitney U tests were used to examine between-group differences.

The post hoc analyses revealed statistically significant within-group differences in the online-first group for two structures: Structure 2 (W = 105.0, p = .005) and Structure 8 (W = 121.0, p = .003). Children performed better on the f2f version of the task (Structure 2: M = 1.7, Mdn = 2.0, SD = 0.5; Structure 8: M = 1.7, Mdn = 2.0, SD = 0.6) than on the online one (Structure 2: M = 1.5, Mdn = 2.0, SD = 0.6; Structure 8: M = 1.3, Mdn = 1.0, SD = 0.7). The rank biserial correlations for both structures, rrb = −0.75 for Structure 2 and rrb = −0.78 for Structure 8, represented large effect sizes. The 95% confidence intervals for these correlations (Structure 2: [−0.87, −0.56]; Structure 8: [−0.88, −0.60]) did not include zero, further supporting the significance of the observed effect. In contrast, in the f2f-first group, the difference for Structure 6 reached the corrected threshold for statistical significance (W = 36, p = .006). In this condition, multilingual children performed better in the online assessment (M = 1.4; Mdn = 2.0; SD = 0.8), which constituted their second measurement, than in the f2f condition (M = 1.2; Mdn = 1.5; SD = 0.9). However, because scores were identical across the two assessments in most cases (40 out of 48), the effect size was not estimated, as it could have been substantially biased by the limited variability in scores. The remaining differences were not statistically significant.

4.5.2 Monolingual group

Additional analyses were also conducted for the monolingual group to examine differences in performance across the 11 linguistic structures assessed using the Polish Sentence Repetition Task questionnaire. The analyses employed an ANOVA-type test within a 2 × (2) mixed model, with a Bonferroni correction applied to account for multiple comparisons on the same dataset, resulting in a corrected significance level of α = .0015 (.05/33). Complete per-structure results are available in the Supplementary Materials (Table S6).

The findings indicate that only one of the analyzed structures—Structure 7: Bi-Clausal Sentences (Complement or Adjunct Clauses)—showed a statistically significant main effect of “test type” (f2f vs. online), ATS (1, ∞) = 15.15, p < .0015.

Participants generally performed worse on the online version of the task (M = 1.6; Mdn = 2.0; SD = 0.6) compared with the f2f version (M = 1.8; Mdn = 2.0; SD = 0.5), regardless of the order in which the tests were administered. However, because the vast majority of participants (43 out of 55) obtained identical scores across the two assessments, estimating the effect size was not feasible, as the low variability could have introduced substantial bias.

5 Discussion

The aim of our study was to compare the outcomes of face-to-face and online administration of the Polish SRep task. To this end, we assessed the performance of both multilingual and monolingual children across both modalities. The results revealed a consistent trend: children in both groups performed better in the face-to-face condition. Our results indicate that online and f2f administrations should be treated as distinct forms of the task.

For multilingual children, performance differed significantly between the f2f and online conditions, but this difference was moderated by the order in which the tests were administered. Children who completed the online task first performed better in the subsequent f2f session. When f2f was administered first, children did not show the same degree of improvement on the online task: they did perform slightly better online than in the earlier f2f condition, but this effect was smaller. It can be inferred that multilingual children improved on their second attempt regardless of the testing environment, although the size of the improvement depended on the order. This effect remained robust even when controlling for the children’s country of residence (Poland vs. abroad).

The performance of monolingual children was consistently higher in the f2f testing format. That is, a significant main effect of modality was observed: children performed better in the f2f condition, regardless of testing order.

When the results were scored using the structure-and-grammaticality coding scheme, the within-group contrast for the multilingual f2f-first subgroup did not remain significant after Bonferroni adjustment, indicating no statistically significant difference between modalities in this subgroup. This may reflect the higher mean scores and reduced variability observed under this scoring scheme in our sample, which can make small modality differences more difficult to detect. At the same time, in the multilingual group the order × test type interaction under this scheme is significant, with a clear second-attempt improvement when the second administration is face-to-face. Taken together, the overall face-to-face advantage is evident at the model level across scoring schemes.

While some previous studies have found comparable outcomes across online and face-to-face assessments (e.g., Castilla-Earls et al., 2022; Pratt et al., 2022; see Table 1), these studies differed in their focus: they examined other types of language tasks, used different participant groups, and sometimes involved smaller samples. Our findings suggest that, at least for morphosyntactic tasks such as sentence repetition, in-person administration may offer advantages.

In interpreting why the learning effect occurs in multilingual but not in monolingual children, we consider two tentative and not mutually exclusive explanations. First, the learning effect may depend on the stage of language development and may be reduced or erased once that stage is surpassed. If children are still acquiring structures, they might be more receptive to learning and improvement through repetition. This increased sensitivity might explain why multilingual children show significant improvement when exposed to the SRep for the second time, while monolingual children do not. It could be argued that children reach a “saturation point” where further exposure to the task yields diminishing gains in terms of linguistic improvement. This phenomenon can be linked to the concept of the “plateau effect” in language learning (Mirzaei et al., 2017), according to which learners find it increasingly challenging to make noticeable progress, and show slower observable gains, after achieving an intermediate level of proficiency. This saturation point implies that the observed learning effect is not merely a byproduct of multilingualism per se but is closely associated with the developmental phase of language acquisition. This aligns with Paradis (2010), who suggests that the acquisition of morphosyntactic structures in bilingual children depends on accumulating a “critical mass” of input, and that more complex structures require more prolonged exposure to be mastered. This developmental progression implies that children may benefit more from repeated exposure during earlier stages of grammatical development, whereas such effects might diminish once a certain level of proficiency is reached. This interpretation may offer some insight into the observed pattern of results, but it is tentative. Our data were not collected with this comparison as a primary objective. Further research is needed to determine whether the suggested mechanism underlies the differences in performance across testing modalities.

Second, the different patterns in the monolingual and multilingual groups might be related to multilinguals’ heightened ability to learn and adapt due to their simultaneous engagement with two language systems (Adesope et al., 2010; Bialystok, 2009; Kroll & Bialystok, 2013). This heightened adaptability could enhance their ability to benefit from repeated exposure to language tasks. In other words, it might be hypothesized that multilinguals show increased performance in the second attempt, even when that attempt is online, because of their adaptability and experience in navigating varying linguistic and environmental contexts. This adaptability may make them more resilient to changes in testing formats and environments. This possibility would also require further research.

These interpretations of the results we obtained, if verified in future research, could provide valuable insights into optimizing language learning strategies and assessment methods for multilingual children at different stages of their language development.

The fact that f2f administration is associated with higher scores may be related to factors such as a more familiar environment, reduced distractions, or the physical presence of a test administrator, which could provide a sense of comfort and clarity. These factors likely benefit both groups. What differs is how this advantage relates to testing order. In the multilingual sample we observed an order × modality pattern, with a second-attempt improvement when the second session was face-to-face. In the monolingual sample the face-to-face advantage was similar regardless of order, so no additional second-attempt gain was evident. A cautious interpretation is that multilingual children may adjust more quickly to the testing format after one exposure (procedural/attentional adaptation), whereas monolingual performance shows a stable face-to-face benefit independent of order.

In our study, we did not encounter significant technical issues that would impede or make it difficult to run the test online, nor did we experience interferences that could lower the data quality, so we are not considering technical issues during task administration as a possible confounding variable.

In addition, we should consider possible differences in previous exposure to online communication between monolingual and multilingual children (Palviainen & Kędra, 2020). Families who live away from their countries of origin may communicate regularly with relatives via videoconferencing apps, and children may be encouraged to engage in mediated conversations with grandparents. This may enhance multilingual children’s comfort with digital communication and contribute to their better performance in online interactions. Monolingual Polish-speaking children, whose interactions may be more localized and f2f, might have less familiarity and experience with online communication platforms, potentially influencing their performance in digital settings. However, as our multilingual sample included both families living outside Poland (the country where the L1 is spoken) and families living in Poland, this would apply to only part of the multilingual group.

We conducted an additional structure-level analysis across the 11 grammatical constructions included in the Polish Sentence Repetition Task. The results indicated that modality-related differences were not uniformly distributed across sentence types. In the multilingual group, three structures (SVO with Auxiliary and Negation or Modal and Negation, SVO with Two Auxiliaries or Auxiliary and Modal, and WH-Object Which Questions and Indirect Object WH-Questions) showed statistically significant interaction effects between test order and modality. In the post hoc comparisons, performance on the first and third of these was better in the face-to-face condition among children tested online first, whereas performance on the second was better online among children tested f2f first. In the monolingual group, only one structure, bi-clausal sentences with complement or adjunct clauses, exhibited a significant main effect of modality.

These findings suggest that some grammatical structures may be more sensitive than others to the testing context. This might be because some constructions impose greater processing demands: relations that span a longer distance (nonlocal dependencies) and sequences of morphosyntactic operations (e.g., combinations of auxiliaries, modality, and negation) require maintaining and integrating more information before a well-formed response can be produced (Friedmann et al., 2009; Gibson, 2000; Jakubowicz, 2011). Because sentence repetition engages both grammatical representations and domain-general resources such as working memory and attentional control (Klem et al., 2015; Marinis & Armon-Lotem, 2015), subtle differences between online and face-to-face administration may have a larger impact on higher-demand constructions. However, given the exploratory nature of this analysis and the number of comparisons performed, these patterns should be interpreted with caution. Further studies would be needed to confirm whether certain types of linguistic constructions are consistently more susceptible to modality effects.

5.1 Implications for online testing

Our results underline that online and f2f studies are not fully equivalent: the two contexts and the two groups yielded different results. Consequently, findings from online studies should be interpreted within their own context and cannot be directly compared with those from f2f studies, irrespective of the group studied. However, this does not cancel the potential benefits of remote synchronous online testing, that is, a computer-mediated mode of assessment in which the person administering the task and the participant (here, the child) engage in the process at the same time but in different places.

Provided that certain precautions are taken, online testing can offer solutions to important challenges in data collection: it can improve recruitment, as it has a wider reach and is less costly, improve retention in longitudinal studies, and reduce missing data. These precautions include verifying before the session that participants have a stable internet connection to avoid disruptions during testing, arranging with families in advance for a quiet space at home to minimize distractions, and monitoring for potential difficulties during testing. Although remote online testing may improve recruitment and retention of participants, some limitations remain. Variation in caregivers’ education and race/ethnicity remains problematic (Bambha & Casasola, 2021; Nelson et al., 2021; Scott & Schulz, 2017). Reaching children from low-SES backgrounds remains a challenge for researchers. Several barriers and strategies for improvement have been identified in the literature (e.g., Bonevski et al., 2014). Barriers include distrust of research, fear of authority, perceived lack of personal benefit, and potential harm or stigma, particularly among marginalized groups. Cultural beliefs, age and gender sensitivities, and low literacy levels also pose significant recruitment challenges. Another caution concerns applying norms from tools that were standardized in f2f testing to interpret the results of online diagnosis, as this could result in lower standardized scores.

To overcome these barriers, strategies such as forming community-research partnerships have been emphasized (Antonijevic-Elliott et al., 2020). Involving community groups in the research process can build trust and increase recruitment rates. For example, employing local community members as recruiters and using community advisory groups can make research appear more community-driven. Providing culturally and linguistically appropriate recruitment materials and offering incentives such as financial compensation or community recognition are also effective strategies. In addition, ensuring that the research is seen as beneficial to the community and using flexible and inclusive data collection methods can further increase participation rates.

Overall, an approach that involves the community at every stage of the research process and addresses the specific barriers faced by low-SES families may improve recruitment of these children into language development studies. Using online testing might address some recruitment challenges. Online participation offers greater accessibility to participants who might otherwise be unreachable due to geographic, mobility, or time constraints. It can significantly reduce participants’ fear of authority and perceived risks, as they can engage in research activities from the safety and privacy of their homes.

Schidelko et al. (2021) suggest that even subtle details of online implementations might be significant. Specifically, they attribute the absence of an effect in their study, in contrast to the effect observed in a similar study by Sheskin and Keil (2018), to differences in methodological details of task administration. They note that while Sheskin and Keil employed color-coded pictures, their own study used a detailed video or animated slideshow format, and they propose that such modifications could significantly influence children’s performance.

Based on our results and the interpretations we built on them, and on the nuances pointed out by Schidelko et al. (2021), future research could control for variables such as the tested children’s previous exposure to online communication and their use of videoconferencing in conversations; the type of stimuli used, such as static images versus animated content; and the degree of interactivity provided by the testing platform or videoconferencing application.

Our findings align with theoretical perspectives emphasizing the role of social presence and contextual support in children’s language performance. In particular, Social Presence Theory posits that the degree to which individuals perceive others as being physically and psychologically present in an interaction can shape communicative behavior and engagement. In our study, even though children responded to prerecorded auditory stimuli in both modalities, the presence of a physically co-located adult who had interacted with the child prior to the session may have reduced psychological distance in f2f testing and supported sustained attention.

Developmental theories further suggest that children’s task performance is not solely a reflection of their internal competence but is also supported by the immediate social environment (Hollich et al., 2000; Vygotsky, 1978). The in-person presence of the experimenter may offer implicit scaffolding or motivational anchoring, facilitating task focus and persistence.

Our findings may have important implications for the interpretation and use of language assessment tools administered in different modalities. While online testing provides practical advantages—such as flexibility, accessibility, and reduced logistical burden—it may not fully capture children’s grammatical competence to the same extent as in f2f settings. This raises the question of whether tasks like the Sentence Repetition Task (SRep), originally developed and normed for in-person use, can be assumed to yield equivalent results when administered online. In light of this, future research should continue to examine potential modality effects and explore whether separate standardization and norming procedures are warranted for online versus face-to-face administration formats.

Building on this, the next step would be to validate each format on its own terms to provide a modality-specific interpretation. Future work should examine how scores for each modality relate to other grammatical measures. In addition, calibrating the two formats using within-session, counterbalanced administrations would help determine how to rescale online results relative to f2f results without assuming interchangeability.

Until such efforts are undertaken, caution is advised when interpreting scores across modalities as directly comparable.

As multilingual populations are heterogeneous, population norms may be unrealistic. A practical alternative would be to provide reference values adjusted for age and language exposure, alongside documentation of the testing context. For multilingual assessment, the SRep can be used as an index of morphosyntax, with score interpretation anchored in the child’s language profile rather than in monolingual norms. Scores should be interpreted with reference to indicators of language experience, such as age of acquisition, cumulative exposure/use, and schooling language. Decision thresholds for multilingual children should be derived and evaluated within multilingual samples against independent clinical judgments, with transparent documentation of the testing context.

5.2 Limitations

As is often the case, our group of multilingual children was heterogeneous in terms of language background. Exposure to each language differed, as did the general language situation at home and the language practices of the tested children.

We are aware that when testing a sample of multilingual children, factors such as their linguistic heterogeneity, variation in communication styles at their homes, child-rearing practices, and beliefs should be considered (e.g., Thordardottir, 2010). However, due to the repeated measures design, we were able to isolate the hypothetical effect of the procedure itself from numerous other factors related to individual variance such as baseline cognitive and language skills, socio-economic status, and educational background. This design allows us to attribute observed differences more confidently to the experimental manipulation rather than to background variables that might influence outcomes in designs using independent samples.

Ideally, researchers would ensure that testing conditions are identical for all participants (Buchanan & Smith, 1999). However, this is not a trivial task in online testing: participants are not in the controlled space of a laboratory but at home, where they may be affected by noises, smells, or events that we cannot control and may even be unaware of. This is especially so with higher-quality internet cameras, whose microphones are designed to pick up the speaker’s voice directly and to silence background noises. According to Ihme et al. (2009), participants’ effort, concentration, attention, and compliance cannot be easily assessed in online testing, or at least this is much more difficult than in an f2f situation. We did not observe any overt challenges related to maintaining the child’s motivation and attention in either the f2f or the online mode of administration. However, as mentioned in the procedure section, we only had a general rubric on the study protocol card where possible disturbances to the study procedure could be noted; we did not measure the child’s motivation or attention.

A methodological limitation of our study is that our online and f2f sessions differed in duration. As we collected additional measures during the in-person part of the study but not during the online session, the latter was considerably shorter, and the SRep administered during it was, unlike in the f2f session, always the first (and only) task. We consider this a tradeoff between the necessary length of the session and controlling for various aspects related to the order of the tasks in the study.

As our procedure required the tested family to use a computer and an internet connection, we were able to reach only parents and children who had access to a computer or a laptop with a stable internet connection at home, and where parents were relatively tech-savvy. Although the latter was not a formal requirement for participation in the study, we expect that it is mostly people who feel comfortable with technology who sign up for synchronous remote testing. Knowing that there will be a need to connect with the experimenter and possibly check for problems with sound and vision may cause anxiety in people who do not use computers on a daily basis.

In this respect, we recognize that our sample is limited, as it is also skewed toward higher socio-economic status. This limitation has also been noted in previous remote studies. For instance, Ozernov-Palchik et al. (2022) noted that, despite targeted efforts, their sample remained skewed owing to uneven access to digital resources.

6 Conclusion

Our study shows that online and f2f administrations of the Sentence Repetition Task for assessing children’s grammatical development yield different outcomes, and that the monolingual and multilingual groups behaved differently across the two testing situations. Thus, these methods are not fully equivalent, and their results should be compared with caution. Despite this, online testing remains a valuable tool. It enhances accessibility for diverse families, offers cost and time efficiency, and facilitates larger sample sizes and more frequent data collection. However, it is crucial to recognize and address the challenges associated with online testing, such as potential technical issues, reduced researcher control, and possible differences in previous experience with online communication. When these factors are carefully balanced, online studies can still provide significant contributions to language development research.

Supplemental Material

sj-docx-1-las-10.1177_00238309251394372 – Supplemental material for Comparing Online and Face-to-Face Administration of the Polish Sentence Repetition Task in Monolingual and Multilingual Children: Higher Scores in Face-to-Face Testing

Supplemental material, sj-docx-1-las-10.1177_00238309251394372 for Comparing Online and Face-to-Face Administration of the Polish Sentence Repetition Task in Monolingual and Multilingual Children: Higher Scores in Face-to-Face Testing by Natalia Banasik-Jemielniak, Magdalena Kochańska, Maria Obarska, Maria Zajączkowska, Joanna Świderska and Ewa Haman in Language and Speech

Footnotes

Acknowledgements

We would like to thank Andrii Loburets for providing statistical consultation and assistance with our data analysis; this expertise was invaluable to our project.

Funding