Abstract

Assessing laboratory animals’ welfare – their current and/or past subjective affective states – is essential for ethical and regulatory reasons (and central to biomedical research into, for example, pain, nausea or anxiety). But this is challenging; and in the quest for quantification (and perhaps simplicity), it can be tempting to overlook construct validity. Yet it is essential that our indicators have good construct validity: that is, that they accurately reflect the construct or concept of interest. This is true whether we are interested in short-term emotions like fear, longer-term mood-like states such as malaise, or markers of cumulative stress over a project or even a lifespan. Without such validity, welfare assessments risk being incorrect: inaccurate and unhelpful for the animals they aim to evaluate and assist. Here (summarising text from a forthcoming edited book), I introduce five validatory tests and highlight the importance of considering indicators’ responsiveness/sensitivity and selectivity/specificity. I also outline how these principles could help improve the construct validation of both humane endpoints and retrospective severity assessments. Careful construct validation can never fully solve the ‘Other Minds’ problem: that animals’ subjective experiences are private (such that we can never measure them, only infer them). However, done well, construct validation would add logical rigour to laboratory animal welfare assessment, increase its accuracy, and make benchmarking (e.g. severity classification) more transparent.

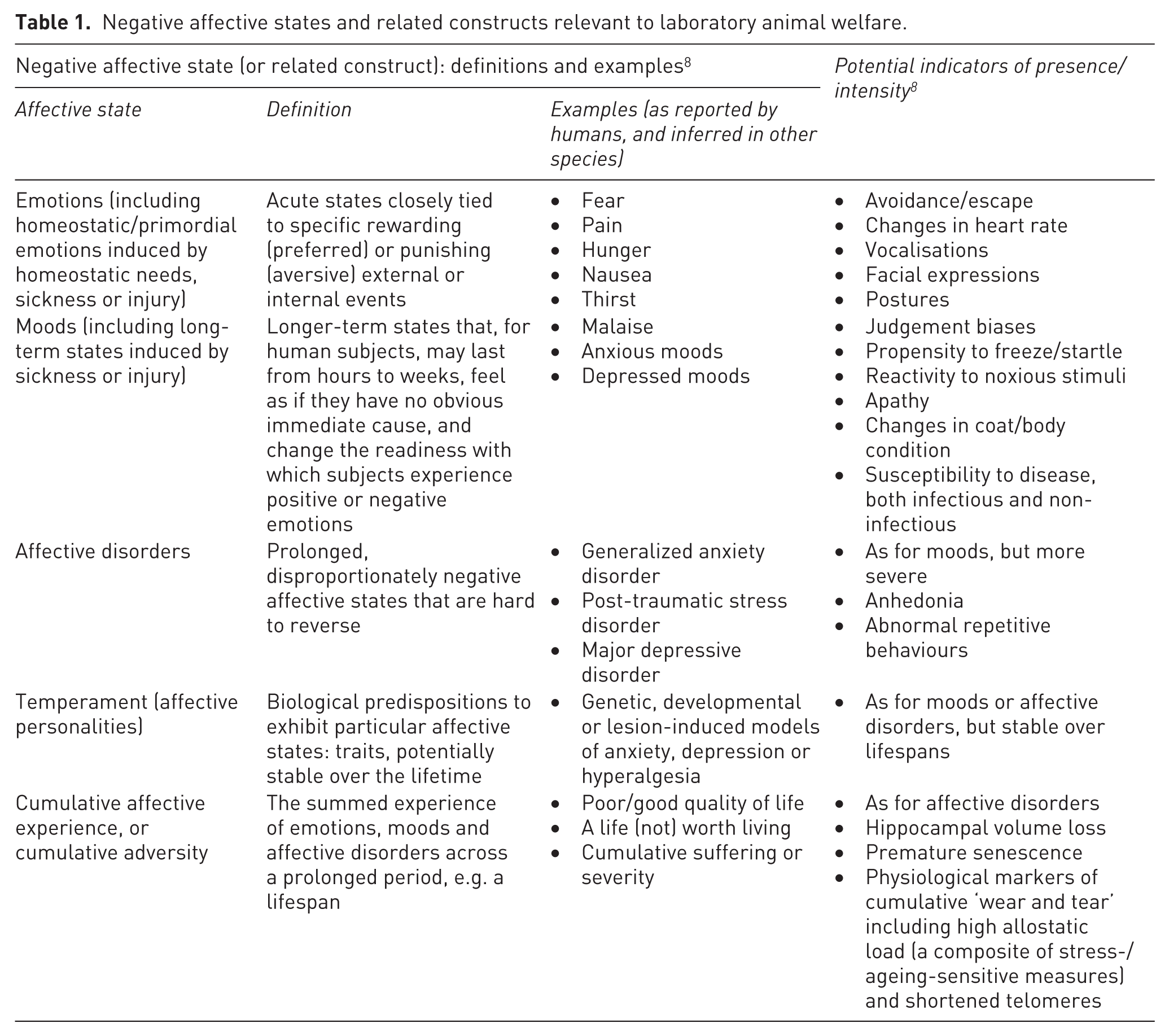

Assessing laboratory animals’ welfare – their current and/or past subjective affective states (Table 1) – is essential for ethical and regulatory reasons (and central to biomedical research into, for example, pain, nausea or anxiety).1–3 This is challenging, however. Animals cannot tell us how they are feeling, and relevant indicators – measurable variables from which to infer non-measurable feelings – can vary across states (Table 1), as well as across species, strains, age classes and welfare challenges (e.g. specific diseases being modelled).4–7 Consequently, any method yielding seemingly objective values with apparent ease can be appealing (be this quick cage-side checks, simple behavioural tests, automated readouts from sensors, or impressive-looking ‘composite scores’). But in the quest for quantification (and perhaps simplicity of assessment), construct validity must not be overlooked.

Table 1. Negative affective states and related constructs relevant to laboratory animal welfare.

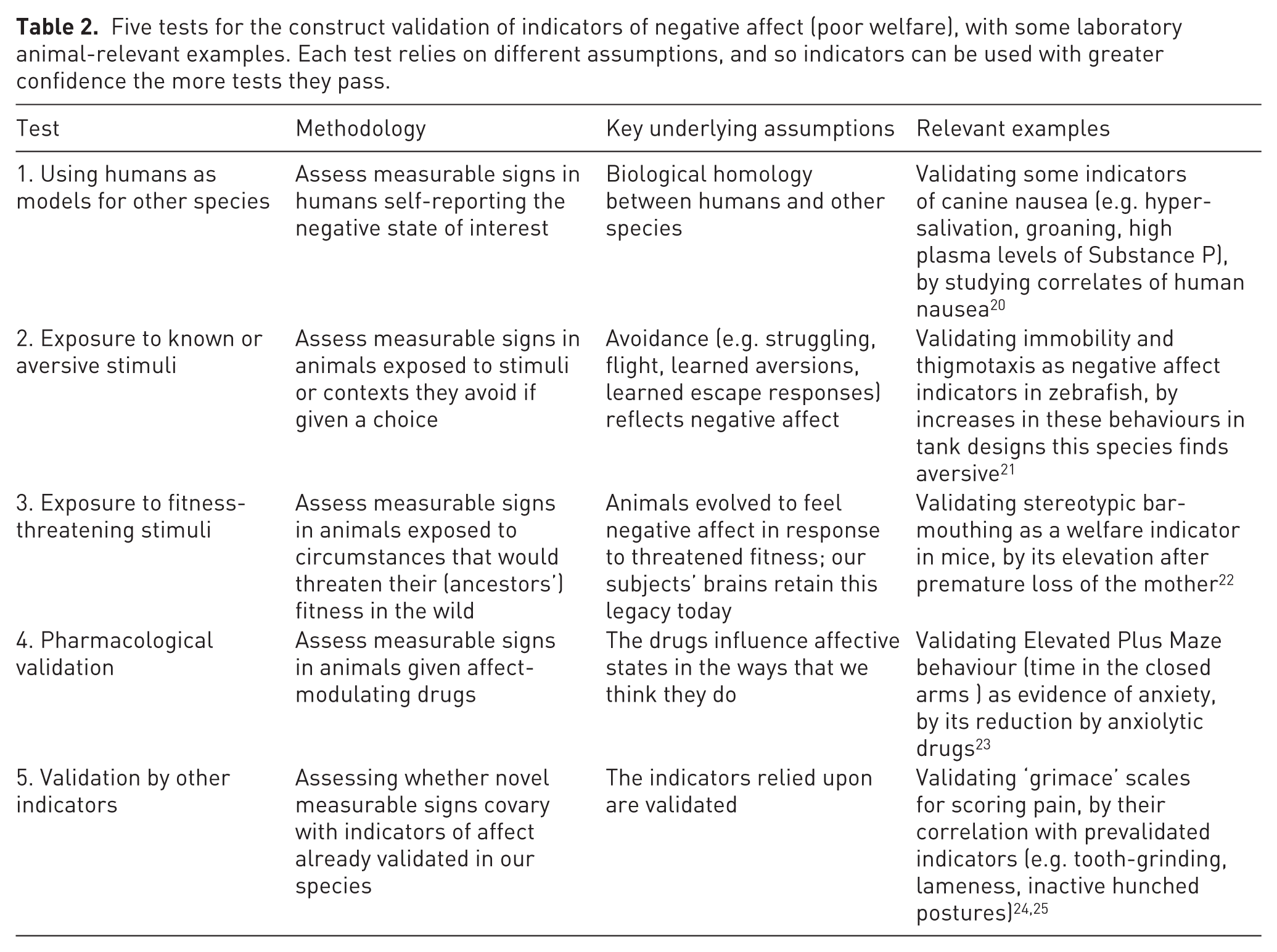

Construct validity means how accurately a metric reflects a construct or concept of interest (such as how well a cognitive test score reflects intelligence, for instance).9,10 When assessing animal welfare – regardless of whether our construct of interest is, say, fear, contentment, or quality of life – the indicators we use must thus reflect ‘“ground-truth” – the state that the animal is actually in’, 11 yielding ‘numbers that really do reflect the welfare as experienced by the animals’. 12 Significant consequences can result if they do not. Using affect indicators of questionable validity may, for instance, help explain why few new therapies for humans have emerged from animal-based biomedical research on pain13–15 and depression.16–18 Using affect indicators of questionable validity also risks welfare assessments being incorrect and unhelpful for animals.10,19 Construct validation is thus essential for ensuring that metrics mean what we hope they do. Happily, validatory methods are described in affect-focused biomedical studies, animal welfare research, veterinary research, and psychological research on human and animal emotions. 10 Five key methods emerge in these literatures: validation tests that potential indicators should pass (cf. the negative state indicators in Table 2).

Table 2. Five tests for the construct validation of indicators of negative affect (poor welfare), with some laboratory animal-relevant examples. Each test relies on different assumptions, and so indicators can be used with greater confidence the more tests they pass.

Along with passing validatory tests, ideal indicators should be highly responsive, sensitively reacting to all relevant changes in affect (even subtle ones) in an incremental manner. 9 They should also be highly selective, only reflecting the specific affective states we wish to assess. Sadly, perfectly ideal indicators do not exist. But understanding the properties of those we have can help identify the best metric (or combination) for a given task. As Dawkins 26 put it, this is like ‘Be[ing] aware of the limitations of your materials before you start building a house’. To illustrate, weight loss can be validly used to infer suffering in clinical models, but only if we appreciate a priori when it can be insensitive (e.g. in acute conditions where animals rapidly become moribund, 27 or where effects are masked by reduced activity or even changes that increase bodyweight like elevated corticosteroid levels 28 or ascites 29 ; see also Talbot et al. 5 ). Such understanding can in turn reduce errors: failing to detect changes in affective states that are present (a.k.a. false negatives/false null conclusions), or mistakenly inferring changes in affective states that are not present (a.k.a. false positives/false leads).
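To make the two error types concrete, the following minimal sketch counts false negatives and false positives for a binary indicator and derives its sensitivity and specificity. All names and data are hypothetical. In particular, the `reference` classification stands in for the best available independent judgement of which animals were affected, not for directly measured feelings, which by definition cannot be obtained.

```python
from dataclasses import dataclass


@dataclass
class Accuracy:
    sensitivity: float    # share of affected animals that the indicator flags
    specificity: float    # share of unaffected animals that it leaves unflagged
    false_negatives: int  # affected animals the indicator missed
    false_positives: int  # unaffected animals the indicator flagged


def indicator_accuracy(flagged, reference):
    """Compare indicator flags with a reference classification (both lists of bools)."""
    if len(flagged) != len(reference):
        raise ValueError("flagged and reference must have the same length")
    pairs = list(zip(flagged, reference))
    tp = sum(f and r for f, r in pairs)
    fn = sum((not f) and r for f, r in pairs)
    fp = sum(f and (not r) for f, r in pairs)
    tn = sum((not f) and (not r) for f, r in pairs)
    sensitivity = tp / (tp + fn) if (tp + fn) else float("nan")
    specificity = tn / (tn + fp) if (tn + fp) else float("nan")
    return Accuracy(sensitivity, specificity, fn, fp)


# Hypothetical data for eight animals: did the indicator (say, weight loss
# beyond some chosen cut-off) flag each one, and was each one judged affected
# according to the assumed reference classification?
flagged = [True, True, False, False, False, True, False, False]
reference = [True, True, True, True, False, False, False, False]

result = indicator_accuracy(flagged, reference)
print(result)
```

In this invented example the indicator misses two of the four affected animals (sensitivity 0.5) and wrongly flags one of the four unaffected animals (specificity 0.75), which is the kind of limitation that is best known before an indicator is relied upon.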

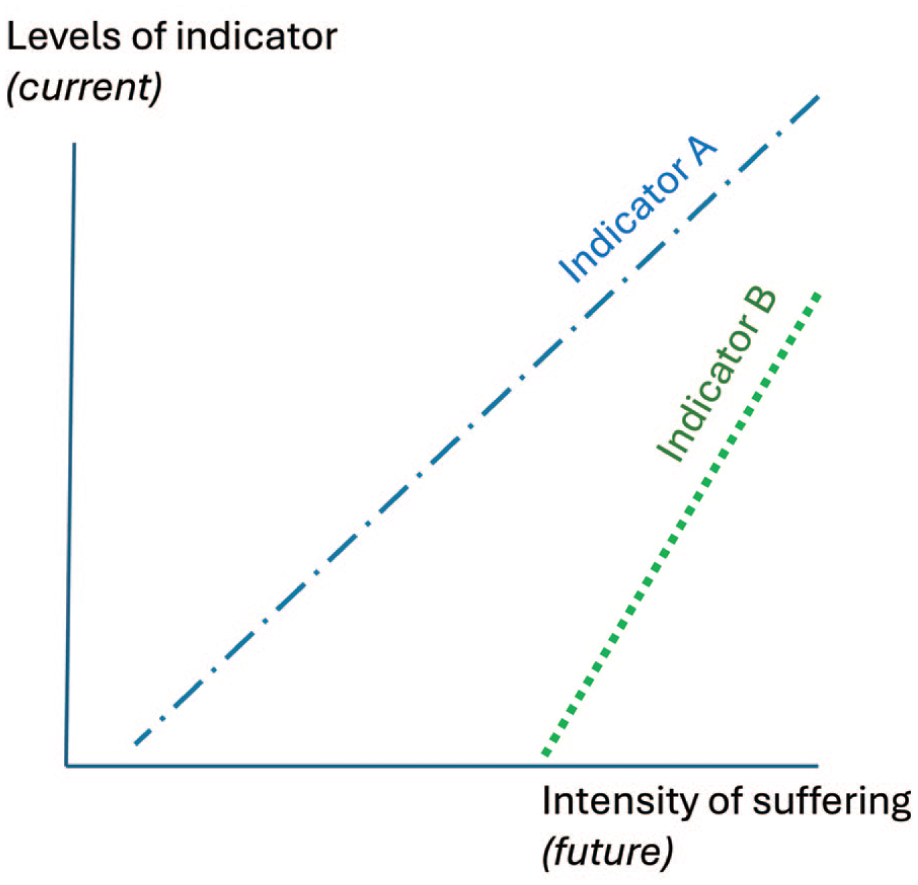

Formal principles of construct validation could help improve humane endpoints in terms of both their validity and their humaneness. To serve as accurate proxies (e.g. triggering a study’s end or a subject’s removal), humane endpoints should statistically predict severe suffering. Studies developing these essentially use Test 5 (see Table 2 and Figure 1): data collected from animals subsequently assessed for clinical scores warranting euthanasia are retrospectively analysed to identify which potential indicators differentiate between subjects who will live or die (and perhaps also between experimental animals and healthy controls).4–6,27,30 However, such studies are rare; they assume that clinical scores are valid; and, furthermore, the resulting ‘humane’ endpoints may still involve much suffering6,30 (see also Figure 1). Together, this makes further research into humane endpoints essential, and meeting this need should arguably involve new, complementary validatory tests. For example, for animal models of disease, this could involve liaising with patient groups, doctors and human clinical researchers to identify measurable signs that precede severe suffering – even desires for medically assisted dying – in relevant human patients (cf. Test 1 in Table 2).
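The retrospective logic described above can be sketched as follows. This is an illustration only, not the method of any cited study: the indicator (an early body temperature reading), the data, and the use of Youden's J (sensitivity + specificity − 1) to pick a cut-off are all assumptions made for the example. The sketch also inherits the assumption noted above, namely that the later clinical score is itself valid.

```python
def best_threshold(values, reached_endpoint):
    """Return (threshold, sensitivity, specificity) maximising Youden's J.

    An animal is flagged when its early value is <= threshold (here, a low
    body temperature). `reached_endpoint` records whether each animal later
    met the clinical criterion for euthanasia.
    """
    n_pos = sum(reached_endpoint)
    n_neg = len(reached_endpoint) - n_pos
    if n_pos == 0 or n_neg == 0:
        raise ValueError("need animals both with and without the outcome")
    best = None
    for t in sorted(set(values)):
        tp = sum(v <= t and r for v, r in zip(values, reached_endpoint))
        fp = sum(v <= t and not r for v, r in zip(values, reached_endpoint))
        sens = tp / n_pos
        spec = 1 - fp / n_neg
        j = sens + spec - 1
        if best is None or j > best[0]:
            best = (j, t, sens, spec)
    return best[1:]


# Hypothetical early temperature readings (degrees C) for ten animals, and
# whether each animal later reached the euthanasia criterion.
temps = [37.1, 36.8, 35.2, 34.9, 36.9, 36.2, 37.0, 34.5, 35.9, 36.0]
reached = [False, False, True, True, False, True, False, True, False, False]

threshold, sens, spec = best_threshold(temps, reached)
print(threshold, sens, spec)
```

With these invented data, the cut-off of 35.2 flags three of the four animals that later met the criterion and none of the others. Raising the cut-off to 36.2 would catch the fourth animal, but at the cost of also flagging two animals that never met the criterion, so the choice of cut-off embodies a judgement about which error matters more.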

Figure 1. Two (imaginary) indicators that could be used to identify future suffering and consequent endpoints.

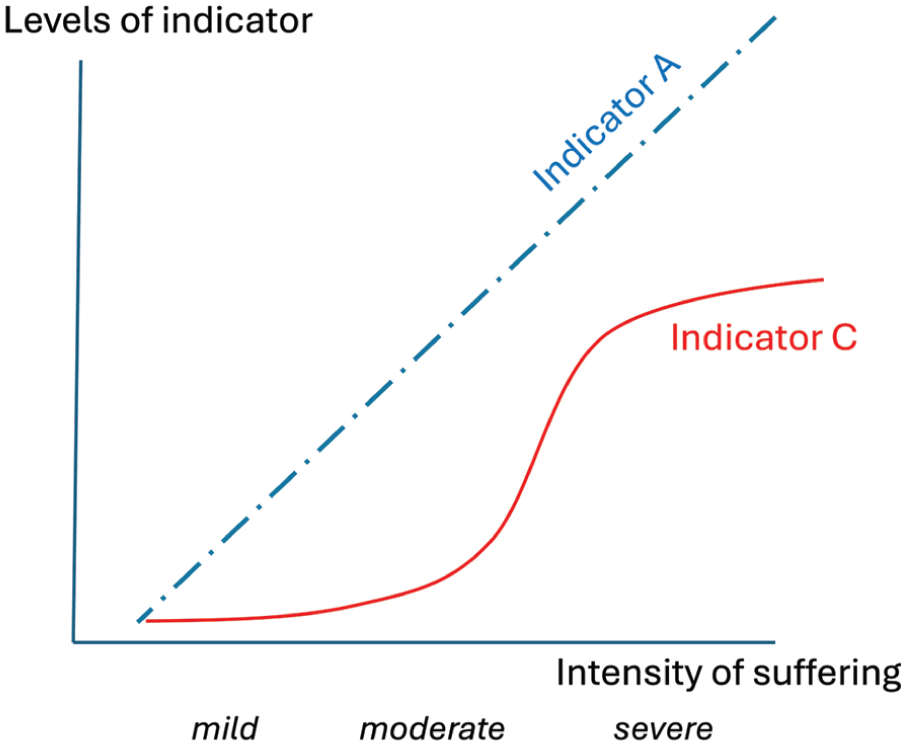

Such principles should also inform severity assessment: an even more challenging task because indicators must reflect not just relatively more or less suffering (as in Figure 1), but particular levels (and ideally even their boundaries: see Figure 2). Directive 2010/63/EU, 31 for example, defines ‘mild’ as causing only short-term mild pain, suffering or distress (i.e. mild negative emotions cf. Table 1); ‘moderate’ as causing moderately negative emotions and/or longer-term negative states (e.g. negative moods, Table 1) that are only mild or only moderately impair overall condition (presumably via their cumulative impact); and ‘severe’ as causing severely negative emotions, and/or negative moods that are moderately to highly negative or severely impair overall condition. The Directive also lists types of procedure judged to fall within each category. However, it does not supply evidence for these judgements; for instance, it assumes that conventional caging is neutral, when instead this causes cumulative stress 32 ; and any such procedure-based approach risks overlooking practices that modify severity for individual animals (e.g. refinements in technique or analgesic use; animals’ temperaments; how handling styles affect fear of humans; how social buffering and housing quality affect resilience). Furthermore, even texts that include the welcome addition of animal-based welfare indicators (e.g. De Vleeschauwer et al. 33 ) still generally restrict these to clinical signs only (ignoring cognitive, physiological, immunological and behavioural signs of negative affect), as well as leaving opaque what makes something a sign of mild versus moderate versus severe impact. Thus as Reiber et al. 7 summarise, the central problem is ‘we do not yet have . . . a gold standard combination of severity assessment parameters that reflects the actual truth about severity’.

Figure 2. Two (imaginary) indicators useful for assessing severity in different ways.

Thinking formally about construct validation could again help by laying out logical, explicit frameworks to advance progress. For example, Test 1 again highlights the value of using data from relevant human patients, here for identifying measurable signs that reflect self-reported mild, moderate or severe reductions in quality of life. Tests 2 and 3 suggest merit in seeking indicators that differentiate between animals exposed to situations ranging from mildly to intensely aversive (Test 2), or from subtly to devastatingly harmful to fitness (Test 3). And Test 4’s pharmacological approach indicates another route: identifying whether affect-rectifying drugs influence indicators even at very low doses (as expected for indicators of mildly negative states) or only when doses are maximally high (as expected for indicators of very severe states).

Even the most careful construct validation will never fully solve the ‘Other Minds’ problem: that subjective experiences are private. However, done well it would add more logical rigour to laboratory animal welfare assessment by grounding this in sound biological principles, and by encouraging underlying assumptions to be made explicit. In turn this should reduce risks of false leads or false null errors, and make benchmarking (e.g. severity classification) more transparent and defensible. It could even increase the translatability of biomedical research to human patients (cf. Krock et al. 34 , Gorman and Davies 35 ).

Footnotes

Acknowledgements

I would like to thank Mike Mendl, with whom many of these ideas were thrashed out over years (no, wait: decades); Gail Davies, Jess Cait and Aileen MacLellan for discussions on patient-centred approaches; and Anna Olsson for inviting this commentary and critiquing earlier drafts. The work was conducted on the ancestral lands of the Mississaugas of the Credit First Nation.

Declaration of conflicting interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author thanks NSERC for programme funding.

Data availability and ethics approval

This commentary did not involve primary data (however, while still in press, copies of cited chapters can be provided on request); nor did it involve animal use requiring ethical approval.