Abstract

This autoethnographic study investigates the transformative impact of generative AI on educational research, instructional design, and teaching practices over a 5-month period (May–October 2024). By integrating AI tools into every phase of the research process, the study examines AI’s role as both a research partner and a subject of inquiry. Field notes, queries, and AI-generated outputs were systematically collected, creating a corpus for analysis. Grounded in activity theory, this research offers a reflective narrative on the evolving work routines of instructional designers and educators, emphasizing the orchestration of technology rather than prescriptive best practices. The study contributes to educational technology research by documenting the use of AI at a specific point in time, providing a foundation for future inquiry into the practical implications of AI in education.

Introduction

The idea for this research project started with a LinkedIn post by Mike Perkins; its inception thus stems from algorithmic information curation in a social network. Responding to the call for contributions by Perkins and Roe (2024a) to enhance our understanding of the role of generative AI in educational research, including research design, data analysis, and write-up, I decided to lean into generative AI for all phases of the research cycle, making my own engagement with the technology both subject and object of the study.

The investigation spans 5 months (May–October 2024), during which I worked on the article at hand. In addition, I documented to what extent and for what purposes I used AI in other research, teaching, and instructional design activities. Through field notes I documented my AI-infused research processes and, at the same time, exemplified the use of AI. The method of autoethnography allowed me to engage in reflective practice while exploring the potential of generative AI. It uses writing as a way of knowing: “a method of discovery and analysis” (Richardson & St Pierre, 2008, p. 923). My goal was to make use of the dialogic nature of generative AI to investigate this new, alien research partner and understand its parameters, limitations, output, and allure.

Beyond the research process, the field notes record to what extent and for what purposes I used AI for teaching and instructional design. I also noted my consumption of AI-related social media content, scholarly publications, podcasts, and news articles, as well as AI-related conversations with colleagues.

The rationale for this research project lies within the expectation that generative AI is having a profound, transformative impact on the education system, comparable to the rise of the internet in the 1990s. Dron (2023) describes generative AI as “different in kind” from all previous technologies. Trained on a data-set “representing a non-trivial proportion of human knowledge,” advanced generative AI tools can mimic and even exceed human cognition, including inference and creativity (Dron, 2023, p. 322).

This study investigates how research, teaching, and instructional design practices change when a new, disruptive technology enters the stage. Similar to the project “One Neighborhood, One Week on the Internet” (Elon, 2001), it serves both as a historic marker and as a sense-making endeavor to chart pathways for educators and instructional designers. While there are numerous attempts to optimize prompting or share the latest output of AI tools, there is a distinct lack of orientation and benchmarks for educators when it comes to everyday practice, where the question of what is feasible needs to be contextualized by what is sensible, economical, practical and ethical. As a professor in an international master of education program, an instructional design practitioner and digital pedagogy coach, and an educational technology scholar, I leverage my own positionality to investigate how AI changes practices within higher education, and how the discourse surrounding AI shapes my own perception. This research project falls in the tradition of Vygotsky: if you want to understand a phenomenon, you first have to understand yourself (Daniels, 2005).

Research Questions

Given the study’s qualitative nature and exploratory purpose, the primary and secondary research questions are broad and remained malleable throughout the ethnographic process: (a) to enhance our understanding of the role of generative AI in educational research, including research design, data analysis, and write-up; and (b) to map shifting work routines of instructional designers and teacher educators mediated by social media, peer communication, research communities, organizational parameters, and individual information habits.

Positionality Statement

This article uses the terms generative AI (genAI), or simply AI, to refer to a set of tools that deploy large language models to perform tasks that mimic human behavior, including higher-order skills such as ideating, summarizing and critiquing. Based on its capabilities and proliferation, generative AI will inevitably result in pedagogical transformations. I view generative AI as a very powerful collaboration tool, not a cognizant entity, in line with Lanier (2024). As Hovy (2024) stated, “Giving AI models human abilities they lack mixes fact and fiction and gives them powers they do not have.” However, I am open to the idea that the mechanics of generative AI might explain certain expressions of human consciousness. Throughout the manuscript, and in my prompts and reflections, I sometimes address AI as a conversation partner, because this is what the user experience simulates. This act of anthropomorphism is merely a shorthand to simplify the interaction with a tool I don’t fully understand, similar to asking my car what might be wrong with its brakes.

Theoretical Background

The theoretical framework for this research, activity theory, belongs to the socio-cultural perspective on learning (Gibson, 1977; Hutchins & Lintern, 1995), which holds that our cognitive output cannot be separated from the tools that we use, the environment we are in, the company we keep, and the norms we adhere to.

In Engeström’s (1987) activity model, an activity involves a subject (an individual, group, or organization) working toward an object or goal, which represents the reason or problem the activity aims to address. The tools or instruments used—such as technology, language, or symbols—help transform the object, shaping both the process and the subject’s identity. Rules are the formal or informal norms, standards, or regulations that guide the activity. The community includes all stakeholders involved, while the division of labor defines who does what and how roles and responsibilities are distributed. The desired outcome is the result of the activity. These elements form a system where each part mediates the relationships between the others. For instance, the division of labor connects the community with the object by determining who contributes to solving the problem. The model highlights how actions and identities are shaped by the social, cultural, and historical context of the activity. Activities are ongoing, with no clear start or end, and they evolve over time, influenced by conflicting interests, histories, and practices. These tensions, or contradictions, within the system drive change and growth, making activities dynamic and adaptable.

In activity systems, contradictions are tensions or conflicts that drive change. Adamides (2023) describes four types:

Primary contradictions exist within a single element of the system and reflect the tension between use value (how something benefits people) and exchange value (its economic value).

Secondary contradictions happen between two elements of the system, like tools and rules, often becoming more pronounced when trying to address a primary contradiction.

Tertiary contradictions emerge when new ideas or practices clash with older ones within the same activity.

Quaternary contradictions occur when changes in one activity create conflicts with neighboring or related activities.

Resolving these tensions leads to expansive learning, defined as a process in which learners collectively construct and implement a new, more complex object and concept for their activity—“learning what is not yet there” (Engeström, 2016). The process unfolds in iterative cycles, including questioning, modeling new solutions, and implementing and consolidating changes. These cycles reflect the complexity and interconnectedness of the activity systems (Engeström & Sannino, 2010).

Karanasios et al. (2021) stated that activity theory provides analytical concepts for understanding how activity systems evolve, but lacks concepts for reconstructing how a tool evolves as it is used in particular activities. For this purpose, the article draws upon instrumental genesis and design-in-use to illuminate the relationships between design, people, tools, tasks, and activities in digital technology-saturated environments.

Literature Review

The literature review specifically focused on sources that address (a) the impact of generative AI on teacher education and instructional design and (b) the impact of generative AI on qualitative research practices.

Teacher Education and Instructional Design

As Thompson et al. (2023) stated, tertiary education is called upon to prepare students for a world in which generative AI is used in all aspects of their lives. As educators, we therefore face the professional obligation to build “capacity to meaningfully engage with generative AI in the context of our practice” (Thompson et al., 2023, p. 1). Generative AI is already a reality in tertiary education. Yeralan and Lee (2023) observed routine use of AI by faculty to generate questions and assignments, by students to submit assignments and aid in self-learning, and by administrative staff to create manuals, memoranda, and policy documents. In an early rapid literature review exploring the use of ChatGPT in education, Jahic et al. (2023) found that educators and students employ ChatGPT for research assistance, exam creation, curriculum planning, assessment aid, critical thinking promotion, and summarizing extensive texts. Throughout higher education and teacher training, generative AI is prompting a reconsideration of creativity, assessment methods, and the development of AI-literate educators (Creely & Blannin, 2023; Hodges & Kirschner, 2024). Its integration raises ethical concerns, privacy issues, and questions about algorithmic bias (Alali & Wardat, 2024). Thompson et al. (2023) describe academic integrity, in particular cheating on assessment tasks, as an “acute problem” (Thompson et al., 2023, p. 3), putting the redesign of assessment procedures and policies at the forefront of institutional responses. While the capabilities of generative AI pose significant new challenges for educators and instructional designers (cf. Hodges & Kirschner, 2024), they also create new efficient procedures for time-consuming tasks and offer opportunities for pedagogical innovation.
The findings by Van Den Berg and Du Plessis (2023) based on an analysis of ChatGPT output revealed that generative AI can provide materials and support mechanisms, such as lesson plans, to schoolteachers and student teachers. Panke (2024) documented the use of generative AI in two student-centered e-book projects for education students at two different universities, observing how AI can enhance creative processes and empower open educational practices.

Several scholars have extensively reviewed the applications of AI technologies in education through systematic reviews, theoretical reflections, and theoretical frameworks:

Zawacki-Richter et al. (2019) conducted a systematic review of AI in higher education, synthesizing findings from 146 studies published between 2007 and 2018. The findings highlighted the dominance of computer science and STEM fields in AI education research, the prevalence of quantitative methods, and the lack of critical reflection on pedagogical, ethical, and theoretical implications, calling for more engagement from educators.

Bozkurt et al. (2021) systematically reviewed artificial intelligence (AI) research in education over the past 50 years (1970–2020) using text-mining and social network analysis. The findings highlight key research clusters, including AI applications in adaptive learning, human-AI interaction, and deep learning, while also identifying a growing interest in AI’s role in higher education and data-driven pedagogy. The study notes that ethical concerns, such as data privacy and algorithmic bias, remain an underexplored area in AI and education research.

The systematic review by Chiu et al. (2023) analyzed the opportunities and challenges of artificial intelligence in education across four domains—learning, teaching, assessment, and administration—by reviewing 92 studies published between 2012 and 2021. The authors observed that many teachers struggle to effectively integrate AI into pedagogy due to limited understanding of how the technology operates and its implications for teaching strategies.

In a theoretical reflection, Sharples (2023) speculated on potential roles of generative AI in learning (e.g., possibility engine, Socratic opponent, co-designer), using hypothetical examples to illustrate its implications and emphasizing its potential to function as an active participant in social learning rather than just a tool for individual interaction. Sharples discussed how AI can engage in conversations, set goals, and support collaborative learning, while also raising ethical concerns regarding AI’s limitations, accountability, and the need for human oversight in educational contexts. Similarly, Tan et al. (2024) emphasized the importance of AI literacy, pedagogical integration, and ethical considerations in teacher professional development programs.

Crompton and Burke (2023) conducted a systematic review analyzing the role of artificial intelligence (AI) in education, focusing on its applications, benefits, and challenges. They identified four major domains of AI integration: personalized learning, assessment automation, intelligent tutoring systems, and administrative support. Their findings highlight the potential of AI to enhance learning experiences by providing adaptive instruction and real-time feedback. However, they also note significant challenges, including ethical concerns, data privacy issues, and the need for teacher training. The study calls for further research on the pedagogical implications of AI and how educators perceive and utilize these technologies in practice.

Mishra et al. (2023) used the TPACK framework to discuss the types of knowledge teachers require to effectively use generative AI tools. The authors argue for a more expansive description of Contextual Knowledge (XK), going beyond the immediate context to include considerations of how GenAI will change individuals, society and, through that, the broader educational context.

Dron (2023) pointed out that generative AI has the potential to fundamentally change professional roles in education because of the seamless way AI tools fit into our professional roles and fulfill labor functions: “More than just tools, we may see them as partners, or as tireless and extremely knowledgeable (if somewhat unreliable) coworkers who do so for far less than the minimum wage” (Dron, 2023, p. 324). Luo et al.’s (2024) mixed-methods study examined how instructional designers use and perceive generative AI tools in their professional work, highlighting key applications such as brainstorming, content creation, and collaboration. While designers recognized AI’s potential to enhance efficiency and streamline design workflows, they also expressed concerns about content quality, data security, authorship, and ethical implications. Choi et al. (2024) explored the usefulness and potential of ChatGPT for instructional design by creating prompts and output for course maps and course materials. DaCosta and Kinsell (2024) created a GPT that was trained on an approach to media selection derived from various ID models, frameworks, and taxonomies. Malone (2024) stressed the need for researching practical implementation strategies for instructional designers.

Research Practices

Generative AI is transforming research design (Si et al., 2024), systematic literature reviews (Bolanos et al., 2024; Castillo-Segura et al., 2023; Schryen et al., 2024), and data analysis by accelerating processes, enhancing efficiency, and, potentially, increasing creativity and scope. In qualitative research, AI tools accelerate the analysis process, are capable of processing complex texts, and improve scalability (Lixandru, 2024; Rasheed et al., 2024; Turobov et al., 2024). The views on AI in qualitative coding vary from trustworthy to fundamentally incompatible. Lixandru (2024) concluded, based on significant similarity in data analysis between human coders and machine-generated codes, that artificial intelligence can play a trustworthy role in qualitative research. In contrast, Paulus and Marone (2024) warn that generative AI tools have the potential to seduce researchers into diminishing their agency in the interpretive process, and see highly automated coding, interpretation, and insight generation as fundamentally incompatible with the epistemological foundations of qualitative research. Many researchers see AI as a collaborator that needs human supervision to ensure accuracy and depth of analysis (e.g., Turobov et al., 2024). Similarly, Perkins and Roe (2024b) stated: “While GenAI tools can facilitate, assist, and expedite processes, they cannot replace the unique human ability to interpret, contextualize, and provide depth to findings” (Perkins & Roe, 2024b, p. 393).

Synthesis

While AI tools have the potential to streamline and enhance academic practices, their use contends with professional norms and identities. The discourse on AI in qualitative research and scholarly writing demonstrates tensions, contradictions, and different visions for how to approach an AI-saturated future.

The existing literature has extensively discussed the application of AI technologies in education (Bozkurt et al., 2021; Crompton & Burke, 2023; Mishra et al., 2023; Sharples, 2023; Zawacki-Richter et al., 2019), including initial explorations of consequences for instructional design (Hodges & Kirschner, 2024). However, there is a lack of qualitative research that examines how teachers and instructional designers utilize AI technologies in everyday practice, including their emotional responses and attitudes (Chiu et al., 2023). Likewise, a gap exists regarding practical adoption strategies for instructional designers (Malone, 2024). The actual, everyday practices and adoption strategies of educators toward AI remain opaque, as AI research is dominated by computer science and engineering perspectives (Bozkurt et al., 2021; Chiu et al., 2023). By documenting and reflecting on my experiences, perspectives, needs, and goals connected to working and writing with AI, this study offers a small window into routines, including inconsistencies, conflicts, and doubts about effective and ethical use.

Method

Autoethnography is a “narrative craft” (Pensoneau-Conway, 2023, p. 102). Digital autoethnography uses personal experiences to explore information behavior in digital spaces. As Atay (2020) pointed out: “Most of our everyday experiences are either highly mediated or digitalized, causing us to be surrounded by a media and digital ecology” (Atay, 2020, p. 269). The research project uses the method of digital autoethnography, combined with deliberate and continuous usage of generative AI as part of the research process to explore how generative AI tools reshape the digital ecology. The study design evolved in several stages and combines low-tech instruments for data collection (field noting, corpus building) with an AI-technology-infused process for planning, framework selection, analysis, presentation and discussion of findings. The researcher doubles as research subject and uses field notes or a field diary to record experiences, ideas, emotions and behaviors: “Autoethnography is one of the few places in scholarship where the researcher’s voice is explicitly allowed” (Herrmann, 2021, p. 134).

Conceptual Framework

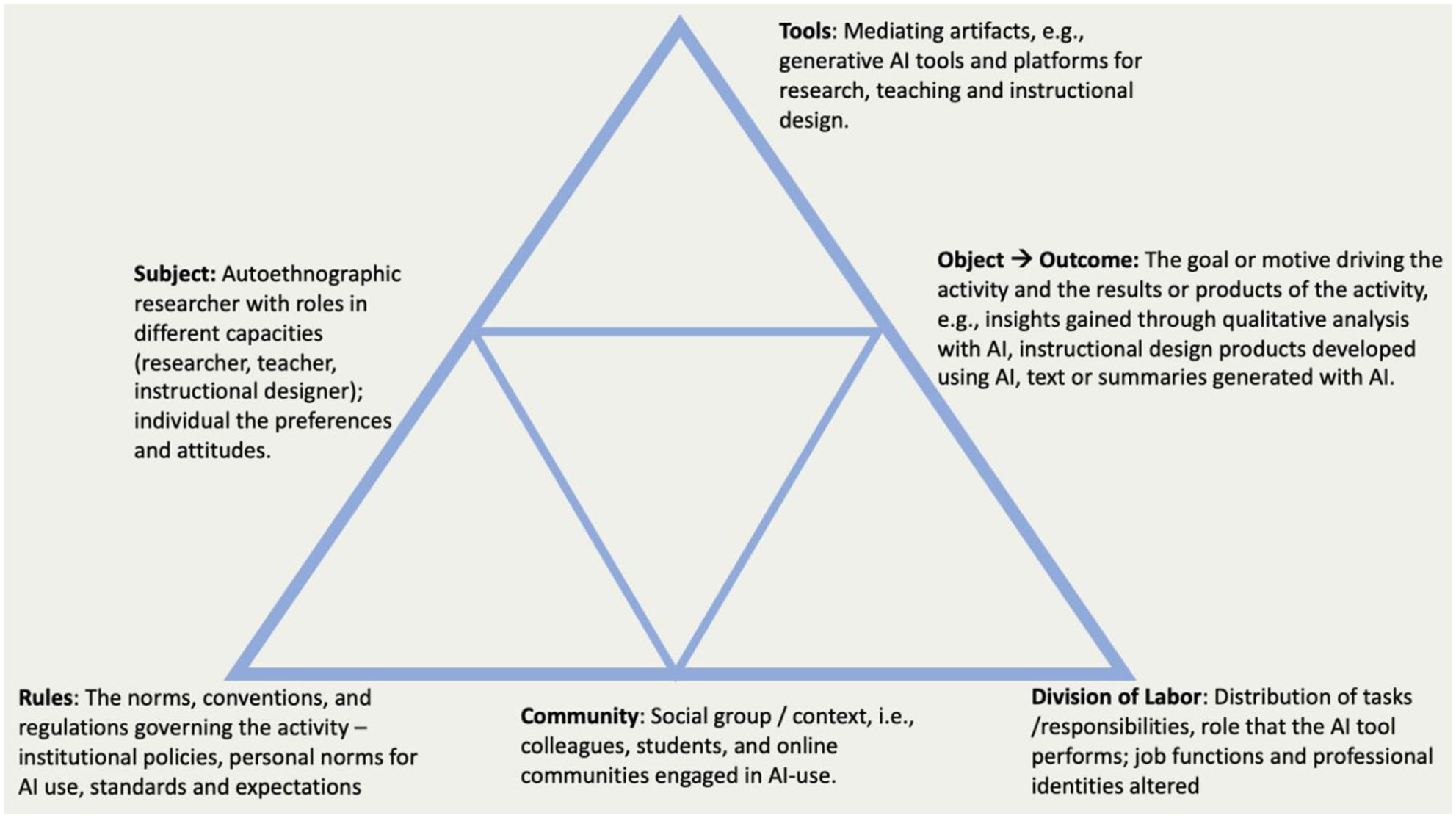

Autoethnography engages theory to make sense of how the narrated period is socio-historically situated (Pensoneau-Conway, 2023). The framework provides the structure for the analysis (cf. Figure 1). Activity theory is a framework and descriptive tool that is used in a variety of fields such as psychology, HCI (human–computer interaction), and education (Nardi, 1996). It provides a lens through which to understand human behaviors and actions within a socio-cultural context. The theory posits that human activity is a complex, socially situated phenomenon that involves the interaction of individuals with their environment and with each other through the use of tools (Kaptelinin & Nardi, 2006). Activity theory is a useful lens for understanding technology in context (cf. Mersand, 2021, on maker education; Karasavvidis, 2009, on teacher approaches to ICT). As a framework for understanding and analyzing human activities in the context of technology use, activity theory emphasizes that human actions are always situated within a larger system of collective activity, mediated by tools, and directed toward specific goals or objects.

Figure 1. Components of the Activity System Under Analysis.

Activity Theory recognizes that humans use various tools, technologies, and other “mediating artifacts” to interact with and transform their environment (Kaptelinin & Nardi, 2006). The digital transformations caused by the rise of generative AI can be understood as a deliberate coordination effort through the integration of various elements—tools, processes, artifacts, and communities—to achieve a desired outcome (AI as tool). However, Karanasios et al. (2021) discussed how advances in digital technology such as artificial intelligence offer new ways to learn about and direct human activity that are hidden from the subject, potentially shifting the role of technology from tool to subject (AI as subject).

Data Collection

The article reflects the research cycle from ideation to manuscript submission. The field noting period from May 14 to October 11 covered 150 days. With the exception of an unusual 3-week period of little to no media use, these were normal work weeks, interspersed with occasional days off and travel times that led to diminished interaction with social media and AI. The time period included 21 weekends and four U.S. holidays. Ninety-two field notes and 93 queries (often in different tools) were documented during this time.

Both field notes and AI queries/output were saved in Apple Notes. This note-taking app is available across Apple’s ecosystem, including iPhone, iPad, and Mac. The application integrates with iCloud, ensuring that notes are synced across all devices, making it a convenient tool for field noting.

Data Analysis

During open coding, I used AI tools much like a human discussion partner to develop and refine the coding schemes for field notes and AI queries. During axial coding, the AI output required detailed supervision and editing, and much of the work remained a manual task. Field notes and AI-generated outputs were coded and analyzed separately:

The field notes depict how the research article evolved over time, and what role AI tools played in the different stages. Furthermore, the field notes document my engagement with the professional discourse on AI through publications, conferences and social media, as well as the work products I created or edited with generative AI as an educator and instructional designer. Finally, the field notes offer insights into my reactions and thoughts, failed and successful queries, excitement and frustration around AI. Leveraging AI tools, primarily ChatGPT, I organized the notes into data tables with note ID, date, summary, and codes. The coding was completed mostly manually (cf. Figure 2; Supplemental Appendix I).
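The table structure described above (note ID, date, summary, codes) can be illustrated with a short sketch. This is an assumption-laden illustration and not the procedure used in the study, where coding was mostly manual: the `FieldNote` class, the `code_frequencies` helper, and the three sample rows with their code labels are hypothetical. It shows how frequencies can be tallied when a single note carries several codes.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class FieldNote:
    """One row of a field-note table: ID, date, summary, and assigned codes."""
    note_id: int
    date: str  # ISO date string, e.g. "2024-05-14"
    summary: str
    codes: list = field(default_factory=list)


def code_frequencies(notes):
    """Count in how many notes each code appears (a note may carry several codes)."""
    counts = Counter()
    for note in notes:
        # set() ensures a code listed twice on the same note is counted once
        counts.update(set(note.codes))
    return counts


# Hypothetical rows for illustration only; not taken from the study's corpus.
notes = [
    FieldNote(1, "2024-05-14", "Placeholder summary A", ["research", "discourse"]),
    FieldNote(2, "2024-05-20", "Placeholder summary B", ["teaching"]),
    FieldNote(3, "2024-06-02", "Placeholder summary C", ["research", "teaching"]),
]

freq = code_frequencies(notes)
for code, n in freq.most_common():
    print(f"{code}: {n} of {len(notes)} notes")
```

Because notes can hold multiple codes, the frequencies may sum to more than the number of notes, which is why each count is best read as "notes carrying this code" rather than as a share of a fixed total.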

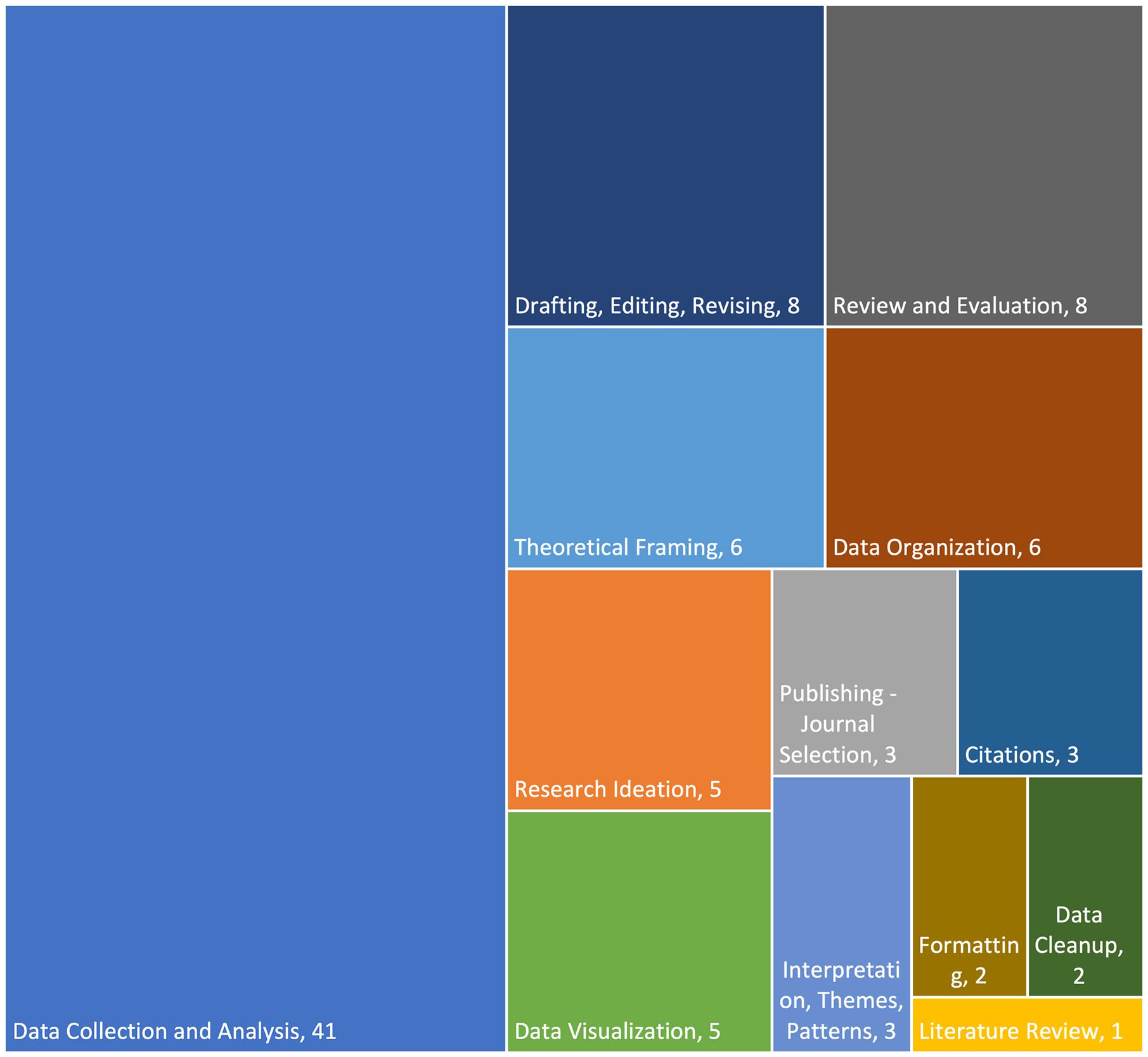

The query/output corpus is limited to queries and respective AI output that relate directly to the research and writing process for the article. Therefore, the analysis focuses on which research phases and functions the queries address, which tools were used, and how queries are linked. The AI query corpus was organized into a data table using Google Gemini Pro 1.5 and ChatGPT for suggesting a structure and applying it to the corpus. I then manually edited the table and coded each entry according to the research phases (cf. Figure 3).

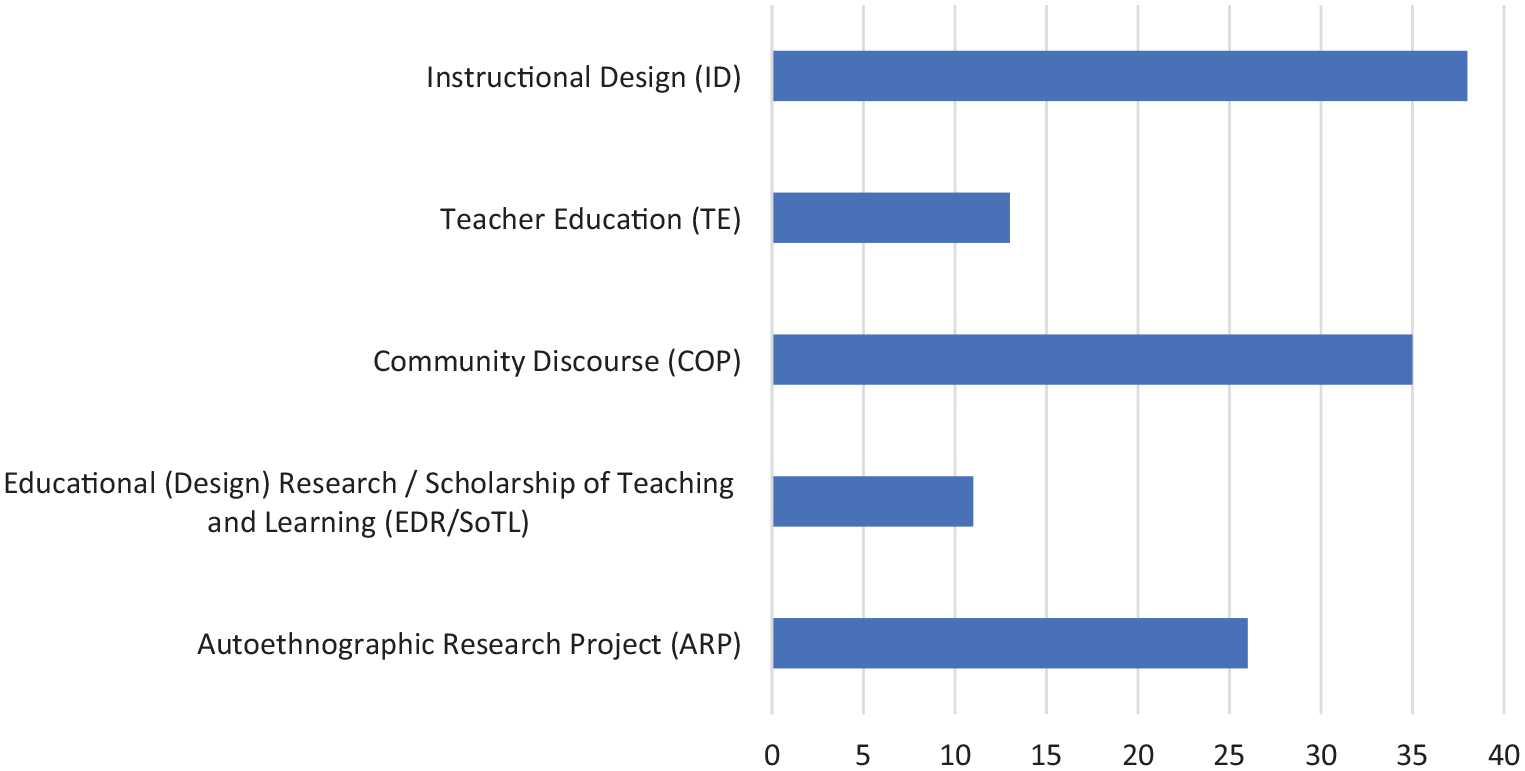

Figure 2. Field Note Coding Frequencies (Multiple Codes Per Note ID).

Figure 3. AI-queries in Relation to Research Phases.

Limitations

The act of field noting and saving queries can itself change everyday practice. I tried to guard against this by making the note taking as seamless and unobtrusive as possible and by integrating it with normal professional activities. I also decided from the start to save only the AI queries and outputs that directly relate to the writing of the article. Saving everything would have added such a burden to everyday AI usage that I could not have sustained the project for any meaningful period of time. Through the process of field noting, AI was potentially more top-of-mind than it would normally have been. The field notes are therefore potentially biased toward more frequent AI usage. In the context of the research project, I may have unconsciously avoided AI queries that I suspected to be unproductive, because documenting all prompts and outputs was cumbersome. The AI corpus is therefore potentially biased toward less frequent AI usage.

Transparency and Reproducibility

Using personal experience as empirical data enables intimate and critical exploration that captures nuances and evolving perspectives. The reflection connects the data to wider societal meanings and understandings. Subjective and not generalizable in nature, the methodological quality depends on reliability of documentation, transparency of process, richness of data, and depth of reflection. To enhance transparency, all AI queries and outputs related to the research project are documented as appendices and thus available for re-analysis (Supplemental Appendix II). While original field notes are excluded to protect privacy, field note summaries are available (Supplemental Appendix I).

Results

The observation period captured the research process from ideation to submission, using AI for activities such as brainstorming, feedback, drafting, literature search, data analysis, formatting, and editing. It also included a wealth of activities in instructional design and teacher education. During the observation period, AI tools were used for various tasks such as data structuring, content summarization, video production, video transcription, writing assistance, and research. In addition, several professional activities such as conferences, trainings, and workshops were centered on AI. The professional network played a significant role in shaping and challenging the emerging understanding of AI. More than one-third of the field notes document a form of engagement with AI debates and discourse by reading blog posts, reviewing social media content, listening to podcasts, attending conferences and talks, viewing recorded talks, attending trainings and workshops, and having in-person conversations on AI with colleagues on campus. Table 1 summarizes both usage for and impact on instructional design and teaching during the observation period. It documents a wide variety of activities that were altered by AI, and offers teachers and instructional designers ideas for potential use cases.

Table 1. Impact of Generative AI on Teaching, Instructional Design, and EdTech Research / Scholarship of Teaching and Learning (SoTL).

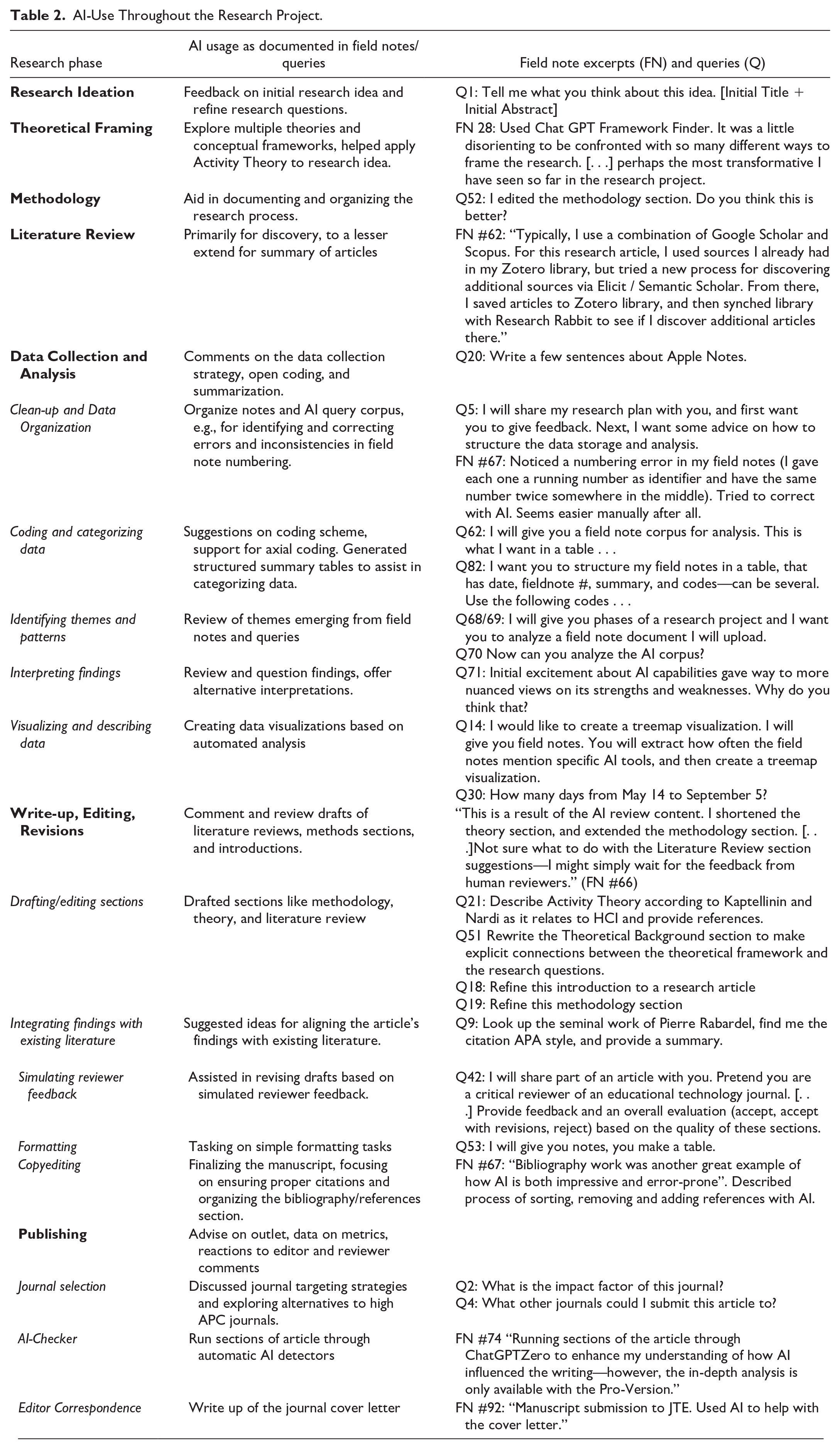

Generative AI significantly changed my writing habits and information behavior as a scholar, but not in a linear fashion. It introduced errors as easily as it corrected them, and proved to be both impressive and error-prone in higher-order thinking and automation tasks alike (cf. Table 2).

Table 2. AI-Use Throughout the Research Project.

Analysis in Activity Theory Framework

After the coding of the material, I mapped my observations onto the activity theory framework for further discussion and reflection.

Subject

The subject is not a passive recipient of prompt output, but actively modifies, adapts, and repurposes AI tools (design-in-use). Attitudes toward AI are both positive and negative, spanning excitement about AI’s potential to improve productivity and creativity in various professional activities as well as frustration about falsehoods, inaccuracies, over-editing, and prompt failure. Overall, the instrumentation scheme oscillates between using AI as a trustworthy collaborator and AI as a temperamental tool that requires careful management and coaxing.

It is insufficient to define AI tools simply as mediating artifacts, because they have the generative ability to produce new activities and outputs and to structure behavior beyond the subjects’ intentions (cf. Karanasios et al., 2021). Throughout the field notes, the perception of AI for both research and instructional design shifts frequently between AI as a tool and AI as a subject. Does generative AI reshape the research process in ways that other organizing principles (library shelves, catalogs, search engines) did not? In this study, AI tools helped search, summarize, and organize research articles and papers and replaced or altered the use of traditional catalogs. They also challenged notions of attribution and originality, chiefly when the writing of the author and the AI overlap in multiple rounds of queries and editing.

Rules and Norms

The rules and norms regarding AI use are not settled. The field notes and reflection reveal several conflicting views.

In academic writing, many scholars caution against using AI-generated content or emphasize the need for transparency in the use of such tools, for example, through citation of any AI-generated text and documentation of all AI usage. The field notes recount an occasion where I had the opposite reaction to the citation of AI: “Facilitator stressed several times that they used ChatGPT4o to create the summary and themes. This was odd to me, because it seemed that the results were solely from the AI tool without any human agency. It made me trust the summary less” (Fieldnote #25).

Having documented all AI usage for one single article, I find full transparency an impossible ask. All the APA guidelines in the world will not make authors reveal each and every use of generative AI: “Writers will co-credit the AI-tool with the same frequency as the synonyms finder in text-processing software” (Panke, 2024, p. 19). It is far more likely that we will experience a dramatic shift in the norms and practices of knowledge work. “Just as we no longer know how to sew our clothes, might we reach a point where not knowing how to write our own essays will not bother us?” (Yeralan & Lee, 2023, p. 111).

Normative concerns around authorship and voice are not limited to data analysis and academic writing. In course design, the organization struggled with the question of whether faculty should be allowed or encouraged to use AI-generated voices and characters, or whether this might diminish the human presence in instructional materials (Fieldnote #28). In course delivery, it proved difficult to give clear guidance to students. While students are not supposed to use generative AI in their writing, especially for learning activities such as discussions, it is apparent that some of them do. However, without reliable detection it is difficult to differentiate between original and AI-generated contributions, so that instructors potentially call out and correct only clumsy AI use by less experienced technology adopters (Fieldnote #80).

Tools

How does AI shift the tool ecology in a knowledge work setting? The main shift observable in the field notes is that AI either replaces or complements activities that would typically rely on Web searches with search engines. It also replaces some, but not all, e-learning authoring tools, in particular for microcontent, and changes the use of authoring tools as they incorporate GAI functionality. In the context of instrumental genesis, genres play a significant role in shaping how tools, such as generative AI, are appropriated and transformed into instruments within specific contexts. Genres act as mediating artifacts in the process of instrumental genesis by providing a framework within which tools are adapted and used (Spinuzzi, 2003). When users interact with a new tool, their prior knowledge of relevant genres informs how they appropriate the tool (Spinuzzi, 2003). For example, the subject in their professional role as instructional designer using AI to create online learning modules is guided by the genre of learning modules, which has established components like learning objectives, assessments, and instructional activities. The subject then draws on this genre knowledge to shape their queries to the AI, evaluate the output, and modify it to meet the genre standards.

During the research process, strategies of instrumentation emerged, including critical evaluation, cross-referencing across tools and multiple queries, and reliance on human expertise:

Use of multiple tools (e.g., ChatGPT, Claude, Gemini). Using the same or similar queries and comparing the output helped identify inconsistencies and potential biases (Fieldnote #18).

Fact-checking AI outputs made it possible to spot hallucinations and verify source material. In Fieldnote #3, I initially thought ChatGPT had hallucinated information, but then found it was actually in the source material.

Editing and refining AI-generated content. For instance, in Fieldnote #36, I note that while AI-generated content for a faculty orientation course was useful, it needed “a lot of editing.”

Reliance on prior knowledge and subject matter expertise to identify inaccuracies. In Fieldnote #28, I mention that ChatGPT’s output for instructions was “completely inaccurate,” but I was able to recognize this due to familiarity with the topic.

Rephrasing and refining queries. In field notes related to data analysis, the prompting strategy of providing additional context led to improved accuracy.

Community

The social context is critical because design-in-use and instrumental genesis are often collaborative processes. For example, the subject in their professional role as instructional designer frequently shared AI strategies with colleagues, received feedback on AI-generated content from peers, or explored ideas for new environments and tools that were shared in social networks. The community also shapes the evolving norms around AI use, influencing how it is integrated into daily practice. The community around generative AI spans local, regional and international networks and includes separate academic discourses (e.g., Web Science, Learning Sciences, Educational Technology). Communities include colleagues who are also exploring AI (e.g., through conferences, panels, and training sessions), organizational forums (committees, reading circles, project meetings) and social media (Facebook, Reddit, and LinkedIn). The sheer volume makes full participation challenging for teachers and instructional designers (“So many events around AI. Not possible to keep up,” Fieldnote #15).

Mishra et al. (2023) argued that GenAI’s generative and social nature distinguishes it from traditional digital tools, necessitating an expanded view of contextual knowledge to consider its broad societal and educational impact. This broader discourse is a constant undercurrent in the instructional design and teaching activities documented in the field notes. The interactions both online and offline reflect opinions and practices that vary widely, leading to divergent norms and expectations. The field notes reference multiple concerns from colleagues about AI’s role in education, such as how to handle AI’s biases in grading, detection issues, the impact of AI on curriculum design, the ethical implications of using tools that exploit low-wage labor, biased content and larger societal consequences.

Division of Labor

A central question in the field notes is whether AI is becoming an unreliable yet affordable “co-worker” (as suggested by Dron, 2023). AI takes over certain labor-intensive tasks, such as summarizing content, generating learning objectives, organizing transcripts, reformatting text or tables. It is likewise used in creative and evaluative tasks. In some cases, AI shifts the burden of low-level cognitive tasks (e.g., formatting, summarizing) and in other cases the tool is engaged in higher-order decision-making and quality control (reviewing and copyediting).

Ngwenyama et al. (2024) warned against a loss of expert authority through generative AI: “The world of occupations is becoming flat” (p. 130). Whether a less technocratically organized society is an inferior outcome obviously depends on one's values, but based on the experiment at hand, generative AI might have a polarizing effect in the opposite direction: It will make people with high expertise more productive as it accelerates tasks and optimizes output, and become a “master of mediocracy” (Sharples, 2024) for many others. My experiences align with the observations of DaCosta and Kinsell (2024): For skilled instructional designers, AI tools both save time and offer alternative viewpoints, thereby “enhancing depth and breadth of ID tasks” (DaCosta & Kinsell, 2024, p. 216).

The fieldnotes document AI usage over a wide variety of typically specialized instructional design tasks, such as creating documentation, creating video and multimedia, authoring quiz questions, and scripting content. This indicates shifts in the division of labor within instructional design teams that will challenge professional roles.

Object—Outcome

The object in activity theory represents the goal or motive. In the field notes, generative AI oscillates between three roles: the object of the researcher’s inquiry (studying how AI influences teaching, research, and instructional design, using AI for the sake of learning about it), the mediating tool with which the researcher accomplishes tasks, and another subject that becomes a collaborative partner in the research process. Tables 1 and 2 offer a detailed account of the different objects of activity systems that incorporated AI. Across the domains of writing, data analysis, instructional design and teaching, I found it hard to predict which tasks generative AI will excel at, and which queries, no matter how often I rephrase them, will be a waste of time. This speaks to a general problem that Bozkurt and Sharma (2024) criticized as the lack of transparency and trustworthiness of generative AI.

An important concern regarding the outcomes of AI usage is the contradiction between use value and exchange value. Since generative AI tools are ultimately driven by increasing user engagement and perceived value, furthering the conversation is the agenda that dictates the user experience. Whenever AI became a partner in the research process, it seldom discouraged me from an idea, questioned a line of inquiry or corrected misconceptions. Consequently, I never gained a sense of “enough” AI-input. Instead, there was a fear of missing out if AI was not used, and a sense of human inadequacy if the tool did not produce the anticipated efficacy or quality.

Discussion

How did the use of generative AI alter the character of the qualitative research process? Did the alien research partner insert itself into my interpretations and analysis? The answer is mixed. While I certainly took advice and suggestions at several stages of the research project, this paper is not written by AI, and the data analysis was not conducted by AI. Even with guidance and layered prompting, AI could not be relied upon for autonomous data analysis or automated analysis of pre-defined codes without adding hallucinations. For example, different AI-tools stated an attitude change over time apparent in the field notes or corpus, or substantive discussions of AI-ethics and bias. In reality, these topics were certainly present, but far from central. The speed and scalability of analysis that became apparent in the analysis process are in line with the observation by Perkins and Roe (2024b) that the advent of generative AI tools requires a rigorous re-evaluation of methodological standards and research integrity.

This research additionally aimed to map the shifting work routines of instructional designers and teacher educators, as influenced by social media, peer communication, research communities, organizational parameters, and individual information habits. The instructional design-related field notes demonstrate AI’s capacity to expedite routine work, allowing for faster production of learning materials and more efficient fulfillment of administrative tasks, such as updating faculty manuals, creating user documentation or generating transcripts and media descriptions for accessibility. While AI has expedited teaching and instructional design tasks, it has also raised expectations of what is achievable and added significant training and professional development needs.

Situating my experiences in the broader societal context, I observed that in organizations and professional communities AI usage is accompanied by mixed messages and conflicting expectations. For example, instructional designers and educators are encouraged to use AI as an assistant, helping them deliver more for students, yet there remains significant insecurity about its role. Teachers are at times cautioned against or even reprimanded for using AI tools, despite the emphasis on the time-saving benefits these tools can offer. This inconsistency reflects broader societal uncertainties. Educators navigate a landscape where AI is both celebrated for its efficiency and questioned for its potential to undermine traditional pedagogical practices.

A revelation on the individual level was what I do not use generative AI for. Despite frequent use in a variety of work contexts, I use AI seldom or never in my private life where most of my output is not judged by its efficacy and efficiency, but by the joy in creating it. If teachers want to strongly discourage the use of GAI in their course work, centering making and maker pedagogy could potentially be an effective avenue: “We need to know when we value the act of producing more than having a product” (Panke, 2024, p. 19).

Conclusions and Outlook

This exploration of how AI mediates research and instructional design practices offers an accessible and relatable ethnographic narrative to educators who may share similar concerns or experiences. This reflective and self-referential piece takes a spin down the AI rabbit hole that documents what is instead of what should be. It is decidedly not a collection of “best practices” or a definitive guide to effective or ethical use. Instead of normative signposting, the article offers teacher educators a foil upon which they can project their own research and writing practices as well as discuss and shape the expectations that they have for student work. Instructional designers and preservice teachers will find practical ideas interwoven with theoretical reflections that suggest that generative AI is a case of design-in-use and instrumental genesis with the user co-designing the product and turning it into an instrument. The takeaway is not the clever use of specific tools nor the capabilities of AI technology as a whole, but the act of technology adaptation into work routines, both collectively and individually. The contribution to educational technology research lies within a novel methodological angle of leaning into AI for qualitative field work, the thorough documentation of usage during a particular point in time and in a specific tool ecosystem that creates a corpus for re-analysis and comparison, and, finally, the broader theoretical frameworks that undergird this work.

What are potential follow-up activities that address the limitations of the project at hand? The article exemplifies a low-tech, non-strenuous, unobtrusive protocol that could be replicated for a shorter period of time with a different sample and populations such as K–12 school teachers or preservice teachers to offer diverse professional perspectives.

The article also has a couple of blind spots:

Privacy is a major concern surrounding generative AI use. It would be valuable and interesting to analyze the user agreements and data collection for each tool to gain a deeper understanding of what data traces are left with each round of analysis.

AI is more than generative AI. As Hovy (2024) pointed out: “AI models manage our emails, traffic, hiring, search, and suggest shows to binge-watch.” AI shapes our experience of the web and digital media, including learning technologies. These less obvious daily interactions with AI have not been covered in this research and are generally under-discussed in the present discourse.

Given the limited scope (an individual narrative over a limited time period), there are no immediate, strong policy recommendations that follow from this work. That said, one issue that urgently needs debate is the conflicting normative expectations for teachers and instructional designers that are documented through this field work. These groups face the dilemma of incongruous societal and organizational expectations for their professions, where to be excellent means to be an AI-expert, and, at the same time, an extremely cautious AI-user, who only after thorough vetting will use institutionally sanctioned prompts and tools, avoiding “shadow AI” (Crompton, 2024).

The intended takeaway from this article for educators is a story of empowerment. While the tools we use shape the way we think, the reverse is likewise true. The way we think, and our objectives for usage, shape the tool. It was exciting to see how the use of AI technologies can supercharge collaborations, give students more opportunities to express themselves and, last but not least, free up time to spend thinking and conceptualizing not just anytime and anywhere, but detached from technology.

Supplemental Material

sj-txt-1-jte-10.1177_00224871251325065 – Supplemental material for How Can (A)I Research This? An Autoethnographic Exploration of Generative AI in Research, Teaching and Instructional Design

Supplemental material, sj-txt-1-jte-10.1177_00224871251325065 for How Can (A)I Research This? An Autoethnographic Exploration of Generative AI in Research, Teaching and Instructional Design by Stefanie Panke in Journal of Teacher Education

Supplemental Material

sj-xlsx-1-jte-10.1177_00224871251325065 – Supplemental material for How Can (A)I Research This? An Autoethnographic Exploration of Generative AI in Research, Teaching and Instructional Design

Supplemental material, sj-xlsx-1-jte-10.1177_00224871251325065 for How Can (A)I Research This? An Autoethnographic Exploration of Generative AI in Research, Teaching and Instructional Design by Stefanie Panke in Journal of Teacher Education

Footnotes

Author’s Note

The AI-corpus (Supplemental Appendix II) lists all queries and output related to the research ideation, theoretical framing, methodology, literature review, data analysis, write-up, editing, revising, and publishing of this article.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Supplemental Material

Supplemental material for this article is available online.
