Abstract

Intermediary organizations’ (IOs) involvement in teacher education policies has grown in recent years. Apart from advocating for the introduction of alternative routes into the teaching profession, IOs have facilitated the spread of outcomes-based teacher preparation accountability. While previous studies examined the neoliberal market-based logic of their proposals, less is known about technocracy as a discourse informing teacher education redesign. To address this gap, I use the tools of critical policy and critical discourse analysis to examine how IOs advocated for and participated in the construction of outcomes-based accountability regimes. This analysis captures key elements of technocracy, such as the depoliticization of social issues, scientism, and the dismissal of opposition on which accountability regimes are built. By attending to the assumptions and inherent contradictions of technocratic discourses, I shed light on the ways in which accountability regimes dismiss opposition and seek to refashion governance structures in teacher education.

In teacher education policy, intermediary organizations (IOs)—non-profits, for-profit providers, research institutes, and think tanks—have supported deregulation and the expansion of alternative routes into teaching, such as Teach for America and the Relay Graduate School of Education (Kretchmar et al., 2018; Lubienski & Brewer, 2019; Zeichner & Conklin, 2016; Zeichner & Peña-Sandoval, 2015). Yet, IOs have also been instrumental in promoting, introducing, and constructing accountability systems that seek to redesign various teacher education programs (Floden, 2017; Lewis & Young, 2013). Studies examining these influences have focused on the accountability proposals of different organizations, such as the National Council on Teacher Quality (NCTQ) and the Council for Accreditation of Educator Preparation (CAEP) (Cochran-Smith et al., 2018; Floden, 2017). The focus on differences, however, has obscured how IOs have pursued overlapping priorities in ways that allowed their messages to resemble consensus and gain traction in policymaking communities (Aydarova, 2022b; Galey-Horn et al., 2020).

To examine the discourses of teacher preparation accountability promoted by IOs over the span of the last decade, I use the theoretical framework of technocracy developed in political science (Centeno, 1993; Easterly, 2013; Fischer, 1990; Putnam, 1977). The goal of my study was to analyze the discourses that IOs utilized to support the construction of outcomes-based accountability systems in teacher preparation. The analysis of IO policy activities and discourses presented in this article captures the salient features of technocracy as well as its inherent contradictions. By shedding light on the assumptions as well as the rationalizations of technocratic approaches, I seek to trouble discourses that have come to dominate teacher education policies in the last decade.

Literature Review

Calls to hold teacher preparation accountable have an extensive history (Imig & Imig, 2006; Wilson & Youngs, 2005). In the 1970s, teacher effectiveness studies used a process–product approach to examine which teacher actions resulted in greater learning gains (Labaree, 2004). While studies conducted in this vein found correlations between some instructional actions and students’ academic achievement (Lavigne & Good, 2019), they also showed that student learning was affected by many more factors than teachers’ actions alone (Lagemann, 2000). Throughout the 1990s, studies in econometrics began to measure teacher effectiveness using outcomes-based measures, such as value-added or growth models (Hanushek & Rivkin, 2004). This line of work gained more policy prominence with the introduction of No Child Left Behind and Race to the Top, when teacher effectiveness came to dominate policy and research agendas again. This time, however, teacher effectiveness was narrowly defined as improvement of test scores and teacher education emerged as a policy problem (Cochran-Smith, 2005). Over time, proposals for teacher education accountability began to include several measures of teacher effectiveness: K-12 students’ academic growth; teachers’ performance on classroom observations; surveys of graduates, graduates’ employers, and K-12 students (Brabeck et al., 2016); as well as candidates’ scores on performance assessments, such as the Educative Teacher Performance Assessment (edTPA) (Darling-Hammond, 2020).

Several research studies examined how these measures could be used to evaluate program quality. Some studies showed that program graduates’ value-added scores help distinguish between effective and ineffective preparation programs if small programs are eliminated from the sample (Plecki et al., 2012). According to Bastian et al. (2018), teacher evaluation ratings can help assess the quality of the programs from which teachers graduated if school contexts are taken into account. Their study suggested that teacher preparation programs could use individual-level data-sharing systems to assess graduates’ performance in the classroom for program improvement. For useful inferences to emerge from the data, however, robust data systems connecting program features and teachers’ work outcomes are necessary—an aspiration that has not yet fully materialized in most contexts (Goldhaber, 2019).

At the same time, researchers expressed caution about the limitations of certain types of evidence (i.e., value-added scores), the contradictory goals of evaluations pursued by different entities, and potential unintended consequences of high-stakes evaluations (Feuer et al., 2013; Ginsberg & Kingston, 2014). Furthermore, in their analysis of accountability policies promoted by CAEP, NCTQ, and the federal government, Cochran-Smith et al. (2018) noted how these proposals were rooted in neoliberal market-based ideology. The authors observed that these accountability tools deprofessionalized teachers and fostered a “thin equity” approach that failed to “acknowledge the complex and intersecting historical, economic, and social systems that create inequalities in access to teacher quality in the first place” (Cochran-Smith et al., 2018, p. 30). Jenlink (2017) also noted that “technical-managerial accountability” promoted by CAEP and other IOs undermined democracy. Bullough (2016) attended to the simultaneous rise of accountability for university-based teacher preparation and the deregulation of alternative routes, concluding that through the introduction of these measures “teacher educators have lost much of their control over teacher education” (p. 73).

In debates over the potential benefits or drawbacks of accountability systems, perspectives of IO policy analysts have been largely missing. The lack of attention to their position on accountability is unfortunate because IOs have become de facto policymakers (Scott et al., 2017). Through the work of their networks and advocacy coalitions, IO policy actors facilitate policy convergence (Ferrare & Setari, 2018; Galey-Horn et al., 2020) toward a set of solutions rooted in the principles of neoliberal managerial technocracy (Kretchmar & Zeichner, 2016; Trujillo, 2014). While IO influences on teacher education policy have been recognized (Imig et al., 2018; Wiseman, 2012), their use of technocratic discourses in the construction of accountability regimes in teacher education has been largely overlooked. Through this project, I interrogate the assumptions and discursive moves embedded in IO proposals for teacher preparation accountability and show how technocracy operates as “a regime of truth,” which Foucault (1980) defined as

the types of discourse which it accepts and makes function as true; the mechanisms and instances which enable one to distinguish true and false statements, the means by which each is sanctioned; the techniques and procedures accorded value in the acquisition of truth; the status of those who are charged with saying what counts as true. (p. 131)

Thus, this study explores the following research questions:

Theoretical Framework

In the context of policymaking and policy implementation, Fischer (1990) defined technocracy as “a system of governance in which technically trained experts rule by virtue of their specialized knowledge and position in dominant political and economic institutions” (p. 17). Traditionally, the theory of technocracy was applied to state bureaucracies and institutions (Putnam, 1977; Ribbhagen, 2011). The emergence of networked neoliberal governance (Ball & Junemann, 2012), however, has allowed “technically trained experts”—such as IO policy analysts—to steer educational policies (Scott et al., 2017). I apply the term “technocrat” to experts affiliated with IOs not only because that is the term used by those who closely observe their activities, but also because this is the term they use themselves (see Pondiscio, 2019).

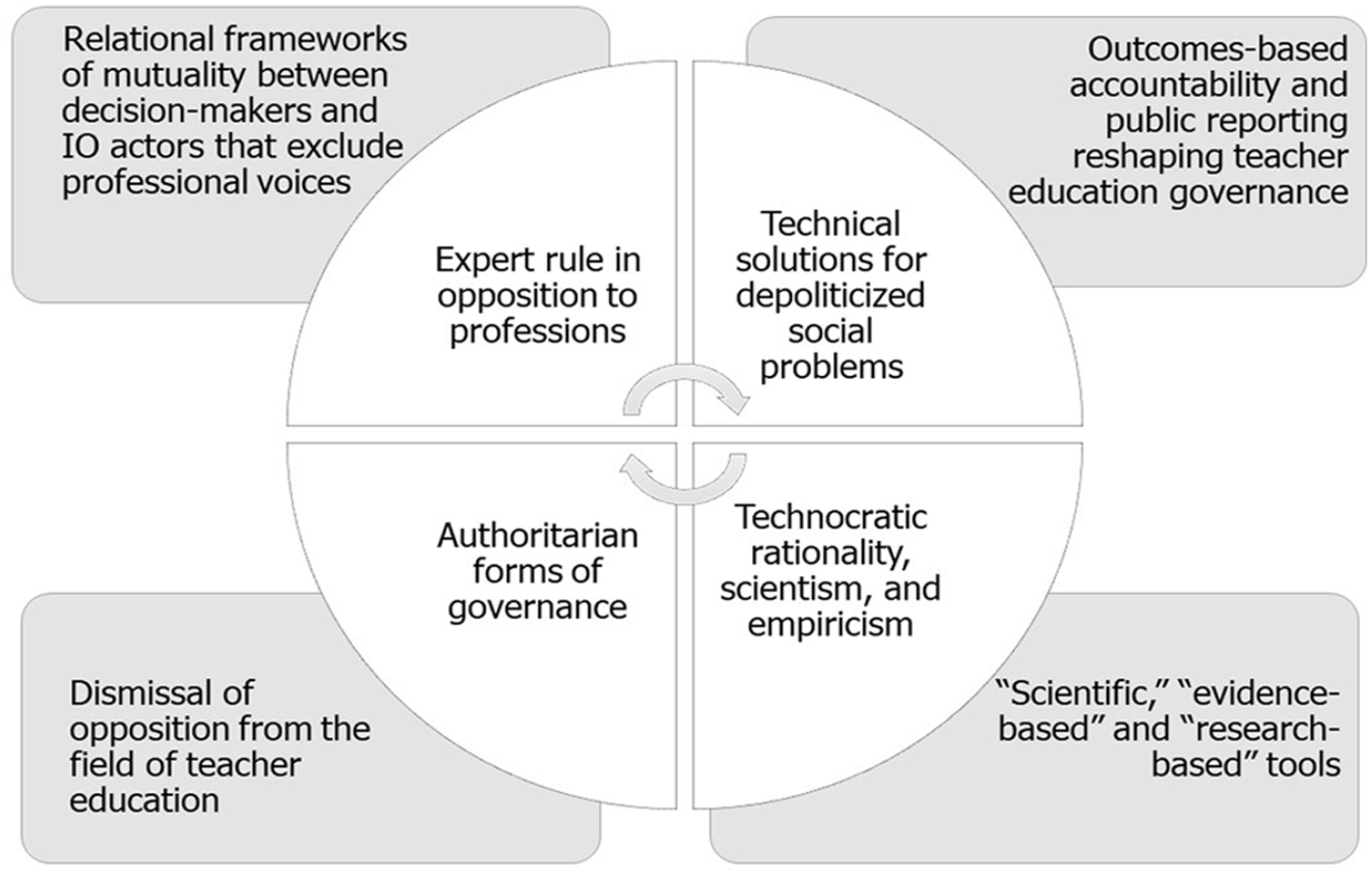

For the analysis presented in this article, four distinguishing features of technocracy are important to consider (Figure 1). First, technocrats are often experts who rule in opposition to professions, treating professional expertise “as a hindrance—structural and ideological—to genuine social and political reform” (Fischer, 1990, p. 8). As technocrats rely on advanced technology and task complexity, they exclude those who are “not fluent in expert languages” (Centeno, 1993, p. 318), thus concentrating power in their own hands. In educational contexts, this means that the introduction of complex data systems not only increases the reliance of educational institutions on external experts but also excludes educators from deliberations on the desirability or usefulness of these systems in the first place. Regarded as not well-versed in data management and analysis, educators who raise concerns about overreliance on data are perceived as “adherents of a normative order with no legitimate claim for attention” (Centeno, 1993, p. 327).

Figure 1. Alignment Between Technocratic Discourses and Accountability Regimes.

Second, technocrats seek technical solutions for political and socioeconomic problems (Centeno, 1993). These solutions represent “technostructure’s instrumental orientation” that emphasizes “the calculation of efficient means . . . to given ends” (Fischer, 1990, p. 216), where formulaic approaches are deployed to manipulate variables to control outcomes. As Ribbhagen (2011, p. 23) observed, “management by objectives, performance management, and New Public Management” reflect the technocratic control of social institutions. Social problems become depoliticized: issues of injustice and inequality are side-stepped, the interactions between different aspects of social processes are oversimplified, and social actors’ activities are decontextualized (Easterly, 2013; Fischer, 1990).

Third, in their search for solutions, technocrats seek to solve problems in a “rational scientific manner” (Putnam, 1977, p. 387). Evidence-based and data-driven decision-making become the coin of the realm in which formulas and calculations eliminate other forms of knowing (Easterly, 2013; Ribbhagen, 2011; Scott, 1998; Trujillo, 2014). Scientism and empiricism replace deliberations about agendas, goals, and values with allegedly “value-free, objective criteria for making decisions” (Centeno, 1993, p. 311). Yet the criteria technocrats use are not completely value-free, despite their claims, as they prioritize cost-benefit analyses in ways that make “efficient and effective utilization of scarce resources [. . .] the primary decision criteria” (Fischer, 1990, p. 24).

Finally, technocrats dismiss opposition and rely on undemocratic decision-making. Because only technocratic experts are seen as authoritative sources of knowledge necessary for taking action (Centeno, 1993; Easterly, 2013; Fischer, 1990), time spent on debates or deliberations is perceived as a loss. Empirical studies of technocracy have shown that it quashes dissent and emerges as an authoritarian form of governance (Centeno, 1993; Fischer, 1990; Putnam, 1977). This approach to decision-making aligns with the vision pursued by the corporate educational reform movement and venture philanthropies that have used technocratic approaches to frame their policy interventions (Trujillo, 2014; Williamson, 2018).

Overall, technocracy operates as a regime of truth (Foucault, 1980) by defining what constitutes a problem and how it should be solved, along with setting the parameters of what is knowable, how it should be measured, and who should have the power to do it. The theory of technocracy offers a useful conceptual lens for understanding IO policy actors’ role as technocratic experts and for interrogating technocratic discourses in the construction of accountability regimes.

Method

In his analysis of technocracy, Fischer (1990) noted that “the critique of technocracy must focus more on implicit assumptions” (p. 20). For this reason, I conceptualized this study in the tradition of critical policy analysis (CPA), which approaches policy as an instrument of power and examines how it operates as “a regime of truth” (Ball, 2015; Foucault, 1980). Organically connected to CPA is critical discourse analysis (CDA), which affords an opportunity to critically interrogate assumptions underpinning social practices and examine discourse “as a way of construing aspects of the world associated with a particular social perspective” (N. Fairclough, 2013, p. 179). Although CDA has been critiqued for its focus on linguistic features and available discourses rather than their absences (Blommaert, 2005), it has become indispensable for understanding global neoliberalism and managerial technocracy (Johnstone, 2017).

Researcher Positionality

I began following external actors’ involvement in the development of teacher education policies when NCTQ rankings came out in 2013. As debates about federal regulations for teacher preparation were raging between 2014 and 2016, I started exploring behind-the-scenes activity that informed these policies and their potential impact on teacher education (Aydarova & Berliner, 2018). It soon became clear that university-based teacher education is increasingly regulated, monitored, and controlled by non-profit organizations and research think tanks (Cochran-Smith et al., 2018). Because of my professional commitments to anti-racist, socially just, and inclusive pedagogies that IO policy technocrats often dismiss (Aydarova, 2021), I was compelled to investigate their policy influences and value orientations through this project.

Study Design

This study is part of a larger project that examined how IOs construct, circulate, and disseminate knowledge for teacher education policies in the United States (Aydarova, 2021, 2022b). Data collection proceeded through two stages. During the first stage, I collected IO policy texts and artifacts that focused on teacher education policies and reforms between 1998 and 2018. Because IOs’ publications are considered gray literature, many of them are not found in traditional academic databases and require manual searches of IO websites.

As I collected artifacts and analyzed IO policy activities, I noticed that policy actors from a subset of these organizations often formed coalitions to advance their proposals. As I demonstrated elsewhere (Aydarova, 2021, 2022b), actors from these organizations operated as a “flex net” (Wedel, 2009)—a policy network driven by shared theoretical commitments, advocating for similar measures, and pooling together resources to advance a common agenda. Among them were such organizations as the Council of Chief State School Officers (CCSSO), Teacher Preparation Analytics (TPA), the New Teacher Project (TNTP), Deans for Impact (DFI), NCTQ, Bellwether Education Partners (BEP), Data Quality Campaign (DQC), and several others.

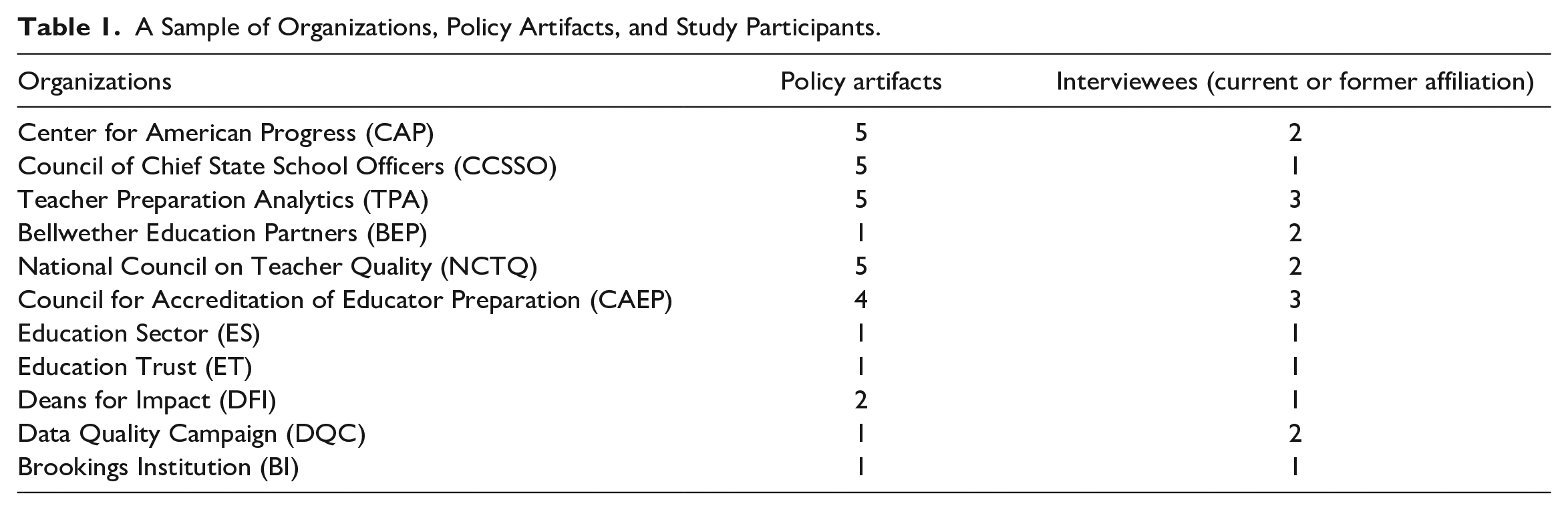

From the overall dataset, I chose 50 policy artifacts—primarily policy reports—that focused on proposals for holding teacher preparation accountable for various outcomes (for the full list, see the digital supplement). I focused on policy reports because they provided recommendations for how accountability systems should be designed and often influenced how teacher education policies were conceptualized. The criteria for inclusion in the corpus were as follows: (a) policy artifacts had to be produced by non-profit organizations, think tanks, or research institutes and (b) policy artifacts had to focus on teacher preparation and discuss accountability at length. I excluded from my sample proposals that focused exclusively on teacher compensation, teacher tenure, teacher evaluations, or school accountability more broadly. Table 1 captures a sample of organizations and their reports.

Table 1. A Sample of Organizations, Policy Artifacts, and Study Participants.

I used a bibliographic analysis of references, citations, and endnotes to locate additional sources for the database. Because IO research production and policy advocacy have been previously described as an “echo chamber,” in which works of like-minded actors are regularly referenced (Goldie et al., 2014; Zeichner & Conklin, 2016), this step allowed me to check my assumptions about the flex net membership and ensure that the corpus incorporated the artifacts deemed important for the advancement of accountability regimes by the IO policy community.

The second stage of data collection involved 16 ethnographic interviews (Schensul et al., 1999) with IO experts who produced teacher preparation accountability proposals (August to December 2019). In order “to research meaning-making [and] to look at . . . how texts practically figure in particular areas of social life” (N. Fairclough, 2003, p. 15), I used purposeful sampling and invited the authors of the reports on teacher preparation accountability to participate in my study (Table 1). Interviews focused on IO policy analysts’ perspectives on problems in teacher education and their potential solutions, which invariably pointed to accountability reporting and data use for continuous improvement. During interviews, I also solicited recommendations for other policy actors whom I should interview. Based on these suggestions, I learned whom participants perceived as belonging to their networks, such as experts from other IOs, and whom they saw as the opposition, such as the team at the helm of the American Association of Colleges for Teacher Education (AACTE). I used these suggestions to expand my sample and incorporate more perspectives into my analysis, such as adding interviews with CAEP and AACTE staff. The interviews were conducted in person in Washington, DC, over Zoom, or over the phone. They were audio-recorded and transcribed verbatim for analysis.

Data Analysis

The first step in my analysis involved repeated readings of interview transcripts and texts in my corpus. Initially, I applied in vivo coding (Saldaña, 2016). From the patterns I noticed in the in vivo codes, I turned to the theory of technocracy as it best illuminated the relationships between assumptions embedded in the proposals and the course of action IOs supported. Then, moving recursively (LeCompte & Schensul, 2013) between the theory of technocracy and the data, I revised and combined in vivo codes into a set of themes focusing on policy actors (“experts,” “policymakers,” “teacher educators”), policy activities (“advocacy,” “report writing,” “meeting with staffers,” “relationships with policymakers”), problem definitions (“low quality teacher preparation,” “achievement gap”), policy solutions (“public reporting of results,” “quality indicators,” “changed governance”), knowledge paradigms deployed in policy proposals (“empiricism,” “rational decision-making”), and so on. I used NVivo to apply those codes and themes to the data and created a matrix that captured patterns of arguments, positions, and relationships across IO texts (Miles & Huberman, 1994). Based on that matrix, I reconstructed how IO policy actors presented their arguments about accountability systems and how they legitimated their proposals by evoking empiricism and rationalism. While there was some variation in the elements that different actors prioritized, there were substantial overlaps in the priorities they pursued and the measures they promoted (Aydarova, 2021, 2022b).

During the final stage of analysis, I turned to CDA (N. Fairclough, 2013). Fairclough’s CDA tools were applied to the texts produced by IO policy analysts and the interview transcripts. N. Fairclough (2003) distinguishes between “internal” and “external” relations of texts (p. 36). Analysis of internal relations attends to linguistic elements within texts to excavate their embedded meanings. Focusing on IO actors’ descriptions of their policy activities, I used CDA tools to examine how technocracy as a discourse “establishes its particular set of subject positions, which those who operate within it are constrained to occupy” (N. Fairclough, 2001, p. 85). I paid attention to the vocabulary that was deployed to describe different participants or elevate the status of the IO proposals, pronoun usage to mark inclusion and exclusion (“we” vs. “they”), as well as evaluations of various actors’ responses to accountability measures. In addition, I examined nominalizations: instances when social processes are treated linguistically as entities that exist without agents guiding, directing, or influencing them (N. Fairclough, 2003). For example, the statement “societal inequality worsens” [ERN, 2018, p. 3] uses a semantic nominalization to describe a social change with a seeming absence of agents who create it. In this study, nominalizations were used to identify depoliticized problems addressed by technical solutions.

Analysis of external relations focuses on texts’ relationships to other texts (N. Fairclough, 1992, 2001, 2003). To trace external relations of texts, N. Fairclough (2003) developed CDA tools that utilize the concept of intertextuality. This term was coined by Kristeva (1969/1986) based on Bakhtin’s (1981) notion of dialogism, which attends to the ways in which utterances produced by various social actors respond to, appropriate parts of, and anticipate utterances of others. In this analysis, utterance can be any type of text—whether a turn in a conversation, a novel, a letter, a speech, or a news report. In policy studies, policy reports, policy texts, as well as policy analysts’ explanations of policy ideas can be conceptualized as utterances populated by voices of others (N. Fairclough, 1992, 2013).

Multiple passes through the interview transcripts and policy artifacts allowed me to build textual and discursive chains that connected echoes of ideas between different artifacts. Those intertextual links manifested themselves in direct borrowings of ideas, such as a persistent use of NCTQ reports to describe the “chaotic” nature of teacher education that had to be “reigned in” through accountability reforms. They became evident in shared assumptions that created the veneer of consensus around IO proposals, such as the claim that public reporting of performance data would produce “continuous improvement.” Intertextuality also became evident in dialogic links between texts where opponents’ voices were included into texts to address difference in positions (N. Fairclough, 2003). Intertextual analysis became particularly important because prior research on the powerful has shown the importance of examining the discrepancies between what policy actors present publicly as official truths and how they describe various problems privately (Aydarova, 2019, 2022a; Walford, 1994). To capture these discrepancies, I utilized the CDA concept of rationalization that addresses the epistemic problem where speakers reveal in private conversations how their publicly espoused positions lack evidentiary support (I. Fairclough & Fairclough, 2012). This required establishing intertextual links between policy actors’ claims in policy reports that promoted accountability systems and interview segments that refracted the meanings of those claims.

Rigor and Trustworthiness

I worked to establish the trustworthiness of the study through a prolonged engagement (Lincoln & Guba, 1986) with IO policy activities (January 2016–December 2021). To ensure the rigor of the study, I deployed several methodological approaches for data collection and analysis, such as interviews with IO actors, ethnographic observations of policy events organized by IOs, as well as multi-step analysis of policy texts, reports, and ethnographic data (Marshall & Rossman, 2016). I wrote analytic memos and kept a research journal as I chronicled and tabulated the discourse analysis process (Greckhamer & Cilesiz, 2014).

Findings

I use the framework of technocracy to organize my findings. I first describe IO actors’ role as technocratic experts and how their policy activities contributed to the emergence of accountability regimes. Next, I present how IO policy analysts utilized technocratic discourses by depoliticizing social problems, offering technical solutions, and dismissing opposition.

Expert Rule and Exclusion of Professional Voices

The network of IOs that were influential in shaping discourses of teacher preparation accountability included such organizations as the Center for American Progress (CAP), CCSSO, NCTQ, CAEP, TNTP, DFI, and several others (Aydarova, 2022b). Working in networks or coalitions (e.g., #TeachStrong or CCSSO’s Network for Transforming Educator Preparation), IO policy actors positioned themselves as experts seeking to influence policy through relational proximity to policymakers.

In constructing their positions as experts on education and teacher education policy, IO analysts cast themselves as “people that [policymakers] think are credible and know some things that they ought to pay attention to” [Interview 1]. The position of “credible” experts afforded mutuality in relationships with policymakers. Relational modality among IO analysts and policymakers was constructed through clauses denoting two-directional actions despite potential power asymmetries between them (N. Fairclough, 2001). For instance, IO analysts share their reports and research findings with congressional staff; in turn, when congressional staff work on an issue related to the work that IOs have done, they reach out to IO analysts seeking their input into policy proposals they develop:

We’ll usually do a big send to relevant policymakers over email of papers that we think folks will be interested in. Sometimes members of Congress or other policymakers will reach out to us if they’ve seen something and they want to ask questions or they know we’ve worked on a certain issue and they are interested in writing a piece of legislation on it. Or even just getting resources. Because sometimes they’re trying to brief their boss or prepare for a hearing or something like that. So, they reach out to us, and sometimes we reach out to them and ask for meetings. [Interview 3]

During interviews, several analysts noted that the emergence of teacher preparation federal regulations as well as the shift toward outcomes-based reporting emerged out of this mutual exchange between IOs and state actors. For example, one of the former Education Sector (ES) affiliates shared that he had received a request for a proposal for a teacher preparation accountability system:

Department of Ed was working on teacher prep and they wanted good ideas. So, they reached out to us and said, “Why don’t you put your ideas down on paper?” And so we did as a team, we sat down and we wrote it pretty quick. We championed some of the outcomes-based reporting, which subsequently became that regulation. [Interview 2]

Similarly, when the CAP commissioned a report on teacher preparation accountability, Department of Education (DOE) staff attended its release [CAP, 2010] and followed up with requests for more information. DOE’s teacher preparation regulations proposals echoed the measures that were discussed in the report and in its public release [CAP Video, 2010].

During discussions of state-level policymaking where most teacher education policies are shaped, power imbalances shifted toward IO representatives. Of the national level IOs, NCTQ was described as one of the most influential organizations that established relationships with policymakers across various states. NCTQ experts explained their role in the following way:

We try to put to [the states] best practices. It’s one on one. We never go to a state, they have to come to us for help and advice, and we do that a lot. There are very few states we haven’t worked with at this point. There are states that are grappling with a teacher prep policy. They want to run language by us, or they want to ask us just advice on how to do something better. It’s often a one-time phone call, sometimes it’s more extensive, they ask us to sit on a committee, but that’s far less frequent. [Interview 11]

The verbs in this quote point to an asymmetrical relationship where NCTQ experts were givers of expertise that had to be actively pursued by state policymakers. By evoking a hierarchical arrangement, IO analysts positioned themselves as the expert authority on teacher education policies. At the same time, the relationship that emerged was still marked with a degree of mutuality (“we work with states”).

Philanthropic organizations facilitated relational connections between IOs and policymakers. The Gates and Schusterman Foundations were often discussed as most involved in teacher preparation accountability and redesign beyond funding projects:

[IOs] publish reports and white papers, they hold conferences; congressional staff and state staff are invited to those meetings. A lot of big foundations now have a person who’s assigned to a state. They might not live in the state, but for example, in the states where the Gates Foundation funds work, somebody on the Gates Foundation team is like the main [person] to be in touch with, say the superintendent, or the deputy superintendent, or the president of the university; to share information, to answer questions, so they circulate that way. They frequently give briefings for congressional staff on issues that they’re involved in. [Interview 1]

This quote illustrates that philanthropic organizations disseminated IO reports and proposals among state-level actors. While the direction of relationships went from IOs to staff of the philanthropic organizations and then to state actors, verbs denoted inclusive relationships where “state staff are invited,” information is “shared,” questions are “answered,” and ideas “circulate.”

These relationships of mutuality also became evident in pronoun use. For example, in a CAP (2010) report, “we” was used to include policymakers and IO analysts: “We need to align producers and employers through incentives, rewards, and better public information about the problem” [emphasis added, p. 17]. In contrast, in contexts where teacher education programs were discussed “they” emerged: “Even when teacher preparation programs are able to measure teacher effectiveness, they need to figure out how teachers get these results” [emphasis added, p. 14]. The contrasts in pronoun usage revealed how relational boundaries were drawn (N. Fairclough, 2001)—IO actors and decision-makers belonged to the inner circle, whereas teacher educators were positioned outside of it.

This boundary-drawing was apparent in descriptions of arguments about accountability measures where IO policy actors offered perspectives “a whole lot different [from] the perspective of educator preparation programs” [Interview 3], got “into hot water with some teacher educators” [Interview 1], were “booed heavily” and “beat up” by teacher educators, were “drummed out of town by teacher prep,” or were viewed by teacher educators as “an enemy” [Interview 11]. This discursive positioning of experts—outside the field and often in opposition to it—increased their authority for offering solutions for “a field in disarray” that “has neglected to police itself” and showed “abdication of responsibility” for preparing teachers for the classroom [NCTQ, 2013, p. 94].

Depoliticization of Social Problems and Technical Solutions

The subject positions that emerged in IOs’ descriptions of policy activities were reinforced through problem constructions in their policy proposals. Three central problems dominated IO proposals for teacher preparation accountability: changes in economic systems, the “achievement gap,” and high teacher turnover.

IO reports and blog posts described the economy and labor market as “rapidly changing,” leaving families “perplexed” and “facing situations in which their children and grandchildren will be less prosperous than they are” [CCSSO, 2012, p. 2]. Observations that “societal inequality worsens” [ERN, 2018, p. 3] presented intensifying economic inequality, downward social mobility, and growing precarity as agentless processes (N. Fairclough, 2001). The absence of agents involved in creating those problems pointed to the depoliticization of social and economic issues (N. Fairclough, 2003). Diminishing safety nets, declines in state provisions, and reduced community supports emerged as implicit givens, thus normalizing uneven distribution of resources across the society. The absence of agents in these descriptions created a discursive void for who should be held responsible for the negative effects of these changes (N. Fairclough, 2003).

Attributions of responsibility did, however, emerge in discussions of disparities among students from different racial, ethnic, and socioeconomic backgrounds: The persistently large achievement gaps between Asian and white students and students of color, and between our affluent and low-income students, fuel doubts about the ability of our nation’s schools and teachers to ensure that all children will acquire the knowledge and skills necessary for full and productive participation in our society. [TPA, 2014, p. 1]

In this problem construction, the phrase “achievement gaps” was focalized by being placed early in the sentence as the reason why “the ability of our nation’s schools and teachers” should be questioned. This framing treated educational inequities as teachers’ failure to transmit knowledge and skills to their students. Apart from deploying this nominalization, the authors also utilized the pronoun “our” to bring the readers—policymakers and decision-makers—into the inner circle of those who together with them are best positioned to create change.

The problem of “high rates of turnover in low-achieving schools that have high proportions of students in poverty and minority students” [CAP, 2010, p. 17] was attributed to the low-quality professional preparation that teachers received. The nominalization “turnover” was used to describe the problem, omitting teachers as agents who leave the profession. This nominalization obscured dehumanizing conditions in chronically underfunded and de facto segregated schools that serve many minoritized students (Carter Andrews et al., 2016); the growing stress of working with over-tested children (Bybee, 2020); and the general burnout among teachers due to the growing accountability regimes in K-12 settings (Ryan et al., 2017). The discursive void created by the nominalization and silence over other contributing factors allowed the authors to place the responsibility for teacher turnover on teacher preparation programs.

Yet, despite the certainties and simplifications presented in reports, most IOs’ policy analysts discussed the futility of expecting results from educational systems if larger socioeconomic problems were not addressed. For example, one expert shared observations about a successful project that took a comprehensive approach to address safety nets, meal plans, as well as community supports as a part of their educational revitalization plans: [That project] correctly understands that this is a systematic problem and you can’t just fix education. You’ve got to look at why these students who are in the third grade can’t read and you’ve got to look at why these students are acting out in the classroom and what are they not getting in their life and all this sort of stuff . . . That’s another way of trying to approach it, from the systemic point of view . . . I don’t believe we’re going to fix education without fixing these other problems that we have. [Interview 5]

The discrepancies between reports that treated problems in the K-12 sector as teachers’ or teacher educators’ fault and interviews where IO analysts acknowledged systemic issues at the level of social, political, and economic inequalities revealed a rationalization (I. Fairclough & Fairclough, 2012). Anticipating that policymakers expected clear-cut answers that could be easily translated into actionable steps, IO policy analysts did not discuss the need for systemic social or economic reforms. Instead, they offered depoliticized problem constructions and technical solutions of holding teacher preparation programs accountable for K-12 students’ learning outcomes [CCSSO, 2012; CAP, 2010; ES, 2011; Education Trust, 2013; NCTQ, 2012, 2013].

At the same time, since teachers and teacher educators were discursively positioned as actors at fault for these problems, they could not offer solutions. This role would be filled by IO policy analysts. When IO coalitions emerged to introduce teacher education accountability measures (Aydarova, 2022b), IO analysts stated “that major changes in teacher prep are afoot, with or without the buy-in of higher education” [NCTQ, 2011].

Accountability Proposals and the Reshaping of Teacher Education Governance

Given the discursive void created by excluding professional voices, IO policy analysts offered several proposals for accountability systems that could reform teacher education. Among their proposals were the increase of the federal role in monitoring the quality of teacher education (e.g., federal regulations for teacher preparation), new accreditation approaches (CAEP), the introduction of inspectorate programs (such as Teacher Preparation Inspectorate), restructuring of Title II state report cards, the use of teacher preparation performance dashboards for public reporting at the state level, as well as the development of longitudinal systems for monitoring program performance over time [DQC, 2017].

With titles like Measuring What Matters [CAP, 2010; CCSSO, 2018], IO texts laid out claims about the priorities the field should be pursuing. While there was some variation, several measures appeared consistently across different proposals: candidate classroom performance (based on edTPA or other standardized assessments), graduate job placement and retention, graduate survey, teacher effectiveness as measured by value-added or growth scores, as well as selectivity during admissions. These measures were repeated across a host of reports by CAP [2010], CCSSO [2012], CAEP [2015], NCTQ [2012, 2013], DQC [2017], TNTP [2017], TeachPlus [2018], and others. The repetition of these measures served as a textual chain that linked IOs’ policy proposals into a web that sent a message of alleged consensus (N. Fairclough, 2003) over accountability metrics. Commonalities among proposed measures emerged not only because IO analysts “talk to each other about teacher prep” [Interview 3], but also because in some cases, it was the same authors writing reports for different organizations. For example, TPA staff produced reports for CAEP [TPA, 2014] as well as CCSSO [2016, 2018].

IO analysts argued that reporting data on these outcomes was necessary to “signal” to employers, potential candidates, policymakers, and the public which programs prepared high-quality teachers. Across the reports, the shared assumption was that public reporting of these performance data would spur change in the programs and improve the quality of teaching in K-12 schools. By bringing failing teacher education programs into the spotlight, accountability systems could “break the cycle of low performance that often sends poorly prepared new teachers back into the same low-performing school systems” [ES, 2011, p. 13]. In addition, some IO analysts argued that outcomes-based measures positioned independent teacher preparation providers in a more favorable light, which would support their proliferation if redesigned accountability systems were implemented across all the states [ERN, 2017].

Less public were the discussions about how accountability systems were changing systems of governance. For example, an obscure CAP [2013] report issued without typical press releases or media announcements noted that new accountability systems would open teacher education programs to more external control than is typical for professional programs housed in higher education institutions. In starker terms, NCTQ analysts explained that their accountability measures were supposed to address the problem that “the field doesn’t govern itself. It leaves it to others to govern. It leaves it exposed to groups like NCTQ. It puts the profession always in defense mode rather than policing itself” [Interview 11]. Ultimately, the overt goals of “safeguard[ing] public interest” [NCTQ, 2019] obscured how accountability systems emerged as an opportunity to reshape the governance of teacher education and increase external actors’ power in directing reform processes.

Technocratic Rationality and Scientism

Over time, IO accountability proposals underwent the process of codification—“a narrowing down the range of discourses for representing the world” (N. Fairclough, 2001, p. 207). This narrowing eliminated political, philosophical, and moral dimensions of debates, creating a closure around accountability as a discourse of scientific rationality. The focus on “science,” “evidence,” and “research-based” approaches emerged both during the interviews and in the reports IOs published. For example, one of the participants explained: The one thing that I would like to see is for educators to believe in science. I don’t mean like creationism versus evolution, but believe that we should be driven by evidence, where evidence exists. I think that would make a huge difference to how we all do our work in education and in teacher education. [Interview 1]

Along similar lines, NCTQ leadership described their primary mission as “everything we do is about trying to pressure the field to embrace what is research based and evidence based and teach it and practice it” [Interview 11]. Ironically, however, when teacher preparation standards for Teacher Prep Review [NCTQ, 2013] were developed, “there wasn’t a scintilla of research” supporting some of them. In those cases, the standards were “not based on research [but on] a certain common-sense indicator” [Interview 11].

One policy tool that, from the experts’ perspective, exemplified “rational and scientific ideas” [TPA, 2013, p. 32] was the Key Effectiveness Indicators (KEI) Framework. It was first commissioned by CAEP with funding from Pearson to use “objective sources” [p. iii] for mapping out what a national system of teacher preparation accountability could entail. The report provided the justification for the structure of CAEP standards [Interview 6]. Subsequently, KEI informed to varying degrees the work of CCSSO’s Network for Transforming Educator Preparation [CCSSO, 2016, 2017, 2018], TNTP [2017], DFI [2016], and TeachPlus [2018].

Like the measures advocated by other IOs, the KEI framework included four domains of program assessment data: “candidate selection and completion,” “knowledge and skills for teaching,” “performance as classroom teachers,” and “contribution to state needs” [TPA, 2016a]. Those were broken down into 12 indicators of program performance and 20 measures “that operationalize the indicators.” KEI included such elements as scores on content knowledge tests, performance assessments, teacher effectiveness evaluated through classroom observations, as well as value-added or growth models, data on job placement and retention, and others. All measures were quantitative scores that could be standardized across programs. Even though the authors noted that “there is no body of scientific literature . . . that unequivocally points to specific measures” [TPA, 2016a, p. 4] for accountability systems, in reports for policymakers’ use they presented KEI as “based on empirical research” [CCSSO, 2016, p. 2].

The KEI implementation guide [TPA, 2016a] utilized vocabulary of scientific studies—“triangulation,” “reliability,” “validity,” “correlations,” and others—to underscore the framework’s ability to detect variation and determine causes for strong or weak performance among programs: Four domains . . . provide a multi-dimensional scan of teacher preparation programs that will be valuable both for indicating key factors that may be account [sic] for the strength of programs’ performance on the various measures and for providing triangulation with other measures to achieve a more reliable analysis of programs’ strengths and weaknesses. [emphasis in the original, TPA, 2016a, p. 5]

This borrowing of terminology from science contributed to blurring the genres of a policy report and a research study. The guide was also highly prescriptive with guidelines for measures and data reporting. Academic strength should be reported on two cohorts each year—“entering candidates” and “exiting completers” [TPA, 2016a, p. 10]. Content knowledge should be reported based on the scores that test developers identified as indicators of “a strong command of subject knowledge” (p. 13) or as a norm-referenced value, as 50th or 60th percentile in the statewide scoring distribution (but not pass rates because those are “information-poor” [TPA, 2016a, p. 13]). On performance assessments, such as edTPA, programs had to report “the mean scores and score distribution of program” [p. 15] to show the variation among the completers. By providing narrow specifications for reporting requirements, the framework staked a claim in scientism. Precise data would provide exact problem definitions and offer clear solutions.

In interviews, however, precision gave way to uncertainty over what the tool could offer. As one of the experts explained how KEI was picked up and implemented by different states, he made the following observation: It’s an extremely difficult thing to do and it’s more an art than a science in many ways because the data aren’t perfect and you don’t know really going in the extent to which the scores on the indicators from different programs really differentiate anything of substance. When I worked in [state X], I remember I went to talk to their state Board of Education and I explained to them that this was kind of like a car. On your dashboard of your car you’ve got lights. The lights come on. Sometimes it’s a false positive, right? Sometimes the lights come on like “check engine” light. Maybe a false positive, maybe not. But you don’t really know what’s wrong until you get under the hood. Key Effectiveness Indicators are meant to be much more like a dashboard and not like some kind of a computer that gets under the hood and measures things. [Interview 5]

This observation reveals another rationalization (I. Fairclough & Fairclough, 2012). Public documents, reports, and presentations promoted KEI as a “scientific” tool that could detect minor variations and allow strong inferences about program quality. Yet in more candid conversations, the story changed. Instead of ironclad science, the deployment of outcomes-based indicators became “an art” that had to be approached with caution.

Furthermore, when new evidence emerged, it challenged some of the key assumptions that accountability regimes were based on. Most of the reports that promoted teacher preparation accountability referenced the Gates Foundation’s Measures of Effective Teaching Project as a key intervention that demonstrated how teacher effectiveness can be improved using evaluation tools. When the RAND Corporation [2018] reviewed the impact of the project on student learning, it revealed disappointing results. Evaluations of teachers were shaped and constrained by broader social, political, and economic factors; implementation of evaluation systems did not result in any meaningful changes in student achievement. Despite these revelations, IO analysts continued offering various states technical assistance for developing and implementing new data systems. Using the accountability frameworks they designed, IO analysts worked to “totally change the needle, to drive up their game to improve their measures, to improve their data, their accountability systems” [Interview 8]. Accountability codified as science with formulas of performance measures positioned IO analysts as indispensable actors for developing, implementing, and ultimately upholding technocratic regimes of truth.

Dismissal of Opposition

The construction of accountability regimes proceeded in the context of ongoing contestations around some of the selected measures. One element under debate was the use of K-12 students’ test scores in the evaluation of teacher preparation programs. IO analysts knew about teacher educators’ concerns with the methodology, validity, and reliability of value-added measures. For example, several intertextual links (N. Fairclough, 1992) emerged in one of the Brookings Institution (BI) reports. Commenting on the “tensions” around the use of value-added scores in federal regulations, a blog post author noted: Letters written in 2012 to the committee from deans of preparation programs give an indication why they thought the use of test scores was a bad idea. Ironically, the letters argue that using test scores was not supported by scientific evidence. In light of what the National Research Council reported, the deans appear unconcerned about being hoisted on their own petard. One letter wrote that “We need a great deal more research across the K-12 years and subjects that are taught to know what teachers do that leads to the best learning outcomes, and we need valid and reliable observation measures to assess this.” But if this is the case, what is the basis for their current programs? [BI, 2014, p. 4]

On one hand, this segment referenced a report by the National Research Council (2010), which, contrary to the BI expert’s claims, called for more research on the relationship between teacher preparation and student outcomes instead of unequivocally supporting the use of test scores to evaluate programs. On the other hand, the voices from the field were incorporated through the use of a direct quote from the deans’ letter. Yet, this quote was used not only to dismiss the concerns, but also to challenge the existential premises of teacher education programs altogether by questioning their “basis.” The use of the phrase “hoisted on their own petard” in relation to education deans was also telling. The opposition to value-added testing coming from the field was presented as the reason for their potential demise.

Similar patterns of dismissals were evident in discussions of impact in CAEP standards. An interviewee who held leadership positions in CAEP and maintained strong ties with IOs discussed the challenges faced by the CAEP commission when value-added measures were introduced into the accreditation standards: Value-added assessment was a sore point with some people. They didn’t want it in there at all. That was controversial for the field, the value-added assessment and the idea of using data to evaluate the performance of the graduates and hold programs accountable for that. [Interview 7]

Subsequently, the interviewee described value-added or growth measures for evaluating programs as “cutting edge” and “path breaking,” while the opposition to these measures was written off as a reaction against measures that “had [not] been part of the culture of teacher preparation programs.” Despite the opposition from the field, CAEP maintained measures of impact in the 2015 standards. When the National Education Policy Center [2016] issued a report documenting the objections to outcomes-based accountability, CAEP issued a statement defending its approach and justifying the use of value-added or growth models as rooted in empirical research [CAEP, n.d.].

Instead of engaging in a dialogue over critiques and evidence, the most common discursive tactic utilized by the IO policy analysts was to dismiss opposition as unwillingness to change. This dismissal was often accompanied by “negative evaluations” (N. Fairclough, 2001, p. 97) of those who did not sign on to the accountability agenda. NCTQ [2014] characterized the field of teacher education as “chaotic and ungovernable” (p. 14). Borrowing from NCTQ’s [2013, p. 35] critiques of university-based programs, a guide by the Philanthropy Roundtable referred to teacher education as “notoriously difficult” to change and “languish[ing] without repercussions” for poor performance. DFI described those who had reservations about new accountability designs as “nervous,” “hostile,” playing “defense,” and “tear[ing] apart any and every new proposal” [DFI, 2016, p. 15].

In contexts where teacher educators’ opposition was addressed in revised IO proposals, greater backlash ensued. After CAEP modified some of its standards to accommodate teacher educators’ concerns, Education Reform Now (ERN) called for the creation of a new accrediting body that would bypass input from teacher educators altogether and pursue more stringent outcomes-based standards because “the cartel of teacher preparation program providers needs to be broken” [ERN, 2017, p. 13]. This proposal underscored that not teacher educators but other stakeholders, such as superintendents, would be better suited to choose the metrics for evaluating the quality of teacher preparation programs.

Discussion

The purpose of this project was to examine how IO policy analysts utilized technocratic discourses to construct accountability regimes. This study makes four contributions to scholarship on teacher preparation accountability. First, it captures how relationships of mutuality between policymakers and IO policy analysts facilitated information flows between them. Through formal and informal interactions, policy proposals for the construction of accountability regimes were shared and circulated between IO analysts, Congress staffers, DOE staff, and state officials. Philanthropic organizations supported this work not only by sponsoring some of the reports and projects (Scott & Jabbar, 2014) but also by acting as mediators between national intermediaries and various state-level actors.

Second, it documents how technocratic discourses facilitated a shift in governance and decision-making structures. IO proposals created a veneer of consensus around a set of outcome measures that were promoted by most actors within the flex net and beyond (Aydarova, 2021, 2022b). As outcomes-based accountability proposals were put forward, they were presented as tools for informing the public and improving programs. Below the surface, the focus was on shifting governance structures, so that teacher education would be more open to external “policing.” The focus on quantitative measures that are standardized across programs and contexts made teacher education easier to steer and manage from a distance (Ball & Junemann, 2012). The pursuit of creating new governance structures came alongside the codification of accountability as a discourse of science, which bolstered the legitimacy of accountability proposals as “research-based” and precision-driven. This codification also made technocratic experts indispensable for implementing these systems because they were “fluent in expert languages” (Centeno, 1993, p. 318). For instance, even though TPA is a private company—an entity rarely associated with policymaking—its associates became de facto policymakers (Scott et al., 2017) through the consulting services that informed the development and introduction of teacher preparation effectiveness dashboards in Georgia, Illinois, and several other states.

Third, this study documented how technocratic discourses deployed by IOs denied teacher educators agency in policy debates. Relational frameworks where policymakers relied on IO expertise positioned teacher educators as outsiders. By placing the responsibility for educational inequities on teachers and programs that prepared them, IO analysts further discredited educators’ voices in reform processes. In the midst of struggles and contestations around the codification of accountability as science, teacher educators’ concerns about value-added measures were dismissed as aversion to change. New proposals sought to exclude teacher educators’ voices from deliberations altogether, treating them as “a cartel . . . to be broken” [ERN, 2017, p. 13]. These observations shed light on the obstacles teacher educators face in policy contexts when they respond to states’ efforts to ramp up teacher preparation accountability (Aydarova et al., 2022b). Not only do they have to offer alternative accountability models, but they also have to gain a position as credible subjects with professional expertise rather than insubordinate objects of reform.

Finally, this study underscored that depoliticization of social issues is an important element of technocracy as a regime of truth (Foucault, 1980). This observation sheds light on the challenges of centering justice and equity in teacher education. By focusing on what is measurable and observable, accountability regimes that currently govern the field are by design averse to any political commitments or ethical obligations, such as equity or justice. While alternative accountability models that center equity, cultural responsiveness, and social justice (Education Deans for Equity and Justice, 2019; Hood et al., 2022) offer a corrective to evaluation measures focused on “thin equity” (Cochran-Smith et al., 2018), they are unlikely to gain traction unless accountability regimes based on technocratic discourses are dismantled.

Implications

This study has addressed an area that has been previously overlooked in teacher education research—how IO actors have drawn on technocratic discourses to construct accountability regimes in teacher education. As a result of these changes, policies have taken a turn toward more authoritarian decision-making—a common preference among technocratic experts (Centeno, 1993; Fischer, 1990). For this reason, it behooves teacher educators to pay greater attention to the reforms that IO experts advocate for and critically interrogate the discourses embedded in them. This can be done by following the blog posts, newsletters, and social media announcements of such IOs as NCTQ, CCSSO, DFI, Manhattan Institute, American Enterprise Institute, and others. While AACTE has begun to issue preemptive responses to NCTQ’s reports and the National Education Policy Center has published critiques of accountability proposals, these actions tend to be isolated incidents that do little to disrupt IOs’ technocratic agendas.

In that regard, more collective engagement in policy advocacy that centers humanistic priorities of education and justice-oriented teacher education is necessary (Aydarova et al., 2022b). As teacher educators work to reclaim their voice in policy deliberations (Aydarova et al., 2021, 2022a), alternative accountability models centered on democratic responsibility and “thick” equity (Cochran-Smith et al., 2018) should be shared through public and policy forums. Changes in program practices also deserve attention. Instead of performing compliance and becoming complicit in the technocratic redesign of teacher education, teacher educators should attend to the matters of moral and ethical responsibilities that equity-oriented work requires (Anderson, 2019; Philip et al., 2019). Rather than pursuing technical solutions for depoliticized problems, teacher education programs should focus on addressing historical injustices (Annamma & Winn, 2019) and building solidarity with communities pursuing transformative justice (Zeichner et al., 2016).

This project has focused on policy discourses of external actors and presented an analysis of the tools, mechanisms, and priorities that technocrats have introduced. Although technocratic discourses have influenced accreditation standards for teacher education (Aydarova, 2021), questions remain regarding the effects of these changes on practices and cultural forms within various programs. Future research should utilize ethnographic methods to analyze the effects of technocratic accountability regimes on political, moral, and ethical commitments of teacher education. Furthermore, future studies should examine whether democratic accountability models are feasible as alternatives to the technocratic models of accreditation and state monitoring. More specifically, what deserves attention is whether the professional community can reach consensus on pursuing alternative accountability models and can influence decision-makers’ perspectives on how teacher education should be evaluated. Ultimately, it is the field’s commitments to equity, diversity, and justice that will determine its ability to respond to the historical challenges. Without a change in evaluation mechanisms and accountability models, those commitments could wither because they remain peripheral (Philip et al., 2019) when technocracy operates as a regime of truth.

Supplemental Material

Supplemental material, sj-docx-1-jte-10.1177_00224871231174835 for Intermediary Organizations, Technocratic Discourses, and the Rise of Accountability Regimes in Teacher Education by Elena Aydarova in Journal of Teacher Education

Footnotes

Acknowledgements

The author is grateful to Nancy Fichtman Dana and Peter Youngs for their feedback on earlier drafts of this manuscript. The author would also like to thank Bevin Roue and anonymous reviewers for support in revisions of this work.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was funded by the Spencer Foundation Small Research Grant #202000159, the American Fellowship from the American Association of University Women, and Auburn University’s Intramural Grant Program.
