Abstract

Posttreatment variables are covariates that are preceded by the main explanatory variable. Their inclusion in a statistical model does not ‘control’ for their influence on the relationship of interest, and it does not substitute for a mediation analysis. Likewise, a coefficient estimate of an appropriate ‘control variable’ cannot be interpreted as a causal effect estimate. While these facts are well-established in various fields across the social sciences, their recognition in the field of peace and conflict studies is more limited. Originally collected data on recent publications from leading peace and conflict journals reveal that a large majority of evaluated articles condition on posttreatment variables, demonstrating how a review of these fallacies can help to substantially improve future research on peace and conflict. Drawing on a broad set of literature and using graphical approaches, I offer an intuitive explanation of the logic of posttreatment variables and clarify common misconceptions. Building on recent developments in methodology and software, and by deriving conditions for bounding using analytical bias expressions, I discuss avenues for dealing with posttreatment variables in observational studies. The article concludes with a discussion of implications for applied research.

Introduction

Which variables should researchers not condition on (not ‘control for’) in empirical research on peace and conflict? Research design and variable selection are areas in which there are no easy answers. While the computation of a regression is usually just one click away, no computer can tell which covariates to include in that regression (King, Keohane & Verba, 1994). Questions of research design and variable selection have therefore been studied abundantly. This article attempts to raise renewed awareness of the challenge of variable selection and discusses avenues to address common issues that are particularly relevant to applied research. In doing so, it emphasizes straightforward and accessible explanations over statistical depth. The target audience of this article is empirical peace and conflict researchers.

Most quantitative research on peace and conflict seeks to approximate causal claims using observational data. While observational research designs can never match the gold standard of design-based inference, the use of appropriate statistical methods, availability of high-quality data and careful model design can go a long way. The practice of using ‘control variables,’ that is, of conditioning on covariates to partial out the effect of an explanatory variable of interest, attests to scholars’ effort to transcend claims of mere correlation (King, Keohane & Verba, 1994).

In support of this effort, I argue that empirical research in the field of peace and conflict studies does not pay enough attention to the question of causal sequence. The causal ordering of variables matters for the estimation of causal effects. One well-known example of the importance of sequence is the topic of ‘reverse causality:’ when estimating the effect of an explanatory variable of interest (the ‘treatment;’ X) on a variable to be explained (‘outcome;’ Y), the estimate may be distorted by a reverse effect, that is, not only does X influence Y, but Y also influences X. An example is the effect of democratization on economic prosperity, and vice versa. The importance of reverse causality is well-established and commonly considered in the curriculum, reviewer comments, conference discussions and editor reports. This article focuses on an issue of similar importance that is also related to causal direction, but which receives much less attention: treatment effect estimates are sensitive to the causal sequence of the covariates included for conditioning (also called ‘confounders’ or ‘control variables;’ Z). If the treatment variable causally precedes a covariate, this covariate is called a ‘posttreatment variable’ and its inclusion in the analysis biases the treatment’s total effect estimate. This concern over causal direction between the treatment and covariates should receive just as much attention as the question of causal direction between the treatment and the outcome.

Addressing the potential for posttreatment bias can be simple, while ignoring it can substantially distort estimation results – even making the coefficient point in the opposite direction – and lead to an erroneous rejection of, or failure to reject, the null hypothesis. Using graphical illustrations and drawing on a broad body of methodological literature, I examine core concepts and provide an accessible explanation of why peace and conflict research should care about posttreatment variables. Reviewing classical approaches and recent methodological advances, and by analytically deriving conditions for bounding exercises, I reflect on avenues for how to avoid common sources of bias related to variable selection, including omitted variable bias (OVB), selection bias and over-control bias.

This article contributes to the peace and conflict research programme and to social science methodology in several ways. A review of all publications between January 2018 and May 2021 in the Journal of Peace Research (JPR) and the Journal of Conflict Resolution (JCR) indicates that 75% of relevant articles may suffer from posttreatment bias and, therefore, may report substantially misleading results. Only a fraction of these studies show any awareness of this issue. As I will show below, many mistakes can be easily avoided or addressed. Therefore, an accessible explanation of the role of posttreatment variables provides an important opportunity to substantially improve empirical research in the field of peace and conflict. At a more foundational level, the article touches on core empirical concepts and reiterates the role of covariates in multiple regression. The extent to which this may seem substantively trivial is exactly what underlines its paramount importance: many years after the works of, for example, Achen (2005) and Clarke (2005, 2009), my review of publications in peace and conflict studies indicates that some of the most basic tenets still find limited application. Including the ‘usual set of controls’ without considering the bias they may induce, and the convention of interpreting covariate coefficients, are among the practices that warrant renewed attention.

While the main goal of this primer is to provide a pedagogical and targeted read on the topic of causal sequence, it also offers a few methodological innovations. First, drawing on a broad set of literature across the fields of political science, psychology, biostatistics and epidemiology, it offers a concise overview of a large number of technical contributions that, directly or indirectly, touch on the topic of posttreatment bias. In doing so, it highlights how the separate literatures on variable selection, reverse causality and mediation analysis intersect and can be applied to conceptualize posttreatment variables. For example, as of this writing there is no dedicated study on the practice of lagging covariates to ameliorate posttreatment bias, but some lessons can be inferred from research on reverse causality. Second, it is the first to offer a systematic assessment of the conditions under which a treatment effect can be bounded by including and excluding a ‘proxy control’ while allowing for collider-stratification bias. Using analytical bias expressions, I show that the number of scenarios that allow bounding based on effect directions is very limited and how, among these few scenarios, bias is unevenly distributed.

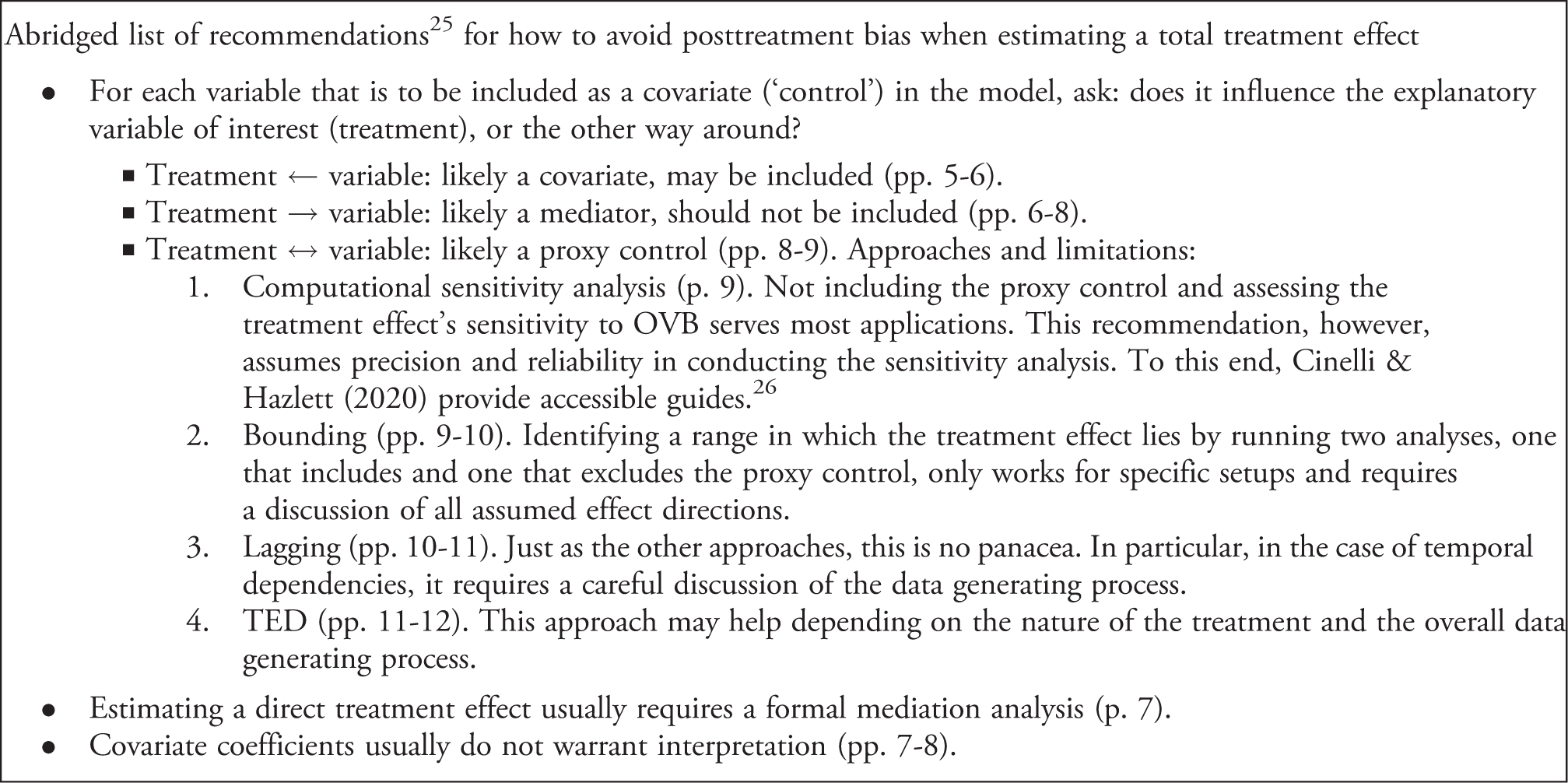

The article proceeds as follows. First, I review recent publications in JPR and JCR to illustrate the extent to which posttreatment bias may threaten inference in the contemporary peace and conflict research programme. Second, I systematically introduce and explain the concept of posttreatment variables and the distinction between confounding and mediation. Third, I outline why conditioning on a posttreatment variable biases estimation in the context of different research objectives, namely the total and direct treatment effects, and how to recover unbiased results in a simple research setup. This is followed by a discussion of the challenge of ‘proxy controls’ in applied research and potential avenues to address it. These include computational sensitivity analyses, deriving bias directions for bounding in analytical sensitivity analyses, the lagging of covariates and the total effect decomposition approach. The article concludes with a summary of implications and an abridged checklist of key takeaways.

But everybody knows this, right?

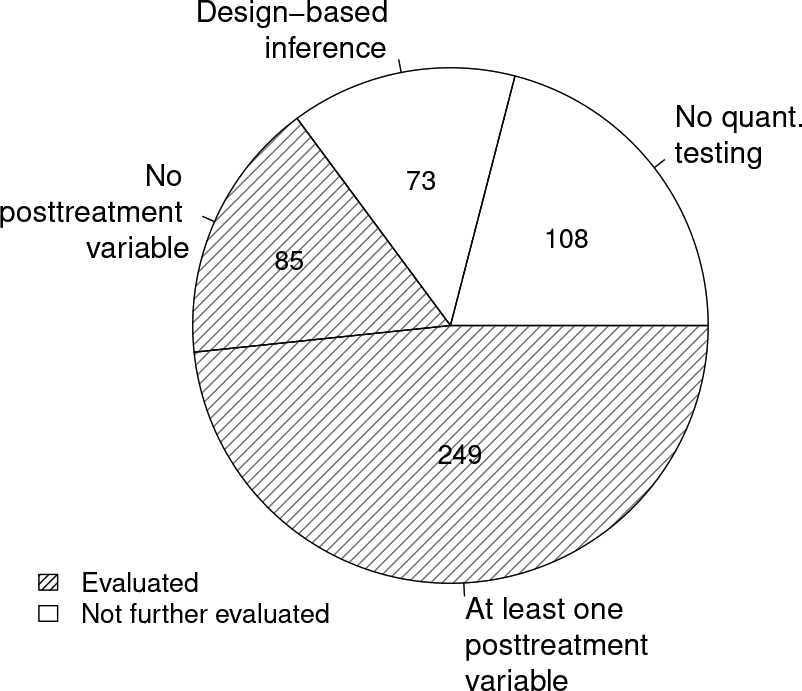

Posttreatment bias is a known issue in the social sciences and systematic scholarship on it dates back to Rosenbaum (1984). To understand the extent to which observational research on peace and conflict considers this threat to inference, I collected data on all publications in JPR and JCR between January 2018 and May 2021. Of all articles that employ a ‘standard’ regression framework, 75% condition on covariates that may be influenced by the treatment in their main analysis (249 out of 334). The proportions are visualized in Figure 1.

Article sample

In other words, three-quarters of reviewed articles may report biased results. The most common offenders are so-called ‘standard control variables,’ such as gross domestic product per capita and regime type, which are often included in regression models without considering their necessity and appropriateness as covariates. Another common practice among the evaluated studies is to include multiple treatment variables relating to different hypotheses in the same regression model, so that they all condition on each other. As I will show below, the resulting bias can be substantial: using an inadequate ‘control variable’ is just as problematic as failing to ‘control for’ an adequate one, and it risks substantively wrong conclusions and misleading policy recommendations.

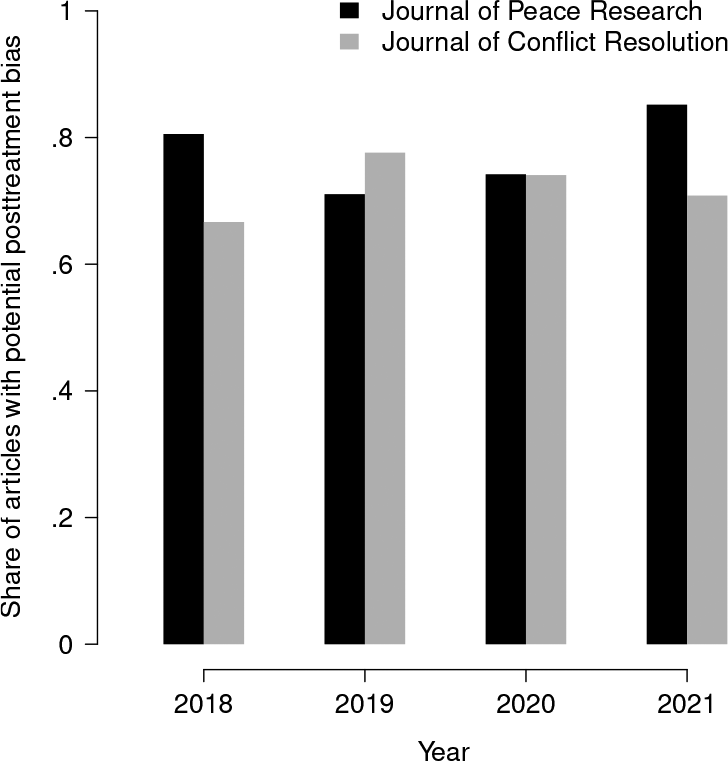

Journal comparison over time

As attention to posttreatment bias has increased in recent years, it may be argued that contemporary awareness among peace and conflict researchers is higher than suggested by the pie chart, and that it just takes time for this new generation of research to fully emerge. However, Figure 2 does not support this notion. Comparing the yearly share of studies that include posttreatment variables, there is no sign of improvement over time.

Alternatively, it may be argued that this high share of studies that include posttreatment variables is not due to a lack of awareness, but intentional: as I will show below, deciding on the inclusion of covariates can present a difficult trade-off. Sometimes, choosing to condition on a ‘bad control’ (Angrist & Pischke, 2009) is a conscious decision in favour of mitigating OVB and accepting the potential of posttreatment bias. Such a decision requires careful consideration of the assumed data generating process and empirical model. To learn the degree to which the high share of inclusion of posttreatment variables is based on conscious decisions, information on manuscripts’ operationalization and results discussion was also coded. Out of all 249 articles that include at least one posttreatment variable in their main analysis, only 28 show any awareness of this issue in their discussion. This lack of transparency in the face of possibly large bias that may, in some cases, substantially distort the results is concerning and suggests unawareness among peace and conflict scholars.

This conclusion finds additional support in the fact that 63% of all coded articles include an explicit interpretation of covariate coefficient estimates. As I will discuss below, in most applied cases in which researchers use covariates to minimize OVB in a treatment effect estimate, interpreting the covariates’ coefficient estimates is not tenable. Put differently, the widespread norm in peace and conflict research of interpreting ‘control variable results’ is, at best, futile and, at worst, substantially misleading.

In sum, the topic of ‘control variables’ requires renewed attention, and a primer on the relevance of their causal sequence is warranted. As the sample of coded articles shows, this is not an issue pertaining to any individual article, but is something that the field of peace and conflict research faces collectively. Research is not produced in a vacuum. The lack of transparency and discussion of this source of bias, as well as the continued norm of interpreting covariate coefficient estimates, raise important questions not only for individual authors, but for colleagues and supervisors who discuss manuscripts at draft stage, and for reviewers and editors who do so at publication stage. For example, conference discussions and peer review tend to centre on the risk of OVB (the notorious ‘Have you controlled for…?’) while disregarding over-control bias (asking ‘How do you justify controlling for…?’). For any research project, even if accepting the potential for posttreatment bias was a tenable option amid worse alternatives, such meaningful decisions warrant a transparent discussion. Below, after a short introduction to key concepts, I provide explanations aimed at helping peace and conflict researchers to navigate these choices and to be transparent about the assumptions they require.

Confounder or mediator?

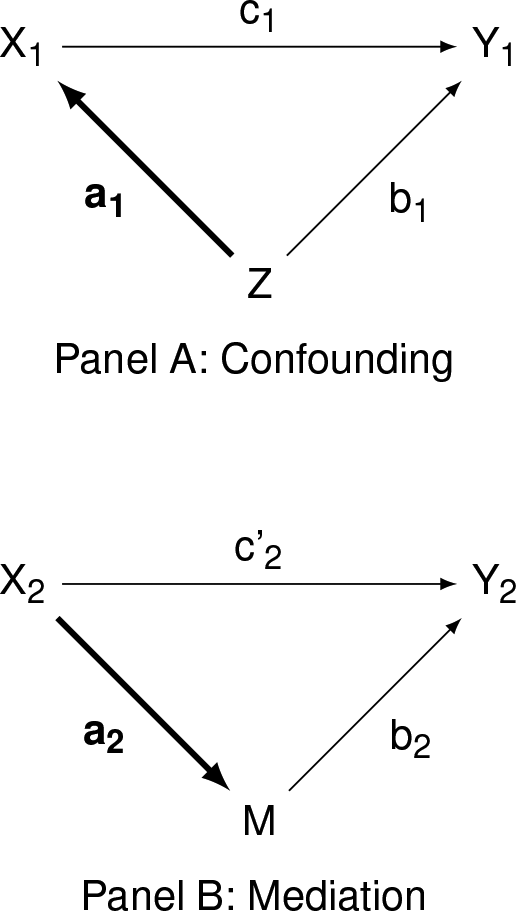

Observational peace and conflict studies that are interested in the estimation of a directional effect of a treatment variable on an outcome variable include additional covariates in their analysis. These covariates are included for the purpose of mitigating confounding. Not conditioning on a confounder means risking OVB. However, not all variables are confounders and qualify to be held constant, and their causal ordering can give important clues on their adequacy (Gelman & Hill, 2007; Pearl, Glymour & Jewell, 2016).

Basic model

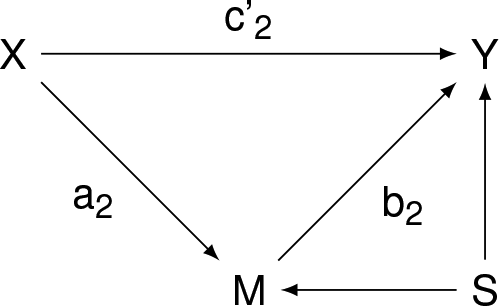

Most studies are interested in estimating the overall (total) effect of X on Y, which is denoted in Panel A as c

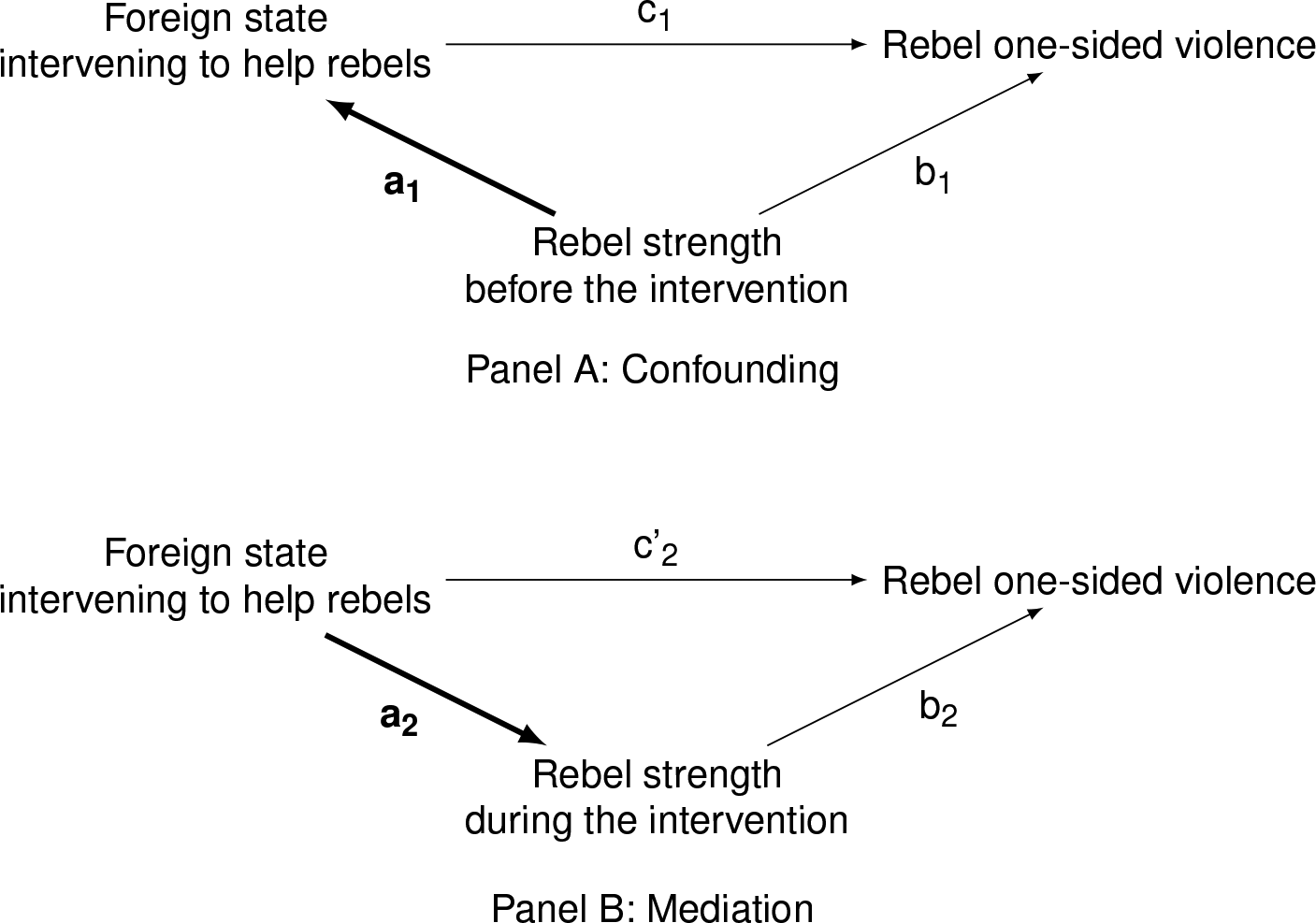

Figure 4 mirrors Figure 3, exemplifying the variables X, Z, M and Y with actual concepts from peace and conflict studies. This example is loosely based on Wood, Kathman & Gent (2012), though adjusted and simplified for the purposes of illustration. A research hypothesis for this setup may read ‘A foreign state’s intervention on the side of the rebels during civil war decreases one-sided violence perpetrated by the rebels,’ possibly due to a shift in the actors’ power balance (cf. Wood, Kathman & Gent 2012). In this case, a foreign state’s intervention is the treatment X, and rebel one-sided violence is the outcome Y. Therefore, like most research on peace and conflict, this example hypothesis is geared towards testing the total treatment effect c

However, what if rebels’ strength is measured after the foreign state’s intervention has already begun? In this case, rebels’ strength is probably influenced by the intervention itself, as shown in Panel B of Figure 4. Therefore, rebels’ strength during the intervention is a mediator M: it is a mechanism that relays

Basic example

In summary, determining whether a variable precedes or succeeds the treatment is necessary to understand whether the variable acts as a pretreatment confounder or a posttreatment mediator. Therefore, when discussing the ‘control variables’ in a research design, it is vitally important to exercise transparency over the assumed direction between the treatment and each covariate (the direction of path a in Figures 3 and 4). The following section details why this distinction is important for unbiased estimation.
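The check described in this section can be sketched in a few lines of code. The following is a minimal illustration, assuming the researcher has already written down their assumed causal graph; the node names are hypothetical labels for the intervention example and are not taken from any dataset. A covariate is flagged when the treatment causally precedes it, that is, when it is a descendant of the treatment in the assumed graph.

```python
# Hypothetical DAG for the intervention example; node names are illustrative only.
# Each key points to the variables it is assumed to influence directly.
dag = {
    "rebel_strength_before": ["intervention", "one_sided_violence"],
    "intervention": ["rebel_strength_during", "one_sided_violence"],
    "rebel_strength_during": ["one_sided_violence"],
}


def descendants(graph, node):
    """Return every node reachable from `node` along directed edges."""
    seen, stack = set(), [node]
    while stack:
        for child in graph.get(stack.pop(), []):
            if child not in seen:
                seen.add(child)
                stack.append(child)
    return seen


def flag_posttreatment(graph, treatment, covariates):
    """Map each covariate to True if the treatment causally precedes it."""
    downstream = descendants(graph, treatment)
    return {cov: cov in downstream for cov in covariates}


flags = flag_posttreatment(
    dag, "intervention", ["rebel_strength_before", "rebel_strength_during"]
)
print(flags)
```

The result depends entirely on the arrows the researcher assumes, which is why stating them openly matters more than the code itself.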

Bias by (not) conditioning and what to do about it

Conditioning on a posttreatment variable M can be problematic.

First, and intuitively, conditioning on a posttreatment variable means partialling out a part of the treatment effect itself. The mediating variable acts as a mechanism that relays a part of the effect of X on Y, which is why the arrows in Figure 3’s Panel B indicate that part of the effect of X on Y ‘flows through’ M. Conditioning on it means excluding a portion of (i.e. biasing) the total treatment effect (Gelman & Hill, 2007; Pearl, Glymour & Jewell, 2016; Cinelli, Forney & Pearl, 2020). Referring back to the example in Figure 4’s Panel B, rebels’ strength is a mechanism that links a foreign state’s intervention to rebel-perpetrated one-sided violence. In this artificial scenario, conditioning on rebels’ strength means partialling out that mechanism, thus biasing the total effect estimate of foreign intervention on one-sided violence and yielding an incorrect test result for the hypothesis above. This kind of bias has the intuitive name of ‘over-control bias’ or ‘posttreatment bias’ (Elwert & Winship, 2014). The bias can go in either direction, either inflating or attenuating the coefficient estimate. Under ideal circumstances, the effect researchers are left with is the direct effect, c’

Basic extension

What about instances in which researchers wish to isolate a certain mechanism by partialling out another mechanism, or discern the magnitude of one path independent of another? Acharya, Blackwell & Sen (2016) find that this is the case for 23% of publications that condition on posttreatment variables, out of a sample of publications from three top political science journals between 2010 and 2015. However, the ‘isolated’ direct effect c’

Yet more importantly, assuming that c’

In sum, whether the aim is to estimate the total treatment effect or the direct treatment effect, simply conditioning on a posttreatment variable without considering the underlying assumptions is probably adding bias rather than reducing it. When the quantity of interest is the total treatment effect, as is the case in most empirical peace and conflict research, a variable that is purely posttreatment should just be disregarded altogether. Unfortunately, many confounders in applied research are not purely posttreatment and exhibit directional ambiguity, which is more complicated to address and will be discussed in the next section. When the interest lies with the direct treatment effect, this needs to be appropriately modelled: while, in a basic artificial setup and under strong assumptions, the coefficient estimates may still be recovered by a simple regression model, in a more realistic research scenario it is almost certainly necessary to implement a formal mediation analysis. See Carter, Shaver & Wright (2019) for an application in peace and conflict studies, showing how the notorious effect of rugged terrain (X) on civil war (Y) is mediated by political marginalization (M).
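A small simulation may help to make the over-control problem tangible. The sketch below is an artificial setup of my own and not a replication of any study: all path strengths are made-up numbers, the data are linear with normal errors, and there is one observed pretreatment confounder Z and one pure mediator M. The direct effect is deliberately set to be negative while the total effect is positive, so that conditioning on M flips the sign of the estimate.

```python
import random


def ols(y, columns):
    """Least-squares coefficients for y on an intercept plus the given columns."""
    rows = [[1.0] + [col[i] for col in columns] for i in range(len(y))]
    k = len(rows[0])
    # Normal equations (X'X) beta = X'y, stored as an augmented matrix.
    A = [[sum(r[i] * r[j] for r in rows) for j in range(k)]
         + [sum(r[i] * yi for r, yi in zip(rows, y))] for i in range(k)]
    # Gauss-Jordan elimination with partial pivoting.
    for i in range(k):
        p = max(range(i, k), key=lambda r: abs(A[r][i]))
        A[i], A[p] = A[p], A[i]
        for r in range(k):
            if r != i:
                f = A[r][i] / A[i][i]
                A[r] = [x - f * z for x, z in zip(A[r], A[i])]
    return [A[i][k] / A[i][i] for i in range(k)]


random.seed(1)
n = 20000
# Assumed (illustrative) path strengths.
Z = [random.gauss(0, 1) for _ in range(n)]                      # pretreatment confounder
X = [0.8 * z + random.gauss(0, 1) for z in Z]                   # treatment
M = [0.8 * x + random.gauss(0, 1) for x in X]                   # posttreatment mediator
Y = [-0.3 * x + 1.0 * m + 0.7 * z + random.gauss(0, 1)
     for x, m, z in zip(X, M, Z)]                               # outcome

true_total = -0.3 + 0.8 * 1.0        # direct path plus mediated path = 0.5
total_hat = ols(Y, [X, Z])[1]        # conditions on the confounder only
direct_hat = ols(Y, [X, Z, M])[1]    # additionally conditions on the mediator

print(f"true total effect:          {true_total:.2f}")
print(f"Y ~ X + Z     (coef. on X): {total_hat:.2f}")
print(f"Y ~ X + Z + M (coef. on X): {direct_hat:.2f}")
```

In this setup the second regression does not return a noisy version of the total effect; it returns a different quantity, the direct effect, which here has the opposite sign.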

Appreciating the causal sequence between variables, and the difference between the direct and indirect treatment effect, also challenges a straightforward interpretation of covariate results in multivariate regression. In an analysis that conditions on a confounder Z, the covariate’s coefficient suffers from the same biases discussed above. This becomes intuitively apparent when considering Panel A in Figure 3: the total effect of Z on Y combines the direct path b1 and the indirect path a1c1. In an analysis that regresses Y on X and Z, the estimated coefficient of Z cannot be interpreted as the total effect of that covariate on the outcome, because part of the effect of the covariate is relayed through X. Moreover, the covariate’s coefficient estimate likely suffers itself from OVB, because the model specification is usually tailored towards the explanatory variable of interest and does not consider second-hand confounders. Therefore, neither the substantive effect size nor the significance of covariates’ coefficient estimates can be meaningfully interpreted in a standard regression setup. The mistaken belief in such ‘mutual adjustment’ among covariates is also known as the ‘Table 2 Fallacy’ (Westreich & Greenland, 2013). Nevertheless, a majority of evaluated studies on peace and conflict actively interpret their covariate results.
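The point about the covariate's coefficient can be written out in a short derivation. This is a sketch under the assumption that the relationships in Panel A of Figure 3 are linear, with a1 denoting the path from Z to X, b1 the path from Z to Y and c1 the path from X to Y; the error terms are symbols introduced here for illustration.

$$
\begin{aligned}
X &= a_1 Z + \varepsilon_X, \\
Y &= c_1 X + b_1 Z + \varepsilon_Y \\
  &= (b_1 + a_1 c_1)\, Z + c_1 \varepsilon_X + \varepsilon_Y .
\end{aligned}
$$

Under these assumptions, the total effect of Z on Y is $b_1 + a_1 c_1$, whereas the regression of Y on X and Z holds X fixed and therefore returns only $b_1$ as the coefficient of Z. Even this reading requires that Z itself is unconfounded, which a specification tailored to X does not guarantee.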

The total treatment effect in an imperfect world

The implication of the previous section is that all research should include a transparent discussion of the assumed relationship between treatment and covariates. Studies seeking to estimate a total treatment effect may condition on pretreatment variables, and should avoid conditioning on posttreatment variables. Unfortunately, the real world of potential covariates does not only divide into pretreatment and posttreatment.

What to do when a covariate acts as both confounder and mediator? A version of this problem is exemplified by Angrist & Pischke (2009) under the name ‘proxy control:’ in order to account for a confounder that cannot be measured (an ‘unobserved confounder;’ U), the researcher has the option to use a proxy variable. That proxy is, however, only observed after the treatment. A dilemma occurs in which including the covariate in the analysis alleviates OVB because it proxies for a pretreatment confounder, but at the same time induces over-control bias because it was measured after treatment.

Proxy control

One of the most eminent works in peace and conflict research, Why Civil Resistance Works: The Strategic Logic of Nonviolent Conflict (Chenoweth & Stephan, 2011), illustrates the challenge of accommodating a proxy control. Studying how the use of nonviolent means (X) influences resistance campaigns’ likelihood of achieving their policy goals (Y), Chenoweth & Stephan (2011) argue that nonviolence attracts a larger and more diverse support base, which in turn improves the movement’s likelihood of success. However, not only do the means of resistance influence a campaign’s size (M), but a campaign’s size also determines, both directly and indirectly, the means that it is able to adopt (Z). Chenoweth & Stephan (2011) address this issue by including peak campaign size as a covariate in their models, thereby mitigating OVB. Meanwhile, with campaign size also being a key mechanism that relays part of the effect of nonviolence on campaign success, its inclusion as a covariate is also bound to induce over-control bias.
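The proxy control dilemma can be illustrated with simulated data. The sketch below is a stylized setup of my own, not a model of the civil resistance data: an unobserved confounder U drives the treatment X, the outcome Y and the proxy M, while M is also affected by X and relays part of its effect. All path strengths are assumed values chosen for illustration.

```python
import random


def ols(y, columns):
    """Least-squares coefficients for y on an intercept plus the given columns."""
    rows = [[1.0] + [col[i] for col in columns] for i in range(len(y))]
    k = len(rows[0])
    # Normal equations (X'X) beta = X'y, stored as an augmented matrix.
    A = [[sum(r[i] * r[j] for r in rows) for j in range(k)]
         + [sum(r[i] * yi for r, yi in zip(rows, y))] for i in range(k)]
    # Gauss-Jordan elimination with partial pivoting.
    for i in range(k):
        p = max(range(i, k), key=lambda r: abs(A[r][i]))
        A[i], A[p] = A[p], A[i]
        for r in range(k):
            if r != i:
                f = A[r][i] / A[i][i]
                A[r] = [x - f * z for x, z in zip(A[r], A[i])]
    return [A[i][k] / A[i][i] for i in range(k)]


random.seed(2)
n = 20000
U = [random.gauss(0, 1) for _ in range(n)]                      # unobserved confounder
X = [1.0 * u + random.gauss(0, 1) for u in U]                   # treatment
M = [0.5 * x + 1.0 * u + random.gauss(0, 1)
     for x, u in zip(X, U)]                                     # proxy, measured after treatment
Y = [1.0 * x + 1.0 * m + 1.0 * u + random.gauss(0, 1)
     for x, m, u in zip(X, M, U)]                               # outcome

true_total = 1.0 + 0.5 * 1.0          # direct path plus path through M = 1.5
without_proxy = ols(Y, [X])[1]        # U is unavailable, so only X enters
with_proxy = ols(Y, [X, M])[1]        # M stands in for U but is posttreatment

print(f"true total effect:      {true_total:.2f}")
print(f"Y ~ X     (coef. on X): {without_proxy:.2f}")
print(f"Y ~ X + M (coef. on X): {with_proxy:.2f}")
```

With these particular values, leaving out the proxy overstates the total effect and including it understates the effect. Neither regression recovers the truth, and other parameter values can change the direction of either bias.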

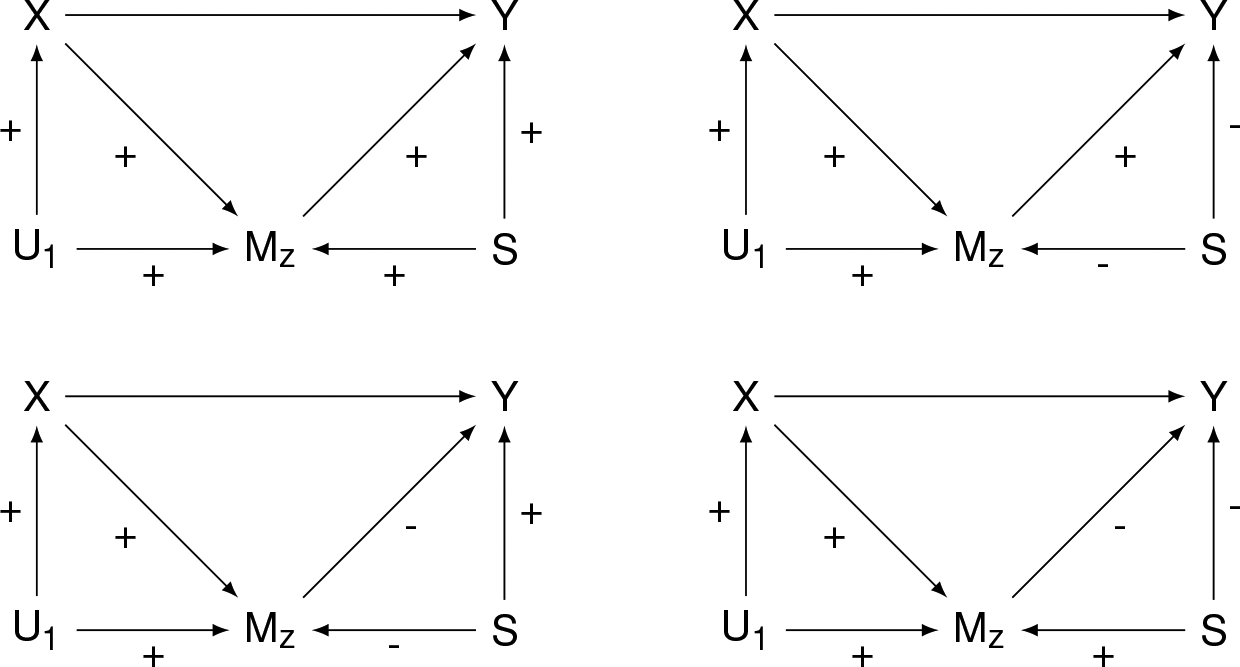

Figure 6 visualizes an adaptation of the problem, showing the variable M

Sensitivity analysis

A canonical way of addressing the dilemma of a proxy control is to conduct a sensitivity analysis, as recently recommended by Groenwold, Palmer & Tilling (2021) and dating back to Rosenbaum (1984). The basic logic behind a sensitivity analysis is to examine the results’ robustness under various assumptions about the setup and strengths of association between variables. While this is not a ‘fix,’ it enables the researcher to better understand the assumptions under which their findings hold and to exercise transparency about the limitations of their study. Sensitivity analyses may be conducted via bias expressions (analytically) or via simulation (computationally).

Computational sensitivity analyses are easily achievable given the common provision of powerful statistical software. Groenwold, Palmer & Tilling (2021) suggest several R packages on structural equation modelling that include useful functionality for simulating data, including DAGitty (Textor et al., 2016) and lavaan (Rosseel, 2012). DAGitty also comes with a browser-based environment to ease the introduction to, and interaction with, graphical representations of model specifications (directed acyclic graphs; DAGs) at www.dagitty.net. A recent tool worth highlighting is sensemakr by Cinelli & Hazlett (2020), which was specifically designed for sensitivity analysis in applied research. It offers comprehensive and easy-to-use functionality in both R and

Package selection depends on the researcher’s needs and preferences, but the procedure when estimating the total treatment effect is similar across tools. In a first step, the researcher estimates their regression model using only pretreatment covariates and excluding potentially offending covariates – that is, excluding all covariates that may be directly or indirectly influenced by the explanatory variable of interest. This risks OVB but avoids over-control and collider-stratification bias. In a second step, the researcher conducts an analysis of their treatment effect’s sensitivity to OVB using one of the aforementioned tools, and reports the findings (e.g. through a contour plot) together with their regression results. The material cited above guides readers through the implementation and interpretation of the sensitivity analysis. In this context I specifically highlight sensemakr by Cinelli & Hazlett (2020) for providing a very accessible guide. Among a small but growing number of studies on peace and conflict that use sensemakr to probe their results’ sensitivity to OVB, see, for example, Koos & Lindsey (2022) and Pinckney, Butcher & Braithwaite (2022) for applications.
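To give a sense of what the second step computes, the following sketch hand-codes two quantities that, to my understanding, are central to the framework of Cinelli & Hazlett (2020): the robustness value and the bias-adjusted estimate for a hypothetical unobserved confounder. It does not call the sensemakr package, the function names are my own, and the input numbers are invented. For applied work the package itself should be used.

```python
import math


def robustness_value(t_stat, dof, q=1.0):
    """Partial R2 that an unobserved confounder would need with both treatment
    and outcome to reduce the estimate by a share q (q=1: down to zero)."""
    f_q = q * abs(t_stat) / math.sqrt(dof)
    return 0.5 * (math.sqrt(f_q ** 4 + 4 * f_q ** 2) - f_q ** 2)


def adjusted_estimate(estimate, std_error, dof, r2_treatment, r2_outcome):
    """Estimate after subtracting the bias implied by a confounder that explains
    r2_treatment of the residual treatment variance and r2_outcome of the
    residual outcome variance (bias assumed to shrink the estimate)."""
    bias = std_error * math.sqrt(dof) * math.sqrt(
        r2_outcome * r2_treatment / (1.0 - r2_treatment)
    )
    return estimate - math.copysign(bias, estimate)


# Hypothetical regression output: coefficient, standard error, residual df.
estimate, std_error, dof = 0.5, 0.1, 400
rv = robustness_value(estimate / std_error, dof)
print(f"robustness value: {rv:.3f}")  # about 0.22 for these inputs

# A confounder exactly as strong as the robustness value removes the effect.
print(f"adjusted estimate at RV: {adjusted_estimate(estimate, std_error, dof, rv, rv):.3f}")

# A weaker hypothetical confounder leaves part of the effect intact.
print(f"adjusted estimate at 5%: {adjusted_estimate(estimate, std_error, dof, 0.05, 0.05):.3f}")
```

Reading the output requires a substantive judgment about how strong an omitted confounder could plausibly be, which is the discussion the sensitivity analysis is meant to prompt.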

As a form of manual sensitivity analysis, some empirical research attempts to bound the total treatment effect by estimating two regressions, one that includes M

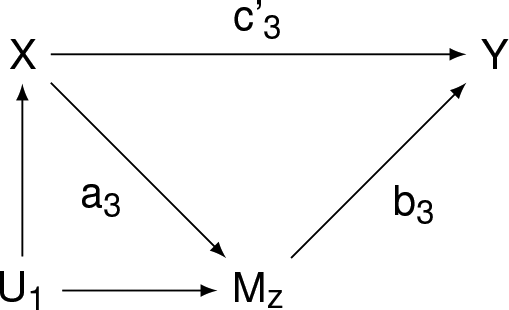

Based on general bias expressions in Groenwold, Palmer & Tilling (2021), I derive conditions under which bias direction is identifiable solely based on effect signs, and in the context of a more realistic setting that combines all the challenges discussed so far: a mediator variable that also serves as proxy control, paired with S that makes M

Combinations of effect directions that allow bounding

This is useful for two reasons. First, Figure 7 allows a researcher to map their assumed effect directions in their own theoretical model setup onto these graphs. If they match, this may enable the researcher to identify the upper and lower limit of their total treatment effect through a bounding exercise. Second, Figure 7 is useful due to all the combinations and settings it does not display. In other words, it serves as a reminder that conducting two regressions and claiming they bound the true effect without carefully considering the underlying assumptions is, in most instances, wrong. Even slight changes to these setups can result in different dynamics that render bias direction dependent on relative effect sizes (Elwert & Winship, 2014; Cinelli, Forney & Pearl, 2020; Groenwold, Palmer & Tilling, 2021). Therefore, when arguing for a certain bias direction, researchers must exercise caution and be transparent regarding their assumptions. Finally, it is important to recall that even when the total treatment effect is successfully bounded, its possible realizations are not uniformly distributed between the two limits and may cluster close to either boundary.
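The warning against naive bounding can be checked analytically in a simplified setting. The sketch below is not the derivation from the Online appendix and omits the variable S: it assumes a linear model with an unobserved confounder U, a proxy M that is affected by both X and U, and unit-variance errors. All parameter names and values are hypothetical. The function returns the large-sample coefficient of X from the regression without M and from the regression with M, and a helper reports whether the two bracket the true total effect.

```python
def probability_limits(x_to_m, m_to_y, x_to_y, u_to_x, u_to_m, u_to_y):
    """Large-sample coefficients on X, with and without conditioning on M.

    Assumed linear model with independent unit-variance errors and Var(U) = 1:
      X = u_to_x*U + e_x
      M = x_to_m*X + u_to_m*U + e_m
      Y = x_to_y*X + m_to_y*M + u_to_y*U + e_y
    """
    var_x = u_to_x ** 2 + 1
    cov_ux = u_to_x
    cov_um = x_to_m * u_to_x + u_to_m
    cov_xm = x_to_m * var_x + u_to_m * u_to_x
    var_m = x_to_m ** 2 * var_x + u_to_m ** 2 + 2 * x_to_m * u_to_m * u_to_x + 1

    total = x_to_y + x_to_m * m_to_y

    # Regression of Y on X only: Cov(Y, X) / Var(X).
    cov_xy = x_to_y * var_x + m_to_y * cov_xm + u_to_y * cov_ux
    without_m = cov_xy / var_x

    # Regression of Y on X and M: the omitted U loads onto X with weight delta_x,
    # the partial coefficient of X when U is projected on X and M.
    delta_x = (cov_ux * var_m - cov_xm * cov_um) / (var_x * var_m - cov_xm ** 2)
    with_m = x_to_y + u_to_y * delta_x
    return total, without_m, with_m


def brackets_truth(total, without_m, with_m):
    """True if the two regression coefficients enclose the total effect."""
    return min(without_m, with_m) <= total <= max(without_m, with_m)


# Scenario A: every assumed path is positive.
scenario_a = probability_limits(x_to_m=0.5, m_to_y=1.0, x_to_y=1.0,
                                u_to_x=1.0, u_to_m=1.0, u_to_y=1.0)
# Scenario B: identical signs except that the mediator lowers the outcome.
scenario_b = probability_limits(x_to_m=1.0, m_to_y=-0.5, x_to_y=1.0,
                                u_to_x=1.0, u_to_m=0.5, u_to_y=1.0)

for name, result in (("A", scenario_a), ("B", scenario_b)):
    total, without_m, with_m = result
    print(f"scenario {name}: truth={total:.3f}, without M={without_m:.3f}, "
          f"with M={with_m:.3f}, bracketed={brackets_truth(*result)}")
```

In the second scenario both regressions overstate the total effect, so reporting them as lower and upper limits would be misleading. Changing a single sign is enough to move from one case to the other.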

The Online appendix section 2 provides further analytical results that can help to probe empirical findings in a more nuanced way (see Online Equations 2.2a and 2.5). Based on these expressions, researchers can explicitly state their assumed data generating process to examine the direction of bias in their projects, whether their regression coefficient may be a conservative or inflated estimate, as well as whether their total treatment effect can be bounded. This discussion of the opportunities for and limitations of inferring the direction of bias should not discourage researchers from reporting different model specifications. While special attention should be given to a careful interpretation and prudent inference, it is always good practice to exercise transparency over the results’ model dependence. To this end, Young & Holsteen (2017) developed a systematic framework for estimating and reporting model uncertainty and robustness in the context of different specifications.

Lagging covariates

The use of panel data in peace and conflict studies is ubiquitous, presenting unique challenges and opportunities. Research on social science methodology made important advances on the practice of lagging as a way to ameliorate reverse causality between treatment and outcome as a source of endogeneity.

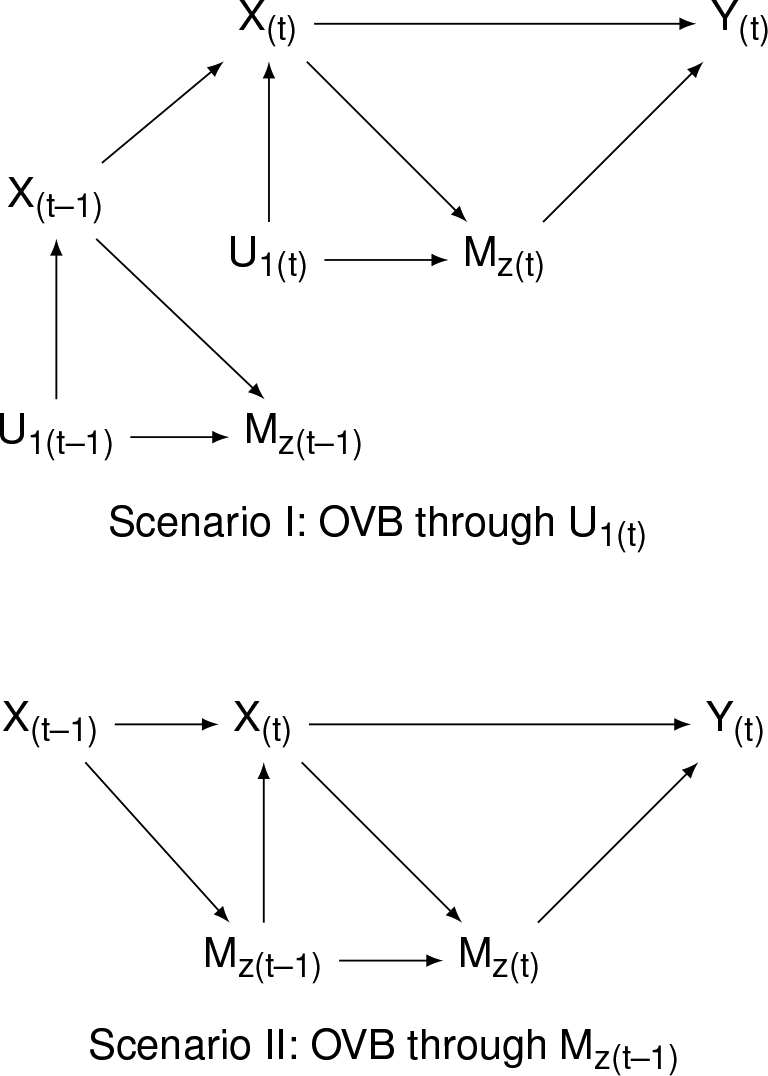

Lagging a covariate as a way of addressing the proxy control problem has not received systematic

Proxy control (panel)

Figure 8 visualizes two scenarios that help to exemplify the potential use and limitations of lagging to address the proxy control problem. Scenario I offers a simple extension of Figure 6, adding temporal dependence to the treatment X. In this particular case, the total treatment effect can be estimated by using past values of X and not conditioning on M

In Scenario II, past values of M

Total effect decomposition (TED)

Finally, a potential solution to the proxy control problem is offered by the TED approach developed by Aklin & Bayer (2017). It is an intuitive way to recover unbiased effect estimates for the case of a binary treatment (dummy) variable (X

1. The outcome Y is regressed on all covariates that are pretreatment (all confounders Z; these must be unrelated to any posttreatment variable M
2. Using the estimates from the previous step, predicted values are calculated for all treated observations (X = 1). Also written as predicting:
3. The total effect estimate c is the mean difference between the predicted values of the previous step (
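The three steps can be sketched on simulated data. Two caveats apply. First, the sketch assumes that the first-step regression is fitted on untreated observations only and that the predictions are compared with the observed outcomes of the treated, which amounts to a regression-imputation estimator; the text above does not spell out these details, so whether this matches the procedure of Aklin & Bayer (2017) exactly is an assumption on my part. Second, the data generating process and all path strengths are invented for illustration.

```python
import random


def ols(y, columns):
    """Least-squares coefficients for y on an intercept plus the given columns."""
    rows = [[1.0] + [col[i] for col in columns] for i in range(len(y))]
    k = len(rows[0])
    # Normal equations (X'X) beta = X'y, stored as an augmented matrix.
    A = [[sum(r[i] * r[j] for r in rows) for j in range(k)]
         + [sum(r[i] * yi for r, yi in zip(rows, y))] for i in range(k)]
    # Gauss-Jordan elimination with partial pivoting.
    for i in range(k):
        p = max(range(i, k), key=lambda r: abs(A[r][i]))
        A[i], A[p] = A[p], A[i]
        for r in range(k):
            if r != i:
                f = A[r][i] / A[i][i]
                A[r] = [x - f * z for x, z in zip(A[r], A[i])]
    return [A[i][k] / A[i][i] for i in range(k)]


random.seed(3)
n = 20000
Z = [random.gauss(0, 1) for _ in range(n)]                          # pretreatment confounder
X = [1 if 0.8 * z + random.gauss(0, 1) > 0 else 0 for z in Z]       # binary treatment
M = [0.5 * x + random.gauss(0, 1) for x in X]                       # posttreatment variable
Y = [1.0 * x + 1.0 * m + 0.7 * z + random.gauss(0, 1)
     for x, m, z in zip(X, M, Z)]                                   # outcome
true_total = 1.0 + 0.5 * 1.0                                        # 1.5

untreated = [i for i in range(n) if X[i] == 0]
treated = [i for i in range(n) if X[i] == 1]

# Step 1 (assumed): regress Y on pretreatment covariates among the untreated.
intercept, slope_z = ols([Y[i] for i in untreated], [[Z[i] for i in untreated]])

# Step 2: predict the outcome for every treated observation from that fit.
predicted = [intercept + slope_z * Z[i] for i in treated]

# Step 3 (assumed): mean difference between observed and predicted outcomes.
ted_estimate = sum(Y[i] - p for i, p in zip(treated, predicted)) / len(treated)

print(f"true total effect: {true_total:.2f}")
print(f"TED-style estimate: {ted_estimate:.2f}")
```

The posttreatment variable M never enters the regression, yet its contribution is retained in the estimate because the treated units' observed outcomes already include it.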

To summarize, addressing the challenge of a proxy control requires nuance. When having to choose between OVB and posttreatment bias, there is no all-in-one solution: depending on the theoretical framework and empirical setup, different conditions and assumptions apply. In all instances, however, exercising transparency and prudence in the interpretation of results is key. To this end, sensitivity analyses are instrumental to the quantification and communication of uncertainty.

Conclusion and implications for applied research

The peace and conflict research programme has continuously improved its application of quantitative methods. Works such as Clarke (2009) and Schrodt (2013), and seminal contributions by Gary King, Kosuke Imai, and others, substantially contributed to scholars’ understanding and awareness. Prominently, this included the slow abandonment of garbage can models (cf. Achen, 2005). However, while the notion of ‘more covariates is always better’ became rightfully outdated, less attention was given to the suitability of those covariates that remained and make up today’s models in peace and conflict research. Many studies justify the inclusion of covariates merely based on their relationship with the outcome, or worse, by declaring them the ‘usual set of controls’ – the inclusion of which is, oftentimes, either pointless or detrimental for minimizing bias in estimation.

There is much to consider in the context of research design and model specification, a discussion of which goes beyond the scope of any individual article. I focus on one issue that can help to significantly improve estimation practice in peace and conflict studies. Manuscripts must give more emphasis to the discussion of model specification, explaining how each covariate relates not only to the outcome, but also to the explanatory variable of interest (the treatment). In doing so, the direction of these relationships requires attention: does the covariate influence the treatment, or does the treatment influence the covariate?

The direction of the effect between the treatment and each covariate matters. Conditioning on a posttreatment variable, that is, a covariate preceded by the treatment, biases the total treatment effect estimate. Meanwhile, even if the aim is to isolate an individual mechanism (direct treatment effect), due care has to be given to the modelling strategy and underlying assumptions to mitigate bias. The solution for avoiding posttreatment bias is easy: not to include posttreatment variables in one’s model specification. However, there are variables that may be partly influenced by the treatment, but at the same time also proxy for an exogenous confounder. Not conditioning on them means to avoid posttreatment bias, but to risk OVB – conditioning on them means to account for OVB, but to accept posttreatment bias. These ‘proxy controls’ require a careful assessment and transparent discussion. A prudent way to address such a dilemma is to conduct a computational sensitivity analysis that quantifies the extent to which research findings are sensitive to OVB. Other potential avenues, depending on the research setup and the underlying assumptions, are to conduct an analytical sensitivity analysis, lag covariates to temporally precede the treatment variable, or follow the TED approach.

I show that a majority of quantitative hypothesis tests in recent publications in the field of peace and conflict research may be biased due to a lack of consideration for the issue of posttreatment variables. Just as reverse causality between the treatment and the outcome is an important point of consideration for authors, reviewers and editors, causal direction between the treatment and covariates should be as well. Moreover, a majority of evaluated studies actively interpret their covariates’ effect estimates or significance levels, suggesting a widespread lack of awareness for the role of model specification. It is this awareness that this study seeks to raise, in an effort to facilitate transparent discussions on variable selection in the peace and conflict research programme. Questions surrounding research design and model specification are never easy to answer, but they are important to ask.

Replication data

The dataset, codebook, and script for the article review, along with the Online appendix, are available at https://www.prio.org/jpr/datasets/. Data management was conducted using R.

Acknowledgements

I thank the Journal of Peace Research editors and two anonymous reviewers for their helpful feedback, as well as Patrick Bayer, Charles Butcher, Daina Chiba, Amélie Godefroidt, Thea Johansson, Moritz Marbach, Phillip Nelson, Clara Neupert-Wentz, Espen Geelmuyden Rød, Christoph V Steinert and Felix B Weber for many useful comments and conversations that helped shape this article. Moreover, I am thankful for great suggestions by the Norwegian University of Science and Technology peace research group, participants at the University of Essex’ Michael Nicholson Centre for Conflict and Cooperation workshop and the Interdisciplinary Peace and Conflict Research Network workshop, as well as by the peace researchers at the 2021 annual conferences of the Conflict Research Society and of the German Association for Peace and Conflict Studies. Special thanks to Christoffer Andersen, Lasse Holtar, Conor Kelly, Andreas Lillebråten and Niclas Weischner for their excellent research assistance. All mistakes are my own.

Funding

The author received no independent financial support for the research, authorship, and/or publication of this article.