Abstract

Do survey participants in conflict zones respond differently if they have been interviewed before? Academic and policy interest in postwar political opinion has increased tremendously. One unexpected consequence of this surge of survey research is a growing probability that individuals will be interviewed multiple times. However, if participating in one survey causes respondents to change their attitudes or behavior, their subsequent survey responses may be biased in comparison to the rest of the sample population. Our article aims to investigate such ‘survey participation effects’ in conflict contexts. We draw on original survey data collected in the eastern Democratic Republic of Congo (DRC). In our representative sample, 18% of respondents report that they have been interviewed before. Multivariate analyses demonstrate that their stated attitudes on social relations, political institutions, gender norms, and wartime victimization differ substantively from the responses of first-time interviewees. Moreover, our analyses indicate that experienced respondents have specific response styles – in particular, a tendency to support extreme response options. While substantive bias in multivariate analyses seems to be rather rare, our findings indicate that researchers should be aware of the footprints of data collection efforts in areas frequently targeted by household and opinion surveys.

Introduction

An increasing number of research teams have been traveling to conflict zones to collect data for aid impact evaluations, academic research projects or more general opinion polls. This development comes with an increasing probability that households and individuals will be surveyed multiple times. However, being exposed to interview situations may affect people’s attitudes, behavior, and general response behavior. This, in turn, can influence their answers in subsequent surveys. This article aims to investigate the potential effects of repeated interview participation on survey-based descriptive and inferential analyses focusing on the micro-level dynamics of violent conflict.

It has been recognized since at least 1940 that a ‘big problem yet unsolved is whether repeated interviews are likely, in themselves, to influence a respondent’s opinions’ (Lazarsfeld, 1940: 128). For example, being exposed to a survey question might make respondents reflect and gather information about the topic after the survey. Repeated interviews may also increase trust in survey processes and the willingness to provide sensitive information to unknown enumerators. Conversely, participation in multiple surveys may create a certain degree of survey fatigue that reduces people’s motivation to substantively engage with survey items (Lund, Shackleton & Luckert, 2011).

All these factors could affect people’s opinions and corresponding answers in subsequent interviews. Moreover, they may also shape people’s response styles: ‘a systematic tendency to respond to a range of questionnaire items on some other basis than the specific item content’ (Paulhus, 1991: 17). A large body of research in marketing, cognitive psychology, and survey methodology has investigated the potential effects of repeated survey participation – focusing almost exclusively on participation in panel surveys in Western states (see comprehensive reviews and discussions in Cantor, 2007; Warren & Halpern-Manners, 2012). Several studies find evidence of ‘panel conditioning’ effects as outlined above, albeit of different types and in different directions (Bergmann & Barth, 2018; Warren & Halpern-Manners, 2012). At the same time, a substantial number of studies have found no or only very small effects (Smith, Gerber & Orlich, 2003; Bartels, 1999). This inconclusiveness may at least partly be traced back to the heterogeneous effects of multiple survey participation depending on survey design, content, and socio-economic contexts.

We investigate these survey participation effects in face-to-face perception surveys in the context of armed conflicts. The effects of survey experience may be particularly pronounced under these specific circumstances: in the light of pressing socio-economic needs and little formal education, individuals may have less crystallized attitudes on abstract concepts and less practice in translating their opinions into choices in closed-ended questions. High levels of violence and polarization can also increase distrust in unknown interviewers and reluctance towards disclosing sensitive information. Finally, as a result of a high dependence on international assistance, respondents may initially place high (and potentially unwarranted) expectations on survey participation when interviews are considered part of foreign aid planning.

Under these scope conditions, previous survey participation may have particularly strong effects in terms of (a) increasing respondents’ practice in thinking about and responding to survey items, (b) decreasing distrust in the interview process, and (c) creating a certain degree of disillusionment and survey fatigue. Consequently, the response patterns of experienced interviewees may substantially differ from those of first-time survey participants.

We investigate these potential effects using original survey data collected in the eastern Democratic Republic of the Congo (DRC). Together with a Congolese survey organization, we collected data from 1,000 randomly sampled households in 100 villages in the eastern province of South Kivu in 2017. Importantly, we also asked whether respondents had previously participated in similar surveys. Our analyses demonstrate substantive correlations of this self-reported prior survey participation with a wide array of outcomes relating to people’s social and political attitudes. Moreover, we document significant associations with response styles: people who have already participated in surveys tend to select more extreme response options in Likert-scaled items. Lastly, we show that previous survey experience can moderately bias certain ‘standard’ analyses of the sociopolitical legacy of violence. This, however, seems to be the case only for a limited and specific set of models.

These findings contribute to research on the specific methodological challenges of empirical analyses in conflict-affected areas (e.g. Brück et al., 2016; Haer & Becher, 2012). Previous studies have highlighted that interview situations and interviewer–interviewee interactions differ from those in surveys conducted in stable political contexts. Among others, particularities of conflict zones can lead to more pronounced interviewer effects that shape the interviewees’ response patterns (Adida et al., 2016). We demonstrate that surveys in conflict zones also risk being affected by previous survey participation. While substantive bias in multivariate analyses seems to be moderate, our findings indicate that researchers should be aware of the footprints of data collection efforts in areas frequently targeted by household and opinion surveys.

Opinion surveys in armed conflicts

Research on the causes and consequences of violent conflict has long focused on the country level, investigating how political, social, and economic factors increase or decrease the risk of violent conflict, its intensity or its termination. In recent years, however, the field has experienced a micro-level turn. A growing number of researchers are now focusing on trying to understand the motives, attitudes, and interpretations of individuals actively involved in or passively affected by violent conflict. Many of these studies rely on survey data collected in standardized perception interviews (see overview in Brück et al., 2016).

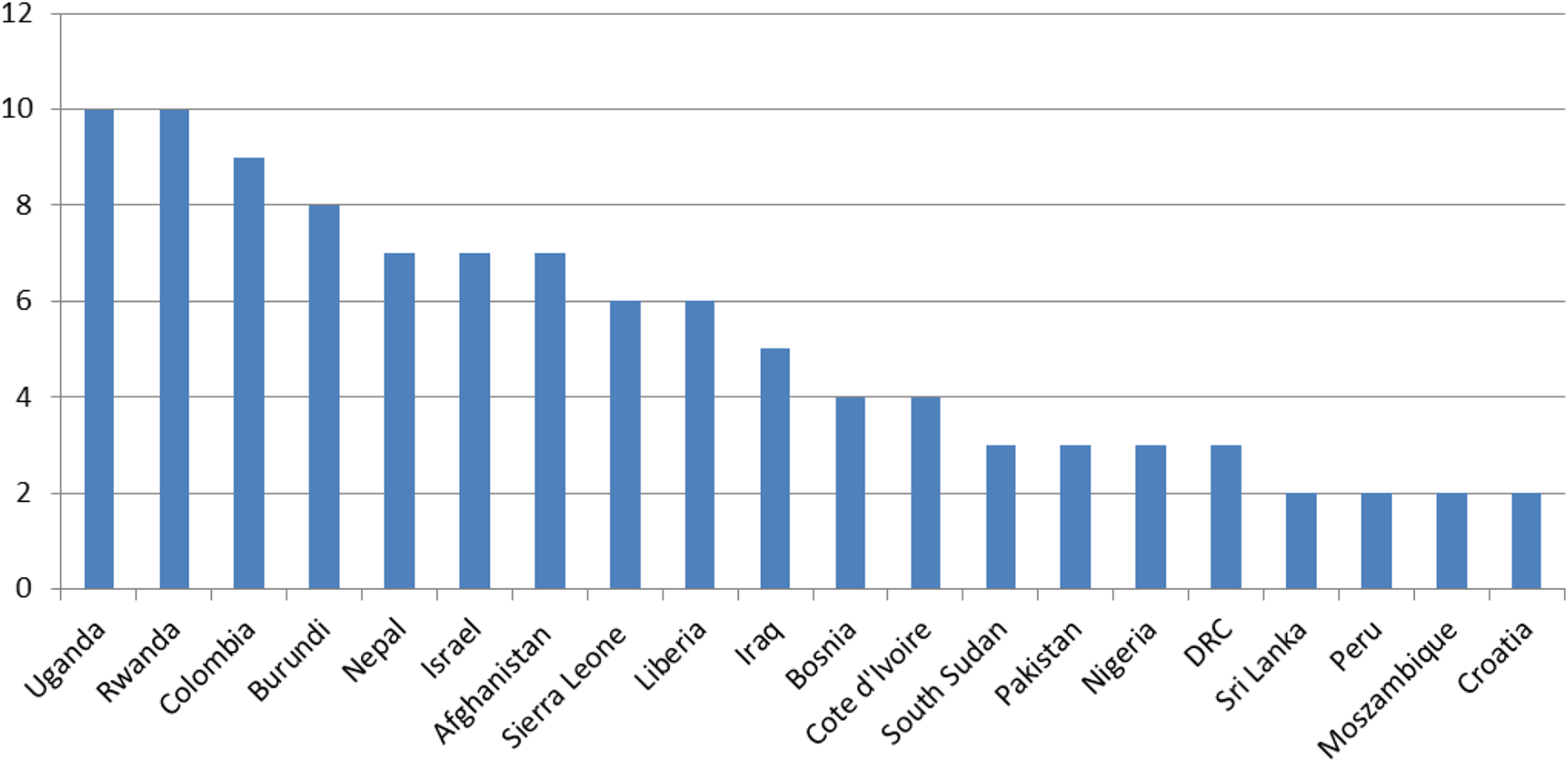

In order to get a general sense of this research field we have collected information on research articles published in the past ten years (2008–17) featuring survey-based analyses of conflict zones (a total of 113 articles).

Figure 1. Survey-based research published 2008–17 (count)

This comparatively high level of regional concentration increases the likelihood that individuals will be surveyed multiple times by different academic or development organizations – most notably, if we take into consideration that our data are likely to capture only a very small fraction of surveys carried out in these countries (only those that resulted in published academic work). The following sections very briefly summarize potential effects of multiple survey participation and then present some explorative hypotheses on how these effects may shape response patterns in conflict and post-conflict settings.

Effects of multiple survey participation

Having a complete stranger enter your home to ask a battery of questions about politics, economics, or social issues for up to 120 minutes is an unusual event. If this experience causes respondents to change their attitudes or their more general response behavior, any subsequent survey responses may be biased in comparison to the population from which they were originally sampled (Bartels, 1999: 4).

First, previous survey participation may act as a stimulus that influences actual attitudes, behavior, and knowledge on specific aspects included in the respective survey instrument. The stimulus raises awareness and induces people to acquire more information and to reconsider their attitudes and actions. For example, as a consequence of a survey on voting behavior people may pay more attention to the news, discuss political campaigns with their friends and family, or register to vote. This may affect their understanding of election processes or their appraisal of the relevance of voting (Yalch, 1976; Traugott & Katosh, 1979).

Second, in addition to influencing actual attitudes, survey participation may also influence people’s general response behavior. The more experienced a respondent is at thinking about a given topic, the more he or she will be able to think anew about that topic and to answer relevant questions (see overview in Cantor, 2007). Familiarization with the survey procedure due to repeated participation can increase respondents’ trust in the survey process and make them more willing to disclose personal information (Struminskaya, 2016; Waterton & Lievesley, 1989). Finally, previous survey participation may also reduce motivation and effort to answer accurately if respondents have not experienced any tangible effects from initial interviews (Krosnick, 1991).

A large body of research has highlighted the potential effects of multiple survey participation. The following section specifies the expected effects of multiple survey participation in conflict zones. As we cannot draw on an established body of theoretical and empirical research on such settings, our hypotheses represent exploratory ‘most likely’ associations rather than strong, fully theoretically grounded expectations.

Multiple survey participation in conflict zones

Previous research indicates that the presence and magnitude of survey participation effects depend, among other factors, on (a) individuals’ levels of practice in thinking about and responding to survey items, (b) their trust in the interview process, and (c) their motivation to participate in the interview and provide accurate responses (Cantor, 2007; Bergmann & Barth, 2018; Warren & Halpern-Manners, 2012). Our main arguments presented below rely on the assumption that first-time interviewees in conflict zones display – on average – particularly little practice, high levels of distrust in the interview process, and relatively high expectations and motivation to participate. Under these rather pronounced baseline conditions, one single prior survey participation can make a great difference in terms of individuals’ attitude crystallization and familiarity with closed-ended survey items, their assessment of the trustworthiness of the interview process, and their expectations regarding potential beneficial outcomes of interview participation. Consequently, we expect the marginal effect of an individual interview participation to be relatively strong compared to stable developing countries. The following subsections elaborate on these scope conditions and present our preliminary hypotheses.

Social and political attitudes

Fragile states have been described as the ‘poorest of the poor’ (OECD, 2010: 75). Grave socio-economic conditions are likely to influence respondents’ practice in answering standardized questions relating to abstract notions. Many respondents will enter survey situations without having had the opportunity to give much thought to their concrete positions on matters that are rather remote from their pressing daily priorities. Due to little formal education and experience in interacting with formal (state) institutions, interviewees are also more likely to be unaccustomed to the exam-like style of closed-ended survey questions (Lupu & Michelitch, 2018: 202). They will tend to have less practice at translating their attitudes into choices of specific response options on fine-grained scales relating to rather abstract survey items (Krosnick, 1991).

Previous research has found that such conditions are particularly likely to produce rather strong survey participation effects (e.g. Cantor, 2007). Attitudes vary in terms of their degree of ‘crystallization’ and accessibility: the less people have systematically and repeatedly thought about certain issues, the more inconsistent, malleable, and inaccessible are their attitudes (e.g. Tourangeau, Rips & Rasinski, 2000). Less crystallized attitudes, in turn, are more sensitive to cognitive stimuli: ‘the act of participating in the baseline survey may set in motion a series of cognitive processes that change that attitude by the time of a follow-up survey’ (Warren & Halpern-Manners, 2012: 499). In particular, respondents may express stronger and more consistent opinions as a result of repeated interviews (Bergmann & Barth, 2018).

Consequently, if baseline practice and attitude crystallization are particularly low in conflict zones and if previous survey experience can lead to changes of less crystallized attitudes, then we expect that experienced interviewees respond differently to survey items on concepts commonly investigated in conflict zones (e.g. social capital, state-society relations, gender norms) than first-time interviewees (Hypothesis 1).

Social desirability bias and nonresponse

The most obvious specificity of conflict contexts is a particularly high level of violence and insecurity. This is likely to influence respondents’ trust in the interview process. Violence and insecurity may increase hostile attitudes towards individuals that do not belong to people’s in-group – such as unknown interviewers (e.g. Bauer et al., 2014). Moreover, during civil wars and in polarized postwar societies, individuals’ personal safety depends on how they are perceived by armed actors: as supporters, opponents or bystanders. In such contexts of high insecurity any kind of disclosure of preferences can be potentially dangerous. The resulting fears are likely to impact on individuals’ response patterns in interview situations as they ‘fear that providing the “wrong” answer will lead to social censure and may even threaten their safety’ (Bullock, Imai & Shapiro, 2011: 365).

Previous research has found that repeated survey participation can mitigate such fears as people gain more trust in interviewers, the interview process, and assurances of confidentiality. As respondents find that interviews do not create any negative repercussions, they become more willing to report on attitudes and experiences (Tourangeau, Rips & Rasinski, 2000; Waterton & Lievesley, 1989). This can reduce the prevalence of certain response styles such as social desirability bias and item non-response. Social desirability is the tendency to respond in agreement with prevailing norms. Item non-response occurs when interviewees do not provide any substantive response to specific survey items. Instead, they tell the interviewer that they ‘don’t know’ the response (Krumpal, 2013).

Thus, if baseline levels of fear and distrust are particularly high in conflict zones and if previous survey experience can mitigate these fears, then we expect experienced respondents to exhibit lower levels of social desirability bias (Hypothesis 2a) and item non-response (Hypothesis 2b) than first-time interviewees.

Extreme response and acquiescence bias

The third specificity of conflict contexts is a high dependency on non-state international assistance. In conflict-affected countries such as Liberia, South Sudan or the DRC, up to 80% of basic services like education or health are being provided by humanitarian and faith-based relief organizations (ODI, 2012). In such a context, the sheer presence of researchers and enumerators can generate expectations of future development and humanitarian assistance (Berja, 2016; Lund, Shackleton & Luckert, 2011). Consequently, individuals in conflict zones may be particularly likely to interpret interview participation as an opportunity to generate tangible benefits for the respondent and/or her local communities. This in turn is likely to increase interviewees’ motivation to participate in surveys and invest effort in providing sensible and adequate responses.

Multiple survey participation can reduce this motivation (Bergmann & Barth, 2018; Warren & Halpern-Manners, 2012). Respondents may feel disillusioned as they realize that their expectations have not materialized in the aftermath of their previous survey participation. Less motivated individuals tend to evaluate questions only superficially and try to use strategies that ease the response process (Krosnick, 1991). This can reinforce certain response styles such as extreme response bias or acquiescence bias. Extreme response bias is the tendency to select extreme response options on a rating scale, independent of the specific content of a question – for example, ‘I disagree a lot’ rather than ‘I disagree’. Low motivation induces respondents to stop processing information after the first response option or to pick the last option offered to them. Acquiescence (‘yea-saying’) is the tendency to agree with items, regardless of their content. Most assertions offered in survey questions are probably reasonable, making it easy to justify saying ‘I agree’. Only respondents who are motivated to devote the greater effort needed to generate reasons why a statement may not be true are likely to disagree (Krosnick, 1991).

Thus, if baseline levels of motivation are particularly high in conflict zones and if multiple survey participation can have a demotivating effect, then we expect experienced respondents to display higher levels of extreme response (Hypothesis 3a) and acquiescence bias (Hypothesis 3b) than first-time interviewees.

The three specificities of conflict zones highlighted above define the scope conditions of our three hypotheses: previous survey participation is particularly likely to exert strong effects on attitudes, to reduce social desirability bias and item non-response, and to increase extreme response and acquiescence bias under conditions of high levels of underdevelopment, violence, and aid dependency. These conditions are not exclusive to conflict contexts and not all conflict contexts display all three characteristics to the same extent. However, on average, we expect the combination of these conditions to be more likely in contexts of ongoing or recent phases of armed conflict.

Survey data from the Eastern DRC

We test our hypotheses with survey data collected in eastern DRC. In the light of the scope conditions mentioned above, we consider the region to be a ‘most likely’ case: eastern DRC is marked by intensive phases of violence, very low socio-economic development, and a high reliance on foreign aid. Consequently, we consider the case well suited for a first and rather exploratory analysis of survey participation effects.

Eastern DRC is recovering from a series of armed conflicts that were a direct result of the 1994 genocide in Rwanda. After years of intense warfare, a transitional government took over power in 2003. To this day, the provinces of North and South Kivu continue to experience high levels of instability and localized violent conflicts involving a great number of armed groups and the Congolese army. Much of the violence has been directed against the civilian population. Massacres, rape, and displacement are widespread (see e.g. Prunier, 2011).

This violence has led to a massive humanitarian crisis. The DRC is one of the poorest countries worldwide. It was ranked 176th out of 189 states in the 2018 Human Development Index. According to recent estimates, around 13 million people in the DRC are affected by severe food insecurity and need humanitarian assistance; around 4.5 million people have been displaced inside the country.

In practice, public services are largely provided by nongovernmental organizations (Trefon, 2011). These agencies often use surveys to collect baseline data and determine the need for specific interventions. Apart from these internal surveys (of which there have likely been several dozen), a few large-scale surveys have been carried out in the DRC. So far, three waves of the Demographic and Health Surveys (DHS) have been implemented. The Harvard Humanitarian Initiative has collected several survey waves on individual-level perceptions on conflict and justice. Finally, several academic researchers have either collected survey data themselves or cooperated with agencies to accompany impact evaluations (Humphreys, de la Sierra & Van der Windt, 2015).

To assess the extent to which multiple survey participation may impact response patterns, we rely on an original opinion survey with 1,000 respondents (in 100 randomly sampled villages) in South Kivu carried out between February and March 2017. The primary purpose of the survey was to assess the prevalence of individual and collective exposure to violence in the context of the ongoing conflicts and to understand how these experiences affected social relationships, political and security perceptions, and gender-related norms and practices.

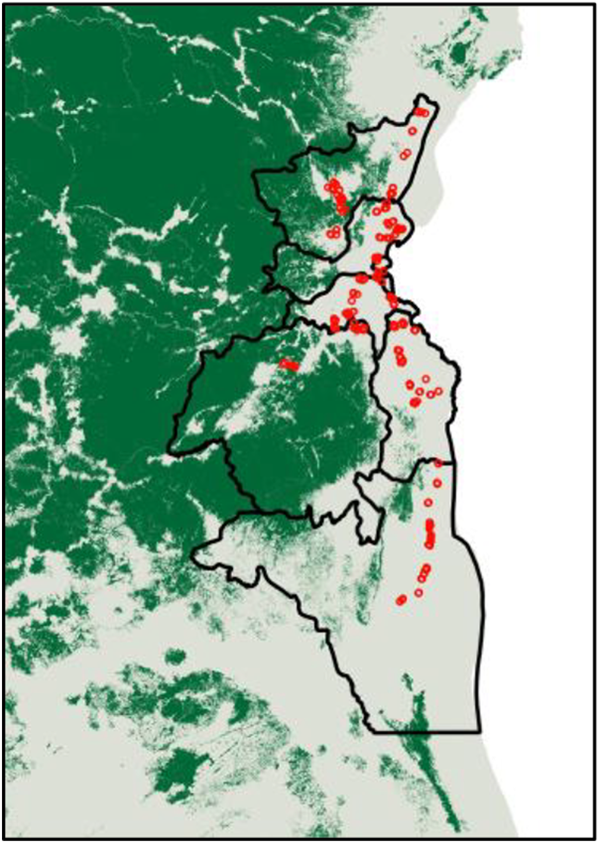

The survey included around 500 questions and follow-up questions on social capital, political attitudes, experiences of violence, and attitudes related to gender equality. The median interview duration was 86 minutes (standard deviation = 24.5). Respondents received 1,500 Congolese Francs (approx. 1.30 USD).

Figure 2. Map of South Kivu and survey locations

We employed a multistage sampling protocol to generate a sample representative of the province (the protocol is available in the Online appendix). The sample excluded major urban areas such as Bukavu to keep the overall demographic conditions (population size, agricultural livelihoods, and infrastructure) among the villages similar. Figure 2 shows the spatial distribution of the survey locations.

To measure our main explanatory variable, Previous survey, we included a question that asked respondents whether they had ever participated in a survey such as ours before. This question was asked at the end of the survey instrument, so that respondents had a good understanding of what ‘survey’ means. In our set of respondents, 18% reported that they had participated at least once in a survey before. Our measure is relatively crude, and we cannot differentiate between whether respondents participated in an academic survey or interviews conducted by nongovernmental organizations or the UN mission. We also do not know which topics have been covered by previous surveys and how respondents experienced these surveys. Despite these limitations, we believe that our measure is an important first step towards exploring the impact of previous survey participations on substantive outcomes and response patterns. We discuss avenues for more refined research on survey participation effects in the discussion section.

Selection into previous survey participation

We start by investigating the determinants of our explanatory variable, Previous survey participation. While we assume that at least some of the variation in this variable is driven by the random sampling strategies of previous large-scale surveys, we do not expect survey participation to be entirely exogenous. For logistical and security reasons, many surveys rely on pure convenience samples, leading to overrepresentation of villages that are easily accessible. Moreover, international and nongovernmental organizations may focus on specific population groups (e.g. violence-affected villages, displaced persons).

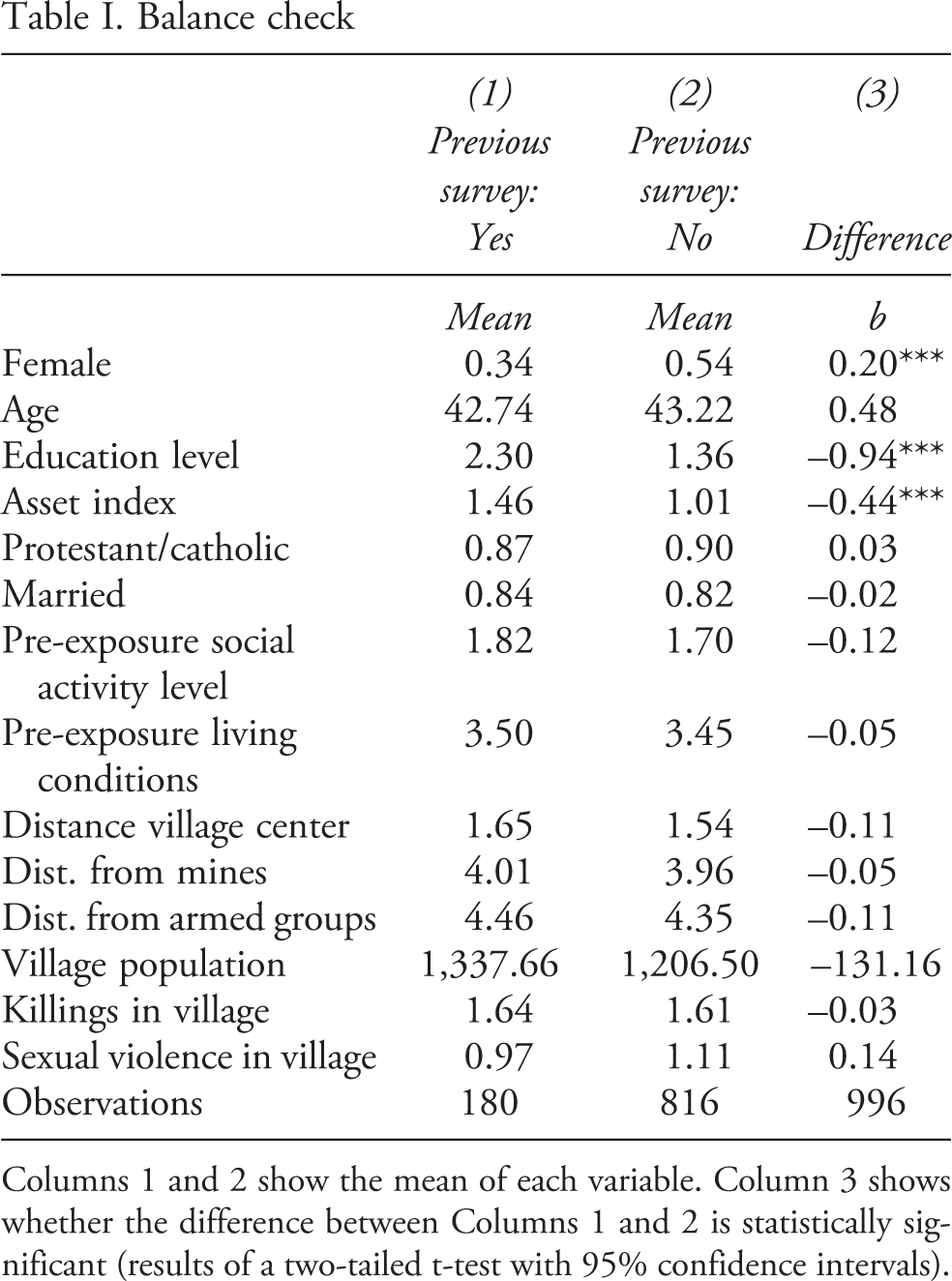

In order to mitigate selection bias in our main analyses we analyze the potential determinants of multiple survey participation. We focus on variables that may confound the relationship between multiple survey participation and response styles. By default, all measures from our household survey must be considered ‘post-treatment’. Specifically, attitudinal factors may themselves be contaminated by interviewees’ response styles. The selection of our control variables therefore focuses on variables that are less susceptible to response bias, because they are drawn from information that is independent of the individual respondent (i.e. village surveys) or that is more verifiable from the outside and may therefore demotivate incorrect responses (e.g. gender, age, distances). Table I provides a balance check for our explanatory variable Previous survey as well as the results of a two-tailed t-test to assess the degree of difference.

Table I. Balance check

Columns 1 and 2 show the mean of each variable. Column 3 shows whether the difference between Columns 1 and 2 is statistically significant (results of a two-tailed t-test with 95% confidence intervals).
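One row of such a balance check can be sketched in a few lines of Python. This is an illustrative sketch only, not the article's code: the function name is hypothetical, the standard error uses Welch's unequal-variance form, and the two-tailed p-value relies on a normal approximation, which is an assumption that is only reasonable for large groups.

```python
from math import sqrt
from statistics import NormalDist, mean, variance


def balance_row(experienced, first_time):
    """Compare one covariate between experienced and first-time respondents."""
    m1, m0 = mean(experienced), mean(first_time)
    # Welch standard error: does not assume equal variances in both groups.
    se = sqrt(variance(experienced) / len(experienced)
              + variance(first_time) / len(first_time))
    t_stat = (m1 - m0) / se
    # Two-tailed p-value from a normal approximation (assumption: large groups).
    p_value = 2 * (1 - NormalDist().cdf(abs(t_stat)))
    return {"mean_experienced": m1, "mean_first_time": m0,
            "difference": m1 - m0, "t": t_stat, "p": p_value}
```

Calling `balance_row` once per control variable would yield the two group means and a significance indicator for each row of the table.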

Empirical strategy

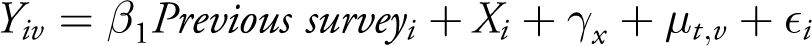

We now turn to investigating our hypotheses on the effects of previous survey participation. We describe the construction of the outcome variables in the respective sections below. Most of our outcome variables are continuous, standardized variables. For these we use simple linear regression models with standard errors clustered at the village level (for binary outcome variables we use both linear probability models and logistic regressions). In our analyses, we estimate the following general model

where Y denotes our outcome of interest for respondent i in village v.
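The equation itself is not reproduced in this version of the text. A minimal sketch that is consistent with the surrounding description, assuming a linear specification and using illustrative notation ($\alpha$, $\beta$, $\gamma$, $\varepsilon$) that is not necessarily the article's, would be:

```latex
Y_{iv} = \alpha + \beta \, \text{Previous survey}_{iv} + X_{i}'\gamma + \varepsilon_{iv}
```

In this sketch, $\beta$ is the coefficient of interest, $X_{i}$ is the vector of control variables, and $\varepsilon_{iv}$ is an error term whose standard errors are clustered at the village level $v$. Enumerator fixed effects would enter as an additional term in the models that include them.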

For each outcome variable, we estimate three main models. The first model includes all control variables in vector Xi presented above, enumerator fixed effects

Hypothesis 1: Individual social and political attitudes

To assess whether previous survey participation influences responses on survey items related to social and political attitudes (H1), we first estimate the effect of previous survey experience on four key outcomes of interest in post-conflict settings: social capital, political/institutional trust, reporting of violence exposure, and gender inequality. We use principal component analysis to identify key components of 86 Likert-scaled questions along these four outcomes. Our social capital measure reflects the frequency of interaction with ethnic kin and non-kin. The political trust variable captures people’s respect for political institutions, in particular the rule of law. Violence exposure relies on questions that ask respondents whether the household has been directly affected by armed group violence (e.g. murder, looting, displacement). Gender inequality loads on value judgements that disempower women. All Likert-scaled and continuous variables have been standardized (std) to make the effect sizes comparable (apart from binary variables Previous survey and Female).
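The standardization step can be read as a z-score transformation, so that coefficients are expressed in standard deviations of the outcome. A minimal Python sketch follows, assuming the sample standard deviation is used (the text does not say which variant was applied); the function name is hypothetical.

```python
from statistics import mean, stdev


def standardize(values):
    """Rescale a variable to mean 0 and (sample) standard deviation 1."""
    m, s = mean(values), stdev(values)
    if s == 0:
        raise ValueError("cannot standardize a constant variable")
    return [(v - m) / s for v in values]
```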

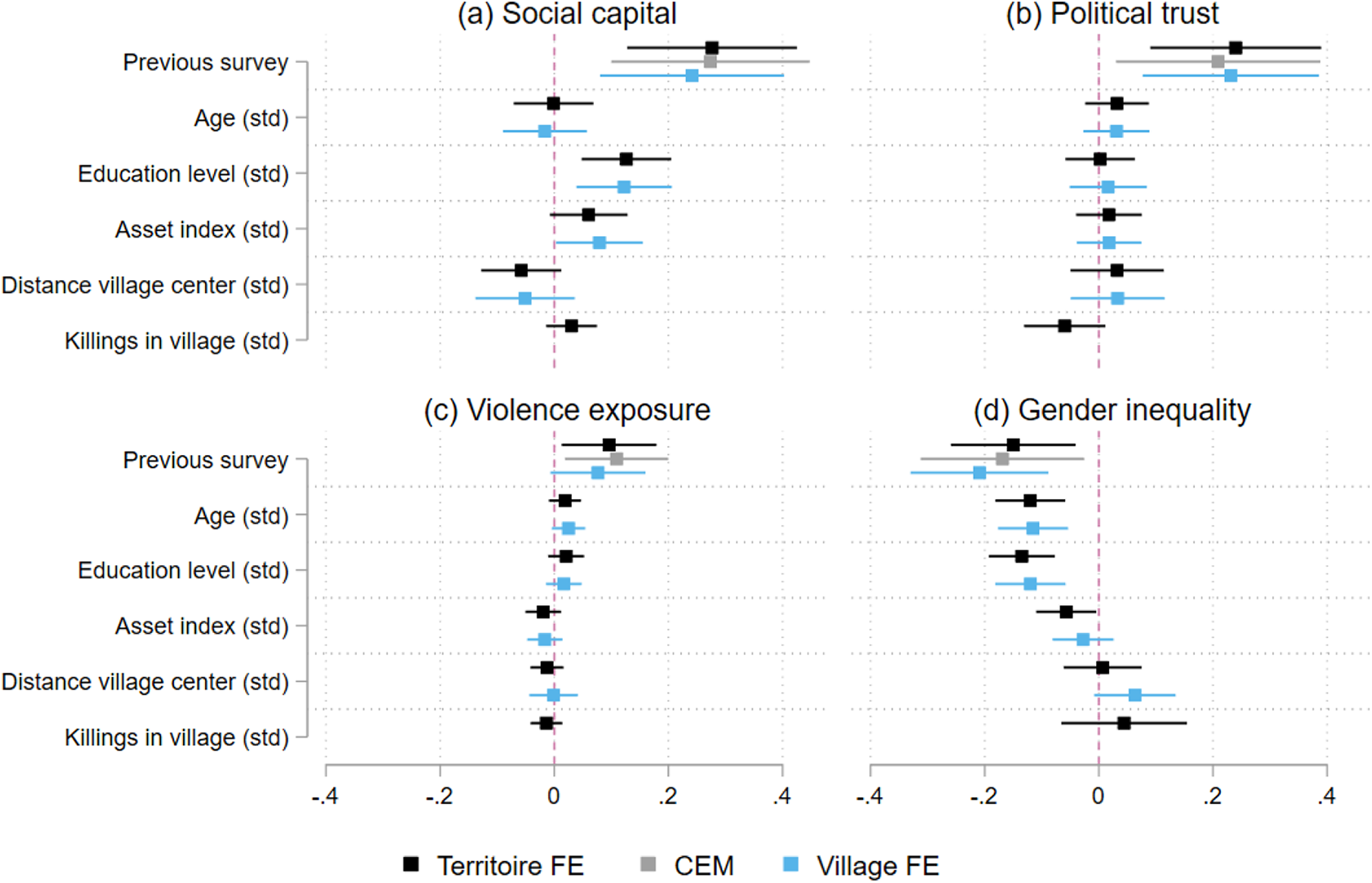

Figure 3 displays the correlations between previous survey participation and these outcomes of interest. As noted before, we estimate three models for each outcome, represented by different colors for point estimates and 95% confidence intervals. We have excluded the Female variable in the graphs because its large effect size dwarfs the other variables’ effects and makes them graphically indistinguishable. The regression tables underlying this plot (including the Female dummy) are available in Tables A1 to A4 in the Online appendix.

The correlations all point in the same direction. Respondents who have participated in previous surveys report higher levels of social capital, more positive views of political institutions, more exposure to violence, and stronger opposition to gender inequality. This pattern may indicate more open and positive attitudes – but also extreme response bias or acquiescence bias. We investigate these possibilities below.

Alternatively, these findings may also indicate reverse causality as more open and trustful people may be more willing to take part in surveys in the first place.

Figure 3. Effect of previous survey on social and political attitudes

Hypothesis 2: Social desirability bias and item non-response

Next, we investigate whether previous survey experience affects social desirability bias (H2a) and item non-response (H2b). To assess effects on social desirability bias, we make use of a list experiment and a direct question on the experience of domestic violence, an experience widely associated with stigmatization and therefore underreporting. For space reasons, we summarize the findings in the main text, but provide full details in the Online appendix.

List experiments are unobtrusive methods to indirectly measure sensitive attitudes, behavior or experiences by adding random noise and thereby granting respondents privacy (Blair & Imai, 2012). We administered a list experiment and a direct question designed to explore the degree of misreporting of domestic violence. The list experiment yields a prevalence rate of domestic violence of 31%, compared to only 22% reported to the direct question. This suggests a misreporting rate of 9 percentage points. The analysis shows that previous survey participation is associated with lower levels of misreporting. However, the results are not statistically significant. Thus, while the findings are generally in line with our theoretical assumptions, results are not sufficiently strong to support the notion that previous survey experience reduces misreporting bias associated with sensitive questions (H2a).
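The logic of the comparison can be illustrated with the simplest list-experiment estimator, the difference in mean item counts between the group that saw the sensitive item and the group that did not. The sketch below is an illustration under that assumption and is not necessarily the estimator used in the analysis; function names and the toy counts are hypothetical.

```python
from statistics import mean


def list_experiment_prevalence(treatment_counts, control_counts):
    """Difference-in-means estimate of the sensitive item's prevalence.

    treatment_counts: item counts from respondents whose list included
    the sensitive item; control_counts: counts from the baseline list.
    """
    return mean(treatment_counts) - mean(control_counts)


def misreporting_gap(list_estimate, direct_share):
    """Gap between the list-based estimate and the direct-question share."""
    return list_estimate - direct_share


# With the shares reported in the text: 0.31 - 0.22, i.e. about 0.09.
gap = misreporting_gap(0.31, 0.22)
```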

Next, we examine whether previous survey participation affects item non-response (H2b). We operationalize item non-response as the share of all 85 Likert-scaled questions in the survey instrument to which respondents have answered that they ‘don’t know’ the response. Higher values therefore indicate a higher share of item non-response (recall that all of our variables have been standardized; therefore, the coefficients themselves are not informative in absolute terms). The corresponding results are reported in Table A10 in the Online appendix.

Figure 4. Effect of previous survey on response behavior
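The item non-response measure described above amounts to a simple per-respondent share. A minimal Python sketch, assuming answers are stored as text labels and that `"don't know"` is the code for a non-substantive answer (both are hypothetical coding choices):

```python
DONT_KNOW = "don't know"  # hypothetical code for a non-substantive answer


def item_nonresponse_share(responses):
    """Share of a respondent's items answered with 'don't know'."""
    if not responses:
        raise ValueError("respondent has no recorded answers")
    return sum(r == DONT_KNOW for r in responses) / len(responses)
```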

Hypothesis 3: Extreme response and acquiescence bias

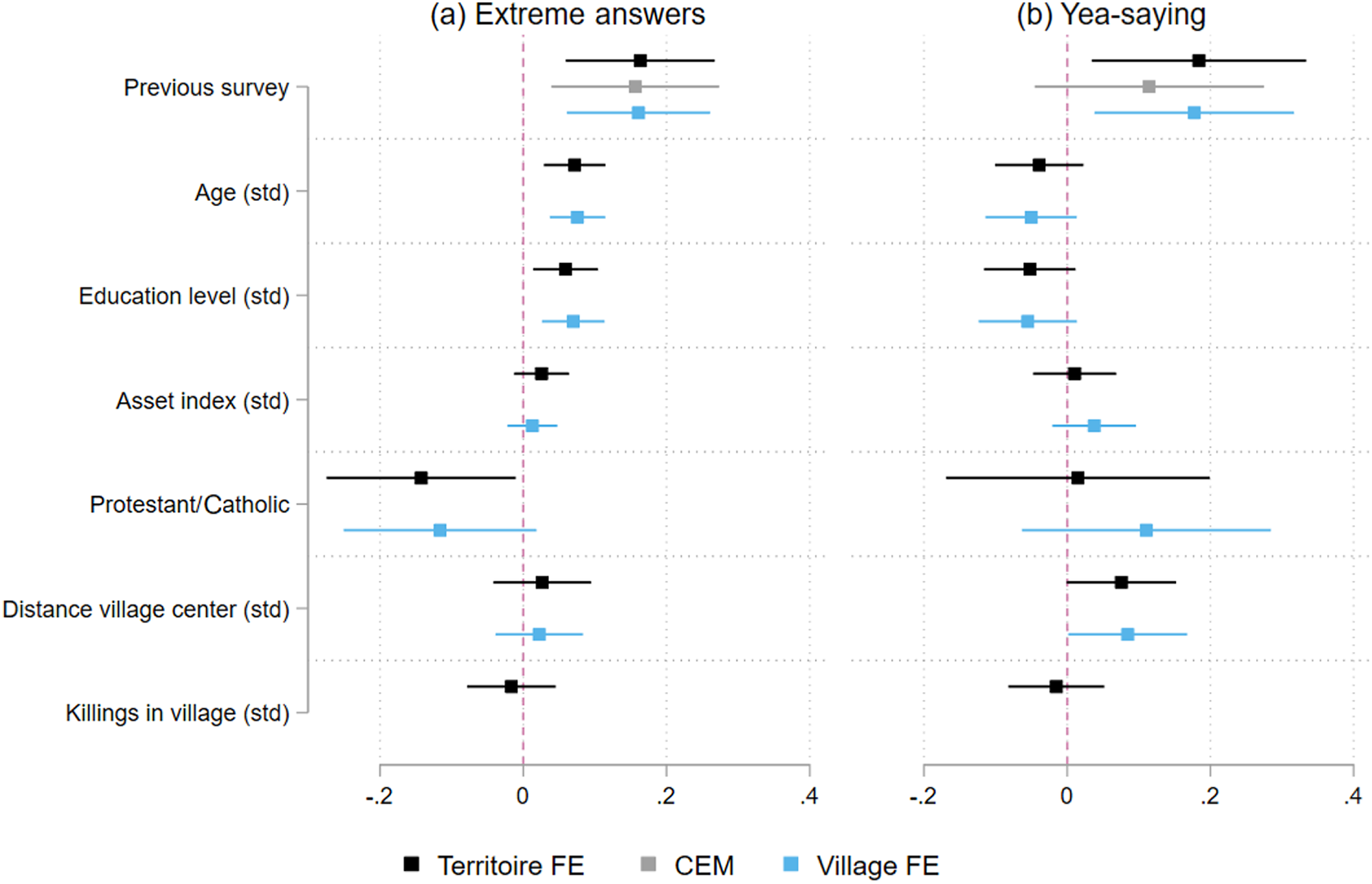

We now turn to extreme responses (H3a) and acquiescence (H3b). In order to test effects of previous survey participation on the first, we follow a procedure suggested by Bachman & O’Malley (1984): we have computed the index for extreme response style as the relative number of scores given within the extreme categories of the rating scale, including ‘strongly agree’ and ‘strongly disagree’. Higher values indicate more extreme response behavior. Panel (a) in Figure 4 plots the effect of previous survey on extreme response behavior according to the three models shown in Table A12 (Online appendix).
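An index of this kind can be sketched as the share of a respondent's answers that fall into the end categories of the scale. The Python sketch below uses hypothetical response labels and assumes that ‘don’t know’ answers are excluded from the denominator, which the text does not specify.

```python
EXTREME = {"strongly agree", "strongly disagree"}  # hypothetical labels
DONT_KNOW = "don't know"


def extreme_response_index(responses):
    """Share of substantive answers that use an extreme scale category."""
    # Assumption: 'don't know' answers do not count towards the denominator.
    substantive = [r for r in responses if r != DONT_KNOW]
    if not substantive:
        return None  # index undefined without any substantive answer
    return sum(r in EXTREME for r in substantive) / len(substantive)
```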

We can see that Previous survey participation has a statistically significant effect on people’s preference for extreme answers. Furthermore, Column 4 in Table A12 shows the results for the effect of previous survey conditional on gender. The interaction term has a negative and weakly significant coefficient, indicating differential effects for men and women.

To assess how far the correlation between Previous survey and extreme response styles may be traced back to reduced motivation and survey fatigue, we investigate whether extreme response behavior is more common towards the end of the survey, where response motivation should be particularly low. To do so, we use the same method to calculate two outcome variables that capture the share of extreme answers, this time using only questions from the first third and the last third of the questionnaire, respectively. We show the results in Tables A13 (first third) and A14 (last third) in the Online appendix. We find no effect for Previous survey experience at the beginning of the survey, but strong effects on extreme answers towards the end of the survey. This finding is in line with the assumption that experienced interviewees are less likely to maintain a high level of motivation to substantively engage with survey questions throughout the entire interview (H3a).

Finally, we also analyze two measures of acquiescent response styles (also known as yea-saying), which we hypothesized to be positively affected by previous survey experience (H3b). First, we simply calculate the ratio of positive to negative responses. Second, we follow a strategy suggested by Arndt & Crane (1975): they assessed two survey items with the same response options, with one item being the mirror image of the other (including a negative term). Without any yea-saying bias, responses would also mirror each other: agreement with one item should correlate with disagreement with the second. Agreement with both statements would indicate yea-saying.

Regarding our first measure, the total share of affirmative responses, Panel (b) in Figure 4 shows the effect of previous survey participation on yea-saying (detailed results are shown in Table A15 in the Online appendix). Apart from the matched sample, we find a positive effect of previous survey participation. For the second measure, we create a dummy variable that equals 1 when people ‘strongly agree’ or ‘agree’ with both of the following statements: (1) ‘I am often ashamed in front of people’ and (2) ‘I have no problem to speak my mind in front of other people’. Since the statements are inversely related, agreement with both indicates yea-saying. We find substantive effects of previous survey participation for these specific mirror items (see Table A18) but not for other question pairs (e.g. gender attitudes and domestic relations, respect and discrimination in the community).
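The two acquiescence measures can be sketched in a few lines. The text describes the first measure both as a ratio of positive to negative responses and as a share of affirmative responses. The sketch below uses the share, which is an assumption on our part, as are the response labels and function names.

```python
AGREE = {"strongly agree", "agree"}


def affirmative_share(responses):
    """Share of answered items with an affirmative response.

    Assumption: missing answers (None) are excluded from the denominator.
    """
    answered = [r for r in responses if r is not None]
    if not answered:
        return None
    return sum(r in AGREE for r in answered) / len(answered)


def mirror_item_yea_saying(item_a, item_b):
    """Return 1 if the respondent agrees with both mirror-image items, else 0."""
    return int(item_a in AGREE and item_b in AGREE)


# Hypothetical answers of one respondent.
answers = ["agree", "strongly agree", "disagree", "agree", None]
share = affirmative_share(answers)

# Mirror items from the text: 'often ashamed in front of people' versus
# 'no problem to speak my mind in front of other people'.
consistent = mirror_item_yea_saying("agree", "disagree")
yea_sayer = mirror_item_yea_saying("agree", "strongly agree")
print(share, consistent, yea_sayer)
```

A respondent who agrees with one mirror item and disagrees with the other is coded 0, while agreement with both is coded 1.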

Due to the specific measurement of our explanatory variable, there is a certain risk that the weak positive correlation between Previous survey participation and yea-saying represents a statistical artifact rather than a causal relation: we rely on self-reported previous survey participation. Individuals with a general personal predisposition to answer questions in the affirmative may claim to have participated in a previous survey (when in fact they have not) and display higher levels of acquiescence throughout the rest of the survey. To assess this possibility, we exploit the fact that we asked whether people had ever participated in a focus group discussion (FGD) right after our main question on previous survey participation. We now limit our sample to respondents who indicated that they had never participated in an FGD, in order to exclude all interviewees who may automatically confirm any question related to previous experiences (783 respondents). With the same set-up as in Table A15 (see Panel b in Figure 4), we find similar effects for previous survey participation only in the first two models (Table A19), indicating that the initial findings on affirmative response styles may in fact at least partly represent a statistical artifact. Finally, we also assessed variation in the effects of previous survey participation on acquiescence at the beginning and the end of the interview (see above). Contrary to our theoretical argument, we find no evidence of any substantive variation (see Tables A16 and A17). Overall, the evidence for higher levels of acquiescence among experienced interviewees due to increased survey fatigue remains rather weak.

Taken together, the analyses presented above indicate that multiple survey participation can have moderate but still substantive effects on respondents’ stated social/political attitudes and on their response styles (i.e. extreme responses). In principle, however, the former may be a result of the latter. We may have found differences in attitudes between first-time and experienced interviewees (H1) because experienced interviewees tend to systematically report more extreme views on all kinds of items (H3a). To assess this possibility, we have re-estimated the effects of multiple survey participation on social/political attitudes including an additional control for respondents’ extreme response tendency. This additional control substantively reduces the size and statistical significance of the effect of previous survey participation on some attitudes (see Column 4 in Tables A1 to A4 in the Online appendix). This simple auxiliary analysis indicates that some attitudinal differences between first-time interviewees and experienced respondents can be traced back to changes in response styles, while others may represent substantive attitudinal effects of prior survey participation.

To what extent can these effects then bias the correlations between political and social variables observed in ‘standard’ micro-level analyses of political violence?

Standard models on violence exposure and social capital

Effects on standard models on the sociopolitical legacy of violence

Considering the moderate effects of previous survey participation presented above, we expect multivariate regressions to be affected only in individual models that are particularly prone to bias because they include explanatory and outcome variables that are both rather strongly correlated with previous survey participation, as indicated above. We aim to assess such a worst-case scenario in terms of potential omitted variable bias introduced by a failure to account for previous survey participation.

Specifically, we assess whether previous survey participation may influence or confound the effects of exposure to violence on social capital (e.g. Blattman, 2009; Koos, 2018) or political trust (e.g. De Juan & Pierskalla, 2016; Sacks & Larizza, 2012).

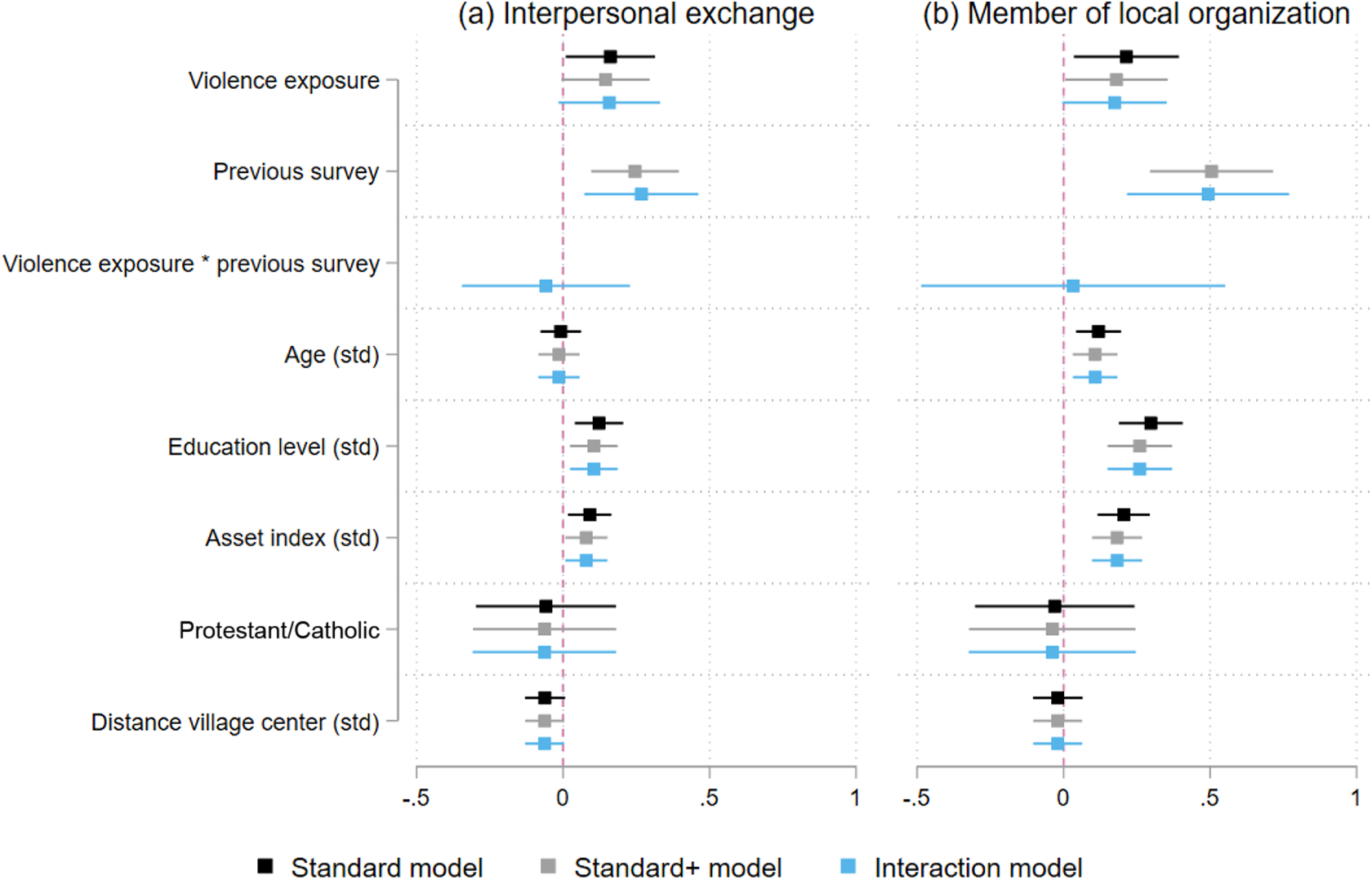

We estimate three models for each outcome variable. The first is a standard model in which we estimate the effect of Violence exposure by including the standard controls, but without previous survey participation. We use the same measure of Violence exposure as above, that is, whether someone in the household was directly affected by violence on the part of armed groups (32% prevalence). In the second model (standard+), we add our Previous survey variable, and in the third we interact exposure to violence and previous survey participation to assess whether the effect of exposure to violence depends on previous survey participation.
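For orientation, the three specifications can be written out as below. The notation is illustrative and not taken from the article: $Y_i$ is the outcome, $V_i$ is Violence exposure, $S_i$ is Previous survey, and $\mathbf{X}_i$ collects the standard controls. A linear functional form is assumed here for simplicity.

```latex
\begin{aligned}
\text{Standard:}    \quad & Y_i = \alpha + \beta_1 V_i + \mathbf{X}_i'\boldsymbol{\gamma} + \varepsilon_i \\
\text{Standard+:}   \quad & Y_i = \alpha + \beta_1 V_i + \beta_2 S_i + \mathbf{X}_i'\boldsymbol{\gamma} + \varepsilon_i \\
\text{Interaction:} \quad & Y_i = \alpha + \beta_1 V_i + \beta_2 S_i + \beta_3 (V_i \times S_i) + \mathbf{X}_i'\boldsymbol{\gamma} + \varepsilon_i
\end{aligned}
```

Under this notation, $\beta_1$ in the interaction model is the effect of violence exposure for first-time interviewees ($S_i = 0$), and $\beta_1 + \beta_3$ is the corresponding effect for experienced respondents ($S_i = 1$).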

Regarding the social legacy of violence, we consider two outcome variables that reflect measures used in previous studies on the determinants of social capital: the frequency of interpersonal exchange with kin and non-kin and the number of local organizations a respondent (or someone from the household) is a member of. Figure 5 plots the results for both outcomes (see Tables A21 and A22 in the Online appendix for detailed results). For interpersonal exchange (Panel a), violence exposure has a positive and statistically significant effect (p < 0.05) in the standard model, but when we add the previous survey variable as well as the interaction term, the effect of violence exposure weakens in terms of both effect size and statistical significance (p < 0.10). As above, Previous survey is positive and statistically significant. This indicates that the effect of exposure to violence appears to be weakly confounded by previous survey experience. A similar pattern can be observed for membership in local organizations (Panel b).

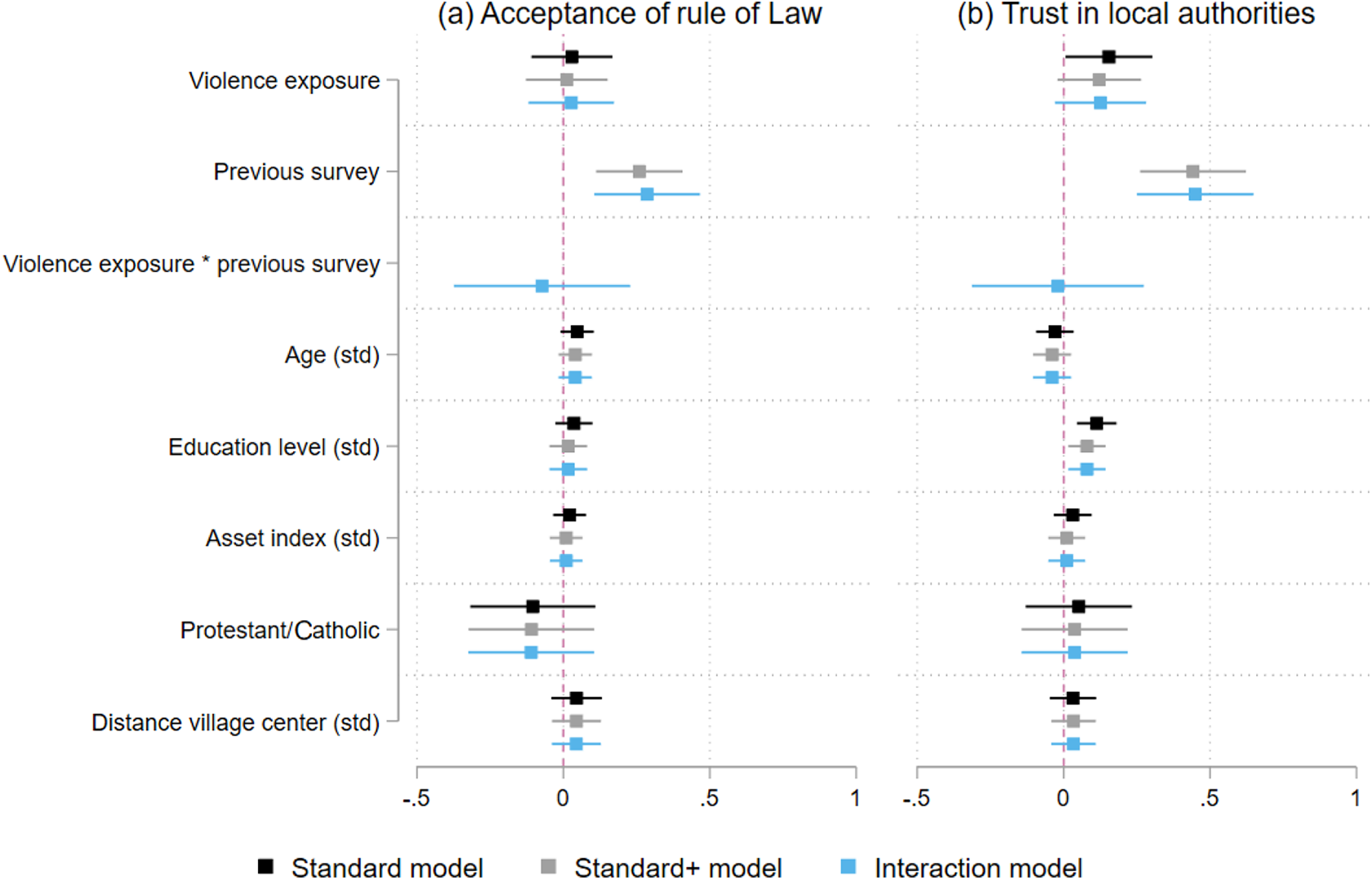

We now turn to models that estimate the effect of violence on questions associated with perspectives on political institutions using the same model specifications as above. Figure 6 plots the results for two outcome

Standard models on violence exposure and political/institutional trust

Overall, the effects of previous survey participation are noticeable but certainly not strong. This modest impact is also reflected in a weak increase in the models’ explanatory power: the adjusted R² increases by around 1% to 2%. We interpret this as evidence that previous survey experience will influence analyses of the effects of exposure to violence on social and political attitudes only in rather rare circumstances.

Discussion

Taken together, our findings indicate that multiple survey participation can influence response behavior in conflict contexts. As such, the results are in line with previous studies that demonstrate how specific characteristics of these contexts can influence the results of survey-based research (e.g. in terms of pronounced interviewer effects). What lessons can be drawn from these findings? We suggest measures that (a) reduce the prevalence of multiple survey participation, (b) prevent potential methodological implications, and (c) help us understand its potential real-world effects.

Our preliminary overview of survey-based research in and on conflict zones indicates a relatively high degree of concentration in only a few countries. Increasing the country-level diversity of survey-based research would not only help avoid the methodological implications of multiple survey participation. Investigating conflict contexts beyond countries such as Uganda, Nepal or Colombia would also improve our understanding of diverse micro-level dynamics and reduce the survey burden on individual populations. Similarly, researchers should diversify strategies of sample selection in areas with high survey frequencies. Most surveys rely on random walk patterns or random sampling from household lists provided by local elites (village chiefs and administrators). Both strategies may be prone to repeated over/under-sampling of households depending on their location (e.g. city centers), economic status and/or relationship to the village elite. The use of innovative sampling strategies based on remote sensing information may contribute not only to more effective randomization but also to higher diversification of survey samples and lower risks of multiple survey participation (e.g. Chew et al., 2018).

In most cases, no specific precautions may be required to mitigate adverse effects of multiple survey participation on academic research – particularly in areas with low or moderate survey activity. However, for research in contexts displaying a high survey frequency (e.g. after peace agreements or natural disasters or in the countries listed above), researchers should take previous survey experience into account when designing and analyzing surveys. Our findings indicate that survey participation effects increase with the duration of interviews as respondents’ fatigue increases. In areas with high survey frequencies, researchers could keep this in mind – for example, by more carefully balancing information needs and the objective of avoiding overly lengthy interviews and by concentrating attitudinal survey items early in the survey instrument. A simple way to consider potential confounding effects in the analysis is to include a question like ours in the survey instrument and to include the resulting dummy variable in the estimations. This dummy variable should then capture any differences between the mean values of the outcome variable that cannot be accounted for by differences in the values of the other explanatory variables (Bartels, 1999). Finally, researchers may also consider defining replacement rules in the sample protocol for respondents who indicate having participated in previous surveys.

Our findings indicate that previous survey experience may lead to actual changes in attitudes. We believe that it is extremely important to fully understand these potential effects – most notably, to avoid any potential negative repercussions of academic survey research. We therefore suggest investigating these ‘real-world’ survey participation effects more thoroughly. First, future surveys could include more detailed questions to document the date of the previous survey, the organization responsible for it (e.g. World Bank, UN, university), respondents’ experience with previous surveys, expectations related to survey participation, and other characteristics that would allow for the disaggregation of previous survey experiences into fragments of substantive interest. Second, specific panel surveys could be designed to identify the mechanisms through which interviews affect interviewees’ responses – for example, investigating variation in knowledge (e.g. probes of political knowledge) and observable behavior (e.g. public goods games) across experienced and first-time interviewees. A better understanding of substantive survey participation effects would allow researchers to incorporate them into ethical procedures of survey-based research in conflict zones.

Conclusion

Survey research is an important tool for strengthening our understanding of individual-level experiences of violence; attitudes towards justice, development, and the state; and various kinds of indicators relevant to the design of public policies and programs that contribute to social, economic, and political development. For this and other reasons survey research undertaken by academic researchers and in the context of impact evaluations in conflict-affected states has increased tremendously in recent years.

We have shown that the prevalence of previous survey exposure is considerable (18%) in the eastern DRC. We cannot assess this number in relation to other conflict contexts because – to the best of our knowledge – no previous study has investigated the extent of multiple interviews in conflict-affected areas. In absolute terms, we consider this magnitude quite substantial: the responses of roughly every fifth respondent may be influenced by previous data collection efforts rather than by local conditions of interest alone. Moreover, based on our basic review of survey-based research in conflict contexts, we assume that other countries/regions such as Northern Uganda, Rwanda, Burundi, and Nepal are likely to have even higher survey exposure prevalence rates. The DRC is located on the lower end of the scale in terms of the number of previous survey-based studies per country (Figure 1).

Our findings indicate that previous survey exposure affects outcomes of substantive interest, including social capital, political trust, the reporting of violence, and gender-related attitudes. Furthermore, some types of response behavior may be influenced by previous survey exposure, particularly extreme answering. Finally, we have shown that not accounting for previous survey exposure can in some individual cases bias the results of some standard models on the sociopolitical legacy of violent conflicts.

These findings should be interpreted in the light of two main limitations of our analysis that could be addressed in future studies on the effects of multiple survey participation. First, our analyses are limited in terms of identifying the specific causal mechanisms that link multiple survey participation to specific response patterns. Previous research on panel surveys in Western democracies suggests a variety of channels such as increased knowledge and awareness, actual changes in attitudes and behavior, and ‘mere’ response styles in subsequent standardized interviews. A more sophisticated research design (see suggestions above) and more fine-grained measures of previous survey experience could help in specifying the exact effects of multiple survey participation.

Second, our findings are based on a single case study of a most-likely case. The DRC can be considered ‘extreme’ in terms of what we defined as key scope conditions of our primary arguments: particularly high levels of violence, humanitarian needs, and dependence on international aid agencies. Relying on a single case study, we cannot test these potential scope conditions themselves – other factors may be responsible for the relatively strong survey participation effects in the DRC. Further analyses are needed (1) to specify the conditions of survey participation effects, (2) to assess the generalizability of the findings to other conflict zones, and (3) to gain more substantive insights into the potential footprints of intensive survey-based research in and on populations in conflict-affected areas.

Acknowledgements

We are grateful to participants of the Workshop ‘Understanding individuals’ opinions and experiences in conflict-affected societies’ (Oslo, 14–15 May 2018) for comments on a previous version of this article. We are indebted to 22 enumerators and the management team of Research Initiatives for Social Development in Bukavu, South Kivu, in particular to the late Jean-Paul Zibika, Pacifique Mwambusa, Emmanuel Kandate, Eustache Kuliumbwa, and Pepin Mugisho.

Funding

Financial support from the German Research Foundation (DFG Grant KO 5170/1-1) is gratefully acknowledged.