Abstract

This article finds that online reviews submitted during the weekend tend to have lower rating scores than reviews submitted during the week. Analyzing 400 million reviews across 33 e-commerce, hospitality, entertainment, and employer platforms, the authors find that weekend reviews have a 3% lower relative share of 5-star ratings and a 6% higher relative share of 1-, 2-, or 3-star ratings compared with weekday reviews. The pattern emerges even when controlling for quality of reviewed items. This weekend effect is surprising given that studies usually report higher happiness levels and a better mood on weekends. The authors discuss several explanations related to where the review is submitted (platform characteristics), what the review is about (listing characteristics), and who submits the review (reviewer characteristics). They present evidence that temporal self-selection of reviewers is a dominant driver of the weekend effect. During the weekend, a different set of users—those more prone to write negative reviews—is more likely to leave a review. These findings complement extant research on review self-selection by adding a temporal layer to the self-selection processes inherent in online reviews. This article also highlights managerial implications by demonstrating that solicitations sent during the weekend (vs. weekday solicitation) lead to collecting more negative reviews.

Differences between the week and the weekend are widely referred to as “weekend effects” and are well-researched in many disciplines such as health care (Bell and Redelmeier 2001), finance (Cross 1973), environmental studies (Cleveland et al. 1974), meteorology (Cerveny and Balling 1998), and psychology (Helliwell and Wang 2015). The mechanisms behind these weekend effects vary depending on the context. In this article, we uncover a weekend effect in online review ratings that is contrary to the expectation one might have, given that people generally have a better mood and higher happiness during the weekend (Stone, Schneider, and Harter 2012; Tsai 2019). We find that online reviews submitted on the weekend consistently carry lower ratings. The robustness of this effect is demonstrated using datasets across 33 major platforms (including Amazon, Glassdoor, Yelp, and IMDb) and almost 400 million reviews, covering more than 20 million reviewed products, movies, services, or employers, from more than 60 million users. The datasets feature a wide range of product and e-commerce, service and leisure, and workplace-related review platforms (see Figure 1).

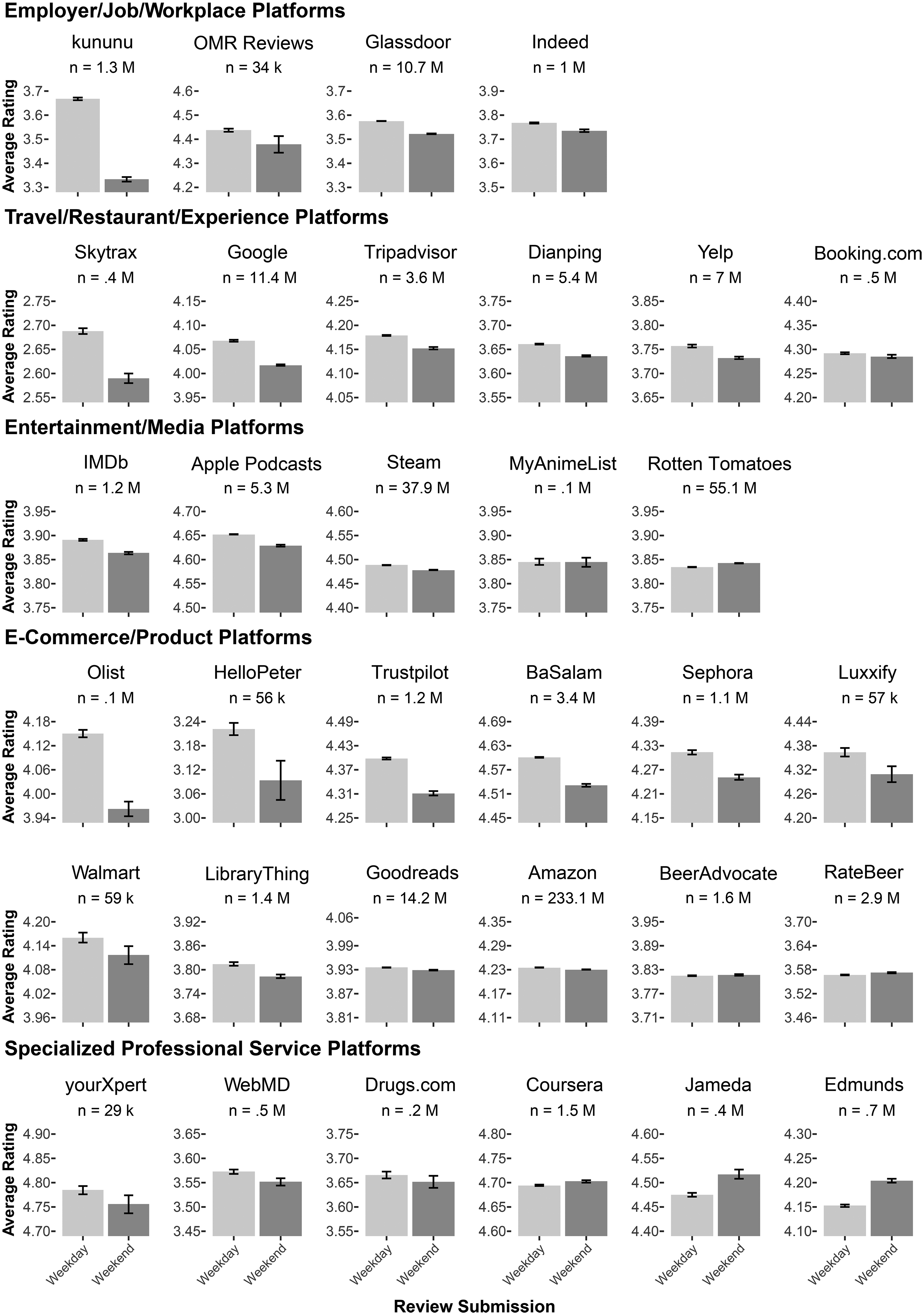

Average Online Review Ratings Submitted During Weekdays Versus Weekends.

To establish the robustness of the weekend effect, we first show across our extensive set of online platforms that reviews written on the weekend are consistently more negative than reviews written during the week. Specifically, we find this to be the case in 26 platforms (79% of all our datasets). We demonstrate the robustness of the effect across different model specifications, including listing- and reviewer-level fixed effects, and by varying the minimum number of reviews required for both reviewers and listings. To better understand the “weekend effect” in online reviews, we tested a large set of potential drivers of this phenomenon. We categorize the potential drivers as related to the platform, the listing, and the reviewer. Regarding platform-specific characteristics, we find that the platform category accounts for most of the variation in the weekend effect, with platforms collecting reviews related to the employer, job, or workplace showing the biggest weekend effect.

Regarding listing-related drivers, we find that neither systematic differences between listings (e.g., a product, business, restaurant, hotel, software, movie, employer) reviewed during the week versus the weekend, nor fluctuations in quality potentially caused by greater crowdedness on weekends in the hospitality sector, can fully explain the effect. In terms of reviewer-related drivers, we find evidence for both within-reviewer and between-reviewer differences. However, we find that across most platforms, between-reviewer differences, which we term temporal self-selection, are larger and more consistent than within-reviewer differences, although exceptions exist. Specifically, we identify distinct user segments, observing that those who write reviews on weekends tend to submit lower average ratings compared with those who review exclusively during the week. We find traces in the review texts of these two user segments that are systematically different, revealing potential explanations for the differences in these two groups: Weekend reviewers consistently use fewer words reflecting social processes (i.e., mentions of friends, family, and humans in general). We do not find these textual differences for reviewers who review both during the week and on the weekend. This paints a picture of weekend reviewers being less socially connected. This finding is complemented by additional evidence showing that weekend reviewers have fewer friends—another sign of lower social integration (Falci and McNeely 2009; Ueno 2005). Together, these findings suggest that the weekend effect is less explained by where the review is submitted or what is being reviewed but rather by who submits the review, where those opting to write reviews only on the weekend tend to write more negative reviews and show signs of lower social connectedness.

Finally, we demonstrate the practical relevance of the weekend effect for online reviews and show how the effect is meaningful for businesses and platforms despite being small. Our findings imply that the same listing could receive different online review ratings, regardless of the underlying quality, depending on whether the review is composed by a weekend or weekday reviewer, and we find evidence that companies can influence this composition via review solicitation timing. We use various approaches to demonstrate the managerial relevance of the weekend effect of online reviews. First, we highlight the heightened vulnerability of listings with few reviews. Second, we show how the timing of review solicitations can impact the valence of collected online reviews. To reduce the number of negative reviews written on the weekend, our findings thus suggest that businesses strategically solicit reviews exclusively on weekdays. We test this strategy across five studies on online review platforms for which we know or can approximate when the review was solicited. Two of those are akin to an A/B test in which we randomly solicited reviews during weekdays versus the weekend (one is a field study). Our findings demonstrate that solicited reviews show a weekend effect and support the strategic solicitation of reviews on weekdays to reduce the negative weekend effect.

Our study contributes to the broad literature on weekend effects by introducing a weekend effect in online reviews to marketing research. Further, this work augments the literature on online reviews, and particularly self-selection in online reviews, by establishing a temporal dimension to self-selection processes based on the day of the week. Moreover, by examining how contextual factors influence audience selection for sharing experiences and the communication of satisfaction, this research highlights the importance of situational factors, particularly the timing of review creation, in shaping electronic word of mouth.

The remainder of the article is organized as follows. We begin with an overview of relevant literature. Next, we assemble robust evidence for a weekend effect from almost 400 million online reviews across 33 datasets worldwide. Then, we explore potential drivers of the weekend effect and identify temporal self-selection as the most empirically supported explanation. We further investigate the underlying causes of temporal self-selection and find systematic differences between weekend and weekday reviewers, particularly in their social markers and connections. Finally, we offer managerial implications, discuss the findings, and conclude with suggestions for further research.

Related Literature

Our research is related to three different research streams: user-generated content (UGC) and how it varies across time, self-selection of online reviewers, and contextual influences.

UGC and Temporal Variation

Variations in UGC across time have been analyzed in the context of social media platforms (e.g., tweets, Facebook posts), blog posts, and music streaming. Depending on the day of the week, users not only engage differently (Zor, Kim, and Monga 2022) but also produce different content: Tweets show higher levels of positive affect (Golder and Macy 2011) as well as higher levels of happiness (Dodds et al. 2011) during the weekend compared with weekdays, with some evidence for a small “Blue Monday” (sadness) and a “Thank God It's Friday” (happiness) effect (Stone, Schneider, and Harter 2012). Similar patterns are found for Facebook posts (Kramer 2010; Wang et al. 2014) and blog posts (Mihalcea and Liu 2006). According to Ryan, Bernstein, and Brown (2010), higher happiness on weekends is related to nonwork experiences. While findings of previous research in an offline (Egloff et al. 1995) and online social media context suggest that, on average, individuals are happier and in a better mood on the weekend, this good mood seems not to spill over to online review ratings.

However, it is essential to account for self-selection when transferring this observation of mood to other contexts such as online review platforms, where underlying motivations to contribute might vary. Whereas both social media and online review platforms tend to not be representative of the general population (Anderson and Simester 2014; Mellon and Prosser 2017), they differ regarding some underlying motivations to create content and engage in either of the two types of UGC (social media posts and online reviews). A literature review on the various underlying motivations (see Table WA1) reveals that while social media content creation and online reviews share some motivations, such as impression management (Berger 2014), other motivations differ significantly between the two. Social media posts are often motivated by self-expression, connection with others, and socialization (Kaplan and Haenlein 2010), whereas online reviews are often related to providing feedback and helping users regulate their emotions through venting (Hennig-Thurau et al. 2004; Hennig-Thurau, Walsh, and Walsh 2003). Given these differences and the subsequent self-selection of users on social media and online review platforms, it is not apparent that the positive weekend effect regarding content on social media platforms translates to reviews written on online review platforms. We are the first to extend the findings of weekend effects in UGC from social media posts to online reviews and thus augment the literature of context, and particularly weekend effects, in UGC.

Reviewer Self-Selection and Characteristics

Self-selection of reviewers has been shown to affect online reviews (Hu, Pavlou, and Zhang 2017). Prior literature identifies different forms of self-selection that affect online ratings such as purchase self-selection (Hu, Pavlou, and Zhang 2017; Kramer 2007), intertemporal self-selection (Li and Hitt 2008; Moe and Schweidel 2012), and polarity self-selection (Hu, Pavlou, and Zhang 2017). Findings regarding intertemporal self-selection and its effect on consumer ratings are particularly relevant to the current research. Intertemporal self-selection refers to consumers reviewing at different times throughout the product life cycle. For example, Li and Hitt (2008) find differences between early and late reviewers in the product life cycle, with early reviewers writing more extreme and positive reviews due to self-selection of the type of consumer (early vs. late adopters). Relatedly, there is a debate in political research about how the day of the week affects election polls (Lau 1994) and voting outcomes (Bradfield and Johnson 2017; Sanders and Jenkins 2016), as a different group of people might select to participate in a poll or vote on a weekday versus a weekend, leading to differences in political forecasts and outcomes. Given previous findings that time can impact self-selection and subsequent behaviors, the choice to write reviews and the review content might also change as a function of the day of the week.

Apart from temporal selection of reviewers, previous research demonstrates that reviewer characteristics can be associated with differences in online ratings. Moe and Schweidel (2012) show that more active reviewers write more negative and more differentiated reviews. In contrast, less frequent reviewers tend to follow the opinions of previous reviewers. Reviewer expertise has also been associated with harsher evaluations in both online consumer settings (Bondi, Rossi, and Stevens 2024) and offline peer review contexts (Gallo, Sullivan, and Glisson 2016). Personality traits matter as well, as Han (2021) demonstrates, and even interpersonal closeness to an intended audience can influence review valence (Barasch and Berger 2014; Chen 2017; Dubois, Bonezzi, and De Angelis 2016).

We extend prior literature on intertemporal self-selection and rating differences among reviewer groups by identifying a temporal layer of self-selection related to the weekend that we term “temporal self-selection.”

Contextual Influences

Previous research shows that human decision-making can be susceptible to influences from externalities that seem far from obvious and often affect decisions without the person being aware of this influence. This was shown even in critical contexts with highly consequential decisions such as interviewer decisions about medical student candidates (Redelmeier and Baxter 2009), rulings of judges (Danziger, Levav, and Avnaim-Pesso 2011; Redelmeier and Baxter 2009), or earnings calls (Chen, Demers, and Lev 2018), but it is also relevant to consumer activities such as shopping (Ahlbom et al. 2023). Hence, one could assume that subtle contextual influences might explain differences between review ratings given on the weekend versus weekdays. Some evidence that contextual influences can affect reviews has been presented by researchers such as Gao et al. (2018), who showed that consumers from countries with high power distance tend to give low ratings to hotels because, without noticing it, they feel superior to the hotels, and Brandes and Dover (2022), who showed that bad weather affects review writers and reduces online rating scores. In accord with these findings, a potential contextual influence on online reviews could be the difference between the week and the weekend.

If this is true, the question arises as to what differs between weekdays and the weekend. Here, researchers suggest that on a weekend people are, in general, in a positive emotional state (e.g., Helliwell and Wang 2015; Stone, Schneider, and Harter 2012). Additionally, research on other domains of UGC offers ample evidence that an elevated mood on the weekend can lead to more positive information shared during this time (Dodds et al. 2011; Golder and Macy 2011). Following these findings, one could infer that reviews submitted during the weekend are more positive. We conducted an exploratory survey with managers and employees of online review platforms and confirmed that, indeed, their expectation would also be that online reviews submitted during the weekend are more positive (see Web Appendix B).

However, previous literature also finds evidence for what is termed weekend or Sunday neurosis (Maennig, Steenbeck, and Wilhelm 2014), referring to lower levels of reported subjective well-being (Akay and Martinsson 2009) and life satisfaction on a Sunday (Kavetsos, Dimitriadou, and Dolan 2014). Moreover, it is for this reason that the weekend is the most frequent time for suicide attempts (Klemesrud 1976). The observation of Sunday neurosis dates back to Ferenczi (1919) and Frankl (1959), and terms like weekend blues, weekend gloominess, Sunday malaise, or weekend loneliness have long been common. Thus, prior findings on contextual influences do not give a clear picture of how reviews might be affected during the week versus the weekend.

In what follows, we first present evidence for a weekend effect across 33 datasets and demonstrate robustness of the effect across multiple model specifications controlling for listing or reviewer characteristics. Additionally, we show that a similar effect appears on public holidays. We next present a comprehensive set of potential explanations for the weekend effect and test their ability to explain the observations. We then build on our results and discuss their managerial implications across five additional studies, including a field experiment.

Evidence for a Weekend Effect in Online Reviews

We use 33 online review datasets to incorporate various types of targets being reviewed. The datasets can be grouped into five categories: product and e-commerce reviews (e.g., Amazon, Sephora); travel, restaurant, and experience reviews (e.g., Yelp, Google, Tripadvisor), with the predominant category being restaurants and hotels; employer, job, and workplace-related reviews (e.g., Glassdoor, B2B software review sites evaluating tools primarily used in professional settings, like G2); entertainment and media-related reviews (e.g., IMDb, Rotten Tomatoes); and reviews related to specialized professional services (e.g., Coursera, lawyer review sites). Table 1 gives a comprehensive overview and descriptives of our datasets. Table WC5 provides an overview of the data sources used. Across most datasets, we code Saturdays and Sundays as being the weekend, which is in line with what the vast majority of countries consider as “weekend.” Only for BaSalam, an e-commerce review platform in Iran, did we code Friday (and Saturday) as the weekend, because in Islam, Friday (Yawm al-Jumu'ah) is the holy day, similar to Sunday in Christianity or Saturday (Shabbat) in Judaism.
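The weekend coding described above can be sketched in a few lines. This is a minimal illustration, not the authors' code: the lookup table `WEEKEND_BY_PLATFORM` and the function name `is_weekend` are hypothetical, and only the Saturday/Sunday default and the Friday/Saturday coding for BaSalam come from the text.

```python
from datetime import date

# Python's date.weekday() returns Monday = 0 ... Sunday = 6.
SAT_SUN = frozenset({5, 6})  # default weekend coding (Saturday, Sunday)
FRI_SAT = frozenset({4, 5})  # coding described for BaSalam (Friday, Saturday)

# Hypothetical lookup of platforms that deviate from the default coding.
WEEKEND_BY_PLATFORM = {"BaSalam": FRI_SAT}


def is_weekend(review_date: date, platform: str) -> int:
    """Return 1 if the review date falls on the platform's weekend, else 0."""
    weekend_days = WEEKEND_BY_PLATFORM.get(platform, SAT_SUN)
    return int(review_date.weekday() in weekend_days)
```

For example, January 5, 2024 (a Friday) is coded as a weekend day for BaSalam but as a weekday for any platform that uses the default coding.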

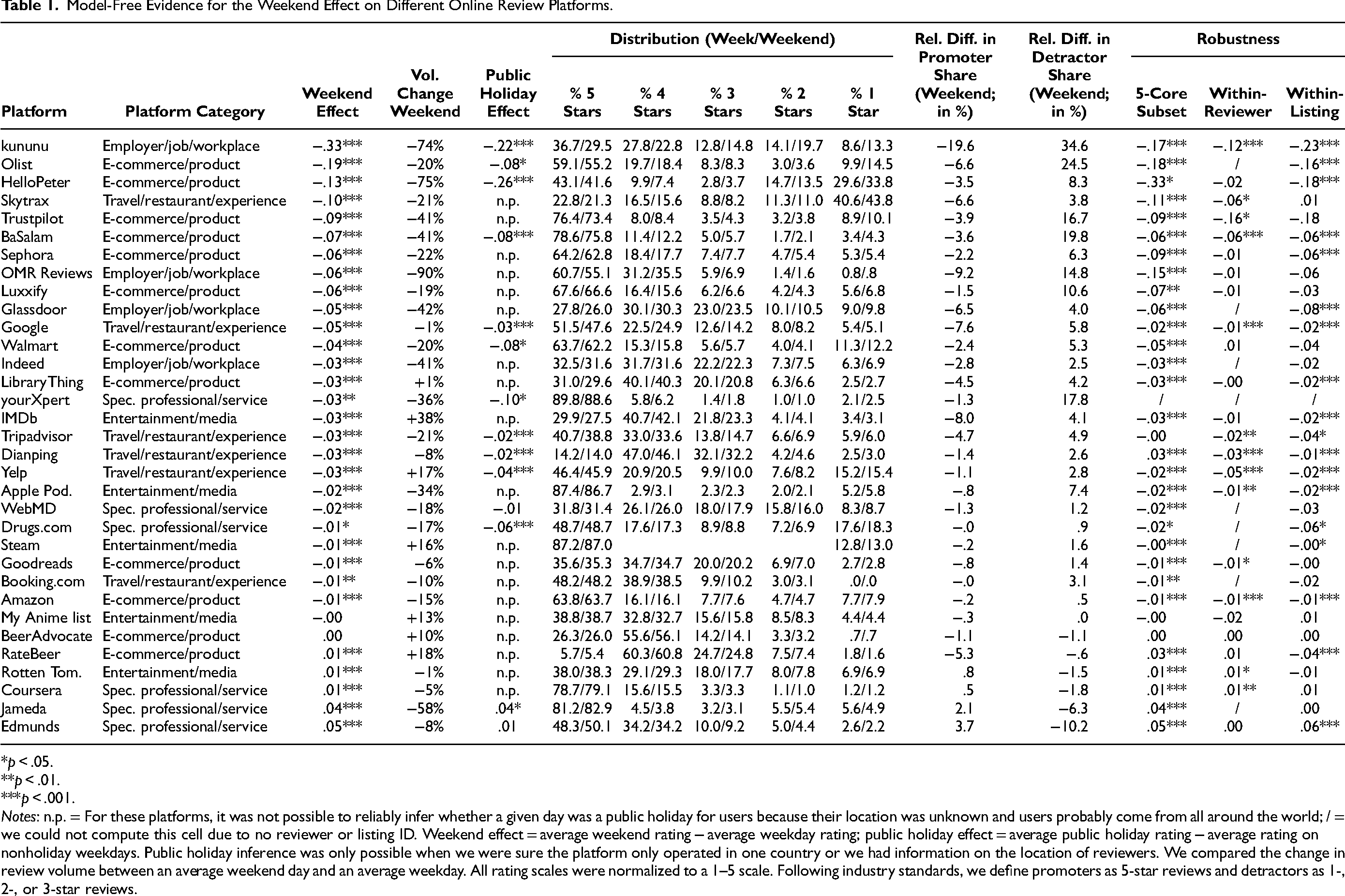

Model-Free Evidence for the Weekend Effect on Different Online Review Platforms.

*p < .05.

**p < .01.

***p < .001.

Notes: n.p. = For these platforms, it was not possible to reliably infer whether a given day was a public holiday for users because their location was unknown and users probably come from all around the world; / = we could not compute this cell due to no reviewer or listing ID. Weekend effect = average weekend rating − average weekday rating; public holiday effect = average public holiday rating − average rating on nonholiday weekdays. Public holiday inference was only possible when we were sure the platform only operated in one country or we had information on the location of reviewers. We compared the change in review volume between an average weekend day and an average weekday. All rating scales were normalized to a 1–5 scale. Following industry standards, we define promoters as 5-star reviews and detractors as 1-, 2-, or 3-star reviews.

Model-Free Evidence

Concentrating on our focal variable, the review valence (i.e., the star rating), we find consistent evidence for a weekend effect in online reviews across our comprehensive datasets spanning 33 online review platforms and nearly 400 million reviews (see Figure 1). First, our results show a significant (p < .001) overall main effect, confirming that reviews written on the weekend are significantly more negative than those written on weekdays, on average by .04 stars. Focusing on each individual platform, we find a significant negative weekend effect in 26 platforms (79%), while 2 platforms (6%) show no significant difference, and 5 platforms (15%) show a small, reversed weekend effect (see Table 1). Regarding review volume, on 26 of 33 platforms, fewer reviews are submitted on a typical weekend day compared with a typical weekday (see Table 1).

To incorporate the full distribution of online review ratings submitted on weekdays and weekend days, we follow standard industry practices using the net promoter score (NPS) and classify ratings as either detracting or promoting. We find that relative to the weekday distribution, on weekends the percentage of reviews classified as “promoting” (i.e., 5-star ratings) is on average 3.0% lower (min = −19.6%, max = 3.7%), while those considered “detracting” (i.e., 1-, 2-, and 3-star ratings) are on average 5.7% higher (min = −10.2%; max = 34.6%). This suggests that the weekend effect is driven by fewer 5-star reviews and more 1-, 2-, and 3-star reviews submitted on weekends.
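To make these quantities concrete, the following sketch computes the weekend effect (average weekend rating minus average weekday rating) and the relative change in promoter and detractor shares for one platform. It assumes integer star ratings on the normalized 1–5 scale and reads "relative" as the weekend share divided by the weekday share minus one; the function name and input layout are illustrative assumptions.

```python
from statistics import mean


def weekend_summary(reviews):
    """reviews: list of (rating, weekend_flag) pairs; rating is 1-5, flag is 0 or 1."""
    weekday = [r for r, w in reviews if w == 0]
    weekend = [r for r, w in reviews if w == 1]

    def share(ratings, predicate):
        # Fraction of ratings that satisfy the predicate.
        return sum(1 for r in ratings if predicate(r)) / len(ratings)

    def relative_change(predicate):
        # Weekend share relative to the weekday share (assumed reading of "relative").
        base = share(weekday, predicate)
        return (share(weekend, predicate) - base) / base

    return {
        "weekend_effect": mean(weekend) - mean(weekday),
        "promoter_rel_change": relative_change(lambda r: r == 5),   # 5-star reviews
        "detractor_rel_change": relative_change(lambda r: r <= 3),  # 1-, 2-, 3-star
    }


# Toy data: ten weekday and ten weekend reviews.
toy = [(r, 0) for r in [5, 5, 5, 5, 5, 4, 4, 4, 3, 1]] + \
      [(r, 1) for r in [5, 5, 5, 5, 4, 4, 4, 3, 2, 1]]
summary = weekend_summary(toy)
```

In this toy example the weekend effect is −0.3 stars, the promoter share is 20% lower in relative terms (0.4 vs. 0.5), and the detractor share is 50% higher (0.3 vs. 0.2).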

In addition to the consistent weekend effect across platforms shown in Figure 1, examining online review ratings by day of the week reveals no clear pattern across weekdays that holds consistently on all platforms (see Figure WC2). In this article we focus on differences between weekdays and weekends, but we discuss how future research could extend our results to different days of the week.

Robustness of the Weekend Effect

Different subsets

We demonstrate the robustness of the weekend effect in different subsets, as can be seen when looking at within-listing differences in Table 1 (i.e., listings that received reviews during both the week and weekend). The evidence disproves the possibility that worse products, businesses, or employers are being reviewed on the weekend, as the weekend effect tends to persist even for the same listing.

The results in Table 1 also show that the weekend effect is robust to different specifications, such as considering only reviewers who have written at least five reviews and reviewed listings that have accumulated at least five reviews (“5-core subset”). To further assess the robustness across different cutoffs, we test the weekend effect within subsets of reviewers or listings with a minimum number of reviews (see Figures WC3 and WC4 in Web Appendix C). The following patterns appear: On some platforms, subsets of reviewers with greater experience (i.e., having submitted more reviews) are associated with smaller weekend effects (see, e.g., kununu, BaSalam, Google, Dianping, Yelp), whereas on other platforms, the weekend effect is larger among more experienced reviewers (see, e.g., Sephora, Amazon) (see Figure WC3). In subsets of listings with a high number of reviews, we again find no consistent pattern in how the weekend effect changes (see Figure WC4). Overall, the key insight from these analyses is that the weekend effect is robust, even in datasets comprising experienced reviewers or review targets with a substantial number of existing reviews.

Across geographies

Furthermore, we find that the weekend effect is consistent across geographic locations. For instance, the restaurant review platforms Yelp (United States) and Dianping (China) both exhibit a significant weekend effect, with similar patterns in review ratings across the week (see Figure WC2), despite operating on opposite sides of the globe. This pattern holds for employer review platforms such as Glassdoor and Indeed (mainly United States) versus kununu (Germany), as well as for e-commerce marketplaces like Amazon (primarily northern hemisphere, United States) compared with Olist (Brazil, southern hemisphere), Trustpilot (Denmark), or HelloPeter (South Africa). And within our dataset of worldwide Google reviews (the only dataset with reviews from all around the world), we find that the weekend effect is significant across the ten largest countries (with regard to review volume).

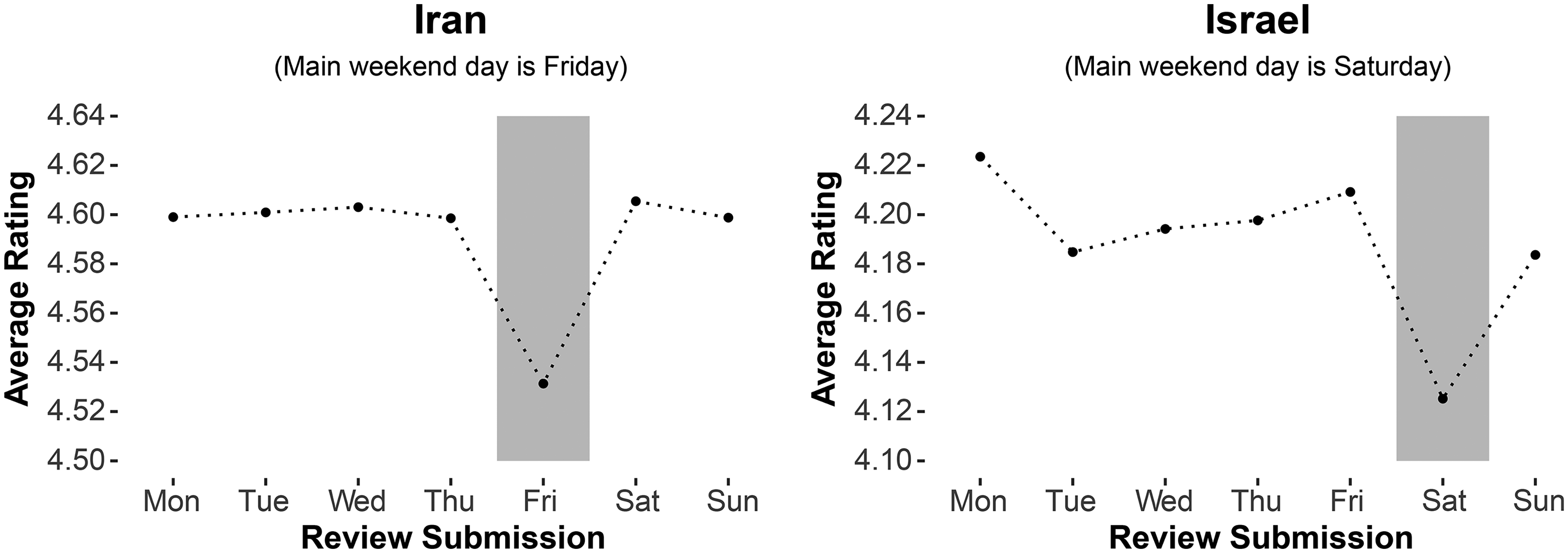

Most reviews in our dataset probably stem from a location where the weekend is commonly defined as Saturday and Sunday, but there are two notable exceptions: Iran with Friday (Yawm al-Jumu'ah) and Israel with Saturday (Shabbat) as the most prominent weekend day. In line with that, in the dataset of the Iranian BaSalam e-commerce marketplace, Friday has the lowest online review rating scores. For Google reviews of businesses in Israel, indeed Saturday is the day when the worst reviews are submitted (see Figure 2).

Average Online Review Ratings Across Countries with Different Weekends.

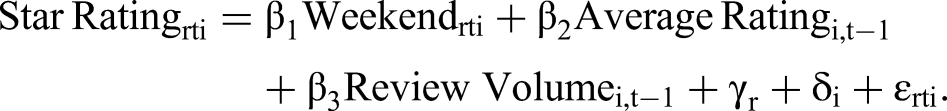

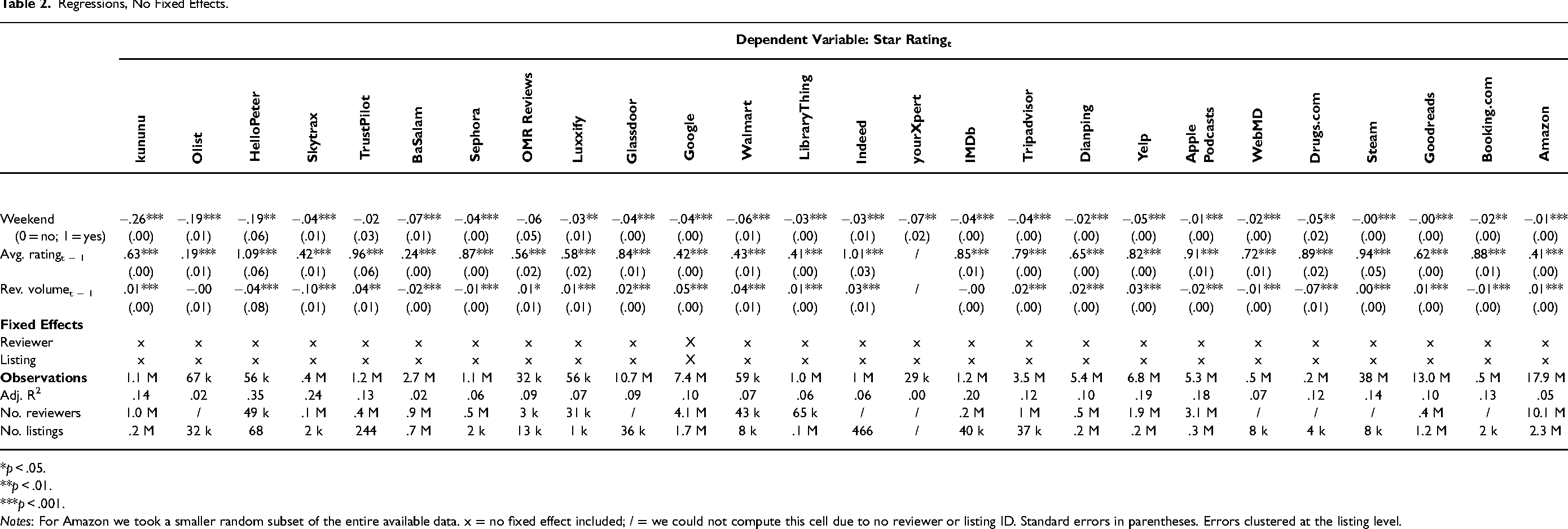

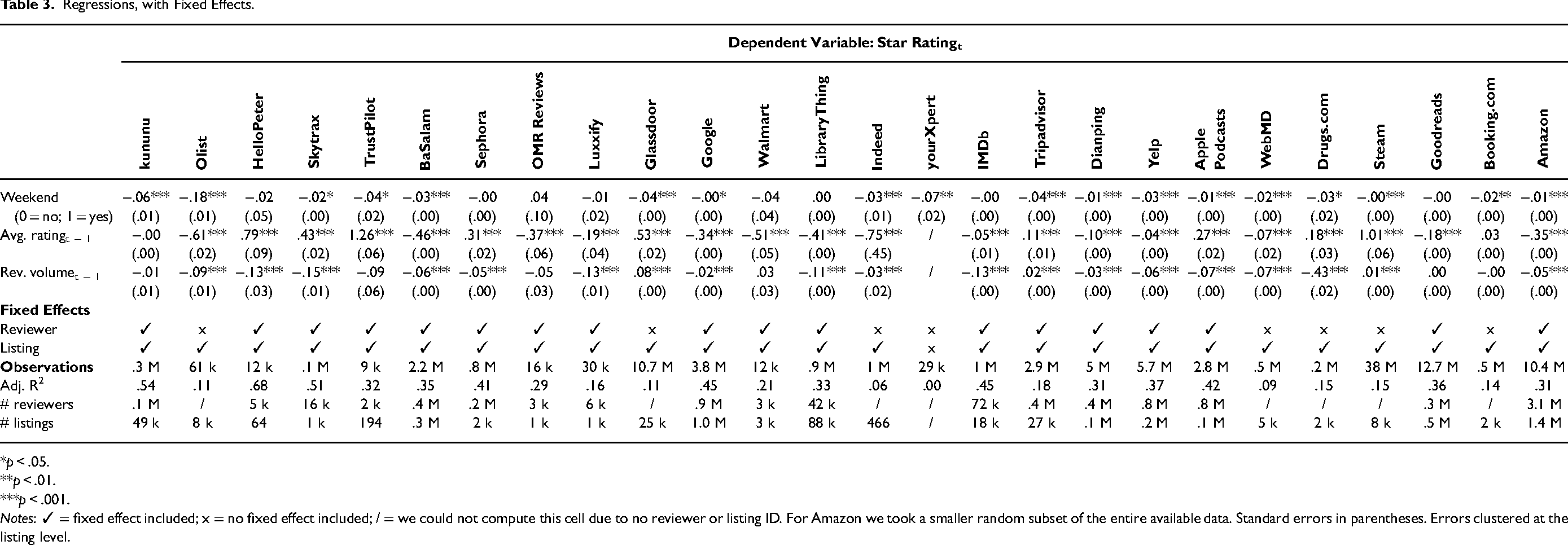

Regression Analysis with Fixed Effects

We confirm our results from Table 1, running regressions for all datasets for which we found a significant weekend effect in the model-free evidence (see Table 1, “Weekend Effect” column). Our dependent variable is the review valence (i.e., the star rating) submitted by reviewer r, at time t, for the review target i. The latter is the listing being reviewed (i.e., a product, business, restaurant, hotel, software, movie, or employer). The main independent variable of interest is a dummy variable that indicates whether this review was submitted during the weekend (0 = no, 1 = yes). The unit of analysis is a review. We control for both the review valence and volume at t − 1 of review target i, capturing both the average rating and the number of reviews that a user would be exposed to, and potentially influenced by, at time t (Lee, Hosanagar, and Tan 2015). Because these covariates are time-varying, they are separately identified from the reviewer and listing fixed effects, which account for time-invariant heterogeneity. Table 2 shows the results without fixed effects, while in Table 3 we add a reviewer fixed effect (γr) and a listing fixed effect (δi) whenever data availability permits. The model including reviewer and listing fixed effects is thus specified as follows:
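Reconstructed from the variable definitions in the preceding paragraph, the specification can be written as below. The coefficient labels (β) and the variable names are illustrative notation rather than the original typesetting, and whether review volume enters in levels or in logs is not stated here.

```latex
\text{Rating}_{r,i,t} = \beta_0
  + \beta_1\,\text{Weekend}_{t}
  + \beta_2\,\text{AvgRating}_{i,t-1}
  + \beta_3\,\text{Volume}_{i,t-1}
  + \gamma_r + \delta_i + \varepsilon_{r,i,t}
```

Here γr is the reviewer fixed effect, δi is the listing fixed effect, and β1 captures the weekend effect.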

Regressions, No Fixed Effects.

*p < .05.

**p < .01.

***p < .001.

Notes: For Amazon we took a smaller random subset of the entire available data. x = no fixed effect included; / = we could not compute this cell due to no reviewer or listing ID. Standard errors in parentheses. Errors clustered at the listing level.

Regressions, with Fixed Effects.

*p < .05.

**p < .01.

***p < .001.

Notes: ✓ = fixed effect included; x = no fixed effect included; / = we could not compute this cell due to no reviewer or listing ID. For Amazon we took a smaller random subset of the entire available data. Standard errors in parentheses. Errors clustered at the listing level.

Results in Table 3 indicate a significant negative effect of the weekend dummy, confirming that reviews submitted during weekends tend to have lower ratings. With listing fixed effects added, 24 of 26 platforms that have a weekend effect in the model-free evidence (Table 1) still show a significant weekend effect (see Table WD1). Even after we control for both listing- and reviewer-level fixed effects (when possible), the negative weekend effect remains statistically significant for two-thirds of the platforms (17 of 26 platforms; Table 3). For a few platforms, the effect becomes insignificant once fixed effects are included, likely because only reviewers who wrote, and listings that received, reviews both during the week and on the weekend contribute to the estimation of the weekend coefficient, as we explain subsequently. Web Appendix D reports the results where fixed effects are gradually added (i.e., first none, then only reviewer fixed effects, then only listing fixed effects, then both).
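The identification point can be illustrated with a small sketch of a one-way reviewer fixed-effects estimator based on within-reviewer demeaning. It is a simplified stand-in for the models reported here: it omits the lagged controls and listing fixed effects, and the function name and data layout are assumptions. Reviewers who post only on weekdays or only on weekends have a demeaned weekend dummy of zero and therefore add nothing to the estimate.

```python
from collections import defaultdict


def within_estimate(rows):
    """rows: list of (reviewer_id, weekend_flag, rating).

    Returns the slope on the weekend dummy after demeaning both the
    dummy and the rating within each reviewer (one-way fixed effects).
    Raises ZeroDivisionError if no reviewer has both kinds of reviews.
    """
    by_reviewer = defaultdict(list)
    for reviewer, weekend, rating in rows:
        by_reviewer[reviewer].append((weekend, rating))

    num = den = 0.0
    for obs in by_reviewer.values():
        w_bar = sum(w for w, _ in obs) / len(obs)
        r_bar = sum(r for _, r in obs) / len(obs)
        for w, r in obs:
            num += (w - w_bar) * (r - r_bar)
            den += (w - w_bar) ** 2
    return num / den


mixed = [("A", 0, 5), ("A", 1, 4)]          # reviews on both day types
weekend_only = [("B", 1, 1), ("B", 1, 2)]   # no within-reviewer variation
weekday_only = [("C", 0, 5), ("C", 0, 5)]   # no within-reviewer variation

full_sample = within_estimate(mixed + weekend_only + weekday_only)
mixed_sample = within_estimate(mixed)
```

Both calls return the same coefficient, because only reviewer A contributes variation in the weekend dummy after demeaning.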

The Case of Public Holidays: A Public Holiday Effect?

The purpose of this analysis is to examine whether the weekend effect extends beyond the periodicity of a seven-day cycle and manifests on public holidays, which share characteristics with weekends, such as work-free status and altered daily routines. Indeed, in finance, not only a weekend effect but also a holiday effect has been found (Thaler 1987). Similarly, in medicine, the weekend effect holds on public holidays (Sharp, Choi, and Hayward 2013). Given these results, we investigate online reviews and their rating on public holidays (e.g., Labor Day, Christmas) for robustness and conceptual replication of the weekend effect. We further leverage a setting similar to a natural experiment across a space and time dimension (i.e., a certain location where a given day is a public holiday vs. another location where it is not).

To do so, we acquired a dataset of public holidays for the countries in our dataset using the Nager.Date API and merged this information with our datasets where users’ and businesses’ locations can be reliably determined or inferred (14 platforms; see Table WC1). We calculate a “public holiday effect,” which we define as the average rating on a public holiday minus the average rating on nonholiday weekdays (see Table 1). We find that online review ratings submitted on public holidays in the respective country are similar to online review ratings submitted during weekends. Overall, we find a public holiday effect for 11 of the 14 (79%) platforms. On average, the public holiday effect is similar in size to the weekend effect in online review ratings. It is important to note that this analysis is constrained by local variations in the definition of public holidays and by individual differences in the relevance attributed to specific holidays.
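The public holiday effect as defined above can be sketched as follows. The holiday set is a hand-specified placeholder rather than output from the Nager.Date API, the function name is hypothetical, and the choice to count holidays regardless of the weekday they fall on is an assumption.

```python
from datetime import date
from statistics import mean


def public_holiday_effect(reviews, holidays):
    """reviews: list of (review_date, rating); holidays: set of dates.

    Returns the average holiday rating minus the average rating on
    nonholiday weekdays (Monday to Friday).
    """
    holiday = [r for d, r in reviews if d in holidays]
    normal_weekday = [
        r for d, r in reviews if d not in holidays and d.weekday() < 5
    ]
    return mean(holiday) - mean(normal_weekday)


# Toy example: May 1, 2024 (a Wednesday) treated as a public holiday.
toy_holidays = {date(2024, 5, 1)}
toy_reviews = [
    (date(2024, 5, 1), 3), (date(2024, 5, 1), 4),  # holiday reviews
    (date(2024, 5, 2), 5), (date(2024, 5, 3), 4),  # regular weekdays
    (date(2024, 5, 4), 1),                         # Saturday, excluded
]
effect = public_holiday_effect(toy_reviews, toy_holidays)
```

In the toy data the holiday average is 3.5 and the nonholiday weekday average is 4.5, giving an effect of −1.0; the Saturday review enters neither group.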

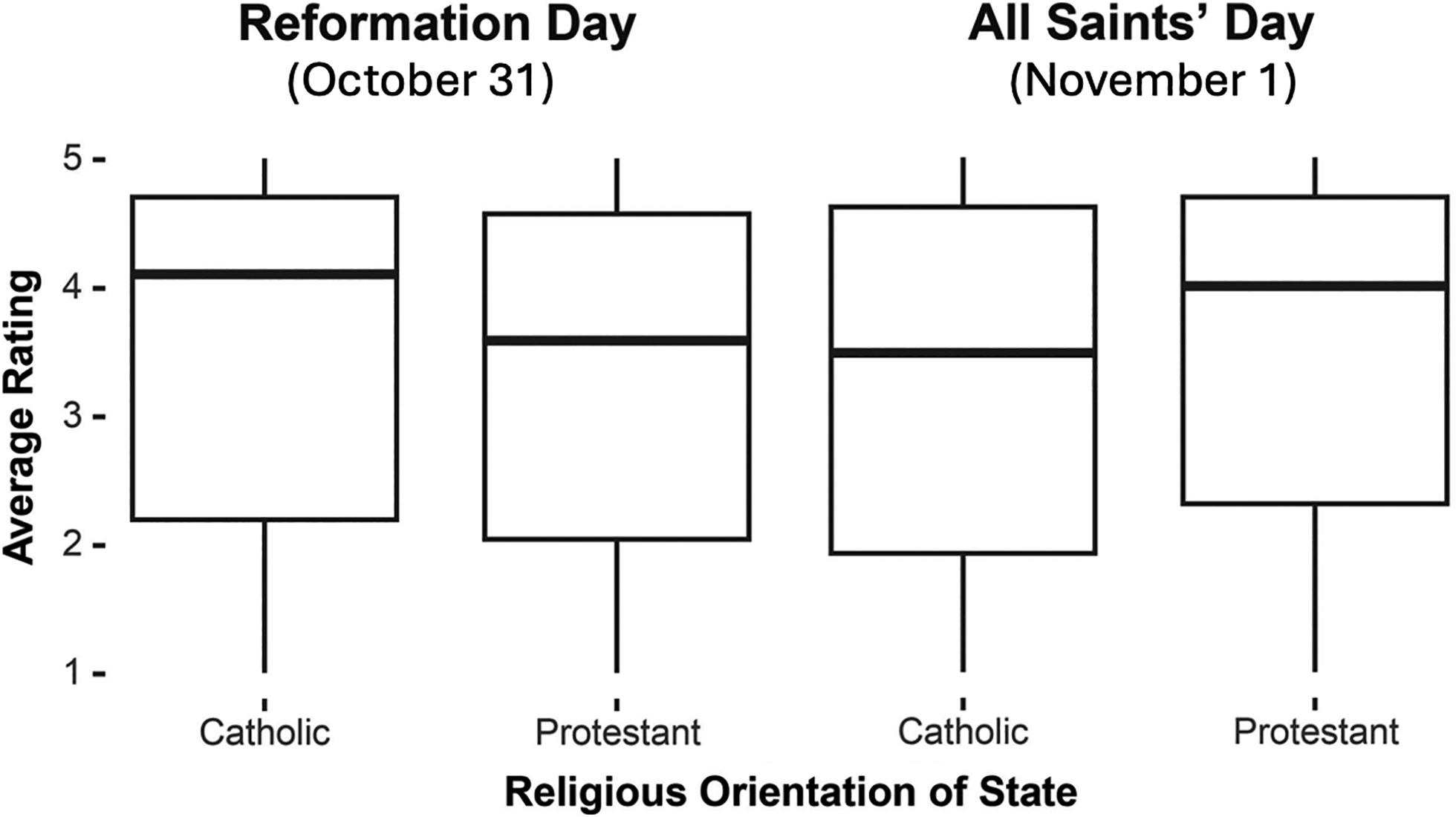

To further test the difference in reviews written on public holidays, we use the context of public holidays in Germany, which differ between states. Historically, some federal states in Germany, such as Bavaria, were more Catholic, whereas other federal states, such as Schleswig-Holstein, were more Protestant. These influences and the federal system have led to differences in public holidays across the federal states that persist today. For example, All Saints’ Day (Allerheiligen) on November 1 is a public holiday only in Catholic federal states, whereas Reformation Day (Reformationstag) on October 31 is a public holiday only in Protestant federal states. This holds for everyone working in a federal state, independent of one's personal religion. This setting thus allows us to compare reviews written in states that were subject to a public holiday versus not. We expect to find worse reviews in those federal states where the respective day is a work-free public holiday compared with the control group of states where the respective day is a normal working day.

To test this, we use the dataset of employer reviews in Germany, which for some reviews includes a variable about the federal state of the reviewed business. Figure 3 supports our hypothesis. The average rating on Reformation Day, which is a work-free public holiday only in Protestant federal states, is significantly worse in Protestant federal states (M = 3.28) compared with Catholic federal states (M = 3.52, t(668) = 2.98, p < .01). Conversely, on All Saints’ Day, which is a work-free public holiday only in Catholic federal states, review ratings are significantly better in Protestant states (M = 3.50) than in Catholic states (M = 3.26, t(1,028) = −3.09, p < .01).

Average Online Review Rating Across German States with Different Public Holidays.

Explaining the Weekend Effect

So far, we have established that the weekend effect exists and is robust for most online review platforms across a diverse and comprehensive set of categories (see Table 1). We further showed its robustness in different subsets (i.e., reviewers or listings with at least a certain number of reviews; see Web Appendix D) and tested different model specifications, such as controlling for listing and reviewer characteristics (see Tables 2 and 3).

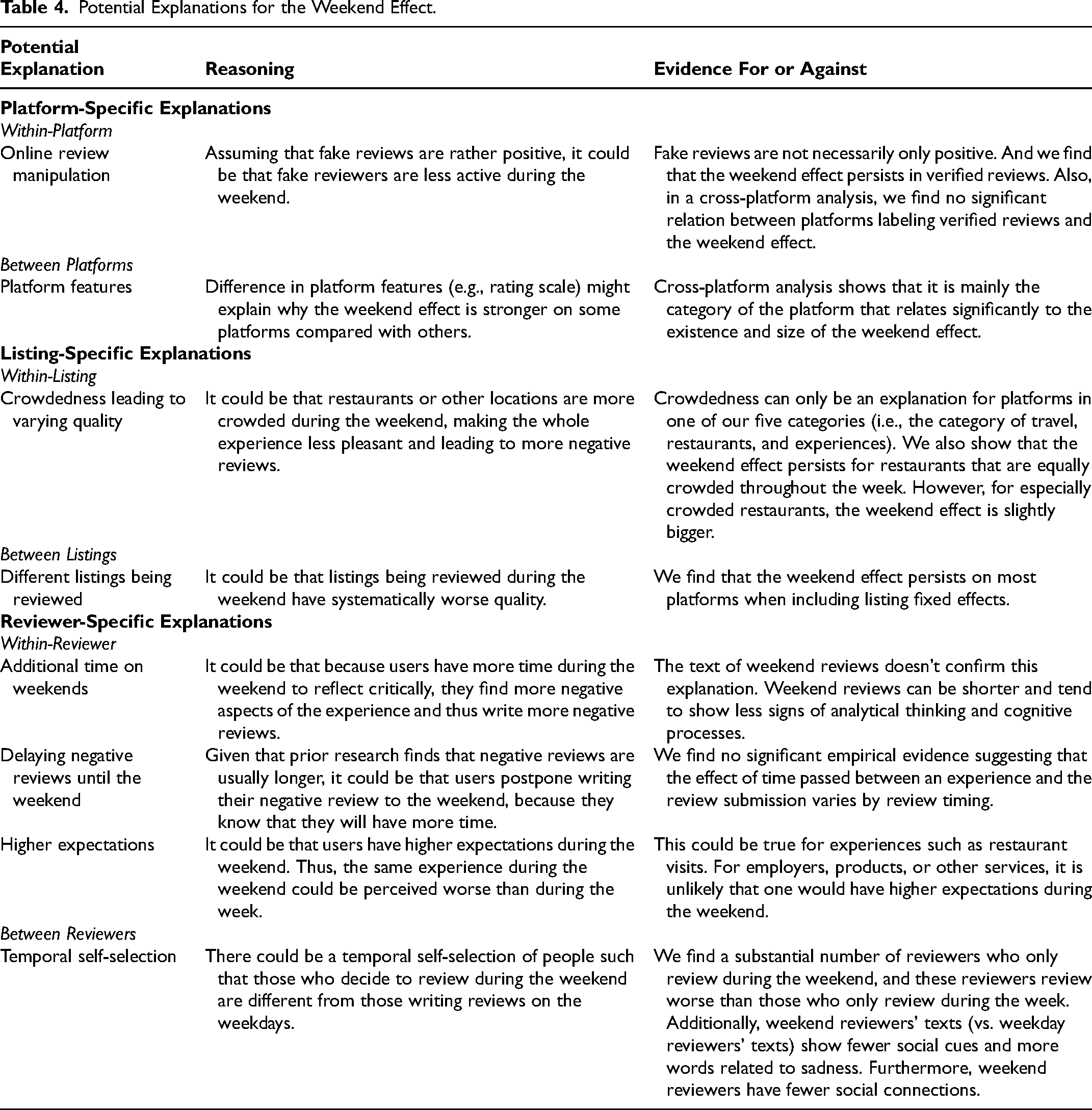

Next, we discuss and examine a comprehensive set of potential explanations for the weekend effect, where some explanations can only apply to some of the datasets (e.g., how crowded the place is can only matter for services). We categorize these explanations as platform-, listing-, and reviewer-related. Additionally, we distinguish between explanations that operate within a single platform, listing, or reviewer and those that arise between different platforms, listings, or reviewers. Within-explanations pertain to variations specific to a given platform, listing, or individual reviewer over time, while between-explanations address differences observed between distinct platforms, listings, or groups of reviewers, such as variations in products or reviewer demographics. Table 4 summarizes these explanations and their supporting empirical evidence, discussed in detail below. The explanation for which we find the most consistent evidence and that may apply universally across platforms from all categories is a reviewer-related explanation, namely temporal self-selection, which refers to between-reviewer differences. We also observe some, though less consistent, evidence for within-reviewer differences as well as an amplification of the weekend effect for some platforms (e.g., employer reviews) and via restaurant crowdedness.

Potential Explanations for the Weekend Effect.

Platform-Specific Explanations

The weekend effect in online reviews might stem from platform-specific explanations, both “within” a single platform and “between” different platforms. Within-platform explanations could involve aspects specific to a platform that change over the course of a week, such as review manipulation or fake reviewing. Between-platform explanations pertain to variations across platforms themselves (e.g., different rating scales).

Within-platform: online review manipulation

One could argue that fake reviews or online review manipulations (as covered in Mayzlin, Dover, and Chevalier [2014]) are responsible for the weekend effect. On weekends, professional fake reviewers who typically write positive reviews may be less active, potentially leading to lower average ratings and contributing to the observed weekend effect.

We argue that this is unlikely for the following reasons: First, fake reviews are not necessarily positive—they can also be negative, written by competitors (Luca and Zervas 2016). Thus, if fake reviewers are less active during the weekend, we should see a reduction in both very positive and very negative reviews. However, among reviews submitted on a weekend, we observe a lower share of very positive reviews and a higher share of negative reviews (see Table 1). Second, we find that the weekend effect persists on platforms that consciously make efforts to reduce fake reviews or where fake reviews are arguably absent (i.e., yourXpert and Yelp). Third, the weekend effect also holds when restricting the analysis to verified reviews (Amazon) or active reviews (kununu) only (see Web Appendix E). Altogether, we find no evidence to support a claim that fake reviewing can explain the weekend effect, as the weekend effect persists even in datasets and contexts where fake reviews are minimized and accounted for.

Between platforms: platform features

To investigate whether specific platform features are associated with the weekend effect, we collected a set of platform characteristics (see Table WC1) and ran a review-level regression with the numeric rating as the dependent variable. The regression includes interactions between each platform characteristic and a weekend indicator to assess which features amplify or attenuate the weekend effect. To create a balanced dataset across platforms, we randomly sampled an equal number of observations from each of the 33 datasets and combined them into a single dataset. All rating scales were linearly rescaled to a common range from 1 to 5. The platform characteristics include (1) the platform category, (2) the ratio of weekday to weekend review volume, (3) positive imbalance among all existing reviews on the platform (defined as in Schoenmueller, Netzer, and Stahl [2020]), (4) type of business model, (5) availability of a reviewer social network feature, (6) presence of reviewer recognition features, (7) availability of a verified review functionality, and (8) ability for businesses to respond to reviews.
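The linear rescaling step mentioned above can be illustrated with a minimal sketch. The function name and the example scales are hypothetical; the only detail taken from the text is that every native rating scale is mapped linearly onto the range from 1 to 5.

```python
def rescale_to_common_range(rating, scale_min, scale_max, new_min=1.0, new_max=5.0):
    """Linearly map a rating from its native scale onto a common range.

    scale_min and scale_max describe the platform's native scale
    (hypothetical inputs, e.g., 1 and 10 for a 10-point platform).
    """
    if scale_max <= scale_min:
        raise ValueError("scale_max must be greater than scale_min")
    if not scale_min <= rating <= scale_max:
        raise ValueError("rating lies outside its native scale")
    # Position of the rating within its native scale, between 0 and 1.
    fraction = (rating - scale_min) / (scale_max - scale_min)
    return new_min + fraction * (new_max - new_min)


# A rating at the top of a 10-point scale maps to the top of the 1-5 range.
top_rating = rescale_to_common_range(10, 1, 10)
# The midpoint of a 10-point scale maps to the midpoint of the 1-5 range.
mid_rating = rescale_to_common_range(5.5, 1, 10)
```

Ratings that are already on a 1-to-5 scale pass through unchanged, so the pooled regression compares all platforms on the same metric.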

We report the full regression in Web Appendix F. In summary, we find a negative main effect of the weekend indicator (−.05, SE = .01, p < .001), indicating that reviews written on weekends are, on average, less positive. Among the 33 platforms studied, this negative weekend effect is attenuated when platforms offer a reviewer social network. This may be because, under these conditions, review content resembles other UGC, such as typical social media posts (see Web Appendix A), where, as discussed previously, weekends are associated with more positive content submissions.

However, the weekend effect becomes even stronger on platforms where verified reviews are enabled and where responses to reviews are supported, suggesting that features promoting accountability amplify the weekend-related decline in star ratings. Regarding the platform category, the weekend effect is strongest for employer/job/workplace platforms and weakest (or even reversed in a few cases) for platforms focusing on specialized services (e.g., Coursera) or with a pure focus on one product (e.g., Edmunds). We can only speculate why platforms in the employer, job, and workplace category show a more pronounced weekend effect. It is possible that individuals thinking about job-related matters during the weekend may be experiencing significant dissatisfaction at work. For most people, work is typically not a top priority during the weekend unless they are facing serious issues. A heightened focus on work-related issues during leisure time could coincide with a greater likelihood of leaving a review, which is more likely to be negative.

Listing-Specific Explanations

Potential explanations of the weekend effect related to what is being reviewed come from two sources. First, the same target might change between the week and the weekend (“within-listing”); an obvious candidate is the crowdedness of businesses during the weekend. Second, different targets might be reviewed during the week and the weekend (“between listings”).

Within-listing: quality fluctuations/crowdedness

Businesses oftentimes experience higher traffic on weekends, particularly in settings like restaurants. Crowdedness can lead to slower service, longer queues, and higher stress levels among service employees, resulting in a lower-quality customer experience. As a result, the same business might receive more negative reviews on weekends than on weekdays, simply due to the impact of crowd-induced variations in service quality.

Notably, crowdedness can explain the weekend effect only for platforms in one of our five categories: travel, restaurants, and experiences. Here, it could be that quality fluctuates between weekdays and weekends, but this is unlikely for products and employers, where the perceived underlying quality of what is being reviewed is presumably stable, at least throughout the time frame of a week. Since we demonstrate that the weekend effect persists across four other platform categories—including e-commerce products and employers—where quality should remain consistent at least throughout the week, quality variation between the week and weekend cannot be a universal explanation for the weekend effect.

For a few of our datasets in the travel, restaurant, and experience category, however, crowdedness could matter and potentially contribute to the weekend effect. To determine the relevance of crowdedness for the weekend effect of reviews in these contexts, we use an external data source (SafeGraph) that allows us to assess crowdedness for each business through an approximated number of daily visitors for each location. After carefully merging Yelp businesses with locations, we identified roughly 70,000 businesses with visitor data and sufficient coverage (see Web Appendix G). We do find that businesses that are relatively busier during the weekend show a larger weekend effect than businesses that are relatively busier during the week. Most importantly, however, a significant weekend effect remains even for businesses that are equally busy during the week and the weekend.

In another dataset (yourXpert), dates of the actual experience (i.e., consultation with a lawyer) are known, allowing us to assess whether the quality of what is reviewed changes between weekdays and weekends. We find no significant difference for week and weekend consultations (Mweek = 9.50, Mweekend = 9.51, t(7,120) = −.19, p = .84), but for timing of the review submissions, the platform shows the usual weekend effect (see Table 1).

In summary, we find no evidence that quality fluctuations are responsible for the weekend effect. The special case of crowdedness might exacerbate the weekend effect, but only in contexts where crowdedness is relevant, which is only in 6 of our 33 datasets (i.e., those focused on restaurants and travel such as Yelp).

Between listings: different listings being reviewed

If the listing (e.g., a product, business, employer) reviewed on weekends tends to be systematically of lower quality, this could potentially explain the observed weekend effect. Several pieces of evidence challenge this explanation.

After we control for listing fixed effects, the weekend effect loses its significance for only 2 of the 26 platforms that initially showed the effect (see Table WD1)—namely Trustpilot and OMR Reviews, which are among the platforms with the fewest reviewed listings in our dataset (i.e., around 250 shops for Trustpilot and 1,000 types of software on OMR Reviews; see Table WC1).

It could also be that on a weekend, less popular listings (i.e., products with a systematically lower review volume or lower average review valence) are being reviewed, thereby triggering users to submit a lower rating. These time-variant characteristics of a listing are not controlled for through fixed effects. Therefore, in the regression displayed in Table 2, we controlled for review volume and review valence in t − 1, as users might have seen it when they submitted the review in t. Also, with the inclusion of these variables, the weekend effect remains relevant for 25 out of the 26 platforms (see Table 2).
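As a minimal sketch of the lagged controls described above, the following code attaches to each review the listing's review volume and average rating as they stood before that review arrived. It assumes that "t − 1" means "all earlier reviews of the same listing"; the original analysis may define the lag differently (e.g., by calendar period), and the function and field names are hypothetical.

```python
def add_lagged_listing_stats(reviews):
    """Attach prior review volume and prior average rating to each review.

    reviews: list of (listing_id, rating) tuples in chronological order.
    Returns one dict per review; prior_valence is None for a listing's
    first review because no earlier rating exists.
    """
    running = {}  # listing_id -> (count so far, sum of ratings so far)
    rows = []
    for listing_id, rating in reviews:
        count, total = running.get(listing_id, (0, 0.0))
        rows.append({
            "listing_id": listing_id,
            "rating": rating,
            "prior_volume": count,
            "prior_valence": total / count if count else None,
        })
        # Update the running totals only after the row has been recorded,
        # so the current review never leaks into its own controls.
        running[listing_id] = (count + 1, total + rating)
    return rows


example_rows = add_lagged_listing_stats([("a", 5), ("a", 3), ("b", 4), ("a", 1)])
```

Because the current review is excluded from its own lagged values, these controls reflect only what a user could have seen on the listing page before submitting.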

The persistence of the weekend effect under the described conditions suggests that it cannot be universally explained by differences in listing quality, instead implying other factors contributing to lower ratings on weekends.

Reviewer-Specific Explanations

Beyond the characteristics of what is being reviewed, who submits the review (i.e., reviewer characteristics) could help explain the weekend effect. We examine and discuss potential within-reviewer explanations such as reviewers having more time to write on weekends, a tendency to delay writing negative reviews until the weekend, and higher expectations for weekend experiences. For between-reviewer explanations, we observe that different reviewers are more likely to submit reviews on weekends compared with weekdays, a phenomenon we refer to as “temporal self-selection.”

Within-reviewer: more time to reflect

Since people typically have more free time on weekends, one might expect them to have more time to reflect critically on their experiences, potentially leading to less favorable and more detailed reviews compared with those written during the typically busier weekdays. It is known that online reviews with a higher star rating tend to have shorter text (Ullah et al. 2016), suggesting that reviewers feel the need to provide more detailed explanations when leaving a 1-star rating (Ghasemaghaei et al. 2018). We confirmed this negative relationship between rating and review length in two of our datasets (Amazon: Corr(rating, word count) = −.09, p < .001; Yelp: Corr(rating, word count) = −.20, p < .001). However, contrary to this expectation, weekend reviews, despite being generally more negative, do not necessarily contain longer text, as we find mixed results across Amazon and Yelp, with both platforms showing a weekend effect (see Table 1). On Yelp, reviews submitted on weekends tend to have fewer words (Mweek = 107.24, Mweekend = 100.70) and shorter sentences (Mweek = 13.50, Mweekend = 13.01), and show lower signs of cognitive involvement (Mweek = 9.19; Mweekend = 8.98). For Amazon, this is reversed (word count: Mweek = 48.32, Mweekend = 48.86; words per sentence: Mweek = 11.05, Mweekend = 11.09; cognitive processes: Mweek = 9.79, Mweekend = 9.80).

Moreover, if reviewers were taking more time to write on weekends, we might expect a higher proportion of reviews submitted via desktop rather than mobile devices. But data from Tripadvisor contradict this: During weekends, there is a lower proportion of desktop submissions (92.3%) compared with weekdays (94.9%).

In summary, it is difficult to test whether online reviews during the weekend are worse due to users having more time to reflect. Collectively, findings from our secondary data using different proxies regarding this explanation show no strong evidence for reviewers engaging in deeper reflection when writing reviews on weekends.

Within-reviewer: delaying negative reviews

People might wait until the weekend to write negative reviews, as they generally have a tendency to procrastinate doing unpleasant tasks (Pychyl et al. 2000). However, previous research demonstrated a fading affect bias, which implies that people forget negative memories more quickly than memories related to positive emotions (Walker, Skowronski, and Thompson 2003). Furthermore, research documents a positivity bias for temporally distant events (Eyal et al. 2004; Herzog, Hansen, and Wänke 2007). Both these theoretical accounts would predict that if people postpone writing certain reviews to the weekend, those reviews should be positively biased. And indeed, empirical research supports this. Reviews for rather temporally distant events (i.e., the event happened some time ago) tend to be more positive (Huang et al. 2016; Neumann, Gutt, and Kundisch 2022). In most of our secondary data, we cannot assess the time that passed between the actual experience and the writing of the online review. Exceptions are Tripadvisor and yourXpert, where the time passed is 1.24 months and 9.66 days, respectively.

To test whether people delay writing their negative reviews, we compare the time elapsed since the experience for reviews written during the week versus those written during the weekend. For both platforms, results of a two-sided t-test showed no significant difference (Tripadvisor: Mweek = 1.20 months, Mweekend = 1.16 months; t(90,378) = 1.94, p = .053; yourXpert: Mweek = 14.28 days, Mweekend = 17.54 days; t(444) = −.84, p = .403). This suggests that the weekend effect is not driven by people delaying negative reviews until the weekend.
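The comparison of elapsed times can be sketched as a two-sample t statistic. This sketch assumes a pooled-variance (Student's) test, which matches degrees of freedom of the form n1 + n2 − 2; whether the original analysis pooled variances is not stated, and the sample values below are made up for illustration.

```python
from statistics import mean, variance


def pooled_t_statistic(sample_a, sample_b):
    """Return (t, degrees of freedom) for a pooled-variance two-sample t-test."""
    n_a, n_b = len(sample_a), len(sample_b)
    if n_a < 2 or n_b < 2:
        raise ValueError("each sample needs at least two observations")
    df = n_a + n_b - 2
    # Weighted average of the two sample variances.
    pooled_var = ((n_a - 1) * variance(sample_a) + (n_b - 1) * variance(sample_b)) / df
    standard_error = (pooled_var * (1 / n_a + 1 / n_b)) ** 0.5
    return (mean(sample_a) - mean(sample_b)) / standard_error, df


# Hypothetical elapsed times (in days) for weekday and weekend reviews.
t_value, degrees_of_freedom = pooled_t_statistic([1, 2, 3], [2, 3, 4])
```

A t value close to zero, relative to its degrees of freedom, indicates that weekday and weekend reviews do not differ in how long reviewers waited before writing.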

Within-reviewer: higher expectations

Another explanation for worse reviews on weekends could be that there are different expectations on weekdays and weekends. Elevated expectations during weekends may stem from their association with leisure, relaxation, and the desire to maximize the value of this rare, work-free time. Given that experiences are evaluated relative to expectations, the heightened standards associated with weekends may lead to more negative perceptions of experiences that fail to meet the elevated expectations.

The desire to make optimal use of the limited weekend time may manifest in engaging in different activities compared with weekdays. We addressed this possibility in the “between-listings” discussion above, where we showed that the weekend effect is not driven by different listings being predominantly reviewed during the weekend. Moreover, we showed that the weekend effect persists when controlling for a business's previous rating and review volume, which can be seen as a proxy for the quality and popularity of the business and thus related to expectations of the product or experience (see Table 3). Two datasets allow for an additional analysis related to price as a reflection of expectations. In Yelp, some businesses denote a price range (i.e., $, $$, $$$, or $$$$) for their services, and Sephora lists the price of reviewed products (in USD). For Yelp, the average price of reviewed businesses during both the week and weekend is between $ (= 1) and $$ (= 2) (namely, 1.89), and for Sephora products reviewed during the weekend, prices are lower compared with weekday reviews (Mweek = 49.30 USD, Mweekend = 48.07 USD; p < .001). This again indicates that weekend reviews do not disproportionately target more upscale or expensive listings, for which users may hold higher expectations.

Generally, like the previous crowdedness account, different expectations seem to be relevant only for the category of travel, restaurants, and experiences. For products and employers, one would not expect weekend reviewers to have higher expectations. Using available proxies, we find no consistent evidence that different expectations of consumers between the week and weekend can explain the weekend effect.

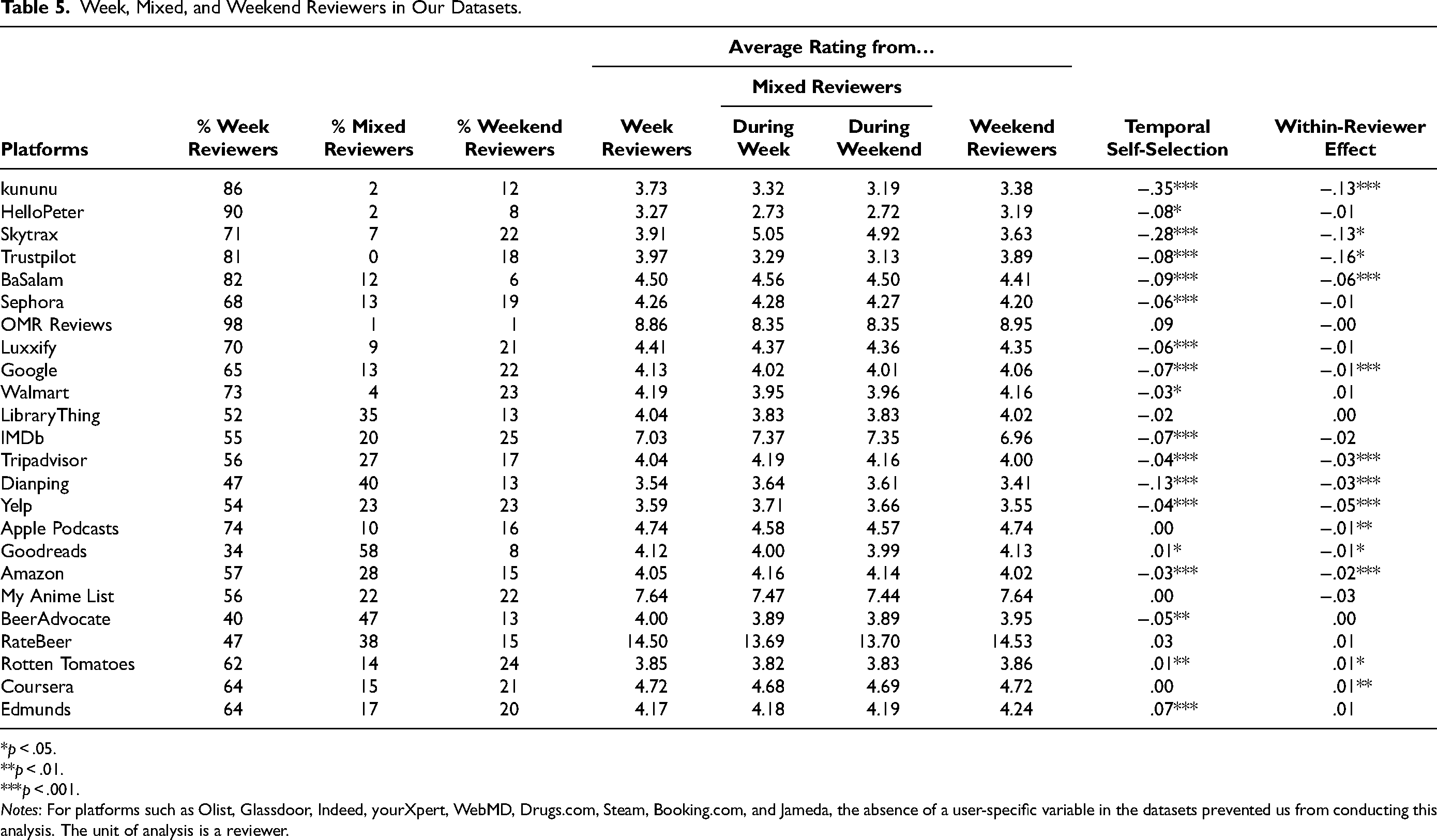

Between reviewers: temporal self-selection

Drawing on the literature of self-selection, particularly intertemporal self-selection, we conjectured that different types of reviewers could choose to write on weekends versus weekdays, leading to systematic differences in online reviews and ratings. To investigate this possibility, we define three types of reviewers: weekday reviewers, who submit reviews only during the week; mixed reviewers, who submit both during the week and weekend; and weekend reviewers, who submit reviews only on the weekend. When averaging across all our datasets, we find that roughly every sixth reviewer reviewed only during the weekend (17%). Nearly two-thirds of reviewers reviewed only during the week (64%), and the rest are mixed reviewers who reviewed both during the week and the weekend (19%). To understand whether the weekend effect is driven more strongly by this temporal self-selection of reviewers or by differences within reviewers, we compare the three groups and their ratings submitted during the week and weekend (see Table 5).
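The three reviewer types can be derived from review timestamps alone. The following minimal sketch assumes that "weekend" means Saturday and Sunday in the reviewer's calendar; the identifiers and example dates are hypothetical.

```python
from collections import defaultdict
from datetime import date


def is_weekend(day):
    """Assumption: the weekend consists of Saturday (5) and Sunday (6)."""
    return day.weekday() >= 5


def classify_reviewers(reviews):
    """Label each reviewer as 'weekday', 'weekend', or 'mixed'.

    reviews: iterable of (reviewer_id, submission_date) pairs.
    """
    seen = defaultdict(set)  # reviewer_id -> set of weekend flags observed
    for reviewer_id, day in reviews:
        seen[reviewer_id].add(is_weekend(day))
    labels = {}
    for reviewer_id, flags in seen.items():
        if flags == {True}:
            labels[reviewer_id] = "weekend"
        elif flags == {False}:
            labels[reviewer_id] = "weekday"
        else:
            labels[reviewer_id] = "mixed"
    return labels


reviewer_types = classify_reviewers([
    ("u1", date(2024, 1, 6)),   # Saturday
    ("u1", date(2024, 1, 7)),   # Sunday
    ("u2", date(2024, 1, 8)),   # Monday
    ("u3", date(2024, 1, 5)),   # Friday
    ("u3", date(2024, 1, 6)),   # Saturday
])
```

Note that a reviewer with a single review is necessarily classified as either a weekday or a weekend reviewer, which is one reason to repeat the analysis on reviewers with at least two reviews.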

Week, Mixed, and Weekend Reviewers in Our Datasets.

*p < .05.

**p < .01.

***p < .001.

Notes: For platforms such as Olist, Glassdoor, Indeed, yourXpert, WebMD, Drugs.com, Steam, Booking.com, and Jameda, the absence of a user-specific variable in the datasets prevented us from conducting this analysis. The unit of analysis is a reviewer.

Table 5 shows that the within-reviewer effect (i.e., the difference of review ratings submitted by mixed reviewers during the week and weekend) is generally smaller (Mwithin-reviewer = .027) than the difference between the ratings of online reviews submitted by pure week and weekend reviewers (Mtemp. self-selection = .053) and less consistent across platforms (temporal self-selection: 63% of platforms; within-reviewer effect: 46% of platforms).
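To make the two quantities concrete, the sketch below computes both from review-level data, using the reviewer as the unit of analysis. The sign convention (weekday minus weekend, so that positive values mean lower weekend ratings) and all names are assumptions made for illustration, not the exact procedure behind Table 5.

```python
from collections import defaultdict
from statistics import mean


def weekend_effect_components(reviews):
    """Return (temporal self-selection gap, within-reviewer gap).

    reviews: iterable of (reviewer_id, on_weekend, rating) triples.
    Each gap is computed as weekday minus weekend and is None when a
    required group of reviewers is empty.
    """
    weekday = defaultdict(list)
    weekend = defaultdict(list)
    for reviewer_id, on_weekend, rating in reviews:
        (weekend if on_weekend else weekday)[reviewer_id].append(rating)

    # Between reviewers: pure weekday reviewers versus pure weekend reviewers.
    weekday_only = [mean(r) for u, r in weekday.items() if u not in weekend]
    weekend_only = [mean(r) for u, r in weekend.items() if u not in weekday]
    # Within reviewers: each mixed reviewer's own weekday-weekend gap.
    mixed_gaps = [mean(weekday[u]) - mean(weekend[u]) for u in weekday if u in weekend]

    between = mean(weekday_only) - mean(weekend_only) if weekday_only and weekend_only else None
    within = mean(mixed_gaps) if mixed_gaps else None
    return between, within


self_selection_gap, within_reviewer_gap = weekend_effect_components([
    ("a", False, 5), ("a", False, 4),   # weekday-only reviewer
    ("b", True, 3), ("d", True, 4),     # weekend-only reviewers
    ("c", False, 5), ("c", True, 4.5),  # mixed reviewer
])
```

In this toy example the gap between pure weekday and pure weekend reviewers is larger than the gap within the mixed reviewer, which mirrors the qualitative pattern reported above.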

We conducted the following additional robustness analyses within the Amazon dataset: Temporal self-selection also remains significant in a subset of users who have submitted at least two reviews (Mtemp. self-selection = −.02, p < .001), when looking at only the three categories within Amazon that have shown the strongest weekend effect (i.e., software; patio, lawn, and garden; and video games; Mtemp. self-selection = −.08, p < .001), and when holding the product being reviewed constant (Mtemp. self-selection = −.05, p < .001).

To better understand this temporal self-selection (i.e., different reviewers writing reviews during the week vs. the weekend), we explore how weekend reviewers differ from weekday reviewers by comparing the two groups based on the limited information available in our secondary datasets regarding reviewer characteristics. In addition, we analyze the review texts themselves. Prior research suggests that language can serve as a window into the reviewer's identity (Park et al. 2015), potentially revealing systematic differences between the two groups. We focus our text analysis on one of the major review platforms: Amazon. We do so to hold product quality constant: unlike the quality of a restaurant visit, for example, the quality of a product cannot fluctuate throughout the week. To further ensure stability regarding what is being reviewed, we focus our attention again on the three categories that show the largest weekend effect within Amazon: software (weekend effect = −.05), patio, lawn, and garden (weekend effect = −.04), and video games (weekend effect = −.04). Selecting the categories with the strongest weekend effects allows for a more focused investigation into the characteristics of the weekend versus weekday reviewers who drive the observed differences. However, it is important to acknowledge that this selection based on effect size limits the generalizability of our findings.

We compare the text of the week and weekend reviewers using Linguistic Inquiry and Word Count (LIWC; Pennebaker, Booth, and Francis 2001), which is a widely used dictionary-based software for analyzing written text (Hartmann et al. 2019). We focus on the four categories of “psychological processes” (i.e., cognition, affect, social, and perception) to explore potential processes that reviewers might engage in when submitting an online review. The LIWC scores we report represent the percentage of words in a given review that fall into each category, thereby normalizing for differences in review length. We see that weekend reviewers tend to use fewer words belonging to the social category in LIWC (“LIWC: Social”; Mweek = 6.25, Mweekend = 6.16; p < .001). Words related to social process in LIWC indicate social interactions (e.g., talk, share, meet), family, and friends. Previous studies suggest that the use of this group of words relates to social connections and closeness (Pressman and Cohen 2007; Stone and Pennebaker 2002). Thus, the consistently lower use of words relating to social processes suggests lower social connections and closeness of weekend reviewers, based on the language used in their reviews compared with weekday reviewers.
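The length normalization behind these scores can be sketched as follows. This is not the LIWC software or its proprietary dictionary: the tiny word list is a hypothetical stand-in based on the examples named above, and the sketch uses exact word matches, whereas LIWC dictionaries also match word stems.

```python
import re

# Hypothetical stand-in for a "social" category word list.
SOCIAL_WORDS = {"talk", "share", "meet", "family", "friend", "friends"}


def category_share(text, category_words):
    """Percentage of words in a text that belong to a category word list."""
    tokens = re.findall(r"[a-z']+", text.lower())
    if not tokens:
        return 0.0
    hits = sum(1 for token in tokens if token in category_words)
    return 100.0 * hits / len(tokens)


social_share = category_share("We meet and talk", SOCIAL_WORDS)
```

Because the score is a percentage of all words, a long review and a short review with the same proportion of social words receive the same value.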

We also find a significant difference in the use of mentions related to affect (“LIWC: Affect”; Mweek = 12.96, Mweekend = 12.87; p < .001). Within affective processes (“affect”), we find the starkest difference for mentions related to sadness (LIWC: “emo_sad”), with more sadness coming from weekend reviewers’ reviews (Mweek = .097, Mweekend = .106; p < .001). For the remaining categories, we find no evidence for differences in other psychological processes, such as a cognitive (“LIWC: cogproc”; Mweek = 9.94, Mweekend = 9.94; p = .43) or perceptual process (“LIWC: Perception”; Mweek = 7.40, Mweekend = 7.40; p = .71).

The differences for the category related to social processes (“social”) as well as the category of mentions related to sadness (“emo_sad”) remain significant when holding the product constant (i.e., for each product we compare the average across its reviews from week and weekend reviewers; “LIWC: Social”; Mweek = 6.23, Mweekend = 6.17; p = .01; “LIWC: emo_sad”; Mweek = .113, Mweekend = .118; p = .04).

Finally, if the observed differences in how weekday and weekend reviewers write their reviews reflect underlying differences between these groups, we would expect such differences to be absent in the reviews of mixed reviewers who submit on both weekdays and weekends. To test this, we build random datasets of equal size of 1 million reviews each from week reviewers, weekend reviewers, and mixed reviewers, including their week and weekend review texts from the previous dataset. Comparing the week and weekend reviews, we find that the psychological driver in LIWC of “social” processes is indeed not statistically different between mixed reviewers’ week and weekend reviews (“LIWC: Social”; Mweek = 5.90, Mweekend = 5.90; p = .70). Regarding the emotion of sadness, in the texts of mixed reviewers, we find that whereas there are still slightly more mentions related to sad words during the weekend (LIWC: “emo_sad”; Mweek = .072, Mweekend = .077; p < .01), this difference is smaller than the difference of week and weekend reviewers (Mweek = .097, Mweekend = .106; p < .001). These results allow us to further speculate about the smaller and less consistent weekend effect among mixed reviewers. The within-reviewer explanations we tested, such as differences in expectations, do not account for this pattern, though the slight increase in sad language on weekends may reflect subtle situational influences. One possibility is mood congruence, where a reviewer's emotional state at the time of writing influences tone. While speculative, this interpretation aligns with the observed text difference.

Building on our findings regarding textual cues of lower social connectedness of weekend reviewers, we investigate potential differences between the two types of reviewers regarding the number of friends on online platforms. This information is only available in two of our datasets that include a social network: Yelp and Dianping. On both, we find that weekend reviewers have significantly fewer friends than week reviewers (one fewer friend on Dianping, p < .05; three fewer friends on Yelp, p < .001). This is in line with lower mentions of social processes in weekend reviewers’ texts. Moreover, results from a subsequent survey among actual Yelp reviewers suggest that the number of friends on Yelp is a valid proxy for real-life friends and correlates with how lonely and socially connected Yelp reviewers are (see Web Appendix H).

Hence, we find consistent evidence that weekend reviewers tend to submit online reviews with lower ratings compared with the group of week reviewers (see Table 5, “Temporal Self-Selection” column), and that the difference in ratings between these two reviewer groups is higher and more consistent than the difference within mixed reviewers’ week and weekend ratings (see Table 5, “Within-Reviewer Effect” column). While week and weekend reviewers differ in their review texts, mixed reviewers show no week–weekend differences in social references and only smaller differences in sadness. Further, weekend reviewers have fewer online connections. Overall, our findings consistently suggest that weekend reviewers tend to experience higher levels of social disconnectedness compared with their weekday counterparts, consistent with literature on weekend loneliness (e.g., Akay and Martinsson 2009; Kavetsos, Dimitriadou, and Dolan 2014; Maennig, Steenbeck, and Wilhelm 2014). A survey we conducted of week and weekend Yelp reviewers shows suggestive evidence in line with our findings that those active on weekends report feeling less socially connected and lonelier (Web Appendix H).

Taken together, the explanation for which we find the most consistent support across multiple data sources and methods is a reviewer-related explanation, namely temporal self-selection referring to differences between reviewers who review during the week versus on weekends. While other potential explanations—such as variation within reviewers between week and weekend reviews, contextual influences like crowdedness, or category-specific effects—may also play a role, they receive less consistent empirical support or appear limited to specific contexts. Overall, temporal self-selection appears to be the most broadly applicable explanation of the weekend effect, even though other contributing factors cannot be entirely ruled out.

Managerial Implications

The weekend effect may have significant implications for businesses reviewed online, which, in today's digital landscape, encompasses virtually all businesses. We first provide evidence for why the weekend effect is meaningful and relevant. Then we show with five studies (including two field experiments) that businesses seeking to collect reviews can mitigate the weekend effect of reviews through strategic review management and more specifically by restricting the timing of online review solicitations to weekdays.

The Relevance of the Weekend Effect for Businesses

Across our datasets, we find an average weekend effect of .04 stars. Even if this effect seems small at first glance, it still matters. We demonstrate this by examining the weekend effect in online reviews, focusing on (1) its implications for the long tail, (2) its influence on rankings, (3) the impact of single negative reviews more likely to arrive during weekends, (4) the magnitude of observed effects in online review research, and (5) its approximated economic significance.

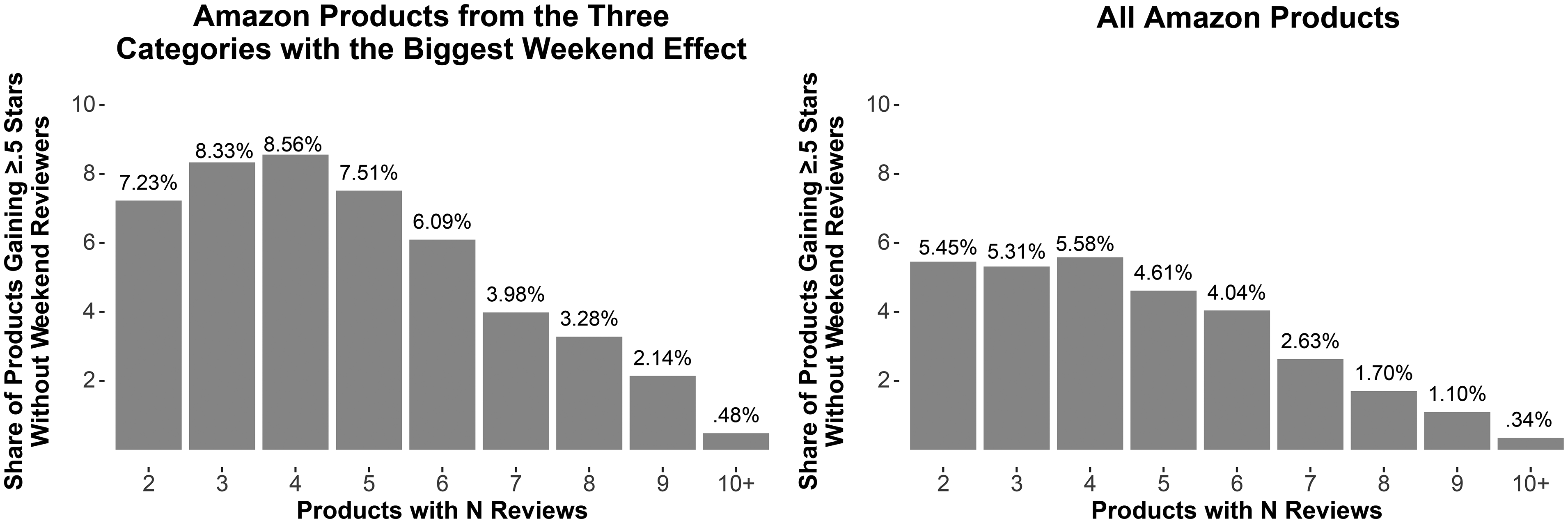

The relevance of the weekend effect for the long tail. Listings on online review platforms tend to follow a long-tail distribution (i.e., a few products with strong sales and many products for which only a few are sold) (Anderson 2006; Brynjolfsson, Hu, and Smith 2006; Oestreicher-Singer and Sundararajan 2012). Because the number of online reviews each listing has is a function of sales, the number of online reviews per listing also follows a long-tail distribution (i.e., a few listings with many reviews and vice versa). For example, on Amazon the median number of reviews per listing is 3, while the mean is 22, offering evidence for a long-tail distribution. A single negative review (which we show is more likely to be submitted during the weekend) is especially detrimental to the average rating of a listing that so far has accumulated only a few reviews. Thus, for a typical product on Amazon with three reviews and an average rating of 4 stars, a new 1-star review would alter the average rating from 4 to 3.25 stars. To more systematically assess the impact on the average rating and implications for the long tail, we measured how frequently listed products experience at least a half-star improvement if there were no weekend reviewers. From prior literature, we know that such a half-star improvement is associated with a substantial demand impact (e.g., Anderson and Magruder 2012; Luca 2011; Magnusson 2022). We focused on products as here the quality is stable. We calculated each listed product's average rating in two ways: first, using all reviews; and second, excluding reviews from weekend reviewers. Our goal was to assess how often a product's average rating improves by at least .5 stars under the latter condition. As expected, we find that products with fewer reviews are more susceptible to the influence of weekend reviewers. 
Figure 4 shows that up to 6% of products with only three or four reviews would experience a half-star increase in their average rating if weekend reviewers were excluded. This percentage increases to more than 8% for Amazon products in the three categories with the biggest weekend effect from before.

The weekend effect and its impact on rankings. Given the potential impact of a single negative rating, the weekend effect can have important implications for rankings. Typically, online review platforms display listings ranked according to average ratings or use an internal sorting logic, which generally relies as well on a listing's average rating (Talton et al. 2019). For rankings based on the average rating, a decrease in the average rating of .75 stars, similar to the one described previously, can move a product from the 70th to the 40th percentile, effectively shifting it from the top 30% to the bottom 40% of the platform.


This is because listings tend to be ranked very close to each other when considering their overall average rating. But even small rating differences can have drastic implications for how listings are ranked, how visible they are, and how much interaction they receive. Among 10 listings, 80%–90% of users viewed and 70% clicked on the top listing, while less than 10% viewed and clicked on the bottom listing (Demsyn-Jones 2022; Pan et al. 2007). To visualize the effect, we demonstrate the influence of small rating differences on rankings in our data. We again focus on software products from Amazon, because unlike restaurants or other experiences, the underlying quality of reviewed software is arguably stable throughout a week. In our data we observe 1,962 products in this category that currently have three reviews, which is the median number of reviews in the software category. The products’ median rating is 3.33 stars, and their ranking position is 938. If a fourth negative 1-star review is added to one of these products, which is 4.6% more likely to happen during the weekend, its new average rating would be 2.75 stars, thereby downgrading this software from rank 938 to 1,114. Such a change in rank (176 positions, or 8.9% on a list of 1,962 products) may result from a single additional negative review. Finally, to more systematically analyze differences in ratings, we compare how products would be ranked according to their weekday-only reviews versus their weekend-only reviews.
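The single-review arithmetic used in this section, together with the half-star check from the long-tail analysis, can be reproduced with a short sketch. The function names are hypothetical, and the half-star check is a simplified reading of the procedure described in the text (average over all reviews versus average without reviews from weekend reviewers).

```python
from statistics import mean


def average_after_new_review(current_average, review_count, new_rating):
    """Average rating of a listing after one additional review arrives."""
    return (current_average * review_count + new_rating) / (review_count + 1)


def gains_half_star_without_weekend_reviewers(reviews):
    """Check whether dropping weekend reviewers lifts the average by at least .5.

    reviews: list of (rating, by_weekend_reviewer) pairs for one product.
    Returns False when no reviews remain after the exclusion.
    """
    all_ratings = [rating for rating, _ in reviews]
    kept = [rating for rating, by_weekend in reviews if not by_weekend]
    if not all_ratings or not kept:
        return False
    return mean(kept) - mean(all_ratings) >= 0.5


# Three reviews averaging 4 stars, followed by a new 1-star review.
long_tail_example = average_after_new_review(4, 3, 1)
# Three reviews averaging 3.33 stars, followed by a new 1-star review.
software_example = average_after_new_review(3.33, 3, 1)
```

The same 1-star review would move a listing with 100 reviews and a 4-star average by only about .03 stars, which is why listings in the long tail are the most exposed.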

We observe that the two rankings differ significantly (Wilcoxon signed-rank test with continuity correction: p < .001) and show only a medium correlation (Kendall's τ = .51). Usual measures for comparing rankings such as Kendall's τ have the disadvantage that they are unweighted, placing as much emphasis on the disagreement of two rankings at their bottom as at their top. Therefore, Webber, Moffat, and Zobel (2010) proposed an improved similarity measure, which they call rank-biased overlap (RBO), that is especially suited for settings such as search engines or online review platforms, where results at the top matter more than results at the bottom. This is important, as users usually do not read beyond the first page. According to this measure, the two rankings are even more dissimilar (RBO = .15).

The impact of a single review. Certainly, for the few listings that have accumulated many reviews, a single bad review might have less of an impact on the average rating. Nonetheless, it has been shown that not only is the average rating important for consumers’ decisions, but individual reviews also can have a strong effect on decisions (Vana and Lambrecht 2021), especially negative reviews (Varga and Albuquerque 2023). Thus, the threat of a single negative review displayed as the most recent online review becomes more imminent around weekends.

Effect sizes in online review research. Small effect sizes in online review research have been widely observed and can be expected due to the skewness of online ratings and the strong tendency of reviewers to give 5-star ratings (Brandes, Godes, and Mayzlin 2022; Schoenmueller, Netzer, and Stahl 2020). Brandes and Dover (2022) report that ratings drop by .1 stars when it rains, Bayerl et al. (2025) find that women's rating average is .08 stars higher than men's, and Bairathi, Lambrecht, and Zhang (2023) show that female freelancers receive ratings .01 stars lower than male freelancers.

Approximating economic significance. For the 26 platforms for which we find a weekend effect (see Table 1), a comparison of the NPS of all online reviews submitted during the weekend versus during the week shows a lower NPS for weekend reviews by .2 to 19.5 points (see Table WC1). Across these datasets, the NPS from weekend reviews is 3.6 NPS points lower compared with weekday reviews. Marsden, Samson, and Upton (2005) showed that a higher NPS is associated with more future growth and sales: In their analysis, each 1-point difference in NPS relates to a .147% revenue growth for the average business. Thus, transferring the differences into monetary value shows the potential importance of the weekend effect in terms of sales. According to Marsden, Samson, and Upton (2005), a weekend effect corresponding to a .2- to 19.5-point lower NPS across platforms relates to a .07% to 2.9% lower revenue growth.
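To make the top-weighted ranking comparison from earlier in this section more concrete, the following is a minimal sketch of a truncated rank-biased overlap between two rankings. It is not the authors' implementation: the persistence parameter p, the truncation at the length of the shorter list (rather than the extrapolated variant), and the toy rankings are all assumptions chosen for illustration.

```python
def rbo_truncated(ranking_a, ranking_b, p=0.9):
    """Truncated rank-biased overlap of two rankings without duplicates.

    At each depth d, the agreement is the share of items common to both
    top-d prefixes; agreements are weighted by p**(d - 1), so the top of
    the rankings counts more than the bottom.
    """
    depth = min(len(ranking_a), len(ranking_b))
    score = 0.0
    for d in range(1, depth + 1):
        overlap = len(set(ranking_a[:d]) & set(ranking_b[:d]))
        score += p ** (d - 1) * overlap / d
    return (1 - p) * score

# Hypothetical product rankings based on weekday-only vs. weekend-only reviews.
weekday_ranking = ["A", "B", "C", "D"]
weekend_ranking = ["B", "A", "D", "C"]
similarity = rbo_truncated(weekday_ranking, weekend_ranking, p=0.9)
print(similarity)
```

Because the sum is truncated, identical finite rankings score 1 − p^k rather than exactly 1, where k is the list length. A smaller p concentrates more weight on the first few positions.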

Figure 4. Share of Products Gaining Half a Star Without Weekend Reviewers (by Number of Reviews).

In summary, the weekend effect of online reviews, despite seeming small in absolute magnitude, has economic significance and consequences for businesses. In the following sections, we present five additional studies that illustrate how companies can leverage our findings to strategically adjust the timing of review solicitations, thereby actively incorporating insights related to the weekend effect.

Leveraging the Findings of the Weekend Effect

Building on our findings, we conducted five studies to investigate the practical implications of the weekend effect for companies. These studies reveal that soliciting reviews during the weekend leads to a higher proportion of negative feedback. By understanding this pattern, companies can strategically adjust their review solicitation timing to mitigate unfavorable outcomes.

Study 1: Soliciting via email

We build on a dataset of employer reviews in which we can identify reviews solicited via email. For a subset of 282,881 employer reviews from our kununu dataset, we can assess whether users wrote a review after having received an email from the platform asking them to do so. Comparing only solicited weekend reviews and solicited weekday reviews, we find the “weekend effect” pattern: Weekend reviews (n = 17,875, M = 3.69) are significantly less favorable than weekday reviews (n = 265,006, M = 3.86; t(20,008) = 18.83, p < .001). This finding suggests that the weekend effect is not limited to unsolicited reviewing behavior but also appears in solicited reviews, indicating that the mere timing of review solicitation may influence review valence. A limitation of this study is that we know only the time the review was written, not the time the email was sent.

Study 2: Soliciting via social media ads

For a set of 76,323 employer reviews, we know that users were prompted to write the review through a social media ad. As in the previous study, we do not know when reviewers were exposed to the social media ad, but it seems very unlikely that someone clicked on a social media ad during the week and kept the window open only to then review during the weekend. Thus, in this dataset it is highly likely that the exposure to the solicitation and the submission of the review occurred on the same day. We find again that weekend reviews (n = 14,572, M = 3.42) are significantly less favorable than weekday reviews (n = 61,751, M = 3.59; t(21,694) = 14.01, p < .001). Thus, the result confirms in a different setting that the weekend effect is present even in solicited reviews, suggesting that the timing of solicitation may influence review valence. Both studies are in the context of employer, job, and workplace reviews, which is the category with the largest weekend effect. In the following, we assess whether soliciting during the week versus the weekend matters in other contexts.

Study 3: Soliciting via emails

In our dataset from yourXpert, we know when the actual experience (i.e., the consultation with a lawyer) took place. Upon discussion with the platform, we learned that it sends an automated email reminding users to review 2 days after the experience took place, and for a period of time, it sent an additional reminder after 14 days. Forty percent of reviews (n = 11,796) are written on either the day of the experience or the day after; for the remaining 60% (n = 17,502), we assume that they were solicited through the email. This seems a sensible approach, as the data clearly show peaks in review volume when solicitation emails are sent. Despite the small sample size compared with the previous studies, our regression analysis still shows that ratings are, on average, .046 stars lower (SE = .027) when the solicitation email is sent on the weekend (p = .088), while controlling for whether the review was triggered by the first or the second reminder email. A limitation of this study is that the date when the email was sent was not randomized. It could be that users who consulted a legal expert on Thursday or Friday (and thus received the email on a Saturday or Sunday) are inherently different in personality or were concerned with different, perhaps more difficult, issues. At the same time, legal experts accepting a consultation closer to the weekend could differ along unobservable characteristics such as their experience. We go on to address these issues by conducting two A/B tests.

Study 4: An experiment with Prolific users

The aim of this study was to approximate an actual field setting in which users are randomly assigned to one of two conditions (“week” and “weekend”) and are asked to submit a review. For random assignment to these two conditions, we conducted a longitudinal multipart study through Prolific. In Part 1, we constructed a database of users, replicating the type of database a platform or business might maintain. We opened Part 1 in November 2024 to 1,000 Prolific users from the United States who were fluent in English. We made participants aware that we would reach out to them again within the following 10 days for the second part of the study. We randomly split the participants acquired in the first stage into two groups: 500 were invited on a Wednesday and 500 were invited on a Saturday for Part 2, in which users submitted online reviews for any restaurant, software, and hotel (the order was randomized for each participant).

On Wednesday 406 respondents took part (380 after attention check), and on Saturday 296 respondents took part (281 after attention check). A chi-square test of independence confirmed that significantly more participants responded on Wednesday (380) than on Saturday (281) (χ²(1, N = 1,000) = 42.86, p < .001). Additionally, as expected with random assignment, there were no significant differences between the two initial groups of 500 participants assigned to receive Part 2 on the weekday versus the weekend (p > .10) (see Table WI1 in Web Appendix I). However, differences emerged among those who actually chose to participate in Part 2 (see Table WI2). Specifically, participants from the weekend group reported marginally higher levels of social disconnectedness (Mweek = 3.24, Mweekend = 3.43; t(588) = 1.72, p = .09) and had submitted fewer past reviews (Mweek = 5.46, Mweekend = 4.23; t(658) = −2.43, p = .02). These patterns suggest that, despite random assignment, the individuals who chose to respond on the weekend differed systematically from weekday respondents; this is consistent with the broader notion of temporal self-selection, whereby different types of people are more likely to engage on different days.
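The response-rate comparison in Study 4 can be recomputed from the reported counts. The sketch below builds the 2×2 table of valid respondents versus nonrespondents for the two groups of 500 and applies a chi-square test with Yates continuity correction. That the reported statistic uses this correction is an inference from the numbers, not something stated in the text, and the function name is illustrative.

```python
def chi_square_2x2_yates(a, b, c, d):
    """Chi-square statistic with Yates correction for the table [[a, b], [c, d]]."""
    n = a + b + c + d
    # Yates correction: shrink the absolute cross-product difference by n / 2.
    corrected = max(abs(a * d - b * c) - n / 2, 0)
    numerator = n * corrected ** 2
    denominator = (a + b) * (c + d) * (a + c) * (b + d)
    return numerator / denominator

# Rows: Wednesday, Saturday. Columns: valid respondents, invited without valid response.
wednesday_valid, saturday_valid, invited_per_group = 380, 281, 500
chi2 = chi_square_2x2_yates(
    wednesday_valid, invited_per_group - wednesday_valid,
    saturday_valid, invited_per_group - saturday_valid,
)
print(round(chi2, 2))
```

Under these assumptions the statistic rounds to 42.86, matching the value reported for the difference in response rates.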

For each user, we then averaged their three rating scores to build one average review rating per user. We know from our secondary data that the average weekend effect is −.04 stars (see Table 1); we thus use this value as a comparison point. The results of our study show a similar average weekend effect of −.05 stars (Mweek = 4.12, Mweekend = 4.07). Due to the modest effect size, a much larger sample size would be required to achieve statistical significance. A power analysis based on the weekend effect for restaurants (Table 1: Yelp), software (Table 1: OMR Reviews), and hotels (Table 1: Tripadvisor) indicates that at least 30,000 to 40,000 reviews would have been required. Consequently, the statistical power of this analysis is low and the difference is not statistically significant (two-sided t-test: p = .33, t(585) = −.97). In addition to the comparison of mean differences, we compare the two groups regarding the categories of detractors (all 1-, 2-, and 3-star reviews) and promoters (4- and 5-star reviews), similar to our secondary-data analysis. A chi-square test of independence reveals an association between the day of the week (Saturday vs. Wednesday) and review ratings (promoters vs. detractors) at a p-value of .06 (χ²(1, N = 661) = 3.42). Although, for technical reasons, our study cannot reach sufficient power to achieve strong statistical significance, the observed effect size is consistent with our secondary data, and it is common to see platforms collecting large numbers of reviews in one campaign.
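To illustrate why such a small effect requires a large sample, the following sketch applies the standard normal-approximation formula for the per-group sample size of a two-sided, two-sample comparison of means. The inputs behind the power analysis reported above are not given in the text, so the rating standard deviation of 1.2 stars, the 5% significance level, and the 80% power used here are hypothetical values, and the result is not meant to reproduce the authors' figure.

```python
import math
from statistics import NormalDist

def n_per_group(effect, sd, alpha=0.05, power=0.80):
    """Approximate per-group sample size to detect a mean difference `effect`."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided critical value
    z_power = NormalDist().inv_cdf(power)          # quantile for the target power
    return math.ceil(2 * ((z_alpha + z_power) * sd / effect) ** 2)

# Hypothetical inputs: a .04-star weekend effect and a rating SD of 1.2 stars.
hypothetical_sd = 1.2
required_per_group = n_per_group(effect=0.04, sd=hypothetical_sd)
print(required_per_group, 2 * required_per_group)
```

Because the required sample size scales with the inverse square of the effect size, halving the detectable effect roughly quadruples the number of reviews needed.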

While Study 4 replicates the weekend effect under randomized solicitation timing, our managerial implication is not to try to adjust the valence of weekend-written reviews. Rather, we demonstrate that strategically timing review solicitations can reduce the likelihood of weekend submissions, thereby avoiding the weekend effect altogether. Focusing review solicitation on weekdays can avoid triggering a segment of users that is systematically more likely to review negatively, thereby alleviating the weekend effect. In this way, the timing of solicitation serves as a practical lever to manage the tone of reviews. Study 5 provides further evidence for this effect using a real-world review platform.

Study 5: A field experiment

In collaboration with an online review platform (OMR Reviews), we conducted a preregistered field study (https://aspredicted.org/9gvh-xxfs.pdf). The platform provided access to 12,500 of its existing users and their email addresses with marketing and outreach consent so we could solicit (without an incentive) half of the users on a Wednesday and the other half on a Saturday through an email similar to how the platform usually reaches out to its user base. We chose Wednesday and Saturday because on this platform these are the days with the highest and lowest average ratings, respectively, based on our secondary data. The average rating of those who received the email on a Wednesday (Saturday) was 8.03 stars (7.61 stars). Unfortunately, only .6% of users converted to the review process (n = 70; such low conversion rates to writing an online review are the norm; Anderson and Simester 2014). Thus, although we replicate the direction and the magnitude of the weekend effect as preregistered, the difference is not statistically significant (two-sided t-test: p = .41, t(64) = −.83), and a power analysis shows that 3,000 respondents would have been required to reach significance. This number was beyond the capacity of the corporate partner in this study but is within a plausible range for campaigns collecting reviews. Therefore, although our study lacks sufficient power to achieve statistical significance, the observed trend and effect size are again fully consistent with our secondary data.

In summary, the evidence from these five studies, despite their specific limitations, demonstrates the managerial relevance of the weekend effect. Our findings inform businesses how the mere timing of review solicitations can affect the subsequent review ratings and thus how the adjustment of the timing of review solicitations to weekdays can help avoid collecting negative weekend reviews. This complements work on optimal scheduling in social media content management, which shows that the timing of posts can significantly shape engagement (Kanuri, Chen, and Sridhar 2018). Beyond adjusting the timing of solicitations, our findings suggest that platforms should, where feasible, identify weekend reviewers based on existing reviewer histories. This approach would allow platforms to not only refine the timing of solicitations but also tailor the selection of targeted individuals to minimize negative effects of the weekend.

Discussion