Abstract

What drives the liking of video advertisements? The authors analyzed neural signals during ad exposure from three functional magnetic resonance imaging (fMRI) data sets (113 participants from two countries watching 85 video ads) with automated meta-analytic decoding (Neurosynth). These brain-based measures of psychological processes—including perception and language (information processing), executive function and memory (cognitive functions), and social cognition and emotion (social-affective response)—predicted subsequent self-report ad liking, with emotion and memory being the earliest predictors after the first three seconds. Over the span of ad exposure, while the predictiveness of emotion peaked early and fell, that of social cognition had a peak-and-stable pattern, followed by a late peak of predictiveness in perception and executive function. At the aggregate level, neural signals—especially those associated with social-affective response—improved the prediction of out-of-sample ad liking compared with traditional anatomically based neuroimaging analysis and self-report liking. Finally, early-onset social-affective response predicted population ad liking in a behavioral replication. Overall, this study helps delineate the psychological mechanisms underlying ad processing and ad liking and proposes a novel neuroscience-based approach for generating psychological insights and improving out-of-sample predictions.

Video advertisements have been the mainstay of advertising media as the marketing landscape has shifted increasingly toward the internet and mobile devices. According to a survey of one industry group, digital video advertising captures the greatest share of the advertising budget at 19% (Interactive Advertising Bureau 2021), while some estimates put online video advertising spending at U.S. $106 billion in 2022 (Statista 2022). Combined with traditional television broadcasting, consumers can expect to continue to encounter video ads both online and offline.

The effectiveness of video ads depends in part on whether viewers like them (MacKenzie, Lutz, and Belch 1986). That in turn raises the question of how viewers arrive at their opinions during ad exposure. From the AIDA (attention, interest, desire, action) model of advertising (Strong 1925) a century ago to the subsequent Lavidge and Steiner (1961) hierarchical framework, different psychological accounts of effective advertising have recognized how cognition and emotion contribute to ad liking (Barry and Howard 1990; Vakratsas and Ambler 1999). Pinpointing the underlying psychological processes of ad liking, however, poses methodological challenges. First, self-reporting requires elaboration and reflection that interfere with automatic processes such as information processing or memory formation. Second, instruments such as surveys are less amenable to recording moment-to-moment reactions during ad exposure, which is crucial for studying exactly when during an ad these processes occur.

Neurophysiological measurements provide a complementary tool to traditional self-report assessments as a means for unobtrusive and continuous measurement of automatic or subconscious responses (Plassmann et al. 2015). Eye-tracking, electromyography, and facial-coding techniques (Li et al. 2022; McDuff et al. 2015; Teixeira, Wedel, and Pieters 2012) map out transitory facial and ocular movements during ad exposure, while electroencephalography detects electrical signals emanating from the cortical surface of the brain during ad viewing (Baldo et al. 2022; Hakim et al. 2021), both with fine-grained temporal resolution (>100 Hz). Functional magnetic resonance imaging (fMRI) captures neural activity of the entire brain—albeit at a lower temporal resolution of about .5 Hz—offering potentially more in-depth access to psychological processes during ad exposure (Chan et al. 2019). Despite these efforts to capture neurophysiological signals during ad exposure, inferring psychological processes from these signals and relating them to ad liking and ad effectiveness poses its own methodological challenges (Poldrack 2006; Sarter, Berntson, and Cacioppo 1996).

The aim of this study is to make use of the latest advances in neuroscience to identify psychological processes—and their temporal dynamics—underlying video ad liking, based on brain responses during fMRI scanning. Using a publicly available meta-analytic database of the extant neuroscience literature (Neurosynth; Yarkoni et al. 2011), we transform raw neuroimaging data into brain-based measures of a broad range of psychological processes, allowing us to examine which psychological processes lead to ad liking and at which point in time. In the second part of the article, we examine the link between these neural signals and aggregate out-of-sample liking of video ads. We also explore whether—and which—neural signals provide additional information beyond self-report measurements in predicting aggregate out-of-sample ad liking.

Measuring Psychological Processes During Ad Exposure with Brain Measurements

The sheer complexity of the human mind means there is no consensus on how psychological processes should be delineated at the brain level (Mesulam 2000). In this study, we adapt a widely used framework informed by neuropsychology (DSM-5; Sachdev et al. 2014), which originally proposes a six-domain organization of cognitive functions: perception, language, attention, executive function, memory, and social cognition. Working in close tandem with cognitive functions is emotion, and the interactions between the two have been studied behaviorally and neurophysiologically (Robinson, Watkins, and Harmon-Jones 2013). Together, these seven domains represent a broad range of psychological processes from a neurophysiological perspective and map roughly onto three aspects pertinent to advertising research (Table 1): perception and language (information processing); attention, executive function, and memory (cognitive functions); and social cognition and emotion (social-affective responses).

Table 1. Psychological Processes Informed by Neurophysiology and Their Potential Relevance to Advertising.

Stronger perceptual and linguistic responses to stimuli have been linked to subsequent choices and preferences (Schmälzle et al. 2015; Shimojo et al. 2003), while memory and attention have long been understood as crucial determinants of consumer preference (Kardes and Kalyanaram 1992; Wedel and Pieters 2019). At the same time, executive functions, such as deliberation, inhibitory control, and numerical operations, indicate the level of processing fluency (Lee and Labroo 2004) and have been found to interact with brand preference (Peatfield et al. 2015). Social cognition, encompassing psychological processes such as theory of mind and mentalizing (i.e., perspective taking and the understanding of others’ intentions and feelings), is closely related to the advertising literature on narrative transportation and identification (Escalas 2004; Van Laer et al. 2014). Finally, emotion is well known to be a key component of consumer responses to advertising (Holbrook and Batra 1987).

Studying the nervous system helps uncover the conscious and subconscious psychological processes underlying various consumer behaviors. As neuroimaging technologies such as electroencephalography and fMRI have become more accessible and less costly, they have gained in popularity both in the industry and among academic researchers (Ariely and Berns 2010; Plassmann et al. 2015; Smidts et al. 2014). A robust body of research on the neural basis of consumer preference has identified the importance of several key brain regions associated with cognition, emotion, and value representations (see Plassmann, Ramsøy, and Milosavljevic 2012 for a review). For example, dorsolateral prefrontal cortex (dlPFC), considered as one of the neural substrates of cognitive control (Hare, Camerer, and Rangel 2009), has been found to be related to brand choice (McClure et al. 2004). The subcortical structure of the amygdala is known to track affective intensity (Phelps 2006) and indicate reward (Murray 2007).

Moreover, in the context of consumer neuroscience, positive affective responses are linked to activity in the nucleus accumbens (NAcc) and are associated with consumer preference in numerous studies (Genevsky and Knutson 2015; Genevsky, Yoon, and Knutson 2017; Knutson et al. 2007). Last, the ventromedial prefrontal cortex (vmPFC) has long been established as a neural substrate where consumer valuation occurs (Falk, Berkman, and Lieberman 2012; Falk et al. 2016; Knutson et al. 2007; Plassmann, O’Doherty, and Rangel 2007). Uncovering the associations between consumer response and neural activity at these volumes of interest (VOIs) has provided biological evidence of psychological processes invoked by advertising and has offered insights on the interplay of perception, cognition, and emotion.

Recent advances in neuroimaging analysis have expanded upon this VOI-based approach. Instead of treating anatomical structures as distinct information sources, researchers combine neural signals from across the whole brain to generate composite measures, either to then train prediction models (Kragel et al. 2018; Wager et al. 2013) or to obtain psychological insights (Lieberman et al. 2019; Van Ast et al. 2016). The whole-brain (vs. VOI-based) approach has several core advantages. First, it pools signals from multiple voxels (small volumetric units of a brain image) distributed across the brain at once, potentially providing more information than a handful of VOIs. Second, whole-brain patterns can potentially offer better insights into the underlying psychological processes, as these processes often involve the interplay between disparate regions in the brain (Poldrack 2006). Finally, the rise of multivoxel pattern analysis (Norman et al. 2006) has led to new discoveries on how the brain encodes information. For example, neural encoding of reward information has been found to not only encapsulate value but also incorporate specific information about the expected outcome, sensory features, or required action associated with the reward (Kahnt 2018). In consumer neuroscience, multivoxel pattern analysis techniques help uncover how abstract and complex consumer knowledge, such as brand image, is encoded in the brain (Chan, Boksem, and Smidts 2018; Chen, Nelson, and Hsu 2015).

Interpreting Brain Measurements with a Neuroscientific Meta-Analytic Database

One of the main goals of consumer neuroscience is to offer insights into consumer psychology (Smidts et al. 2014). However, interpreting neural signals is fraught with philosophical and methodological challenges (Bennett and Hacker 2022). For instance, given the observed brain measurements, how do we know which psychological processes have likely occurred? To this end, a recent trend in neuroscience is to use large-scale meta-analytic databases such as Neurosynth (Yarkoni et al. 2011) or NeuroQuery (Dockès et al. 2020) to interpret whole-brain patterns of neural activity. These publicly available meta-analytic databases are built upon automatic text mining and data extraction of the extant neuroscientific literature.

For example, Neurosynth—the most widely used database of its kind—mines the full text corpus of about 14,000 peer-reviewed neuroscientific publications and the anatomical locations reported in the text. The database contains a collection of concepts (either term based, e.g., with a single term such as “memory,” or topic based, where each topic is a cluster of words), each of which is associated with a brain statistical map indicating any consistent relationship between the concept and the neural activity reported in the brain’s different anatomical locations. (For a more in-depth explanation of the meta-analytical process, see Yarkoni et al. [2011].)

The existence of Neurosynth and other similar databases offers opportunities to improve the interpretability of neuroimaging findings (Plassmann et al. 2015; Yarkoni et al. 2011). When we want to understand the implications of observed neural activity, the long-standing practice of relying on anatomical landmarks carries the inherent risk of “reverse inference” (Poldrack 2011), namely the assumption of one-to-one correspondence between brain structures and psychological processes. As an example, amygdala activity is at once associated with both reward (Murray 2007) and disgust (Sambataro et al. 2006). Instead of focusing on the activity (or absence thereof) within the amygdala as qualitative evidence of specific psychological processes, the use of Neurosynth association maps (e.g., “reward” and “disgust” maps) allows quantitative inference based on whole-brain activity patterns, thus reducing the risk of reverse inference based on individual regions.

Usage of Neurosynth can be either confirmatory or exploratory. On the confirmatory side, researchers select a term of interest and use the associated meta-analytical map to extract neural signals related to that term, with which hypothesis testing can be done. For example, Doré et al. (2020) use the reward association map to extract reward-related brain activity when individuals read news articles and test whether the reward signal of individuals tracked virality in the population. In contrast, Li et al. (2017) adopt an exploratory approach by comparing brain activity during a gambling task with gain or loss framing against the entire Neurosynth database. Their goal is to test competing psychological accounts of the framing effect: whether it is driven by a competition between emotion and control or an indication of differential cognitive engagement across decision frames. They find evidence of the latter based on higher brain pattern similarity to task-engagement-related Neurosynth maps (such as “working,” “task”) relative to emotion-related maps (such as “feelings,” “emotions”).

In both examples, Neurosynth decoding reduces whole-brain activity to a measure of a term selected a priori by investigators (e.g., to what degree the brain is in a “reward” or “task engagement” state). Here, we propose an alternative approach that departs in two respects from the extant consumer neuroscience studies that use Neurosynth decoding. First, we move from term-based to topic-based modeling. This approach first extracts topics based on the covariance between different terms in article abstracts by automatic text mining (latent Dirichlet allocation; LDA) and then matches them with consistently reported brain activity in the concerned articles.

In the Neurosynth database, the number of topics after LDA modeling is predefined at 50, 100, 200, and 400, which can be understood as the various resolutions of the semantic space of the corpus (we use the 400-topic model in this study, which offers the highest semantic resolution). Each topic is represented by 40 words that tend to co-occur in the neuroscientific literature. For example, in the 400-topic model, the first five words for Topic 4 are “mood,” “affective,” “induction,” “states,” and “sad,” suggesting an emotion-related cluster, while for Topic 5, they are “judgments,” “judgment,” “judged,” “make,” and “metacognitive,” suggesting a cluster focusing on executive decision making. Such topic-based modeling, as opposed to term-based modeling, allows for more nuanced descriptions of a concept and reduces the confounding risk of polysemy. For example, the term “value” appears in Topic 89 (“range,” “wide,” “characteristic,” “variables,” “operating”), Topic 144 (“decision,” “making,” “choice,” “decisions,” “choices”), and Topic 342 (“caudate,” “nucleus,” “accumbens,” “nacc,” “reward”), each reflecting a different meaning (concerning research methodology, decision making, and affective response, respectively) that might be confounded in term-based modeling.

The second main point of departure is to move away from using Neurosynth decoding to identify a single psychological process. As consumer response is typically driven by multiple factors, especially during immersive experiences such as watching video ads, such use of single-process Neurosynth decoding might be limiting. An alternative approach would be to treat neural signals as a combination of simultaneous psychological processes. For example, a recent study attempted to identify distinct psychological states while watching video by expressing neural activity in a combination of 16 preselected Neurosynth terms, including “language,” “emotion,” “inhibition,” and “sensorimotor” (Van der Meer et al. 2020). Instead of curating a set of terms, in this study we first project raw neuroimaging data obtained during video ad exposure onto the entire Neurosynth 400-topic space, effectively rendering neural signals from about 50,000 voxels within a brain image into a combination of multiple and simultaneous psychological processes. Figure 1 illustrates the single- versus multiple-process Neurosynth decoding approaches (note that the multiple-process approach is also applicable for term decoding).

Figure 1. Single- Versus Multiple-Process Neurosynth Decoding.

The use of Neurosynth (and other similar meta-analytic databases) should be prefaced by a note of caution. Given the data-driven nature of automatic text mining of the extant neuroscientific literature, the collection of topics and terms tends to skew toward neurocognitive and neuropathological constructs, which have so far made up the bulk of the research in the field. As such, these automatically generated topics (and terms) do not necessarily cover the full spectrum of psychological phenomena, and they may not offer enough resolution to differentiate psychologically related but distinct processes (e.g., fear and disgust). This can be due to either insufficient research at the moment or the fact that many psychological processes are found to involve common neural circuits, at least as observed at the spatial resolution afforded by the available neuroimaging technology. Nevertheless, the multiple-process Neurosynth decoding approach we use in this study reduces the risk of reverse inference compared with relying on anatomical landmarks alone. It also offers a data-driven way to analyze multiple psychological processes during video ad exposure.

Temporal Dynamics of Liking-Related Psychological Processes

Video watching is an immersive experience that unfolds across time. Unlike the evaluation of static materials such as print ads, consumers uncover new information about the experience sequentially. The question is, therefore, at which point during video ad exposure does a viewer develop liking of the ad? An early study using a continuous emotional self-report method indicates that peak moments and the ending of an ad are decisive for consumers' overall judgment (Baumgartner, Sujan, and Padgett 1997), although a more recent study examining empirical data of mobile video ads suggests that consumers likely make up their mind about whether to click on the ad within the first 10–15 seconds (Chiong et al. 2023). An eye-tracking and facial-coding study suggests that early appearances of joy and surprise responses (within the first ten seconds) predict more sustained attention and less skipping (Teixeira, Wedel, and Pieters 2012). Finally, an fMRI study in which participants watched a series of short documentary clips finds that activity at the nucleus accumbens and anterior insula—brain areas associated with positive and negative affective responses, respectively—within the first four seconds of video onset predicts whether participants will skip the video when given the chance (Tong et al. 2020).

All in all, extant evidence suggests that early-onset (within the first ten seconds) affective responses play a role in ad liking. However, given the difficulty in measuring real-time psychological processes (eye tracking, facial coding, or continuous self-reporting could reasonably capture attention and emotion but not other processes), there remain unaddressed issues on the temporal dynamics of other psychological processes related to ad liking. Are there other early-onset psychological processes that can predict subsequent ad liking? Furthermore, does the peak-end rule apply to all these psychological processes? Answers to these questions will inform marketing practitioners on how to structure content in video ads to maximize impact.

Predicting Aggregate Consumer Response with Neural Signals

While pioneering studies in consumer neuroscience focused on identifying neural signals implicated in individual preferences, the scope of research has been gradually expanding toward “brain-to-population” predictions (Berkman and Falk 2013; Boksem and Smidts 2015; Chan et al. 2019; Couwenberg et al. 2017; Falk et al. 2016; Knutson and Genevsky 2018; Scholz et al. 2017; Venkatraman et al. 2015). That is, researchers are now using neural activity from a small group to predict aggregate consumer response at large, akin to using surveys or focus groups. In many of these brain-based prediction studies, researchers selected a combination of brain structures (i.e., VOIs) such as those discussed in the previous section, and they extracted their activity while consumers were shown marketing information. These VOI signals have been shown to track aggregate outcomes such as ad campaign responses (Falk, Berkman, and Lieberman 2012) or YouTube video view counts (Tong et al. 2020). Moreover, in some instances, brain-based information from the “neural focus group” was found to improve out-of-sample predictions as compared with models that include only self-report measurements from the same group (Boksem and Smidts 2015; Chan et al. 2019; Dmochowski et al. 2014; Falk and Scholz 2018; Knutson and Genevsky 2018).

The early evidence that neural signals contain information that can supplement self-report ratings in predicting aggregate consumer response raises a question: What are the psychological bases of these “hidden” neural signals? There have been suggestions that consumer response (e.g., a choice between chocolate and broccoli) contains multiple components, including affective response (e.g., pleasantness of sweet taste) and idiosyncratic motivation (e.g., personal diet goals). Moreover, it is theorized that the affective component could be more generalizable, that is, more consistent across consumers. Thus, while both emotion and motivation might predict self-report preference or choice within a consumer, measuring affective response might offer more accurate forecasts of aggregate consumer outcomes in addition to self-report measurements, especially in hedonic consumption (Knutson and Genevsky 2018). In the context of video ads, would neural signals of different psychological processes recorded from a small sample of participants offer additional information on aggregate liking, above and beyond asking the same participants to report their preference?

Overview of the Article and Summary of Findings

This article is structured in two parts. In the first part, we report a pooled analysis of three neuroimaging data sets that examine which psychological processes during video ad exposure led to liking of the video ads. We then identify moment-to-moment predictiveness of these neural signals and compare their relative contributions over the entire span of ad exposure. In the second part, we focus on whether these neural signals could provide additional information on aggregate ad liking beyond self-report measurements and whether early-onset psychological processes could be tapped for predicting aggregate ad liking.

Table 2 summarizes the findings. In brief, we found that neural signals associated with a broad range of psychological processes during ad exposure predicted subsequent self-report liking. Neural signals became predictive within the first ten seconds (except perception), with emotion and memory among the earliest predictors in the first three seconds. Over the span of ad exposure, while the predictiveness of emotion peaked early and fell, that of social cognition had a peak-and-stable pattern, followed by a late peak of predictiveness in perception and executive function. At the aggregate level, early-onset neural signals remained predictive of aggregate out-of-sample liking after accounting for the self-report liking of the same participants. Finally, in a behavioral replication, self-report ratings of social-affective response in the first ten seconds of ads were predictive of population liking, even after controlling for self-report liking.

Table 2. Summary of Findings.

Notes: Early onset refers to the first ten seconds of the ad. Shaded cells indicate not tested.

Part 1: Uncovering Neural Signals of Self-Report Ad Liking

To uncover neural signals—and identify the associated psychological processes—of ad liking, we pool three existing neuroimaging data sets in which participants watched video ads and reported their liking while undergoing fMRI scanning. Deploying an automatic meta-analytic database (Neurosynth), we transform raw brain measurements into a combination of 400 topics derived from the corpus of the extant neuroscience literature. We then identify which topics (and the psychological processes they describe) would be predictive of subsequent self-report liking. Last, we investigate the temporal dynamics of these psychological processes by estimating and comparing the predictive effects of liking-related neural signals across the span of ad exposure.

Overview of Neuroimaging Data Sets

Of the three neuroimaging data sets used in this study, one has not been published before (Data Set 1), whereas the other two have been previously published (Data Set 2 by Chan et al. [2019] and Data Set 3 by Venkatraman et al. [2015]). The key details of the data sets are listed in Table 3. In all three data sets, the video ads used had been previously broadcast on television. In total, there were 113 participants and 85 video ads. Web Appendix A describes details about the task procedure and the neuroimaging data acquisition.

Table 3. Details of Neuroimaging Data Sets.

Notes: N.A. = not available.

Data Set 1

Twenty-five participants (16 female, 9 male; Mage = 28 years) were recruited from the general public by a marketing research company. Potential subjects responded to an online MRI screening questionnaire that ensured they had no history of neurological illness or damage, were not using drugs or psychiatric medication, and had normal or corrected-to-normal vision. Those who were found suitable for scanning were contacted, and written informed consent was obtained in advance. Twenty ads from six recruitment agencies were used in this study, with their lengths ranging from 15 to 60 seconds (mean = 35.3 s, SD = 14.2 s). Participants were invited to the scanning facility, where they watched the 20 ads, presented twice in randomized order, during fMRI scanning. (We only analyzed the neuroimaging data from the first viewing.) Immediately after the second viewing, participants indicated their liking of the ads via button presses with no time limit (a five-star scale with half-star increments). The scanning lasted about 35 minutes in a single run, and each participant was paid €70.

Data Set 2 (Chan et al. 2019)

Sixty participants (33 female, 27 male; Mage = 36 years) were recruited in two waves (wave 1: N = 40; wave 2: N = 20). Each wave had a different scanner and acquisition settings (see details in Web Appendix A). Similar to Data Set 1, the participants were recruited from the general public by a marketing research company and had the same screening procedure. Informed consent was obtained before the experiment. Thirty-five video ads from seven telecommunication brands were used as stimuli, with their lengths varying from 25 to 60 seconds (mean = 40.5 s, SD = 9.4 s). Participants watched the 35 ads, presented in randomized order, during fMRI scanning. Immediately after each video, they indicated their liking of the ads via button presses with no time limit (a five-star scale with half-star increments). They then waited for 6–10 seconds with a blank screen before another video began. The scanning lasted about 35 minutes in a single run. After the scanning, participants completed a follow-up survey in which they rated to what extent each ad was familiar, entertaining, informative, relevant, and convincing. Each participant was paid €60.

Data Set 3 (Venkatraman et al. 2015)

Twenty-eight participants (15 female, 13 male; Mage = 29 years) completed the fMRI scanning. All participants were right-handed, healthy individuals with normal or corrected-to-normal vision. They were also free of any hearing problems and provided written consent before participating. Forty video ads from 15 unique brands of various products and services (including consumer products, financial services, and internet travel services) were used in this study, and they were all 30 seconds long. Participants watched the videos in five separate runs during fMRI scanning. In each run, videos were presented in randomized order. Immediately after each video ad, participants indicated their familiarity, liking, and purchase intent via button presses (on a five-point scale) with a maximum time limit of 5 seconds per question. Each scanning run lasted about 8 minutes (i.e., 40 minutes in total), and each participant was paid $40.

Measurements of Liking

Self-report liking

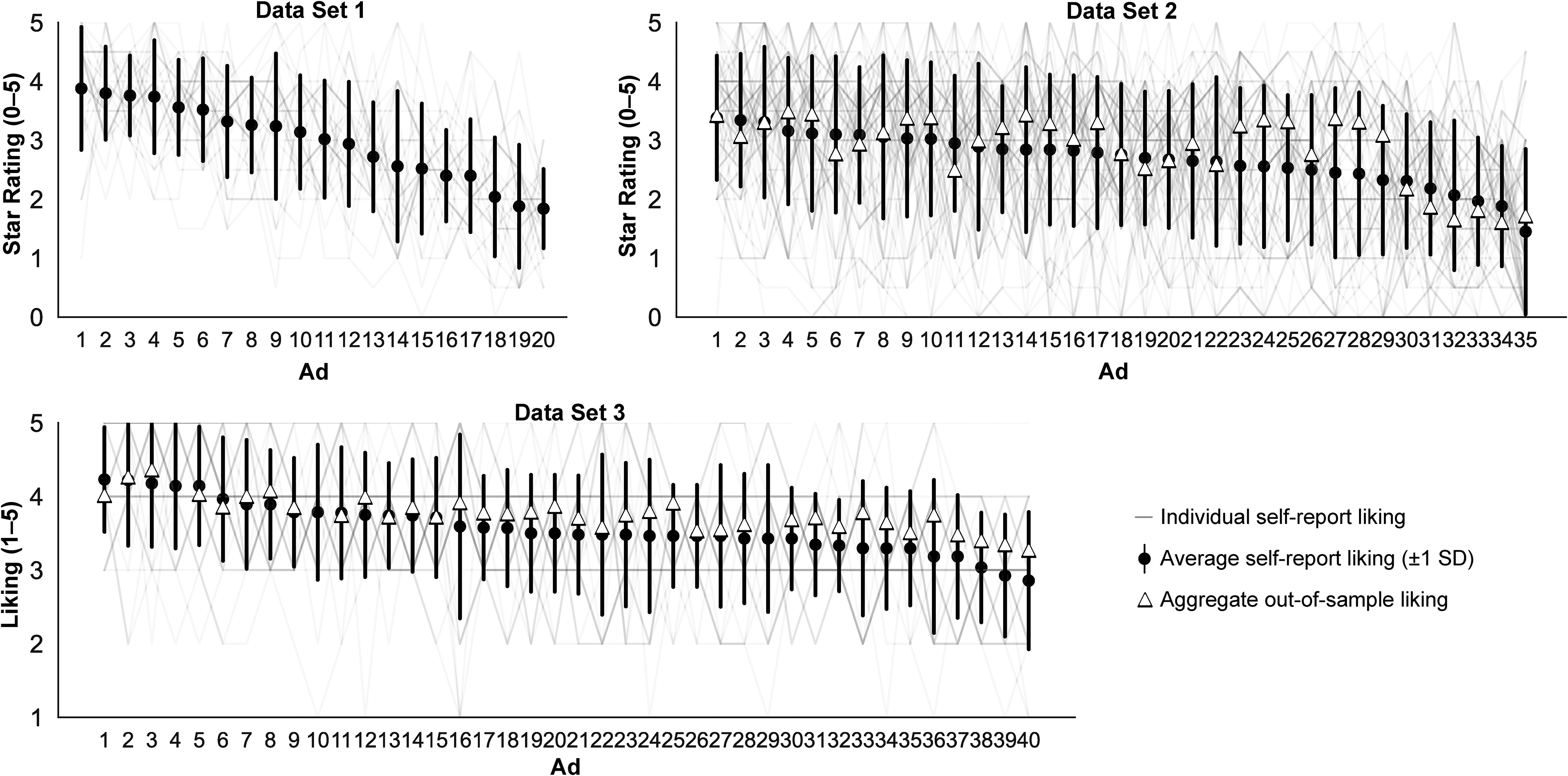

Across the three data sets, 113 participants viewed subsets of 85 video ads (3,720 presentations in total) while undergoing fMRI scanning. There was no missed response of liking rating for Data Sets 1 and 2 since participants did not have a time limit at the star-rating phase. In Data Set 3, on 21 (1.88%) occasions, responses were absent during the five-second rating window, and thus the corresponding brain recordings were discarded, leaving a total of 3,699 trials for analysis (Figure 2). Interrater agreement of ad liking within each data set, measured by intraclass correlation coefficients (two-way mixed effects, consistency, single rater), was low (Data Set 1: .32; Data Set 2: .13; Data Set 3: .16), showing idiosyncrasies in preference for the video ads.
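The agreement statistic named above (two-way mixed effects, consistency, single rater) is commonly written as ICC(3,1) = (MS_ads − MS_error) / (MS_ads + (k − 1) MS_error), where k is the number of raters. The following is a minimal sketch of that textbook formula, not the authors' code; the function name and the assumption of a complete ads-by-raters matrix are illustrative.

```python
def icc_consistency_single(ratings):
    """ICC(3,1): two-way mixed effects, consistency, single rater.

    ratings[i][j] is the rating of ad i by rater j; the matrix is
    assumed complete (every rater rated every ad).
    """
    n = len(ratings)        # number of ads (targets)
    k = len(ratings[0])     # number of raters
    grand = sum(sum(row) for row in ratings) / (n * k)
    ad_means = [sum(row) / k for row in ratings]
    rater_means = [sum(ratings[i][j] for i in range(n)) / n for j in range(k)]

    # Sums of squares of the two-way ANOVA without replication.
    ss_ads = k * sum((m - grand) ** 2 for m in ad_means)
    ss_raters = n * sum((m - grand) ** 2 for m in rater_means)
    ss_total = sum((x - grand) ** 2 for row in ratings for x in row)
    ss_error = ss_total - ss_ads - ss_raters

    ms_ads = ss_ads / (n - 1)
    ms_error = ss_error / ((n - 1) * (k - 1))
    return (ms_ads - ms_error) / (ms_ads + (k - 1) * ms_error)
```

Because the consistency form removes rater main effects, two raters who differ only by a constant offset still yield a coefficient of 1; low values therefore reflect genuinely different rank orderings of the ads across participants.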

Figure 2. Self-Report and Aggregate Out-of-Sample Liking of Video Ads.

Aggregate out-of-sample liking

Aggregate out-of-sample liking was available for two data sets (Data Sets 2 and 3). For Data Set 2, aggregate liking was based on another group of 117 individuals not involved in fMRI scanning, who watched and rated those 35 ads using the same five-star scale (0–5 with half-star increments). For Data Set 3, another group of 186 individuals not involved in fMRI scanning watched and rated 37 of the 40 ads, using the same five-point scale.

Method

The analysis of the neuroimaging data sets consisted of four steps. First, the fMRI data underwent a standard preprocessing procedure, and the whole-brain voxel time series were then projected onto the Neurosynth topic space by calculating the dot products with the 400 topic association maps. Second, we averaged the topic expression scores over ad exposure and ran a linear mixed model (LMM) for each topic with self-report ad liking as the dependent variable, identifying significantly predictive topics after correcting for multiple comparisons. Third, we reran the LMMs for each psychological process by averaging the expression scores of the relevant topics. Finally, we conducted time-course analyses, extracting signals both with a three-second rolling window along ad exposure (for moment-to-moment predictiveness) and in four quarters (for relative contributions across the span of the ad).
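The two time-course summaries can be illustrated with a short sketch. It assumes a 1 Hz expression-score series for one ad, so that three consecutive samples span three seconds; the function names and the integer rule for splitting an ad into quarters are illustrative assumptions, not the authors' implementation.

```python
def rolling_window_means(series, width=3):
    """Mean of every run of `width` consecutive samples.

    With a 1 Hz series, width=3 corresponds to a three-second window.
    """
    return [
        sum(series[i:i + width]) / width
        for i in range(len(series) - width + 1)
    ]


def quarter_means(series):
    """Mean of each of four consecutive, near-equal segments.

    Segment boundaries use integer division, so the series needs at
    least four samples; unequal remainders are spread across quarters.
    """
    n = len(series)
    bounds = [n * q // 4 for q in range(5)]
    return [
        sum(series[bounds[q]:bounds[q + 1]]) / (bounds[q + 1] - bounds[q])
        for q in range(4)
    ]
```

Each windowed or quarter-level mean would then serve as the predictor in a separate model, which is what allows predictiveness to be compared across moments of the ad.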

Neuroimaging data preprocessing

We preprocessed the neuroimaging data with the default settings of the fMRIPrep software version 1.4.0 (Esteban et al. 2019). To correct for head motion, we realigned the functional images to the mean image. Functional images were slice-time-corrected, coregistered to the anatomical image, and spatially normalized to the Montreal Neurological Institute template. The whole-brain time series were then detrended and scaled to percentage signal change, with eight confounding variables (six motion parameters, the average global signal, and the white matter signal) regressed out. After anatomical-to-Neurosynth topic space projection (see details below and Figure 3, Panel A), we extracted the time series for each ad exposure within each participant (Figure 3, Panel B). To account for different video onset times among participants, and varying repetition times (i.e., the time interval between each brain image) for each of the three data sets, we time-corrected and upsampled the whole-brain time series for each ad to 1 Hz by linear interpolation. In addition, we added five seconds to all video onset times to account for the delay due to hemodynamic response.
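The resampling step can be sketched as follows for a single signal. This is an illustration under stated assumptions rather than the authors' pipeline: the function and parameter names are hypothetical, the first image is taken to be acquired at time zero, and requested times outside the recorded range are clamped to the nearest image.

```python
from bisect import bisect_right


def resample_to_1hz(values, tr, video_onset, video_length, hrf_delay=5.0):
    """Linearly interpolate a scan-indexed signal onto a 1 Hz grid for one ad.

    values       -- one number per brain image
    tr           -- repetition time in seconds
    video_onset  -- ad onset in seconds, relative to the first image
    video_length -- ad duration in whole seconds
    hrf_delay    -- seconds added to the onset for the hemodynamic lag
    """
    scan_times = [i * tr for i in range(len(values))]
    start = video_onset + hrf_delay  # shifted onset
    resampled = []
    for second in range(int(video_length)):
        t = start + second
        if t <= scan_times[0]:
            resampled.append(float(values[0]))    # clamp (assumption)
        elif t >= scan_times[-1]:
            resampled.append(float(values[-1]))   # clamp (assumption)
        else:
            i = bisect_right(scan_times, t) - 1   # last image at or before t
            frac = (t - scan_times[i]) / (scan_times[i + 1] - scan_times[i])
            resampled.append(values[i] + frac * (values[i + 1] - values[i]))
    return resampled
```

Putting every ad on a common 1 Hz grid is what makes time points comparable across data sets acquired with different repetition times.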

Anatomical-to-Neurosynth Analysis.

Mapping brain anatomical space onto Neurosynth topic space

Figure 3, Panel A, illustrates the anatomical-to-Neurosynth topic space projection after preprocessing. The Neurosynth database (version 0.7, released in July 2018) contains a set of 400 topics extracted with LDA from the abstracts of 14,371 neuroscientific publications (see Poldrack et al. [2012] and Yarkoni et al. [2011] for details of data extraction and text mining). LDA extracts a single topic that assigns a large weight to each of the co-occurring terms in article abstracts, resulting in a cluster of semantically related words. Each topic is represented by a brain map, which is a vector of z-scores representing the nonzero association between the topic loading and voxel activation reported in the publications. (The z-scores are converted from the p-values obtained based on a two-way chi-square test on a 2 × 2 contingency table of topic presence crossed with voxel activation.) Essentially, the association map indicates the brain regions reported more consistently in publications that belong to the topic than in those that do not.
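To make the conversion from contingency table to z-score concrete, the following sketch computes a Pearson chi-square statistic for one voxel and one topic and turns the resulting two-sided p-value into a signed z-score. The function name, the cell labels, and the sign convention are illustrative assumptions; the Neurosynth software may differ in its details.

```python
import math
from statistics import NormalDist


def association_z(a, b, c, d):
    """Signed z-score and p-value for a 2 x 2 table (illustrative only).

    a: topic studies that report activation at the voxel
    b: topic studies that do not
    c: non-topic studies that report activation at the voxel
    d: non-topic studies that do not
    """
    n = a + b + c + d
    margins = (a + b) * (c + d) * (a + c) * (b + d)
    if margins == 0:
        raise ValueError("every row and column needs at least one study")
    # Pearson chi-square statistic with 1 degree of freedom
    chi2 = n * (a * d - b * c) ** 2 / margins
    # With 1 df, chi2 is a squared standard normal, so the two-sided
    # p-value is erfc(sqrt(chi2 / 2)).
    p = math.erfc(math.sqrt(chi2 / 2))
    # Back-convert p to |z|; very small p may underflow to 0 and fail here
    z = -NormalDist().inv_cdf(p / 2)
    # Assumed convention: positive when activation is more frequent
    # among studies that load on the topic
    sign = 1 if a * d >= b * c else -1
    return sign * z, p
```

With one degree of freedom the absolute z-score equals the square root of the chi-square statistic, which offers a quick sanity check on any implementation.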

For the anatomical-to-Neurosynth projection, we modeled our approach after the procedure established in the extant literature (Cosme and Lopez 2020; Doré, Weber, and Ochsner 2017; Van Ast et al. 2016). The Neurosynth topic maps were first thresholded at p < .01 with false discovery rate (FDR) correction (Benjamini and Hochberg 1995). The topic expression score was then calculated as a weighted average of brain activity, with weights defined by the thresholded Neurosynth topic map. Alternatively speaking, each participant's whole-brain time series (consisting of activity of about 50,000 voxels at 1 Hz) of an ad was converted into a time series of 400 expression scores by obtaining its dot products with the whole set of Neurosynth topic maps.
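The projection can be sketched as follows, assuming each topic map has already been thresholded so that voxels failing the FDR criterion carry a weight of zero. The helper names are hypothetical, and dividing by the sum of absolute weights is one possible reading of "weighted average" rather than a detail confirmed by the text.

```python
def topic_expression(volume, topic_map, normalize=True):
    """Expression score of one topic for one brain volume.

    volume    : voxel activity values at a single time point
    topic_map : thresholded z-scores, one per voxel (0 where not significant)
    """
    if len(volume) != len(topic_map):
        raise ValueError("volume and topic map must cover the same voxels")
    dot = sum(v * w for v, w in zip(volume, topic_map))
    if not normalize:
        return dot
    # Assumed normalization: divide by the total absolute weight
    total = sum(abs(w) for w in topic_map)
    return dot / total if total else 0.0


def project_time_series(volumes, topic_maps):
    """Convert a voxel time series into one expression series per topic."""
    return {
        topic: [topic_expression(volume, weights) for volume in volumes]
        for topic, weights in topic_maps.items()
    }
```

Applied to roughly 50,000 voxels and 400 maps, this reduces each one-second volume to a vector of 400 scores.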

Identifying Neurosynth topics predictive of ad liking

Akin to traditional voxel-wise general linear model analysis, we estimated 400 LMMs, topic by topic, with self-report liking after ad exposure as the dependent variable (N = 3,699 trials from 113 participants, Figure 3, Panel B). In each LMM, the topic expression score was entered as the independent variable, with participants as random slopes and intercepts. (Both dependent and independent variables were centered at the mean and scaled by the standard deviation within the participants, and were winsorized at ±3 standard deviations to reduce the effect of outliers [accounting for .4% of all instances].) Out of the 400 topics, we identified those whose expression score significantly predicted self-report ad liking, using a threshold of p < .01 after FDR correction for multiple comparisons. We then categorized these statistically significant topics into one of the seven psychological processes (perception, language, attention, executive function, memory, social cognition, and emotion) by examining the topic words. The remaining topics contained predominantly anatomical (e.g., “gyrus,” “sulcus”) or pathological words (e.g., “psychopathy,” “disease”).
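The multiple-comparison step relies on the Benjamini-Hochberg step-up procedure, which can be sketched as below. The function operates on a plain list of p-values, such as the 400 topic-level p-values; the name `benjamini_hochberg` is illustrative, and the LMM estimation that produces the p-values is not shown.

```python
def benjamini_hochberg(p_values, q=0.01):
    """Flag the tests that survive FDR control at level q.

    Returns a list of booleans aligned with p_values.
    """
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    # Step-up rule: find the largest rank k with p_(k) <= (k / m) * q
    cutoff_rank = 0
    for rank, idx in enumerate(order, start=1):
        if p_values[idx] <= rank / m * q:
            cutoff_rank = rank
    # Every test ranked at or below the cutoff is declared significant
    significant = [False] * m
    for rank, idx in enumerate(order, start=1):
        if rank <= cutoff_rank:
            significant[idx] = True
    return significant
```

Note that a p-value can survive even when it exceeds its own rank-specific threshold, as long as a larger p-value further down the ordering meets its threshold.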

Time-course analyses of neural signals

After identifying Neurosynth topics (from the entire set of 400 topics) predictive of ad liking, we combined the topics under each psychological process and calculated the average score (akin to averaging voxel signals in an anatomical cluster). We then investigated at which time point these neural signals associated with different psychological processes became predictive of liking during ad exposure. Specifically, the average expression scores were extracted with a rolling preceding 3-second window from the 1st to the 15th second from the start of the ad (in 2-second increments) and from the 15th-to-last second until the end of the ad. (Recall that across the three data sets, the ads were of variable lengths, between 15 and 60 seconds.)
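A minimal sketch of the onset-locked window extraction follows. It assumes a 1 Hz series in which element i covers second i + 1 of the ad, and it shortens the window when fewer than three seconds have elapsed; how the earliest time points were actually handled is not stated, so that choice is an assumption.

```python
def rolling_window_means(series, window=3, step=2, first=1, last=15):
    """Mean signal in the `window` seconds ending at each sampled second.

    series[i] holds the 1 Hz signal for second i + 1 of the ad.
    Returns a dict mapping each sampled second to its window mean.
    """
    means = {}
    for t in range(first, min(last, len(series)) + 1, step):
        lo = max(0, t - window)  # assumed: truncate the window near onset
        chunk = series[lo:t]
        means[t] = sum(chunk) / len(chunk)
    return means
```

The end-locked windows can be obtained in the same way by reversing the series before extraction and relabeling the time points.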

At each time point, as described in the previous section, we estimated an LMM using self-report liking as the dependent variable and each expression score as the independent variable, with participants entered as random slopes and intercepts. By tracking the coefficients of the LMMs across the timeline, we could then observe when neural signals became predictive of liking. (Within each topic, multiple comparisons across time points were corrected with the FDR procedure.) Finally, to compare the relative predictiveness across the span of ad exposure, we also divided each ad into four quarters and extracted the average expression scores within each quarter. For each psychological process, we estimated an LMM with the four expression scores (one from each of the quarters) entered together as the independent variables. We also estimated models with the neural signals associated with all processes entered together: four models, one for each quarter, as well as models using the average and peak signals of the entire ad.
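The quarter-based extraction can be sketched as below for a single 1 Hz expression series. The rule for ad lengths that are not divisible by four (integer division of the boundaries) is an assumption made for illustration.

```python
def quarter_means(series):
    """Average a 1 Hz series within each of four consecutive quarters."""
    n = len(series)
    if n < 4:
        raise ValueError("need at least four samples to form four quarters")
    # Assumed boundary rule: floor(n * k / 4) for k = 0..4
    bounds = [n * k // 4 for k in range(5)]
    return [
        sum(series[bounds[k]:bounds[k + 1]]) / (bounds[k + 1] - bounds[k])
        for k in range(4)
    ]
```

Expressing time as a fraction of ad length places ads of 15 to 60 seconds on a common scale, which is what allows the four quarter scores to enter a single model.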

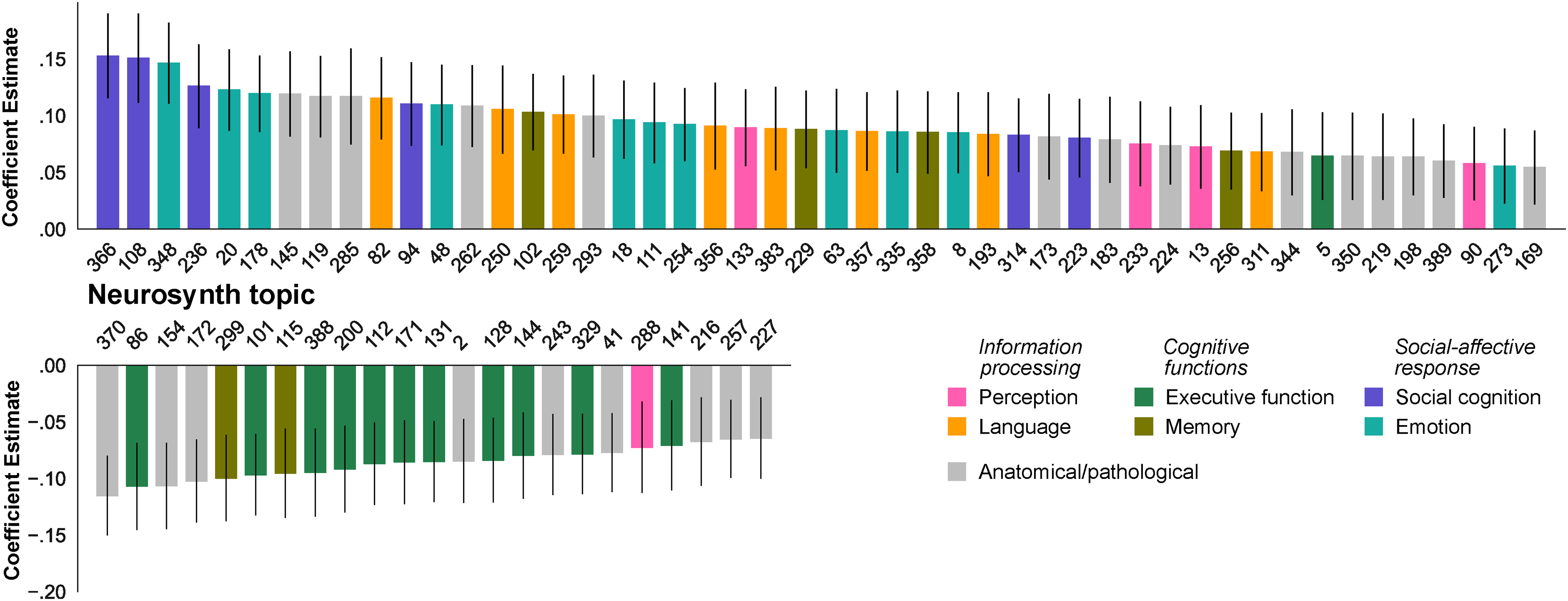

Results

Of the 400 Neurosynth topics, there were 48 topics whose expression scores averaged over ad exposure positively correlated with subsequent ad liking, while 23 were negatively correlated (ps < .01, FDR corrected). Figure 4 shows coefficient estimates of significant topics, and Table 4 details the first five constituent words from the top three topics of each psychological process. (The full list of significant topics, and their constituent words, can be found in Web Appendix B1.) Examining the word clusters of the significant topics, the topics with the largest positive coefficients (i.e., higher expression score during ad exposure means greater ad liking) seem to be those of social cognition and emotion, followed by language, memory, and perception. For example, Topic 366, which has the largest coefficient, contains words such as “mentalizing,” “belief,” and “inference,” while Topic 348 contains words such as “emotion,” “expression,” and “affective.”

Neurosynth Topics Predictive of Video Ad Liking.

Most Predictive Topics from Each Psychological Process.

Notes: Only the five top terms are shown for each topic.

In contrast, most of the negative topics seemed to belong to executive functions. The top topic (86) seems to concern numeracy (“number,” “numerical,” “numbers,” “magnitude,” “symbolic”), while others involve inhibitory control (e.g., 388: “conflict,” “response,” “monitoring,” “pm,” “control”). The sole positive topic related to executive functions is about judgment and evaluation (5: “judgments,” “judgment,” “judged,” “make,” “metacognitive”). It should also be noted that two negative topics pertain to working memory (299: “memory,” “working,” “verbal,” “performance,” “maintenance”; 115: “wm,” “memory,” “working,” “maintenance,” “performance”), and another negative topic pertains to perception, more specifically mental rotation (288: “mental,” “rotation,” “visuospatial,” “spatial,” “transformation”). No topic related to attention was found to be significant in the exploratory analysis.

In supplementary analyses, we observed similar findings using the Neurosynth 50-topic model instead of the 400-topic one (Web Appendix B2). We also conducted a series of traditional anatomically based analyses (Web Appendix C1 and C2), finding that, consistent with the Neurosynth decoding findings, brain regions associated with memory, emotion, and social cognition tracked self-report ad liking. To verify whether attention was involved in ad liking, we examined a topic (297) pertaining to attention and found that its expression score was not significantly associated with liking (Web Appendix D1). In addition, we tested term decoding instead of topic decoding and found 146 Neurosynth terms (out of 3,228) predictive of liking, suggesting similar psychological processes (Web Appendix D2). When we examined three task-relevant terms (“preference,” “ratings,” and “evaluation”), only “ratings” seemed to be predictive of liking (Web Appendix D3). Finally, these expression scores were found to explain additional variance in self-report liking beyond VOI activity alone (Web Appendix E).

Moment-to-moment predictiveness of neural signals

After identifying Neurosynth topics predictive of ad liking in each psychological process, we calculated the average expression scores of these predictive topics as the neural signals associated with that process (excluding topics with opposite signs as previously detailed, i.e., Topics 299 and 115 for memory, 288 for perception, and 5 for executive function) and observed their moment-to-moment predictiveness of liking (Figure 5, Panel A). Neural signals associated with emotion and memory seemed to become predictive early on, as soon as after about three seconds, while those involving social cognition, language, and executive function exhibited predictiveness a few seconds later, at around five to seven seconds. Perception signals, however, did not become predictive until later on, after ten seconds. (We repeated the analysis with cumulative instead of moment-to-moment signals, with similar results on the emergence of liking predictiveness; see Web Appendix F.)

Time-Course Analyses of Neural Signals Predictive of Self-Report Liking.

Relative contributions to liking over the span of ad exposure

For each psychological process, we inspected the relative contributions to liking across the span of ad exposure by examining the coefficient estimates of the associated neural signals extracted from the four quarters of ad exposure (Figure 5, top of Panel B; see Web Appendix G1 for coefficient estimates of all topics). Overall, we observed a late peak in predictiveness for perception, memory, and executive function, while the predictiveness of language and social cognition had a peak-and-stable pattern and that of emotion took on a peak-and-fall shape. Modeling all processes together per quarter (Figure 5, bottom of Panel B; see Web Appendix G2 for coefficient estimates of the models) further suggests that while emotion was most predictive of liking early on, its predictiveness waned toward the end of the ad just as social cognition and executive function emerged as more important predictors.

Discussion

Neural signals extracted by automatic meta-analytic decoding (Neurosynth) and associated with a broad range of psychological processes—including information processing, cognitive functions, and social-affective response—during video ad exposure predicted subsequent self-report ad liking. While emotion and memory were found to be the earliest indicators of liking, comparison over the span of ad exposure reveals some shifts. Specifically, the predictiveness of emotion seemed to wane over time and was taken over by social cognition and executive function toward the end of the ad. Alongside this shift, there was an apparent late peak of perception in terms of predictiveness.

Part 2: Predicting Aggregate Ad Liking with Neural Signals

Having identified neural signals (and the associated psychological processes) related to ad liking within a consumer, in this part, we focus our analysis on the ads themselves, that is, whether neural signals inform how well individual ads perform at the aggregate level. We begin by examining whether, at the aggregate level, neural signals extracted from the neuroimaging sample correlated with aggregate out-of-sample liking. We then test whether these neural signals offer information beyond that of traditional VOI-based analysis and self-report liking. We also evaluate whether early-onset psychological processes, found to be predictive of individual liking within the first ten seconds, provide clues on aggregate out-of-sample liking. Finally, we conduct two behavioral studies as an effort to replicate and generalize the findings.

Method

We analyzed two of the three data sets (Data Sets 2 and 3), which had aggregate out-of-sample liking data available for 72 ads (all 35 ads in Data Set 2, and 37 out of 40 ads in Data Set 3; see Figure 2), with individual ads as the unit of analysis. Similar to the previous section, we used the average expression scores of topics from each psychological process as our brain-based measures. Both average expression scores and self-report liking ratings were centered at the mean, scaled by the standard deviation, and winsorized at ±3 standard deviations within participants before calculating the averages per ad. Aggregate out-of-sample liking was centered at the mean and scaled by the standard deviation within each data set.
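The aggregation from trial-level scores to ad-level measures can be sketched as follows. The nested-dictionary layout and the function names are hypothetical, and the use of the sample standard deviation is an assumption, since the text does not specify which estimator was used.

```python
from statistics import mean, stdev


def standardize_and_winsorize(values, limit=3.0):
    """Z-score one participant's values and clip them at +/- limit SDs."""
    m = mean(values)
    s = stdev(values)  # assumed: sample standard deviation
    return [max(-limit, min(limit, (v - m) / s)) for v in values]


def per_ad_average(scores):
    """Average within-participant z-scores across participants for each ad.

    scores: dict mapping participant -> dict mapping ad -> raw score
    """
    by_ad = {}
    for participant_scores in scores.values():
        ads = list(participant_scores)
        z = standardize_and_winsorize([participant_scores[a] for a in ads])
        for ad, value in zip(ads, z):
            by_ad.setdefault(ad, []).append(value)
    return {ad: mean(values) for ad, values in by_ad.items()}
```

Standardizing within participants before averaging removes individual differences in scale use or signal amplitude, so that each participant contributes equally to the ad-level mean.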

We first examined the correlations between averaged neural signals (of both the entire ad and the first ten seconds) and aggregate liking. To verify whether neural signals based on Neurosynth decoding offer additional information compared with traditional VOI-based analysis and self-report measurements, we estimated linear regression models predicting aggregate liking and compared them with three baseline models: (1) VOI activity (amygdala, dlPFC, NAcc, and vmPFC) as the baseline VOI-based model used in the previous literature (Venkatraman et al. 2015), (2) self-report liking, and (3) self-report liking and VOI activity.

Results

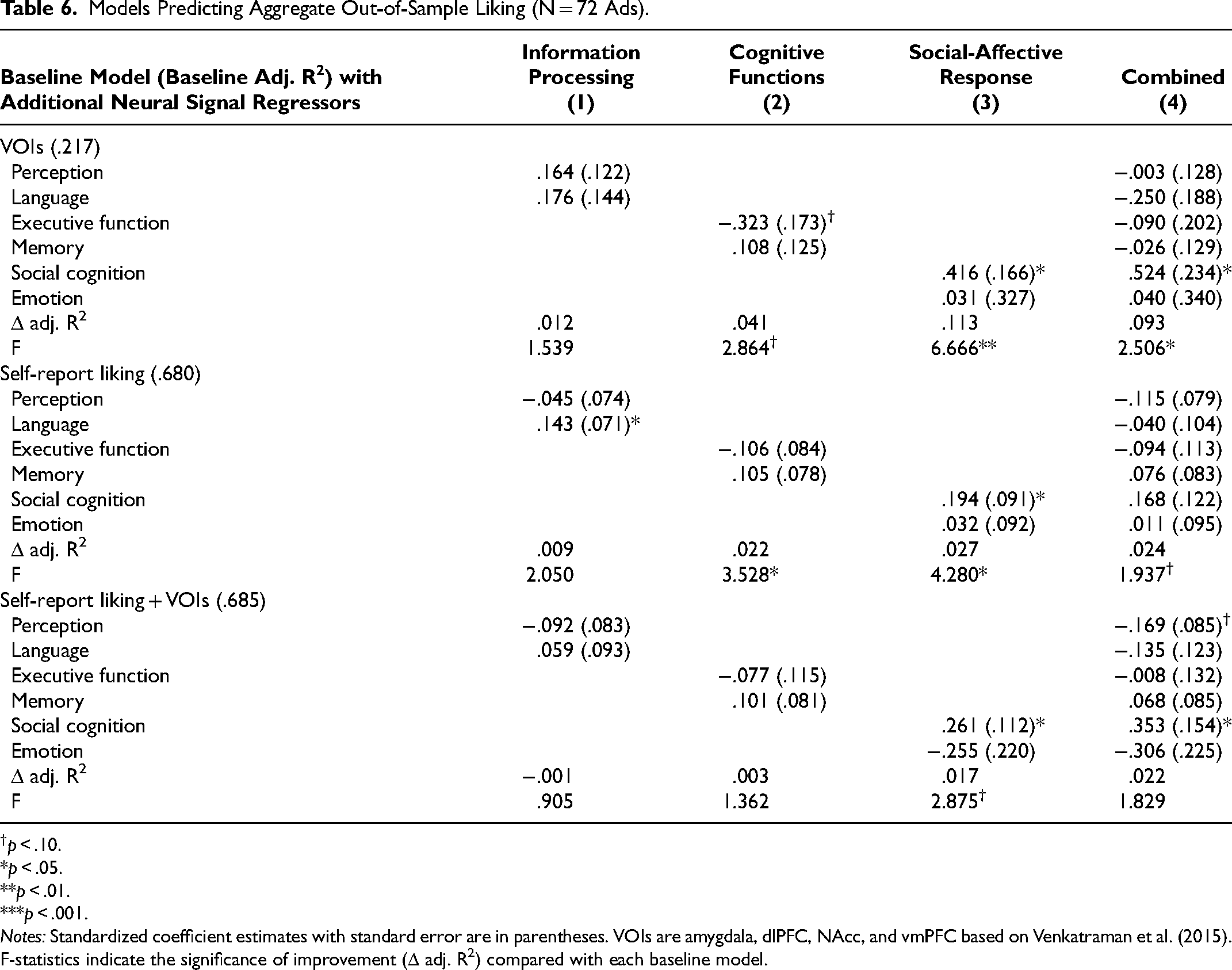

The neural signals associated with the six psychological processes during ad exposure in the neuroimaging sample were correlated with aggregate out-of-sample liking (Table 5, top row), as was the neuroimaging sample's averaged self-report liking (all ps < .01). However, for early-onset neural signals, only those associated with executive function (p = .014), memory (marginally; p = .056), social cognition (p = .003), and emotion (p < .001) predicted aggregate liking (Table 5, bottom row).

Correlations Between Averaged Neural Signals and Aggregate Out-of-Sample Liking (N = 72 Ads).

p < .10. *p < .05. **p < .01. ***p < .001.

In a series of linear regressions comparing different baseline models (Table 6; coefficient estimates for the baseline models can be found in Web Appendix H), we found that overall, neural signals based on Neurosynth decoding explained additional variance in aggregate liking compared with the baseline model of VOI analysis (F = 2.506, p = .031) and marginally improved the model based only on self-report liking (F = 1.937, p = .088). Specifically, social-affective response (particularly social cognition) signals significantly improved both baseline models (VOIs: F = 6.666, p = .002; self-report liking: F = 4.280, p = .018) and marginally improved the baseline model combining VOIs and self-report liking (F = 2.875, p = .064). The same analysis was conducted with early-onset (first ten seconds) neural signals, showing that those associated with social-affective response improved prediction (Web Appendix I).
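Model improvement of this kind is commonly assessed with a nested-model F-test, which can be sketched as below. The function uses the textbook formula based on unadjusted R-squared values; whether the reported F-statistics were computed in exactly this way is an assumption, and the function name is hypothetical.

```python
def nested_f(r2_base, r2_full, n, k_base, k_full):
    """F-statistic for predictors added to a nested linear regression.

    r2_base, r2_full : unadjusted R-squared of the baseline and full models
    n                : number of observations (here, ads)
    k_base, k_full   : number of predictors, excluding the intercept
    Returns (F, numerator df, denominator df).
    """
    df_num = k_full - k_base
    df_den = n - k_full - 1
    if df_num <= 0 or df_den <= 0:
        raise ValueError("full model must add predictors and leave residual df")
    f = ((r2_full - r2_base) / df_num) / ((1 - r2_full) / df_den)
    return f, df_num, df_den
```

For instance, adding two predictors to a four-predictor baseline with 72 ads gives 2 and 65 degrees of freedom; the statistic is then compared against the corresponding F distribution to obtain a p-value.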

Models Predicting Aggregate Out-of-Sample Liking (N = 72 Ads).

p < .10. *p < .05. **p < .01. ***p < .001.

Notes: Standardized coefficient estimates are shown, with standard errors in parentheses. VOIs are amygdala, dlPFC, NAcc, and vmPFC based on Venkatraman et al. (2015). F-statistics indicate the significance of improvement (Δ adj. R2) compared with each baseline model.

Behavioral Replication of Early-Onset Psychological Processes

Findings from the neuroimaging data analysis suggest that, at the aggregate level, early-onset neural signals (within the first ten seconds) collected in a small sample could be used to predict aggregate out-of-sample liking. To replicate and generalize the finding, we conducted two online behavioral studies (Studies B1 and B2) using retrospective self-report ratings of psychological processes, in lieu of brain measurements, immediately after participants viewed the first ten seconds of ad excerpts. We limited our investigation to information processing (perception and language) and social-affective response (social cognition and emotion). This is because we found the topics under executive function too broad for the current behavioral replication (spanning from cognitive load to inhibitory control to numeracy) and encountered conflicting signs for topics under memory (positive in general but negative coefficients found for topics associated with working memory). In both studies, participants viewed the first ten seconds of TV commercials that had been broadcast during the U.S. Super Bowl (in the 2019 and 2023 seasons, respectively). Participants were recruited in the United Kingdom to minimize the potential confounding effect of ad and brand familiarity.

Behavioral Studies Method

Stimuli

Study B1 used 20 ads broadcast in the 2019 season of the U.S. Super Bowl, all of which were 30 seconds long, while Study B2 used 50 ads from the 2023 season of variable lengths (min = 15 s, max = 90 s, M = 44.4 s, SD = 18.6 s). Ten-second segments from the beginning were excerpted from the 70 ads. The population liking of these ads was measured by the USA Today Ad Meter score (available at https://admeter.usatoday.com), a 0-to-10 rating based on a national panel (min = 3.70, max = 6.56, M = 5.23, SD = .63; see Web Appendix J for the full list of ads).

Participants and task

Participants from the U.K. were recruited using Prolific (Study B1: N = 102; Mage = 40.3 years, SD = 13.1; 76.5% female; Study B2: N = 200; Mage = 39.6 years, SD = 12.8; 50.5% female). Via a survey hosted on Qualtrics, they watched the first ten seconds of ad excerpts in random order. Immediately after each excerpt, the participants reported their liking (“How much did you like this commercial?”), their interest in viewing the rest of the ad (“How interested are you in watching the rest of this commercial?”), and their psychological processes during the viewing on a seven-point Likert scale. In Study B1, participants rated two out of four psychological processes: (1) perception (“How good did you find the visuals and sounds in the commercial?”), (2) language (“How good did you find the dialogue, narration and/or text in the commercial?”), (3) social cognition (“To what extent did the commercial make you think about the characters’ feelings and intentions?”), and (4) emotion (“To what extent did the commercial evoke an emotional reaction in you?”). Each participant in Study B1 answered only two questions out of four (same question subset across all ads) to lessen their burden, as early testing found that it was difficult to attend to the four aspects of each ad at the same time.

Based on the results from Study B1, all participants in Study B2 rated only social cognition and emotion, alongside liking and interest to view the rest (question order was randomized per participant in both studies). Participants in Study B1 watched all 20 ads in the 2019 season, while those in Study B2 watched a random subset of 10 ads (out of 50) in the 2023 season. Finally, Study B1 collected ad and brand familiarity ratings (“How familiar are you with the commercial/the brand of the commercial?”) using a three-point Likert scale (“not at all,” “somewhat,” and “very much”) at the end. Participants were paid for their effort (Study B1: £1.88; Study B2: £1.20).

Behavioral studies results

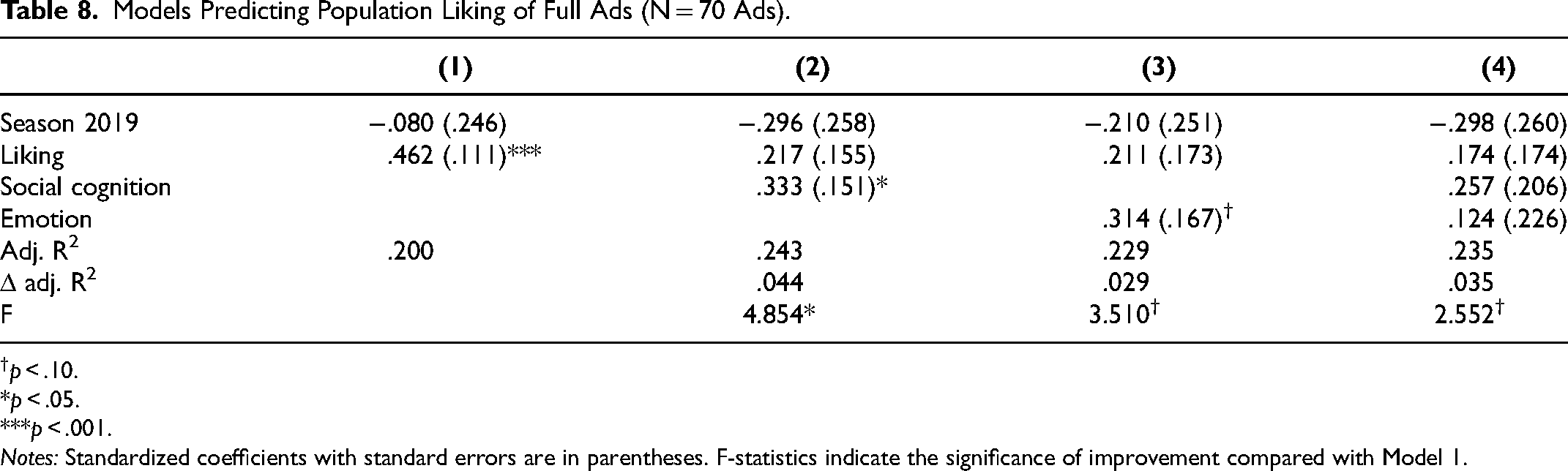

The results from Study B1 showed that the ten-second excerpts of commercials broadcast during the U.S. Super Bowl were generally unfamiliar to the U.K. participants (90.8% rated “not at all,” 6.3% “somewhat,” and 2.9% “very much”), who also found many of the brands unfamiliar (67.7% “not at all,” 19.2% “somewhat,” and 13.1% “very much”). Self-report ratings were averaged over participants for each ad excerpt and then compared against the population liking of the full ads (Ad Meter score). Among the 20 ads in Study B1, ratings of perception and language did not correlate with population liking (ps = .715 and .624, respectively), while the correlations of social cognition and interest to view the rest had marginal significance (ps = .079 and .074, respectively). (A preregistered analysis modeling population liking at the trial level showed similar results; see Web Appendix K. Excluding trials where participants reported being familiar with the ad or the brand did not change results; see Web Appendix L.) Analysis with the 50 ads in Study B2 showed that both social cognition and emotion ratings of the ten-second ad excerpts correlated with population liking of the full ads, alongside liking and interest to view the rest (all ps < .01; Table 7).
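The ad-level comparison amounts to a Pearson correlation between two vectors with one entry per ad. The sketch below computes the coefficient and the associated t-statistic with n - 2 degrees of freedom; the function names are hypothetical, and the conversion of t to a p-value is left out.

```python
import math


def pearson_r(x, y):
    """Pearson correlation between two equal-length sequences."""
    if len(x) != len(y) or len(x) < 3:
        raise ValueError("need two sequences of equal length (at least 3)")
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def correlation_t(r, n):
    """t-statistic for testing r against zero, with n - 2 df (requires |r| < 1)."""
    return r * math.sqrt((n - 2) / (1 - r * r))
```

Here `x` would hold the excerpt ratings averaged over participants and `y` the Ad Meter scores of the corresponding full ads, so n is 20 in Study B1 and 50 in Study B2.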

Correlations Between Averaged Self-Report Ratings of Ad Excerpts and Population Liking.

p < .10. *p < .05. **p < .01. ***p < .001.

We then examined whether the ratings on the psychological processes explained additional variance in population liking after controlling for self-report liking (Table 8; we did not include interest to view the rest because it correlated strongly with self-report liking, r = .936). We pooled data from Studies B1 and B2 and then estimated regression models with population liking as the dependent variable (a dummy term of Super Bowl season was also entered). After accounting for liking, social cognition explained additional variance in population liking (F = 4.854, p = .031), while a similar marginal trend was observed for emotion (F = 3.510, p = .065) and the combined model (F = 2.552, p = .086), representing a 14%–22% increase in adjusted R2. These behavioral replication efforts echo the neuroimaging findings on the role of early-onset social-affective responses (particularly social cognition) even after accounting for self-report liking.

Models Predicting Population Liking of Full Ads (N = 70 Ads).

p < .10. *p < .05. ***p < .001.

Notes: Standardized coefficients are shown, with standard errors in parentheses. F-statistics indicate the significance of improvement compared with Model 1.

Discussion

At the aggregate level, neural signals associated with various psychological processes tracked out-of-sample liking, with social-affective responses (particularly social cognition) improving the prediction of out-of-sample ad liking compared with models based on traditional anatomically based neuroimaging analysis and self-report liking. In both neuroimaging data and behavioral replication, early-onset (first ten seconds) social-affective response improved the prediction of out-of-sample liking compared with the model based on self-report liking of the participants alone. Given that self-report liking based on full or partial viewing tracked out-of-sample liking strongly already (r = .827 for full viewing [neuroimaging data] and .471 for partial viewing [behavioral replication]), the addition of neural signals and self-report ratings of psychological processes led to only modest model improvements. Nonetheless, these findings suggest the value of measuring individual psychological processes during ad exposure in addition to postexposure summary report of liking.

General Discussion

Consumers develop and update their preferences after they encounter products, services, and marketing information. The difficulty of studying the psychological processes leading to stated preference had been identified early on in the field of marketing research (Blankenship 1942; McGuire 1976). Researchers have used behavioral experiments (Kardes 1996) and physiological measurements such as eye fixation, facial expression, and skin conductance to provide insights on the real-time psychological processing of marketing information (Baldo et al. 2022; Pieters, Warlop, and Wedel 2002; Teixeira, Wedel, and Pieters 2012; Venkatraman et al. 2015). Recently, neuroimaging methods have found increasing prominence as an additional tool to shed light on the psychological processes underlying liking (Camerer and Yoon 2015; Plassmann, Ramsøy, and Milosavljevic 2012; Smidts et al. 2014).

In this article, we employed the latest analysis techniques (Neurosynth decoding) on pooled neuroimaging data to investigate how consumer preference and its psychological antecedents emerge during immersive experiences such as watching video ads. This study revealed the following main findings:

- Neural signals associated with a broad range of psychological processes (including information processing, cognitive functions, and social-affective response) during video ad exposure predicted subsequent self-report ad liking.
- These processes (except perception) were predictive of liking within about the first ten seconds of an ad, with emotion and memory being the earliest predictors after the first three seconds.
- Over the span of ad exposure, while the predictiveness of emotion peaked early and fell, that of social cognition had a peak-and-stable pattern, followed by a late peak of predictiveness in perception and executive function.
- At the aggregate level, neural signals, especially those associated with social-affective response, improved the prediction of out-of-sample ad liking compared with traditional anatomically based neuroimaging analysis and self-report liking.
- Early-onset (first ten seconds) social-affective response predicted population liking.

Psychological Processes Underlying Ad Liking

Departing from anatomically based neuroimaging analysis, which links consumer responses to specific brain structures, we employed an increasingly used method that converts neural activity into more interpretable brain-based measures based on an automatic meta-analytical database of the extant literature (Neurosynth). These brain-based measures (Neurosynth topic expression scores) allowed us to identify multiple psychological processes during ad exposure that are related to subsequent self-report liking. Consistent with the extant literature on advertising, the neuroimaging analysis revealed that ad liking is driven by multiple and simultaneous psychological processes, including information processing, cognitive functions, and social-affective response.

Information processing

Brain activity related to processing incoming information (both perception and language) was associated with liking. This finding is consistent with neuroscientific research showing that perceptual and semantic processing are linked to executive and higher-order regions via recurrent connections (Markov et al. 2013; Ye and Zhou 2009). This means they can be governed by top-down mechanisms responding to intrinsic goals and external incentives (Gilbert and Li 2013) as well as bottom-up mechanisms such as salience that involuntarily draws attention to the message (Itti and Koch 2000). Behaviorally, eye fixation on certain visual messages has been described as a sign of increased message engagement (Lahey and Oxley 2016) and greater processing depth of the messages (Wedel and Pieters 2008), while indicators of persuasion are linked to both low- and high-level linguistic message features (Hosman 2002).

Cognitive functions

The ability to recall is linked to how successfully information was encoded (Mandler 1980). In this study, the neuroimaging findings suggest that stronger brain activity related to memory encoding precedes liking, providing a more direct link between information encoding and subsequent preference. It should be noted, however, that two memory-related topics (Topics 299 and 115) were negatively correlated with liking. On closer inspection, they contain words such as “performance,” “maintenance,” “capacity,” and “load,” suggesting the involvement of declarative instead of episodic memory (Percy 2004). The fact that the current neuroimaging analysis gives conflicting findings suggests a more nuanced view on the role of memory in advertising. It also points to future research directions on whether a demand on declarative memory (e.g., presenting the audience with facts and figures) could be detrimental to ad liking.

Relatedly, brain activity suggesting cognitive load is linked to dislike of the ad, confirming the long-standing observation that information complexity negatively impacts comprehension, which in turn affects downstream attitudes (Barnett and Cerf 2017; Mick 1992; Morrison and Dainoff 1972). The presence of top-down executive functions can also be a sign of deliberation or counterarguing (Wright 1975), leading to cognitive resistance to the content. Given the broad range of Neurosynth topics from numeracy to cognitive load that were negatively correlated with liking, more research is needed to delineate the effect of different executive functions on ad liking.

In this study, we did not find a significant role of attention, one of the major cognitive functions, in relation to subsequent liking. One reason might be that in the neuroimaging task, participants were confined in the scanner and instructed to attend to the ads, unlike in a more natural environment where video ads often compete for consumers' attention against other distractions. Alternatively, top-down attention might not be relevant in an immersive experience such as video advertisement. Further research is needed to shed light on this issue.

Social-affective response

Evoking emotions in consumers has long been recognized as one of the critical tools in advertising (Batra, Myers, and Aaker 1996; Holbrook and Batra 1987; Holbrook and O'Shaughnessy 1984). While retrospective reports of emotions might be affected by recall bias, here we find, using real-time brain measurements, that affective responses during ad exposure predict liking, further confirming the role of emotion in advertising. More importantly, social cognition—the act of understanding other people's intentions and beliefs—was found to be a strong predictor of ad liking, at both the individual and the population level.

Mentalizing has been found in effective communication (Cacioppo, Cacioppo, and Petty 2018; Falk et al. 2013; Falk and Scholz 2018). While previous research has mainly focused on interpersonal persuasion, for example, word-of-mouth recommendations (Cascio et al. 2015), here we found that even in one-way marketing communication such as video ads, the engagement of mentalizing efforts in the brain of the message receiver—reading the intentions and the motivations of others, projecting what “people like us” would do (Frith and Frith 2006)—is a precursor of subsequent liking. This echoes recent works showing that neural activity in the brain's social cognition system tracks message virality (Scholz et al. 2017) and ad liking is associated with similar neural activity across participants at mentalizing regions in the brain (Chan et al. 2019).

More broadly speaking, the “social brain” (Frith 2007) serves our default need for social cognition in everyday interactions, the success of which depends on the correct understanding of the wants and needs of others. In this sense, evoking the neural circuits implicated in social cognition might be seen as a sign of meaningful engagement with an audiovisual experience. In the context of consumer research, mentalizing is the human response to a narrative, seen both as a default mode through which consumers organize and interpret experience (Padgett and Allen 1997) and as a means for marketers to influence the consumer decision-making process (Adaval and Wyer 1998). A previous meta-analysis of marketing literature has found that narrative transportation—the extent to which consumers mentally enter a world that a story evokes—is linked to a stronger affective response and less critical thoughts (Van Laer et al. 2014).

Theoretical Contributions to Advertising Research

This study offers a brain-based account of advertising effectiveness, confirming the long-acknowledged and intuitive roles of cognition and emotion (Barry 2002; Barry and Howard 1990) while also providing a finer-grained taxonomy informed by neuropsychology by (1) encompassing information processing; (2) enumerating cognitive functions; and, in particular, (3) highlighting social cognition in addition to affective response. In addition, our findings offer moment-to-moment observations on the temporal sequence that sheds light on the debate of the hierarchy (or lack thereof) of advertising effects (i.e., whether the effects of cognition are preceded by emotion or vice versa).

Whereas supporters of a hierarchy-free model of advertising effects invoke the interconnectedness of neural circuitries as an argument for the inherent intangibility of the effects of cognition and emotion (e.g., Vakratsas and Ambler 1999; Weilbacher 2001), here we observe in actual neuroimaging data that, at least in the context of video advertising, various psychological processes take on different temporal dynamics. While most processes (except perception) predicted liking early on in moment-to-moment analysis, over the span of ad exposure, the effects of perception and cognition (especially executive function) seemed to be more prominent toward the end of the ad, while the effects of emotion and social cognition peaked earlier. This hints at the temporal primacy of social-affective response over information processing and cognitive functions.

However, the diminishing predictiveness of emotion toward the end does not conform with the peak-end rule of experience (Baumgartner, Sujan, and Padgett 1997; Kahneman et al. 1993), which posits in part that the final moments determine the retrospective evaluation of an experience. Instead, such a recency effect seemed to be present for social cognition, executive function, and perception. Given the conflicting evidence on the end effect (Li et al. 2022; Miron-Shatz 2009; Tully and Meyvis 2016), our study offers a more nuanced picture of varying patterns for different psychological processes. In fact, the late peak in social cognition predictiveness that we observed was consistent with previous work showing that the predictiveness of concluding moments depends on the presence of narrative closure (Hui, Meyvis, and Assael 2014; Mukherjee and Lau-Gesk 2016). The finer-grained psychological account from this study serves as a starting point for more experimental testing to tease out the confounding effect of emotion and social cognition (feelings versus meanings) in the retrospective evaluation of experience.

Finally, our attempt to use early-onset psychological processes to predict population liking adds to the literature on thin slice impressions, that is, inferences based on brief exposure to marketing information. Intuitive judgments based on a highly truncated glimpse of an event have been found to be reliably accurate (Ambady, Krabbenhoft, and Hogan 2006); in contrast, consumer evaluation of advertisements is known to be affected by exposure time (Elsen, Pieters, and Wedel 2016). Here, we focused on the diagnosticity of various ratings of thin slice impressions of video advertisements. Consistent with extant literature, we found that the liking ratings of ad excerpts by our behavioral sample correlated with population liking; in addition, their ratings of social-affective response explained variance that was not captured by liking alone. While previous research has shown that how to judge (intuitive versus deliberative) thin slice impressions affects the rater's accuracy (Ambady 2010), our findings suggest that what to judge (overall preference or social-affective response to information) might also affect the predictiveness of thin slice judgments. More research is needed to shed light on how to optimize consumer evaluation of thin slice impressions.

Methodological Contributions to Consumer Neuroscience

In this study we apply the method of Neurosynth decoding to transform neuroimaging data into brain-based measurements of concurrent psychological processes. Since the introduction of Neurosynth more than a decade ago (Yarkoni et al. 2011), using it to infer mental states based on whole-brain activity has seen wide-ranging application, from identifying the neural signature of pain (Lieberman and Eisenberger 2015) to informing the psychological mechanism of gain-loss framing (Li et al. 2022) to interpreting genetic influences on brain functions (Mallard et al. 2021). This study, similar to Van der Meer et al. (2020), uses Neurosynth to convert whole-brain activity into a combination of multiple psychological processes. Such an approach, we believe, can be particularly fruitful for consumer neuroscience research, whose interests lie in understanding complex mental and behavioral phenomena involving rich consumer experience. We note that anatomical-to-Neurosynth transformation could be applied easily to existing neuroimaging data sets as an intermediary step before general linear model analysis. It is our hope that this study would inspire a reanalysis of existing neuroimaging data sets for novel insights in consumer neuroscience.
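As an illustration only, the anatomical-to-Neurosynth transformation described above can be sketched as a spatial correlation between each brain volume and each meta-analytic topic map. The sketch below is written under stated assumptions and is not the pipeline used in this study: volumes and topic maps are assumed to be flattened to equal-length lists of voxel values in a common space, Pearson correlation is assumed as the similarity measure, and the function names and toy topic labels are hypothetical.

```python
from math import sqrt


def pearson(x, y):
    """Pearson correlation between two equal-length sequences of voxel values."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("inputs must have the same length (at least 2)")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    dx = [a - mean_x for a in x]
    dy = [b - mean_y for b in y]
    denom = sqrt(sum(a * a for a in dx) * sum(b * b for b in dy))
    if denom == 0:
        return 0.0  # a constant map carries no spatial pattern
    return sum(a * b for a, b in zip(dx, dy)) / denom


def decode_volume(volume, topic_maps):
    """Score one flattened brain volume against every topic map (hypothetical helper)."""
    return {name: pearson(volume, tmap) for name, tmap in topic_maps.items()}


def decode_timeseries(volumes, topic_maps):
    """Turn a sequence of volumes into a sequence of per-topic scores."""
    return [decode_volume(v, topic_maps) for v in volumes]


# Toy data: four "voxels", two made-up topic maps, two time points.
topic_maps = {
    "emotion": [1.0, 0.0, 0.0, 1.0],
    "perception": [0.0, 1.0, 1.0, 0.0],
}
volumes = [
    [2.0, 0.1, 0.2, 1.9],  # resembles the "emotion" map
    [0.1, 1.5, 1.7, 0.0],  # resembles the "perception" map
]
scores = decode_timeseries(volumes, topic_maps)
```

The resulting per-topic time series could then serve as regressors or outcomes in a subsequent general linear model, in place of voxelwise or region-of-interest signals.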

There has been robust empirical evidence suggesting that neural responses in small samples of participants track aggregate responses in large groups of people who did not undergo neuroimaging (Falk and Scholz 2018; Knutson and Genevsky 2018). Moreover, these effects often hold above and beyond the predictive capacity of traditionally used self-report measures such as behavioral intention and ratings of message effectiveness (Chan et al. 2019; Falk, Berkman, and Lieberman 2012; Knutson and Genevsky 2018). This study adds to the growing body of evidence that brain measurements can serve as an additional source of information that would otherwise be inaccessible through self-report instruments. More work must be done to pinpoint specific contexts where brain information might best supplement self-report measurements in predicting the market, given the relatively high cost of deploying neuroimaging.

More broadly speaking, consumer neuroscience has been an expanding subfield of marketing research since the turn of the century (Levallois, Smidts, and Wouters 2021). Yet, there are hurdles limiting its reach due to the high cost of original data collection, small sample sizes, and problems of generalizability and replicability (Turner et al. 2018). Here, we present a multisite and international effort that combines existing neuroimaging data sets so that the sample size is relatively large (>100 participants) and the video ads have ecological validity and large variability (85 actual TV ads from different countries, in different languages, and of various products and services). We hope to see more “mega-analyses” of existing neuroimaging data sets (Costafreda 2009) that would help answer more nuanced questions in marketing research from a neuroscientific perspective, without incurring substantial start-up costs.

Managerial Implications

Our findings offer neurophysiological evidence on the importance of offering not only an emotionally engaging opening but also a sustaining narrative to “hook in” the audience in the advertisement (Boller and Olson 1991; Escalas 2004; Escalas, Moore, and Britton 2004). Moreover, the late-peak predictiveness of perception (helpful to liking) and executive function (harmful) suggests that if consumers are expected to watch the entire video ad (e.g., in a captive environment such as a movie theater), ending the video with sensory enhancement, for example, zooming (Jai et al. 2021) or slow motion (Jung and Dubois 2022), might be an effective strategy for boosting liking. We look forward to validating these hypotheses in future experimental studies for more specific insights on content creation and optimization. At the same time, marketing practitioners are advised to consider tapping into specific psychological processes when eliciting consumer evaluation, for example, by including questions about those processes.

While neuroimaging remains a relatively costly procedure compared with traditional focus groups or online surveys, our findings confirm the extant literature that neural signals hold unique market-relevant information that might be inaccessible by self-report measurements (Berns and Moore 2012; Venkatraman et al. 2015). At the same time, neuromarketers can devise more cost-efficient testing by measuring neural responses to video excerpts instead of full content, as this study and other previous works (e.g., Tong et al. 2020) have shown. Given the potentially high stakes of video advertising (ad spots worth millions of dollars during the Super Bowl, or viral videos on online platforms that reach tens of millions of consumers), strategic deployment of neurophysiological measurements should offer worthwhile insights toward improving advertising success.

Limitations and Suggestions for Future Research

We note some limitations of this study. The current set of Neurosynth topics does not offer the fine functional resolution necessary to delineate psychological processes beyond broad neuropsychological domains. For example, it is difficult to compare the relative contribution of positive and negative emotions. This is partly due to the limitation of automatic text mining (where mentions of positive and negative emotions often co-occur in a single publication) as well as the complexity of human affect and its overlapping neural circuits (Kragel and LaBar 2016). Term-based Neurosynth decoding could potentially offer more granular differentiation, although, for example, visual inspection of “happy” and “sad” maps reveals highly similar clusters in bilateral amygdalae, notwithstanding the confounding risk of polysemous words. The fact that we could not find attention to be a significant predictor of liking, nor a significant correlation with task-related terms such as “preference” or “evaluation,” warrants caution (note, however, the robustness of the findings when redoing the analysis with a 50-topic model [Web Appendix B2] and with term-based decoding [Web Appendix D2]). In cases where the focal research question involves specific, well-known neural circuitries, anatomically based inferences might be of better value to researchers.

We also acknowledge that interpreting Neurosynth topics and categorizing them into various neuropsychological domains require subjective judgments, and it is our hope that the current neuroimaging findings will inspire more testable hypotheses for future replication. Nevertheless, our study shows how using meta-analytic databases such as Neurosynth can shed light on the intricate interplay of major psychological processes behind complex consumer experiences such as ad viewing.

We should also note that the temporal dynamics we identified were in part contingent on the stimulus set and the choice of neurophysiological measurements. First, the uneven lengths of the ads used in this study can have implications for time-course analyses: in moment-to-moment analysis with an absolute time scale, psychological processes were captured at the same time points, ignoring the fact that the content or narrative arc of shorter ads is essentially more compressed. In relative contribution analysis over the quartered ad segments, neural signals were captured at different absolute time points. We note that past studies of temporal trajectories of varying-length ads derive metrics in either absolute (Teixeira, Wedel, and Pieters 2012) or relative time (McDuff et al. 2015); further research is needed to compare the suitability of the two approaches.
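To make the contrast between the two time scales concrete, the following minimal sketch aligns per-second signals from ads of unequal length in both ways. It is an illustration under stated assumptions rather than the analysis reported here: each ad is assumed to be a list of one score per second, absolute alignment is assumed to truncate to the shortest ad, relative alignment is assumed to average within quarters of each ad, and all function names and numbers are hypothetical.

```python
def quartile_means(series):
    """Average a per-second series within four consecutive segments of the ad."""
    n = len(series)
    if n < 4:
        raise ValueError("need at least four time points to form quarters")
    # Integer boundaries guarantee four non-empty segments when n >= 4.
    bounds = [(i * n) // 4 for i in range(5)]
    return [sum(series[a:b]) / (b - a) for a, b in zip(bounds, bounds[1:])]


def absolute_alignment(ads):
    """Average across ads at the same second, up to the length of the shortest ad."""
    shortest = min(len(s) for s in ads)
    return [sum(s[t] for s in ads) / len(ads) for t in range(shortest)]


def relative_alignment(ads):
    """Average across ads at the same quarter, regardless of ad length."""
    per_ad = [quartile_means(s) for s in ads]
    return [sum(q[i] for q in per_ad) / len(per_ad) for i in range(4)]


# Toy example: a 4-second ad and an 8-second ad with the same overall arc.
short_ad = [1.0, 2.0, 3.0, 4.0]
long_ad = [1.0, 1.0, 2.0, 2.0, 3.0, 3.0, 4.0, 4.0]

by_second = absolute_alignment([short_ad, long_ad])
by_quarter = relative_alignment([short_ad, long_ad])
```

In this toy case the two ads follow the same arc at different speeds, so the quarter-based averages coincide across ads while the second-by-second averages mix different stages of the two arcs.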

More fundamentally, brain measurements with neuroimaging technologies (in this study, blood oxygenation level dependent signals under fMRI) are inherently prone to physiological confounds, such as hemodynamic response delay, limiting the precision of timing to the scale of seconds. Moreover, neural activity is known to be modulated by various factors such as habituation and sensitization (Thompson and Spencer 1966), and therefore caution is warranted when inferring moment-to-moment psychological states from contemporaneous signals. However, the whole-brain access afforded by fMRI, and with it the potential psychological insights, remains an irreplaceable advantage of this technique compared with other neurophysiological measurements.

Despite these limitations, our observational findings should pave the way for direct hypothesis testing of the psychological processes involved in consumer evaluation in future studies using experimental manipulations. For example, there have been experimental studies manipulating the presence or absence of narrative elements in advertisements and examining the effect on persuasion (Adaval and Wyer 1998; Escalas 2007; Kang, Hong, and Hubbard 2020). Based on the current findings of the relative importance of early-onset emotion and social cognition, and late-peak perception and executive function, we envision future research that manipulates the timing of these elements in video advertising to further establish the validity of the findings.

Last, the materials used in this study were video ads, made with the explicit purpose of persuading consumers in a relatively short span of time, in the majority of cases under a minute. Whether the neural signals of ad liking are generalizable to other video genres (such as video clips shared on social media, e.g., TikTok) or to videos of different lengths (such as movies) remains to be seen. More broadly speaking, the psychological processes of consumer evaluation in other types of immersive experience need to be further studied. For example, gaming (Huskey et al. 2018; Mathiak and Weber 2006) or prolonged interactions with products and service agents would likely recruit different neural circuits and psychological processes that culminate in eventual preference.

Conclusion

While neuroimaging remains a resource-intensive method in marketing research, the increasing accessibility of existing neuroimaging data sets offers a ripe opportunity for more in-depth mega-analysis on consumer psychology with better statistical power. By pooling together multiple fMRI data sets, this study helps delineate the psychological mechanisms underlying ad processing and ad liking, and it proposes a novel neuroscience-based approach for generating psychological insights and improving predictions.

Supplemental Material

Supplemental material, sj-pdf-1-mrj-10.1177_00222437231194319, for Neural Signals of Video Advertisement Liking: Insights into Psychological Processes and Their Temporal Dynamics by Hang-Yee Chan, Maarten A.S. Boksem, Vinod Venkatraman, Roeland C. Dietvorst, Christin Scholz, Khoi Vo, Emily B. Falk, and Ale Smidts in Journal of Marketing Research

Footnotes

Acknowledgments